1. Introduction

Due to the rapid growth of remote sensing, multispectral (MS) remote sensing images have shown increasing potential for applications ranging from independent land mapping services to government and military activities. However, with the development of image processing and network transmission techniques, it has become easier to tamper with or forge multispectral remote sensing images during processing and transmission. For example, the widespread use of sophisticated image editing tools seriously threatens the authenticity and integrity of MS images and can even render them useless. Therefore, the content integrity of an MS image must be ensured before the image can be used. A perceptual hash algorithm, also known as a robust hash algorithm, can solve the problem of MS image content authentication, whereas classic cryptographic hash algorithms, such as MD5 and SHA1, are not suitable for this purpose since they are sensitive to every bit of the input image.

A perceptual hash algorithm maps an input image into a compact feature vector called the perceptual hash value, which is a short summary of the image’s perceptual content. Perceptual hash algorithms have become a frontier research topic in the field of digital media content security, and they can be applied to image content authentication, image retrieval, image registration, and digital watermarking. Similar to cryptographic hash functions, a perceptual hash algorithm compresses the representation of the perceptual features of an image to generate a fixed-length sequence, while ensuring that perceptually similar images produce similar sequences [1].

In recent years, a number of perceptual hash algorithms have been proposed to meet the requirements of different types of data [2,3,4,5,6,7,8,9,10,11,12,13,14,15]. Ahmed et al. [2] propose a perceptual hash algorithm for image authentication, which uses a secret key to randomly modulate image pixels to create a transformed feature space. It offers good robustness and can detect minute tampering and localize the tampered area, but it cannot be applied to an MS image, which has many more bands. Hadmi et al. [3] propose a novel perceptual image hash algorithm based on block truncation coding. Sun et al. [4] develop a perceptual hash based on compressive sensing and Fourier–Mellin transformation. The proposed method is robust to a wide range of distortions and attacks, and it yields better identification performance under geometric attacks such as rotation, as well as under brightness changes. Yan et al. [5] propose a multi-scale image hashing method that uses the location-context information of the features generated by adaptive and local feature extraction techniques. Cui et al. [6] propose a hash algorithm for 3D images that selects suitable dual-tree complex wavelet transform coefficients to form the final hash sequence. Qin et al. [7] propose a perceptual hash algorithm for images based on salient structure features, which can be applied in image authentication and retrieval. Chen et al. [8] propose a perceptual audio hash algorithm based on maximum-likelihood watermarking detection, which can be applied in content-based search. Yang et al. [9] propose a wave atom transform (WAT) based image hash algorithm that uses distributed source coding to reduce the size of the hash code, providing better performance than existing WAT-based algorithms. Tang et al. [10] propose a perceptual hash algorithm with innovative use of the discrete cosine transform and local linear embedding, which can be used in image authentication, image retrieval, copy detection and image forensics. Sun et al. [11] propose a video hash model based on a deep belief network, which generates the video hash from visual attention features. Chen et al. [13] propose a discrete cosine transform (DCT) based perceptual hash scheme to track vehicle candidates and achieve a high level of robustness. Qin et al. [14] exploit circle-based and block-based strategies to extract structural features. Fang et al. [15] adopt a gradient-based perceptual hash algorithm to encode invariant macro structures of person images, making the representation robust to both illumination and viewpoint changes.

However, only a few studies on perceptual hashing for multispectral remote sensing images have been carried out. The existing perceptual hash algorithms do not take the characteristics of MS images into consideration and are not suitable for them. Therefore, new perceptual hash algorithms need to be introduced to solve the problem of MS image authentication.

Different from MS remote sensing images, panchromatic (PAN) remote sensing images with the characteristics of high spatial resolution and low spectral resolution can be authenticated by the existing perceptual hash algorithms for normal images, as the digital form of PAN images is the same as normal images. In contrast, an MS remote sensing image is characterized by lower spatial resolution than PAN, but higher spectral resolution, which makes the existing perceptual hash algorithms for imaging unsuitable for multispectral remote sensing image authentication.

An MS image obtains information from the visible and the near-infrared spectrum of reflected light; it is composed of a set of (more than three) monochrome images of the same scene, each of which is referred to as a band and is taken at a specific wavelength. Whereas the normal color image is composed of only three monochrome images, the PAN image has only one band. The bands of the MS remote sensing image represent the earth’s surface in different spectral sampling intervals and have clear physical meanings, while the existing perceptual hash algorithm does not take this into account and cannot perceive the content of each band. Moreover, multispectral images are generally of large sizes (some may be over several GB), while most existing perceptual hash algorithms compute the hash value from an image’s global features and are generally not sensitive to local modification in the multispectral remote sensing images.

This paper addresses the above problems by presenting a novel perceptual hash algorithm for multispectral remote sensing image authentication. We make the following contributions:

- (a) In the proposed hash algorithm, we adopt affinity propagation (AP) clustering to separate the bands into several band subsets and thereby reduce redundancy, with mutual information (MI) chosen to measure the similarity of the bands. Because AP does not require the number of band clusters to be set, the input image can have an arbitrary number of bands.

- (b) Based on the analysis of the data characteristics of the MS image and the basic principles of perceptual hash, we adopt the band fusion technique to extract the principal features and obtain compact hash values for each band subset, which overcomes the deficiencies of existing perceptual hash algorithms designed for mono-spectral images.

- (c) We introduce grid entropy-based adaptive weighted fusion rules to obtain comprehensive features of the grids in the same geographic region. This helps preserve as much detailed information as possible.

The remainder of this paper is organized as follows. Section 2 gives a brief introduction to perceptual hash algorithms and discusses the related work. Section 3 describes our proposed algorithm in detail. Section 4 presents our experimental results and analysis. Finally, we draw conclusions in Section 5.

2. Preliminaries

2.1. Overview of Perceptual Hash

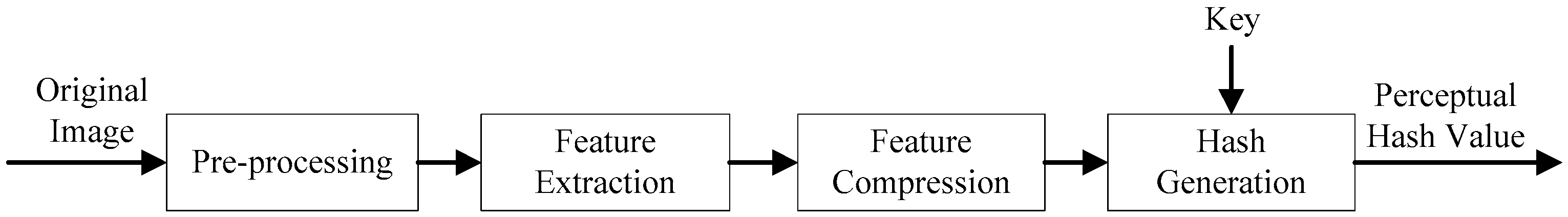

As shown in Figure 1, perceptual hash algorithms generally consist of the following stages: preprocessing, feature extraction, feature quantification, and hash generation.

Preprocessing generally removes redundant information from an image, making it easier to extract features later on. Feature extraction extracts the principal features of the image using a specific extraction method. Feature quantification coarsens the extracted features in order to enhance robustness. Hash generation makes the quantified features more abstract and compact, because the quantified features may contain too much data to be suitable as an output sequence. For image authentication, a perceptual hash algorithm should possess the following properties:

Compactness: The hash value of the image should be as compact as possible, so that it is easier to transport, store and use.

Sensitivity to tampering: Visually distinct images should have significantly different hash values.

Perceptual robustness: The hash generation should be insensitive to a certain level of distortion with respect to the input image.

Security: Calculation of image hashing depends on a secret key, that is, different secret keys can generate significantly different hash values for the same image.

For the authentication of an MS image, the perceptual hash algorithm has to be sensitive to malicious tampering operations and to be robust to content-preserving ones. Compared with normal images, MS images have higher requirements for measurement accuracy, and their pixels with coordinates can be used for measuring geometrical locations after the image has been corrected and processed, which means the authentication algorithm should have high authentication precision and be able to detect micro-tampering of the image.

The simplest way to generate multispectral image perceptual hashing is to generate the hash value for each band. However, this would lead to a huge data volume of hash values, while the perceptual hash values should be as compact as possible to be convenient for data authentication. In addition, there are some correlations between each band, which result in high redundancy and a great amount of computation time wasted in hash generation.

In this paper, we separate the bands into several band subsets with a clustering algorithm to reduce the content redundancy, and then we adopt a band fusion-based feature extraction technique to obtain the compact feature of each band subset, which could be suitable for hashing computation. Furthermore, grid division is adopted to divide each band into grids and make the hash value more sensitive to local modification in the MS images.

On the other hand, feature extraction is a key stage of the perceptual hash. For remote sensing image authentication, perceptual hashing based on edge characteristics can achieve higher precision [16]. A remote sensing image would have little value if its edge characteristics had been greatly changed. Additionally, edge characteristics contain effective information for applications such as object extraction, image segmentation and target recognition. Therefore, we adopt edge features as the perceptual feature to generate the perceptual hash value.

2.2. Affinity Propagation and Mutual Information-Based Band-Clustering

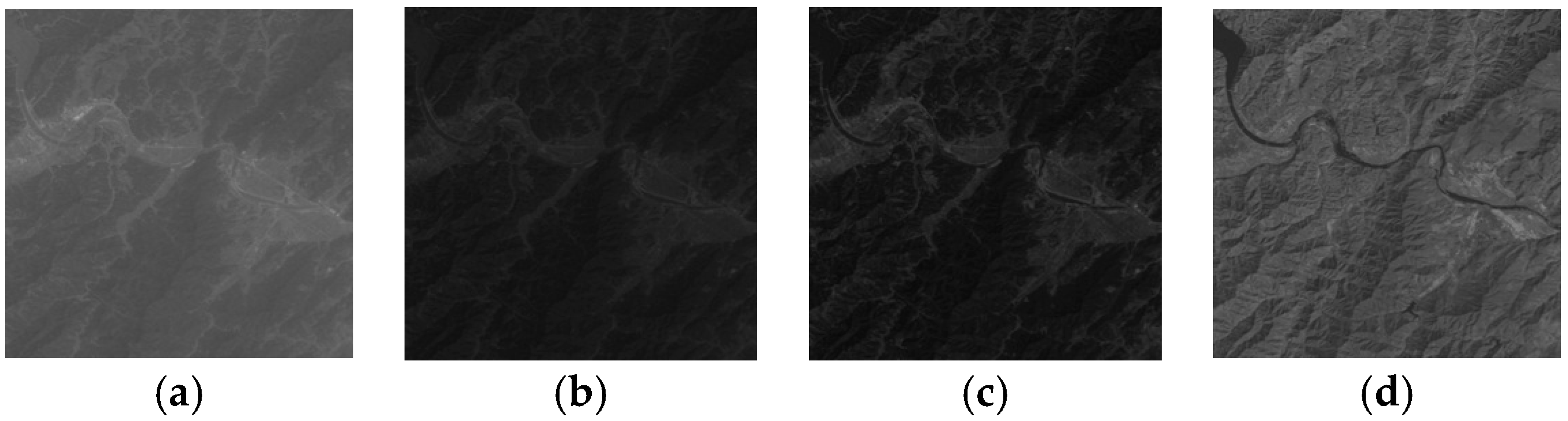

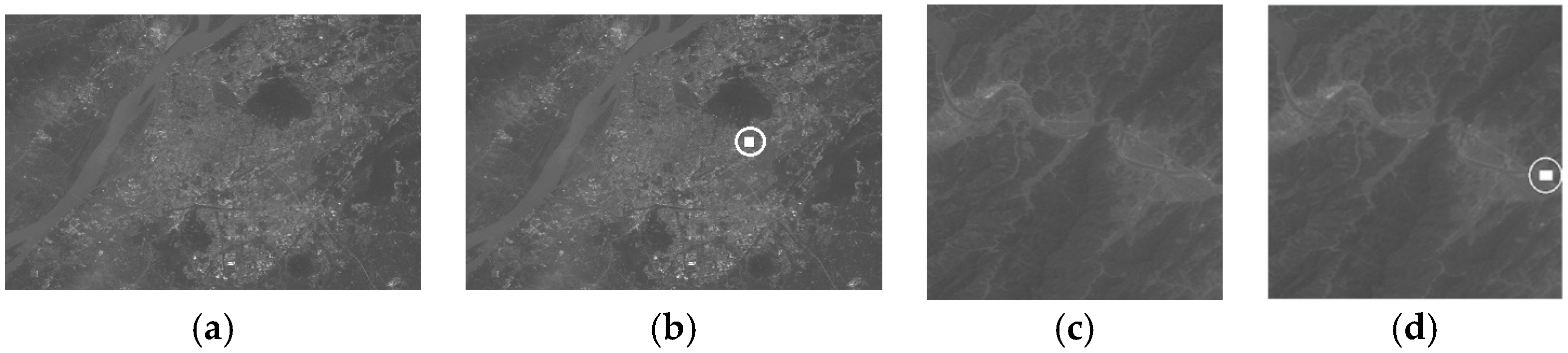

Different bands of a multispectral image have different spectral responses and can be distinguished from each other based on their grayscales. Even in the same image, there are big grayscale differences between the bands, especially between the visible band and infrared band.

Figure 2 shows several comparison instances of different region types (mountain and urban) in Band 1 (InR1) and Band 4 (InR4) of Landsat thematic mapper (TM) image data. Obviously, there are some differences between the mountainous areas and the urban areas. Furthermore, even for the same surface feature, the difference between bands is obvious, as shown in Figure 2b,d.

Taking this into account, we adopt band clustering to separate the bands into several groups (band subsets). Band clustering identifies subsets of bands that are as independent of each other as possible, and it is adopted here to obtain compact hash values.

In this paper, we use AP clustering [17,18] with MI to divide the bands into several band subsets. The AP algorithm is an exemplar-based clustering algorithm that uses max-sum belief propagation, implemented as message exchange between data points, to obtain the exemplars; the number of clusters is determined automatically. The final cluster centers depend on the given dataset. Compared with traditional clustering algorithms, such as K-means clustering, AP clustering does not need the number of clusters to be set in advance. Therefore, it is an efficient clustering technique for datasets with many instances because of its better clustering performance over traditional methods.

The AP clustering algorithm starts with the construction of a similarity matrix $S = [s(i,k)]_{L \times L}$, in which the element $s(i,k)$ measures the similarity between band k (InRk) and band i (InRi). In our perceptual hash algorithm, MI is chosen to construct the similarity matrix, i.e., each s(i, j) denotes the mutual information between the i-th and the j-th bands, while L is determined by the number of bands. MI measures the statistical dependence between two random variables and can therefore be used to evaluate the relative utility of each band to classification [19]. Given two random variables X and Y with marginal probability distributions p(X) and p(Y) and joint probability distribution p(X, Y), their MI is defined as:

$$I(X, Y) = \sum_{x \in X} \sum_{y \in Y} p(x, y) \log \frac{p(x, y)}{p(x)\,p(y)} \tag{1}$$

It follows that MI is related to entropy as:

$$I(X, Y) = H(X) + H(Y) - H(X, Y) \tag{2}$$

where H(X) and H(Y) are respectively the entropies of X and Y, and H(X, Y) is their joint entropy.

Treating the bands of the multispectral image as random variables, MI can be used to estimate the dependency between them; it was introduced for band selection in [20,21]. Using Equation (2), the mutual information between each pair of bands of the multispectral image can be calculated, which can be used to evaluate the relative utility of each band to classification.
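The entropy form of MI in Equation (2) can be sketched in a few lines. This is a minimal illustration, assuming each band is given as a flat sequence of integer gray levels and that probabilities are estimated from plain histograms; the function names are hypothetical and not taken from the paper's implementation.

```python
import math
from collections import Counter


def entropy(counts, total):
    """Shannon entropy (in bits) of a histogram given as a Counter."""
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def mutual_information(band_x, band_y):
    """I(X, Y) = H(X) + H(Y) - H(X, Y) for two equally sized bands.

    Assumption: each band is a flat sequence of integer gray levels.
    """
    if len(band_x) != len(band_y):
        raise ValueError("bands must have the same number of pixels")
    n = len(band_x)
    h_x = entropy(Counter(band_x), n)
    h_y = entropy(Counter(band_y), n)
    h_xy = entropy(Counter(zip(band_x, band_y)), n)  # joint histogram
    return h_x + h_y - h_xy


def similarity_matrix(bands):
    """Pairwise MI between all bands (the diagonal holds each band's entropy)."""
    return [[mutual_information(a, b) for b in bands] for a in bands]


band_a = [0, 0, 1, 1]
band_b = [0, 0, 1, 1]   # identical to band_a: fully dependent
band_c = [0, 1, 0, 1]   # statistically independent of band_a
mi_same = mutual_information(band_a, band_b)
mi_indep = mutual_information(band_a, band_c)
```

Identical bands share all of their information, while the independent pair shares none, which is the behaviour that makes MI usable as a band similarity measure.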

2.3. Band Fusion-Based Edge Feature Extraction

As mentioned above, edge characteristics-based perceptual hash can achieve higher precision for MS image integrity authentication, so we adopt the band feature fusion technique in order to obtain the robust edge feature of the band sets separated by band-clustering.

So far, many fusion algorithms have been developed for multispectral band fusion. One of the most important fusion techniques is the wavelet-based method, which usually uses the discrete wavelet transform (DWT) in the fusion [22]. Since the DWT of image signals produces a non-redundant image representation, it can provide better spatial and spectral localization of image information as compared to other multiresolution representations. Therefore, we adopt DWT-based fusion techniques to obtain the robust fusion features of the band subset.

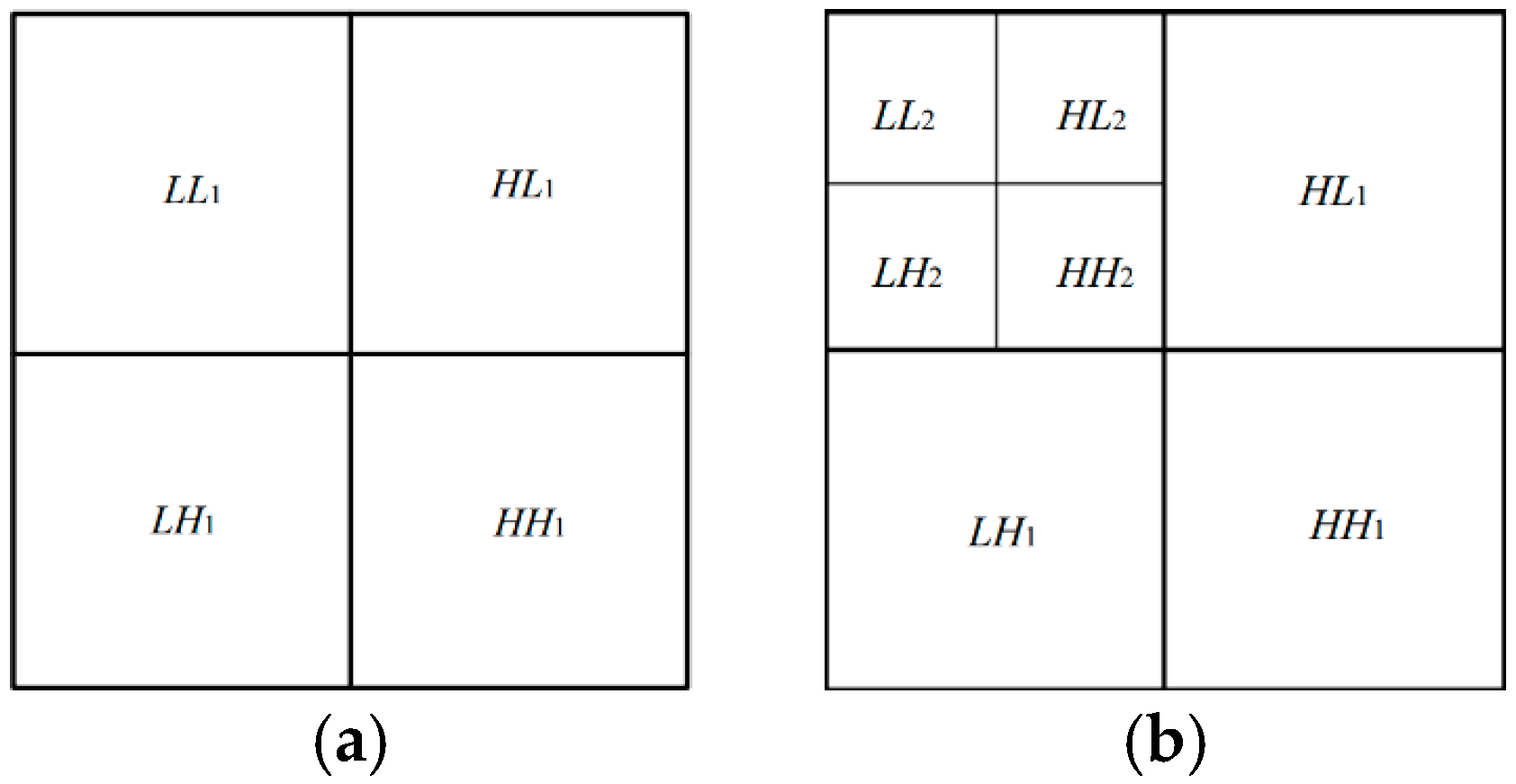

The key step of DWT-based fusion techniques is to define a fusion rule to create a new composite multiresolution representation, in which different fusion rules are used to deal with different frequency bands. The process of applying the DWT can be represented as a set of filters. After first-level decomposition, the image is decomposed into a low-frequency sub-image (LL1), which is the approximation of the original image, and a group of high-frequency sub-images (LH1, HH1, and HL1), which contain abundant edge information, as shown in Figure 3a. The low-frequency sub-image is the approximation of the original signal at this level and can be further decomposed until the desired resolution is reached. Figure 3b illustrates wavelet decomposition of an image at level 2. In Figure 3b, LL2, HL2, LH2, and HH2 are the sub-images produced when the LL1 sub-image is further decomposed. Since the second-level high-pass sub-images (also called middle-frequency sub-images) LH2, HH2, and HL2 also contain abundant edge information, and are more robust than the high-frequency ones but more fragile than the low-frequency ones, we use the middle-frequency sub-images to extract the robust bits.
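The two-level decomposition described above can be illustrated with a small sketch. The paper does not state which wavelet it uses, so the Haar wavelet (in its averaging form) is assumed here purely for brevity; the sub-band naming (LH for change between rows, HL for change between columns) is also an assumption, since conventions differ between libraries.

```python
def haar_dwt2(img):
    """One level of a 2-D Haar DWT (averaging form) on an even-sized image.

    Returns (LL, LH, HL, HH), each half the size of the input.
    Assumption: Haar is used only as the simplest possible wavelet.
    """
    rows, cols = len(img), len(img[0])
    half_r, half_c = rows // 2, cols // 2
    LL, LH, HL, HH = ([[0.0] * half_c for _ in range(half_r)] for _ in range(4))
    for r in range(0, rows, 2):
        for c in range(0, cols, 2):
            a, b = img[r][c], img[r][c + 1]
            d, e = img[r + 1][c], img[r + 1][c + 1]
            i, j = r // 2, c // 2
            LL[i][j] = (a + b + d + e) / 4.0   # local average (approximation)
            LH[i][j] = (a + b - d - e) / 4.0   # change between rows
            HL[i][j] = (a - b + d - e) / 4.0   # change between columns
            HH[i][j] = (a - b - d + e) / 4.0   # diagonal change
    return LL, LH, HL, HH


def middle_frequency_subbands(img):
    """Decompose twice and return the second-level detail sub-bands."""
    LL1, _, _, _ = haar_dwt2(img)
    _, LH2, HL2, HH2 = haar_dwt2(LL1)
    return LH2, HL2, HH2


# A 4 x 4 image with a vertical edge between the second and third columns.
image = [[0, 0, 8, 8] for _ in range(4)]
LH2, HL2, HH2 = middle_frequency_subbands(image)
```

For this input only HL2 is non-zero, which matches the intuition that a vertical edge shows up in the sub-band that measures change across columns.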

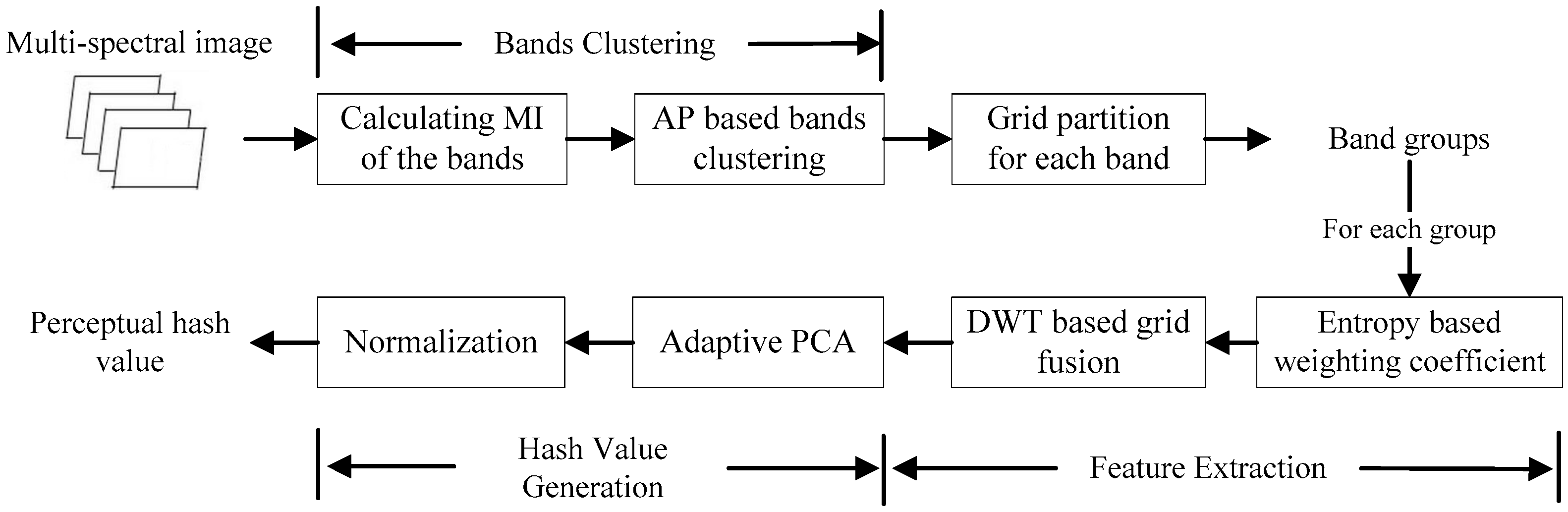

3. Our Perceptual Hash Algorithm Design

The proposed perceptual hash algorithm for MS remote sensing image authentication consists of three main stages: (a) band clustering, (b) feature extraction, and (c) hash value generation. The schematic diagram of the proposed algorithm is given in Figure 4.

3.1. Band-Clustering

We employ AP clustering to divide the original MS image I into several band groups, without setting the number of clusters. First, the similarity matrix is constructed, where each element is the mutual information between InRk and InRi and the matrix size is determined by the number of bands. Then, the messages are updated iteratively until the clustering stabilizes or a fixed number of iterations is reached; the damping factor λ and the maximum number of iterations must be set in advance.
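For reference, the message updates of standard affinity propagation can be written as below. The symbols r (responsibility), a (availability), the primed indices and the generic message m are notation introduced here for illustration and are not necessarily the notation of the paper; s(i,k) is the MI-based similarity and λ is the damping factor mentioned above.

```latex
\begin{align*}
r(i,k) &\leftarrow s(i,k) - \max_{k' \neq k} \bigl\{ a(i,k') + s(i,k') \bigr\} \\
a(i,k) &\leftarrow \min\Bigl\{ 0,\; r(k,k) + \sum_{i' \notin \{i,k\}} \max\{0,\, r(i',k)\} \Bigr\}, \qquad i \neq k \\
a(k,k) &\leftarrow \sum_{i' \neq k} \max\{0,\, r(i',k)\} \\
m^{(t+1)} &= \lambda\, m^{(t)} + (1 - \lambda)\, \hat{m}^{(t+1)}
\end{align*}
```

The last line is the damped update applied to every message, where the hatted term is the value given by the first three rules. After the final iteration, band i is assigned to the exemplar k that maximizes a(i,k) + r(i,k).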

After the step of band-clustering, the bands are divided into band clusters (band subsets). That is to say, the L bands are divided into N clusters, and each cluster is denoted as ICn (n < N). For each band cluster ICn:

where

; therefore, the original MS image can also be expressed as:

3.2. Band Fusion-Based Edge Feature Extraction

The band fusion-based feature extraction is intended for encoding the perceptual information from source bands into a single one containing the best aspects of the original bands, which includes two steps: grid division and feature extraction. The details of band fusion-based edge feature extraction on the band subsets are described as follows.

3.2.1. Grid Division

To make the hash value more sensitive to local modification in the MS images, each band is partitioned into M × N grids. Each grid is indexed by w = 1, 2, …, M and h = 1, 2, …, N, together with the band number k. The grids at the same position in different bands compose a grid set.

As the tamper location ability is based on the resolution of the grid division, the higher the resolution of the grid division, the more fine-grained the authentication granularity can be. However, the computational cost rises at higher resolutions: segmenting the remote sensing image into more grids increases the time needed both to compute the perceptual hash values and to compare them. The choice of the grid division resolution thus presents a trade-off between cost and tamper location ability. Our work aims at designing an authentication model with a good balance between cost and performance.
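The grid division and the grouping of co-located grids across bands can be sketched as follows. This is an illustrative sketch only: the function names are hypothetical, (w, h) is assumed to mean (row index, column index), and dropping edge pixels that do not fill a whole grid is an assumption, since the text does not say how remainders are handled.

```python
def split_into_grids(band, grid_size):
    """Split a 2-D band (list of rows) into non-overlapping square grids.

    Returns a dict mapping the 1-based position (w, h) to the grid.
    Assumption: incomplete grids at the right and bottom edges are dropped.
    """
    rows, cols = len(band), len(band[0])
    grids = {}
    for w in range(rows // grid_size):
        for h in range(cols // grid_size):
            r0, c0 = w * grid_size, h * grid_size
            grids[(w + 1, h + 1)] = [
                row[c0:c0 + grid_size] for row in band[r0:r0 + grid_size]
            ]
    return grids


def grid_sets(bands, grid_size):
    """Group the grids at the same position across all bands of one cluster."""
    per_band = [split_into_grids(b, grid_size) for b in bands]
    return {pos: [g[pos] for g in per_band] for pos in per_band[0]}


# A toy 4 x 4 band split into 2 x 2 grids.
band = [[r * 4 + c for c in range(4)] for r in range(4)]
grids = split_into_grids(band, 2)
sets = grid_sets([band, band], 2)
```

Halving the grid size quadruples the number of grids, and therefore the number of hash values to compute and compare, which is the cost side of the trade-off discussed above.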

3.2.2. Feature Extraction

Selecting the fusion rules is of great importance, as it directly affects the fusion quality and the sensitivity of the perceptual hash algorithm. For each grid set, the features are extracted and fused based on the discrete wavelet transform (DWT) with adaptive weighted rules.

The whole process can be divided into the following steps:

To obtain hash values of fixed length, each grid is first normalized by resizing it to m × m pixels with bilinear interpolation.

Two-level DWT is applied to each resized grid, decomposing it into different kinds of coefficients at different scales. To extract more robust detailed information for generating hash values, we choose the second-level high-pass coefficients LH2, HH2, and HL2 as the basic perceptual feature.

For each grid, the three sub-bands LH2, HH2, and HL2 are fused into one matrix through the maximum fusion rule.

For each band cluster ICn, adaptive weighted fusion is applied to the fused matrices of the grids at the same position in different bands, yielding one fusion matrix per grid set. The fusion process should preserve as much detailed information as possible. To this end, the weighted coefficient of the matrix of the grid in the k-th band is determined by the entropy of the corresponding grid. Obviously, the weight depends on the entropy of the grid and therefore differs from one area to another. Each pixel of the fusion matrix is then computed as the weighted combination of the corresponding pixels of the matrices in the cluster.
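One plausible reading of the entropy-based adaptive weighting is sketched below. It assumes that each weight is the grid's entropy divided by the sum of the entropies in the grid set, and that the fused pixel is the weighted sum of the co-located feature values; both choices, the equal-weight fallback and all function names are assumptions made for illustration rather than the authors' exact formulas.

```python
import math
from collections import Counter


def grid_entropy(grid):
    """Shannon entropy (bits) of the gray-level histogram of one grid."""
    pixels = [p for row in grid for p in row]
    n = len(pixels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(pixels).values())


def entropy_weights(grids):
    """Weights proportional to grid entropy, normalized to sum to 1 (assumed form)."""
    ent = [grid_entropy(g) for g in grids]
    total = sum(ent)
    if total == 0:  # all grids are flat: fall back to equal weights
        return [1.0 / len(grids)] * len(grids)
    return [e / total for e in ent]


def weighted_fusion(features, weights):
    """Pixel-wise weighted sum of equally sized feature matrices."""
    rows, cols = len(features[0]), len(features[0][0])
    return [
        [sum(w * f[r][c] for w, f in zip(weights, features)) for c in range(cols)]
        for r in range(rows)
    ]


grid_a = [[0, 1], [2, 3]]   # four distinct gray levels: entropy of 2 bits
grid_b = [[0, 0], [1, 1]]   # two gray levels: entropy of 1 bit
weights = entropy_weights([grid_a, grid_b])
fused = weighted_fusion([[[3.0]], [[6.0]]], weights)
```

Under this reading, a grid with richer content (higher entropy) contributes more to the fused feature, which is consistent with the stated goal of preserving detail.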

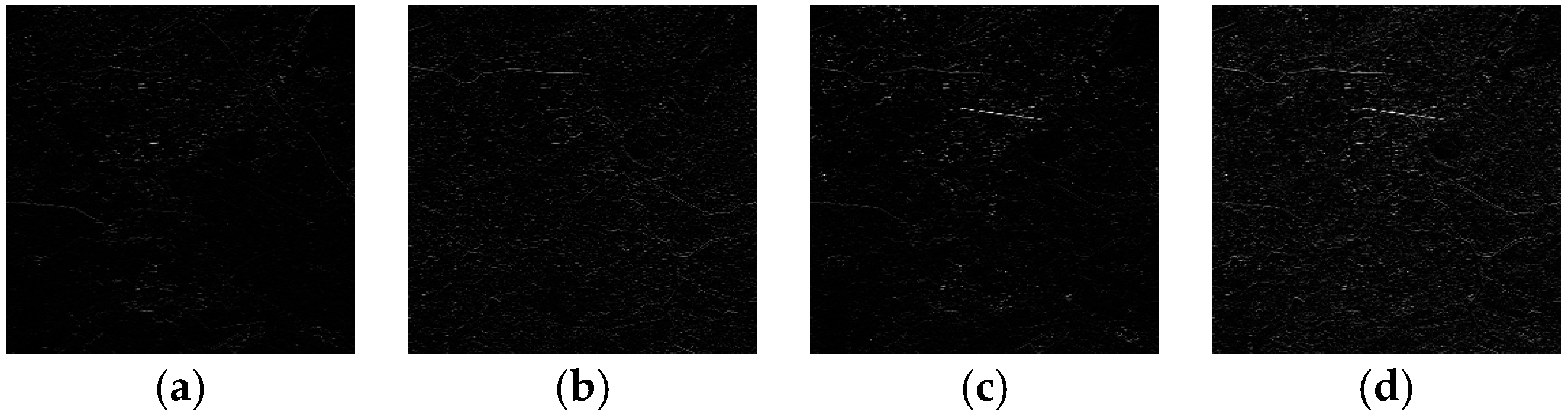

A fusion example of sub-band HL2 is given in Figure 5, where Figure 5a–c show the intermediate-frequency components of the grids in InR3, InR4 and InR7, respectively, and Figure 5d shows the fusion result. It is observed that the fusion result retains the obvious edge features of the grids in several bands.

3.3. Data Compaction Based on Self-Adaptive PCA

Since the hash value has to be as compact as possible while remaining robust to content-preserving manipulations, we apply principal component analysis (PCA) to the fused feature matrix for noise reduction and data compaction. PCA reduces the dimensionality of the image data by projecting it onto a lower-dimensional space of uncorrelated features. It is widely used in data reduction and data compression, as it is able to discover the relationships among the variables [23,24]. By applying PCA to the fused matrix, the linear correlation among the matrix elements can be removed. This means that noise can be effectively suppressed and the extracted feature is compressed.

The grid’s principal components are then standardized in order to obtain a fixed-length string, which is then encrypted with a cryptographic encryption algorithm (RC4 is taken as an example) to enhance security. The encrypted string is the hash value of the grid.
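The key-dependent step can be illustrated with a compact RC4 sketch, since RC4 is the cipher the text names as its example. The feature bytes below are a made-up placeholder; how the principal components are standardized into a string is not specified in the text, so that step is not shown.

```python
def rc4(key: bytes, data: bytes) -> bytes:
    """RC4 stream cipher; encryption and decryption are the same operation."""
    # Key-scheduling algorithm (KSA): permute S under control of the key.
    S = list(range(256))
    j = 0
    for i in range(256):
        j = (j + S[i] + key[i % len(key)]) % 256
        S[i], S[j] = S[j], S[i]
    # Pseudo-random generation algorithm (PRGA): XOR the keystream with the data.
    i = j = 0
    out = bytearray()
    for byte in data:
        i = (i + 1) % 256
        j = (j + S[i]) % 256
        S[i], S[j] = S[j], S[i]
        out.append(byte ^ S[(S[i] + S[j]) % 256])
    return bytes(out)


# Hypothetical standardized feature string of one grid.
feature = bytes([0b10110010, 0b01101100])
hash_key_a = rc4(b"secret-key-A", feature)
hash_key_b = rc4(b"secret-key-B", feature)
```

The same feature yields different outputs under different keys, which is the security property listed in Section 2.1. Any other keyed cipher could take the place of RC4 in this step.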

The perceptual hash values of all grids are put together as the hash value of the band cluster, denoted as PHk. Finally, the hash value of the original multispectral image, denoted PH, is composed of the hash values of all N band clusters, where N is the number of band clusters. Clearly, the hash length depends on the number of band clusters and on the grid division.

3.4. Integrity Authentication

At the receiver, the authentication process is implemented by comparing the recomputed perceptual hash value with the original one: the greater the difference between the perceptual hash values, the greater the difference between the corresponding images. Although the Hamming distance is frequently used to evaluate the difference between two sequences, it is not suitable for this purpose, because the length of the hash value may vary with the algorithm parameters. We therefore adopt the following “Normalized Hamming Distance” [25] to evaluate the difference between two hash values:

$$Dis = \frac{1}{L} \sum_{i=1}^{L} \left| h_1(i) - h_2(i) \right|$$

where h1 and h2 are perceptual hash values of length L. The normalized Hamming distance Dis is a float between 0 and 1. If the Dis of two perceptual hash values of the same area is lower than the threshold Th, the corresponding area is considered content-preserving; otherwise, the content of the corresponding area is considered to have been tampered with.
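The comparison rule can be sketched as a short function, assuming the hash values are represented as equal-length bit strings; the function names are hypothetical, and the default threshold of 0.05 is the value used in Section 4.2.

```python
def normalized_hamming(h1: str, h2: str) -> float:
    """Fraction of positions at which two equal-length bit strings differ."""
    if len(h1) != len(h2):
        raise ValueError("hash values must have the same length")
    if not h1:
        raise ValueError("hash values must be non-empty")
    return sum(a != b for a, b in zip(h1, h2)) / len(h1)


def is_content_preserving(h1: str, h2: str, threshold: float = 0.05) -> bool:
    """True when the distance is lower than the threshold."""
    return normalized_hamming(h1, h2) < threshold


dis = normalized_hamming("10110010", "10010011")   # two of eight bits differ
same = is_content_preserving("10110010", "10110010")
tampered = not is_content_preserving("10110010", "10010011")
```

Dividing by the length keeps the result in the range 0 to 1 regardless of how the grid size or the number of clusters changes the hash length.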

Furthermore, the tampering can be located in the corresponding geographic regions by comparing each hash value of each grid. The higher the resolution of the grid division, the more fine-grained the authentication granularity can be. To obtain higher resolutions, we need to divide the image into more grids, compute more unit perceptual hash values, and compare more hash values.
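Grid-level localization then reduces to repeating the comparison per grid position. The sketch below assumes that both sides hold a mapping from grid position (w, h) to a bit-string hash; this data layout and the function name are illustrative assumptions.

```python
def locate_tampering(original, received, threshold=0.05):
    """Return the sorted grid positions whose hash distance reaches the threshold.

    `original` and `received` map a grid position (w, h) to its bit-string hash.
    """
    tampered = []
    for pos, h_orig in original.items():
        h_recv = received[pos]
        # Normalized Hamming distance between the two grid hashes.
        dist = sum(a != b for a, b in zip(h_orig, h_recv)) / len(h_orig)
        if dist >= threshold:
            tampered.append(pos)
    return sorted(tampered)


original_hashes = {(1, 1): "0000000000", (1, 2): "1111111111", (2, 1): "1010101010"}
received_hashes = {(1, 1): "0000000000", (1, 2): "1111100000", (2, 1): "1010101010"}
suspect_grids = locate_tampering(original_hashes, received_hashes)
```

Each reported position corresponds to one grid-sized geographic region, so the localization granularity equals the grid size.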

4. Experiments and Discussion

In this section, we evaluate the performance of our proposed perceptual hash algorithm from two aspects. The first is robustness against content-preserving manipulations, whereby perceptually identical images under distortions should have similar hashes, which is important for content-based image identification. The other is sensitivity to tampering, whereby an image that has been tampered with should have a hash different from that of the corresponding original image.

All experiments were implemented on a computer with a 2.40 GHz Intel i7 processor and 4.00 GB memory running Windows 10 operating system. The test software was developed using Microsoft Visual Studio 2013 in C++.

4.1. Perceptual Hash Values Generation

There are several parameters in the perceptual hash algorithm; the settings used in our experiments are as follows. Following existing research [17,18], we set the damping factor λ to 0.5 and the maximum number of iterations to 100 during band clustering. The clustering process does not require the number of clusters to be set. During the grid division process, the size of the non-overlapping grids is 128 × 128 pixels.

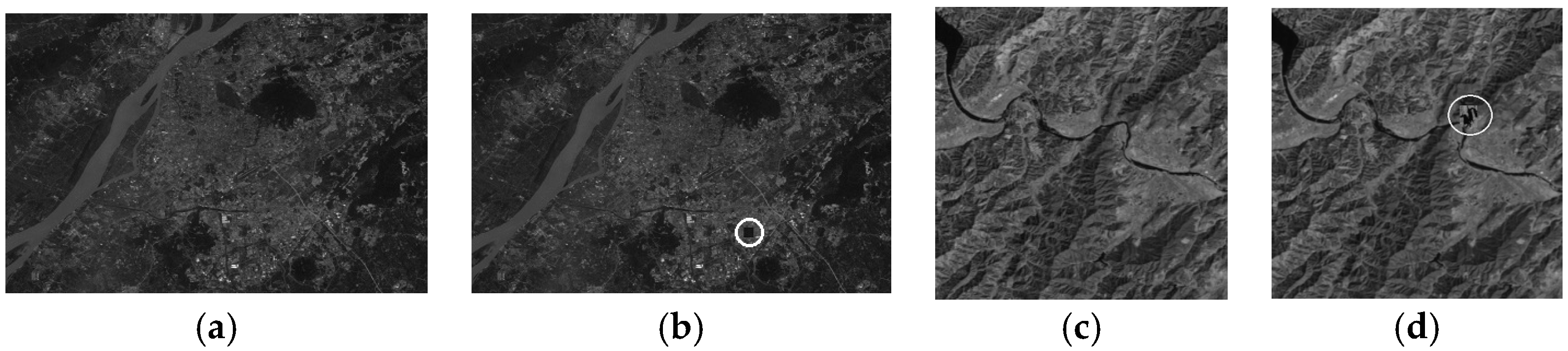

We chose Landsat TM images as an example to validate robustness and tamper sensitivity. Figure 6 and Figure 7 present several bands of typical TM images to compare the bands of the same area; the content of the same geographical location differs considerably between bands. The details of the mutual information between the bands of the above two images are given in Table 1 and Table 2.

The results of band clustering are the same for each TM image. Thus, InR1, InR2 and InR3 are placed in one band group, for which a perceptual hash value is generated. Similarly, InR5 and InR7 are processed as a group to generate a perceptual hash value.

4.2. Performance of Perceptual Robustness

Perceptual robustness is the most significant difference between a perceptual hash and a cryptographic hash. An ideal perceptual hash algorithm should be resistant to commonly used content-preserving manipulations of remote sensing images, which means that the normalized Hamming distance between the hash value of the original image and that of the image processed by a content-preserving manipulation should be under the pre-determined threshold T. In this paper, the threshold T is set as 0.05.

In order to evaluate the perceptual robustness of the algorithm, we used data compression and digital watermark embedding as examples of content-preserving manipulations, where data compression involves lossy compression (90% JPEG compression) and lossless compression, and digital watermark embedding adopts least significant bit (LSB) embedding. To describe the perceptual robustness, we report the percentage of grids whose hash values do not change by more than the threshold T; the results are shown in Table 3. It can be seen from Table 3 that the algorithm remains robust to lossless compression of multispectral images and to LSB watermark embedding, and is nearly robust to lossy compression.

The robustness of the algorithm can be adjusted through the pre-determined threshold T, i.e., the greater the threshold T, the stronger the robustness of the algorithm. However, robustness and sensitivity to tampering conflict with each other: overemphasizing robustness directly reduces sensitivity to tampering.

In contrast, cryptographic authentication methods are not suitable for this kind of authentication, since they treat the above manipulations as illegal operations and their hash values change dramatically after content-preserving manipulations. We used SHA-256 (Secure Hash Algorithm 256) as an example of cryptographic algorithms to compare with our proposed algorithm.

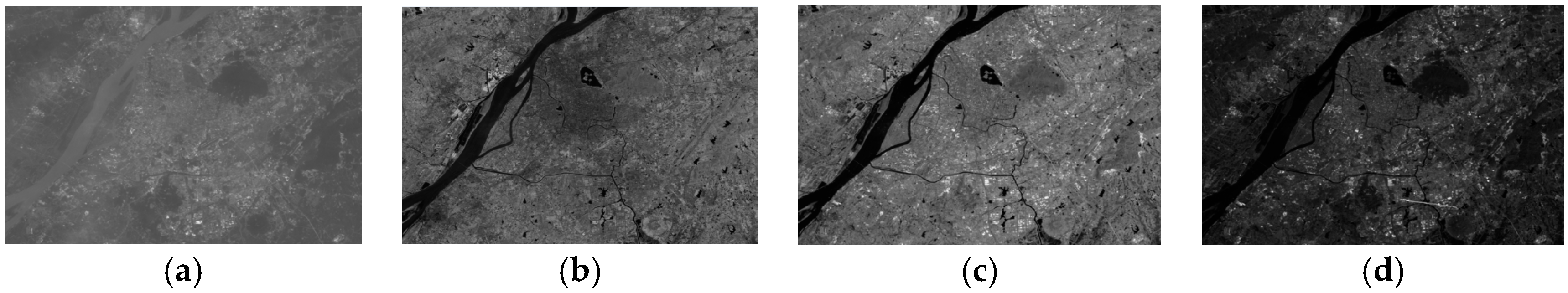

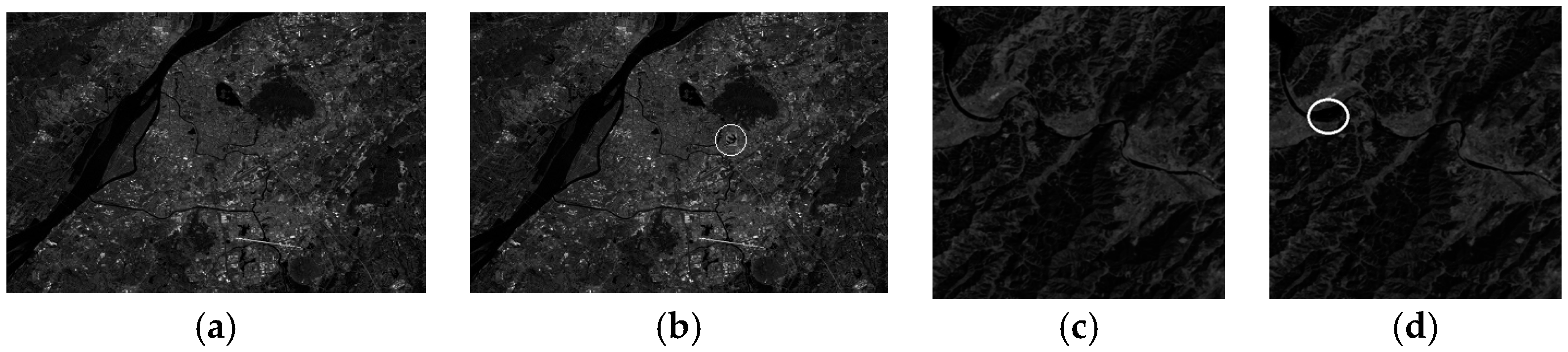

Figure 8 shows examples of content-preserving manipulations; Figure 8a shows the original grid and Figure 8b–c show the results of content-preserving manipulations. Obviously, there are almost no visible differences between them, while their SHA-256 hash values are very different, as shown in Table 4. By contrast, our algorithm is robust to the content-preserving manipulations, and the perceptual hash values remain unchanged. On the other hand, as conventional perceptual hash algorithms for images do not take the multiband characteristic of MS images into account and cannot be applied directly to MS images, we do not compare them with our proposed algorithm.
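The bit-level sensitivity of SHA-256 is easy to reproduce with the Python standard library. The byte string below is only a stand-in for raw pixel data (an assumption for illustration), and flipping a single least significant bit mimics the smallest possible LSB modification.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex digest of the SHA-256 hash of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def bit_difference_ratio(hex_a: str, hex_b: str) -> float:
    """Fraction of differing bits between two equal-length hex digests."""
    a, b = int(hex_a, 16), int(hex_b, 16)
    return bin(a ^ b).count("1") / (4 * len(hex_a))


original = bytes(range(256))                        # stand-in for pixel data
modified = bytes([original[0] ^ 1]) + original[1:]  # flip one least significant bit
digest_original = sha256_hex(original)
digest_modified = sha256_hex(modified)
ratio = bit_difference_ratio(digest_original, digest_modified)
```

A change that is invisible in the image still produces an unrelated digest, which is why a cryptographic hash cannot tell content-preserving manipulations from tampering.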

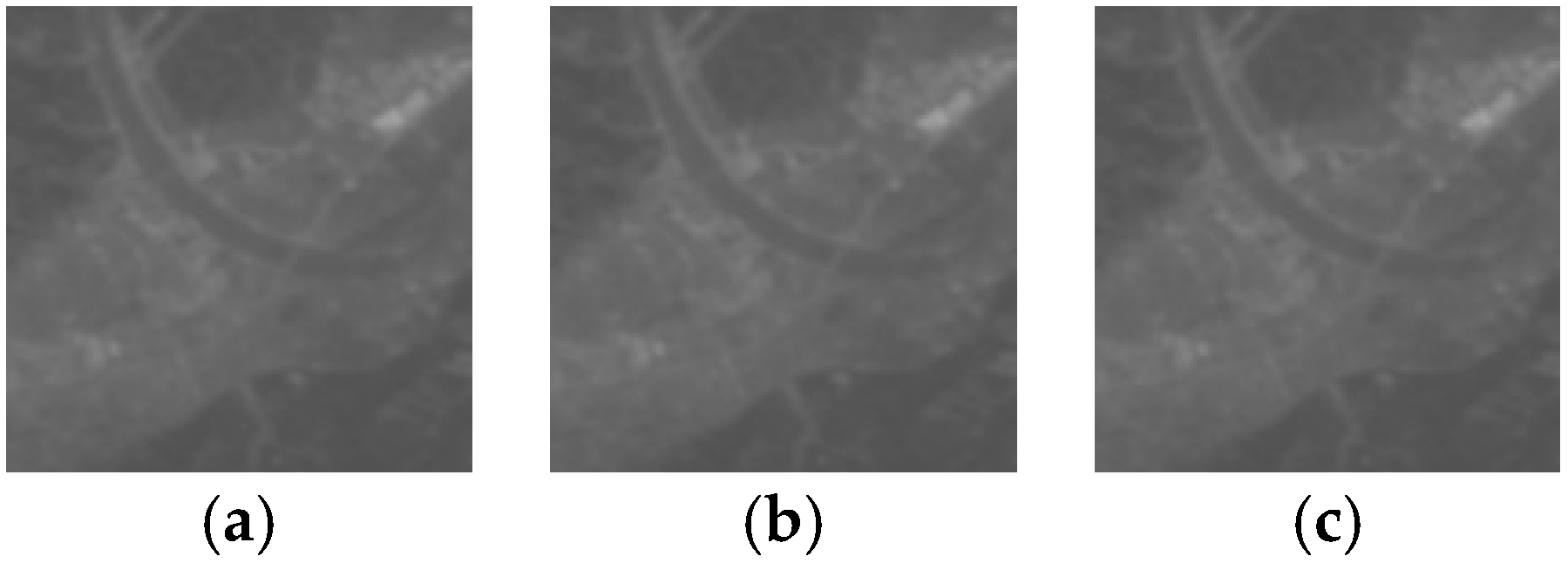

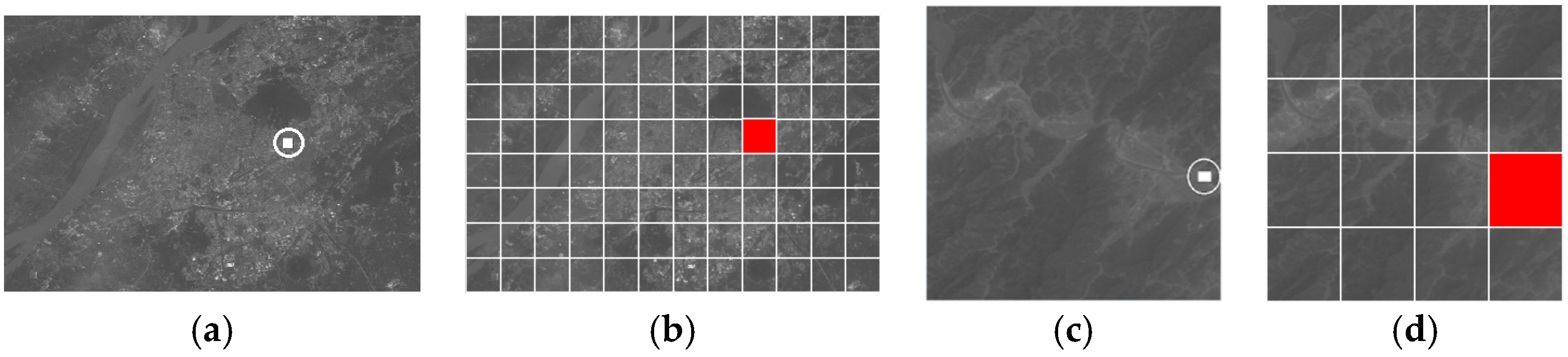

4.3. Performance of Sensitivity to Tampering

The authentication process of a multispectral image should be able to detect local tampering of the bands, which means that the tampered and original images should have significantly different hashes. To test the sensitivity to tampering, we apply several kinds of tampering operations, including removing, appending and changing an object, as shown in Figure 9, Figure 10 and Figure 11. When a band of the image is tampered with, the regenerated perceptual hash values of the affected grid and of the whole image change, and the malicious tampering can be detected. Taking the tampering of InR1 of the original image A as an example, as shown in Figure 9a,b, the perceptual hash values of the image and the grid cell change greatly, as shown in Table 5.

For the above tampering example, the comparison of the hash values of each grid can be used to locate the tampering with respect to the corresponding geographic region, and the location granularity depends on the resolution of the grid division. Figure 12 shows the tamper location of Test 1 as an example.

4.4. Security Analysis

As described in Section 3, the security of the hash values depends on the security of the cryptographic encryption algorithm. RC4, the cipher chosen as an example in this paper, has been widely studied, and the security of our algorithm rests on that of the chosen cipher.

5. Conclusions

In this paper, we have proposed a perceptual hash algorithm for multispectral remote sensing image authentication. In order to compactly represent the perceptual features of the multispectral image, we have adopted the affinity propagation algorithm to group the bands of the MS image into several clusters based on the mutual information between the bands. After each band is divided into grids, the features of the grid cells at the same location within a cluster are extracted and fused based on DWT, while PCA-based data compression of the fused feature helps reduce the influence of noise. The final perceptual hash value is obtained after the compressed feature has been encrypted by the cryptographic encryption algorithm. Experimental results have shown that the proposed algorithm is robust against normal content-preserving manipulations, such as data compression and digital watermark embedding, and has good sensitivity for detecting local, detailed tampering of the multispectral image. Thus, the algorithm efficiently authenticates the content integrity of multispectral remote sensing images, and overcomes the defects of existing perceptual hash algorithms, which do not consider multispectral remote sensing images.

The aims of future work are as follows: firstly, to improve the robustness against JPEG compression; secondly, to extend the algorithm to hyperspectral remote sensing images, which contain many more bands than multispectral images.