Electric Discharge Machining (EDM), also known as spark machining or spark eroding, enables the machining of intricate shapes in conductive materials regardless of their hardness [1,2]. Material removal results from the phenomena that accompany pulsed electrical discharges between the working electrode and the workpiece, which are separated by a dielectric in the machining gap [1,3]. The process avoids physical contact between the working electrode and the workpiece: a sufficient voltage difference between them gives rise to a plasma channel of high energy density, and the high temperature in the inter-electrode gap melts or ablates the workpiece material [4,5]. The EDM process maintains a forced dielectric flow that serves three essential functions: dissipating heat from the workpiece and the working electrode, removing solidified particles from the machining gap, and stabilising the process conditions so that subsequent discharges can occur [6,7,8]. The thermal nature of this process changes the physical properties of the surface layer, resulting in the characteristic formation of three thermally modified layers [9]. In the first layer, microcracks (which are associated with residual stresses), surface porosity, grain boundary growth, and changes in chemical composition may be observed [10,11]. EDM has been adopted in several branches of industry, such as automotive, aerospace, chemical, aviation, nuclear, petroleum, and medical, because it can be used when conventional machining techniques are not cost-effective [12,13], e.g., when materials are hard to machine (such as titanium alloys and nickel-based superalloys, among others) or when producing small, thin-walled elements with complicated geometry, known as "micro-machining" [14]. The input parameters of the EDM process (current intensity, gap voltage, pulse-on time, pulse-off time, dielectric type, and working electrode material) can substantially affect the quality of the final product [3,15].
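As a minimal sketch, the input parameters listed above can be grouped into a single record from which simple derived quantities follow. The class name `EDMSettings`, its field names, and the numerical values are hypothetical and are not taken from any cited study. The duty cycle follows the usual definition, pulse-on time divided by the pulse period, and the pulse energy is an idealised estimate that assumes constant voltage and current during the pulse.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EDMSettings:
    """Hypothetical container for the EDM input parameters listed above."""
    current_a: float          # current intensity, in amperes
    gap_voltage_v: float      # gap voltage, in volts
    pulse_on_us: float        # pulse-on time, in microseconds
    pulse_off_us: float       # pulse-off time, in microseconds
    dielectric: str           # dielectric type (free-text label)
    electrode_material: str   # working electrode material (free-text label)

    def duty_cycle(self) -> float:
        """Fraction of each pulse period during which the discharge is on."""
        return self.pulse_on_us / (self.pulse_on_us + self.pulse_off_us)

    def nominal_pulse_energy_j(self) -> float:
        """Idealised energy per pulse: voltage x current x pulse-on time.

        Assumes constant voltage and current over the whole pulse, which a
        real discharge does not satisfy, so this is only a rough estimate.
        """
        return self.gap_voltage_v * self.current_a * self.pulse_on_us * 1e-6


if __name__ == "__main__":
    # Illustrative values only; they are not taken from any cited study.
    s = EDMSettings(10.0, 40.0, 100.0, 50.0, "kerosene", "copper")
    print(f"duty cycle: {s.duty_cycle():.3f}")
    print(f"nominal pulse energy: {s.nominal_pulse_energy_j():.4f} J")
```

A record of this kind is also a natural row format for the tabular datasets on which ML models of the EDM process are trained.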

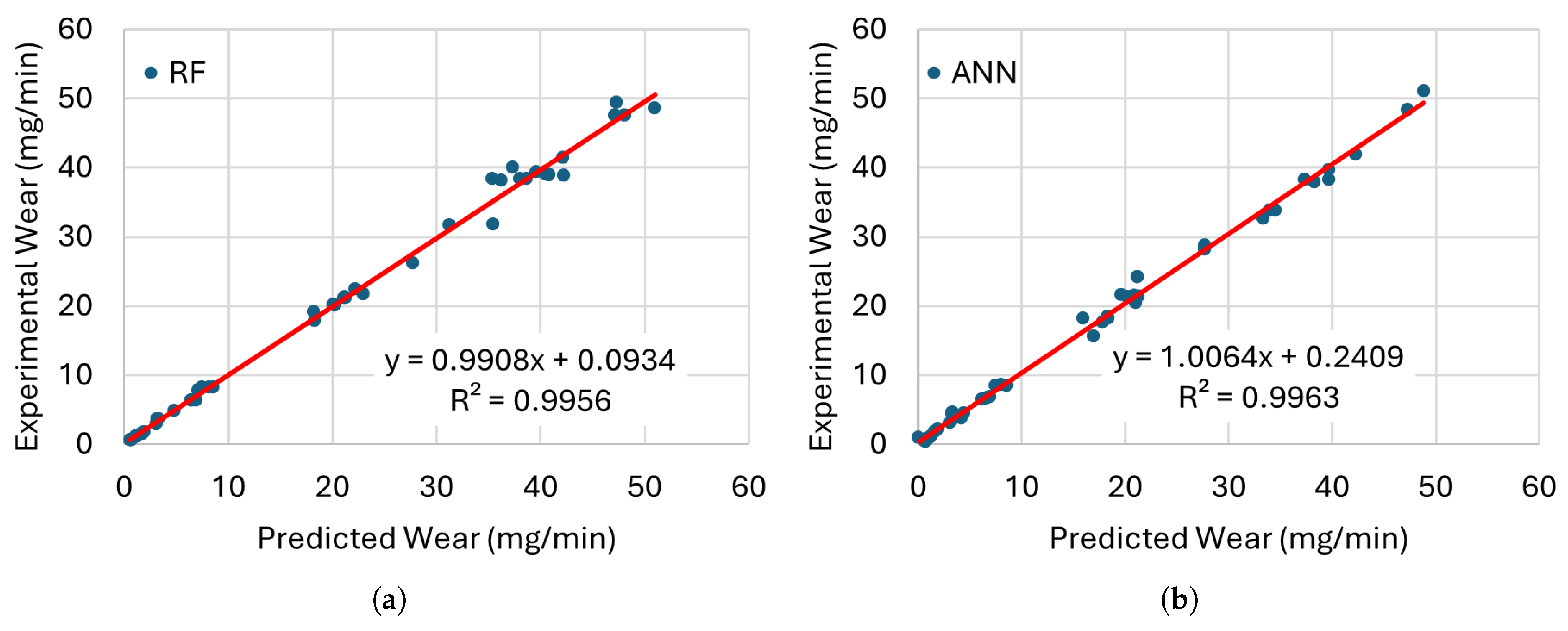

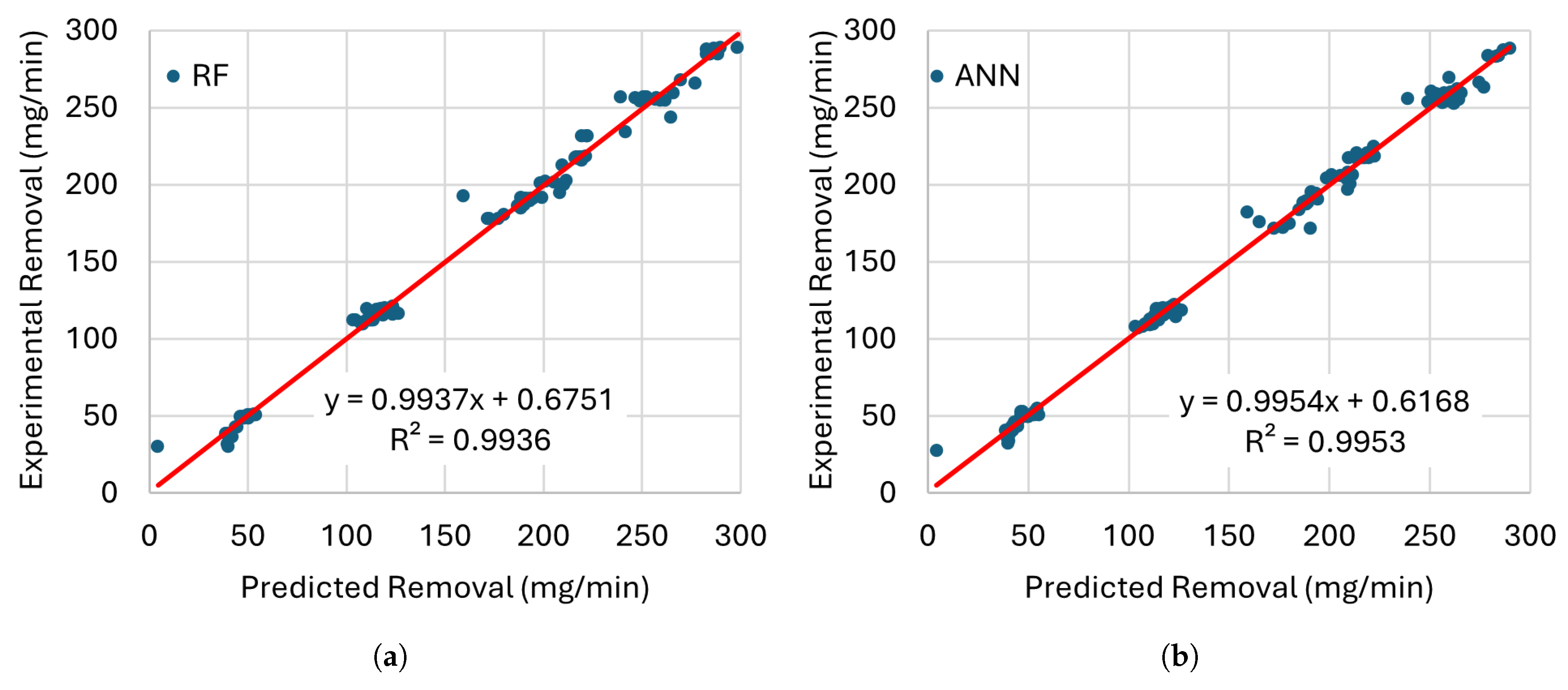

Cetin et al. [

5] evaluate several ML algorithms to predict electro-erosion wear in cryogenic-treated electrodes of mold steels. The authors consider algorithms in the domain of Ensemble Learning (EL), ANN, Boosting, Decision Trees (DTs), and K-Nearest Neighbours (KNNs). The inputs of the ML techniques are the Electrode Material (EM), Cryogenic Process Conditions (CPC), Pulse Current (PC), and Pulse Duration (PD). The results show that the EL models provide an accuracy of almost 99% during the training and testing phases, according to a simple split of the dataset and R-squared (

) metric. In addition, they identified the most relevant characteristics that affect wear patterns. Arunadevi and Prakash [

7] analyse the MRR and Surface Roughness (SR) to machining Al7075 + 10% Al

2Ol

3 materials using WEDM. ANN and LR with pulse time (

), Voltage (Vo), pulse-off (

), Bed Speed (BS), and Current Intensity (CI) are used to predict output parameters and to find input values that maximise MRR and minimise SR. The results show that ANN outperforms LR considering MRR and SR. The authors also identify the optimal solution using a Pareto front. Jatti et al. [

10] present several ML models for the prediction of MRR during EDM of Nickel-Titanium (NiTi), Nickel-Copper (NiCu), and Beryllium-Copper (BeCu) alloys. ML regression and classification models based on RF, DTs, Gradient Boosting (GB), ANN, and Adaptive Boosting (AdaBoost) predict MRR and an MRR value below 5 mm

3/min. The input parameters of the algorithms are Workpiece Electrical Conductivity (WEC), Gap current (Gc), Gap voltage (Gv),

, and

. The results show that GB is the most efficient regression algorithm for predicting MRR with a value

= 0.930, and RF outperforms other classification algorithms based on the F1-score with a value of 1. ML can accurately predict machining performance, support tool design, and process parameter optimisation. In addition, the authors identify that Gc and Vo have a dominant influence on MRR, and cryo-treated electrodes significantly affected MRR. Kaigude et al. [

12] evaluate the prediction of LR, DTs, and RF for SR with AISI D2 steel and EDM and Jatropha oils as dielectric media. The input parameters consider PC, Gv, T

on, and T

off. The results show that RF provides high accuracy prediction with an

value of 0.89 and an MSE of 1.36%. Bhandare and Dabade [

13] propose an ANN for the prediction of MRR, Tool Wear Rate (TWR), and SR considering Gas Dielectric Pressure (GDP), PC, Spark on time (S

on), and Gap Spark Voltage (GSV). The results show the effectiveness of the ANN with a prediction accuracy of 89.09%, 87.30%, and 84.83% for MRR, TWR, and RS, considering the Mean Squared Error (MSE) metric, respectively. Ilani and Banad [

14] propose the EDMNet framework with 12 ML approaches based on Deep ANN (DNN), SVR, Voting (VT), Bootstrap aggregating (Bagging), Extreme Bagging (XBagging), AdaBoost, LR, Ridge regression, Lasso regression, and Elastic Net regression to predict EDM performance. The prediction of MRR, EWR, and SR (R

a) considers CI, Scanning Speed (SS),

, Powder Concentration (PwC), Injection Pressure (IP), Vibration Frequency (VF), and Amplitude of Vibration (AV). The results show that EDMNet can be used as a reproducible standardised benchmarking framework that facilitates ML model comparison in the EDM context. The authors demonstrated that Multiple LR (MLR) exhibits notably inferior performance compared to non-linear learners such as ANN and Decision Tree Regression (DTR), highlighting the complexity of the parameter–response mapping. Cortés-Mendoza et al. [

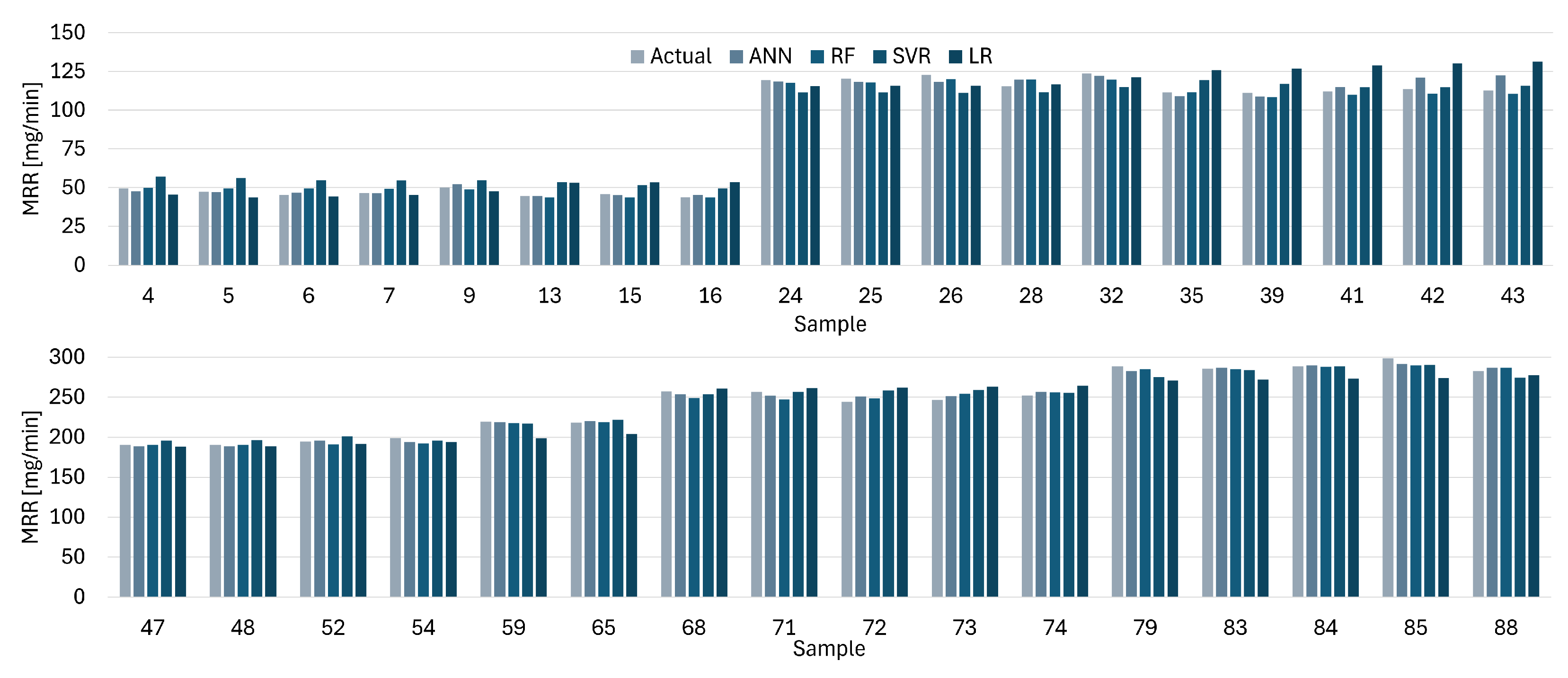

18] study four ML approaches to describe the correlation of the input and output of the dry EDM process with distilled water as a coolant for Inconel 625 and Titanium Grade 2. The prediction models based on LR, RF, SVR, and ANN receive independent variables,

, CI, Vo, Gas Pressure (GP), and Workpiece Material (WM) to estimate MRR, EWR, working electrode velocity (U), and SR parameters (

and

). The average efficiency of the models according to the best

values for MRR, EWR, and U was 0.6735, 0.7955, and 0.7739, using a 5-fold Cross-Validation technique (5CV). Ziyad et al. [

19] develop algorithms for DT, RF, GB, and Extreme GB (XGB) to predict SR with AISI 1060 steel. The prediction strategies consider cutting speed (Vc), Feed rate (F), workpiece Hardness (H), and Machining Environment (ME). The combination of DT, GB, and XGB generates a Super Learner Model (SLM) that improves the predictive efficiency of the models. Additionally, the interpretations of the model’s predictions are clarified using the Shapley additive explanations (SHAP) technique. Ali et al. [

20] address the issue of accurately predicting the MRR, TWR, and SR output parameters in micro-EDM drilling using advanced ML methods. This study aims to develop predictive models for EDM setups using ensemble ML techniques for the TNTZ (Ti-29Nb-13Ta-4.6Zr) machined alloy. An MLR, a DT, and an ANN estimate the output parameters Volume Removal Rate (VRR), Overcut (Ov), Circularity Error (CE), and SR parameters. The evaluation employs two key performance metrics: the Normalised RMSE (NRMSE) and the

factor. The results show that MLR performed poorly due to the non-linear nature of the data (with the lowest

and highest NRMSE), DTR showed moderate accuracy (with

> 75%, and prediction error < 10% in most cases), and ANN delivered the best results (with

> 99% and prediction errors < 5%) in all outputs for training and testing data. Sarker et al. [

21] define a methodology to develop optimised weighted average ensemble models to predict the output parameters of Vc, SR and Spark Gap (SG) in Wire EDM (WEDM) processes. RF, SVR, and Ridge are used as base models, which represent a different modelling paradigm. The two ensemble models aimed to enhance prediction accuracy by minimising the RMSE and Mean Absolute Error (MAE) using weighted averaging of the base models and derived weights. The evaluation considers multiple performance metrics: RMSE, MAE, MSE, R, MAPE, RMSPE, RMSLE, RAE, RRSE, and the Mean Relative Signal-to-Noise (MRSN) ratio to evaluate the prediction of

,

, Spark gap Voltage (SgV), Peak Current (PkC), Wire Tension (WT), and Wire Feed (WF). The results indicated that the RMSE optimised ensemble model achieved the highest prediction accuracy in all response variables, and the MAE optimised ensemble model outperformed individual base models, with a few exceptions. Mandal et al. [

22] study the Monel K500 EDM process using a new metaheuristic technique called the Multiobjective Dragonfly (MODA). MODA receives as input PkC,

, Duty Cycle (DC), and Servo Voltage (SV) to predict the parameters of MRR and EWR. The results show that the model predictions reached

values of 99.40% and 96.60% for MRR and EWR, respectively. Moreover, the methodology considers a Box–Behnken design to prepare the experimental design matrix, and it allows authors to identify a set of non-dominated solutions and the optimal process input parameters. Kumar and Jayswal [

23] present an NN to optimise the prediction of MRR with WEDM a synthetically generated dataset.

,

, CI, Vo, WF Rare (WFR) are the inputs of the NN model to estimate MRR. The results show a

value of 0.9999, a MAE value of 0.0166, and an MSE value of 0.0004 for the best NN model based on the Sigmoid activation function. The authors highlight the near-perfect correlation between the predicted and actual NN values for manufacturers, who can optimise process parameters to achieve desired machining outcomes, thereby enhancing efficiency and reducing costs.
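The studies above are compared mostly through $R^2$, MAE, MSE, RMSE, and NRMSE. The following is a minimal sketch of how these metrics can be computed from measured and predicted values using their standard definitions. The function name `regression_metrics` and the sample values are hypothetical. The NRMSE is normalised here by the range of the measured values, which is an assumption, since the cited works may normalise by the mean or another quantity.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, MSE, RMSE, NRMSE (range-normalised) and R^2 as a dict."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")

    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]

    mae = sum(abs(r) for r in residuals) / n
    ss_res = sum(r * r for r in residuals)      # residual sum of squares
    mse = ss_res / n
    rmse = math.sqrt(mse)

    # Assumption: normalise RMSE by the range of the measured values.
    value_range = max(y_true) - min(y_true)
    nrmse = rmse / value_range if value_range > 0 else float("nan")

    # R^2 = 1 - SS_res / SS_tot, undefined when the measurements are constant.
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")

    return {"mae": mae, "mse": mse, "rmse": rmse, "nrmse": nrmse, "r2": r2}


if __name__ == "__main__":
    # Illustrative numbers only; they are not data from any cited study.
    measured = [1.0, 2.0, 3.0, 4.0]
    predicted = [1.1, 1.9, 3.2, 3.8]
    for name, value in regression_metrics(measured, predicted).items():
        print(f"{name}: {value:.4f}")
```

Because $R^2$ is scale-free while MAE, MSE, and RMSE carry the units of the response (or their square), values reported by different studies are only directly comparable for the scale-free metrics.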