Predicting the Remaining Useful Life of Lithium-Ion Batteries Using 10 Random Data Points and a Flexible Parallel Neural Network

Abstract

1. Introduction

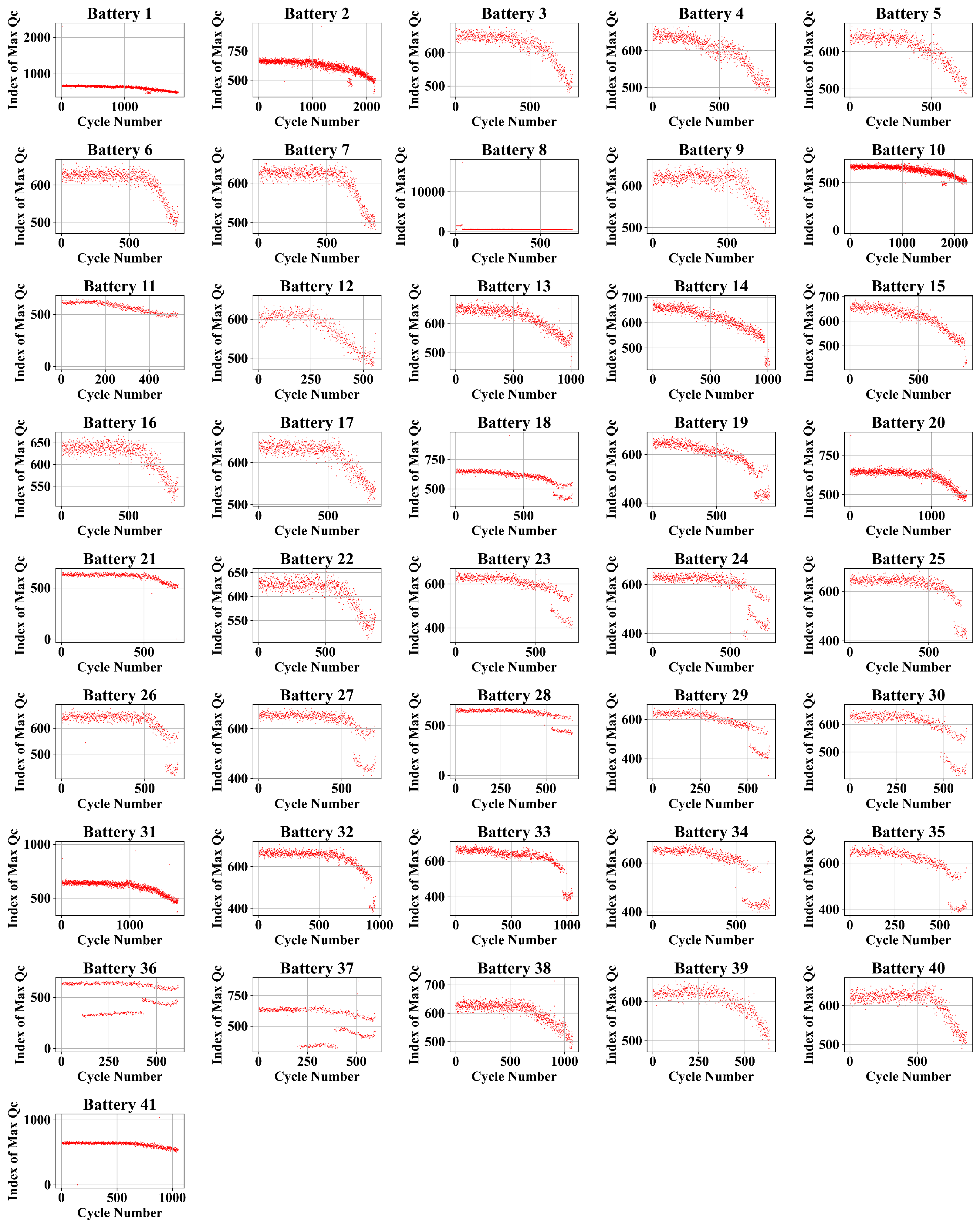

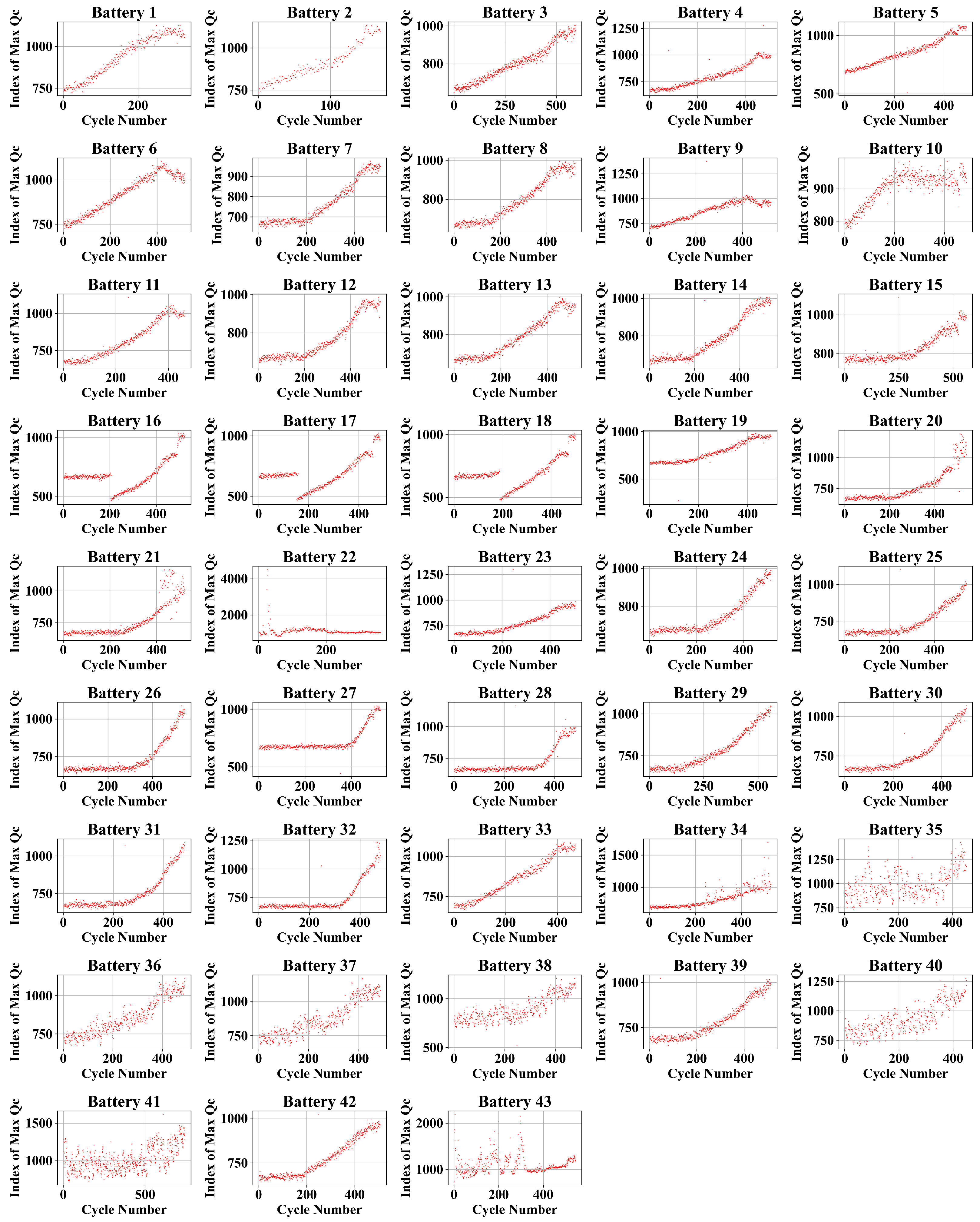

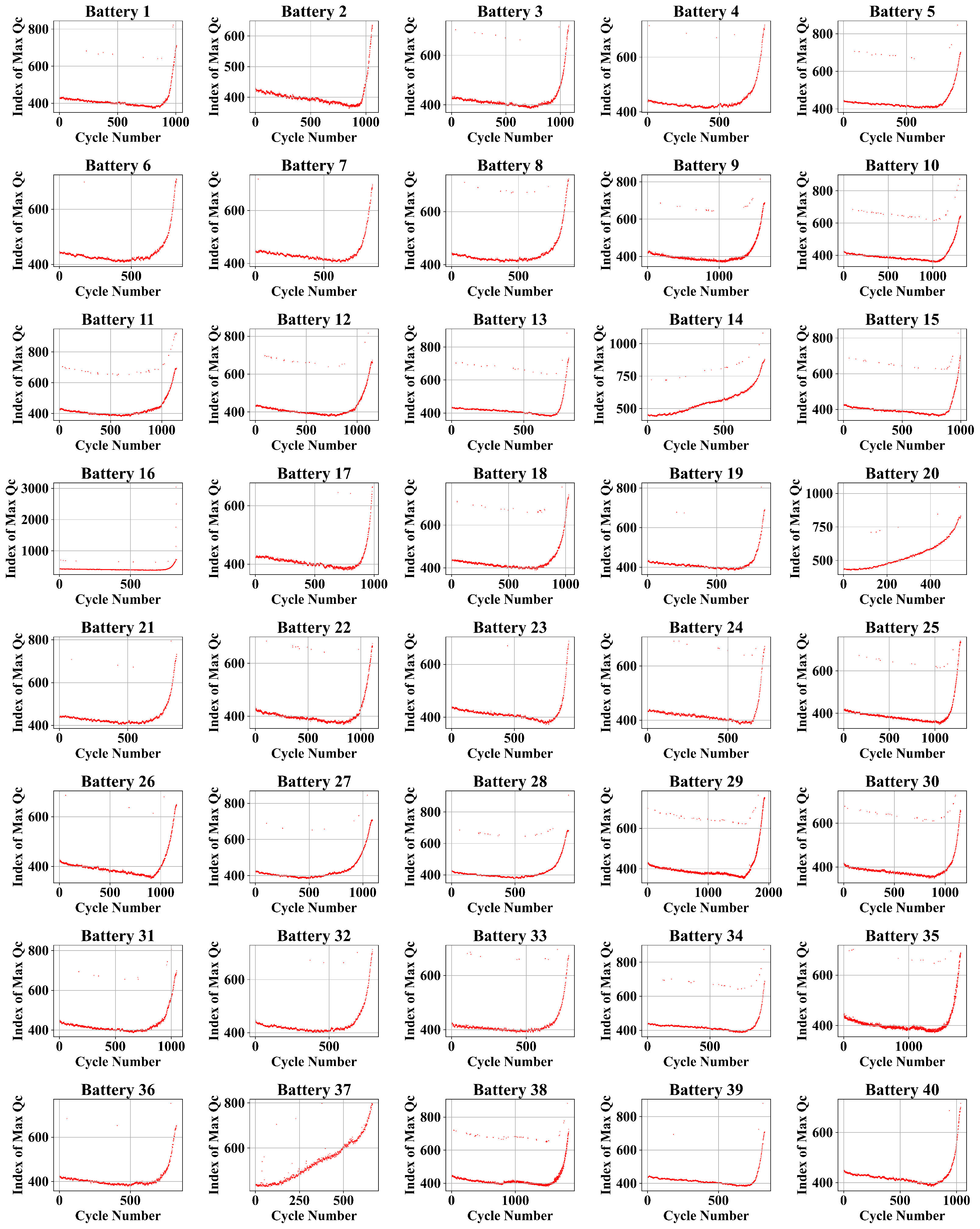

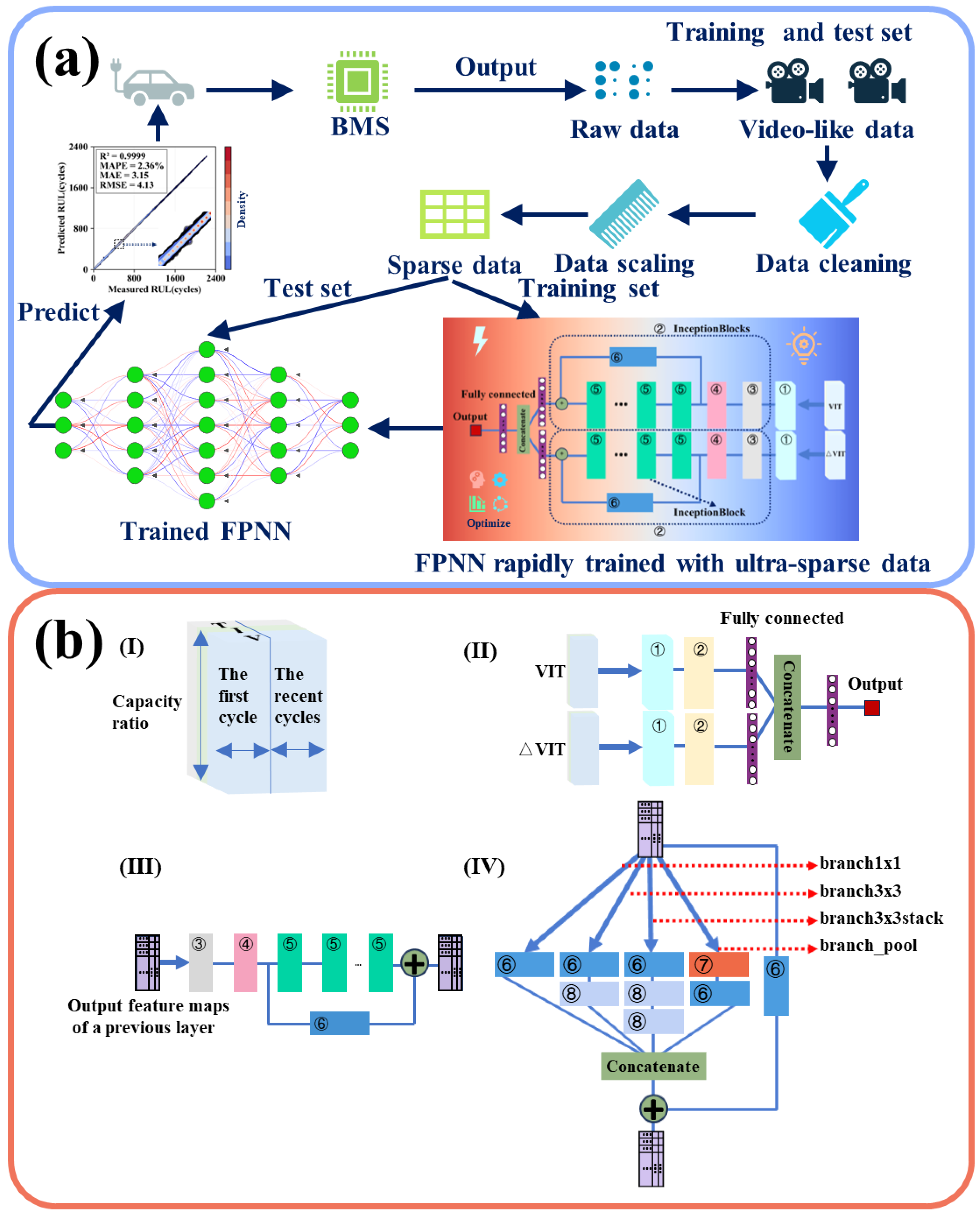

2. Datasets

3. Methodology

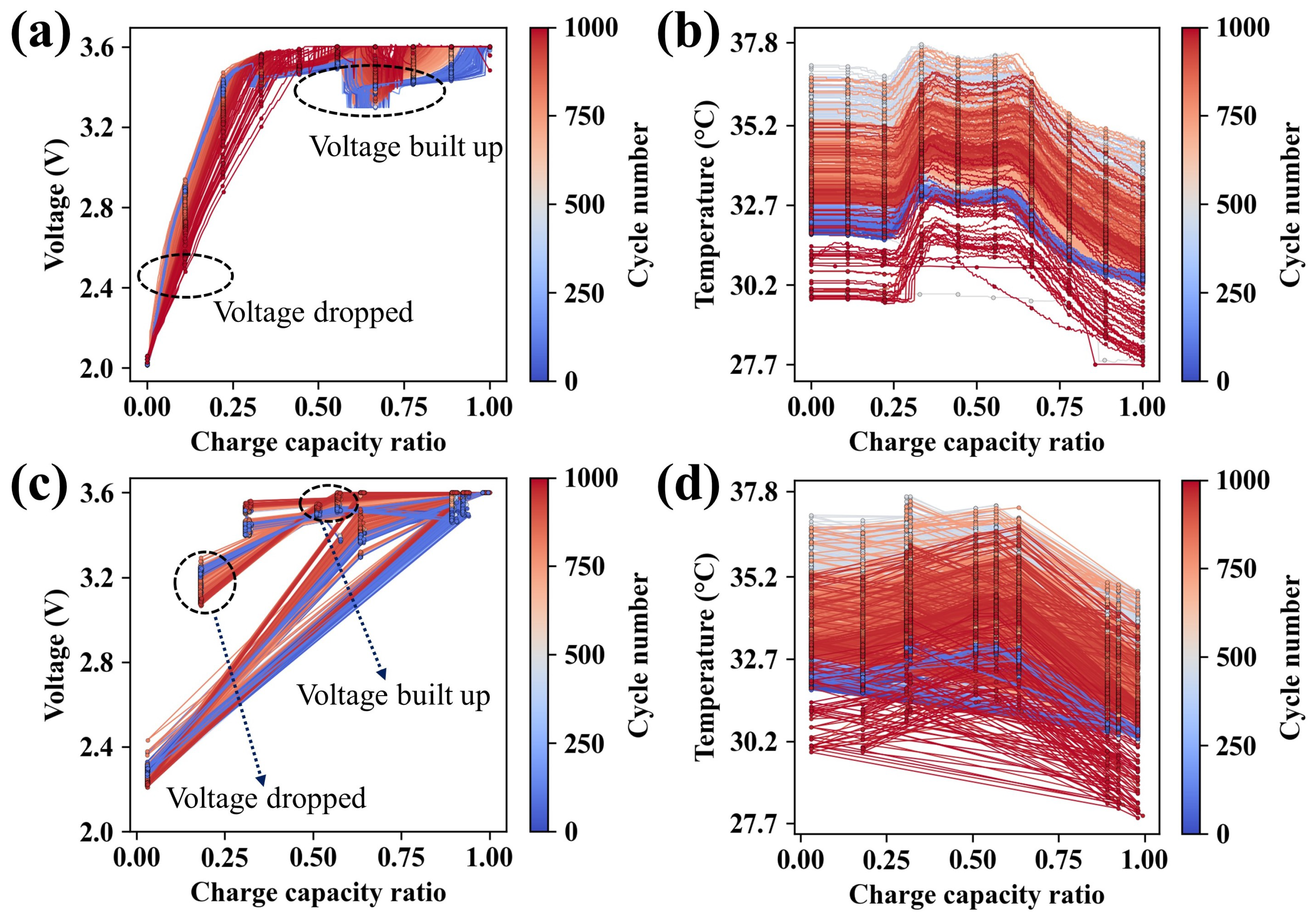

3.1. Data Preprocessing

3.2. Data Sparsification

3.3. Hyperparameter Optimization

4. Results and Discussion

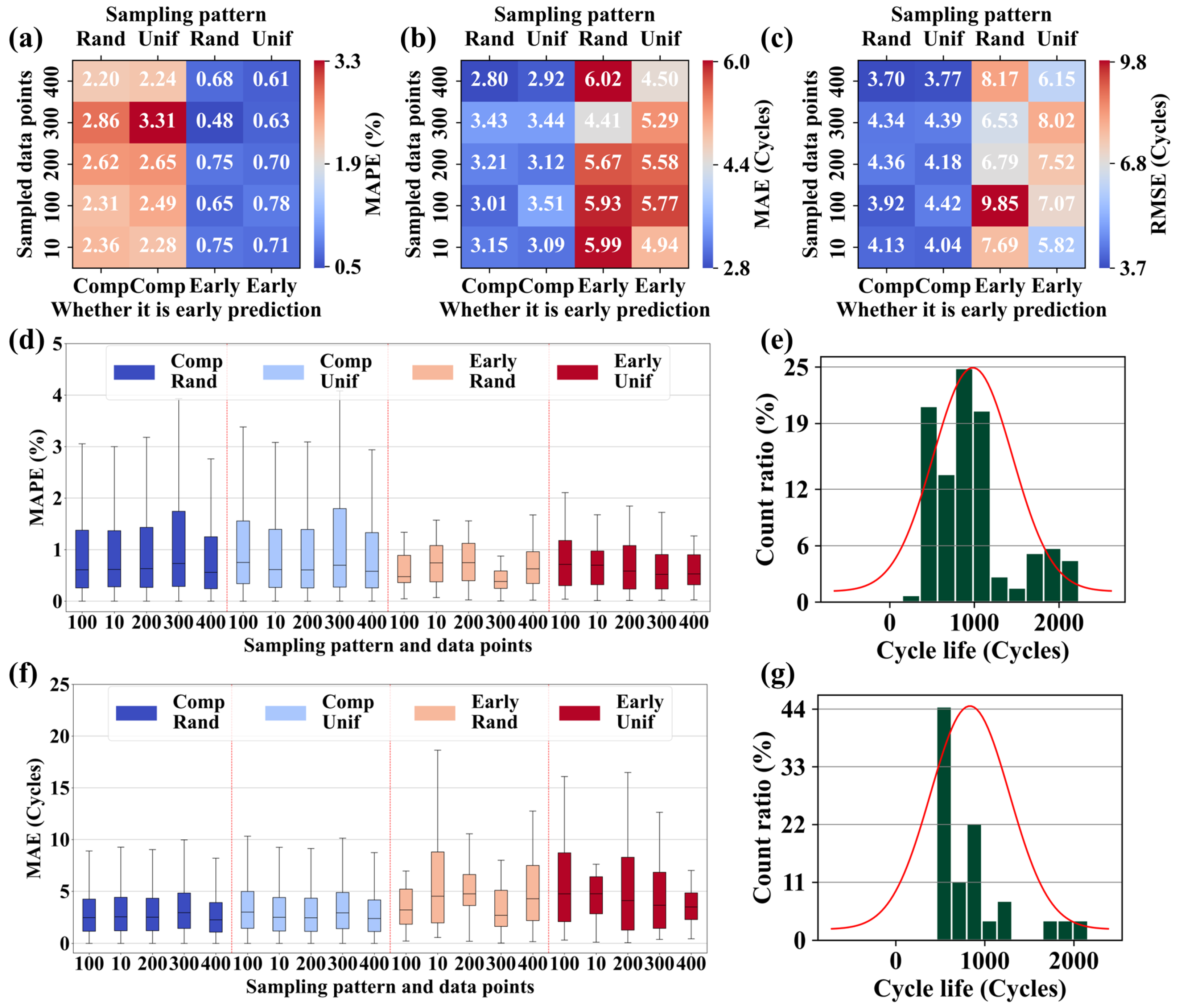

4.1. Predictive Performance under Different Conditions

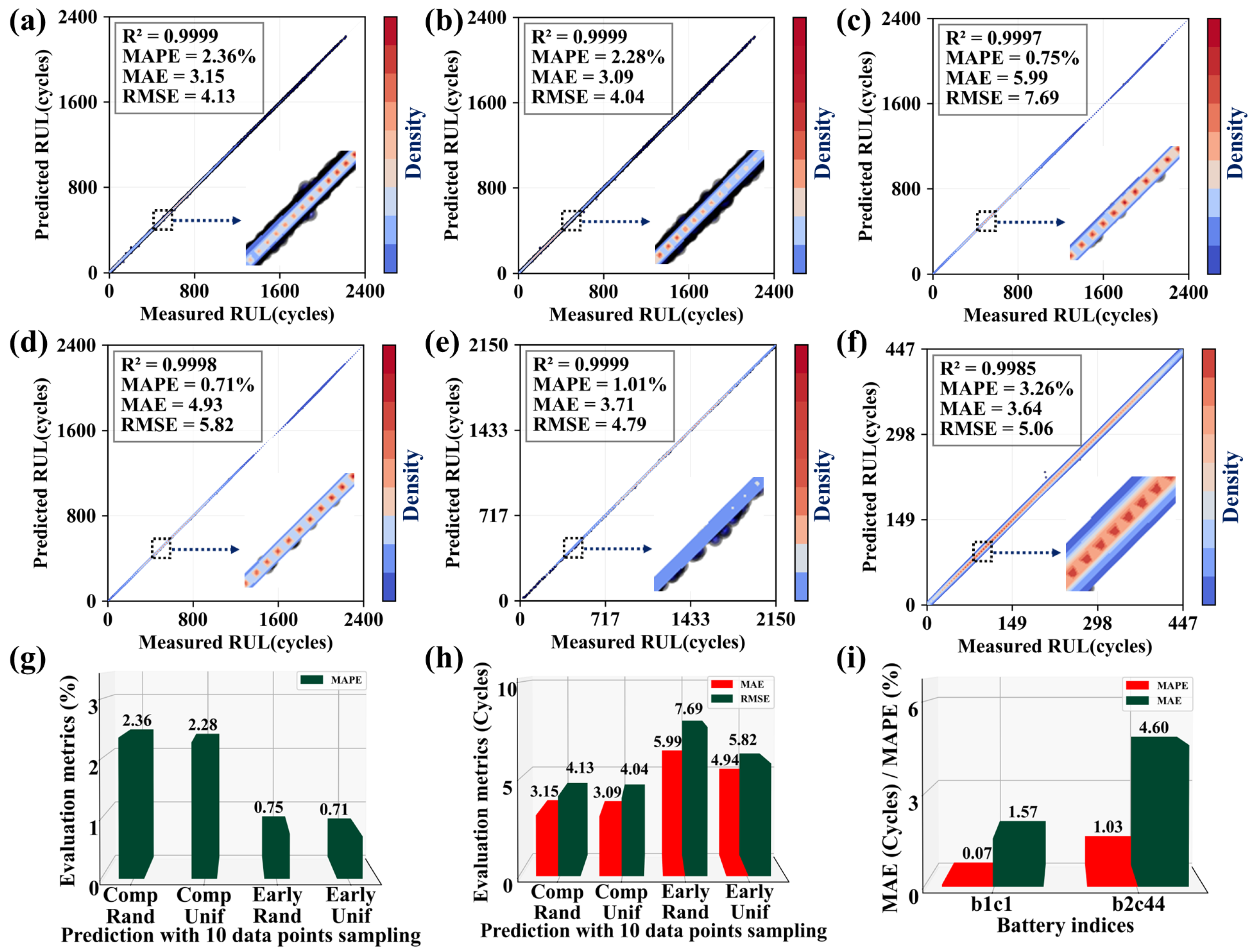

4.2. Predictive Performance under 10 Data Points

4.3. Ablation Experiments

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Nomenclature

| LIBs | lithium-ion batteries |

| ML | machine learning |

| SVM | support vector machine |

| KNN | k-nearest neighbors |

| RUL | remaining useful life |

| EIS | electrochemical impedance spectroscopy |

| GPR | gaussian process regression |

| SOTA | state of the art |

| MAPE | mean absolute percentage error |

| RNN | recurrent neural network |

| CNN | convolutional neural network |

| FPNN | flexible parallel neural network |

| BMS | battery management system |

| NOI | number of inceptionblock |

| MAE | mean absolute error |

| RMSE | root-mean-squared-error |

| CC | constant current |

| CV | constant voltage |

Appendix A

| Sampling Mode | Points | Detach | MAPE (%) | MAE (Cycles) | RMSE (Cycles) |

|---|---|---|---|---|---|

| Random sampling | 10 | None | 2.36 | 3.15 | 4.13 |

| 10 | Initial layers | 3.23 | 3.87 | 5.06 | |

| 10 | Residual | 2.20 | 3.12 | 4.04 | |

| 10 | 3D conv | 3.88 | 5.61 | 7.75 | |

| 10 | 1 block | 2.54 | 3.72 | 4.83 | |

| 10 | 2 blocks | 4.00 | 4.38 | 5.62 | |

| Random sampling | 10 | 3 blocks | 2.68 | 3.72 | 5.02 |

| 10 | A branch | 99.86 | 484.65 | 619.75 | |

| 100 | None | 2.31 | 3.01 | 3.92 | |

| 100 | Initial layers | 6.07 | 7.21 | 8.87 | |

| 100 | Residual | 2.39 | 3.16 | 4.08 | |

| 100 | 3D conv | 4.46 | 5.61 | 7.32 | |

| 100 | 1 block | 3.37 | 4.73 | 6.01 | |

| 100 | 2 blocks | 4.37 | 5.37 | 6.51 | |

| 100 | 3 blocks | 11.17 | 13.62 | 14.35 | |

| 100 | A branch | 99.84 | 484.56 | 619.63 | |

| 200 | None | 2.62 | 3.21 | 4.36 | |

| 200 | Initial layers | NaN | NaN | NaN | |

| 200 | Residual | 1.87 | 2.69 | 3.45 | |

| 200 | 3D conv | 4.36 | 5.92 | 7.44 | |

| 200 | 1 block | 2.7 | 3.99 | 4.85 | |

| 200 | 2 blocks | 2.56 | 3.46 | 4.45 | |

| 200 | 3 blocks | 7.07 | 7.43 | 8.75 | |

| 200 | A branch | 99.85 | 484.58 | 619.65 | |

| 300 | None | 2.86 | 3.43 | 4.34 | |

| 300 | Initial layers | NaN | NaN | NaN | |

| 300 | Residual | 2.24 | 2.87 | 3.84 | |

| 300 | 3D conv | 5.07 | 7.58 | 9.07 | |

| 300 | 1 block | 2.76 | 3.32 | 4.32 | |

| 300 | 2 blocks | 2.59 | 3.28 | 4.29 | |

| 300 | 3 blocks | 3.99 | 5.43 | 6.8 | |

| 300 | A branch | 99.84 | 484.57 | 619.63 | |

| 400 | None | 2.2 | 2.8 | 3.7 | |

| 400 | Initial layers | NaN | NaN | NaN | |

| 400 | Residual | 2.07 | 3.11 | 3.96 | |

| 400 | 3D conv | 3.75 | 5.48 | 7 | |

| 400 | 1 block | 2.88 | 3.5 | 4.61 | |

| 400 | 2 blocks | 5.42 | 8.24 | 10.29 | |

| 400 | 3 blocks | 6.47 | 7.12 | 8.66 | |

| 400 | A branch | 99.85 | 484.63 | 619.72 | |

| Uniform sampling | 10 | None | 2.28 | 3.09 | 4.04 |

| 10 | Initial layers | 2.80 | 3.40 | 4.50 | |

| 10 | Residual | 2.52 | 3.22 | 4.50 | |

| 10 | 3D conv | 4.40 | 6.06 | 8.24 | |

| 10 | 1 block | 2.48 | 2.97 | 3.98 | |

| 10 | 2 blocks | 2.63 | 3.31 | 4.39 | |

| 10 | 3 blocks | 3.44 | 3.91 | 5.09 | |

| 10 | A branch | 99.86 | 484.67 | 619.77 | |

| 100 | None | 2.49 | 3.51 | 4.42 | |

| 100 | Initial layers | 4.53 | 4.93 | 6.17 | |

| 100 | Residual | 2.13 | 2.96 | 3.8 | |

| 100 | 3D conv | 3.95 | 4.96 | 6.56 | |

| 100 | 1 block | 2.6 | 4.05 | 5.2 | |

| 100 | 2 blocks | 2.48 | 3.23 | 4.21 | |

| 100 | 3 blocks | 4.71 | 7 | 8.66 | |

| 100 | A branch | 99.83 | 484.53 | 619.6 | |

| 200 | None | 2.65 | 3.12 | 4.18 | |

| 200 | Initial layers | NaN | NaN | NaN | |

| 200 | Residual | 1.92 | 2.48 | 3.27 | |

| 200 | 3D conv | 3.77 | 5.2 | 6.77 | |

| 200 | 1 block | 2.42 | 2.97 | 3.95 | |

| 200 | 2 blocks | 2.53 | 3.15 | 4.16 | |

| 200 | 3 blocks | 5.84 | 6.39 | 7.82 | |

| 200 | A branch | 99.84 | 484.58 | 619.64 | |

| 300 | None | 3.31 | 3.44 | 4.39 | |

| 300 | Initial layers | NaN | NaN | NaN | |

| 300 | Residual | 2.07 | 2.83 | 3.77 | |

| 300 | 3D conv | 3.54 | 5.05 | 6.64 | |

| 300 | 1 block | 3.65 | 4.04 | 5.12 | |

| 300 | 2 blocks | 2.98 | 3.51 | 4.55 | |

| 300 | 3 blocks | 3.42 | 5.08 | 6.53 | |

| 300 | A branch | 99.84 | 484.56 | 619.61 | |

| 400 | None | 2.24 | 2.92 | 3.77 | |

| 400 | Initial layers | NaN | NaN | NaN | |

| Uniform sampling | 400 | Residual | 2.29 | 2.72 | 3.56 |

| 400 | 3D conv | 3.35 | 4.51 | 6.01 | |

| 400 | 1 block | 2.72 | 3.38 | 4.48 | |

| 400 | 2 blocks | 3.78 | 5.62 | 7.15 | |

| 400 | 3 blocks | 6.75 | 8.75 | 10.17 | |

| 400 | A branch | 99.85 | 484.66 | 619.74 |

| Sampling Mode | Points | Detach | MAPE (%) | MAE (Cycles) | RMSE (Cycles) |

|---|---|---|---|---|---|

| Random sampling | 10 | None | 0.75 | 5.99 | 7.69 |

| 10 | Initial layers | 0.70 | 5.57 | 7.62 | |

| 10 | Residual | 0.76 | 5.41 | 6.24 | |

| 10 | 3D conv | 1.17 | 9.86 | 13.38 | |

| 10 | 1 block | 0.52 | 3.50 | 4.33 | |

| 10 | 2 blocks | 0.91 | 6.21 | 6.96 | |

| 10 | 3 blocks | 0.90 | 7.80 | 10.89 | |

| 10 | A branch | 99.60 | 820.09 | 931.36 | |

| 100 | None | 0.65 | 5.93 | 9.85 | |

| 100 | Initial layers | 1.22 | 9.97 | 12.93 | |

| 100 | Residual | 0.74 | 5.61 | 7.76 | |

| 100 | 3D conv | 0.86 | 6.84 | 10.31 | |

| 100 | 1 block | 0.83 | 7.04 | 9.96 | |

| 100 | 2 blocks | 1.08 | 8.49 | 10.45 | |

| 100 | 3 blocks | 1.7 | 11.44 | 12.41 | |

| 100 | A branch | 99.58 | 819.93 | 932.21 | |

| 200 | None | 0.75 | 5.67 | 6.79 | |

| 200 | Initial layers | NaN | NaN | NaN | |

| 200 | Residual | 0.57 | 4.49 | 6.58 | |

| 200 | 3D conv | 1.2 | 9.8 | 14.38 | |

| 200 | 1 block | 0.62 | 4.5 | 5.49 | |

| 200 | 2 blocks | 0.97 | 7.33 | 9.27 | |

| 200 | 3 blocks | 1.55 | 11.37 | 13.39 | |

| 200 | A branch | 99.58 | 819.95 | 931.22 | |

| 300 | None | 0.48 | 4.41 | 6.53 | |

| 300 | Initial layers | NaN | NaN | NaN | |

| 300 | Residual | 0.64 | 4.29 | 5.67 | |

| 300 | 3D conv | 1.6 | 12.54 | 15.29 | |

| 300 | 1 block | 0.78 | 6.43 | 8.97 | |

| 300 | 2 blocks | 0.7 | 5.99 | 8.02 | |

| 300 | 3 blocks | 0.86 | 7.5 | 11.64 | |

| 300 | A branch | 99.58 | 819.93 | 931.19 | |

| 400 | None | 0.68 | 6.02 | 8.17 | |

| 400 | Initial layers | NaN | NaN | NaN | |

| 400 | Residual | 0.56 | 4.48 | 6.48 | |

| 400 | 3D conv | 1.23 | 9.4 | 12.16 | |

| 400 | 1 block | 0.74 | 6.51 | 9.82 | |

| 400 | 2 blocks | 1.37 | 12.49 | 17.37 | |

| 400 | 3 blocks | 1.08 | 8.45 | 11.23 | |

| 400 | A branch | 99.6 | 820.04 | 931.34 | |

| Uniform sampling | 10 | None | 0.71 | 4.94 | 5.82 |

| 10 | Initial layers | 0.62 | 4.69 | 6.22 | |

| 10 | Residual | 0.51 | 3.63 | 4.25 | |

| 10 | 3D conv | 1.30 | 10.96 | 16.38 | |

| 10 | 1 block | 0.69 | 4.91 | 5.82 | |

| 10 | 2 blocks | 0.52 | 3.50 | 4.54 | |

| 10 | 3 blocks | 0.76 | 6.77 | 10.16 | |

| 10 | A branch | 99.60 | 820.10 | 931.40 | |

| 100 | None | 0.78 | 5.77 | 7.07 | |

| 100 | Initial layers | 0.75 | 6.79 | 9.49 | |

| 100 | Residual | 0.66 | 5.3 | 7.64 | |

| Uniform sampling | 100 | 3D conv | 1.34 | 10.46 | 15.11 |

| 100 | 1 block | 1 | 8.49 | 11.73 | |

| 100 | 2 blocks | 0.76 | 6.92 | 10.09 | |

| 100 | 3 blocks | 0.77 | 5.77 | 7.76 | |

| 100 | A branch | 99.58 | 819.9 | 931.19 | |

| 200 | None | 0.7 | 5.58 | 7.52 | |

| 200 | Initial layers | NaN | NaN | NaN | |

| 200 | Residual | 0.71 | 5.28 | 7.36 | |

| 200 | 3D conv | 0.94 | 7.73 | 11.04 | |

| 200 | 1 block | 0.89 | 6.74 | 8.93 | |

| 200 | 2 blocks | 0.87 | 7.77 | 10.85 | |

| 200 | 3 blocks | 1.01 | 8.93 | 12.87 | |

| 200 | A branch | 99.58 | 819.94 | 931.22 | |

| 300 | None | 0.63 | 5.29 | 8.02 | |

| 300 | Initial layers | NaN | NaN | NaN | |

| 300 | Residual | 0.66 | 4.8 | 6.08 | |

| 300 | 3D conv | 1.42 | 11.78 | 16.94 | |

| 300 | 1 block | 0.66 | 5.54 | 8.09 | |

| 300 | 2 blocks | 0.6 | 5.3 | 8.41 | |

| 300 | 3 blocks | 1.2 | 10.16 | 12.86 | |

| 300 | A branch | 99.58 | 819.93 | 931.19 | |

| 400 | None | 0.61 | 4.5 | 6.15 | |

| 400 | Initial layers | NaN | NaN | NaN | |

| 400 | Residual | 0.63 | 4.76 | 6.36 | |

| 400 | 3D conv | 1.08 | 9.03 | 14.65 | |

| 400 | 1 block | 0.74 | 6.28 | 9.73 | |

| 400 | 2 blocks | 0.97 | 9.77 | 15.15 | |

| 400 | 3 blocks | 1.21 | 8.06 | 9.55 | |

| 400 | A branch | 99.72 | 820.65 | 931.67 |

References

- Shchegolkov, A.V.; Komarov, F.F.; Lipkin, M.S.; Milchanin, O.V.; Parfimovich, I.D.; Shchegolkov, A.V.; Semenkova, A.V.; Velichko, A.V.; Chebotov, K.D.; Nokhaeva, V.A. Synthesis and study of cathode materials based on carbon nanotubes for lithium-ion batteries. Inorg. Mater. Appl. Res. 2021, 12, 1281–1287. [Google Scholar] [CrossRef]

- Guan, Y.; Vasquez, J.C.; Guerrero, J.M.; Wang, Y.; Feng, W. Frequency stability of hierarchically controlled hybrid photovoltaic-battery-hydropower microgrids. IEEE Trans. Ind. Appl. 2015, 51, 4729–4742. [Google Scholar] [CrossRef]

- He, Y.; Liu, X.; Zhang, C.; Chen, Z. A new model for State-of-Charge (SOC) estimation for high-power Li-ion batteries. Appl. Energy 2013, 101, 808–814. [Google Scholar] [CrossRef]

- Liao, L.; Köttig, F. Review of hybrid prognostics approaches for remaining useful life prediction of engineered systems, and an application to battery life prediction. IEEE Trans. Reliab. 2014, 63, 191–207. [Google Scholar] [CrossRef]

- Liu, X.; Wu, J.; Zhang, C.; Chen, Z. A method for state of energy estimation of lithium-ion batteries at dynamic currents and temperatures. J. Power Sources 2014, 270, 151–157. [Google Scholar] [CrossRef]

- Zubi, G.; Dufo-López, R.; Carvalho, M.; Pasaoglu, G. The lithium-ion battery: State of the art and future perspectives. Renew. Sustain. Energy Rev. 2018, 89, 292–308. [Google Scholar] [CrossRef]

- Severson, K.A.; Attia, P.M.; Jin, N.; Perkins, N.; Jiang, B.; Yang, Z.; Chen, M.H.; Aykol, M.; Herring, P.K.; Fraggedakis, D.; et al. Data-driven prediction of battery cycle life before capacity degradation. Nat. Energy 2019, 4, 383–391. [Google Scholar] [CrossRef]

- Zhang, Y.; Zhao, M. Cloud-based in-situ battery life prediction and classification using machine learning. Energy Storage Mater. 2023, 57, 346–359. [Google Scholar] [CrossRef]

- Harris, S.J.; Harris, D.J.; Li, C. Failure statistics for commercial lithium ion batteries: A study of 24 pouch cells. J. Power Sources 2017, 342, 589–597. [Google Scholar] [CrossRef]

- Virkar, A.V. A model for degradation of electrochemical devices based on linear non-equilibrium thermodynamics and its application to lithium ion batteries. J. Power Sources 2011, 196, 5970–5984. [Google Scholar] [CrossRef]

- Zhang, W.J. A review of the electrochemical performance of alloy anodes for lithium-ion batteries. J. Power Sources 2011, 196, 13–24. [Google Scholar] [CrossRef]

- Hu, X.; Li, S.; Peng, H. A comparative study of equivalent circuit models for Li-ion batteries. J. Power Sources 2012, 198, 359–367. [Google Scholar] [CrossRef]

- Wei, J.; Dong, G.; Chen, Z. Remaining useful life prediction and state of health diagnosis for lithium-ion batteries using particle filter and support vector regression. IEEE Trans. Ind. Electron. 2017, 65, 5634–5643. [Google Scholar] [CrossRef]

- Zhang, Y.; Wang, Z.; Alsaadi, F.E. Detection of intermittent faults for nonuniformly sampled multi-rate systems with dynamic quantisation and missing measurements. Int. J. Control. 2020, 93, 898–909. [Google Scholar] [CrossRef]

- Xing, Y.; Ma, E.W.; Tsui, K.L.; Pecht, M. An ensemble model for predicting the remaining useful performance of lithium-ion batteries. Microelectron. Reliab. 2013, 53, 811–820. [Google Scholar] [CrossRef]

- Kemper, P.; Li, S.E.; Kum, D. Simplification of pseudo two dimensional battery model using dynamic profile of lithium concentration. J. Power Sources 2015, 286, 510–525. [Google Scholar] [CrossRef]

- Zhang, H.; Miao, Q.; Zhang, X.; Liu, Z. An improved unscented particle filter approach for lithium-ion battery remaining useful life prediction. Microelectron. Reliab. 2018, 81, 288–298. [Google Scholar] [CrossRef]

- He, W.; Williard, N.; Osterman, M.; Pecht, M. Prognostics of lithium-ion batteries based on Dempster–Shafer theory and the Bayesian Monte Carlo method. J. Power Sources 2011, 196, 10314–10321. [Google Scholar] [CrossRef]

- Miao, Q.; Xie, L.; Cui, H.; Liang, W.; Pecht, M. Remaining useful life prediction of lithium-ion battery with unscented particle filter technique. Microelectron. Reliab. 2013, 53, 805–810. [Google Scholar] [CrossRef]

- Ng, S.S.; Xing, Y.; Tsui, K.L. A naive Bayes model for robust remaining useful life prediction of lithium-ion battery. Appl. Energy 2014, 118, 114–123. [Google Scholar] [CrossRef]

- Nuhic, A.; Terzimehic, T.; Soczka-Guth, T.; Buchholz, M.; Dietmayer, K. Health diagnosis and remaining useful life prognostics of lithium-ion batteries using data-driven methods. J. Power Sources 2013, 239, 680–688. [Google Scholar] [CrossRef]

- Patil, M.A.; Tagade, P.; Hariharan, K.S.; Kolake, S.M.; Song, T.; Yeo, T.; Doo, S. A novel multistage Support Vector Machine based approach for Li ion battery remaining useful life estimation. Appl. Energy 2015, 159, 285–297. [Google Scholar] [CrossRef]

- Qin, T.; Zeng, S.; Guo, J. Robust prognostics for state of health estimation of lithium-ion batteries based on an improved PSO–SVR model. Microelectron. Reliab. 2015, 55, 1280–1284. [Google Scholar] [CrossRef]

- Zhao, Q.; Qin, X.; Zhao, H.; Feng, W. A novel prediction method based on the support vector regression for the remaining useful life of lithium-ion batteries. Microelectron. Reliab. 2018, 85, 99–108. [Google Scholar] [CrossRef]

- Chang, Y.; Fang, H.; Zhang, Y. A new hybrid method for the prediction of the remaining useful life of a lithium-ion battery. Appl. Energy 2017, 206, 1564–1578. [Google Scholar] [CrossRef]

- Saha, B.; Goebel, K.; Poll, S.; Christophersen, J. Prognostics methods for battery health monitoring using a Bayesian framework. IEEE Trans. Instrum. Meas. 2008, 58, 291–296. [Google Scholar] [CrossRef]

- Wang, D.; Miao, Q.; Pecht, M. Prognostics of lithium-ion batteries based on relevance vectors and a conditional three-parameter capacity degradation model. J. Power Sources 2013, 239, 253–264. [Google Scholar] [CrossRef]

- Richardson, R.R.; Osborne, M.A.; Howey, D.A. Gaussian process regression for forecasting battery state of health. J. Power Sources 2017, 357, 209–219. [Google Scholar] [CrossRef]

- Richardson, R.R.; Osborne, M.A.; Howey, D.A. Battery health prediction under generalized conditions using a Gaussian process transition model. J. Energy Storage 2019, 23, 320–328. [Google Scholar] [CrossRef]

- Liu, D.; Luo, Y.; Peng, Y.; Peng, X.; Pecht, M. Lithium-ion battery remaining useful life estimation based on nonlinear AR model combined with degradation feature. In Proceedings of the Annual Conference of the PHM Society, Minneapolis, MI, USA, 23 September 2012; Volume 4. [Google Scholar]

- Ren, L.; Zhao, L.; Hong, S.; Zhao, S.; Wang, H.; Zhang, L. Remaining useful life prediction for lithium-ion battery: A deep learning approach. IEEE Access 2018, 6, 50587–50598. [Google Scholar] [CrossRef]

- Chen, J.C.; Chen, T.L.; Liu, W.J.; Cheng, C.; Li, M.G. Combining empirical mode decomposition and deep recurrent neural networks for predictive maintenance of lithium-ion battery. Adv. Eng. Inform. 2021, 50, 101405. [Google Scholar] [CrossRef]

- Ma, G.; Zhang, Y.; Cheng, C.; Zhou, B.; Hu, P.; Yuan, Y. Remaining useful life prediction of lithium-ion batteries based on false nearest neighbors and a hybrid neural network. Appl. Energy 2019, 253, 113626. [Google Scholar] [CrossRef]

- Zhang, W.; Li, X.; Li, X. Deep learning-based prognostic approach for lithium-ion batteries with adaptive time-series prediction and on-line validation. Measurement 2020, 164, 108052. [Google Scholar] [CrossRef]

- Zhang, Y.; Xiong, R.; He, H.; Liu, Z. A LSTM-RNN method for the lithuim-ion battery remaining useful life prediction. In Proceedings of the 2017 Prognostics and System Health Management Conference (Phm-Harbin), Harbin, China, 29–12 July 2017; pp. 1–4. [Google Scholar]

- Zhang, Y.; Xiong, R.; He, H.; Pecht, M.G. Long short-term memory recurrent neural network for remaining useful life prediction of lithium-ion batteries. IEEE Trans. Veh. Technol. 2018, 67, 5695–5705. [Google Scholar] [CrossRef]

- Zheng, S.; Ristovski, K.; Farahat, A.; Gupta, C. Long short-term memory network for remaining useful life estimation. In Proceedings of the 2017 IEEE International Conference on Prognostics and Health Management (ICPHM), Dallas, TX, USA, 19–21 June 2017; pp. 88–95. [Google Scholar]

- Sateesh Babu, G.; Zhao, P.; Li, X.L. Deep convolutional neural network based regression approach for estimation of remaining useful life. In Proceedings of the Database Systems for Advanced Applications: 21st International Conference, DASFAA 2016, Dallas, TX, USA, 16–19 April 2016; Proceedings, Part I 21. Springer: Berlin/Heidelberg, Germany, 2016; pp. 214–228. [Google Scholar]

- An, Q.; Tao, Z.; Xu, X.; El Mansori, M.; Chen, M. A data-driven model for milling tool remaining useful life prediction with convolutional and stacked LSTM network. Measurement 2020, 154, 107461. [Google Scholar] [CrossRef]

- Kara, A. A data-driven approach based on deep neural networks for lithium-ion battery prognostics. Neural Comput. Appl. 2021, 33, 13525–13538. [Google Scholar] [CrossRef]

- Ren, L.; Dong, J.; Wang, X.; Meng, Z.; Zhao, L.; Deen, M.J. A data-driven auto-CNN-LSTM prediction model for lithium-ion battery remaining useful life. IEEE Trans. Ind. Inform. 2020, 17, 3478–3487. [Google Scholar] [CrossRef]

- Chen, D.; Zhang, W.; Zhang, C.; Sun, B.; Cong, X.; Wei, S.; Jiang, J. A novel deep learning-based life prediction method for lithium-ion batteries with strong generalization capability under multiple cycle profiles. Appl. Energy 2022, 327, 120114. [Google Scholar] [CrossRef]

- Yang, Y. A machine-learning prediction method of lithium-ion battery life based on charge process for different applications. Appl. Energy 2021, 292, 116897. [Google Scholar] [CrossRef]

- Zhang, Q.; Yang, L.; Guo, W.; Qiang, J.; Peng, C.; Li, Q.; Deng, Z. A deep learning method for lithium-ion battery remaining useful life prediction based on sparse segment data via cloud computing system. Energy 2022, 241, 122716. [Google Scholar] [CrossRef]

- Jiang, L.; Li, Z.; Hu, C.; Huang, Q.; He, G. Flexible Parallel Neural Network Architecture Model for Early Prediction of Lithium Battery Life. arXiv 2024, arXiv:2401.16102. [Google Scholar]

- Snoek, J.; Larochelle, H.; Adams, R.P. Practical bayesian optimization of machine learning algorithms. Adv. Neural Inf. Process. Syst. 2012, 25, 2960–2968. [Google Scholar]

| Complete/Early | Points | MAPE (%) | MAE (Cycles) | RMSE (Cycles) |

|---|---|---|---|---|

| Complete | 10 | 2.36 | 3.15 | 4.13 |

| 100 | 2.31 | 3.01 | 3.92 | |

| 200 | 2.62 | 3.21 | 4.36 | |

| 300 | 2.86 | 3.43 | 4.34 | |

| 400 | 2.20 | 2.80 | 3.70 | |

| Early | 10 | 0.75 | 5.99 | 7.69 |

| 100 | 0.65 | 5.93 | 9.85 | |

| 200 | 0.75 | 5.67 | 6.79 | |

| 300 | 0.48 | 4.41 | 6.53 | |

| 400 | 0.68 | 6.02 | 8.17 |

| Methods | MAPE (%) | MAE (Cycles) | RMSE (Cycles) | Requirements for Input Data |

|---|---|---|---|---|

| Linear model [7] | 9.1 | — | — | The dense data of the 100 cycles |

| HPR CNN [44] | 5.16 | 46.69 | 64.52 | 20% sparse charging data from the first 10 cycles |

| HPR CNN [44] | 4.15 | 16.09 | 27.47 | 20% sparse charging data from 10 cycles |

| HCNN [43] | 3.55 | 9 | 11 | Dense charging data of the 60 cycles |

| TOP-Net [42] | 3.37 | 8 | 11 | The dense data of the 50 cycles |

| Proposed method | 2.36 | 3.15 | 4.13 | 10 random charging points from each of 10 cycles |

| Proposed method | 0.75 | 5.99 | 7.69 | 10 random charging points from each of the first 10 cycles |

| Complete/Early | Detach | MAPE (%) | MAE (Cycles) | RMSE (Cycles) |

|---|---|---|---|---|

| Complete | None | 2.36 | 3.15 | 4.13 |

| Initial layers | 3.23 | 3.87 | 5.06 | |

| Residual | 2.20 | 3.12 | 4.04 | |

| 3D conv | 3.88 | 5.61 | 7.75 | |

| 1 block | 2.54 | 3.72 | 4.83 | |

| 2 blocks | 4.00 | 4.38 | 5.62 | |

| 3 blocks | 2.68 | 3.72 | 5.02 | |

| A branch | 99.86 | 484.65 | 619.75 | |

| Early | None | 0.75 | 5.99 | 7.69 |

| Initial layers | 0.70 | 5.57 | 7.62 | |

| Residual | 0.76 | 5.41 | 6.24 | |

| 3D conv | 1.17 | 9.86 | 13.38 | |

| 1 block | 0.52 | 3.50 | 4.33 | |

| 2 blocks | 0.91 | 6.21 | 6.96 | |

| 3 blocks | 0.90 | 7.80 | 10.89 | |

| A branch | 99.60 | 820.09 | 931.36 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Jiang, L.; Huang, Q.; He, G. Predicting the Remaining Useful Life of Lithium-Ion Batteries Using 10 Random Data Points and a Flexible Parallel Neural Network. Energies 2024, 17, 1695. https://doi.org/10.3390/en17071695

Jiang L, Huang Q, He G. Predicting the Remaining Useful Life of Lithium-Ion Batteries Using 10 Random Data Points and a Flexible Parallel Neural Network. Energies. 2024; 17(7):1695. https://doi.org/10.3390/en17071695

Chicago/Turabian StyleJiang, Lidang, Qingsong Huang, and Ge He. 2024. "Predicting the Remaining Useful Life of Lithium-Ion Batteries Using 10 Random Data Points and a Flexible Parallel Neural Network" Energies 17, no. 7: 1695. https://doi.org/10.3390/en17071695

APA StyleJiang, L., Huang, Q., & He, G. (2024). Predicting the Remaining Useful Life of Lithium-Ion Batteries Using 10 Random Data Points and a Flexible Parallel Neural Network. Energies, 17(7), 1695. https://doi.org/10.3390/en17071695