A Novel Ensemble Approach for the Forecasting of Energy Demand Based on the Artificial Bee Colony Algorithm

Abstract

1. Introduction

2. Literature Review

2.1. Energy Demand Influencing Factors

2.2. Energy Demand Forecasting Method

3. Forecasting Framework and Methods

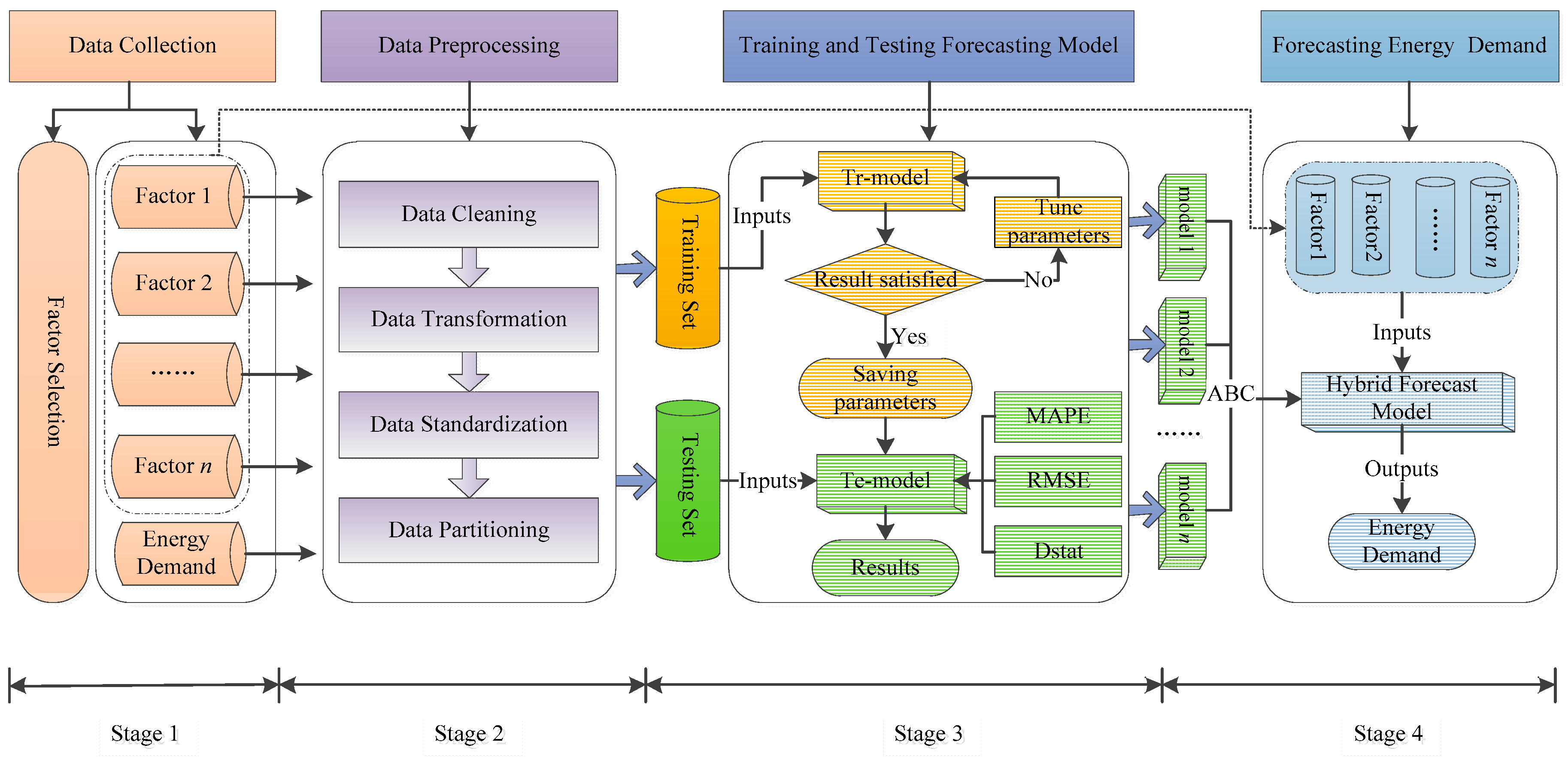

3.1. Ensemble Framework of Energy Demand Forecasting

3.1.1. Factor Selection

3.1.2. Data Preprocessing

- Data cleaning: Data cleaning fills in missing values, removes noise, detects outliers, and resolves inconsistencies in the datasets.

- Data transformation: Data transformation covers several methods, including converting multiple files into a unified, usable data format and extracting features.

- Data standardization: Because the influencing factors are measured in different units, the collected data differ greatly in magnitude, which often leads to large deviations in data analysis. It is therefore crucial to standardize the data to eliminate the effects of dimension and magnitude. Formula (1) is adopted in this paper:

  $$x'_{ij} = \frac{x_{ij} - \min(X_i)}{\max(X_i) - \min(X_i)} \quad (1)$$

  where $x'_{ij}$ represents the $j$-th element of influencing factor $i$ after standardization, $x_{ij}$ is the corresponding original value, and $X_i$ represents the sequence corresponding to influencing factor $i$.

- Data partitioning: To train and test the prediction model, the observations are divided into a training set and a test set. The training set is used to fit the prediction model and obtain its optimal parameters; the test set is then used to assess the generalization performance of the model.
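The standardization and partitioning steps can be sketched as follows. This is a minimal illustration, assuming min-max scaling over the full sample (which is consistent with the normalized values listed in Appendix A) and the 1978–2012 / 2013–2017 split shown there. The function names `min_max_scale` and `split_by_year` are hypothetical, and only four energy demand observations from Appendix A are used.

```python
def min_max_scale(values):
    """Scale a sequence to [0, 1] using its own minimum and maximum."""
    lo, hi = min(values), max(values)
    if hi == lo:
        raise ValueError("a constant sequence cannot be min-max scaled")
    return [(v - lo) / (hi - lo) for v in values]


def split_by_year(years, values, last_train_year):
    """Put observations up to last_train_year in the training set, the rest in the test set."""
    train = [(y, v) for y, v in zip(years, values) if y <= last_train_year]
    test = [(y, v) for y, v in zip(years, values) if y > last_train_year]
    return train, test


# Energy demand (million toe) for four years, excerpted from Appendix A.
# 1978 and 2017 are the minimum and maximum of the full 1978-2017 series.
years = [1978, 2012, 2013, 2017]
energy_demand = [396.6, 2797.4, 2905.3, 3132.0]

scaled = min_max_scale(energy_demand)
train, test = split_by_year(years, scaled, last_train_year=2012)

for year, value in train + test:
    print(year, round(value, 2))
```

Because the excerpt keeps the series minimum and maximum, the scaled values for 2012 and 2013 round to the 0.88 and 0.92 reported in Appendix A.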

3.1.3. Forecasting Model Training and Testing

3.1.4. Forecasting Future Energy Demand

3.2. Base Models

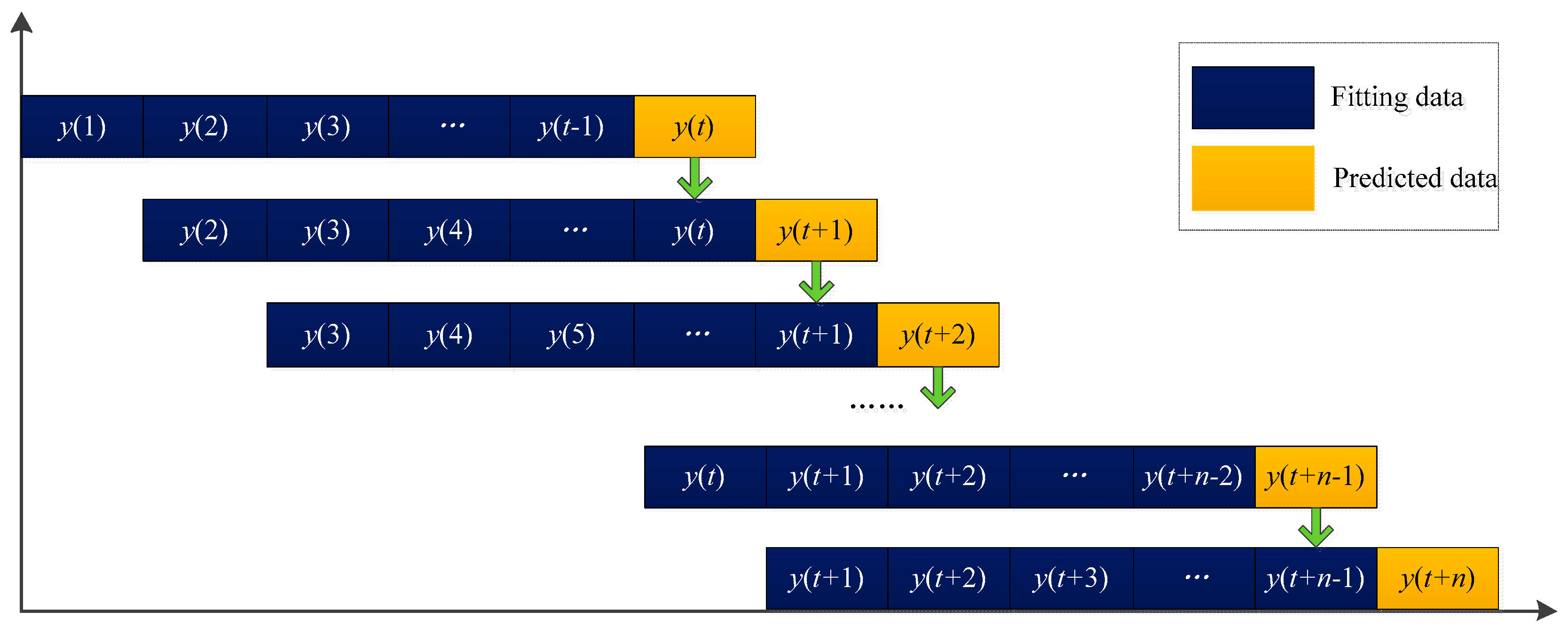

3.2.1. Autoregressive Integrated Moving Average

3.2.2. Second Exponential Smoothing

3.2.3. Support Vector Machine

3.2.4. Artificial Neural Networks

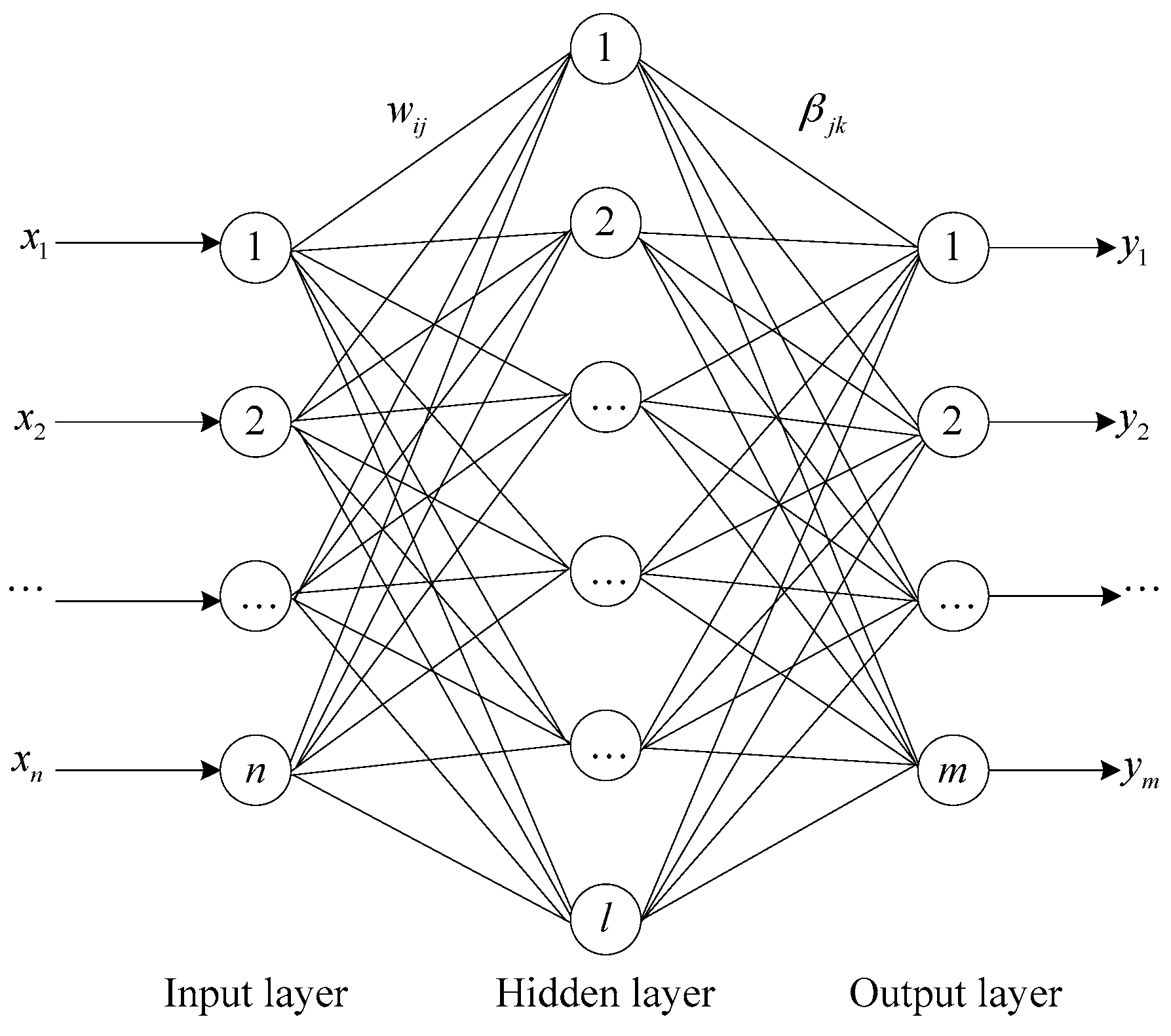

3.2.5. Extreme Learning Machine

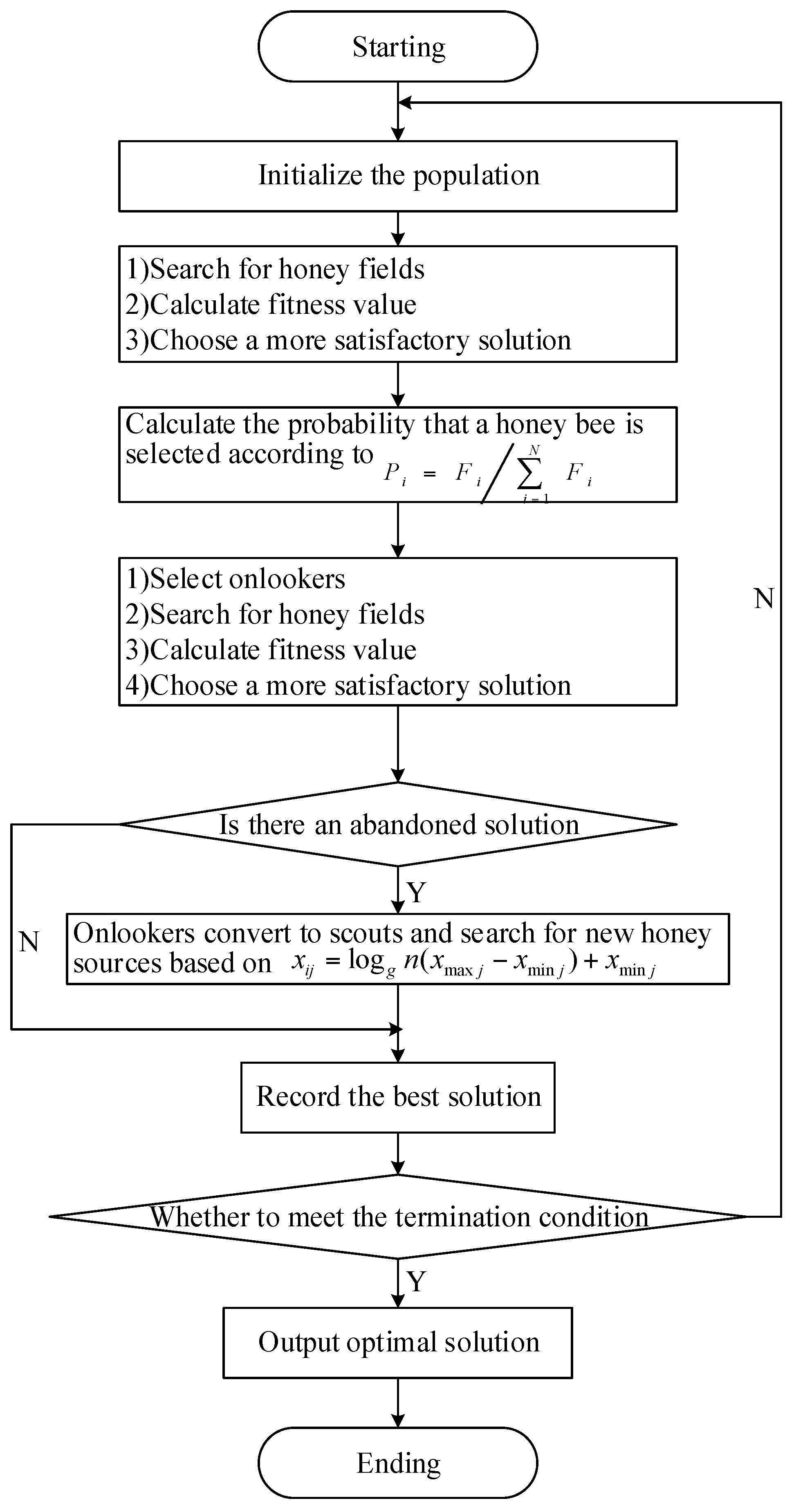

3.3. Artificial Bee Colony Ensemble Algorithm

4. Empirical Analysis

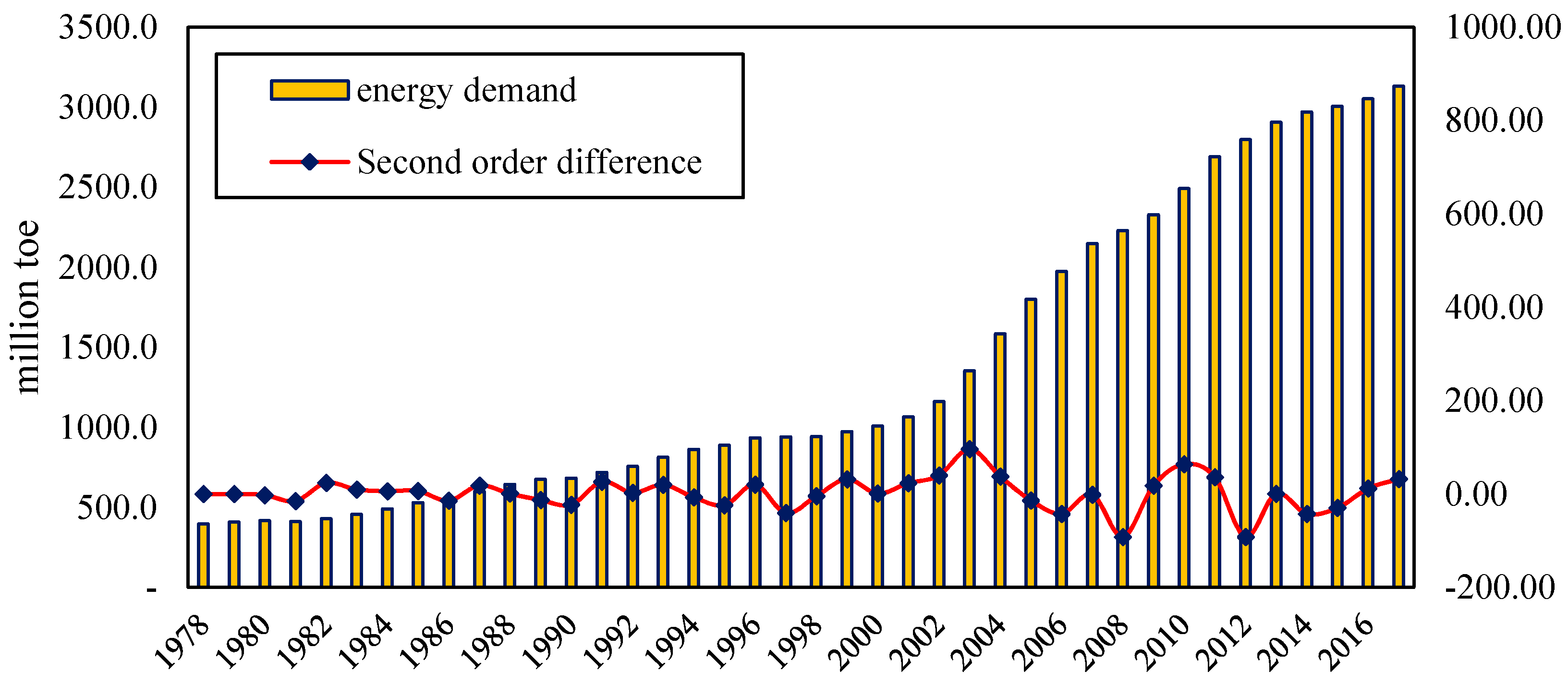

4.1. Datasets

4.2. Error Metric and Statistic Test

4.3. Parameter Settings

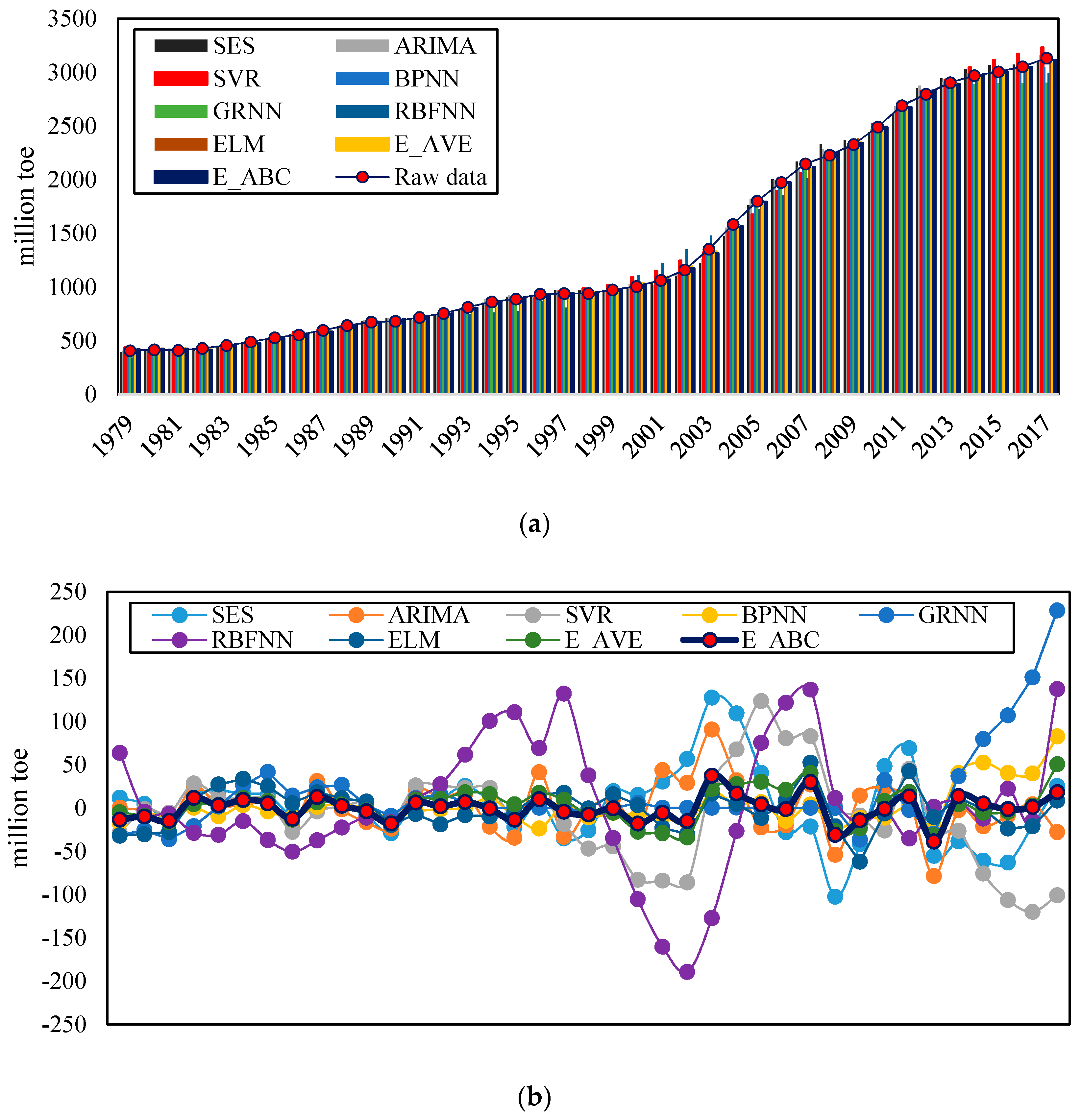

4.4. Forecasting Error and Statistical Test

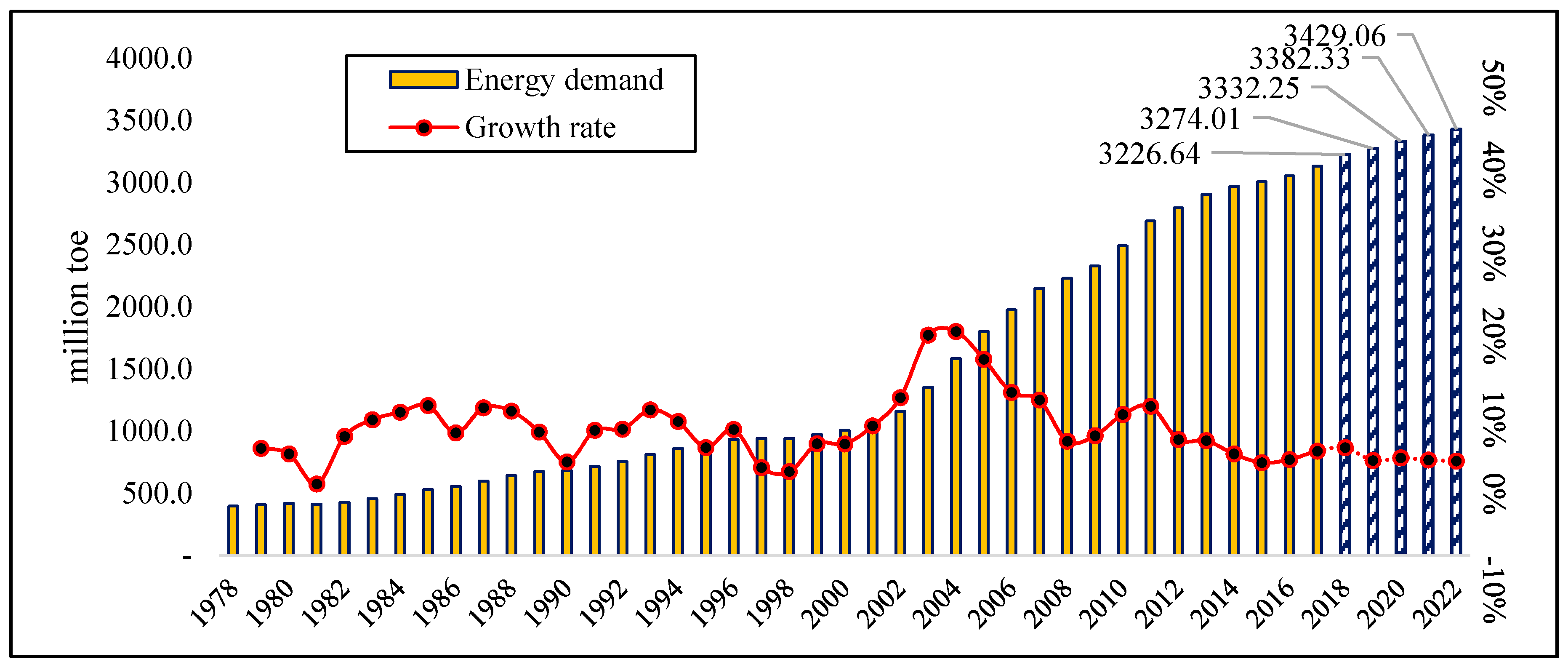

4.5. Future Energy Demand Forecasting Results

4.6. Discussion

5. Conclusions and Further Research

Author Contributions

Acknowledgments

Conflicts of Interest

Appendix A

| Year | GDP | IS | ES | TI | UR | POP | CPI | ED |

|---|---|---|---|---|---|---|---|---|

| Unit | 10^8 Yuan | / | / | / | % | 10^4 persons | / | Million Toe |

| 1978 | 3645.00 | 0.72 | 25.90 | 15.68 | 17.92 | 96,259.00 | 184.00 | 396.6 |

| 1979 | 4062.00 | 0.69 | 25.10 | 14.42 | 19.99 | 97,542.00 | 208.00 | 408.2 |

| 1980 | 4545.00 | 0.70 | 23.80 | 13.26 | 19.39 | 98,705.00 | 238.00 | 417.4 |

| 1981 | 4892.00 | 0.68 | 22.80 | 12.15 | 20.16 | 100,100.00 | 264.00 | 411.6 |

| 1982 | 5323.00 | 0.67 | 21.40 | 11.66 | 21.13 | 101,700.00 | 288.00 | 429.5 |

| 1983 | 5963.00 | 0.67 | 20.50 | 11.07 | 21.62 | 103,000.00 | 316.00 | 456.9 |

| 1984 | 7208.00 | 0.68 | 19.80 | 9.84 | 23.01 | 104,400.00 | 361.00 | 490.2 |

| 1985 | 9098.90 | 0.72 | 19.30 | 8.43 | 23.71 | 105,851.00 | 446.00 | 529.9 |

| 1986 | 10,376.20 | 0.73 | 19.50 | 7.79 | 24.52 | 107,507.00 | 497.00 | 555.3 |

| 1987 | 12,174.60 | 0.73 | 19.10 | 7.12 | 25.32 | 109,300.00 | 565.00 | 598.8 |

| 1988 | 15,180.40 | 0.74 | 19.10 | 6.13 | 25.81 | 111,026.00 | 714.00 | 643.1 |

| 1989 | 17,179.70 | 0.75 | 19.20 | 5.64 | 26.21 | 112,704.00 | 788.00 | 674.6 |

| 1990 | 18,872.90 | 0.73 | 18.70 | 5.23 | 26.41 | 114,333.00 | 833.00 | 683.2 |

| 1991 | 22,005.60 | 0.75 | 19.10 | 4.72 | 26.94 | 115,823.00 | 932.00 | 718.0 |

| 1992 | 27,194.50 | 0.78 | 19.40 | 4.01 | 27.46 | 117,171.00 | 1116.00 | 755.6 |

| 1993 | 35,673.20 | 0.80 | 20.10 | 3.25 | 27.99 | 119,517.00 | 1393.00 | 812.7 |

| 1994 | 48,637.50 | 0.80 | 19.30 | 2.52 | 28.51 | 119,850.00 | 1833.00 | 862.7 |

| 1995 | 61,339.90 | 0.80 | 19.30 | 2.14 | 29.04 | 121,121.00 | 1355.00 | 888.8 |

| 1996 | 71,813.60 | 0.80 | 20.50 | 1.88 | 30.48 | 122,389.00 | 2789.00 | 935.1 |

| 1997 | 79,715.00 | 0.82 | 22.20 | 1.70 | 31.91 | 123,626.00 | 3002.00 | 940.6 |

| 1998 | 85,195.50 | 0.82 | 22.60 | 1.60 | 33.35 | 124,761.00 | 3159.00 | 941.6 |

| 1999 | 90,564.40 | 0.84 | 23.50 | 1.55 | 34.78 | 125,786.00 | 3346.00 | 974.3 |

| 2000 | 100,280.10 | 0.85 | 24.40 | 1.45 | 36.22 | 126,743.00 | 3632.00 | 1007.9 |

| 2001 | 110,863.10 | 0.86 | 24.20 | 1.36 | 37.66 | 127,627.00 | 3869.00 | 1064.6 |

| 2002 | 121,717.40 | 0.86 | 24.70 | 1.31 | 39.09 | 128,453.00 | 4106.00 | 1161.0 |

| 2003 | 137,422.00 | 0.87 | 23.70 | 1.34 | 40.53 | 129,227.00 | 4411.00 | 1353.5 |

| 2004 | 161,840.20 | 0.87 | 23.80 | 1.32 | 41.76 | 129,988.00 | 4925.00 | 1583.8 |

| 2005 | 187,318.90 | 0.88 | 22.40 | 1.26 | 42.99 | 130,756.00 | 5463.00 | 1800.4 |

| 2006 | 219,438.50 | 0.89 | 22.20 | 1.18 | 44.34 | 131,448.00 | 6138.00 | 1974.7 |

| 2007 | 270,232.30 | 0.89 | 22.10 | 1.04 | 45.89 | 132,129.00 | 7081.00 | 2147.8 |

| 2008 | 319,515.50 | 0.89 | 22.00 | 0.91 | 46.99 | 132,802.00 | 8183.00 | 2229.0 |

| 2009 | 349,081.40 | 0.90 | 19.90 | 0.88 | 48.34 | 133,450.00 | 9514.00 | 2328.1 |

| 2010 | 413,030.30 | 0.90 | 21.40 | 0.79 | 49.95 | 134,091.00 | 10,919.00 | 2491.1 |

| 2011 | 489,300.60 | 0.90 | 21.40 | 0.71 | 51.27 | 134,735.00 | 13,134.00 | 2690.3 |

| 2012 | 540,367.40 | 0.90 | 21.80 | 0.74 | 52.57 | 135,404.00 | 14,699.00 | 2797.4 |

| 2013 | 595,244.40 | 0.91 | 22.40 | 0.70 | 53.73 | 136,072.00 | 16,190.00 | 2905.3 |

| 2014 | 643,974.00 | 0.91 | 23.10 | 0.66 | 54.77 | 136,782.00 | 17,778.00 | 2970.6 |

| 2015 | 689,052.10 | 0.92 | 24.20 | 0.62 | 56.10 | 137,462.00 | 19,397.00 | 3005.9 |

| 2016 | 744,127.20 | 0.92 | 24.70 | 0.59 | 57.35 | 138,271.00 | 21,228.00 | 3053.0 |

| 2017 | 827,121.70 | 0.92 | 25.12 | 0.54 | 58.52 | 139,008.00 | 22,902.00 | 3132 |

| Set | Year | GDP | IS | ES | TI | UR | POP | CPI | ED |
|---|---|---|---|---|---|---|---|---|---|
| Training Set | 1978 | 0.00 | 0.20 | 1.00 | 1.00 | 0.00 | 0.00 | 0.00 | 0.00 |
| | 1979 | 0.00 | 0.08 | 0.89 | 0.92 | 0.05 | 0.03 | 0.00 | 0.00 |
| | 1980 | 0.00 | 0.12 | 0.71 | 0.84 | 0.04 | 0.06 | 0.00 | 0.01 |
| | 1981 | 0.00 | 0.06 | 0.57 | 0.77 | 0.06 | 0.09 | 0.00 | 0.01 |
| | 1982 | 0.00 | 0.00 | 0.38 | 0.73 | 0.08 | 0.13 | 0.00 | 0.01 |
| | 1983 | 0.00 | 0.01 | 0.25 | 0.70 | 0.09 | 0.16 | 0.01 | 0.02 |
| | 1984 | 0.00 | 0.05 | 0.15 | 0.61 | 0.13 | 0.19 | 0.01 | 0.03 |
| | 1985 | 0.01 | 0.19 | 0.08 | 0.52 | 0.14 | 0.22 | 0.01 | 0.05 |
| | 1986 | 0.01 | 0.24 | 0.11 | 0.48 | 0.16 | 0.26 | 0.01 | 0.06 |
| | 1987 | 0.01 | 0.25 | 0.06 | 0.43 | 0.18 | 0.31 | 0.02 | 0.07 |
| | 1988 | 0.01 | 0.30 | 0.06 | 0.37 | 0.19 | 0.35 | 0.02 | 0.09 |
| | 1989 | 0.02 | 0.32 | 0.07 | 0.34 | 0.20 | 0.38 | 0.03 | 0.10 |
| | 1990 | 0.02 | 0.24 | 0.00 | 0.31 | 0.21 | 0.42 | 0.03 | 0.10 |
| | 1991 | 0.02 | 0.34 | 0.06 | 0.28 | 0.22 | 0.46 | 0.03 | 0.12 |
| | 1992 | 0.03 | 0.45 | 0.10 | 0.23 | 0.23 | 0.49 | 0.04 | 0.13 |
| | 1993 | 0.04 | 0.53 | 0.19 | 0.18 | 0.25 | 0.54 | 0.05 | 0.15 |
| | 1994 | 0.05 | 0.52 | 0.08 | 0.13 | 0.26 | 0.55 | 0.07 | 0.17 |
| | 1995 | 0.07 | 0.52 | 0.08 | 0.11 | 0.27 | 0.58 | 0.05 | 0.18 |
| | 1996 | 0.08 | 0.53 | 0.25 | 0.09 | 0.31 | 0.61 | 0.11 | 0.20 |
| | 1997 | 0.09 | 0.59 | 0.49 | 0.08 | 0.34 | 0.64 | 0.12 | 0.20 |
| | 1998 | 0.10 | 0.61 | 0.54 | 0.07 | 0.38 | 0.67 | 0.13 | 0.20 |
| | 1999 | 0.11 | 0.66 | 0.67 | 0.07 | 0.42 | 0.69 | 0.14 | 0.21 |
| | 2000 | 0.12 | 0.71 | 0.79 | 0.06 | 0.45 | 0.71 | 0.15 | 0.22 |
| | 2001 | 0.13 | 0.74 | 0.76 | 0.05 | 0.49 | 0.73 | 0.16 | 0.24 |
| | 2002 | 0.14 | 0.76 | 0.83 | 0.05 | 0.52 | 0.75 | 0.17 | 0.28 |
| | 2003 | 0.16 | 0.80 | 0.69 | 0.05 | 0.56 | 0.77 | 0.19 | 0.35 |
| | 2004 | 0.19 | 0.78 | 0.71 | 0.05 | 0.59 | 0.79 | 0.21 | 0.43 |
| | 2005 | 0.22 | 0.82 | 0.51 | 0.05 | 0.62 | 0.81 | 0.23 | 0.51 |
| | 2006 | 0.26 | 0.86 | 0.49 | 0.04 | 0.65 | 0.82 | 0.26 | 0.58 |
| | 2007 | 0.32 | 0.88 | 0.47 | 0.03 | 0.69 | 0.84 | 0.30 | 0.64 |
| | 2008 | 0.38 | 0.88 | 0.46 | 0.02 | 0.72 | 0.85 | 0.35 | 0.67 |
| | 2009 | 0.42 | 0.89 | 0.17 | 0.02 | 0.75 | 0.87 | 0.41 | 0.71 |
| | 2010 | 0.50 | 0.90 | 0.38 | 0.02 | 0.79 | 0.88 | 0.47 | 0.77 |
| | 2011 | 0.59 | 0.90 | 0.38 | 0.01 | 0.82 | 0.90 | 0.57 | 0.84 |
| | 2012 | 0.65 | 0.90 | 0.43 | 0.01 | 0.85 | 0.92 | 0.64 | 0.88 |
| Testing Set | 2013 | 0.72 | 0.93 | 0.51 | 0.01 | 0.88 | 0.93 | 0.70 | 0.92 |
| | 2014 | 0.78 | 0.96 | 0.61 | 0.01 | 0.91 | 0.95 | 0.77 | 0.94 |
| | 2015 | 0.83 | 0.97 | 0.76 | 0.01 | 0.94 | 0.96 | 0.85 | 0.95 |
| | 2016 | 0.90 | 0.98 | 0.83 | 0.00 | 0.97 | 0.98 | 0.93 | 0.97 |
| | 2017 | 1.00 | 1.00 | 0.89 | 0.00 | 1.00 | 1.00 | 1.00 | 1.00 |

Appendix B

| Pseudo-Code of the Ensemble Forecasting Model | |
|---|---|
| Input: | training data set; the number of base models T |
| Output: | ensemble forecasting model H |
| Pseudo-code | |
| 1: | Step 1: train the base models |
| 2: | For t = 1 to T do |
| 3: | Train forecasting model t with the training data set |
| 4: | Get the fitted values of model t |
| 5: | End |
| 6: | Step 2: find the optimal weights for the base models |
| 7: | Initialize the weights of the base models |
| 8: | Find the optimal base model weights by minimizing the error function with ABC |
| 9: | |
| 10: | Step 3: get the ensemble forecasting model H |
| 11: | Make predictions using the final model |

| Pseudo-Code of the Artificial Bee Colony Algorithm | |
|---|---|
| Input: | int N; // the size of the bee colony |
| | int M; // the maximum number of cycles |
| | int L; // the control parameter to abandon the nectar source |
| Output: | set X*; // the optimal solution set |
| Pseudo-code | |
| 1: | initialize the population of solutions |
| 2: | evaluate the population |
| 3: | cycle = 1 |
| 4: | Repeat |
| 5: | send employed bees |
| 6: | calculate the probability of the current nectar sources |
| 7: | send onlooker bees |
| 8: | send scout bees |
| 9: | memorize the best nectar source position achieved so far |
| 10: | cycle = cycle + 1 |
| 11: | until cycle = M |
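The two procedures above can be sketched together in Python. This is a minimal illustration rather than the authors' implementation. It assumes a basic ABC variant, MAPE as the error function, and non-negative weights that are normalized to sum to one. The base-model forecasts are toy numbers, and all function and parameter names are hypothetical (`colony_size`, `max_cycles`, and `limit` play the roles of N, M, and L).

```python
import random


def abc_minimize(objective, dim, lower, upper,
                 colony_size=20, max_cycles=200, limit=20, seed=0):
    """Basic artificial bee colony search for a minimizer of `objective`."""
    rng = random.Random(seed)
    n_sources = colony_size // 2  # one employed bee per nectar source

    def random_source():
        return [rng.uniform(lower, upper) for _ in range(dim)]

    sources = [random_source() for _ in range(n_sources)]
    costs = [objective(s) for s in sources]
    trials = [0] * n_sources
    i_best = min(range(n_sources), key=costs.__getitem__)
    best, best_cost = list(sources[i_best]), costs[i_best]

    def try_neighbor(i):
        # Perturb one coordinate relative to a randomly chosen other source.
        k = rng.choice([j for j in range(n_sources) if j != i])
        d = rng.randrange(dim)
        candidate = list(sources[i])
        step = rng.uniform(-1.0, 1.0) * (sources[i][d] - sources[k][d])
        candidate[d] = min(upper, max(lower, candidate[d] + step))
        cost = objective(candidate)
        if cost < costs[i]:  # greedy selection
            sources[i], costs[i], trials[i] = candidate, cost, 0
        else:
            trials[i] += 1

    for _ in range(max_cycles):
        for i in range(n_sources):  # employed bee phase
            try_neighbor(i)
        # Selection probabilities (assumes non-negative costs).
        fitness = [1.0 / (1.0 + c) for c in costs]
        for _ in range(n_sources):  # onlooker bee phase
            try_neighbor(rng.choices(range(n_sources), weights=fitness)[0])
        for i in range(n_sources):  # scout bee phase
            if trials[i] > limit:
                sources[i] = random_source()
                costs[i] = objective(sources[i])
                trials[i] = 0
        i_best = min(range(n_sources), key=costs.__getitem__)
        if costs[i_best] < best_cost:  # memorize the best source so far
            best, best_cost = list(sources[i_best]), costs[i_best]
    return best, best_cost


def mape(actual, predicted):
    """Mean absolute percentage error, in percent."""
    return 100.0 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


def combine(weights, base_predictions):
    """Weighted average of base-model forecasts (weights normalized to sum to 1)."""
    total = sum(weights)
    n_points = len(base_predictions[0])
    return [sum(w * preds[j] for w, preds in zip(weights, base_predictions)) / total
            for j in range(n_points)]


# Toy data (hypothetical): three base models fitted to four observations.
actual = [100.0, 110.0, 120.0, 130.0]
base_predictions = [
    [104.0, 115.0, 126.0, 135.0],  # tends to over-forecast
    [97.0, 106.0, 115.0, 126.0],   # tends to under-forecast
    [90.0, 120.0, 110.0, 140.0],   # noisy
]


def ensemble_error(weights):
    if sum(weights) <= 1e-12:
        return 1e9  # penalize the degenerate all-zero weight vector
    return mape(actual, combine(weights, base_predictions))


weights, err = abc_minimize(ensemble_error, dim=3, lower=0.0, upper=1.0)
print("weights:", [round(w / sum(weights), 3) for w in weights])
print("ensemble MAPE (%):", round(err, 3))
```

Normalizing the weights inside `combine` keeps the search space a simple box, which the basic ABC update handles without constraint handling. Other error functions (for example RMSE) can be swapped in through `ensemble_error`.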

References

1. Le, T.-H.; Nguyen, C.P. Is energy security a driver for economic growth? Evidence from a global sample. Energy Policy 2019, 129, 436–451.

2. Sharimakin, A.; Glass, A.J.; Saal, D.S.; Glass, K. Dynamic multilevel modelling of industrial energy demand in Europe. Energy Econ. 2018, 74, 120–130.

3. Ji, Q.; Zhang, H.; Zhang, D. The impact of OPEC on East Asian oil import security: A multidimensional analysis. Energy Policy 2019, 126, 99–107.

4. Cohen, E. Development of Israel’s natural gas resources: Political, security, and economic dimensions. Resour. Policy 2018, 57, 137–146.

5. Wang, Q.; Li, S.; Li, R. Forecasting energy demand in China and India: Using single-linear, hybrid-linear, and non-linear time series forecast techniques. Energy 2018, 161, 821–831.

6. Ji, Q.; Bouri, E.; Roubaud, D.; Kristoufek, L. Information interdependence among energy, cryptocurrency and major commodity markets. Energy Econ. 2019, 81, 1042–1055.

7. Hribar, R.; Potočnik, P.; Šilc, J.; Papa, G. A comparison of models for forecasting the residential natural gas demand of an urban area. Energy 2019, 167, 511–522.

8. He, Y.; Lin, B. Forecasting China’s total energy demand and its structure using ADL-MIDAS model. Energy 2018, 151, 420–429.

9. Wang, Z.-X.; Li, Q.; Pei, L.-L. Grey forecasting method of quarterly hydropower production in China based on a data grouping approach. Appl. Math. Model. 2017, 51, 302–316.

10. Bates, J.M.; Granger, C.W.J. The Combination of Forecasts. OR 1969, 20, 451–468.

11. Samuels, J.D.; Sekkel, R.M. Model Confidence Sets and forecast combination. Int. J. Forecast. 2017, 33, 48–60.

12. Hsiao, C.; Wan, S.K. Is there an optimal forecast combination? J. Econ. 2014, 178, 294–309.

13. Bedi, J.; Toshniwal, D. Deep learning framework to forecast electricity demand. Appl. Energy 2019, 238, 1312–1326.

14. Kim, T.-Y.; Cho, S.-B. Predicting residential energy consumption using CNN-LSTM neural networks. Energy 2019, 182, 72–81.

15. Wei, L.; Li, G.; Zhu, X.; Sun, X.; Li, J. Developing a hierarchical system for energy corporate risk factors based on textual risk disclosures. Energy Econ. 2019, 80, 452–460.

16. Kim, K.; Hur, J. Weighting Factor Selection of the Ensemble Model for Improving Forecast Accuracy of Photovoltaic Generating Resources. Energies 2019, 12, 3315.

17. Cui, J.; Yu, R.; Zhao, D.; Yang, J.; Ge, W.; Zhou, X. Intelligent load pattern modeling and denoising using improved variational mode decomposition for various calendar periods. Appl. Energy 2019, 247, 480–491.

18. Ji, Q.; Zhang, D. How much does financial development contribute to renewable energy growth and upgrading of energy structure in China? Energy Policy 2019, 128, 114–124.

19. Ji, Q.; Li, J.; Sun, X. Measuring the interdependence between investor sentiment and crude oil returns: New evidence from the CFTC’s disaggregated reports. Financ. Res. Lett. 2019, 30, 420–425.

20. Ma, Y.-R.; Ji, Q.; Pan, J. Oil financialization and volatility forecast: Evidence from multidimensional predictors. J. Forecast. 2019, 38, 564–581.

21. Berk, I.; Ediger, V.Ş. Forecasting the coal production: Hubbert curve application on Turkey’s lignite fields. Resour. Policy 2016, 50, 193–203.

22. Mehmanpazir, F.; Khalili-Damghani, K.; Hafezalkotob, A. Modeling steel supply and demand functions using logarithmic multiple regression analysis (case study: Steel industry in Iran). Resour. Policy 2019, 63, 101409.

23. Wang, X.; Lei, Y.; Ge, J.; Wu, S. Production forecast of China’s rare earths based on the Generalized Weng model and policy recommendations. Resour. Policy 2015, 43, 11–18.

24. Wang, C.; Zhang, H.; Fan, W.; Ma, P. A new chaotic time series hybrid prediction method of wind power based on EEMD-SE and full-parameters continued fraction. Energy 2017, 138, 977–990.

25. Yu, L.; Wang, S.; Lai, K.K. Forecasting crude oil price with an EMD-based neural network ensemble learning paradigm. Energy Econ. 2008, 30, 2623–2635.

26. Tang, L.; Wu, Y.; Yu, L.A. A randomized-algorithm-based decomposition-ensemble learning methodology for energy price forecasting. Energy 2018, 157, 526–538.

27. Kim, S.; Lee, G.; Kwon, G.-Y.; Kim, D.-I.; Shin, Y.-J. Deep Learning Based on Multi-Decomposition for Short-Term Load Forecasting. Energies 2018, 11, 3433.

28. Tso, G.K.F.; Yau, K.K.W. Predicting electricity energy consumption: A comparison of regression analysis, decision tree and neural networks. Energy 2007, 32, 1761–1768.

29. Adom, P.K.; Bekoe, W. Conditional dynamic forecast of electrical energy consumption requirements in Ghana by 2020: A comparison of ARDL and PAM. Energy 2012, 44, 367–380.

30. Ghanbari, A.; Kazemi, S.M.R.; Mehmanpazir, F.; Nakhostin, M.M. A Cooperative Ant Colony Optimization-Genetic Algorithm approach for construction of energy demand forecasting knowledge-based expert systems. Knowl.-Based Syst. 2013, 39, 194–206.

31. Wu, Q.; Peng, C. A hybrid BAG-SA optimal approach to estimate energy demand of China. Energy 2017, 120, 985–995.

32. Yu, S.-W.; Zhu, K.-J. A hybrid procedure for energy demand forecasting in China. Energy 2012, 37, 396–404.

33. Karadede, Y.; Ozdemir, G.; Aydemir, E. Breeder hybrid algorithm approach for natural gas demand forecasting model. Energy 2017, 141, 1269–1284.

34. Angelopoulos, D.; Siskos, Y.; Psarras, J. Disaggregating time series on multiple criteria for robust forecasting: The case of long-term electricity demand in Greece. Eur. J. Oper. Res. 2019, 275, 252–265.

35. Piltan, M.; Shiri, H.; Ghaderi, S.F. Energy demand forecasting in Iranian metal industry using linear and nonlinear models based on evolutionary algorithms. Energy Convers. Manag. 2012, 58, 1–9.

36. Sonmez, M.; Akgüngör, A.P.; Bektaş, S. Estimating transportation energy demand in Turkey using the artificial bee colony algorithm. Energy 2017, 122, 301–310.

37. Yuan, X.-C.; Sun, X.; Zhao, W.; Mi, Z.; Wang, B.; Wei, Y.-M. Forecasting China’s regional energy demand by 2030: A Bayesian approach. Resour. Conserv. Recycl. 2017, 127, 85–95.

38. He, Y.; Zheng, Y.; Xu, Q. Forecasting energy consumption in Anhui province of China through two Box-Cox transformation quantile regression probability density methods. Measurement 2019, 136, 579–593.

39. He, Y.X.; Liu, Y.Y.; Xia, T.; Zhou, B. Estimation of demand response to energy price signals in energy consumption behaviour in Beijing, China. Energy Convers. Manag. 2014, 80, 429–435.

40. Forouzanfar, M.; Doustmohammadi, A.; Hasanzadeh, S.; Shakouri, G.H. Transport energy demand forecast using multi-level genetic programming. Appl. Energy 2012, 91, 496–503.

41. Liao, H.; Cai, J.-W.; Yang, D.-W.; Wei, Y.-M. Why did the historical energy forecasting succeed or fail? A case study on IEA’s projection. Technol. Forecast. Soc. Chang. 2016, 107, 90–96.

42. Ahmad, T.; Chen, H. Deep learning for multi-scale smart energy forecasting. Energy 2019, 175, 98–112.

43. Schaer, O.; Kourentzes, N.; Fildes, R. Demand forecasting with user-generated online information. Int. J. Forecast. 2019, 35, 197–212.

44. Xu, S.; Chan, H.K.; Zhang, T. Forecasting the demand of the aviation industry using hybrid time series SARIMA-SVR approach. Transp. Res. Part E Logist. Transp. Rev. 2019, 122, 169–180.

45. Ünler, A. Improvement of energy demand forecasts using swarm intelligence: The case of Turkey with projections to 2025. Energy Policy 2008, 36, 1937–1944.

46. Debnath, K.B.; Mourshed, M. Forecasting methods in energy planning models. Renew. Sustain. Energy Rev. 2018, 88, 297–325.

47. Yuan, X.; Chen, C.; Yuan, Y.; Huang, Y.; Tan, Q. Short-term wind power prediction based on LSSVM–GSA model. Energy Convers. Manag. 2015, 101, 393–401.

48. Zendehboudi, A.; Baseer, M.A.; Saidur, R. Application of support vector machine models for forecasting solar and wind energy resources: A review. J. Clean. Prod. 2018, 199, 272–285.

49. Wei, D.; Wang, J.; Ni, K.; Tang, G. Research and Application of a Novel Hybrid Model Based on a Deep Neural Network Combined with Fuzzy Time Series for Energy Forecasting. Energies 2019, 12, 3588.

50. Law, A.; Ghosh, A. Multi-label classification using a cascade of stacked autoencoder and extreme learning machines. Neurocomputing 2019, 358, 222–234.

51. Bergmeir, C.; Hyndman, R.J.; Benitez, J.M. Bagging exponential smoothing methods using STL decomposition and Box-Cox transformation. Int. J. Forecast. 2016, 32, 303–312.

52. Han, X.; Li, R. Comparison of Forecasting Energy Consumption in East Africa Using the MGM, NMGM, MGM-ARIMA, and NMGM-ARIMA Model. Energies 2019, 12, 3278.

53. Zhao, W.; Zhao, J.; Yao, X.; Jin, Z.; Wang, P. A Novel Adaptive Intelligent Ensemble Model for Forecasting Primary Energy Demand. Energies 2019, 12, 1347.

54. Galicia, A.; Talavera-Llames, R.; Troncoso, A.; Koprinska, I.; Martínez-Álvarez, F. Multi-step forecasting for big data time series based on ensemble learning. Knowl.-Based Syst. 2019, 163, 830–841.

55. Liu, L.; Zong, H.; Zhao, E.; Chen, C.; Wang, J. Can China realize its carbon emission reduction goal in 2020: From the perspective of thermal power development. Appl. Energy 2014, 124, 199–212.

56. Wang, J.-J.; Wang, J.-Z.; Zhang, Z.-G.; Guo, S.-P. Stock index forecasting based on a hybrid model. Omega 2012, 40, 758–766.

57. Zhu, B.; Wei, Y. Carbon price forecasting with a novel hybrid ARIMA and least squares support vector machines methodology. Omega 2013, 41, 517–524.

58. Qu, Z.; Zhang, K.; Mao, W.; Wang, J.; Liu, C.; Zhang, W. Research and application of ensemble forecasting based on a novel multi-objective optimization algorithm for wind-speed forecasting. Energy Convers. Manag. 2017, 154, 440–454.

59. Xue, Y.; Jiang, J.; Zhao, B.; Ma, T. A self-adaptive artificial bee colony algorithm based on global best for global optimization. Soft Comput. 2017, 22, 2935–2952.

60. Xu, X.; Wei, Z.; Ji, Q.; Wang, C.; Gao, G. Global renewable energy development: Influencing factors, trend predictions and countermeasures. Resour. Policy 2019, 63, 101470.

61. Shi, Y.; Tian, Y.; Kou, G.; Peng, Y.; Li, J. Optimization Based Data Mining Theory and Applications; Springer: London, UK, 2011.

62. Wang, C.; Zhang, H.; Fan, W.; Fan, X. A new wind power prediction method based on chaotic theory and Bernstein Neural Network. Energy 2016, 117, 259–271.

63. Qureshi, A.S.; Khan, A.; Zameer, A.; Usman, A. Wind power prediction using deep neural network based meta regression and transfer learning. Appl. Soft Comput. 2017, 58, 742–755.

64. Huang, G.-B.; Zhu, Q.-Y.; Siew, C.-K. Extreme learning machine: Theory and applications. Neurocomputing 2006, 70, 489–501.

65. Pérez, C.J.; Vega-Rodríguez, M.A.; Reder, K.; Flörke, M. A Multi-Objective Artificial Bee Colony-based optimization approach to design water quality monitoring networks in river basins. J. Clean. Prod. 2017, 166, 579–589.

66. Lin, Y.; Jia, H.; Yang, Y.; Tian, G.; Tao, F.; Ling, L. An improved artificial bee colony for facility location allocation problem of end-of-life vehicles recovery network. J. Clean. Prod. 2018, 205, 134–144.

67. Zhao, Y.; Li, J.; Yu, L. A deep learning ensemble approach for crude oil price forecasting. Energy Econ. 2017, 66, 9–16.

68. Peimankar, A.; Weddell, S.J.; Jalal, T.; Lapthorn, A.C. Multi-objective ensemble forecasting with an application to power transformers. Appl. Soft Comput. 2018, 68, 233–248.

69. Godarzi, A.A.; Amiri, R.M.; Talaei, A.; Jamasb, T. Predicting oil price movements: A dynamic Artificial Neural Network approach. Energy Policy 2014, 68, 371–382.

70. Yu, L.; Zhao, Y.; Tang, L. A compressed sensing based AI learning paradigm for crude oil price forecasting. Energy Econ. 2014, 46, 236–245.

| Literature | Forecast Range | Case Study | Factors |

|---|---|---|---|

| Hribar, Potočnik, Šilc, and Papa [7] | Daily/City | Natural gas demand | Weather, socioeconomic factors |

| Adom and Bekoe [29] | Yearly/Country | Electrical demand | Macro socioeconomic indicators, population, gross national product, degree of urbanization, industry efficiency, industry value-added |

| Ghanbari, Kazemi, Mehmanpazir, and Nakhostin [30] | Yearly/Country | Energy demand | Population, GDP, CPI, imports, exports, energy intensity, energy efficiency |

| Wu and Peng [31] | Yearly/Country | Energy demand | Economic growth, total population, fixed-asset investments, energy efficiency, energy structure, household energy consumption per capita |

| Yu and Zhu [32] | Yearly/Country | Energy demand | Economic growth, total population, economic structure, urbanization rate, energy structure, energy price |

| Karadede et al. [33] | Yearly/Country | Natural gas demand | Gross national product, population, growth rate |

| Angelopoulos et al. [34] | Yearly/Country | Electricity demand | GDP, unemployment rate, population, weather-related criteria, electricity price, energy efficiency criterion |

| Piltan et al. [35] | Yearly/Country | Energy demand | Number of employees, investment value, gas price, electricity price |

| Sonmez et al. [36] | Yearly/Country | Transport energy demand | Gross domestic product, population |

| Yuan et al. [37] | Yearly/Country | Energy demand | Economic level, industrial structure, demographic change, urbanization process, technological progress |

| He et al. [38] | Yearly/Province | Energy demand | Historical energy consumption, population, GDP growth rate, total GDP, the three major industrial GDP, CPI |

| He et al. [39] | Yearly/City | Energy demand | Population, GDP growth rate, GDP, the three major industrial GDP, CPI |

| Forouzanfar et al. [40] | Yearly/Country | Transport energy demand | Population, gross domestic product, number of vehicles |

| Liao et al. [41] | Yearly/Country | Energy demand | GDP, population, oil price |

| Models | Trend Features | Forecast Period | Number of Variables | |||

|---|---|---|---|---|---|---|

| Linear | Nonlinear | Long | Short | Multiple | Single | |

| Regression-based | ✓ | ✓ | ✓ | |||

| ARIMA | ✓ | ✓ | ✓ | |||

| SVM | ✓ | ✓ | ✓ | |||

| ANN | ✓ | ✓ | ✓ | |||

| ELM | ✓ | ✓ | ✓ | |||

| No. | Factors | r | p |

|---|---|---|---|

| 1 | Gross domestic product (GDP) | 0.965 | 0.0000 |

| 2 | Industrial structure (IS) | 0.887 | 0.0000 |

| 3 | Energy structure (ES) | 0.328 | 0.0387 |

| 4 | Technological innovation (TI) | −0.697 | 0.0000 |

| 5 | Urbanization rate (UR) | 0.978 | 0.0000 |

| 6 | Population (POP) | 0.873 | 0.0000 |

| 7 | Consumer price index (CPI) | 0.959 | 0.0000 |

| 8 | Energy demand (ED) | 1.000 | —— |
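The r column above can be illustrated with a short Python sketch, assuming r denotes the Pearson correlation coefficient between each factor and energy demand. The helper `pearson_r` is a hypothetical name, and only the 2013–2017 rows of Appendix A are used, so the resulting values differ from the full-sample figures in the table.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# 2013-2017 excerpt from Appendix A (not the full 1978-2017 sample).
urbanization_rate = [53.73, 54.77, 56.10, 57.35, 58.52]   # UR, %
tech_innovation = [0.70, 0.66, 0.62, 0.59, 0.54]          # TI
energy_demand = [2905.3, 2970.6, 3005.9, 3053.0, 3132.0]  # ED, million toe

r_ur = pearson_r(urbanization_rate, energy_demand)
r_ti = pearson_r(tech_innovation, energy_demand)
print("UR vs ED:", round(r_ur, 3))
print("TI vs ED:", round(r_ti, 3))
```

Even on this short excerpt the signs agree with the table: urbanization moves with energy demand, while the technological innovation indicator moves against it.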

| Model | RMSE | MAPE |

|---|---|---|

| SES | 46.68 | 1.41% |

| ARIMA | 18.31 | 0.51% |

| SVR | 101.90 | 3.31% |

| BPNN | 56.42 | 1.76% |

| GRNN | 152.15 | 4.62% |

| RBFNN | 70.28 | 1.51% |

| ELM | 16.57 | 0.45% |

| E_AVE | 25.52 | 0.51% |

| E_ABC | 9.46 | 0.21% |
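For reference, the two error metrics reported above can be computed as in the following sketch, which assumes the standard definitions of RMSE and MAPE. The "forecast" is a hypothetical series set 1% above the actual 2013–2017 energy demand from Appendix A, chosen only so that the result is easy to check by hand.

```python
import math


def rmse(actual, predicted):
    """Root mean squared error, in the units of the data."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mape(actual, predicted):
    """Mean absolute percentage error, in percent."""
    return 100.0 * sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)


# Actual energy demand for the test years 2013-2017 (million toe, Appendix A).
actual = [2905.3, 2970.6, 3005.9, 3053.0, 3132.0]
# Hypothetical forecast that is 1% too high in every year.
predicted = [a * 1.01 for a in actual]

print("RMSE:", round(rmse(actual, predicted), 2))
print("MAPE (%):", round(mape(actual, predicted), 2))
```

RMSE is scale-dependent (million toe here), whereas MAPE is a relative measure, which is why MAPE is the metric used when comparing against studies on other datasets.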

| | SES | ARIMA | SVR | BPNN | GRNN | RBFNN | ELM | E_AVE |
|---|---|---|---|---|---|---|---|---|
| ARIMA | 0.00811 | | | | | | | |
| SVR | 0.86552 | 0.99625 | | | | | | |
| BPNN | 0.00757 | 0.11478 | 0.00005 | | | | | |
| GRNN | 0.69070 | 0.88984 | 0.45013 | 0.95854 | | | | |
| RBFNN | 0.99407 | 0.99977 | 0.97985 | 0.99991 | 0.93608 | | | |
| ELM | 0.00782 | 0.12005 | 0.00031 | 0.55476 | 0.06843 | 0.00012 | | |
| E_AVE | 0.00283 | 0.02631 | 0.00005 | 0.27089 | 0.05017 | 0.00005 | 0.16362 | |
| E_ABC | 0.00071 *** | 0.00123 *** | 0.00005 *** | 0.09451 * | 0.04439 ** | 0.00005 *** | 0.01202 ** | 0.01955 ** |

| Article | Model | Time Range | Target/Country | MAPE (%) |
|---|---|---|---|---|
| [30] | Cooperative-ACO-GA | 1971–2007 | Oil/Turkey | 0.72 |
| | Cooperative-ACO-GA | | Natural gas/Turkey | 1.18 |
| | Cooperative-ACO-GA | | Electricity/Turkey | 0.90 |
| [31] | BAG-SA | 1990–2015 | Energy/China | 0.28 |
| [32] | PSO-GA-linear | 1980–2009 | Energy/China | 0.45 |
| | PSO-GA-exponential | | | 0.95 |
| | PSO-GA-quadratic | | | 0.29 |
| [33] | BGA | 2001–2014 | Natural gas/Turkey | 1.88 |
| | BGA-SA | | | 1.43 |
| [35] | PSO | 1980–2003 | Electricity consumption/Turkey | 5.24 |
| | Real coding GA | | | 5.93 |
| Present study | E_ABC | 1978–2017 | Energy/China | 0.21 |

| Year | GDP (10^8 Yuan) | IS (/) | ES (/) | TI (/) | UR (%) | POP (10^4 persons) | CPI (/) |

|---|---|---|---|---|---|---|---|

| 2018 | 900,309 | 0.9267 | 25.34 | 0.5281 | 59.71 | 139,991 | 24,602.38 |

| 2019 | 958,829 | 0.9321 | 25.47 | 0.4343 | 60.75 | 140,971 | 26,353.20 |

| 2020 | 1,021,153 | 0.9363 | 25.38 | 0.3322 | 61.88 | 142,022 | 28,016.92 |

| 2021 | 1,087,528 | 0.9428 | 25.11 | 0.2173 | 63.02 | 143,149 | 29,666.45 |

| 2022 | 1,158,217 | 0.9519 | 24.72 | 0.0875 | 64.15 | 144,347 | 31,267.32 |

| Year | Energy Demand (Million Toe) | Increase Rate |

|---|---|---|

| 2017 | 3132 | -- |

| 2018 | 3226 | 3.02% |

| 2019 | 3274 | 1.47% |

| 2020 | 3332 | 1.78% |

| 2021 | 3382 | 1.50% |

| 2022 | 3429 | 1.38% |

| Total | -- | 9.48% |
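The increase rates in the table above are year-over-year changes, and the total is the cumulative change from 2017 to 2022. A minimal sketch of that arithmetic follows, using the rounded demand values from the table. Because the inputs are rounded, the recomputed yearly rates can differ from the published ones by a few hundredths of a percentage point.

```python
# Forecast energy demand (million toe), as rounded in the table above.
demand = {2017: 3132, 2018: 3226, 2019: 3274, 2020: 3332, 2021: 3382, 2022: 3429}

years = sorted(demand)
yearly_rates = {}
for prev_year, year in zip(years, years[1:]):
    # Year-over-year growth in percent.
    yearly_rates[year] = 100.0 * (demand[year] / demand[prev_year] - 1.0)

# Cumulative growth over the whole forecast horizon, in percent.
total_rate = 100.0 * (demand[years[-1]] / demand[years[0]] - 1.0)

for year, rate in yearly_rates.items():
    print(year, f"{rate:.2f}%")
print("Total", f"{total_rate:.2f}%")
```

The total is not the sum of the yearly rates, since growth compounds from one year to the next.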

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Hao, J.; Sun, X.; Feng, Q. A Novel Ensemble Approach for the Forecasting of Energy Demand Based on the Artificial Bee Colony Algorithm. Energies 2020, 13, 550. https://doi.org/10.3390/en13030550
