1. Introduction

In a smart grid, digital information and communication technologies are employed to manage the power grid in a reliable, economical, and sustainable manner. The aim is to achieve high availability and security, prevent failures, and increase resiliency and power quality while, at the same time, providing economic and environmental benefits. Increased integration of distributed generation such as photovoltaic panels and wind turbines, together with the expansion of electric vehicles and charging to/from their batteries, will require changes in grid operation, increased flexibility, and dynamic management.

To adapt to these changes, the smart grid employs two-way communication, software, sensors, control, and other digital systems. The advanced metering infrastructure (AMI) is one of the technologies enabling the smart grid: smart meters, which are deployed as part of AMI, measure, communicate, and record energy consumption in intervals of an hour or less. Monitoring devices such as phasor measurement units generate state measurements at the granularity of microseconds, enabling enhanced monitoring and control.

These data in the energy sector have the potential to provide insights, support decision making, and increase grid flexibility and reliability [1]. Machine learning (ML) has been used for various smart grid tasks because of its ability to learn from data, detect patterns, and provide data-driven insights and predictions. Examples include short- and long-term demand forecasting for aggregated and individual loads [2], anomaly detection [3,4], energy disaggregation [5], state estimation, generation forecasting encompassing solar and wind, load classification, and intrusion detection.

All those ML applications depend on the availability of sufficient historical data. This dependence is heightened for complex models such as those found in deep learning (DL), as large data sets are required for training DL models. Although a few anonymized data sets have been made publicly available [6,7], many power companies are hesitant to release their smart meter and other energy data due to privacy and security concerns [8]. Moreover, risks and privacy concerns have been identified as key issues throughout data-driven energy management [1]. Various techniques have been proposed for dealing with those issues: examples include the work of Guan et al. [9] on secure data acquisition in the smart grid and the work of Liu et al. [10] on a privacy-preserving scheme for the smart grid. Nevertheless, privacy and security concerns result in a reluctance to share data and, consequently, impose challenges for the application of ML techniques in the energy domain [11].

In practice, ML also encounters challenges imposed by missing data and incomplete observations resulting from sensor failures and unreliable communications [12]. Such missing and incomplete data often need to be recovered or estimated before ML can be applied. Moreover, training ML models may require data that are difficult to obtain because of associated costs or other reasons. For example, nonintrusive load monitoring (NILM), which deals with inferring the status of individual appliances and their individual energy consumption from aggregated readings, requires fine-grained data for individual appliances and/or large quantities of labeled data from very diverse devices. Collecting such data requires installation of sensors on a large scale and can be cost-prohibitive.

Generative adversarial networks (GANs) have been proposed for generating data from a target distribution [13]. If GANs could generate realistic energy data, they could be used to generate data for ML training and for imputing missing values. A GAN consists of two networks: the generator and the discriminator. The generator is tasked with generating synthetic data, whereas the discriminator estimates the probability that the data is real rather than synthetic. The two networks compete with each other: the objective is to train the generator to maximize the probability of the discriminator making a mistake. GANs have mostly been used in computer vision for tasks such as generating realistic-looking images, text-to-image synthesis [14], image completion, and resolution up-scaling [15].

This paper proposes a recurrent generative adversarial network (R-GAN) for generating realistic energy consumption data by learning from real data samples. The focus is on generating data for training ML models in the energy domain, but R-GAN can be applied for other tasks and with different time-series data. Both the generator and discriminator are stacked recurrent neural networks (RNNs) to enable capturing time dependencies present in energy consumption data. R-GAN takes advantage of Wasserstein GANs (WGANs) [16] and Metropolis-Hastings GAN (MH-GAN) [17] to improve training stability, overcome mode collapse, and, consequently, generate more realistic data.

For evaluation of image GANs, scores such as the Inception score [18] have been proposed, and it is even possible to evaluate generated images visually. However, for non-image data, GAN evaluation is still an open challenge. As the objective here is to generate data for ML training, the quality of the synthetic data is evaluated by measuring the accuracy of the machine learning models trained with that synthetic data. Experiments show that the accuracy of the forecasting model trained with the synthetic data is comparable to the accuracy of the model trained with the real data. Statistical tests further confirm that the distribution of the generated data resembles that of the real data.

The rest of the paper is organized as follows: Section 2 discusses the background, Section 3 presents the related work, Section 4 describes the R-GAN, Section 5 explains the experiments and corresponding results, and finally Section 6 concludes the paper.

4. Methodology

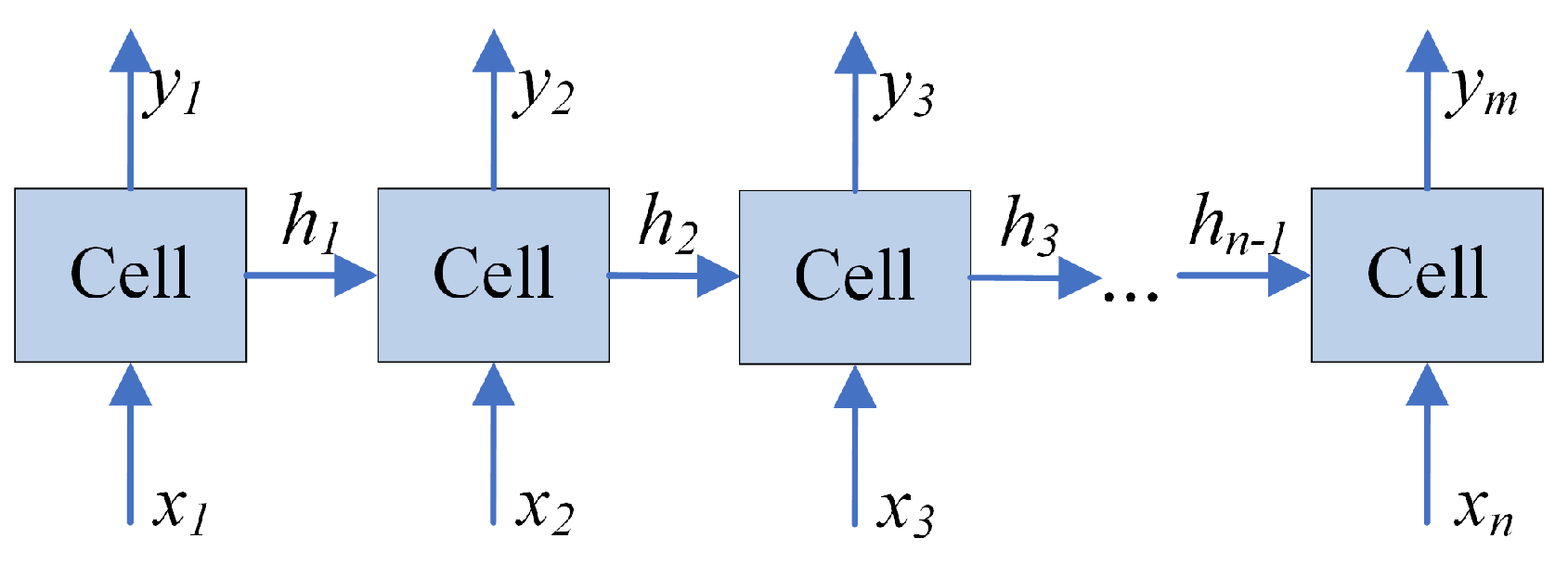

Figure 3 depicts the overview of the R-GAN approach. As R-GANs use RNNs for the generator and discriminator, data first needs to be pre-processed to accommodate the RNN architecture. Accordingly, this section first describes data pre-processing consisting of feature engineering (ARIMA and Fourier transform), normalization, and sample generation (sliding window). Next, R-GAN is described and the evaluation process is introduced.

4.1. Data Pre-Processing

In this paper, the term core features refers to energy consumption features and any other features present in the original data set (e.g., reading hour, weekend/weekday). In the data pre-processing step, these core features are first enriched through feature engineering using auto regressive integrated moving average (ARIMA) and Fourier transform (FT). Next, all features are normalized and the sliding window technique is applied to generate samples for GAN training.

4.1.1. ARIMA

Auto regressive integrated moving average (ARIMA) [44] models are fitted to time series data to better understand the data or to forecast future values in the time series. The auto regressive (AR) part models the variable of interest as a regression on its own past values, the moving average (MA) part uses a linear combination of past error terms for modeling, and integrated (I) refers to dealing with non-stationarity.

Here, ARIMA is used because of its ability to capture different temporal structures in time series data. As this work is focused on generating energy data, the ARIMA model is fitted to the energy feature. The values obtained from the fitted ARIMA model are added as a new engineered feature to the existing data set. The RNN itself is capable of capturing temporal dependencies, but adding ARIMA further enhances time modeling and, consequently, improves the quality of the generated data, as will be demonstrated in Section 5.
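The idea of adding fitted values as an engineered feature can be illustrated with a deliberately simplified stand-in. The sketch below fits only the autoregressive part, AR(p), by ordinary least squares with NumPy; it is not a full ARIMA model (no differencing and no moving average terms), and the function name `ar_fitted_feature` and the order `p` are assumptions made for illustration rather than details taken from the paper.

```python
import numpy as np

def ar_fitted_feature(series, p=2):
    """Fit an AR(p) model by least squares and return in-sample fitted values.

    Simplified stand-in for the ARIMA feature: AR terms only.
    """
    x = np.asarray(series, dtype=float)
    if x.size <= p:
        raise ValueError("series must be longer than the AR order p")
    # Column k holds the value lagged by k + 1 steps for every t >= p.
    lags = np.column_stack([x[p - k - 1 : x.size - k - 1] for k in range(p)])
    design = np.column_stack([np.ones(x.size - p), lags])
    coef, *_ = np.linalg.lstsq(design, x[p:], rcond=None)
    fitted = np.empty_like(x)
    fitted[:p] = x[:p]          # no lagged history yet: keep observed values
    fitted[p:] = design @ coef  # one-step-ahead in-sample predictions
    return fitted
```

The returned array has the same length as the input series, so it can be appended to the feature matrix as one additional column.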

4.1.2. Fourier Transform

Fourier transform (FT) decomposes a time signal into its constituent frequency domain representations [44]. Using FT, every waveform can be represented as the sum of sinusoidal functions. An inverse Fourier transform synthesizes the original signal from its frequency domain representations. Because FT identifies which frequencies are present in the original signal, FT is suitable for discovering cycles in the data.

Like with ARIMA, only the energy feature is used with FT. The energy time series is decomposed into sinusoidal representations, n dominant frequencies are selected, and a new time series is constructed using these n constituent signals. This new time series represents a new feature. When the number of components n is low, the new time series only captures the most dominant cycles, whereas for a large number of components, the created time series approaches the original time series. One value of n creates one new feature, but several values with their corresponding features are used in order to capture different temporal scales.
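A minimal sketch of this feature construction is given below, using NumPy's real FFT. It assumes that "dominant" means largest spectral magnitude and that the constant (zero-frequency) term counts as one component; the function name `fourier_feature` is hypothetical, and the paper does not state these details.

```python
import numpy as np

def fourier_feature(series, n_components):
    """Rebuild a series from its n largest-magnitude frequency components."""
    x = np.asarray(series, dtype=float)
    spectrum = np.fft.rfft(x)
    # Rank frequency bins by magnitude and keep only the n largest.
    keep = np.argsort(np.abs(spectrum))[::-1][:n_components]
    filtered = np.zeros_like(spectrum)
    filtered[keep] = spectrum[keep]
    # Inverse transform back to a time series of the original length.
    return np.fft.irfft(filtered, n=x.size)
```

Calling the function once per chosen value of n yields one new feature column per call; under these assumptions, a series with a large mean reduces to a constant line when only one component is kept.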

The objective of using FT is similar to the one of ARIMA: to capture time dependencies and, consequently, improve quality of the generated data. The experiments demonstrate that both ARIMA and Fourier transform contribute to the quality of the generated data.

4.1.3. Normalization

To bring the values of all features to a similar scale, the data was normalized using min-max normalization. The values of each feature were scaled to values between 0 and 1 as follows:

$$x' = \frac{x - x_{min}}{x_{max} - x_{min}}$$

where $x$ is the original value, $x_{min}$ and $x_{max}$ are the minimum and maximum of that feature vector, and $x'$ is the normalized value.
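The per-feature scaling to the range 0 to 1 can be sketched as follows. The function name `min_max_normalize` and the handling of constant columns (mapped to 0) are assumptions added for illustration.

```python
import numpy as np

def min_max_normalize(features):
    """Scale each column of a (time steps x features) matrix to [0, 1]."""
    x = np.asarray(features, dtype=float)
    x_min = x.min(axis=0)
    x_max = x.max(axis=0)
    # Constant columns would give 0/0; use a span of 1 so they map to 0.
    span = np.where(x_max > x_min, x_max - x_min, 1.0)
    return (x - x_min) / span
```

Keeping `x_min` and `x_max` from the training data would also allow generated samples to be mapped back to the original units.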

4.1.4. Sliding Window

At this point, data is still in a matrix format as illustrated in Figure 4, with each row containing readings for a single time step. Note that features in this matrix include all features contained in the original data set (core features), such as appliance status or the day of the week, as well as additional features engineered with ARIMA and FT.

As the generator core is an RNN, samples need to be prepared in a form suitable for an RNN. To do this, the sliding window technique is applied. As illustrated in Figure 4, the first K rows correspond to the first time window and make the first training sample. Then, the window slides for S steps, and the readings from time step S to K + S make the second sample. Figure 4 shows one particular step size, although other step sizes could be used. Each sample is a matrix of dimension K × F, where K is the window length and F is the number of features.
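The sliding window step can be sketched as below. The function name `sliding_windows` is hypothetical, and the sketch assumes zero-based row indexing and that a trailing partial window is discarded.

```python
import numpy as np

def sliding_windows(data, window_length, step):
    """Cut a (time steps x features) matrix into overlapping samples.

    Returns an array of shape (number of samples, window_length, features).
    """
    x = np.asarray(data)
    if x.shape[0] < window_length:
        return np.empty((0, window_length) + x.shape[1:], dtype=x.dtype)
    # Window start rows: 0, step, 2 * step, ... while a full window still fits.
    starts = range(0, x.shape[0] - window_length + 1, step)
    return np.stack([x[s : s + window_length] for s in starts])
```

Each element along the first axis is one K × F training sample, which matches the sequence input shape expected by recurrent layers.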

4.2. R-GAN

Similar to an image GAN, R-GAN consists of the generator and the discriminator as illustrated in Figure 3. However, while an image GAN typically uses a CNN for the generator and discriminator, R-GAN substitutes the CNN with a stacked LSTM and a single dense layer. The architectures of the R-GAN generator and discriminator are shown in Figure 5. The stacked LSTMs were selected because LSTM cells are able to store information for longer sequences than vanilla RNN cells. Moreover, stacked hidden layers allow capturing patterns at different time scales.

Both the generator and discriminator have a similar architecture consisting of a stacked LSTM and a dense layer (Figure 5), but they differ in the dimensions of their inputs and outputs because they serve different purposes: one generates data and the other classifies samples into fake and real. The generator takes random inputs from the predefined Gaussian latent space and generates time series data. The input has the same dimension as the sliding window length K. The RNN output before the fully connected layer has dimension (window length × cell state dimension). The generated data (generator output) has the same dimensions as the real data after pre-processing: each sample is of dimension (window length × number of features).

The GELU (Gaussian error linear unit) activation function has been selected for the generator and discriminator RNNs as it recently outperformed the rectified linear unit (ReLU) and other activation functions [45]. The GELU can be approximated by [45]:

$$\mathrm{GELU}(x) \approx 0.5x\left(1 + \tanh\left[\sqrt{2/\pi}\,\left(x + 0.044715x^{3}\right)\right]\right)$$

Similar to the ReLU (rectified linear unit) and the ELU (exponential linear unit) activation functions, GELU enables faster and better convergence of neural networks than the sigmoid function. Moreover, GELU merges ReLU functionality and dropout by multiplying the neuron input by zero or one, but this dropout multiplication is dependent on the input: there is a higher probability of the input being dropped as the input value decreases [45]. The stacked RNN is followed by the dense layer in both the generator and discriminator. The dense layer activation function for the generator is GELU for the same reasons given for the GELU selection in the stacked RNN. In the discriminator, the dense layer activation function is tanh to achieve real/synthetic classifications.
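As a small numerical check, the sketch below compares the exact GELU, written with the Gaussian cumulative distribution function via `math.erf`, against the tanh-based approximation described in [45]. The function names are hypothetical, and the code is an illustration rather than the activation implementation used in the experiments.

```python
import math

def gelu_exact(x):
    """GELU(x) = x * Phi(x), with Phi the standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def gelu_tanh(x):
    """Tanh-based approximation of GELU."""
    inner = math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)
    return 0.5 * x * (1.0 + math.tanh(inner))

# Largest gap between the two forms on a grid from -5 to 5.
grid = [i / 10.0 for i in range(-50, 51)]
max_gap = max(abs(gelu_exact(x) - gelu_tanh(x)) for x in grid)
```

Both functions pass negative inputs through with a small weight instead of cutting them off at zero, which is the input-dependent gating behavior described above.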

As illustrated in Figure 3, the generated data together with pre-processed data are passed to the discriminator, which learns to differentiate between real and fake samples. After a mini-batch consisting of several real and generated samples is processed, the discriminator loss is calculated and the discriminator weights are updated using gradient descent. As R-GAN uses WGAN, the updates are done slightly differently than in the original GAN. In the original GAN work [13], each discriminator update is followed by the generator update. In contrast, the WGAN algorithm trains the discriminator relatively well before each generator update. Consequently, in Figure 3, there are several discriminator update loops before each generator update.
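The update pattern can be sketched independently of any deep learning framework. The code below writes the standard Wasserstein losses on arrays of discriminator (critic) scores and builds the order of updates; the value `n_critic=5` is the default from the WGAN paper [16] and is an assumption here, since the number of discriminator updates per generator update is not stated in this section. All function names are hypothetical.

```python
import numpy as np

def critic_loss(scores_real, scores_fake):
    """Loss minimized by the critic: mean score on fake minus mean on real."""
    return float(np.mean(scores_fake) - np.mean(scores_real))

def generator_loss(scores_fake):
    """Loss minimized by the generator: negative mean critic score on fake."""
    return float(-np.mean(scores_fake))

def update_schedule(n_generator_steps, n_critic=5):
    """Order of updates: n_critic critic steps before each generator step."""
    schedule = []
    for _ in range(n_generator_steps):
        schedule.extend(["critic"] * n_critic)
        schedule.append("generator")
    return schedule
```

In a full implementation, each "critic" entry would correspond to one mini-batch of real and generated samples followed by a gradient step on `critic_loss`, and each "generator" entry to a gradient step on `generator_loss`.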

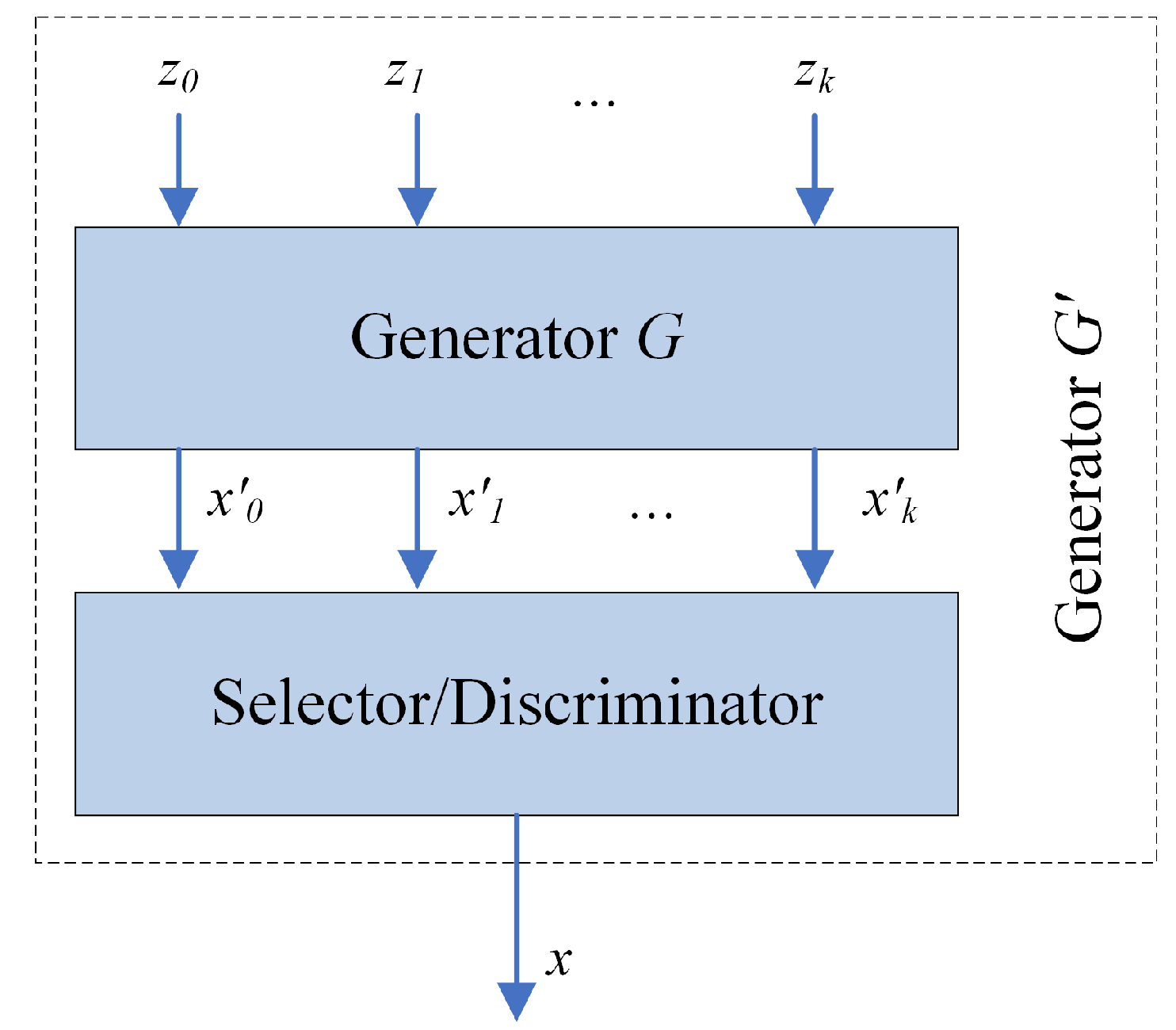

Once the generator and discriminator are trained, the R-GAN is ready to generate data. At this step, typically only the trained generator is used. If the generator was trained perfectly, the resulting generated distribution would be the same as the real one. Unfortunately, GANs may not always converge to the true data distribution; thus, taking samples directly from these imperfect generators can produce low-quality samples. However, our work takes advantage of the Metropolis-Hastings GAN (MH-GAN) approach [17], in which both the generator and discriminator play roles in generating samples.

Data generation using the MH-GAN approach is illustrated in Figure 6. The discriminator, together with the trained generator G, forms a new generator. The generator G takes a set of random samples as input and produces a set of time series samples. Some of the generated samples are closer to the real data distribution than others. The discriminator serves as a selector to choose the best sample x from this set. The final output is the generated time series sample x.
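One way to picture the selection step is the Metropolis-Hastings acceptance rule from the MH-GAN work [17], sketched below on an array of discriminator scores. The sketch assumes the scores are calibrated probabilities in (0, 1) that a sample is real, and it starts the chain from the first generated sample, which is a simplification. The function name `mh_select` is hypothetical and this is not the authors' implementation.

```python
import numpy as np

def mh_select(scores, rng):
    """Return the index of the sample kept after one pass of an MH chain.

    scores[i] is the discriminator's probability that sample i is real.
    """
    d = np.clip(np.asarray(scores, dtype=float), 1e-6, 1.0 - 1e-6)
    current = 0
    for proposal in range(1, d.size):
        # Acceptance ratio: (1/D(current) - 1) / (1/D(proposal) - 1).
        ratio = (1.0 / d[current] - 1.0) / (1.0 / d[proposal] - 1.0)
        if rng.uniform() <= min(1.0, ratio):
            current = proposal
    return current
```

A proposal that the discriminator rates as more realistic than the current sample is always accepted, while a less realistic one is accepted only with a probability given by the ratio.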

4.3. Evaluation Process

The main objective of this work is to generate data for training ML models; therefore, the presented R-GAN is evaluated by assessing the quality of ML models trained with synthetic data. As energy forecasting is a common ML task, it is used here for the evaluation too. In addition to the Train on Synthetic, Test on Real (TSTR) and Train on Real, Test on Synthetic (TRTS) approaches proposed by Esteban et al. [35], two additional metrics are employed: Train on Real, Test on Real (TRTR) and Train on Synthetic, Test on Synthetic (TSTS).

Train on Synthetic, Test on Real (TSTR): A prediction model is trained with synthetic data and tested on real data. TSTR was proposed by Esteban et al. [35]: they evaluated the GAN model on a clustering task using a random forest classifier. In contrast, our study evaluates R-GAN on an energy forecasting task using an RNN forecasting model. Note that this forecasting RNN is different from the RNNs used for the GAN generator and discriminator, and could be replaced by a different ML algorithm. RNN was selected because of its recent success in energy forecasting studies [26]. This forecasting RNN is trained with synthetic data and tested on real data.

Consequently, TSTR evaluates the ability of the synthetic data to be used for training energy forecasting models. If the R-GAN suffers from mode collapse, TSTR degrades because the generated data do not capture the diversity of real data and, consequently, the prediction model does not capture this diversity.

Train on Real, Test on Synthetic (TRTS): This is the reverse of TSTR: a model is trained on the real data and tested on the synthetic data. The process is exactly the same as in TSTR with the exception of the reversed roles of synthetic and real data. TRTS serves as an evaluation of the GAN’s ability to generate realistic-looking data. Unlike TSTR, TRTS is not affected by mode collapse, as a limited diversity of synthetic data does not affect forecasting accuracy. As the aim is to generate data for training ML models, TSTR is a more significant metric than TRTS.

Train on Real, Test on Real (TRTR): This is a traditional evaluation with the model trained and tested on the real data (with separate train and test sets). TRTR does not evaluate the synthetic data itself, but it allows for the comparison of accuracy achieved when a model is trained with real and with synthetic data. Low TRTR and TSTR accuracies indicate that the forecasting model is not capable of capturing variations in data and do not imply low quality of synthetic data. The goal of the presented R-GAN data generation is the TSTR value comparable to the TRTR value, regardless of their absolute values: this demonstrates that the model trained using synthetic data has similar abilities as the model trained with real data.

Train on Synthetic, Test on Synthetic (TSTS): Similar to TRTR, TSTS evaluates the ability of the forecasting model to capture variations in data: TRTR evaluates the accuracy with real data and TSTS with synthetic data. Large discrepancies between TRTR and TSTS indicate that the model is much better with real data than with synthetic data, or the other way around. Consequently, this means that the synthetic data does not resemble the real data.
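The four schemes differ only in which data set is used for training and which for testing, so they can be computed in one loop. The sketch below uses a least-squares linear model as a lightweight stand-in for the forecasting RNN and reports mean absolute error; the function names, the stand-in model, and the use of separate train and test splits for the synthetic data are assumptions made for illustration.

```python
import numpy as np

def fit_linear(x, y):
    """Least-squares linear model with intercept (stand-in forecaster)."""
    design = np.column_stack([np.ones(len(x)), x])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

def predict_linear(coef, x):
    return np.column_stack([np.ones(len(x)), x]) @ coef

def evaluate_schemes(real_train, real_test, synth_train, synth_test):
    """Each argument is an (inputs, targets) pair; returns MAE per scheme."""
    train = {"R": real_train, "S": synth_train}
    test = {"R": real_test, "S": synth_test}
    results = {}
    for tr in "RS":
        coef = fit_linear(*train[tr])  # train once per training source
        for te in "RS":
            x, y = test[te]
            error = np.mean(np.abs(y - predict_linear(coef, x)))
            results[f"T{tr}T{te}"] = float(error)
    return results
```

The returned dictionary has the keys `TRTR`, `TRTS`, `TSTR`, and `TSTS`, so the comparison of TSTR against TRTR described above becomes a comparison of two entries.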

5. Evaluation

5.1. Data sets and Pre-Processing

The evaluation was carried out on two data sets: the UCI (University of California, Irvine) appliances energy prediction data set [46] and the Building Data Genome set [6]. The UCI data set consists of energy consumption readings for different appliances with additional attributes such as temperature and humidity. The reading interval is 10 min and the total number of samples is 19,736. Day of the week and month of the year features were created from the reading date/time, resulting in a total of 28 features.

Building Data Genome set contains one year of hourly, whole building electrical meter data for non-residential buildings. In experiments, readings from a single building were used; thus, the number of samples is 24 × 365 = 8760. With this data set, energy consumption, year, month, day of the year, and hour of the day features were used.

For both data sets, the process is the same. The data set is pre-processed as described in Section 4.1. ARIMA is applied first to create an additional feature: to illustrate this step, Figure 7 shows the original data (energy consumption feature) and the fitted ARIMA model for the UCI data set.

Next, Fourier transform (FT) is applied. FT can be used with a different number of components resulting in different signal representations; in the experiments, four transformations were considered with 1, 10, 100, and 1000 components. The four representations are illustrated in Figure 8 on the UCI data set. It can be observed that one component results in almost constant values and 10 components capture only large-scale trends. As the number of components increases to 100 and 1000, more small-scale changes are captured and the representation is closer to the original data. These four transformations with 1, 10, 100, and 1000 components make the four additional features.

At this point, all needed additional features are generated (total of 33 features), and the pre-processing continues with normalization (Figure 3). To prepare data for the RNN, the sliding window technique is applied with the window length K = 60, indicating that 60 time steps make one sample, and step S = 30, specifying that the window slides for 30 time steps to generate the next sample. This window size and step were determined from the initial experiments.
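As a rough worked example, the number of windows produced by a window of 60 time steps sliding by 30 can be computed from the row counts quoted for the two data sets. The arithmetic assumes that no rows are dropped during pre-processing and that a trailing partial window is discarded; the paper does not report these counts, so the results are illustrative only. The function name `count_windows` is hypothetical.

```python
def count_windows(n_rows, window_length, step):
    """Number of full windows when sliding over n_rows time steps."""
    if n_rows < window_length:
        return 0
    return (n_rows - window_length) // step + 1

# Row counts as quoted in Section 5.1; window length 60, step 30.
uci_windows = count_windows(19_736, 60, 30)     # (19736 - 60) // 30 + 1
genome_windows = count_windows(8_760, 60, 30)   # (8760 - 60) // 30 + 1
```

Under these assumptions the UCI data set yields 656 windows and the single-building Building Data Genome series yields 291, with consecutive windows overlapping by half their length.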

5.2. Experiments

The R-GAN was implemented in Python with the TensorFlow library [47]. The experiments were performed on a computer with Ubuntu OS, AMD Ryzen 4.20 GHz processor, 128 GB DIMM RAM, and four NVIDIA GeForce RTX 2080 Ti 11 GB graphics cards. Training the proposed R-GAN is computationally expensive; therefore, GPU acceleration was used. However, once the model is trained, it does not require significant resources and CPU processing is sufficient.

Both discriminator and generator were stacked LSTMs with the hyperparameters as follows:

The input to the generator consisted of samples of size 60 (to match the window length) drawn from the Gaussian distribution. The generator output was of size (window length × number of features). The discriminator input was of the same dimension as the generator output and the pre-processed real data.

Hyperparameters were selected according to hyperparameter studies, commonly used settings, or by performing experiments. Keskar et al. [48] observed that performance degrades for batch sizes larger than the commonly used 32–512. To stay in that range, and to be close to the original WGAN work [16], a batch size of 100 was used. For each batch, 100 generated synthetic samples and 100 randomly selected real samples were passed to the discriminator for classification. Increasing the cell state dimension typically leads to increased LSTM accuracy, but also increases the training time [49]; thus, a moderate size of 128 was selected.

Greff et al. [49] observed that the learning rate is the most important LSTM parameter and that there is often a large range (up to two orders of magnitude) of good learning rates. Figure 9 shows generator and discriminator loss for three learning rates (LR) for the UCI data set and the model with ARIMA and FT features. Similar patterns have been observed with Building Genome data; therefore, only the loss for the UCI experiments is discussed here. The generator and discriminator are competing against each other; thus, improvement in one results in a decline in the other until the other learns to handle the change and causes the decline of its competitor. Hence, oscillations are observed in the graph, indicating that the networks are trying to learn and beat the competitor. For the lowest learning rate, the generator stabilizes quite well, while the discriminator shows fluctuations as it tries to defeat the generator. Oscillations of the objective function are quite common in GANs, and WGAN is used in this work to help with convergence. Nevertheless, as the learning rate increases to the two higher values, the generator and discriminator experience increased instabilities. Consequently, the lowest learning rate was used for the experiments presented in this paper. Additional hyperparameter tuning has the potential to further improve the achieved results.

All experiments were carried out with 1500 epochs to allow sufficient time for the system to converge. As can be seen from Figure 9, for the selected learning rate, the generator largely stabilizes after around 500 epochs and experiences very little change after 1000 epochs. At the same time, the discriminator experiences similar oscillation patterns from around 400 epochs onward. Thus, training for 1000 epochs might be sufficient; nevertheless, 1500 epochs allow a chance for further improvements.

R-GAN was evaluated with the four models corresponding to the four sets of features:

Core features only.

Core and ARIMA generated features.

Core and FT generated features.

Core, ARIMA, and FT generated features.

As described in Section 4.3, an ML task, specifically energy forecasting, was used for the evaluation with the TRTS, TRTR, TSTS, and TSTR metrics. Forecasting models for those evaluations were also RNNs. Forecasting model hyperparameters for each experiment were tuned using the expected improvement criterion according to Bergstra et al. [50], which results in different hyperparameters for each set of input features. This way, we ensure that the forecasting model is tuned for the specific use scenario. The following ranges of hyperparameters were considered for the forecasting model:

Hidden layer sizes: 32, 64, 128

Number of layers: 1, 2

Batch sizes: 1, 5, 10, 15, 30, 50

Learning rates: continuous from 0.001 to 0.03

For each of the R-GAN models, 7200 samples were generated and then the TSTR, TRTS, TRTR, and TSTS approaches with an RNN prediction model were applied. Mean absolute percentage error (MAPE) and mean absolute error (MAE) were selected as evaluation metrics because of their frequent use in energy forecasting studies [51,52]. They are calculated as:

$$\mathrm{MAPE} = \frac{100\%}{N}\sum_{i=1}^{N}\left|\frac{y_i - \hat{y}_i}{y_i}\right|$$

$$\mathrm{MAE} = \frac{1}{N}\sum_{i=1}^{N}\left|y_i - \hat{y}_i\right|$$

where $y_i$ is the actual value, $\hat{y}_i$ is the predicted value, and $N$ is the number of samples.
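Both metrics are short enough to write out directly. The sketch below follows the standard definitions, with MAPE expressed in percent; the function names are hypothetical, and the code assumes that no actual value is zero, since MAPE is undefined in that case.

```python
import numpy as np

def mape(y_true, y_pred):
    """Mean absolute percentage error, in percent (y_true must be nonzero)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(100.0 * np.mean(np.abs((y_true - y_pred) / y_true)))

def mae(y_true, y_pred):
    """Mean absolute error, in the units of the target variable."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(y_true - y_pred)))
```

MAPE is scale-free, which makes it convenient for comparing the two data sets, whereas MAE keeps the units of the energy readings.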

5.3. Results and Discussion—UCI Data Set

This subsection presents the results achieved on the UCI data set and discusses the findings. MAPE and MAE for the four evaluations TRTS, TRTR, TSTR, and TSTS are presented in Table 1. For ease of comparison, the same data is presented in graph form: Figure 10 compares models based on MAPE and Figure 11 based on MAE.

As we are interested in using synthetic data for training ML models, TSTR is a crucial metric. In terms of TSTR, addition of ARIMA and FT features to the core features reduces MAPE from 18.67% to 10.12% and MAE from 62.74 to 52.54. Moreover, it can be observed that for all experiments adding ARIMA and FT features improves the accuracy in terms of both MAPE and MAE.

Because forecasting models always result in some forecasting errors even when trained with real data, it is important to compare the accuracy of the model trained with synthetic data with the one trained with the real data. TRTR evaluates the quality of the forecasting model itself. As can be observed from Table 1, TSTR accuracy is close to TRTR accuracy for all models, irrespective of the number of features. This indicates that the accuracy of the forecasting model trained with synthetic data is close to the accuracy of the model trained with real data. When the model is trained with real data (TRTR), the MAPE for the model with all features is 10.81%, whereas when trained with synthetic data (TSTR) the MAPE is 10.12%. Consequently, comparable TSTR and TRTR values demonstrate the usability of synthetic data for ML training.

The TSTR errors are higher than the TRTS errors in terms of both MAPE and MAE for all experiments; that is, TSTR accuracy is lower than TRTS accuracy. Good TRTS accuracy shows that the predictor is able to generalize from real data and that generated samples are similar to real data. However, higher TSTR errors than TRTS errors indicate that the model trained with generated data does not capture the real data as well as the model trained on the real data. A possible reason for this is that, in spite of using techniques for dealing with mode collapse, the variety of generated samples is still not as high as the variety of real data.

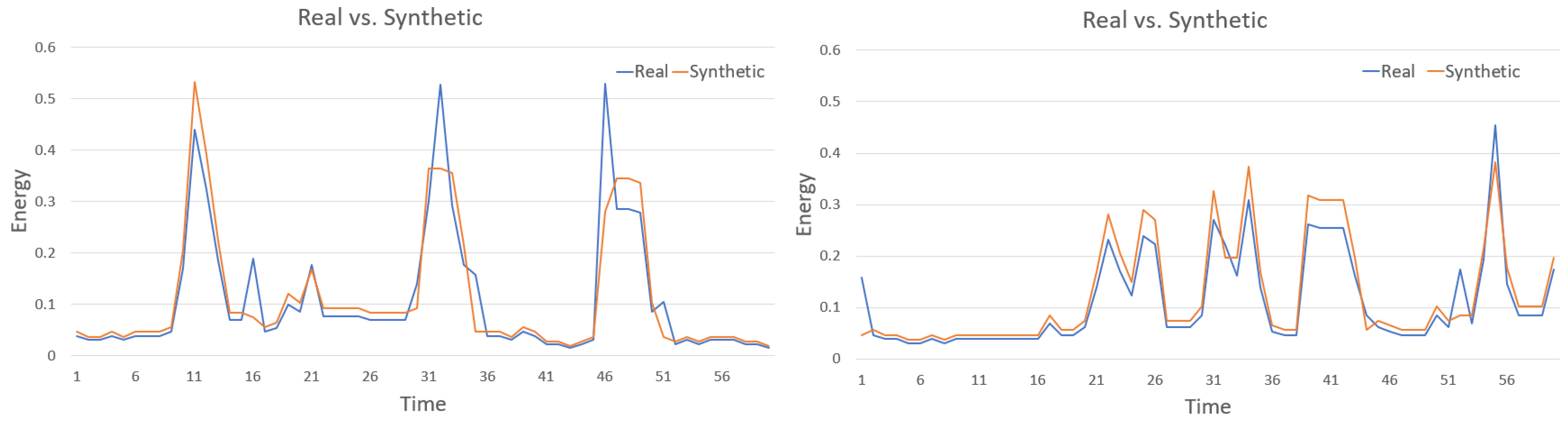

Visual comparison cannot be done in a similar way as in image GANs, but Figure 12 shows examples of two generated samples compared with the most similar real data samples. It can be observed that the generated patterns are similar to, but not the same as, the real samples; thus, the data looks realistic without being a mere repetition of the training patterns. Although Figure 12 provides some insight into the generated data, the already discussed TSTR and its comparison to TRTR are the main metrics evaluating the usability of generated data for ML training.

To further compare real and synthetic data, statistical tests were applied to evaluate whether there is a statistically significant difference between the generated and real data distributions. The Kruskal-Wallis H test, also referred to as “one-way ANOVA on ranks”, and the Mann-Whitney U test were used because both are non-parametric tests and do not assume normally distributed data. The parametric equivalent of the Kruskal-Wallis H test is one-way ANOVA (analysis of variance). The null hypothesis for the statistical tests was: the distributions of the real and synthetic populations are equal. The H values and U values together with the corresponding p values for each test and for each of the GAN models are shown in Table 2. Each test compares the real data with the synthetic data generated with one of the four models. A fixed level of significance was considered for all tests.

As the p value for each test is greater than the level of significance, the null hypothesis cannot be rejected: for each of the four synthetic data sets, irrespective of the number of features, there is little to no evidence that the distribution of the generated data is statistically different from the distribution of the real data. The H and U tests provide evidence about the similarity of distributions; nevertheless, TSTR and TRTR remain the main metrics for comparing the GAN models.
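Both tests are available in SciPy, and a comparison of one real and one synthetic sample set can be sketched as follows. The helper name `compare_distributions` is hypothetical, the inputs are assumed to be one-dimensional arrays of energy values, and the significance level is left as a parameter; the value 0.05 in the usage lines is only a placeholder.

```python
import numpy as np
from scipy import stats

def compare_distributions(real, synthetic, alpha):
    """Run the Kruskal-Wallis H and Mann-Whitney U tests on two samples."""
    h_stat, h_p = stats.kruskal(real, synthetic)
    u_stat, u_p = stats.mannwhitneyu(real, synthetic, alternative="two-sided")
    return {
        "H": float(h_stat), "H_p": float(h_p),
        "U": float(u_stat), "U_p": float(u_p),
        # True if either test rejects equality of the two distributions.
        "reject_equal": bool(h_p < alpha or u_p < alpha),
    }

# Usage with toy data (placeholder significance level).
rng = np.random.default_rng(0)
toy_real = rng.normal(0.0, 1.0, 500)
toy_shifted = toy_real + 5.0
toy_result = compare_distributions(toy_real, toy_shifted, alpha=0.05)
```

A p value above the chosen level means the test finds no evidence of a difference, which is weaker than proof that the two distributions are identical.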

Note the intuitive similarity between the reasoning behind R-GAN and a common approach for dealing with the class imbalance problem, SMOTE (Synthetic Minority Over-sampling TEchnique) [53]. SMOTE takes each minority class sample and creates synthetic samples on lines connecting any/all of the k minority neighbors. Although R-GAN deals with a regression problem and SMOTE with classification, both create new samples by using knowledge about existing samples. SMOTE does so by putting new samples between existing (real) ones, whereas R-GAN learns from the real data and is then able to generate samples similar to the real ones. Consequently, R-GAN has the potential to be used for class imbalance problems.

Overall, the results are promising as the forecasting model trained on the synthetic data achieves forecasting accuracy similar to that of the model trained on the real data. As illustrated in Table 1, both MAPE and MAE for the model trained on the synthetic and tested on the real data (TSTR) are close to MAPE and MAE for the model trained and tested on the real data (TRTR).

5.4. Results and Discussion—Building Genome Data Set

This subsection presents the results achieved with Building Genome data. MAPE and MAE for the four evaluations TRTS, TRTR, TSTR, and TSTS are presented in Table 3. The same data is displayed in graph form for ease of comparison: Figure 13 compares models based on MAPE and Figure 14 based on MAE.

Similar to the UCI data set, TSTR accuracy is close to TRTR accuracy in terms of both MAPE and MAE for all models, irrespective of the number of features. As in the UCI experiments, this indicates that the accuracy of the forecasting model trained with synthetic data is close to the accuracy of the model trained with real data. The best model was the one with core and FT features: it achieved a MAPE of 4.86% when trained with real data (TRTR) and 5.49% when trained with synthetic data (TSTR).

For the UCI data set, the model with FT and ARIMA features achieved the best results, whereas for Building Genome data, the model with FT (without ARIMA) achieved the best results over all metrics. Note that this is the case even when the model is trained and tested on the real data (TRTR) without any involvement of the generated data: with ARIMA and FT, TRTR MAPE was 6.77%, whereas with FT only, MAPE was 4.86%. Thus, this degradation of the model with the addition of ARIMA is a consequence of the data set itself and not the result of data generation.

Analyses of the ARIMA feature in the two data sets showed that the Pearson correlation between energy consumption and the ARIMA feature was very different for the two data sets. For Building Genome data, the correlation was 0.987 and for the UCI data set it was 0.75, indicating a very high linear correlation between the ARIMA and consumption features in Building Genome data. In the Building Genome data set, ARIMA was able to model the patterns of the data much better, which indicates that the temporal patterns were much more consistent than was the case with the UCI data set. This pattern regularity also explains the higher TRTR accuracy of Building Genome models compared with UCI models: the best TRTR MAPE for Building Genome was 4.86% and for the UCI data set it was 10.81%.

High multicollinearity can indicate redundancy in the feature set and can lead to model instability and degraded performance [54,55,56]. One way of dealing with multicollinearity is feature selection [55], which removes unnecessary features. With such a high correlation in Building Genome data, removal of one of the correlated features is expected to improve performance, as confirmed in our experiments, in which the model without the ARIMA feature performed better. As the aim of our work is not to improve the accuracy of the forecasting models, but to explore generating synthetic data for machine learning, feature selection is not considered.

From Table 1 and Table 3, it can be observed that, as with the UCI data set, for the Building Genome data set TSTR errors are higher than TRTS errors in terms of both MAPE and MAE for all experiments. As noted with the UCI experiments, this could be caused by the variety of generated samples not being as high as the variety of real data.

Two statistical tests, the Kruskal-Wallis H test and the Mann-Whitney U test, were applied to evaluate whether there is a statistically significant difference between the synthetic and real data. Again, the null hypothesis was: the distributions of the real and synthetic populations are equal. Table 4 shows H values and U values together with the corresponding p values for each test and for each of the GAN models. The same level of significance as in the UCI experiments was considered.

For the Building Genome experiments, as for the UCI experiments, the p value for each test is greater than the level of significance, and the null hypothesis cannot be rejected: for each of the four synthetic data sets, irrespective of the number of features, there is little to no evidence that the distribution of the generated data is statistically different from the distribution of the real data.
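A comparison of this kind could be run with SciPy as in the following minimal sketch. The helper name, the sample values, and the significance level of 0.05 are assumptions for illustration; the sketch is not the code used to produce Table 4.

```python
from scipy.stats import kruskal, mannwhitneyu

ALPHA = 0.05  # assumed significance level, chosen here for illustration only


def compare_distributions(real, synthetic, alpha=ALPHA):
    """Apply the Kruskal-Wallis H test and the Mann-Whitney U test to two samples.

    Null hypothesis for both tests: the two populations have equal distributions.
    """
    h_stat, h_p = kruskal(real, synthetic)
    u_stat, u_p = mannwhitneyu(real, synthetic, alternative="two-sided")
    return {
        "H": float(h_stat),
        "H_p": float(h_p),
        "H_reject": bool(h_p < alpha),
        "U": float(u_stat),
        "U_p": float(u_p),
        "U_reject": bool(u_p < alpha),
    }


# Made-up samples: one interleaved with the "real" values, one shifted far away.
real = [float(v) for v in range(0, 100, 2)]
similar = [float(v) for v in range(1, 101, 2)]
shifted = [v + 1000.0 for v in real]

similar_result = compare_distributions(real, similar)
shifted_result = compare_distributions(real, shifted)
```

For the interleaved sample both p values exceed the assumed threshold, so the null hypothesis is not rejected; for the shifted sample both tests reject it. Failing to reject the null hypothesis does not prove the distributions are identical, only that the tests found no evidence of a difference.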

Overall, the results for both data sets, UCI and Building Genome, exhibit similar trends. As illustrated in Table 1 and Table 3, the accuracy measures, MAPE and MAE, for the models trained on the synthetic data and tested on the real data (TSTR) are close to MAPE and MAE for the models trained and tested on the real data (TRTR), indicating the suitability of generated data for training ML models.

6. Conclusions

Machine learning (ML) has been used for various smart grid tasks, and the role of ML is expected to increase as new technologies emerge. Those ML applications typically require significant quantities of historical data; however, those historical data may not be readily available because of challenges and costs associated with collecting data, privacy and security concerns, or other reasons.

This paper investigates generating energy data for machine learning, taking advantage of Generative Adversarial Networks (GANs), which are typically used for generating realistic-looking images. The introduced Recurrent GAN (R-GAN) replaces the Convolutional Neural Networks (CNNs) used in image GANs with Recurrent Neural Networks (RNNs) because of the ability of RNNs to capture temporal dependence in time series data. To deal with convergence instability and to improve the quality of generated data, Wasserstein GAN (WGAN) and Metropolis-Hastings GAN (MH-GAN) techniques were used. Moreover, ARIMA and the Fourier transform were applied to generate new features and, consequently, improve the quality of generated data.

To evaluate the suitability of data generated with R-GANs for machine learning, energy forecasting experiments were conducted. Synthetic data produced with R-GAN was used to train the energy forecasting model, and the trained model was then tested on the real data. Results show that the model trained with synthetic data achieves accuracy similar to that of the model trained with real data. The addition of features generated by ARIMA and the Fourier transform improves the quality of generated data.

Future work will explore the impact of reducing training set size on the quality of the generated data. Also, replacing LSTM with Gated Recurrent Unit (GRU) and further R-GAN tuning will be investigated.