4.1. Proposed Algorithm vs. Jaya

The proposed algorithm and Jaya were coded in Java, and for each of the thirteen configurations in

Table 3, 100 independent runs of either method were executed. The best, mean, and standard deviation of the best-of-run costs from 100 independent runs of either algorithm are presented in

Table 4 for each of the thirteen configurations. Since this is a problem of minimization, numerically lower values of the cost function indicate better performance.

The proposed method outperforms Jaya in the majority of cases on each of the following three metrics: (a) the mean of the 100 best-of-run costs; (b) the best of the 100 best-of-run costs; and (c) the standard deviation of the hundred best-of-run costs.

Results of large-sample unpaired tests between the two means of the best-of-run costs in

Table 4 are shown in

Table 5, where the difference was obtained by subtracting the proposed method’s cost from the corresponding cost produced by the Jaya algorithm. The sample size is 100 for either algorithm for each of the thirteen configurations. For all configurations but one, the proposed method is apparently better, judging by the sign of the

z-score. The

p-values (not shown in this table) corresponding to many of the

z-scores are small, but it is arguable whether they are small enough for the difference to be statistically significant (much depends on the choice, arguably arbitrary, of the level of significance). For the lone negative

z-score, the relatively high value of

p implies that the proposed method is certainly not inferior to Jaya.

That each configuration in

Table 3 represents a certain fixed set of algorithm parameter values implies that a performance comparison confined to any one configuration presents only a fragmented picture of the capabilities of the competing algorithms. For a more meaningful analysis, therefore, a configuration ensemble must be studied. This is what is done in

Table 6 where Wilcoxon signed-rank tests are performed on the data of

Table 4. The thirteen configurations yield as many independent samples of each algorithm’s performance in

Table 6 where two performance metrics are considered separately: the average of the 100 best-of-run costs and the best of the 100 best-of-run costs.

A comparison of the means of the best-of-run costs of the two methods (the “Mean” column under Jaya versus the “Mean” column under “Proposed Algorithm” in

Table 4) is shown in the second column of

Table 6 where a two-sample paired test was performed to test the null hypothesis

Jaya mean - Proposed algorithm mean = 0

against the one-sided alternative

Jaya mean > Proposed algorithm mean.

In this table,

n denotes the effective sample size, obtained by ignoring the zero differences, if any. The

W-statistic was obtained as min(

). The critical

W was obtained from standard tables [

53]. The

z-score was obtained (using the normal distribution approximation) as

where

and

The

p-value was calculated from standard tables of the unit normal distribution. The data in the second column of

Table 6 show that, for the given sample size and the given significance level, the

W-statistic (=13) is less than the critical

W (=21), a fact that allows us to reject the null hypothesis in favor of the alternate hypothesis. Again, the

p-value is less than 0.05; thus, the difference, Jaya mean—Proposed algorithm mean, is significant at the 5% significance level.

The last column of

Table 6 shows the comparison between the two best values. The effective number of samples,

n, is 12 in this case. Unlike the comparison in the second column of the table, the

W statistic (=24) in the last column is larger than the corresponding critical

W (=17), which means that the null hypothesis cannot be rejected at the 5% level. The

z-score and the

p-value, however, do not establish any superiority of Jaya over the proposed method at the 5% level.

The results in

Table 4,

Table 5 and

Table 6 are all about the quality (cost) of the solution without any reference to the computation time needed to obtain that quality. In the next three tables, we present a comparative study of the time (as measured by the number of cost function evaluations) taken by the algorithms to obtain solutions of a given quality. Specifically, we set a cut-off or target cost of 13.5 (following [

42]) and record, for each run of the algorithm, the number of cost evaluations needed to produce, for the very first time in the run, a solution better (that is, numerically smaller) than or equal to that target cost. Let us denote by firstHitEvals the number of evaluations needed by an algorithm to hit (meet or beat) the target for the very first time in a given run (execution) of the algorithm. Given that the algorithms discussed here are really heuristics, once an algorithm hits the target for the very first time in a run, it is generally likely (but never guaranteed) that it will produce better solutions than the target value if the run is allowed to proceed further, that is, if the run consumes more evaluations than firstHitEvals. Note also that it is possible for an algorithm to never hit the target in a given (finite) number of evaluations.

Comparative results on the firstHitEvals metric are presented in

Table 7 where each row was obtained from 100 independent runs of either algorithm. For each of the two competing algorithms, the #Success column shows the number of runs (out of the 100) that did hit the target within the maximum quota of maxEvals evaluations (recall that the maxEvals values for different configurations are given in

Table 3). The proposed method’s success rate is better than its competitor’s in all thirteen cases. Again, the mean of firstHitEvals is smaller (better) for the proposed method in the majority of configurations; the pattern with the standard deviation of firstHitEvals is similar.

Table 8 shows the results of large-sample unpaired tests on the two means of firstHitEvals in

Table 7. Unlike

Table 5, which has a sample size of 100 for either algorithm for each of the 13 configurations,

Table 8 does not in general have the same sample size for the two algorithms for a given configuration, and the sample sizes are not always 100 (this is simply because not all runs in

Table 7 were successful). For example, the sample sizes for the second configuration in

Table 7 (and also in

Table 8) are 34 and 42.

The use of the sample standard deviation in lieu of the population standard deviation in the calculation of the

z-statistic in

Table 8 is not inappropriate because the sample sizes, with the exception of the first configuration, are greater than 30, so that the central limit theorem may be used to justify the normal distribution approximation [

54].

With a few exceptions, the

p-values corresponding to the

z-scores in

Table 8 cannot be used to show the superiority (in a statistically significant way) of the proposed method for individual configurations. As discussed earlier, the overall algorithm behavior across different configurations can be captured by the configuration ensemble. This is done in

Table 9 where Wilcoxon signed rank tests conducted on the data in

Table 7 are presented. The results in

Table 9 show that not only does the proposed algorithm succeed in hitting the target significantly more often than its competitor, it also needs significantly fewer evaluations to achieve that feat.

The next three tables show the performance of the algorithms on the metric of firstHitCost, which is the cost of the solution obtained at firstHitEvals; clearly, firstHitCost is less than or equal to the target cost. The mean and standard deviation of firstHitCost values obtained from 100 independent runs (these runs are the same as the ones used to obtain firstHitEvals in

Table 7) are shown for each of the two competing methods in

Table 10.

Table 11 shows results of large-sample unpaired tests on the two means of firstHitCost in

Table 10. As before, the one-tailed

p-values (not shown in

Table 11) produced by the

z-scores fail to establish a statistically significant outcome with respect to individual configurations, a fact that leads us to the statistical analysis of the configuration ensemble in

Table 12, which presents the results of the Wilcoxon signed rank test on the set of 13 samples in

Table 10.

Table 12 shows that the average cost of the solution produced after consuming firstHitEvals evaluations is statistically significantly better (at the 5% level) for the proposed method.

The best stack designs produced by Jaya and the proposed algorithm, given by the solution vectors (

,

,

) and the corresponding

,

and cost, are shown in

Table 13 where each row corresponds to a given configuration (popSize-maxGen combination). A solution in this table represents, for the corresponding algorithm and the corresponding configuration, the best of all the solutions produced in the 100-run suite. While the proposed method’s best solution produces a better (lower) cost in the majority of cases in

Table 13, neither algorithm is statistically significantly better than the other on this particular metric (as seen in the results of

Table 6). The mean best solution of the proposed method, however, was found to be statistically significantly better than that of Jaya (

Table 6).

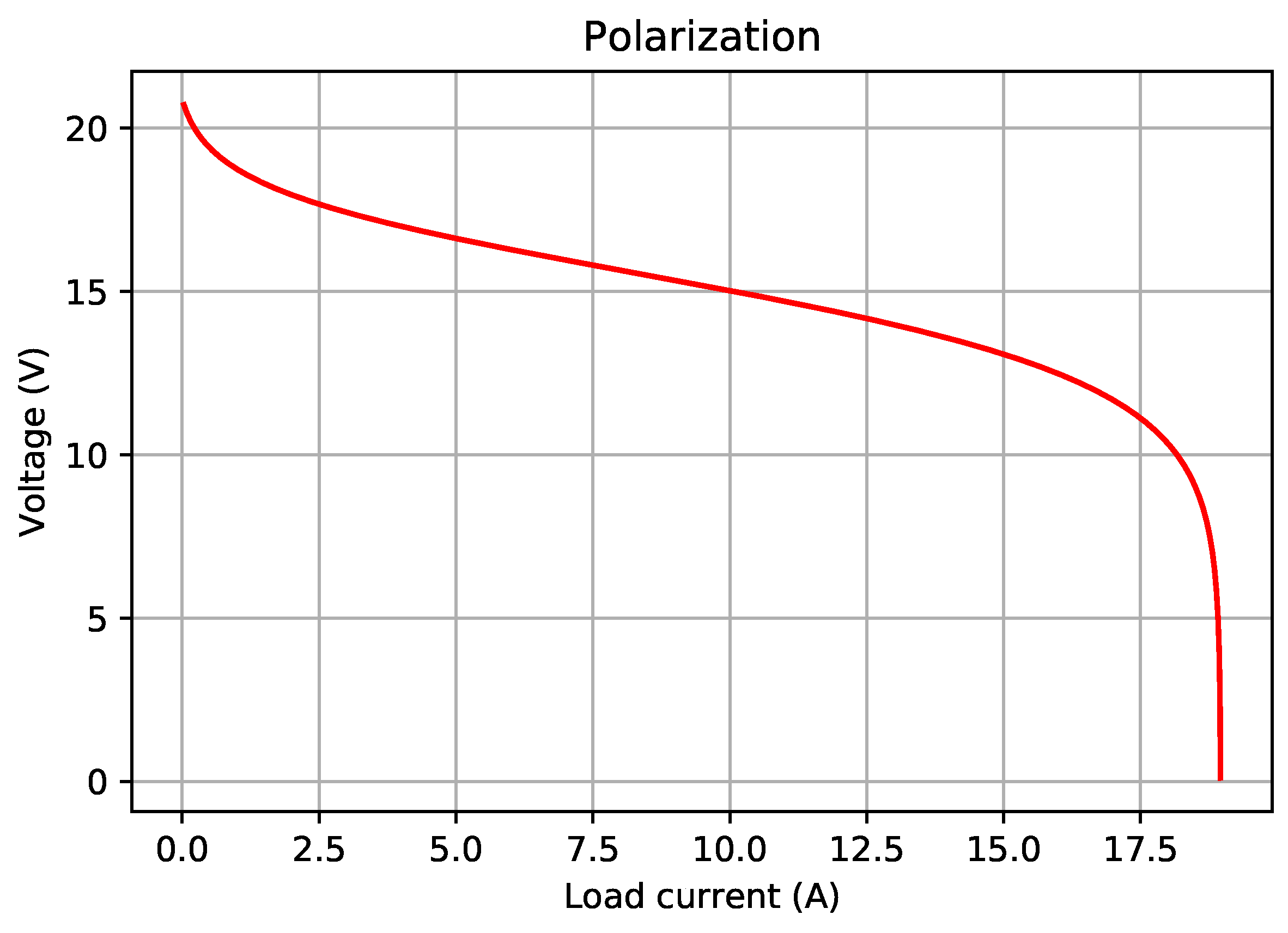

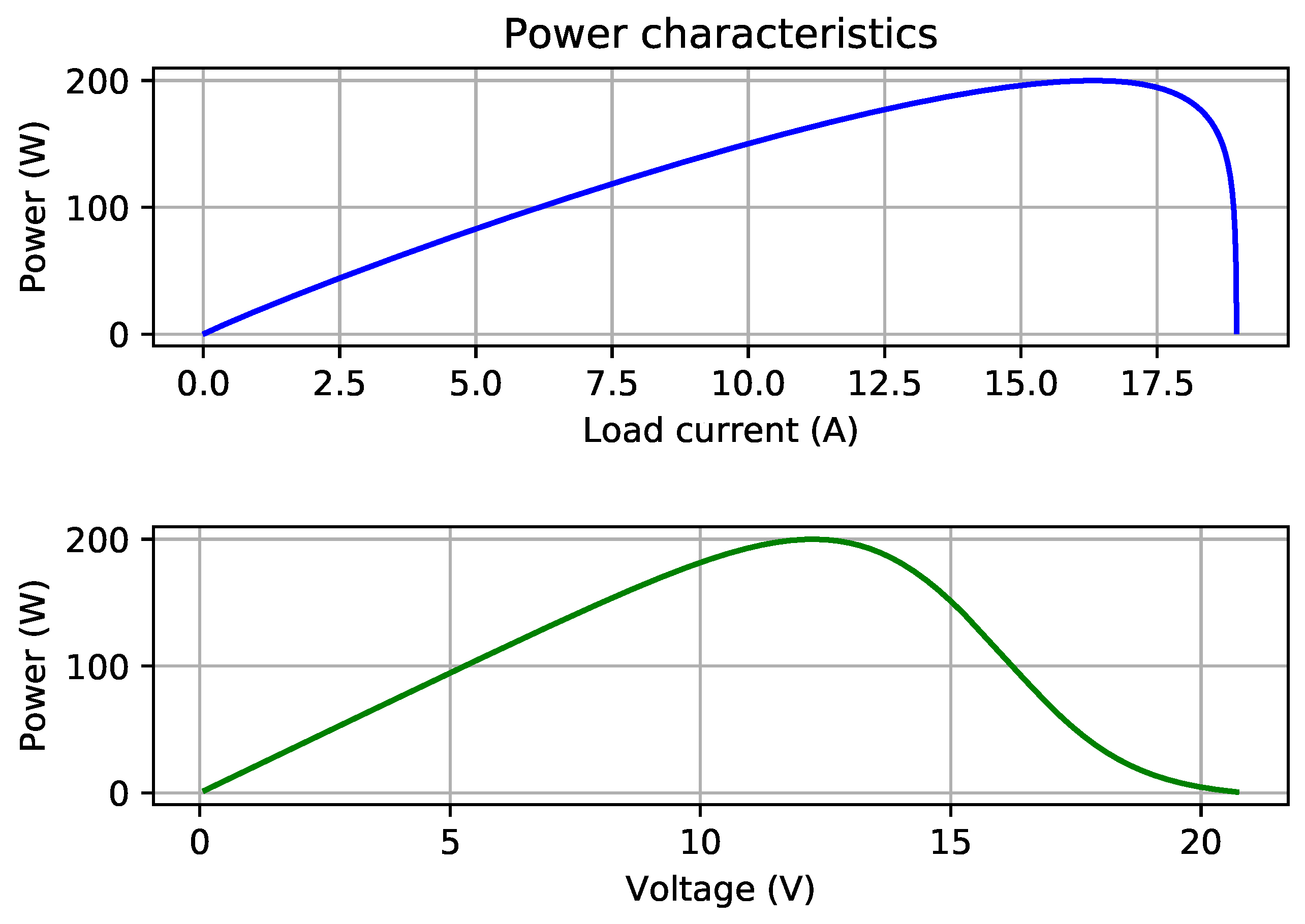

4.2. Effect of Current Step Size on Numerical Calculations of and

The maximum power point corresponding to a given stack configuration vector (

) and a given set of cell-parameter values (

, etc.) cannot be obtained analytically. In other words, the problem of finding

with

given by Equation (

9), is not analytically solvable. A numerical, iterative method has been used in this paper, where a loop over current values is executed in order to numerically find an approximation to the maximum power point. Since the maximum power point needs to be determined for every single cost (objective function) evaluation, the total computation time to complete all such iterations for all runs in a test suite may not be trivial. It is, therefore, good to be able to use a relatively large step size in incrementing the current value in the loop. A large step size, however, reduces the accuracy of the computed

and

. The results presented so far in this paper used a current step of 50 mA. To study whether the step size affects the conclusions about the relative performance of the algorithms, we obtain a new set of results using a step size of 1 mA. These results, arguably more accurate than their 50 mA counterparts, are presented in

Table 14,

Table 15 and

Table 16. A target cost of 13.62 is used in

Table 15 because the previously used target of 13.5 was met by no run in the new set of results. Results of Wilcoxon signed-rank tests on the new set of data are shown in

Table 17 which establishes the proposed method as significantly better than Jaya on the success rate as well as on the mean of best-of-run costs. Jaya is significantly better on the best of best-of-run costs metric, while the methods are statistically tied (the one-tail

p-value is close to 0.5) on the remaining two metrics. Given the vagaries of chance, for stochastic heuristics, the average performance rather than the single best performance is generally considered to be a reliable indicator of a method’s performance. The new data, therefore, do not offer a convincing reason to argue that Jaya performs better than the proposed method. Combining the new results with the old ones for all five performance metrics, we conclude that the proposed heuristic meets or beats the Jaya algorithm.

4.3. Proposed Algorithm vs. Point-Based Stochastic Heuristic

We now present performance comparisons of the proposed method against the heuristic of [

42]. Each of the four maxEvals values reported in Table 9 of [

42] corresponds to two different configurations in

Table 3 of the present paper (see

Table 18).

The best-cost solution vector from Table 4 on p. 536 of reference [

42] is shown in

Table 19 where the values of

,

and

are copied from [

42],

and

are computed by the iterative numerical method described in

Section 4.2 (using two different values of the current step size), and the cost is computed from Equation (

10).

The first row of

Table 19 shows that the current step of 50 mA produces a close agreement of

,

and cost values in this table with the corresponding values in Table 4 of [

42]. For the rest of this subsection, therefore, results corresponding to only the 50 mA step size are used.

Table 9 of [

42] and

Table 4 and

Table 10 of the present paper show that the proposed algorithm outperforms the algorithm of [

42] in all of the following metrics: (i) best of the 100 best-of-run costs; (ii) mean of the 100 best-of-run costs; (iii) standard deviation of the 100 best-of-run costs; (iv) count (out of the one hundred) of successful runs; and (v) mean firstHitCost obtained from the successful runs (the mean firstHitCost of [

42] is slightly better for one of the two cases—Configuration 11—of maxEvals = 4000).

To investigate the statistical significance, if any, of the difference between the means of the best-of-run costs of the two algorithms, we performed unpaired

t-tests, the results of which are presented in

Table 20. We tested the null hypothesis

against the one-sided alternative

, where

and

are the (population) means of the method in [

42] and the proposed method, respectively. We chose a level of significance of 0.05. Since the sample variances differ by a factor of about 10,000, the standard two-sample

t-test cannot be used. We, therefore, used the Smith–Satterthwaite test [

32,

54] corresponding to unequal variances. The test statistic is given by

and its sampling distribution can be approximated by the

t-distribution with

degrees of freedom (rounded down to the nearest integer), where

and

represent the two sample means,

and

are the two sample standard deviations, and

and

are the two sample sizes (100 each).

Table 20 presents, for each configuration, the following:

t-statistic;

degrees of freedom (d.f.);

the critical t value (obtained from standard tables of t-distribution) at 95% (right-tail probability of 0.05) and for the specified degrees of freedom; and

95% confidence interval for the difference of the two means,

at the specified degrees of freedom .

The results show that, for each case, the t-statistic exceeds for the relevant degrees of freedom. Therefore, the null hypothesis is rejected in all of these cases. Furthermore, none of the confidence intervals contains zero. We thus conclude that the improvements produced by the proposed method are statistically significant.

Smith–Satterthwaite tests were also performed for comparing [

42] against Jaya, and the results (see

Table 21) establish Jaya as significantly better than [

42].

4.5. Proposed Algorithm vs. Quasi-Analytical Approach

The best solution produced by the quasi-analytical method in [

43] yields the following stack design:

= 22,

= 1,

= 151.4 cm

2. Plugging these values into Equations (

9) and (

10) gives the maximum power point values and the corresponding costs in

Table 23. All of the best solutions of the proposed method in

Table 13 and

Table 16 have a better (lower) cost than those in

Table 23. The objective function minimized in [

43] is the total stack area given by

, and yet the proposed method’s best solutions produce better (smaller) total areas, while meeting the requirements of the rated voltage and rated power.

The characterization in [

43] of the design vector (22, 1, 154.16) as the best design in [

42] does not seem to be correct. The vector (22, 1, 154.16) is mentioned in [

42] as a “typical” design, not the best or optimal design. In fact, several of the designs in Table 4 of [

42] have cell areas that are smaller than 154.16 cm

2, with two of them even smaller than the “optimal” area of 151.4 cm

2 in [

43]. The design (22, 1, 149.597) from Ref. [

42] outperforms the “optimal” design of (22, 1, 151.4) of [

43] on the cell area metric as well as on the objective (cost) function for both the step sizes (see

Table 19 and

Table 23).