ERIGrid Holistic Test Description for Validating Cyber-Physical Energy Systems

Abstract

1. Introduction

1.1. Challenges in Testing of Cyber-Physical Energy Systems

1.2. Possible Harmonisation

1.3. Scope and Approach

- (a) How can experiments be framed to account for the multi-disciplinary setting and wide variety of employed experimental platforms?

- (b) To what extent can a template-based approach to experiment description enhance the quality of experiment planning, experiments, and reporting?

2. Background and Related Work

2.1. Related Work

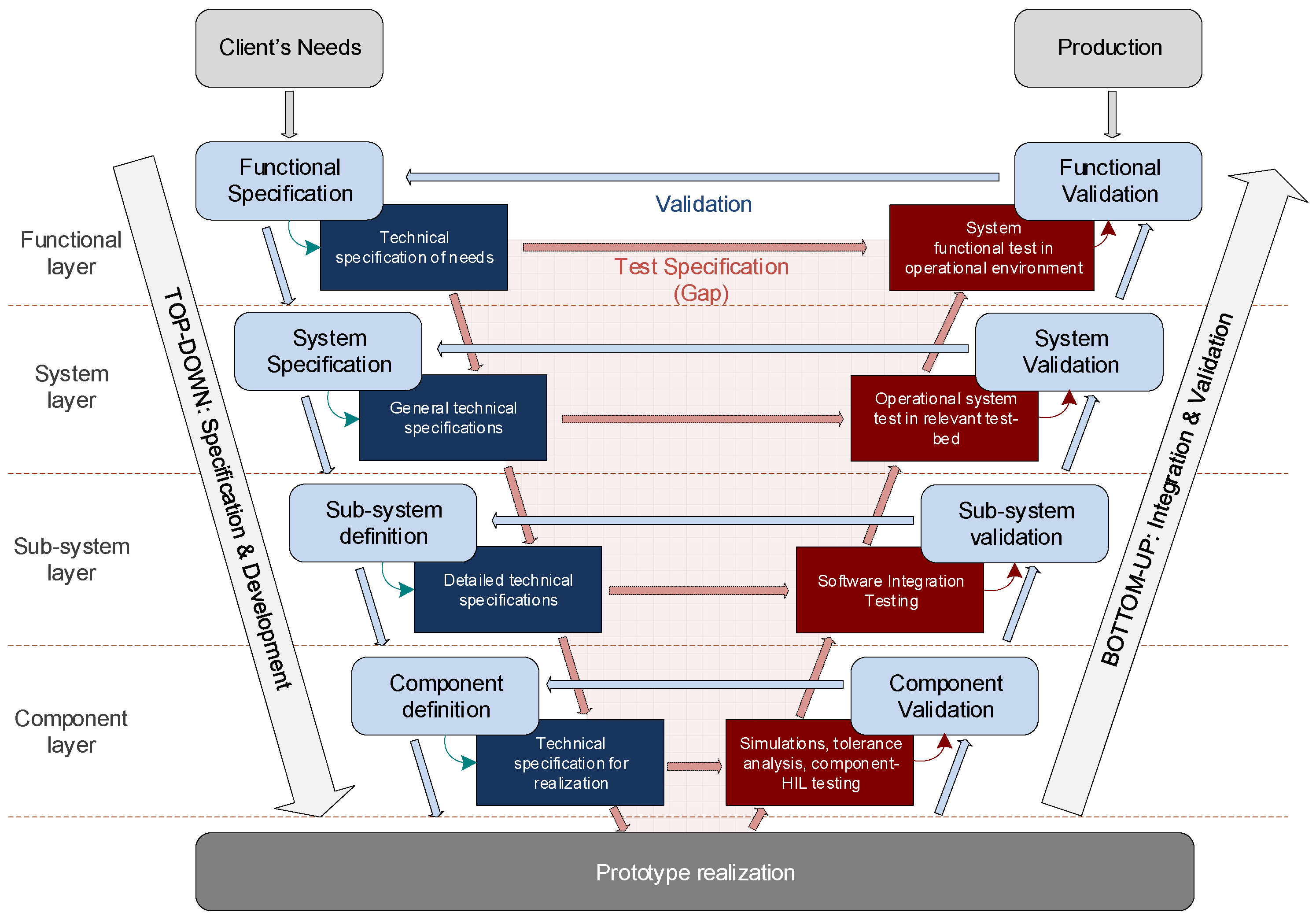

2.2. Test Purposes: Testing in a Technical Development Context

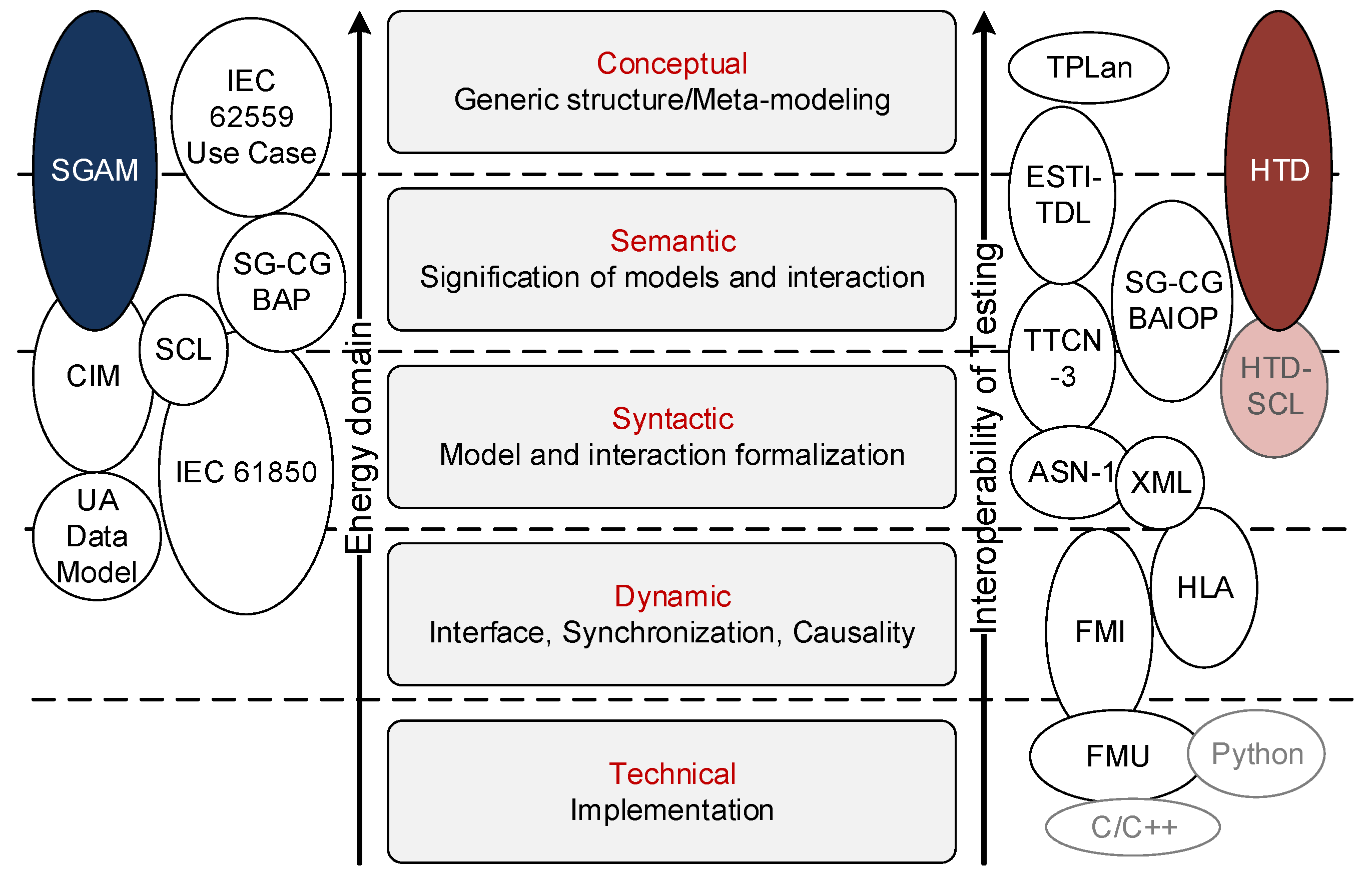

2.3. The Relation between Testing and Energy System Semantics

- (1) The energy system semantic: It represents the behaviour and the semantic relations among the different actors of the system. Depending on the energy system considered and the information models used, this semantic represents the application-relevant purposes, components, and structures of the system (i.e., the “real world application”).

- (2) The testing semantic: It is the purpose and content of a single test or a set of tests. It relates the real-world motivation for a test to the concrete system configurations and functions to be included in an experiment.

2.3.1. Energy System Semantic

2.3.2. Testing Semantics

2.4. Testbed Technology

- Co-simulation is the concept of composing coupled simulators that cooperate with each other while running on their own solvers and models. Co-simulation is particularly useful for coupling models with different time scales (transient/steady state) or of distinct natures (continuous/discrete event), possibly in different domains (e.g., power and ICT, electrical and thermal) [31,32,33].

- Hardware-in-the-Loop (HIL) is the experimental technique in which a Hardware under Test (HUT) is coupled with a real-time simulation so that it can be tested under realistic conditions. HIL supports thorough study of the transient and steady-state operation of the HUT under realistic, yet safe and repeatable, conditions, as well as testing of a HUT in faulty and extreme conditions without damaging laboratory equipment [34,35].

- Remote laboratory coupling and integration of HIL and co-simulation in a holistic framework [36,37,38,39,40,41,42] enables a more complete and realistic consideration of CPES, and the coupling of existing physical labs with simulated environments in an integrated and consistent manner. Several architectures have been proposed to support such cross-infrastructure deployment: a real-time database as the common interchange point [43], a dedicated message bus [37,40], Supervisory Control and Data Acquisition (SCADA) as a service [44], and direct peer-to-peer streams [38] using a real-time protocol. Besides providing the required technical base for implementation, these architectures also pave the way to international collaboration by combining several infrastructures and/or replacing unavailable components/systems with simulation, increasing the realism of validation and demonstration environments.
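The co-simulation concept described above can be illustrated with a toy master loop. This is only a minimal sketch, not tied to any co-simulation framework: the two models (`ThermalStore`, `Thermostat`), their parameters, and the function `run_cosimulation` are hypothetical and chosen purely for illustration. A continuous model advances with its own internal step size, a discrete controller reacts to measurements, and the two exchange data only at fixed synchronisation points.

```python
from dataclasses import dataclass


@dataclass
class ThermalStore:
    """Continuous model with its own solver step (hypothetical parameters)."""
    temperature: float = 20.0  # degC
    step_size: float = 1.0     # s, internal integration step
    heater_on: bool = False

    def advance(self, now: float, until: float) -> None:
        # Explicit Euler integration using the simulator's own step size.
        t = now
        while t < until - 1e-9:
            heating = 0.5 if self.heater_on else 0.0
            loss = 0.01 * (self.temperature - 20.0)
            self.temperature += self.step_size * (heating - loss)
            t += self.step_size


@dataclass
class Thermostat:
    """Discrete controller with hysteresis (hypothetical thresholds)."""
    low: float = 40.0
    high: float = 45.0
    command: bool = True

    def update(self, measured_temperature: float) -> bool:
        if measured_temperature >= self.high:
            self.command = False
        elif measured_temperature <= self.low:
            self.command = True
        return self.command


def run_cosimulation(duration: float, sync_interval: float) -> list[tuple[float, float, bool]]:
    """Master loop: the simulators exchange data only at synchronisation points."""
    plant, controller = ThermalStore(), Thermostat()
    log = []
    now = 0.0
    while now < duration:
        # Data exchange: the controller reads the plant output and sets its input.
        plant.heater_on = controller.update(plant.temperature)
        # The plant then runs on its own until the next synchronisation point.
        plant.advance(now=now, until=now + sync_interval)
        now += sync_interval
        log.append((now, plant.temperature, plant.heater_on))
    return log
```

Calling `run_cosimulation(600.0, 10.0)` yields one log entry per synchronisation interval. Lengthening `sync_interval` makes the controller react later, which is one simple way to see how coupling choices influence results.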

2.5. Test Design, Sampling and Evaluation Methodology (Design of Experiments)

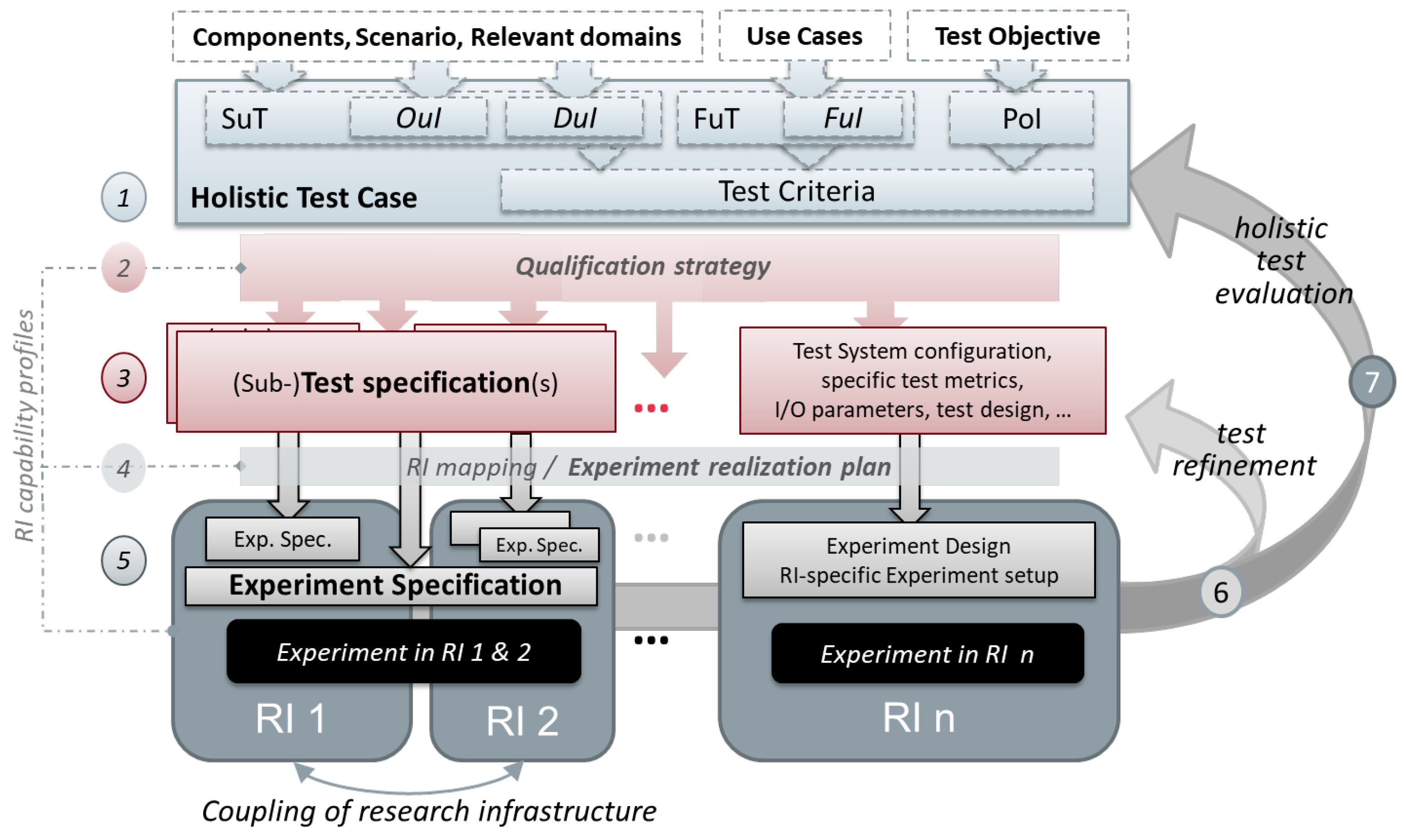

3. Guideline to Holistic Test Description

3.1. Overview of HTD Elements

- Test Case (TC)

- Qualification Strategy (QS)

- Test Specification (TS)

- Experiment Realisation Plan

- Experiment Specification (ES)

- Results Annotation

- Experiment Evaluation
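To make the relation between the first elements of this list more tangible, the sketch below models a Test Case, its Test Specifications, and their Experiment Specifications as nested records. It is an illustration only: the class names, field names, identifiers, and the one-to-many nesting are assumptions made for this example, not the official HTD template structure. The example content is taken from the EFCC illustration in Section 4.1.

```python
from dataclasses import dataclass, field


@dataclass
class HTDExperimentSpecification:
    identifier: str
    realisation: str  # hypothetical field, e.g. the type of laboratory setup


@dataclass
class HTDTestSpecification:
    identifier: str
    target_metrics: list[str] = field(default_factory=list)
    experiments: list[HTDExperimentSpecification] = field(default_factory=list)


@dataclass
class HTDTestCase:
    identifier: str
    object_under_investigation: str
    test_criteria: list[str] = field(default_factory=list)
    specifications: list[HTDTestSpecification] = field(default_factory=list)

    def experiment_ids(self) -> list[str]:
        """Trace every experiment back to this test case."""
        return [e.identifier for s in self.specifications for e in s.experiments]


def efcc_example() -> HTDTestCase:
    """Build a record for the EFCC example (identifiers are hypothetical)."""
    spec = HTDTestSpecification(
        identifier="TS-1",
        target_metrics=[
            "grid frequency containment following a genuine frequency event",
            "resource deployed in relation to event severity and LC settings",
        ],
        experiments=[
            HTDExperimentSpecification("ES-1", "CHIL"),
            HTDExperimentSpecification("ES-2", "PHIL"),
        ],
    )
    return HTDTestCase(
        identifier="TC-EFCC",
        object_under_investigation="local controllers (LCs)",
        test_criteria=[
            "LC detects grid frequency disturbances necessitating a response",
            "LC remains stable against disturbances not requiring a response",
            "LC deploys the expected amount of resource",
        ],
        specifications=[spec],
    )
```

Keeping the experiments nested under the test case is one possible way to preserve traceability from each experiment back to the test objective.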

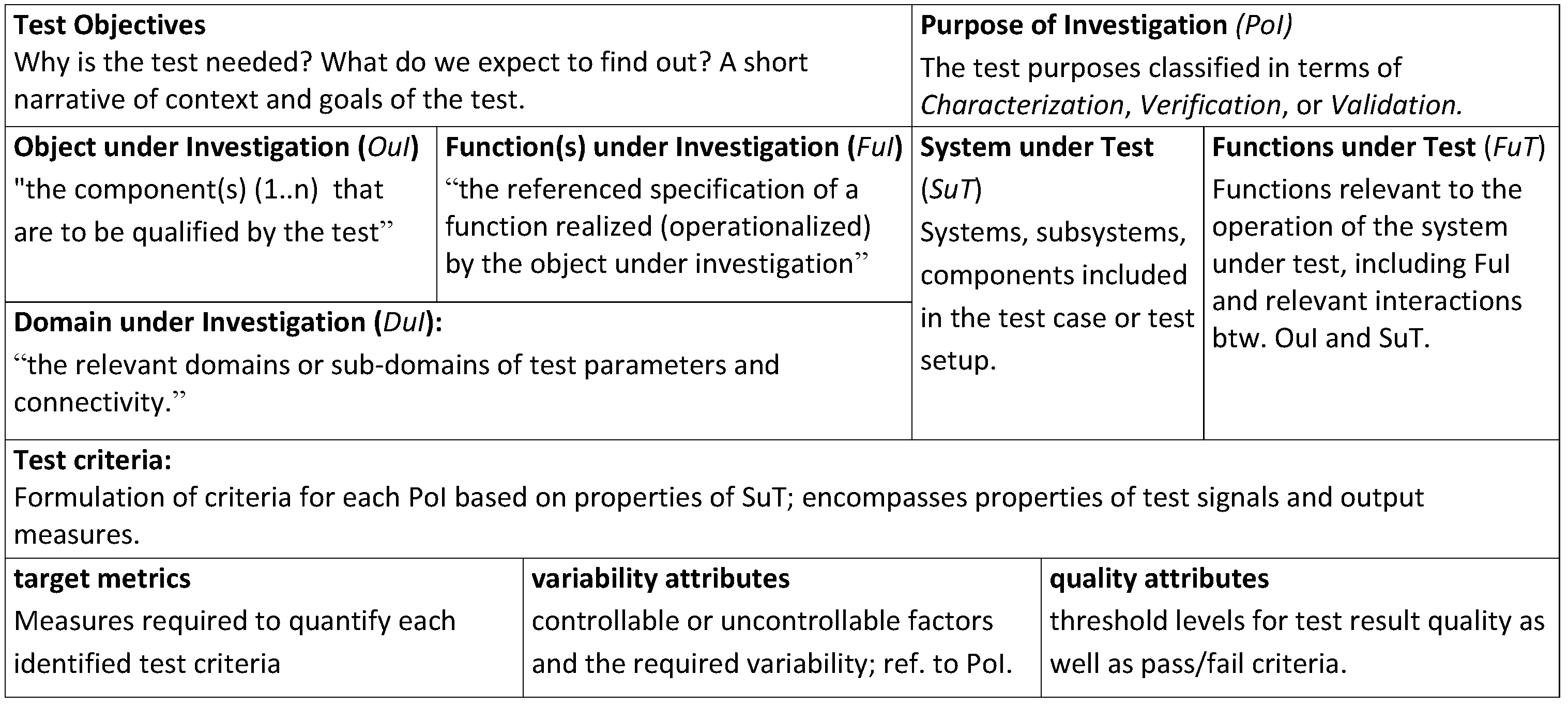

3.1.1. Test Case

3.1.2. Qualification Strategy

3.1.3. Test Specification

3.1.4. Experiment Realisation Plan

3.1.5. Experiment Specification

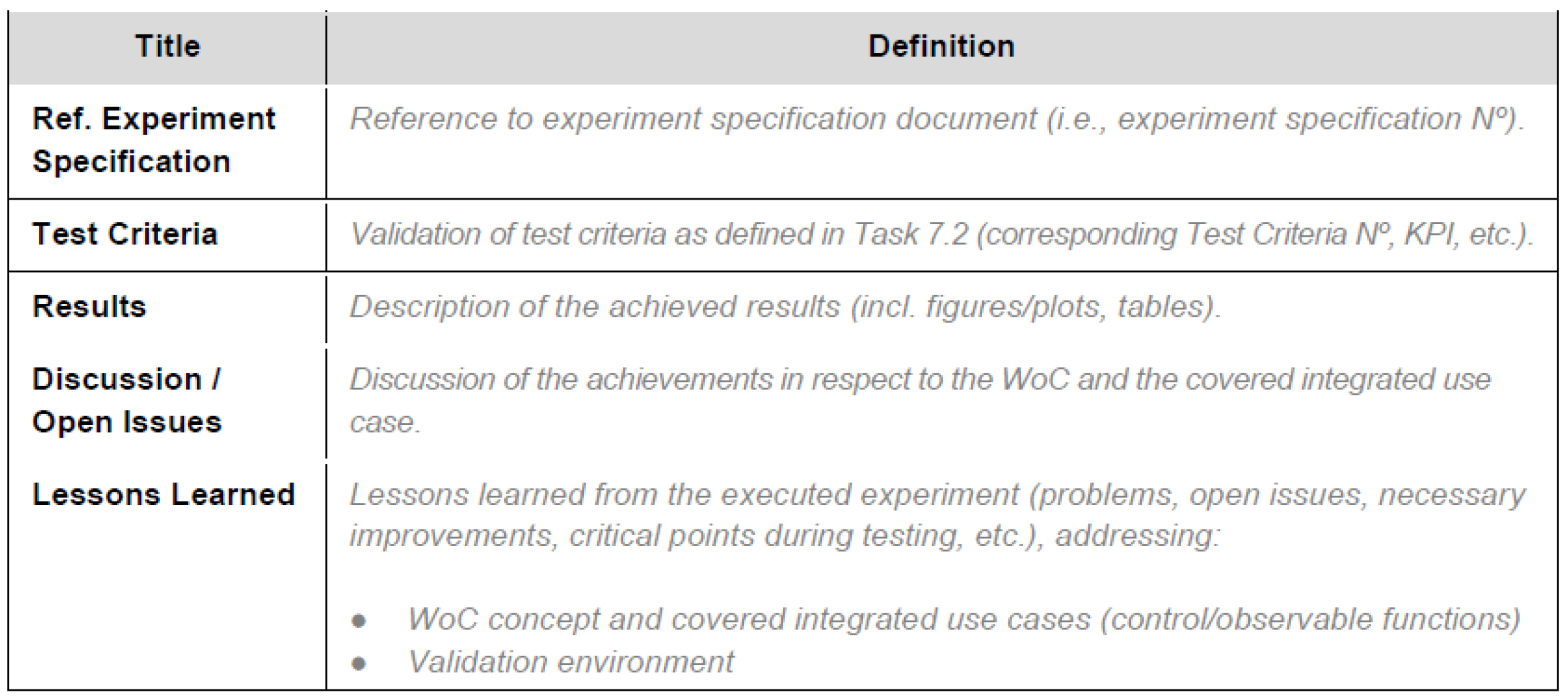

3.1.6. Results Annotation

3.2. Key Aspects in Developing a Holistic Test Description

3.2.1. Formalising Test Objectives: From PoI to TCR, to Evaluation Metrics

- Verification

- Validation

- Characterisation

- Validation test: Functional requirements and passing criteria are provided as abstract measures, where experiment results are subject to some expert interpretation to decide upon pass/no-pass. Implication for Test Case: Test criteria are formulated qualitatively; a qualitative passing criterion is required (consider who is the expert qualified to pass the judgement). Example: Is a controller ready for deployment in the field? Relevant experts here: development or field engineer.

- Verification test: Tests where requirements are formulated as quantitative measures and thresholds of acceptable values are quantified. Implication for Test Case: Test criteria are formal and quantified. A passing threshold is defined. Examples: (i) Standard conformance testing; and (ii) passing the set of tests (test harness) applied in software unit-testing.

- Characterisation test: Here, a measure is given without specific requirements for passing the test. Implication for Test Case: Test criteria are quantified, typically given as key metrics or performance indicators. A passing threshold is not defined, but a metric for expected result quality can be provided (validity of experiment, not of OuI). Examples: Characterising performance of a system; characterising the physical parameters of a component for developing an equivalent simulation model.
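The practical difference between the three test types lies in how a result is turned into a verdict. The following sketch shows one possible encoding. The names `ObjectiveType` and `evaluate`, the use of the sample mean as the metric, and the "lower is better" threshold rule are assumptions for illustration only.

```python
from enum import Enum
from statistics import mean
from typing import Callable, Optional, Sequence


class ObjectiveType(Enum):
    VERIFICATION = "verification"
    VALIDATION = "validation"
    CHARACTERISATION = "characterisation"


def evaluate(
    objective: ObjectiveType,
    samples: Sequence[float],
    threshold: Optional[float] = None,
    expert_verdict: Optional[Callable[[float], bool]] = None,
) -> dict:
    """Return the metric and, where the test type defines one, a verdict."""
    metric = mean(samples)  # assumed metric: mean of the measured samples
    if objective is ObjectiveType.VERIFICATION:
        # Quantified criterion: a passing threshold must be defined.
        if threshold is None:
            raise ValueError("verification requires a passing threshold")
        return {"metric": metric, "passed": metric <= threshold}
    if objective is ObjectiveType.VALIDATION:
        # Qualitative criterion: the verdict is delegated to an expert.
        if expert_verdict is None:
            raise ValueError("validation requires an expert judgement")
        return {"metric": metric, "passed": expert_verdict(metric)}
    # Characterisation: the metric is reported without a pass/no-pass decision.
    return {"metric": metric, "passed": None}
```

For example, `evaluate(ObjectiveType.CHARACTERISATION, [1.0, 3.0])` reports the metric with `passed` left as `None`, reflecting that no passing threshold is defined for this test type.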

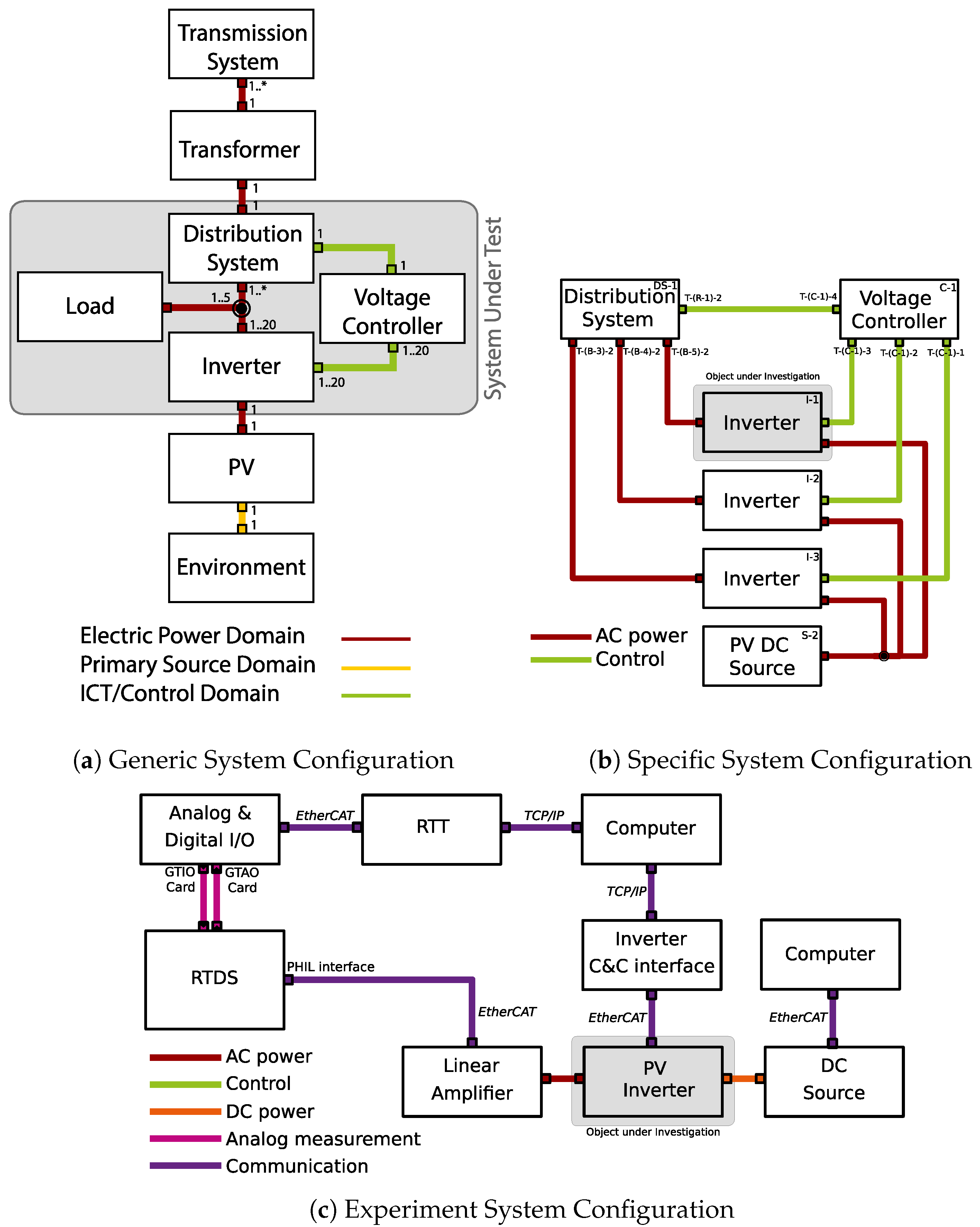

3.2.2. Configuration for Experiments: Abstract System Concept to Experiment Configuration

3.2.3. Experiment Realisation Plan

- precise: The respective system aspect has to be matched 1:1 (e.g., exactly the same model of electric vehicle, the exact grid topology, the same communication protocol, etc.).

- equivalent: The respective aspect has to be matched equivalently (e.g., an electrical vehicle with the same charger and battery size, a grid topology with the same number of nodes, a communication protocol with the same or a better fidelity, etc.).

- nominal: The respective aspect can be matched with some deviations, but they should only lead to marginal influences on objective and results (e.g., a controllable load simulating an electrical vehicle, a grid connection providing similar load/voltage characteristics, some means of communication without regard for the specifications, etc.).

- irrelevant: The respective system aspect does not influence the test objective and results.
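The four precision levels lend themselves to a simple ordered check of whether a candidate laboratory setup can host a given test. The sketch below assumes that the levels are strictly ordered from `irrelevant` to `precise` and that a setup satisfies a requirement when it matches an aspect at least as strictly as required. Both the ordering rule and the names `Precision` and `unmet_aspects` are assumptions for illustration, and the offered levels in the usage note are invented.

```python
from enum import IntEnum


class Precision(IntEnum):
    """Precision levels, ordered from least to most demanding (assumed order)."""
    IRRELEVANT = 0
    NOMINAL = 1
    EQUIVALENT = 2
    PRECISE = 3


def unmet_aspects(
    required: dict[str, Precision], offered: dict[str, Precision]
) -> list[str]:
    """List the system aspects a laboratory setup cannot match strictly enough."""
    return [
        aspect
        for aspect, level in required.items()
        # An aspect the setup does not describe is treated as not matched at all.
        if offered.get(aspect, Precision.IRRELEVANT) < level
    ]
```

With `required = {"Grid topology": Precision.PRECISE, "Communication protocols": Precision.IRRELEVANT, "Latency": Precision.PRECISE}` and a setup offering `{"Grid topology": Precision.PRECISE, "Latency": Precision.EQUIVALENT}`, the function returns `["Latency"]`: the setup could host the test only if the latency match were improved.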

3.2.4. Systematically Quantified Test Results: Design of Experiments and Qualification Strategy

4. Application of Holistic Test Description

- reproducibility of experiments in different laboratories, as flexibility in the experiment realisation can be achieved;

- self-contained sharing of test requirements across different test organisations, directly based on HTD documentation;

- support for the scoping of simulation models as part of a test system;

- traceability of the experimental procedures, enabling, for example, reproduction and round robin testing as a pre-cursor to developing standardised test procedures;

- repository creation and streamlining of similar and repeated test processes, which retains domain expertise in the repository;

- creation of modular test specifications, which in turn enables re-use of test components, and supports test automation; and

- planning and coordination of complex tests involving multiple experiments.

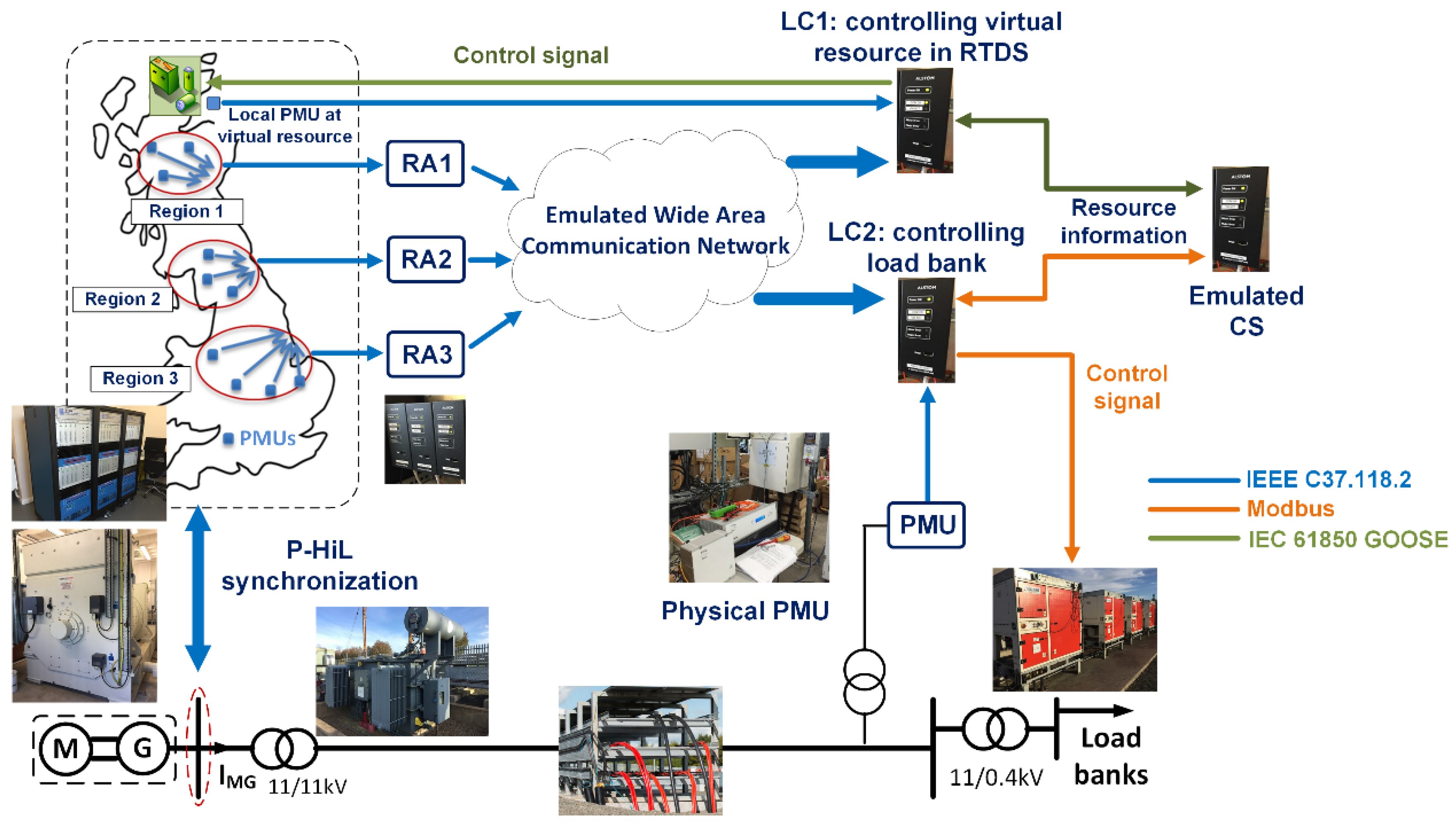

4.1. Illustration Example

- Enabling repeatability of the test using different HIL implementations: Characteristics of different HIL setups involving a digital grid simulator and the control system under test are examined, particularly to understand their impact on test repeatability.

- Enabling the execution of the test in different research infrastructures using different test setups: The focus is on how a unified approach to the test requirements specification facilitates independent, yet complementary experiments.

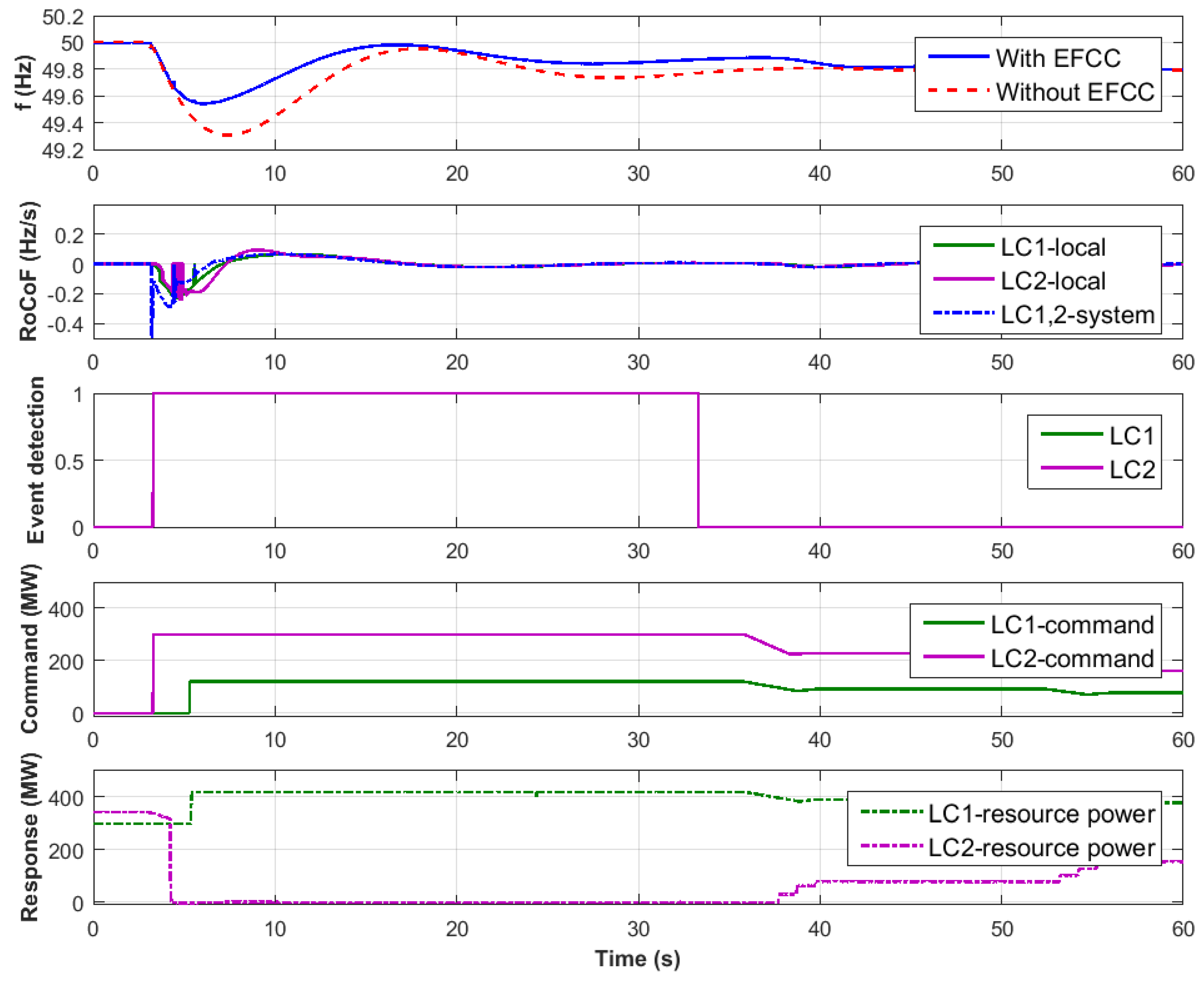

4.1.1. Enhanced Frequency Control Capability (EFCC) Performance Verification

- Verification that the EFCC control scheme is capable of identifying grid frequency events correctly and deploying an appropriate amount of response to contain the frequency deviation: Verifying scheme sensitivity to frequency events and stability against non-frequency events (e.g., faults) are the focus here.

- Quantification of the enhancement of frequency containment using the EFCC control (i.e., compared to relying solely on primary frequency response): Speed and extent of frequency containment are the focus here.

4.1.2. EFCC Test Case Description

- OuI: Although a wide-area control scheme is being tested, it is the LCs which deploy the energy resources during grid frequency disturbances that are the focus of the test.

- FuI/FuT: Following on from the OuI definition, the functionality under investigation is the LCs' ability to determine and deploy the appropriate amount of energy resources in response to a detected grid frequency disturbance. Note that other functions are present and operational during testing (e.g., the RA aggregation of PMU measurements). These are referred to as the functions under test (FuT), which are an essential part of the SuT, but are not the focus of the test (i.e., a direct verification of their performance is not performed).

- Verify that the LC successfully detects grid frequency disturbances necessitating a response.

- Verify that the LC remains stable against grid frequency disturbances not requiring a response (e.g., over-frequency resulting from a short circuit).

- Verify that the LC deploys the expected amount of resource with reference to the severity of the disturbance.

4.1.3. EFCC Test Specification

- amount of grid frequency containment following a genuine grid frequency event; and

- amount of resource deployed in relation to the event severity and LC settings.
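Both target metrics can be computed directly from recorded time series. The sketch below is a minimal illustration, assuming a 50 Hz nominal frequency and that containment is judged by the largest deviation from nominal. The function names and the sample values in the usage note are hypothetical and are not results from the EFCC tests.

```python
from typing import Sequence

NOMINAL_FREQUENCY_HZ = 50.0  # assumption: 50 Hz system


def max_deviation(trace_hz: Sequence[float]) -> float:
    """Largest absolute frequency deviation from nominal in a recorded trace."""
    return max(abs(f - NOMINAL_FREQUENCY_HZ) for f in trace_hz)


def containment_improvement(
    baseline_hz: Sequence[float], controlled_hz: Sequence[float]
) -> float:
    """Reduction in maximum deviation relative to the no-control case (Hz)."""
    return max_deviation(baseline_hz) - max_deviation(controlled_hz)


def deployed_fraction(deployed_mw: float, available_mw: float) -> float:
    """Share of the available LC resource that was actually deployed."""
    if available_mw <= 0:
        raise ValueError("available resource must be positive")
    return deployed_mw / available_mw
```

As a usage illustration with made-up traces, a baseline of `[50.0, 49.5, 49.75]` and a controlled run of `[50.0, 49.75, 49.875]` give an improvement of 0.25 Hz. Comparing a controlled run against the no-control case is the role played by Test ID 0.1 in the test table.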

4.1.4. EFCC Experiment Specification

4.1.5. Reflection

- Semantic demarcation between the test objectives and the implementation of the experiment: so long as the test objectives (i.e., OuI) and the ensuing performance criteria to be evaluated are defined, flexibility in the experiment realisation can be achieved. Thus, reproducibility in different HIL setups is possible. This is evidenced by achieving the verification of controllers’ performance connected to physical resources as well as simulated resources. On a larger scale, interfaces in the experiment could span across multiple laboratories.

- The HTD documentation is a practical means of sharing the test requirements across different test organisations or experiment implementations. By extension, traceability of the experimental procedure to the OuI is achieved, which would enable round-robin testing as a pre-cursor to developing standardised test procedures. As presented above, conducting a CHIL experiment paved the way to a more comprehensive PHIL verification for the control system.

4.2. Challenges Addressed and Application Experience

- Difficulty of interpreting component connectivity from experiment descriptions

- Difficulty of replicating sequentially the target metrics and the variability attributes

- Shared understanding of test purpose across domains (e.g., what level of detail is relevant from one domain to cause a relevant influence in another domain)

- Lack of clarity on the domain boundaries

- Lack of comprehensive recording of the domain specific target metrics (e.g., measuring voltage level but not the communication delay during the execution of control system)

- Simulation models are abstract in nature, but abstraction levels vary

- Identification of suitable model-components for a co-simulation setup

- Re-use of simulation components/models

- Proper description and inclusion of all the relevant components to be characterised and validated

- Tracking changes that occur between multiple interdependent experiments

- Misunderstandings between expert groups from different locations

- Lack of full understanding of results in earlier stages with their related uncertainties

- Incompatibility of resolution and type of measurement data and control signals

- Black-box test setup on the other end without mutual test description procedure

- Lack of full understanding of how and where the measurements from a real-time experiment in the other RI are taken

4.3. Collected Application Evidence

5. Conclusions

- The HTD concepts are in part new and not fully in line with common usage; for example, “system under test”, “function under test/investigation”, and “object under investigation” all relate to the often used terms “system under test” (ETSI-TDL), “Device under Test” (frequently used in hardware testing), “Hardware under Test” (used in HIL context), etc. This creates communication challenges, which may be alleviated by improved training materials.

- Lack of guiding questions: Essentially, it is difficult to fill out the template ad-hoc, only based on the abstract HTD concepts, and not all fields are equally relevant. For example, the “precision of equipment or uncertainty measurement” may not always be part of the experiment planning. Additional guidelines may facilitate the learning process further, and establishing a community of experienced HTD users for knowledge sharing may be practical.

- An HTD-planned experiment may never have been carried out as documented in the templates: as plans change, experiment designs get updated along the way. While this situation cannot be changed, the HTD documentation process may be improved by a systematic versioning or referencing system to facilitate revealing the final experiments.

- Lack of tool integration: The system configuration annotation suffers from being a graphical dead-end. Tooling integration, e.g., between test system SSC and result evaluation, would also encourage detailing and updating test system and experiment descriptions.

Author Contributions

Funding

Conflicts of Interest

References

- Colak, I.; Fulli, G.; Sagiroglu, S.; Yesilbudak, M.; Covrig, C.F. Smart grid projects in Europe: Current status, maturity and future scenarios. Appl. Energy 2015, 152, 58–70.

- Mankins, J.C. Technology readiness assessments: A retrospective. Acta Astronaut. 2009, 65, 1216–1223.

- Strasser, T.; Pröstl Andrén, F.; Lauss, G.; Bründlinger, R.; Brunner, H.; Moyo, C.; Seitl, C.; Rohjans, S.; Lehnhoff, S.; Palensky, P.; et al. Towards holistic power distribution system validation and testing—An overview and discussion of different possibilities. E I Elektrotechnik Inf. 2017, 134, 71–77.

- Brundlinger, R.; Strasser, T.; Lauss, G.; Hoke, A.; Chakraborty, S.; Martin, G.; Kroposki, B.; Johnson, J.; de Jong, E. Lab tests: Verifying that smart grid power converters are truly smart. IEEE Power Energy Mag. 2015, 13, 30–42.

- Steinbrink, C.; Lehnhoff, S.; Rohjans, S.; Strasser, T.I.; Widl, E.; Moyo, C.; Lauss, G.; Lehfuss, F.; Faschang, M.; Palensky, P.; et al. Simulation-based validation of smart grids–status quo and future research trends. In Proceedings of the International Conference on Industrial Applications of Holonic and Multi-Agent Systems, Lyon, France, 28–30 August 2017; pp. 171–185.

- Van der Meer, A.A.; Palensky, P.; Heussen, K.; Bondy, D.E.M.; Gehrke, O.; Steinbrink, C.; Blank, M.; Lehnhoff, S.; Widl, E.; Moyo, C.; et al. Cyber-physical energy systems modeling, test specification, and co-simulation based testing. In Proceedings of the 2017 Workshop on Modeling and Simulation of Cyber-Physical Energy Systems (MSCPES), Pittsburgh, PA, USA, 21–21 April 2017; pp. 1–9.

- Blank, M.; Lehnhoff, S.; Heussen, K.; Bondy, D.E.M.; Moyo, C.; Strasser, T. Towards a foundation for holistic power system validation and testing. In Proceedings of the 2016 IEEE 21st International Conference on Emerging Technologies and Factory Automation (ETFA), Berlin, Germany, 6–9 September 2016; pp. 1–4.

- IEC 62559-2: Use Case Methodology—Part 2: Definition of the Templates for Use Cases, Actor List and Requirements List; Technical Report; International Electrotechnical Commission: Geneva, Switzerland, 2015.

- Gottschalk, M.; Uslar, M.; Delfs, C. The Use Case and Smart Grid Architecture Model Approach: The IEC 62559-2 Use Case Template and the SGAM Applied in Various Domains; Springer: Cham, Switzerland, 2017.

- Uslar, M.; Rohjans, S.; Neureiter, C.; Pröstl Andrén, F.; Velasquez, J.; Steinbrink, C.; Efthymiou, V.; Migliavacca, G.; Horsmanheimo, S.; Brunner, H.; et al. Applying the Smart Grid Architecture Model for Designing and Validating System-of-Systems in the Power and Energy Domain: A European Perspective. Energies 2019, 12, 258.

- Kaplan, D. Distributed Energy Resources Manager. U.S. Patent 12/905,292, 21 April 2011.

- Wang, J.; Chen, C.; Lu, X. Guidelines for Implementing Advanced Distribution Management Systems-Requirements for DMS Integration with DERMS and Microgrids; Technical Report; Argonne National Lab.(ANL): Argonne, IL, USA, 2015.

- Vogel, S.; Stevic, M.; Kadavil, R.; Mohanpurkar, M.; Koralewicz, P.; Gevorgian, V.; Hovsapian, R.; Monti, A. Distributed Real-Time Simulation and its Applications to Wind Energy Research. In Proceedings of the 2018 IEEE International Conference on Probabilistic Methods Applied to Power Systems (PMAPS), Boise, ID, USA, 24–28 June 2018; pp. 1–6.

- Palmintier, B.; Lundstrom, B.; Chakraborty, S.; Williams, T.; Schneider, K.; Chassin, D. A Power Hardware-in-the-Loop Platform With Remote Distribution Circuit Cosimulation. IEEE Trans. Ind. Electron. 2015, 62, 2236–2245.

- Ulrich, A.; Jell, S.; Votintseva, A.; Kull, A. The ETSI Test Description Language TDL and its application. In Proceedings of the 2014 2nd International Conference on Model-Driven Engineering and Software Development (MODELSWARD), Lisbon, Portugal, 7–9 January 2014; pp. 601–608.

- Strasser, T.I.; Moyo, C.; Bründlinger, R.; Lehnhoff, S.; Blank, M.; Palensky, P.; van der Meer, A.A.; Heussen, K.; Gehrke, O.; Rodriguez, J.E.; et al. An integrated research infrastructure for validating cyber-physical energy systems. In Proceedings of the International Conference on Industrial Applications of Holonic and Multi-Agent Systems, Lyon, France, 28–30 August 2017; pp. 157–170.

- Heussen, K.; Bondy, D.; Nguyen, V.; Blank, M.; Klingenberg, T.; Kulmala, A.; Abdulhadi, I.; Pala, D.; Rossi, M.; Carlini, C.; et al. D-NA5.1 Smart Grid Configuration Validation Scenario Description Method; Project Deliverable, H2020 ERIGrid (GA No 654113). 2017. Available online: https://cordis.europa.eu/project/rcn/198653/results/en (accessed on 13 June 2019).

- Papaioannou, I.; Kotsakis, E.; Masera, M. Smart Grid Interoperability Testing Methodology: A Unified Approach Towards a European Framework for Developing Interoperability Testing Specifications. In Proceedings of the EAI International Conference on Smart Cities Interoperability and Standardization, Helsinki, Finland, 29 November 2017.

- European Telecommunications Standards Institute. Methods for Testing and Specification (MTS); TPLan: A Notation for Expressing Test Purposes; Technical Report, ETSI ES 202 553 V1.2.1; ETSI: Sophia-Antipolis, France, 2009.

- European Telecommunications Standards Institute. ETSI Test Description Language; Technical Report. 2018. Available online: https://tdl.etsi.org (accessed on 13 June 2019).

- Centre for Testing and Interoperability. TTCN-3 Tutorial; Technical Report; ETSI: Sophia-Antipolis, France, 2013.

- Forsberg, K.; Mooz, H. System Engineering for Faster, Cheaper, Better. In INCOSE International Symposium; Wiley Online Library: Brighton, UK, 1999; Volume 9, pp. 924–932.

- International Electrotechnical Commission. Application Integration at Electric Utilities—System Interfaces for Distribution Management—Part 11: Common Information Model (CIM) Extensions for Distribution; Technical Report, TC 57—Power System Management and Associated Information Exchange; International Electrotechnical Commission: Geneva, Switzerland, 2013.

- International Electrotechnical Commission. Energy Management System Application Program Interface (EMS-API)—Part 301: Common Information Model (CIM) Base; Technical Report, TC 57—Power System Management and Associated Information Exchange; International Electrotechnical Commission: Geneva, Switzerland, 2014.

- International Electrotechnical Commission. OPC Unified Architecture—Part 1: Overview and Concepts; Technical Report, TC 65/SC 65E-TR 62541-1:2010; International Electrotechnical Commission: Geneva, Switzerland, 2010.

- International Electrotechnical Commission. IEC61850—Power Utility Automation; Technical Report, TC 57—Power System Management and Associated Information Exchange; International Electrotechnical Commission: Geneva, Switzerland, 2003.

- CEN-CENELEC-ETSI Smart Grid Coordination Group. Methodologies to Facilitate Smart Grid System Interoperability through Standardization, System Design and Testing; Technical Report; CEN-CENELEC-ETSI: Brussels, Belgium, 2014.

- Schieferdecker, I. Test automation with ttcn-3-state of the art and a future perspective. In Proceedings of the IFIP International Conference on Testing Software and Systems, Natal, Brazil, 8–10 November 2010; pp. 1–14.

- Zeiss, B.; Kovacs, A.; Pakulin, N.; Stanca-Kaposta, B. A conformance test suite for TTCN-3 tools. Int. J. Softw. Tools Technol. Transf. 2014, 16, 285–294.

- Broy, M.; Jonsson, B.; Katoen, J.P.; Leucker, M.; Pretschner, A. Model-based testing of reactive systems. In Volume 3472 of Springer LNCS; Springer: Berlin/Heidelberg, Germany, 2005.

- Palensky, P.; van der Meer, A.A.; López, C.D.; Jozeph, A.; Pan, K. Co-Simulation of Intelligent Power Systems—Fundamentals, software architecture, numerics, and coupling. IEEE Ind. Electron. Mag. 2017, 11, 34–50.

- Nguyen, V.H.; Besanger, Y.; Tran, Q.T.; Nguyen, T.L. On Conceptual Structuration and Coupling Methods of Co-Simulation Frameworks in Cyber-Physical Energy System Validation. Energies 2017, 10, 1977.

- Faruque, M.O.; Sloderbeck, M.; Steurer, M.; Dinavahi, V. Thermo-electric co-simulation on geographically distributed real-time simulators. In Proceedings of the 2009 IEEE Power Energy Society General Meeting, Calgary, AB, Canada, 26–30 July 2009; pp. 1–7.

- Guillaud, X.; Faruque, M.O.; Teninge, A.; Hariri, A.H.; Vanfretti, L.; Paolone, M.; Dinavahi, V.; Mitra, P.; Lauss, G.; Dufour, C.; et al. Applications of Real-Time Simulation Technologies in Power and Energy Systems. IEEE Power Energy Technol. Syst. J. 2015, 2, 103–115.

- Lauss, G.; Faruque, M.O.; Schoder, K.; Dufour, C.; Viehweider, A.; Langston, J. Characteristics and Design of Power Hardware-in-the-Loop Simulations for Electrical Power Systems. IEEE Trans. Ind. Electron. 2016, 63, 406–417.

- Nguyen, V.H.; Besanger, Y.; Tran, Q.T.; Nguyen, T.L.; Boudinet, C.; Brandl, R.; Strasser, T. Using Power-Hardware-in-the-loop Experiments together with Co-simulation in a holistic approach for cyber physical energy system validation. In Proceedings of the 2017 IEEE PES Innovative Smart Grid Technologies Conference Europe (ISGT-Europe), Torino, Italy, 26–29 September 2017.

- Lehfuss, F.; Lauss, G.; Seitl, C.; Leimgruber, F.; Nohrer, M.; Strasser, T.I. Coupling of Real-Time and Co-Simulation for the Evaluation of the Large Scale Integration of Electric Vehicles into Intelligent Power Systems. In Proceedings of the 2017 IEEE Vehicle Power and Propulsion Conference (VPPC), Belfort, France, 11–14 December 2017.

- Stevic, M.; Estebsari, A.; Vogel, S.; Pons, E.; Bompard, E.; Masera, M.; Monti, A. Multi-site European framework for real-time co-simulation of power systems. IET Gener. Transm. Distrib. 2017, 11, 4126–4135.

- Lundstrom, B.; Chakraborty, S.; Lauss, G.; Bründlinger, R.; Conklin, R. Evaluation of system-integrated smart grid devices using software- and hardware-in-the-loop. In Proceedings of the 2016 IEEE Power Energy Society Innovative Smart Grid Technologies Conference (ISGT), Minneapolis, MN, USA, 6–9 September 2016; pp. 1–5.

- Marten, F.; Mand, A.; Bernard, A.; Mielsch, B.; Vogt, M. Result processing approaches for large smart grid co-simulations. Comput. Sci. Res. Dev. 2017, 33, 1–7.

- Vellaithurai, C.B.; Biswas, S.S.; Liu, R.; Srivastava, A. Real Time Modeling and Simulation of Cyber-Power System. In Cyber Physical Systems Approach to Smart Electric Power Grid; Khaitan, S.K., McCalley, J.D., Liu, C.C., Eds.; Springer: Berlin/Heidelberg, Germany, 2015; pp. 43–74.

- Liu, R.; Vellaithurai, C.; Biswas, S.S.; Gamage, T.T.; Srivastava, A.K. Analyzing the Cyber-Physical Impact of Cyber Events on the Power Grid. IEEE Trans. Smart Grid 2015, 6, 2444–2453.

- Franchioni, G.; Heckmann, W.; Brundlinger, R.; Numminen, S.; Mayr, C.; Martin, N.; Strasser, T. Final Report Summary; Project Report, FP7 DERri (GA No 228449). 2014. Available online: https://cordis.europa.eu/project/rcn/91201/reporting/en (accessed on 13 June 2019).

- Nguyen, V.H.; Tran, Q.T.; Besanger, Y. SCADA as a service approach for interoperability of micro-grid platforms. Sustain. Energy Grids Netw. 2016, 8, 26–36.

- Fisher, R.A. Design of experiments. Br. Med. J. 1936, 1, 554.

- Robbins, H. Some aspects of the sequential design of experiments. In Herbert Robbins Selected Papers; Springer: Cham, Switzerland, 1985; pp. 169–177.

- Taguchi, G.; Yokoyama, Y.; Wu, Y. Taguchi Methods: Design of Experiments; American Supplier Institute: Cairo, Egypt, 1993; Volume 4.

- Kleijnen, J.P. Design and analysis of simulation experiments. In Proceedings of the International Workshop on Simulation, Vienna, Austria, 21–25 September 2015; pp. 3–22.

- Giunta, A.; Wojtkiewicz, S.; Eldred, M. Overview of modern design of experiments methods for computational simulations. In Proceedings of the 41st Aerospace Sciences Meeting and Exhibit, Reno, NV, USA, 6–9 January 2003; p. 649.

- Van der Meer, A.A.; Steinbrink, C.; Heussen, K.; Bondy, D.E.M.; Degefa, M.Z.; Andrén, F.P.; Strasser, T.I.; Lehnhoff, S.; Palensky, P. Design of experiments aided holistic testing of cyber-physical energy systems. In Proceedings of the 2018 Workshop on Modeling and Simulation of Cyber-Physical Energy Systems (MSCPES), Porto, Portugal, 10–10 April 2018; pp. 1–7.

- Holistic Test Description Templates, ERIGrid. 2019. Available online: https://github.com/ERIGrid/Holistic-Test-Description (accessed on 13 June 2019).

- Pellegrino, L.; Arnold, G.; Brandl, R.; Nguyen, V.H.; Bourry, F.; Tran, Q.T.; Sansano, E.; Rikos, E.; Heussen, K.; Merino, J.; et al. D-JRA3.3 Improved Lab-Based System Integration Testing Methods; Project Deliverable, H2020 ERIGrid (GA No 654113). 2018. Available online: https://cordis.europa.eu/project/rcn/198653/results/en (accessed on 13 June 2019).

- Nguyen, V.H.; Bourry, F.; Tran, Q.T.; Brandl, R.; Sansano, E.; Rikos, E.; Heussen, K.; Merino, J.; Riaño, S.; Kotsampopoulos, P. D-JRA3.2 Extended Real-Time Simulation and Hardware-in-the-Loop Possibilities; Project Deliverable, H2020 ERIGrid (GA No 654113). 2018. Available online: https://cordis.europa.eu/project/rcn/198653/results/en (accessed on 13 June 2019).

- Gehrke, O.; Jensen, T. Definition of a Common Data Format; SmILES Deliverable Report EU Project No.: 730936; Technical University of Denmark: Lyngby, Denmark, 2018.

- Kulmala, A.; Mäki, K.; Rinne, E.; Gehrke, O.; Heussen, K.; Bondy, D.; Verga, M.; Sandroni, C.; Pala, D.; Nguyen, V.; et al. D-NA5.2 Partner Profiles; Project Deliverable, H2020 ERIGrid (GA No 654113). 2017. Available online: https://cordis.europa.eu/project/rcn/198653/results/en (accessed on 13 June 2019).

- Gehrke, O.; Heussen, K.; Merino, J.; Nguyen, V.; Kulmala, A.; Pala, D.; Rikos, E.; Bhandia, R.; van der Meer, A.; Brandl, R.; et al. D-JRA3.1 Improved Hardware and Software Interfaces; Project Deliverable, H2020 ERIGrid (GA No 654113). 2017. Available online: https://cordis.europa.eu/project/rcn/198653/results/en (accessed on 13 June 2019).

- Tabachnick, B.G.; Fidell, L.S. Experimental Designs Using ANOVA; Thomson/Brooks/Cole: San Francisco, CA, USA, 2007.

- Antony, J. Design of Experiments for Engineers and Scientists; Butterworth-Heinemann: Oxford, UK, 2003.

- Stamatis, D. Six Sigma and Beyond: Design of Experiments; Volume V, Six Sigma and Beyond. Vol. 5, Design of Experiments; St. Lucie Press: Boca Raton, FL, USA, 2002; p. 23.

- Montoya, J.; Brandl, R.; Vogt, M.; Marten, F.; Maniatopoulos, M.; Fabian, A. Asynchronous Integration of a Real-Time Simulator to a Geographically Distributed Controller Through a Co-Simulation Environment. In Proceedings of the IECON 2018—44th Annual Conference of the IEEE Industrial Electronics Society, Washington, DC, USA, 21–23 October 2018; pp. 4013–4018.

- Hong, Q.; Nedd, M.; Norris, S.; Abdulhadi, I.; Karimi, M.; Terzija, V.; Marshall, B.; Bell, K.; Booth, C. Fast frequency response for effective frequency control in power systems with low inertia. In Proceedings of the 14th IET International Conference on AC and DC Power Transmission, Chengdu, China, 28–30 June 2018; pp. 1–8.

- Hong, Q.; Abdulhadi, I.; Roscoe, A.; Booth, C. Application of a MW-scale motor-generator set to establish power-hardware-in-the-loop capability. In Proceedings of the 7th IEEE International Conference on Innovative Smart Grid Technologies, Torino, Italy, 26–29 September 2018.

- Hong, Q. Testing of the Enhanced Frequency Control Capability Scheme: Part 2—Wide Area Mode Tests; Technical Report; University of Strathclyde: Glasgow, UK, 2018.

- Widl, E.; Spiegel, M.; Findrik, M.; Bajraktari, A.; Bhandia, R.; Steinbrink, C.; Heussen, K.; Jensen, T.; Panagiotis-Timolewn, M.; Dimeas, A.; et al. D-JRA2.2 Smart Grid ICT Assessment Method; Project Deliverable, H2020 ERIGrid (GA No 654113). 2018. Available online: https://cordis.europa.eu/project/rcn/198653/results/en (accessed on 13 June 2019).

- Widl, E. Description of Optimization Strategies; SmILES Deliverable Report EU Project No.: 730936; Technical University of Denmark: Lyngby, Denmark, 2018.

- Martini, L.; Brunner, H.; Rodriguez, E.; Caerts, C.; Strasser, T.; Burt, G. Grid of the future and the need for a decentralised control architecture: The web-of-cells concept. CIRED-Open Access Proc. J. 2017, 2017, 1162–1166.

- Migliavacca, G.; Rossi, M.; Six, D.; Džamarija, M.; Horsmanheimo, S.; Madina, C.; Kockar, I.; Morales, J.M. SmartNet: H2020 project analysing TSO-DSO interaction to enable ancillary services provision from distribution networks. CIRED-Open Access Proc. J. 2017, 2017, 1998–2002.

- Pröstl Andrén, F.; Strasser, T.I.; Baut, J.L.; Rossi, M.; Viganó, G.; Croce, G.D.; Horsmanheimo, S.; Azar, A.G.; Ibañez, A.I. Validating Coordination Schemes between Transmission and Distribution System Operators using a Laboratory-Based Approach. arXiv 2019, arXiv:1906.10642.

| SC Type | Generic SC | Specific SC | Experiment SC |
|---|---|---|---|
| Described in | Test Case | Test Specification | Experiment Specification |
| Topology | Domain-coupling | SuT components | Testbed and OuI |
| Parameters | NO | Partial, preferred values | YES |
| OuI concrete | NO | YES | YES |
| Non-OuI concrete | NO | NO | YES |

| System Aspect | Precision Level |
|---|---|
| Grid topology | precise |
| Communication protocols | irrelevant |
| Communication channel properties | |
| Latency | precise |
| Others | nominal |
| ... | ... |

| Test ID | Event Size (Generation Loss) | Event Location | LC 1 Location | Available LC 1 Resource | LC 2 Location | Available LC 2 Resource |
|---|---|---|---|---|---|---|
| 0.1 | 1 GW | Region 3 | None-control case | | | |
| 1.1 | 1 GW | Region 3 | Region 1 | 300 MW | Region 3 | 300 MW |
| 1.x | - | - | - | - | - | - |
| 2.x | 1.32 GW | Region 1 | Region 1 | 1 GW | Region 3 | 1 GW |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Heussen, K.; Steinbrink, C.; Abdulhadi, I.F.; Nguyen, V.H.; Degefa, M.Z.; Merino, J.; Jensen, T.V.; Guo, H.; Gehrke, O.; Bondy, D.E.M.; Babazadeh, D.; Pröstl Andrén, F.; Strasser, T.I. ERIGrid Holistic Test Description for Validating Cyber-Physical Energy Systems. Energies 2019, 12, 2722. https://doi.org/10.3390/en12142722