1. Introduction

With the development of productivity and society, the demand for electricity for production and daily life is growing constantly, which has made power system management increasingly difficult. Against this background, electricity load forecasting is of great help to the decision-making of power market participants and regulators [1,2]. However, because the load is affected by many potential factors [3], accurate forecasting remains a challenging task. Overestimated forecasts can lead to excessive electricity production, which increases operating costs unnecessarily and wastes energy. Underestimated forecasts, on the other hand, can lead to a shortage in energy production, posing political, economic, and security threats to a country or a region.

For decades, many models have been proposed in the field of load forecasting, which can be divided into three general types: statistical models, artificial intelligence (AI) models, and hybrid models.

In statistical models, a potential dynamic relationship between current information and historical data is deemed to exist, and this relationship is described using mathematical statistics methods under strict assumptions. Models of this category, such as the Auto Regressive (AR) model [4], the Auto Regressive Moving Average (ARMA) model [5], the Auto Regressive Integrated Moving Average (ARIMA) model [6], and the Seasonal Model (SM) [7], have been applied to electricity load forecasting for many years. In 2011, Li et al. [8] proposed an improved Grey Model (GM) for use in short-term load forecasting. This model adopted a second-order, univariate structure, which overcame the problem of the GM (1,1) being weak in forecasting time series with strong randomness. In 2016, Dudek [9] proposed a univariate, short-term load forecasting framework based on Linear Regression (LR) and a periodic pattern that was able to filter out trends and seasonal factors longer than the daily cycle, thus eliminating the non-stationarity of the mean and variance and simplifying the forecasting problem.

From the end of the 20th century until now, owing to the rapid development of computer technology, Artificial Intelligence (AI) forecasting methods have received unprecedented attention and spread rapidly. In the past two decades, many AI-based models with different structures have been designed and employed in the field of load forecasting, for example, the Artificial Neural Network (ANN) [10], the Self-Organizing Map (SOM) [11], and the Adaptive Network-based Fuzzy Inference System (ANFIS) [12]. In 2008, Lauret et al. [13] constructed a model on the basis of the Bayesian Neural Network (BNN), which has clear advantages over traditional neural networks, and applied it to the forecasting of short-term load data. In 2017, Chen et al. [14] proposed a model based on Support Vector Regression (SVR), in which the ambient temperature during the two hours before demand response events was used as an input variable to forecast the load of office buildings and thereby determine the load baseline. Many scientific studies and practical applications indicate that, in a wide variety of time series forecasting cases, AI technology tends to perform better than traditional statistical models.

In recent years, with the invention of a variety of forecasting techniques, many hybrid models have been put forward and utilized in various fields. Hybrid forecasting models can reasonably be divided into two categories. The first category is usually based on an individual forecasting method with the addition of a data preprocessing strategy, an intelligent optimization algorithm, or both, forming a model with a multi-layer structure [15]. Examples of the application of such models in load forecasting are given below. In 2018, Barman et al. [16] proposed a hybrid short-term load forecasting model based on the Support Vector Machine (SVM), which employs the Grasshopper Optimization Algorithm (GOA) to optimize network parameters to achieve high precision. Li et al. [17] proposed a hybrid model based on the Extreme Learning Machine (ELM), which incorporates a classical data preprocessing strategy. Rana et al. [18] proposed a hybrid model called the Advanced Wavelet Neural Network (AWNN). The model first decomposes the raw data with a modified wavelet-based strategy and then uses a neural network to forecast. More examples are presented in [19,20,21,22,23]. In addition, models of this category are also widely used in other fields such as wind speed forecasting [24,25], air pollution forecasting [26], and forecasting with high-dimensional data [27,28]. Through combinations of different data preprocessing strategies, simple statistical or artificial intelligence forecasting modules, and intelligent optimization algorithms, various hybrid models of this category have been developed.

Models in the second category are also called combined forecasting models. The combined forecast theory was initially expounded by Bates and Granger in 1969 [29]; its core idea is to merge the forecasting results of multiple sub-models in a weighted manner. In [30,31], combined forecasting models were applied to wind speed forecasting. In [32], Shen et al. applied a combined forecasting model to international tourism demand forecasting. In [33], Jiang et al. employed a combined model for the forecasting of carbon emissions. In the field of electricity load forecasting, Xiao et al. [34] constructed a model based on multiple neural networks in 2015 and compared it with ARIMA. The comparison showed the advantages of the combined model in terms of forecasting ability.

A review of the models proposed in the previous literature shows that they share several problems that are difficult to overcome, which are summarized below.

(1) Statistical models rest on the overly strict assumption that linear relationships exist within the time series, and real-life data can rarely meet the required conditions in full. Therefore, in many fields, poor results are often obtained, especially for nonlinear and nonstationary data with high noise and fluctuations [35].

(2) Although AI technology can better extract the nonlinear characteristics of data, it also has some disadvantages that are difficult to overcome. For example, AI forecasting methods are prone to falling into local optima and to overfitting [36].

(3) To some extent, hybrid models are able to take full advantage of each module, but at the same time, they may introduce new defects, which deserve special attention.

First, most studies emphasize forecasting accuracy and underestimate the significance of forecasting stability. Most of the hybrid models use single-objective optimization algorithms, including Particle Swarm Optimization (PSO) [37], the Genetic Algorithm (GA) [38], the Evolutionary Algorithm (EA) [39], the Firefly Algorithm (FA) [40], or the Cuckoo Search Algorithm (CSA) [41,42]. These algorithms can improve only the forecasting accuracy and are unable to improve the forecasting stability at the same time. However, forecasting accuracy and stability are equally important for a model [43]. Focusing on the former while neglecting the latter may lead to security problems in applications.

Secondly, many individual forecasting methods used in hybrid models have a limited ability to learn the data features comprehensively. It can be found that a large number of hybrid models use statistical methods or AI methods with the simple structures mentioned above. The application of these methods makes the models lack sufficient global learning ability, which will result in nonoptimal forecasting performance.

Finally, the data preprocessing strategies in common use, mainly Empirical Mode Decomposition (EMD) [44,45,46], the Wavelet Transform (WT) [47,48], and Singular Spectral Analysis (SSA) [49], are not powerful enough to effectively remove outliers and noise in data, which affects the results.

Therefore, there is an urgent need for a novel electricity load forecasting model that combines the advantages of each module and overcomes the disadvantages mentioned above.

Fortunately, a growing number of multi-objective optimization algorithms have been developed to solve Multi-Objective Problems (MOPs) in various fields. Examples include the Multi-Objective Particle Swarm Optimization (MOPSO), which is applied in micro-grid system management [50]; the Non-dominated Sorting Genetic Algorithm-II (NSGA-II), which is applied in redundancy allocation problems [51]; the Multi-Objective Whale Optimization Algorithm (MOWOA), which is applied in wind speed forecasting [52]; and the Multi-Objective Evolutionary Algorithm (MOEA), which is utilized in optimizing traffic flow and vehicle emission planning through urban traffic lights [53]. Multi-objective optimization algorithms can effectively handle multiple conflicting objectives, making the results more in line with actual needs.

Deep neural networks, now widely popular, have been successfully used in engineering, economics, security, and other fields. In [54], a model based on the Convolutional Neural Network (CNN) was applied in facial expression recognition. In [55], a model based on the Deep Belief Network (DBN) was applied in the field of medical X-ray image analysis. In [56], a model based on the Long Short-Term Memory network (LSTM) was applied in financial market forecasting. In 2017, a short-term electricity load forecasting model based on deep neural networks was proposed and good experimental results were obtained [57]. In summary, compared with other methods, deep neural networks have more powerful nonlinear mapping abilities and can extract deeper characteristics of data. Therefore, deep neural networks may achieve strong results on nonlinear modeling problems.

In addition, with the development of signal processing research, researchers have invented some novel and effective denoising strategies and applied them to the data preprocessing of time series. For example, strategies such as the Wavelet Packet Transform (WPT) [58], Improved Empirical Mode Decomposition (IEMD) [59], and Ensemble Empirical Mode Decomposition (EEMD) [60] have been successfully employed in the field of electricity load forecasting to reduce the random disturbance of original data, thus obtaining a better forecasting performance.

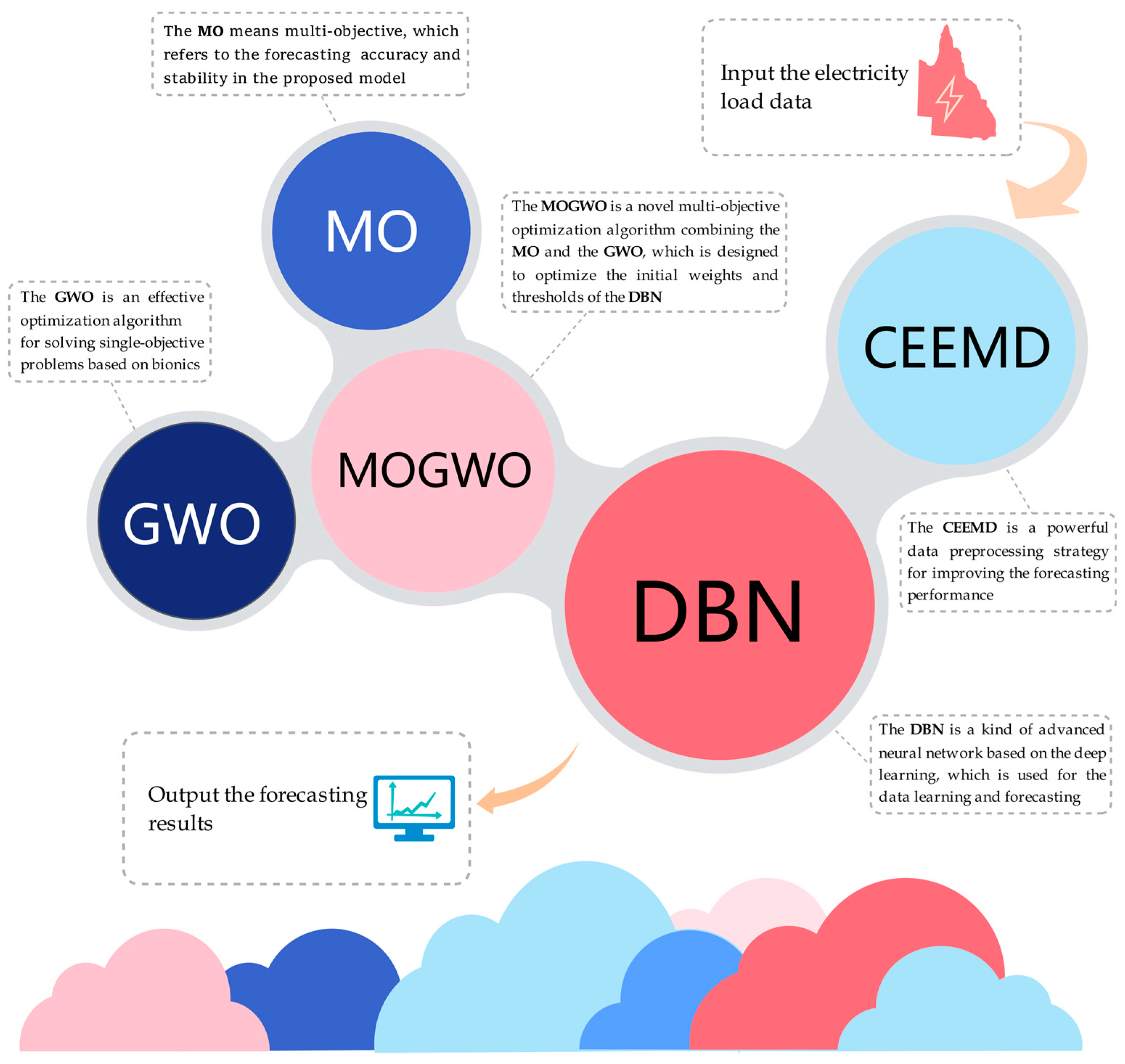

In this paper, a novel hybrid model for electricity load forecasting based on a deep neural network is proposed. The model is improved by a multi-objective optimization algorithm and an advanced data preprocessing strategy. In the proposed model, DBN is used as the core module for data feature learning and forecasting. Meanwhile, the Multi-Objective Grey Wolf Optimizer (MOGWO) is employed to search for the optimal initial weights and thresholds of DBN. In addition, the Complementary Ensemble Empirical Mode Decomposition (CEEMD), an advanced signal processing strategy, is applied in the data preprocessing procedure to remove noise from the load series. Finally, several evaluation metrics are employed to conduct a comprehensive assessment.

The proposed model successfully introduces a deep neural network into electricity load time series forecasting. In terms of the construction of datasets, this paper divides data sampled in 30-min intervals from Queensland into seven datasets corresponding to Monday to Sunday, respectively. Meanwhile, this paper takes the previous 16 real data samples of each forecasting time point as the input variable of the proposed model, and the benchmark models also follow the above principles when constructing their input variables. The model learns each dataset separately and outputs the results of one-step and multi-step rolling forecasting. The fine results of the proposed model show its excellent forecasting accuracy and stability in modeling data with complex components like load series.

The highlights of the study are as follows:

(1) Based on an emerging deep neural network and improved by an avant-garde multi-objective optimization algorithm as well as an effective data preprocessing strategy, a complex and systematic hybrid forecasting model is constructed. The proposed model can effectively combine the advantages of each module in the structure and thus has better forecasting performance than individual models and hybrid models composed of other simple structures. As it turns out, the proposed model is superior to all compared traditional models.

(2) An algorithm for MOPs is utilized in the proposed model to help to determine the initial network weights and thresholds, thereby promoting the forecasting accuracy and stability simultaneously. This algorithm is an intelligent heuristic optimizer, which iterates according to Pareto’s theory and the bionics principle of the preying behavior of wolves, thus successfully converging to the Pareto optimal fronts of the MOPs and searching for the optimal network parameters.

(3) A powerful denoising strategy is utilized in the preprocessing of electricity load data, which can effectively identify high-frequency noise and remove it to reduce the impact of fluctuations on the forecasting performance. This strategy decomposes and reconstructs the original load series into several sub-sequences, so as to filter out the high-frequency fluctuations in information in the original series and avoid them entering the subsequent data learning process.

(4) The core of the proposed model is a deep neural network, which has a stronger nonlinear mapping and characterization ability than traditional neural networks and statistical methods, due to its special structure and principles. This module is able to conduct comprehensive learning and training for the characteristics and patterns contained in the electricity load series, thus contributing to the satisfying forecasting performance of the proposed model.

(5) The forecasting results are evaluated reasonably and comprehensively by multiple metrics. Meanwhile, in-depth and rigorous discussions are carried out in this paper. Six of the metrics selected are adopted to assess forecasting errors, and the remaining one is used to evaluate the convergence performance of algorithms for MOPs. Moreover, the results of the experiments are further dissected from several perspectives to validate the superiority of the model that is proposed in the study.
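The Pareto-based selection mentioned in highlight (2) can be illustrated with a minimal sketch. It assumes two objectives to be minimized, an accuracy error and an instability measure; the candidate scores are hypothetical and the code shows only non-dominated filtering, not MOGWO itself.

```python
from typing import List, Tuple

# Assumption: each candidate is scored as (accuracy error, instability),
# and both objectives are to be minimized.
Point = Tuple[float, float]


def dominates(a: Point, b: Point) -> bool:
    """True if a is no worse than b in every objective and better in at least one."""
    no_worse = all(x <= y for x, y in zip(a, b))
    better = any(x < y for x, y in zip(a, b))
    return no_worse and better


def pareto_front(points: List[Point]) -> List[Point]:
    """Keep only the points that no other point dominates."""
    return [p for p in points
            if not any(dominates(q, p) for q in points if q != p)]


# Hypothetical scores for four candidate parameter sets.
candidates = [(0.9, 0.30), (1.0, 0.20), (1.2, 0.25), (1.5, 0.10)]
front = pareto_front(candidates)
print(front)
```

Here (1.2, 0.25) is dropped because (1.0, 0.20) is better in both objectives; the remaining three points trade accuracy against stability and none is strictly preferable.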

The rest of this paper is organized as follows. The framework of the proposed model is introduced in Section 2. More details of the methodology are presented in Section 3. The ideas and steps for effective hypothesis testing are expounded in Section 4. Section 5 analyzes the results of the three experiments. In Section 6, six discussions based on the experimental results are presented. Finally, Section 7 gives the conclusion of this paper.

5. Experiments

This section objectively presents the process, results, and corresponding analysis of the three experiments. In addition, the data description, the performance metrics used, and the setup of the experiments are explained in detail.

5.1. Data Description

In this study, three experiments were conducted using the electricity load data from Queensland, Australia in 2013, which were sampled at 30-min intervals and can be downloaded from the Australian Energy Market Operator’s website (http://www.aemo.com.au/).

Considering the difference in daily demand patterns, the collected load data sampled at 30-min intervals were divided into seven datasets, corresponding to Monday to Sunday, respectively. The forecasting strategy for this splitting method is curve estimation. Some researchers instead split data by each time point, and the corresponding forecasting strategy for that splitting method is point estimation. The former data splitting method with the curve estimation strategy was adopted in this study for the following three reasons. First, it considers the differences between the behaviors of people on different days, such as when they are at work or on vacation, and treats the corresponding data separately. Grouping days of the same type into one dataset helps to reduce the volatility of the sequence caused by the inherent differences between the characteristics of each kind of day, thus improving the forecasting accuracy. Second, the former data splitting method and forecasting strategy balance the accuracy and the efficiency of the model. The number of datasets is small, so the cost of training and forecasting is low, and the operation is convenient. Third, under the former data splitting method, each dataset contains more elements, so more data can be used in the learning of the model, which is more in line with the training sample size that deep neural networks require.

These data are shown in Table 1 and Figure 2. In each series, the ratio of training to testing data is 3:1.
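The day-of-week split described above can be sketched as follows. This is only an illustration over generated timestamps for 2013 (no load values are attached), and the function name `split_by_weekday` is hypothetical; the sizes it prints come from the calendar, not from Table 1.

```python
from collections import defaultdict
from datetime import datetime, timedelta

STEP = timedelta(minutes=30)  # sampling interval stated in the text
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
        "Friday", "Saturday", "Sunday"]


def split_by_weekday(start: datetime, end: datetime):
    """Group half-hourly timestamps in [start, end) by day of the week."""
    groups = defaultdict(list)
    t = start
    while t < end:
        groups[DAYS[t.weekday()]].append(t)
        t += STEP
    return groups


groups = split_by_weekday(datetime(2013, 1, 1), datetime(2014, 1, 1))
counts = {day: len(groups[day]) for day in DAYS}
print(counts)
```

Because 1 January 2013 and 31 December 2013 both fall on a Tuesday, the Tuesday group holds one more day (48 more points) than each of the other six groups.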

5.2. The Performance Metrics

In order to comprehensively reflect the error characteristics and the forecasting performance, the mean square error (MSE), normalized mean square error (NMSE), root mean square error (RMSE), mean absolute error (MAE), mean absolute percentage error (MAPE), and Theil’s inequality coefficient (TIC) were adopted; their definitions are given in Appendix A.
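As a rough illustration, five of these metrics can be computed as below. The formulas are the commonly used textbook forms and are an assumption here, since Appendix A is not reproduced in this section; NMSE is left out because its normalization differs between papers. The sample values are hypothetical.

```python
import math
from typing import Sequence


def mse(y: Sequence[float], f: Sequence[float]) -> float:
    """Mean square error between actual values y and forecasts f."""
    return sum((a - b) ** 2 for a, b in zip(y, f)) / len(y)


def rmse(y: Sequence[float], f: Sequence[float]) -> float:
    """Root mean square error."""
    return math.sqrt(mse(y, f))


def mae(y: Sequence[float], f: Sequence[float]) -> float:
    """Mean absolute error."""
    return sum(abs(a - b) for a, b in zip(y, f)) / len(y)


def mape(y: Sequence[float], f: Sequence[float]) -> float:
    """Mean absolute percentage error, in percent (requires nonzero y)."""
    return 100.0 * sum(abs((a - b) / a) for a, b in zip(y, f)) / len(y)


def tic(y: Sequence[float], f: Sequence[float]) -> float:
    """Theil's inequality coefficient (assumed form), which lies in [0, 1]."""
    root_mean_sq_y = math.sqrt(sum(a * a for a in y) / len(y))
    root_mean_sq_f = math.sqrt(sum(b * b for b in f) / len(f))
    return rmse(y, f) / (root_mean_sq_y + root_mean_sq_f)


# Hypothetical actual and forecast values.
actual = [100.0, 200.0, 300.0, 400.0]
forecast = [110.0, 190.0, 310.0, 390.0]
print(mse(actual, forecast), rmse(actual, forecast), mae(actual, forecast))
print(mape(actual, forecast), tic(actual, forecast))
```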

5.3. The Experimental Setup

Three comparative experiments were carefully set up, and the experimental process, results, and corresponding analysis are objectively presented.

Experiment I was conducted with the major purpose of confirming the optimization ability of MOGWO and the capacity of CEEMD to preprocess data.

Experiment II was conducted with the major purpose of verifying the relationships between the main modules in the proposed model and their influences on the forecasting performance.

Finally, Experiment III was conducted to confirm the forecasting ability of the proposed model relative to other mainstream time series forecasting models. All experiments, except the test of MOGWO in Experiment I, used Series 1–7, and some key parameters were set to be the same within the proposed model, as presented in Table 2.

Due to the regularity of human behavior at different time points of a day and different days of a week, the time series of electricity load presents great seasonality and periodicity. Therefore, the time series forecasting strategy will have great significance and application prospect in this field. In this paper, a hybrid model based on the deep neural network is introduced into the time series forecasting strategy of load data. The proposed model takes the previous 16 real load data samples before each forecasting time point as the input variable, and the benchmark models in each experiment also use this principle to construct their input variables.
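The input construction described above, in which the previous 16 observations form the input for each forecasting point, can be sketched as a sliding window. The helper name `make_windows` and the toy series are illustrative assumptions.

```python
from typing import List, Sequence, Tuple


def make_windows(series: Sequence[float],
                 n_lags: int = 16) -> Tuple[List[List[float]], List[float]]:
    """Pair each value with the n_lags observations that precede it."""
    inputs, targets = [], []
    for t in range(n_lags, len(series)):
        inputs.append(list(series[t - n_lags:t]))  # previous 16 real samples
        targets.append(series[t])                  # value to be forecast
    return inputs, targets


# Toy series of 20 points: only the last 4 have a full 16-point history.
series = [float(i) for i in range(20)]
X, y = make_windows(series)
print(len(X), X[0][:3], y[0])
```

A series of length N therefore yields N - 16 input-target pairs, since the first 16 points have no complete history.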

In addition, it is worth noting that in previous studies, many researchers tended to take temperature as an input variable for traditional models, mainly for the following two reasons. First, data collection areas such as New York and Singapore often have extremely low or high temperatures, leading to a large air-conditioning load for heating or cooling during certain periods. Second, those areas are densely populated, and when extreme weather comes, the widespread use of air conditioners causes large fluctuations in the electricity load. For these reasons, the electricity load in those areas is strongly correlated with temperature, so temperature is considered an important input variable by many researchers. However, Queensland, Australia, is sparsely populated and has a mild climate, according to the Ministry of Commerce of the People’s Republic of China. Take Brisbane, the capital of Queensland and the third largest city in Australia, as an example. It has a total population of about 1.3 million and a population density of 12 people per hectare. The highest average annual temperature is about 24 degrees Celsius, and the lowest average annual temperature is about 15 degrees Celsius. In other words, there is little reason for people in Queensland to use appliances such as air conditioners on a large scale. Therefore, in the study of Queensland, temperature is not an appropriate input variable, and the time series forecasting strategy based on the internal correlation of the sequence itself is more effective and applicable.

During forecasting, some internal parameters of the neural network are not simply carried over to the next time point; instead, the model learns again at each forecasting step. The test dataset for Tuesday had 632 elements, so the model learnt 632 times while forecasting it. The test datasets for the other days each contained 620 elements, so the model learnt 620 times for each of them.

The data in each dataset were sampled at intervals of 30 min, and 48 data points were taken as a period. There were no missing values in the datasets. Therefore, for the corresponding dataset on Tuesday, the model forecasted a total of 13.16667 periods, while for the corresponding datasets on the other days, the model forecasted a total of 12.91667 periods. In terms of the parameters of the model, some important parameters, which are shown in Table 2, remained unchanged in each forecasting period, while the parameters obtained by neural network learning automatically changed in each forecasting process.
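The test-set sizes and period counts quoted above can be reproduced with a few lines of arithmetic. The derivation is an assumption rather than something the text spells out: 2013 contains 53 Tuesdays and 52 of every other weekday, the first 16 points of each series are consumed as lagged inputs, and the last quarter of the remaining samples is held out under the 3:1 ratio.

```python
POINTS_PER_DAY = 48   # 30-min sampling, so 48 points form one period
N_LAGS = 16           # previous observations used as model input


def held_out_size(n_days: int) -> int:
    """Test-set size under the assumed windowing and 3:1 split."""
    n_samples = n_days * POINTS_PER_DAY - N_LAGS
    return n_samples // 4


tuesday_test = held_out_size(53)   # assumed: 53 Tuesdays in 2013
other_test = held_out_size(52)     # assumed: 52 of each other weekday

print(tuesday_test, round(tuesday_test / POINTS_PER_DAY, 5))
print(other_test, round(other_test / POINTS_PER_DAY, 5))
```

Under these assumptions the sketch gives 632 and 620 test points, that is, 13.16667 and 12.91667 periods, which agrees with the figures in the text.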

All experiments were conducted in MATLAB R2018a (MathWorks, Natick, MA, USA), with a computing environment running on Microsoft Windows 10 with a 64-bit, 2.60 GHz Intel Core i7 6700HQ CPU and 8.00 GB of RAM.

5.4. Experiment I

The experiment had two parts. The first part was the validation of the optimization ability of MOGWO, and the second one was a test of the effectiveness of CEEMD.

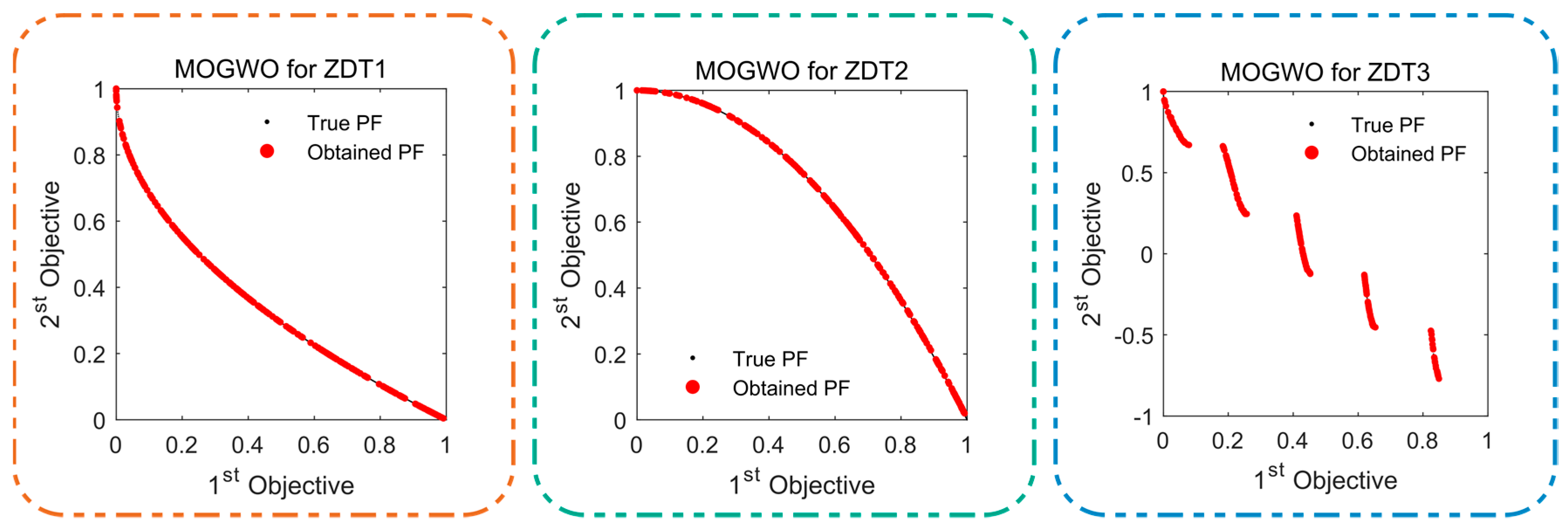

5.4.1. Test of MOGWO

The purpose of this part was to validate the ability of MOGWO to converge to the real Pareto optimal fronts. The Multi-Objective Dragonfly Algorithm (MODA) and the Multi-Objective Particle Swarm Optimization (MOPSO) were adopted as the controls. MODA is a swarm-intelligence multi-objective optimization algorithm proposed in recent years that is based on the hunting behavior of dragonfly populations, and MOPSO is a widely used heuristic multi-objective optimization algorithm based on the hunting behavior of bird populations. Their mechanisms are similar to that of MOGWO, as all of them are based on the biomimetic principles of animal predation. However, due to their different internal structures, the three algorithms differ in their ability to search for Pareto optimal solutions. To explore this difference and the superiority of MOGWO’s search capability, the ZDT functions ZDT1–3 were employed as test problems. As the performance metric, the Inverted Generational Distance (IGD) [64], which is well known for the evaluation of algorithms for MOPs, was selected. The test functions are shown in Appendix B, and the formula of IGD is as follows:

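$$\mathrm{IGD} = \frac{\sqrt{\sum_{i=1}^{n} d_i^{2}}}{n}$$

where $n$ is the number of points considered and $d_i$ denotes the distance between a point on the obtained Pareto optimal front and the nearest point on the real Pareto optimal front.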
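A minimal sketch of how such a distance-based score can be computed is shown below. It assumes one common form of IGD, the square root of the summed squared nearest-neighbour distances divided by the number of reference points, with the distances measured from each sampled point of the real front to the obtained front. The ZDT1 front used as the reference, $f_2 = 1 - \sqrt{f_1}$ on $[0, 1]$, is the standard one; the "obtained" front is hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def zdt1_true_front(n_points: int = 100) -> List[Point]:
    """Sample the known ZDT1 Pareto front: f2 = 1 - sqrt(f1), f1 in [0, 1]."""
    xs = [i / (n_points - 1) for i in range(n_points)]
    return [(x, 1.0 - math.sqrt(x)) for x in xs]


def igd(true_front: List[Point], obtained: List[Point]) -> float:
    """Assumed IGD form: sqrt(sum of squared nearest distances) / n."""
    squared = [min(math.dist(p, q) for q in obtained) ** 2 for p in true_front]
    return math.sqrt(sum(squared)) / len(true_front)


reference = zdt1_true_front()
# Hypothetical obtained front: the true front shifted by 0.1 in both objectives.
shifted = [(f1 + 0.1, f2 + 0.1) for f1, f2 in reference]

print(igd(reference, reference))  # a front that matches the reference scores 0
print(igd(reference, shifted))    # a worse front scores higher
```

Smaller values indicate an obtained front that lies closer to the real one.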

MOGWO’s key parameters were set as in Table 3, and the common parameters of these three optimizers were set to be the same. In order to eliminate the influence of accidental factors on the experimental results, the experiment was repeated 50 times for each test function. The results are presented in Table 4, and the typical results of the MOGWO are drawn in Figure 3. The results can be summarized as follows.

In terms of the IGD, MOGWO showed the smallest Ave, Std, and Median values for the three test functions, and the smallest Best values for ZDT1 and ZDT3. It is worth noting that the MOPSO for ZDT2 showed the best IGD in one of the repeated experiments, but this is not enough to explain the significant advantages of MOPSO over MOGWO and MODA, because this may have been an accidental situation.

Intuitively, MOGWO appeared to have better characteristics than MODA and MOPSO in the vast majority of cases. In terms of the IGD distribution characteristics, it improved on MOPSO by more than an order of magnitude, and it was also greatly improved compared with MODA. Taking ZDT2 as an example, the standard deviation of MOGWO’s IGD was 0.00025, while that of MOPSO’s IGD reached 0.01739 and that of MODA’s IGD reached 0.00216. At the same time, the best IGD values of the three algorithms on ZDT2 differed only slightly, but the worst IGD values were very different: MOGWO’s worst IGD was 0.00386, MODA’s worst IGD was 0.01682, and MOPSO’s worst IGD was 0.12234. These characteristics reflect the difference in stability of the three optimization algorithms.

Remark 1. By comparing MOGWO, MODA, and MOPSO, MOGWO was found to show strong advantages over the control group algorithms no matter which test function was being used. Therefore, it is reasonable to apply MOGWO to the proposed model.

5.4.2. Test of CEEMD

This part was carried out to demonstrate the effectiveness and application prospects of CEEMD in time series forecasting. Since the superiority of the MOGWO had already been demonstrated, two control models were set up in this part: EMD-MOGWO-DBN and EEMD-MOGWO-DBN. Both control models were hybrid models that were consistent with the proposed model in the overall process. They both decomposed the original data into several sub-sequences by the data preprocessing strategy and then used the DBN optimized by the MOGWO to learn and forecast each sub-sequence separately. Finally, the forecasts were added together to output the final forecasting results. In addition, the two control models were consistent with the proposed model in terms of the construction and common parameters of the forecasting module DBN and the optimizing module MOGWO. The difference between the control models and the proposed model lay in their data preprocessing strategies. It should be emphasized that the common parameters of the three data preprocessing strategies (EMD, EEMD, and CEEMD) were also set to be the same. For all models, including the control models and the proposed model CEEMD-MOGWO-DBN, Series 1–7 were employed, and the average results are presented in Table 5. In addition, Figure 4 shows the average results of the MSE, MAE, and MAPE, which are summarized below.

In the comparison of CEEMD-MOGWO-DBN and EMD-MOGWO-DBN, the former was shown to have a great advantage over the latter in terms of the forecasting accuracy. For example, on average, the MSE of CEEMD-MOGWO-DBN was only 5694.99182, while that of EMD-MOGWO-DBN was 13,796.72117. It can be inferred that CEEMD has a better preprocessing capacity than EMD.

The forecasting results of CEEMD-MOGWO-DBN were also shown to be superior to EEMD-MOGWO-DBN. It was observed that CEEMD-MOGWO-DBN had better average error metrics than EEMD-MOGWO-DBN under the condition that the running time was basically unchanged, and the parameters were the same.

Remark 2. In a comparison of the average performance of these models, the proposed CEEMD-MOGWO-DBN model achieved the best results among all models, regardless of the dataset. These comparisons demonstrate the superiority of CEEMD over the other two data preprocessing strategies.
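The decompose, forecast, and aggregate flow shared by the three models in this test can be sketched as below. Both building blocks are deliberate stand-ins and are assumptions: the moving-average split is not EMD, EEMD, or CEEMD, and the persistence forecaster is not the MOGWO-optimized DBN. Only the overall structure, forecasting each sub-sequence separately and summing the forecasts, follows the text.

```python
from typing import Callable, List, Sequence


def moving_average_split(series: Sequence[float], window: int = 4) -> List[List[float]]:
    """Stand-in decomposition (NOT CEEMD): a smooth part plus a residual part."""
    smooth = []
    for t in range(len(series)):
        lo = max(0, t - window + 1)
        smooth.append(sum(series[lo:t + 1]) / (t + 1 - lo))
    residual = [x - s for x, s in zip(series, smooth)]
    return [smooth, residual]


def persistence(component: Sequence[float]) -> float:
    """Placeholder forecaster (NOT the optimized DBN): repeat the last value."""
    return component[-1]


def hybrid_forecast(series: Sequence[float],
                    decompose: Callable[[Sequence[float]], List[List[float]]],
                    forecast: Callable[[Sequence[float]], float]) -> float:
    """Forecast every sub-sequence separately and add the forecasts together."""
    return sum(forecast(component) for component in decompose(series))


# Hypothetical load values.
load = [5200.0, 5350.0, 5500.0, 5420.0, 5380.0, 5600.0]
components = moving_average_split(load)
prediction = hybrid_forecast(load, moving_average_split, persistence)
print(prediction)
```

Swapping the decomposition function while keeping the forecaster fixed mirrors how the control models differ from the proposed model only in the preprocessing step.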

5.5. Experiment II

In this experiment, the proposed model was decomposed into one individual model (DBN) and two hybrid sub-models (CEEMD-DBN and MOGWO-DBN). The difference between these three models and the proposed model was that one or more modules were removed. The DBN model no longer had the data preprocessing and optimization modules CEEMD and MOGWO. For CEEMD-DBN, the optimization module MOGWO was eliminated, and for MOGWO-DBN, the data preprocessing module CEEMD was eliminated. It is worth noting that the remaining modules were consistent with those of the proposed model in terms of the structure and common parameters. At the same time, three comparisons were set up to explore the importance of CEEMD and MOGWO for the overall structure of the proposed model.

Comparison 1 included CEEMD-DBN, MOGWO-DBN, and DBN. Its main purpose was to explore whether the separate use of these two modules (CEEMD or MOGWO) could effectively help improve the forecasting ability of DBN.

Comparison 2 included CEEMD-DBN and MOGWO-DBN, in order to compare which module (CEEMD or MOGWO) improves the forecasting accuracy of DBN better when used alone.

The purpose of Comparison 3, which included CEEMD-MOGWO-DBN, CEEMD-DBN, and MOGWO-DBN, was to explore whether the superposition of the two modules (CEEMD and MOGWO) could further promote the forecasting performance. The experiment was carried out based on Series 1–7, and the average results are presented in Table 5. In addition, Figure 5 shows the average results of MSE, MAE, and MAPE. The results can be summarized as follows.

In Comparison 1, the performance of the two hybrid sub-models was greatly improved compared with the individual model DBN. According to the averages of the forecasting error metrics for Series 1–7, the MSE, NMSE, RMSE, MAE, MAPE, and TIC of DBN were 13,766.42486, 0.00047, 117.10453, 93.97962, 1.69669%, and 0.01015, while for CEEMD-DBN, the values of these metrics were 8865.38238, 0.00027, 91.86137, 65.54824, 1.15752%, and 0.00800, respectively, and those of MOGWO-DBN were 11,453.52281, 0.00038, 105.44077, 82.93932, 1.48279%, and 0.00916. This shows that the separate utilization of CEEMD or MOGWO is able to improve the forecasting accuracy of DBN.

In Comparison 2, CEEMD was found to contribute more to the accuracy improvement of DBN than MOGWO. Without loss of generality, let us focus on the averages of the error metrics. The MSE, NMSE, RMSE, MAE, MAPE, and TIC of CEEMD-DBN were lower than those of MOGWO-DBN by 2588.14043, 0.00011, 13.57940, 17.39107, 0.32527%, and 0.00116 in absolute terms, respectively. This may be due to limitations in DBN’s ability to learn certain data features. MOGWO can only supply better-optimized parameters to DBN, whereas CEEMD can remove some of the data features that DBN has difficulty learning.

From Comparison 3, CEEMD-MOGWO-DBN has better accuracy compared with the two hybrid sub-models in each verification dataset. On average, the MSE, NMSE, RMSE, MAE, MAPE, and TIC of CEEMD-DBN were 8865.38238, 0.00027, 91.86137, 65.54824, 1.15752%, and 0.00800, and those of MOGWO-DBN were 11,453.52281, 0.00038, 105.44077, 82.93932, 1.48279%, and 0.00916, respectively. However, the metric values of the proposed model were 5694.99182, 0.00018, 72.47942, 52.04767, 0.91989%, and 0.00629, respectively. This seems to show that the simultaneous use of the two modules has a superposition effect on the promotion of the forecasting accuracy.

Remark 3. Through the comparisons above, it can be inferred that CEEMD and MOGWO are compatible with each other and have synergistic significance on the forecasting accuracy. Therefore, it is reasonable to dually utilize CEEMD and MOGWO in the proposed model.
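The comparisons above can be re-derived from the averages quoted in this subsection. The sketch below copies those reported values and computes the absolute gap between the two hybrid sub-models as well as a relative reduction against the plain DBN; the helper name `reduction_pct` is illustrative, and the relative reduction is a simple percentage that is assumed here rather than taken from the paper.

```python
METRICS = ["MSE", "NMSE", "RMSE", "MAE", "MAPE", "TIC"]

# Averages over Series 1-7 as quoted in the text (MAPE in percent).
reported = {
    "DBN": [13766.42486, 0.00047, 117.10453, 93.97962, 1.69669, 0.01015],
    "CEEMD-DBN": [8865.38238, 0.00027, 91.86137, 65.54824, 1.15752, 0.00800],
    "MOGWO-DBN": [11453.52281, 0.00038, 105.44077, 82.93932, 1.48279, 0.00916],
    "CEEMD-MOGWO-DBN": [5694.99182, 0.00018, 72.47942, 52.04767, 0.91989, 0.00629],
}


def reduction_pct(baseline: float, model: float) -> float:
    """Relative error reduction of `model` with respect to `baseline`, in percent."""
    return 100.0 * (baseline - model) / baseline


# Absolute gap between the two hybrid sub-models (Comparison 2).
gap = {m: b - a for m, a, b in
       zip(METRICS, reported["CEEMD-DBN"], reported["MOGWO-DBN"])}

# Relative reduction of the full model against the plain DBN.
gain = {m: reduction_pct(d, p) for m, d, p in
        zip(METRICS, reported["DBN"], reported["CEEMD-MOGWO-DBN"])}

for m in METRICS:
    print(f"{m}: gap={gap[m]:.5f}, reduction vs DBN={gain[m]:.2f}%")
```

The MAE gap computed from the rounded averages differs from the quoted 17.39107 in the last digit, which is consistent with the quoted figure having been computed before rounding.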

5.6. Experiment III

To verify the superiority of the proposed model over other time series forecasting methods, the proposed model and four representative models were included in this experiment, and Series 1–7 were used as validation datasets. The models for comparison were K-Nearest Neighbor (KNN), Support Vector Machine (SVM), MOPSO-ELM, and CEEMD-BPNN. KNN is a relatively mature statistical learning method and has been widely used in the field of multi-classification. Its main idea is to decide the category of a sample according to the category of one or several neighboring samples. SVM is an artificial intelligence method with supervised learning, which maps data features to high-dimensional space or a hyperplane to complete multi-classification tasks. In this paper, these two models were not combined with other modules; they learnt and forecasted the original data directly. CEEMD-BPNN is a hybrid model composed of the data preprocessing module CEEMD and the forecasting module BPNN, while MOPSO-ELM is a hybrid model composed of the optimization module MOPSO and the forecasting module ELM. The former first uses the data preprocessing strategy CEEMD to decompose the original data into sub-sequences, then uses the BPNN to learn and forecast each sub-sequence separately, and finally adds the results to obtain the final output. The latter uses the ELM optimized by MOPSO to learn and forecast the original data. In this experiment, although their structures were not identical, all comparison models used the same input variables, datasets, and common parameters as the proposed model. The average experimental results are presented in Table 5, and the results of Series 7 are drawn in Figure 6 as a typical case to reflect the forecasting ability of the various models and to show more details of the forecasting results. The results can be summarized as follows.

The proposed model showed a clear advantage in accuracy over KNN and SVM, the representatives of the statistical and AI modeling methods, achieving the best average value for every error metric. Compared with CEEMD-BPNN and MOPSO-ELM, the proposed model also performed better overall, as reflected in the large reduction in the average error metrics. On average, the MSE, NMSE, and MAE values of the proposed model were less than half of those of CEEMD-BPNN, and the other metrics, such as MAPE, were likewise less than half.

Remark 4. By comparing several models, this experiment showed that the proposed model outperforms some popular models, which supports its applicability to load forecasting.

6. Discussion

In this section, six topics are discussed to further examine the performance of the proposed model: the significance test, correlation, the performance improvement percentage, forecasting stability, sensitivity analysis, and multistep ahead forecasting.

6.1. Diebold–Mariano Test

The DM test was used to determine, from a statistical point of view, whether the forecasting results of the proposed model were significantly better than those of the comparison models. The relevant content and significance of the DM test were introduced in Section 4.

Table 6 shows the absolute values of the DM statistics between the proposed model and the other models. Even the minimum value, 3.06040, exceeds the critical value of the standard normal distribution at the 1% significance level (approximately 2.58). Therefore, the null hypothesis is rejected at the 1% significance level in favor of the alternative hypothesis. In other words, the proposed model is superior to the other models in terms of forecasting accuracy from a statistical perspective.
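As an illustration of how such a statistic can be obtained, the following Python sketch computes a DM statistic for two forecast series. It assumes a squared-error loss and a truncated autocovariance estimate of the long-run variance. The function name `dm_statistic`, the choice of loss function, and the toy data are assumptions made for illustration and are not taken from the experiments of this paper.

```python
import math


def dm_statistic(actual, forecast_a, forecast_b, horizon=1):
    """Diebold-Mariano statistic under squared-error loss (illustrative sketch).

    A negative value means forecast_a has the lower average loss.
    """
    n = len(actual)
    # Loss differential d_t = e_a,t^2 - e_b,t^2
    d = [(y - fa) ** 2 - (y - fb) ** 2
         for y, fa, fb in zip(actual, forecast_a, forecast_b)]
    d_bar = sum(d) / n

    def autocov(lag):
        # Sample autocovariance of the loss differential at the given lag
        return sum((d[t] - d_bar) * (d[t - lag] - d_bar)
                   for t in range(lag, n)) / n

    # Long-run variance: gamma_0 plus twice the autocovariances up to lag
    # horizon - 1 (only gamma_0 is used for one-step-ahead forecasts).
    long_run_var = autocov(0) + 2 * sum(autocov(k) for k in range(1, horizon))
    return d_bar / math.sqrt(long_run_var / n)


# Two-sided critical value of the standard normal distribution at the 1% level
Z_CRITICAL_1PCT = 2.576

# Toy data for illustration only (not from Table 6)
actual = [100.0, 102.0, 101.0, 105.0, 107.0, 110.0, 108.0, 111.0]
forecast_a = [100.5, 101.5, 101.5, 104.0, 107.5, 109.0, 108.5, 110.5]
forecast_b = [102.0, 100.0, 103.5, 102.0, 109.0, 107.5, 110.5, 108.0]

stat = dm_statistic(actual, forecast_a, forecast_b)
print("reject null at 1% level:", abs(stat) > Z_CRITICAL_1PCT)
```

Swapping the two forecasts only flips the sign of the statistic, which is why absolute values can be compared with the critical value.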

6.2. Correlation

The Pearson correlation coefficient [67] was used to measure the degree of linear correlation between the forecast values of a model and the real data. Although the coefficient ranges from −1 to 1 in general, in this application it is expected to lie between 0 and 1, and the closer it is to 1, the better the performance. The calculated Pearson correlation coefficients are presented in Table 7.

The proposed model had the largest Pearson correlation coefficient among all models in each series. This provides further statistical evidence that the proposed model performs better than the other models in terms of forecasting accuracy.
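For reference, the coefficient can be computed as in the following minimal Python sketch. The function name `pearson_r` and the sample values are illustrative assumptions, not data from Table 7.

```python
import math


def pearson_r(forecast, actual):
    """Pearson correlation coefficient between forecast values and real data."""
    n = len(actual)
    mean_f = sum(forecast) / n
    mean_a = sum(actual) / n
    # Sum of cross-products of the deviations from the means
    cov = sum((f - mean_f) * (a - mean_a) for f, a in zip(forecast, actual))
    var_f = sum((f - mean_f) ** 2 for f in forecast)
    var_a = sum((a - mean_a) ** 2 for a in actual)
    return cov / math.sqrt(var_f * var_a)


# Illustrative values only: a forecast with a constant offset from the data
print(pearson_r([11.0, 12.0, 13.0], [1.0, 2.0, 3.0]))
```

A forecast that differs from the real data by a constant offset is still perfectly correlated with it, so the coefficient complements the error metrics rather than replacing them.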

6.3. Performance Improvement Percentage

When comparing two models, it is not sufficient to focus only on the absolute difference in their forecasting error metrics. In many cases, the relative difference is also needed. Therefore, the degree to which the proposed model improves the forecasting performance relative to the other models was explored.

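The performance improvement percentage is defined as

$$P_{\text{Metric}} = \frac{\text{Metric}_{\text{compared}} - \text{Metric}_{\text{proposed}}}{\text{Metric}_{\text{compared}}} \times 100\%,$$

where $\text{Metric}_{\text{proposed}}$ refers to a kind of error metric of the proposed model, and $\text{Metric}_{\text{compared}}$ represents that of a model for comparison.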
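As a worked check, the following Python sketch evaluates this percentage for the average MSE values reported in Comparison 3. It assumes that the improvement is the relative reduction of the error metric with respect to the comparison model. The function name `improvement_percentage` is an illustrative assumption.

```python
def improvement_percentage(metric_compared, metric_proposed):
    """Relative reduction (in %) of an error metric achieved by the proposed model."""
    return (metric_compared - metric_proposed) / metric_compared * 100.0


# Average MSE values reported in Comparison 3
mse_proposed = 5694.99182
mse_ceemd_dbn = 8865.38238
mse_mogwo_dbn = 11453.52281

vs_ceemd_dbn = improvement_percentage(mse_ceemd_dbn, mse_proposed)
vs_mogwo_dbn = improvement_percentage(mse_mogwo_dbn, mse_proposed)
print(f"{vs_ceemd_dbn:.3f}% {vs_mogwo_dbn:.3f}%")
```

Under this assumption, the two results agree with the improvements of 35.76146% and 50.27738% quoted in this section for CEEMD-DBN and MOGWO-DBN.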

Table 8 shows the performance improvement percentage of the MOGWO on the IGD compared with the other two algorithms in the former part of Experiment I. Table 9 shows the improvement percentage of the average performance of the proposed model on various error metrics compared with the other models in the latter part of Experiment I and Experiments II–III.

The following facts can be found.

Compared with MOPSO and MODA, MOGWO performed considerably better. This was reflected not only in an average improvement in the IGD of over 30%, but also in an improvement of over 80% in the standard deviation of the IGD across repeated experiments, indicating that MOGWO has a stronger and more stable optimization ability.

With essentially the same running time, the proposed model performed much better on average than EEMD-MOGWO-DBN in the various error metrics, especially MAE, which improved by 15.22625%. Compared with EMD-MOGWO-DBN, the average improvement was larger, with the highest improvement, 53.60657%, occurring in NMSE.

The combined use of CEEMD and MOGWO made the proposed model perform very well. For example, compared with DBN, CEEMD-DBN, and MOGWO-DBN, the average MSE of the proposed model improved by 58.63129%, 35.76146%, and 50.27738%, respectively.

Relative to the traditional time series modeling methods adopted in the experiments, the proposed model improved the forecasting performance to a great extent. Compared with individual forecasting models such as KNN and SVM, the improvement in the average values of some metrics exceeded 70%. For example, the average MSE of the proposed model was 74.41091% better than that of KNN and 82.75761% better than that of SVM. In addition, compared with hybrid models composed of classical neural network structures, including MOPSO-ELM and CEEMD-BPNN, the proposed model showed an improvement of 40%–75% in the average error metrics.

6.4. The Forecasting Stability

In the preceding experiments and discussion, the forecasting accuracy of the models was explored from various perspectives; here, the forecasting stability is examined. The forecasting stability of a model is usually measured by the variance or standard deviation of its forecasting errors.

Table 10 presents the standard deviation estimators of the forecasting errors of all models.

The proposed model showed the smallest standard deviation of forecasting errors on all validation datasets. This indicates that the proposed model achieves both high forecasting accuracy and high stability.
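The stability measure can be sketched in Python as follows. The sketch assumes the sample standard deviation (with n − 1 in the denominator) of the errors; the exact estimator used for Table 10 is not restated here, and the function name `error_std` and the sample values are illustrative assumptions.

```python
import math


def error_std(actual, forecast):
    """Sample standard deviation of the forecasting errors."""
    errors = [y - f for y, f in zip(actual, forecast)]
    mean_err = sum(errors) / len(errors)
    # Assumption: unbiased estimator with n - 1 in the denominator
    variance = sum((e - mean_err) ** 2 for e in errors) / (len(errors) - 1)
    return math.sqrt(variance)


# Illustrative values only: errors that alternate between -1 and +1
print(error_std([0.0, 0.0, 0.0, 0.0], [1.0, -1.0, 1.0, -1.0]))
```

A forecast with a constant bias has zero error standard deviation, which is why stability is reported alongside the accuracy metrics rather than in place of them.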

6.5. The Sensitivity Analysis

In the proposed model, two parameters have significant effects on the performance: one is the ratio of the standard deviation of the added noise to that of the original data in the CEEMD strategy, and the other is the population size in the MOGWO. In this discussion, two comparisons were set up to verify whether the proposed model is robust within a certain range of these two parameters. In Comparison A, the CEEMD ratio was set to 0.3, 0.4, 0.5, 0.6, and 0.7, and the other parameters were the same as in the original experiments. In Comparison B, the population size was set to 10, 15, 20, 25, and 30, and the other parameters were the same as in the original experiments. Both Comparison A and Comparison B used Series 5 as the validation data, and the results are shown in Table 11.

The table shows that the model responded similarly to the parameter changes in Comparison A and Comparison B. As the ratio in the CEEMD or the population size in the MOGWO increased, the MAPE first decreased sharply, then decreased slowly, then increased slowly, and finally increased sharply. The other error metrics varied in essentially the same way as the MAPE, tracing a curve that resembles a quadratic function.

Based on these phenomena, it can be further concluded that the proposed model is stable within a certain parameter range. For example, for Series 5, the ratio should be between 0.4 and 0.6, and the population size should be between 15 and 25. Therefore, the proposed model has favorable robustness under certain conditions.
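One simple way to turn such a sweep into a parameter range is sketched below in Python: the values whose MAPE lies within a chosen tolerance of the best result are kept. The function name `stable_values`, the tolerance, and the MAPE numbers are hypothetical placeholders chosen only to mimic a U-shaped response; they are not the values in Table 11, and this selection rule is an assumption rather than the procedure used in this paper.

```python
def stable_values(mape_by_value, tolerance):
    """Parameter values whose MAPE is within `tolerance` points of the best MAPE."""
    best = min(mape_by_value.values())
    return sorted(value for value, mape in mape_by_value.items()
                  if mape - best <= tolerance)


# Placeholder MAPE values (in %) for illustration only; NOT taken from Table 11
placeholder_mape = {0.3: 1.40, 0.4: 1.05, 0.5: 1.00, 0.6: 1.08, 0.7: 1.45}

stable = stable_values(placeholder_mape, tolerance=0.10)
print(stable)
```

With a U-shaped response, the retained values form a contiguous interval around the minimum, and a tighter tolerance narrows that interval.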

6.6. Multistep Ahead Forecasting

In the field of electricity load forecasting, one-step ahead forecasting may not be sufficient for making adequate arrangements. Therefore, the proposed model was compared with the other models from Experiment III in two-step and three-step ahead forecasting on Series 1–7. The average results are shown in Table 12.

A comparison of Table 5 and Table 12 shows that, as the number of steps increased, the forecasting error metrics of almost all models increased to some degree. Among the comparison models, the minimum average MAPE reached 2.34632% in the two-step ahead forecasting and 2.70164% in the three-step ahead forecasting. By contrast, the proposed model obtained the minimum average values of all the error metrics over the seven datasets in multistep ahead forecasting. Its average MAPE was 1.25907% for the two-step ahead forecasting and 1.59609% for the three-step ahead forecasting. In addition, the proposed model showed markedly lower values of the other error metrics than the other models in multistep ahead forecasting.

Based on the above facts, it can be inferred that the proposed model can be used effectively in the multistep ahead forecasting of electricity load series. It is therefore reasonable to conclude that, owing to its structure and principles, the proposed model learns the characteristics of the data better than models built on other structures, and so it often achieves excellent forecasting performance.
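To make the notion of h-step ahead forecasting concrete, the following Python sketch builds input and target pairs in which each window of past values is paired with the value h steps after the end of the window. This direct pairing is an assumption made for illustration, since the multistep strategy used in the experiments is not restated here; the function name `make_samples` and the toy series are likewise illustrative.

```python
def make_samples(series, n_lags, horizon):
    """Pair each window of `n_lags` values with the value `horizon` steps ahead."""
    samples = []
    for start in range(len(series) - n_lags - horizon + 1):
        window = series[start:start + n_lags]
        # The target lies `horizon` steps after the last value in the window
        target = series[start + n_lags + horizon - 1]
        samples.append((window, target))
    return samples


# Toy series for illustration only
toy_series = [1, 2, 3, 4, 5, 6]
two_step = make_samples(toy_series, n_lags=3, horizon=2)
print(two_step)
```

For a fixed series length, a larger horizon leaves fewer usable samples, and the targets lie further from the information contained in each window.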