Adaptive Damping Control Strategy of Wind Integrated Power System

Abstract

1. Introduction

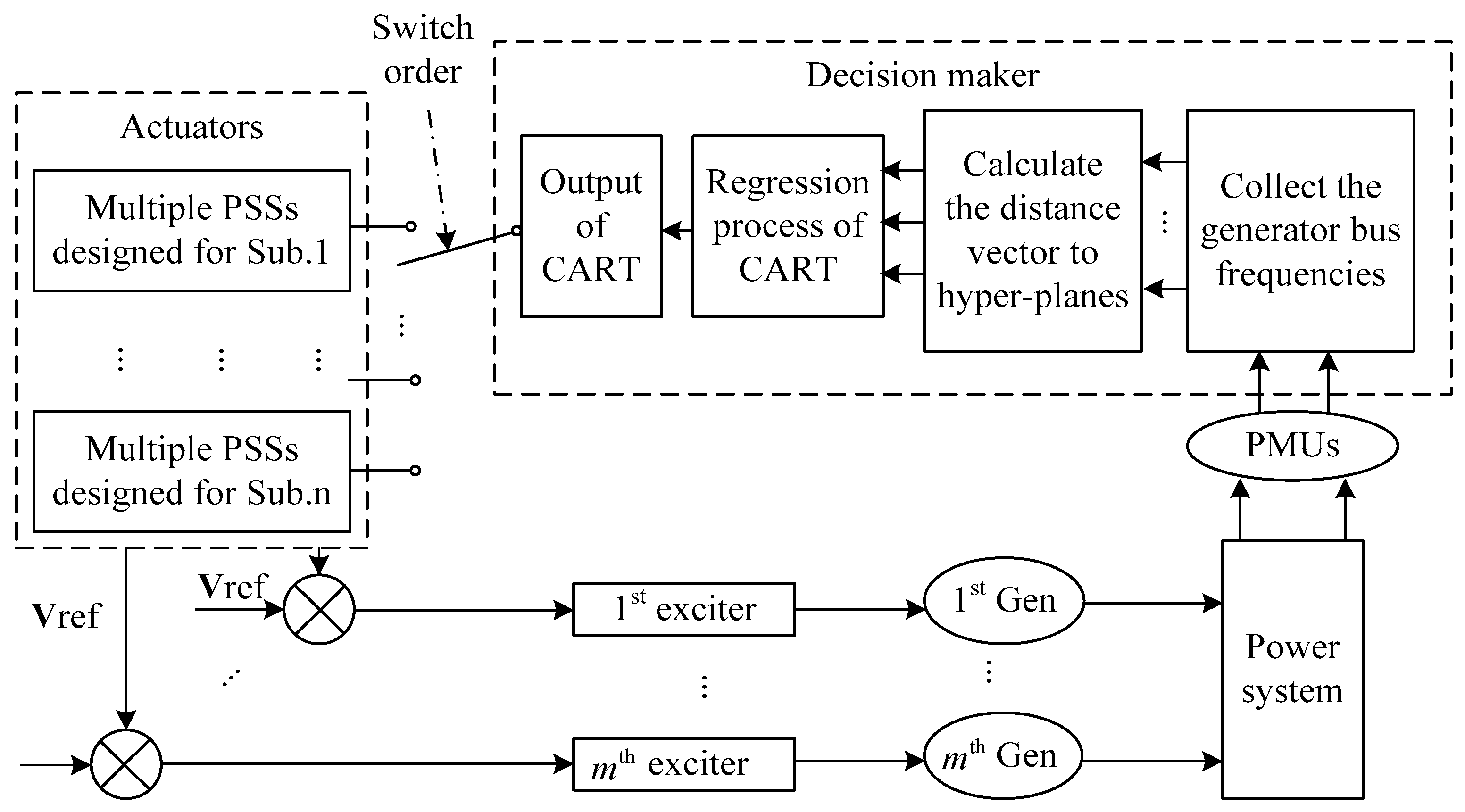

2. The CART-Based Adaptive Damping Control Scheme

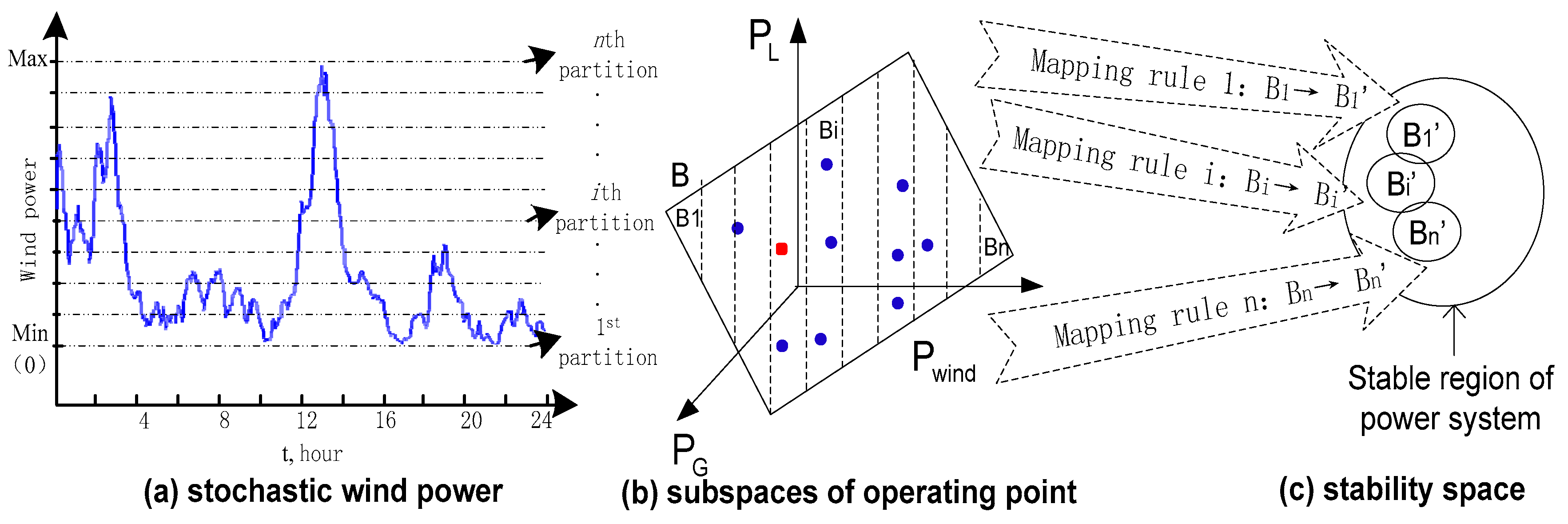

2.1. The Formation of Subspaces

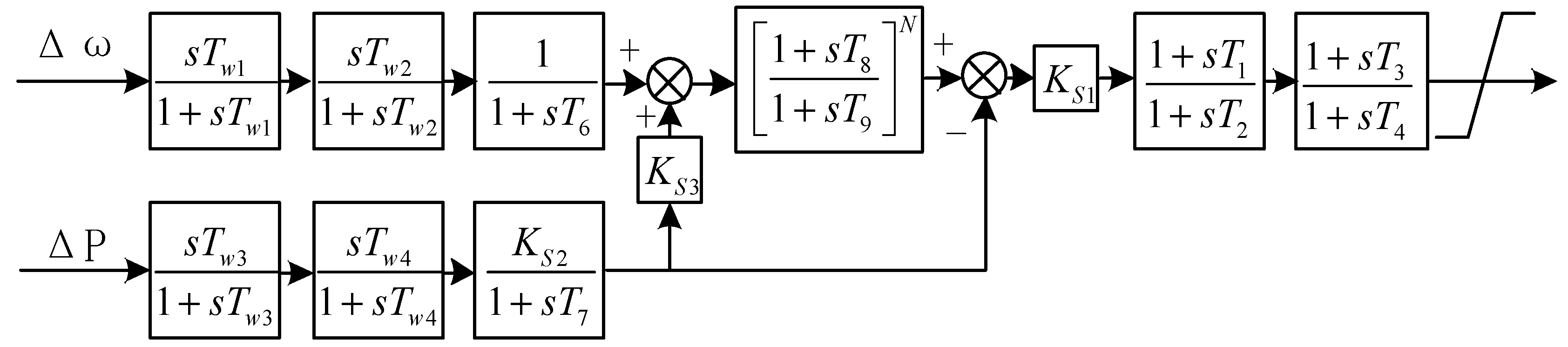

2.2. Coordinated Design of PSSs

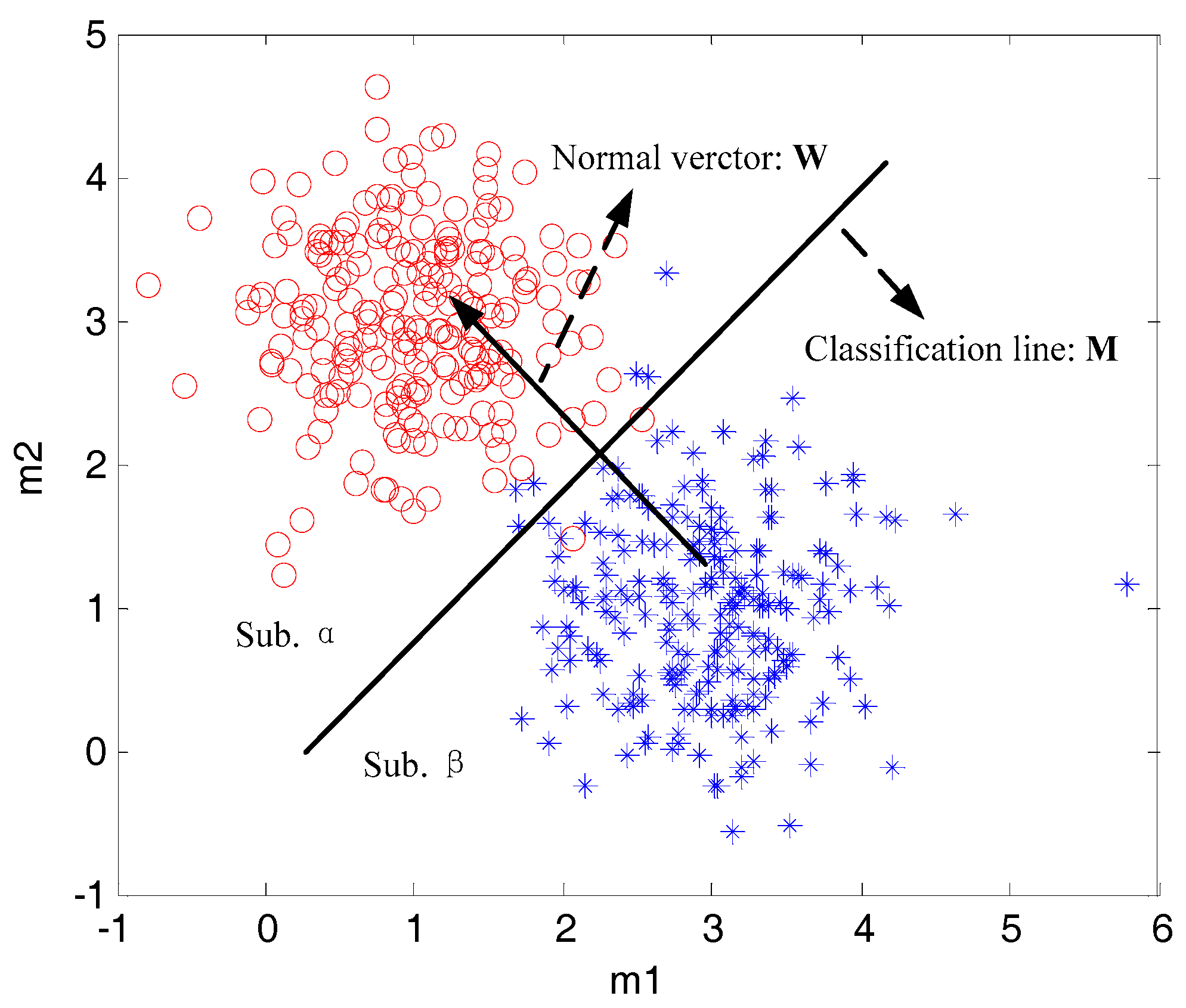

2.3. The CART-Based Adaptive Damping Control Scheme

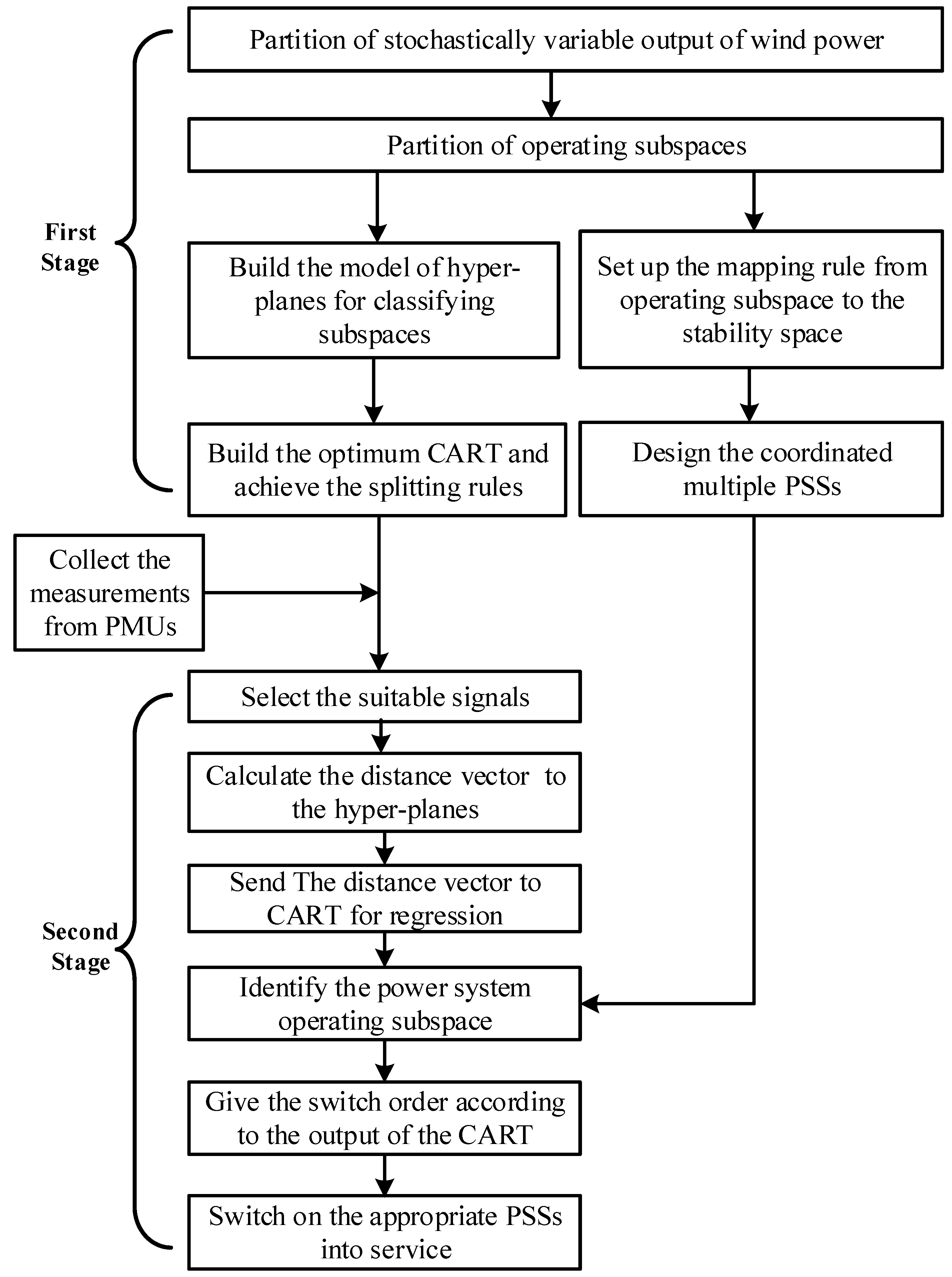

2.4. Design Procedure of Adaptive Control Scheme

3. Results and Analysis

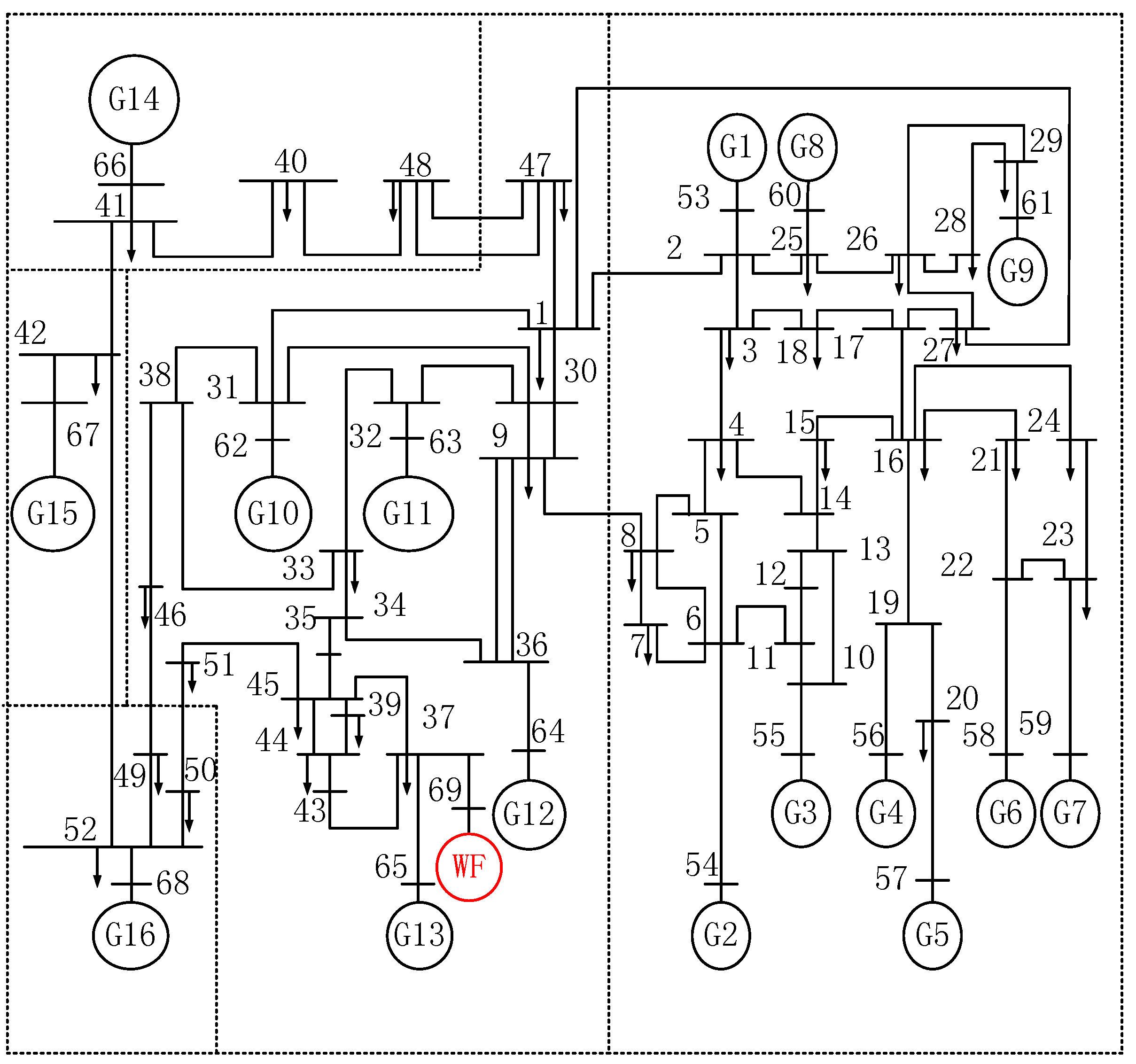

3.1. Test System

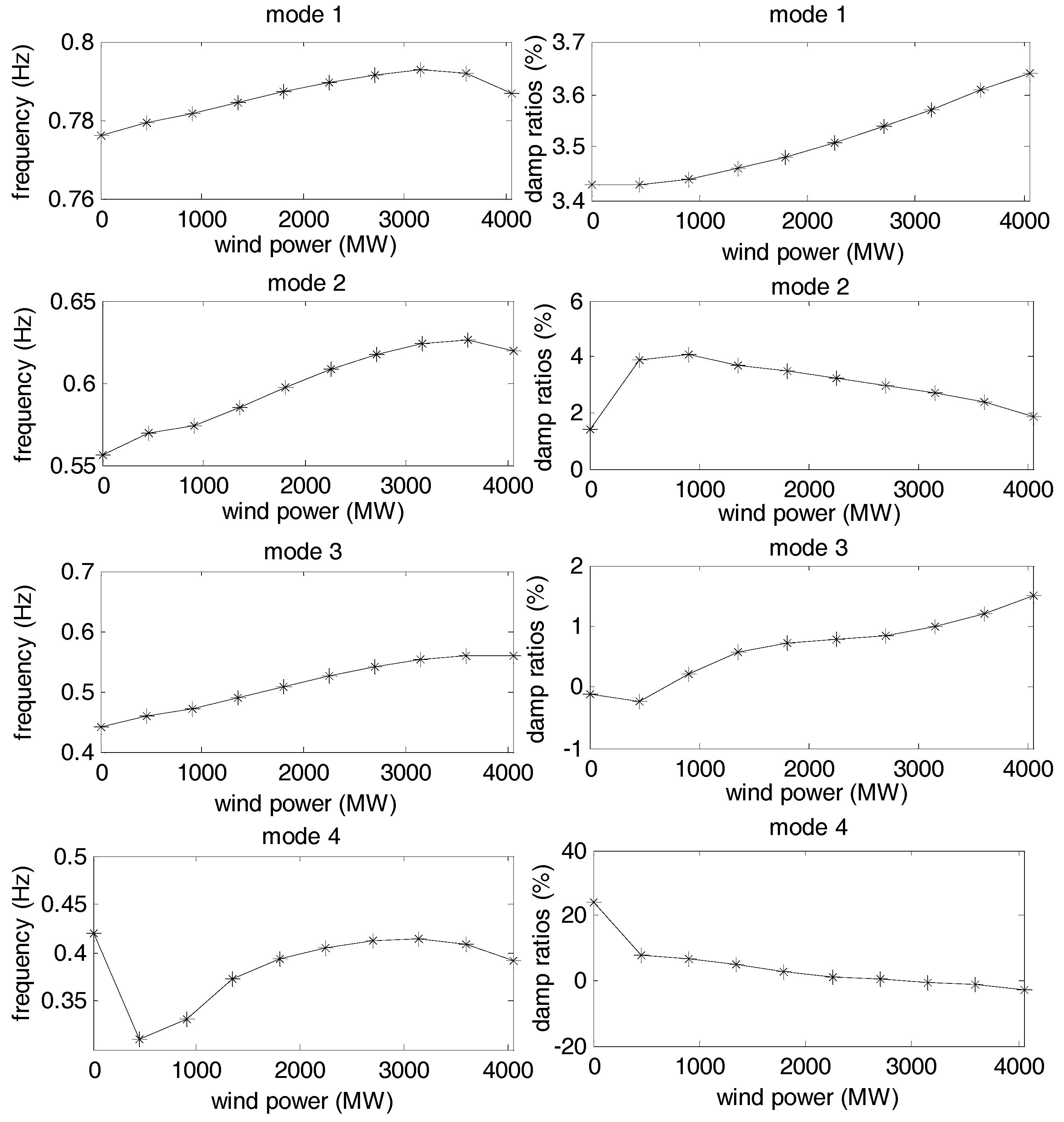

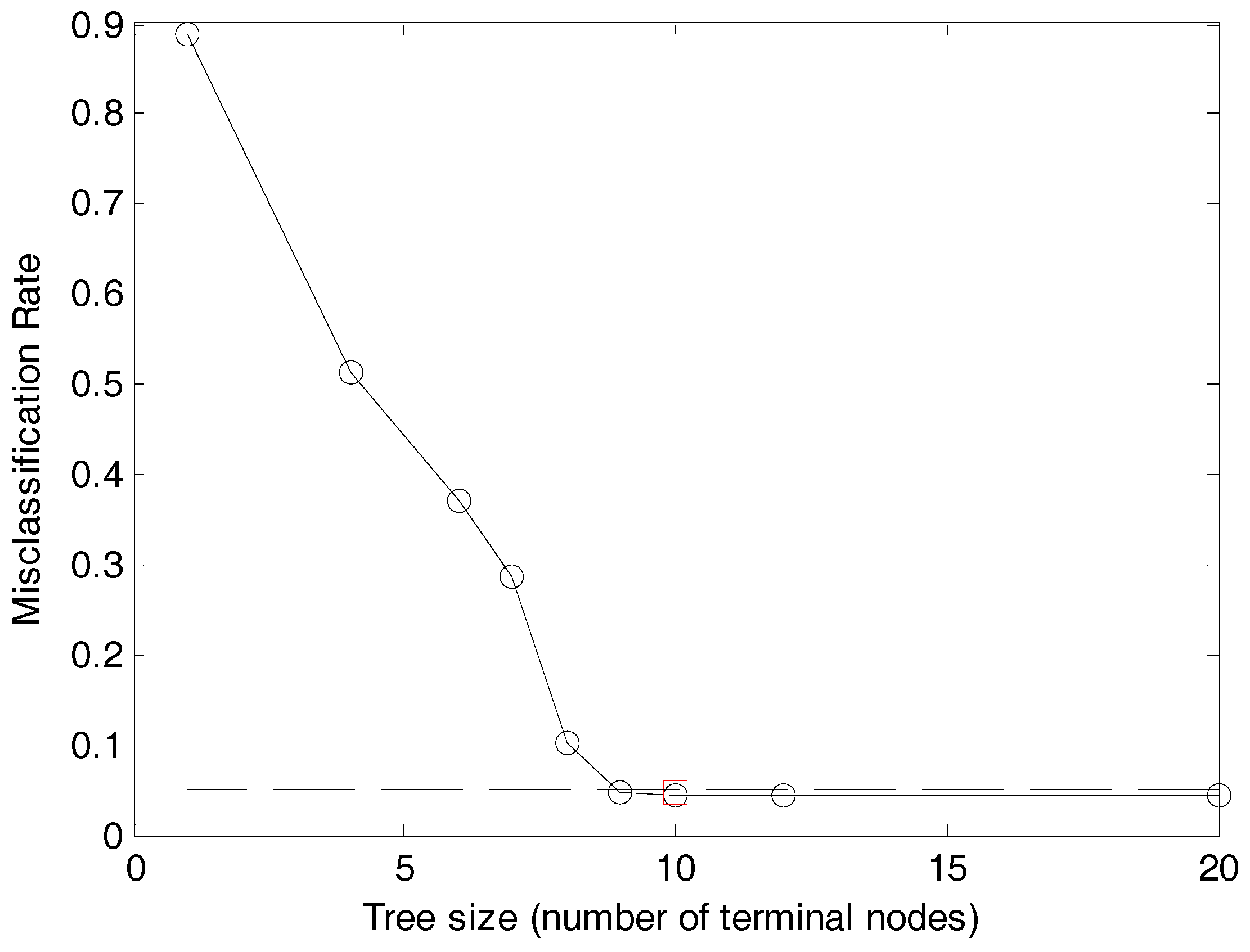

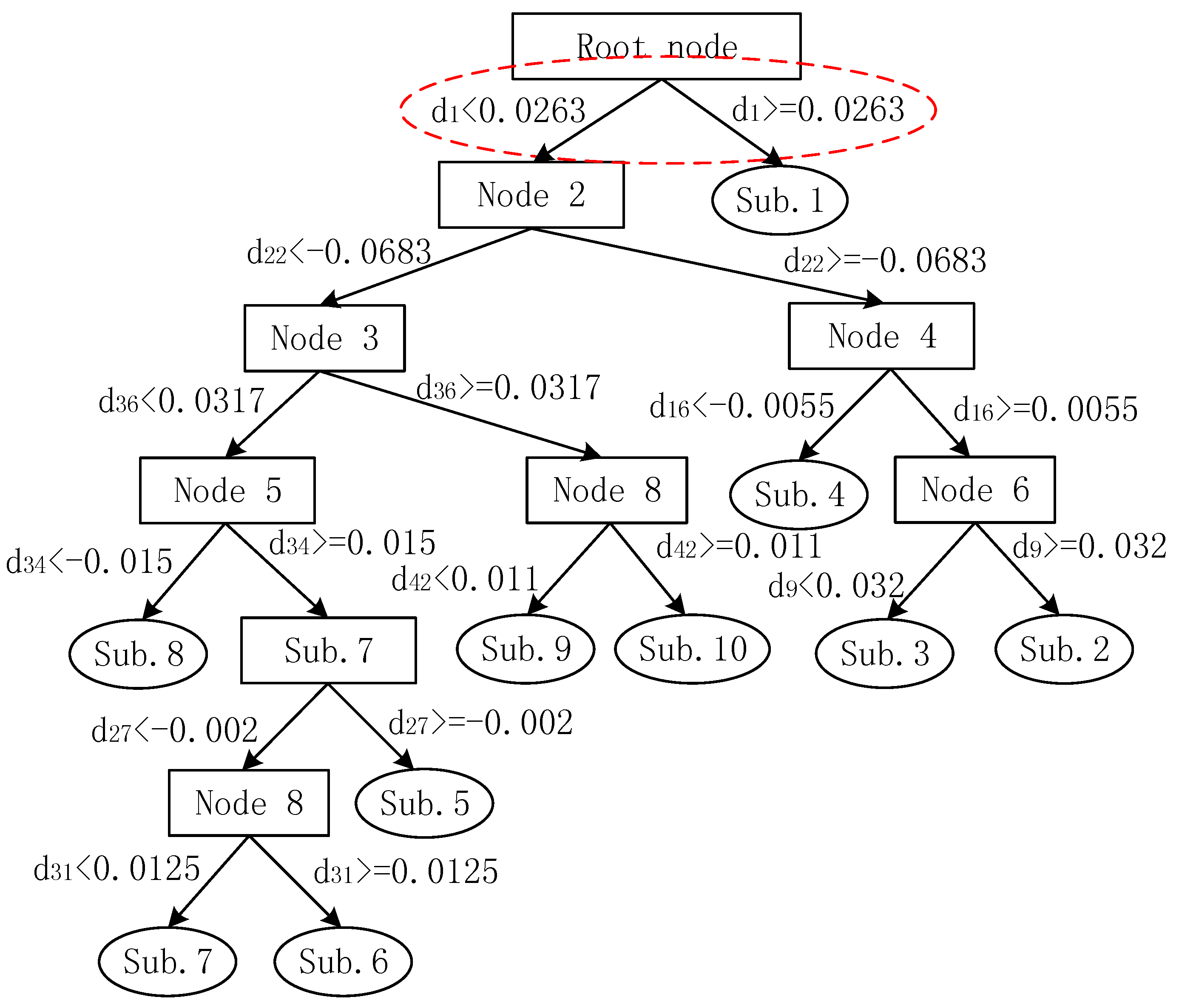

3.2. Formation of the CART

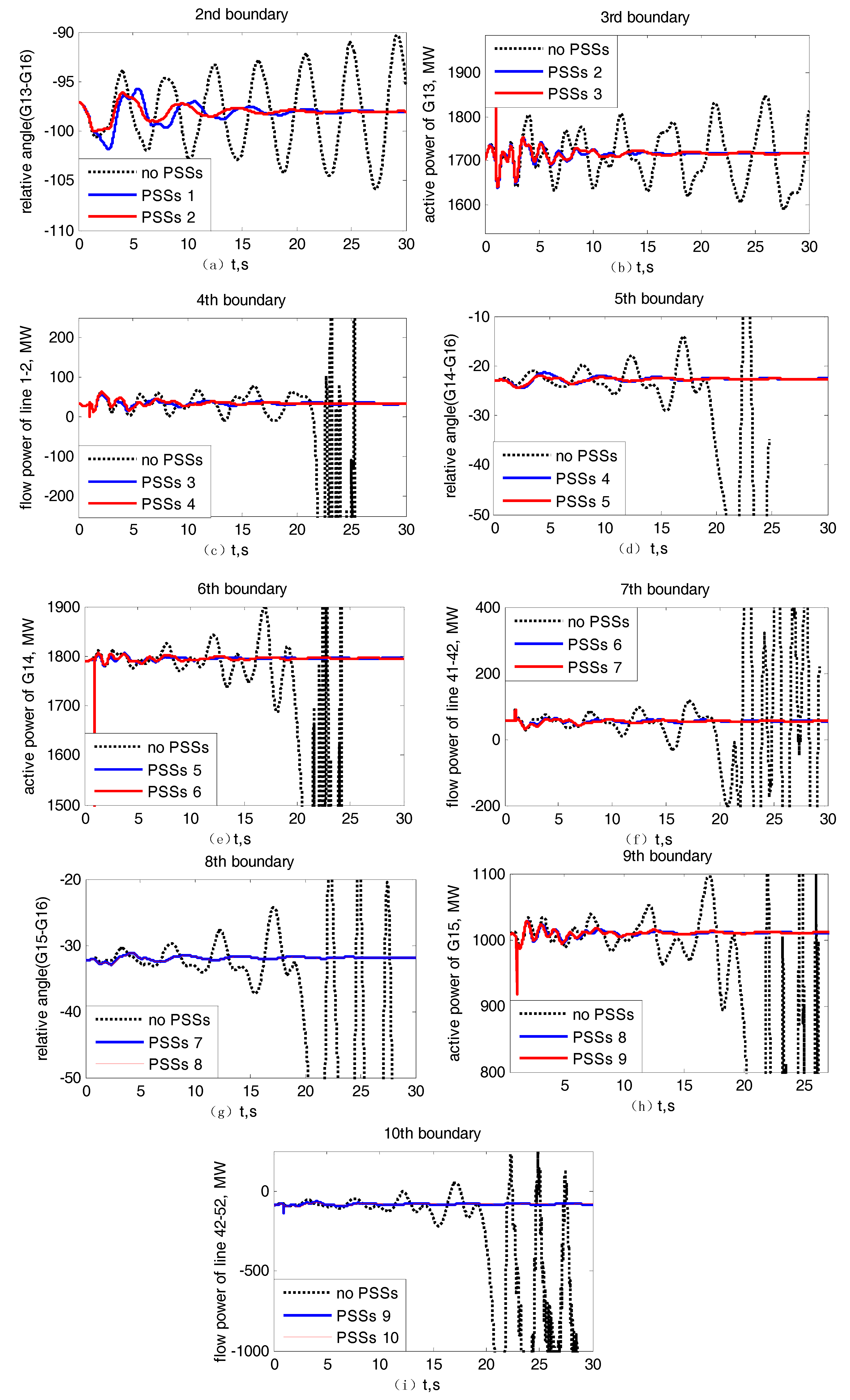

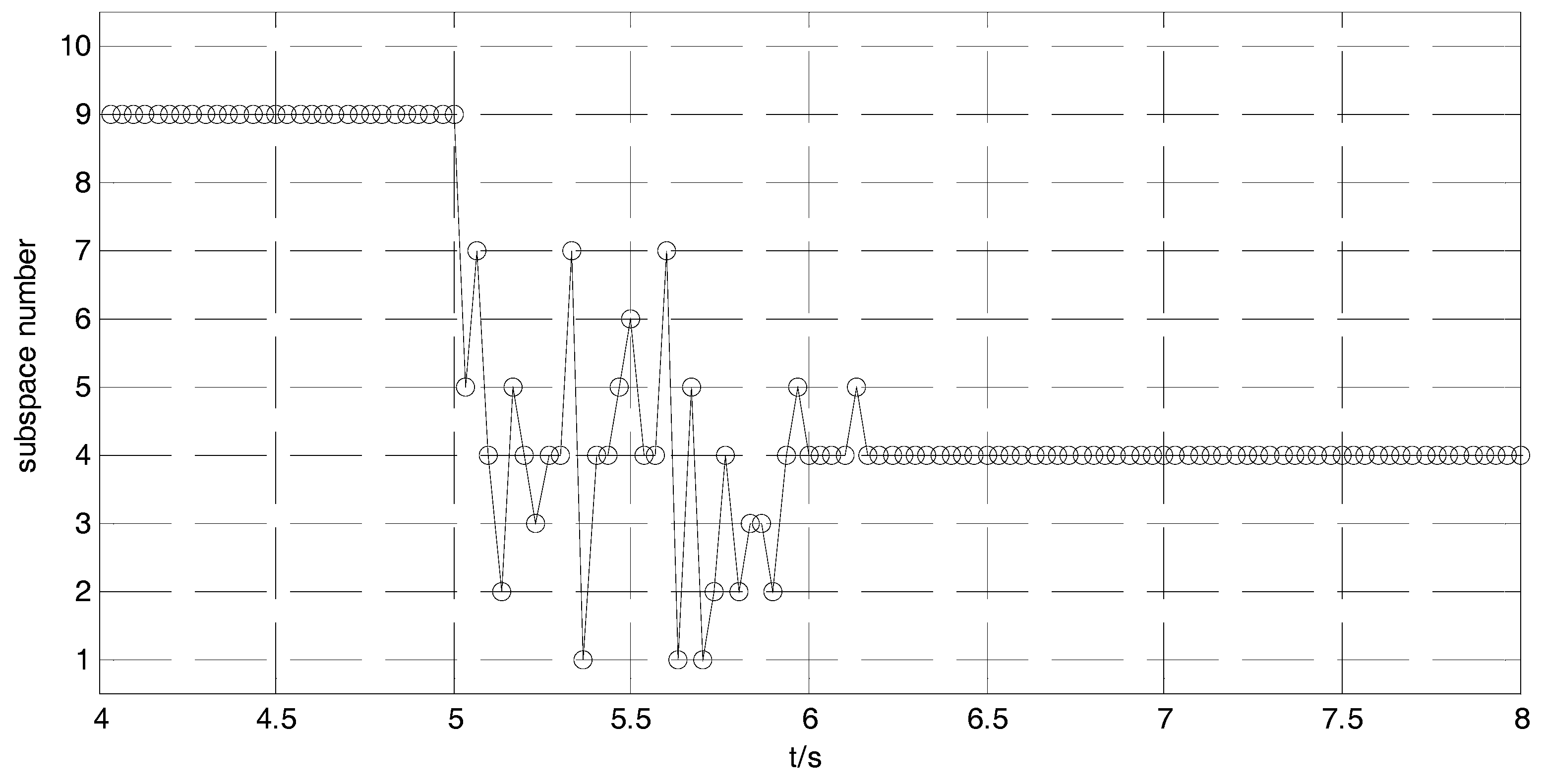

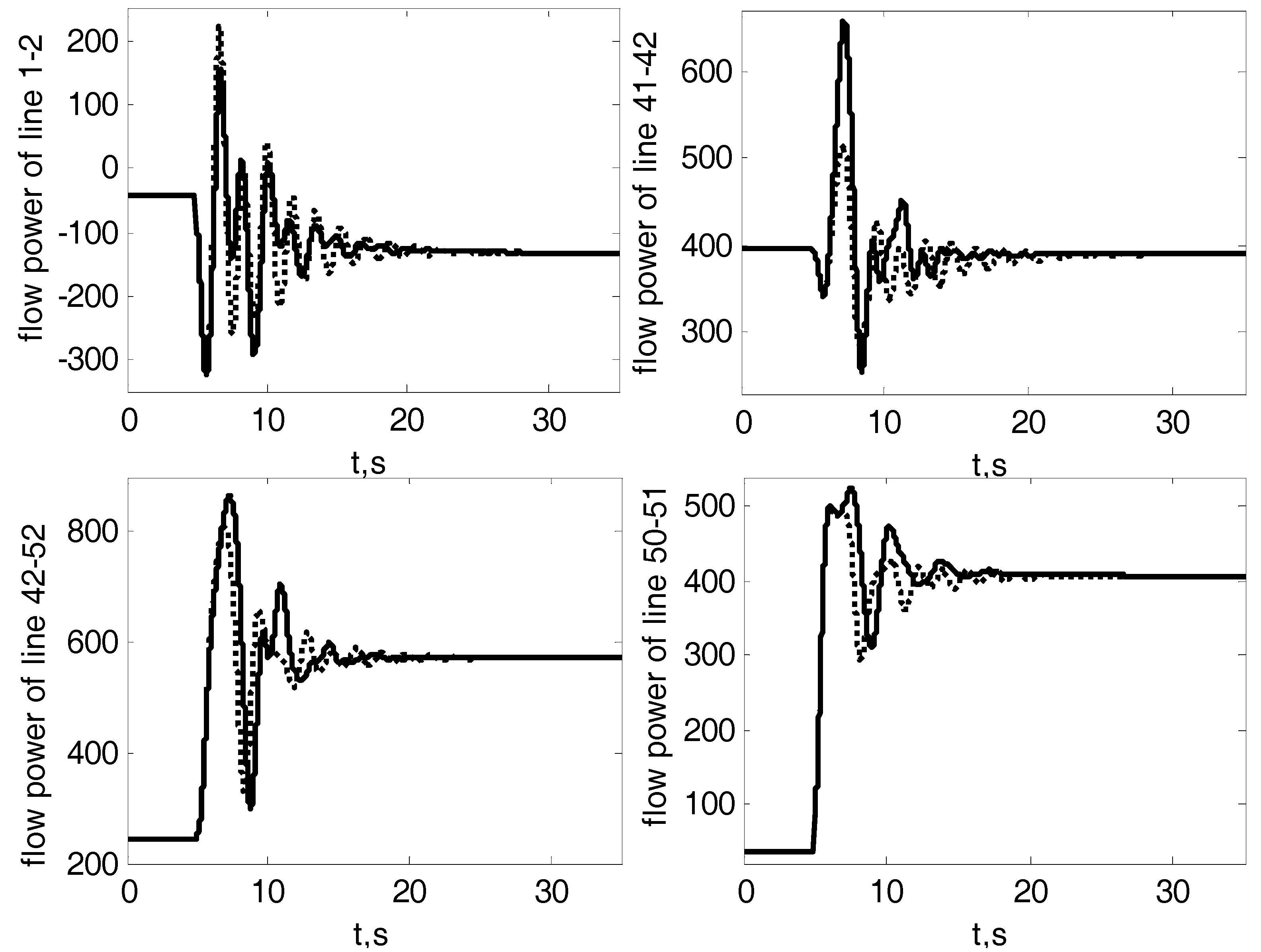

3.3. Simulation Results and Discussions

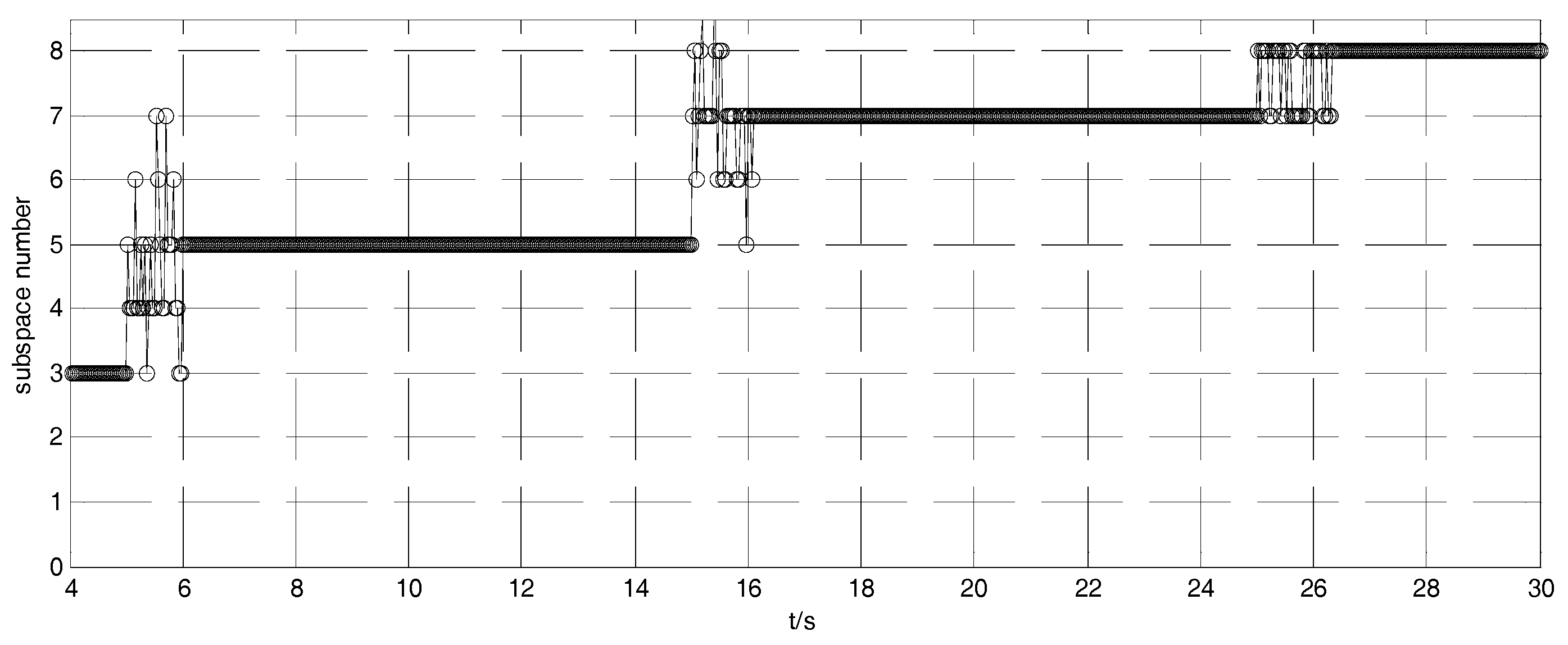

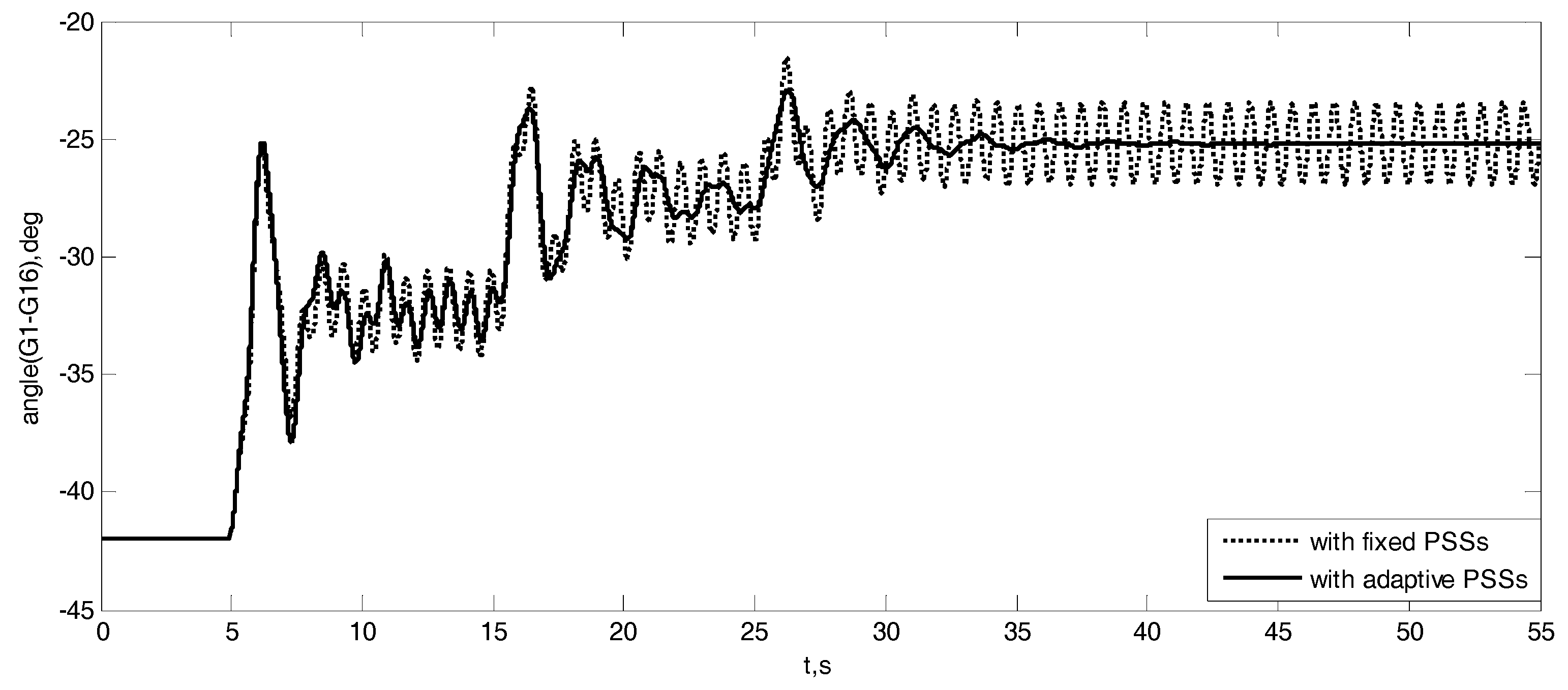

3.4. Test System with Multiple Wind Farms

4. Conclusions

Author Contributions

Funding

Conflicts of Interest

Nomenclature

| Symbol | Description |
|---|---|
|  | generator speed deviation, p.u. |
|  | electromagnetic power deviation, p.u. |
|  | time constants of washout blocks, s |
|  | number of low-pass filters |
|  | the gain of PSS |
|  | the measurements from circles |
|  | the measurements from stars |
|  | the means of measurements |
|  | the means of measurements |
|  | the covariance of measurements of subspaces |
|  | the covariance of measurements of subspaces |
| M | the classification line |
| W | the normal vector |
|  | the distance vector |
|  | the direction vector of hyper-plane |
|  | the middle point |
| f | frequency of oscillation mode, Hz |

| Subspace | Wind Power Outputs | Quantity | Mode 1 | Mode 2 | Mode 3 | Mode 4 |
|---|---|---|---|---|---|---|
| 1 | 0 | f (Hz) | 0.6752 | 0.6414 | 0.5621 | 0.3482 |
|  |  | damp (%) | 7.39 | 14.04 | 11.95 | 24.73 |
| 2 | 450 | f (Hz) | 0.6973 | 0.6463 | 0.5746 | 0.3839 |
|  |  | damp (%) | 10.81 | 13.47 | 12.94 | 22.6 |
| 3 | 900 | f (Hz) | 0.6917 | 0.6505 | 0.5895 | 0.4046 |
|  |  | damp (%) | 14.09 | 12.98 | 15.14 | 21.85 |
| 4 | 1350 | f (Hz) | 0.8942 | 0.6687 | 0.6175 | 0.5589 |
|  |  | damp (%) | 6.55 | 16.13 | 10.08 | 40.78 |
| 5 | 1800 | f (Hz) | 0.8441 | 0.5556 | 0.5513 | 0.4962 |
|  |  | damp (%) | 6.35 | 9.13 | 32.6 | 19.94 |
| 6 | 2250 | f (Hz) | 0.8455 | 0.5914 | 0.5811 | 0.5631 |
|  |  | damp (%) | 6.56 | 15.89 | 27.81 | 10.08 |
| 7 | 2700 | f (Hz) | 0.8469 | 0.6016 | 0.5727 | 0.5400 |
|  |  | damp (%) | 6.27 | 19.28 | 10.06 | 55.07 |
| 8 | 3150 | f (Hz) | 0.8473 | 0.6255 | 0.5771 | 0.5177 |
|  |  | damp (%) | 6.45 | 17.92 | 10.14 | 58.6 |
| 9 | 3600 | f (Hz) | 0.6511 | 0.6048 | 0.5452 | 0.3948 |
|  |  | damp (%) | 11.43 | 10.23 | 18.7 | 23.18 |
| 10 | 4048 | f (Hz) | 0.8572 | 0.6258 | 0.5738 | 0.4817 |
|  |  | damp (%) | 6.27 | 14.02 | 10.03 | 24.3 |
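The frequency and damping entries in the table above are the usual way of summarising an oscillatory eigenvalue. As a minimal sketch, assuming the standard small-signal definitions (for an eigenvalue σ ± jω, frequency f = ω/2π and damping ratio ζ = −σ/√(σ² + ω²)), the mapping and its inverse can be written as follows. The function names are illustrative and do not come from the article.

```python
import math


def mode_from_eigenvalue(sigma: float, omega: float) -> tuple[float, float]:
    """Return (frequency in Hz, damping ratio in %) for the eigenvalue sigma + j*omega."""
    freq_hz = abs(omega) / (2.0 * math.pi)
    damping_pct = -sigma / math.hypot(sigma, omega) * 100.0
    return freq_hz, damping_pct


def eigenvalue_from_mode(freq_hz: float, damping_pct: float) -> tuple[float, float]:
    """Inverse mapping: recover (sigma, omega) from a frequency and a damping ratio."""
    zeta = damping_pct / 100.0
    omega = 2.0 * math.pi * freq_hz
    sigma = -zeta * omega / math.sqrt(1.0 - zeta ** 2)
    return sigma, omega


# Subspace 1, mode 1 in the table: f = 0.6752 Hz, damping = 7.39 %
sigma, omega = eigenvalue_from_mode(0.6752, 7.39)
freq_hz, damping_pct = mode_from_eigenvalue(sigma, omega)
print(f"sigma = {sigma:.4f} 1/s, omega = {omega:.4f} rad/s")
print(f"f = {freq_hz:.4f} Hz, damping = {damping_pct:.2f} %")
```

A negative real part corresponds to a positive damping ratio, so every mode listed in the table is a decaying oscillation under these definitions.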

| Sub. No. | Mode 1 Gen. No. | Mode 1 PF | Mode 2 Gen. No. | Mode 2 PF | Mode 3 Gen. No. | Mode 3 PF | Mode 4 Gen. No. | Mode 4 PF |
|---|---|---|---|---|---|---|---|---|
| Sub. 1 | 15 | 1.00 | 13 | 1.00 | 14 | 1.00 | 13 | 1.00 |
|  | 14 | 0.37 | 9 | 0.19 | 16 | 0.49 | 9 | 0.28 |
| Sub. 2 | 15 | 1.00 | 13 | 1.00 | 14 | 1.00 | 13 | 1.00 |
|  | 14 | 0.38 | 9 | 0.20 | 16 | 0.68 | 14 | 0.19 |
| Sub. 3 | 15 | 1.00 | 13 | 1.00 | 14 | 1.00 | 13 | 1.00 |
|  | 14 | 0.38 | 9 | 0.27 | 16 | 0.82 | 16 | 0.21 |
| Sub. 4 | 15 | 1.00 | 13 | 1.00 | 16 | 1.00 | 13 | 1.00 |
|  | 14 | 0.39 | 9 | 0.29 | 14 | 0.97 | 16 | 0.23 |
| Sub. 5 | 15 | 1.00 | 13 | 1.00 | 16 | 1.00 | 13 | 1.00 |
|  | 14 | 0.39 | 9 | 0.29 | 14 | 0.78 | 15 | 0.38 |
| Sub. 6 | 15 | 1.00 | 13 | 1.00 | 16 | 1.00 | 13 | 1.00 |
|  | 14 | 0.39 | 9 | 0.28 | 14 | 0.67 | 15 | 0.61 |
| Sub. 7 | 15 | 1.00 | 13 | 1.00 | 16 | 1.00 | 13 | 1.00 |
|  | 14 | 0.39 | 9 | 0.27 | 14 | 0.61 | 14 | 0.87 |
| Sub. 8 | 15 | 1.00 | 13 | 1.00 | 16 | 1.00 | 14 | 1.00 |
|  | 14 | 0.39 | 9 | 0.28 | 14 | 0.58 | 15 | 0.95 |
| Sub. 9 | 15 | 1.00 | 13 | 1.00 | 16 | 1.00 | 14 | 1.00 |
|  | 14 | 0.39 | 9 | 0.29 | 14 | 0.59 | 15 | 1.00 |
| Sub. 10 | 15 | 1.00 | 13 | 1.00 | 16 | 1.00 | 15 | 1.00 |
|  | 14 | 0.40 | 9 | 0.35 | 14 | 0.62 | 14 | 0.68 |

| Subspace No. | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| Classified Sub. 1 | 492 | 10 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| Classified Sub. 2 | 8 | 478 | 8 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| Classified Sub. 3 | 0 | 11 | 481 | 10 | 0 | 0 | 0 | 0 | 0 | 0 |
| Classified Sub. 4 | 0 | 1 | 9 | 474 | 15 | 0 | 0 | 0 | 0 | 0 |
| Classified Sub. 5 | 0 | 0 | 0 | 15 | 468 | 15 | 0 | 0 | 0 | 0 |
| Classified Sub. 6 | 0 | 0 | 0 | 0 | 17 | 471 | 12 | 0 | 0 | 0 |
| Classified Sub. 7 | 0 | 0 | 0 | 0 | 0 | 13 | 479 | 13 | 0 | 0 |
| Classified Sub. 8 | 0 | 0 | 0 | 0 | 0 | 1 | 9 | 477 | 6 | 0 |
| Classified Sub. 9 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 10 | 488 | 5 |
| Classified Sub. 10 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 5 | 495 |
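The classification table above can be read as a confusion matrix. The following sketch transcribes it and computes summary figures, assuming that rows hold the subspace assigned by the classifier and columns hold the subspace named in the header; that orientation is an interpretation of the table and is not stated in it. The variable names are illustrative.

```python
# Rows: subspace assigned by the classifier ("Classified Sub. k").
# Columns: subspace number from the header row (assumed to be the true label).
confusion = [
    [492, 10, 0, 0, 0, 0, 0, 0, 0, 0],
    [8, 478, 8, 1, 0, 0, 0, 0, 0, 0],
    [0, 11, 481, 10, 0, 0, 0, 0, 0, 0],
    [0, 1, 9, 474, 15, 0, 0, 0, 0, 0],
    [0, 0, 0, 15, 468, 15, 0, 0, 0, 0],
    [0, 0, 0, 0, 17, 471, 12, 0, 0, 0],
    [0, 0, 0, 0, 0, 13, 479, 13, 0, 0],
    [0, 0, 0, 0, 0, 1, 9, 477, 6, 0],
    [0, 0, 0, 0, 0, 0, 0, 10, 488, 5],
    [0, 0, 0, 0, 0, 0, 0, 0, 5, 495],
]
n = len(confusion)

# Overall accuracy: share of all cases that sit on the diagonal.
correct = sum(confusion[i][i] for i in range(n))
total = sum(sum(row) for row in confusion)
overall_accuracy = correct / total

# Per-column accuracy: diagonal entry divided by the column total.
column_totals = [sum(row[j] for row in confusion) for j in range(n)]
per_subspace = [confusion[j][j] / column_totals[j] for j in range(n)]

# Largest index distance between an assigned subspace and its column label.
max_offset = max(
    abs(i - j) for i in range(n) for j in range(n) if confusion[i][j] > 0
)

print(f"correct = {correct}, total = {total}, accuracy = {overall_accuracy:.4f}")
print("per-subspace accuracy:", [round(a, 3) for a in per_subspace])
print("largest misclassification offset:", max_offset)
```

Under this reading, every misclassified case lands within two subspaces of the diagonal, that is, on a subspace with a neighbouring wind power output level.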

| Mode No. | Adaptive PSSs: Eigenanalysis f (Hz) | Adaptive PSSs: Eigenanalysis Damp (%) | Adaptive PSSs: Prony Analysis f (Hz) | Adaptive PSSs: Prony Analysis Damp (%) | Fixed PSSs: Eigenanalysis f (Hz) | Fixed PSSs: Eigenanalysis Damp (%) | Fixed PSSs: Prony Analysis f (Hz) | Fixed PSSs: Prony Analysis Damp (%) |
|---|---|---|---|---|---|---|---|---|
| Mode 1 | 0.5589 | 40.78 | 0.538 | 46.064 | 0.4978 | 30.46 | 0.479 | 20.145 |
| Mode 2 | 0.6175 | 10.08 | 0.617 | 10.412 | 0.5536 | 7.24 | 0.565 | 6.88 |
| Mode 3 | 0.6687 | 16.13 | 0.633 | 17.953 | 0.5986 | 15.20 | 0.614 | 12.989 |
| Mode 4 | 0.8942 | 6.55 | 0.854 | 6.666 | 0.8725 | 5.64 | 0.887 | 5.994 |

| Sub. No. | 1st WF Outputs | 2nd WF Outputs | 3rd WF Outputs |
|---|---|---|---|
| 1 | 450 | 450 | 450 |
| 2 | 450 | 450 | 900 |
| 3 | 450 | 900 | 450 |
| 4 | 450 | 900 | 900 |
| 5 | 900 | 450 | 450 |
| 6 | 900 | 450 | 900 |
| 7 | 900 | 900 | 450 |
| 8 | 900 | 900 | 900 |
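The eight rows of the multiple-wind-farm table are every combination of two output levels across three wind farms (2³ = 8), listed with the third wind farm varying fastest. A short sketch that reproduces this enumeration is given below; the variable names are illustrative, and the two levels are taken directly from the table.

```python
from itertools import product

levels = (450, 900)  # the two output levels that appear in the table
n_wind_farms = 3     # 1st, 2nd and 3rd WF columns

# product() varies the last position fastest, which matches the row order of the table.
subspaces = {
    number: outputs
    for number, outputs in enumerate(product(levels, repeat=n_wind_farms), start=1)
}

for number, outputs in subspaces.items():
    print(number, *outputs)
```

The number of subspaces grows as the number of levels raised to the number of wind farms, so adding a level or a wind farm enlarges the table quickly.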

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Deng, J.; Suo, J.; Yang, J.; Peng, S.; Chi, F.; Wang, T. Adaptive Damping Control Strategy of Wind Integrated Power System. Energies 2019, 12, 135. https://doi.org/10.3390/en12010135
