Measurement of Economic Forecast Accuracy: A Systematic Overview of the Empirical Literature

Abstract

1. Introduction

2. Methodology

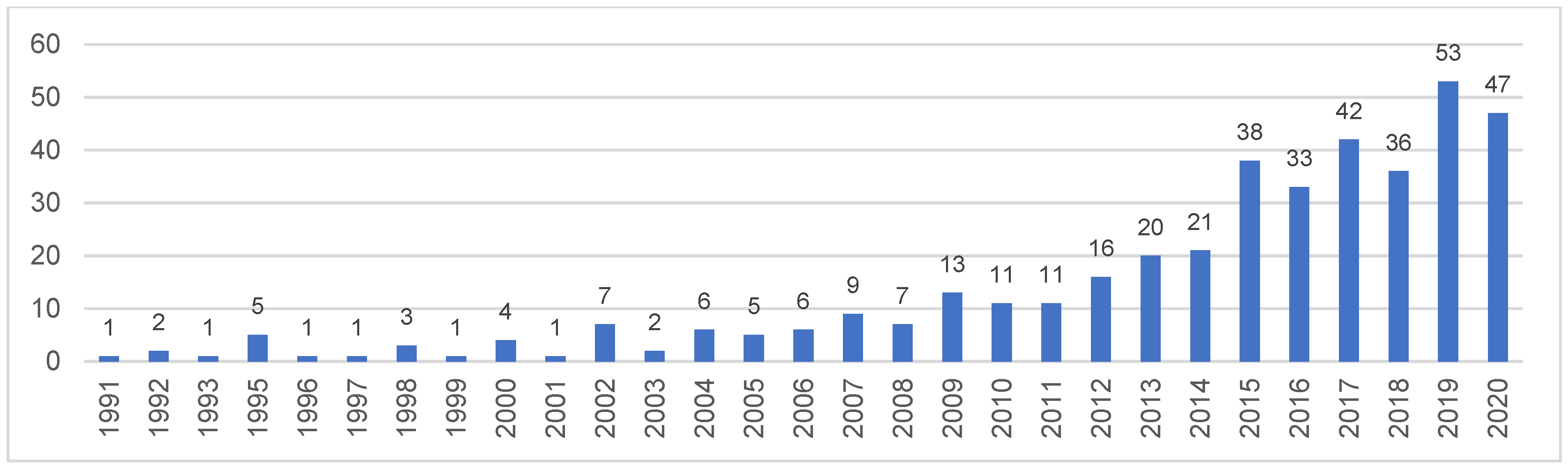

3. Citation-Based Analysis

4. Content Analysis

4.1. Theoretical Background

4.2. Methodology Development

4.2.1. Measures

4.2.2. Statistical Tests

The Morgan-Granger-Newbold (MGN) Test

- The actual values of the series.

- The two competing forecasts of those values.
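Given actual values and two competing forecasts, the textbook form of the MGN statistic can be sketched as follows. This is a minimal illustration, not necessarily the formulation used in this review: it assumes zero-mean, normally distributed, serially uncorrelated forecast errors, and the function name `mgn_statistic` is hypothetical. The sum and the difference of the two error series are correlated; under the null hypothesis of equal mean squared errors that correlation is zero, and the statistic is compared with a Student t distribution with T - 1 degrees of freedom.

```python
import math


def mgn_statistic(actual, forecast_1, forecast_2):
    """Return (statistic, degrees_of_freedom) for the textbook MGN test.

    Hypothetical helper for illustration; assumes zero-mean, normal,
    serially uncorrelated forecast errors.
    """
    # Forecast errors of the two competing forecasts.
    e1 = [y - f for y, f in zip(actual, forecast_1)]
    e2 = [y - f for y, f in zip(actual, forecast_2)]

    # Orthogonalizing transformation: sum and difference of the errors.
    x = [a + b for a, b in zip(e1, e2)]
    z = [a - b for a, b in zip(e1, e2)]
    n = len(x)

    # Uncentered sample correlation between x and z.
    s_xz = sum(a * b for a, b in zip(x, z))
    s_xx = sum(a * a for a in x)
    s_zz = sum(b * b for b in z)
    r = s_xz / math.sqrt(s_xx * s_zz)

    # Under H0 (equal MSE) the statistic follows a t distribution with n - 1 df.
    statistic = r / math.sqrt((1.0 - r * r) / (n - 1))
    return statistic, n - 1
```

A positive statistic indicates that the first forecast has the larger sum of squared errors; swapping the two forecasts only flips the sign.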

The Diebold-Mariano (DM) Test

- The actual data series.

- The ith competing h-step forecasting series.
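For an actual series and two competing h-step forecast series, the DM statistic can be sketched as below. This is an illustrative sketch under stated assumptions rather than a definitive implementation: squared-error loss is the default, the long-run variance of the loss differential is estimated with a rectangular window truncated at lag h - 1, and the p-value uses the asymptotic standard normal distribution. The name `dm_test` and its parameters are hypothetical.

```python
import math
from statistics import NormalDist


def dm_test(actual, forecast_1, forecast_2, h=1, loss=lambda e: e * e):
    """Return (statistic, two_sided_p_value) for a basic DM test.

    Hypothetical helper for illustration; `loss` maps a forecast error
    to its loss (squared error by default).
    """
    # Loss differential between the two competing forecasts.
    d = [loss(y - f1) - loss(y - f2)
         for y, f1, f2 in zip(actual, forecast_1, forecast_2)]
    n = len(d)
    d_bar = sum(d) / n

    def autocov(k):
        # Sample autocovariance of the loss differential at lag k.
        return sum((d[t] - d_bar) * (d[t - k] - d_bar)
                   for t in range(k, n)) / n

    # Long-run variance: rectangular window with truncation lag h - 1.
    lrv = autocov(0) + 2.0 * sum(autocov(k) for k in range(1, h))
    if lrv <= 0:
        raise ValueError("non-positive long-run variance estimate")

    statistic = d_bar / math.sqrt(lrv / n)
    p_value = 2.0 * (1.0 - NormalDist().cdf(abs(statistic)))
    return statistic, p_value
```

The rectangular window can produce a non-positive variance estimate in small samples, which is why the sketch raises an error in that case instead of returning a statistic.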

The Harvey-Leybourne-Newbold (HLN) Tests

- (1) Variations of the MGN test

- (2) Modifications of the Diebold-Mariano (DM) test
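The best-known HLN modification of the DM test is a small-sample correction of the statistic. The sketch below assumes the commonly cited form of that correction, in which the DM statistic is rescaled by a factor that depends on the sample size T and the forecast horizon h, and the result is compared with a Student t distribution with T - 1 degrees of freedom instead of the standard normal. The function name `hln_correction` is hypothetical, and the DM statistic is taken as a precomputed input.

```python
import math


def hln_correction(dm_statistic, n, h=1):
    """Return (corrected_statistic, degrees_of_freedom).

    Hypothetical helper for illustration: applies the small-sample
    correction factor to a precomputed DM statistic, where n is the
    number of forecasts and h is the forecast horizon.
    """
    # Correction factor: sqrt((n + 1 - 2h + h(h - 1)/n) / n).
    factor = math.sqrt((n + 1 - 2 * h + h * (h - 1) / n) / n)
    # The corrected statistic is compared with a t distribution, n - 1 df.
    return factor * dm_statistic, n - 1
```

For one-step-ahead forecasts the factor reduces to the square root of (n - 1)/n, so the correction matters mainly in short evaluation samples and at longer horizons.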

4.2.3. Strategies

4.3. Empirical Findings

5. Conclusions

Funding

Informed Consent Statement

Conflicts of Interest


| Phase | Aim(s) | Guideline Questions |
|---|---|---|
| Phase 1: Designing the review | Research questions identified. Overall review approach considered. Research strategy established to identify relevant literature. | |
| Phase 2: Conducting the review | Articles selected, classified, and described. | |
| Phase 3: Analysis | Content analysis of selected research articles performed. | |
| Phase 4: Writing the review | Literature review reported and structured. | |

| Inclusion Criteria | Description |
|---|---|
| Theoretical framework | Include all articles that contribute to the development of a theoretical framework on the research topic. |
| Methodology development | Include all studies that contribute to the development of methodology for analyzing the accuracy of economic forecasts. |
| Empirical findings | Include all articles that apply the analyzed methods in empirical research. |

| Document Type | Number of Research Works | % of the Total |
|---|---|---|
| Articles | 340 | 84.4 |
| Proceeding Papers | 51 | 12.7 |
| Review Articles | 6 | 1.5 |
| Book Chapters | 2 | 0.5 |
| Editorial Materials | 2 | 0.5 |
| Early Access | 1 | 0.2 |
| Reprints | 1 | 0.2 |
| Total | 403 | 100.0 |

| Serial Number | Title of the Journal | Number of Articles | Average Number of Citations Per Year from the Web of Science Core Collection |
|---|---|---|---|
| 1 | International Journal of Forecasting | 15 | 28.4 |
| 2 | Journal of Forecasting | 13 | 10.04 |
| 3 | Economic Modelling | 8 | 19.88 |
| 4 | Energies | 8 | 10.63 |
| 5 | Journal of Business & Economic Statistics | 8 | 148.62 |
| 6 | Romanian Journal of Economic Forecasting | 6 | 1.1 |
| 7 | Applied Energy | 5 | 46.25 |
| 8 | Empirical Economics | 5 | 2.21 |
| 9 | Energy | 5 | 12 |
| 10 | Journal of Econometrics | 5 | 9 |
| 11 | Journal of Empirical Finance | 4 | 2.6 |
| 12 | Quantitative Finance | 4 | 3.4 |
| 13 | Technological Forecasting and Social Change | 4 | 4.55 |
| 14 | Computational Economics | 3 | 3 |
| 15 | European Journal of Operational Research | 3 | 6 |
| 16 | Journal of Applied Econometrics | 3 | 9.8 |
| 17 | Journal of Banking & Finance | 3 | 4.71 |
| 18 | Journal of Economic Behavior & Organization | 3 | 9.7 |
| 19 | Journal of Economic Surveys | 3 | 6.32 |
| 20 | Journal of Financial Economic Policy | 3 | 1.1 |
| 21 | Renewable Energy | 3 | 11.71 |
| 22 | Review of Accounting Studies | 3 | 4.44 |
| 23 | Science of the Total Environment | 3 | 21.67 |
| 24 | Sustainability | 3 | 7 |
| 25 | Water | 3 | 17.6 |

| No. | Title of the Paper | Author(s) | Number of Citations | Average Number of Citations Per Year | Year of Publication | Journal |
|---|---|---|---|---|---|---|
| 1 | Comparing Predictive Accuracy | Diebold, F.X.; Mariano, R.S. | 3340 | 128.46 | 1995 | Journal of Business & Economic Statistics |
| 2 | Error Measures for Generalizing About Forecasting Methods-Empirical Comparisons | Armstrong, J.S.; Collopy, F. | 637 | 21.97 | 1992 | International Journal of Forecasting |
| 3 | Economic and statistical measures of forecast accuracy | Granger, C.W.J.; Pesaran, M.H. | 183 | 7.96 | 2000 | Journal of Forecasting |
| 4 | Review of guidelines for the use of combined forecasts | de Menezes, L.M.; Bunn, D.W.; Taylor, J.W. | 122 | 5.81 | 2000 | European Journal of Operational Research |
| 5 | A Model-Selection Approach to Assessing The Information in the Term Structure Using Linear-Models and Artificial Neural Networks | Swanson, N.R.; White, H. | 122 | 4.69 | 1995 | Journal of Business & Economic Statistics |
| 6 | Can Internet Search Queries Help to Predict Stock Market Volatility? | Dimpfl, T.; Jank, S. | 113 | 22.60 | 2016 | European Financial Management |
| 7 | Macroeconomic forecasts and microeconomic forecasters | Lamont, O.A. | 99 | 5.21 | 2002 | Journal of Economic Behaviour & Organization |
| 8 | The state of macroeconomic forecasting | Fildes, R.; Stekler, H. | 81 | 4.26 | 2002 | Journal of Macroeconomics |
| 9 | Cointegration and long-horizon forecasting | Christoffersen, P.F.; Diebold, F.X. | 73 | 3.17 | 1998 | Journal of Business & Economic Statistics |
| 10 | How does Google search affect trader positions and crude oil prices? | Li, X.; Ma, J.; Wang, S.; Zhang, X. | 64 | 10.67 | 2015 | Economic Modelling |
| 11 | The M3 competition: Statistical tests of the results | Koning, A.J.; Franses, P.H.; Hibon, M.; Stekler, H.O. | 54 | 3.38 | 2005 | International Journal of Forecasting |
| 12 | Backtesting Parametric Value-at-Risk With Estimation Risk | Escanciano, J.C.; Olmo, J. | 54 | 4.91 | 2010 | Journal of Business & Economic Statistics |
| 13 | Credit Spreads as Predictors of Real-Time Economic Activity: A Bayesian Model-Averaging Approach | Faust, J.; Gilchrist, S.; Wright, J.H.; Zakrajsek, E. | 48 | 6.00 | 2013 | Review of Economics and Statistics |
| 14 | Tests of Equal Predictive Ability With Real-Time Data | Clark, T.E.; McCracken, M.W. | 40 | 3.33 | 2009 | Journal of Business & Economic Statistics |
| 15 | Do investor expectations affect sell-side analysts’ forecast bias and forecast accuracy? | Walther, B.R.; Willis, R.H. | 39 | 4.88 | 2013 | Review of Accounting Studies |
| 16 | Time-varying combinations of predictive densities using nonlinear filtering | Billio, M.; Casarin, R.; Ravazzolo, F.; van Dijk, H.K. | 39 | 4.88 | 2013 | Journal of Econometrics |
| 17 | Forecast Uncertainty-Ex Ante and Ex Post: US Inflation and Output Growth | Clements, M.P. | 37 | 5.29 | 2014 | Journal of Business & Economic Statistics |
| 18 | Improving the predictability of the oil-US stock nexus: The role of macroeconomic variables | Salisu, A.A.; Swaray, R.; Oloko, T.F. | 36 | 18.00 | 2019 | Economic Modelling |
| 19 | The Measurement and Behavior of Uncertainty: Evidence from the ECB Survey of Professional Forecasters | Abel, J.; Rich, R.; Song, J.; Tracy, J. | 29 | 5.80 | 2016 | Journal of Applied Econometrics |
| 20 | Generalised density forecast combinations | Kapetanios, G.; Mitchell, J.; Price, S.; Fawcett, N. | 21 | 3.50 | 2015 | Journal of Econometrics |
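The per-year averages for several rows of the table above are consistent with dividing the total number of citations by the number of years between publication and 2021. The source does not state this rule, so the sketch below treats both the rule and the reference year (`REFERENCE_YEAR`, a hypothetical name) as assumptions and checks them against three rows only.

```python
# Assumption: averages are total citations divided by (2021 - publication year).
# The source does not state the rule; 2021 is inferred from the reported figures.
REFERENCE_YEAR = 2021

# (label, total citations, year of publication), copied from the table above.
papers = [
    ("Diebold & Mariano", 3340, 1995),
    ("Armstrong & Collopy", 637, 1992),
    ("Dimpfl & Jank", 113, 2016),
]


def citations_per_year(citations, year, reference_year=REFERENCE_YEAR):
    """Average citations per year under the assumed rule, rounded to 2 decimals."""
    return round(citations / (reference_year - year), 2)


averages = {name: citations_per_year(c, y) for name, c, y in papers}

for name, value in averages.items():
    print(f"{name}: {value}")
```

Under this assumed rule, recently published papers can rank high on the per-year average despite a modest citation total, because the denominator is small.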

| Measure | Symbol | Calculation | Explanation of Variables |
|---|---|---|---|
| Scale-Dependent Measures | | | |
| Mean Square Error | MSE | $\text{mean}(e_t^2)$ | $e_t$ denotes the forecast error. It is defined by the equation $e_t = Y_t - F_t$, where $Y_t$ denotes the observation at time $t$ and $F_t$ denotes the forecast of $Y_t$. |
| Root Mean Square Error | RMSE | $\sqrt{\text{MSE}}$ | |
| Mean Absolute Error | MAE | $\text{mean}(\lvert e_t \rvert)$ | |
| Median Absolute Error | MdAE | $\text{median}(\lvert e_t \rvert)$ | |
| Measures Based on Percentage Error | | | |
| Mean Absolute Percentage Error | MAPE | $\text{mean}(\lvert p_t \rvert)$ | The percentage error is the ratio between the forecast error and the observed value: $p_t = 100\, e_t / Y_t$. The advantage of percentage errors is scale independence, which makes them very common in the analysis of forecast performance across different datasets. |
| Median Absolute Percentage Error | MdAPE | $\text{median}(\lvert p_t \rvert)$ | |
| Root Mean Square Percentage Error | RMSPE | $\sqrt{\text{mean}(p_t^2)}$ | |
| Root Median Square Percentage Error | RMdSPE | $\sqrt{\text{median}(p_t^2)}$ | |
| Measures Based on Relative Errors | | | |
| Mean Relative Absolute Error | MRAE | $\text{mean}(\lvert r_t \rvert)$ | $r_t = e_t / e_t^*$ is the relative error, where $e_t^*$ denotes the forecast error obtained from the benchmark method. Usually, the benchmark method is the random walk, where $F_t$ is equal to the last observation. |
| Median Relative Absolute Error | MdRAE | $\text{median}(\lvert r_t \rvert)$ | |
| Geometric Mean Relative Absolute Error | GMRAE | $\text{gmean}(\lvert r_t \rvert)$ | |
| Relative Measures | | | |
| Relative Mean Absolute Error | RelMAE | $\text{MAE} / \text{MAE}_b$ | Relative measures can be used instead of relative errors. In the calculation of RelMAE (Relative Mean Absolute Error), $\text{MAE}_b$ denotes the MAE from the benchmark method. When the benchmark method is a random walk and the forecasts are all one-step forecasts, the relative RMSE is Theil’s U statistic (Theil 1966), sometimes called U2. |
| Theil’s U statistic (1) | U1 | | |
| Theil’s U statistic (2) | U2 | | |
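The measures named in the table above can be sketched in a few lines of Python. This is a minimal illustration and not the author's implementation: the formulas are the standard textbook definitions implied by each measure's name, the function names and the sample series are hypothetical, and the benchmark is assumed to be a one-step random walk (each forecast equals the previous observation).

```python
import math
import statistics


def forecast_errors(actual, forecast):
    """Forecast errors e_t = Y_t - F_t."""
    return [y - f for y, f in zip(actual, forecast)]


def scale_dependent(actual, forecast):
    """MSE, RMSE, MAE and MdAE, all on the scale of the data."""
    e = forecast_errors(actual, forecast)
    mse = statistics.fmean([x * x for x in e])
    return {
        "MSE": mse,
        "RMSE": math.sqrt(mse),
        "MAE": statistics.fmean([abs(x) for x in e]),
        "MdAE": statistics.median([abs(x) for x in e]),
    }


def percentage_based(actual, forecast):
    """Measures built on p_t = 100 * e_t / Y_t (undefined when Y_t == 0)."""
    p = [100.0 * (y - f) / y for y, f in zip(actual, forecast)]
    return {
        "MAPE": statistics.fmean([abs(x) for x in p]),
        "MdAPE": statistics.median([abs(x) for x in p]),
        "RMSPE": math.sqrt(statistics.fmean([x * x for x in p])),
        "RMdSPE": math.sqrt(statistics.median([x * x for x in p])),
    }


def relative_error_based(actual, forecast, benchmark):
    """Measures built on r_t = e_t / e_t*; fails if a benchmark error is zero."""
    e = forecast_errors(actual, forecast)
    e_star = forecast_errors(actual, benchmark)
    r = [abs(a / b) for a, b in zip(e, e_star)]
    return {
        "MRAE": statistics.fmean(r),
        "MdRAE": statistics.median(r),
        # geometric_mean needs strictly positive values (no zero forecast errors).
        "GMRAE": statistics.geometric_mean(r),
    }


def rel_mae(actual, forecast, benchmark):
    """RelMAE = MAE / MAE_b, where MAE_b comes from the benchmark forecasts."""
    mae = scale_dependent(actual, forecast)["MAE"]
    mae_b = scale_dependent(actual, benchmark)["MAE"]
    return mae / mae_b


# Hypothetical data for illustration only.
last_observation_before_sample = 95.0
actual = [100.0, 110.0, 120.0, 130.0]
forecast = [98.0, 113.0, 118.0, 135.0]
# Random-walk benchmark: each forecast equals the previous observation.
benchmark = [last_observation_before_sample] + actual[:-1]

results = {}
results.update(scale_dependent(actual, forecast))
results.update(percentage_based(actual, forecast))
results.update(relative_error_based(actual, forecast, benchmark))
results["RelMAE"] = rel_mae(actual, forecast, benchmark)

for name, value in results.items():
    print(f"{name}: {value:.4f}")
```

Theil's U1 and U2 are left out of the sketch because several variants of their formulas circulate and the table does not fix one.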

| Measure | Symbol | Advantages | Limitations |
|---|---|---|---|
| Scale-Dependent Measures | | | |
| Mean Square Error | MSE | The RMSE is often preferred to the MSE because it is on the same scale as the data. Historically, the RMSE and MSE have been popular, largely because of their theoretical relevance in statistical modeling. The RMSE is useful as a relative measure to compare forecasts for the same series across different models: the smaller the error, the better the forecasting ability of that model according to the RMSE criterion. The mean absolute error (MAE) is less sensitive to large deviations than the usual squared loss. | Scale-dependent measures are on the same scale as the data. Therefore, none of them is meaningful for assessing a method’s accuracy across multiple series. The sensitivity of the RMSE to outliers is the most commonly cited limitation of this measure. |
| Root Mean Square Error | RMSE | | |
| Mean Absolute Error | MAE | | |
| Median Absolute Error | MdAE | | |
| Measures Based on Percentage Error | | | |
| Mean Absolute Percentage Error | MAPE | Measures based on percentage errors have the advantage of being scale-independent. Therefore, they are frequently used to compare forecast accuracy between different data series. Additionally, these measures are easy to interpret. In this group, the Mean Absolute Percentage Error (MAPE) is the most widely applied measure. | These measures can produce infinite or undefined errors if zero values occur in the data. Moreover, percentage errors can have an extremely skewed distribution when the actual values are close to zero. |
| Median Absolute Percentage Error | MdAPE | | |
| Root Mean Square Percentage Error | RMSPE | | |
| Root Median Square Percentage Error | RMdSPE | | |
| Measures Based on Relative Errors | | | |
| Mean Relative Absolute Error | MRAE | Measures based on relative errors are an alternative to percentage errors for the calculation of scale-independent measures. They involve dividing each error by the error obtained using some benchmark method of forecasting. Since these measures are not scale-dependent, they were recommended by Armstrong and Collopy (1992) and by Fildes (1992) for estimating the forecast accuracy across multiple series. | A deficiency of measures based on relative errors is that the forecast error obtained from the benchmark method can be small. In fact, the relative error has infinite variance because the forecast error obtained from the benchmark method has positive probability density at 0. When the errors are small, as they can be with intermittent series, use of the naïve method as a benchmark is no longer possible because it would involve division by zero. |
| Median Relative Absolute Error | MdRAE | | |
| Geometric Mean Relative Absolute Error | GMRAE | | |
| Relative Measures | | | |
| Relative Mean Absolute Error | RelMAE | An advantage of these methods is their interpretability. For example, relative MAE measures the improvement possible from the proposed forecast method relative to the benchmark forecast method. When RelMAE < 1, the proposed method is better than the benchmark method, and when RelMAE > 1, the proposed method is worse than the benchmark method. | These measures require several forecasts on the same series to enable a MAE (or MSE) to be computed. One common situation where it is not possible to use such measures is where one is measuring the out-of-sample forecast accuracy at a single forecast horizon across multiple series. It makes no sense to compute the MAE across series (due to their different scales). |
| Theil’s U statistic (1) | U1 | | |
| Theil’s U statistic (2) | U2 | | |
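Two of the limitations listed in the table above, and the interpretation of RelMAE, can be checked numerically. The sketch below uses hypothetical data and helper names (`mae`, `mape`) and assumes the usual definitions MAE = mean(|Y_t − F_t|) and MAPE = mean(|100 (Y_t − F_t) / Y_t|). It shows that rescaling a series changes the MAE but not the MAPE, and that the MAPE cannot be computed once an actual value is zero.

```python
import math
import statistics


def mae(actual, forecast):
    """Mean absolute error, on the scale of the data."""
    return statistics.fmean([abs(y - f) for y, f in zip(actual, forecast)])


def mape(actual, forecast):
    """Mean absolute percentage error; raises ZeroDivisionError if any Y_t is 0."""
    return statistics.fmean(
        [abs(100.0 * (y - f) / y) for y, f in zip(actual, forecast)]
    )


# Hypothetical series for illustration only.
actual = [100.0, 110.0, 120.0, 130.0]
forecast = [98.0, 113.0, 118.0, 135.0]

# The same series expressed in units that are 1000 times smaller.
scaled_actual = [1000.0 * y for y in actual]
scaled_forecast = [1000.0 * f for f in forecast]

mae_original = mae(actual, forecast)
mae_scaled = mae(scaled_actual, scaled_forecast)      # grows with the scale
mape_original = mape(actual, forecast)
mape_scaled = mape(scaled_actual, scaled_forecast)    # unaffected by the scale

# A single zero in the actual values makes the percentage error undefined.
try:
    mape([0.0, 110.0], [1.0, 113.0])
    mape_defined_with_zero = True
except ZeroDivisionError:
    mape_defined_with_zero = False

# RelMAE against a random-walk benchmark (previous observation as forecast);
# the value 95.0 is a hypothetical observation preceding the sample.
benchmark = [95.0] + actual[:-1]
rel_mae = mae(actual, forecast) / mae(actual, benchmark)

print(mae_original, mae_scaled)
print(mape_original, mape_scaled)
print(mape_defined_with_zero, rel_mae)
```

In this example RelMAE is below 1, which under the rule stated in the table means the proposed forecasts outperform the benchmark on this series.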

| Subject of Research | Title of the Paper | Author(s) | Year of Publication | Empirical Findings |
|---|---|---|---|---|
| Evaluation of economic forecast accuracy | The evaluation of economic forecasts | Mincer, Jacob, and Victor Zarnowitz | 1969 | Forecast accuracy decreases as the length of the predictive span increases. |
| | Accuracy of Forecasting: An Empirical Investigation | Makridakis, Spyros, and Michele Hibon | 1979 | Simpler methods perform well compared to the more complex and statistically sophisticated ARMA models. |
| | Comparing exchange rate forecasting models: Accuracy versus profitability | Boothe, Paul, and Debra Glassman | 1987 | The highest forecast accuracy is achieved by applying simple time-series models such as the random walk. |
| The accuracy of economic forecasts related to GDP, unemployment, and inflation | Forecast smoothing and the optimal underutilization of information at the Federal Reserve | Scotese, Carol A. | 1994 | Tests of the forecasts for real GNP and inflation do not confirm significant biases in either series. |
| | An Evaluation of the Forecasts of the Federal Reserve: A Pooled Approach | Clements, Michael P., Fred Joutz, and Herman O. Stekler | 2007 | There is evidence of systematic bias and of forecast smoothing of the inflation forecasts. |
| | Introduction to “The future of macroeconomic forecasting” | Heilemann, Ullrich, and Herman Stekler | 2007 | Unsuitable forecasting methods and unsuitable expectations regarding the degree of performance are the most important reasons for the lack of accuracy in G7 macroeconomic predictions. |
| | One Model and Various Experts: Evaluating Dutch Macroeconomic Forecasts | Franses, Philip Hans, Henk C. Kranendonk, and Debby Lanser | 2011 | The model forecasts are biased for a range of variables, and expert forecasts are far more accurate than the model forecasts, particularly when the forecast horizon is short. |
| | Strategies to Improve the Accuracy of Macroeconomic Forecasts in United States of America | Bratu, Mihaela | 2012 | The Holt–Winters method offers more accurate forecasts for inflation in the US when the initial expectations are provided by the Survey of Professional Forecasters. |
| Comparing the accuracy of various econometric forecasting models | The Accuracy Assessment of Macroeconomic Forecasts based on Econometric Models for Romania | Simionescu, Mihaela | 2014a | A comparison of AR, VAR, and VARMA models concludes that vector autoregressive moving average (VARMA) models generate the most accurate forecasts. |
| Testing of a tendency to overestimate economic growth | Lessons from OECD Forecasts during and after the Financial Crisis | Lewis, Christine, and Nigel Pain | 2014 | It is confirmed that economic growth is repeatedly overestimated in the projections, which failed to anticipate the extent of the slowdown and, later, the weak pace of the economic recovery. |
| | Evaluating the economic forecasts of FOMC members | Sheng, Xuguang | 2015 | The analysis of the accuracy of the Federal Open Market Committee’s forecasts of real GDP, inflation, and unemployment rates confirmed a tendency to underpredict real GDP and overpredict inflation and unemployment rates. |
| The accuracy of economic forecasts for the exchange rate | Comparing forecast performance of exchange rate models | Lam, Lillie, Laurence Fung, and Ip-wing Yu | 2008 | Exchange rate predictability is explored using different theoretical and empirical models, such as the purchasing power parity, uncovered interest rate parity, and sticky-price monetary models, models based on the Bayesian model averaging technique, and a combination of these. The forecast based on combined models is more accurate than the forecast that uses only one model. |
| | Measuring forecast performance of ARMA & ARFIMA models: An application to US Dollar/UK pound foreign exchange rate | Shittu, Olanrewaju, I., and OlaOluwa S. Yaya | 2009 | Analyzing the forecast accuracy of ARIMA and ARFIMA models using the example of the US dollar/UK pound foreign exchange rate, the authors conclude that the forecast values estimated from the ARFIMA model are more realistic and more closely reflect the current economic reality. |
| The effects of business cycles on the accuracy of economic forecasts | How Accurate are Private Sector Forecasts? Cross-country Evidence from Consensus Forecasts of Output Growth | Loungani, Prakash | 2001 | Forecasts for recessions are subject to a large systematic forecast error. |
| | Can the Fed Predict the State of the Economy? | Sinclair, Tara M., Herman O. Stekler, and Fred Joutz | 2010 | The Federal Reserve’s Greenbook projections overestimate the annual rate of change in real GDP in periods of recession and underestimate it in periods of economic growth. |
| | Systematic Errors in Growth Expectations over the Business Cycle | Dovern, Jonas, and Nils Jannsen | 2017 | Forecasts for recessions are subject to a large negative systematic forecast error, while forecasts for recoveries are subject to a positive systematic forecast error. |
| | How well do economists forecast recessions? | An, Zidong, Joao Tovar Jalles, and Prakash Loungani | 2018 | Forecasts are revised much more quickly in periods of recession than in non-recession periods, but not rapidly enough to be able to avoid large forecast errors. |
| Comparison in forecast accuracy among advanced and emerging economies | Information rigidities: Comparing average and individual forecasts for a large international panel | Dovern, Jonas, Ulrich Fritsche, Prakash Loungani, and Natalia Tamirisa | 2015 | There are significant discrepancies in forecast performance among advanced and emerging economies, particularly in terms of forecast accuracy. |
| How to improve the predictability of the oil-US stock nexus | Improving the predictability of the oil–US stock nexus: The role of macroeconomic variables | Salisu, Afees A., Raymond Swaray, and Tirimisiyu F. Oloko | 2019 | ‘It is important to pre-test the predictors for persistence, endogeneity, and conditional heteroscedasticity, particularly when modeling with high-frequency series’. |
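Several findings in the table above concern systematic over- or underprediction. One simple way to read the direction of such a bias is the sign of the mean forecast error. The sketch below assumes the error convention e_t = Y_t − F_t (observation minus forecast), so a negative mean error indicates that forecasts were too high on average. The growth figures are hypothetical and chosen only to illustrate the sign logic; they are not taken from any of the cited studies, which apply formal statistical tests.

```python
import statistics


def mean_error(actual, forecast):
    """Mean forecast error under the convention e_t = Y_t - F_t."""
    return statistics.fmean([y - f for y, f in zip(actual, forecast)])


def bias_direction(me):
    """Translate the sign of the mean error into a plain-language label."""
    if me < 0:
        return "overprediction"    # forecasts above outcomes on average
    if me > 0:
        return "underprediction"   # forecasts below outcomes on average
    return "no average bias"


# Hypothetical annual growth rates in percent, for illustration only.
actual_growth = [2.0, 1.5, -0.5, 1.0]
forecast_growth = [2.5, 2.0, 1.0, 1.5]

me = mean_error(actual_growth, forecast_growth)
direction = bias_direction(me)

print(me, direction)
```

A nonzero mean error in a small sample is only suggestive; whether a bias is statistically significant requires a formal test.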

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Buturac, G. Measurement of Economic Forecast Accuracy: A Systematic Overview of the Empirical Literature. J. Risk Financial Manag. 2022, 15, 1. https://doi.org/10.3390/jrfm15010001
