Deep Reinforcement Learning in Agent Based Financial Market Simulation

Abstract

1. Introduction

- Proposal and verification of a deep reinforcement learning framework that learns meaningful trading strategies in agent-based artificial market simulations.

- Effective engineering of deep reinforcement learning networks, market features, action space, and reward function.

2. Related Work

2.1. Stock Trading Strategies

2.2. Deep Reinforcement Learning

2.3. Financial Market Simulation

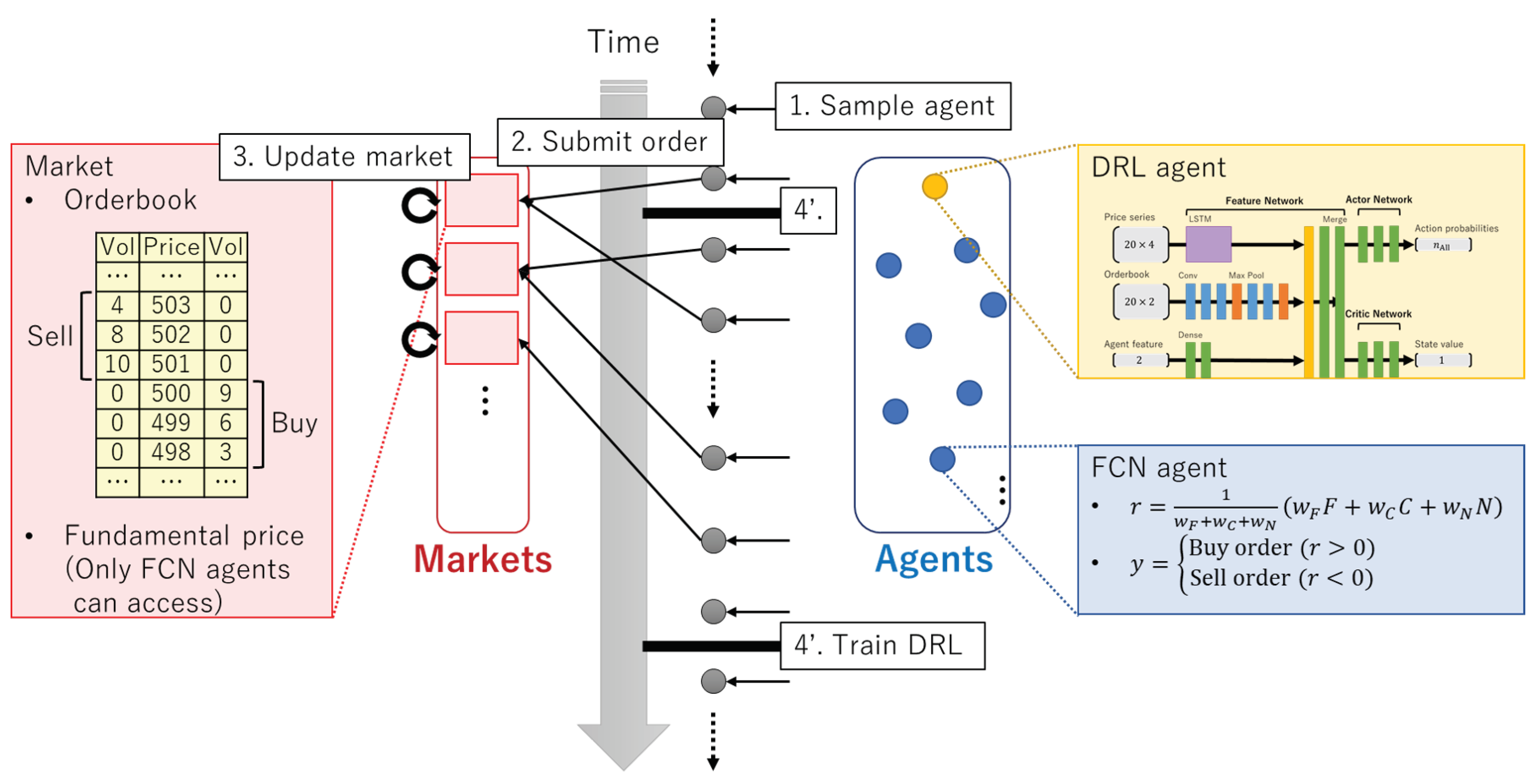

3. Simulation Framework Overview

4. Simulator Description

4.1. Markets

- Limit order (LMT)

- Market order (MKT)

- Cancel order (CXL)

- Tick size

- Initial fundamental price

- Fundamental volatility

4.2. Agents

- Deep Reinforcement Learning (DRL) agent

- Fundamental-Chart-Noise (FCN) agent

4.3. Simulation Progress

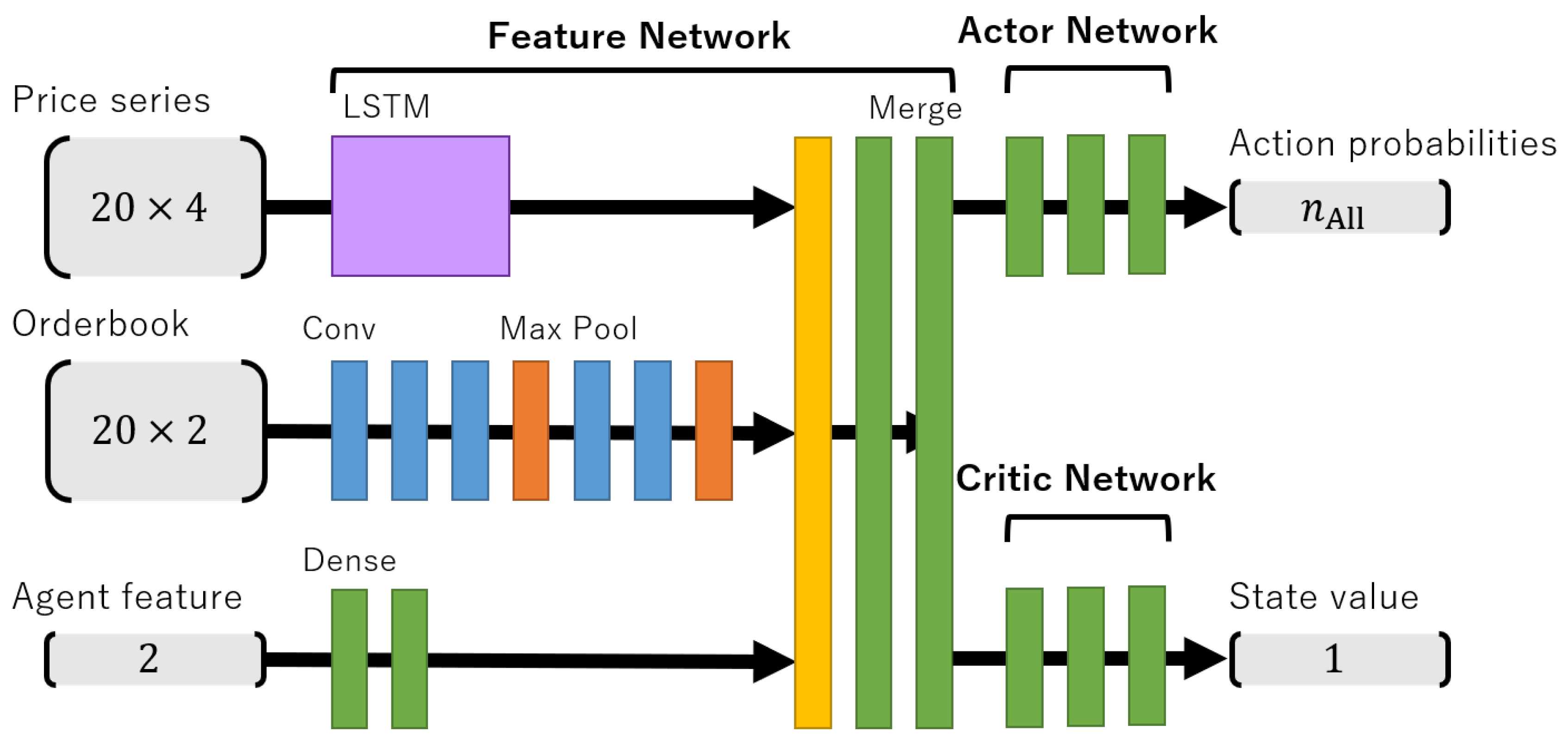

5. Model Description

5.1. Deep Reinforcement Learning Model

5.2. Feature Engineering

5.3. Actor Network

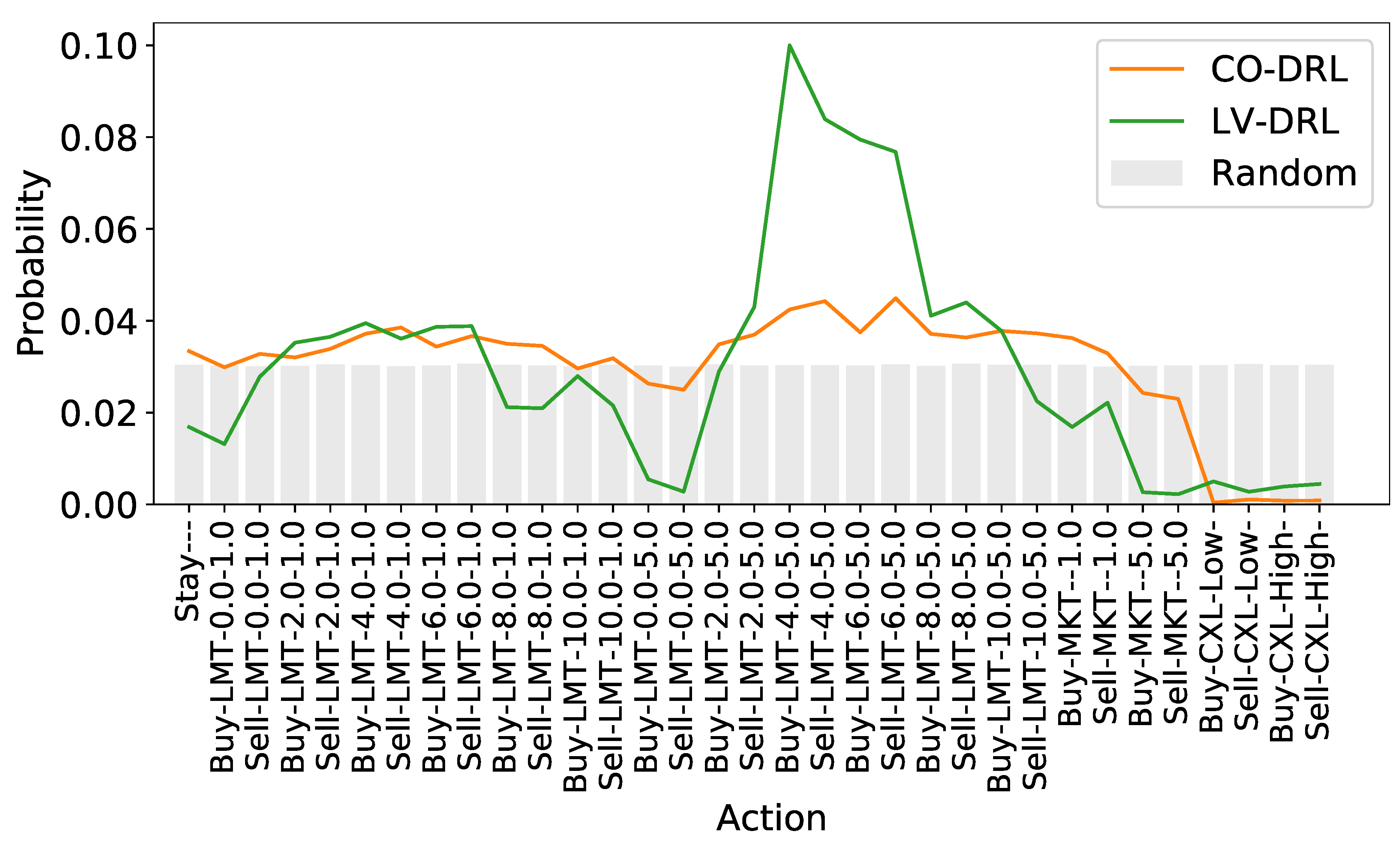

- Side: [Buy, Sell, Stay]

- Market: [0, 1, ...]

- Type: [LMT, MKT, CXL]

- Price: [0, 2, 4, 6, 8, 10]

- Volume: [1, 5]
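The five components listed above can be read as a factored, discrete action space. The following is a minimal sketch, assuming every combination counts as a distinct action and that the number of markets is a free parameter. The names `enumerate_actions` and `num_markets` are hypothetical and do not come from the paper.

```python
from itertools import product

# Component values copied from the list above. The market indices are left
# open there ("0, 1, ..."), so num_markets is a hypothetical parameter.
SIDES = ("Buy", "Sell", "Stay")
ORDER_TYPES = ("LMT", "MKT", "CXL")
PRICES = (0, 2, 4, 6, 8, 10)
VOLUMES = (1, 5)


def enumerate_actions(num_markets):
    """Return every (side, market, type, price, volume) combination."""
    markets = range(num_markets)
    return list(product(SIDES, markets, ORDER_TYPES, PRICES, VOLUMES))


actions = enumerate_actions(num_markets=1)
print(len(actions))  # 3 sides * 1 market * 3 types * 6 prices * 2 volumes = 108
```

The full Cartesian product is only an upper bound. Some combinations, such as a Stay action paired with a price, are probably redundant, and an actual implementation would likely prune them.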

5.4. Reward Calculation

5.5. Network Architecture

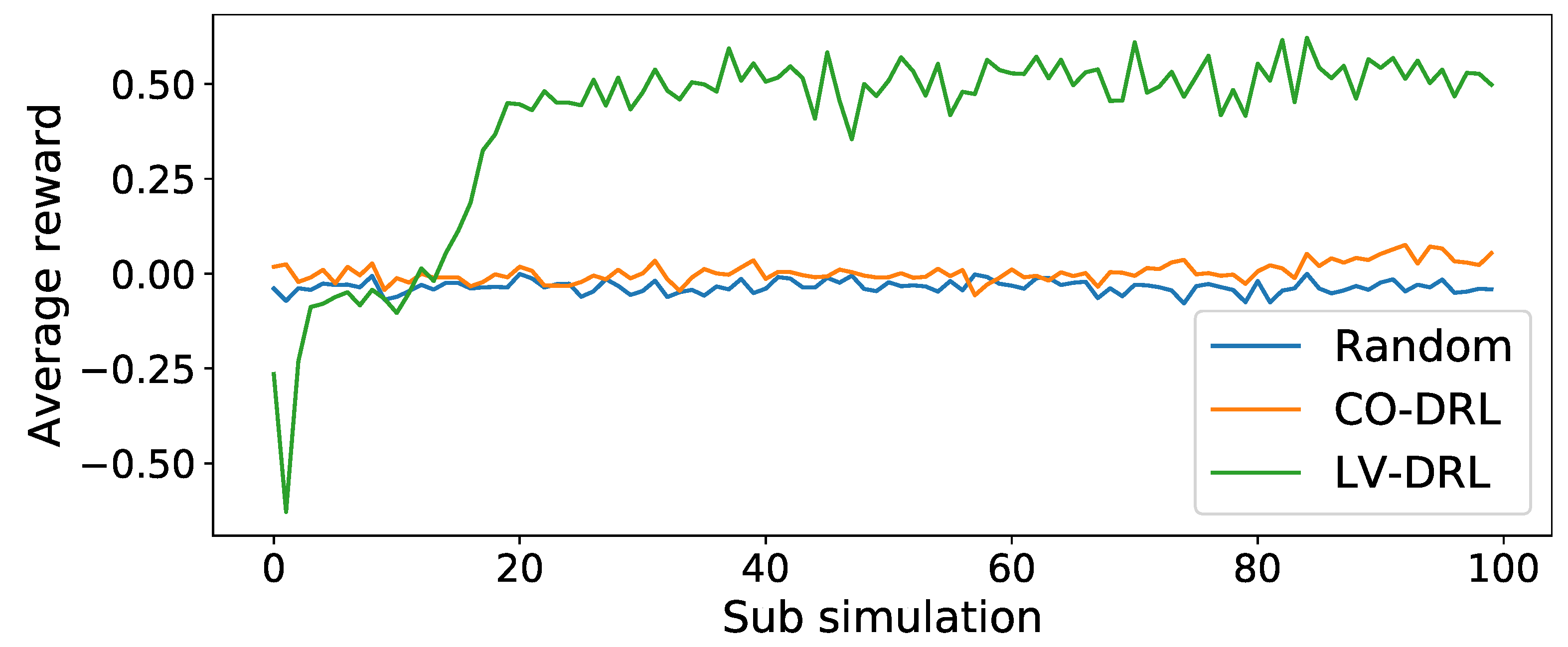

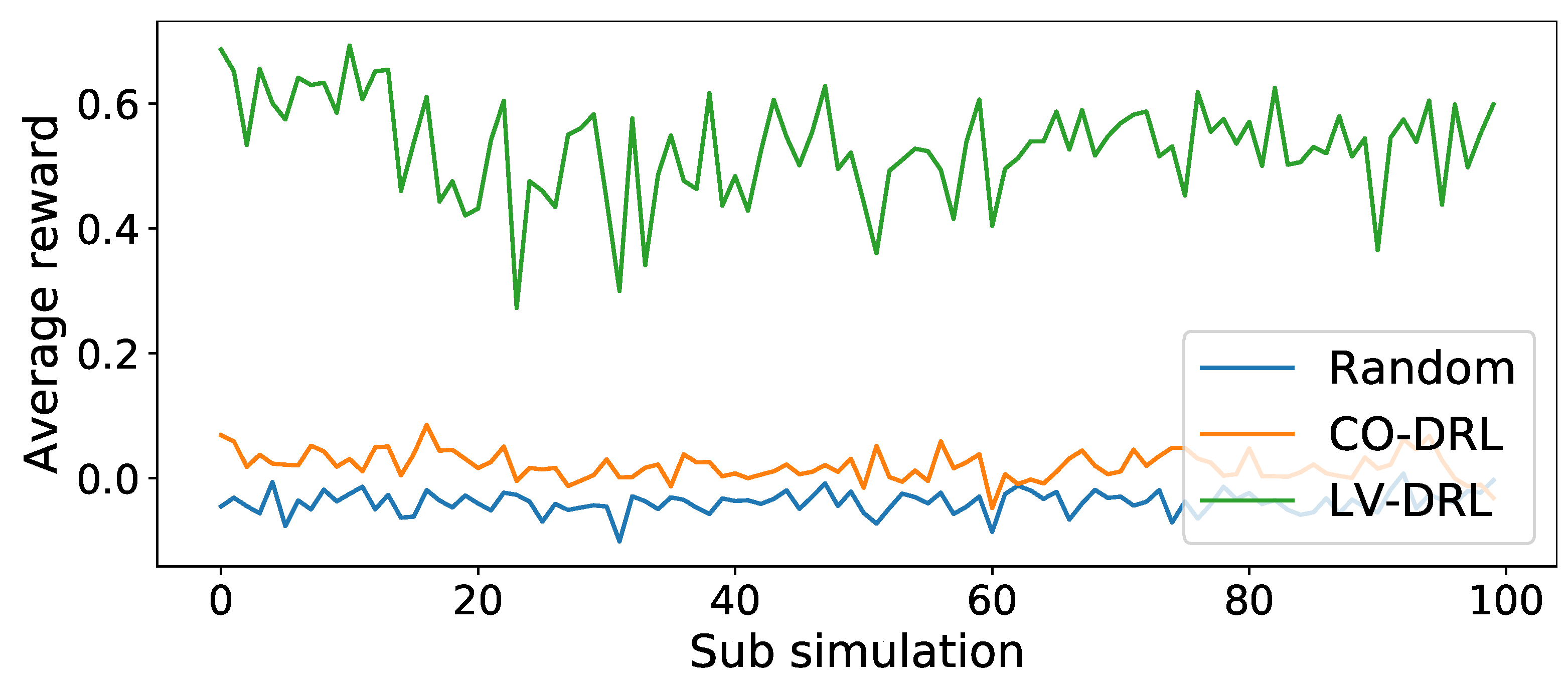

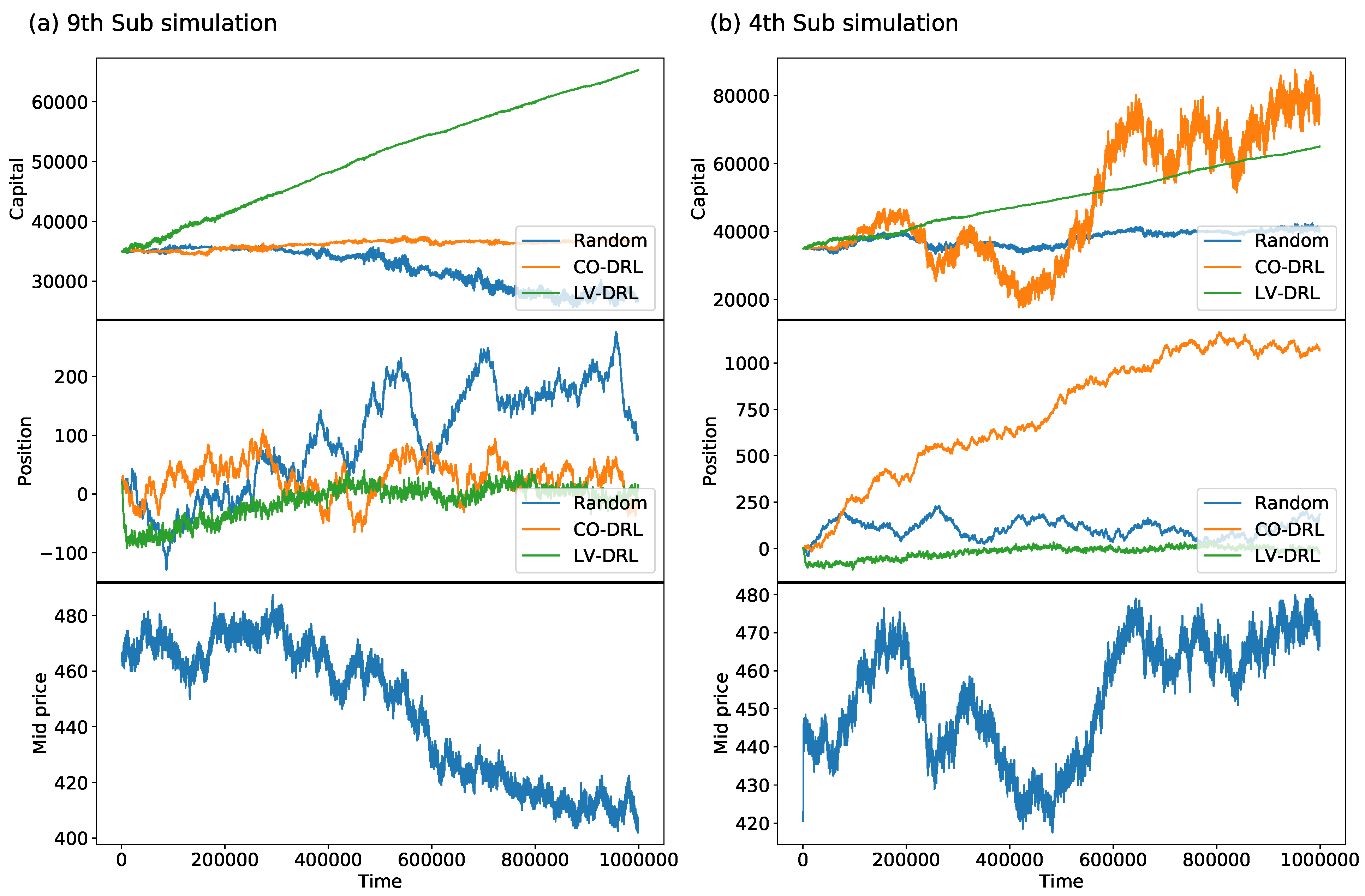

6. Experiments

- Whether the model can learn trading strategies on the simulator

- Whether the learned strategies are valid

- Average reward

- Sharpe ratio

- Maximum drawdown
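The three evaluation metrics above can be computed from a sequence of per-step rewards. The sketch below is illustrative only: it assumes a zero risk-free rate, a population standard deviation, no annualization, and a drawdown measured in absolute terms on the cumulative reward curve. The exact definitions used in the experiments are not given in this outline and may differ.

```python
from itertools import accumulate
from statistics import mean, pstdev


def average_reward(rewards):
    """Arithmetic mean of the per-step rewards."""
    return mean(rewards)


def sharpe_ratio(rewards, risk_free=0.0):
    """Mean excess reward divided by its standard deviation.

    Assumption: population standard deviation, no annualization.
    """
    excess = [r - risk_free for r in rewards]
    spread = pstdev(excess)
    if spread == 0:
        return float("nan")  # undefined for a constant series
    return mean(excess) / spread


def max_drawdown(rewards):
    """Largest peak-to-trough drop of the cumulative reward curve.

    Assumption: the curve starts at zero and the drop is absolute.
    """
    peak = 0.0
    worst = 0.0
    for equity in accumulate(rewards):
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst


episode = [1, -2, 3, -1]  # hypothetical rewards, not data from the paper
print(average_reward(episode), sharpe_ratio(episode), max_drawdown(episode))
```

A relative drawdown (drop divided by the running peak) is an equally common convention. It is avoided here because the cumulative reward can be zero or negative.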

6.1. Simulator Settings

- Tick size

- Initial fundamental price

- Fundamental volatility

- Number of agents

- Scale of exponential distribution for sampling fundamental weights

- Scale of exponential distribution for sampling chart weights

- Scale of exponential distribution for sampling noise weights

- Time window size

- Order margin

- Initial inventory of DRL agent

- Initial cash amount of DRL agent
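Three of the settings above are scales of exponential distributions from which the fundamental, chart, and noise weights are sampled. A minimal sketch of that sampling step follows, assuming one independent draw per weight per agent. The function name, the dictionary keys, and the scale values are hypothetical placeholders, not the paper's settings.

```python
import random


def sample_fcn_weights(num_agents, fundamental_scale, chart_scale,
                       noise_scale, seed=None):
    """Draw one (fundamental, chart, noise) weight triple per agent.

    random.expovariate takes a rate, so each scale is inverted.
    """
    rng = random.Random(seed)
    return [
        {
            "fundamental": rng.expovariate(1.0 / fundamental_scale),
            "chart": rng.expovariate(1.0 / chart_scale),
            "noise": rng.expovariate(1.0 / noise_scale),
        }
        for _ in range(num_agents)
    ]


# Placeholder values chosen only to make the example run.
weights = sample_fcn_weights(num_agents=3, fundamental_scale=1.0,
                             chart_scale=0.5, noise_scale=2.0, seed=0)
for agent_weights in weights:
    print(agent_weights)
```

Passing a seed keeps the sampled population reproducible across simulation runs, which helps when comparing training and validation results.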

6.2. Results

7. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Model | Training Simulation | | | Validation Simulation | | |
|---|---|---|---|---|---|---|
| Random | | | | | | |
| CO-DRL | | | | | | |
| LV-DRL | | | | | | |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Maeda, I.; deGraw, D.; Kitano, M.; Matsushima, H.; Sakaji, H.; Izumi, K.; Kato, A. Deep Reinforcement Learning in Agent Based Financial Market Simulation. J. Risk Financial Manag. 2020, 13, 71. https://doi.org/10.3390/jrfm13040071
