Learning Designers as Expert Evaluators of Usability: Understanding Their Potential Contribution to Improving the Universality of Interface Design for Health Resources

Abstract

1. Introduction

1.1. Expert and Composite Usability Methods as Alternatives to User-Based Evaluations

1.2. Heuristic Evaluations, Cognitive Walkthroughs, Compared to Expert Review

1.3. Expert UEM versus Gold Standard ‘Usability Testing’ Method

1.4. Learning Designers as Double Composite Experts for Usability Evaluations

2. Materials and Methods

2.1. Study Description

2.2. Prototype Development and Overall Evaluation Approach

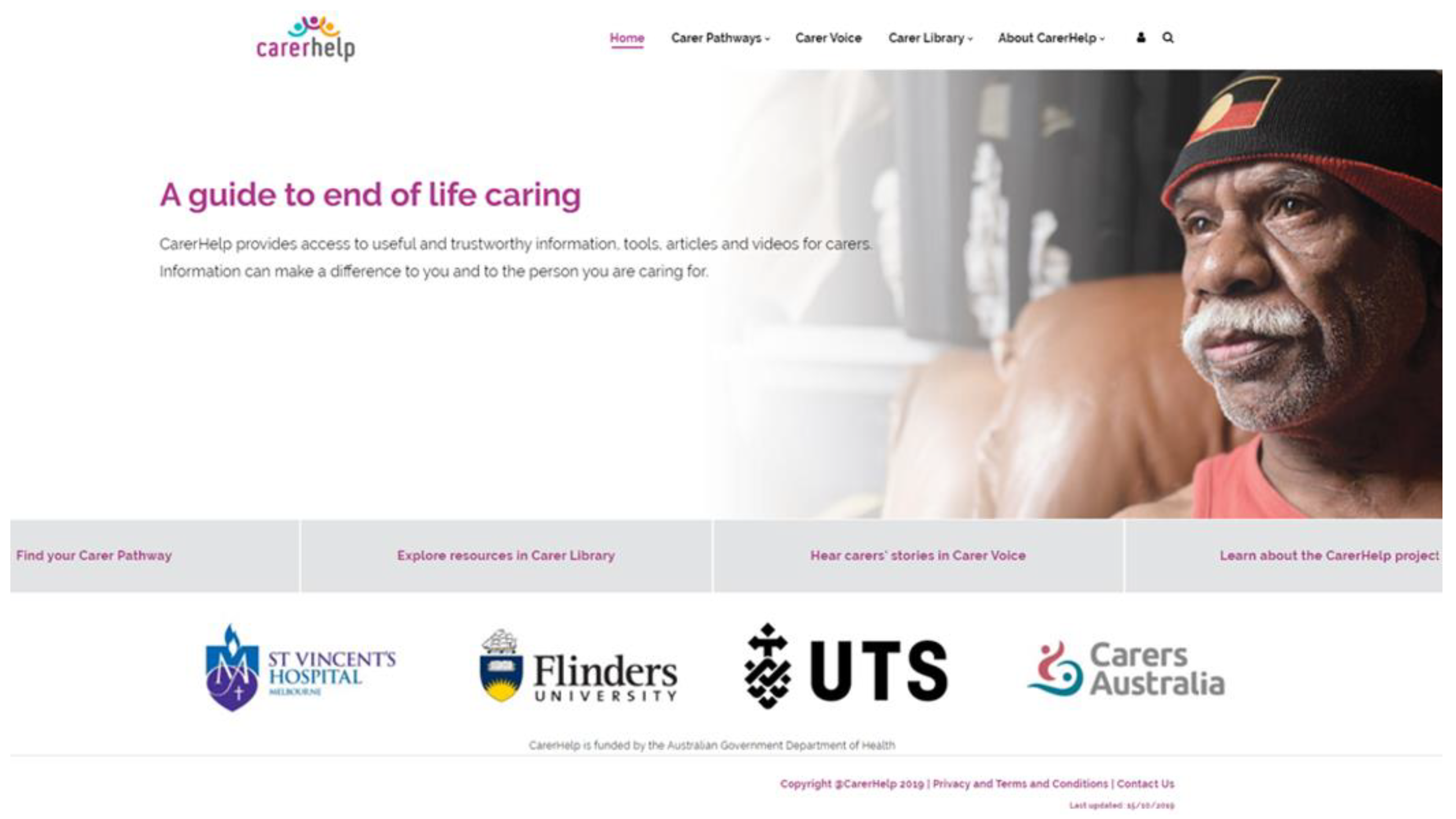

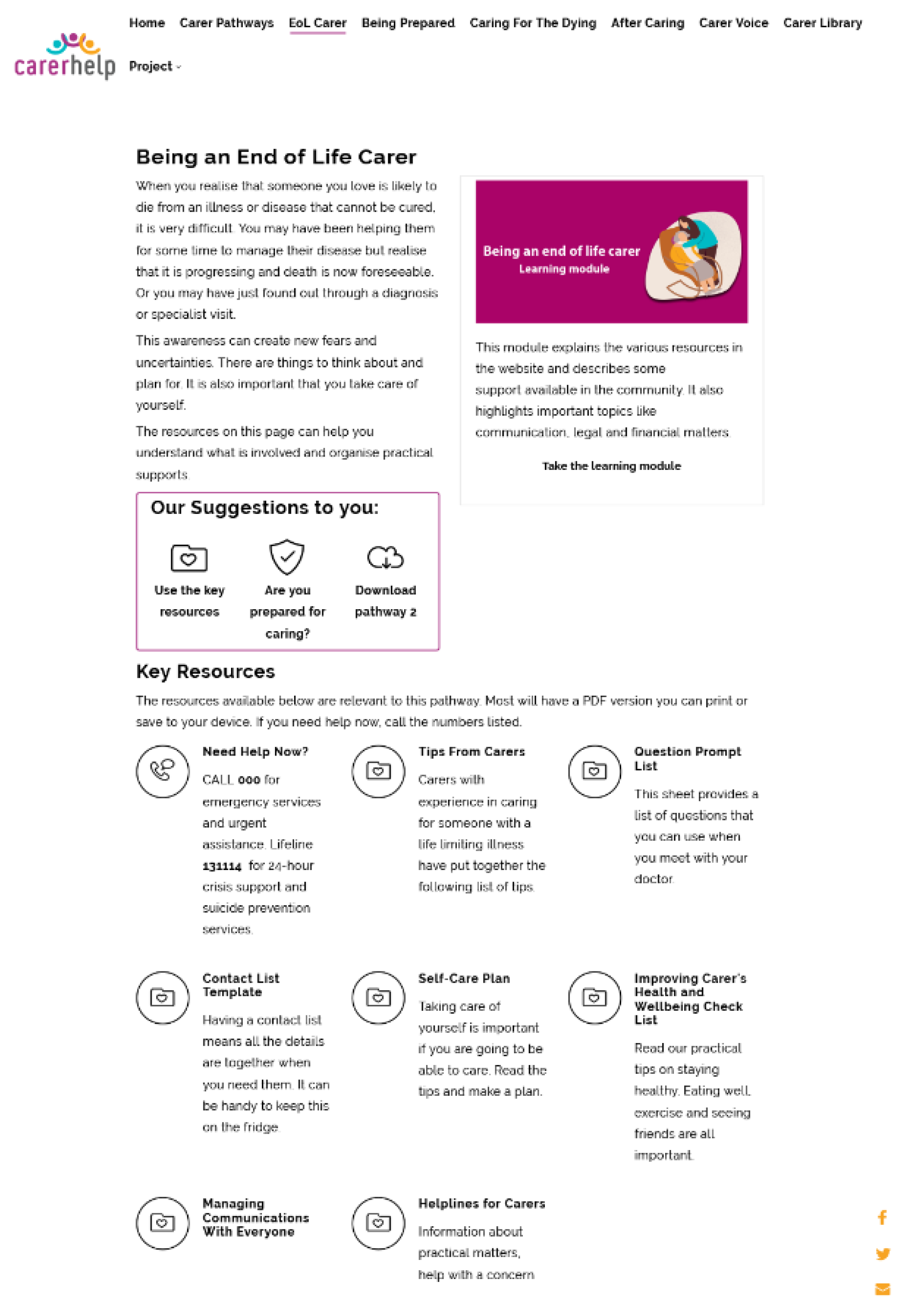

2.3. The Palliative Care Website Prototype

2.4. Expert Review Methodology

2.4.1. Evaluator Group—Palliative Care Healthcare Professionals

2.4.2. Evaluator Group—Digital Experts: Learning Designers (LDs)

2.5. Expert Review Protocol

- An avoider of everything online

- A novice or learner or beginner

- Mostly confident—having intermediate skills

- An expert who is confident in finding and using online information

2.6. User-Based Evaluation Methodology

Usability Testing Participants

2.7. Usability Testing Session Protocol

2.8. Data Analysis

2.8.1. Expert Evaluator Feedback

2.8.2. Meta-Aggregation of Usability Errors across Review Groups

- Content-specific

- Design or content construction

- Information flow

- Navigation

- Embedded resource or activity

- Pedagogy or educational strategies

- Minor typographical or grammatical errors

- Major typographical or grammatical issues

- Frequency with which the error occurs within the interface

- Impact of the error, if it occurs, and how difficult it is for users to overcome

- Persistence of the error: whether it recurs within the interface and continuously affects evaluator interactions
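The error categories and severity factors above can be captured in a small record type so that errors reported by different reviewer groups can be tallied side by side. The Python sketch below is a hypothetical illustration, not the authors' analysis tooling. `ErrorCategory`, `CodedError` and `tally` are invented names, and the 1–3 scale used for each severity factor is an assumption borrowed from the High (1) to Low (3) bands reported in Appendix A.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class ErrorCategory(Enum):
    # Categories from the coding list above; member names are illustrative.
    CONTENT_SPECIFIC = "Content-specific"
    DESIGN_OR_CONSTRUCTION = "Design or content construction"
    INFORMATION_FLOW = "Information flow"
    NAVIGATION = "Navigation"
    EMBEDDED_RESOURCE = "Embedded resource or activity"
    PEDAGOGY = "Pedagogy or educational strategies"
    MINOR_TYPO = "Minor typographical or grammatical errors"
    MAJOR_TYPO = "Major typographical or grammatical issues"


@dataclass(frozen=True)
class CodedError:
    """One reported usability error (hypothetical schema, not the authors' tool)."""
    reviewer_group: str  # e.g. "HCP", "LD" or "USE"
    category: ErrorCategory
    # The three severity factors, each on an ASSUMED 1-3 scale
    # (1 = most severe, mirroring the High (1) ... Low (3) bands in Appendix A).
    frequency: int
    impact: int
    persistence: int

    def __post_init__(self):
        # Reject factor scores outside the assumed 1-3 scale.
        for factor in ("frequency", "impact", "persistence"):
            if getattr(self, factor) not in (1, 2, 3):
                raise ValueError(f"{factor} must be 1, 2 or 3")


def tally(errors):
    """Count coded errors for each (reviewer group, category) pair."""
    return Counter((e.reviewer_group, e.category) for e in errors)


# Usage with three made-up errors.
errors = [
    CodedError("LD", ErrorCategory.NAVIGATION, frequency=1, impact=2, persistence=1),
    CodedError("LD", ErrorCategory.NAVIGATION, frequency=2, impact=2, persistence=3),
    CodedError("HCP", ErrorCategory.CONTENT_SPECIFIC, frequency=3, impact=2, persistence=3),
]
counts = tally(errors)
print(counts[("LD", ErrorCategory.NAVIGATION)])  # 2
```

Keeping the three factors as separate fields, rather than storing only a combined rating, leaves open the choice of how they are merged into a single severity band.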

3. Results

3.1. Demographics of Expert Reviewers

3.1.1. HCP Demographics

3.1.2. LD Demographics

3.2. RSQ1: Comparative Analysis of Usability Error Data from Expert Evaluators

3.2.1. Analysis of Frequency and Usability Error Types Detected by Expert Reviewers (LD versus HCP)

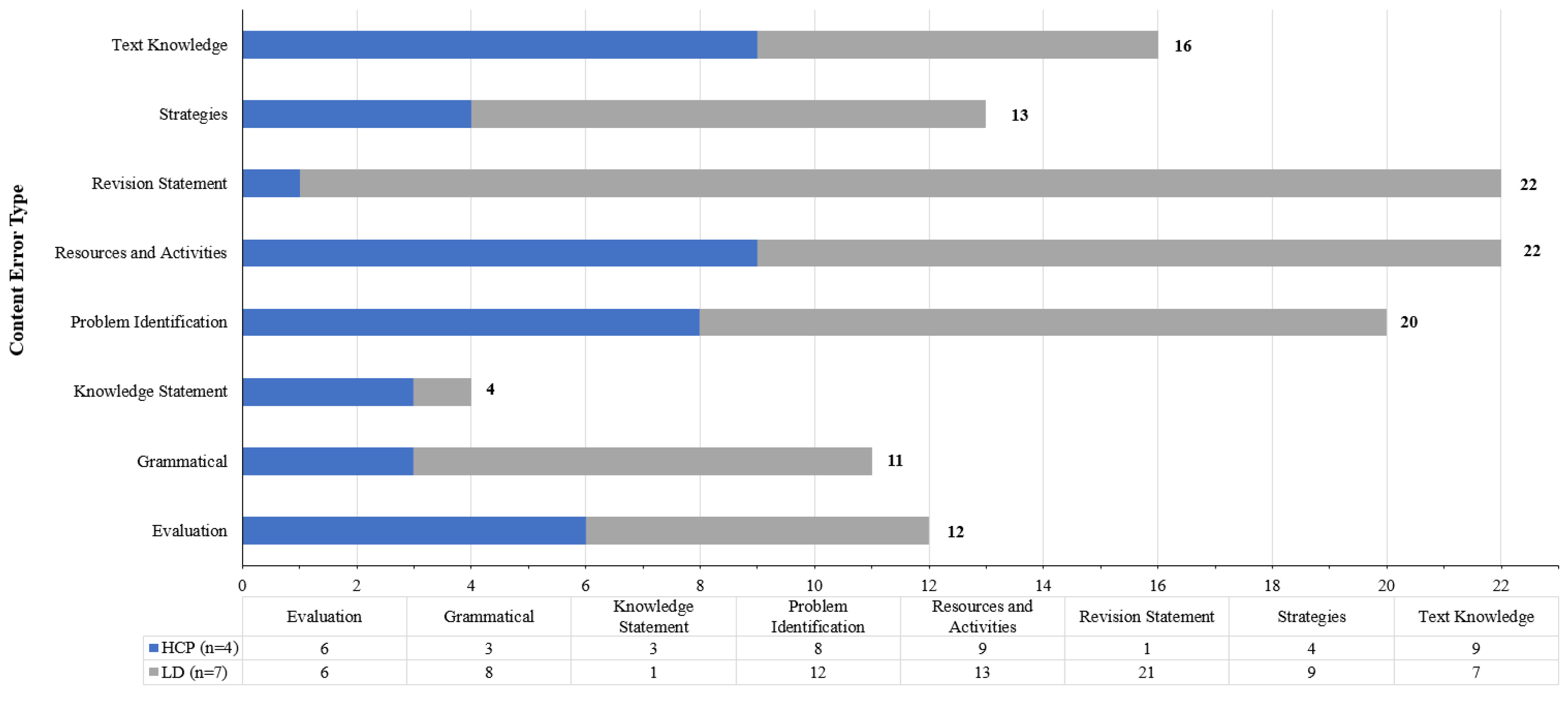

3.2.2. Comparison of Content-Specific Errors Identified by HCP and LD Reviewers

“Need to make sure that this toolkit provides information for carers on how to improve and sustain person’s quality of life when at home” (HCP Reviewer)

“Caring for someone who is dying is the end of a journey of caring …—could be something like: Caring for someone dying also means that your role of carer will come to an end after the person has died. These resources help you to be prepared for dealing with the end-of-life care” (LD Reviewer)

“I think language is okay, but there are just too many words” (HCP Reviewer)

“…appropriate to also insert a link here to take the users back to the first page, rather than telling them to go to and use the menu (where is that?) to get back to the main page.” (LD Reviewer)

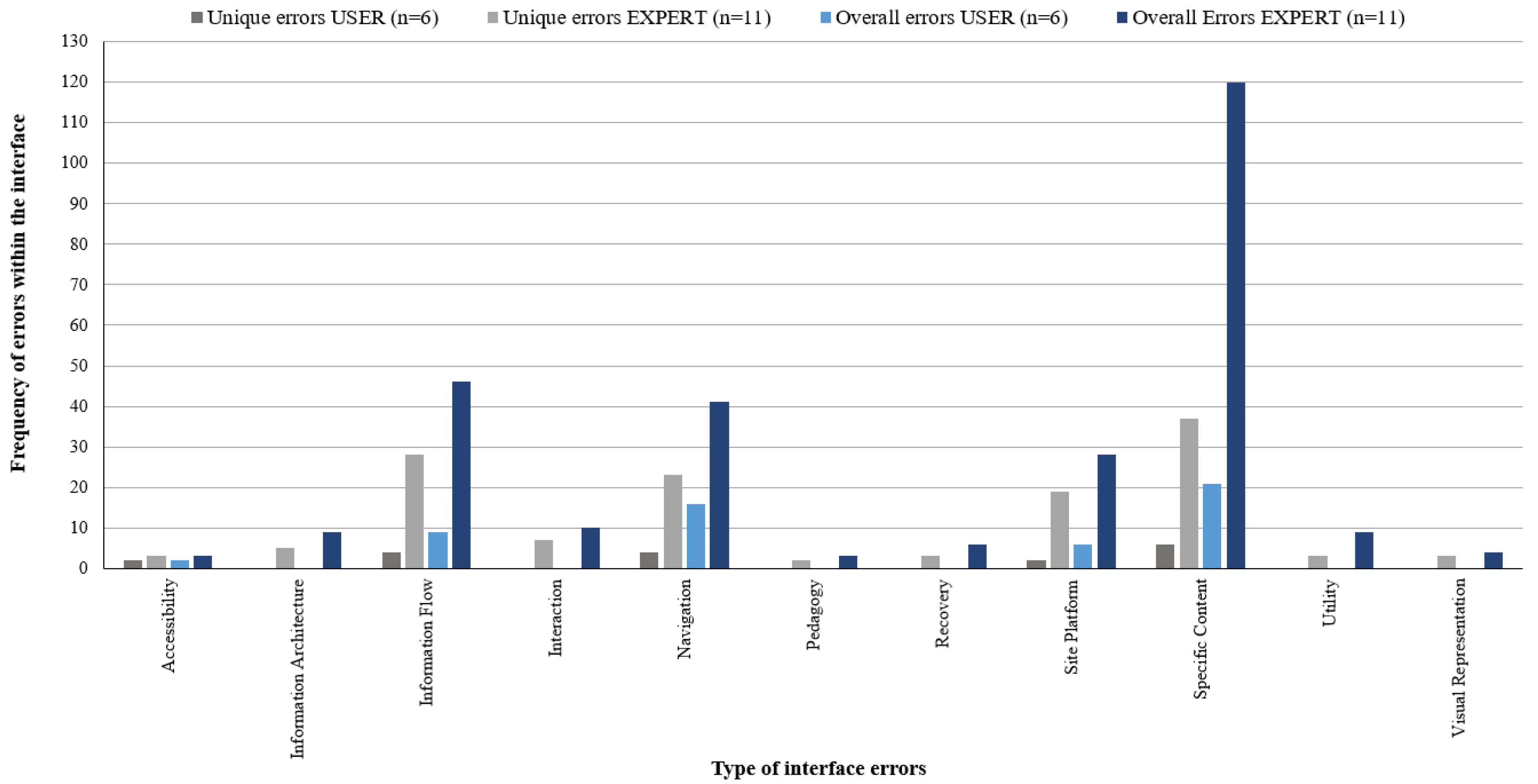

3.3. RSQ2: The Acuity of Expert Usability Error Data Was Compared to End-User Usability Error Data Generated from Formal Testing of the Prototype Undertaken with Primary Carers as Representatives from the End-User Group

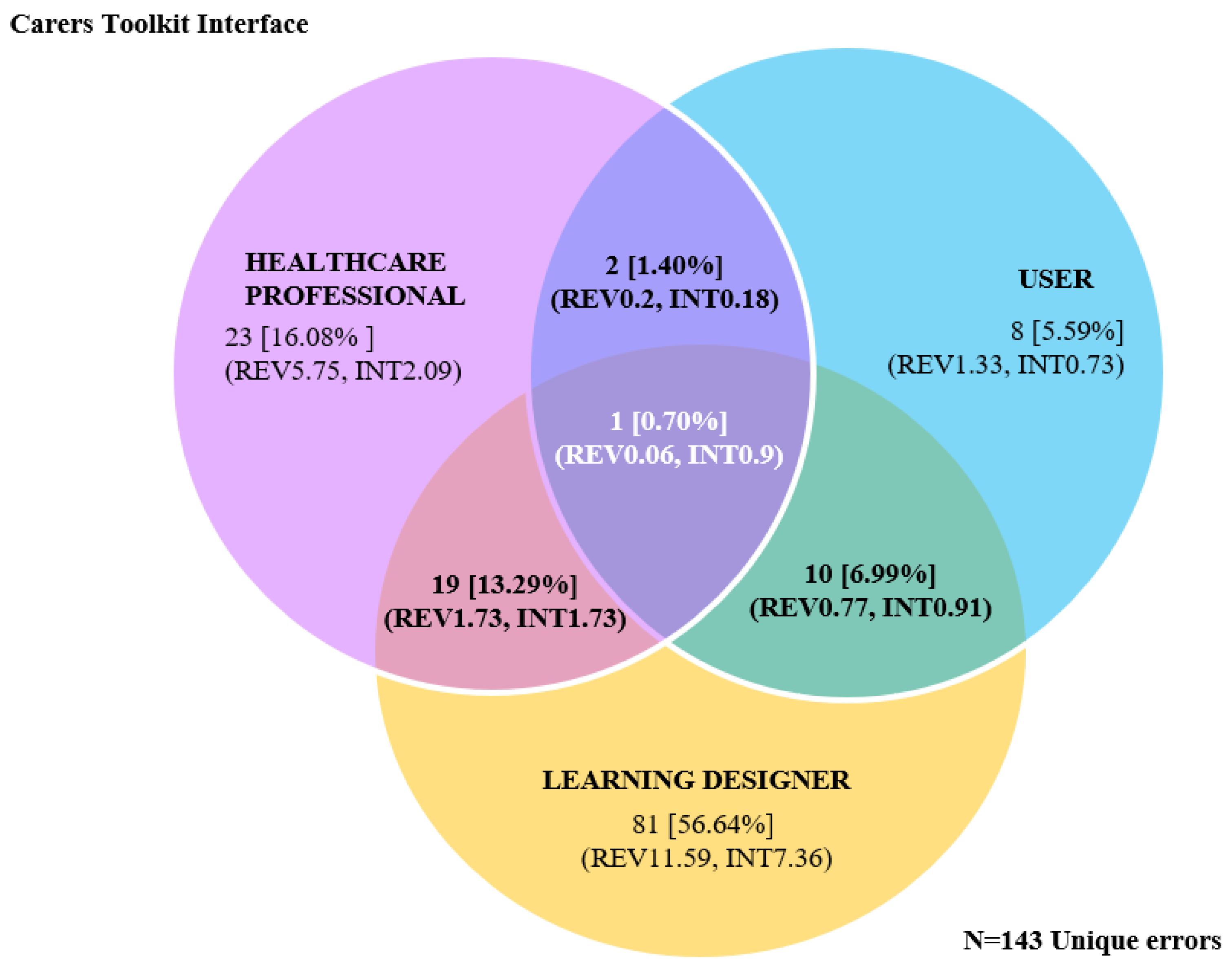

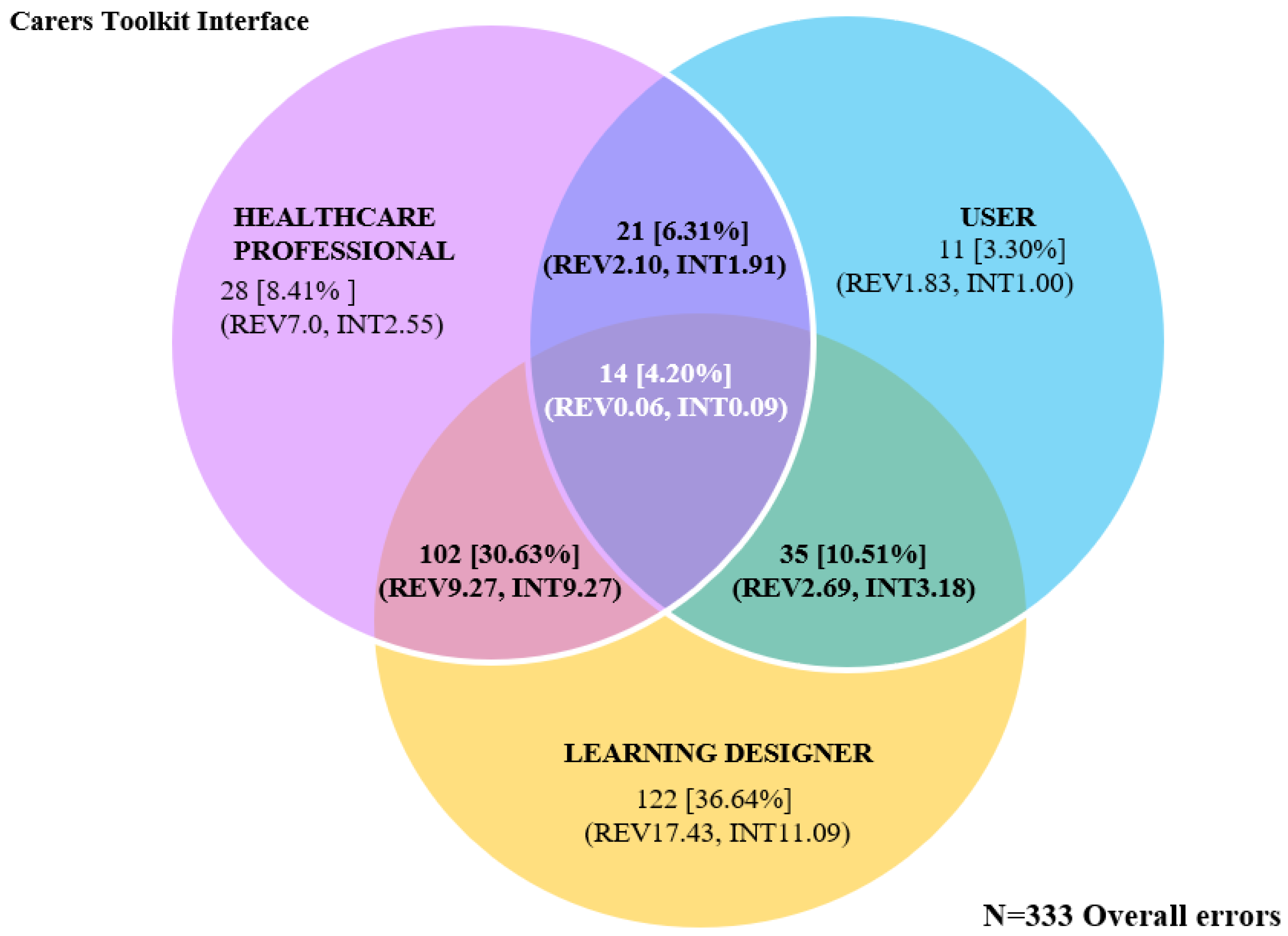

Evaluator Group Comparisons between LDs, HCPs, and USERs (Carers)

4. Discussion

4.1. Ease of Recruitment of Experts and End Users

4.2. The Types of Feedback Provided

4.3. Error Identification

4.4. Potential Role of Learning Designers in Usability Evaluations

4.5. Can Expert Evaluators Replace End Users in the Development of Digital Health Resources?

4.6. Study Limitations

4.7. Future Research

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Data Tables

| Measure | Category | HCP Exp. (Years) 6–10 | HCP Exp. (Years) 11–15 | HCP Exp. (Years) 16–20 | HCP S-R TA Int | HCP S-R TA Exp | HCP Total (%) | LD Exp. (Years) 1–5 | LD Exp. (Years) 11–15 | LD Exp. (Years) 16–20 | LD Exp. (Years) >21 | LD S-R TA Expert | LD Total (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Reviewers (n) | | n = 2 | n = 1 | n = 1 | n = 1 | n = 3 | 77 | n = 2 | n = 3 | n = 1 | n = 1 | n = 7 | 202 |
| Errors identified (% total) | | 44 (57.1) | 17 (22.1) | 16 (20.8) | 16 (20.8) | 61 (79.2) | | 54 (26.7) | 44 (14.7) | 71 (35.2) | 33 (16.3) | 202 (100) | |
| Ave. error/user | | 22 | 17 | 16 | 16 | 20.3 | | 27 | 22 | 71 | 33 | 28.86 | |
| Type of error | Accessibility | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 2 | 3 | 3 (1.5) |
| | Information architecture | 3 | 0 | 1 | 1 | 3 | 4 (5.2) | 1 | 2 | 0 | 2 | 5 | 5 (2.5) |
| | Information flow | 6 | 1 | 2 | 2 | 7 | 9 (11.7) | 15 | 9 | 7 | 6 | 37 | 37 (18.3) |
| | Interaction | 0 | 0 | 1 | 1 | 0 | 1 (1.3) | 2 | 2 | 5 | 0 | 9 | 9 (4.5) |
| | Navigation | 8 | 3 | 1 | 1 | 11 | 12 (15.6) | 7 | 8 | 12 | 2 | 29 | 29 (14.4) |
| | Pedagogy | 2 | 0 | 0 | 0 | 2 | 2 (2.6) | 0 | 0 | 1 | 0 | 1 | 1 (0.5) |
| | Recovery | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 4 | 6 | 6 (3.0) |
| | Site platform | 1 | 2 | 0 | 0 | 3 | 3 (3.9) | 8 | 6 | 10 | 1 | 25 | 25 (12.4) |
| | Specific content | 22 | 11 | 10 | 10 | 33 | 43 (55.9) | 18 | 16 | 28 | 15 | 77 | 77 (38.1) |
| | Utility | 2 | 0 | 1 | 1 | 2 | 3 (3.9) | 2 | 0 | 4 | 0 | 6 | 6 (3.0) |
| | Visual representation | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 1 | 1 | 1 | 4 | 4 (2.0) |
| Nielsen’s severity rating | High (1) | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 5 | 5 | 2 | 14 | 14 (6.93) |
| | High-Med (1–2) | 4 | 1 | 1 | 1 | 5 | 6 (7.8) | 0 | 1 | 0 | 2 | 3 | 3 (1.49) |
| | Medium (2) | 15 | 2 | 3 | 3 | 17 | 20 (26.0) | 14 | 18 | 24 | 12 | 68 | 68 (33.7) |
| | Med-Low (2–3) | 9 | 6 | 7 | 7 | 15 | 22 (28.6) | 3 | 6 | 10 | 2 | 21 | 21 (10.4) |
| | Low (3) | 16 | 8 | 5 | 5 | 24 | 29 (37.7) | 35 | 14 | 32 | 15 | 96 | 96 (47.52) |
| Area of toolkit | Site | 4 | 0 | 1 | 1 | 4 | 5 (6.5) | 2 | 2 | 6 | 3 | 13 | 13 (6.4) |
| | Menu | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 2 | 0 | 4 | 4 (2.0) |
| | Home | 6 | 1 | 3 | 3 | 7 | 10 (13.0) | 7 | 8 | 11 | 7 | 33 | 33 (16.3) |
| | Carer Pathway | 6 | 5 | 2 | 2 | 11 | 13 (16.9) | 12 | 7 | 8 | 5 | 32 | 32 (15.8) |
| | Being Prepared | 3 | 3 | 0 | 0 | 6 | 6 (7.8) | 2 | 1 | 4 | 3 | 10 | 10 (5.0) |
| | Being a Carer | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 1 | 1 (0.5) |
| | Being EoL Carer | 3 | 0 | 2 | 2 | 3 | 5 (6.5) | 5 | 4 | 7 | 2 | 18 | 18 (8.9) |
| | Caring for Dying | 1 | 0 | 3 | 3 | 1 | 4 (5.2) | 4 | 1 | 4 | 3 | 12 | 12 (5.9) |
| | Learning Module | 8 | 3 | 2 | 2 | 11 | 13 (16.9) | 7 | 10 | 17 | 2 | 36 | 36 (17.8) |
| | After Caring | 4 | 3 | 0 | 0 | 7 | 7 (9.1) | 3 | 1 | 2 | 1 | 7 | 7 (3.5) |
| | Carer Library | 6 | 0 | 3 | 3 | 6 | 9 (11.7) | 1 | 6 | 5 | 4 | 16 | 16 (7.9) |
| | Carer Voice | 1 | 2 | 0 | 0 | 3 | 3 (3.9) | 6 | 4 | 5 | 2 | 17 | 17 (8.4) |
| | About Project | 2 | 0 | 0 | 0 | 2 | 2 (2.6) | 2 | 0 | 0 | 1 | 3 | 3 (1.5) |
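Each percentage in parentheses in the table above is a cell count divided by the group's total errors (77 for HCPs, 202 for LDs). The sketch below recomputes the severity-band shares from the two Total columns. The dictionary layout and the `percent_shares` name are illustrative only; band labels use ASCII hyphens for convenience, and `ndigits` is a parameter because the table mixes one- and two-decimal rounding.

```python
# Severity-band counts transcribed from the "Nielsen's severity rating" rows
# of the table above (HCP Total and LD Total columns).
SEVERITY_COUNTS = {
    "HCP": {"High (1)": 0, "High-Med (1-2)": 6, "Medium (2)": 20,
            "Med-Low (2-3)": 22, "Low (3)": 29},
    "LD": {"High (1)": 14, "High-Med (1-2)": 3, "Medium (2)": 68,
           "Med-Low (2-3)": 21, "Low (3)": 96},
}


def percent_shares(counts, ndigits=1):
    """Return each band's share of the group's total errors, in percent."""
    total = sum(counts.values())
    return {band: round(100 * n / total, ndigits) for band, n in counts.items()}


hcp = percent_shares(SEVERITY_COUNTS["HCP"])
ld = percent_shares(SEVERITY_COUNTS["LD"], ndigits=2)
print(hcp["Low (3)"], ld["Low (3)"])  # 37.7 47.52
```

The same function applies unchanged to the error-type and toolkit-area rows, since every percentage in the table shares the group total as its denominator.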

Content Errors Identified

| Content Error Group: Definition/Examples | HCP (n = 4) | LD (n = 7) | Total (%) |
|---|---|---|---|
| 1. Evaluation: Positive or negative comments, judgements, or preferences. Examples: “I don’t like the statement ‘caring for someone dying is a major task’” [ERPC4]; “Way too much information and duplication … by this time I have given up as it feels like a maze” [ERLD5] | 6 | 6 | 12 (10.0) |
| 2. Grammatical: Spelling or grammatical corrections. Examples: “People often provide care when someone is older, seriously or has a disability. Think the work ill is missing from seriously” [ERPC2]; “Last sentence ‘provide’ should be ‘provided’” [ERLD3] | 3 | 8 | 11 (9.17) |
| 3. Knowledge statement: Problem with specific content knowledge. Examples: “Not sure that ‘caring for someone who is dying is the end of a journey of caring’. The dying part is the most intense and most profound and this statement has it over with before the experience has concluded. I would focus on the profound elements of caring for someone dying not the end of the caring role” [ERPC2]; “Our other modules on about slide four but they are not consistent. Why are some listed in a module but not in others? I may worry I am missing information I need to know?” [ERLD7] | 3 | 1 | 4 (3.33) |
| 4. Problem identification: Explicit reference to an issue or problem. Examples: “I think language is okay, but there are just too many words” [ERPC4]; “…appropriate to also insert a link here to take the users back to the first page, rather than telling them to go to and use the menu (where is that?) to get back to the main page.” [ERLD4] | 8 | 12 | 20 (16.67) |
| 5. Resources and activities: Explicit reference to embedded resources or learning activities. Examples: “Need to make sure that this toolkit provides information for carers on how to improve and sustain person’s quality of life when at home” [ERPC4]; “CarerHelp Sheets and Videos may require some description because they are specific to the site …not readily apparent what these maybe” [ERLD4] | 9 | 13 | 22 (18.33) |
| 6. Revision statement: Explicit text statement with the intent to change the current state to an ideal state. Examples: “Dying is poorly recognised generally. I think it should be assumed that people using it are seeking assistance for a dying loved one. Maybe a reference that dying can occur over a period of time and is characterised by consistent deterioration would be better upfront…If the person you are caring for is dying, then this resource will help you to prepare for the likely changes that will occur in the future.” [ERPC2]; “Caring for someone who is dying is the end of a journey of caring …—could be something like: ‘Caring for someone dying also means that your role of carer will come to an end after the person has died. These resources help you be prepared for dealing with the end of life care” [ERLD5] | 1 | 21 | 22 (18.33) |
| 7. Strategies: Explicit reference to underlying strategies or the need to apply strategies to the content. Examples: “Not enough information on how this toolkit will help carers—carers will ask, how is this going to help me?” [ERPC3]; “… my conclusion is that the Carer Pathways is the entry point that links off to everything else. Maybe these needs explaining more as the starting point, and if you’re returning to the site, you can use the other menus to navigate if you know where you want to go.” [ERLD7] | 4 | 9 | 13 (10.83) |
| 8. Text knowledge: Comments or statements from reviewers on learnings from the text. Examples: “There are too many words on this page – I don’t think carers will like being told how to feel…” [ERPC4]; “… “You might care for a short time or for a long time” could also mean care in the context of how long you personally ‘care’ about the situation rather than the length of time you may have to provide a level of care.” [ERLD1] | 9 | 7 | 16 (13.33) |
| Total (% total) | 43 (35.8) | 77 (64.2) | 120 |
| Mean errors/reviewer (SD) | 10.8 (3.1) | 11.0 (5.9) | |

Errors Identified by Reviewer Groups

| Error Type | HCP (n = 4) # Unique | HCP (n = 4) ˇ Overall | LDs (n = 7) # Unique | LDs (n = 7) ˇ Overall | USE (n = 6) # Unique | USE (n = 6) ˇ Overall | Experts * (n = 11) # Unique | Experts * (n = 11) ˇ Overall |
|---|---|---|---|---|---|---|---|---|
| Accessibility | 0 | 0 | 3 | 3 | 2 | 2 | 3 | 3 |
| Information architecture | 4 | 4 | 3 | 5 | 0 | 0 | 5 | 9 |
| Information flow | 6 | 9 | 24 | 37 | 4 | 9 | 28 | 46 |
| Interaction | 1 | 1 | 6 | 9 | 0 | 0 | 7 | 10 |
| Navigation | 8 | 12 | 17 | 29 | 4 | 16 | 23 | 41 |
| Pedagogy | 2 | 2 | 1 | 1 | 0 | 0 | 2 | 3 |
| Recovery | 0 | 0 | 3 | 6 | 0 | 0 | 3 | 6 |
| Site platform | 3 | 3 | 17 | 25 | 2 | 6 | 19 | 28 |
| Specific content | 16 | 43 | 28 | 77 | 6 | 21 | 37 | 120 |
| Utility | 2 | 3 | 2 | 6 | 0 | 0 | 3 | 9 |
| Visual representation | 0 | 0 | 3 | 4 | 0 | 0 | 3 | 4 |
| Total errors | 42 | 77 | 107 | 202 | 18 | 54 | 133 | 279 |
| ^ % Total, unique [N = 167] | 25.15 | | 64.07 | | 10.78 | | 88.08 ^ | |
| ^ % Total, overall errors [N = 333] | | 23.12 | | 60.66 | | 16.22 | | 83.78 |
| Mean error by reviewer | 10.50 | 19.25 | 15.29 | 28.86 | 3.00 | 9.00 | 12.09 | 25.36 |
| Mean error across interface | 3.82 | 7.00 | 9.73 | 18.36 | 1.64 | 4.91 | 12.09 | 25.36 |
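The summary rows of the table above follow from the Total errors row by simple division: overall errors over the pooled N = 333, and totals over the number of reviewers in each group. The sketch below reproduces those rows. The divisor of 11 for the "Mean error across interface" row is an assumption, inferred because the reported values match division by the 11 error-type rows; all names are illustrative.

```python
# Totals transcribed from the "Total errors" row above, as (unique, overall).
TOTALS = {"HCP": (42, 77), "LD": (107, 202), "USE": (18, 54), "Experts": (133, 279)}
REVIEWERS = {"HCP": 4, "LD": 7, "USE": 6, "Experts": 11}
N_OVERALL = 333     # denominator stated in the overall-errors percentage row
N_ERROR_TYPES = 11  # ASSUMED divisor: the number of error-type rows in the table


def summarise(group):
    """Recompute the summary rows of the table for one reviewer group."""
    unique, overall = TOTALS[group]
    n = REVIEWERS[group]
    return {
        "pct_overall": round(100 * overall / N_OVERALL, 2),
        "mean_unique_per_reviewer": round(unique / n, 2),
        "mean_overall_per_reviewer": round(overall / n, 2),
        "mean_unique_per_type": round(unique / N_ERROR_TYPES, 2),
        "mean_overall_per_type": round(overall / N_ERROR_TYPES, 2),
    }


for group in TOTALS:
    print(group, summarise(group))
```

For the combined expert group the per-reviewer and per-type means coincide (12.09 and 25.36), simply because both divisors equal 11.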

Interface Errors Identified by Reviewer Groups

| Interface Area | HCP ˇ (n = 4) Unique | HCP ˇ (n = 4) Overall | LD ˇ (n = 7) Unique | LD ˇ (n = 7) Overall | USE ˇ (n = 6) Unique | USE ˇ (n = 6) Overall | HCP + LD * (n = 11) Unique | HCP + LD * (n = 11) Overall | HCP + USE * (n = 10) Unique | HCP + USE * (n = 10) Overall | LD + USE * (n = 13) Unique | LD + USE * (n = 13) Overall | HCP + LD + USE * (n = 17) Unique | HCP + LD + USE * (n = 17) Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Accessibility | 0 | 0 | 3 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 2 | 0 | 0 |
| Information architecture | 2 | 2 | 1 | 1 | 0 | 0 | 2 | 6 | 0 | 0 | 0 | 0 | 0 | 0 |
| Information flow | 4 | 5 | 20 | 25 | 3 | 3 | 2 | 14 | 0 | 0 | 2 | 8 | 0 | 0 |
| Interaction | 1 | 1 | 5 | 8 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| Navigation | 4 | 6 | 13 | 20 | 1 | 3 | 3 | 8 | 1 | 7 | 2 | 13 | 0 | 0 |
| Pedagogy | 1 | 1 | 0 | 0 | 0 | 0 | 1 | 2 | 0 | 0 | 0 | 0 | 0 | 0 |
| Recovery | 0 | 0 | 3 | 6 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| Site platform | 2 | 2 | 12 | 18 | 0 | 0 | 1 | 2 | 0 | 0 | 4 | 12 | 0 | 0 |
| Specific content | 8 | 10 | 20 | 33 | 4 | 5 | 7 | 65 | 1 | 14 | 0 | 0 | 1 | 14 |
| Utility | 1 | 1 | 1 | 4 | 0 | 0 | 2 | 4 | 0 | 0 | 0 | 0 | 0 | 0 |
| Visual representation | 0 | 0 | 3 | 4 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| Total errors | 23 | 28 | 81 | 122 | 8 | 11 | 19 | 102 | 2 | 21 | 10 | 35 | 1 | 14 |
| % Total, unique [N = 143] | 16.08 | | 56.64 | | 5.59 | | 13.29 | | 1.40 | | 6.99 | | 0.70 | |
| % Total, overall errors [N = 333] | | 8.41 | | 36.64 | | 3.30 | | 30.63 | | 6.31 | | 10.51 | | 4.20 |
| Mean error/reviewer | 5.75 | 7.00 | 11.57 | 17.43 | 1.33 | 1.83 | 1.73 | 9.27 | 0.20 | 2.10 | 0.77 | 2.69 | 0.06 | 0.82 |
| Mean error/interface | 2.09 | 2.55 | 7.36 | 11.09 | 0.73 | 1.00 | 1.73 | 9.27 | 0.18 | 1.91 | 0.91 | 3.18 | 0.09 | 1.27 |
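The combined columns in the table above (HCP + LD, HCP + USE, LD + USE, and all three groups) place each error in exactly one region according to which groups reported it, as in a three-set Venn diagram. The sketch below shows one way to derive such a partition. It is an illustration, not the authors' procedure: it assumes duplicate reports have already been matched to a shared error ID, that "Unique" counts distinct errors in a region, and that "Overall" counts every report of those errors. The function name, error IDs and reports are hypothetical.

```python
from collections import Counter, defaultdict

GROUPS = ("HCP", "LD", "USE")  # canonical order used to name regions


def venn_table(reports):
    """Tally (unique, overall) errors for each exclusive combination of groups.

    `reports` holds one (group, error_id) pair per time a reviewer reported
    an error. Each error lands in exactly one region: the set of groups
    that reported it at least once.
    """
    groups_by_error = defaultdict(set)
    reports_by_error = Counter()
    for group, error_id in reports:
        groups_by_error[error_id].add(group)
        reports_by_error[error_id] += 1

    table = defaultdict(lambda: [0, 0])  # region -> [unique, overall]
    for error_id, groups in groups_by_error.items():
        region = " + ".join(g for g in GROUPS if g in groups)
        table[region][0] += 1                          # one distinct error
        table[region][1] += reports_by_error[error_id]  # all of its reports
    return {region: tuple(counts) for region, counts in table.items()}


# Hypothetical reports: E1 found by HCP (twice) and LD, E4 found by all groups.
reports = [("HCP", "E1"), ("HCP", "E1"), ("LD", "E1"), ("LD", "E2"), ("LD", "E2"),
           ("USE", "E3"), ("HCP", "E4"), ("LD", "E4"), ("USE", "E4")]
print(venn_table(reports))
```

Because the regions are exclusive, the Overall counts across all regions sum to the total number of reports, which is the property the 333 denominator in the table relies on.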

References

- Kujala, S.; Kauppinen, M. Identifying and selecting users for user-centered design. In Proceedings of the Third Nordic Conference on Human-Computer Interaction, Tampere, Finland, 23–27 October 2004; pp. 297–303.

- Van Velsen, L.; Wentzel, J.; Van Gemert-Pijnen, J.E.W.C. Designing eHealth that matters via a multidisciplinary requirements development approach. JMIR Res. Protoc. 2013, 2, e21.

- Årsand, E.; Demiris, G. User-centered methods for designing patient-centric self-help tools. Inform. Health Soc. Care 2008, 33, 158–169.

- Matthew-Maich, N.; Harris, L.; Ploeg, J.; Markle-Reid, M.; Valaitis, R.; Ibrahim, S.; Gafni, A.; Isaacs, S. Designing, implementing, and evaluating mobile health technologies for managing chronic conditions in older adults: A scoping review. JMIR mHealth uHealth 2016, 4, e29.

- CareSearch. Care of the Dying Person. Available online: https://www.caresearch.com.au/caresearch/tabid/6220/Default.aspx (accessed on 30 January 2023).

- Carers Australia. The Economic Value of Informal Care in Australia in 2015. Available online: https://www2.deloitte.com/content/dam/Deloitte/au/Documents/Economics/deloitte-au-economic-value-informal-care-Australia-2015-140815.pdf (accessed on 26 January 2023).

- Hanratty, B.; Lowson, E.; Holmes, L.; Addington-Hall, J.; Arthur, A.; Grande, G.; Payne, S.; Seymour, J. A comparison of strategies to recruit older patients and carers to end-of-life research in primary care. BMC Health Serv. Res. 2012, 12, 342.

- Cox, A.; Illsley, M.; Knibb, W.; Lucas, C.; O’Driscoll, M.; Potter, C.; Flowerday, A.; Faithfull, S. The acceptability of e-technology to monitor and assess patient symptoms following palliative radiotherapy for lung cancer. Palliat. Med. 2011, 25, 675–681.

- Aoun, S.; Slatyer, S.; Deas, K.; Nekolaichuk, C. Family caregiver participation in palliative care research: Challenging the myth. J. Pain Symptom. Manag. 2017, 53, 851–861.

- Finucane, A.M.; O’Donnell, H.; Lugton, J.; Gibson-Watt, T.; Swenson, C.; Pagliari, C. Digital health interventions in palliative care: A systematic meta-review. NPJ Digit. Med. 2021, 4, 64.

- Kars, M.C.; van Thiel, G.J.M.W.; van der Graaf, R.; Moors, M.; de Graeff, A.; van Delden, J.J.M. A systematic review of reasons for gatekeeping in palliative care research. Palliat. Med. 2015, 30, 533–548.

- Vogel, R.I.; Petzel, S.V.; Cragg, J.; McClellan, M.; Chan, D.; Dickson, E.; Jacko, J.A.; Sainfort, F.; Geller, M.A. Development and pilot of an advance care planning website for women with ovarian cancer: A randomized controlled trial. Gynecol. Oncol. 2013, 131, 430–436.

- Australian Bureau of Statistics (ABS). Disability, Ageing and Carers, Australia: Summary of Findings; 4430.0. Available online: https://www.abs.gov.au/statistics/health/disability/disability-ageing-and-carers-australia-summary-findings/latest-release#data-download (accessed on 18 January 2023).

- Travis, D. Usability Testing with Hard-to-Find Participants. Available online: https://www.userfocus.co.uk/articles/surrogates.html (accessed on 12 January 2023).

- Lievesley, M.A.; Yee, J.S.R. Surrogate users: A pragmatic approach to defining user needs. In Proceedings of the CHI Extended Abstracts on Human Factors in Computing Systems, San Jose, CA, USA, 28 April–3 May 2007; pp. 1789–1794.

- Dave, K.; Mason, J. Empowering learning designers through design thinking. In Proceedings of the ICCE 2020—28th International Conference on Computers in Education, Proceedings, Virtual, 23–27 November 2020; pp. 497–502.

- Schmidt, M.; Earnshaw, Y.; Tawfik, A.A.; Jahnke, I. Methods of user centered design and evaluation for learning designers. In Learner and User Experience Research: An Introduction for the Field of Learning Design & Technology; EDTech: Provo, UT, USA, 2020; Available online: https://edtechbooks.org/ux/ucd_methods_for_lx (accessed on 15 January 2023).

- Redish, J.; Bias, J.G.; Bailey, R.; Molich, R.; Dumas, J.; Spool, J.M. Usability in practice: Formative usability evaluations—Evolution and revolution. In Proceedings of the CHI’02 Extended Abstracts on Human Factors in Computing Systems, Minneapolis, MN, USA, 20–25 April 2002; pp. 885–890.

- Sauro, J. Are You Conducting a Heuristic Evaluation or Expert Review? Available online: https://measuringu.com/he-expert/ (accessed on 10 January 2023).

- Johnson, C.M.; Turley, J.P. A new approach to building web-based interfaces for healthcare consumers. Electron. J. Health Inform. 2007, 2, e2.

- Schriver, K.A. Evaluating text quality: The continuum from text-focused to reader-focused methods. IEEE Trans. Prof. Commun. 1989, 32, 238–255.

- Jaspers, M.W. A comparison of usability methods for testing interactive health technologies: Methodological aspects and empirical evidence. Int. J. Med. Inform. 2009, 78, 340–353.

- Wilson, C.E. Heuristic evaluation. In User Interface Inspection Methods; Wilson, C.E., Ed.; Morgan Kaufmann: Burlington, MA, USA, 2014; pp. 1–32.

- Mahatody, T.; Sagar, M.; Kolski, C. State of the art on the cognitive walkthrough method, its variants and evolutions. Int. J. Hum-Comput. Interact. 2010, 26, 741–785.

- Khajouei, R.; Zahiri Esfahani, M.; Jahani, Y. Comparison of heuristic and cognitive walkthrough usability evaluation methods for evaluating health information systems. J. Am. Med. Inform. Assoc. 2017, 24, e55–e60.

- Kneale, L.; Mikles, S.; Choi, Y.K.; Thompson, H.; Demiris, G. Using scenarios and personas to enhance the effectiveness of heuristic usability evaluations for older adults and their care team. J. Biomed. Inform. 2017, 73, 43–50.

- Davids, M.R.; Chikte, U.M.E.; Halperin, M.L. An efficient approach to improve the usability of e-learning resources: The role of heuristic evaluation. Adv. Physiol. Educ. 2013, 37, 242–248.

- Nickerson, R.S.; Landauer, T.K. Human-Computer Interaction: Background and Issues. In Handbook of Human-Computer Interaction, 2nd ed.; Helander, M.G., Landauer, T.K., Prabhu, P.V., Eds.; Elsevier B.V.: Amsterdam, The Netherlands, 1997; pp. 3–31.

- Rosenbaum, S. The Future of Usability Evaluation: Increasing Impact on Value. In Maturing Usability: Quality in Software, Interaction and Value; Springer: London, UK, 2008; pp. 344–378.

- Georgsson, M.; Staggers, N.; Årsand, E.; Kushniruk, A.W. Employing a user-centered cognitive walkthrough to evaluate a mHealth diabetes self-management application: A case study and beginning method validation. J. Biomed. Inform. 2019, 91, 103110.

- Zhang, J.; Johnson, T.R.; Patel, V.L.; Paige, D.L.; Kubose, T. Using usability heuristics to evaluate patient safety of medical devices. J. Biomed. Inform. 2003, 36, 23–30.

- Sauro, J. How Effective Are Heuristic Evaluations. Available online: https://measuringu.com/effective-he/ (accessed on 15 January 2023).

- Six, J.M. Usability Testing Versus Expert Reviews. Available online: https://www.uxmatters.com/mt/archives/2009/10/usability-testing-versus-expert-reviews.php (accessed on 29 January 2023).

- Nielsen, J. How to Conduct a Heuristic Evaluation. Available online: https://www.nngroup.com/articles/how-to-conduct-a-heuristic-evaluation/ (accessed on 20 January 2023).

- Zoom Video Communications. Zoom Meeting Software; Zoom Video Communications: San Jose, CA, USA, 2020.

- Sauro, J.; Lewis, J.R. What sample sizes do we need?: Part 2: Formative studies. In Quantifying the User Experience; Morgan Kaufmann: Burlington, MA, USA, 2012; pp. 143–184.

- Nielsen, J. Why You Only Need to Test with 5 Users. Available online: https://www.nngroup.com/articles/why-you-only-need-to-test-with-5-users/ (accessed on 18 January 2023).

- Nielsen, J.; Landauer, T.K. A mathematical model of the finding of usability problems. In Proceedings of the INTERACT’93 and CHI’93 Conference on Human Factors in Computing Systems, Amsterdam, The Netherlands, 24–29 April 1993; pp. 206–213.

- Saroyan, A. Differences in expert practice: A case from formative evaluation. Instr. Sci. 1992, 21, 451–472.

- Nielsen, J. Severity Ratings for Usability Problems. Available online: https://www.nngroup.com/articles/how-to-rate-the-severity-of-usability-problems/ (accessed on 21 January 2023).

- Georgsson, M.; Staggers, N. An evaluation of patients’ experienced usability of a diabetes mHealth system using a multi-method approach. J. Biomed. Inform. 2016, 59, 115–129.

- Hvannberg, E.T.; Law, E.L.; Lárusdóttir, M.K. Heuristic evaluation: Comparing ways of finding and reporting usability problems. Interact. Comput. 2006, 19, 225–240.

- Yamada, J.; Shorkey, A.; Barwick, M.; Widger, K.; Stevens, B.J. The effectiveness of toolkits as knowledge translation strategies for integrating evidence into clinical care: A systematic review. BMJ Open 2015, 5, e006808.

- Lu, J.; Schmidt, M.; Lee, M.; Huang, R. Usability research in educational technology: A state-of-the-art systematic review. Educ. Technol. Res. Dev. 2022, 70, 1951–1992.

- Sitko, B.M. Knowing how to write: Metacognition and writing instruction. In Metacognition in Educational Theory and Practice; Hacker, D.J., Dunlosky, J., Graesser, A.C., Eds.; Taylor & Francis Group: Florence, SC, USA, 1998; pp. 93–116.

- Lathan, C.E.; Sebrechts, M.M.; Newman, D.J.; Doarn, C.R. Heuristic evaluation of a web-based interface for Internet telemedicine. Telemed. J. 1999, 5, 177–185.

- Fu, L.; Salvendy, G.; Turley, L. Effectiveness of user testing and heuristic evaluation as a function of performance classification. Behav. Inf. Technol. 2002, 21, 137–143.

- Nielsen, J.; Molich, R. Heuristic evaluation of user interfaces. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Washington, DC, USA, 1–5 April 1990; pp. 249–256.

- Liu, L.S.; Shih, P.C.; Hayes, G.R. Barriers to the adoption and use of personal health record systems. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Seattle, WA, USA, 6–12 May 2011; pp. 363–370.

- Tang, Z.; Johnson, T.R.; Tindall, R.D.; Zhang, J. Applying heuristic evaluation to improve the usability of a telemedicine system. Telemed. J. E-Health 2006, 12, 24–34.

- Law, L.; Hvannberg, E.T. Complementarity and convergence of heuristic evaluation and usability test: A case study of universal brokerage platform. In Proceedings of the Second Nordic Conference on Human-Computer Interaction, Aarhus, Denmark, 19–23 October 2002; pp. 71–80.

- Jeffries, R.; Miller, J.R.; Wharton, C.; Uyeda, K. User interface evaluation in the real world: A comparison of four techniques. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, New Orleans, LA, USA, 27 April–2 May 1991; pp. 119–124.

- Barac, R.; Stein, S.; Bruce, B.; Barwick, M. Scoping review of toolkits as a knowledge translation strategy in health. BMC Med. Inform. Decis. Mak. 2014, 14, 121.

- Goodman-Deane, J.; Bradley, M.; Clarkson, P.J. Digital Technology Competence and Experience in the UK Population: Who Can Do What. Published Online, Contemporary Ergonomics & Human Factors, 9 April 2020. Available online: https://publications.ergonomics.org.uk/uploads/Digital-technology-competence-and-experience-in-the-UK-population-who-can-do-what.pdf (accessed on 15 January 2023).

- Parker, D.; Hudson, P.; Tieman, J.; Thomas, K.; Saward, D.; Ivynian, S. Evaluation of an online toolkit for carers of people with a life-limiting illness at the end-of-life: Health professionals’ perspectives. Aust. J. Prim. Health 2021, 27, 473–478.

| Content Error Descriptor | Definition of Error Descriptor |
|---|---|
| 1. Evaluation | Positive or negative comments from reviewers, judgements, or preferences |
| 2. Grammatical | Spelling or grammatical corrections |
| 3. Knowledge Statement | Problem with specific content knowledge |
| 4. Problem Identification | Explicit reference to an issue or problem |
| 5. Resources/Activities | Explicit reference to embedded resources and learning activities |
| 6. Revision Statement | Explicit verbalisation or text statement with the intent to change the current state to an ideal state |
| 7. Strategies | Explicit reference to underlying strategies or the need to apply strategies to the content |
| 8. Text Knowledge | Comments or statements from reviewers on learnings from the text |

| Professional Position | Practice Setting | Post-Qual. Exp. (Years) ¹ | S-R Tech. Ability ² |
|---|---|---|---|
| Nurse/Director of Service | Acute and Community Care | 14 | Expert |
| General Practitioner/Director | Acute and Community Care | 20 | Intermediate |
| Social Worker | Acute and Community Care | 7 | Expert |
| Nurse Practitioner | Community Care | 10 | Expert |

| Professional Position | Post-Qual. Exp. (Years) ¹ | S-A Tech. Ability ² |
|---|---|---|
| Learning Designer | 27 | Expert |
| Educational Designer | 15 | Expert |
| Educational Technologist | 12 | Expert |
| Educational Designer | 20 | Expert |
| Learning Designer | 3 | Expert |
| Learning Designer | 5 | Expert |
| Educational Designer | 12 | Expert |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Adams, A.; Miller-Lewis, L.; Tieman, J. Learning Designers as Expert Evaluators of Usability: Understanding Their Potential Contribution to Improving the Universality of Interface Design for Health Resources. Int. J. Environ. Res. Public Health 2023, 20, 4608. https://doi.org/10.3390/ijerph20054608
