Feigning Adult ADHD on a Comprehensive Neuropsychological Test Battery: An Analogue Study

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

2.1.1. ADHD Group

2.1.2. Control Group

2.1.3. Simulation Group

2.2. Materials

2.2.1. Demographic Information

2.2.2. Self-Reported Symptoms of ADHD

2.2.3. Performance Validity

2.2.4. Neuropsychological Performance Assessment

2.3. Procedure

2.4. Data Analysis

3. Results

3.1. Neuropsychological Test Performance

3.2. CFADHD-Specific Cut-Off Scores

4. Discussion

Limitations

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Characteristic | ADHD (n = 57) | Control (n = 60) | Simulation (n = 151) | p-Value |
|---|---|---|---|---|
| Age (years) | 32 (12) | 20 (1) | 19 (1) | <0.001 |
| Gender (f/m) | 19 (33%)/38 (67%) | 43 (72%)/17 (28%) | 120 (79%)/31 (21%) | <0.001 |
| Education (years) | 10 (1) | 13 (1) | 13 (1) | <0.001 |
| ADHD Symptoms (childhood) | 41 (18) | 14 (9) | 13 (9) | <0.001 |
| ADHD Symptoms (adulthood) | 34 (9) | 11 (7) | 10 (7) | <0.001 |

| ADHD | Control | Simulation | |||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Raw Score | PR < 9 | Raw Score | PR < 9 | Raw Score | PR < 9 | ||||||||||

| Test | Median | MAD | Min | Max | % | Median | MAD | Min | Max | % | Median | MAD | Min | Max | % |

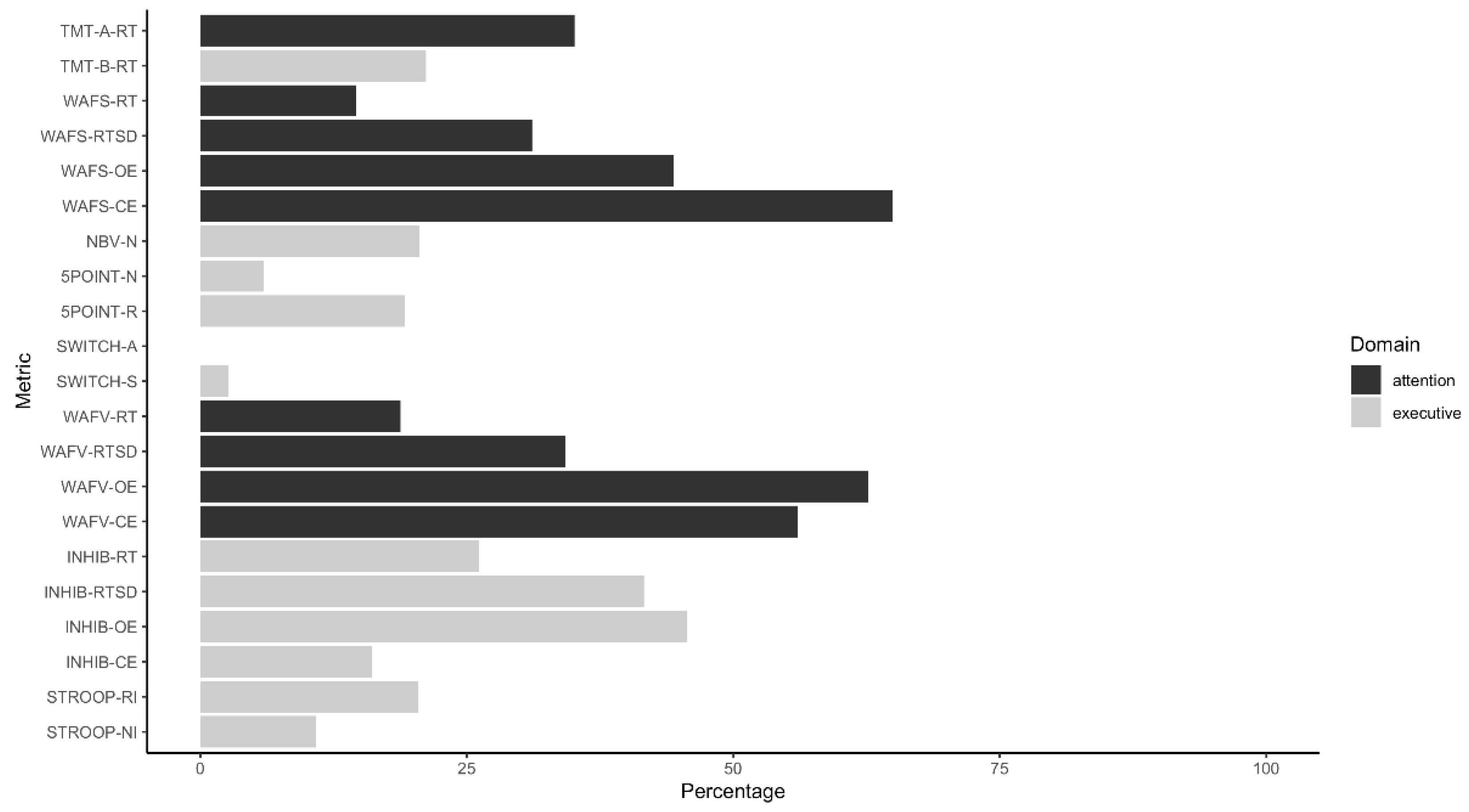

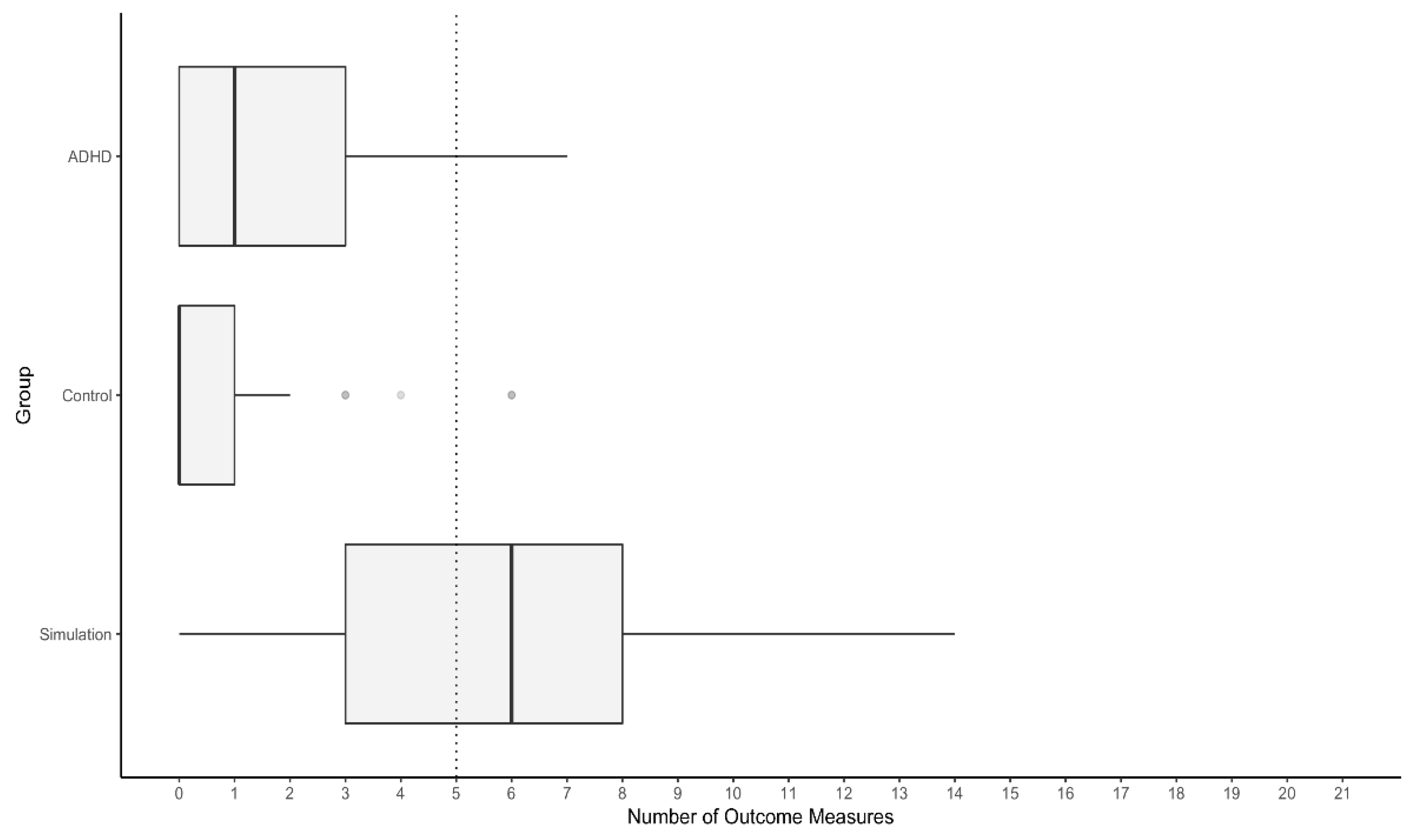

| TMT-A RT | 20.10 | 3.30 | 12.50 | 40.60 | 35.09 | 14.90 | 1.30 | 10.60 | 24.00 | 0.00 | 20.80 | 6.20 | 11.60 | 151.90 | 35.10 |

| TMT-B RT | 32.80 | 7.50 | 15.30 | 96.60 | 21.05 | 20.80 | 3.95 | 13.00 | 43.10 | 0.00 | 33.00 | 9.80 | 13.60 | 529.00 | 19.21 |

| WAFS RT | 368 | 63.00 | 150.00 | 694.00 | 19.30 | 326.50 | 53.00 | 200.00 | 524.00 | 8.33 | 406.00 | 62.00 | 71.00 | 710.00 | 30.46 |

| WAFS RTSD | 1.25 | 0.08 | 1.12 | 9.33 | 29.82 | 1.21 | 0.05 | 1.11 | 2.80 | 20.00 | 1.36 | 0.06 | 1.14 | 11.86 | 73.51 |

| WAFS OE | 0.00 | 0.00 | 0.00 | 16.00 | 24.56 | 0.00 | 0.00 | 0.00 | 9.00 | 8.33 | 3.00 | 3.00 | 0.00 | 17.00 | 67.55 |

| WAFS CE | 3.00 | 1.00 | 0.00 | 49.00 | 15.79 | 2.00 | 1.00 | 0.00 | 10.00 | 10.00 | 10.00 | 5.00 | 0.00 | 87.00 | 77.48 |

| NBV N | 11.00 | 2.00 | 1.00 | 15.00 | 22.81 | 14.00 | 1.00 | 5.00 | 15.00 | 1.67 | 9.00 | 2.00 | 0.00 | 15.00 | 20.53 |

| 5POINT N | 23.00 | 5.00 | 11.00 | 45.00 | 21.05 | 31.00 | 7.00 | 12.00 | 50.00 | 0.00 | 26.00 | 8.00 | 4.00 | 48.00 | 4.64 |

| 5POINT R | 1.00 | 1.00 | 0.00 | 22.00 | 3.51 | 1.00 | 1.00 | 0.00 | 14.00 | 6.67 | 2.00 | 1.00 | 0.00 | 27.00 | 7.28 |

| SWITCH A | 0.11 | 2.11 | −8.00 | 34.00 | 15.79 | 0.02 | 0.02 | −0.05 | 0.13 | 5.00 | 0.03 | 0.04 | −0.17 | 10.00 | 11.26 |

| SWITCH S | 0.24 | 0.15 | −0.40 | 1.54 | 10.53 | 0.19 | 0.10 | −0.24 | 0.49 | 3.33 | 0.10 | 0.11 | −0.45 | 0.72 | 6.62 |

| WAFV RT | 464 | 65.00 | 355 | 662 | 17.54 | 418 | 51.50 | 247 | 652 | 6.67 | 548 | 69.00 | 69 | 751 | 32.45 |

| WAFV RTSD | 1.26 | 0.05 | 1.17 | 1.43 | 12.28 | 1.25 | 0.05 | 1.12 | 3.86 | 11.67 | 1.31 | 0.07 | 0.00 | 18.68 | 35.76 |

| WAFV OE | 2.00 | 2.00 | 0.00 | 18.00 | 26.32 | 1.00 | 1.00 | 0.00 | 11.00 | 10.00 | 9.00 | 4.00 | 0.00 | 25.00 | 82.12 |

| WAFV CE | 2.00 | 1.00 | 0.00 | 17.00 | 24.56 | 1.00 | 1.00 | 0.00 | 8.00 | 13.33 | 7.00 | 4.00 | 0.00 | 298.00 | 76.82 |

| INHIB RT | 0.28 | 0.03 | 0.20 | 0.51 | 7.02 | 0.26 | 0.03 | 0.21 | 0.41 | 1.67 | 0.31 | 0.05 | 0.21 | 0.56 | 25.17 |

| INHIB RTSD | 0.10 | 0.03 | 0.05 | 3.25 | 21.05 | 0.08 | 0.02 | 0.04 | 0.33 | 3.33 | 0.15 | 0.05 | 0.04 | 1.83 | 51.66 |

| INHIB OE | 4.00 | 3.00 | 0.00 | 33.00 | 28.07 | 2.00 | 2.00 | 0.00 | 46.00 | 15.00 | 15.00 | 10.00 | 0.00 | 121.00 | 65.56 |

| INHIB CE | 12.00 | 4.00 | 1.00 | 35.00 | 19.30 | 12.00 | 3.00 | 1.00 | 32.00 | 8.33 | 19.00 | 5.00 | 2.00 | 43.00 | 43.05 |

| STROOP RI | 0.18 | 0.10 | −0.03 | 0.88 | 31.58 | 0.12 | 0.04 | 0.00 | 0.51 | 8.33 | 0.22 | 0.12 | −0.71 | 2.31 | 33.11 |

| STROOP NI | 0.11 | 0.08 | −0.12 | 0.85 | 10.53 | 0.11 | 0.04 | 0.00 | 1.16 | 0.00 | 0.10 | 0.06 | −0.29 | 1.00 | 13.25 |

| Test | Indicator | Cut-off Score |
|---|---|---|
| TMT-A | RT | ≥27.1 |
| TMT-B | RT | ≥54.6 |
| WAFS | RT | ≥532 |
| WAFS | RTSD | ≥1.43 |
| WAFS | OE | ≥4 |
| WAFS | CE | ≥8 |
| NBV | N | ≤6 |
| 5POINT | N | ≤12 |
| 5POINT | R | ≥4 |
| SWITCH | A | ≥11 |
| SWITCH | S | ≥0.54 |
| WAFV | RT | ≥636 |
| WAFV | RTSD | ≥1.35 |
| WAFV | OE | ≥7 |
| WAFV | CE | ≥7 |
| INHIB | RT | ≥0.364 |
| INHIB | RTSD | ≥0.165 |
| INHIB | OE | ≥18 |
| INHIB | CE | ≥27 |
| STROOP | RI | ≥0.448 |
| STROOP | NI | ≥0.323 |
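As a rough illustration of how the cut-off scores above might be applied to one examinee's raw scores, the following Python sketch encodes the table as a lookup and counts how many indicators fall in the flagged range. The names `CUTOFFS`, `is_flagged`, and `count_flags` are hypothetical and not part of the CFADHD or the Vienna Test System. The sketch assumes that raw scores are expressed in the same units as the tabled cut-offs, and the example scores are invented for demonstration only.

```python
import operator

# Cut-off scores transcribed from the table above.
# Key: (test, indicator); value: (comparison, threshold).
CUTOFFS = {
    ("TMT-A", "RT"): (">=", 27.1),
    ("TMT-B", "RT"): (">=", 54.6),
    ("WAFS", "RT"): (">=", 532),
    ("WAFS", "RTSD"): (">=", 1.43),
    ("WAFS", "OE"): (">=", 4),
    ("WAFS", "CE"): (">=", 8),
    ("NBV", "N"): ("<=", 6),
    ("5POINT", "N"): ("<=", 12),
    ("5POINT", "R"): (">=", 4),
    ("SWITCH", "A"): (">=", 11),
    ("SWITCH", "S"): (">=", 0.54),
    ("WAFV", "RT"): (">=", 636),
    ("WAFV", "RTSD"): (">=", 1.35),
    ("WAFV", "OE"): (">=", 7),
    ("WAFV", "CE"): (">=", 7),
    ("INHIB", "RT"): (">=", 0.364),
    ("INHIB", "RTSD"): (">=", 0.165),
    ("INHIB", "OE"): (">=", 18),
    ("INHIB", "CE"): (">=", 27),
    ("STROOP", "RI"): (">=", 0.448),
    ("STROOP", "NI"): (">=", 0.323),
}

_OPS = {">=": operator.ge, "<=": operator.le}


def is_flagged(test, indicator, raw_score):
    """Return True if the raw score meets the tabled cut-off for this indicator."""
    comparison, threshold = CUTOFFS[(test, indicator)]
    return _OPS[comparison](raw_score, threshold)


def count_flags(scores):
    """Count flagged indicators in a {(test, indicator): raw_score} mapping."""
    return sum(is_flagged(test, ind, value) for (test, ind), value in scores.items())


# Invented scores for illustration; not data from the study.
example_scores = {("TMT-A", "RT"): 30.0, ("NBV", "N"): 11, ("WAFV", "OE"): 7}
print(count_flags(example_scores))
```

Storing the direction of each comparison alongside the threshold keeps the two "lower is worse" indicators (NBV N and 5POINT N) from being mishandled by a blanket "greater than or equal" rule.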

| Test | Sensitivity (%) | Specificity (%) | PPV (base rate 10%) | NPV (base rate 10%) | PPV (base rate 20%) | NPV (base rate 20%) | PPV (base rate 30%) | NPV (base rate 30%) | PPV (base rate 40%) | NPV (base rate 40%) | PPV (base rate 50%) | NPV (base rate 50%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| TMT-A RT | 35.10 | 91.23 | 30.78 | 92.67 | 50.01 | 84.90 | 63.17 | 76.63 | 72.73 | 67.83 | 80.01 | 58.43 |
| TMT-B RT | 21.19 | 91.23 | 21.16 | 91.24 | 37.65 | 82.24 | 50.87 | 72.98 | 61.69 | 63.46 | 70.73 | 53.65 |
| WAFS RT | 14.57 | 91.23 | 15.58 | 90.58 | 29.34 | 81.03 | 41.58 | 71.36 | 52.55 | 61.56 | 62.42 | 51.64 |
| WAFS RTSD | 31.13 | 91.23 | 28.28 | 92.26 | 47.01 | 84.12 | 60.33 | 75.55 | 70.29 | 66.52 | 78.01 | 56.98 |
| WAFS OE | 44.37 | 92.98 | 41.26 | 93.77 | 61.25 | 86.99 | 73.04 | 79.59 | 80.83 | 71.49 | 86.34 | 62.57 |
| WAFS CE | 64.90 | 91.23 | 45.12 | 95.90 | 64.91 | 91.23 | 76.02 | 85.85 | 83.14 | 79.59 | 88.09 | 72.22 |
| NBV N | 20.53 | 91.23 | 20.64 | 91.18 | 36.91 | 82.12 | 50.08 | 72.82 | 60.94 | 63.26 | 70.06 | 53.44 |
| 5POINT N | 5.96 | 96.49 | 15.88 | 90.23 | 29.81 | 80.41 | 42.13 | 70.54 | 53.11 | 60.62 | 62.94 | 50.64 |
| 5POINT R | 19.21 | 91.23 | 19.57 | 91.04 | 35.37 | 81.87 | 48.41 | 72.49 | 59.34 | 62.88 | 68.65 | 53.03 |
| SWITCH A | 0.00 | 90.57 | 0.00 | 89.07 | 0.00 | 78.37 | 0.00 | 67.88 | 0.00 | 57.60 | 0.00 | 47.52 |
| SWITCH S | 2.65 | 90.57 | 3.03 | 89.33 | 6.56 | 78.82 | 10.74 | 68.46 | 15.77 | 58.25 | 21.92 | 48.19 |
| WAFV RT | 18.79 | 91.23 | 19.23 | 91.00 | 34.88 | 81.80 | 47.87 | 72.39 | 58.82 | 62.76 | 68.18 | 52.91 |
| WAFV RTSD | 34.23 | 91.23 | 30.24 | 92.58 | 49.38 | 84.73 | 62.58 | 76.40 | 72.23 | 67.54 | 79.60 | 58.11 |
| WAFV OE | 62.67 | 91.23 | 44.25 | 95.65 | 64.11 | 90.72 | 75.38 | 85.08 | 82.65 | 78.57 | 87.72 | 70.96 |
| WAFV CE | 56.00 | 91.23 | 41.50 | 94.91 | 61.48 | 89.24 | 73.23 | 82.87 | 80.97 | 75.67 | 86.46 | 67.46 |
| INHIB RT | 26.17 | 91.23 | 24.90 | 91.75 | 42.73 | 83.17 | 56.12 | 74.25 | 66.55 | 64.96 | 74.90 | 55.27 |
| INHIB RTSD | 41.61 | 91.23 | 34.52 | 93.36 | 54.25 | 86.21 | 67.03 | 78.47 | 75.98 | 70.09 | 82.59 | 60.97 |
| INHIB OE | 45.64 | 91.23 | 36.63 | 93.79 | 56.53 | 87.03 | 69.04 | 79.66 | 77.62 | 71.57 | 83.88 | 62.66 |
| INHIB CE | 16.11 | 94.74 | 25.38 | 91.04 | 43.35 | 81.87 | 56.74 | 72.49 | 67.11 | 62.88 | 75.37 | 53.04 |
| STROOP RI | 20.41 | 91.23 | 20.54 | 91.16 | 36.77 | 82.09 | 49.93 | 72.79 | 60.80 | 63.23 | 69.94 | 53.41 |
| STROOP NI | 10.88 | 91.23 | 12.12 | 90.21 | 23.68 | 80.37 | 34.72 | 70.49 | 45.27 | 60.56 | 55.37 | 50.59 |
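The positive and negative predictive values in the table above depend on the assumed base rate of feigning. A minimal Python sketch of that dependence follows, assuming the standard Bayes relationship between sensitivity, specificity, and base rate. The function name `predictive_values` is hypothetical, and the sketch is not the authors' own analysis code, which is not shown in this document.

```python
def predictive_values(sensitivity, specificity, base_rate):
    """Return (PPV, NPV) as proportions for a given base rate.

    All three inputs are proportions between 0 and 1.
    """
    true_pos = sensitivity * base_rate
    false_pos = (1 - specificity) * (1 - base_rate)
    true_neg = specificity * (1 - base_rate)
    false_neg = (1 - sensitivity) * base_rate

    # Guard against division by zero when no case is classified
    # as positive (or as negative).
    ppv = true_pos / (true_pos + false_pos) if (true_pos + false_pos) > 0 else float("nan")
    npv = true_neg / (true_neg + false_neg) if (true_neg + false_neg) > 0 else float("nan")
    return ppv, npv


# TMT-A RT row of the table: sensitivity 35.10%, specificity 91.23%,
# evaluated at an assumed base rate of 10%.
ppv, npv = predictive_values(0.3510, 0.9123, 0.10)
print(f"PPV = {100 * ppv:.2f}%, NPV = {100 * npv:.2f}%")
```

With the rounded TMT-A RT inputs, this gives values close to the tabled 30.78 and 92.67 at a 10% base rate. Small discrepancies for other rows would be expected if the tabled values were derived from unrounded sensitivity and specificity.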

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Becke, M.; Tucha, L.; Butzbach, M.; Aschenbrenner, S.; Weisbrod, M.; Tucha, O.; Fuermaier, A.B.M. Feigning Adult ADHD on a Comprehensive Neuropsychological Test Battery: An Analogue Study. Int. J. Environ. Res. Public Health 2023, 20, 4070. https://doi.org/10.3390/ijerph20054070
