Predictive Model for Human Activity Recognition Based on Machine Learning and Feature Selection Techniques

Abstract

1. Introduction

2. Conceptual Information

2.1. Fundamentals Related to Human Activity Recognition

2.2. Human Activity Recognition

2.3. HAR Dataset

3. Building Predictive Models for HAR

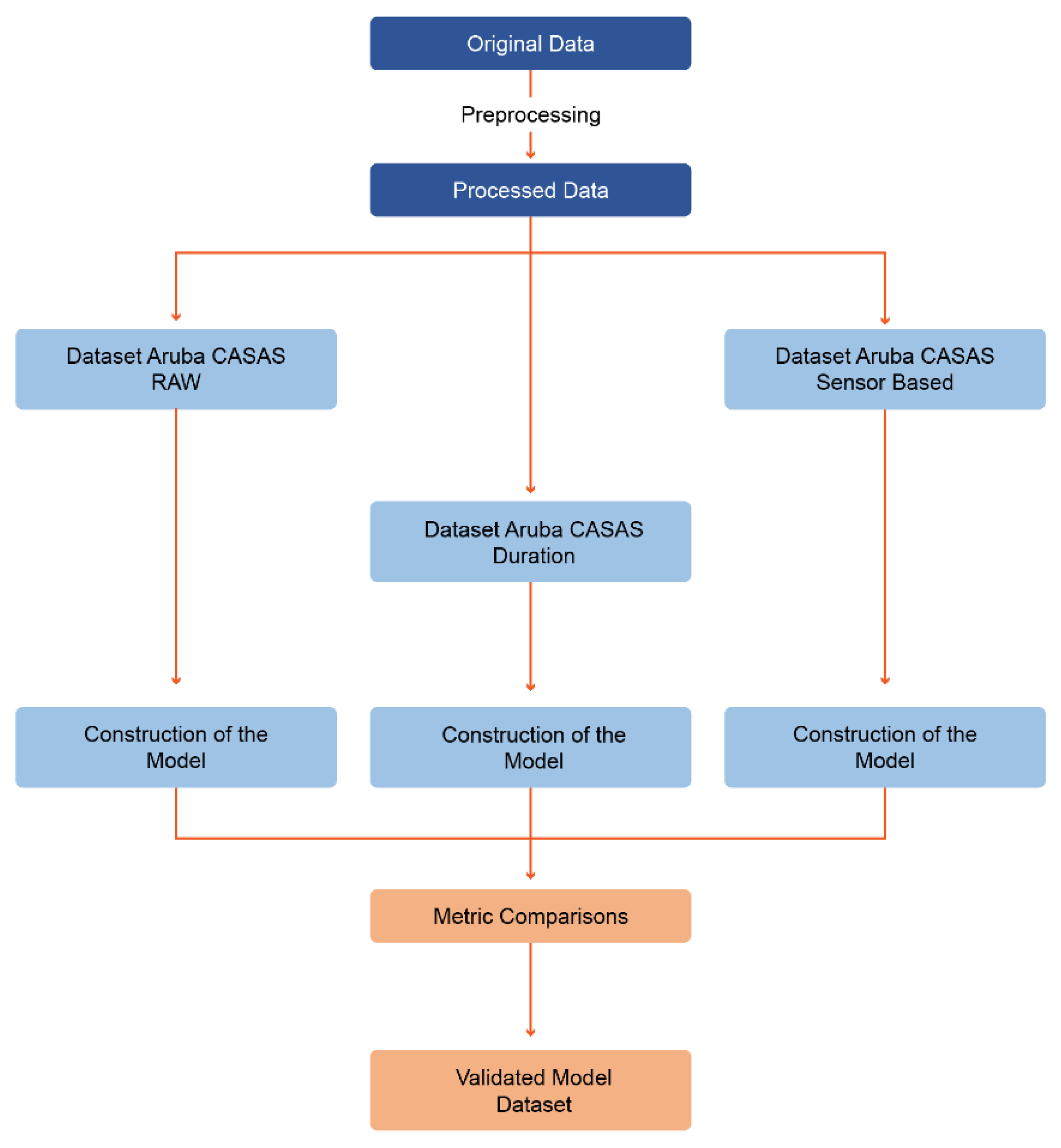

3.1. Pre-Processing of Datasets


- The Aruba CASAS–raw dataset has a total of 47 features, of which 39 correspond to the category of count features, five (5) to the category of average features, and the remaining three (3) to the category of original features.


- The Aruba CASAS–duration dataset has a total of 49 features, of which 41 correspond to the category of count features, five (5) to the category of average features, and the remaining three (3) to the category of original features.


- The Aruba CASAS–sensor-based dataset is made up of a total of 67 features, of which 39 correspond to the category of count features, five (5) to the category of average features, 20 to the category of aggregation features, and the remaining three (3) to the category of original features.
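
The totals quoted above follow from the per-category counts. As a quick sanity check, the following minimal Python sketch tallies them. The dictionary layout and the `total_features` helper are illustrative assumptions, not part of the original pipeline.

```python
# Feature counts per category, transcribed from the three dataset descriptions above.
# The dictionary layout and helper name are hypothetical, used only for this check.
FEATURE_COUNTS = {
    "Aruba CASAS-raw": {"count": 39, "average": 5, "aggregation": 0, "original": 3},
    "Aruba CASAS-duration": {"count": 41, "average": 5, "aggregation": 0, "original": 3},
    "Aruba CASAS-sensor-based": {"count": 39, "average": 5, "aggregation": 20, "original": 3},
}


def total_features(dataset_name):
    """Return the total number of features for one dataset variant."""
    return sum(FEATURE_COUNTS[dataset_name].values())


for name in FEATURE_COUNTS:
    print(name, total_features(name))
```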

3.2. Aggregation Functions


- Range: the difference between the largest value and the smallest value in a data set.


- Standard deviation: the square root of the variance. The variance is the sum of the squared differences between each occurrence value and the mean, divided by the number of sensors 𝑆 minus 1.


- Skewness: the quotient of the third central moment 𝑚3 of a data set and the cube of the standard deviation σ.


- Kurtosis: the quotient of the fourth central moment 𝑚4 of a data set and the fourth power of the standard deviation σ.
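
The four definitions above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation. The function name `aggregation_features`, the sample readings, and the choice to divide the central moments m3 and m4 by the number of values are assumptions. The output keys borrow the RANGE, DESV, BIAS, and KURT suffixes that appear in the feature table, on the assumption that they denote range, standard deviation, skewness, and kurtosis respectively.

```python
import math


def aggregation_features(values):
    """Compute range, standard deviation, skewness, and kurtosis of a sequence.

    Assumption: the variance uses the (n - 1) denominator described in the text,
    while the central moments m3 and m4 are averaged over n values.
    """
    n = len(values)
    if n < 2:
        raise ValueError("at least two values are needed for the sample variance")
    mean = sum(values) / n
    value_range = max(values) - min(values)
    variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    std = math.sqrt(variance)
    m3 = sum((v - mean) ** 3 for v in values) / n  # third central moment
    m4 = sum((v - mean) ** 4 for v in values) / n  # fourth central moment
    # Constant signals have zero spread; report 0.0 instead of dividing by zero.
    skewness = m3 / std ** 3 if std > 0 else 0.0
    kurtosis = m4 / std ** 4 if std > 0 else 0.0
    return {"RANGE": value_range, "DESV": std, "BIAS": skewness, "KURT": kurtosis}


# Hypothetical thermometer readings observed during one activity instance.
readings = [20.0, 21.0, 22.0, 23.0, 24.0]
print(aggregation_features(readings))
```

Applying such a function to each of the five thermometers would yield the 20 aggregation features (5 sensors × 4 statistics) counted for the sensor-based variant.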

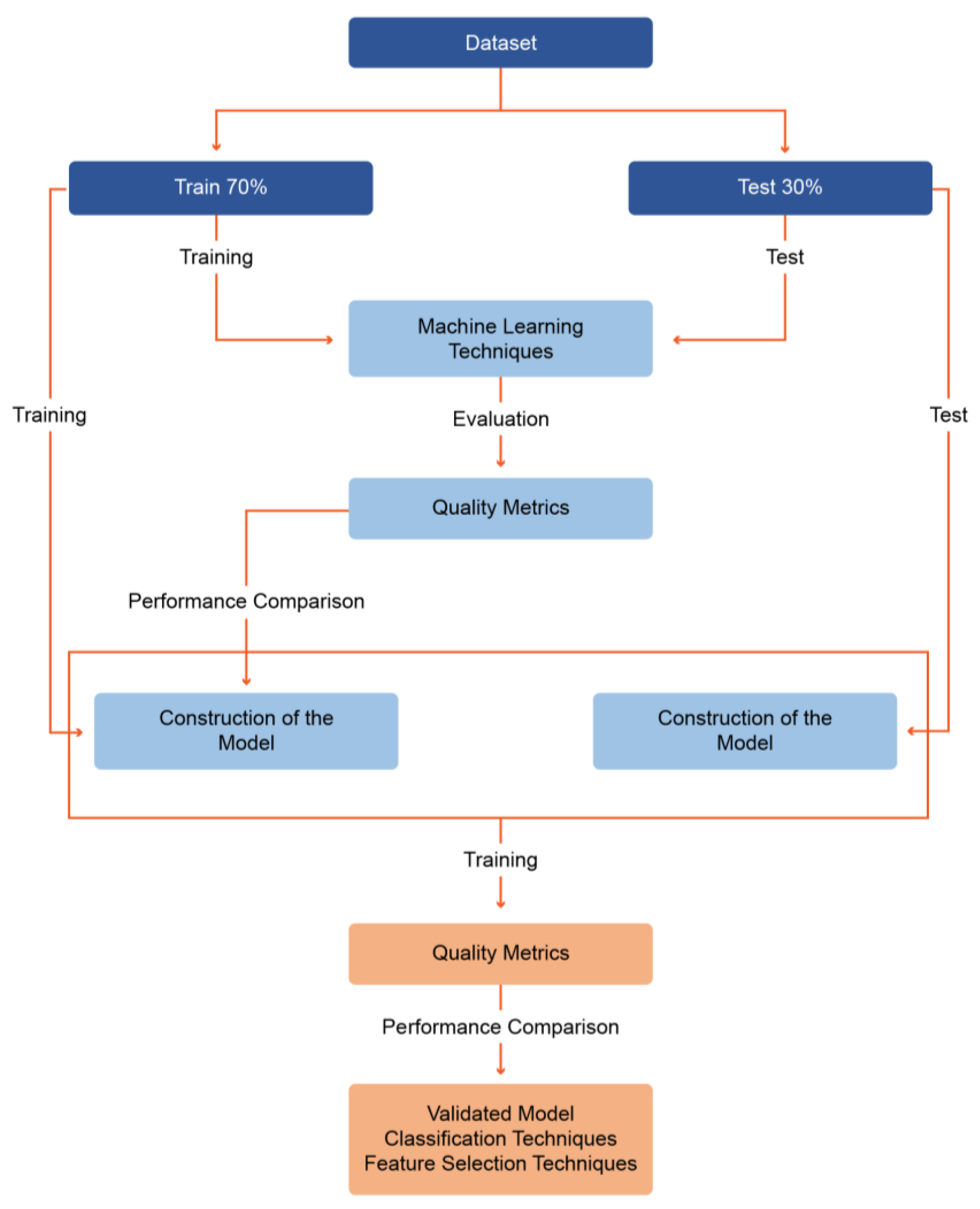

3.3. Model Construction

3.4. Experimentation

4. Experimentation Scenarios

4.1. Experimental Scenario No. 1: Comparative Analysis of Classification Techniques on Data Subsets

4.2. Experimental Scenario No. 2: Comparative Analysis of the Hybridization of Selection and Classification Techniques on Data Subsets


- In the Aruba CASAS–raw dataset, the two combinations (LMT with Gain Ratio using 27 features and using 24 features) presented the same recall, F-Measure, and ROC area. LMT with Gain Ratio using 24 features presented the lowest FP rate, at 0.5%.


- In the Aruba CASAS–duration dataset, the combination with the best recall (95.90%) and ROC area (99.70%) was LMT with One R, using 33 features.


- In the Aruba CASAS–sensor-based dataset, the two hybridizations of classification and selection techniques produced identical precision, recall, F-Measure, and ROC area values.
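
The feature-selection half of these hybridizations ranks the attributes and keeps only the top-ranked ones before a classifier is trained. The sketch below illustrates one such ranking criterion, the gain ratio, in plain Python. It is not the tool used in the study: the helper names, the toy data, and the assumption that every feature has already been discretized are illustrative choices, and the classifier step (for example LMT) is left out.

```python
import math
from collections import Counter


def entropy(values):
    """Shannon entropy (in bits) of a sequence of discrete values."""
    n = len(values)
    return sum(-(c / n) * math.log2(c / n) for c in Counter(values).values())


def gain_ratio(feature, labels):
    """Information gain of `feature` about `labels`, divided by the feature's entropy."""
    n = len(labels)
    groups = {}
    for value, label in zip(feature, labels):
        groups.setdefault(value, []).append(label)
    conditional = sum(len(g) / n * entropy(g) for g in groups.values())
    info_gain = entropy(labels) - conditional
    split_info = entropy(feature)
    return info_gain / split_info if split_info > 0 else 0.0


def rank_features(rows, labels, k):
    """Return the column indices of the k features with the highest gain ratio."""
    n_features = len(rows[0])
    scored = [(gain_ratio([row[j] for row in rows], labels), j) for j in range(n_features)]
    scored.sort(key=lambda item: (-item[0], item[1]))  # best score first, ties by index
    return [j for _, j in scored[:k]]


# Hypothetical toy data: column 0 separates the classes, column 1 is noise.
rows = [(1, 0), (1, 1), (0, 0), (0, 1)]
labels = ["Relax", "Relax", "Sleeping", "Sleeping"]
print(rank_features(rows, labels, k=1))
```

Selecting, say, 24 of the 47 raw features would amount to calling such a ranking with `k=24` and training the classifier on the retained columns only.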

4.3. Experimental Scenario No. 3: Comparative Analysis of the Best Results Obtained, Applying Cross-Validation

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. U.S. National Library of Medicine. Neurodegenerative Diseases. 2019. Available online: https://medlineplus.gov/spanish/degenerativenervediseases.html (accessed on 26 August 2022).

2. World Health Organization. Dementia. 2019. Available online: https://www.who.int/news-room/fact-sheets/detail/dementia (accessed on 26 August 2022).

3. Li, R.; Lu, B.; McDonald-Maier, K.D. Cognitive assisted living ambient system: A survey. Digit. Commun. Netw. 2015, 1, 229–252.

4. Memon, M.; Wagner, S.R.; Pedersen, C.F.; Aysha Beevi, F.H.; Hansen, F.O. Ambient Assisted Living healthcare frameworks, platforms, standards, and quality attributes. Sensors 2014, 14, 4312–4341.

5. Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. A public domain dataset for human activity recognition using smartphones. In Proceedings of the 21st European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning (ESANN 2013), Bruges, Belgium, 24–26 April 2013; pp. 437–442.

6. Lara, Ó.D.; Labrador, M.A. A survey on human activity recognition using wearable sensors. IEEE Commun. Surv. Tutor. 2013, 15, 1192–1209.

7. Aggarwal, J.K.; Ryoo, M.S. Human activity analysis: A review. ACM Comput. Surv. 2011, 43, 1–43.

8. Reed, K.L.; Sanderson, S.N. Concepts of Occupational Therapy. 1999. Available online: https://books.google.com.co/books?hl=es&lr=&id=1ZE47g_IRTwC&oi=fnd&pg=PR7&dq=Concepts+of+Occupational+Therapy.&ots=sMksfVhmYK&sig=wlabmL9W01HtUuzpARaj6BUDtHI#v=onepage&q=ConceptsofOccupationalTherapy.&f=false (accessed on 26 August 2022).

9. Kwon, B.; Kim, J.; Lee, S. An enhanced multi-view human action recognition system for virtual training simulator. In Proceedings of the 2016 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA), Jeju, Korea, 13–16 December 2016; pp. 1–4.

10. De-La-Hoz-Franco, E.; Ariza-Colpas, P.; Quero, J.M.; Espinilla, M. Sensor-based datasets for human activity recognition – A systematic review of literature. IEEE Access 2018, 6, 59192–59210.

11. Van Kasteren, T.L.M.; Englebienne, G.; Kröse, B.J.A. Activity recognition using semi-Markov models on real world smart home datasets. J. Ambient. Intell. Smart Environ. 2010, 2, 311–325.

12. Cook, D.J.; Crandall, A.S.; Thomas, B.L.; Krishnan, N.C. CASAS: A smart home in a box. Computer 2013, 46, 62–69.

13. Cook, D.J. Learning setting-generalized activity models for smart spaces. IEEE Intell. Syst. 2012, 27, 32–38.

14. Singla, G.; Cook, D.J.; Schmitter-Edgecombe, M. Recognizing independent and joint activities among multiple residents in smart environments. J. Ambient. Intell. Humaniz. Comput. 2010, 1, 57–63.

15. Chavarriaga, R.; Sagha, H.; Calatroni, A.; Digumarti, S.T.; Tröster, G.; Millán, J.D.; Roggen, D. The Opportunity challenge: A benchmark database for on-body sensor-based activity recognition. Pattern Recognit. Lett. 2013, 34, 2033–2042.

16. Banos, O.; Garcia, R.; Holgado-Terriza, J.A.; Damas, M.; Pomares, H.; Rojas, I.; Saez, A.; Villalonga, C. mHealthDroid: A Novel Framework for Agile Development of Mobile Health Applications. Ambient. Assist. Living Dly. Act. 2014, 8868, 91–98.

17. Shahi, A.; Woodford, B.J.; Lin, H. Dynamic real-time segmentation and recognition of activities using a multi-feature windowing approach. Pac.-Asia Conf. Knowl. Discov. Data Min. 2017, 10526, 26–38.

18. Mitra, S.; Acharya, T. Data Mining: Multimedia, Soft Computing, and Bioinformatics. In Technometrics; John Wiley & Sons: Hoboken, NJ, USA, 2003; Volume 46.

19. Witten, I.H.; Frank, E.; Hall, M.A. Data Mining: Practical Machine Learning Tools and Techniques. In Complementary Literature None; Morgan Kaufmann Publishers: Burlington, MA, USA, 2011; Available online: http://books.google.com/books?id=bDtLM8CODsQC&pgis=1 (accessed on 26 August 2022).

20. Moine, J.M.; Haedo, A.; Gordillo, S. Comparative Study of Data Mining Methodologies. XIII Workshop of Computer Science Researchers. 2011, pp. 278–281. Available online: http://sedici.unlp.edu.ar/handle/10915/20034 (accessed on 26 August 2022).

21. Rice, J.A. Mathematical Statistics and Data Analysis; Cengage Learning: Boston, MA, USA, 2006.

22. Landwehr, N.; Hall, M.; Frank, E. Logistic model trees. Mach. Learn. 2005, 59, 161–205.

23. Quinlan, J.R. C4.5: Programs for Machine Learning by J. Ross Quinlan. Morgan Kaufmann Publishers, Inc., 1993. Mach. Learn. 1994, 16, 235–240.

24. Frank, E.; Wang, Y.; Inglis, S.; Holmes, G.; Witten, I.H. Using model trees for classification. Mach. Learn. 1998, 32, 63–76.

25. Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32.

26. Marks Hall, G.H. WEKA: Practical Machine Learning Tools and Techniques with Java Implementations. 1994. Available online: https://researchcommons.waikato.ac.nz/bitstream/handle/10289/1040/uowcswp199911.pdf?sequence=1&isAllowed=y (accessed on 26 August 2022).

27. Cohen, W.W. Fast Effective Rule Induction. In Proceedings of the Twelfth International Conference on Machine Learning, Tahoe, CA, USA, 9–12 July 1995.

28. Kohavi, R.; Provost, F. Glossary of Terms. Mach. Learn. 1998, 2, 271–274.

29. Wolpert, D.H. Stacked generalization. Neural Netw. 1992, 5, 241–259.

30. Holte, R.C. Very Simple Classification Rules Perform Well on Most Commonly Used Datasets. Mach. Learn. 1993, 11, 63–91.

31. Cessie, S.L.; Van Houwelingen, J.C. Ridge Estimators in Logistic Regression. J. R. Stat. Society. Ser. C (Appl. Stat.) 1992, 41, 191–201. Available online: http://www.jstor.org/stable/2347628 (accessed on 26 August 2022).

32. Van Der Malsburg, C. Frank Rosenblatt: Principles of Neurodynamics: Perceptrons and the Theory of Brain Mechanisms; Brain Theory (February); Springer: Berlin/Heidelberg, Germany, 1986; pp. 245–248.

33. Friedman, J.; Hastie, T.; Tibshirani, R. Additive logistic regression: A statistical view of boosting (with discussion and a rejoinder by the authors). Ann. Stat. 2000, 28, 337–407.

34. Read, J.; Puurula, A.; Bifet, A. Multi-label Classification with Meta-Labels. In Proceedings of the 2014 IEEE International Conference on Data Mining, Shenzhen, China, 14–17 December 2014; pp. 941–946.

35. Breiman, L. Bagging predictors. Mach. Learn. 1996, 24, 123–140.

36. Freund, Y.; Schapire, R.E. Experiments with a New Boosting Algorithm. In Proceedings of the 13th International Conference on Machine Learning, Murray Hill, NY, USA, 22 January 1996; pp. 148–156.

37. Kittler, J.; Hatef, M.; Duin, R.P.W.; Matas, J. On combining classifiers. IEEE Trans. Pattern Anal. Mach. Intell. 1998, 20, 226–239.

38. Kohavi, R. Wrappers for Performance Enhancement and Obvious Decision Graphs. 1995. Available online: https://dl.acm.org/citation.cfm?id=241090 (accessed on 26 August 2022).

39. Eibe, F.; Holmes, G.; Witten, I.H. Weka 3 - Data Mining with Open Source Machine Learning Software in Java. 2007. Available online: https://www.cs.waikato.ac.nz/ml/weka/ (accessed on 26 August 2022).

40. Ho, T.K. The Random Subspace Method for Constructing Decision Forests. IEEE Trans. Pattern Anal. Mach. Intell. 1998, 20, 832–844.

41. Aha, D.W.; Kibler, D.; Albert, M.K. Instance-Based Learning Algorithms. Mach. Learn. 1991, 6, 37–66.

42. Cleary, J.G.; Trigg, L.E. An Instance-based Learner Using an Entropic Distance Measure. Elsevier 1995, 5, 1–14.

43. Frank, E.; Hall, M.; Pfahringer, B. Locally Weighted Naive Bayes. 2003, pp. 249–256. Available online: http://arxiv.org/abs/1212.2487 (accessed on 26 August 2022).

44. Hall, M.A.; Holmes, G. Benchmarking Attribute Selection Techniques for Discrete Class Data Mining. IEEE Trans. Knowl. Data Eng. 2003, 15, 1437–1447.

45. Robnik-Šikonja, M.; Kononenko, I. An adaptation of Relief for attribute estimation in regression. In Proceedings of the Fourteenth International Conference on Machine Learning (ICML’97), San Francisco, CA, USA, 8–12 July 1997; Volume 5, pp. 296–304. Available online: http://dl.acm.org/citation.cfm?id=645526.657141 (accessed on 26 August 2022).

46. Camaré, L.J.M. Machine Learning from Unbalanced Data Sets and Its Application in Medical Diagnosis and Prognosis. Ph.D. Thesis, Instituto Nacional de Astrofísica, Óptica y Electrónica, Puebla, Mexico, 2008. Available online: https://inaoe.repositorioinstitucional.mx/jspui/bitstream/1009/533/1/MenaCaLJ.pdf (accessed on 26 August 2022).

| Data Collection Type [6]: Wearable | Data Collection Type [6]: Environmental | Recognition Type [7,8] | Application Areas [7,9] |
|---|---|---|---|
| Wearable devices and sensors: accelerometers, gyroscopes, GPS, electrocardiogram, magnetometer, and heart rate, among others | Environmental sensors: binary sensors and cameras | Gestures, actions, interactions, and activities (e.g., daily living) | Computer vision, video surveillance (e.g., banks or airports), sport technique analysis, interaction with video games through gestures, military tactics, assisted living environments for the health care of elderly people or people with diseases |

| Dataset | Event | Occupancy | Devices | Datatype | Context |

|---|---|---|---|---|---|

| Van Kasteren [11] | Activities | Single | Wireless Sensor Network and sensors | Binary values | Two houses (kitchen and bathroom) |

| CASAS Kyoto [12] | Activities | Single | Motion, associated with objects and telephone sensors | Datetime, sensor id, and value (binary or numerical) | Washington State University smart workplace |

| CASAS Aruba [13] | Activities | Multi-occupancy | Motion, door, and temperature sensors | Datetime, sensor id, and value (binary or numerical) | Washington State University smart workplace |

| CASAS Multiresident [14] | Activities | Multi-occupancy | Motion, item, cabinet, water, burner, phone, and temperature sensors | Datetime, sensor id, value (binary), inhabitant id, and task id | Washington State University smart apartment |

| UCI HAR [5] | Actions | N/A | Accelerometer and gyroscope | Normalized values between [−1, 1] | Handset mounted: on the left side of the belt and placed according to the user’s preference |

| Opportunity [15] | Activities | Interleaved and hierarchical naturalistic activities | Inertial sensors and accelerometers | Text file (array, each row is a sample) | A room simulating a studio flat |

| mHealth [16] | Actions | N/A | Accelerometer, electrocardiogram, gyroscope, and magnetometer | Fine-grained real-valued sensor readings of actions over a short time interval | Chest, wrist, and ankle |

| | Count Features: Door Contact Sensors | Count Features: Motion Sensors | Count Features: Number of Events | Count Features: Duration of Activity | Average Features: Thermometers | Aggregation Features: Thermometers | Original Features: Start of Activity | Original Features: End of Activity | Original Features: Class | Total |
|---|---|---|---|---|---|---|---|---|---|---|
| Features | D001-open, D001-close, D002-open, D002-close, D003-open, D003-close, D004-open, and D004-close (Total: 8) | M001, M002, M003, M004, M005, M006, M007, M008, M009, M010, M011, M012, M013, M014, M015, M016, M017, M018, M019, M020, M021, M022, M023, M024, M025, M026, M027, M028, M029, M030, and M031 (Total: 31) | Events (Total: 1) | Duration (Total: 1) | T001, T002, T003, T004, and T005 (Total: 5) | T001-RANGE, T001-DESV, T001-BIAS, T001-KURT, T002-RANGE, T002-DESV, T002-BIAS, T002-KURT, T003-RANGE, T003-DESV, T003-BIAS, T003-KURT, T004-RANGE, T004-DESV, T004-BIAS, T004-KURT, T005-RANGE, T005-DESV, T005-BIAS, and T005-KURT (Total: 20) | Start date and time (Total: 1) | End date and time (Total: 1) | Activity (Total: 1) | 69 Features |

| Dataset | Count Features: Door Contact Sensors | Count Features: Motion Sensors | Count Features: Number of Events | Count Features: Duration of Activity | Average Features: Thermometers | Aggregation Features: Thermometers | Original Features: Start of Activity | Original Features: End of Activity | Original Features: Class | Number of Features |
|---|---|---|---|---|---|---|---|---|---|---|
| Aruba CASAS–raw | 8 | 31 | 0 | 0 | 5 | 0 | 1 | 1 | 1 | 47 |
| Aruba CASAS–duration | 8 | 31 | 1 | 1 | 5 | 0 | 1 | 1 | 1 | 49 |
| Aruba CASAS–sensor-based | 8 | 31 | 0 | 0 | 5 | 20 | 1 | 1 | 1 | 67 |

| Dataset | Training Subset: Instances | Training Subset: Percentage | Testing Subset: Instances | Testing Subset: Percentage | Total Instances |
|---|---|---|---|---|---|
| Aruba CASAS–raw | 4460 | 69.9% | 1916 | 30.1% | 6376 |
| Aruba CASAS–duration | 4460 | 69.9% | 1916 | 30.1% | 6376 |
| Aruba CASAS–sensor-based | 4460 | 69.9% | 1916 | 30.1% | 6376 |

| Dataset | Subset | Sleeping | Bed to Toilet | Meal Preparation | Relax | House Keeping | Eating | Leave Home | Enter Home | Work |
|---|---|---|---|---|---|---|---|---|---|---|
| Aruba CASAS–raw | Train | 265 | 112 | 1105 | 2036 | 23 | 181 | 298 | 319 | 121 |
| Aruba CASAS–raw | Test | 136 | 45 | 482 | 878 | 9 | 71 | 133 | 112 | 50 |
| Aruba CASAS–duration | Train | 265 | 112 | 1105 | 2036 | 23 | 181 | 298 | 319 | 121 |
| Aruba CASAS–duration | Test | 136 | 45 | 482 | 878 | 9 | 71 | 133 | 112 | 50 |
| Aruba CASAS–sensor-based | Train | 265 | 112 | 1105 | 2036 | 23 | 181 | 298 | 319 | 121 |
| Aruba CASAS–sensor-based | Test | 136 | 45 | 482 | 878 | 9 | 71 | 133 | 112 | 50 |
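
The split sizes and the per-class counts in the two tables above can be cross-checked with a short script. The sketch below is only a consistency check on the published numbers; the variable and function names are hypothetical.

```python
# Per-class instance counts transcribed from the class-distribution table
# (identical for the raw, duration, and sensor-based variants).
CLASSES = ["Sleeping", "Bed to Toilet", "Meal Preparation", "Relax", "House Keeping",
           "Eating", "Leave Home", "Enter Home", "Work"]
TRAIN = [265, 112, 1105, 2036, 23, 181, 298, 319, 121]
TEST = [136, 45, 482, 878, 9, 71, 133, 112, 50]


def split_summary(train_counts, test_counts):
    """Return subset sizes and their percentages of the whole dataset."""
    n_train, n_test = sum(train_counts), sum(test_counts)
    total = n_train + n_test
    return {
        "train": n_train,
        "test": n_test,
        "total": total,
        "train_pct": round(100 * n_train / total, 1),
        "test_pct": round(100 * n_test / total, 1),
    }


def class_shares(counts):
    """Return each class's share of a subset, in percent."""
    subset_size = sum(counts)
    return {name: round(100 * c / subset_size, 1) for name, c in zip(CLASSES, counts)}


print(split_summary(TRAIN, TEST))
print(class_shares(TRAIN))
```

The class shares make the imbalance explicit: Relax accounts for roughly 46% of the training instances, while House Keeping accounts for about 0.5%.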

| Subcategories | Technique | Function |
|---|---|---|
| Decision Tree | Logistic Model Trees (LMT) [22] | Builds logistic model trees. |
| | J48 (C4.5 decision tree) [23] | Decision tree based on the C4.5 algorithm. |
| | Reduced-Error Pruning Tree (REPTree) [24] | Fast tree learner that uses reduced-error pruning. |
| | RandomForest [25] | Builds a forest of random trees. |
| | Random Tree [24] | Builds a tree that considers a given number of randomly chosen features at each node. |
| | DecisionStump [26] | Builds one-level decision trees. |
| Rules | JRip [27] | RIPPER (Repeated Incremental Pruning to Produce Error Reduction) algorithm for fast, effective rule induction. |
| | Partial Decision Trees (PART) [24] | Obtains rules from partial decision trees built using J4.8. |
| | Decision Table [28] | Builds a simple decision table majority classifier. |
| | ZeroR, Stacking [29] | Predicts the majority class (if nominal) or the average value (if numeric). |
| | OneR [30] | One-rule classifier. |
| Functions | Logistic [31] | Builds linear logistic regression models. |
| | MultilayerPerceptron [32] | Backpropagation neural network. |
| Multiclassifiers (Meta) | Random Committee [24] | Builds a set of random base classifiers. |
| | Stacking [29] | Combines multiple classifiers using the stacking method. |
| | LogitBoost [33] | Performs additive logistic regression. |
| | Classification Via Regression [24] | Performs classification using a regression method. |
| | MultiClass Classifier [34] | Uses a two-class classifier for multiclass data sets. |
| | Bagging [35] | Bags a classifier to reduce variance; works for regression as well. |
| | AdaBoostM1 [36] | Uses the AdaBoostM1 method. |
| | Vote [37] | Combines classifiers using average probability estimates or numerical predictions. |
| | CVParameterSelection [38] | Performs parameter selection through cross-validation. |
| | MultiScheme [39] | Uses cross-validation to select a classifier from multiple candidates. |
| | AttributeSelectedClassifier [24] | Reduces the dimensionality of the data by selecting attributes. |
| | RandomSubSpace [40] | Builds a decision tree-based classifier that maintains the highest accuracy on the training data. |
| | Filtered Classifier [39] | Runs a classifier on filtered data. |
| Lazy algorithms | IB1 Instance-based Learning Algorithms [41] | Basic nearest-neighbor instance-based learner. |
| | IB2 Instance-based Learning Algorithms [41] | K nearest neighbor classifier. |
| | IB3 Instance-based Learning Algorithms [41] | K nearest neighbor classifier. |
| | KStar [42] | Nearest neighbor with a generalized distance function. |
| | LWL [43] | A general algorithm for locally weighted learning. |

| Algorithm | Feature Prioritization |

|---|---|

| GainRatio | 12, 11, 9, 8, 35, 25, 18, 28, 13, 27, 26, 24, 36, 39, 16, 40, 14, 22, 23, 37, 29, 34, 30, 38, 19, 10, 15, 44, 43, 41, 42, 31, 2, 45, 17, 21, 5, 4, 20, 33, 32, 1, 0, 7, 3, 6, 46, 47 |

| InfoGain | 18, 27, 28, 24, 22, 26, 29, 9, 25, 8, 23, 39, 43, 16, 42, 12, 44, 45, 11, 41, 30, 19, 14, 13, 35, 38, 36, 37, 31, 21, 15, 17, 40, 34, 33, 1, 0, 32, 10, 5, 20, 4, 2, 6, 7, 3, 46, 47 |

| OneR | 27, 28, 24, 18, 26, 25, 22, 23, 30, 9, 8, 39, 16, 12, 11, 29, 43, 38, 31, 35, 13, 42, 14, 36, 37, 41, 17, 44, 45, 15, 32, 40, 5, 4, 34, 10, 2, 6, 19, 7, 21, 3, 20, 33, 0, 1, 46, 47 |

| ReliefF | 44, 43, 42, 18, 9, 28, 27, 8, 41, 24, 26, 12, 25, 39, 22, 23, 29, 38, 13, 35, 40, 31, 36, 16, 37, 19, 30, 11, 45, 14, 4, 5, 15, 33, 17, 32, 21, 34, 10, 3, 6, 1, 7, 0, 2, 20, 46, 47 |

| TP Rate | FP Rate | Precision | Recall | F-Measure | MCC | ROC Area | PRC Area | Class |

|---|---|---|---|---|---|---|---|---|

| 1.000 | 0.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | Sleeping |

| 1.000 | 0.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | Bed_to_Toilet |

| 0.983 | 0.012 | 0.963 | 0.983 | 0.973 | 0.964 | 0.996 | 0.981 | Meal_Preparation |

| 0.998 | 0.005 | 0.994 | 0.998 | 0.996 | 0.993 | 1.000 | 1.000 | Relax |

| 0.889 | 0.000 | 1.000 | 0.889 | 0.941 | 0.943 | 1.000 | 0.989 | Housekeeping |

| 0.986 | 0.003 | 0.933 | 0.986 | 0.959 | 0.958 | 1.000 | 0.987 | Eating |

| 0.000 | 0.001 | 0.000 | 0.000 | 0.000 | −0.002 | 0.927 | 0.103 | Wash_Dishes |

| 0.541 | 0.015 | 0.727 | 0.541 | 0.621 | 0.604 | 0.981 | 0.698 | Leave_Home |

| 0.759 | 0.033 | 0.582 | 0.759 | 0.659 | 0.641 | 0.979 | 0.644 | Enter_Home |

| 1.000 | 0.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | Work |

| 0.939 | 0.008 | 0.934 | 0.939 | 0.935 | 0.929 | 0.996 | 0.945 | Weighted Avg |

| TP Rate | FP Rate | Precision | Recall | F-Measure | MCC | ROC Area | PRC Area | Class |

|---|---|---|---|---|---|---|---|---|

| 1.000 | 0.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | Sleeping |

| 1.000 | 0.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | Bed_to_Toilet |

| 0.983 | 0.012 | 0.965 | 0.983 | 0.974 | 0.966 | 0.995 | 0.978 | Meal_Preparation |

| 0.999 | 0.005 | 0.994 | 0.999 | 0.997 | 0.994 | 1.000 | 0.999 | Relax |

| 0.889 | 0.001 | 0.889 | 0.889 | 0.889 | 0.888 | 1.000 | 0.967 | Housekeeping |

| 0.986 | 0.002 | 0.959 | 0.986 | 0.972 | 0.971 | 1.000 | 0.987 | Eating |

| 0.000 | 0.001 | 0.000 | 0.000 | 0.000 | -0.003 | 0.923 | 0.098 | Wash_Dishes |

| 0.564 | 0.018 | 0.701 | 0.564 | 0.625 | 0.605 | 0.980 | 0.692 | Leave_Home |

| 0.714 | 0.032 | 0.580 | 0.714 | 0.640 | 0.619 | 0.978 | 0.639 | Enter_Home |

| 1.000 | 0.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | Work |

| 0.939 | 0.008 | 0.933 | 0.939 | 0.935 | 0.929 | 0.995 | 0.944 | Weighted Avg |
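
The per-class columns in the two tables above are all derived from the one-vs-rest confusion counts of each class. The sketch below shows the standard formulas; the function name and the example counts are assumptions. The example assumes that 8 of the 9 Housekeeping test instances are recovered with no false positives in a test set of 1916 instances, which is consistent with the Housekeeping row of the first table but is not stated in the source.

```python
import math


def per_class_metrics(tp, fp, fn, tn):
    """Standard one-vs-rest metrics from true/false positive/negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0  # identical to the TP rate
    fp_rate = fp / (fp + tn) if fp + tn else 0.0
    f_measure = (2 * precision * recall / (precision + recall)
                 if precision + recall else 0.0)
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denominator if denominator else 0.0
    return {"precision": precision, "recall": recall, "fp_rate": fp_rate,
            "f_measure": f_measure, "mcc": mcc}


# Assumed counts for the Housekeeping class (see the lead-in above).
metrics = per_class_metrics(tp=8, fp=0, fn=1, tn=1907)
print({name: round(value, 3) for name, value in metrics.items()})
```

The zero recall and near-zero MCC reported for Wash_Dishes follow from the same formulas: with no true positives, precision, recall, and F-Measure all collapse to zero.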

| Dataset | FP Rate | Precision | Recall | F-Measure | ROC Area | Classification Technique |
|---|---|---|---|---|---|---|
| Aruba CASAS–raw | 0.50% | 94.80% | 94.50% | 94.50% | 99.60% | LMT |
| | 0.60% | 94.60% | 94.20% | 94.00% | 99.70% | LogitBoost |
| | 0.60% | 94.30% | 94.10% | 94.10% | 99.70% | ClassificationViaRegression |
| | 0.50% | 94.30% | 94.00% | 94.00% | 99.00% | J48 |
| Aruba CASAS–duration | 0.50% | 95.70% | 95.60% | 95.60% | 99.00% | J48 |
| | 0.60% | 95.70% | 95.60% | 95.50% | 99.30% | JRIP |
| | 0.60% | 95.40% | 95.40% | 95.40% | 99.60% | LMT |
| | 0.70% | 95.20% | 95.30% | 95.10% | 99.80% | RandomSubSpace |
| Aruba CASAS–sensor-based | 0.70% | 94.80% | 94.50% | 94.40% | 99.70% | LMT |
| | 0.60% | 94.60% | 94.20% | 94.00% | 99.70% | LogitBoost |
| | 0.60% | 94.30% | 94.10% | 94.10% | 99.70% | ClassificationViaRegression |
| | 0.60% | 94.20% | 93.90% | 93.90% | 99.00% | J48 |

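| Class | LMT + Gain Ratio (27 Features): FP Rate | LMT + Gain Ratio (27 Features): Precision | LMT + Gain Ratio (27 Features): Recall | LMT + Gain Ratio (27 Features): F-Measure | LMT + Gain Ratio (27 Features): ROC Area | LMT + Gain Ratio (24 Features): FP Rate | LMT + Gain Ratio (24 Features): Precision | LMT + Gain Ratio (24 Features): Recall | LMT + Gain Ratio (24 Features): F-Measure | LMT + Gain Ratio (24 Features): ROC Area |

|---|---|---|---|---|---|---|---|---|---|---|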

| Sleeping | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Bed_to_Toilet | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Meal_Preparation | 0.10% | 99.80% | 98.50% | 99.20% | 99.90% | 0.10% | 99.60% | 98.50% | 99.10% | 99.80% |

| Relax | 0.60% | 99.30% | 99.80% | 99.50% | 100.00% | 0.40% | 99.50% | 99.40% | 99.50% | 99.90% |

| Housekeeping | 0.00% | 100.00% | 88.90% | 94.10% | 100.00% | 0.10% | 90.00% | 100.00% | 94.70% | 100.00% |

| Eating | 0.20% | 94.70% | 100.00% | 97.30% | 100.00% | 0.30% | 92.20% | 100.00% | 95.90% | 100.00% |

| Leave_Home | 1.50% | 73.00% | 54.90% | 62.70% | 98.10% | 1.50% | 73.30% | 55.60% | 63.20% | 98.10% |

| Enter_Home | 3.30% | 59.00% | 75.90% | 66.40% | 97.90% | 3.20% | 59.40% | 75.90% | 66.70% | 97.90% |

| Work | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Average | 0.60% | 95.20% | 94.90% | 94.90% | 99.70% | 0.50% | 95.10% | 94.90% | 94.90% | 99.70% |

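| Class | JRIP + One R (47 Features): FP Rate | JRIP + One R (47 Features): Precision | JRIP + One R (47 Features): Recall | JRIP + One R (47 Features): F-Measure | JRIP + One R (47 Features): ROC Area | LMT + One R (33 Features): FP Rate | LMT + One R (33 Features): Precision | LMT + One R (33 Features): Recall | LMT + One R (33 Features): F-Measure | LMT + One R (33 Features): ROC Area |

|---|---|---|---|---|---|---|---|---|---|---|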

| Sleeping | 0.00% | 100.00% | 98.50% | 99.30% | 99.60% | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Bed_to_Toilet | 0.00% | 100.00% | 97.80% | 98.90% | 98.90% | 0.00% | 100.00% | 97.80% | 98.90% | 100.00% |

| Meal_Preparation | 0.30% | 99.20% | 98.60% | 98.90% | 99.20% | 0.10% | 99.80% | 98.60% | 99.20% | 99.90% |

| Relax | 0.50% | 99.40% | 99.50% | 99.50% | 99.60% | 0.70% | 99.20% | 99.70% | 99.40% | 99.80% |

| Housekeeping | 0.10% | 87.50% | 77.80% | 82.40% | 88.90% | 0.00% | 100.00% | 77.80% | 87.50% | 100.00% |

| Eating | 0.40% | 90.90% | 98.60% | 94.60% | 98.80% | 0.30% | 92.10% | 98.60% | 95.20% | 98.80% |

| Leave_Home | 2.20% | 73.50% | 81.20% | 77.10% | 98.30% | 2.30% | 72.50% | 83.50% | 77.60% | 98.50% |

| Enter_Home | 1.30% | 75.30% | 65.20% | 69.90% | 97.00% | 1.30% | 75.30% | 62.50% | 68.30% | 98.20% |

| Work | 0.10% | 98.00% | 100.00% | 99.00% | 100.00% | 0.10% | 98.00% | 96.00% | 97.00% | 100.00% |

| Average | 0.50% | 95.80% | 95.80% | 95.80% | 99.20% | 0.60% | 95.90% | 95.90% | 95.80% | 99.70% |

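| Class | LMT + Info Gain (47 Features): FP Rate | LMT + Info Gain (47 Features): Precision | LMT + Info Gain (47 Features): Recall | LMT + Info Gain (47 Features): F-Measure | LMT + Info Gain (47 Features): ROC Area | LMT + Gain Ratio (31 Features): FP Rate | LMT + Gain Ratio (31 Features): Precision | LMT + Gain Ratio (31 Features): Recall | LMT + Gain Ratio (31 Features): F-Measure | LMT + Gain Ratio (31 Features): ROC Area |

|---|---|---|---|---|---|---|---|---|---|---|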

| Sleeping | 0.10% | 99.30% | 100.00% | 99.60% | 100.00% | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Bed_to_Toilet | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Meal_Preparation | 0.10% | 99.80% | 99.20% | 99.50% | 100.00% | 0.20% | 99.40% | 98.30% | 98.90% | 100.00% |

| Relax | 0.50% | 99.40% | 99.80% | 99.60% | 99.90% | 0.60% | 99.30% | 99.80% | 99.50% | 100.00% |

| Housekeeping | 0.00% | 100.00% | 44.40% | 61.50% | 91.00% | 0.00% | 100.00% | 88.90% | 94.10% | 100.00% |

| Eating | 0.20% | 94.70% | 100.00% | 97.30% | 100.00% | 0.20% | 94.60% | 98.60% | 96.60% | 100.00% |

| Leave_Home | 1.50% | 73.00% | 54.90% | 62.70% | 98.00% | 1.50% | 73.30% | 55.60% | 63.20% | 98.10% |

| Enter_Home | 3.30% | 59.00% | 75.90% | 66.40% | 97.80% | 3.20% | 59.40% | 75.90% | 66.70% | 97.90% |

| Work | 0.10% | 98.00% | 100.00% | 99.00% | 100.00% | 0.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Average | 0.50% | 95.10% | 94.90% | 94.80% | 99.70% | 0.60% | 95.10% | 94.90% | 94.80% | 99.70% |

| Algorithm | Feature Prioritization |

|---|---|

| GainRatio | 13, 14, 11, 10, 37, 27, 20, 30, 15, 29, 26, 28, 38, 41, 18, 42, 16, 24, 25, 39, 2, 31, 3, 36, 32, 40, 21, 12, 17, 46, 43, 44, 33, 45, 4, 47, 19, 23, 7, 6, 22, 35, 34, 1, 0, 8, 5, 9, 48, 49 |

| InfoGain | 2, 20, 29, 30, 26, 3, 24, 28, 31, 11, 27, 10, 25, 41, 45, 18, 44, 14, 46, 47, 13, 43, 32, 21, 16, 15, 37, 40, 38, 39, 33, 23, 17, 19, 42, 36, 35, 1, 0, 34, 12, 7, 22, 6, 4, 9, 5, 8, 48, 49 |

| OneR | 29, 30, 26, 20, 28, 2, 27, 24, 25, 32, 3, 11, 10, 41, 18, 14, 31, 13, 45, 40, 46, 44, 37, 15, 43, 33, 16, 38, 47, 39, 19, 17, 34, 42, 7, 36, 6, 12, 4, 23, 8, 21, 9, 35, 5, 22, 1, 0, 48, 49 |

| ReliefF | 46, 45, 44, 20, 11, 30, 3, 29, 10, 43, 26, 28, 27, 14, 41, 24, 2, 25, 31, 40, 15, 37, 42, 33, 38, 18, 39, 21, 32, 13, 47, 16, 6, 7, 35, 17, 19, 23, 34, 36, 12, 1, 9, 0, 8, 5, 4, 22, 48, 49 |

| Dataset | FP Rate | Precision | Recall | F-Measure | ROC Area | Hybridization: Classification Technique + Feature Selection |
|---|---|---|---|---|---|---|
| Aruba CASAS–raw | 0.60% | 95.20% | 94.90% | 94.90% | 99.70% | LMT + Gain Ratio (27 Features) |
| | 0.50% | 95.10% | 94.90% | 94.90% | 99.70% | LMT + Gain Ratio (24 Features) |
| Aruba CASAS–duration | 0.50% | 95.80% | 95.80% | 95.80% | 99.20% | JRIP + One R (47 Features) |
| | 0.60% | 95.90% | 95.90% | 95.80% | 99.70% | LMT + One R (33 Features) |
| Aruba CASAS–sensor-based | 0.50% | 95.10% | 94.90% | 94.80% | 99.70% | LMT + Info Gain (47 Features) |
| | 0.60% | 95.10% | 94.90% | 94.80% | 99.70% | LMT + Gain Ratio (31 Features) |

| ID | Attribute | Priority | ID | Attribute | Priority | ID | Attribute | Priority |

|---|---|---|---|---|---|---|---|---|

| 1 | M018 | 70.12 | 12 | D004-close | 51.97 | 23 | M026 | 47.49 |

| 2 | M019 | 69.94 | 13 | D004-open | 51.80 | 24 | M004 | 47.43 |

| 3 | M015 | 69.08 | 14 | M030 | 51.49 | 25 | T001 | 47.36 |

| 4 | M009 | 68.30 | 15 | M007 | 51.18 | 26 | M022 | 47.34 |

| 5 | M017 | 68.12 | 16 | M003 | 50.89 | 27 | M005 | 47.25 |

| 6 | duration | 66.02 | 17 | M020 | 50.78 | 28 | M027 | 47.03 |

| 7 | M016 | 65.04 | 18 | M002 | 50.58 | 29 | T005 | 46.96 |

| 8 | M013 | 64.17 | 19 | T003 | 48.58 | 30 | M028 | 46.85 |

| 9 | M014 | 59.61 | 20 | M029 | 47.98 | 31 | M008 | 46.52 |

| 10 | M021 | 55.99 | 21 | T004 | 47.65 | 32 | M006 | 45.94 |

| 11 | events | 52.15 | 22 | T002 | 47.63 | 33 | M023 | 45.87 |

| Class (LMT + Gain Ratio, 24 Features) | FP Rate | Precision | Recall | F-Measure | ROC Area |

|---|---|---|---|---|---|

| Sleeping | 0.00% | 99.20% | 99.60% | 99.40% | 100.00% |

| Bed_to_Toilet | 0.00% | 98.20% | 100.00% | 99.10% | 100.00% |

| Meal_Preparation | 0.30% | 99.20% | 99.00% | 99.10% | 99.50% |

| Relax | 0.80% | 99.10% | 99.40% | 99.20% | 99.70% |

| Housekeeping | 0.10% | 86.40% | 82.60% | 84.40% | 96.40% |

| Eating | 0.10% | 98.30% | 96.10% | 97.20% | 99.80% |

| Leave_Home | 2.00% | 65.20% | 53.40% | 58.70% | 97.20% |

| Enter_Home | 3.30% | 63.20% | 73.40% | 67.90% | 97.50% |

| Work | 0.10% | 97.50% | 97.50% | 97.50% | 100.00% |

| Average | 0.80% | 94.10% | 94.10% | 94.10% | 99.30% |

| Class (LMT + One R, 33 Features) | FP Rate | Precision | Recall | F-Measure | ROC Area |

|---|---|---|---|---|---|

| Sleeping | 0.00% | 99.60% | 99.60% | 99.60% | 99.80% |

| Bed_to_Toilet | 0.00% | 99.10% | 100.00% | 99.60% | 100.00% |

| Meal_Preparation | 0.40% | 98.80% | 98.60% | 98.70% | 99.60% |

| Relax | 0.80% | 99.10% | 99.30% | 99.20% | 99.70% |

| Housekeeping | 0.20% | 66.70% | 69.60% | 68.10% | 94.90% |

| Eating | 0.10% | 97.10% | 93.40% | 95.20% | 97.70% |

| Leave_Home | 2.80% | 62.80% | 66.80% | 64.70% | 97.10% |

| Enter_Home | 2.30% | 68.00% | 63.30% | 65.60% | 97.20% |

| Work | 0.20% | 94.50% | 99.20% | 96.80% | 100.00% |

| Average | 0.80% | 94.10% | 94.10% | 94.00% | 99.20% |

| Class (LMT + Gain Ratio, 31 Features) | FP Rate | Precision | Recall | F-Measure | ROC Area |

|---|---|---|---|---|---|

| Sleeping | 0.10% | 98.90% | 100.00% | 99.40% | 100.00% |

| Bed_to_Toilet | 0.10% | 97.40% | 100.00% | 98.70% | 100.00% |

| Meal_Preparation | 0.40% | 98.80% | 99.00% | 98.90% | 99.70% |

| Relax | 0.70% | 99.20% | 99.20% | 99.20% | 99.40% |

| Housekeeping | 0.10% | 86.40% | 82.60% | 84.40% | 90.70% |

| Eating | 0.00% | 99.40% | 94.50% | 96.90% | 99.80% |

| Leave_Home | 2.10% | 64.90% | 53.40% | 58.60% | 97.30% |

| Enter_Home | 3.30% | 63.10% | 73.40% | 67.80% | 97.70% |

| Work | 0.10% | 95.90% | 97.50% | 96.70% | 99.90% |

| Average | 0.80% | 94.00% | 94.00% | 93.90% | 99.30% |

| Dataset | FP Rate | Precision | Recall | F-Measure | ROC Area | Hybridization: Classification Technique + Feature Selection (10-Fold Cross Validation) |

|---|---|---|---|---|---|---|

| Aruba CASAS–raw | 0.80% | 94.10% | 94.10% | 94.10% | 99.30% | LMT + Gain Ratio (24 Features) |

| Aruba CASAS–duration | 0.80% | 94.10% | 94.10% | 94.00% | 99.20% | LMT + One R (33 Features) |

| Aruba CASAS–sensor based | 0.80% | 94.00% | 94.00% | 93.90% | 99.30% | LMT + Gain Ratio (31 Features) |
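
The results above come from 10-fold cross-validation, in which every instance is used for testing exactly once. The sketch below shows one way to build such folds in plain Python. It is an illustration, not the procedure used in the study: the function names are hypothetical, and the stratified round-robin assignment (which keeps class proportions similar across folds) is an assumption about how the folds were formed.

```python
from collections import defaultdict


def stratified_folds(labels, k=10):
    """Deal instance indices into k folds, class by class, in round-robin order."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    position = 0  # keeps rotating across classes so fold sizes stay balanced
    for indices in by_class.values():
        for index in indices:
            folds[position % k].append(index)
            position += 1
    return folds


def cross_validation_splits(labels, k=10):
    """Yield (train_indices, test_indices) pairs, one per fold."""
    folds = stratified_folds(labels, k)
    for i, test_indices in enumerate(folds):
        train_indices = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train_indices, test_indices


# Hypothetical label vector: 20 "Relax" instances followed by 10 "Work" instances.
labels = ["Relax"] * 20 + ["Work"] * 10
for train_indices, test_indices in cross_validation_splits(labels, k=10):
    print(len(train_indices), len(test_indices))
```

Averaging a metric over the ten test folds gives one cross-validated estimate per model; rare classes such as Housekeeping contribute only a handful of instances per fold, which may explain part of the variation in their scores.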

| Models | F | Probability | The Critical Value for F |

|---|---|---|---|

| M1 (LMT + Gain Ratio 24 Features) vs. M2 (LMT + One R 33 Features) | 0.058300716 | 0.812269355 | 4.493998478 |

| M1 (LMT + Gain Ratio 24 Features) vs. M3 (LMT + Info Gain 47 Features) | 0.034326866 | 0.855341542 | 4.493998478 |

| M2 (LMT + One R 33 Features) vs. M3 (LMT + Info Gain 47 Features) | 0.00182054 | 0.966494302 | 4.493998478 |
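
In every pairwise comparison above, the F statistic is far below the critical value and the probability is well above 0.05, so the differences between the models are not statistically significant. The sketch below shows how a one-way ANOVA F statistic is computed. The function name and the sample values are hypothetical, and the source does not state which per-class metric was compared; the critical value 4.494 is consistent with 1 and 16 degrees of freedom at the 0.05 level, which would correspond to two models with nine per-class values each.

```python
def one_way_anova_f(*groups):
    """Return (F, df_between, df_within) for a one-way ANOVA over the given groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    means = [sum(g) / len(g) for g in groups]
    grand_mean = sum(sum(g) for g in groups) / n_total
    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of the observations around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between = k - 1
    df_within = n_total - k
    if ss_within == 0:
        raise ValueError("within-group variance is zero; F is undefined")
    f_statistic = (ss_between / df_between) / (ss_within / df_within)
    return f_statistic, df_between, df_within


# Hypothetical example with two small groups of scores.
f_value, df_b, df_w = one_way_anova_f([1.0, 2.0, 3.0], [2.0, 3.0, 4.0])
print(f_value, df_b, df_w)
```

A computed F below the critical value for the corresponding degrees of freedom means the null hypothesis of equal means is not rejected.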

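| Class | Aruba CASAS–raw (LMT + Gain Ratio, 24 Features): Recall | Aruba CASAS–raw (LMT + Gain Ratio, 24 Features): ROC Area | Aruba CASAS–duration (LMT + One R, 33 Features): Recall | Aruba CASAS–duration (LMT + One R, 33 Features): ROC Area | Aruba CASAS–sensor-based (LMT + Gain Ratio, 31 Features): Recall | Aruba CASAS–sensor-based (LMT + Gain Ratio, 31 Features): ROC Area |

|---|---|---|---|---|---|---|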

| Sleeping | 100.00% | 100.00% | 100.00% | 100.00% | 100.00% | 100.00% |

| Bed_to_Toilet | 100.00% | 100.00% | 97.80% | 100.00% | 100.00% | 100.00% |

| Meal_Preparation | 98.50% | 99.80% | 98.60% | 99.90% | 98.30% | 100.00% |

| Relax | 99.40% | 99.90% | 99.70% | 99.80% | 99.80% | 100.00% |

| Housekeeping | 100.00% | 100.00% | 77.80% | 100.00% | 88.90% | 100.00% |

| Eating | 100.00% | 100.00% | 98.60% | 98.80% | 98.60% | 100.00% |

| Leave_Home | 55.60% | 98.10% | 83.50% | 98.50% | 55.60% | 98.10% |

| Enter_Home | 75.90% | 97.90% | 62.50% | 98.20% | 75.90% | 97.90% |

| Work | 100.00% | 100.00% | 96.00% | 100.00% | 100.00% | 100.00% |

| Average | 94.90% | 99.70% | 95.90% | 99.70% | 94.90% | 99.70% |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Patiño-Saucedo, J.A.; Ariza-Colpas, P.P.; Butt-Aziz, S.; Piñeres-Melo, M.A.; López-Ruiz, J.L.; Morales-Ortega, R.C.; De-la-hoz-Franco, E. Predictive Model for Human Activity Recognition Based on Machine Learning and Feature Selection Techniques. Int. J. Environ. Res. Public Health 2022, 19, 12272. https://doi.org/10.3390/ijerph191912272
