Clinical Validation of a Deep-Learning Segmentation Software in Head and Neck: An Early Analysis in a Developing Radiation Oncology Center

Abstract

1. Introduction

2. Materials and Methods

2.1. Patients

2.2. Auto-Segmentation Process

2.3. Evaluation Metrics

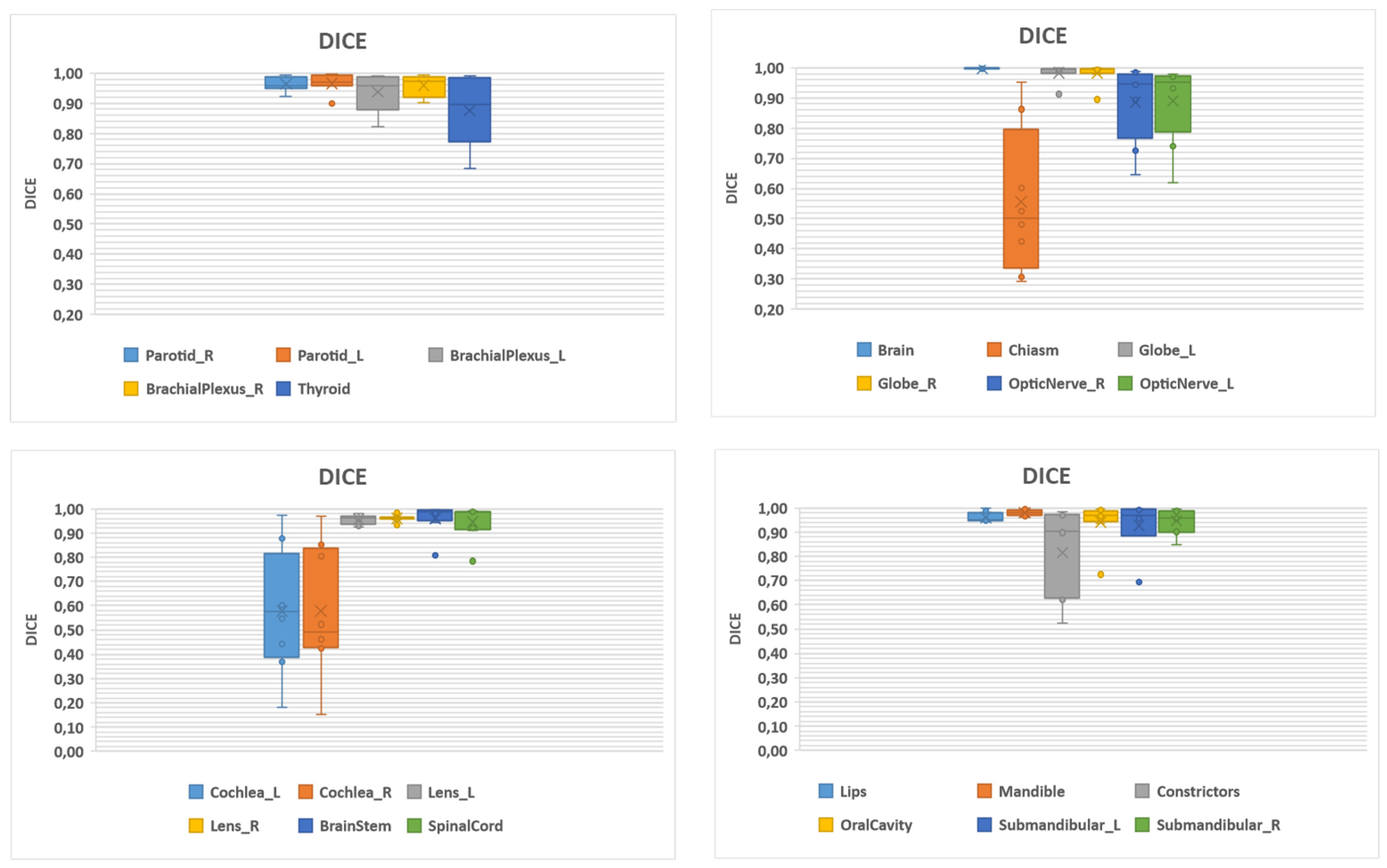

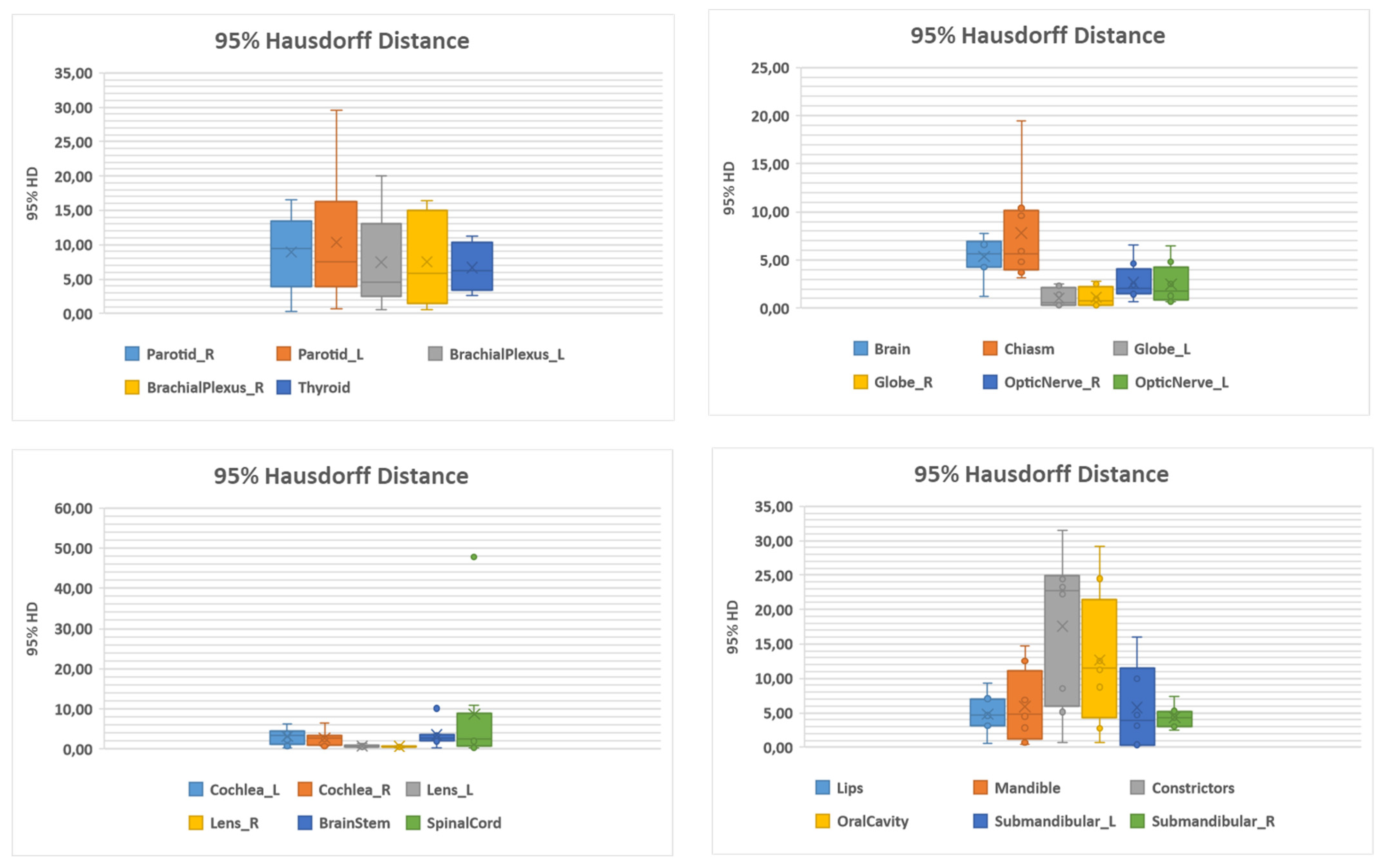

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Structure | Number | DSC, Mean (SD) | DSC, Median (Range) | 95% HD (mm), Mean (SD) | 95% HD (mm), Median (Range) |
|---|---|---|---|---|---|
| Brachial plexus L | 12 | 0.94 (0.06) | 0.96 (0.82–0.99) | 7.47 (6.71) | 4.51 (0.58–20.01) |
| Brachial plexus R | 12 | 0.96 (0.04) | 0.97 (0.90–0.99) | 7.53 (6.46) | 5.84 (0.52–16.42) |
| Brain | 12 | 1.00 (0.01) | 1.00 (0.99–1.00) | 5.35 (7.90) | 5.60 (1.26–7.79) |
| Brainstem | 12 | 0.96 (0.06) | 0.99 (0.81–0.1) | 3.46 (2.88) | 2.78 (0.29–10.12) |
| Cochlea L | 12 | 0.58 (0.26) | 0.57 (0.18–0.98) | 3.11 (1.99) | 3.34 (0.29–6.24) |
| Cochlea R | 12 | 0.58 (0.27) | 0.49 (0.15–0.97) | 2.69 (1.88) | 2.78 (0.73–6.51) |
| Optic chiasm | 12 | 0.56 (0.24) | 0.50 (0.29–0.95) | 7.79 (5.36) | 5.63 (3.20–19.41) |
| Pharyngeal constrictors | 12 | 0.82 (0.19) | 0.90 (0.52–0.99) | 17.59 (11.15) | 22.71 (0.74–31.52) |
| Eye globe L | 12 | 0.98 (0.03) | 1.00 (0.91–1.00) | 1.03 (0.93) | 0.59 (0.27–2.50) |
| Eye globe R | 12 | 0.98 (0.04) | 1.00 (0.89–1.00) | 1.13 (1.01) | 0.75 (0.29–2.84) |
| Lens L | 12 | 0.96 (0.02) | 0.96 (0.92–0.98) | 0.75 (0.39) | 0.58 (0.28–1.44) |
| Lens R | 12 | 0.96 (0.01) | 0.96 (0.93–0.98) | 0.57 (0.16) | 0.58 (0.27–0.74) |
| Lips | 12 | 0.96 (0.02) | 0.95 (0.94–1.00) | 4.79 (2.83) | 4.62 (0.53–9.37) |
| Mandible | 12 | 0.98 (0.01) | 0.98 (0.96–1.00) | 5.93 (5.26) | 4.75 (0.37–14.72) |
| Optic nerve L | 12 | 0.89 (0.14) | 0.95 (0.62–0.98) | 2.67 (1.96) | 2.03 (0.65–6.57) |
| Optic nerve R | 12 | 0.89 (0.13) | 0.95 (0.65–0.99) | 2.49 (2.09) | 1.74 (0.65–6.48) |
| Oral cavity | 12 | 0.94 (0.09) | 0.97 (0.72–1.00) | 12.67 (9.79) | 11.56 (0.65–29.11) |
| Parotid L | 12 | 0.97 (0.03) | 0.97 (0.90–1.00) | 8.96 (5.79) | 9.44 (0.29–16.57) |
| Parotid R | 12 | 0.96 (0.02) | 0.96 (0.92–0.99) | 10.33 (9.37) | 7.50 (0.64–29.54) |
| Spinal cord | 12 | 0.95 (0.07) | 0.99 (0.78–0.99) | 8.70 (16.12) | 2.59 (0.29–47.74) |
| Submandibular gland L | 11 | 0.93 (0.12) | 0.97 (0.69–1) | 5.75 (6.17) | 3.92 (0.29–16.04) |
| Submandibular gland R | 11 | 0.95 (0.05) | 0.96 (0.85–0.99) | 4.39 (1.69) | 4.36 (2.55–7.44) |
| Thyroid | 12 | 0.88 (0.11) | 0.90 (0.69–0.99) | 6.71 (3.49) | 6.24 (2.55–11.21) |
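
The two metrics tabulated above, the Dice similarity coefficient (DSC) and the 95th-percentile Hausdorff distance (95% HD), can be illustrated with a minimal sketch. It is not the software or procedure used in the study, which is not shown here. It assumes that each contour is given as a set of voxel index tuples with unit spacing, that distances are taken over all voxels rather than surface voxels only, that the percentile uses the nearest-rank rule, and that the symmetric distance is the larger of the two directed values. The names `dice`, `hd95`, `manual` and `auto` are hypothetical.

```python
import math


def dice(a, b):
    """Dice similarity coefficient between two sets of voxel coordinates."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * len(a & b) / (len(a) + len(b))


def directed_distances(src, dst):
    """Euclidean distance from each point in src to its nearest point in dst."""
    return [min(math.dist(p, q) for q in dst) for p in src]


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile (one of several possible definitions)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


def hd95(a, b, pct=95.0):
    """Symmetric percentile Hausdorff distance, brute force over all points."""
    a, b = list(a), list(b)
    if not a or not b:
        raise ValueError("both masks must be non-empty")
    forward = percentile_nearest_rank(directed_distances(a, b), pct)
    backward = percentile_nearest_rank(directed_distances(b, a), pct)
    return max(forward, backward)


# Toy example: a 4x4 square and the same square shifted by one voxel in x.
manual = {(x, y) for x in range(4) for y in range(4)}
auto = {(x + 1, y) for x in range(4) for y in range(4)}

print(dice(manual, auto))  # 0.75
print(hd95(manual, auto))  # 1.0
```

The brute-force nearest-neighbour search is quadratic in the number of voxels, so it is suitable only for small illustrative masks. With real CT data the voxel spacing in millimetres would also have to be applied before computing distances.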

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

D’Aviero, A.; Re, A.; Catucci, F.; Piccari, D.; Votta, C.; Piro, D.; Piras, A.; Di Dio, C.; Iezzi, M.; Preziosi, F.; et al. Clinical Validation of a Deep-Learning Segmentation Software in Head and Neck: An Early Analysis in a Developing Radiation Oncology Center. Int. J. Environ. Res. Public Health 2022, 19, 9057. https://doi.org/10.3390/ijerph19159057
