The UNICEF/Washington Group Child Functioning Module—Accuracy, Inter-Rater Reliability and Cut-Off Level for Disability Disaggregation of Fiji’s Education Management Information System

Abstract

1. Introduction

- (1) Determine the validity (sensitivity and specificity) of different cut-off levels of the CFM for predicting the presence of disabilities in primary school aged Fijian children compared to standard clinical assessments of impairment.

- (2) Determine the inter-rater reliability between teacher and parent CFM responses.

2. Materials and Methods

2.1. Study Design and Sampling

2.2. Test Methods

2.2.1. Index Test—Child Functioning Module

2.2.2. Reference Standard (Clinical) Tests

2.2.3. Implementation of the Index Test and Clinical Tests

2.3. Data Analysis

- Sn = true positives/total cases

- Sp = true negatives/total controls

- Positive LR = Sn/(false positives/total controls)

- Negative LR = (false negatives/total cases)/Sp
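The four definitions above can be sketched in a few lines of Python. This is a minimal illustration only, not the authors' analysis code: the `Counts` container, the `diagnostic_accuracy` function and the example counts are hypothetical and chosen purely to make the arithmetic easy to follow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counts:
    """2x2 counts for one index-test cut-off against the reference standard."""
    true_pos: int   # cases flagged by the index test
    false_neg: int  # cases missed by the index test
    true_neg: int   # controls correctly not flagged
    false_pos: int  # controls flagged by the index test


def diagnostic_accuracy(c: Counts) -> dict:
    """Return Sn, Sp and both likelihood ratios as defined in the list above."""
    total_cases = c.true_pos + c.false_neg
    total_controls = c.true_neg + c.false_pos
    if total_cases == 0 or total_controls == 0:
        raise ValueError("need at least one case and one control")

    sn = c.true_pos / total_cases        # Sn = true positives / total cases
    sp = c.true_neg / total_controls     # Sp = true negatives / total controls
    fp_rate = c.false_pos / total_controls
    fn_rate = c.false_neg / total_cases

    return {
        "sensitivity": sn,
        "specificity": sp,
        # Positive LR = Sn / (false positives / total controls)
        "positive_lr": sn / fp_rate if fp_rate > 0 else float("inf"),
        # Negative LR = (false negatives / total cases) / Sp
        "negative_lr": fn_rate / sp if sp > 0 else float("inf"),
    }


# Hypothetical counts, not taken from the study.
example = Counts(true_pos=80, false_neg=20, true_neg=90, false_pos=10)
result = diagnostic_accuracy(example)
```

With these made-up counts, 80 of 100 cases and 90 of 100 controls are classified correctly, so the sketch yields a sensitivity of 0.8 and a specificity of 0.9. The likelihood ratios are returned as infinity when their denominator is zero, which is one possible convention rather than a requirement of the definitions.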

3. Results

3.1. Participant Demographics and Distribution of Impairments

3.2. Validity (Sensitivity and Specificity) of Different Cut-Off Levels of the CFM

3.2.1. Diagnostic Accuracy of the CFM

3.2.2. Cross-Tabulation of CFM Results by Clinical Test Results

3.2.3. ROC Curve Analysis Implications for Cut-Off Level

3.2.4. Domains without Clinical Reference Standard

3.2.5. Impairments Represented within Cut-Off Levels across the CFM-13

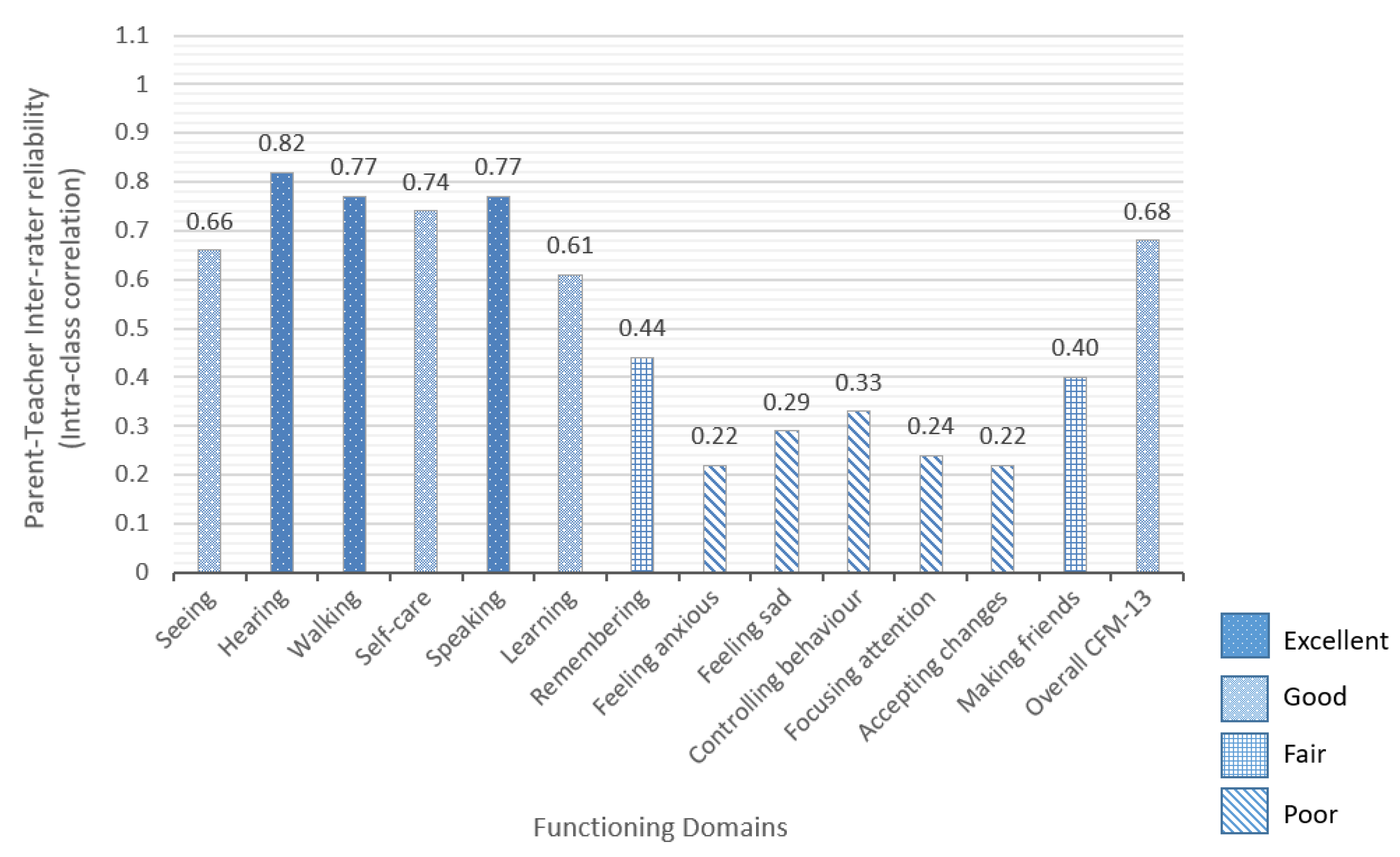

3.3. Inter-Rater Reliability of the CFM

4. Discussion, Limitations and Further Research

Limitations

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A. Differences between the CFM Draft Version Used in this Study and the Final Version

Appendix B. Description and Implementation of the Assessments


| Characteristic (n = 472, unless otherwise stated) | Category | Cases (n = 231, 48.9%), n | Cases, % | Controls (n = 241, 51.1%), n | Controls, % |
|---|---|---|---|---|---|
| Gender | Male | 145 | 62.8 | 118 | 49.0 |
| | Female | 86 | 37.2 | 123 | 51.0 |
| Age (years) | 5–7 | 43 | 18.6 | 52 | 21.6 |
| | 8–9 | 52 | 22.5 | 53 | 22.0 |
| | 10–11 | 42 | 18.2 | 59 | 24.5 |
| | 12–13 | 51 | 22.1 | 57 | 23.7 |
| | 14–15 | 43 | 18.6 | 20 | 8.3 |
| Ethnicity | i-Taukei (Fijian) | 141 | 61.0 | 159 | 66.0 |
| | Indo-Fijian | 75 | 32.5 | 78 | 32.4 |
| | Other | 15 | 6.5 | 4 | 1.7 |
| Type of school | Special | 176 | 76.2 | 56 | 23.2 |
| | Mainstream primary | 55 | 23.8 | 185 | 76.8 |
| Parent/guardian respondent | Mother | 130 | 56.3 | 144 | 59.8 |
| | Father | 44 | 19.0 | 61 | 25.3 |
| | Other * | 57 | 24.7 | 36 | 14.9 |
| Highest level of education of parent | Primary | 57 | 25.4 | 52 | 22.3 |
| | Secondary | 125 | 55.8 | 146 | 62.7 |
| | Higher education | 42 | 18.8 | 35 | 15.0 |
| Area of residence | Urban | 63 | 27.3 | 44 | 18.3 |
| | Peri-urban | 112 | 48.5 | 68 | 28.2 |
| | Rural | 45 | 19.5 | 79 | 32.8 |
| | Remote | 11 | 4.8 | 50 | 20.7 |

| Code used in this paper | | Domain | CFM question | Response categories |
|---|---|---|---|---|
| CFM-13 | CFM-7 | Seeing | ** Does (child’s name) have difficulty seeing? | |
| CFM-13 | CFM-7 | Hearing | ** Does (child’s name) have difficulty hearing sounds like peoples’ voices or music? | |
| CFM-13 | CFM-7 | Walking | ** Does (child’s name) have difficulty walking 100 metres on level ground? Does (child’s name) have difficulty walking 500 metres on level ground? | |
| CFM-13 | CFM-7 | Speaking | When (child’s name) speaks does he/she have any difficulty being understood by: | |
| CFM-13 | CFM-7 | Learning | Compared with children of the same age, does (name) have difficulty learning things? | |
| CFM-13 | CFM-7 | Remembering | Compared with children of the same age, does (name) have difficulty remembering things? | |
| CFM-13 | CFM-7 | Focusing attention | Does (name) have difficulty focusing on an activity that he/she enjoys doing? | |
| CFM-13 | | Self-care | Does (name) have difficulty with self-care such as feeding or dressing him/herself? | |
| CFM-13 | | Accepting changes to routine | Does (name) have difficulty accepting changes in his/her routine? | |
| CFM-13 | | Making friends | Does (name) have difficulty making friends? | |
| CFM-13 | | Anxiety/worry | How often does (name) seem anxious, nervous or worried? | |
| CFM-13 | | Depression/sadness | How often does (name) seem sad or depressed? | |
| CFM-13 | | Controlling behaviour | Compared with children of the same age, how much difficulty does (name) have controlling his/her behaviour? | |

| Domain | AUC, parent | AUC, teacher | Youden Index “some difficulty”, parent | Youden Index “some difficulty”, teacher | Youden Index “a lot of difficulty”, parent | Youden Index “a lot of difficulty”, teacher |
|---|---|---|---|---|---|---|
| Overall CFM-7 | 0.763 | 0.786 | 0.31 | 0.38 | 0.36 | 0.39 |
| Seeing | 0.848 | 0.823 | 0.69 | 0.61 | 0.13 | 0.35 |
| Hearing | 0.847 | 0.846 | 0.66 | 0.67 | 0.38 | 0.49 |
| Walking | 0.889 | 0.869 | 0.73 | 0.69 | 0.57 | 0.47 |
| Speaking | 0.975 | 0.909 | 0.88 | 0.70 | 0.75 | 0.60 |
| Learning | 0.774 | 0.822 | 0.51 | 0.60 | 0.21 | 0.27 |
| Remembering | 0.663 | 0.781 | 0.29 | 0.54 | 0.14 | 0.17 |
| Focusing attention | 0.623 | 0.686 | 0.24 | 0.37 | 0.05 | 0.10 |
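The Youden Index columns in the table above can be read against the standard definition J = Sn + Sp - 1, where a higher J indicates a cut-off that better balances sensitivity and specificity. The sketch below assumes that standard definition; the function names and the sensitivity/specificity pairs are hypothetical and are not values reported in the study.

```python
def youden_index(sensitivity: float, specificity: float) -> float:
    """Youden's J statistic: J = Sn + Sp - 1 (0 = uninformative, 1 = perfect)."""
    return sensitivity + specificity - 1.0


def best_cut_off(candidates: dict) -> str:
    """Return the cut-off label whose (Sn, Sp) pair gives the highest J."""
    return max(candidates, key=lambda label: youden_index(*candidates[label]))


# Hypothetical (sensitivity, specificity) pairs for two candidate cut-offs.
candidates = {
    "some difficulty": (0.90, 0.70),
    "a lot of difficulty": (0.55, 0.95),
}

j_values = {label: youden_index(sn, sp) for label, (sn, sp) in candidates.items()}
best = best_cut_off(candidates)
```

In this made-up example the lower cut-off trades some specificity for a large gain in sensitivity and so has the higher J. Maximising J weights false positives and false negatives equally, which may not suit every use of the data.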

| CFM | Total n (%) | | Impairment level based on reference (clinical) assessments *, n (%) | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Difficulty in any CFM-7 domain * | Parent, n = 472 | Teacher, n = 392 | None | | Mild | | Moderate | | Severe | |
| | | | Parent | Teacher | Parent | Teacher | Parent | Teacher | Parent | Teacher |
| No | 84 (17.8) | 85 (21.7) | 78 (33.9) | 74 (43.8) | 2 (10.5) | 2 (11.8) | 3 (4.8) | 6 (10.9) | 1 (0.6) | 3 (2.0) |
| Some | 212 (44.9) | 154 (39.3) | 109 (47.4) | 66 (39.1) | 8 (42.1) | 10 (58.8) | 33 (52.4) | 26 (47.3) | 62 (38.8) | 52 (34.4) |
| A lot | 122 (25.8) | 104 (26.5) | 41 (17.8) | 27 (16.0) | 9 (47.4) | 5 (29.4) | 25 (39.7) | 19 (34.5) | 47 (29.4) | 53 (35.1) |
| Cannot do | 54 (11.4) | 49 (12.5) | 2 (0.9) | 2 (1.2) | 0 (0.0) | 0 (0.0) | 2 (3.2) | 4 (7.3) | 50 (31.3) | 43 (28.5) |

| Cut-off level | ≥ Some difficulty/weekly * | | | | | ≥ Lot of difficulty/daily * | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Respondent | Parent n (%) | Teacher n (%) | ICC | 95% CI | Sig. | Parent n (%) | Teacher n (%) | ICC | 95% CI | Sig. |
| Self-care | 95 (20.1) | 84 (21.6) | 0.72 | 0.66–0.77 | 0.000 | 11 (2.3) | 24 (6.2) | 0.42 | 0.30–0.53 | 0.000 |
| Feeling anxious * | 117 (24.8) | 103 (26.9) | 0.26 | 0.10–0.40 | 0.002 | 42 (8.9) | 51 (13.3) | 0.09 | −0.02–0.25 | 0.186 |
| Feeling sad * | 121 (25.7) | 89 (23.4) | 0.19 | 0.01–0.34 | 0.021 | 36 (7.6) | 35 (9.2) | 0.08 | −0.03–0.25 | 0.211 |
| Controlling behaviour Ω | NA | NA | - | - | - | 67 (14.2) | 72 (18.8) | 0.20 | 0.02–0.34 | 0.015 |
| Accepting changes | 232 (49.4) | 153 (39.2) | 0.14 | −0.05–0.29 | 0.075 | 39 (8.3) | 27 (6.9) | 0.09 | −0.12–0.25 | 0.190 |
| Making friends | 79 (16.8) | 85 (21.9) | 0.34 | 0.19–0.46 | 0.000 | 14 (3.0) | 21 (5.4) | 0.25 | 0.82–0.38 | 0.003 |

| CFM-13 (highest level of difficulty on any question) | | | Impairment level based on reference standard (clinical) assessments, n (%) | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| | | | Controls | | Cases | | | | | |
| | n | | No impairment | | Mild impairment | | Moderate impairment | | Severe impairment | |
| | P | T | Parent n = 230 | Teacher n = 169 | Parent n = 19 | Teacher n = 17 | Parent n = 63 | Teacher n = 55 | Parent n = 160 | Teacher n = 151 |
| Some difficulty | 189 | 117 | 113 (59.8) | 62 (53.0) | 7 (3.7) | 6 (5.1) | 25 (13.2) (39.7) | 18 (15.4) (33.3) | 44 (23.3) (27.5) | 31 (26.5) (20.5) |
| ≥ Lot of difficulty | 231 | 198 | 70 (30.3) | 40 (20.2) | 11 (4.8) | 9 (4.5) | 35 (15.2) | 32 (16.2) | 115 (49.8) | 117 (59.1) |
| Intraclass correlation, 95% confidence intervals, significance | | | ICC = 0.61 (95% CI 0.47–0.71, 0.000) | | ICC = 0.85 (95% CI 0.58–0.94, 0.000) | | ICC = 0.06 (95% CI −0.62–0.46, 0.408) | | ICC = 0.55 (95% CI 0.38–0.68, 0.000) | |

| CFM-13 (highest level of difficulty on any question) | | | Impairment level based on reference standard (clinical) assessments, n (%) | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| | | | Controls | | Cases | | | | | |
| | n | | No vision impairment (≥6/9 ¥) | | Mild VI (<6/9 ≥6/18 ¥) | | Moderate VI (<6/18 ≥6/60 ¥) | | Severe-blind (<6/60 ¥) | |
| | P | T | Parent | Teacher | Parent | Teacher | Parent | Teacher | Parent | Teacher |
| Some difficulty | 169 | 109 | 149 (88.2) | 101 (92.7) | 2 (1.2) | 2 (1.8) | 4 (2.4) | 1 (0.9) | 14 (8.3) | 5 (4.6) |
| ≥ Lot of difficulty | 196 | 157 | 176 (89.8) | 134 (85.4) | 3 (1.5) | 2 (1.3) | 7 (3.6) | 7 (4.5) | 10 (5.1) | 14 (8.9) |
| | n | | No hearing impairment (<26 dBA) | | Mild HI (26–40 dBA) | | Moderate HI (41–60 dBA) | | Severe-profound HI (≥61 dBA) | |
| | P | T | Parent | Teacher | Parent | Teacher | Parent | Teacher | Parent | Teacher |
| Some difficulty | 164 | 103 | 138 (84.1) | 85 (82.5) | 15 (9.1) | 11 (10.7) | 8 (4.9) | 4 (3.9) | 3 (1.8) | 3 (2.9) |
| ≥ Lot of difficulty | 145 | 132 | 110 (66.3) | 78 (59.1) | 15 (9.0) | 16 (12.1) | 12 (7.2) | 11 (8.3) | 29 (17.5) | 27 (20.5) |
| | n | | No musculoskeletal impairment (MSI) ^ | | Mild MSI (5–24%) ^ | | Moderate MSI (25–49%) ^ | | Severe MSI (50–90%) ^ | |
| | P | T | Parent | Teacher | Parent | Teacher | Parent | Teacher | Parent | Teacher |
| Some difficulty | 175 | 111 | 169 (96.6) | 105 (94.6) | 3 (1.7) | 2 (1.8) | 3 (1.7) | 3 (2.7) | 0 (0.0) | 1 (0.9) |
| ≥ Lot of difficulty | 208 | 185 | 172 (82.7) | 152 (82.2) | 6 (2.9) | 6 (3.2) | 11 (5.3) | 11 (5.9) | 19 (9.1) | 16 (8.6) |
| | n | | No speech impairment (4.0–5.0 ICS) ₱ | | Inconclusive speech function (2.5 < 4.0 ICS) ₱ | | Moderate speech impairment (1.8 < 2.5 ICS) ₱ | | Severe speech impairment (1.0 < 1.8 ICS) ₱ | |
| | P | T | Parent | Teacher | Parent | Teacher | Parent | Teacher | Parent | Teacher |
| Some difficulty | 185 | 114 | 141 (76.2) | 72 (63.2) | 38 (20.5) | 31 (27.2) | 4 (2.2) | 3 (2.6) | 2 (1.1) | 8 (7.0) |
| ≥ Lot of difficulty | 226 | 194 | 71 (31.4) | 71 (36.6) | 90 (39.8) | 68 (35.1) | 17 (7.5) | 16 (8.2) | 48 (21.2) | 39 (20.1) |
| | n | | Average/better cognitive function | | Low average cognitive function | | Moderate cognitive impairment | | Severe cognitive impairment | |
| | P | T | Parent | Teacher | Parent | Teacher | Parent | Teacher | Parent | Teacher |
| Some difficulty | 91 | 67 | 14 (15.4) | 6 (9.0) | 35 (38.5) | 27 (40.3) | 14 (15.4) | 16 (23.9) | 28 (30.8) | 18 (26.9) |
| ≥ Lot of difficulty | 108 | 102 | 5 (4.6) | 3 (2.9) | 24 (22.2) | 16 (15.7) | 30 (27.8) | 25 (24.5) | 49 (45.4) | 58 (56.9) |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Sprunt, B.; McPake, B.; Marella, M. The UNICEF/Washington Group Child Functioning Module—Accuracy, Inter-Rater Reliability and Cut-Off Level for Disability Disaggregation of Fiji’s Education Management Information System. Int. J. Environ. Res. Public Health 2019, 16, 806. https://doi.org/10.3390/ijerph16050806
