A Mixed Methods Evaluation of Sharing Air Pollution Results with Study Participants via Report-Back Communication

Abstract

1. Introduction

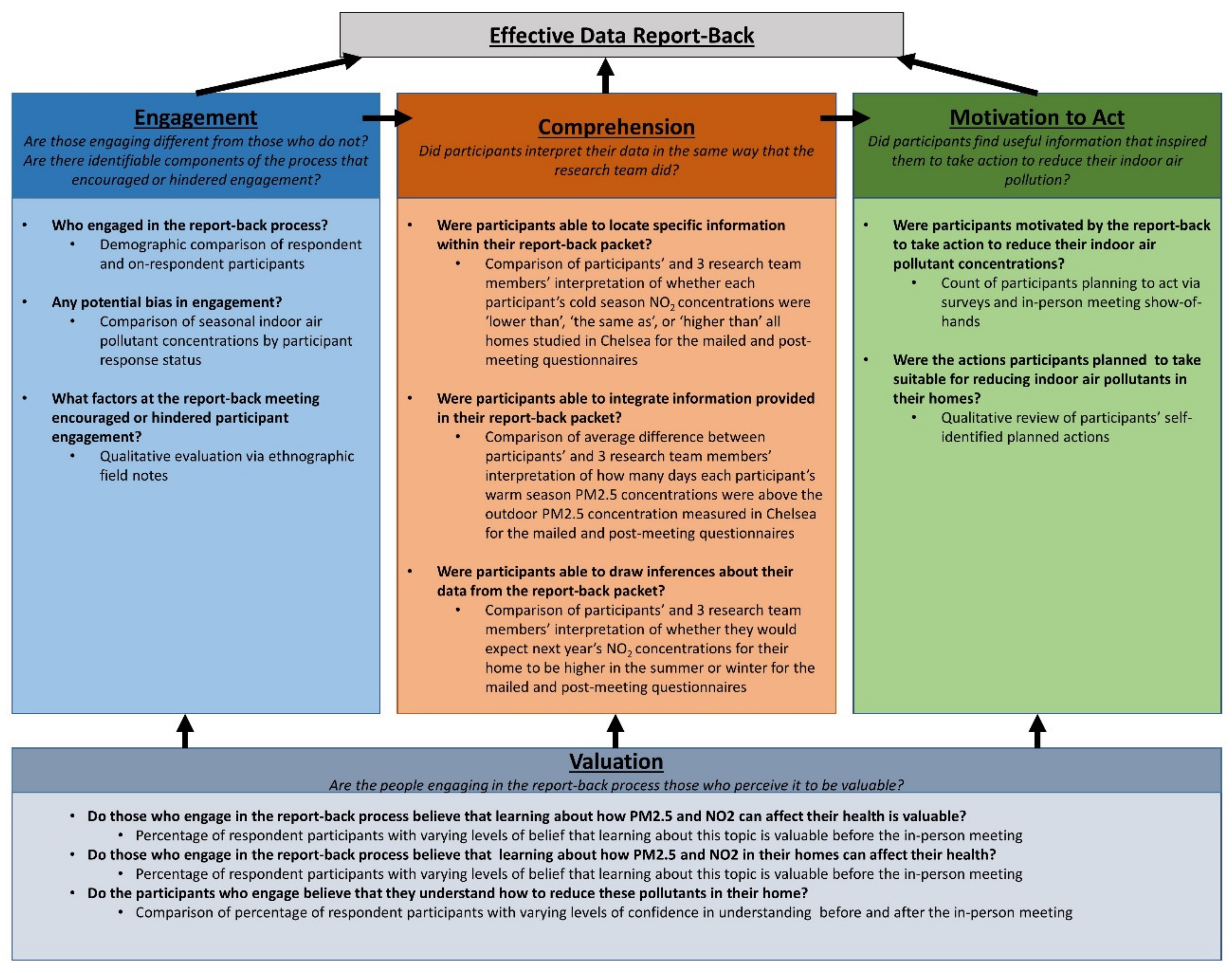

Evaluation Framework

2. Materials and Methods

2.1. Process

2.2. Questionnaire Development

2.3. Engagement Analysis

2.4. Comprehension Analysis

2.5. Motivation to Action

2.6. Valuation Analysis

2.7. Qualitative Analysis

3. Results

3.1. Engagement

3.2. Understandability

3.3. Actionability

3.4. Valuation

4. Discussion

4.1. Engagement

4.2. Understandability

4.3. Valuation

4.4. Actionability

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Characteristic | Non-Respondent (n = 41) | Respondent (n = 31) | p-value |
|---|---|---|---|
| Educational Attainment | | | <0.001 |
| Less than high school diploma or GED | 15 (36.6) | 3 (9.7) | |
| High school diploma or GED | 8 (19.5) | 3 (9.7) | |
| Some college but no degree | 5 (12.2) | 5 (16.1) | |
| Associate degree | 7 (17.1) | 1 (3.2) | |
| Bachelor’s degree | 5 (12.2) | 8 (25.8) | |
| Post graduate degree (masters or doctoral) | 1 (2.4) | 11 (35.5) | |
| Hispanic or Latin-x | | | |
| No, not Hispanic or Latin-x | 12 (29.3) | 25 (80.6) | <0.001 |
| Race | | | <0.001 |
| American Indian or Alaska Native, Black or African American, Native Hawaiian or Other Pacific Islander, Other | 0 (0.0) | 1 (3.2) | |
| Asian | 1 (2.4) | 1 (3.2) | |
| Black or African American | 1 (2.4) | 3 (9.7) | |
| White | 9 (22.0) | 21 (67.7) | |
| Other | 30 (73.2) | 5 (16.1) | |
| Participant Language Preference: Spanish | | | |
| Spanish | 19 (46.3) | 3 (9.7) | 0.002 |
| Annual Household Income | | | 0.098 |
| Less than $10,000 | 11 (26.8) | 4 (12.9) | |
| $10,000–$24,999 | 11 (26.8) | 3 (9.7) | |
| $25,000–$49,999 | 4 (9.8) | 6 (19.4) | |
| $50,000–$99,999 | 7 (17.1) | 9 (29.0) | |
| $100,000+ | 3 (7.3) | 7 (22.6) | |
| Refused to answer | 4 (9.8) | 1 (3.2) | |
| Do not know | 1 (2.4) | 1 (3.2) | |
| Average Age of Participant | | | |
| Mean (SD) | 47.63 (14.51) | 57.34 (14.41) | 0.007 |

| PM2.5 (µg/m³) | Respondents | Non-Respondents | p-value |
|---|---|---|---|
| Summer | 4.6 | 5.7 | 0.1683 |
| Winter | 3.4 | 5.9 | <0.001 |

| NO2 (parts per billion) | Respondents | Non-Respondents | p-value |
|---|---|---|---|
| Summer | 10.5 | 20.8 | <0.001 |
| Winter | 30.9 | 28.2 | 0.3392 |
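To make the categorical comparisons in the demographic table easier to follow, the sketch below works through one row: Spanish language preference, reported as 19 of 41 non-respondents and 3 of 31 respondents. The statistical test behind the reported p-values is not stated in this section, so a Pearson chi-square test with Yates continuity correction is assumed here purely for illustration. The function name `chi_square_2x2` is hypothetical and not taken from the study.

```python
import math


def chi_square_2x2(a, b, c, d, yates=True):
    """Pearson chi-square test for the 2x2 table [[a, b], [c, d]].

    Returns (statistic, p_value) for 1 degree of freedom.
    Illustrative only: the test used in the study is an assumption.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    observed = [a, b, c, d]
    # Expected counts under independence of row and column
    expected = [
        row1 * col1 / n,
        row1 * col2 / n,
        row2 * col1 / n,
        row2 * col2 / n,
    ]
    stat = 0.0
    for o, e in zip(observed, expected):
        diff = abs(o - e)
        if yates:
            # Yates continuity correction for small 2x2 tables
            diff = max(diff - 0.5, 0.0)
        stat += diff ** 2 / e
    # Upper tail of the chi-square distribution with 1 degree of freedom
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value


# Counts from the table: Spanish preference vs. not, by response status
non_resp_spanish, non_resp_total = 19, 41
resp_spanish, resp_total = 3, 31

stat, p_value = chi_square_2x2(
    non_resp_spanish, non_resp_total - non_resp_spanish,
    resp_spanish, resp_total - resp_spanish,
)
print(f"chi-square = {stat:.2f}, p = {p_value:.4f}")
```

The printed p-value can be compared with the 0.002 reported for this row. Any difference would suggest that the authors used another test or a survey-weighted variant.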

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Tomsho, K.S.; Schollaert, C.; Aguilar, T.; Bongiovanni, R.; Alvarez, M.; Scammell, M.K.; Adamkiewicz, G. A Mixed Methods Evaluation of Sharing Air Pollution Results with Study Participants via Report-Back Communication. Int. J. Environ. Res. Public Health 2019, 16, 4183. https://doi.org/10.3390/ijerph16214183
