Methodology to Derive Objective Screen-State from Smartphones: A SMART Platform Study

Abstract

1. Introduction

2. Materials and Methods

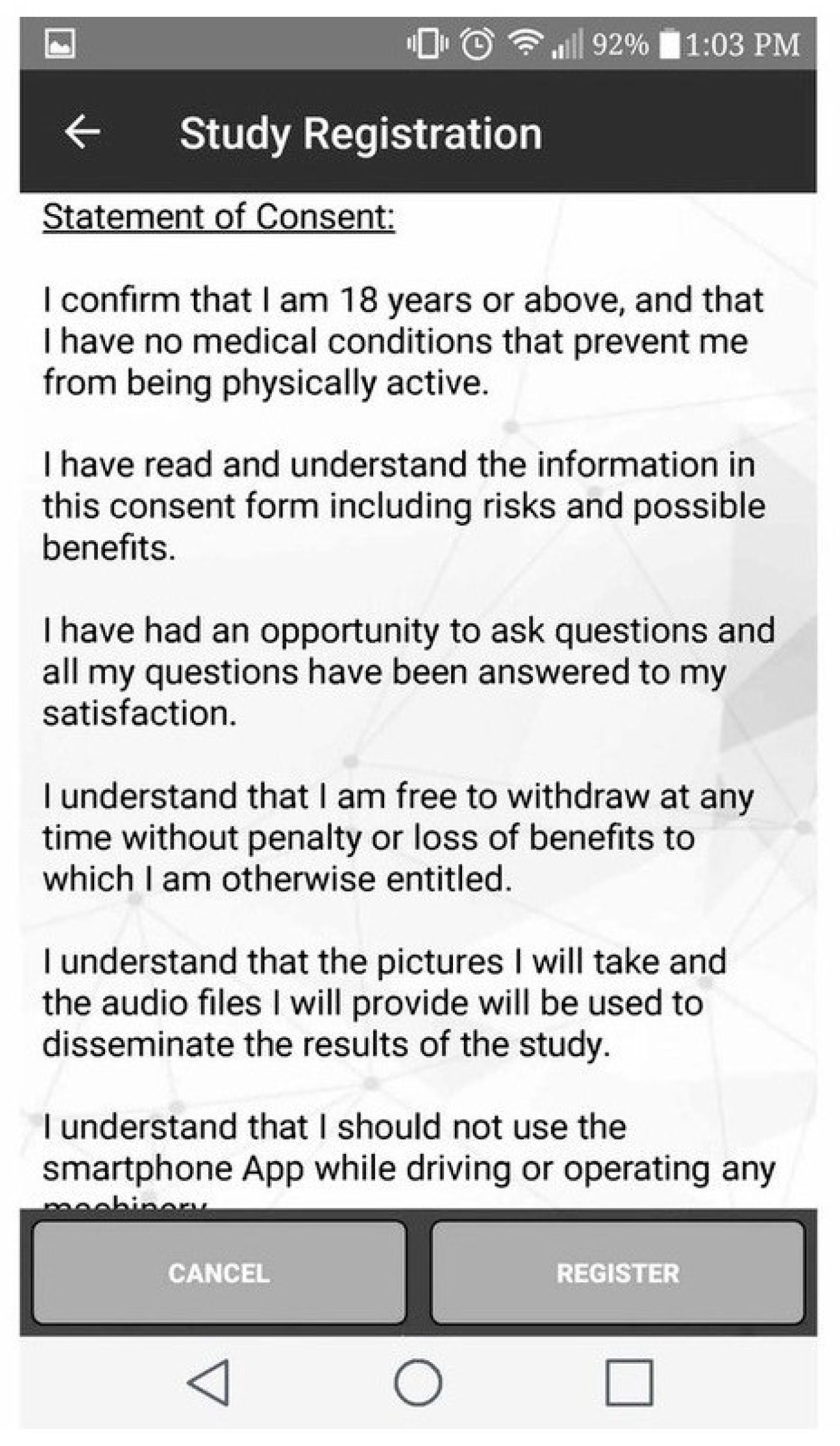

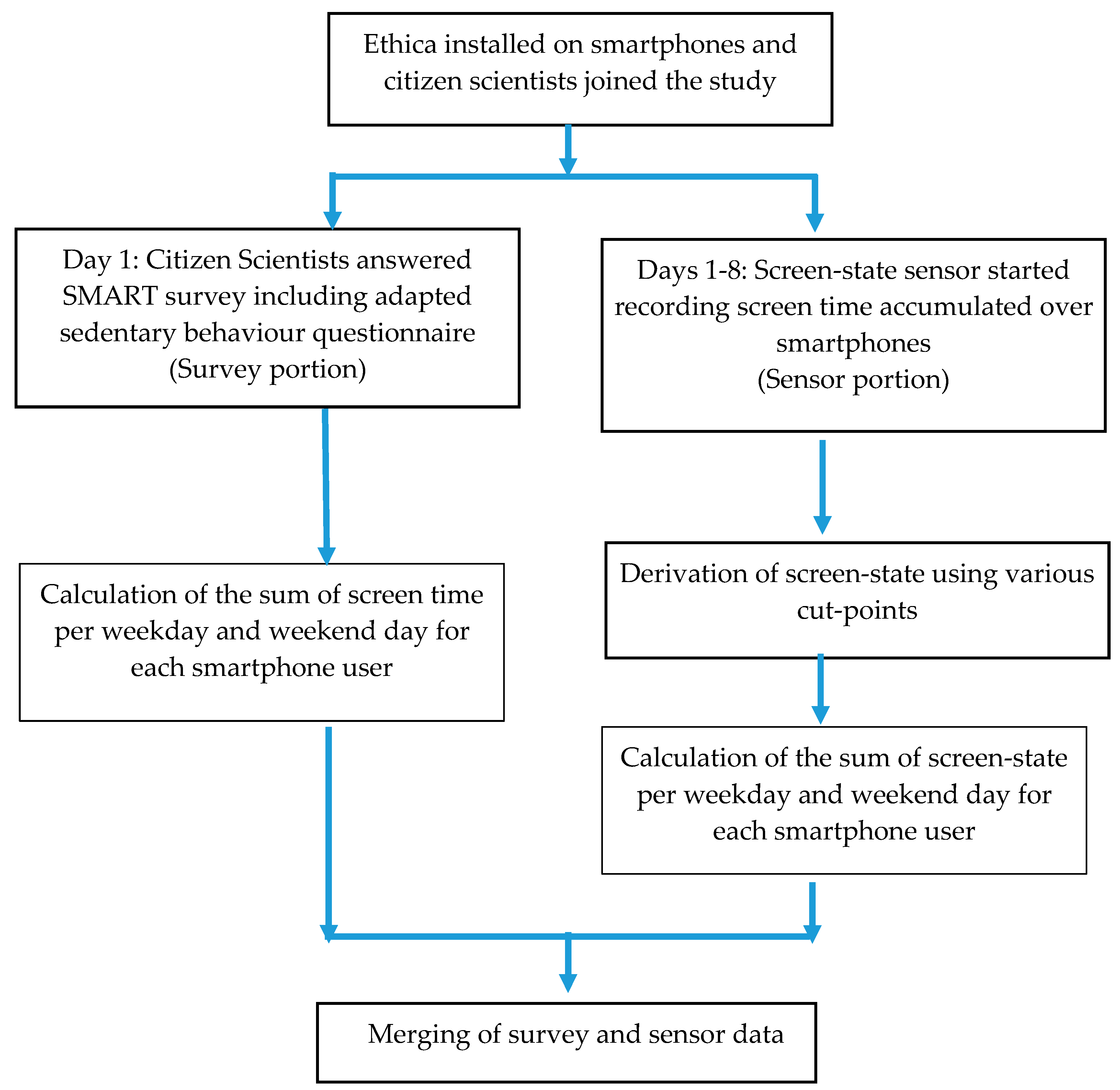

2.1. Study Design

2.2. Study Recruitment and Participants

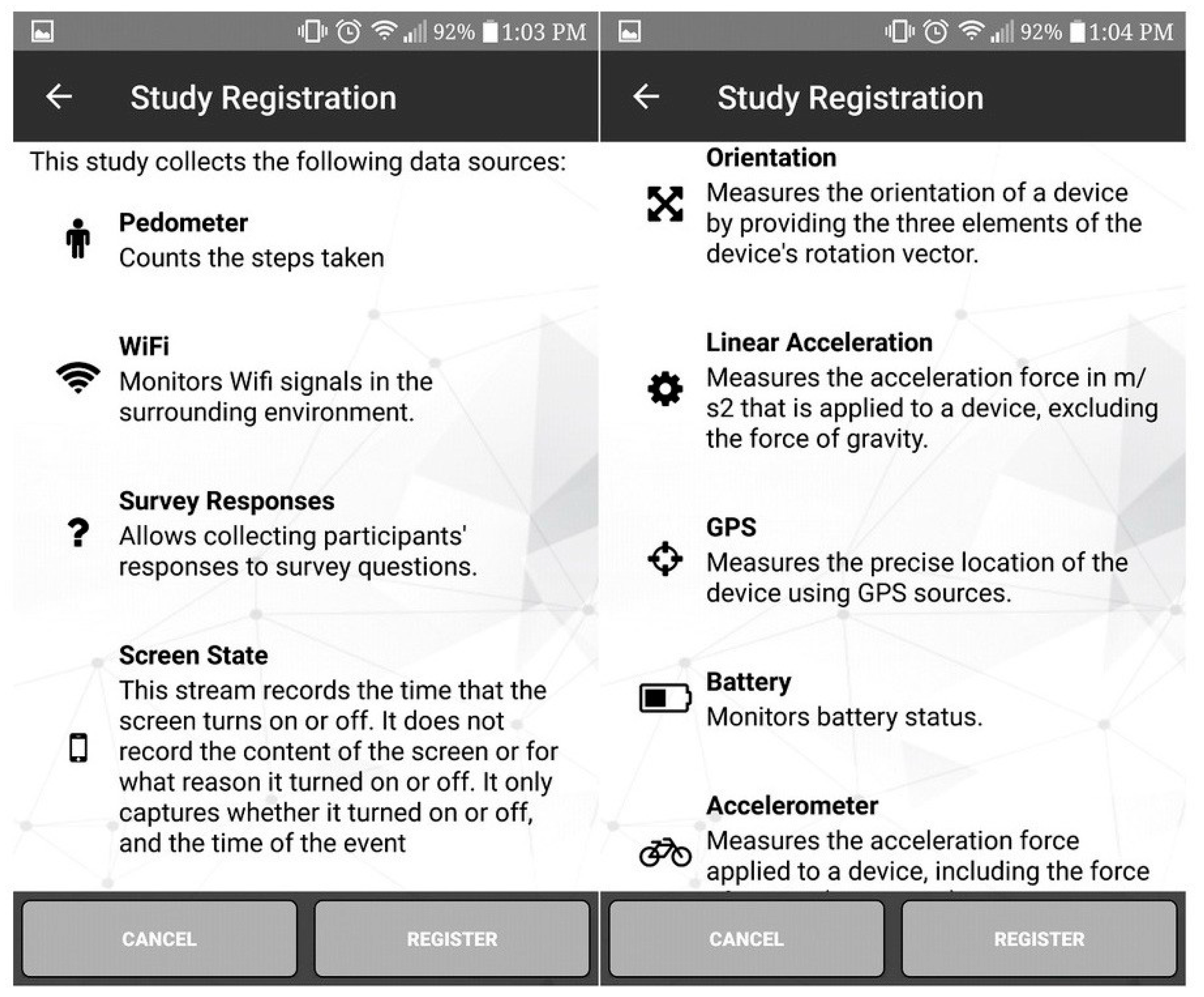

2.3. Data Collection Tools

- On a typical WEEKDAY (from when you wake up until you go to bed), how much time do you spend watching TELEVISION?

- On a typical WEEKDAY, how much time do you spend doing INTERNET SURFING (watching videos, reading news, etc.) or GENERAL WORK (office work, emails, paying bills, etc.) on a DESKTOP/LAPTOP/TABLET?

- On a typical WEEKDAY, how much time do you spend doing INTERNET SURFING (watching videos, reading news, etc.) or GENERAL WORK (office work, emails, paying bills, etc.) on a SMARTPHONE?

- On a typical WEEKDAY, how much time do you spend playing games on a DESKTOP/LAPTOP or TELEVISION SCREEN?

- On a typical WEEKDAY, how much time do you spend playing games on a SMARTPHONE or a HANDHELD VIDEO GAME CONSOLE?

- On a typical WEEKDAY, how much time do you spend TEXTING?

- On a typical WEEKDAY, how much time do you spend SITTING and READING a paper-based BOOK/MAGAZINE?

- On a typical WEEKDAY, how much time do you spend SITTING and READING an ELECTRONIC BOOK/MAGAZINE on a DESKTOP/LAPTOP/TABLET?

- On a typical WEEKDAY, how much time do you spend SITTING and READING an ELECTRONIC BOOK/MAGAZINE on a SMARTPHONE?

- On a typical WEEKDAY, how much time do you spend SITTING and LISTENING to MUSIC?

- On a typical WEEKDAY, how much time do you spend SITTING and TALKING on the PHONE?

- On a typical WEEKDAY, how much time do you spend SITTING and PLAYING a MUSICAL INSTRUMENT?

- On a typical WEEKDAY, how much time do you spend SITTING and doing ARTWORK/CRAFT?

- On a typical WEEKDAY, how much time do you spend DRIVING/RIDING in a CAR/BUS/TRAIN, or any other mode of MOTORISED TRANSPORTATION?

- Citizen scientists who provided screen-state data on at least 2 weekdays and 1 weekend day and completed the adapted sedentary behavior questionnaire [26].

- Citizen scientists who did not turn off the app during the 8 days of study participation.
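The two inclusion criteria above can be read as a simple filter over participants. The sketch below is only illustrative: the `Participant` fields and the `is_eligible` helper are hypothetical names (the SMART Platform's actual data schema is not shown here), and it assumes that both criteria must hold together.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Set


@dataclass
class Participant:
    # All field names are hypothetical, chosen for illustration only.
    pid: str
    days_with_screen_data: Set[date] = field(default_factory=set)
    completed_questionnaire: bool = False
    turned_app_off: bool = False


def is_eligible(p: Participant, min_weekdays: int = 2, min_weekend_days: int = 1) -> bool:
    """Apply the inclusion criteria, assuming both must be satisfied."""
    # date.weekday(): Monday is 0, Sunday is 6, so 5 and 6 are weekend days.
    weekdays = sum(1 for d in p.days_with_screen_data if d.weekday() < 5)
    weekend_days = sum(1 for d in p.days_with_screen_data if d.weekday() >= 5)
    enough_days = weekdays >= min_weekdays and weekend_days >= min_weekend_days
    return enough_days and p.completed_questionnaire and not p.turned_app_off


if __name__ == "__main__":
    # Monday, Tuesday, and Saturday in May 2019.
    days = {date(2019, 5, 6), date(2019, 5, 7), date(2019, 5, 11)}
    print(is_eligible(Participant("p01", days, completed_questionnaire=True)))
```

Keeping the day thresholds as parameters makes it easy to check how sensitive the analytic sample is to the minimum number of valid days.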

2.4. Methodology to Derive Objective Screen-State

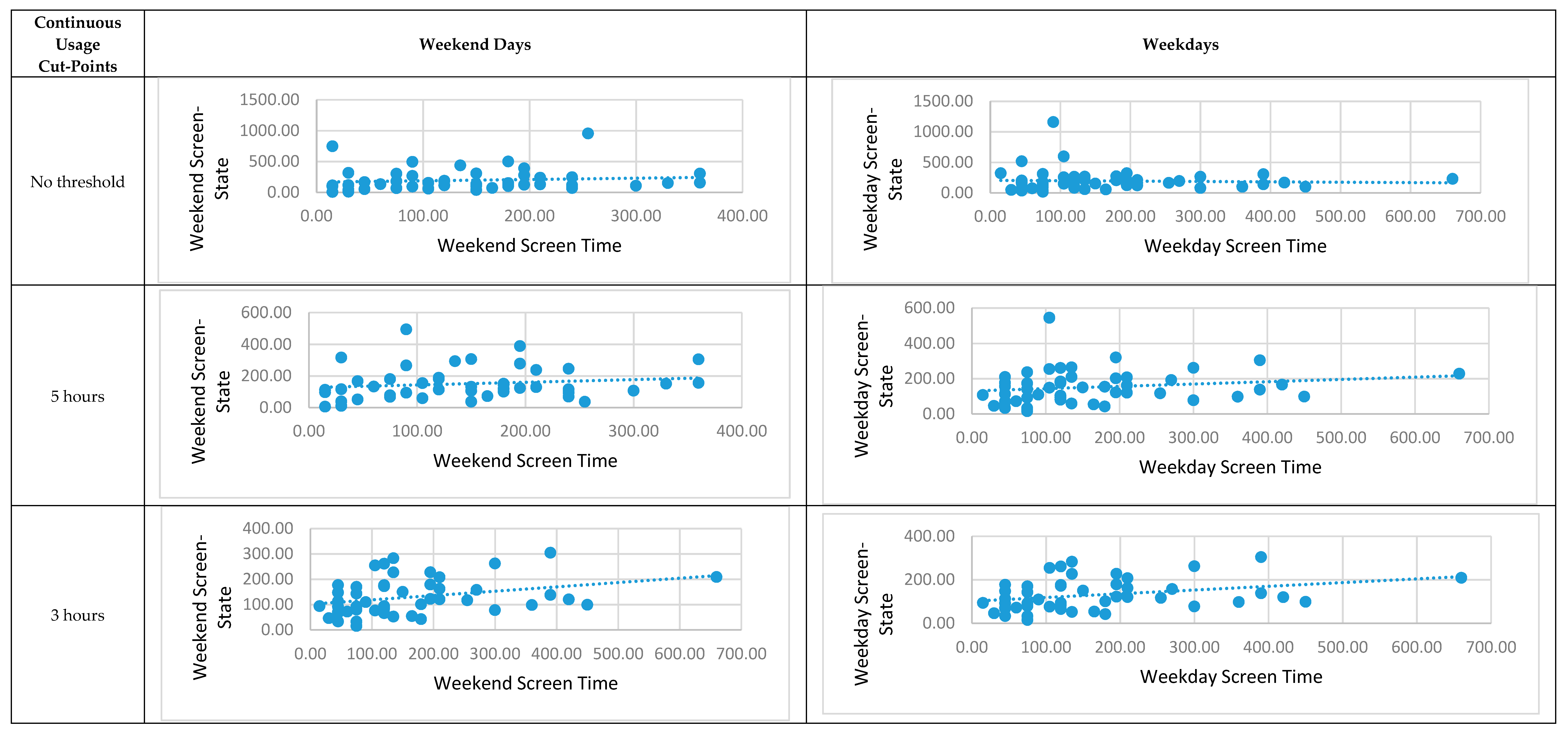

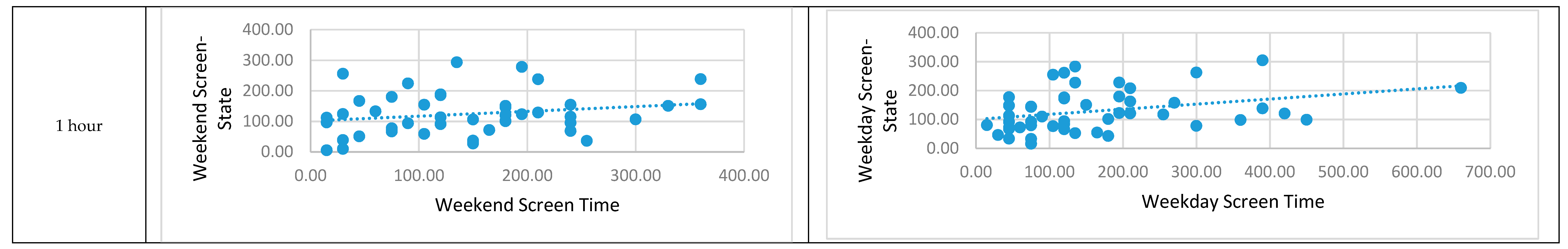

2.5. Statistical Analyses

3. Results

4. Discussion

Limitations and Strengths

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Stiglic, N.; Viner, R.M. Effects of screen time on the health and well-being of children and adolescents: A systematic review of reviews. BMJ Open 2019, 9, e023191.

2. Robinson, T.N.; Banda, J.A.; Hale, L.; Lu, A.S.; Fleming-Milici, F.; Calvert, S.L.; Wartella, E. Screen media exposure and obesity in children and adolescents. Pediatrics 2017, 140 (Suppl. 2), S97–S101.

3. Lane, A.; Harrison, M.; Murphy, N. Screen time increases risk of overweight and obesity in active and inactive 9-year-old Irish children: A cross sectional analysis. J. Phys. Act. Health 2014, 11, 985–991.

4. Canadian Paediatric Society, Digital Health Task Force, Ottawa, Ontario. Screen time and young children: Promoting health and development in a digital world. Paediatrics Child Health 2017, 22, 461–468.

5. Anderson, D.R.; Subrahmanyam, K. Digital screen media and cognitive development. Pediatrics 2017, 140 (Suppl. 2), S57–S61.

6. Edelson, L.R.; Mathias, K.C.; Fulgoni, V.L., 3rd; Karagounis, L.G. Screen-based sedentary behavior and associations with functional strength in 6–15 year-old children in the United States. BMC Public Health 2016, 16, 116.

7. Martin, K. Electronic Overload: The Impact of Excessive Screen Use on Child and Adolescent Health and Wellbeing; Department of Sport and Recreation: Perth, Western Australia, 2011.

8. Tremblay, M.S.; LeBlanc, A.G.; Kho, M.E.; Saunders, T.J.; Larouche, R.; Colley, R.C.; Goldfield, G.; Gorber, S.C. Systematic review of sedentary behaviour and health indicators in school-aged children and youth. Int. J. Behav. Nutr. Phys. Act. 2011, 8, 98.

9. Carson, V.; Hunter, S.; Kuzik, N.; Gray, C.E.; Poitras, V.J.; Chaput, J.P.; Saunders, T.J.; Katzmarzyk, P.T.; Okely, A.D.; Connor Gorber, S.; et al. Systematic review of sedentary behaviour and health indicators in school-aged children and youth: An update. Appl. Physiol. Nutr. Metab. 2016, 41, S240–S265.

10. Busch, V.; Ananda Manders, L.; Rob Josephus de Leeuw, J. Screen time associated with health behaviors and outcomes in adolescents. Am. J. Health Behav. 2013, 37, 819–830.

11. Sirard, J.R.; Laska, M.N.; Patnode, C.D.; Farbakhsh, K.; Lytle, L.A. Adolescent physical activity and screen time: Associations with the physical home environment. Int. J. Behav. Nutr. Phys. Act. 2010, 7, 82.

12. Segev, A.; Mimouni-Bloch, A.; Ross, S.; Silman, Z.; Maoz, H.; Bloch, Y. Evaluating computer screen time and its possible link to psychopathology in the context of age: A cross-sectional study of parents and children. PLoS ONE 2015, 10, e0140542.

13. Haug, S.; Castro, R.P.; Kwon, M.; Filler, A.; Kowatsch, T.; Schaub, M.P. Smartphone use and smartphone addiction among young people in Switzerland. J. Behav. Addict. 2015, 4, 299–307.

14. Lee, K.E.; Kim, S.-H.; Ha, T.-Y.; Yoo, Y.M.; Han, J.J.; Jung, J.H.; Jang, J.Y. Dependency on smartphone use and its association with anxiety in Korea. Public Health Rep. 2016, 131, 411–419.

15. Lunden, I. 6.1B Smartphone Users Globally By 2020, Overtaking Basic Fixed Phone Subscriptions. 2015. Available online: http://techcrunch.com/2015/06/02/6-1b-smartphone-users-globally-by-2020-overtaking-basic-fixed-phone-subscriptions/ (accessed on 9 May 2019).

16. Fletcher, E.A.; McNaughton, S.A.; Crawford, D.; Cleland, V.; Della Gatta, J.; Hatt, J.; Dollman, J.; Timperio, A. Associations between sedentary behaviours and dietary intakes among adolescents. Public Health Nutr. 2018, 21, 1115–1122.

17. Pearson, N.; Biddle, S.J. Sedentary behavior and dietary intake in children, adolescents, and adults: A systematic review. Am. J. Prev. Med. 2011, 41, 178–188.

18. Leech, R.M.; McNaughton, S.A.; Timperio, A. The clustering of diet, physical activity and sedentary behavior in children and adolescents: A review. Int. J. Behav. Nutr. Phys. Act. 2014, 11, 4.

19. Stuckey, M.I.; Carter, S.W.; Knight, E. The role of smartphones in encouraging physical activity in adults. Int. J. Gen. Med. 2017, 10, 293–303.

20. Schoeppe, S.; Alley, S.; Van Lippevelde, W.; Bray, N.A.; Williams, S.L.; Duncan, M.J.; Vandelanotte, C. Efficacy of interventions that use apps to improve diet, physical activity and sedentary behaviour: A systematic review. Int. J. Behav. Nutr. Phys. Act. 2016, 13, 127.

21. Kobayashi, T.; Boase, J. No such effect? The implications of measurement error in self-report measures of mobile communication use. Commun. Methods Meas. 2012, 6, 126–143.

22. Moreno, M.A.; Jelenchick, L.; Koff, R.; Eikoff, J.; Diermyer, C.; Christakis, D.A. Internet use and multitasking among older adolescents: An experience sampling approach. Comput. Hum. Behav. 2012, 28, 1097–1102.

23. Sigmundová, D.; Sigmund, E.; Badura, P.; Vokáčová, J.; Trhlíková, L.; Bucksch, J. Weekday-weekend patterns of physical activity and screen time in parents and their pre-schoolers. BMC Public Health 2016, 16, 898.

24. Katapally, T.R. SMART: A mobile health and citizen scientist platform. Available online: https://www.smartstudysask.com/about (accessed on 9 May 2019).

25. Katapally, T.R.; Bhawra, J.; Leatherdale, S.T.; Ferguson, L.; Longo, J.; Rainham, D.; Larouche, R.; Osgood, N. The SMART study, a mobile health and citizen science methodological platform for active living surveillance, integrated knowledge translation, and policy interventions: Longitudinal study. JMIR Public Health Surveill. 2018, 4, e31.

26. Rosenberg, D.E.; Norman, G.J.; Wagner, N.; Patrick, K.; Calfas, K.J.; Sallis, J.F. Reliability and validity of the Sedentary Behavior Questionnaire (SBQ) for adults. J. Phys. Act. Health 2010, 7, 697–705.

27. Gunnell, K.E.; Brunet, J.; Bélanger, M. Out with the old, in with the new: Assessing change in screen time when measurement changes over time. Prev. Med. Rep. 2018, 9, 37–41.

28. Grekin, E.R.; Beatty, J.R.; Ondersma, S.J. Mobile health interventions: Exploring the use of common relationship factors. JMIR Mhealth Uhealth 2019, 7, e11245.

29. Song, T.; Qian, S.; Yu, P. Mobile health interventions for self-control of unhealthy alcohol use: Systematic review. JMIR Mhealth Uhealth 2019, 7, e10899.

30. Yang, Q.; Van Stee, S.K. The comparative effectiveness of mobile phone interventions in improving health outcomes: Meta-analytical review. JMIR Mhealth Uhealth 2019, 7, e11244.

31. Khouja, J.N.; Munafò, M.R.; Tilling, K.; Wiles, N.J.; Joinson, C.; Etchells, P.J.; John, A.; Hayes, F.M.; Gage, S.H.; Cornish, R.P. Is screen time associated with anxiety or depression in young people? Results from a UK birth cohort. BMC Public Health 2019, 19, 82.

| n | Subjective Screen Time, Mean (SD) | Continuous Usage Cut-Point | Objective Screen-State by Notification Cut-Point: No Filter, Mean (SD) (min/day) | 5 Seconds, Mean (SD) (min/day) | 10 Seconds, Mean (SD) (min/day) | 15 Seconds, Mean (SD) (min/day) | 20 Seconds, Mean (SD) (min/day) |
|---|---|---|---|---|---|---|---|
| 47 | 165.00 (131.34) | 6 hours | 162.71 (98.52) | 161.96 (98.17) | 160.07 (98.42) | 158.42 (98.25) | 157.51 (98.10) |
| 47 | 165.00 (131.34) | 5 hours | 160.43 (96.58) | 159.68 (96.22) | 157.79 (96.49) | 156.13 (96.30) | 155.21 (96.14) |
| 47 | 165.00 (131.34) | 4 hours | 157.64 (94.82) | 156.89 (94.43) | 154.99 (94.67) | 153.33 (94.43) | 152.41 (94.24) |
| 47 | 165.00 (131.34) | 3 hours | 156.06 (94.13) | 155.31 (93.73) | 153.42 (93.96) | 151.76 (93.70) | 150.84 (93.50) |
| 45 | 166.25 (132.43) | 2 hours | 135.74 (73.08) | 135.02 (72.66) | 133.11 (72.65) | 131.37 (71.97) | 130.43 (71.61) |
| 45 | 166.25 (132.43) | 1 hour | 134.94 (73.08) | 134.22 (72.66) | 132.30 (72.64) | 130.56 (71.95) | 129.62 (71.58) |
| 49 | 165.00 (131.34) | All recorded time (no threshold) | 200.47 (180.37) | 199.73 (180.30) | 197.86 (180.72) | 196.26 (180.74) | 195.34 (180.83) |
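The grid of continuous usage and notification cut-points in the table above can be illustrated with a short sketch. This is not the authors' implementation: it assumes that screen-state is available as a list of screen-on session durations per day, that a notification cut-point discards sessions shorter than the cut-point (brief screen wake-ups), and that a continuous usage cut-point discards sessions longer than the cut-point (a screen left on). The function name, parameters, and example durations are all hypothetical.

```python
from typing import Iterable, Optional


def daily_screen_minutes(
    session_seconds: Iterable[float],
    notification_cut_s: Optional[float] = None,
    continuous_cut_h: Optional[float] = None,
) -> float:
    """Sum one day's screen-on sessions after applying both cut-points.

    Assumed rules (not confirmed by the source):
    - sessions shorter than notification_cut_s are treated as notifications and dropped;
    - sessions longer than continuous_cut_h are treated as implausible and dropped.
    """
    total_seconds = 0.0
    for duration in session_seconds:
        if notification_cut_s is not None and duration < notification_cut_s:
            continue
        if continuous_cut_h is not None and duration > continuous_cut_h * 3600:
            continue
        total_seconds += duration
    return total_seconds / 60.0


if __name__ == "__main__":
    # Made-up session durations in seconds for a single day.
    sessions = [3, 8, 600, 1800, 5 * 3600]
    for hours in (6, 5, 4, 3, 2, 1, None):
        row = [daily_screen_minutes(sessions, n, hours) for n in (None, 5, 10, 15, 20)]
        print(hours, [round(v, 2) for v in row])
```

Looping over both cut-point lists reproduces the layout of the table (one row per continuous usage cut-point, one column per notification cut-point) for a single participant-day; averaging across days and participants would then give the reported means under these assumed rules.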

| n | Subjective Screen Time, Mean (SD) | Continuous Usage Cut-Point | Objective Screen-State by Notification Cut-Point: No Filter, Mean (SD) (min/day) | 5 Seconds, Mean (SD) (min/day) | 10 Seconds, Mean (SD) (min/day) | 15 Seconds, Mean (SD) (min/day) | 20 Seconds, Mean (SD) (min/day) |
|---|---|---|---|---|---|---|---|
| 47 | 168.06 (142.02) | 6 hours | 154.65 (97.57) | 153.95 (97.20) | 154.19 (97.12) | 154.19 (97.15) | 153.31 (96.98) |
| 47 | 168.06 (142.02) | 5 hours | 152.58 (95.62) | 151.88 (95.24) | 152.08 (95.18) | 152.03 (95.20) | 151.15 (95.02) |
| 47 | 168.06 (142.02) | 4 hours | 150.05 (93.80) | 149.34 (93.39) | 149.49 (93.31) | 149.40 (93.30) | 148.51 (93.09) |
| 47 | 168.06 (142.02) | 3 hours | 148.62 (93.05) | 147.91 (92.63) | 148.04 (92.57) | 147.92 (92.55) | 147.03 (92.33) |
| 44 | 168.06 (142.02) | 2 hours | 147.87 (91.43) | 147.16 (90.97) | 147.27 (90.87) | 147.12 (90.81) | 146.23 (90.59) |
| 45 | 169.25 (143.10) | 1 hour | 128.42 (73.30) | 127.74 (72.85) | 127.50 (72.53) | 127.89 (70.98) | 126.98 (70.61) |
| 49 | 168.06 (142.02) | All recorded time (no threshold) | 204.49 (186.42) | 203.80 (186.37) | 205.00 (187.28) | 206.03 (188.23) | 205.15 (188.34) |

| n | Subjective Screen Time, Mean (SD) | Continuous Usage Cut-Point | Objective Screen-State by Notification Cut-Point: No Filter, Mean (SD) (min/day) | 5 Seconds, Mean (SD) (min/day) | 10 Seconds, Mean (SD) (min/day) | 15 Seconds, Mean (SD) (min/day) | 20 Seconds, Mean (SD) (min/day) |
|---|---|---|---|---|---|---|---|
| 47 | 144.18 (91.28) | 6 hours | 156.43 (100.09) | 155.63 (99.97) | 153.38 (100.63) | 153.90 (104.19) | 152.87 (104.14) |
| 47 | 144.18 (91.28) | 5 hours | 154.84 (97.16) | 154.04 (97.02) | 151.78 (97.68) | 152.30 (101.36) | 151.27 (101.30) |
| 47 | 144.18 (91.28) | 4 hours | 154.84 (97.16) | 154.04 (97.02) | 151.78 (97.68) | 152.30 (101.36) | 151.27 (101.30) |
| 47 | 144.18 (91.28) | 3 hours | 154.84 (97.16) | 154.04 (97.02) | 151.78 (97.68) | 152.30 (101.36) | 151.27 (101.30) |
| 45 | 145.31 (91.90) | 2 hours | 127.24 (71.95) | 126.47 (71.73) | 124.17 (72.08) | 125.39 (69.89) | 124.35 (69.71) |
| 45 | 145.31 (91.90) | 1 hour | 127.24 (71.95) | 126.47 (71.73) | 124.17 (72.08) | 125.39 (69.89) | 124.35 (69.71) |
| 49 | 144.18 (91.28) | All recorded time (no threshold) | 200.37 * (177.58) | 199.59 * (177.67) | 197.37 ** (178.41) | 197.80 ** (179.98) | 196.81 ** (180.12) |

| n | Subjective Screen Time, Mean (SD) | Continuous Usage Cut-Point | Objective Screen-State by Notification Cut-Point: No Filter, Mean (SD) (min/day) | 5 Seconds, Mean (SD) (min/day) | 10 Seconds, Mean (SD) (min/day) | 15 Seconds, Mean (SD) (min/day) | 20 Seconds, Mean (SD) (min/day) |
|---|---|---|---|---|---|---|---|
| 49 | 142.65 (90.22) | 6 hours | 153.00 (99.45) | 152.22 (99.32) | 150.02 (99.90) | 150.51 (103.36) | 149.52 (103.36) |
| 49 | 142.65 (90.22) | 5 hours | 151.47 (96.57) | 150.69 (96.42) | 148.49 (97.01) | 148.98 (100.57) | 147.99 (100.49) |
| 49 | 142.65 (90.22) | 4 hours | 151.47 (96.57) | 150.69 (96.42) | 148.49 (97.01) | 148.98 (100.57) | 147.99 (100.49) |
| 49 | 142.65 (90.22) | 3 hours | 151.47 (96.57) | 150.69 (96.42) | 148.49 (97.01) | 148.98 (100.57) | 147.99 (100.49) |
| 49 | 142.65 (90.22) | 2 hours | 147.32 (91.65) | 146.55 (91.51) | 144.36 (92.05) | 144.85 (95.83) | 143.87 (95.72) |
| 47 | 143.70 (90.82) | 1 hour | 124.90 (71.31) | 124.15 (71.08) | 121.91 (71.37) | 123.02 (69.28) | 122.02 (69.07) |
| 51 | 142.65 (90.22) | All recorded time (no threshold) | 223.41 * (251.57) | 222.64 * (251.70) | 220.46 * (252.33) | 220.85 * (253.27) | 219.89 * (253.44) |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Katapally, T.R.; Chu, L.M. Methodology to Derive Objective Screen-State from Smartphones: A SMART Platform Study. Int. J. Environ. Res. Public Health 2019, 16, 2275. https://doi.org/10.3390/ijerph16132275