Barriers to and Facilitators of the Evaluation of Integrated Community-Wide Overweight Intervention Approaches: A Qualitative Case Study in Two Dutch Municipalities

Abstract

1. Introduction

2. Materials and Methods

2.1. Design

2.2. Sampling

2.3. Data Collection

2.3.1. Interviews

2.3.2. Observations

2.3.3. Document Analysis

2.4. Data Analysis

3. Results

3.1. Case Description

“Sometimes (I have) the feeling of: ‘where to start?’ and (it feels like) droplets in the ocean” (A1).

“… real vision, policy, and making decisions (at strategic level)” (A1).

3.2. Evaluation Barriers and Facilitators

3.2.1. Motivating Factors to Evaluate

“The programme manager is an enthusiastic person who can transfer her energy properly. So that works very well” (B4).

“My role (as programme manager) is to question (..) them (the stakeholders) about the benefit of their activities. So in that way we try to collect data (about evaluation)” (B1).

“(…) it was good to have the evaluation expert from the JOGG-office visit us and discuss the evaluation possibilities and effects to be shown at local level (...), this works better than the training sessions alone, which are more general” (B1).

“(…) the training sessions were too formal, extensive, detail oriented and exaggerated” (A1).

3.2.2. Perceived Feasibility of Evaluation

“There is so much to it (evaluation) if you want to do it well. A lot of time, money (..), and expertise” (A1).

“If you want to do it (evaluation) properly, you have to make a big investment” (B2).

“I do notice that practice is more stubborn (than the ideal situation), so then you’ll have to look further and search for your own pathway” (B3).

“You want to write things down as specifically as possible in such an (evaluation) plan. However, JOGG is also characterized by (..) a bottom-up approach and setting goals together. And sometimes I perceive that as quite a tension” (B4).

3.2.3. Knowledge of and Attitudes to Evaluation

“I did some research during my study but not like a youth monitor or something. I would not know what questions to ask or when such a research is representative or reliable (…)” (A1).

“it has no scientific value” (B2) and “it is not something official” (B4).

“And it is the question whether it (the evaluation) is worth the investment” (B2).

“When you evaluate, you know what works and what does not (..). And you can keep partners on board, show results, (create) a positive atmosphere, and attract other parties” (A1).

“It is important to see whether what you do achieves effects” (B2).

3.2.4. Evaluation Resources

“Within the entire process we have allocated time and money in order to conduct a proper evaluation” (B2).

“The development process of the activity monitor by the JOGG-office took a long time and it still is not finalized” (B3).

“It is not really a useful tool” (B1; A1).

“The activity-monitor has limitations in space to insert all retrieved data” (B3).

“(…) other JOGG-municipalities do not use it” (A3).

“It is not easy to use and needs an update in lay-out” (A3).

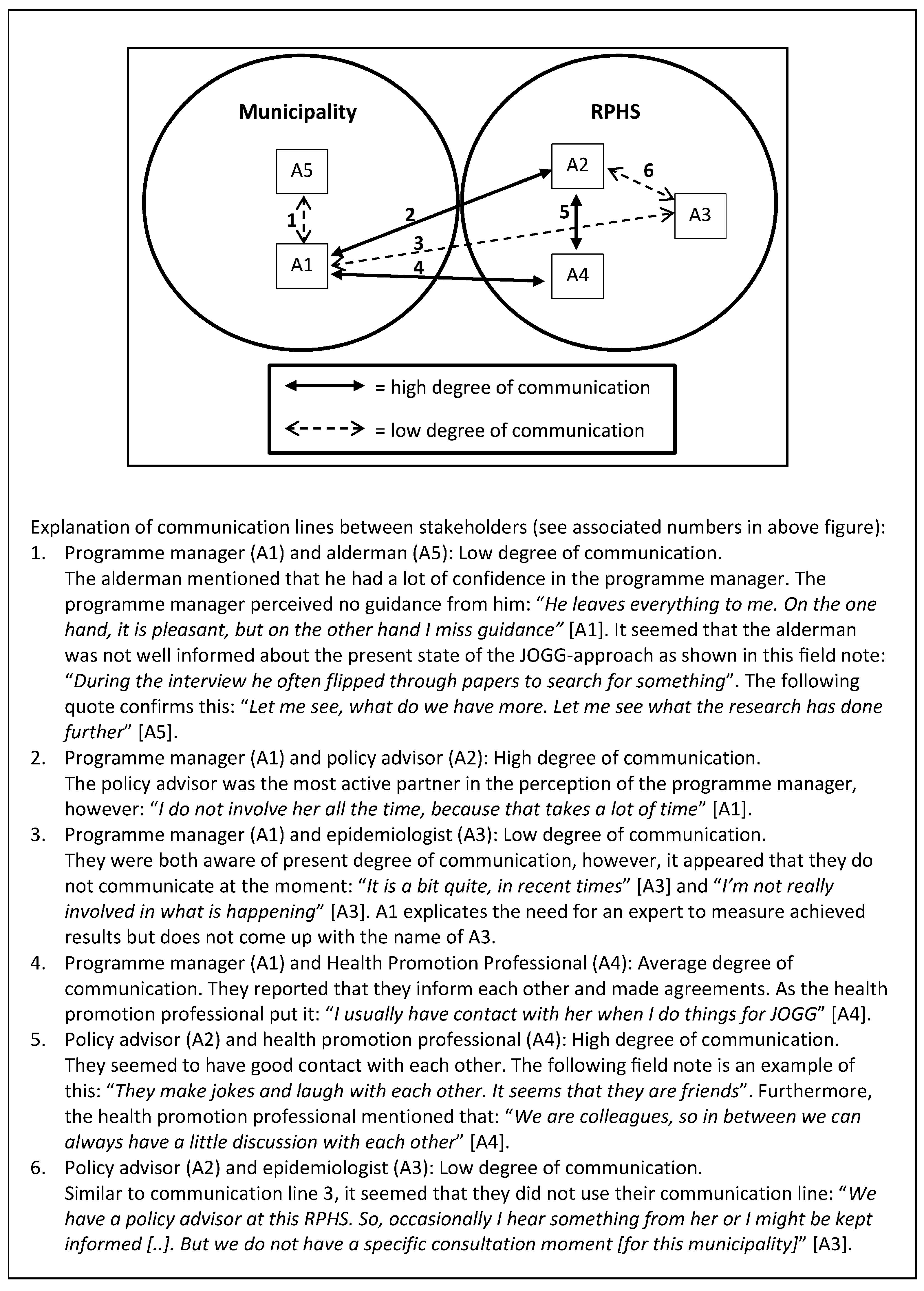

3.2.5. Communication and Involvement with Evaluation

“If you are really involved then it is easier to see where evaluation can do something or add something” (A3).

“The programme manager acts as a spider in the web, both inside and outside the municipality” (text fragment drawn from action plan).

3.2.6. Support from Decision Makers

“One cannot do everything, it is really quite broad. So at a certain point, choices need to be made” (B3).

“Sometimes they do think (evaluation) is important and then they say: ‘make money available’. So, that is always a little between the devil and the deep blue sea” (B2).

“The programme manager went to the financial department on the day it became clear that additional money was needed for a JOGG-approach related activity”.

“If you really need money, then you can (write) entire plans and (go) to the Municipal Council. However, then my eight hours a week (for the JOGG-program) are lost due to that” (A1).

“We have actually never discussed evaluation (in the working group Interventions)” (A4).

“I’m not going to make a whole action plan to create a support base for research among politicians and private partners. Then it is better to invest my energy in performing those activities and setting up and organizing working group meetings. Because at least something happens then” (A1).

“I really liked working with the RPHS epidemiologist (…). I think that this is also an advice to other municipalities, ensure that employable hours are made available within the RPHS or for another researcher who can work with you” (B1).

“Employable hours just cost money, (…) so the RPHS cannot just donate employable hours to the municipality” (A1).

4. Discussion

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ICIA | Integrated Community-wide Intervention Approach |
| EPODE | Ensemble Prévenons l’Obésité Des Enfants (French) or “Together Let’s Prevent Childhood Obesity” (English) |
| JOGG | Jongeren op Gezond Gewicht (Dutch) or Youth on Healthy Weight (English) |
| JOGG-office | National Coordination Office of the JOGG-approach |
| RPHS | Regional Public Health Service |

References

- Bleich, S.N.; Segal, J.; Wu, Y.; Wilson, R.; Wang, Y. Systematic review of community-based childhood obesity prevention studies. Pediatrics 2013, 132, e201–e210. [Google Scholar] [CrossRef] [PubMed]

- Mitchell, N.S.; Catenacci, V.A.; Wyatt, H.R.; Hill, J.O. Obesity: Overview of an epidemic. Psychiatr. Clin. N. Am. 2011, 34, 717–732. [Google Scholar] [CrossRef] [PubMed]

- Ng, M.; Fleming, T.; Robinson, M.; Thomson, B.; Graetz, N.; Margono, C.; Mullany, E.C.; Biryukov, S.; Abbafati, C.; Abera, S.F.; et al. Global, regional, and national prevalence of overweight and obesity in children and adults during 1980–2013: A systematic analysis for the global burden of disease study 2013. Lancet 2014, 384, 766–781. [Google Scholar] [CrossRef]

- Olds, T.; Maher, C.; Zumin, S.; Peneau, S.; Lioret, S.; Castetbon, K.; Bellisle; de Wilde, J.; Hohepa, M.; Maddison, R.; et al. Evidence that the prevalence of childhood overweight is plateauing: Data from nine countries. Int. J. Pediatr. Obes. 2011, 6, 342–360. [Google Scholar] [CrossRef] [PubMed]

- Rokholm, B.; Baker, J.L.; Sorensen, T.I. The levelling off of the obesity epidemic since the year 1999—A review of evidence and perspectives. Obes. Rev. 2010, 11, 835–846. [Google Scholar] [CrossRef] [PubMed]

- Swinburn, B.A.; Sacks, G.; Hall, K.D.; McPherson, K.; Finegood, D.T.; Moodie, M.L.; Gortmaker, S.L. The global obesity pandemic: Shaped by global drivers and local environments. Lancet 2011, 378, 804–814. [Google Scholar] [CrossRef]

- Wake, M.; Clifford, S.A.; Patton, G.C.; Waters, E.; Williams, J.; Canterford, L.; Carlin, J.B. Morbidity patterns among the underweight, overweight and obese between 2 and 18 years: Population-based cross-sectional analyses. Int. J. Obes. 2013, 37, 86–93. [Google Scholar] [CrossRef] [PubMed]

- French, S.A.; Story, M.; Perry, C.L. Self-esteem and obesity in children and adolescents: A literature review. Obes. Res. 1995, 3, 479–490. [Google Scholar] [CrossRef] [PubMed]

- Daniels, S.R. The consequences of childhood overweight and obesity. Future Child. 2006, 16, 47–67. [Google Scholar] [CrossRef] [PubMed]

- Guo, S.S.; Wu, W.; Chumlea, W.C.; Roche, A.F. Predicting overweight and obesity in adulthood from body mass index values in childhood and adolescence. Am. J. Clin. Nutr. 2002, 76, 653–658. [Google Scholar] [PubMed]

- Roberto, C.A.; Swinburn, B.; Hawkes, C.; Huang, T.T.K.; Costa, S.A.; Ashe, M.; Zwicker, L.; Cawley, J.H.; Brownell, K.D. Patchy progress on obesity prevention: Emerging examples, entrenched barriers, and new thinking. Lancet 2015, 385, 2400–2409. [Google Scholar] [CrossRef]

- Flynn, M.A.; McNeil, D.A.; Maloff, B.; Mutasingwa, D.; Wu, M.; Ford, C.; Tough, S.C. Reducing obesity and related chronic disease risk in children and youth: A synthesis of evidence with “best practice” recommendations. Obes. Rev. 2006, 7 (Suppl. 1), 7–66. [Google Scholar] [CrossRef] [PubMed]

- Huang, T.T.; Glass, T.A. Transforming research strategies for understanding and preventing obesity. JAMA 2008, 300, 1811–1813. [Google Scholar] [CrossRef] [PubMed]

- Stice, E.; Shaw, H.; Marti, C.N. A meta-analytic review of obesity prevention programs for children and adolescents: The skinny on interventions that work. Psychol. Bull. 2006, 132, 667–691. [Google Scholar] [CrossRef] [PubMed]

- Swinburn, B.; Egger, G.; Raza, F. Dissecting obesogenic environments: The development and application of a framework for identifying and prioritizing environmental interventions for obesity. Prev. Med. 1999, 29, 563–570. [Google Scholar] [CrossRef] [PubMed]

- Huang, T.; Drewnowski, A.; Kumanyika, S.; Glass, T. A systems-oriented multilevel framework for addressing obesity in the 21st century. Prev. Chronic Dis. 2009, 6, A82. [Google Scholar] [PubMed]

- Johnston, L.M.; Matteson, C.L.; Finegood, D.T. Systems science and obesity policy: A novel framework for analyzing and rethinking population-level planning. Am. J. Public Health 2014, 104, 1270–1278. [Google Scholar] [CrossRef] [PubMed]

- Bemelmans, W.; Verschuuren, M.; Savelkoul, M.; Wendel-Vos, G. An Eu-Wide Overview of Community-Based Initiatives to Reduce Childhood Obesity; Dutch Institute for Public Health and the Environment (RIVM): Bilthoven, The Netherlands, 2011. [Google Scholar]

- Dietz, W.H.; Gortmaker, S.L. Preventing obesity in children and adolescents 1. Annu. Rev. Public Health 2001, 22, 337–353. [Google Scholar] [CrossRef] [PubMed]

- Foltz, J.L.; May, A.L.; Belay, B.; Nihiser, A.J.; Dooyema, C.A.; Blanck, H.M. Population-level intervention strategies and examples for obesity prevention in children. Annu. Rev. Nutr. 2012, 32, 391–415. [Google Scholar] [CrossRef] [PubMed]

- Hendriks, A.M.; Gubbels, J.S.; De Vries, N.K.; Seidell, J.C.; Kremers, S.P.; Jansen, M.W. Interventions to promote an integrated approach to public health problems: An application to childhood obesity. J. Environ. Public Health 2012, 2012, 913236. [Google Scholar] [CrossRef] [PubMed]

- Ministerie VWS. Landelijke Nota Gezondheidsbeleid: Gezondheid Dichtbij (National Health Policy); Rijksoverheid: The Hague, The Netherlands, 2011. [Google Scholar]

- Allender, S.; Nichols, M.; Foulkes, C.; Reynolds, R.; Waters, E.; King, L.; Gill, T.; Armstrong, R.; Swinburn, B. The development of a network for community-based obesity prevention: The co-ops collaboration. BMC Public Health 2011, 11, 132. [Google Scholar] [CrossRef] [PubMed]

- Borys, J.M.; Le Bodo, Y.; Jebb, S.A.; Seidell, J.C.; Summerbell, C.; Richard, D.; De Henauw, S.; Moreno, L.A.; Romon, M.; Visscher, T.L.; et al. Epode approach for childhood obesity prevention: Methods, progress and international development. Obes. Rev. 2012, 13, 299–315. [Google Scholar] [CrossRef] [PubMed]

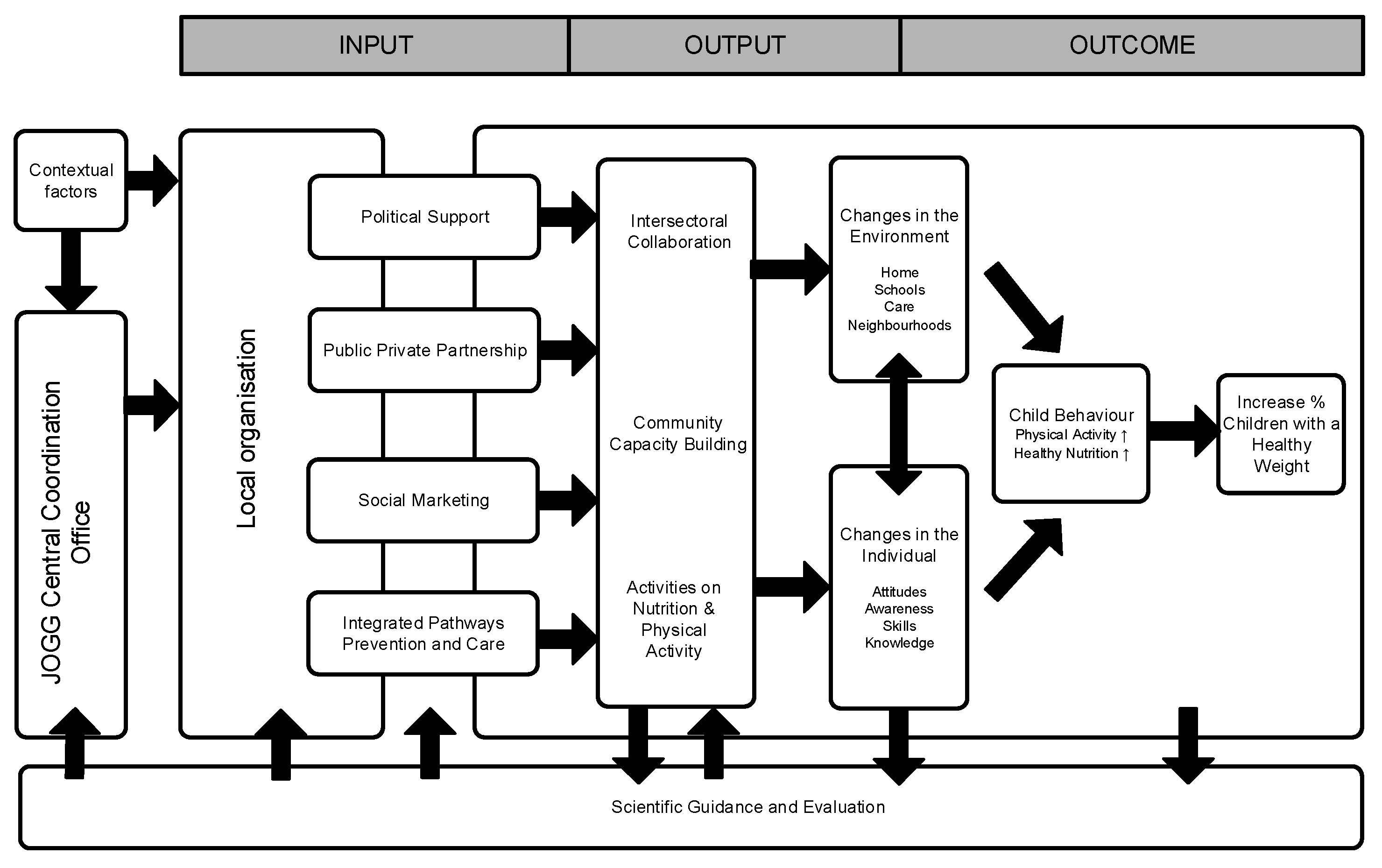

- Van Koperen, T.M.; Jebb, S.A.; Summerbell, C.D.; Visscher, T.L.; Romon, M.; Borys, J.M.; Seidell, J.C. Characterizing the EPODE logic model: Unravelling the past and informing the future. Obes. Rev. 2013, 14, 162–170. [Google Scholar] [CrossRef] [PubMed]

- Van Koperen, T.M.; van der Kleij, M.J.J.; Renders, C.M.; Crone, M.R.; Hendriks, A.M.; Jansen, M.; van de Gaar, V.M.; Raat, J.H.; Ruiter, E.L.M.; Molleman, G.R.M.; et al. Design of ciao, a research program to support the development of an integrated approach to prevent overweight and obesity in the netherlands. BMC Obes. 2014, 1, 1–12. [Google Scholar] [CrossRef] [PubMed]

- King, L.; Gill, T.; Allender, S.; Swinburn, B. Best practice principles for community-based obesity prevention: Development, content and application. Obes. Rev. 2011, 12, 329–338. [Google Scholar] [CrossRef] [PubMed]

- Trochim, W.M.K. Introduction to Evaluation. Available online: http://www.socialresearchmethods.net/kb/intreval.htm (accessed on 13 October 2015).

- Patton, M.Q. Qualitative Evaluation and Research Methods, 2 ed.; Sage: Newbury Park, CA, USA, 1990; p. 532. [Google Scholar]

- Atienza, A.A.; King, A.C. Community-based health intervention trials: An overview of methodological issues. Epidemiol. Rev. 2002, 24, 72–79. [Google Scholar] [CrossRef] [PubMed]

- Summerbell, C.; Hillier, F. Community interventions and initiatives to prevent obesity. In Obesity Epidemiology: From Aetiology to Public Health; Crawford, D., Jeffery, R.W., Ball, K., Brug, J., Eds.; Oxford University Press: Oxford, UK, 2010; pp. 395–408. [Google Scholar]

- Sanson-Fisher, R.W.; Bonevski, B.; Green, L.W.; D’Este, C. Limitations of the randomized controlled trial in evaluating population-based health interventions. Am. J. Prev. Med. 2007, 33, 155–161. [Google Scholar] [CrossRef] [PubMed]

- Yin, R.K.; Kaftarian, S.J. Introduction: Challenges of community-based program outcome evaluations. Eval. Program Plan. 1997, 20, 293–297. [Google Scholar] [CrossRef]

- Goodman, R.M. Principals and tools for evaluating community-based prevention and health promotion programs. J. Public Health Manag. Pract. 1998, 4, 37–47. [Google Scholar] [CrossRef] [PubMed]

- Nastasi, B.K.; Hitchcock, J. Challenges of evaluating multilevel interventions. Am. J. Community Psychol. 2009, 43, 360–376. [Google Scholar] [CrossRef] [PubMed]

- Storm, I.; Savelkoul, M.; Busch, M.C.M.; Maas, J.; Schuit, A.J. Intersectoral Collaboration in Addressing Health Inequalities: A Survey of 16 Municipalities in The Netherlands; Dutch Institute for Public Health and the Environment (RIVM): Bilthoven, The Netherlands, 2010. [Google Scholar]

- Moore, L.; Gibbs, L. Evaluation of community-based obesity interventions. In Preventing Childhood Obesity: Evidence Policy and Practice; Waters, E., Swinburn, B., Seidell, J., Uauy, R., Eds.; John Wiley & Sons: Chichester, UK, 2010; pp. 157–166. [Google Scholar]

- Napp, D.; Gibbs, D.; Jolly, D.; Westover, B.; Uhl, G. Evaluation barriers and facilitators among community-based hiv prevention programs. AIDS Educ. Prev. 2002, 14, 38–48. [Google Scholar] [CrossRef] [PubMed]

- Huckel Schneider, C.; Milat, A.; Moore, G. Barriers and facilitators to evaluation of health policies. In Proceedings of the International Conference on Public Policy, Grenoble, France, 26–28 June 2013; p. 19.

- Taut, S.M.; Alkin, M.C. Program staffs perceptions of barriers to evaluation implementation. Am. J. Eval. 2003, 24, 213–226. [Google Scholar] [CrossRef]

- Saunders, M.; Lewis, P.; Thornhill, A. Research Methods for Business Students; Pearson Education: Harlow, UK, 2009; p. 614. [Google Scholar]

- Gray, D.E. Doing Research in the Real World, 3rd ed.; Sage: London, UK, 2014. [Google Scholar]

- Patton, M.Q. Qualitative Evaluation and Research Methods, 4 ed.; Sage: Newbury Park, CA, USA, 2015; p. 270. [Google Scholar]

- Preskill, H.; Boyle, S. A multidisciplinary model of evaluation capacity building. Am. J. Eval. 2008, 29, 443–459. [Google Scholar] [CrossRef]

- Holloway, I.; Wheeler, S. Qualitative Research in Nursing and Healthcare; John Wiley & Sons: Chichester, UK, 2013; p. 368. [Google Scholar]

- Noor, K.B.M. Case study: A strategic research methodology. Am. J. Appl. Sci. 2008, 5, 1602–1604. [Google Scholar] [CrossRef]

- Green, J.; Thorogood, N. Qualitative Methods for Health Research, 3rd ed.; Sage: London, UK, 2014. [Google Scholar]

- Taylor-Powell, E.; Boyd, H.H. Evaluation capacity building in complex organizations. In Program Evaluation in a Complex Organisational System: Lessons from Cooperative Extension. New Directions for Evaluation, 120th ed.; Braverman, M.T., Negle, M., Arnold, M.E., Rennekamp, R.A., Eds.; Jossey-Bass: San Fransisco, CA, USA, 2008; Volume 120, pp. 55–69. [Google Scholar]

- Patton, M.Q. Utilization-Focused Evaluation, 4th ed.; Guilford: New York, NY, USA, 2008. [Google Scholar]

- Laverack, G.; Labonte, R. A planning framework for community empowerment goals within health promotion. Health Policy Plan. 2000, 15, 255–262. [Google Scholar] [CrossRef] [PubMed]

- Jacobs, G. Participatie en empowerment in de gezondheidsbevordering: Professionals in de knel tussen ideaal en praktijk? J. Soc. Interv. Theory Pract. 2005, 14, 29–39. [Google Scholar] [CrossRef]

- Robinson, K.L.; Driedger, M.S.; Elliott, S.J.; Eyles, J. Understanding facilitators of and barriers to health promotion practice. Health Promot. Pract. 2006, 7, 467–476. [Google Scholar] [CrossRef] [PubMed]

- Bamberger, M.; Rugh, J.; Mabry, L. Real World Evaluation. Working under Budget, Time, Data and Political Constraints, 2nd ed.; Sage: Thousand Oakes, CA, USA, 2012; p. 666. [Google Scholar]

- Yin, R.K. Case Study Research: Design and Methods; SAGE Publications: Thousand Oaks, CA, USA, 2009; p. 219. [Google Scholar]

- Baarda, D.B.; De Goede, M.P.M.; Teunissen, J. Basisboek Kwalitatief Onderzoek; Stenfert Kroese: Groningen, The Netherlands, 2005; p. 369. [Google Scholar]

| Respondent * | Function | Gender | Age (Years) | Level of Education | Organization | Years of Service | Working Time for JOGG (Hours) ** |
|---|---|---|---|---|---|---|---|
| A1 | Programme manager | F | 23 | BS | Municipality | 2 | 8.00 weekly |
| A2 | Policy advisor | F | 28 | MS | RPHS | 2 | 1.25 weekly |
| A3 | Epidemiologist | F | 43 | PhD | RPHS | 4 | 0.24 weekly |
| A4 | Health promotion professional | F | 27 | MS | RPHS | 4 | 0.96 weekly |
| A5 | Alderman | M | 62 | - | Municipality | 4 | - |
| B1 | Programme manager | F | 48 | BS | Municipality & RPHS | 2 & 13 | 20.00 weekly |
| B2 | Policy advisor | F | 55 | MS | Municipality | 14 | 8.00 weekly |
| B3 | Epidemiologist | F | 38 | MS | RPHS | 12 | 4.00 weekly |
| B4 | Epidemiologist & Policy advisor (RPHS-employee) | F | 37 | MS | RPHS | 11 | 1.20 weekly |
| B5 | Alderman | M | 55 | - | Municipality | 4 | - |

| Themes | Subthemes | Barriers (−)/Facilitators (+) | Examples |
|---|---|---|---|
| The need for an evaluation motive | | | |
| Motivating factors to evaluate | Person to motivate evaluation | + | An evaluation expert who provides expertise and support to start the evaluation process |
| | | − | Lack of a person who stimulates performance of evaluation |
| | Demand for evaluation | + | External funder or alderman to ask for evaluation results |
| | | − | The programme manager does not give a command to start the evaluation process |
| Evaluation feasibility | | | |
| Perceived feasibility of evaluation | Assumptions on feasibility of evaluation | + | Existence of a realistic perception of evaluation |
| | | − | Negative perceptions on feasibility of evaluation as presented in theory and evaluation models |
| | Capabilities of those involved | + | Trust in interpretation of tasks |
| | | − | Lack of trust in capabilities of those that should be involved in the evaluation: programme manager and epidemiologist. |
| Knowledge and attitudes on evaluation | Positive attitude towards evaluation | + | Evaluation is regarded as important |
| | | − | Doubt about possibilities to show effects of ICIA |
| | Knowledge on evaluation | + | Availability of a person with sufficient knowledge on what the (process of) programme evaluation implies and how to conduct such an evaluation |
| | | − | Lack of evaluation knowledge (i.e., oral informal process evaluation has no scientific value) |
| | Perception of own capabilities | + | High self-efficacy to conduct evaluation |
| | | − | Negative perception of one’s own capabilities to evaluate |
| Evaluation feasibility | | | |
| Evaluation resources | Financial resources | + | Allocated financial resources for evaluation process |
| | | − | Limited resources to hire personnel, resulting in limited time |
| | Time | + | Allocated hours for involvement of epidemiologist or evaluation expert |
| | | − | Lack of personnel for data collection; Lack of time for evaluation education |
| | Availability of evaluation instruments | + | Availability of generic suitable evaluation instruments (i.e., questionnaires, logic model) |
| | | − | Non-functioning or incomplete evaluation instruments |
| Commitment of evaluation stakeholders | | | |
| Communication and involvement with evaluation | Communication on evaluation | + | Regular and high degree of communication between programme manager and epidemiologist on evaluation |
| | | − | Low degree of communication on evaluation between programme manager and epidemiologist; Low degree of communication between programme manager and alderman |
| | Involvement in evaluation | + | Active involvement of stakeholders helps to see the added value of evaluation |
| | | − | Involvement of members of the programme structure at strategic as well as tactical and operational level |
| Support from decision-makers at multiple levels | Political support (tactical) | + | Evaluation is considered important by alderman and city council |
| | | − | Competing themes that reduce attention and make fewer resources available; Politicians only interested in long-term goals and not in mid-term or process evaluations |
| | Managerial support (strategic) | + | A pro-active attitude of department management to generate resources and support; a clear policy vision of RPHS supportive to the approach and evaluation |
| | | − | A time consuming policy process to generate extra financial resources |
| | Support from implementers (operational) | + | Stakeholders have a common goal |
| | | − | Limited interest in evaluation from those who implement the ICIA |

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons by Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).


Van Koperen, T.M.; De Kruif, A.; Van Antwerpen, L.; Hendriks, A.-M.; Seidell, J.C.; Schuit, A.J.; Renders, C.M. Barriers to and Facilitators of the Evaluation of Integrated Community-Wide Overweight Intervention Approaches: A Qualitative Case Study in Two Dutch Municipalities. Int. J. Environ. Res. Public Health 2016, 13, 390. https://doi.org/10.3390/ijerph13040390
