Self-Supervised Contrastive Learning and GAN-Based Denoising for High-Fidelity HumanNeRF Images

Highlights

- This paper proposes CD-GAN, a novel self-supervised denoising framework that effectively combines contrastive learning with Generative Adversarial Networks (GANs) to remove complex, structured noise from images generated by HumanNeRF.

- The method operates without the need for any paired “clean” ground truth data by leveraging the intrinsic stochasticity of the HumanNeRF rendering process to construct positive and negative sample pairs for training.

- The proposed method significantly enhances the visual quality of dynamic human neural renderings by not only suppressing noise but also preserving and enhancing critical high-frequency details such as skin texture and clothing wrinkles, providing crucial support for downstream applications like virtual reality and digital avatars.

- The innovative integration of contrastive learning within a self-supervised denoising paradigm offers a new and extensible solution for addressing image quality issues in other neural rendering scenarios.

Abstract

1. Introduction

- I. Proposing an innovative self-supervised denoising architecture that combines contrastive learning and generative adversarial networks.

- II. Designing an efficient self-supervised contrastive learning strategy tailored to the characteristics of neural rendering.

- III. Constructing a multi-objective joint optimization loss function that achieves a balance between denoising and detail enhancement.

2. Related Work

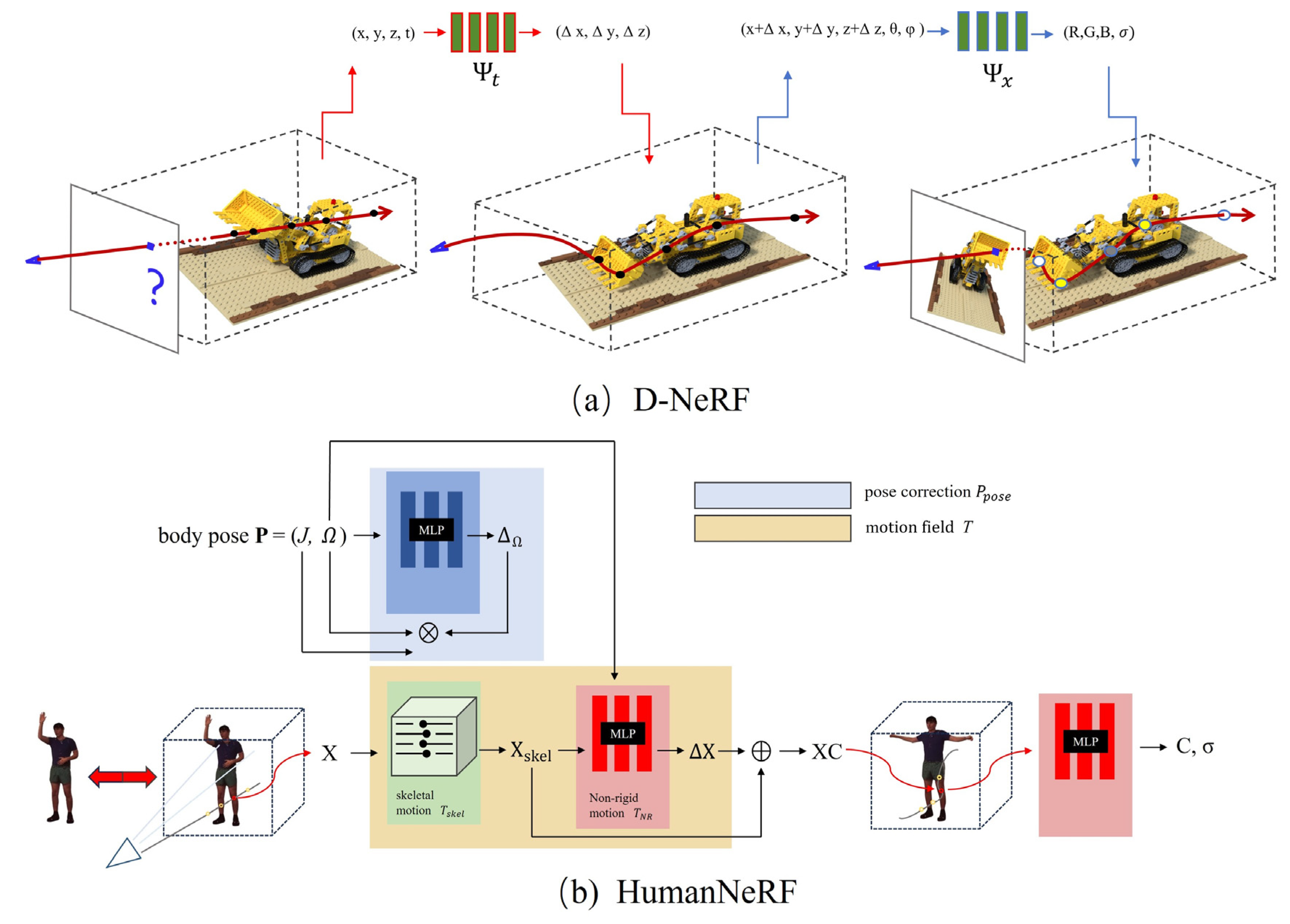

2.1. Dynamic Scenes and Human NeRF

2.2. Deep Learning-Based Image Denoising

| Category | Representative Works | Core Idea | Key Limitation |
|---|---|---|---|
| Supervised Denoising | DnCNN [33], FFDNet [34] | End-to-end mapping learned from paired “clean-noisy” data | Relies on clean ground truth, which is unavailable in NeRF scenes |
| General Self-supervised Denoising | Noise2Noise [35], Noise2Self [36] | Training using the statistical properties of noise | Struggles with structured noise related to geometry |
| Specialized Self-supervised Denoising | CD-GAN | Combines contrastive learning and GANs, trained using rendering stochasticity | |

2.2.1. CNN-Based Supervised Denoising

2.2.2. Self-Supervised and Unsupervised Denoising

2.3. Quality Enhancement and Denoising for NeRF

3. Methodology

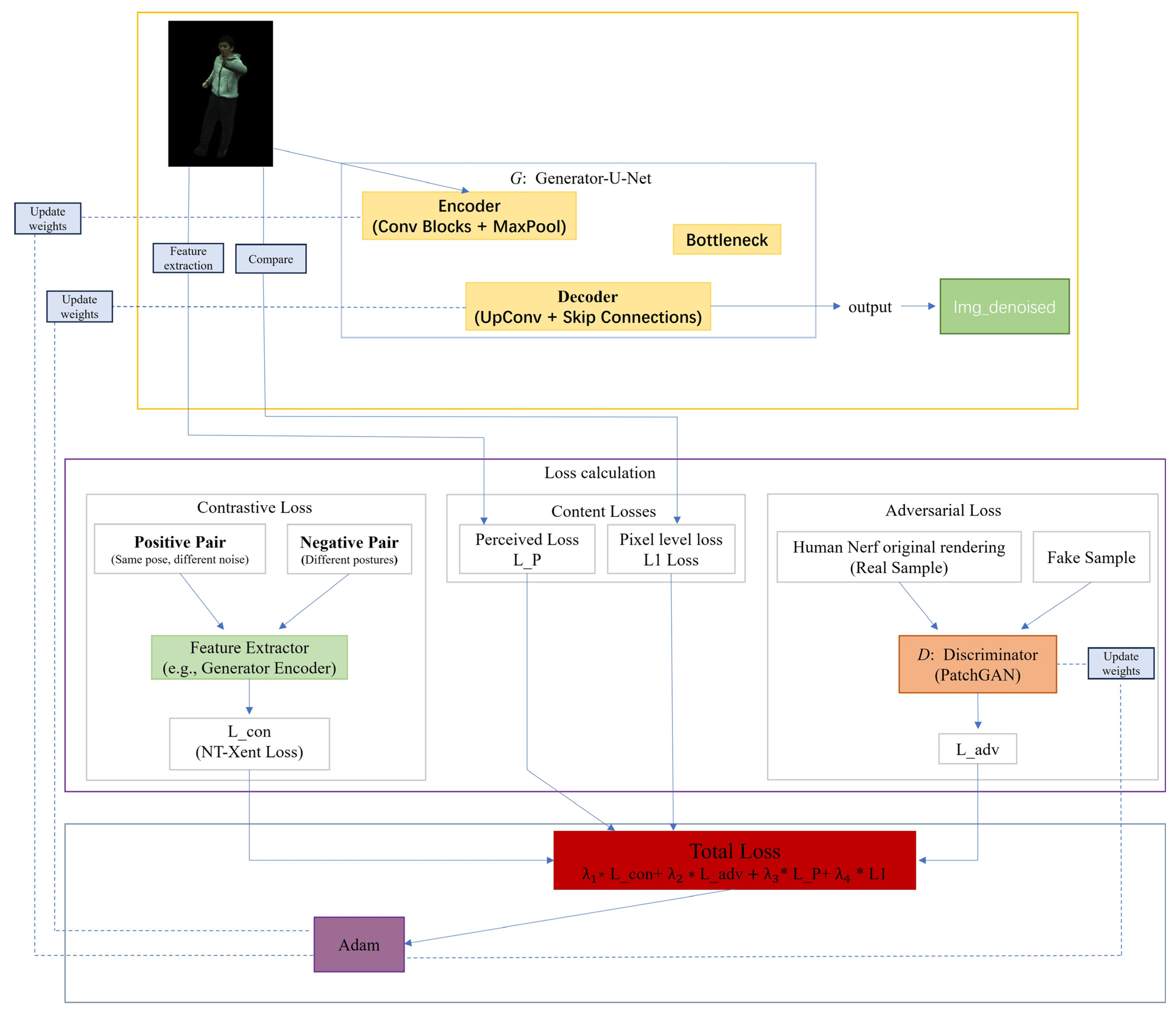

3.1. The Overall Framework of CD-GAN

3.2. Detailed Explanation of Network Architecture

3.2.1. Generator Network

- I. Encoder: The encoder extracts multi-scale, hierarchical features from the input image. It consists of a series of repeated convolutional blocks, each containing two 3 × 3 convolutional layers, followed by a Rectified Linear Unit (ReLU) activation function and batch normalization [40]. After each convolutional block, a 2 × 2 max-pooling layer downsamples the feature maps, halving their spatial resolution, and the next convolutional block doubles the number of feature channels. This process allows the network to progressively expand its receptive field, transitioning from capturing low-level edge and texture information to understanding higher-level semantic content.

- II. Decoder: The decoder progressively restores the abstract features extracted by the encoder into a high-resolution image. Its structure is symmetric to the encoder and is implemented through a series of upsampling blocks. Each upsampling block first uses a 2 × 2 transposed convolution to double the resolution of the feature map. It then uses a skip connection to concatenate the feature map of the current decoder layer with the feature map from the corresponding encoder level along the channel dimension. This step is crucial: it directly “injects” the high-resolution, low-level details captured early by the encoder into the decoding process, which greatly mitigates the information loss caused by downsampling and is essential for reconstructing sharp edges and fine textures. The concatenated feature map is then processed by two 3 × 3 convolutional layers.

- III. Output Layer: In the final layer of the decoder, this paper uses a 1 × 1 convolutional layer to map the multi-channel feature map back to a three-channel RGB image. Finally, a Tanh activation function normalizes the output pixel values to the range [−1, 1].
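The channel and resolution bookkeeping of the encoder, decoder, and output layer described above can be traced with a small shape calculator. This is a minimal sketch in plain Python, not the authors' implementation. The input size (256 × 256), base channel width (64), depth (4), "same" padding for the 3 × 3 convolutions, and channel halving in the transposed convolution are all assumed values that the text does not state, and `trace_unet_shapes` is a hypothetical helper name.

```python
def trace_unet_shapes(height=256, width=256, in_ch=3, base_ch=64, depth=4):
    """Return (stage, channels, height, width) tuples for a symmetric U-Net.

    Assumptions (not given in the text): 256x256 input, 64 base channels,
    4 downsampling steps, 3x3 convolutions with padding 1 (size-preserving),
    and transposed convolutions that halve the channel count.
    """
    if height % (2 ** depth) or width % (2 ** depth):
        raise ValueError("height and width must be divisible by 2**depth")

    shapes = [("input", in_ch, height, width)]
    skips = []
    ch, h, w = base_ch, height, width

    # Encoder: convolutional block, store its output as a skip, then pool.
    for level in range(depth):
        shapes.append((f"enc{level}", ch, h, w))
        skips.append((ch, h, w))
        h, w = h // 2, w // 2   # 2x2 max-pooling halves the resolution
        ch *= 2                 # the next block doubles the channels

    shapes.append(("bottleneck", ch, h, w))

    # Decoder: upsample, concatenate the matching skip, then two 3x3 convs.
    for level in reversed(range(depth)):
        skip_ch, skip_h, skip_w = skips[level]
        h, w = h * 2, w * 2     # 2x2 transposed convolution doubles the size
        ch //= 2                # assumed: it also halves the channels
        assert (h, w) == (skip_h, skip_w), "skip and decoder sizes must match"
        shapes.append((f"dec{level}_concat", ch + skip_ch, h, w))
        shapes.append((f"dec{level}", ch, h, w))

    # 1x1 convolution to RGB followed by Tanh; the shape is unchanged by Tanh.
    shapes.append(("output_tanh", 3, h, w))
    return shapes


if __name__ == "__main__":
    for stage, channels, rows, cols in trace_unet_shapes():
        print(f"{stage:>14}: {channels:4d} x {rows:3d} x {cols:3d}")
```

The trace makes the role of the skip connections visible: each `dec*_concat` stage carries twice the channels of the decoder stage that follows it, because the encoder features are stacked along the channel dimension before the two 3 × 3 convolutions reduce them again.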

3.2.2. Discriminator Network

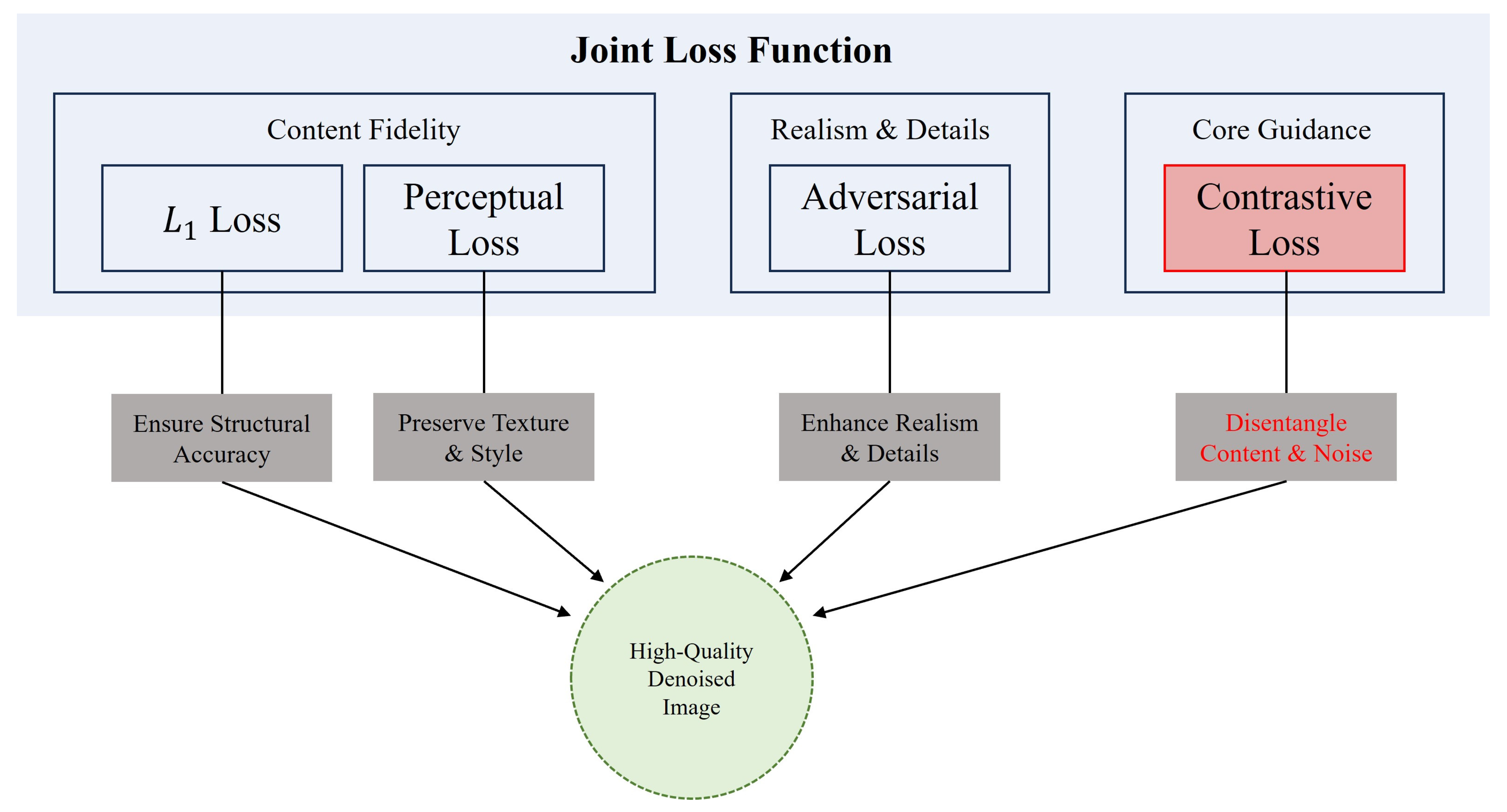

3.3. Joint Loss Function

3.3.1. Adversarial Loss

3.3.2. Self-Supervised Contrastive Loss

3.3.3. Content Preservation Loss

- I. Pixel-level Loss: This paper uses the L1 loss [44] to constrain the similarity between the generated image and the input image at the pixel level. L1 loss tends to produce sharper edges, and its definition is:

- II. Perceptual Loss: To preserve consistency in high-level semantics and textural style, a perceptual loss [45] is employed. It is computed with a VGG19 network [46,47], pre-trained on ImageNet with its weights frozen and denoted as Φ. The perceptual loss is then defined as the Euclidean distance between the feature maps of the generated and input images, extracted from specific intermediate layers of the VGG network:
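Written out, the two content-preservation terms described above would take roughly the following form. This is a reconstruction from the prose rather than the paper's own equations. The symbols $x$ (noisy input render), $G$ (generator), and $j$ (index over the selected VGG19 layers) are assumptions, as is the absence of any per-layer weighting or normalization.

```latex
\mathcal{L}_{1} = \mathbb{E}_{x}\left[\, \lVert G(x) - x \rVert_{1} \,\right],
\qquad
\mathcal{L}_{P} = \mathbb{E}_{x}\left[\, \sum_{j} \lVert \Phi_{j}(G(x)) - \Phi_{j}(x) \rVert_{2} \,\right]
```

Both terms compare the generator output with its own input rather than with a clean target, which is what allows them to be used without paired ground truth.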

3.4. Methodological Discussion

3.4.1. Noise Disentanglement and Content Generation Under the Self-Supervised Paradigm

3.4.2. Synergy and Balance of Multi-Objective Losses

4. Experiments

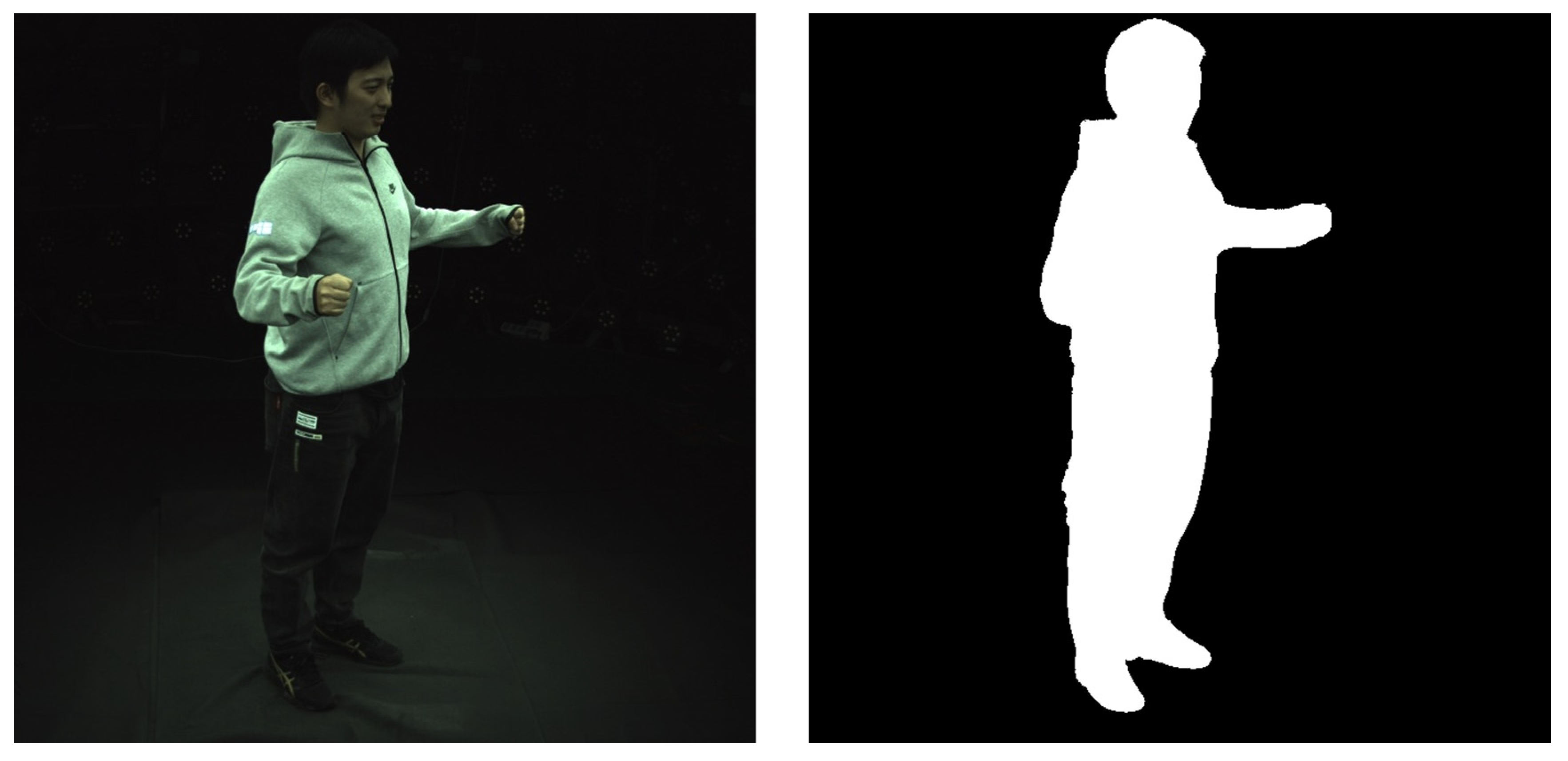

4.1. Datasets and Training Details

4.1.1. Datasets Preprocessing

- I. Data Filtering and Reorganization: We extracted the image sequences and corresponding camera intrinsic and extrinsic parameters for each camera view from the original dataset. For ease of management, we stored all views’ images uniformly in an “images” folder.

- II. Foreground Mask Generation: To enable the model to focus on the human subject and ignore background interference, we generated precise foreground masks for each image frame. These binary masks accurately segment the human silhouette and are uniformly stored in a “masks” folder. During training, these masks are used to ensure that ray sampling and loss calculation are performed only within the human body region.

- III. Parameter Integration and Packaging: We integrated the camera parameters, SMPL pose parameters, and pre-calculated human mesh information for all frames and packaged them into .pkl and .yaml files, such as cameras.pkl and mesh_infos.pkl. This packaging process not only improves data loading efficiency but also makes the entire dataset structure clearer and more modular.
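The packaging and masking steps above can be sketched with Python's standard library. The file name `cameras.pkl` comes from the text. The dictionary layout (frame name mapped to `intrinsics` and `extrinsics`), the helper names `pack_cameras`, `load_cameras`, and `masked_l1`, and the toy values are assumptions for illustration, not the dataset's actual schema.

```python
import pickle
import tempfile
from pathlib import Path


def pack_cameras(frames, out_dir):
    """Write per-frame camera parameters to <out_dir>/cameras.pkl.

    `frames` maps a frame name to a dict holding 'intrinsics' and
    'extrinsics' as nested lists. This layout is an assumption.
    """
    out_path = Path(out_dir) / "cameras.pkl"
    with open(out_path, "wb") as f:
        pickle.dump(frames, f)
    return out_path


def load_cameras(path):
    """Read the dictionary written by pack_cameras."""
    with open(path, "rb") as f:
        return pickle.load(f)


def masked_l1(pred, target, mask):
    """Mean absolute error over pixels where the binary mask is nonzero."""
    total, count = 0.0, 0
    for p_row, t_row, m_row in zip(pred, target, mask):
        for p, t, m in zip(p_row, t_row, m_row):
            if m:
                total += abs(p - t)
                count += 1
    return total / count if count else 0.0


if __name__ == "__main__":
    toy_frames = {
        "frame_000000": {
            "intrinsics": [[500.0, 0.0, 256.0], [0.0, 500.0, 256.0], [0.0, 0.0, 1.0]],
            "extrinsics": [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0],
                           [0.0, 0.0, 1.0, 2.5], [0.0, 0.0, 0.0, 1.0]],
        }
    }
    with tempfile.TemporaryDirectory() as tmp:
        path = pack_cameras(toy_frames, tmp)
        print(path.name, sorted(load_cameras(path)))

    # Only the two foreground pixels (mask == 1) contribute to the loss.
    pred = [[0.5, 1.0], [0.0, 0.2]]
    target = [[0.0, 1.0], [0.0, 1.0]]
    mask = [[1, 0], [0, 1]]
    print(masked_l1(pred, target, mask))
```

Restricting the loss to masked pixels means background differences contribute nothing, which matches the stated intent of computing the loss only within the human body region.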

4.1.2. Training Details

4.2. Evaluation Metrics

4.3. Ablation Study

4.4. Quantitative Analysis

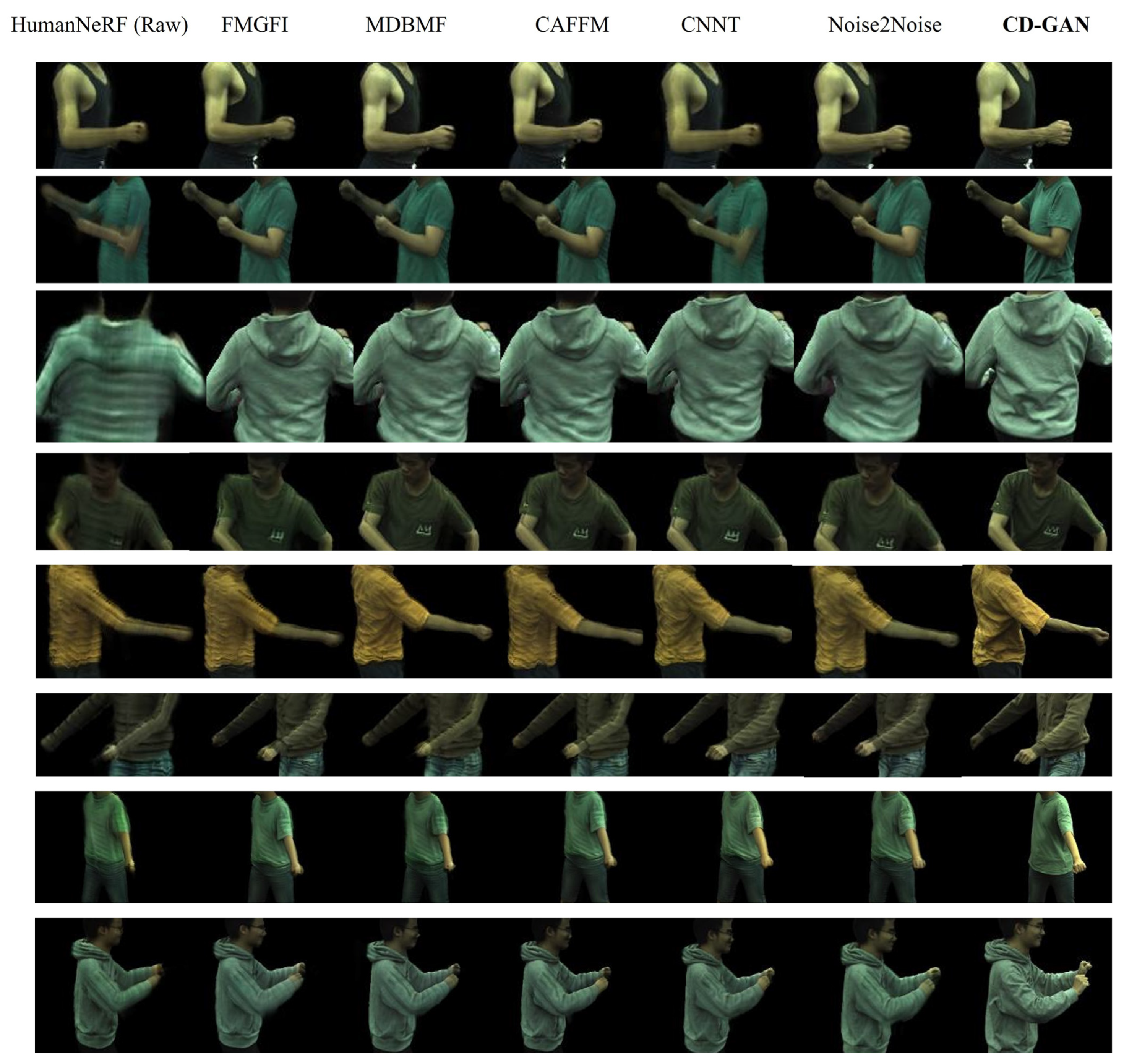

4.5. Qualitative Analysis

5. Limitations and Future Work

- I. Computational Overhead: As a post-processing step based on a U-Net architecture, CD-GAN adds significant computational cost during inference compared to direct rendering, limiting its applicability in real-time HumanNeRF applications.

- II. Generalization to Extreme Cases: Our current model may underperform in highly complex scenarios not well represented in the ZJU-MoCap dataset, such as scenes with extremely sparse input views, rapidly changing dynamic lighting, or highly transparent clothing materials.

- III. Domain Specificity: The contrastive learning strategy is tightly coupled with the stochasticity of neural rendering, requiring specific data preparation (e.g., generating noisy–noisy pairs), which may hinder immediate transferability to other general image-to-image tasks.
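To make the data-preparation requirement in the Domain Specificity item concrete, the following sketch shows one way noisy–noisy pairs could feed a contrastive objective. It is an illustration under stated assumptions, not the paper's loss: the pairing rule (two stochastic renders of the same frame form a positive pair, renders of other frames act as negatives), the InfoNCE form, cosine similarity, and the temperature of 0.1 are all assumed, and `build_pairs` and `info_nce` are hypothetical names.

```python
import math


def cosine(u, v):
    """Cosine similarity of two non-zero embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE loss for a single anchor embedding (assumed loss form)."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))


def build_pairs(renders_by_frame):
    """Yield (anchor, positive, negatives) triples.

    `renders_by_frame` maps a frame id to the embeddings of two stochastic
    renders of that frame. Assumed rule: the two renders of one frame are a
    positive pair, and every render of any other frame is a negative.
    """
    for frame_id, (first, second) in renders_by_frame.items():
        negatives = [
            emb
            for other_id, pair in renders_by_frame.items()
            if other_id != frame_id
            for emb in pair
        ]
        yield first, second, negatives


if __name__ == "__main__":
    # Toy 2-D embeddings standing in for encoder features of rendered images.
    renders = {
        "frame_a": ([1.0, 0.1], [0.9, 0.2]),
        "frame_b": ([0.1, 1.0], [0.2, 0.9]),
    }
    for anchor, positive, negatives in build_pairs(renders):
        print(info_nce(anchor, positive, negatives))
```

Because the pairs depend on rendering the same frame more than once, a task without a stochastic renderer would need a different way to obtain positives, which is the transferability concern raised above.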

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A. Detailed Quantitative Results

| Method | Subject 377 PSNR ↑ | Subject 377 MSE ↓ | Subject 377 LPIPS ↓ | Subject 386 PSNR ↑ | Subject 386 MSE ↓ | Subject 386 LPIPS ↓ |
|---|---|---|---|---|---|---|
| FMGFI | 28.54 | 0.0014 | 0.062 | 29.24 | 0.0012 | 0.0765 |
| MDBMF | 28.86 | 0.0013 | 0.0763 | 31.55 | 0.0007 | 0.0303 |
| CAFFM | 28.24 | 0.0015 | 0.0705 | 30.97 | 0.0008 | 0.0323 |
| CNNT | 25.68 | 0.0027 | 0.0752 | 27.96 | 0.0016 | 0.0877 |
| Noise2Noise | 30.00 | 0.0010 | 0.0404 | 28.54 | 0.0014 | 0.0625 |
| CD-GAN | 30.97 | 0.0008 | 0.0036 | 33.98 | 0.0004 | 0.0285 |

| Method | Subject 387 PSNR ↑ | Subject 387 MSE ↓ | Subject 387 LPIPS ↓ | Subject 392 PSNR ↑ | Subject 392 MSE ↓ | Subject 392 LPIPS ↓ |
|---|---|---|---|---|---|---|
| FMGFI | 29.21 | 0.0012 | 0.0784 | 27.45 | 0.0018 | 0.0737 |
| MDBMF | 30.00 | 0.0010 | 0.0553 | 29.59 | 0.0011 | 0.0705 |
| CAFFM | 31.55 | 0.0007 | 0.0777 | 28.24 | 0.0015 | 0.0671 |
| CNNT | 30.46 | 0.0009 | 0.0596 | 28.54 | 0.0014 | 0.0847 |
| Noise2Noise | 31.55 | 0.0007 | 0.0622 | 28.86 | 0.0013 | 0.0539 |
| CD-GAN | 32.22 | 0.0006 | 0.0502 | 30.00 | 0.0010 | 0.0233 |

| Method | Subject 393 PSNR ↑ | Subject 393 MSE ↓ | Subject 393 LPIPS ↓ | Subject 394 PSNR ↑ | Subject 394 MSE ↓ | Subject 394 LPIPS ↓ |
|---|---|---|---|---|---|---|
| FMGFI | 26.02 | 0.0025 | 0.0884 | 28.54 | 0.0014 | 0.0676 |
| MDBMF | 28.24 | 0.0015 | 0.0831 | 26.99 | 0.0020 | 0.1052 |
| CAFFM | 26.77 | 0.0021 | 0.0848 | 28.24 | 0.0015 | 0.0886 |
| CNNT | 27.96 | 0.0016 | 0.1132 | 29.59 | 0.0011 | 0.0605 |
| Noise2Noise | 27.77 | 0.0017 | 0.1125 | 27.45 | 0.0018 | 0.0860 |
| CD-GAN | 29.21 | 0.0012 | 0.0791 | 30.46 | 0.0009 | 0.0601 |

| Method | Subject 313 PSNR ↑ | Subject 313 MSE ↓ | Subject 313 LPIPS ↓ | Subject 390 PSNR ↑ | Subject 390 MSE ↓ | Subject 390 LPIPS ↓ |
|---|---|---|---|---|---|---|
| FMGFI | 29.50 | 0.0012 | 0.0710 | 30.00 | 0.0010 | 0.0669 |
| MDBMF | 28.50 | 0.0015 | 0.0700 | 29.00 | 0.0013 | 0.0647 |
| CAFFM | 24.50 | 0.0023 | 0.0650 | 25.92 | 0.0019 | 0.0591 |
| CNNT | 25.00 | 0.0022 | 0.0850 | 26.69 | 0.0018 | 0.0786 |
| Noise2Noise | 28.90 | 0.0013 | 0.0720 | 29.52 | 0.0011 | 0.0679 |
| CD-GAN | 30.10 | 0.0009 | 0.0450 | 30.14 | 0.0009 | 0.0422 |
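Several PSNR and MSE entries in the Appendix A tables are consistent with the standard relation PSNR = 10 · log10(MAX² / MSE) for a peak value MAX of 1. The sketch below checks that relation on a handful of (MSE, PSNR) pairs copied from the tables. The peak value of 1.0 (pixel intensities scaled to [0, 1]) is an assumption, and because the reported MSE values are rounded to four decimals, not every row reproduces exactly.

```python
import math


def psnr_from_mse(mse, peak=1.0):
    """Peak signal-to-noise ratio in dB for a given mean squared error.

    peak=1.0 assumes intensities scaled to [0, 1]; the text does not
    state the scaling used for the reported numbers.
    """
    if mse <= 0:
        raise ValueError("mse must be positive")
    return 10.0 * math.log10(peak ** 2 / mse)


# (MSE, reported PSNR) pairs copied from the Appendix A tables.
REPORTED = [
    (0.0010, 30.00),
    (0.0014, 28.54),
    (0.0008, 30.97),
    (0.0006, 32.22),
    (0.0004, 33.98),
]

if __name__ == "__main__":
    for mse, reported in REPORTED:
        computed = psnr_from_mse(mse)
        print(f"MSE={mse:.4f}  reported={reported:.2f}  computed={computed:.2f}")
```

Since PSNR is a monotone function of MSE, the two columns rank methods identically, and LPIPS is the only column that carries independent perceptual information.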

References

- Mildenhall, B.; Srinivasan, P.P.; Tancik, M.; Barron, J.T.; Ramamoorthi, R.; Ng, R. Nerf: Representing scenes as neural radiance fields for view synthesis. Commun. ACM 2021, 65, 99–106.

- Weng, C.Y.; Curless, B.; Srinivasan, P.P.; Barron, J.T.; Kemelmacher-Shlizerman, I. Humannerf: Free-viewpoint rendering of moving people from monocular video. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 16210–16220.

- Hu, S.; Hong, F.; Pan, L.; Mei, H.; Yang, L.; Liu, Z. Sherf: Generalizable human nerf from a single image. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 1–6 October 2023; pp. 9352–9364.

- Işık, M.; Rünz, M.; Georgopoulos, M.; Khakhulin, T.; Starck, J.; Agapito, L.; Nießner, M. Humanrf: High-fidelity neural radiance fields for humans in motion. ACM Trans. Graph. TOG 2023, 42, 1–12.

- Ma, C.; Liu, Y.L.; Wang, Z.; Liu, W.; Liu, X.; Wang, Z. Humannerf-se: A simple yet effective approach to animate humannerf with diverse poses. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 16–22 June 2024; pp. 1460–1470.

- Shetty, A.; Habermann, M.; Sun, G.; Luvizon, D.; Golyanik, V.; Theobalt, C. Holoported characters: Real-time free-viewpoint rendering of humans from sparse rgb cameras. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 16–22 June 2024; pp. 1206–1215.

- Zhu, M.; Xu, Z. CCNet: A Cross-Channel Enhanced CNN for Blind Image Denoising. Comput. Intell. 2025, 41, e70063.

- Chae, J.; Hong, S.; Kim, S.; Yoon, S.; Kim, G. CNN-based TEM image denoising from first principles. arXiv 2025, arXiv:2501.11225.

- Hu, Y.; Tian, C.; Zhang, J.; Zhang, S. Efficient image denoising with heterogeneous kernel-based CNN. Neurocomputing 2024, 592, 127799.

- Abbasnejad, I.; Zambetta, F.; Salim, F.; Wiley, T.; Chan, J.; Gallagher, R.; Abbasnejad, E. SCONE-GAN: Semantic Contrastive learning-based Generative Adversarial Network for an end-to-end image translation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 1111–1120.

- Li, T.; Katabi, D.; He, K. Return of unconditional generation: A self-supervised representation generation method. Adv. Neural Inf. Process. Syst. 2024, 37, 125441–125468.

- Zhang, H.; Yang, M.; Wang, H.; Qiu, Y. A strategy for improving GAN generation: Contrastive self-adversarial training. Neurocomputing 2025, 637, 129864.

- Yamaguchi, Y.; Yoshida, I.; Kondo, Y.; Numada, M.; Koshimizu, H.; Oshiro, K.; Saito, R. Edge-preserving smoothing filter using fast M-estimation method with an automatic determination algorithm for basic width. Sci. Rep. 2023, 13, 5477.

- Ullah, F.; Kumar, K.; Rahim, T.; Khan, J.; Jung, Y. A new hybrid image denoising algorithm using adaptive and modified decision-based filters for enhanced image quality. Sci. Rep. 2025, 15, 8971.

- Wang, T.; Hu, Z.; Guan, Y. An efficient lightweight network for image denoising using progressive residual and convolutional attention feature fusion. Sci. Rep. 2024, 14, 9554.

- Rehman, A.; Zhovmer, A.; Sato, R.; Mukouyama, Y.S.; Chen, J.; Rissone, A.; Puertollano, R.; Liu, J.; Vishwasrao, H.D.; Shroff, H.; et al. Convolutional neural network transformer (CNNT) for fluorescence microscopy image denoising with improved generalization and fast adaptation. Sci. Rep. 2024, 14, 18184.

- Lehtinen, J.; Munkberg, J.; Hasselgren, J.; Laine, S.; Karras, T.; Aittala, M.; Aila, T. Noise2Noise: Learning image restoration without clean data. arXiv 2018, arXiv:1803.04189.

- Tretschk, E.; Tewari, A.; Golyanik, V.; Zollhöfer, M.; Lassner, C.; Theobalt, C. Non-rigid neural radiance fields: Reconstruction and novel view synthesis of a dynamic scene from monocular video. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 12959–12970.

- Zhang, X.; Srinivasan, P.P.; Deng, B.; Debevec, P.; Freeman, W.T.; Barron, J.T. Nerfactor: Neural factorization of shape and reflectance under an unknown illumination. ACM Trans. Graph. (ToG) 2021, 40, 1–18.

- Pumarola, A.; Corona, E.; Pons-Moll, G.; Moreno-Noguer, F. D-nerf: Neural radiance fields for dynamic scenes. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 10318–10327.

- Fang, J.; Yi, T.; Wang, X.; Xie, L.; Zhang, X.; Liu, W.; Nießner, M.; Tian, Q. Fast dynamic radiance fields with time-aware neural voxels. In Proceedings of the SIGGRAPH Asia 2022 Conference Papers, Daegu, Republic of Korea, 6–9 December 2022; pp. 1–9.

- Xu, W.; Huang, M.; Xu, Q. A DNeRF Image Denoising Method Based on MSAF-DT. IET Image Process. 2025, 19, e70122.

- Yan, W.; Chen, Y.; Zhou, W.; Cong, R. Mvoxti-dnerf: Explicit multi-scale voxel interpolation and temporal encoding network for efficient dynamic neural radiance field. IEEE Trans. Autom. Sci. Eng. 2024, 22, 5096–5107.

- Park, K.; Sinha, U.; Barron, J.T.; Bouaziz, S.; Goldman, D.B.; Seitz, S.M.; Martin-Brualla, R. Nerfies: Deformable neural radiance fields. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 5865–5874.

- Park, K.; Sinha, U.; Hedman, P.; Barron, J.T.; Bouaziz, S.; Goldman, D.B.; Martin-Brualla, R.; Seitz, S.M. Hypernerf: A higher-dimensional representation for topologically varying neural radiance fields. arXiv 2021, arXiv:2106.13228.

- Kania, K.; Yi, K.M.; Kowalski, M.; Trzciński, T.; Tagliasacchi, A. Conerf: Controllable neural radiance fields. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 18623–18632.

- Loper, M.; Mahmood, N.; Romero, J.; Pons-Moll, G.; Black, M.J. SMPL: A skinned multi-person linear model. In Seminal Graphics Papers: Pushing the Boundaries; Association for Computing Machinery: New York, NY, USA, 2023; Volume 2, pp. 851–866.

- Peng, S.; Dong, J.; Wang, Q.; Zhang, S.; Shuai, Q.; Zhou, X.; Bao, H. Animatable neural radiance fields for modeling dynamic human bodies. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 14314–14323.

- Mu, J.; Sang, S.; Vasconcelos, N.; Wang, X. Actorsnerf: Animatable few-shot human rendering with generalizable nerfs. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 1–6 October 2023; pp. 18391–18401.

- Peng, S.; Zhang, Y.; Xu, Y.; Wang, Q.; Shuai, Q.; Bao, H.; Zhou, X. Neural body: Implicit neural representations with structured latent codes for novel view synthesis of dynamic humans. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 9054–9063.

- Liu, L.; Habermann, M.; Rudnev, V.; Sarkar, K.; Gu, J.; Theobalt, C. Neural actor: Neural free-view synthesis of human actors with pose control. ACM Trans. Graph. (TOG) 2021, 40, 1–16.

- Zhao, H.; Zhang, L.; Rosin, P.L.; Lai, Y.K.; Wang, Y. HairManip: High quality hair manipulation via hair element disentangling. Pattern Recognit. 2024, 147, 110132.

- Zhang, K.; Zuo, W.; Chen, Y.; Meng, D.; Zhang, L. Beyond a gaussian denoiser: Residual learning of deep cnn for image denoising. IEEE Trans. Image Process. 2017, 26, 3142–3155.

- Zhang, K.; Zuo, W.; Zhang, L. FFDNet: Toward a fast and flexible solution for CNN-based image denoising. IEEE Trans. Image Process. 2018, 27, 4608–4622.

- Mansour, Y.; Heckel, R. Zero-shot noise2noise: Efficient image denoising without any data. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 14018–14027.

- Batson, J.; Royer, L. Noise2self: Blind denoising by self-supervision. In Proceedings of the 36th International Conference on Machine Learning, PMLR 97, Long Beach, CA, USA, 9–15 June 2019; pp. 524–533.

- Barron, J.T.; Mildenhall, B.; Tancik, M.; Hedman, P.; Martin-Brualla, R.; Srinivasan, P.P. Mip-nerf: A multiscale representation for anti-aliasing neural radiance fields. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 5855–5864.

- Verbin, D.; Hedman, P.; Mildenhall, B.; Zickler, T.; Barron, J.T.; Srinivasan, P.P. Ref-nerf: Structured view-dependent appearance for neural radiance fields. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 47, 9426–9437.

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention–MICCAI 2015: 18th International Conference, Munich, Germany, 5–9 October 2015, Proceedings, Part III 18; Springer International Publishing: Berlin/Heidelberg, Germany, 2015; pp. 234–241.

- Balestriero, R.; Baraniuk, R.G. Batch normalization explained. arXiv 2022, arXiv:2209.14778.

- Goodfellow, I.J.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial nets. arXiv 2014, arXiv:1406.2661.

- Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A simple framework for contrastive learning of visual representations. In Proceedings of the International Conference on Machine Learning, Virtual, 13–18 July 2020; pp. 1597–1607.

- Khaertdinov, B.; Asteriadis, S.; Ghaleb, E. Dynamic temperature scaling in contrastive self-supervised learning for sensor-based human activity recognition. IEEE Trans. Biom. Behav. Identity Sci. 2022, 4, 498–507.

- Isola, P.; Zhu, J.Y.; Zhou, T.; Efros, A.A. Image-to-image translation with conditional adversarial networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1125–1134.

- Johnson, J.; Alahi, A.; Fei-Fei, L. Perceptual losses for real-time style transfer and super-resolution. In Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, October 11–14, 2016, Proceedings, Part II 14; Springer International Publishing: Berlin/Heidelberg, Germany, 2016; pp. 694–711.

- Mascarenhas, S.; Agarwal, M. A comparison between VGG16, VGG19 and ResNet50 architecture frameworks for Image Classification. In Proceedings of the 2021 International conference on disruptive technologies for multi-disciplinary research and applications (CENTCON), Bengaluru, India, 19–21 November 2021; Volume 1, pp. 96–99.

- Shaha, M.; Pawar, M. Transfer learning for image classification. In Proceedings of the 2018 Second International Conference on Electronics, Communication and Aerospace Technology (ICECA), Coimbatore, India, 29–31 March 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 656–660.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

| Model Variant | L1 | L_adv | L_con | L_P | PSNR ↑ | MSE ↓ | LPIPS ↓ |
|---|---|---|---|---|---|---|---|
| HumanNeRF (Raw) | — | — | — | — | 27.21 | 0.0019 | 0.0892 |
| Baseline (L1 only) | √ | — | — | — | 28.53 | 0.0014 | 0.0641 |
| w/o Contrastive | √ | √ | — | √ | 29.62 | 0.0011 | 0.0640 |
| w/o Adversarial | √ | — | √ | √ | 30.46 | 0.0009 | 0.0534 |
| w/o Perceptual | √ | √ | √ | — | 30.97 | 0.0008 | 0.0515 |
| CD-GAN (Ours) | √ | √ | √ | √ | 32.22 | 0.0006 | 0.0502 |
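One way to read the ablation table above is to compute how much PSNR each variant loses relative to the full model. The sketch below does that arithmetic on the PSNR column as reported. The helper name `psnr_drop_vs_full` is hypothetical, and the differences are simple subtractions rather than a statistical analysis.

```python
# PSNR values (dB) copied from the ablation table.
ABLATION_PSNR = {
    "HumanNeRF (Raw)": 27.21,
    "Baseline (L1 only)": 28.53,
    "w/o Contrastive": 29.62,
    "w/o Adversarial": 30.46,
    "w/o Perceptual": 30.97,
    "CD-GAN (Ours)": 32.22,
}


def psnr_drop_vs_full(table, full_key="CD-GAN (Ours)"):
    """Return each variant's PSNR deficit versus the full model, largest first."""
    full = table[full_key]
    drops = {
        name: round(full - value, 2)
        for name, value in table.items()
        if name != full_key
    }
    return dict(sorted(drops.items(), key=lambda item: item[1], reverse=True))


if __name__ == "__main__":
    for name, drop in psnr_drop_vs_full(ABLATION_PSNR).items():
        print(f"{name:<20} -{drop:.2f} dB")
```

Among the three variants that remove a single term, dropping the contrastive loss costs the most PSNR (about 2.60 dB), followed by the adversarial loss (about 1.76 dB) and the perceptual loss (about 1.25 dB).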

| Method | PSNR ↑ | MSE ↓ | LPIPS ↓ |
|---|---|---|---|
| FMGFI | 28.56 | 0.0015 | 0.0731 |
| MDBMF | 29.10 | 0.0013 | 0.0694 |
| CAFFM | 28.05 | 0.0015 | 0.0681 |
| CNNT | 27.74 | 0.0017 | 0.0806 |
| Noise2Noise | 29.07 | 0.0013 | 0.0697 |
| CD-GAN | 30.89 | 0.0008 | 0.0415 |
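The CD-GAN row of the summary table above can be cross-checked against the eight per-subject CD-GAN rows in Appendix A. The sketch below averages the per-subject PSNR and LPIPS values as reported there. That the summary row is a plain unweighted mean over subjects is an assumption, although the numbers agree with it to the reported precision.

```python
from statistics import mean

# CD-GAN per-subject values copied from Appendix A, in the order
# 377, 386, 387, 392, 393, 394, 313, 390.
CDGAN_PSNR = [30.97, 33.98, 32.22, 30.00, 29.21, 30.46, 30.10, 30.14]
CDGAN_LPIPS = [0.0036, 0.0285, 0.0502, 0.0233, 0.0791, 0.0601, 0.0450, 0.0422]

# Summary-table values for the CD-GAN row.
SUMMARY_PSNR = 30.89
SUMMARY_LPIPS = 0.0415


def matches_summary(values, reported, tolerance):
    """True if the unweighted mean of `values` is within `tolerance` of `reported`."""
    return abs(mean(values) - reported) <= tolerance


if __name__ == "__main__":
    print("mean PSNR :", mean(CDGAN_PSNR))
    print("mean LPIPS:", mean(CDGAN_LPIPS))
    print(matches_summary(CDGAN_PSNR, SUMMARY_PSNR, 0.01))
    print(matches_summary(CDGAN_LPIPS, SUMMARY_LPIPS, 0.0001))
```

The agreement suggests each subject contributes equally to the headline numbers, so a single subject with an unusually low LPIPS can noticeably shift the average.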

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Xu, Q.; Xu, W.; Huang, M.; Yan, W.; Guo, Y. Self-Supervised Contrastive Learning and GAN-Based Denoising for High-Fidelity HumanNeRF Images. Sensors 2026, 26, 249. https://doi.org/10.3390/s26010249
