Multi-Calib: A Scalable LiDAR–Camera Calibration Network for Variable Sensor Configurations

Abstract

1. Introduction

- First, we adopt a large-scale pre-trained Swin Transformer as a unified backbone for joint feature extraction from both RGB (Red Green Blue) images and depth maps. Leveraging pre-trained weights significantly reduces the training cost and improves memory efficiency during iterative inference.

- Second, we design a cross-modal channel-wise attention mechanism that facilitates multi-scale feature integration between camera and LiDAR modalities. This mutually guided attention module enhances salient and correspondingly aligned features via context-aware reinforcement.

- Finally, we implement a configurable calibration head architecture that accommodates varying numbers of cameras. Each head independently predicts the extrinsics for its corresponding monocular image–depth map pair. This modular design avoids cross-view feature entanglement, effectively mitigating the artifacts caused by shared-head architectures and preserving viewpoint-specific calibration fidelity.

- To address multi-sensor calibration, we present Multi-Calib, an online deep learning model with explicit depth supervision capable of aligning multiple LiDAR sensors and cameras.

- We introduce a cross-modal channel-wise attention module for mutually guided fusion between camera and LiDAR features, achieving highly efficient integration.

- A novel loss function is developed based on the intrinsic correlation between translation and rotation errors, effectively improving LiDAR–camera registration accuracy.
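The cross-modal channel-wise attention and the per-camera calibration heads listed above can be pictured with a small NumPy sketch. The source does not spell out the internals, so everything here is an assumption for illustration only: the squeeze-and-excitation style gating, the sharing of gate weights across cameras, the linear `CalibHead`, the six-value output (three translation and three rotation components), and all names, shapes, and random weights are hypothetical and are not the authors' implementation.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_gate(feat, w1, w2):
    """Return one weight in (0, 1) per channel of a (C, H, W) feature map."""
    pooled = feat.mean(axis=(1, 2))        # global average pool -> (C,)
    hidden = np.maximum(w1 @ pooled, 0.0)  # bottleneck projection + ReLU
    return sigmoid(w2 @ hidden)            # per-channel gate -> (C,)

def cross_modal_fusion(cam, lidar, cam_params, lidar_params):
    """Gate each modality with weights computed from the other, then concatenate."""
    gate_for_cam = channel_gate(lidar, *lidar_params)  # LiDAR guides camera
    gate_for_lidar = channel_gate(cam, *cam_params)    # camera guides LiDAR
    cam_out = cam * gate_for_cam[:, None, None]
    lidar_out = lidar * gate_for_lidar[:, None, None]
    return np.concatenate([cam_out, lidar_out], axis=0)  # (2C, H, W)

class CalibHead:
    """Hypothetical linear head: pooled fused features -> 3 translation + 3 rotation values."""

    def __init__(self, in_dim, rng):
        self.w = rng.normal(scale=0.01, size=(6, in_dim))

    def __call__(self, fused):
        out = self.w @ fused.mean(axis=(1, 2))
        return out[:3], out[3:]

rng = np.random.default_rng(0)
C, H, W, num_cameras = 8, 4, 6, 6  # toy sizes, chosen only for the demo

def make_gate_params():
    # Assumed bottleneck of C // 2 channels inside each gate.
    return rng.normal(size=(C // 2, C)), rng.normal(size=(C, C // 2))

cam_params, lidar_params = make_gate_params(), make_gate_params()

# One independent head per camera; changing num_cameras changes only this list.
heads = [CalibHead(2 * C, rng) for _ in range(num_cameras)]

predictions = []
for head in heads:
    cam_feat = rng.normal(size=(C, H, W))    # stand-in for image features
    depth_feat = rng.normal(size=(C, H, W))  # stand-in for depth-map features
    fused = cross_modal_fusion(cam_feat, depth_feat, cam_params, lidar_params)
    predictions.append(head(fused))
```

Because each head only ever sees the fused features of its own image and depth-map pair, no information flows between views in this sketch, which is the property the modular design is meant to preserve.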

2. Related Work

2.1. Offline Calibration

2.2. Online Calibration

3. Method

3.1. Problem Formulation

3.2. Network Architecture

3.3. Loss Functions

Regression Loss

4. Experiments

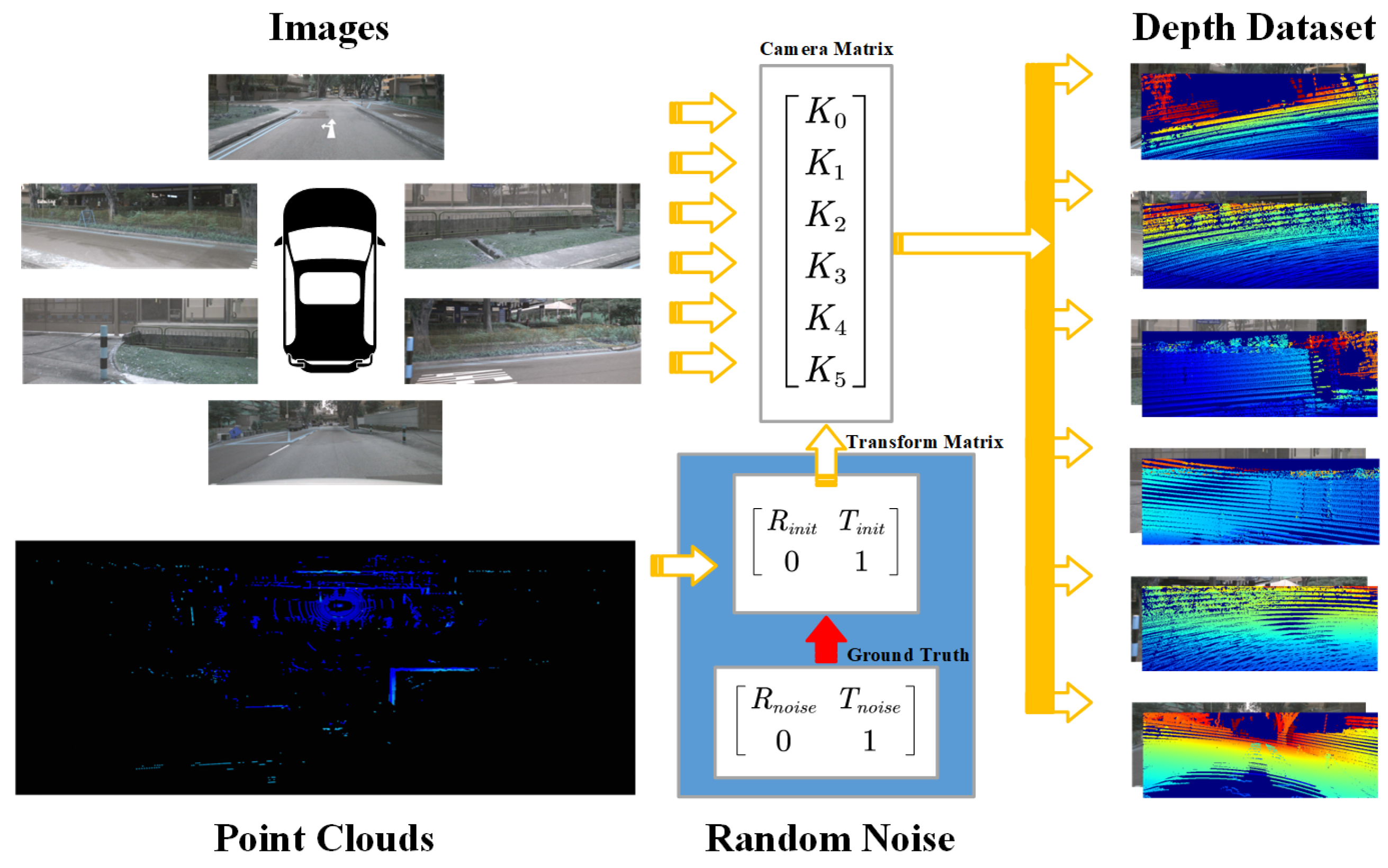

4.1. Dataset Preparation

4.2. Implementation Details

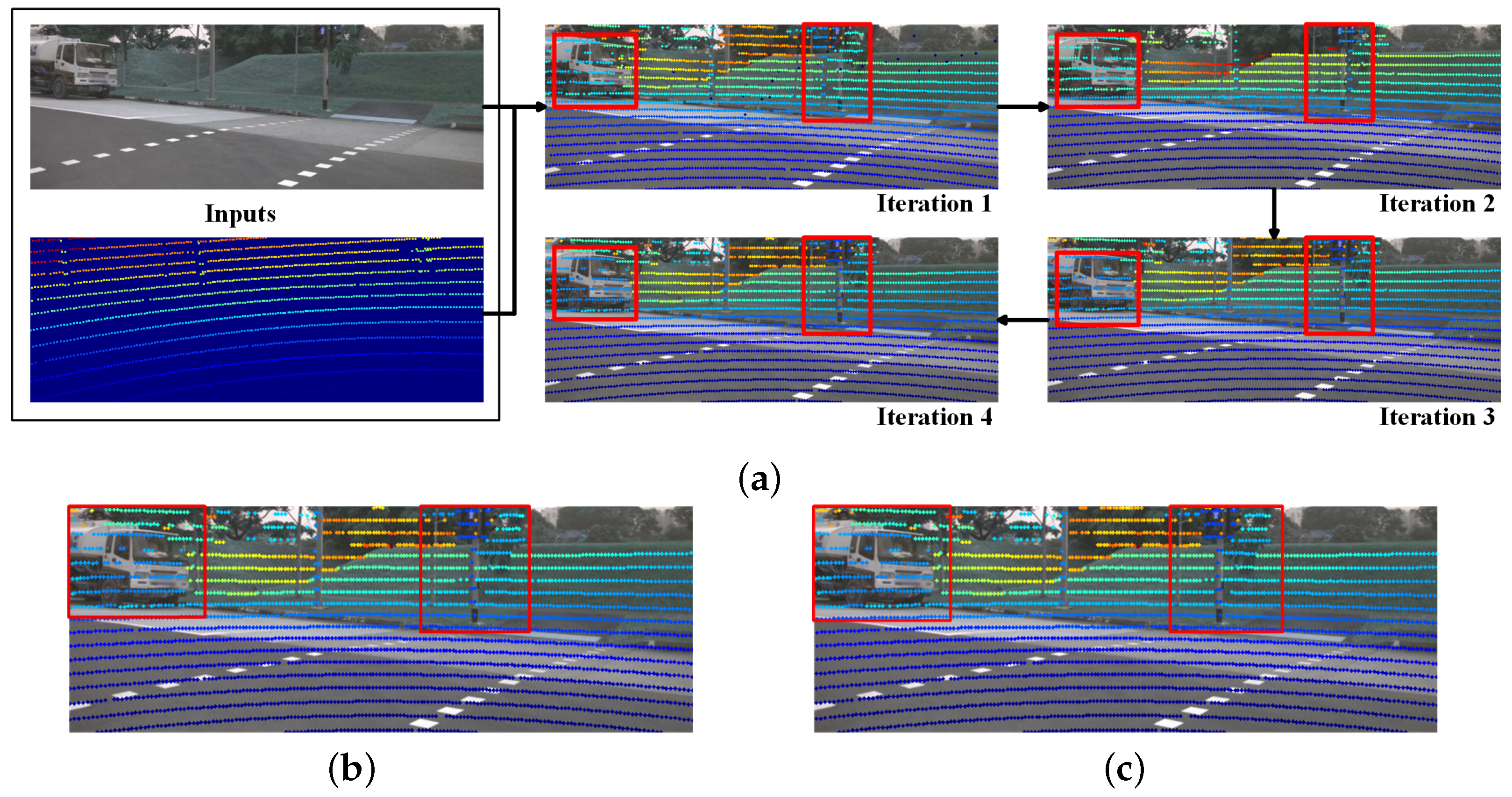

4.3. Comparison to State-of-the-Art Models

- CMRNet uses the fewest iterations, but its three separate models do not share parameters, resulting in a total model size of 106.20 M, which is significantly larger than ours (78.79 M). In addition, our model scales to any number of LiDAR–camera pairs, whereas each of the compared methods handles only a single camera.

- Unlike LCCNet, which requires training five separate complete models for iterative refinement, our method adopts a single frozen Swin Transformer as a shared backbone across all iterations, significantly reducing both model size and training cost. As shown in Table 1, the total size of our model is only 78.79 M, with just 5.99 M parameters requiring training. In contrast, LCCNet trains five independent models (66.75 M each), resulting in a total size of 333.75 M.
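The totals quoted above can be reproduced with a few lines of arithmetic. The sketch below uses only the figures reported in Table 1. The reading that our total is the frozen part counted once plus one trainable part per iteration is an inference from those numbers, not something the text states explicitly, and the function names are hypothetical.

```python
# All values are in millions of parameters, copied from Table 1.
CMRNET_PER_MODEL, CMRNET_ITERS = 35.40, 3
LCCNET_PER_MODEL, LCCNET_ITERS = 66.75, 5
OURS_PER_MODEL, OURS_TRAINABLE, OURS_ITERS = 54.83, 5.99, 5

def total_separate(per_model, n_iter):
    """Total size when every iteration uses its own full model."""
    return per_model * n_iter

def total_shared(per_model, trainable, n_iter):
    """Total size when the frozen part is stored once (assumed reading of Table 1)."""
    frozen = per_model - trainable
    return frozen + trainable * n_iter

cmrnet_total = round(total_separate(CMRNET_PER_MODEL, CMRNET_ITERS), 2)
lccnet_total = round(total_separate(LCCNET_PER_MODEL, LCCNET_ITERS), 2)
ours_total = round(total_shared(OURS_PER_MODEL, OURS_TRAINABLE, OURS_ITERS), 2)

print(cmrnet_total, lccnet_total, ours_total)
```

Under this reading, the frozen backbone accounts for 54.83 − 5.99 = 48.84 M parameters, and each additional iteration adds only the 5.99 M trainable part.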

4.4. Ablation Studies

4.4.1. Backbone Selection

4.4.2. Module Ablation

4.4.3. Calibration Pairs Analysis

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Song, Z.; Yang, L.; Xu, S.; Liu, L.; Xu, D.; Jia, C.; Jia, F.; Wang, L. GraphBEV: Towards robust BEV feature alignment for multi-modal 3D object detection. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024; Springer Nature: Cham, Switzerland, 2024; pp. 347–366.

- Liu, Z.; Tang, H.; Amini, A.; Yang, X.; Mao, H.; Rus, D.L.; Han, S. BEVFusion: Multi-task multi-sensor fusion with unified bird’s-eye view representation. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), London, UK, 29 May–2 June 2023; pp. 2774–2781.

- Li, Y.; Yu, A.W.; Meng, T.; Caine, B.; Ngiam, J.; Peng, D.; Shen, J.; Lu, Y.; Zhou, D.; Le, Q.V.; et al. Deepfusion: Lidar-camera deep fusion for multi-modal 3D object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 17182–17191.

- Zhao, L.; Zhou, H.; Zhu, X.; Song, X.; Li, H.; Tao, W. LIF-Seg: LiDAR and camera image fusion for 3D LiDAR semantic segmentation. IEEE Trans. Multimed. 2023, 26, 1158–1168.

- Gu, S.; Yang, J.; Kong, H. A cascaded LiDAR-camera fusion network for road detection. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 13308–13314.

- Fan, R.; Wang, H.; Cai, P.; Liu, M. SNE-RoadSeg: Incorporating Surface Normal Information into Semantic Segmentation for Accurate Freespace Detection. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, 23–28 August 2020; Springer: Berlin/Heidelberg, Germany, 2020; pp. 340–356.

- Zhang, J.; Liu, Y.; Wen, M.; Yue, Y.; Zhang, H.; Wang, D. L2V2T2-Calib: Automatic and unified extrinsic calibration toolbox for different 3D LiDAR, visual camera and thermal camera. In Proceedings of the IEEE Intelligent Vehicles Symposium (IV), Anchorage, AK, USA, 4–7 June 2023; pp. 1–7.

- Yan, G.; He, F.; Shi, C.; Wei, P.; Cai, X.; Li, Y. Joint camera intrinsic and LiDAR-camera extrinsic calibration. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), London, UK, 29 May–2 June 2023; pp. 11446–11452.

- Jiao, J.; Chen, F.; Wei, H.; Wu, J.; Liu, M. LCE-Calib: Automatic LiDAR-frame/event camera extrinsic calibration with a globally optimal solution. IEEE/ASME Trans. Mechatron. 2023, 28, 2988–2999.

- Lai, Z.; Wang, Y.; Guo, S.; Meng, X.; Li, J.; Li, W.; Han, S. Laser reflectance feature assisted accurate extrinsic calibration for non-repetitive scanning LiDAR and camera systems. Opt. Express 2022, 30, 16242–16263.

- Beltrán, J.; Guindel, C.; De La Escalera, A.; García, F. Automatic extrinsic calibration method for LiDAR and camera sensor setups. IEEE Trans. Intell. Transp. Syst. 2022, 23, 17677–17689.

- Domhof, J.; Kooij, J.F.; Gavrila, D.M. A joint extrinsic calibration tool for radar, camera and LiDAR. IEEE Trans. Intell. Veh. 2021, 6, 571–582.

- Xiao, Y.; Li, Y.; Meng, C.; Li, X.; Ji, J.; Zhang, Y. CalibFormer: A transformer-based automatic LiDAR-camera calibration network. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Yokohama, Japan, 13–17 May 2024; pp. 16714–16720.

- Zhu, J.; Xue, J.; Zhang, P. CalibDepth: Unifying depth map representation for iterative LiDAR-camera online calibration. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), London, UK, 29 May–2 June 2023; pp. 726–733.

- Sun, Y.; Li, J.; Wang, Y.; Xu, X.; Yang, X.; Sun, Z. ATOP: An attention-to-optimization approach for automatic LiDAR-camera calibration via cross-modal object matching. IEEE Trans. Intell. Veh. 2022, 8, 696–708.

- Ren, S.; Zeng, Y.; Hou, J.; Chen, X. CorrI2P: Deep image-to-point cloud registration via dense correspondence. IEEE Trans. Circuits Syst. Video Technol. 2022, 33, 1198–1208.

- Liao, Y.; Li, J.; Kang, S.; Li, Q.; Zhu, G.; Yuan, S.; Dong, Z.; Yang, B. SE-Calib: Semantic edge-based LiDAR–camera boresight online calibration in urban scenes. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1000513.

- Jeon, Y.; Seo, S.W. EFGHNet: A versatile image-to-point cloud registration network for extreme outdoor environment. IEEE Robot. Autom. Lett. 2022, 7, 7511–7517.

- Yuan, K.; Guo, Z.; Wang, Z.J. RGGNet: Tolerance aware LiDAR-camera online calibration with geometric deep learning and generative model. IEEE Robot. Autom. Lett. 2020, 5, 6956–6963.

- Lv, X.; Wang, B.; Dou, Z.; Ye, D.; Wang, S. LCCNet: LiDAR and camera self-calibration using cost volume network. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 19–25 June 2021; pp. 2894–2901.

- Zhu, A.; Xiao, Y.; Liu, C.; Tan, M.; Cao, Z. Lightweight LiDAR-camera alignment with homogeneous local-global aware representation. IEEE Trans. Intell. Transp. Syst. 2024, 25, 15922–15933.

- Shi, J.; Zhu, Z.; Zhang, J.; Liu, R.; Wang, Z.; Chen, S.; Liu, H. CalibRCNN: Calibrating camera and LiDAR by recurrent convolutional neural network and geometric constraints. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 24 October 2020–24 January 2021; pp. 10197–10202.

- Ye, C.; Pan, H.; Gao, H. Keypoint-based LiDAR-camera online calibration with robust geometric network. IEEE Trans. Instrum. Meas. 2021, 71, 2503011.

- Lv, X.; Wang, S.; Ye, D. CFNet: LiDAR-camera registration using calibration flow network. Sensors 2021, 21, 8112.

- Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for autonomous driving? The KITTI vision benchmark suite. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 16–21 June 2012; pp. 3354–3361.

- Caesar, H.; Bankiti, V.; Lang, A.H.; Vora, S.; Liong, V.E.; Xu, Q.; Krishnan, A.; Pan, Y.; Baldan, G.; Beijbom, O. nuScenes: A multimodal dataset for autonomous driving. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 11621–11631.

- Zhou, L.; Li, Z.; Kaess, M. Automatic extrinsic calibration of a camera and a 3D lidar using line and plane correspondences. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 5562–5569.

- Pusztai, Z.; Hajder, L. Accurate Calibration of LiDAR-Camera Systems Using Ordinary Boxes. In Proceedings of the IEEE International Conference on Computer Vision Workshops (ICCVW), Venice, Italy, 22–29 October 2017; pp. 394–402.

- Tsai, D.; Worrall, S.; Shan, M.; Lohr, A.; Nebot, E. Optimising the Selection of Samples for Robust LiDAR-Camera Calibration. In Proceedings of the 2021 IEEE International Intelligent Transportation Systems Conference (ITSC), Indianapolis, IN, USA, 19–22 September 2021; pp. 2631–2638.

- Kim, E.s.; Park, S.Y. Extrinsic calibration between camera and LiDAR sensors by matching multiple 3D planes. Sensors 2019, 20, 52.

- Yuan, C.; Liu, X.; Hong, X.; Zhang, F. Pixel-level extrinsic self calibration of high resolution LiDAR and camera in targetless environments. IEEE Robot. Autom. Lett. 2021, 6, 7517–7524.

- Ou, N.; Cai, H.; Yang, J.; Wang, J. Targetless extrinsic calibration of camera and low-resolution 3-D LiDAR. IEEE Sens. J. 2023, 23, 10889–10899.

- Tu, D.; Wang, B.; Cui, H.; Liu, Y.; Shen, S. Multi-camera-LiDAR auto-calibration by joint structure-from-motion. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Kyoto, Japan, 23–27 October 2022; pp. 2242–2249.

- Peršić, J.; Petrović, L.; Marković, I.; Petrović, I. Spatiotemporal multisensor calibration via Gaussian processes moving target tracking. IEEE Trans. Robot. 2021, 37, 1401–1415.

- Pandey, G.; McBride, J.R.; Savarese, S.; Eustice, R.M. Automatic extrinsic calibration of vision and lidar by maximizing mutual information. J. Field Robot. 2015, 32, 696–722.

- Pandey, G.; McBride, J.; Savarese, S.; Eustice, R. Automatic targetless extrinsic calibration of a 3D lidar and camera by maximizing mutual information. In Proceedings of the Twenty-Sixth AAAI Conference on Artificial Intelligence, Toronto, ON, Canada, 22–26 July 2012; pp. 2053–2059.

- Jiang, P.; Osteen, P.; Saripalli, S. SemCal: Semantic LiDAR–Camera Calibration Using Neural Mutual Information Estimator. In Proceedings of the 2021 IEEE International Conference on Multisensor Fusion and Integration for Intelligent Systems (MFI), Karlsruhe, Germany, 23–25 September 2021; pp. 1–7.

- Park, C.; Moghadam, P.; Kim, S.; Sridharan, S.; Fookes, C. Spatiotemporal camera-LiDAR calibration: A targetless and structureless approach. IEEE Robot. Autom. Lett. 2020, 5, 1556–1563.

- Cattaneo, D.; Vaghi, M.; Ballardini, A.L.; Fontana, S.; Sorrenti, D.G.; Burgard, W. CMRNet: Camera to LiDAR-map registration. In Proceedings of the IEEE Intelligent Transportation Systems Conference (ITSC), Auckland, New Zealand, 27–30 October 2019; pp. 1283–1289.

- Duan, Z.; Hu, X.; Ding, J.; An, P.; Huang, X.; Ma, J. A robust LiDAR-camera self-calibration via rotation-based alignment and multi-level cost volume. IEEE Robot. Autom. Lett. 2023, 9, 627–634.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 10012–10022.

| Method | SCNet | CMRNet | LCCNet | Ours |
|---|---|---|---|---|
| Per-model size (M) | - | 35.40 | 66.75 | 54.83 |
| Trainable params (M) | - | 35.40 | 66.75 | 5.99 |
| Total size (M) | - | 106.20 | 333.75 | 78.79 |
| Iterations | 5 | 3 | 5 | 5 |
| Number of cameras | 1 | 1 | 1 | 6 |

| Method | Transl. Error Mean (cm) | Transl. Error Median (cm) | Transl. Error Std (cm) | Rot. Error Mean (°) | Rot. Error Median (°) | Rot. Error Std (°) |
|---|---|---|---|---|---|---|
| LCCNet | 2.006 | 1.643 | 1.415 | 0.215 | 0.169 | 0.187 |
| SCNet | 1.368 | 1.212 | 0.975 | 0.148 | 0.129 | 0.122 |
| Ours | 2.651 | 2.168 | 3.401 | 0.246 | 0.184 | 0.347 |

| Task | Metric | w/o Noise | ±0.12 m/±1° | ±0.5 m/±10° | ±1.5 m/±20° |
|---|---|---|---|---|---|
| 3D Object-Det [20] | NDS | 71.15 | 69.07 (↓ 2.08) | 49.69 (↓ 21.46) | 26.44 (↓ 44.71) |
| 3D Object-Det [20] | mAP | 68.41 | 65.73 (↓ 2.68) | 37.01 (↓ 31.40) | 5.64 (↓ 62.77) |
| BEV Map-Seg [20] | mIoU, Drivable Area | 83.04 | 82.35 (↓ 0.69) | 74.66 (↓ 8.38) | 63.51 (↓ 19.53) |
| BEV Map-Seg [20] | mIoU, Ped Crossing | 58.56 | 57.64 (↓ 0.92) | 44.20 (↓ 14.36) | 18.73 (↓ 39.83) |
| BEV Map-Seg [20] | mIoU, Walkway | 57.81 | 55.86 (↓ 1.95) | 39.01 (↓ 18.80) | 19.59 (↓ 38.22) |
| BEV Map-Seg [20] | mIoU, Stop Line | 47.61 | 46.75 (↓ 0.86) | 36.52 (↓ 11.09) | 12.56 (↓ 35.05) |
| BEV Map-Seg [20] | mIoU, Carpark Area | 77.58 | 70.53 (↓ 7.05) | 50.95 (↓ 26.63) | 17.45 (↓ 60.13) |
| BEV Map-Seg [20] | mIoU, Divider | 48.42 | 44.77 (↓ 3.65) | 25.51 (↓ 22.91) | 14.73 (↓ 33.69) |

| Backbone | Transl. Error X (cm) | Transl. Error Y (cm) | Transl. Error Z (cm) | Rot. Error Roll (°) | Rot. Error Pitch (°) | Rot. Error Yaw (°) |
|---|---|---|---|---|---|---|
| ResNet50 (Frozen) [41] | 41.09 | 53.47 | 19.11 | 1.81 | 2.07 | 5.31 |
| ResNet101 (Frozen) [41] | 41.67 | 51.41 | 19.32 | 1.81 | 2.07 | 5.42 |
| Swin-Transformer (Frozen) [42] | 24.06 | 26.90 | 20.43 | 1.43 | 1.64 | 4.37 |
| ResNet50 (Trainable) | 23.52 | 27.49 | 17.37 | 1.50 | 1.76 | 3.94 |
| ResNet101 (Trainable) | 24.91 | 29.11 | 19.04 | 1.54 | 1.84 | 4.11 |
| Swin-Transformer (Trainable) | 26.52 | 32.17 | 24.75 | 1.98 | 2.34 | 4.75 |
| Swin-Transformer (Partially) | 20.36 | 23.90 | 13.97 | 1.29 | 1.48 | 3.78 |

| FPN | CCFusion | Matrix Loss | Transl. X (cm) | Transl. Y (cm) | Transl. Z (cm) | Roll (°) | Pitch (°) | Yaw (°) |
|---|---|---|---|---|---|---|---|---|
| no | no | no | 38.98 | 45.47 | 27.82 | 3.64 | 3.80 | 7.00 |
| yes | no | no | 39.42 (+0.44) | 40.25 (−5.22) | 27.54 (−0.28) | 3.41 (−0.23) | 3.76 (−0.04) | 7.06 (+0.06) |
| yes | yes | no | 29.83 (−9.59) | 32.36 (−7.89) | 20.43 (−7.11) | 3.03 (−0.38) | 3.19 (−0.57) | 5.69 (−1.37) |
| yes | yes | yes | 24.06 (−5.77) | 26.90 (−5.46) | 16.64 (−3.79) | 1.43 (−1.60) | 1.64 (−1.55) | 4.37 (−1.32) |

| Errors/Pairs | Axis | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| Translation (cm) | X | 26.05 | 25.75 | 24.99 | 26.62 | 26.52 | 20.36 |
| Translation (cm) | Y | 15.65 | 22.76 | 25.01 | 24.72 | 27.28 | 23.90 |
| Translation (cm) | Z | 12.89 | 15.81 | 16.27 | 16.51 | 17.50 | 13.97 |
| Rotation (°) | X | 1.35 | 1.56 | 1.62 | 1.62 | 1.68 | 1.29 |
| Rotation (°) | Y | 1.43 | 1.73 | 1.77 | 1.79 | 1.88 | 1.47 |
| Rotation (°) | Z | 3.66 | 4.51 | 4.63 | 4.57 | 4.78 | 3.78 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Hu, L.; Wei, C.; Wang, M.; Wu, Z.; Xu, Y. Multi-Calib: A Scalable LiDAR–Camera Calibration Network for Variable Sensor Configurations. Sensors 2025, 25, 7321. https://doi.org/10.3390/s25237321
