Clustered Federated Spatio-Temporal Graph Attention Networks for Skeleton-Based Action Recognition

Abstract

1. Introduction

- We introduce an attention-descriptor-driven federated clustering paradigm for skeleton-based human action recognition (HAR) that turns spatio-temporal attention into a privacy-friendly client representation for dynamic cluster assignment.

- We propose an attention–similarity-weighted cross-cluster fusion that balances personalization and consistency via a lightweight update, without changing the client-side training pipeline.

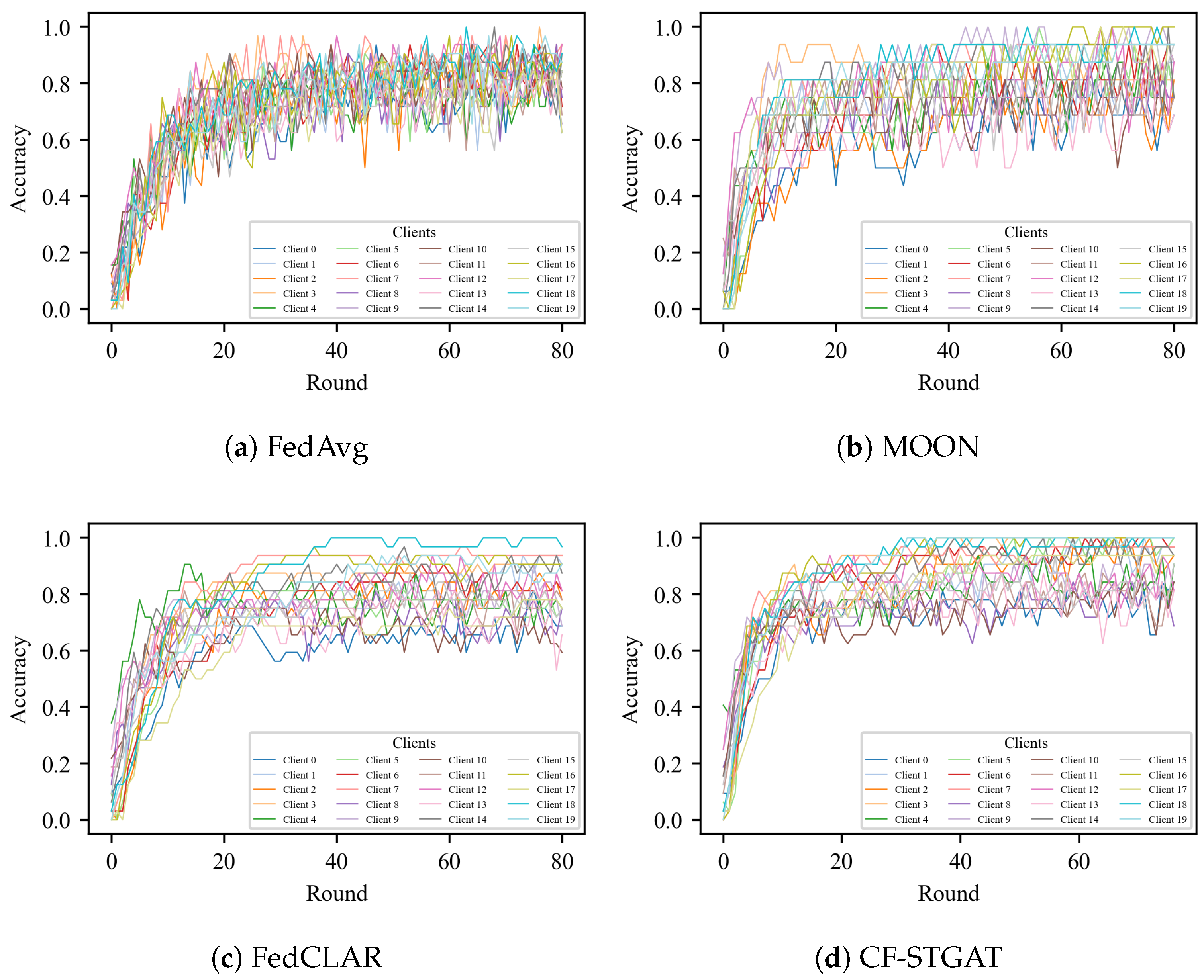

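- We perform comprehensive experiments on NTU 60 and NTU 120 under the X-Sub and X-Setup protocols, benchmarking against FedAvg, FedProx, MOON, and the clustered baseline FedCLAR. CF-STGAT achieves the highest accuracy in all four settings, with gains of 0.84 to 7.98 percentage points over Fed-STGAT, and converges more smoothly.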
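The first two contributions can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the descriptor (a flattened, normalised mean attention map), the cosine-similarity assignment, the softmax-weighted fusion, and every function name and the `temperature` parameter are assumptions made here for illustration only.

```python
import numpy as np


def attention_descriptor(attn_maps):
    """Average a stack of (V, V) attention maps and flatten to a unit vector."""
    d = np.mean(attn_maps, axis=0).ravel()
    return d / (np.linalg.norm(d) + 1e-12)


def assign_clusters(descriptors, centroids):
    """Assign each client to the centroid with the highest cosine similarity."""
    sims = descriptors @ centroids.T  # rows are unit vectors, so this is cosine
    return sims.argmax(axis=1)


def update_centroids(descriptors, labels, old_centroids):
    """Recompute unit-norm centroids; an empty cluster keeps its old centroid."""
    new = old_centroids.copy()
    for k in range(len(old_centroids)):
        members = descriptors[labels == k]
        if len(members):
            c = members.mean(axis=0)
            new[k] = c / (np.linalg.norm(c) + 1e-12)
    return new


def fuse_clusters(cluster_weights, centroids, temperature=0.1):
    """Mix cluster models with softmax weights over centroid similarity (assumed rule)."""
    sims = centroids @ centroids.T / temperature
    sims -= sims.max(axis=1, keepdims=True)  # numerical stability
    mix = np.exp(sims)
    mix /= mix.sum(axis=1, keepdims=True)
    return mix @ cluster_weights, mix


# Toy data: six hypothetical clients, four attention maps each, V = 5 joints.
rng = np.random.default_rng(0)
V = 5
pattern_a = np.eye(V)             # clients 0-2 attend along the diagonal
pattern_b = np.fliplr(np.eye(V))  # clients 3-5 attend along the anti-diagonal
clients = [p + 0.05 * rng.random((4, V, V))
           for p in (pattern_a,) * 3 + (pattern_b,) * 3]
descriptors = np.stack([attention_descriptor(a) for a in clients])

# Dynamic assignment: alternate assignment and centroid updates for a few rounds.
centroids = descriptors[[0, 3]].copy()
for _ in range(3):
    labels = assign_clusters(descriptors, centroids)
    centroids = update_centroids(descriptors, labels, centroids)

# Stand-in cluster models: one flat parameter vector per cluster.
cluster_weights = np.array([[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]])
fused, mix = fuse_clusters(cluster_weights, centroids)
print(labels, mix.round(4))
```

Only the descriptors and the aggregated cluster models are touched on the server side in this sketch, which mirrors the claim that the client-side training pipeline stays unchanged. A lower `temperature` keeps each cluster closer to its own model (more personalization); a higher one pulls clusters toward a shared average (more consistency).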

2. Related Work

2.1. Spatio-Temporal Model for Skeleton-Based Action Recognition

2.2. Federated Learning for Skeleton-Based Action Recognition

3. Methods

3.1. Preliminary

3.1.1. Spatio-Temporal Graph Building

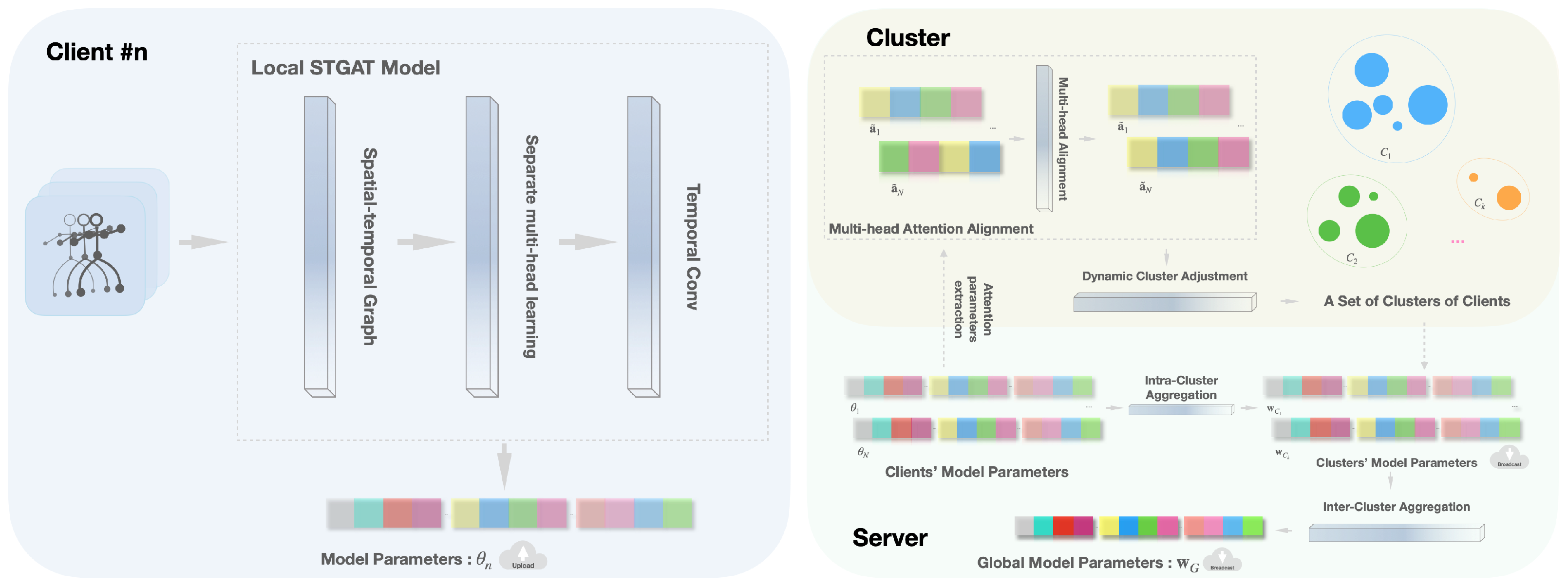

3.1.2. STGAT Network

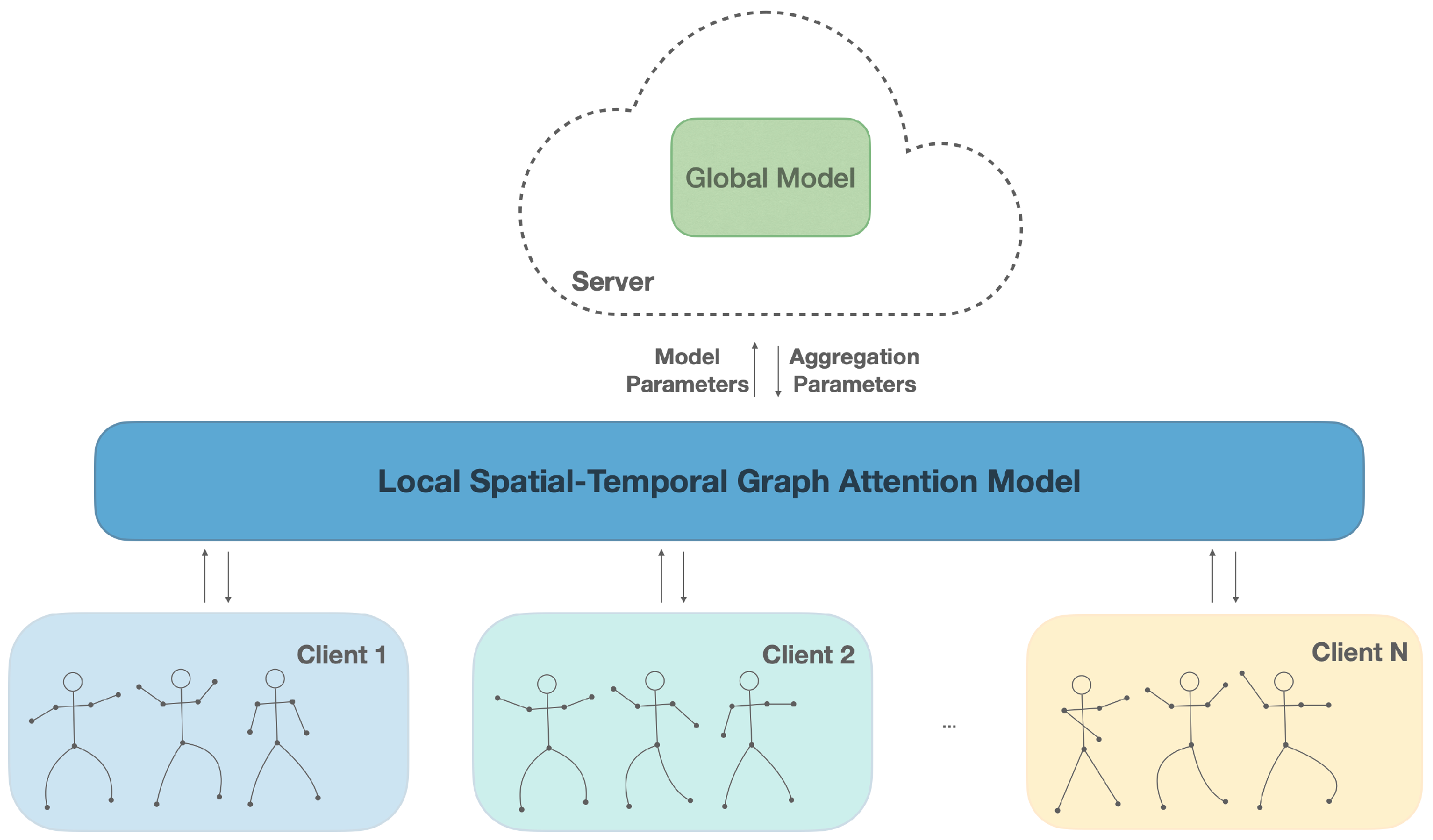

3.2. Fed-STGAT

3.2.1. Local Updates

3.2.2. Aggregation

3.2.3. Limitation of Fed-STGAT

3.3. Dynamic Cluster Adjustment

3.4. Cluster Federated Aggregation

4. Experimental Results

4.1. Datasets

4.2. The Details of Implementation

4.3. Experiments Results and Discussion

4.3.1. General Results

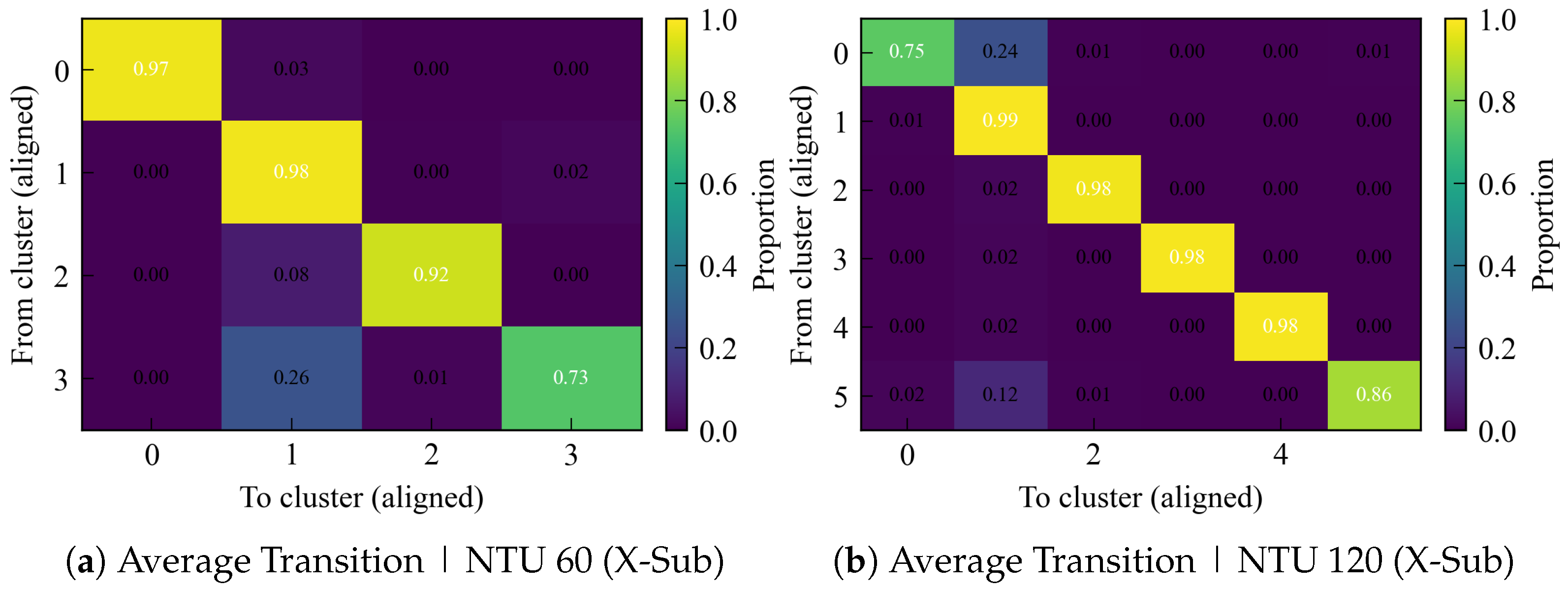

4.3.2. Clustering Stability Analysis

4.3.3. Effectiveness of Clustering

4.3.4. Effectiveness of Fusion

4.3.5. Discussion and Future Work

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Dataset | Protocol | Classes | Train Clients | Test Clients |
|---|---|---|---|---|
| NTU 60 | X-Sub | 60 | 20 | 20 |
| NTU 60 | X-Setup | 60 | 8 | 9 |
| NTU 120 | X-Sub | 120 | 53 | 53 |
| NTU 120 | X-Setup | 120 | 16 | 16 |
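The client counts above are consistent with one client per subject under X-Sub and one client per collection setup under X-Setup (NTU 60 has 40 subjects and 17 setups; NTU 120 has 106 subjects and 32 setups). This section does not state the mapping explicitly, so the sketch below treats it as an assumption. It relies on the standard NTU RGB+D sample naming scheme `SsssCcccPpppRrrrAaaa`; the function names are hypothetical.

```python
import re
from collections import defaultdict

# NTU RGB+D sample names: S = setup, C = camera, P = performer (subject),
# R = replication, A = action class.
NAME_RE = re.compile(r"S(\d{3})C(\d{3})P(\d{3})R(\d{3})A(\d{3})")


def client_id(sample_name, protocol):
    """Return the assumed federated client for a sample under a protocol."""
    m = NAME_RE.search(sample_name)
    if m is None:
        raise ValueError(f"not an NTU sample name: {sample_name!r}")
    setup, _camera, subject, _rep, _action = (int(g) for g in m.groups())
    if protocol == "X-Sub":
        return subject  # assumption: one client per subject
    if protocol == "X-Setup":
        return setup    # assumption: one client per setup
    raise ValueError(f"unknown protocol: {protocol!r}")


def partition(sample_names, protocol):
    """Group sample names into per-client lists."""
    clients = defaultdict(list)
    for name in sample_names:
        clients[client_id(name, protocol)].append(name)
    return dict(clients)


samples = [
    "S001C001P001R001A001.skeleton",
    "S001C002P001R001A007.skeleton",
    "S002C001P003R002A001.skeleton",
]
by_subject = partition(samples, "X-Sub")
by_setup = partition(samples, "X-Setup")
print(sorted(by_subject), sorted(by_setup))
```

Partitioning this way gives each client a naturally non-IID shard, since a single subject or camera setup has its own motion style and viewpoint statistics.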

| Method (backbone: STGAT) | NTU 60 X-Sub (%) | NTU 60 X-Setup (%) | NTU 120 X-Sub (%) | NTU 120 X-Setup (%) |
|---|---|---|---|---|
| Fed-STGAT * | 89.72 | 86.46 | 72.35 | 78.71 |
| FedProx | 89.31 (−0.41) | 87.49 (+1.03) | 75.36 (+3.01) | 80.65 (+1.94) |
| MOON | 88.01 (−1.71) | 86.96 (+0.50) | 73.31 (+1.04) | 79.86 (+1.15) |
| FedCLAR | 89.88 (+0.16) | 87.24 (+0.78) | 75.55 (+3.20) | 80.83 (+2.12) |
| CF-STGAT (ours) | 90.56 (+0.84) | 90.55 (+4.09) | 80.33 (+7.98) | 82.89 (+4.18) |
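The parenthesised values appear to be accuracy differences, in percentage points, relative to the Fed-STGAT row. The short sketch below recomputes them from the accuracies in the table; the dictionary keys and the `gains` helper are names chosen here for illustration.

```python
# Accuracies (%) copied from the table above.
SETTINGS = ["NTU60 X-Sub", "NTU60 X-Setup", "NTU120 X-Sub", "NTU120 X-Setup"]
baseline = dict(zip(SETTINGS, [89.72, 86.46, 72.35, 78.71]))  # Fed-STGAT
methods = {
    "FedProx": dict(zip(SETTINGS, [89.31, 87.49, 75.36, 80.65])),
    "MOON": dict(zip(SETTINGS, [88.01, 86.96, 73.31, 79.86])),
    "FedCLAR": dict(zip(SETTINGS, [89.88, 87.24, 75.55, 80.83])),
    "CF-STGAT": dict(zip(SETTINGS, [90.56, 90.55, 80.33, 82.89])),
}


def gains(acc, base):
    """Difference from the baseline in percentage points, rounded to 2 places."""
    return {k: round(acc[k] - base[k], 2) for k in base}


deltas = {name: gains(acc, baseline) for name, acc in methods.items()}
for name, d in deltas.items():
    print(name, [f"{d[s]:+.2f}" for s in SETTINGS])
```

The recomputed differences match the table except for MOON on NTU 120 X-Sub, where 73.31 − 72.35 gives +0.96 while the table prints 1.04.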

| Setup | ARI | Churn |
|---|---|---|
| , | 0.846 ± 0.213 | 0.040 ± 0.056 |
| , | 0.674 ± 0.326 | 0.064 ± 0.077 |
| , | 0.750 ± 0.236 | 0.064 ± 0.064 |
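For readers unfamiliar with the two stability metrics, the following is a minimal sketch of how they could be computed between consecutive rounds. The adjusted Rand index (ARI) follows its standard pair-counting definition. The churn definition used here, the fraction of clients whose cluster label changes from one round to the next, is an assumption, as is the premise that the server keeps cluster IDs stable across rounds.

```python
from collections import Counter
from math import comb
from statistics import mean, pstdev


def adjusted_rand_index(a, b):
    """Standard pair-counting ARI between two labelings of the same clients."""
    total = comb(len(a), 2)
    if total == 0:
        return 1.0
    sum_ij = sum(comb(c, 2) for c in Counter(zip(a, b)).values())
    sum_a = sum(comb(c, 2) for c in Counter(a).values())
    sum_b = sum(comb(c, 2) for c in Counter(b).values())
    expected = sum_a * sum_b / total
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster in both
        return 1.0
    return (sum_ij - expected) / (max_index - expected)


def churn(prev, curr):
    """Assumed definition: share of clients whose cluster label changed."""
    return sum(p != c for p, c in zip(prev, curr)) / len(prev)


# Hypothetical cluster assignments of six clients over three rounds.
rounds = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1, 1],
]
aris = [adjusted_rand_index(p, c) for p, c in zip(rounds, rounds[1:])]
churns = [churn(p, c) for p, c in zip(rounds, rounds[1:])]
print(f"ARI   {mean(aris):.3f} ± {pstdev(aris):.3f}")
print(f"Churn {mean(churns):.3f} ± {pstdev(churns):.3f}")
```

ARI is invariant to relabeling of clusters, whereas this churn measure is not, which is why stable cluster IDs are assumed. Higher ARI and lower churn both indicate that assignments settle rather than oscillate between rounds.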

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Yu, T.; Pinto, S.; Gomes, T.; Tavares, A.; Xu, H. Clustered Federated Spatio-Temporal Graph Attention Networks for Skeleton-Based Action Recognition. Sensors 2025, 25, 7277. https://doi.org/10.3390/s25237277
