Telehealth-Enabled In-Home Elbow Rehabilitation for Brachial Plexus Injuries Using Deep-Reinforcement-Learning-Assisted Telepresence Robots

Abstract

1. Introduction

2. Literature Review

3. Theoretical Background

3.1. Overview of Deep Reinforcement Learning (DRL)

- S is the state space;

- A is the action space;

- P is the state transition probability;

- R is the reward function, R: S × A → ℝ, which maps each state–action pair to a real-valued reward.

3.2. Deep Deterministic Policy Gradient (DDPG) Algorithm

- Actor: The actor is a neural network that takes the current state as input and outputs a continuous action or set of actions. The actor’s role is to learn the optimal policy function.

- Critic: The critic evaluates the action output by the actor by computing the Q-value. The critic’s role is to learn the optimal value function.

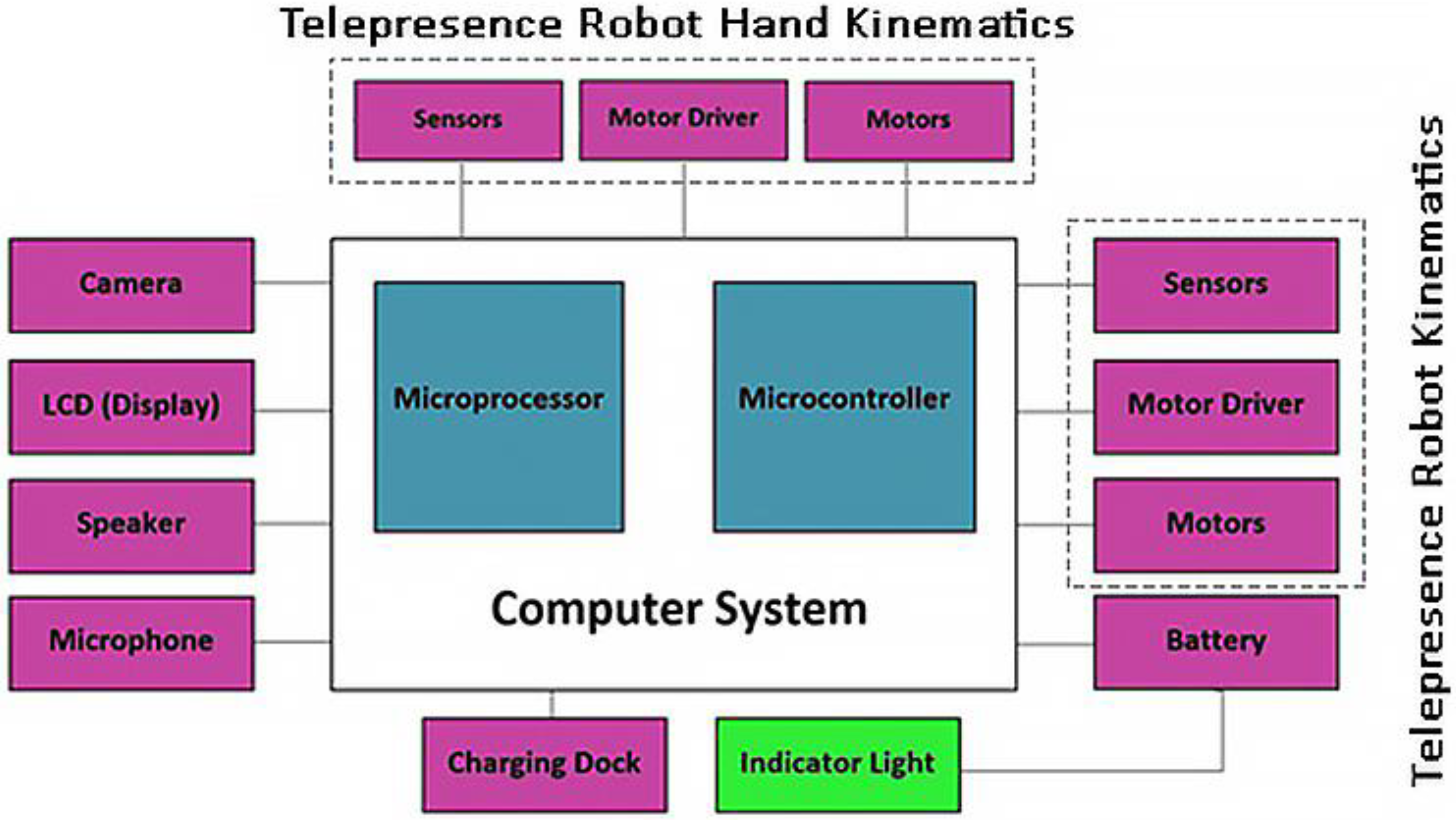
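The division of labor between the actor and the critic can be illustrated with a minimal sketch. It uses tiny linear models in plain Python rather than the neural networks described above, and every name in it (`LinearActor`, `LinearCritic`, `max_action`, the example state values) is a hypothetical choice made for illustration, not the authors' implementation.

```python
import math
import random


class LinearActor:
    """Deterministic policy: maps a state vector to one bounded continuous action."""

    def __init__(self, state_dim, max_action, seed=0):
        rng = random.Random(seed)
        self.weights = [rng.uniform(-0.1, 0.1) for _ in range(state_dim)]
        self.max_action = max_action  # assumed actuator limit (hypothetical)

    def act(self, state):
        pre_activation = sum(w * s for w, s in zip(self.weights, state))
        # tanh keeps the action inside [-max_action, +max_action]
        return self.max_action * math.tanh(pre_activation)


class LinearCritic:
    """Scores a (state, action) pair with a scalar Q-value estimate."""

    def __init__(self, state_dim, seed=1):
        rng = random.Random(seed)
        self.weights = [rng.uniform(-0.1, 0.1) for _ in range(state_dim + 1)]

    def q_value(self, state, action):
        features = list(state) + [action]
        return sum(w * x for w, x in zip(self.weights, features))


# Hypothetical state: three normalized sensor features.
state = [0.5, -0.2, 1.0]
actor = LinearActor(state_dim=3, max_action=20.0)
critic = LinearCritic(state_dim=3)

action = actor.act(state)          # the actor proposes an action
q = critic.q_value(state, action)  # the critic evaluates that action
```

The point of the sketch is the interface: the actor never sees a reward directly, and the critic never chooses an action.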

4. Telepresence Robots

- Mobility and Navigation: Most telepresence robots are wheeled and navigate using sensors such as LIDAR or ultrasonic rangefinders. Mobility can be controlled by a remote user or automated by navigation algorithms.

- Communication: This is central to the concept of telepresence. Robots usually have a camera, microphone, and speakers that facilitate video conferencing. The transmission of audio–visual data should be in real time or with minimal latency.

- Robotic Arm: The telepresence robot is equipped with a robotic arm that assists the BPI patient in elbow flexion.

- User Interface: Telepresence robots usually have an interface allowing remote users to control them. This could be through a web application, desktop software, or even a mobile app.

- Autonomy and Battery Life: Since these robots are mobile, they need to be battery-powered. Battery life and the ability to autonomously return to a charging station when the battery is low are important considerations.

4.1. Operation of the Telepresence Robot for Elbow Flexion Exercises

4.1.1. Sensing Phase

Types of Sensors

- Force Sensors: Force sensors, such as force-sensing resistors (FSRs), load cells, and piezoelectric force sensors, measure the amount of force exerted on the robotic arm. A load cell typically uses a strain gauge whose electrical resistance changes when it is deformed by force. A piezoelectric sensor, by contrast, generates an electric charge in response to applied mechanical stress. The specifications of the force sensor are given in Table 3.

- Position and Angle Sensors: Since the robot needs to know the arm’s position and the elbow joint’s angle, position sensors and potentiometer-based angle sensors are used. These sensors report the spatial configuration of the patient’s arm, which is vital for adjusting the assistance provided. Their specifications are given in Table 4.

Mathematical Equations and Relations

- Force Sensors: For strain gauge-based force sensors, the change in resistance, ΔR, is proportional to the strain, ε, which in turn is proportional to the applied force, F. This relationship is expressed in Equation (8), as follows:

- Position and Angle Sensors: For potentiometer-based angle sensors, resistance varies linearly with the rotation angle. If R0 is the resistance at 0 degrees and Rmax is the maximum resistance at the maximum rotation angle, the relationship can be expressed in Equation (9), as follows:
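The two sensor relations described above can be sketched numerically. The sketch assumes the simplest forms consistent with the text: a lumped linear calibration ΔR = k·F for the strain gauge, and R(θ) = R0 + (Rmax − R0)·θ/θmax for the potentiometer. The calibration constant `k_ohm_per_newton`, the function names, and the example values are assumptions made for illustration and are not taken from Equations (8) and (9).

```python
def force_from_resistance_change(delta_r_ohm, k_ohm_per_newton):
    """Invert the assumed linear relation delta_R = k * F to recover the force in newtons."""
    if k_ohm_per_newton <= 0:
        raise ValueError("calibration constant must be positive")
    return delta_r_ohm / k_ohm_per_newton


def angle_from_resistance(r_ohm, r0_ohm, r_max_ohm, theta_max_deg):
    """Invert the assumed linear potentiometer relation to recover the angle in degrees."""
    if r_max_ohm == r0_ohm:
        raise ValueError("r_max_ohm must differ from r0_ohm")
    fraction = (r_ohm - r0_ohm) / (r_max_ohm - r0_ohm)
    # Clamp so that readings slightly outside the calibrated range stay in [0, theta_max].
    fraction = min(1.0, max(0.0, fraction))
    return fraction * theta_max_deg


# Hypothetical readings: a 0.5 ohm change with k = 0.05 ohm/N,
# and a 5.5 kOhm reading on a 1-10 kOhm potentiometer spanning 90 degrees.
force_n = force_from_resistance_change(0.5, 0.05)
angle_deg = angle_from_resistance(5500.0, 1000.0, 10000.0, 90.0)
```

In practice the constant k would come from calibrating the sensor against known loads, as in the calibration stage of the experimental setup.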

4.1.2. Deep Deterministic Policy Gradient (DDPG) Phase

- $F_{patient}$: Force exerted by the patient (measured using sensors, as described previously);

- $m$: Mass of the patient’s forearm and arm;

- $a$: Desired acceleration of the patient’s arm during the exercise;

- $g$: Gravitational acceleration (9.81 m/s²);

- $\theta$: Angle between the forearm and the vertical movement;

- $\mu$: Coefficient of friction between the patient’s arm and the robot’s arm;

- $F_{robot}$: Force exerted by the telepresence robot on the patient’s arm.

- Desired Acceleration ($a$): The desired acceleration is determined from the trajectory planned for the elbow flexion movement. The DDPG algorithm considers the current state of the patient’s arm, the desired state, and other constraints when computing the desired acceleration.

- Frictional Force ($F_{friction}$): The friction between the robot’s arm and the patient’s arm must be taken into account, as given in Equation (10):

- Force Required for Desired Acceleration ($F_{acceleration}$): From Newton’s second law, the force required to achieve the desired acceleration is given by Equation (11), as follows:

- Force to Counteract Gravity ($F_{gravity}$): The component of the gravitational force in the direction of the movement is described in Equation (12), as follows:

- Total Force by Telepresence Robot ($F_{robot}$): The total force that the robot needs to apply is the sum of the force required for the desired acceleration, the force to counteract gravity, and the frictional force. Additionally, the force exerted by the patient ($F_{patient}$) needs to be considered, as given in Equation (13):
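The force balance described in this list can be sketched as follows. The sketch rests on several assumptions that the surviving text does not confirm: the gravity component along the movement is taken as m·g·cos θ (θ measured from the vertical), friction is taken as μ times a normal force supplied by the caller, the patient's force is subtracted from the total, and the result is clamped at zero so the robot never pushes against the patient. The function and parameter names are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2, as stated in the text


def robot_assist_force(f_patient, mass, accel_desired, theta_rad, mu, normal_force):
    """Estimate the assistive force (N) the robot should apply.

    Assumed form: F_robot = F_acceleration + F_gravity + F_friction - F_patient.
    """
    f_acceleration = mass * accel_desired       # Newton's second law
    f_gravity = mass * G * math.cos(theta_rad)  # assumed gravity component along the movement
    f_friction = mu * normal_force              # assumed Coulomb friction model
    f_robot = f_acceleration + f_gravity + f_friction - f_patient
    return max(0.0, f_robot)                    # assumption: assistance is never negative


# Hypothetical example: 1.5 kg forearm, vertical movement (theta = 0),
# 0.2 m/s^2 target acceleration, mu = 0.3 with a 2 N contact force,
# and a patient who contributes 10 N.
assist = robot_assist_force(
    f_patient=10.0, mass=1.5, accel_desired=0.2,
    theta_rad=0.0, mu=0.3, normal_force=2.0,
)
```

With these inputs the three demand terms are 0.3 N, 14.715 N, and 0.6 N, so the robot would supply roughly 5.6 N. As the patient's own force grows, the assistance shrinks toward zero.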

4.1.3. Action Execution Phase

4.1.4. Feedback and Learning Phase

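| Line | Algorithm 1: DDPG for telepresence robot-assisted elbow flexion |
|---|---|
| 1 | Initialize: |
| 2 | Actor network $\mu(s \mid \theta^{\mu})$ with weights $\theta^{\mu}$ |
| 3 | Critic network $Q(s, a \mid \theta^{Q})$ with weights $\theta^{Q}$ |
| 4 | Target Actor network $\mu'$ with weights $\theta^{\mu'} \leftarrow \theta^{\mu}$ |
| 5 | Target Critic network $Q'$ with weights $\theta^{Q'} \leftarrow \theta^{Q}$ |
| 6 | Replay buffer $D$ |
| 7 | Soft update factor $\tau$ |
| 8 | Noise process $N$ |
| 9 | Discount factor $\gamma$ |
| 10 | for episode = 1 to M do |
| 11 | Initialize the state $s_1$ (sensor readings from robot’s arm) |
| 12 | Reset the noise process $N$ |
| 13 | for t = 1 to T do |
| 14 | Choose action $a_t$ from actor network with added noise: $a_t = \mu(s_t \mid \theta^{\mu}) + N_t$ |
| 15 | Execute action $a_t$ and observe reward $r_t$ and new state $s_{t+1}$ |
| 16 | Store $(s_t, a_t, r_t, s_{t+1})$ in replay buffer $D$ |
| 17 | Sample a random minibatch of $K$ transitions $(s_i, a_i, r_i, s_{i+1})$ from $D$ |
| 18 | Calculate target Q-value using target networks: |
| 19 | $y_i = r_i + \gamma\, Q'(s_{i+1}, \mu'(s_{i+1} \mid \theta^{\mu'}) \mid \theta^{Q'})$ |
| 20 | Update the Critic network by minimizing the loss: |
| 21 | $L = \frac{1}{K} \sum_i (y_i - Q(s_i, a_i \mid \theta^{Q}))^2$ |
| 22 | Update the Actor policy using the sampled policy gradient: |
| 23 | $\nabla_{\theta^{\mu}} J \approx \frac{1}{K} \sum_i \nabla_a Q(s, a \mid \theta^{Q})\rvert_{s=s_i,\, a=\mu(s_i)}\, \nabla_{\theta^{\mu}} \mu(s \mid \theta^{\mu})\rvert_{s=s_i}$ |
| 24 | Soft update target networks: |
| 25 | $\theta^{Q'} \leftarrow \tau \theta^{Q} + (1 - \tau)\, \theta^{Q'}$ |
| 26 | $\theta^{\mu'} \leftarrow \tau \theta^{\mu} + (1 - \tau)\, \theta^{\mu'}$ |
| 27 | |
| 28 | end for |
| 29 | end for |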
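The bookkeeping steps of Algorithm 1 (replay buffer, target Q-value, critic loss, and soft target update) can be sketched in plain Python without a deep learning framework. This is a simplified illustration under stated assumptions: weights are flat lists of floats, the Q-values passed in are hypothetical numbers rather than network outputs, and the names `ReplayBuffer`, `td_target`, `critic_loss`, and `soft_update` are chosen here for illustration.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity store of (state, action, reward, next_state) transitions."""

    def __init__(self, capacity):
        self.storage = deque(maxlen=capacity)  # oldest transitions are dropped first

    def store(self, state, action, reward, next_state):
        self.storage.append((state, action, reward, next_state))

    def sample(self, batch_size, rng=random):
        # Uniform random minibatch, no larger than what is currently stored.
        return rng.sample(list(self.storage), min(batch_size, len(self.storage)))


def td_target(reward, next_q, gamma):
    """Target Q-value: y = r + gamma * Q'(s', mu'(s'))."""
    return reward + gamma * next_q


def critic_loss(targets, q_values):
    """Mean squared error between targets y_i and critic estimates Q(s_i, a_i)."""
    return sum((y - q) ** 2 for y, q in zip(targets, q_values)) / len(targets)


def soft_update(target_weights, online_weights, tau):
    """theta' <- tau * theta + (1 - tau) * theta', applied element-wise."""
    return [tau * w + (1.0 - tau) * w_t
            for w, w_t in zip(online_weights, target_weights)]


buffer = ReplayBuffer(capacity=100)
for step in range(3):
    buffer.store([float(step)], 0.1 * step, 1.0, [float(step + 1)])
batch = buffer.sample(2)

y = td_target(reward=1.0, next_q=2.0, gamma=0.5)
loss = critic_loss([y, 0.0], [1.0, 0.0])
new_target = soft_update([0.0, 1.0], [1.0, 1.0], tau=0.1)
```

A small τ makes the target networks trail the online networks slowly, which is what keeps the target in the critic loss from shifting too quickly during training.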

5. Experimental Setup

5.1. Patient’s Home Setup

5.1.1. Telepresence Robot Equipped with DDPG-Based Assistance

5.1.2. Patient Interaction with the Robot

- Stage 1: Preparation and Positioning. Before the interaction, the patient needs to be appropriately positioned. The robot should be adjustable so that its arm is at the same height as the patient’s arm while the patient lies in bed.

- Stage 2: Calibration of Robotic Arm. Before the exercise, the robotic arm needs to be calibrated to ensure the sensors accurately capture the force the patient applies. This might include adjusting the sensitivity of the sensors and making sure the robot’s arm mimics a human arm’s natural range of motion.

- Stage 3: Initial Grip and Force Measurement. The patient grips the robotic arm, and an initial force measurement is taken to establish the baseline strength of the patient’s grip and upward force. This baseline is essential for the DDPG algorithm to estimate how much assistance is needed.

- Stage 4: Elbow Flexion Exercise. As the patient attempts to move their arm upward for the elbow flexion exercise, the force sensors on the robotic arm continuously measure the amount of force being exerted by the patient.

- Stage 5: Assistance from Robotic Arm. Simultaneously, the DDPG algorithm processes the sensor data and calculates the appropriate amount of assistance. The robotic arm then exerts a controlled force that aids the patient in moving their arm upward. This assistance is dynamically adjusted in real time based on the force the patient is applying.

- Stage 6: Verbal Interaction and Encouragement. The telepresence robot may also have a speaker and microphone, allowing for verbal communication between the patient and the healthcare provider. The healthcare provider can offer live feedback, instructions, and encouragement to the patient through the robot.

- Stage 7: Completion and Data Logging. Once the exercise is complete, the robotic arm gently lowers the patient’s arm back to the initial position. The data regarding the forces exerted by the patient and the assistance provided by the robotic arm are logged for further analysis.

- Stage 8: Post-Exercise Feedback. After the exercise, the patient might be asked to provide feedback on the difficulty of the exercise and the effectiveness of the assistance provided by the robotic arm. This feedback can be useful for calibrating the robot for future sessions.

5.2. Remote Healthcare Provider’s Setup

5.2.1. Monitoring Station

- Computer Setup: The healthcare provider uses a computer with internet connectivity.

- Software Interface: A specialized software interface is installed on the computer, which allows the healthcare provider to connect to the telepresence robot remotely.

- Display: A dual-monitor setup allows for the simultaneous viewing of the patient through the robot’s camera and real-time statistics.

5.2.2. Doctor Interaction

6. Results and Discussion

6.1. DDPG Algorithm Analysis

6.2. Improvement in Force Exerted by Patient

- Conventional rehabilitation group (N = 30 patients): mean force exerted = 35 N, standard deviation = 5 N;

- Telepresence robot-assisted group (N = 30 patients): mean force exerted = 40 N, standard deviation = 5 N.

- $\bar{x}_1$ and $\bar{x}_2$ are the sample means of the two groups.

- $s_1$ and $s_2$ are the sample standard deviations of the two groups.

- $n_1$ and $n_2$ are the sample sizes of the two groups.
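As a worked check of the group comparison, the two-sample t statistic can be computed directly from the summary values listed above. The sketch assumes the unpooled form t = (x̄₁ − x̄₂) / √(s₁²/n₁ + s₂²/n₂); because both groups have the same size and the same standard deviation, the pooled-variance form gives the same value. The function name is hypothetical.

```python
import math


def two_sample_t(mean1, sd1, n1, mean2, sd2, n2):
    """t statistic for the difference between two independent sample means."""
    standard_error = math.sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    return (mean1 - mean2) / standard_error


# Robot-assisted group (40 N, SD 5 N, n = 30) versus
# conventional group (35 N, SD 5 N, n = 30), as reported above.
t_stat = two_sample_t(40.0, 5.0, 30, 35.0, 5.0, 30)
print(round(t_stat, 3))  # 3.873
```

With n₁ = n₂ = 30, the test has 58 degrees of freedom under the equal-variance assumption.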

6.3. Decrease in Assistance Force by Robotic Arm

6.4. Increase in Range of Motion (ROM)

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Alvi, M. Difference in the Population Size between Rural and Urban Areas of Pakistan. MPRA 2018, 90054.

- Dukan, R.; Gerosa, T.; Masmejean, E.H. Daily Life Impact of Brachial Plexus Reconstruction in Adults: 10 Years Follow-Up. J. Hand Surg. 2022, 48, 1167.e1–1167.e7.

- Costales, R.; Socolovsky, M. Adult Brachial Plexus Injuries: Determinants of Treatment (Timing, Injury Type, Injury Pattern). In Operative Brachial Plexus Surgery; Springer International Publishing: Cham, Switzerland, 2021; pp. 133–139.

- Škrabalo, V.A.; Galović, M.; Bobek, D. Rehabilitation after Traumatic Brachial Plexus Injury. Fiz. I Rehabil. Med. 2022, 36, 87–88.

- Mertens, M.G.; Meert, L.; Struyf, F.; Schwank, A.; Meeus, M. Exercise therapy is effective for improvement in range of motion, function and pain in patients with frozen shoulder: A systematic review and meta-analysis. Arch. Phys. Med. Rehabil. 2021, 103, 998–1012.e14.

- Gutowski, K.A.; Orenstein, H.H. Restoration of Elbow Flexion after Brachial Plexus Injury: The Role of Nerve and Muscle Transfers. Plast. Reconstr. Surg. 2000, 106, 1348–1357.

- David Wu, C.B. Expanding Tele-rehabilitation of Stroke Through In-home Robot-assisted Therapy. Int. J. Phys. Med. Rehabil. 2013, 2.

- Mishra, A.; Mishra, A.; Khaparkar, S. IOT Based Real Time Tele-healthcare System. Glob. J. Res. Anal. 2023, 12, 59–64.

- Jin, F.; Zou, M.; Peng, X.; Lei, H.; Ren, Y. Deep Learning-Enhanced Internet of Things for Activity Recognition in Post-Stroke Rehabilitation. IEEE J. Biomed. Health Inform. 2023, 1–10.

- Majhi, B.; Kashyap, A. Early Prediction of Parkinson’s Disease Using Motor, Non-Motor Features and Machine Learning Techniques. In Deep Learning, Machine Learning and IoT in Biomedical and Health Informatics; CRC Press: Boca Raton, FL, USA, 2022; pp. 139–155.

- Wang, X.; Xie, J.; Guo, S.; Li, Y.; Sun, P.; Gan, Z. Deep reinforcement learning-based rehabilitation robot trajectory planning with optimized reward functions. Adv. Mech. Eng. 2021, 13, 168781402110670.

- Bhatt, S.P.; Rochester, C.L. Expanding Implementation of Tele-Pulmonary Rehabilitation: The New Frontier. Ann. Am. Thorac. Soc. 2022, 19, 3–5.

- Candemir, I. Tele-pulmonary rehabilitation and remote assessment of exercise capacity. Eurasian J. Pulmonol. 2022, 24, 73–76.

- Morone, G.; Pirrera, A.; Iannone, A.; Giansanti, D. Development and Use of Assistive Technologies in Spinal Cord Injury: A Narrative Review of Reviews on the Evolution, Opportunities, and Bottlenecks of Their Integration in the Health Domain. Healthcare 2023, 11, 1646.

- Jarrassé, N.; Proietti, T.; Crocher, V.; Robertson, J.; Sahbani, A.; Morel, G.; Roby-Brami, A. Robotic exoskeletons: A perspective for the rehabilitation of arm coordination in stroke patients. Front. Hum. Neurosci. 2014, 8, 947.

- Hohl, K.; Giffhorn, M.; Jackson, S.; Jayaraman, A. A framework for clinical utilization of robotic exoskeletons in rehabilitation. J. Neuroeng. Rehabil. 2022, 19, 115.

- Tang, D.; Lv, X.; Zhang, Y.; Qi, L.; Shen, C.; Shen, W. A Review on Soft Exoskeletons for Arm Rehabilitation. Recent Pat. Eng. 2023, 18, e250523217346.

- Kamal, A.; Ismail, Z.; Shehata, I.M.; Djirar, S.; Talbot, N.C.; Ahmadzadeh, S.; Shekoohi, S.; Cornett, E.M.; Fox, C.J.; Kaye, A.D. Telemedicine, E-Health, and Multi-Agent Systems for Chronic Pain Management. Clin. Pract. 2023, 13, 470–482.

- Rangappa, P.; Rao, K.; Carmra, T.; Karanth, S.; Chacko, J. Tele-medicine, tele-rounds, and tele-intensive care unit in the COVID-19 pandemic. Indian J. Med. Spec. 2021, 12, 4.

- Zaher, T. Tele-Medicine in Health Care: A Necessity or Novelty. Afro-Egypt. J. Infect. Endem. Dis. 2022, 103–104.

- Piotrowicz, E.; Stepnowska, M.; Leszczyńska-Iwanicka, K.; Piotrowska, D.; Kowalska, M.; Tylka, J.; Piotrowski, W.; Piotrowicz, R. Quality of life in heart failure patients undergoing home-based telerehabilitation versus outpatient rehabilitation—A randomized controlled study. Eur. J. Cardiovasc. Nurs. 2015, 14, 256–263.

- Gao, Y.; Wang, N.; Zhang, L.; Liu, N. Effectiveness of home-based cardiac telerehabilitation in patients with heart failure: A systematic review and meta-analysis of randomized controlled trials. J. Clin. Nurs. 2023, 32, 7661–7676.

- Zhong, W.; Fu, C.; Xu, L.; Sun, X.; Wang, S.; He, C.; Wei, Q. Effects of home-based cardiac telerehabilitation programs in patients undergoing percutaneous coronary intervention: A systematic review and meta-analysis. BMC Cardiovasc. Disord. 2023, 23, 101.

- Miura, H.; Shimada, Y.; Fukui, N.; Ikariyama, H.; Togashi, T.; Yamada, S.; Konishi, H.; Aoki, T.; Nakanishi, M.; Noguchi, T. Disease management using home-based cardiac rehabilitation for patients with heart failure. J. Cardiol. Cases 2023, 28, 157–160.

- Levine, B.A.; McAlinden, E.; Hu, T.M.-J.; Fang, F.M.; Alaoui, A.; Angelus, P.; Welsh, J.; Mun, S.K. Home Monitoring of Congestive Heart Failure Patients. In Proceedings of the 1st Transdisciplinary Conference on Distributed Diagnosis and Home Healthcare, 2006. D2H2., Marriott Crystal Gateway Hotel, Arlington, Virginia, 2–4 April 2006; IEEE: Piscataway, NJ, USA, 2006; pp. 33–36.

- Tabak, M.; Vollenbroek-Hutten, M.; Valk, P.; Palen, J.; Hermens, H. A telerehabilitation intervention for patients with Chronic Obstructive Pulmonary Disease: A randomized controlled pilot trial. Clin. Rehabil. 2013, 28, 582–591.

- Fandim, J.V.; Costa, L.O.; Yamato, T.P.; Almeida, L.; Maher, C.G.; Dear, B.; Kamper, S.J.; Saragiotto, B.T. Telerehabilitation for neck pain. Cochrane Database Syst. Rev. 2021.

- De Marchi, F.; Contaldi, E.; Magistrelli, L.; Cantello, R.; Comi, C.; Mazzini, L. Telehealth in Neurodegenerative Diseases: Opportunities and Challenges for Patients and Physicians. Brain Sci. 2021, 11, 237.

- Fennelly, O.; Cunningham, C.; Grogan, L.; Cronin, H.; O’shea, C.; Roche, M.; Lawlor, F.; O’hare, N. Successfully implementing a national electronic health record: A rapid umbrella review. Int. J. Med. Inform. 2020, 144, 104281.

- Johnson, R.W.; Williams, S.A.; Gucciardi, D.F.; Bear, N.; Gibson, N. Can an online exercise prescription tool improve adherence to home exercise programmes in children with cerebral palsy and other neurodevelopmental disabilities? A randomised controlled trial. BMJ Open 2020, 10, e040108.

- Akbari, A.; Haghverd, F.; Behbahani, S. Robotic Home-Based Rehabilitation Systems Design: From a Literature Review to a Conceptual Framework for Community-Based Remote Therapy During COVID-19 Pandemic. Front. Robot. AI 2021, 8, 612331.

- Razfar, N.; Kashef, R.; Mohammadi, F. Automatic Post-Stroke Severity Assessment Using Novel Unsupervised Consensus Learning for Wearable and Camera-Based Sensor Datasets. Sensors 2023, 23, 5513.

- Hao, J.; Pu, Y.; Chen, Z.; Siu, K.-C. Effects of virtual reality-based telerehabilitation for stroke patients: A systematic review and meta-analysis of randomized controlled trials. J. Stroke Cerebrovasc. Dis. 2023, 32, 106960.

- Shen, C.; Gonzalez, Y.; Klages, P.; Qin, N.; Jung, H.; Chen, L.; Nguyen, D.; Jiang, S.; Jia, X. Intelligent inverse treatment planning via deep reinforcement learning, a proof-of-principle study in high dose-rate brachytherapy for cervical cancer. Phys. Med. Biol. 2019, 64, 115013.

- Yau, K.-L.; Chong, Y.-W.; Fan, X.; Wu, C.; Saleem, Y.; Lim, P.C. Reinforcement Learning Models and Algorithms for Diabetes Management. IEEE Access 2023, 11, 28391–28415.

- Stasolla, F.; Lopez, A.; Akbar, K.; Vinci, L.A.; Cusano, M. Matching Assistive Technology, Telerehabilitation, and Virtual Reality to Promote Cognitive Rehabilitation and Communication Skills in Neurological Populations: A Perspective Proposal. Technologies 2023, 11, 43.

- Whiley, P. Markov Decision Process. IMA J. Manag. Math. 1992, 4, 395.

- Huang, Y. Deep Q-Networks. In Deep Reinforcement Learning; Springer: Singapore, 2020; pp. 135–160.

- Sewak, M. Deterministic Policy Gradient and the DDPG. In Deep Reinforcement Learning; Springer: Singapore, 2019; pp. 173–184.

- Anupama, R.; Shaji, A.P.; George, N.; Shihabudeen, S.; Antony, A.; Rishikesh, P.H. Telepresence Robot. SSRN Electron. J. 2021.

- Naseer, F.; Nasir Khan, M.; Nawaz, Z.; Awais, Q. Telepresence Robots and Controlling Techniques in Healthcare System. Comput. Mater. Contin. 2023, 74, 6623–6639.

- Davin, K.; Mollere Doucet, B.M. Telepresence Robotics in OT Education. Am. J. Occup. Ther. 2023, 77 (Suppl. 2), 7711505192p1.

- Yang, L.; Jones, B.; Neustaedter, C.; Singhal, S. Shopping Over Distance through a Telepresence Robot. Proc. ACM Hum.-Comput. Interact. 2018, 2, 191.

- Nordtug, M.; Johannessen, L.E. The social robot? Analyzing whether and how the telepresence robot AV1 affords socialization. Converg. Int. J. Res. New Media Technol. 2023, 29, 1683–1697.

| Research Paper | Technology Used | Advantages | Disadvantages |
|---|---|---|---|
| [1] | Telehealth robotic system | | |
| [5] | Telehealth swallowing assessment tool | | |
| [6] | Robotic gait therapy | | |
| [7] | Robotic exoskeleton | | |
| [16] | Deep reinforcement learning for dose optimization | | |
| [17] | Deep reinforcement learning for insulin optimization | | |
| [This Study] | Telepresence robot with mechanical arm | | |

| Technical Specifications | Unit | Value (or Min–Max Range) |
|---|---|---|
| Robot speed | m/s | 0–3.25 |
| Robot momentum | N·m | 0–0.93 |
| Robot height | ft, in | 5′3″ |
| Robot width | ft, in | 1′5″ |
| Robot breadth | ft, in | 0′6″ |
| Robot weight | kg | 14 |
| Robot battery | Ah | 35 |

Table 3. Force sensor specifications.

| Technical Specifications | Details |
|---|---|
| Sensor Type | Force-Sensing Resistor (FSR) (piezoelectric sensor) |
| Interface | Arduino-Compatible |
| Force Range | 0.2 N to 20 N |
| Sensitivity | 0.1 N |
| Response Time | <5 ms |
| Operating Temperature | −30 °C to +70 °C |
| Dimensions | Diameter: 15 mm, Thickness: 0.2 mm |
| Output | Analog Voltage |
| Application | Measuring force exerted by patient’s hand |
| Additional Features | Durable, Flexible |

Table 4. Angle sensor specifications.

| Technical Specifications | Details |
|---|---|
| Sensor Type | Flex Sensor |
| Interface | Arduino-Compatible |
| Bend Detection Range | 0° to 90° |
| Sensitivity | Change in resistance with bend |
| Response Time | <10 ms |
| Operating Temperature | −40 °C to +85 °C |
| Dimensions | Length: 55 mm, Width: 6 mm |
| Output | Analog Resistance Change |
| Application | Measuring angle of joint movement |
| Additional Features | Thin, Lightweight, Flexible |

| Demographic | Details |
|---|---|
| Total Patients | 406 |
| Gender Distribution | Male: 384 (94.6%); Female: 22 (5.4%) |
| Average Age | 28.38 years |
| Most Common Cause | Motorcycle Accidents (79%) |
| Lesion Location | Right Plexus: 45.9%; Left Plexus: 54.1% |
| Type of Lesion | Complete: 46.1%; C5/C6 Roots: 30.1%; C5/C6/C7 Roots: 20.9%; Lower Roots (C8/T1): 2.9% |
| Associated Injuries | Head Trauma: 34.2%; Long Bones: 38.8%; Clavicle Fractures: 25.9%; Thoracic Trauma: 12.9% |


© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Khan, M.N.; Altalbe, A.; Naseer, F.; Awais, Q. Telehealth-Enabled In-Home Elbow Rehabilitation for Brachial Plexus Injuries Using Deep-Reinforcement-Learning-Assisted Telepresence Robots. Sensors 2024, 24, 1273. https://doi.org/10.3390/s24041273