1. Introduction

Due to insufficient light intensity or performance limitations of imaging equipment, low-light images inevitably exist in many machine vision applications, such as intelligent monitoring, automatic driving and mine monitoring. Especially in the field of space-based remote sensing, a large object distance makes it difficult for the sensor to receive enough energy to obtain images with high brightness. Although extending the exposure time can play a role in improvement, carriers such as satellites generally need to maintain a moving state, and it is difficult to meet the needs of both aspects at the same time. Low-light image enhancement (LLIE) helps alleviate the requirement of the movement mechanism to obtain high-quality image information. In addition to low brightness, low-light images often have problems such as poor contrast and strong noise, which hinder subsequent analysis and processing, so it is of great significance to study the enhancement of low-light images. So far, many methods have been proposed to realize LLIE. They can be broadly divided into two categories: traditional methods and deep learning-based methods.

Traditional low-light image enhancement methods are mainly based on histogram equalization [

1,

2,

3], Retinex theory [

4,

5,

6], wavelet transform [

7], defogging or dehazing models [

8,

9,

10] or multi-graph fusion [

11]. The first two methods have been studied the most. Although the histogram equalization method has a simple principle and fast calculation, the enhancement effect is poor. Methods based on Retinex theory usually divide a low-light image into a reflection component and an illumination component based on some prior knowledge, among which the estimated reflection component is the enhancement result. However, the ideal assumption of the reflection component as the enhancement result is not always true, which may lead to degraded enhancement, with effects such as the loss of detail or color distortion. In addition, it is very difficult to find effective prior knowledge, and inaccurate prior knowledge may lead to artifacts or color deviations in the enhancement results. In addition to these mainstream research directions, LECARM [

12] thoroughly studied an LLIE architecture based on the response characteristics of the camera. It analyzed the camera response model parameters and estimated the exposure ratio of each pixel using illumination estimation technology to realize the enhancement of low-light images. But, the exposure ratio estimation method used by LECARM is LIME [

13] based on Retinex theory. Various parameters need to be designed manually, which is difficult to control.

Since KG Lore et al. proposed LLNet [

14] in 2017, low-light image enhancement methods based on deep learning have attracted the attention of more and more researchers. Compared with traditional methods, deep learning-based solutions often have better accuracy. Such methods generally use a large set of labeled pairwise data to drive a pre-defined network to extract image features and realize enhancement. Some dehazing methods can also be used to improve brightness. SID-Net [

15] integrates adversarial learning and contrastive learning into a unified net to efficiently enhance pictures. More can be found in [

16]. However, in the field of LLIE, it is difficult to determine a fixed standard for the target image, and it is extremely difficult to obtain ideal paired data. Moreover, preset target images will drive the model to learn more unique features of the dataset and reduce the generalization ability. In this respect, zero-reference methods in the form of unsupervised learning can enhance low-light input images without requiring references from the dataset, which reduces the dependence on the dataset and is beneficial in expanding the adaptation scenarios of the model. Based on the idea of generation and confrontation, a zero-reference attention-guided U-Net network was designed in EnlightenGAN [

17]. It uses a global discriminator, a local discriminator and self-feature preserving loss to restrain the training process. The ZeroDCE [

18] proposed by Guo et al. uses a variety of images taken at different exposures to train a lightweight depth curve estimation network. A series of elaborately designed loss functions are used to realize training without references.

Although each method can achieve a certain degree of enhancement, there are still some problems. EnlightenGAN establishes the model based on a complete U-Net, which is relatively complex and is not conducive to real-time applications. The fixed-exposure loss function used by ZeroDCE ignores the brightness distribution in the original images, which will limit the contrast of the results. And, its enhancement process requires many iterations, which is contrary to high-speed processing. The subsequently proposed ZeroDCE++ [

19] improves the speed by compressing the size of input images and reducing the number of convolution operations. But, it is easy to lose important information when the scale of targets of interest is small. Most other similar methods also use multiple iterations [

18,

19,

20,

21] or complicated model structures [

17,

22] to achieve enhancement, limiting the processing speed of the algorithm.

In order to solve the above problems, this paper proposes the Zero-Reference Camera Response Network (ZRCRN). Inspired by the CRM, the ZRCRN realizes an efficient framework. Without multiple iterations, it just uses a simple network structure to achieve high-quality enhancement, which effectively improves the processing speed. Two reference-free loss functions are also designed to restrict the training process to improve the generalization ability of the model.

The main contributions of this research can be summarized as follows:

A fast LLIE method is proposed, namely, the ZRCRN, which establishes a double-layer parameter-generating network to automatically extract the exposure ratio of a camera response model to realize enhancement. The process is simplified, and the speed can reach more than twice that of similar methods. In addition, the ZRCRN can still obtain an accuracy comparable to SOTA methods.

A contrast-preserving brightness loss is proposed to retain the brightness distribution in the original input and enhance the contrast of the final output. It converts the input image to a single-channel brightness diagram to avoid serious color deviations caused by subsequent operations and linearly stretches the brightness diagram to obtain the expected target. It can effectively improve the brightness and contrast without requiring references from the dataset, which can improve the generalization ability of the model.

An edge-preserving smoothness loss is proposed to remove noise and enhance details. The variation trend in the input image pixel values is selectively promoted or suppressed to reach the desired goal. While maintaining the advantage of zero references, it can also drive the model to achieve the effect of sharpening and noise reduction, further refining its performance.

The rest of this paper is organized as follows. The related works on low-light image enhancement are reviewed in

Section 2. In

Section 3, we introduce the details of our method. Numerous experiments on standard datasets have been implemented to evaluate the enhancement performance and are presented in

Section 4. The discussion and conclusion are finally given in the last two sections.

3. Methods

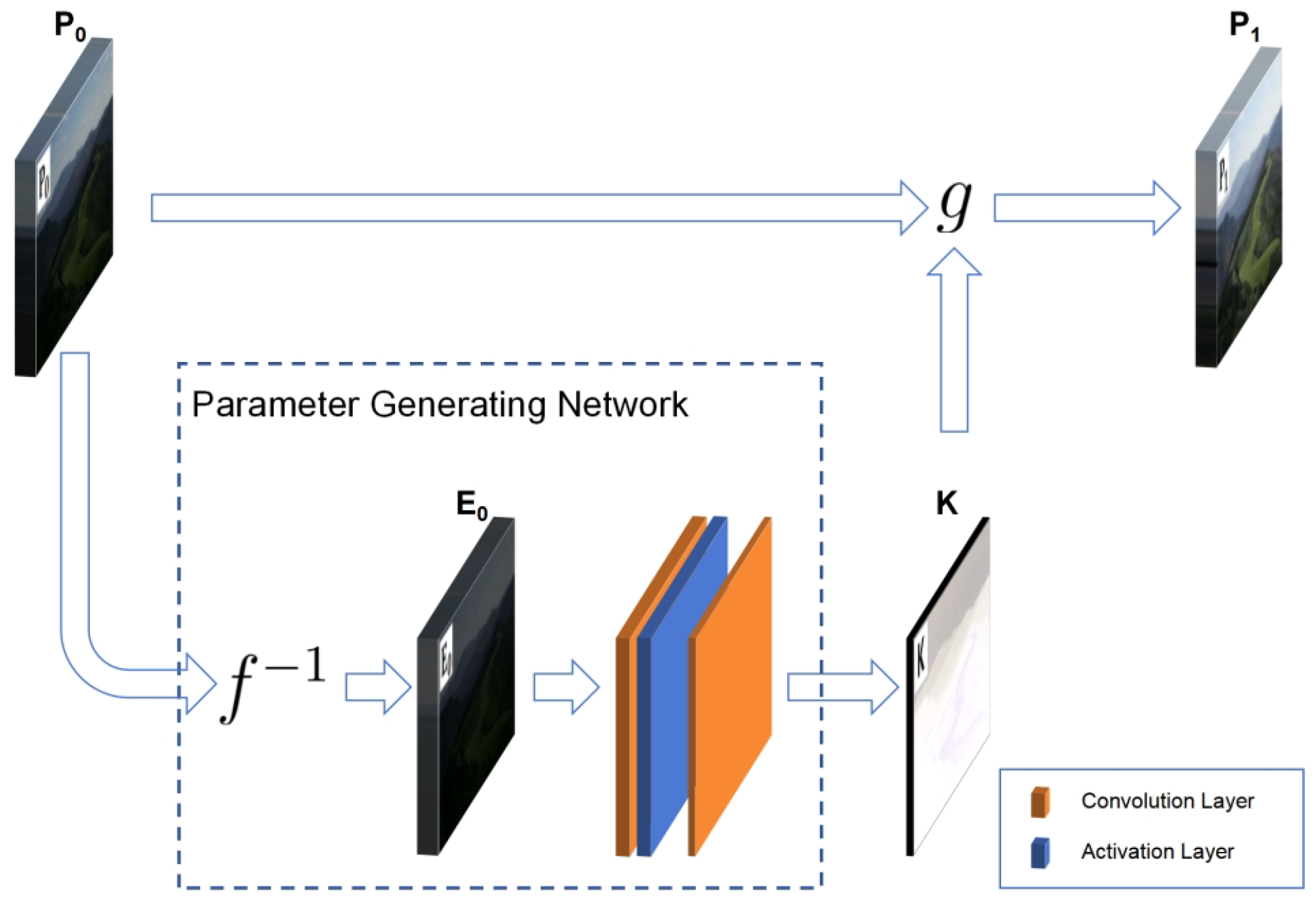

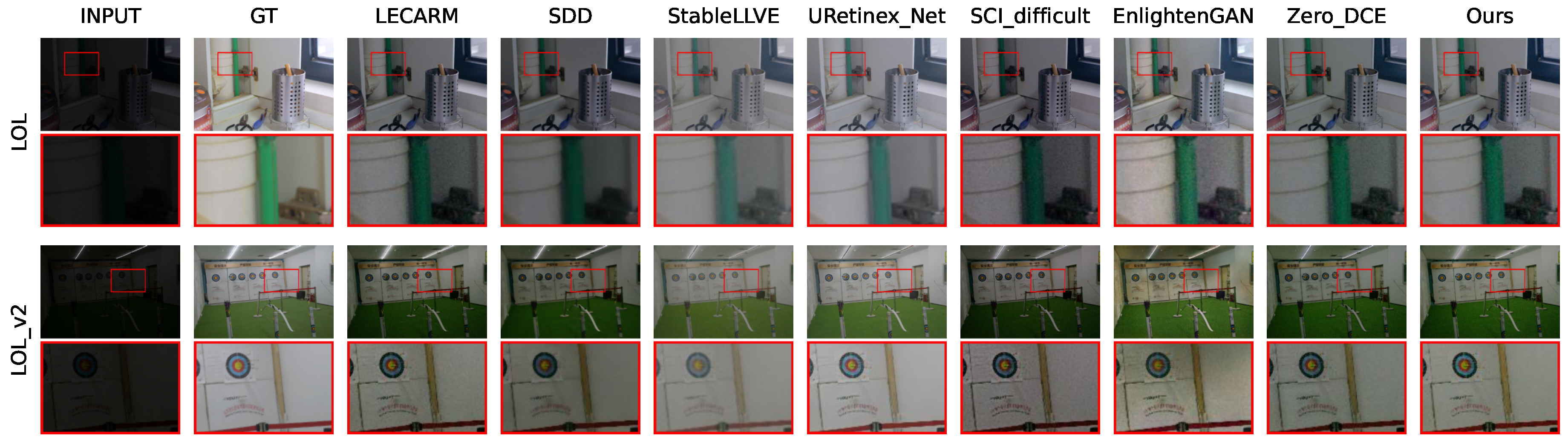

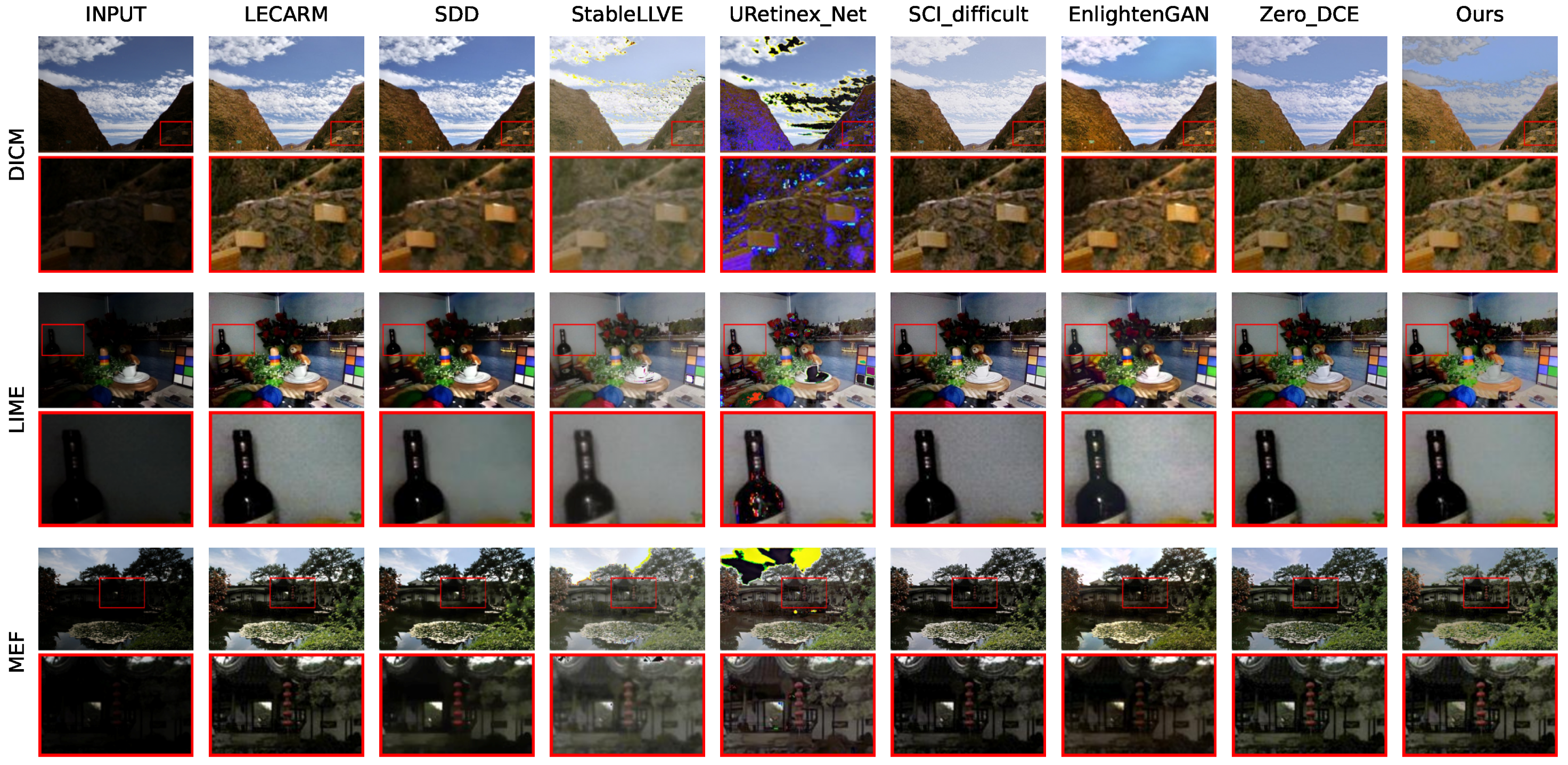

The framework of the ZRCRN is shown in

Figure 2. Based on the CRM, the original low-light input image

is first inverted to the radiation map

according to the sigmoid CRF to reduce complexity. A streamlined parameter-generating network (PGN) is then established to extract the pixel-wise exposure ratio

from the radiation map. Finally, the corresponding BTF calls

to perform one transformation on

to achieve LLIE. The CRM, including the sigmoid CRF, is shown in Equation (

3). The designs of the parameter-generating network and the loss functions are detailed below.

3.1. Parameter-Generating Network

The image quality in low-light scenarios can be improved by adjusting the camera exposure parameters. Extending the exposure time or increasing the aperture size can increase both the brightness and contrast of the final image. At the same time, according to the CRM, the adjustment of exposure parameters can be represented by the parameter k. As long as an appropriate k can be obtained, LLIE can be realized by the CRF or BTF.

In LLIE tasks,

k represents the exposure ratio between the low-light image and the normal-light image. The ideal normal-light image should have a fixed average brightness, and the camera needs to be configured to different exposures for different scenes to obtain this target value. In practice, the scene and exposure of the low-light image are fixed, so the exposure ratio should be a function of the low-light input image. In addition, considering that low-light images often have the problem of uneven illumination, which also needs to be tackled in the enhancement, the optimal exposure ratio should vary at different coordinates. Accordingly, the exposure ratio should be a matrix matching

pixel by pixel, and the objective function

should meet the following relationship:

in which

is the exposure ratio matrix. Although

can be obtained from the features extracted from

directly, a deeper and more complex model may be needed to achieve a good fit since

is obtained by the camera through a CRF transformation, and the CRF is a collection of many transformations. In addition, the main function of

is to raise the environmental radiation to the level of the normal illumination scene, which is independent of the complex CRF transformation inside the camera. Therefore, the exposure ratio parameter

required by the enhancement can be obtained by the following process: first, inversely transform

by the CRF to obtain the radiation map in the low-light scene (

), and then extract

from

. This process can be expressed as the following equation:

At this time, the required objective function (

) has weaker nonlinearity, which is conducive to achieving a better fit using a simpler model and improving the processing speed.

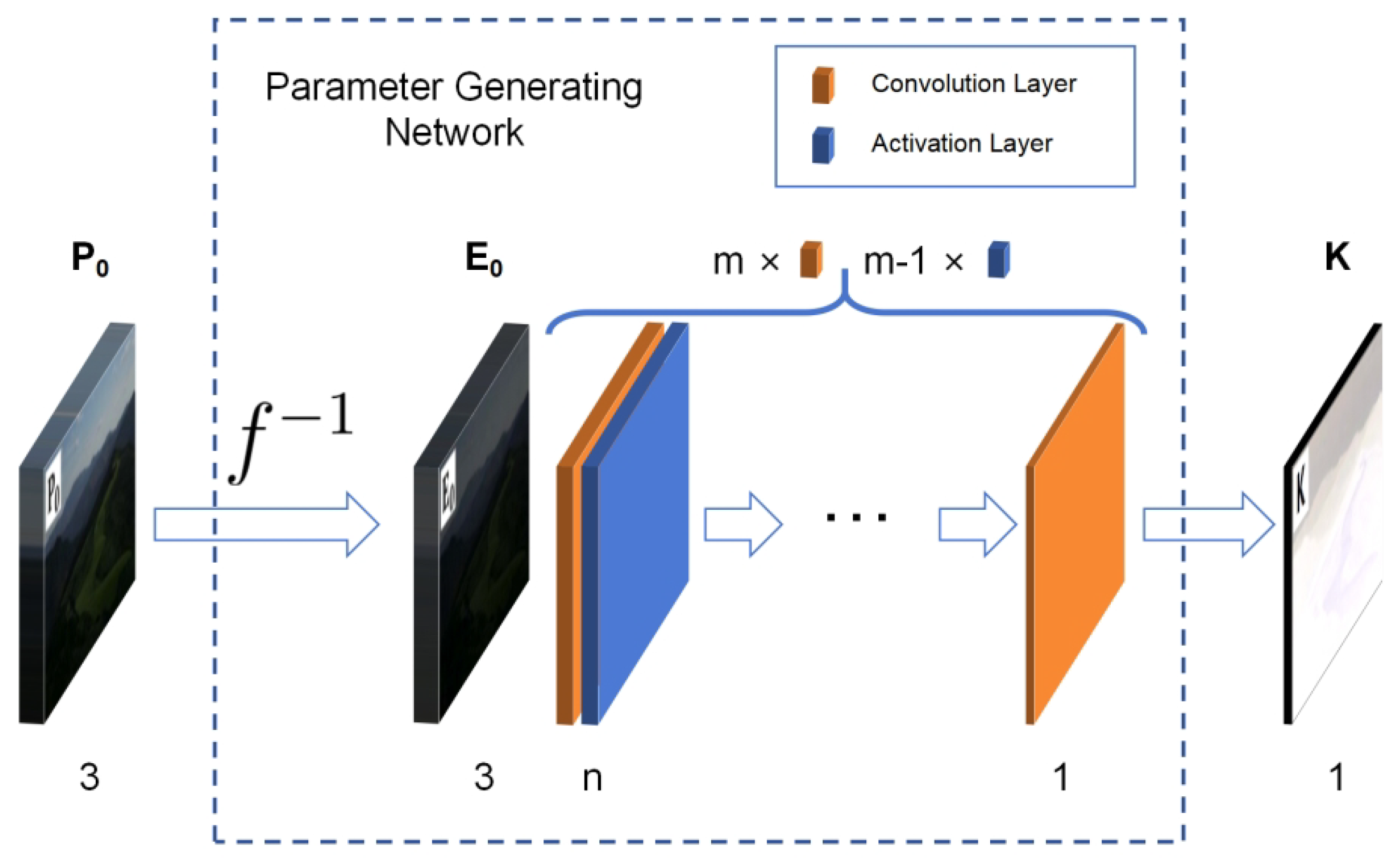

Convolution requires very few parameters to achieve the extraction of important information in the matrix. It has the characteristics of translation invariance and locality and is widely used in the field of image processing. The PGN is built using

m convolution layers and

activation layers, with each convolution layer followed by one activation layer, except for the last one. The kernel size of the convolution layer is

. The height and width of the output image remain unchanged by padding. The activation layer uses the LeakyReLU function with a negative slope of 0.01 as the activation function. The first convolution layer has 3 channels. The last has 1 channel. All output channels of the other convolution layers are

n. The overall parameter-generating network structure is shown in

Figure 3. A larger

m or

n can improve the representation ability of the model. Their best values were determined to be 2 and 2 through experiments described in the next section.

3.2. Loss Function

The loss function plays an important role in learning-based methods. It controls the direction of the training process. With it, a model can acquire specific abilities. A loss function should be derivable to implement backpropagation. The MSE is a commonly used loss function. It measures the distance between two instances and is globally derivable. But, it needs a reference, which is not always available in a dataset. Considering that some traditional methods have unique advantages in enhancing specific properties of an image, we propose two loss functions to generate references according to the low-light inputs.

Aiming at the three main problems of low brightness, low contrast and strong noise, two loss functions are designed to constrain the training process: contrast-preserving brightness loss and edge-preserving smoothness loss. Both are essentially MSE loss functions. Some attributes of the original image are separately extracted to construct the target image with restricted enhancement. Then, the corresponding properties are calculated from the output of the network. The deviation between these two elements is minimized so as to achieve the enhancement effect.

3.2.1. Contrast-Preserving Brightness Loss

The original low-light image () has three RGB channels and low pixel values in each channel. The brightness map is extracted from , and linear stretching is performed to obtain the target brightness map. The specific process is as follows:

In order to reduce the impact on color information, the RGB channel is fused into a single channel to obtain the brightness map. The conversion formula uses the following, which is more suitable for human perception:

where

represents the value of the

i-th pixel in the image. It is a vector consisting of a red channel value (

), a green channel value (

) and a blue channel value (

).

After applying

to each pixel of

, the brightness map (

) is dark. Then, it is linearly stretched to enhance the brightness and contrast. The following equation is used to extend the pixel values of

linearly to the

interval to obtain the expected brightness map (

):

where

and

represent the maximum and minimum values of all elements in the argument matrix. Considering the purpose of increasing the brightness,

b can be directly set as the theoretical maximum value of 1. Since the brightness distribution of the original image is concentrated close to the minimum value, the value of

a should be more carefully determined. The larger

a is, the smaller the width of the brightness distribution interval and the lower the contrast. If

a is too small, the visual effect of the target image will obviously be darker, and the corresponding model will not effectively enhance the brightness of the original image. The best value of

a was determined to be 0.2 through experiments described in the next section.

Once

is obtained, the contrast-preserving brightness loss can be calculated by the following equation:

where

N represents the total count of pixels in the image.

denotes the

i-th element (including three RGB channels) of the enhancement result (

).

is the

i-th element of

. The brightness distribution of a low-light image is generally concentrated in a small range close to zero. Linearly stretching it to

can improve the brightness and contrast. Compared with the commonly used fixed target brightness of 0.6 [

18,

19,

21,

22], the contrast-preserving brightness loss is more conducive to retaining the spatial distribution information of the brightness in the original image and improving the contrast of the enhancement results.

3.2.2. Edge-Preserving Smoothness Loss

In addition to the brightness and contrast, noise is also a major problem in low-light images. Suppressing the variation trend in the input image pixel values can remove noise. The variation trends in the image pixel values are always represented as a gradient diagram. Noise appears as a large number of non-zero isolated points in the gradient diagram. To realize proper noise reduction, the gradient map and edge map are generated based on , the values in the non-edge region of the gradient map are set to zero and the gradient in the edge region is properly amplified to obtain the target gradient map. Specifically, it includes the following three steps:

Edge Detection. The main basis for generating the edge diagram is the Canny algorithm [

32]. Firstly, horizontal and vertical Gaussian filters are applied to

to suppress the influence of noise on edge detection. The size and standard deviation of the filter inherit the default parameters of 5 and 1. Then, the gradient information is extracted. The horizontal and vertical Sobel operators of size

are used to calculate the gradient diagram in two directions of each of the three RGB channels from the filtered result. And, the amplitude of the total gradient at each position is obtained by summing the gradient amplitudes in each channel. Next, the double-threshold algorithm is used to find the positions of the strong and weak edges. Finally, the edge diagram (

) is obtained by judging the connectivity and removing the isolated weak edge. The edge refinement operation with non-maximum suppression from the original Canny algorithm is skipped. So, there is no need to calculate the direction of the total gradient. The main purpose is to keep the gradient edge with a larger width in the original image and make the enhancement result more natural. The two thresholds

and

of the double-threshold algorithm have a great influence on the edge detection results. Their best values, as determined by the experiments described in the next section, are 0.707 and 1.414, respectively.

Gradient Extraction. The horizontal and vertical Sobel operators are applied directly to

to obtain a total of six gradient maps in three channels and two directions. Then, the total gradient amplitude (the sum of the gradient amplitudes in all channels) is calculated as the pixel value in the final gradient map (

). The entire gradient extraction process can be expressed as the following equation:

Selectively Scaling. The edge diagram (

) is used as a mask to multiply the gradient map (

) element by element, which can filter out the non-edge part of

. Then, the result is scaled by an amplification factor (

) to obtain the expected target image (

):

where ⨀ indicates the multiplication of two metrics pixel by pixel. The gradient at the edge of the low-light image will be enhanced correspondingly after the brightness is enhanced. So, the target gradient map should also amplify this part. The best value of

was determined to be 2 by the experiments described in the next section.

After obtaining

, denoting the total count of elements in the image by

N, the edge-preserving smoothness loss can be calculated by the following formula:

Although each step in the calculation of the loss function is derivable, the total gradient amplitude is solved by the root operation, and the problem of dividing by 0 can easily occur. In practice, an additional minimum of 1 × 10−8 is added to the base number to ensure that the training process is continuous.

The gradient map of the low-light image often contains a large number of non-zero isolated points. Making them close to zero can achieve smoothness. Enlarging the gradients at the edge positions is beneficial in retaining the details of the original image. To the best of our knowledge, in zero-reference LLIE, the loss function is currently less able to suppress noise. Although some work tried to constrain the brightness difference in the neighborhood, the main objective of increasing the consistency of neighborhood brightness differences between the result and the original image [

18,

19] is to maintain the brightness distribution trend. If the brightness difference tends to zero [

21,

27,

28], it will lead to global smoothing, the easy loss of details and lower contrast. The edge-preserving smoothness loss attempts to separate the edge region specifically, enhancing the variation in this area and suppressing others to build references, which can further improve precision.

3.2.3. Total Loss Function

Finally, according to the multi-loss combination strategy proposed by A. Kendall et al. [

30], two learnable parameters are added to combine the two loss functions together to obtain the total loss function:

where p and q are two learnable parameters with an initial value of zero. To a certain extent, they can release us from the adjustment of weights and improve precision.

In practice, the expected target images required for each loss can be calculated according to the images in the training dataset before formal training and directly loaded during training to enhance efficiency.