Research on Convolutional Neural Network Inference Acceleration and Performance Optimization for Edge Intelligence

Abstract

1. Introduction

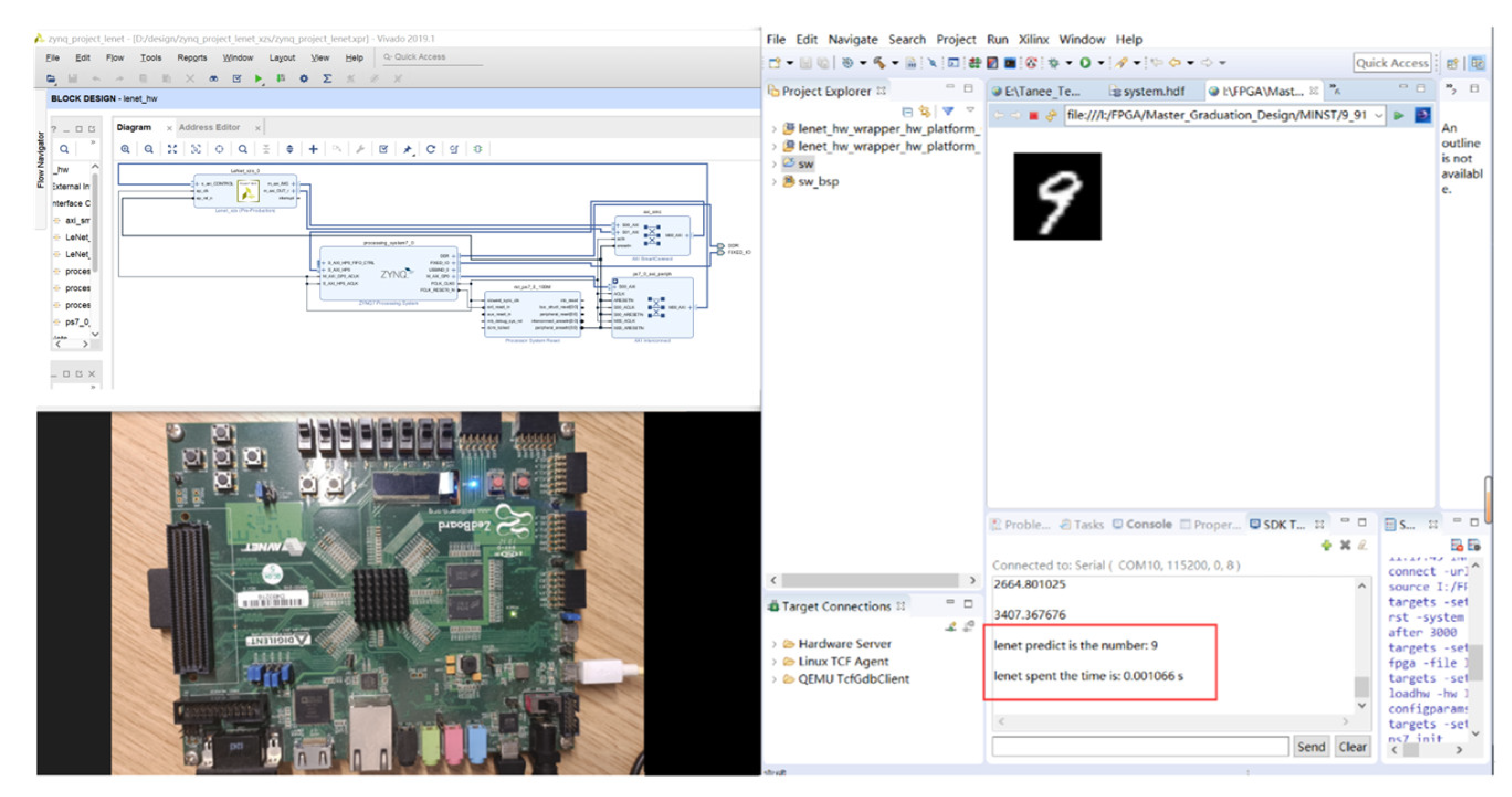

- We designed energy-efficient accelerators for the LeNet-5 network using Vivado high-level synthesis (HLS), implementing convolutional calculations, activation, pooling, and fully connected operations on the PL side.

- We applied Gaussian filtering and histogram equalization on the PS side to suppress image noise and increase the contrast between target characters and the background, so that character details are easier for the LeNet-5 convolutional neural network on the FPGA platform to recognize.

- We quantized weight parameters and analyzed resource consumption for different data types to determine the optimal solution. We then transformed our fixed-point quantization into a parameterized quantization to ensure compatibility with various FPGA platforms.

- We designed two different optimization schemes for the convolution calculations and compared the experimental results, which show that the designed accelerators run faster and consume less power than platforms such as a CPU.
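
The parameterized fixed-point quantization mentioned in the contributions can be illustrated with a small host-side sketch. The `Fixed` template, its member names, and the round-to-nearest with saturation policy are assumptions made for illustration; they are not taken from the paper. In a Vivado HLS design the same role is usually played by the `ap_fixed<W, I>` type from `ap_fixed.h`, which is avoided here so that the sketch compiles with an ordinary C++ compiler.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical helper: signed fixed-point value with TOTAL_BITS bits in total,
// FRAC_BITS of which are fractional. Changing the two template parameters is
// what "parameterized" means in this sketch.
template <int TOTAL_BITS, int FRAC_BITS>
struct Fixed {
    static_assert(TOTAL_BITS > 1 && TOTAL_BITS <= 16, "raw value is kept in int32_t");
    static_assert(FRAC_BITS >= 0 && FRAC_BITS < TOTAL_BITS, "one bit is the sign bit");

    int32_t raw;  // stored integer, equal to value * 2^FRAC_BITS

    static constexpr int32_t max_raw() { return (1 << (TOTAL_BITS - 1)) - 1; }
    static constexpr int32_t min_raw() { return -(1 << (TOTAL_BITS - 1)); }

    // Quantize a float: scale, saturate to the representable range, round to nearest.
    static Fixed from_float(float v) {
        assert(!std::isnan(v));
        const float scaled = v * static_cast<float>(1 << FRAC_BITS);
        if (scaled >= static_cast<float>(max_raw())) return Fixed{max_raw()};
        if (scaled <= static_cast<float>(min_raw())) return Fixed{min_raw()};
        return Fixed{static_cast<int32_t>(std::lround(scaled))};
    }

    // Map the stored integer back to a float for accuracy comparisons.
    float to_float() const {
        return static_cast<float>(raw) / static_cast<float>(1 << FRAC_BITS);
    }
};
```

Retargeting to a platform with fewer resources would then amount to changing the template arguments, for example from `Fixed<16, 8>` to `Fixed<8, 4>`, and re-checking recognition accuracy.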

2. Related Work

3. Methodology

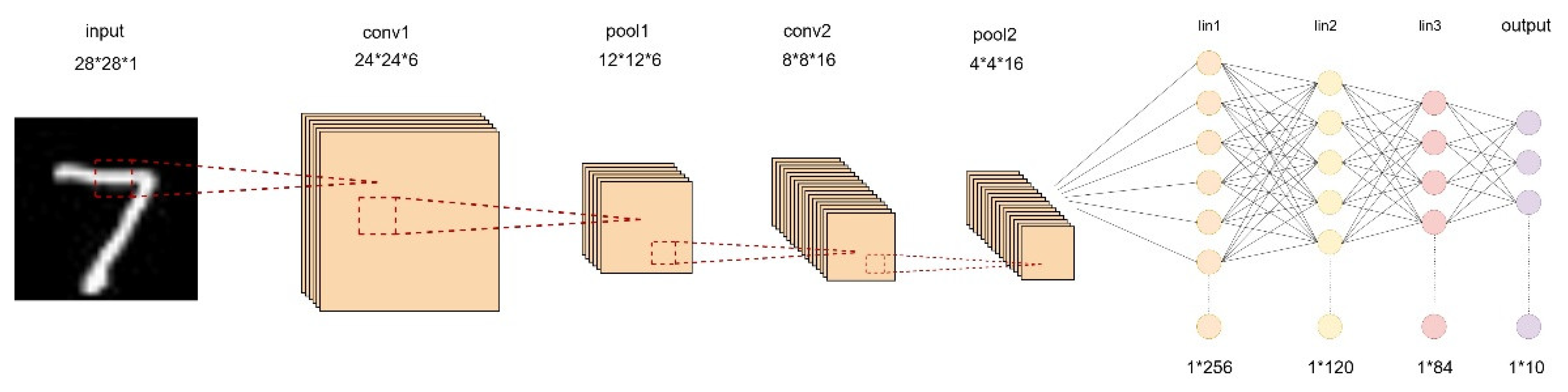

3.1. Optimization of the LeNet-5 Model

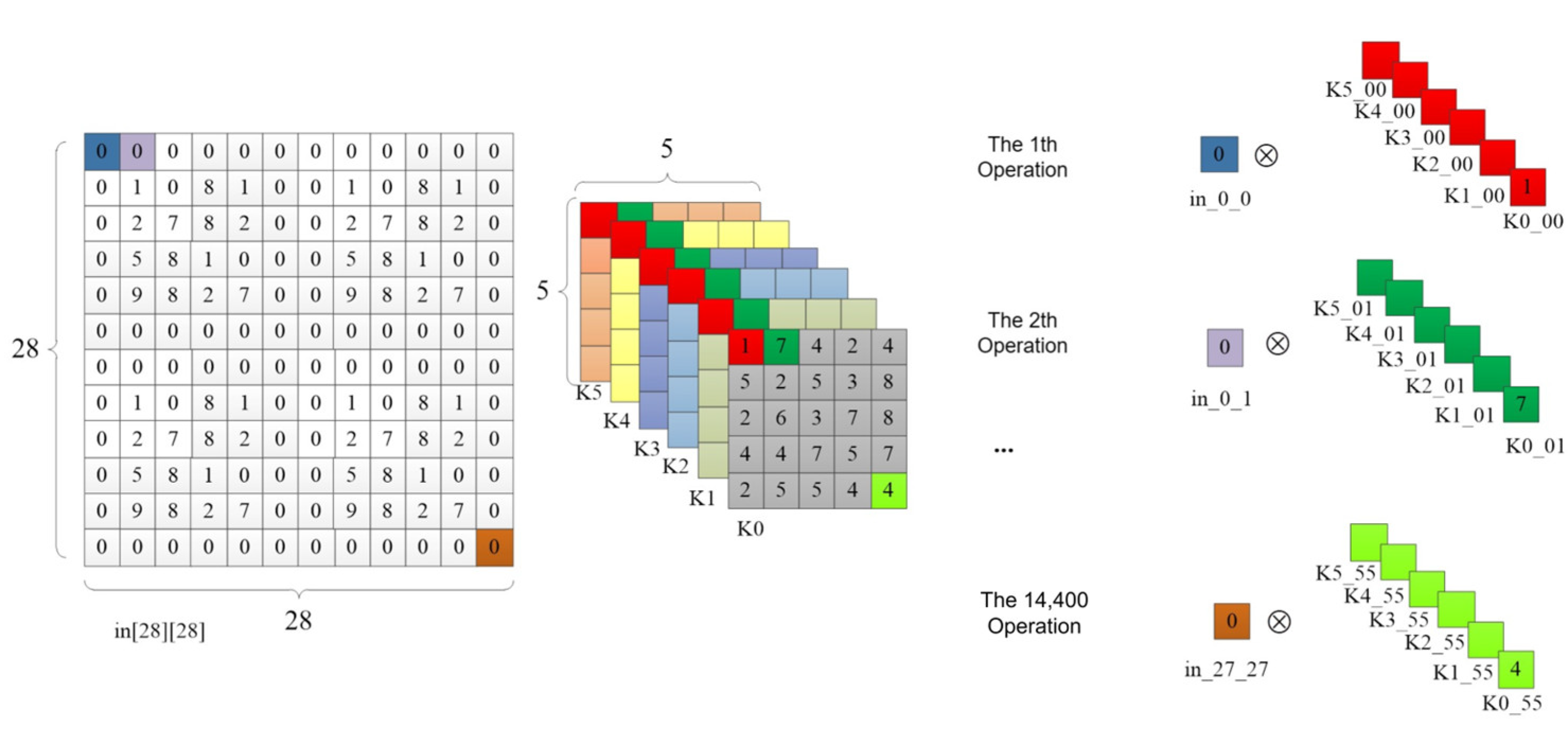

3.2. Convolution Calculation

3.3. Image Enhancement Algorithms
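
The PS-side preprocessing named in the contributions combines Gaussian filtering with histogram equalization. As a rough illustration of the second step only, the following sketch equalizes an 8-bit grayscale image using the standard cumulative-histogram mapping. The function name, the flat `std::vector` image layout, and the rounding choice are assumptions for illustration and do not reproduce the authors' implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Equalize an 8-bit grayscale image in place (hypothetical helper).
// Each gray level v is remapped to round((cdf(v) - cdf_min) / (N - cdf_min) * 255),
// where cdf is the cumulative histogram and N is the pixel count.
void equalize_histogram(std::vector<uint8_t>& pixels) {
    if (pixels.empty()) return;

    // Histogram of gray levels.
    std::size_t hist[256] = {0};
    for (uint8_t p : pixels) hist[p]++;

    // Cumulative histogram and its first nonzero value.
    std::size_t cdf[256];
    std::size_t running = 0;
    std::size_t cdf_min = 0;
    for (int v = 0; v < 256; v++) {
        running += hist[v];
        cdf[v] = running;
        if (cdf_min == 0 && hist[v] != 0) cdf_min = running;
    }

    const std::size_t total = pixels.size();
    if (total == cdf_min) return;  // single gray level: nothing to stretch
    assert(total > cdf_min);
    const std::size_t range = total - cdf_min;

    // Lookup table with integer round-to-nearest.
    uint8_t lut[256];
    for (int v = 0; v < 256; v++) {
        if (cdf[v] < cdf_min) {
            lut[v] = 0;  // levels below the darkest pixel never occur
        } else {
            lut[v] = static_cast<uint8_t>(((cdf[v] - cdf_min) * 255 + range / 2) / range);
        }
    }
    for (uint8_t& p : pixels) p = lut[p];
}
```

A low-contrast input such as gray levels 100, 100, 110, 120 is stretched across the full 0 to 255 range, which is the contrast gain between characters and background that the preprocessing aims for.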

3.4. CNN Accelerator Strategy

3.4.1. Loop Unrolling

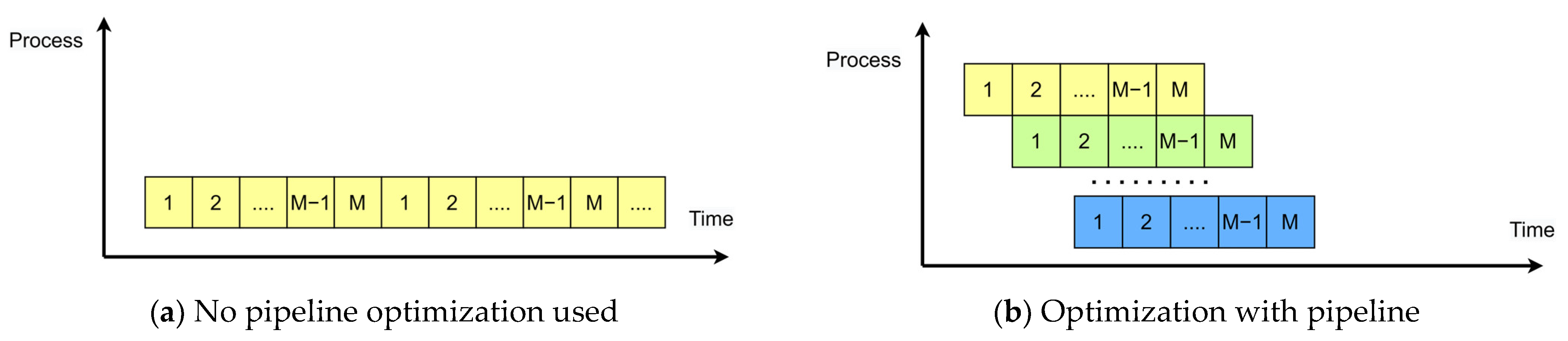

3.4.2. Pipeline Design

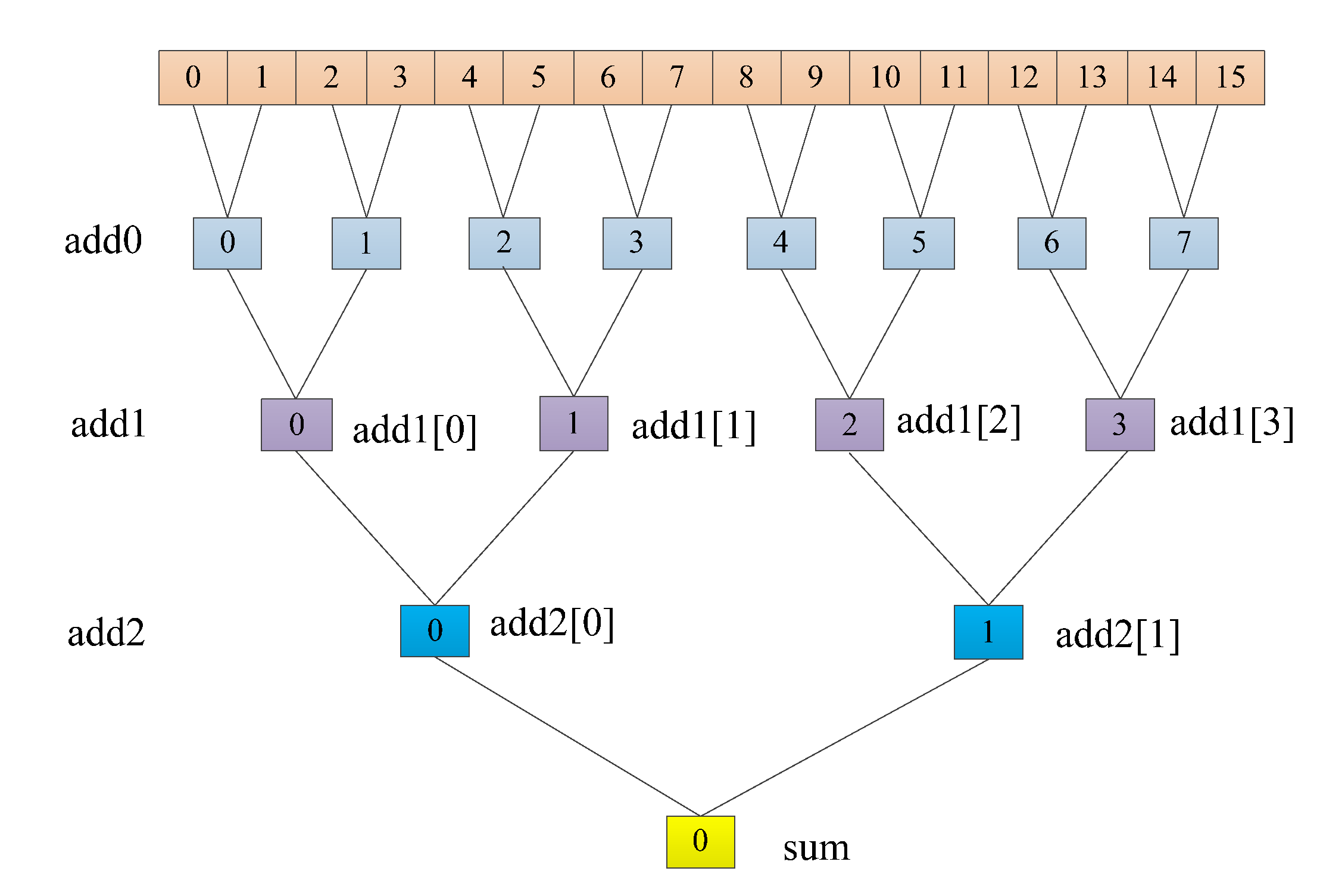

3.4.3. Adder Tree

4. Accelerator Implementation

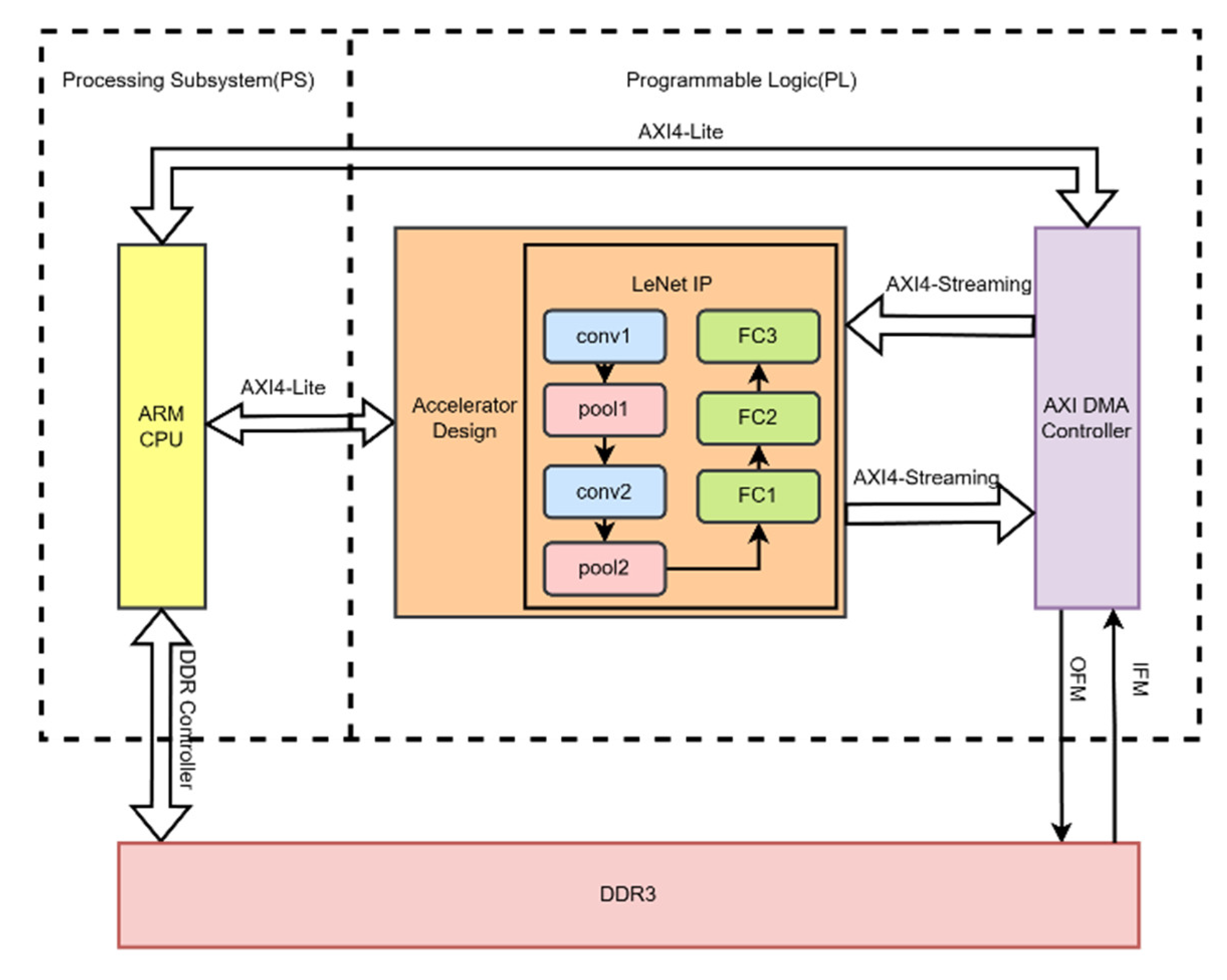

4.1. Hardware Accelerator Architecture

4.2. UNROLL Accelerator

4.3. PIPELINE Accelerator

4.4. Fixed-Point Parameters

5. Experimental Evaluation

6. Conclusions

- Network training currently runs on a CPU platform while inference is accelerated on an FPGA platform; a more integrated system would remove this separation. Future work should focus on accelerating the backpropagation process to make the system more complete.

- Most FPGA platforms operate at frequencies ranging from 100 to 300 MHz. In this design, a frequency of 100 MHz was used to ensure correct data transfer. Optimizing the data transfer could allow a higher clock frequency.

- Multi-FPGA cooperation, in which several FPGAs collaborate on one task, was not studied extensively in this work. Many planning and allocation issues remain to be addressed in this direction, making it a potential area for future research.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Layer Type | Input | Output | Kernel | Stride |
|---|---|---|---|---|
| Conv | 28 × 28 × 1 | 24 × 24 × 6 | 5 × 5 | 1 |
| Pool | 24 × 24 × 6 | 12 × 12 × 6 | 2 × 2 | 2 |
| Conv | 12 × 12 × 6 | 8 × 8 × 16 | 5 × 5 | 1 |
| Pool | 8 × 8 × 16 | 4 × 4 × 16 | 2 × 2 | 2 |
| FC | 1 × 256 | 1 × 120 | 256 × 120 | – |
| FC | 1 × 120 | 1 × 84 | 120 × 84 | – |
| FC | 1 × 84 | 1 × 10 | 84 × 10 | – |
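
The feature-map sizes in the table follow from the usual relation output = (input − kernel) / stride + 1 for a window applied without padding. The absence of padding is an inference from the 28 to 24 reduction in the first row rather than something stated explicitly. A minimal sketch that replays the table, with `Shape`, `out_size`, `conv`, `pool`, and `flatten` as hypothetical helper names:

```cpp
#include <cassert>

// Height, width and channel count of a feature map.
struct Shape {
    int h;
    int w;
    int c;
};

// Output side length of a sliding window without padding.
int out_size(int in, int kernel, int stride) {
    assert(stride > 0 && in >= kernel);
    return (in - kernel) / stride + 1;
}

// Convolution changes the spatial size and sets the channel count.
Shape conv(Shape in, int kernel, int stride, int out_channels) {
    return Shape{out_size(in.h, kernel, stride), out_size(in.w, kernel, stride), out_channels};
}

// Pooling changes the spatial size and keeps the channel count.
Shape pool(Shape in, int kernel, int stride) {
    return Shape{out_size(in.h, kernel, stride), out_size(in.w, kernel, stride), in.c};
}

// Number of values handed to the first fully connected layer.
int flatten(Shape s) {
    return s.h * s.w * s.c;
}
```

Starting from 28 × 28 × 1 and applying the four Conv and Pool rows in order ends at 4 × 4 × 16, and `flatten` of that shape gives the 256 inputs listed for the first FC layer.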

| Row | Code |
|---|---|
| 1 | `for(int i = 0; i < 24; i++){` |
| 2 | `for(int j = 0; j < 24; j++){` |
| 3 | `for(int y = 0; y < 5; y++){` |
| 4 | `for(int x = 0; x < 5; x++){` |
| 5 | `#pragma HLS PIPELINE` |
| 6 | `for(int k = 0; k < 6; k++){` |
| 7 | `out[i][j][k] += in[i + y][j + x] * Kw[k][y][x];` |
| 8 | `}}}}}` |
| 9 | `for(int i = 0; i < 24; i++){` |
| 10 | `for(int j = 0; j < 24; j++){` |
| 11 | `#pragma HLS PIPELINE` |
| 12 | `for(int k = 0; k < 6; k++){` |
| 13 | `out[i][j][k] += Kb[k];` |
| 14 | `}}}` |
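
The listing accumulates into `out` with `+=`, so it presumably relies on `out` being zero before the first loop nest runs. For C simulation on a host, a self-contained version with that initialization made explicit might look as follows. The function name `conv1`, the constant names, and the use of `float` are assumptions; the loop order and pragma placement are copied from the listing. An ordinary C++ compiler ignores the `#pragma HLS` lines, possibly with a warning.

```cpp
#include <cassert>

constexpr int IN_SIZE = 28;   // input feature map side
constexpr int K_SIZE = 5;     // kernel side
constexpr int OUT_SIZE = 24;  // output side: 28 - 5 + 1
constexpr int OUT_CH = 6;     // output channels

// Hypothetical top-level function for the first convolution layer.
void conv1(float in[IN_SIZE][IN_SIZE],
           float Kw[OUT_CH][K_SIZE][K_SIZE],
           float Kb[OUT_CH],
           float out[OUT_SIZE][OUT_SIZE][OUT_CH]) {
    assert(in && Kw && Kb && out);

    // Clear the accumulators so the += below starts from zero (added in this sketch).
    for (int i = 0; i < OUT_SIZE; i++)
        for (int j = 0; j < OUT_SIZE; j++)
            for (int k = 0; k < OUT_CH; k++)
                out[i][j][k] = 0.0f;

    // Multiply-accumulate loops, same order as rows 1 to 8 of the listing.
    for (int i = 0; i < OUT_SIZE; i++) {
        for (int j = 0; j < OUT_SIZE; j++) {
            for (int y = 0; y < K_SIZE; y++) {
                for (int x = 0; x < K_SIZE; x++) {
#pragma HLS PIPELINE
                    for (int k = 0; k < OUT_CH; k++) {
                        out[i][j][k] += in[i + y][j + x] * Kw[k][y][x];
                    }
                }
            }
        }
    }

    // Bias addition, same as rows 9 to 14 of the listing.
    for (int i = 0; i < OUT_SIZE; i++) {
        for (int j = 0; j < OUT_SIZE; j++) {
#pragma HLS PIPELINE
            for (int k = 0; k < OUT_CH; k++) {
                out[i][j][k] += Kb[k];
            }
        }
    }
}
```

With an all-ones input and constant kernels, every output of a channel equals 25 times that channel's kernel value plus its bias, which gives a quick sanity check before synthesis.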

| Row | Code |
|---|---|
| 1 | `for(int i = 0; i < 120; i++){` |
| 2 | `sum = 0;` |
| 3 | `for(int j_set = 0; j_set < 16; j_set++){` |
| 4 | `#pragma HLS PIPELINE` |
| 5 | `for(int j = 0; j < 16; j++){` |
| 6 | `tmp[j] = in[j + j_set * 16] * fc1_w[i][j + j_set * 16];` |
| 7 | `}` |
| 8 | `for(int k = 0; k < 8; k++){` |
| 9 | `add0[k] = tmp[k * 2] + tmp[k * 2 + 1];` |
| 10 | `}` |
| 11 | `for(int k = 0; k < 4; k++){` |
| 12 | `add1[k] = add0[k * 2] + add0[k * 2 + 1];` |
| 13 | `}` |
| 14 | `for(int k = 0; k < 2; k++){` |
| 15 | `add2[k] = add1[k * 2] + add1[k * 2 + 1];` |
| 16 | `}` |
| 17 | `sum += add2[0] + add2[1];` |
| 18 | `}` |
| 19 | `out[i] = sum;` |
| 20 | `}` |
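
The listing reduces 16 products per iteration with a balanced adder tree of 8, 4, 2, and 1 adders instead of a 16-step serial chain. A host-side sketch that checks the tree against a plain sequential dot product is given here. Integer types are an assumption chosen so that both orderings give bit-identical sums; with floating-point data the two orders can differ in the last bits. The function names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

constexpr int N_IN = 256;   // inputs to the first fully connected layer
constexpr int N_OUT = 120;  // outputs of the first fully connected layer
constexpr int LANES = 16;   // products reduced per adder-tree pass
static_assert(N_IN % LANES == 0, "input length must split into whole groups");

// Balanced reduction of 16 values in four levels, as in rows 8 to 17 of the listing.
int32_t adder_tree16(const int32_t tmp[LANES]) {
    assert(tmp != nullptr);
    int32_t add0[8], add1[4], add2[2];
    for (int k = 0; k < 8; k++) add0[k] = tmp[k * 2] + tmp[k * 2 + 1];
    for (int k = 0; k < 4; k++) add1[k] = add0[k * 2] + add0[k * 2 + 1];
    for (int k = 0; k < 2; k++) add2[k] = add1[k * 2] + add1[k * 2 + 1];
    return add2[0] + add2[1];
}

// Fully connected layer using the adder tree on groups of 16 products.
void fc1_tree(const int16_t in[N_IN], int16_t fc1_w[N_OUT][N_IN], int32_t out[N_OUT]) {
    for (int i = 0; i < N_OUT; i++) {
        int32_t sum = 0;
        for (int j_set = 0; j_set < N_IN / LANES; j_set++) {
            int32_t tmp[LANES];
            for (int j = 0; j < LANES; j++) {
                const int idx = j + j_set * LANES;
                tmp[j] = static_cast<int32_t>(in[idx]) * fc1_w[i][idx];
            }
            sum += adder_tree16(tmp);
        }
        out[i] = sum;
    }
}

// Baseline: one long serial accumulation per output.
void fc1_sequential(const int16_t in[N_IN], int16_t fc1_w[N_OUT][N_IN], int32_t out[N_OUT]) {
    for (int i = 0; i < N_OUT; i++) {
        int32_t sum = 0;
        for (int j = 0; j < N_IN; j++) sum += static_cast<int32_t>(in[j]) * fc1_w[i][j];
        out[i] = sum;
    }
}
```

The tree shortens the dependency chain from 15 additions to 4 levels per group, which is what lets a pipelined implementation accept a new group of 16 products without waiting for a serial sum to finish.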

| FPGA Resource | BRAM | DSP | FF | LUT |
|---|---|---|---|---|
| Available quantity | 1090 | 900 | 437,200 | 218,600 |
| Defined as floating point | 260 | 1282 | 134,701 | 202,357 |
| Defined as integer | 0 | 256 | 17,049 | 5,523 |
| Defined as fixed point | 0 | 1024 | 86,264 | 114,800 |

| Design | Unoptimized | UNROLL | PIPELINE |
|---|---|---|---|
| BRAM | 78 | 98 | 102 |
| DSP | 10 | 112 | 177 |
| FF | 3,461 | 17,374 | 22,762 |
| LUT | 6,569 | 27,371 | 39,443 |
| Power | 1.874 W | 2.029 W | 2.193 W |
| Time | 20.37 ms | 16.02 ms | 1.07 ms |
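
The Power and Time rows can be combined into a speedup and an energy-per-inference figure. The sketch below does that arithmetic on the table values. Treating the Power row as the average draw over the reported inference time is an assumption, not something the table states, and the `Design` struct and function names are hypothetical.

```cpp
#include <cassert>

// One column of the comparison table.
struct Design {
    const char* name;
    double power_w;  // reported power in watts
    double time_ms;  // reported inference time in milliseconds
};

// Table values, copied as reported.
const Design UNOPTIMIZED{"Unoptimized", 1.874, 20.37};
const Design UNROLL{"UNROLL", 2.029, 16.02};
const Design PIPELINE{"PIPELINE", 2.193, 1.07};

// Speedup of design d relative to a baseline.
double speedup(const Design& base, const Design& d) {
    assert(d.time_ms > 0.0);
    return base.time_ms / d.time_ms;
}

// Energy per inference in millijoules (W * ms = mJ), assuming the power
// figure is the average draw during the measured time.
double energy_mj(const Design& d) {
    return d.power_w * d.time_ms;
}
```

Under that assumption the PIPELINE design is roughly 19 times faster than the unoptimized one and uses about 2.3 mJ per inference against about 38 mJ, so its slightly higher power is more than offset by the shorter run time.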

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Liang, Y.; Tan, J.; Xie, Z.; Chen, Z.; Lin, D.; Yang, Z. Research on Convolutional Neural Network Inference Acceleration and Performance Optimization for Edge Intelligence. Sensors 2024, 24, 240. https://doi.org/10.3390/s24010240
