BATMAN: A Brain-like Approach for Tracking Maritime Activity and Nuance

Abstract

1. Introduction

2. Materials and Methods

2.1. Datasets

2.1.1. Imagery

2.1.2. AIS Data

2.1.3. Static Contextual Data

2.1.4. Dynamic Contextual Data

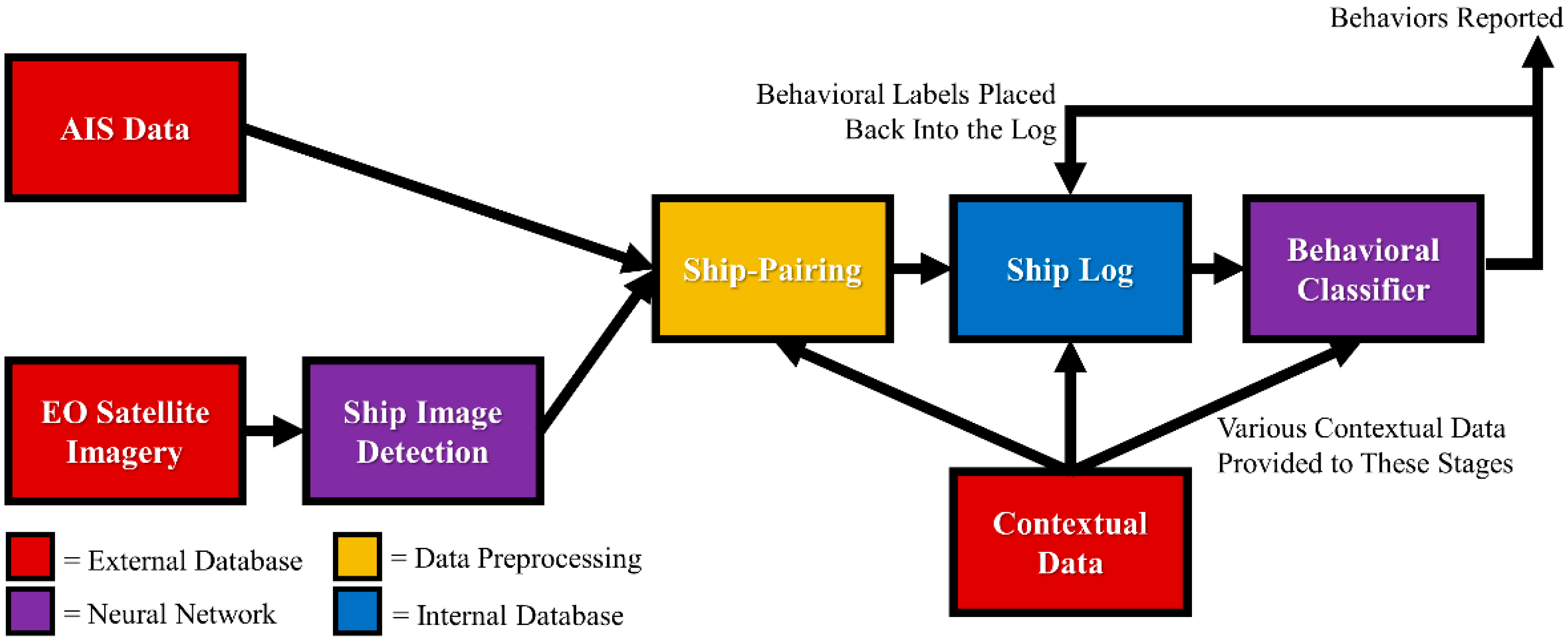

2.2. BATMAN Pipeline

2.2.1. Objectives and Structure

2.2.2. Data Format

2.2.3. The Ship Log

2.2.4. Pipeline Data Flow

2.3. Algorithms

2.3.1. YOLO and Other Methods for Ship Detection

2.3.2. Ship-Pairing Algorithm

2.3.3. AIS Data Feature Engineering

2.3.4. Behavioral Classifier Data Ingestion

2.3.5. Behavioral Classifier Neural Network Approaches

2.3.6. Behavioral Classifier Traditional Approaches

2.3.7. Generating Behavioral Truth Labels for Training

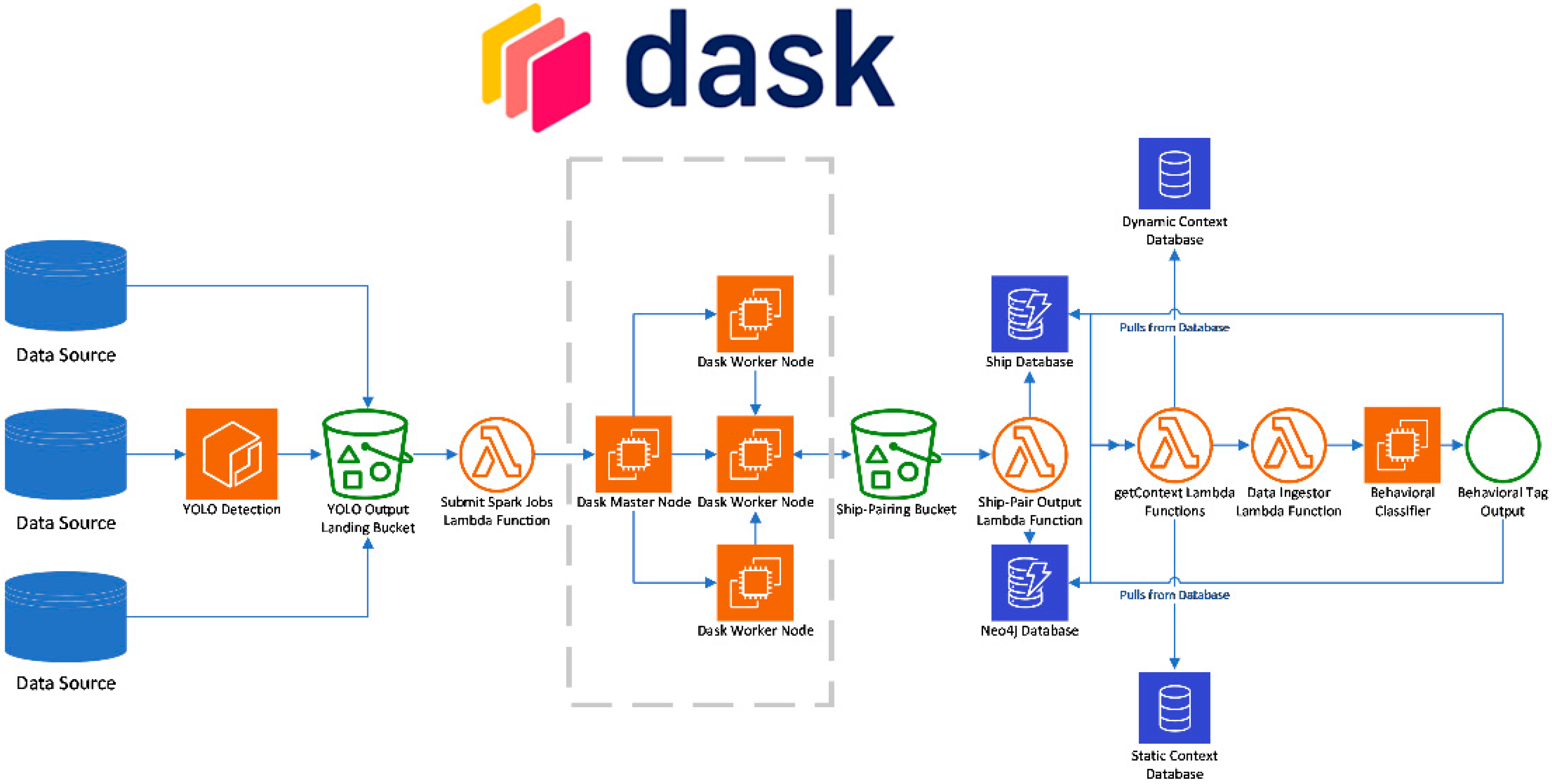

2.4. Deployment Platforms

2.4.1. NVIDIA DGX-1 Training

2.4.2. AWS Deployment

3. Results

3.1. Ship Detection and Classification

3.2. Ship-Pairing Algorithm

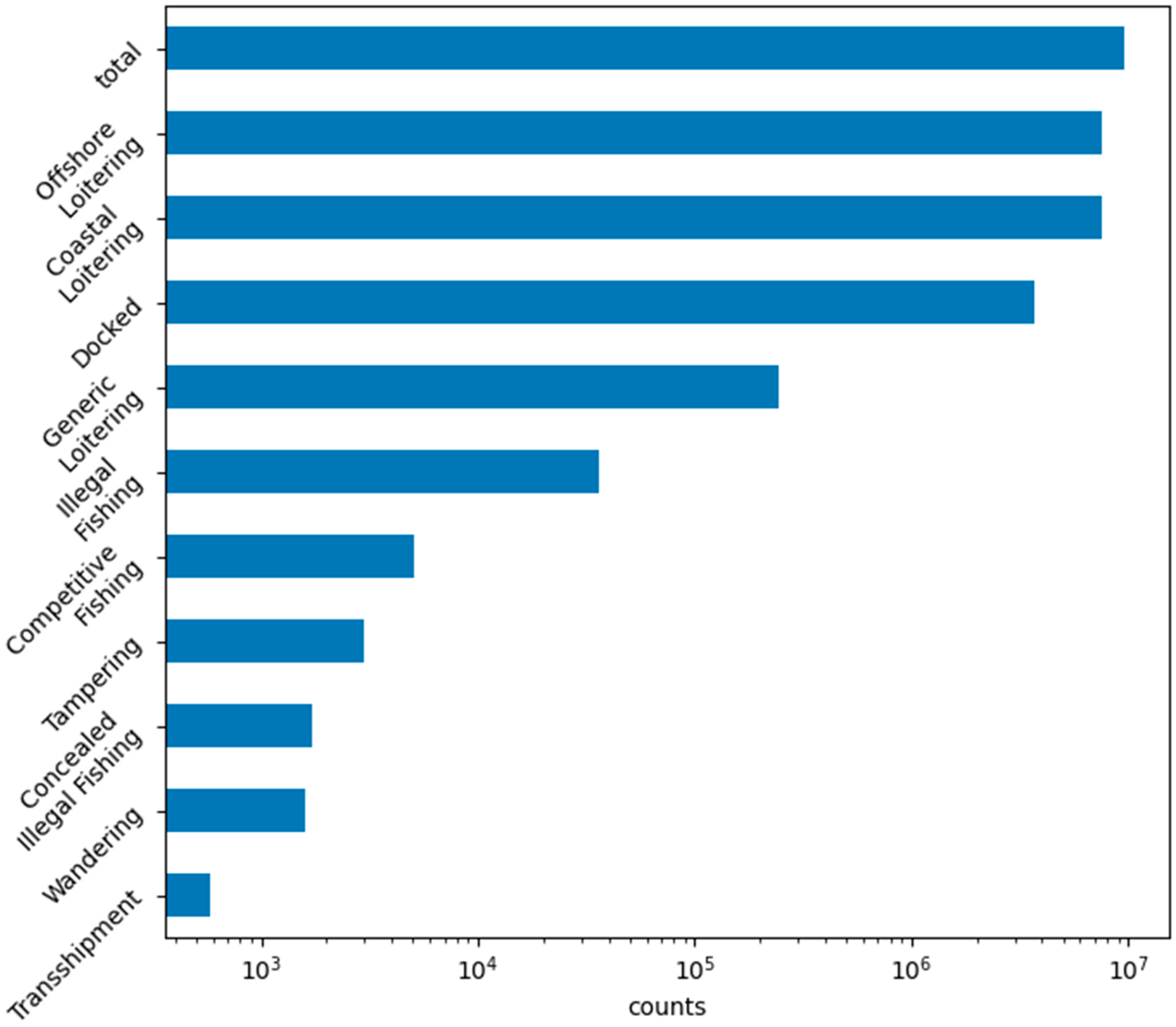

3.3. Truth Label Generation

3.4. Behavioral Classifier

4. Discussion

4.1. Effects of False Negatives and False Positives

4.2. Ship-Pairing Dynamics

4.3. Behavioral Classification Dynamics

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Boylan, B.M. Increased Maritime Traffic in the Arctic: Implications for Governance of Arctic Sea Routes. Mar. Policy 2021, 131, 104566.
2. Warren, C.; Steenbergen, D.J. Fisheries Decline, Local Livelihoods and Conflicted Governance: An Indonesian Case. Ocean Coast. Manag. 2021, 202, 105498.
3. Gladkikh, T.; Séraphin, H.; Gladkikh, V.; Vo-Thanh, T. Introduction: Luxury yachting – A growing but largely unknown industry. In Luxury Yachting; Gladkikh, T., Séraphin, H., Gladkikh, V., Vo-Thanh, T., Eds.; Springer International Publishing: Cham, Switzerland, 2022; pp. 1–8. ISBN 978-3-030-86405-7.
4. Mir Mohamad Tabar, S.A.; Petrossian, G.A.; Mazlom Khorasani, M.; Noghani, M. Market Demand, Routine Activity, and Illegal Fishing: An Empirical Test of Routine Activity Theory in Iran. Deviant Behav. 2021, 42, 762–776.
5. Regan, J. Varied Incident Rates of Global Maritime Piracy: Toward a Model for State Policy Change. Int. Crim. Justice Rev. 2022, 32, 374–387.
6. Oxford Analytica. Russia and Europe Both Stand to Lose in Gas War; Oxford Analytica: Oxford, UK, 2022.
7. Dark Shipping Detection. Spire Maritime. Available online: https://insights.spire.com/maritime/dark-shipping-detection (accessed on 22 November 2022).
8. Improve Maritime Domain Awareness with SEAker – HawkEye 360; HawkEye 360: Herndon, VA, USA, 2022.
9. Kiyofuji, H.; Saitoh, S. Use of Nighttime Visible Images to Detect Japanese Common Squid Todarodes Pacificus Fishing Areas and Potential Migration Routes in the Sea of Japan. Mar. Ecol. Prog. Ser. 2004, 276, 173–186.
10. Miler, R.K.; Kościelski, M.; Zieliński, M. European Maritime Safety Agency (EMSA) in the Way to Enhance Safety at EU Seas. Zesz. Nauk. Akad. Mar. Wojennej 2008, 2, 105–114.
11. Nguyen, D.; Simonin, M.; Hajduch, G.; Vadaine, R.; Tedeschi, C.; Fablet, R. Detection of abnormal vessel behaviours from AIS data using GeoTrackNet: From the laboratory to the ocean. In Proceedings of the 2020 21st IEEE International Conference on Mobile Data Management (MDM), Versailles, France, 30 June–3 July 2020; pp. 264–268.
12. Malarky, L.; Lowell, B. Avoiding Detection: Global Case Studies of Possible AIS Avoidance; Oceana: Washington, DC, USA, 2018.
13. Nguyen, D.; Vadaine, R.; Hajduch, G.; Garello, R.; Fablet, R. A multi-task deep learning architecture for maritime surveillance using AIS data streams. In Proceedings of the 2018 IEEE 5th International Conference on Data Science and Advanced Analytics (DSAA), Turin, Italy, 1–4 October 2018; pp. 331–340.
14. Lane, R.O.; Nevell, D.A.; Hayward, S.D.; Beaney, T.W. Maritime anomaly detection and threat assessment. In Proceedings of the 2010 13th International Conference on Information Fusion, Edinburgh, UK, 26–29 July 2010; pp. 1–8.
15. Eriksen, T.; Skauen, A.N.; Narheim, B.; Helleren, O.; Olsen, O.; Olsen, R.B. Tracking ship traffic with space-based AIS: Experience gained in first months of operations. In Proceedings of the 2010 International WaterSide Security Conference, Carrara, Italy, 3–5 November 2010; pp. 1–8.
16. Rodger, M.; Guida, R. Classification-Aided SAR and AIS Data Fusion for Space-Based Maritime Surveillance. Remote Sens. 2020, 13, 104.
17. Xiu, S.; Wen, Y.; Yuan, H.; Xiao, C.; Zhan, W.; Zou, X.; Zhou, C.; Chhattan Shah, S. A Multi-Feature and Multi-Level Matching Algorithm Using Aerial Image and AIS for Vessel Identification. Sensors 2019, 19, 1317.
18. Liu, Y.; Yao, L.; Xiong, W.; Zhou, Z. GF-4 satellite and automatic identification system data fusion for ship tracking. IEEE Geosci. Remote Sens. Lett. 2019, 16, 281–285.
19. Rodger, M.; Guida, R. Mapping dark shipping zones using Multi-Temporal SAR and AIS data for Maritime Domain Awareness. In Proceedings of the IGARSS 2022 – 2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 17–22 July 2022; pp. 3672–3675.
20. Chaturvedi, S.K.; Yang, C.-S.; Ouchi, K.; Shanmugam, P. Ship Recognition by Integration of SAR and AIS. J. Navig. 2012, 65, 323–337.
21. Zhang, Z.; Zhang, L.; Wang, Y.; Feng, P.; He, R. ShipRSImageNet: A Large-Scale Fine-Grained Dataset for Ship Detection in High-Resolution Optical Remote Sensing Images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 8458–8472.
22. Liu, Z.; Yuan, L.; Weng, L.; Yang, Y. A high resolution optical satellite image dataset for ship recognition and some new baselines. In Proceedings of the 6th International Conference on Pattern Recognition Applications and Methods – ICPRAM, Porto, Portugal, 24–26 February 2017; pp. 324–331.
23. Shao, Z.; Wu, W.; Wang, Z.; Du, W.; Li, C. SeaShips: A Large-Scale Precisely Annotated Dataset for Ship Detection. IEEE Trans. Multimed. 2018, 20, 2593–2604.
24. Rekavandi, A.M.; Xu, L.; Boussaid, F.; Seghouane, A.-K.; Hoefs, S.; Bennamoun, M. A Guide to Image and Video Based Small Object Detection Using Deep Learning: Case Study of Maritime Surveillance. arXiv 2022, arXiv:2207.12926.
25. International Maritime Association. International Shipping Facts and Figures–Information Resources on Trade, Safety, Security, and the Environment; International Maritime Association: London, UK, 2011.
26. Liu, Y.; Sun, P.; Wergeles, N.; Shang, Y. A Survey and Performance Evaluation of Deep Learning Methods for Small Object Detection. Expert Syst. Appl. 2021, 172, 114602.
27. Kisantal, M.; Wojna, Z.; Murawski, J.; Naruniec, J.; Cho, K. Augmentation for Small Object Detection. arXiv 2019, arXiv:1902.07296.
28. Google Earth. Available online: https://earth.google.com/web/ (accessed on 22 November 2022).
29. MarineCadastre. Available online: https://marinecadastre.gov/accessais/ (accessed on 14 September 2022).
30. SunCalc. Available online: https://www.suncalc.org/ (accessed on 14 September 2022).
31. United States Coast Guard Vessel Traffic Data. Available online: https://marinecadastre.gov/ais/#:~:text=Vessel%20traffic%20data%2C%20or%20Automatic,international%20waters%20in%20real%20time (accessed on 8 July 2022).
32. Coast Guard Issues Warning to Mariners Turning off AIS 2921; National Fisherman: Portland, ME, USA, 2021.
33. Submarine Cable Map 2022. Available online: https://submarine-cable-map-2022.telegeography.com/ (accessed on 12 September 2022).
34. Marine Protected Areas (MPAs) Fishery Management Areas Map & GIS Data; NOAA: Silver Spring, MD, USA, 2019.
35. Protected Planet – Thematic Areas; Protected Planet; UN: Gland, Switzerland, 2023.
36. Claus, S.; De Hauwere, N.; Vanhoorne, B.; Deckers, P.; Dias, F.S.; Hernandez, F.; Mees, J. Marine Regions: Towards a Global Standard for Georeferenced Marine Names and Boundaries, Marine Geodesy. Mar. Reg. 2014, 37, 99–125.
37. Global Energy Monitor. Global Energy Monitor. Available online: https://globalenergymonitor.org/ (accessed on 6 June 2022).
38. Status of Conventions; International Maritime Organization: London, UK, 2022; Available online: https://www.imo.org/en/About/Conventions/Pages/StatusOfConventions.aspx (accessed on 8 June 2022).
39. Paris MoU. Paris MoU. Available online: https://www.parismou.org/ (accessed on 8 June 2022).
40. Tokyo MoU. Tokyo MoU. Available online: https://www.tokyo-mou.org/ (accessed on 8 June 2022).
41. World Weather Online API. World Weather Online. Available online: https://www.worldweatheronline.com/ (accessed on 4 October 2022).
42. Air Pollution API. Open Weather Map. Available online: https://openweathermap.org/api/air-pollution (accessed on 26 September 2022).
43. IMO 2020 – Cutting Sulphur Oxide Emissions; International Maritime Organization: London, UK, 2020.
44. Hoeser, T.; Bachofer, F.; Kuenzer, C. Object Detection and Image Segmentation with Deep Learning on Earth Observation Data: A Review – Part II: Applications. Remote Sens. 2020, 12, 3053.
45. Hoeser, T.; Kuenzer, C. Object Detection and Image Segmentation with Deep Learning on Earth Observation Data: A Review-Part I: Evolution and Recent Trends. Remote Sens. 2020, 12, 1667.
46. Voinov, S.; Krause, D.; Schwarz, E. Towards automated vessel detection and type recognition from VHR optical satellite images. In Proceedings of the IGARSS 2018 – 2018 IEEE International Geoscience and Remote Sensing Symposium, Valencia, Spain, 22–27 July 2018; pp. 4823–4826.
47. Chen, C.; He, C.; Hu, C.; Pei, H.; Jiao, L. A Deep Neural Network Based on an Attention Mechanism for SAR Ship Detection in Multiscale and Complex Scenarios. IEEE Access 2019, 7, 104848–104863.
48. Fan, W.; Zhou, F.; Bai, X.; Tao, M.; Tian, T. Ship Detection Using Deep Convolutional Neural Networks for PolSAR Images. Remote Sens. 2019, 11, 2862.
49. Gao, L.; He, Y.; Sun, X.; Jia, X.; Zhang, B. Incorporating Negative Sample Training for Ship Detection Based on Deep Learning. Sensors 2019, 19, 684.
50. He, Y.; Sun, X.; Gao, L.; Zhang, B. Ship detection without sea-land segmentation for large-scale high-resolution optical satellite images. In Proceedings of the IGARSS 2018 – 2018 IEEE International Geoscience and Remote Sensing Symposium, Valencia, Spain, 22–27 July 2018; pp. 717–720.
51. You, Y.; Cao, J.; Zhang, Y.; Liu, F.; Zhou, W. Nearshore Ship Detection on High-Resolution Remote Sensing Image via Scene-Mask R-CNN. IEEE Access 2019, 7, 128431–128444.
52. You, Y.; Li, Z.; Ran, B.; Cao, J.; Lv, S.; Liu, F. Broad Area Target Search System for Ship Detection via Deep Convolutional Neural Network. Remote Sens. 2019, 11, 1965.
53. Zhang, S.; Wu, R.; Xu, K.; Wang, J.; Sun, W. R-CNN-Based Ship Detection from High Resolution Remote Sensing Imagery. Remote Sens. 2019, 11, 631.
54. Zhang, Y.; Zhang, Y.; Shi, Z.; Zhang, J.; Wei, M. Rotationally Unconstrained Region Proposals for Ship Target Segmentation in Optical Remote Sensing. IEEE Access 2019, 7, 87049–87058.
55. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You Only Look Once: Unified, Real-Time Object Detection. arXiv 2016, arXiv:1506.02640.
56. Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. arXiv 2018, arXiv:1804.02767.
57. Redmon, J.; Farhadi, A. YOLO9000: Better, Faster, Stronger; IEEE: Piscataway, NJ, USA, 2017; pp. 7263–7271.
58. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.-Y.; Berg, A.C. SSD: Single Shot MultiBox Detector. In Computer Vision – ECCV 2016; Leibe, B., Matas, J., Sebe, N., Welling, M., Eds.; Lecture Notes in Computer Science; Springer International Publishing: Cham, Switzerland, 2016; Volume 9905, pp. 21–37. ISBN 978-3-319-46447-3.
59. Lin, T.-Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision; IEEE: Piscataway, NJ, USA, 2018.
60. Chen, L.; Shi, W.; Deng, D. Improved YOLOv3 Based on Attention Mechanism for Fast and Accurate Ship Detection in Optical Remote Sensing Images. Remote Sens. 2021, 13, 660.
61. Hu, J.; Zhi, X.; Shi, T.; Zhang, W.; Cui, Y.; Zhao, S. PAG-YOLO: A Portable Attention-Guided YOLO Network for Small Ship Detection. Remote Sens. 2021, 13, 3059.
62. Jiang, J.; Fu, X.; Qin, R.; Wang, X.; Ma, Z. High-Speed Lightweight Ship Detection Algorithm Based on YOLO-V4 for Three-Channels RGB SAR Image. Remote Sens. 2021, 13, 1909.
63. Long, Z.; Suyuan, W.; Zhongma, C.; Jiaqi, F.; Xiaoting, Y.; Wei, D. Lira-YOLO: A Lightweight Model for Ship Detection in Radar Images. J. Syst. Eng. Electron. 2020, 31, 950–956.
64. Tang, G.; Zhuge, Y.; Claramunt, C.; Men, S. N-YOLO: A SAR Ship Detection Using Noise-Classifying and Complete-Target Extraction. Remote Sens. 2021, 13, 871.
65. Wang, W.; Zhang, X.; Sun, W.; Huang, M. A Novel Method of Ship Detection under Cloud Interference for Optical Remote Sensing Images. Remote Sens. 2022, 14, 3731.
66. Xu, Q.; Li, Y.; Shi, Z. LMO-YOLO: A Ship Detection Model for Low-Resolution Optical Satellite Imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2022, 15, 4117–4131.
67. Zhu, H.; Xie, Y.; Huang, H.; Jing, C.; Rong, Y.; Wang, C. DB-YOLO: A Duplicate Bilateral YOLO Network for Multi-Scale Ship Detection in SAR Images. Sensors 2021, 21, 8146.
68. Patel, K.; Bhatt, C.; Mazzeo, P.L. Improved Ship Detection Algorithm from Satellite Images Using YOLOv7 and Graph Neural Network. Algorithms 2022, 15, 473.
69. Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and efficient object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2020.
70. Karaca, A.C. Robust and Fast Ship Detection In SAR Images With Complex Backgrounds Based on EfficientDet Model. In Proceedings of the 2021 5th International Symposium on Multidisciplinary Studies and Innovative Technologies (ISMSIT), Ankara, Turkey, 21–23 October 2021; pp. 334–339.
71. O’Shea, K.; Nash, R. An Introduction to Convolutional Neural Networks. arXiv 2015, arXiv:1511.08458.
72. Arik, S.O.; Pfister, T. TabNet: Attentive Interpretable Tabular Learning. arXiv 2019, arXiv:1908.07442.
73. Padhi, I.; Schiff, Y.; Melnyk, I.; Rigotti, M.; Mroueh, Y.; Dognin, P.; Ross, J.; Nair, R.; Altman, E. Tabular Transformers for Modeling Multivariate Time Series. arXiv 2020, arXiv:2011.01843.
74. Raj, A.; Bosch, J.; Olsson, H.H.; Wang, T.J. Modelling data pipelines. In Proceedings of the 2020 46th Euromicro Conference on Software Engineering and Advanced Applications (SEAA), Portoroz, Slovenia, 26–28 October 2022; IEEE: Piscataway, NJ, USA, 2020; pp. 13–20.
75. Khan, H.M.; Yunze, C. Ship Detection in SAR Image Using YOLOv2. In Proceedings of the 2018 37th Chinese Control Conference (CCC), Wuhan, China, 25–27 July 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 9495–9499.
76. Zhang, T.; Zhang, X.; Li, J.; Xu, X.; Wang, B.; Zhan, X.; Xu, Y.; Ke, X.; Zeng, T.; Su, H.; et al. SAR Ship Detection Dataset (SSDD): Official Release and Comprehensive Data Analysis. Remote Sens. 2021, 13, 3690.
77. Zhang, Y.; Guo, L.; Wang, Z.; Yu, Y.; Liu, X.; Xu, F. Intelligent Ship Detection in Remote Sensing Images Based on Multi-Layer Convolutional Feature Fusion. Remote Sens. 2020, 12, 3316.
78. Gallego, A.-J.; Pertusa, A.; Gil, P. Automatic Ship Classification from Optical Aerial Images with Convolutional Neural Networks. Remote Sens. 2018, 10, 511.
79. Li, K.; Wan, G.; Cheng, G.; Meng, L.; Han, J. Object Detection in Optical Remote Sensing Images: A Survey and a New Benchmark. ISPRS J. Photogramm. Remote Sens. 2020, 159, 296–307.
80. Parisi, G.I.; Kemker, R.; Part, J.L.; Kanan, C.; Wermter, S. Continual Lifelong Learning with Neural Networks: A Review. Neural Netw. 2019, 113, 54–71.

| Geographical (Static) | Atmospheric (Dynamic) | Legal (Static) |
|---|---|---|
| NOAA [34] | World Weather Online [41] | UN Rules [38] |
| Protected Planet [35] | OpenWeatherMap [42] | Port State Control Lists [39,40] |
| MarineRegions [36] | IMO 2020 [43] | |
| Global Energy Monitor [37] | | |
| TeleGeography [33] | | |

| Dataset | Format |
|---|---|
| Marine Cadastre AIS | Compressed ZIP files containing CSV files |
| Google Earth EO | JPEG files and XML files |
| Static contextual | CSV and GeoJSON files |
| Dynamic contextual | JSON formatted API response |
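As a minimal sketch of how the first row of this table could be consumed, the Python below walks every CSV member of a ZIP archive using only the standard library. The helper names `iter_ais_rows` and `make_sample_zip`, the sample file name, and the column names (`MMSI`, `BaseDateTime`, `LAT`, `LON`) are assumptions made for illustration, the sample values are invented, and this is not the BATMAN ingestion code.

```python
import csv
import io
import zipfile


def iter_ais_rows(zip_bytes):
    """Yield one dict per CSV row from every .csv member of a ZIP archive."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        for name in archive.namelist():
            if not name.lower().endswith(".csv"):
                continue  # skip any non-CSV members
            with archive.open(name) as raw:
                # Decode the binary member as text so csv can parse it.
                text = io.TextIOWrapper(raw, encoding="utf-8", newline="")
                yield from csv.DictReader(text)


def make_sample_zip():
    """Build a tiny in-memory archive so the sketch runs without downloads.

    The column names and values are hypothetical placeholders.
    """
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.writestr(
            "sample_ais.csv",
            "MMSI,BaseDateTime,LAT,LON\n"
            "111111111,2022-01-01T00:00:00,32.70,-117.20\n"
            "222222222,2022-01-01T00:01:00,32.71,-117.21\n",
        )
    return buffer.getvalue()


rows = list(iter_ais_rows(make_sample_zip()))
print(len(rows), rows[0]["MMSI"])
```

Streaming rows from the archive avoids unpacking it to disk, which may matter when the archives are large; whether the pipeline does this is not stated here.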

| Behavior | Definition | Additional Conditions |
|---|---|---|
| Wandering | Cargo ships in seldom traveled areas | More than 10 km from coast, >50% waypoints untraveled |
| Transshipment | Pairs of ships at sea loitering in close proximity | More than 10 km from coast, proximity < 20 m |
| Coastal Loitering | Loitering near the coast and away from ports | Less than 10 km from coast |
| Offshore Loitering | Ships loitering out of port away from the coast | Loitering > 10 km from coast |
| Illegal Fishing | Fishing vessels loitering in protected zones | - |
| Concealed Illegal Fishing | Dark fishing vessels loitering in protected zones | - |
| Competitive Fishing | Dark fishing vessels loitering at sea | - |
| Generic Loitering | Ships loitering out of port | Located 5 km or further from port |
| Docked | Ships loitering in port | Within 5 km of port |
| Tampering | Diving or dredging vessels loitering near pipelines/undersea cables | Within 2 km of pipelines/cables |
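The definitions in this table read like rules, so they can be sketched as a small rule set. The Python below is an illustration under stated assumptions, not the labeling code used in the paper: the `Track` record and all of its field names are hypothetical, "away from ports" and "at sea" are both read as 5 km or further from port, Illegal Fishing and Concealed Illegal Fishing are split on a `dark` flag, Wandering is not required to involve loitering, and every matching label is returned because the table gives no precedence among overlapping behaviors.

```python
from dataclasses import dataclass

# Thresholds copied from the "Additional Conditions" column.
COAST_KM = 10.0    # coastal vs. offshore boundary
PORT_KM = 5.0      # docked vs. out-of-port boundary
CABLE_KM = 2.0     # tampering radius around pipelines/cables
PAIR_M = 20.0      # transshipment proximity
UNTRAVELED = 0.5   # wandering: share of untraveled waypoints


@dataclass
class Track:
    """Hypothetical per-vessel summary; every field name is an assumption."""
    vessel_type: str                 # e.g. "cargo", "fishing", "diving", "dredging"
    loitering: bool
    dark: bool                       # True when the vessel is not broadcasting AIS
    km_from_coast: float
    km_from_port: float
    in_protected_zone: bool = False
    km_from_cable: float = float("inf")
    nearest_loiterer_m: float = float("inf")
    untraveled_fraction: float = 0.0


def candidate_labels(t: Track) -> set:
    """Return every label whose tabulated conditions hold (no precedence applied)."""
    offshore = t.km_from_coast > COAST_KM
    out_of_port = t.km_from_port >= PORT_KM
    fishing = t.vessel_type == "fishing"
    labels = set()

    if t.vessel_type == "cargo" and offshore and t.untraveled_fraction > UNTRAVELED:
        labels.add("Wandering")
    if not t.loitering:
        return labels  # all remaining behaviors are loitering variants

    labels.add("Generic Loitering" if out_of_port else "Docked")
    if out_of_port and offshore:
        labels.add("Offshore Loitering")
    if out_of_port and t.km_from_coast < COAST_KM:
        labels.add("Coastal Loitering")
    if offshore and t.nearest_loiterer_m < PAIR_M:
        labels.add("Transshipment")
    if fishing and t.in_protected_zone:
        labels.add("Concealed Illegal Fishing" if t.dark else "Illegal Fishing")
    if fishing and t.dark and out_of_port:
        labels.add("Competitive Fishing")
    if t.vessel_type in {"diving", "dredging"} and t.km_from_cable <= CABLE_KM:
        labels.add("Tampering")
    return labels


# Invented example: a dark fishing vessel loitering offshore in a protected zone.
dark_trawler = Track("fishing", loitering=True, dark=True,
                     km_from_coast=25.0, km_from_port=40.0, in_protected_zone=True)
print(sorted(candidate_labels(dark_trawler)))
```

Returning a set rather than a single label keeps the sketch neutral about how overlapping behaviors are resolved; a real labeler would need an explicit ordering or a multi-label target.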

| Publication | Base Network | Imagery | Dataset | Train Size | Test Size | mAP | Recall | Precision |
|---|---|---|---|---|---|---|---|---|
| Khan and Yunze, 2018 [75] | YOLOv2 | SAR | Sentinel-1 | 6003 | 74 | - | 95.95% | - |
| Long et al., 2020 [63] | YOLOv3 | SAR | SSDD [76] | 878 | 282 | 89.72% | - | - |
| Long et al., 2020 [63] | Tiny-YOLOv3 | SAR | SSDD [76] | 878 | 282 | 88.69% | - | - |
| Zhang et al., 2020 [77] | YOLOv3 | EO + IR | DSDR | 1146 | 738 | - | 84.62% | 78.09% |
| Hu et al., 2021 [61] | YOLOv4 | EO | MASATI [78] | 1675 | 711 | 91.00% | - | - |
| Jiang et al., 2021 [62] | YOLOv4 | SAR | SSDD [76] | 812 | 348 | 96.32% | 95.96% | 96.98% |
| Tang et al., 2021 [64] | YOLOv5 | SAR | GaoFen-3 | ~10,285 | ~1714 | 90.97% | 92.65% | 70.80% |
| Zhu et al., 2021 [67] | YOLOv5 | SAR | SSDD [76] | 812 | 348 | 64.90% | 97.50% | 87.80% |
| Zhu et al., 2021 [67] | YOLOv5 | SAR | HRSID [22] | ~3923 | ~1681 | 72.00% | 94.90% | 72.40% |
| Wang et al., 2022 * [65] | YOLOv5 | EO | DIOR [79] | - | - | - | 68.20% | - |
| Wang et al., 2022 * [65] | YOLOv5 | EO | CDIOR | - | - | - | 81.90% | - |
| Xu et al., 2022 ** [66] | YOLOv4 | EO | WFV *** | - | - | 92.32% | 90.53% | 94.93% |
| Xu et al., 2022 ** [66] | YOLOv4 | EO | PMS *** | - | - | 93.07% | 90.75% | 94.93% |

| Approach | Tm (m) | Tt (s) | t (s) | sp | Im (m) | Im/Tm | n | rt |
|---|---|---|---|---|---|---|---|---|
| Extensive (Pmax = $10^6$) | $10^4$ | $10^4$ | 439.81 | 469 | 1342.55 | 0.13 | 3493.35 | 7.94 |
| | $10^4$ | $10^3$ | 460.25 | 452 | 1368.31 | 0.14 | 3303.35 | 7.18 |
| | $10^4$ | $10^2$ | 458.96 | 441 | 1485.64 | 0.15 | 2968.42 | 6.47 |
| | $10^3$ | $10^4$ | 414.34 | 302 | 366.59 | 0.37 | 823.81 | 1.99 |
| | $10^3$ | $10^3$ | 426.71 | 289 | 373.24 | 0.37 | 774.30 | 1.81 |
| | $10^3$ | $10^2$ | 433.04 | 273 | 390.81 | 0.39 | 698.55 | 1.61 |
| | $10^2$ | $10^4$ | 410.65 | 70 | 47.31 | 0.47 | 147.96 | 0.36 |
| | $10^2$ | $10^3$ | 423.82 | 63 | 49.02 | 0.49 | 128.52 | 0.30 |
| | $10^2$ | $10^2$ | 424.94 | 55 | 51.60 | 0.52 | 106.59 | 0.25 |
| Greedy (Pmax = 1) | $10^4$ | $10^4$ | 405.53 | 469 | 1352.15 | 0.14 | 3468.55 | 8.55 |
| | $10^4$ | $10^3$ | 437.46 | 452 | 1376.33 | 0.14 | 3284.10 | 7.51 |
| | $10^4$ | $10^2$ | 419.27 | 441 | 1494.92 | 0.15 | 2949.99 | 7.04 |
| | $10^3$ | $10^4$ | 405.80 | 293 | 342.49 | 0.34 | 855.50 | 2.11 |
| | $10^3$ | $10^3$ | 412.65 | 280 | 348.12 | 0.35 | 804.32 | 1.95 |
| | $10^3$ | $10^2$ | 425.27 | 267 | 375.44 | 0.38 | 711.17 | 1.67 |
| | $10^2$ | $10^4$ | 442.90 | 69 | 46.29 | 0.46 | 149.06 | 0.34 |
| | $10^2$ | $10^3$ | 410.19 | 62 | 47.91 | 0.48 | 129.41 | 0.32 |
| | $10^2$ | $10^2$ | 405.61 | 54 | 50.37 | 0.50 | 107.21 | 0.26 |

| Algorithm | Recall | Precision | F1 Mean |
|---|---|---|---|
| CART | 55.2% | 64.5% | 57.6% |
| ERT | 61.5% | 76.0% | 65.9% |
| GBT | 61.2% | 73.1% | 65.2% |
| Dense Network | 51.5% | 61.9% | 55.2% |
| Convolutional Network | 44.6% | 62.7% | 48.0% |
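In every row of this table the F1 column is lower than the harmonic mean of the listed recall and precision (for CART, 55.2% and 64.5% combine to about 59.5%, not 57.6%). One reading, offered as an assumption rather than something the table states, is that the column averages per-class F1 scores. The Python sketch below shows how the two calculations can differ; the per-class precision and recall pairs are invented for illustration and are not results from the paper.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def macro_f1(per_class):
    """Average the F1 score computed separately for each class."""
    return sum(f1(p, r) for p, r in per_class) / len(per_class)


def f1_of_macro_averages(per_class):
    """Average precision and recall across classes first, then take F1."""
    n = len(per_class)
    mean_precision = sum(p for p, _ in per_class) / n
    mean_recall = sum(r for _, r in per_class) / n
    return f1(mean_precision, mean_recall)


# Hypothetical (precision, recall) pairs for three classes; not from the paper.
per_class = [(0.9, 0.8), (0.6, 0.2), (0.5, 0.7)]

print(f"mean of per-class F1:       {macro_f1(per_class):.3f}")
print(f"F1 of averaged P and R:     {f1_of_macro_averages(per_class):.3f}")
print(f"CART row, harmonic mean:    {f1(0.645, 0.552):.3f}")
```

When classes differ widely in precision and recall, averaging per-class F1 gives a lower figure than combining the averaged precision and recall, which matches the direction of the gap in the table.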

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Jones, A.; Koehler, S.; Jerge, M.; Graves, M.; King, B.; Dalrymple, R.; Freese, C.; Von Albade, J. BATMAN: A Brain-like Approach for Tracking Maritime Activity and Nuance. Sensors 2023, 23, 2424. https://doi.org/10.3390/s23052424