k-Tournament Grasshopper Extreme Learner for FMG-Based Gesture Recognition

Abstract

1. Introduction

2. Related Work

2.1. Pruning of Extreme Learning Machine

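Algorithm 1: Pseudocode of an extreme learning machine [2].

1. Given a training set $\{(\mathbf{x}_i, \mathbf{t}_i)\}_{i=1}^{N}$ with $\mathbf{x}_i \in \mathbb{R}^n$ and $\mathbf{t}_i \in \mathbb{R}^m$, an activation function $g(x)$, and the number of hidden nodes $\tilde{N}$;
2. Assign random input weights $\mathbf{w}_i$ and biases $b_i$ for $i = 1, \dots, \tilde{N}$;
3. Calculate the hidden layer output matrix $\mathbf{H}$, whose entries are $H_{ji} = g(\mathbf{w}_i \cdot \mathbf{x}_j + b_i)$;
4. Calculate the output weight matrix $\boldsymbol{\beta} = \mathbf{H}^{\dagger}\mathbf{T}$, where $\mathbf{H}^{\dagger}$ is the Moore–Penrose generalized inverse of matrix $\mathbf{H}$ and $\mathbf{T} = [\mathbf{t}_1, \dots, \mathbf{t}_N]^{T}$.

Output: the output weight matrix $\boldsymbol{\beta}$.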
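The four steps of Algorithm 1 can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the sigmoid activation, the uniform [−1, 1] range for the random weights and biases, and the function names `elm_train` and `elm_predict` are assumptions chosen for the example.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def elm_train(X, T, n_hidden, rng=None):
    """Basic ELM: random hidden layer, least-squares output weights."""
    rng = np.random.default_rng(rng)
    n_features = X.shape[1]
    # Step 2: random input weights w_i and biases b_i (never trained afterwards)
    W = rng.uniform(-1.0, 1.0, size=(n_features, n_hidden))
    b = rng.uniform(-1.0, 1.0, size=n_hidden)
    # Step 3: hidden layer output matrix H, shape (N, n_hidden)
    H = sigmoid(X @ W + b)
    # Step 4: beta = pinv(H) @ T, using the Moore-Penrose generalized inverse
    beta = np.linalg.pinv(H) @ T
    return W, b, beta


def elm_predict(X, W, b, beta):
    # Same random hidden layer, then the learned linear read-out
    return sigmoid(X @ W + b) @ beta
```

For classification, `T` holds one-hot targets and the predicted class is the `argmax` of each output row. The only trained quantity is `beta`, which is why ELM training reduces to a single pseudo-inverse.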

2.2. Sensors for FMG

2.3. Hand Gesture Recognition Based on FMG Sensors

3. Proposed k-Tournament Grasshopper Extreme Learner

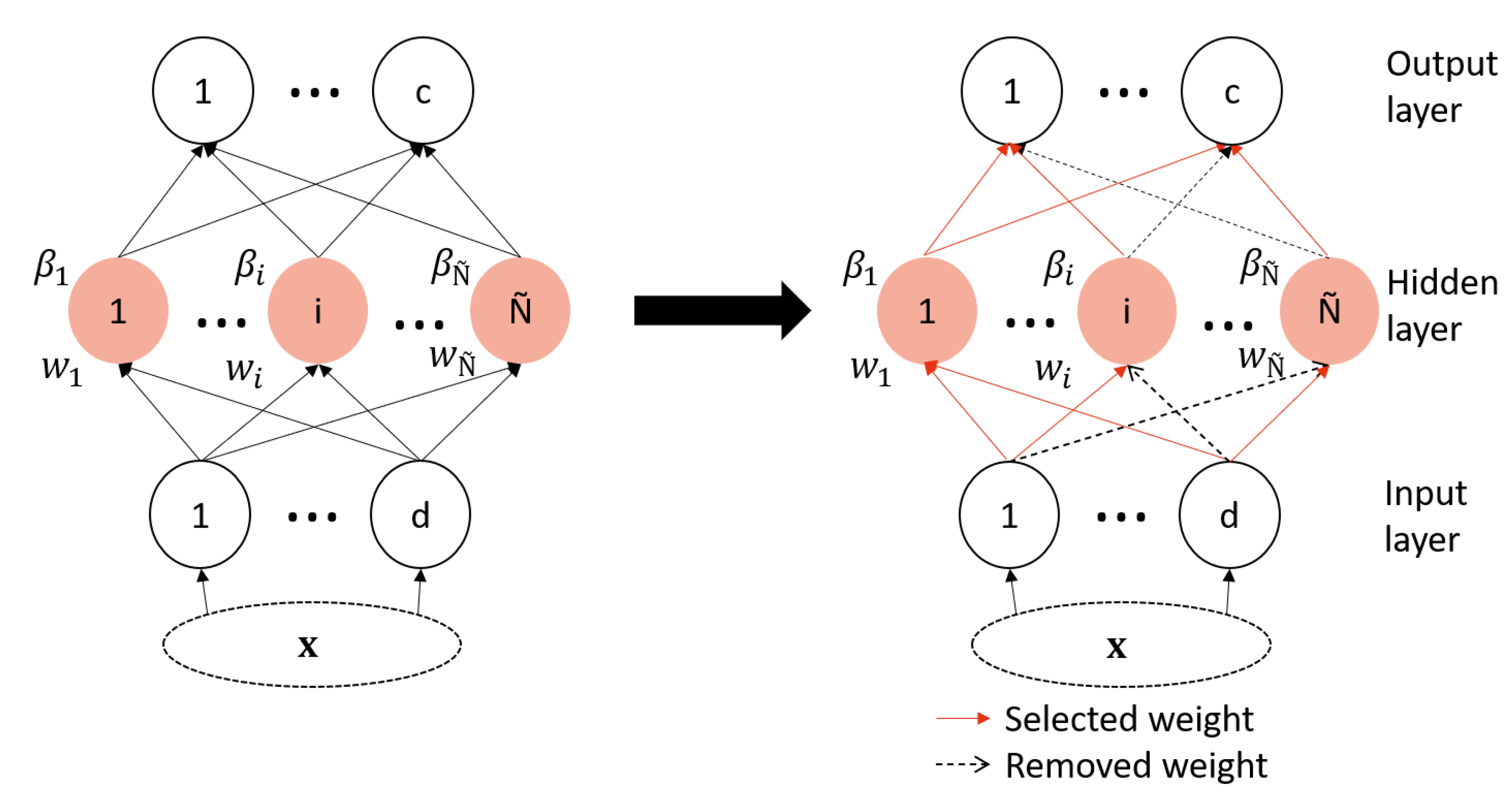

3.1. ELM Weights Selection

3.2. k-Tournament Grasshopper Extreme Learning Machine for Selection Problems

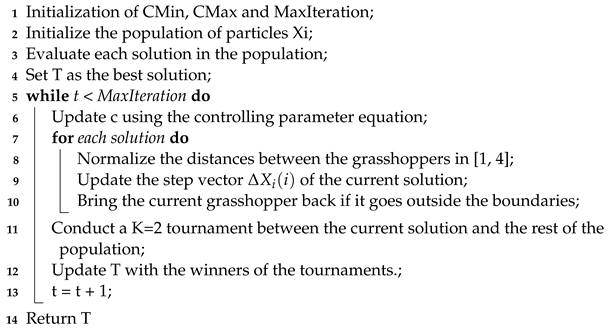

Algorithm 2: k-Tournament grasshopper optimization algorithm.
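Algorithm 2 is named after k-tournament selection, a natural-selection scheme also applied to swarm optimizers in [12]. Its body is not recoverable here, so the sketch below shows only the generic selection step: draw k candidates at random and keep the fittest. How the authors embed this step in the grasshopper update is not shown, and the function name `k_tournament_select` is hypothetical.

```python
import random


def k_tournament_select(fitness, k, rng=random):
    """Return the index of the winner of one k-tournament (higher fitness wins)."""
    if not 1 <= k <= len(fitness):
        raise ValueError("k must lie between 1 and the population size")
    # Draw k distinct contestants uniformly at random
    contestants = rng.sample(range(len(fitness)), k)
    # The fittest contestant wins the tournament
    return max(contestants, key=lambda i: fitness[i])
```

The parameter k tunes selection pressure: k = 1 is a uniform random pick, while k equal to the population size always returns the current best, which is the greedy choice a standard optimizer would make.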

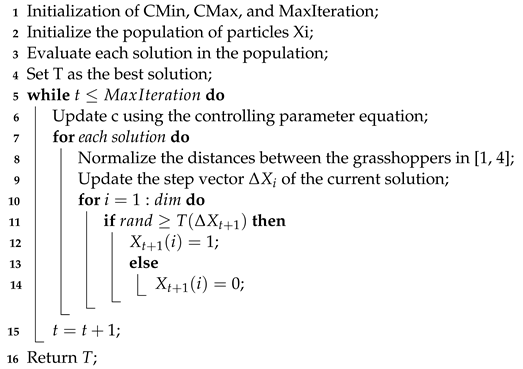

Algorithm 3: Binary grasshopper optimization algorithm (BGOA) [43].
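As an illustration of the general idea behind a binary grasshopper optimizer, the sketch below combines the continuous GOA update of Saremi et al. [10], which uses the social force $s(r) = f e^{-r/l} - e^{-r}$ with $f = 0.5$, $l = 1.5$ and a coefficient $c$ decreasing linearly from 1 to 0.00004, with a sigmoid transfer function that turns each continuous coordinate into the probability of a bit being 1. This is not the exact BGOA of [43], which defines its own transfer-function variants. Keeping continuous positions in a symmetric box (for example $[-6, 6]$) and binarizing only for evaluation is an assumption made here, as are all function names.

```python
import numpy as np


def c_coefficient(t, t_max, c_max=1.0, c_min=4e-5):
    """Linearly decreasing GOA coefficient c (shrinks the comfort zone over time)."""
    return c_max - t * (c_max - c_min) / t_max


def social_force(r, f=0.5, l=1.5):
    """GOA social force s(r) = f*exp(-r/l) - exp(-r); negative values repel."""
    return f * np.exp(-r / l) - np.exp(-r)


def goa_update(P, target, c, lb, ub):
    """One continuous GOA position update for all agents (rows of P)."""
    n, dim = P.shape
    new_P = np.empty_like(P)
    for i in range(n):
        social = np.zeros(dim)
        for j in range(n):
            if j == i:
                continue
            diff = P[j] - P[i]
            dist = np.linalg.norm(diff)
            unit = diff / (dist + 1e-12)  # (x_j - x_i) / d_ij, safe if agents coincide
            social += c * (ub - lb) / 2.0 * social_force(dist) * unit
        # Outer c scales the social term; the target pulls every agent toward the best
        new_P[i] = c * social + target
    return np.clip(new_P, lb, ub)


def binarize(P, rng):
    """Sigmoid transfer: each bit is 1 with probability 1 / (1 + exp(-p))."""
    prob = 1.0 / (1.0 + np.exp(-P))
    return (rng.random(P.shape) < prob).astype(int)
```

Each row of the binarized matrix is a candidate selection mask; its fitness is evaluated and the best mask found so far becomes the next target.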

3.3. k-Tournament Grasshopper Extreme Learner

Algorithm 4: Pseudocode of the proposed k-tournament grasshopper extreme learner.
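The proposed learner selects ELM hidden nodes rather than input features (in the comparison with ELM further below, KTGEL reduces 3000 initial hidden nodes to 1000). One way to score a candidate binary mask over the hidden nodes is sketched below, in the style of the wrapper fitness common in the binary-GOA feature-selection literature: retrain only the output weights on the selected columns of $\mathbf{H}$ and reward both validation accuracy and pruning. The function name `mask_fitness` and the weighting `alpha = 0.99` are hypothetical; the authors' actual fitness function is not recoverable from this text.

```python
import numpy as np


def mask_fitness(mask, H_train, T_train, H_val, y_val, alpha=0.99):
    """Hypothetical fitness of a hidden-node mask for ELM pruning (higher is better)."""
    idx = np.flatnonzero(mask)
    if idx.size == 0:
        return 0.0  # an empty network cannot classify anything
    # Re-solve only the output weights on the kept hidden-node columns
    beta = np.linalg.pinv(H_train[:, idx]) @ T_train
    pred = (H_val[:, idx] @ beta).argmax(axis=1)
    accuracy = (pred == y_val).mean()
    pruned_fraction = 1.0 - idx.size / mask.size
    # Weighted trade-off between accuracy and network size
    return float(alpha * accuracy + (1.0 - alpha) * pruned_fraction)
```

Because the random input weights are fixed, $\mathbf{H}$ is computed once and each fitness evaluation only costs one pseudo-inverse on a column subset, which keeps a population-based search over masks affordable.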

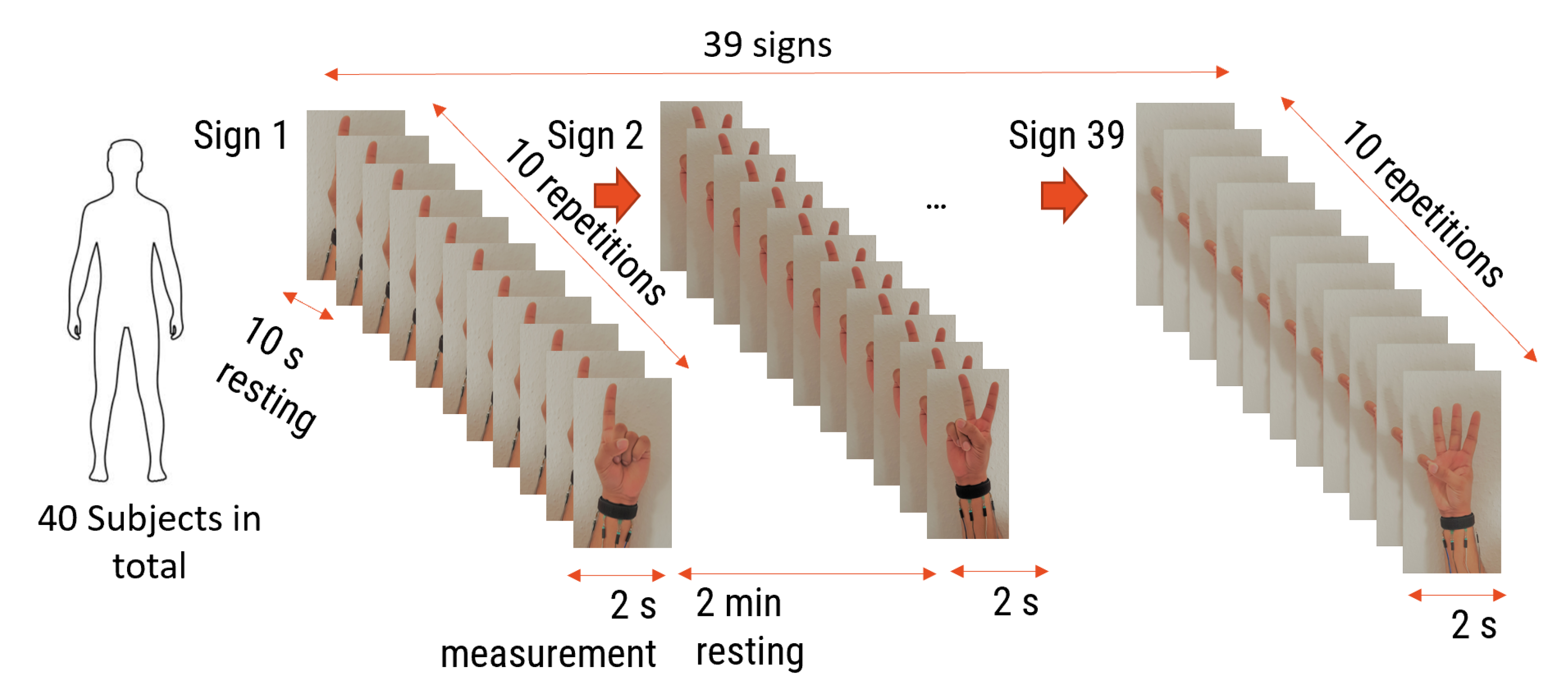

4. Experimental Investigations

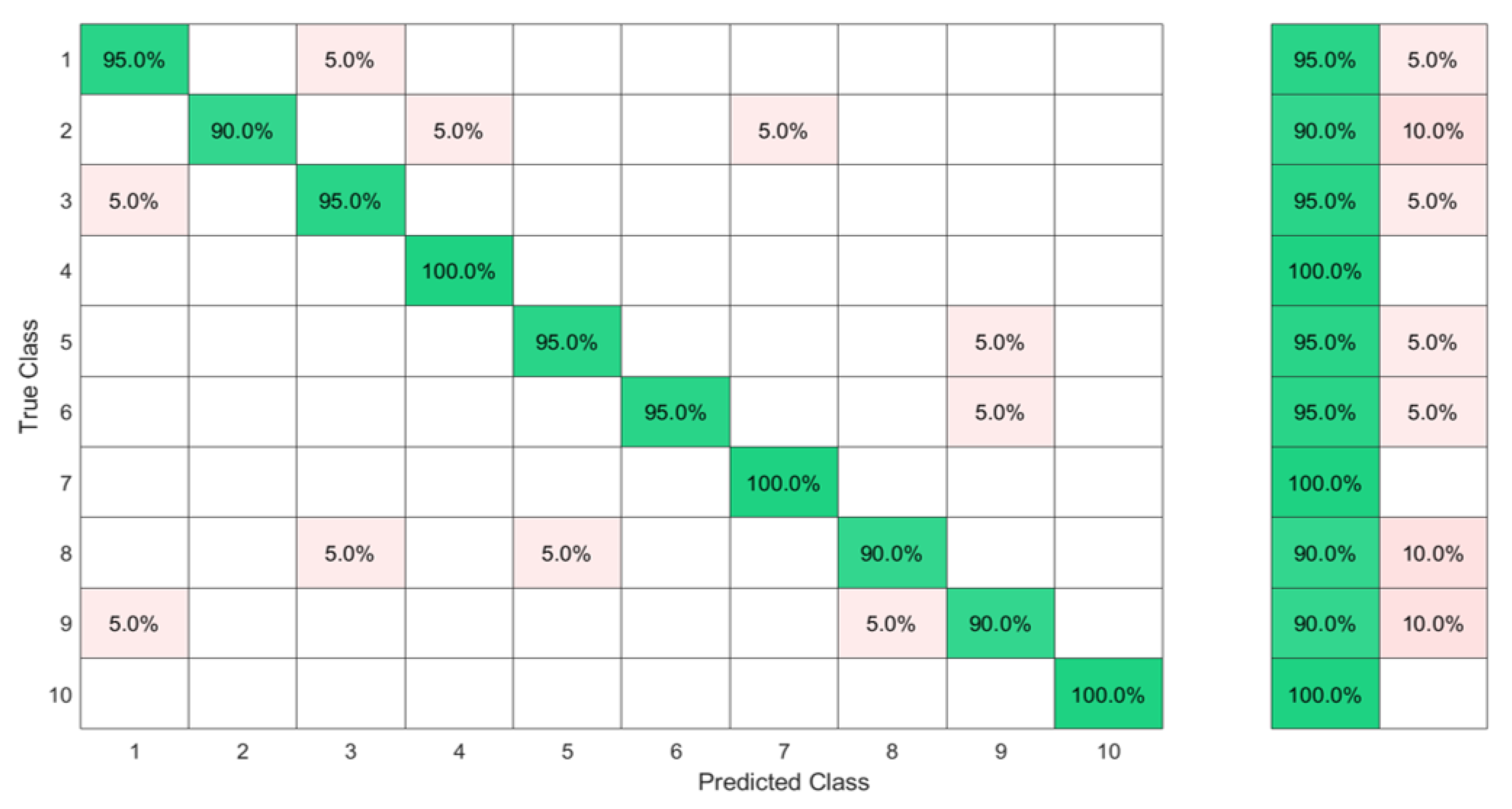

4.1. Comparison with the State of the Art of FMG-Based Gesture Recognition

4.2. Investigation of the Sensors and Subject Number Influence

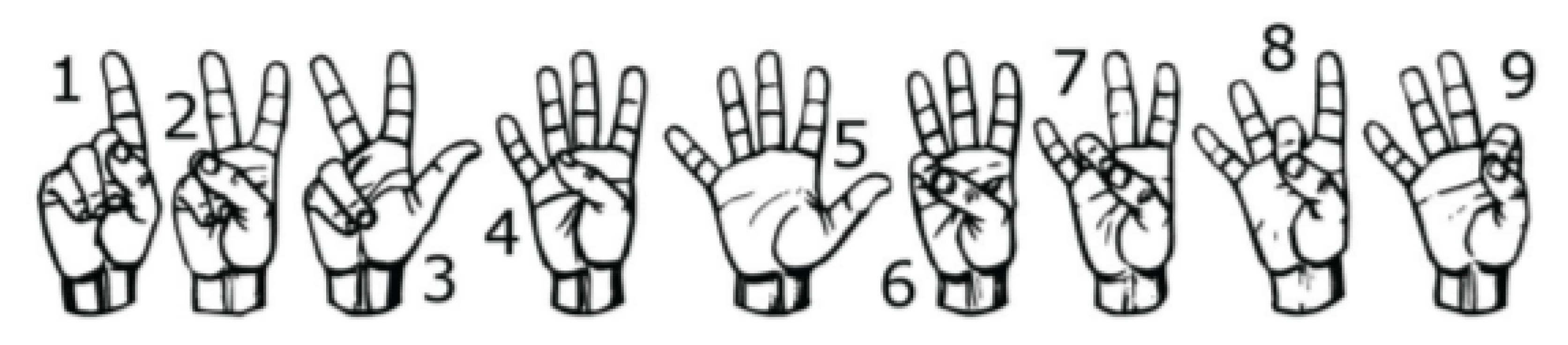

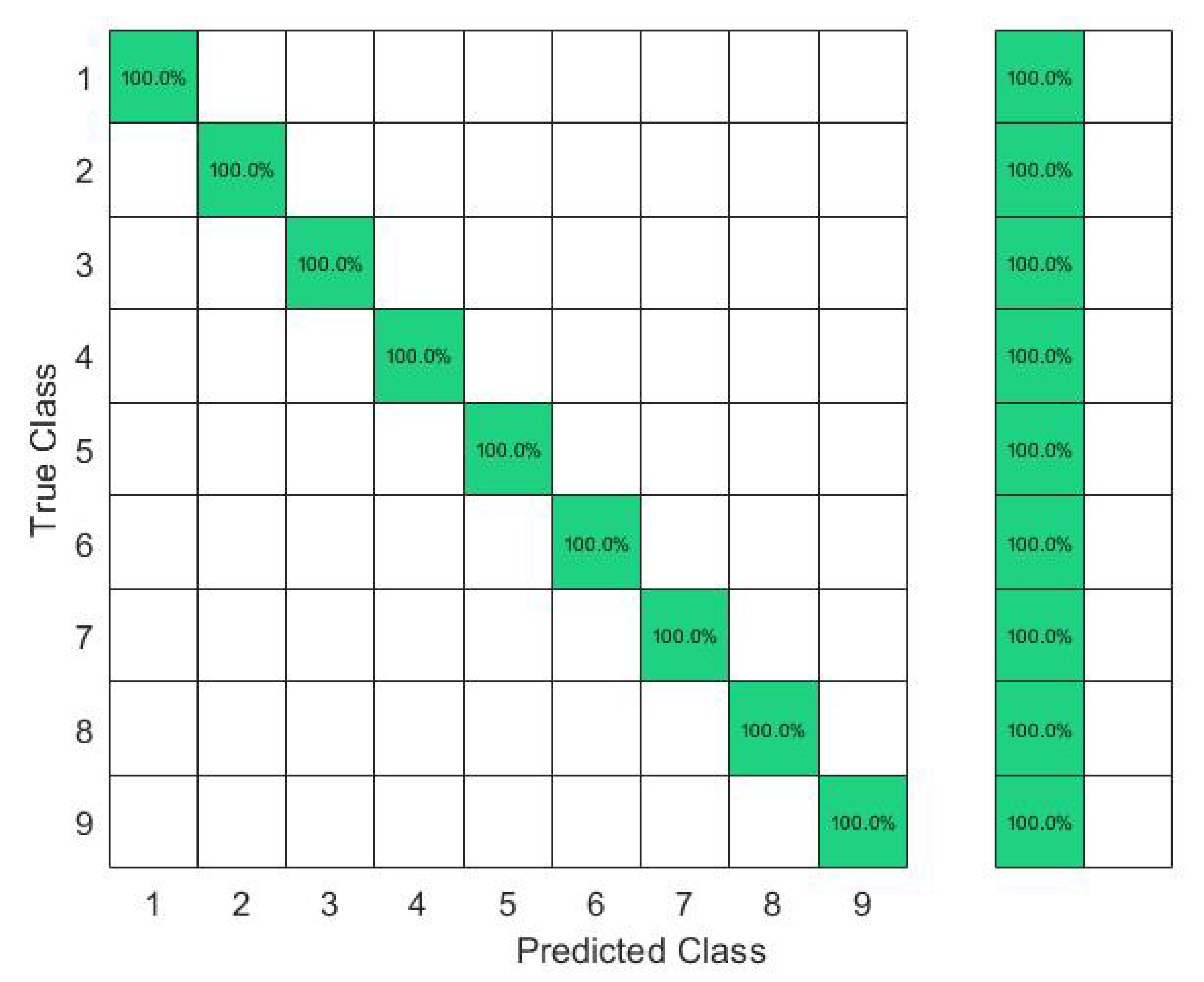

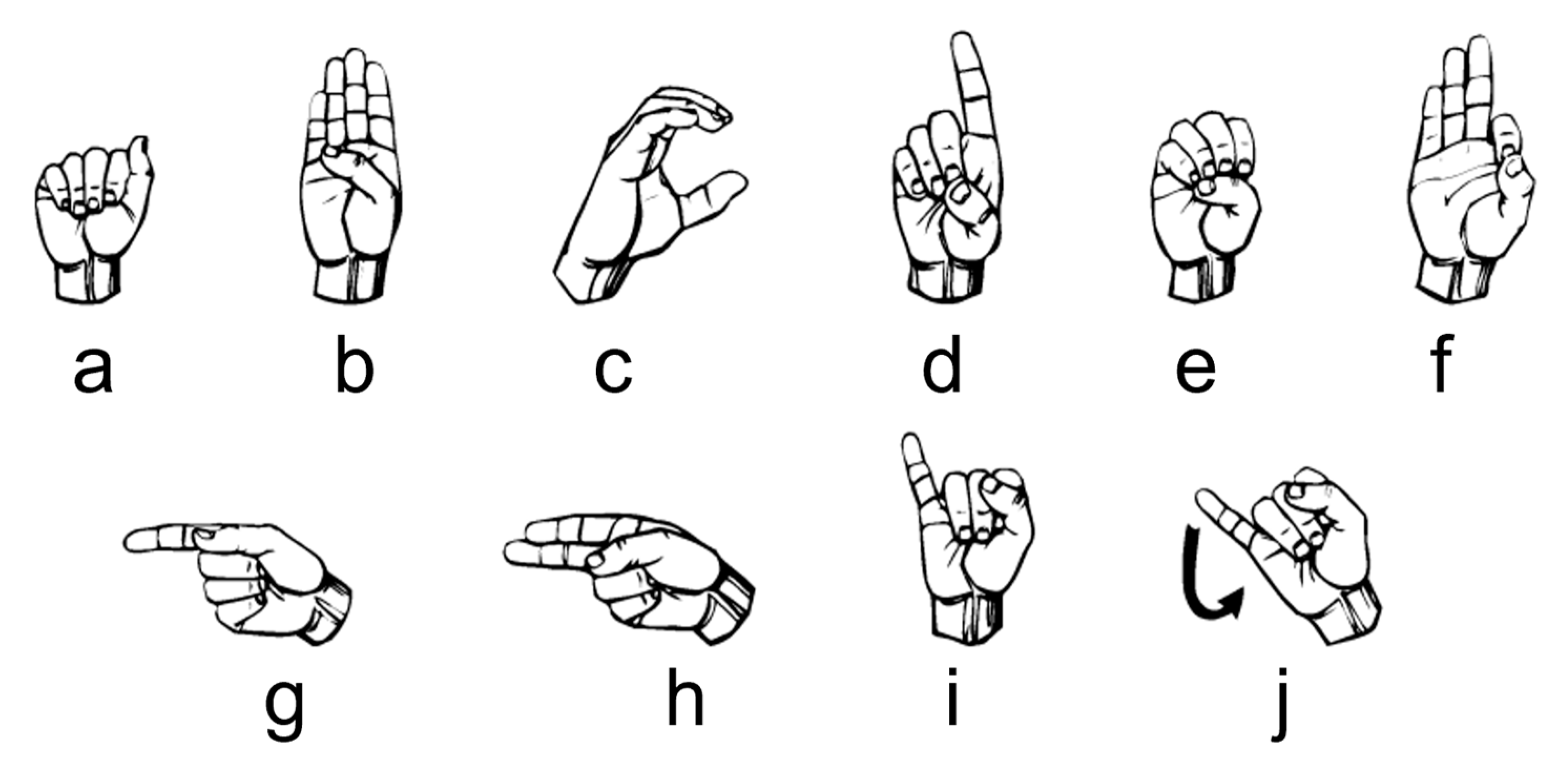

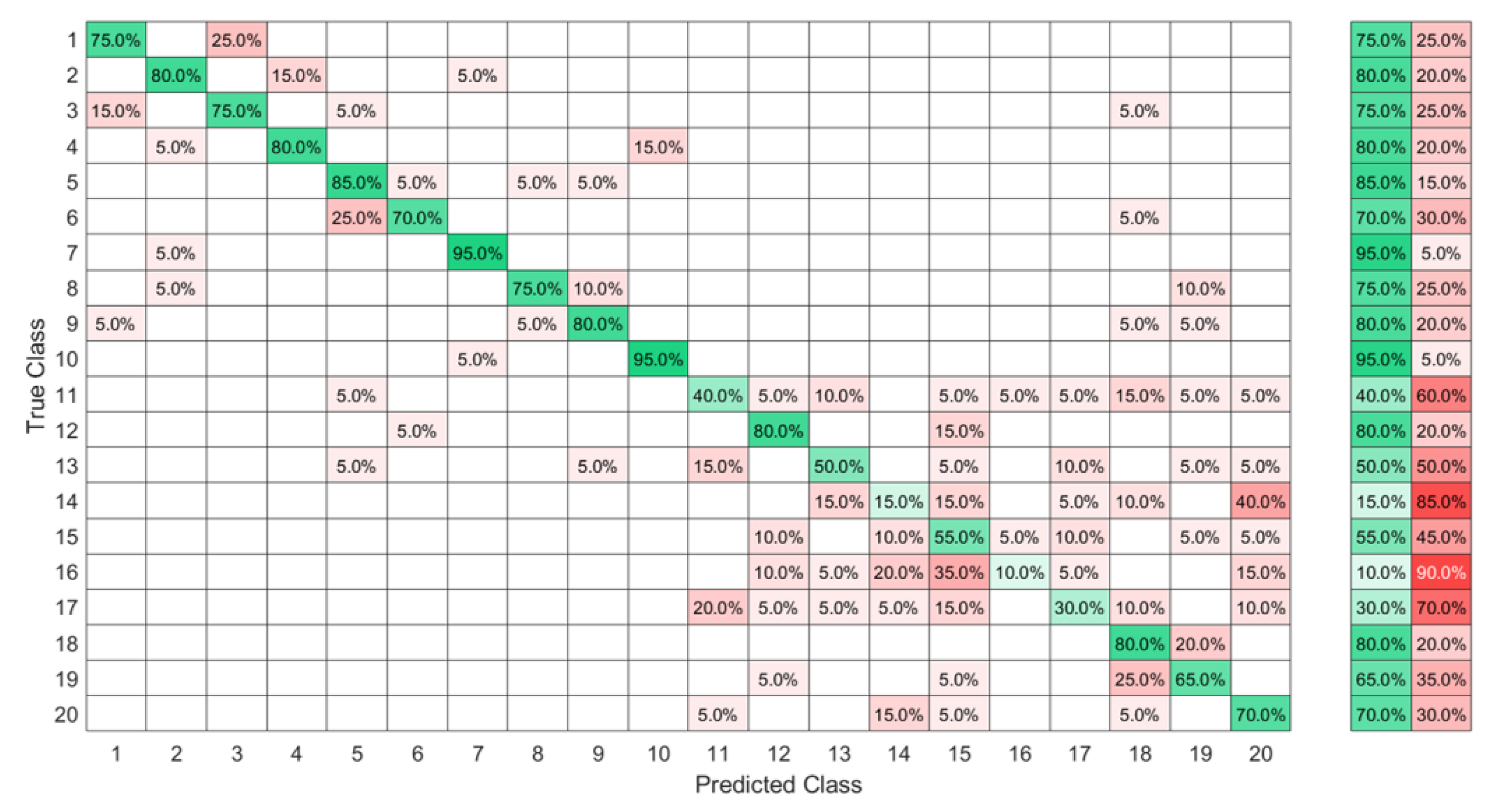

4.3. Recognition of ASL Signs with a Middle Ambiguity Level

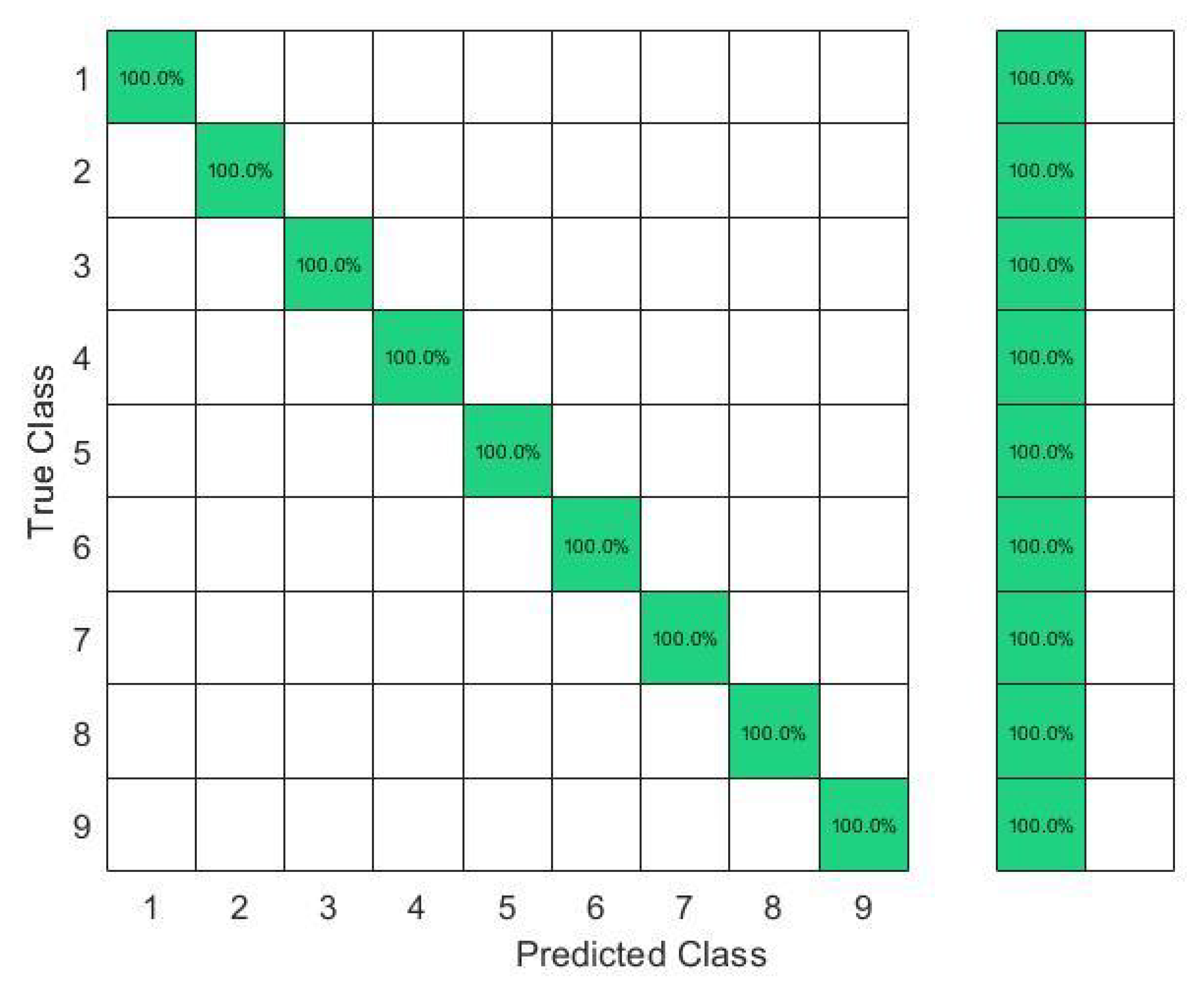

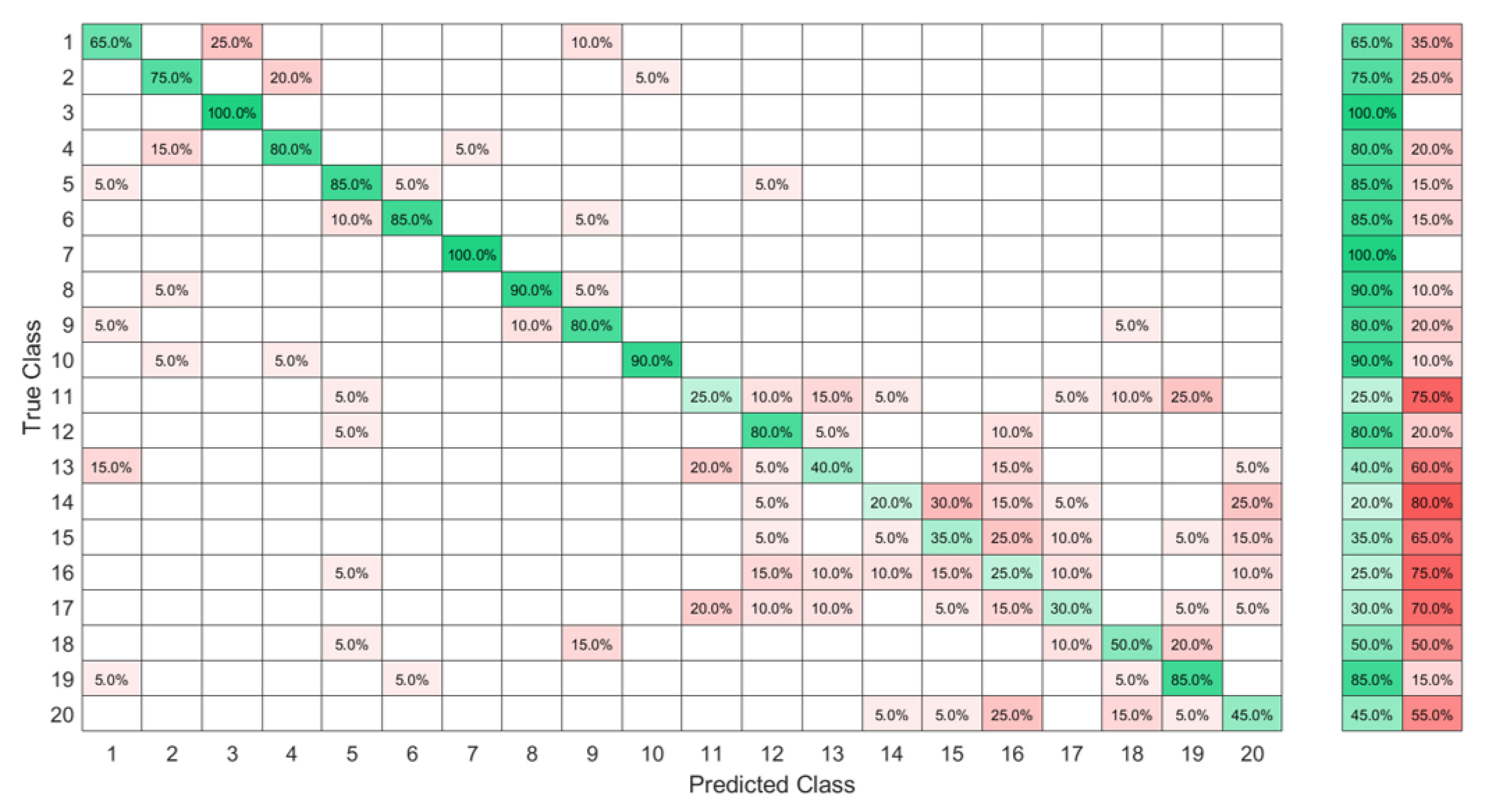

4.4. Recognition of ASL Signs with a High Ambiguity Level

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

References

1. Albadr, M.A.A.; Tiun, S.; AL-Dhief, F.T.; Sammour, M.A.M. Spoken language identification based on the enhanced self-adjusting extreme learning machine approach. PLoS ONE 2018, 13, e0194770.

2. Zhao, Z.; Chen, Z.; Chen, Y.; Wang, S.; Wang, H. A Class Incremental Extreme Learning Machine for Activity Recognition. Cogn. Comput. 2014, 6, 423–431.

3. Song, S.; Wang, M.; Lin, Y. An improved algorithm for incremental extreme learning machine. Syst. Sci. Control Eng. 2020, 8, 308–317.

4. Miche, Y.; Sorjamaa, A.; Bas, P.; Simula, O.; Jutten, C.; Lendasse, A. OP-ELM: Optimally Pruned Extreme Learning Machine. IEEE Trans. Neural Netw. 2010, 21, 158–162.

5. Miche, Y.; Sorjamaa, A.; Lendasse, A. OP-ELM: Theory, experiments and a toolbox. In Proceedings of the International Conference on Artificial Neural Networks, Prague, Czech Republic, 3–6 September 2008; Springer: Berlin/Heidelberg, Germany, 2008; pp. 145–154.

6. Kaloop, M.R.; El-Badawy, S.M.; Ahn, J.; Sim, H.B.; Hu, J.W.; El-Hakim, R.T.A. A hybrid wavelet-optimally-pruned extreme learning machine model for the estimation of international roughness index of rigid pavements. Int. J. Pavement Eng. 2022, 23, 862–876.

7. Pouzols, F.M.; Lendasse, A. Evolving fuzzy optimally pruned extreme learning machine for regression problems. Evol. Syst. 2010, 1, 43–58.

8. Alencar, A.S.C.; Neto, A.R.R.; Gomes, J.P.P. A new pruning method for extreme learning machines via genetic algorithms. Appl. Soft Comput. 2016, 44, 101–107.

9. Ahila, R.; Sadasivam, V.; Manimala, K. An integrated PSO for parameter determination and feature selection of ELM and its application in classification of power system disturbances. Appl. Soft Comput. 2015, 32, 23–37.

10. Saremi, S.; Mirjalili, S.; Lewis, A. Grasshopper Optimisation Algorithm: Theory and application. Adv. Eng. Softw. 2017, 105, 30–47.

11. Wang, J.; Tang, L.; Bronlund, J.E. Surface EMG Signal Amplification and Filtering. Int. J. Comput. Appl. 2013, 82, 15–22.

12. Al-Betar, M.A.; Awadallah, M.A.; Faris, H.; Aljarah, I.; Hammouri, A.I. Natural selection methods for Grey Wolf Optimizer. Expert Syst. Appl. 2018, 113, 481–498.

13. Amft, O.; Junker, H.; Lukowicz, P.; Troster, G.; Schuster, C. Sensing muscle activities with body-worn sensors. In Proceedings of the International Workshop on Wearable and Implantable Body Sensor Networks (BSN’06), Cambridge, MA, USA, 3–5 April 2006; pp. 4–141, ISSN 2376-8886.

14. Xiao, Z.G.; Menon, C. A Review of Force Myography Research and Development. Sensors 2019, 19, 4557.

15. Vecchi, F.; Freschi, C.; Micera, S.; Sabatini, A.M.; Dario, P.; Sacchetti, R. Experimental evaluation of two commercial force sensors for applications in biomechanics and motor control. In Proceedings of the IFESS 2000 and NP 2000 Proceedings: 5th Annual Conference of the International Functional Electrical Stimulation Society and 6th Triennial Conference "Neural Prostheses: Motor Systems"; Center for Sensory-Motor Interaction (SMI), Department of Health Science and Technology, Aalborg University: Aalborg, Denmark, 2000.

16. Hollinger, A.; Wanderley, M.M. Evaluation of Commercial Force-Sensing Resistors. In Proceedings of the International Conference on New Interfaces for Musical Expression NIME-06, Paris, France, 4–8 June 2006.

17. Saadeh, M.Y.; Trabia, M.B. Identification of a force-sensing resistor for tactile applications. J. Intell. Mater. Syst. Struct. 2012, 24, 813–827.

18. Barioul, R.; Ghribi, S.F.; Abbasi, M.B.; Fasih, A.; Jmeaa-Derbel, H.B.; Kanoun, O. Wrist Force Myography (FMG) Exploitation for Finger Signs Distinguishing. In Proceedings of the 2019 5th IEEE International Conference on Nanotechnology for Instrumentation and Measurement (NanofIM), Sfax, Tunisia, 30–31 October 2019.

19. Jiang, X.; Merhi, L.K.; Xiao, Z.G.; Menon, C. Exploration of Force Myography and surface Electromyography in hand gesture classification. Med. Eng. Phys. 2017, 41, 63–73.

20. Wan, B.; Wu, R.; Zhang, K.; Liu, L. A new subtle hand gestures recognition algorithm based on EMG and FSR. In Proceedings of the 2017 IEEE 21st International Conference on Computer Supported Cooperative Work in Design (CSCWD), Wellington, New Zealand, 26–28 April 2017; pp. 127–132.

21. Dementyev, A.; Paradiso, J.A. WristFlex: Low-power Gesture Input with Wrist-worn Pressure Sensors. In Proceedings of the 27th Annual ACM Symposium on User Interface Software and Technology (UIST ’14), Honolulu, HI, USA, 5–8 October 2014; ACM: New York, NY, USA, 2014; pp. 161–166.

22. Jiang, X.; Merhi, L.K.; Menon, C. Force Exertion Affects Grasp Classification Using Force Myography. IEEE Trans. Hum.-Mach. Syst. 2018, 48, 219–226.

23. Ferigo, D.; Merhi, L.K.; Pousett, B.; Xiao, Z.G.; Menon, C. A Case Study of a Force-myography Controlled Bionic Hand Mitigating Limb Position Effect. J. Bionic Eng. 2017, 14, 692–705.

24. Cho, E.; Chen, R.; Merhi, L.K.; Xiao, Z.; Pousett, B.; Menon, C. Force Myography to Control Robotic Upper Extremity Prostheses: A Feasibility Study. Front. Bioeng. Biotechnol. 2016, 4, 18.

25. Barioul, R.; Ghribi, S.F.; Derbel, H.B.J.; Kanoun, O. Four Sensors Bracelet for American Sign Language Recognition based on Wrist Force Myography. In Proceedings of the 2020 IEEE International Conference on Computational Intelligence and Virtual Environments for Measurement Systems and Applications (CIVEMSA), Tunis, Tunisia, 22–24 June 2020.

26. Atitallah, B.B.; Abbasi, M.B.; Barioul, R.; Bouchaala, D.; Derbel, N.; Kanoun, O. Simultaneous Pressure Sensors Monitoring System for Hand Gestures Recognition. In Proceedings of the 2020 IEEE Sensors, Rotterdam, The Netherlands, 25–28 October 2020.

27. Ahmadizadeh, C.; Merhi, L.K.; Pousett, B.; Sangha, S.; Menon, C. Toward Intuitive Prosthetic Control: Solving Common Issues Using Force Myography, Surface Electromyography, and Pattern Recognition in a Pilot Case Study. IEEE Robot. Autom. Mag. 2017, 24, 102–111.

28. Sadarangani, G.P.; Menon, C. A preliminary investigation on the utility of temporal features of Force Myography in the two-class problem of grasp vs. no-grasp in the presence of upper-extremity movements. Biomed. Eng. Online 2017, 16, 59.

29. Jiang, X.; Tory, L.; Khoshnam, M.; Chu, K.H.T.; Menon, C. Exploration of Gait Parameters Affecting the Accuracy of Force Myography-Based Gait Phase Detection. In Proceedings of the 2018 7th IEEE International Conference on Biomedical Robotics and Biomechatronics (Biorob), Enschede, The Netherlands, 26–29 August 2018.

30. Islam, M.R.U.; Waris, A.; Kamavuako, E.N.; Bai, S. A comparative study of motion detection with FMG and sEMG methods for assistive applications. J. Rehabil. Assist. Technol. Eng. 2020, 7, 205566832093858.

31. Godiyal, A.K.; Pandit, S.; Vimal, A.K.; Singh, U.; Anand, S.; Joshi, D. Locomotion mode classification using force myography. In Proceedings of the 2017 IEEE Life Sciences Conference (LSC), Sydney, Australia, 13–15 December 2017.

32. Anvaripour, M.; Saif, M. Hand gesture recognition using force myography of the forearm activities and optimized features. In Proceedings of the 2018 IEEE International Conference on Industrial Technology (ICIT), Lyon, France, 19–22 February 2018; pp. 187–192.

33. Jiang, S.; Gao, Q.; Liu, H.; Shull, P.B. A novel, co-located EMG-FMG-sensing wearable armband for hand gesture recognition. Sens. Actuators A Phys. 2020, 301, 111738.

34. Ramalingame, R.; Barioul, R.; Li, X.; Sanseverino, G.; Krumm, D.; Odenwald, S.; Kanoun, O. Wearable Smart Band for American Sign Language Recognition With Polymer Carbon Nanocomposite-Based Pressure Sensors. IEEE Sens. Lett. 2021, 5, 1–4.

35. Al-Hammouri, S.; Barioul, R.; Lweesy, K.; Ibbini, M.; Kanoun, O. Six Sensors Bracelet for Force Myography based American Sign Language Recognition. In Proceedings of the 2021 IEEE 18th International Multi-Conference on Systems, Signals & Devices (SSD), Monastir, Tunisia, 22–25 March 2021.

36. Reed, R. Pruning algorithms-a survey. IEEE Trans. Neural Netw. 1993, 4, 740–747.

37. Tian, Y.; Yu, Y. A new pruning algorithm for extreme learning machine. In Proceedings of the 2017 IEEE International Conference on Information and Automation (ICIA), Macau SAR, China, 18–20 July 2017.

38. Freire, A.L.; Neto, A.R.R. A Robust and Optimally Pruned Extreme Learning Machine. In Advances in Intelligent Systems and Computing; Springer International Publishing: Cham, Switzerland, 2017; pp. 88–98.

39. Cui, L.; Zhai, H.; Wang, B. An Improved Pruning Algorithm for ELM Based on the PCA. In Proceedings of the 2017 International Conference on Robotics and Artificial Intelligence-ICRAI, Shanghai, China, 29–31 December 2017; ACM Press: New York, NY, USA, 2017.

40. de Campos Souza, P.V.; Araujo, V.S.; Guimaraes, A.J.; Araujo, V.J.S.; Rezende, T.S. Method of pruning the hidden layer of the extreme learning machine based on correlation coefficient. In Proceedings of the 2018 IEEE Latin American Conference on Computational Intelligence (LA-CCI), Guadalajara, Mexico, 7–9 November 2018.

41. Li, X.; Wang, K.; Jia, C. Data-Driven Control of Ground-Granulated Blast-Furnace Slag Production Based on IOEM-ELM. IEEE Access 2019, 7, 60650–60660.

42. de Campos Souza, P.V.; Torres, L.C.B.; Silva, G.R.L.; de Padua Braga, A.; Lughofer, E. An Advanced Pruning Method in the Architecture of Extreme Learning Machines Using L1-Regularization and Bootstrapping. Electronics 2020, 9, 811.

43. Mafarja, M.; Aljarah, I.; Faris, H.; Hammouri, A.I.; Al-Zoubi, A.M.; Mirjalili, S. Binary grasshopper optimisation algorithm approaches for feature selection problems. Expert Syst. Appl. 2019, 117, 267–286.

44. Huang, G.B. What are Extreme Learning Machines? Filling the Gap Between Frank Rosenblatt’s Dream and John von Neumann’s Puzzle. Cogn. Comput. 2015, 7, 263–278.

45. Anam, K.; Al-Jumaily, A. Evaluation of extreme learning machine for classification of individual and combined finger movements using electromyography on amputees and non-amputees. Neural Netw. 2017, 85, 51–68.

46. Chorowski, J.; Wang, J.; Zurada, J.M. Review and performance comparison of SVM- and ELM-based classifiers. Neurocomputing 2014, 128, 507–516.

47. Ibrahim, H.T.; Mazher, W.J.; Ucan, O.N.; Bayat, O. A grasshopper optimizer approach for feature selection and optimizing SVM parameters utilizing real biomedical data sets. Neural Comput. Appl. 2018, 31, 5965–5974.

48. Shi, J.; Cai, Y.; Zhu, J.; Zhong, J.; Wang, F. SEMG based hand motion recognition using cumulative residual entropy and extreme learning machine. Med. Biol. Eng. Comput. 2012, 51, 417–427.

49. Huang, G.B.; Zhou, H.; Ding, X.; Zhang, R. Extreme Learning Machine for Regression and Multiclass Classification. IEEE Trans. Syst. Man Cybern. Part B (Cybern.) 2012, 42, 513–529.

50. Carrara, F.; Elias, P.; Sedmidubsky, J.; Zezula, P. LSTM-based real-time action detection and prediction in human motion streams. Multimed. Tools Appl. 2019, 78, 27309–27331.

| Parameter | Interlink FSR (FSR402) | Flexiforce (FLX-A201-F) |
|---|---|---|
| Minimum actuation force (N) | 0.1 | N/A |
| Force sensitivity range (N) | 0.1–10 | 0–4.4, 0–445 |
| Single-part force repeatability | ±2% | ±2.5% |
| Part-to-part force repeatability | ±6% | ±40% |
| Drift | <5% per log10(time) | <5% per log10(time) |
| Hysteresis | +10% | <4.5% |
| Response time (μs) | <3 | <5 |
| Linearity error | N/A | <±3% |

| Sensors | Features | Subjects | Gestures | Classifier | Accuracy |
|---|---|---|---|---|---|
| 8 co-located sEMG/FMG [33] | MAV, WL, ZC, SSC | 5 | 10 | LDA | 91.6% |
| 8 FMG, self-produced [33] | MAV | 5 | 10 | LDA | 80% |
| 16 FMG [19] | Raw signal | 12 | 16 | LDA | 96.70% |
| 8 nanocomposite sensors [34] | min, max, mean, RMS, median, STD | 10 | 10 | ELM | 93% |
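The feature abbreviations in the table above are common time-domain descriptors of a signal window: mean absolute value (MAV), waveform length (WL), zero crossings (ZC), and slope sign changes (SSC), alongside simple statistics such as RMS and standard deviation. The sketch below computes them with their usual EMG-literature definitions. The thresholds, the window handling, and the function name `time_domain_features` are assumptions; the cited works may compute these features differently.

```python
import numpy as np


def time_domain_features(x, zc_threshold=0.0, ssc_threshold=0.0):
    """Common time-domain features of one signal window (sketch)."""
    x = np.asarray(x, dtype=float)
    dx = np.diff(x)
    return {
        # MAV: average rectified amplitude
        "mav": float(np.mean(np.abs(x))),
        # WL: cumulative length of the waveform
        "wl": float(np.sum(np.abs(dx))),
        # ZC: sign changes between consecutive samples with a large enough step
        "zc": int(np.sum((x[:-1] * x[1:] < 0) & (np.abs(dx) >= zc_threshold))),
        # SSC: local extrema, i.e. (x_i - x_{i-1}) * (x_i - x_{i+1}) > threshold
        "ssc": int(np.sum(-dx[:-1] * dx[1:] > ssc_threshold)),
        "rms": float(np.sqrt(np.mean(x ** 2))),
        "mean": float(np.mean(x)),
        "median": float(np.median(x)),
        "std": float(np.std(x)),
        "min": float(np.min(x)),
        "max": float(np.max(x)),
    }
```

For example, the window `[1, -1, 2, -2, 3]` has four zero crossings and three slope sign changes. Since FMG signals from pressure sensors are typically non-negative, ZC and SSC are more informative on zero-mean (for example, high-pass filtered) signals.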

| Work | Hand Signs | Sensor No. | Sensor | Classifier | Accuracy in % | Observations | Subjects |
|---|---|---|---|---|---|---|---|
| [33] | 10 | 8 | Customized | LDA | 80.00 | 50 | 5 |
| This work | 10 | 8 | FSR | KTGEL | 88.00 | 50 | 5 |
| [34] | 10 | 8 | Customized | ELM | 93.00 | 100 | 10 |
| This work | 10 | 8 | FSR | KTGEL | 98.00 | 100 | 10 |

| Property | ELM | KTGEL |
|---|---|---|
| Additional feature selection algorithm | Yes | No |
| Initial number of features | 13 | 48 |
| Initial number of hidden nodes | 3000 | 3000 |
| Final number of hidden nodes | 3000 | 1000 |
| Training time incl. feature selection (s) | 9.5 | 2.5 |
| Testing time (s) | 0.22 | 0.04 |
| Testing accuracy (%) | 95 | 94 |
| Precision (%) | 97 | 96 |
| Sensitivity (%) | 97 | 97 |
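The ELM and KTGEL figures in the table above can be condensed into ratios. These are computed only from the reported values; absolute timings depend on the hardware and implementation used.

$$
\frac{t_{\text{train}}^{\text{ELM}}}{t_{\text{train}}^{\text{KTGEL}}} = \frac{9.5\ \text{s}}{2.5\ \text{s}} = 3.8,
\qquad
\frac{t_{\text{test}}^{\text{ELM}}}{t_{\text{test}}^{\text{KTGEL}}} = \frac{0.22\ \text{s}}{0.04\ \text{s}} = 5.5,
\qquad
\frac{1000}{3000} \approx 33\,\%
$$

In other words, KTGEL keeps about one third of the hidden nodes, trains 3.8 times faster (including ELM's separate feature selection step), and tests 5.5 times faster, at the cost of one percentage point of testing accuracy (95% versus 94%).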

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Barioul, R.; Kanoun, O. k-Tournament Grasshopper Extreme Learner for FMG-Based Gesture Recognition. Sensors 2023, 23, 1096. https://doi.org/10.3390/s23031096
