1. Introduction

Structural-vibration-based occupant localization is a perception methodology where occupants’ locations in an indoor environment are determined by analyzing the floor vibrations due to their footfall patterns. Specifically, this method employs the measurements of accelerometers that are fixed to the floor. Henceforth, the terms vibro-localization and vibro-measurements will refer to such localization techniques and the measurements used in these techniques, respectively. Vibro-localization techniques facilitate a myriad of applications ranging from smart home monitoring and event classification [1,2,3,4,5] to human gait assessment [6,7,8,9,10,11,12] and occupant identification and tracking [13,14,15,16,17,18,19,20].

Energy-based vibro-localization techniques utilize the energy that is inherent in vibro-measurements as a localization feature, since the signal energy serves as a consistent metric for gauging the magnitude of the vibro-measurements [21,22,23,24,25]. Specifically, higher signal amplitudes result in larger energy values registered by the sensors, and vice versa. By employing this notion, energy-based vibro-localization techniques characterize the relationship between the signal energy and the length of the propagation path. Therefore, these techniques offer a simplified approach to occupant localization, thereby reducing the need for exhaustive signal analysis. In this study, the terms signal energy, power, intensity, and strength are used interchangeably despite their nuanced differences.

Dispersion is a natural phenomenon where different frequency components of structural waves propagate at different velocities in the medium, i.e., the floor. This phenomenon has been seen as one of the major contributors to localization errors; hence, substantial scholarly endeavors have been directed toward examining the floor’s dispersive attributes on localization outcomes. These researchers have mitigated the dispersive effects inherent in the floor by isolating narrow frequency bands. These bands, derived via continuous wavelet transformation, remain unaffected by dispersion [26,27,28,29]. Kwon and Agha [26], for instance, presented a successful human activity recognition system utilizing floor vibro-measurements. Their technique employed a feature extraction step based on wavelet packet decomposition coupled with statistical measures to capture the unique characteristics of different activities. The empirical findings emphasized the efficacy of the proposed technique in the precise identification of diverse human activities. Racic et al. [27] presented a technique for the detection and classification of human activities via floor vibrations. They employed an approach involving a combination of wavelet-based feature extraction and a support vector machine classifier to accurately identify different activities, demonstrating the potential of floor vibro-measurements for activity monitoring and recognition in smart environments. Such narrowband filtering essentially compromises the spatial resolution of the localization technique at hand; this challenge, especially in the context of radio-localization, has been discussed in detail (for instance, [30]).

In their study, Mirshekari et al. [31] employed the wavelet transformation to isolate less-dispersion-affected narrowband frequency spectra, which is crucial for precise time-difference-of-arrival (TDoA) estimation. Their multilateration algorithm, unlike conventional methods, does not rely on prior wave velocity knowledge and adapts effectively to different surfaces. It also integrates a sensor elimination approach to enhance vibro-localization accuracy: TDoA estimations are used to exclude distant sensors. Selecting the nearest sensors shortens the wave propagation path through the floor and thereby mitigates signal attenuation. This improves the signal-to-noise ratio (SNR), reduces the distortion, and enhances the TDoA and location estimations. Localization with the four nearest sensors is favored over using more sensors, as additional distant sensors introduce attenuation and lower accuracy.

The warped frequency transformation technique has been utilized to discern the dispersion curve and to mitigate perturbations attributed to dispersion [32,33]. There exist system-theoretic techniques that characterize the dynamic behavior of the floor via transfer function estimation [34,35,36]. Additionally, the Green’s function has been employed to account for wave reflections and their dispersion [37]. Despite their precision in empirical analyses, these techniques exhibit limitations in their capacity to generalize the complex material properties and boundary conditions inherent in floors.

On the other hand, Bahroun et al. [38] presented their work formalizing the group velocities, i.e., a major component of the signal energy, as a function of the propagation path distance. These promising results paved the way for model-based techniques which tend to explain the wave phenomena from the data. Alajlouni et al. [39], for instance, hypothesized an energy-decay model, in which the energy decays logarithmically with the propagation distance, as a localization model. In their work, Pai et al. [40] analyzed whether a relationship exists between the occupant’s footfall patterns and the measured signal characteristics in an empirical case study. In light of their work, the authors assert that there is no evidence of a monotonic relationship between the amplitude or kurtosis of the measured signal and the propagation distance. Parametric energy-decay models highlight the potential for further improvement [41,42,43].

Along with parametric decay models, Poston et al. [44], in an alternative attempt, moved the localization framework to a probabilistic framework by modeling the probability of detection and false alarm. Alajlouni et al. [41] took a similar approach to localize the occupant by maximizing the sensor likelihood functions given the hypothesis of the sensor’s time domain measurements and the energy-decay model proposed in their earlier work. Wu et al. [42] proposed G-Fall, a device-free fall detection system based on floor vibrations collected using geophone sensors. Their system utilizes hidden Markov models (HMMs) and an energy-based vibro-localization technique, achieving precise and user-independent fall detection with a significant reduction in false alarm rates.

1.1. Limitations of the Existing Approaches

One of the inherent challenges limiting the ability and success of vibro-localization techniques is that these techniques are single-shot estimators: each heel-strike and its corresponding vibro-measurement vectors are unique; hence, repeated measurements for a single step are not easily attainable. Therefore, most of the common estimation frameworks cannot be directly employed in such localization systems. This challenge brings about the following limitations in the landscape of vibro-localization techniques.

(1) Limitations of ideal sensor models: Accelerometers are not ideal because they tend to introduce random and systematic errors in the vibro-measurement vector during the signal acquisition time. While not trivial, the characterization of errors stemming from signal acquisition is not a central aspect of the existing literature. A complete understanding of localization errors cannot be achieved unless such sensor imperfections are categorically identified and incorporated into vibro-localization frameworks.

(2) Limitations in uncertainty quantification: The extent to which measurement errors contribute to localization errors is still unknown. In other words, the sensing errors in vibro-measurements are yet to be tied to the localization errors. It is imperative to account for errors in the vibro-measurement vector for a successful localization technique.

(3) Limitations in information reliability assessment: Along with the measurement imperfections, a myriad of uncertainty sources, such as reflections and dispersion, drive the success and failure cases of the energy-based vibro-localization techniques. To remedy the adverse effects of any unreliable sensor information at a given time, a metric needs to be proposed to measure the reliability of the information that each vibro-measurement vector carries.

1.2. Baseline Study and Overview of the Fundamental Differences

In this subsection, we present a comprehensive comparison between the established baseline in vibro-localization, as outlined by Alajlouni and Tarazaga [45], and the proposed technique. The fundamental differences between these two methodologies are summarized in Table 1. The comparison encompasses the key elements of vibro-localization, including the type of localization features measured, the known parameters assumed in both techniques, the calibrated parameters used during offline processing, and the final output produced during online processing. This comparative analysis aims to highlight the enhancements and novel contributions of the proposed technique.

In contrast, our study builds upon and extends this model by considering non-linear factors that may affect the energy–distance relationship. These factors could include environmental characteristics and multi-path effects, which cannot be accounted for in a purely linear model.

To emphasize the contributions of this study, it is essential to contrast our approach with the closest-related work, as encapsulated in [41]. While [41] initiates the exploration of the problem space by deriving probability density functions (PDFs) of the energy of the acquired vibro-measurement vectors, it does so with the simplifying assumption of neglecting the cross-terms as they are zero-mean random variables. Our work diverges fundamentally at this juncture, where we incorporate all terms in our derivation. This inclusion introduces a more comprehensive model that acknowledges the potential influence of cross-terms.

Further diverging from [41], we abandon the assumption that the energy measurements $e_i$ are independent and identically distributed. This work demonstrates, through Proposition 1 and Corollary 1, that the $e_i$’s are, in fact, sampled from distinct distributions for each sensor. We substantiate this claim by providing the PDFs and the first two statistical moments for these distributions. This nuanced understanding of the energy measurements’ distribution is pivotal to enhancing the accuracy of vibro-localization techniques, thereby marking a significant stride forward from the state of the art.

1.3. Summary of the Contributions

This paper presents an energy-based vibro-localization technique that addresses the sensor imperfections and their effects on the localization results. The proposed technique employs a family of accelerometers placed on a floor to generate multiple vibro-measurement vectors for the same step. Furthermore, the proposed technique employs two corrective steps in the localization time: (i) a comprehensive uncertainty quantification to minimize the effect of the internal errors occurring during the signal acquisition time present in the vibro-measurement vectors; and (ii) an information-theoretic Byzantine sensor elimination (BSE) algorithm to address the external sources of uncertainty such as reflections and dispersion. The following points summarize the proposed technique’s contributions.

Vibro-localization technique with comprehensive uncertainty quantification (addressing limitations 1 and 2): The proposed vibro-localization technique employs an explicit error model for each sensor. Therefore, the localization errors due to the measurement errors can be fully quantified and, in turn, minimized with our technique.

Information-theoretic BSE algorithm (addressing limitation 3): The paper introduces a BSE algorithm that divides the sensors into two distinct subsets: the ones that show some consistency among them, and the ones which are divergent in nature. By leveraging a greedy information-theoretic approach, it decides whether a sensor should be placed in the former set or the latter. This algorithm guarantees a locally optimal subset of the sensors in minimizing the localization errors.

Empirical validation (addressing limitations 1–3): Data from previously conducted controlled experiments were employed to validate and benchmark the proposed technique. The results demonstrated significant improvements over the baseline approach [45] in terms of both accuracy and precision.

Quantification of the empirical precision and accuracy (addressing limitation 3): This paper employs the results of the empirical validation study to quantify an empirical correlation between the precision and accuracy achieved with the proposed vibro-localization technique. By employing such correlation metrics, we gain better insights about the technique’s performance and failures.

1.4. Organization of the Paper

This paper is structured as follows.

Section 1 serves as the introductory portion of the article. It introduces the primary problem that the research addresses and reviews the existing literature on the subject, so that readers have a foundational understanding of the context and the significance of the problem at hand.

Section 2 delves into the specifics of the proposed technique. This section provides the details of the uncertainty quantification of the localization outcomes due to the errors in the vibro-measurement vectors. A probabilistic technique is employed to quantify the localization uncertainties. Here, we also provide details of the BSE algorithm used in the elimination of Byzantine sensors.

Section 3 focuses on the controlled experiments that were carried out. This section provides a detailed account of the experimental setup and procedure, laying the groundwork for the results that follow.

Section 4 presents the results obtained from the experiments. It offers insights into the technique’s performance and reliability, discussing the outcomes in the context of the proposed method’s effectiveness.

Section 5 encapsulates the primary findings of the research. It presents the conclusions drawn from the study and sheds light on potential avenues for future work. This section serves to summarize the research’s contributions and provide future research directions.

2. Method

In this section, the manuscript provides its principal contribution: a new energy-based vibro-localization technique. As an individual traverses the environment, the force exerted by their heel-strikes on the floor induces structural vibration waves within it. These waves propagate through the floor and reach the sensors placed on the floor thereby generating vibro-measurements. Along the propagation path, the vibration wave is deformed and disturbed by various factors that are internal and external to the floor. The proposed technique employs these deformed vibro-measurements of the floor to determine the locations of occupants, despite challenges posed by measurement uncertainties and the potential presence of Byzantine sensors.

Definitions and notation: Consider the problem of estimating a single footstep location $\hat{\mathbf{x}} \in \mathcal{S}$ that belongs to an occupant located at $\mathbf{x}_{\mathrm{true}} \in \mathcal{S}$. In this estimation problem, the vibro-measurements $z_i[k]$ that are obtained by the $i$th of $m$ sensors placed under a floor between time steps $k = 1, \ldots, n$ are employed. Let $\mathcal{I} = \{1, \ldots, m\}$ be the index set of all the sensors and sensor $i \in \mathcal{I}$ be located at $\mathbf{s}_i$ in a rectangular localization space $\mathcal{S} \subset \mathbb{R}^2$. The localization space $\mathcal{S}$ is an arbitrary closed shape within which all the sensors and the occupants are contained. Assume the vibro-measurements $z_i[k]$ are disrupted with measurement error $\zeta_i[k]$, where the true vibro-measurements are $y_i[k]$. We derive the relationship between the true vibro-measurements and the sensor’s output as

$$z_i[k] = y_i[k] + \zeta_i[k]. \tag{1}$$

We often use a shorthand notation to represent these quantities in vector form associated with the time snapshot between $k = 1, \ldots, n$. Let us define these vectors for the sake of clarity: $\mathbf{z}_i = [z_i[1], \ldots, z_i[n]]^\top$, $\mathbf{y}_i = [y_i[1], \ldots, y_i[n]]^\top$, and $\boldsymbol{\zeta}_i = [\zeta_i[1], \ldots, \zeta_i[n]]^\top$.

Given these definitions, we derive a probabilistic localization framework that makes use of the signal energy of the vibro-measurement vector $\mathbf{z}_i$ and its PDF with a novel BSE algorithm to benefit from as many sensors as possible in estimating the location vector $\hat{\mathbf{x}}$. The proposed localization technique employs a parametric energy-decay model to estimate the distance between the sensor and the occupant.

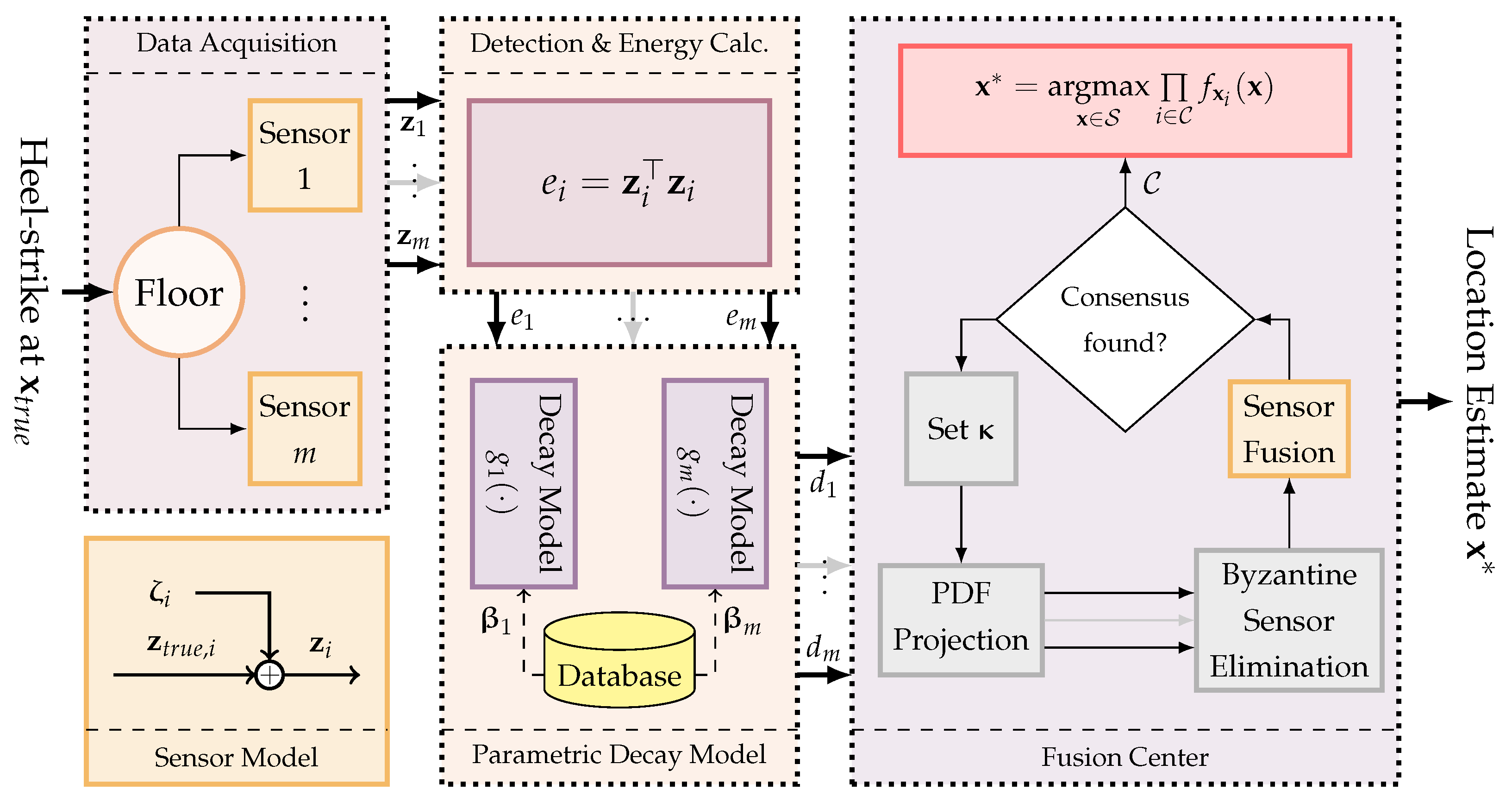

Figure 1 provides a graphical representation of the concepts of the proposed localization.

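The energy $e_i$ of the stochastic vibro-measurement vector $\mathbf{z}_i$ can be derived by employing Rayleigh’s theorem, as shown in Theorem A1 in Appendix A. The signal energy of a random measurement vector $\mathbf{z}_i$ is

$$e_i = \sum_{k=1}^{n} z_i[k]^2. \tag{2}$$

By plugging Equation (1) into Equation (2), we derive the energy as

$$e_i = \sum_{k=1}^{n} y_i[k]^2 + 2\sum_{k=1}^{n} y_i[k]\,\zeta_i[k] + \sum_{k=1}^{n} \zeta_i[k]^2. \tag{3}$$

Notice that $\bar{e}_i = \sum_{k=1}^{n} y_i[k]^2$; therefore, the relationship between the measured energy $e_i$ and the energy that was supposed to be registered, $\bar{e}_i$, is given by

$$e_i = \bar{e}_i + \epsilon_i,$$

where $\epsilon_i = 2\sum_{k=1}^{n} y_i[k]\,\zeta_i[k] + \sum_{k=1}^{n} \zeta_i[k]^2$ represents the error in the signal energy.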
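The decomposition of the measured energy into the true energy, a cross-term, and a noise-energy term can be checked numerically. The following is a minimal sketch under stated assumptions: the decaying sinusoid, the noise level, and the helper name `signal_energy` are illustrative choices and do not come from the experiments.

```python
import math
import random

def signal_energy(samples):
    """Discrete-time signal energy: the sum of squared samples."""
    return sum(v * v for v in samples)

random.seed(0)
n = 512  # number of time samples (illustrative)

# Hypothetical "true" vibro-measurement: a decaying 20 Hz sinusoid at 1024 Hz.
true_signal = [math.exp(-8.0 * k / 1024) * math.sin(2 * math.pi * 20 * k / 1024)
               for k in range(n)]
# Hypothetical zero-mean Gaussian measurement error.
noise = [random.gauss(0.0, 0.05) for _ in range(n)]
measured = [y + z for y, z in zip(true_signal, noise)]

true_energy = signal_energy(true_signal)
measured_energy = signal_energy(measured)

# Energy error = cross-term + noise energy.
cross_term = 2.0 * sum(y * z for y, z in zip(true_signal, noise))
noise_energy = signal_energy(noise)
energy_error = cross_term + noise_energy

print(measured_energy, true_energy + energy_error)
```

The two printed values agree up to floating-point rounding, which shows that the cross-term is part of the energy error for any single realization even though its mean is zero.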

We assume the disturbance vector $\boldsymbol{\zeta}_i$ is an independently and identically distributed normal random vector, i.e., $\boldsymbol{\zeta}_i \sim \mathcal{N}(\mu_i \mathbf{1}, \sigma_i^2 \mathbf{I})$. Therefore, we can show that $\mathbf{z}_i \sim \mathcal{N}(\mathbf{y}_i + \mu_i \mathbf{1}, \sigma_i^2 \mathbf{I})$. With this, we present our proposition showing that $e_i$ can be approximated with a normally distributed random variable when the number of samples $n$ is sufficiently large.

Proposition 1. The energy $e_i$ of a stochastic vibro-measurement vector $\mathbf{z}_i$ can be approximated with a normally distributed random variable with a mean $\mu_{e_i}$ and variance $\sigma_{e_i}^2$. The details of this assertion can be seen below.

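Proof. If both sides of Equation (3) are divided by the variance $\sigma_i^2$, we have

$$\frac{e_i}{\sigma_i^2} = \sum_{k=1}^{n} \left(\frac{z_i[k]}{\sigma_i}\right)^2.$$

Recall $\mathbf{z}_i \sim \mathcal{N}(\mathbf{y}_i + \mu_i \mathbf{1}, \sigma_i^2 \mathbf{I})$; therefore, $z_i[k]/\sigma_i \sim \mathcal{N}\left((y_i[k] + \mu_i)/\sigma_i,\, 1\right)$. The left-hand side of the equation above is a random variable that is the sum of the squares of $n$ independent normal random variables that have unit variance.

Thus, we can show that $e_i/\sigma_i^2$ follows a noncentral chi-squared distribution with $n$ degrees of freedom and the noncentrality parameter $\lambda_i = \sum_{k=1}^{n} \left((y_i[k] + \mu_i)/\sigma_i\right)^2$ (cf. Theorem A2 in Appendix A).

By invoking Theorem A3 in Appendix A, we derive an approximated PDF of $e_i/\sigma_i^2$ with a normal distribution when the number of time samples $n$ is sufficiently large (an empirical threshold on $n$ is used),

$$\frac{e_i}{\sigma_i^2} \sim \mathcal{N}\left(n + \lambda_i,\; 2(n + 2\lambda_i)\right).$$

Finally, the scaling property of the normal distribution can be employed to derive the distribution of the signal energy $e_i \sim \mathcal{N}(\mu_{e_i}, \sigma_{e_i}^2)$, where the mean and variance are characterized as shown below:

$$\mu_{e_i} = \sigma_i^2 (n + \lambda_i)$$

and

$$\sigma_{e_i}^2 = 2\sigma_i^4 (n + 2\lambda_i).$$

□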
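Proposition 1 can be sanity-checked with a small Monte Carlo simulation. The sketch below assumes independent Gaussian measurement errors and uses the standard moments of a noncentral chi-squared variable with $n$ degrees of freedom and noncentrality $\lambda$ (mean $n + \lambda$, variance $2(n + 2\lambda)$), scaled by $\sigma^2$ and $\sigma^4$, respectively. The signal shape, noise level, and number of trials are illustrative assumptions.

```python
import math
import random
import statistics

random.seed(1)
n = 256        # samples per vibro-measurement vector (illustrative)
sigma = 0.1    # assumed standard deviation of the measurement error
bias = 0.0     # assumed sensor bias
trials = 2000  # Monte Carlo repetitions

# Hypothetical true signal: a 20 Hz sinusoid sampled at 1024 Hz.
true_signal = [0.5 * math.sin(2 * math.pi * 20 * k / 1024) for k in range(n)]

# Noncentrality parameter of e / sigma^2.
lam = sum(((y + bias) / sigma) ** 2 for y in true_signal)

# Moments of the signal energy predicted by the normal approximation.
predicted_mean = sigma ** 2 * (n + lam)
predicted_var = 2 * sigma ** 4 * (n + 2 * lam)

# Draw noisy measurement vectors and record their energies.
energies = [
    sum((y + random.gauss(bias, sigma)) ** 2 for y in true_signal)
    for _ in range(trials)
]

empirical_mean = statistics.fmean(energies)
empirical_var = statistics.variance(energies)
print(predicted_mean, empirical_mean)
print(predicted_var, empirical_var)
```

With zero bias, the predicted mean reduces to the true energy plus $n\sigma^2$, so the measured energy systematically overestimates the true energy by the expected noise energy.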

As a consequence of Proposition 1, we present the next corollary:

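Corollary 1. If the sensor is calibrated such that the sensor bias is negligibly small, i.e., $\mu_i \approx 0$, and the variance in the measurement error, $\sigma_i^2$, is known, then the energy distribution can be parameterized with the number of samples $n$ and the unknown true energy $\bar{e}_i$ that the sensor was supposed to register:

$$e_i \sim \mathcal{N}\left(\mu_{e_i}, \sigma_{e_i}^2\right),$$

where the mean and variance of the energy distribution are given by

$$\mu_{e_i} = n\sigma_i^2 + \bar{e}_i$$

and

$$\sigma_{e_i}^2 = 2n\sigma_i^4 + 4\sigma_i^2 \bar{e}_i.$$

2.1. Parametric Energy Decay and Localization Framework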

In this work, we exploit the notion that the signal energy decreases as the structural vibration wavefronts propagate along a path. Based on this concept, we propose a localization function $h(\cdot)$ that maps the signal energy $e_i$ concerning the vibro-measurement vector $\mathbf{z}_i$ and the directionality of the occupant $\theta_i$ to a location vector $\mathbf{x}_i$. The localization function $h(\cdot)$ can be represented as

$$\mathbf{x}_i = h(e_i, \theta_i; \boldsymbol{\beta}_i) = \mathbf{s}_i + g(e_i; \boldsymbol{\beta}_i) \begin{bmatrix} \cos\theta_i \\ \sin\theta_i \end{bmatrix}, \tag{8}$$

where $\boldsymbol{\beta}_i$ represents a known calibration vector of a parametric energy-decay model $g(\cdot)$ that maps the energy $e_i$ to the distance $d_i$, and $\mathbf{s}_i$ is the location of sensor $i$. The calibration vectors $\boldsymbol{\beta}_i$, $i \in \mathcal{I}$, are obtained in a pre-deployment calibration step. A graphical representation of Equation (8) is shown in Figure 2. It should be noted that the parametric energy-decay model $g(\cdot)$ is assumed to be a monotonically decreasing function for some positive energy measurement $e_i > 0$; therefore, it is bijective and its inverse exists. In this work, we employed a curve that represents the relationship between the energy of the vibro-measurement vectors and the propagation distance as a power curve. Formally, this curve can be shown below,

$$d_i = g(e_i; \boldsymbol{\beta}_i) = a_i\, e_i^{\,b_i},$$

where the calibration vector is given by $\boldsymbol{\beta}_i = [a_i, b_i]^\top$.
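A power curve of this kind can be calibrated with ordinary least squares in log-log coordinates. The sketch below assumes the parameterization $d = a\,e^{b}$ (the exact form used in the study is not recoverable here, so this is an assumption), and the function names and the synthetic calibration data are hypothetical.

```python
import math

def fit_power_curve(energies, distances):
    """Least-squares fit of d = a * e**b, performed in log-log coordinates."""
    xs = [math.log(e) for e in energies]
    ys = [math.log(d) for d in distances]
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    b = sxy / sxx                    # slope of the log-log line
    a = math.exp(y_bar - b * x_bar)  # intercept mapped back from log space
    return a, b

def distance_from_energy(e, a, b):
    """Decay model g: energy -> distance."""
    return a * e ** b

def energy_from_distance(d, a, b):
    """Inverse decay model g^{-1}: distance -> energy (requires b != 0)."""
    return (d / a) ** (1.0 / b)

# Synthetic, noise-free calibration data generated from assumed parameters.
true_a, true_b = 2.0, -0.5
cal_energies = [0.05, 0.1, 0.5, 1.0, 4.0]
cal_distances = [distance_from_energy(e, true_a, true_b) for e in cal_energies]

a_hat, b_hat = fit_power_curve(cal_energies, cal_distances)
print(a_hat, b_hat)
```

A negative exponent makes the model monotonically decreasing in the energy, which is what guarantees that the inverse used for ranging exists.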

In this framework, the occupant location with respect to the $i$th sensor is parameterized with its representation in a polar coordinate system $(d_i, \theta_i)$ centered at sensor location $\mathbf{s}_i$. As an accelerometer by itself provides only ranging information, i.e., the distance between the occupant and itself, we assume the directionality component is completely unknown. Therefore, we can simply model it with a random variable that follows a uniform distribution between the range $[0, 2\pi)$, which is denoted as $\theta_i \sim \mathcal{U}(0, 2\pi)$.

The defining properties of the location estimate $\mathbf{x}_i$ can be obtained by studying its PDF $f_{\mathbf{x}_i}(\mathbf{x})$. The derivation of the PDF $f_{\mathbf{x}_i}(\mathbf{x})$, given the PDF of the signal energy $f_{e_i}(e)$, is a straightforward application of the density transformation theorem shown in Theorem A4 in Appendix A. The following section provides the signal model with which the signal energy $e_i$ can be computed from the vibro-measurement vector $\mathbf{z}_i$.

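2.2. Derivation of the PDF for Location Estimation

Given the localization function $h(\cdot)$ that maps $(e_i, \theta_i)$ to $\mathbf{x}_i$, we can derive the PDF of the location estimate $\mathbf{x}_i$ using the density transformation theorem, as presented in Theorem A4 in Appendix A. Because $e_i$ and $\theta_i$ are independent from each other:

$$f_{\mathbf{x}_i}(\mathbf{x}) = f_{e_i}(e)\, f_{\theta_i}(\theta)\, \left|\det \mathbf{J}_i(\mathbf{x})\right|,$$

where $e = g^{-1}\left(\lVert \mathbf{x} - \mathbf{s}_i \rVert; \boldsymbol{\beta}_i\right)$ and $\theta = \operatorname{atan2}\left(x_2 - s_{i,2},\, x_1 - s_{i,1}\right)$. Therefore,

$$f_{\mathbf{x}_i}(\mathbf{x}) = \frac{1}{2\pi}\, f_{e_i}\!\left(g^{-1}\left(\lVert \mathbf{x} - \mathbf{s}_i \rVert; \boldsymbol{\beta}_i\right)\right) \left|\det \mathbf{J}_i(\mathbf{x})\right|, \tag{9}$$

where $\mathbf{J}_i(\mathbf{x})$ denotes the Jacobian matrix of the inverse localization function $h^{-1}(\cdot)$ evaluated at $\mathbf{x}$.

The definition of the Jacobian matrix $\mathbf{J}_i(\mathbf{x})$ is given as

$$\mathbf{J}_i(\mathbf{x}) = \begin{bmatrix} \dfrac{\partial e}{\partial x_1} & \dfrac{\partial e}{\partial x_2} \\[2ex] \dfrac{\partial \theta}{\partial x_1} & \dfrac{\partial \theta}{\partial x_2} \end{bmatrix}, \qquad \left|\det \mathbf{J}_i(\mathbf{x})\right| = \frac{1}{d} \left| \frac{\partial g^{-1}(d; \boldsymbol{\beta}_i)}{\partial d} \right|, \tag{10}$$

where $d = \lVert \mathbf{x} - \mathbf{s}_i \rVert$ and

$$\frac{\partial e}{\partial x_j} = \frac{\partial g^{-1}(d; \boldsymbol{\beta}_i)}{\partial d}\, \frac{x_j - s_{i,j}}{d}, \qquad \frac{\partial \theta}{\partial x_1} = -\frac{x_2 - s_{i,2}}{d^2}, \qquad \frac{\partial \theta}{\partial x_2} = \frac{x_1 - s_{i,1}}{d^2}.$$

By plugging Equation (10) into Equation (9), we derive the PDF fully:

$$f_{\mathbf{x}_i}(\mathbf{x}) = \frac{1}{2\pi\, \lVert \mathbf{x} - \mathbf{s}_i \rVert}\, f_{e_i}\!\left(g^{-1}\left(\lVert \mathbf{x} - \mathbf{s}_i \rVert; \boldsymbol{\beta}_i\right)\right) \left| \frac{\partial g^{-1}(d; \boldsymbol{\beta}_i)}{\partial d} \right|_{d = \lVert \mathbf{x} - \mathbf{s}_i \rVert}. \tag{11}$$

Equation (11) shows that the PDFs $f_{\mathbf{x}_i}(\mathbf{x})$ assign a probability value for an occupant located at vector $\mathbf{x}$ given the inverse of the parametric decay function $g^{-1}(\cdot)$ and sensor location $\mathbf{s}_i$. This equation is one of the fundamental contributions of this study. Notice that the term in the denominator, i.e., $\lVert \mathbf{x} - \mathbf{s}_i \rVert$, in Equation (11), results in an inverse relationship between the probability values and the distance between the sensor and the impact location.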
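To make the shape of the single-sensor location PDF concrete, the sketch below evaluates it at a few points. It is a minimal illustration under stated assumptions: the energy is normally distributed with a given mean and standard deviation, the direction is uniform on $[0, 2\pi)$, and the decay model is a hypothetical power curve $d = a\,e^{b}$. The function names and all numeric values are illustrative.

```python
import math

def normal_pdf(x, mean, std):
    """PDF of a normal distribution evaluated at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def location_pdf(point, sensor, energy_mean, energy_std, a, b):
    """Density of the location estimate at `point` for a single sensor.

    Assumes energy ~ N(energy_mean, energy_std**2), a uniform direction,
    and the hypothetical decay model d = a * e**b.
    """
    r = math.hypot(point[0] - sensor[0], point[1] - sensor[1])
    if r == 0.0:
        return 0.0  # guard against division by zero at the sensor location
    energy = (r / a) ** (1.0 / b)    # inverse decay model evaluated at r
    d_energy_dr = energy / (b * r)   # derivative of the inverse model w.r.t. r
    jacobian = abs(d_energy_dr) / r  # |det J| of the inverse localization map
    return normal_pdf(energy, energy_mean, energy_std) * jacobian / (2.0 * math.pi)

sensor = (0.0, 0.0)
params = dict(energy_mean=4.0, energy_std=0.4, a=4.0, b=-0.5)

# With these parameters, an energy of 4.0 corresponds to a 2 m range.
on_ring_east = location_pdf((2.0, 0.0), sensor, **params)
on_ring_north = location_pdf((0.0, 2.0), sensor, **params)
inside = location_pdf((1.0, 0.0), sensor, **params)
outside = location_pdf((3.0, 0.0), sensor, **params)
print(on_ring_east, on_ring_north, inside, outside)
```

The density is identical at equal ranges in different directions and concentrates on a ring around the sensor, which is why a single accelerometer cannot resolve the occupant's bearing on its own.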

2.3. Sensor Fusion

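Given the PDFs $f_{\mathbf{x}_i}(\mathbf{x})$ for all sensors indexed by $i \in \mathcal{I}$, we aim to find a joint PDF that represents the collective localization outcome using the vibro-measurements of $m$ sensors. As all the sensors are independent of each other, we can represent the joint PDF as given below:

$$f_{\mathbf{x}}(\mathbf{x}) \propto \prod_{i \in \mathcal{I}} f_{\mathbf{x}_i}(\mathbf{x}).$$

Let $\alpha_i$ be an independent variable denoting the ratio between the unknown true energy $\bar{e}_i$ and measured signal energy $e_i$, i.e., $\alpha_i = \bar{e}_i / e_i$. Notice that $\bar{e}_i = e_i - \epsilon_i$; thus, $\alpha_i \approx 1$ for small $\epsilon_i$. Given this definition, we can reparameterize the joint PDF which forms the basis for the sensor fusion algorithm used in this paper,

$$f_{\mathbf{x}}(\mathbf{x}; \boldsymbol{\alpha}) \propto \prod_{i \in \mathcal{I}} f_{\mathbf{x}_i}(\mathbf{x}; \alpha_i e_i), \qquad \boldsymbol{\alpha} = [\alpha_1, \ldots, \alpha_m]^\top.$$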
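The product of the per-sensor PDFs can be evaluated on a grid by summing log-densities at the cell centers and normalizing afterwards. The following is a minimal sketch, not the authors' implementation: the ring-shaped per-sensor density, the sensor layout, the spread, and the grid size are all assumptions chosen for illustration.

```python
import math

def range_log_pdf(point, sensor, distance, spread):
    """Log of a hypothetical ring-shaped single-sensor PDF (unnormalized)."""
    r = math.hypot(point[0] - sensor[0], point[1] - sensor[1])
    return -0.5 * ((r - distance) / spread) ** 2 - math.log(r)

def fuse_on_grid(sensors, distances, spread, size, step):
    """Sum per-sensor log-PDFs at the cell centers and normalize the product."""
    n_cells = int(round(size / step))
    centers = [(step * (ix + 0.5), step * (iy + 0.5))
               for ix in range(n_cells) for iy in range(n_cells)]
    log_joint = [
        sum(range_log_pdf(c, s, d, spread) for s, d in zip(sensors, distances))
        for c in centers
    ]
    peak = max(log_joint)
    weights = [math.exp(v - peak) for v in log_joint]  # shift to avoid underflow
    total = sum(weights)
    return centers, [w / total for w in weights]

# Hypothetical layout: three sensors and one true footstep location (meters).
sensors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
true_location = (3.0, 4.0)
distances = [math.hypot(true_location[0] - sx, true_location[1] - sy)
             for sx, sy in sensors]

centers, probs = fuse_on_grid(sensors, distances, spread=0.3, size=10.0, step=0.2)
estimate = centers[probs.index(max(probs))]
print(estimate)
```

Working in the log domain keeps the product numerically stable when many sharply peaked PDFs are multiplied, and the grid cell with the largest fused probability serves as the location estimate.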

2.4. Byzantine Sensor Elimination

Byzantine sensors, as illustrated in Figure 3, are those that provide misleading or incorrect data, often deviating from the true value or introducing conflicting information into a sensor network. In the figure, sensors A and B are examples of informative sensors (shown in images (a) and (b)) while C and D are instances of Byzantine sensors (images (c) and (d)). The PDFs of the Byzantine sensors have sharp peaks, indicating high precision, but they show offsets from the true value, revealing their low accuracy. When such a sensor’s data are fused with data from other sensors, it can significantly distort the resulting joint likelihood, leading to erroneous conclusions or alternative hypotheses about the occupant’s location.

The second row of the figure provides insights into the effects of fusing data from a Byzantine sensor with informative ones. For instance, image (f) showcases the fusion result of an informative Sensor A with the Byzantine Sensor C. The resulting uniform distribution across the localization space highlights the lack of consensus between the two, emphasizing the detrimental impact of the Byzantine sensor on the fusion process. Similarly, image (g) depicts the fusion of Sensor B with Sensor C, producing an offset peak that suggests an alternative hypothesis about the occupant’s location. To ensure the reliability of a sensor network, it is crucial to identify and eliminate such Byzantine influences. By observing the fusion results and identifying distributions that deviate from expected patterns or the true value, one can iteratively pinpoint and remove Byzantine sensors, enhancing the overall accuracy and trustworthiness of the network.
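The effect of fusing an informative sensor with a Byzantine one can be illustrated with the Shannon entropy of the fused, discretized PDF. The sketch below is an illustration under stated assumptions, not the BSE algorithm itself: the four-cell PDFs are hypothetical, and the helper names `fuse` and `entropy` are introduced here.

```python
import math

def fuse(p, q):
    """Normalized cell-wise product of two discretized PDFs."""
    product = [a * b for a, b in zip(p, q)]
    total = sum(product)
    return [v / total for v in product]

def entropy(p):
    """Shannon entropy (in nats) of a discretized PDF."""
    return -sum(v * math.log(v) for v in p if v > 0.0)

# Hypothetical PDFs over four grid cells.
sensor_a = [0.7, 0.1, 0.1, 0.1]  # informative, peak at cell 0
sensor_b = [0.6, 0.2, 0.1, 0.1]  # informative, agrees with sensor_a
sensor_c = [0.1, 0.1, 0.1, 0.7]  # Byzantine-like, sharp peak at the wrong cell

h_agree = entropy(fuse(sensor_a, sensor_b))     # fused PDF sharpens
h_conflict = entropy(fuse(sensor_a, sensor_c))  # fused PDF flattens
h_uniform = entropy([0.25] * 4)                 # upper bound for four cells
print(h_agree, h_conflict, h_uniform)
```

Agreeing sensors produce a fused PDF with lower entropy than conflicting ones, and the conflicting case moves toward the entropy of the uniform distribution, which mirrors the lack of consensus described for image (f).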

In vibro-localization systems, many factors can easily render a sensor Byzantine. For instance, the parametric energy-decay model assumes the wavefronts detected by an accelerometer travel in a direct path from the step location to the sensor observing the vibration phenomenon. However, this assumption seldom holds for indoor environments as the wavefronts may be reflected by various objects or boundaries that exist in the environment. To address and circumvent these undesired scenarios, we introduce an algorithm that identifies a consensus-forming subset of sensors within $\mathcal{I}$ to counteract the influence of Byzantine sensors.

During the initialization phase of the information-theoretic BSE algorithm, a comprehensive computation is carried out to determine all conceivable pairwise joint PDFs and their corresponding entropies. Subsequently, an initial consensus set $\mathcal{C}$ is established using the sensor pair $(i^*, j^*)$ that produces the maximum entropy after fusion from a distinct pair of the index set $\mathcal{I}$. Formally, we represent this initiation phase as given below:

$$(i^*, j^*) = \underset{i, j \in \mathcal{I},\; i \neq j}{\arg\max}\; H\!\left(f_{\mathbf{x}_i} f_{\mathbf{x}_j}\right), \qquad \mathcal{C} = \{i^*, j^*\},$$

where $H(\cdot)$ denotes the entropy of the fused PDF. Furthermore, we represent the joint PDF encapsulating the existing consensus at any point in time, denoted as $f_{\mathcal{C}}(\mathbf{x}; \boldsymbol{\alpha})$, as follows:

$$f_{\mathcal{C}}(\mathbf{x}; \boldsymbol{\alpha}) \propto \prod_{i \in \mathcal{C}} f_{\mathbf{x}_i}(\mathbf{x}; \alpha_i e_i).$$

Throughout each iterative phase, a candidate sensor, denoted as $k$ (which is distinct from the pair $(i^*, j^*)$), is selected from the set $\mathcal{I} \setminus \mathcal{C}$. This sensor undergoes an evaluation against the prevailing consensus set $\mathcal{C}$, achieved by fusing its PDF with the consensus PDF $f_{\mathcal{C}}(\mathbf{x}; \boldsymbol{\alpha})$. A subsequent determination is predicated upon the entropy, or more precisely, the surprisal of the resulting hypothesis with respect to the current consensus. Should the integration of sensor $k$ not attenuate the joint PDF to a uniform distribution (as exemplified in case (f) in Figure 3) or not decrease the average entropy, it is incorporated into the consensus set. Otherwise, sensor $k$ is classified as Byzantine. Finally, the above procedure undergoes an iterative repetition with varying vectors of $\boldsymbol{\alpha}$. This iteration progresses in the direction of the gradient of the loss function calculated with respect to the $\boldsymbol{\alpha}$ vector. The process persists until a local maximum in the entropy landscape, corresponding to a (possibly local) optimal consensus, is identified. The proposed BSE algorithm, coupled with the vibro-localization technique, is outlined in Algorithm 1.

At an initial glance, readers might find similarities between the algorithm above and the RANSAC algorithm [46], an acronym for random sample consensus; however, they are fundamentally distinct. RANSAC is an algorithm employed in fields such as computer vision and computational geometry to robustly estimate model parameters, even in the presence of outliers. In contrast, the information-theoretic BSE algorithm is tailored for sensor networks to counteract Byzantine sensors using information-theoretic strategies. While both algorithms seek to establish reliability (or robustness) and consensus (or agreement) within datasets, their approaches and primary use cases are notably distinct. A side-by-side comparison of their characteristics is presented in Table 2.

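Algorithm 1: Vibro-localization using the Information-Theoretic BSE Algorithm

procedure BSE($\boldsymbol{\alpha}$)

$\mathcal{C} \leftarrow \emptyset$

for all $i, j \in \mathcal{I}$, $i \neq j$ do ▹ Initialization of the consensus set

compute the entropy of the fused PDF of sensors $i$ and $j$

if this entropy is the largest found so far then

$\mathcal{C} \leftarrow \{i, j\}$

end if

end for

for all $k \in \mathcal{I} \setminus \mathcal{C}$ do ▹ Attempt to expand the consensus set

fuse the PDF of sensor $k$ with the consensus PDF $f_{\mathcal{C}}$

if the fused PDF is not attenuated to a uniform distribution or the average entropy does not decrease then

$\mathcal{C} \leftarrow \mathcal{C} \cup \{k\}$

end if

end for

return $\mathcal{C}$

end procedure

while not converged do ▹ Gradient Descent

update $\boldsymbol{\alpha}$ ▹ Update in the gradient direction

$\mathcal{C} \leftarrow$ BSE($\boldsymbol{\alpha}$)

update the consensus PDF $f_{\mathcal{C}}$ and the location estimate $\hat{\mathbf{x}}$

end while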

3. Experiments

The proposed technique’s effectiveness and performance were assessed through a series of controlled experiments, the details of which are outlined in this section.

3.1. Experimental Setup

To evaluate our vibro-localization approach, we employed the empirical data from a set of controlled experiments in a corridor situated on the fourth floor of Goodwin Hall, an operational building on Virginia Tech’s campus. In these experiments, two participants traversed a pre-defined 16-meter path. Figure 4 shows the step locations constituting the traversed path, represented with green circles (∘), overlaid with the sensor locations, represented with black squares (■). We derived our reference points from these investigations and utilized identical experimental data as in [41,45]. The corridor’s concrete floor housed the sensors, which were attached to uniform steel mounts welded to the flanges of the structural I-beams beneath. The experimental testbed utilized in this study is embedded within the structural framework of the building. Due to this integration, a photograph of the testbed would not substantially add to the understanding of the setup, as it predominantly features standard structural components of the building. The crucial aspects of our setup are its configuration and the placement of the sensors and equipment, which are more effectively conveyed through the schematic representation provided in Figure 4. Eleven PCB Piezotronics model 352B accelerometers, detecting dynamic out-of-plane acceleration within the frequency range of (2, 10,000) Hz and with an average sensitivity of 1000 millivolts per $g$ (where $g \approx 9.81$ m/s$^2$), recorded the structural vibrations. These devices captured data from 162 steps taken by each participant, amounting to a total of 324 steps. The data collection was facilitated by VTI Instruments EMX-4250 digital signal analyzer cards, connected to the accelerometers via coaxial cables and equipped with anti-aliasing filters and a high-precision 24-bit analog-to-digital converter. The accelerometer data were sampled at a rate of 1024 Hz. For a comprehensive insight into the experimental design, readers are directed to the foundational study [45].

Data and model validity: The preliminary study gathered vibration data during low-activity periods, ensuring minimal movement in the vicinity of the instrumented corridor. The data revealed that the sensors’ noise profiles were normally distributed with zero mean and consistent variance. Thus, the signal model in Equation (1) aptly represents the vibration measurements. Given this observation, we confidently state that the energy-related random variables, represented as $e_i$ for $i \in \mathcal{I}$, align with the experimental findings.

Signal detection problem: A primary distinction exists between our implementation of the baseline and the original work presented in the publication [45]: the signal detection algorithm. The baseline study, in its methodology, adopted a tight-window approach. This approach was characterized by the identification of the first time instance that the signal broke the SNR envelope and the peak of the signal. In this study, on the other hand, we used a stochastic signal detection algorithm to denote the time steps in which the signal magnitude broke the noise floor and eventually dissipated below the noise floor. In contrast to the baseline, the detection algorithm employed in this study took a more flexible stance: instead of strictly searching for the first crossing time instance and the signal peak, our algorithm was designed to be more lenient. It permitted “silent” periods, which are intervals without significant signal activity, both before and after the vibration. This choice of algorithm led to a notable difference in the signal energy values when compared to the baseline study.

Figure 5 graphically demonstrates the difference between the signal detection algorithm employed by the baseline and the proposed technique with an impulse response curve of an underdamped second-order system. The black line represents the elements of the “noisy” vibro-measurement vector. The dashed red and green lines represent the results of the baseline and proposed detection algorithm, respectively. This distinction in approach not only highlights the variability in signal processing techniques but also underscores the potential impact of these choices on the final results and interpretations.
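The influence of the detection window on the signal energy can be illustrated with two simple threshold-based windows. This is only a sketch under stated assumptions: neither function reproduces the detector of the baseline study or the stochastic detector used here, and the function names, the threshold, the padding, and the toy signal are hypothetical.

```python
def first_crossing_to_peak(samples, threshold):
    """Tight window: from the first threshold crossing to the absolute peak."""
    start = next(i for i, v in enumerate(samples) if abs(v) > threshold)
    peak = max(range(len(samples)), key=lambda i: abs(samples[i]))
    return start, peak + 1

def crossing_to_decay(samples, threshold, pad=0):
    """Lenient window: first to last crossing, plus `pad` silent samples."""
    above = [i for i, v in enumerate(samples) if abs(v) > threshold]
    start = max(0, above[0] - pad)
    stop = min(len(samples), above[-1] + 1 + pad)
    return start, stop

def window_energy(samples, window):
    """Sum of squared samples inside the half-open window [start, stop)."""
    start, stop = window
    return sum(v * v for v in samples[start:stop])

# Toy footstep response surrounded by silence (illustrative values).
signal = [0.0] * 10 + [0.3, 1.0, -0.8, 0.6, -0.4, 0.2] + [0.0] * 10
threshold = 0.15

tight = first_crossing_to_peak(signal, threshold)
lenient = crossing_to_decay(signal, threshold, pad=5)
print(tight, window_energy(signal, tight))
print(lenient, window_energy(signal, lenient))
```

The lenient window also captures the decaying tail of the response, so it registers a larger signal energy than the tight window for the same footstep.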

3.2. Implementation

In the course of our data processing, we discretized the localization space . This discretization was achieved by segmenting it into a total of 270,000 grid cells, specifically arranged in a configuration. By evaluating the PDFs only at the center of each grid cell, we avoided the intricate surface integrals and achieved greater computational efficiency.
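As a rough illustration of evaluating a PDF only at grid-cell centers, the sketch below uses a hypothetical 16 m by 2 m region, a much coarser grid than the 270,000 cells used in this study, and an isotropic Gaussian as a stand-in for the actual location PDF. The function names and all numerical values are assumptions.

```python
import numpy as np

def cell_centers(x_min, x_max, y_min, y_max, nx, ny):
    """Return the (nx * ny, 2) array of cell centers and the area of one cell."""
    dx = (x_max - x_min) / nx
    dy = (y_max - y_min) / ny
    xs = x_min + (np.arange(nx) + 0.5) * dx
    ys = y_min + (np.arange(ny) + 0.5) * dy
    gx, gy = np.meshgrid(xs, ys, indexing="ij")
    return np.stack([gx.ravel(), gy.ravel()], axis=1), dx * dy

def gaussian_pdf(points, mean, sigma):
    """Isotropic 2-D Gaussian density (a stand-in, not the study's PDF)."""
    d2 = np.sum((points - mean) ** 2, axis=1)
    return np.exp(-0.5 * d2 / sigma**2) / (2.0 * np.pi * sigma**2)

centers, area = cell_centers(0.0, 16.0, 0.0, 2.0, nx=160, ny=20)
mean = np.array([8.0, 1.0])
pdf = gaussian_pdf(centers, mean, sigma=0.2)

# Midpoint rule: summing center values times the cell area replaces the
# surface integral over the region.
mass = float(np.sum(pdf) * area)
best = centers[np.argmax(pdf)]  # grid cell with the highest density
```

The sum over cell centers approximates the integral of the PDF over the region, so no explicit surface integration is required; the approximation error shrinks as the grid is refined.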

The calibration vectors are obtained in an offline processing step, where the signal energies and the corresponding known distances are used to fit the parametric decay models, denoted as . These measurements can be obtained by exciting the floor at known locations, for instance, by hitting it with a hammer. This process calibrates the system by correlating known physical impacts at specific points with the resulting signal characteristics. In this work, we used only the first 27 steps of Occupant-1’s data to obtain these calibration vectors for all sensors.
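The offline calibration step can be sketched as a least-squares fit of energy against distance. The parametric decay model of this study is not reproduced here; the sketch assumes a power law, E(d) = a d^(-b), purely for illustration, and the function names, parameter values, and synthetic data are hypothetical.

```python
import numpy as np

def fit_power_law(distances, energies):
    """Fit log E = log a - b log d by ordinary least squares; return (a, b)."""
    A = np.column_stack([np.ones_like(distances), -np.log(distances)])
    coef, *_ = np.linalg.lstsq(A, np.log(energies), rcond=None)
    log_a, b = coef
    return float(np.exp(log_a)), float(b)

def distance_from_energy(energy, a, b):
    """Invert the assumed decay model to obtain a distance estimate."""
    return (a / energy) ** (1.0 / b)

# Synthetic calibration set: 27 excitations at known distances (hypothetical).
rng = np.random.default_rng(1)
true_a, true_b = 5.0, 1.5
d = np.linspace(1.0, 15.0, 27)
E = true_a * d ** (-true_b) * np.exp(rng.normal(0.0, 0.05, d.size))

a_hat, b_hat = fit_power_law(d, E)
```

Fitting in log-log space turns the assumed power law into a linear regression, which keeps the calibration to a single `numpy.linalg.lstsq` call per sensor.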

In the online processing, when a footstep is detected, the signal energy of the vibration measurements is used to minimize the log-transformed loss function in Equation (

15) by adjusting the value of vector

in the direction opposite to its gradient. In each iteration of gradient descent, the information-theoretic BSE algorithm is employed to discard Byzantine sensors. The gradient descent steps are repeated until the algorithm converges to a solution and forms a consensus among the sensors.
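The iterative update can be illustrated with a minimal gradient descent sketch. It does not implement the log-transformed loss of Equation (15) or the BSE step; instead it assumes a simple squared range-residual loss, L(x) = Σ (‖x − s_i‖ − d_i)², with hypothetical sensor positions s_i and noise-free distance estimates d_i.

```python
import numpy as np

def loss_and_grad(x, sensors, ranges):
    """Squared range-residual loss and its gradient with respect to x."""
    diff = x - sensors                   # (n, 2) vectors from sensors to x
    r = np.linalg.norm(diff, axis=1)     # current distances (x must not sit on a sensor)
    resid = r - ranges
    loss = float(np.sum(resid**2))
    grad = 2.0 * np.sum((resid / r)[:, None] * diff, axis=0)
    return loss, grad

def gradient_descent(x0, sensors, ranges, step=0.1, tol=1e-10, max_iter=5000):
    """Step against the gradient until its norm falls below `tol`."""
    x = np.asarray(x0, dtype=float)
    for _ in range(max_iter):
        _, g = loss_and_grad(x, sensors, ranges)
        if np.linalg.norm(g) < tol:
            break
        x = x - step * g
    return x

# Hypothetical layout: four sensors on a square and one true footstep location.
sensors = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0], [10.0, 10.0]])
true_loc = np.array([3.0, 7.0])
ranges = np.linalg.norm(true_loc - sensors, axis=1)

x_hat = gradient_descent(sensors.mean(axis=0), sensors, ranges)
final_loss, _ = loss_and_grad(x_hat, sensors, ranges)
```

In a full implementation, a sensor-elimination step would run inside the loop so that the residuals of discarded sensors no longer contribute to the gradient.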

To evaluate the proposed technique, we employed a combinatorial study to analyze the effect of the number of sensors used on the localization metrics. Specifically, we employed all the possible combinations of 2 to 11 sensors drawn from the eleven-sensor configuration in the experimental data. This yields 2036 cases to evaluate for each step and occupant. In other words, each step is re-evaluated 2036 times, yielding 329832 (162 × 2036) data points for each occupant. Therefore, in our analysis, we are able to report several descriptive statistics of the localization error. This approach also enables us to account for various uncertainty sources, such as the effects of sensor placement and differences in propagation paths, while making the results less dependent on the performance of individual sensors. The source code of this study is available on request.
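The case count of 2036 is consistent with taking every subset of 2 to 11 sensors from an eleven-sensor pool. The short sketch below enumerates these subsets and checks the arithmetic; the sensor labels are hypothetical, and the per-occupant step count of 162 is inferred from the quoted totals rather than stated in the text.

```python
from itertools import combinations
from math import comb

n_sensors = 11
sensor_ids = range(1, n_sensors + 1)  # hypothetical sensor labels

# Every subset containing between 2 and 11 sensors.
subsets = [c for k in range(2, n_sensors + 1)
           for c in combinations(sensor_ids, k)]
n_cases = len(subsets)

# Closed form: sum of binomial coefficients, i.e. 2**11 - 1 - 11.
closed_form = sum(comb(n_sensors, k) for k in range(2, n_sensors + 1))

# Steps per occupant implied by the quoted 329832 data points.
n_steps = 329832 // n_cases
```

Each element of `subsets` is one sensor pool that would be handed to the localization routine for a given footstep.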

4. Results

This section presents the empirical outcomes derived from our proposed vibro-localization technique. These empirical results benchmark the efficacy of our approach in terms of accuracy and precision. By analyzing these findings in detail, we aim to provide a deeper look into the technique’s performance under various conditions and scenarios.

In

Figure 6, three representative sets (out of 659664 cases) of outcomes are depicted, each corresponding to a different number of sensors (two, six, and eleven) employed for the localization of identical step data for two occupants. The left column presents results for the first occupant, while the right column pertains to the second occupant. The sensor locations within this figure are marked by square markers (

■), and sensors deemed non-Byzantine by the algorithm are highlighted with circular markers (∘). The green plus (

+) and red cross (

×) markers, respectively, represent the ground truth

and the estimated location vector

. In the scenarios labeled (a) for the first occupant and (b) for the second, which used two sensors, the norms of the localization errors were 1.39 and 1.1784 meters, respectively. Both sensors formed the consensus set because no alternative sensors were available. As the sensor count increased to six, as shown in labels (c) for the first occupant and (d) for the second, the observed errors were 1.39 meters for the first occupant and 1.172 meters for the second. The first occupant’s result therefore did not improve with the additional sensors, and the second occupant’s improved only marginally, even though the initial sensors were adaptively substituted with new sensors in the consensus set. With a further increase to eleven sensors, as indicated in labels (e) and (f), the localization errors reduced to 0.3 centimeters for the first occupant and 54.4 centimeters for the second, both accompanied by an updated consensus set.

To evaluate the influence of the sensor count on the localization outcomes, especially in terms of accuracy and precision, the quantile function, represented as

, of the localization error was plotted against the number of sensors, as illustrated in

Figure 7 and

Figure 8. This function yields the error value

x for a given probability

p, satisfying the condition

. In essence, it serves as the inverse of the cumulative distribution function (CDF) for the random variable

x. For clarity,

corresponds to the median of the localization error across varying sensor counts.
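As a hedged illustration of the quantile function, the sketch below computes empirical quartiles with `numpy.quantile` and checks that the median splits the sample in half. The Rayleigh-distributed errors are synthetic placeholders, not the localization errors of this study, and the helper name is an assumption.

```python
import numpy as np

# Synthetic localization errors in meters (placeholder data).
rng = np.random.default_rng(2)
errors = rng.rayleigh(scale=1.5, size=10_000)

def empirical_quantile(sample, p):
    """Error value below which a fraction p of the sample falls."""
    return float(np.quantile(sample, p))

q1, median, q3 = (empirical_quantile(errors, p) for p in (0.25, 0.5, 0.75))

# Inverse relationship with the CDF: about half of the errors lie at or
# below the median.
frac_below_median = float(np.mean(errors <= median))
```

Repeating this computation for the errors obtained with each sensor count would give one point per quartile curve in a plot of quantile against sensor count.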

In

Figure 7, the quartiles of the sample localization errors—the first (25th percentile), second (50th percentile or median), and third (75th percentile)—are plotted against the number of sensors available in the localization system. In other words, the number of sensors listed in the figure represents the sensor count before the proposed BSE algorithm eliminates a subset from the sensor pool. The data for the first and second occupants are differentiated by red and black colors, respectively. For the first occupant, the solid (

–), dash-dotted (

– -), and dotted (

· ·) red lines represent the respective quartiles of the localization error. For the second occupant, the solid (–), dash-dotted (– -), and dotted (· ·) black lines serve the same purpose. The figure indicates a reduction in the localization error with an increasing number of sensors. This trend is consistent across all quartiles. Notably, as more sensors are introduced, the disparity between the first and third quartiles diminishes, highlighting enhanced accuracy in both the optimal and suboptimal conditions.

As is evident from

Table 3, a consistent trend is seen across both occupants and all quartiles: as the number of sensors increases, the localization error (measured in all metrics) decreases. For instance, the median error for the first occupant decreases from 2.4481 meters with two sensors to 1.7931 meters with eleven sensors in the proposed technique (see

Table 3). Similarly, for the second occupant, it reduces from 2.3852 to 1.6147 meters for the same sensor counts. The standard deviation, representing the error variability, also decreases steadily as more sensors are employed, which is evidence of growing consistency. This reduction in error and variability indicates enhanced accuracy and reliability in the localization process.

Figure 8 presents the precision of the entire localization system in terms of entropy, or the surprisal, encoded in the joint PDFs. Akin to the previous figure, the data for the first and second occupants are differentiated by red and black colors, and the same styling is used to represent the same quartiles. The figure distinctly shows a decrease in uncertainty as the number of sensors grows. This trend is consistent across all quartiles. Significantly, with the addition of more sensors, the gap between the first and third quartiles narrows, indicating enhanced precision in both the best- and worst-case scenarios.

Figure 9 synthesizes the insights derived from

Figure 7 and

Figure 8, demonstrating a discernible correlation between the accuracy and precision metrics obtained with the proposed localization technique. Enhancements in precision parallel improvements in accuracy for both occupants, regardless of the number of sensors used in the localization system. The relationship follows quasi-linear curves whose range differs with the number of sensors. The figure also shows that sub-meter localization accuracy is viable even with two sensors, provided that the sensors yield a certain level of measurement precision. With more sensors, this goal is more attainable because the corresponding curves span more of the sub-meter region. Furthermore, the magnitude of the accuracy improvement gained from improved precision differs with the number of sensors employed; in other words, more desirable results become prominent when more sensors are used.

The empirical PDFs and empirical CDFs are non-parametric tools employed to analyze the distribution of data points in a sample without assuming an inherent distribution. The empirical PDFs provide a histogram-like representation, highlighting the relative frequencies of various data values, while the empirical CDFs capture the cumulative probability for each value.

Figure 10 depicts the empirical PDF, represented with solid lines, and the empirical CDF, represented with dash-dotted lines, of the normed localization error derived from the location estimates for both occupants’ data. The plots on the left and right show these curves for the first and second occupants’ data, respectively. The solid blue and brown curves represent the empirical PDFs of the proposed and baseline techniques, while the dash-dotted curves represent the empirical CDFs. As can be seen in the figures, the proposed technique concentrates more probability mass at the lower end of the error axis than the baseline, suggesting that its errors are more likely to be small. The empirical CDFs convey the same observation: for both occupants, 80% of the errors of the proposed and baseline techniques are equal to or less than 2.29 and 3.10 meters, respectively.
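Reading an error bound off an empirical CDF, as done for the 80% level, can be sketched as follows. The two samples are synthetic Rayleigh draws standing in for the proposed and baseline errors; they do not reproduce the 2.29 and 3.10 meter values, and the function names are assumptions.

```python
import numpy as np

def ecdf(sample):
    """Sorted values and the cumulative fraction of the sample at or below each."""
    x = np.sort(sample)
    F = np.arange(1, x.size + 1) / x.size
    return x, F

def empirical_pdf(sample, bins=50):
    """Histogram-based density estimate: bin edges and density per bin."""
    density, edges = np.histogram(sample, bins=bins, density=True)
    return edges, density

def error_at_probability(sample, p):
    """Smallest sample value whose empirical CDF is at least p."""
    x, F = ecdf(sample)
    return float(x[np.searchsorted(F, p, side="left")])

# Placeholder error samples in meters (not the study's data).
rng = np.random.default_rng(4)
proposed = rng.rayleigh(scale=1.2, size=5000)
baseline = rng.rayleigh(scale=1.8, size=5000)

e80_proposed = error_at_probability(proposed, 0.8)
e80_baseline = error_at_probability(baseline, 0.8)
edges, density = empirical_pdf(proposed)
```

A smaller 80% error bound for one sample means its empirical CDF rises faster, which is the same observation as the PDF mass sitting closer to zero.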

Table 3 tabulates the overall landscape of the resulting error characteristics of the proposed technique and the baseline by providing a statistical analysis comparing the performance of the two localization methods. Various descriptive statistical metrics such as the mean, standard deviation, median, root mean square (RMS), minimum, and maximum values, all expressed in meters, are presented. The results span varying numbers of sensors, from 2 to 11, and are differentiated for two distinct occupants.

For the first occupant, the proposed method consistently outperforms the baseline across all metrics. The weighted average mean localization error for the proposed method is 1.58 meters, a notable improvement from the baseline’s 2.31 meters. Similarly, for the second occupant, the proposed method achieves a weighted average mean error of 1.48 meters, significantly lower than the baseline’s 2.28 meters. Also, one interesting finding from this result set is that the proposed localization technique can achieve sub-meter localization accuracy and precision when enough sensors are employed (cf. std. dev. of Occupant-1 and Occupant-2 with 10 and 11 sensors; cf. median localization error of Occupant-2 with 11 sensors). This table underlines the enhanced accuracy and precision of the proposed vibro-localization technique over the baseline for various sensor configurations and both occupants.

In

Figure 11 and

Figure 12, we analyze the relationship between average sensor distance and localization error for two scenarios: considering all sensors and after the BSE algorithm is applied. We used regression analysis to understand the error behavior, with the slope indicating the error increase as occupants move farther from sensors. The correlation between the localization error and the sensor distance assesses the technique’s effectiveness in mitigating systemic errors. An ideal localization technique should minimize these slopes and correlations.
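The slope and correlation used in this analysis can be computed as in the sketch below, which assumes an ordinary least-squares line and the Pearson correlation coefficient; the exact regression used in this study is not specified here. The distance and error arrays are synthetic placeholders, and the function name is hypothetical.

```python
import numpy as np

def slope_and_correlation(distance, error):
    """Least-squares slope and intercept of error versus distance, plus Pearson r."""
    slope, intercept = np.polyfit(distance, error, deg=1)
    r = np.corrcoef(distance, error)[0, 1]
    return float(slope), float(intercept), float(r)

# Placeholder data: error grows by 0.2 m per meter of average sensor distance.
rng = np.random.default_rng(3)
dist = rng.uniform(2.0, 10.0, 500)
err = 0.5 + 0.2 * dist + rng.normal(0.0, 0.3, dist.size)

slope, intercept, r = slope_and_correlation(dist, err)
```

Under this reading, a technique that mitigates distance-dependent errors would show both a slope and a correlation coefficient close to zero.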

Table 4 compares our results with [

31].

5. Conclusions and Future Work

In this study, we proposed a novel vibro-localization technique that can address two types of uncertainty sources: (i) uncertainties due to sensor imperfections, and (ii) uncertainties due to complexities in wave propagation. To minimize localization errors, the proposed technique, coupled with an information-theoretic BSE algorithm, quantifies the uncertainty of each sensor’s error contribution. In this way, the proposed technique minimizes the effect of the errors present in the vibro-measurements as well as the other sources of uncertainty mentioned above.

In order to benchmark the proposed method, we employed a set of previously conducted controlled experiments. The purpose of this validation study was to gauge the efficacy and performance of our proposed technique, especially when contrasted against an existing methodology in the literature. In the experimental setup, which consisted of two participants traversing a 16-meter path, we used the structural vibrations captured by the eleven closest accelerometers out of the much larger set of accelerometers available in the environment. The proposed technique provided significant improvements in almost all localization metrics, while the best-case and worst-case scenarios became less extreme. The proposed localization technique coupled with the proposed BSE algorithm yielded a 31.47% decrease in the mean localization error.

In this study, we established a consistent empirical relationship between two key metrics of localization systems: accuracy and precision. Although a localization system’s accuracy may not be known post-deployment, we can always determine its precision from the estimates it provides. Therefore, the empirical relationship between these two metrics may be employed to estimate the system’s accuracy without recalibrating it. Although the link between accuracy and precision might be inferential, it has important consequences for the use of these systems in practice.

We have used this correlation to devise a method for estimating the system’s accuracy during operation without the need for additional calibration. Notably, this correlation holds true across different users, which suggests that our findings are robust and widely applicable. Additionally, our results offer a standardized approach to designing experiments. With the established correlation, the number of sensors required for an experiment can be decided based on the desired accuracy and the sensor precision.

Delving deeper into the results, it became evident that the flexibility of our approach, which allows for silent periods in the signal, could reduce the strong emphasis on the signal processing and signal detection steps in real-world scenarios. In other words, a relaxed window around the vibro-measurement vector, which marks when the vibro-measurements break the noise floor and when they die down, should be enough for the proposed algorithm to accurately localize an occupant. This is a practical advantage, since event detection is a major difficulty when deploying such localization systems.

The advancements made in vibro-localization techniques, as presented in this study, open up several promising avenues for further exploration. One potential direction is the integration of global optimization techniques to enhance the accuracy and robustness of localization. By leveraging such techniques, we could refine the estimation process, ensuring that the solution converges to a global minimum, thereby minimizing localization errors. Additionally, a joint solution approach that simultaneously addresses the location estimation problem and the BSE problem could be explored. Such a holistic approach would ensure that the system not only accurately determines the location but also effectively handles unreliable sensor data in a unified framework. This could further streamline the process and potentially lead to real-time localization capabilities with higher reliability.