Proposing UGV and UAV Systems for 3D Mapping of Orchard Environments

Abstract

1. Introduction

1.1. General Context of RGB-Depth Cameras

1.2. Use of RGB-D Cameras and Related Research in Agriculture

1.3. 3D Mapping Procedures

1.4. 3D Mapping Using Aerial-Based Systems

1.5. Aim of the Present Study

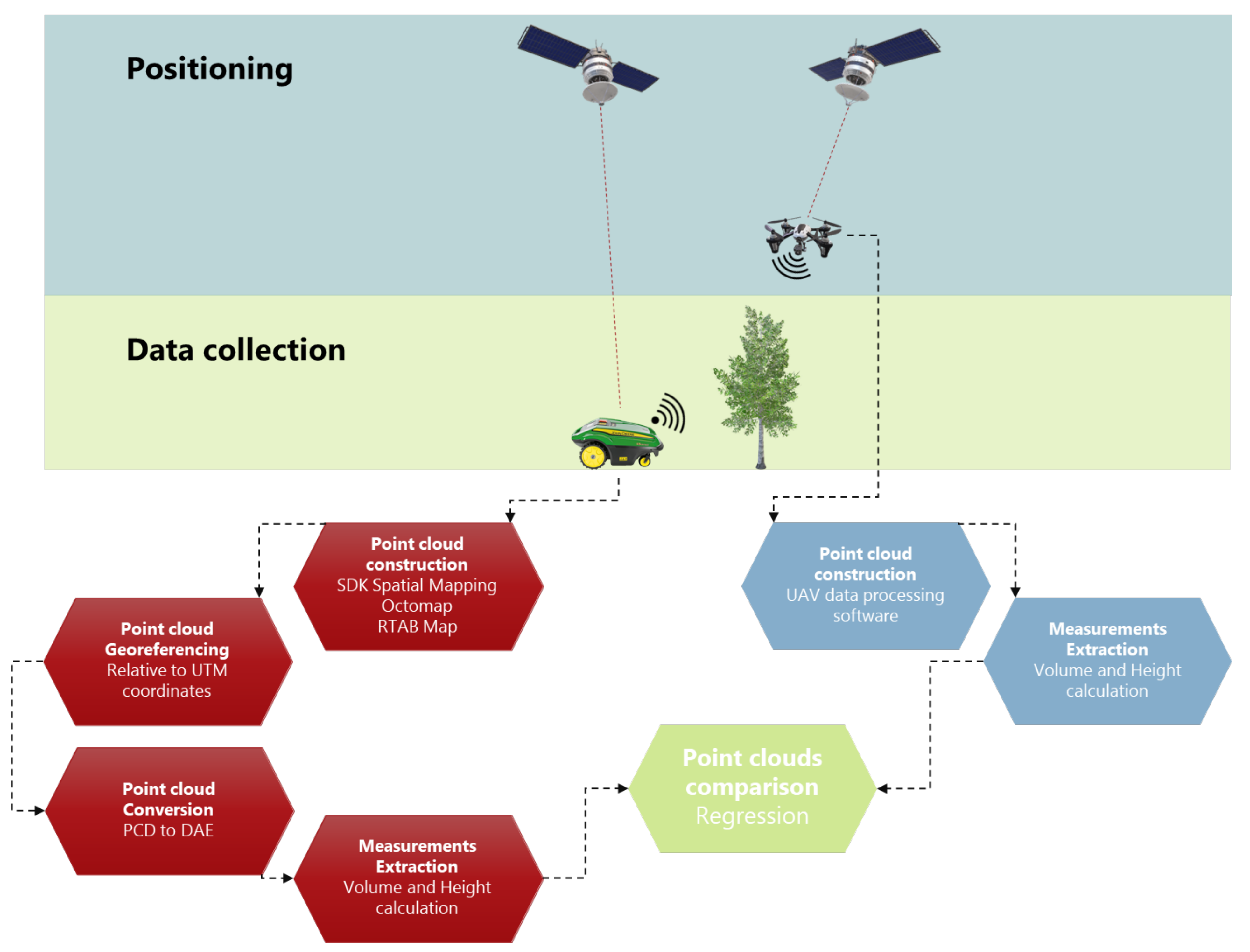

2. Materials and Methods

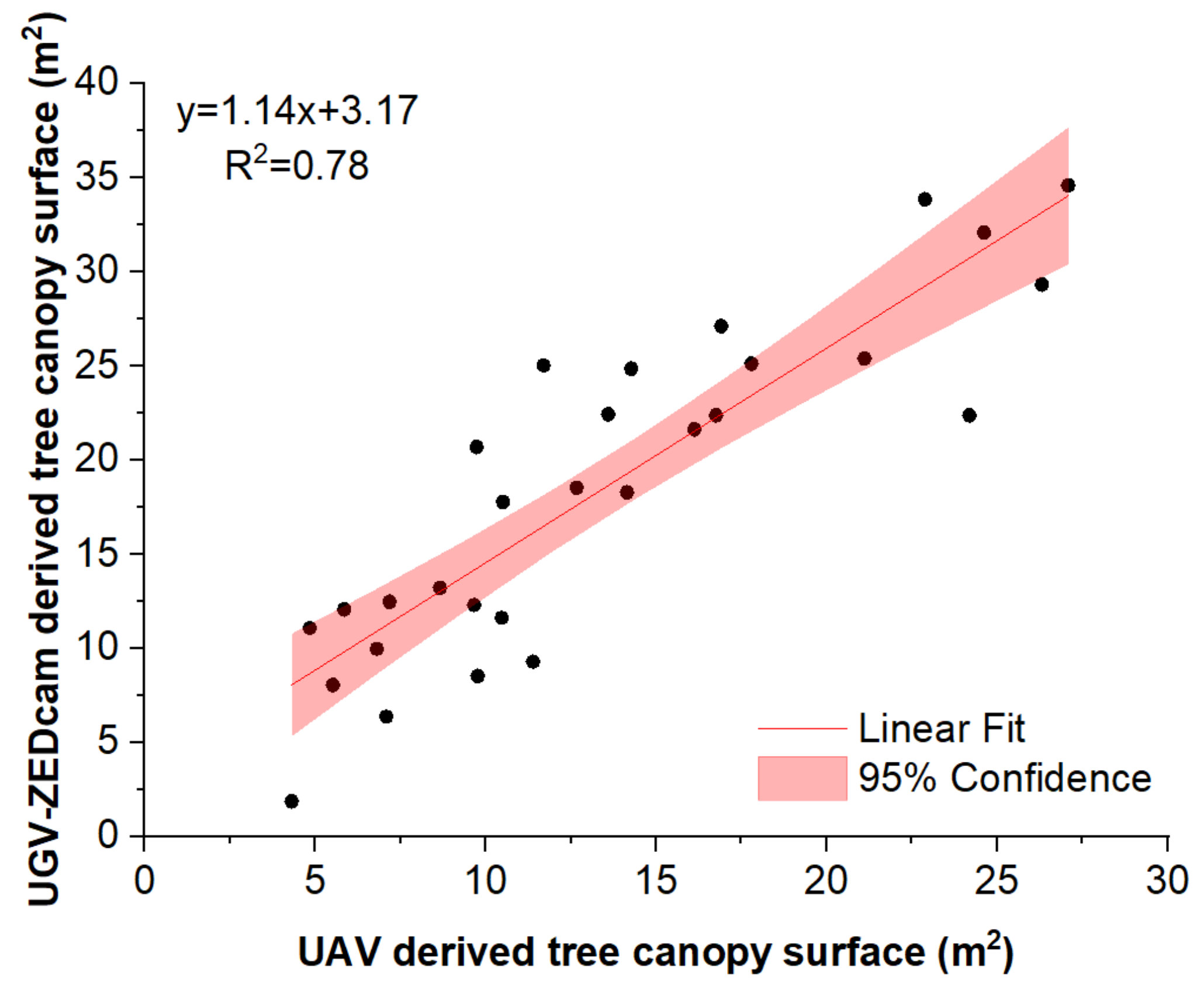

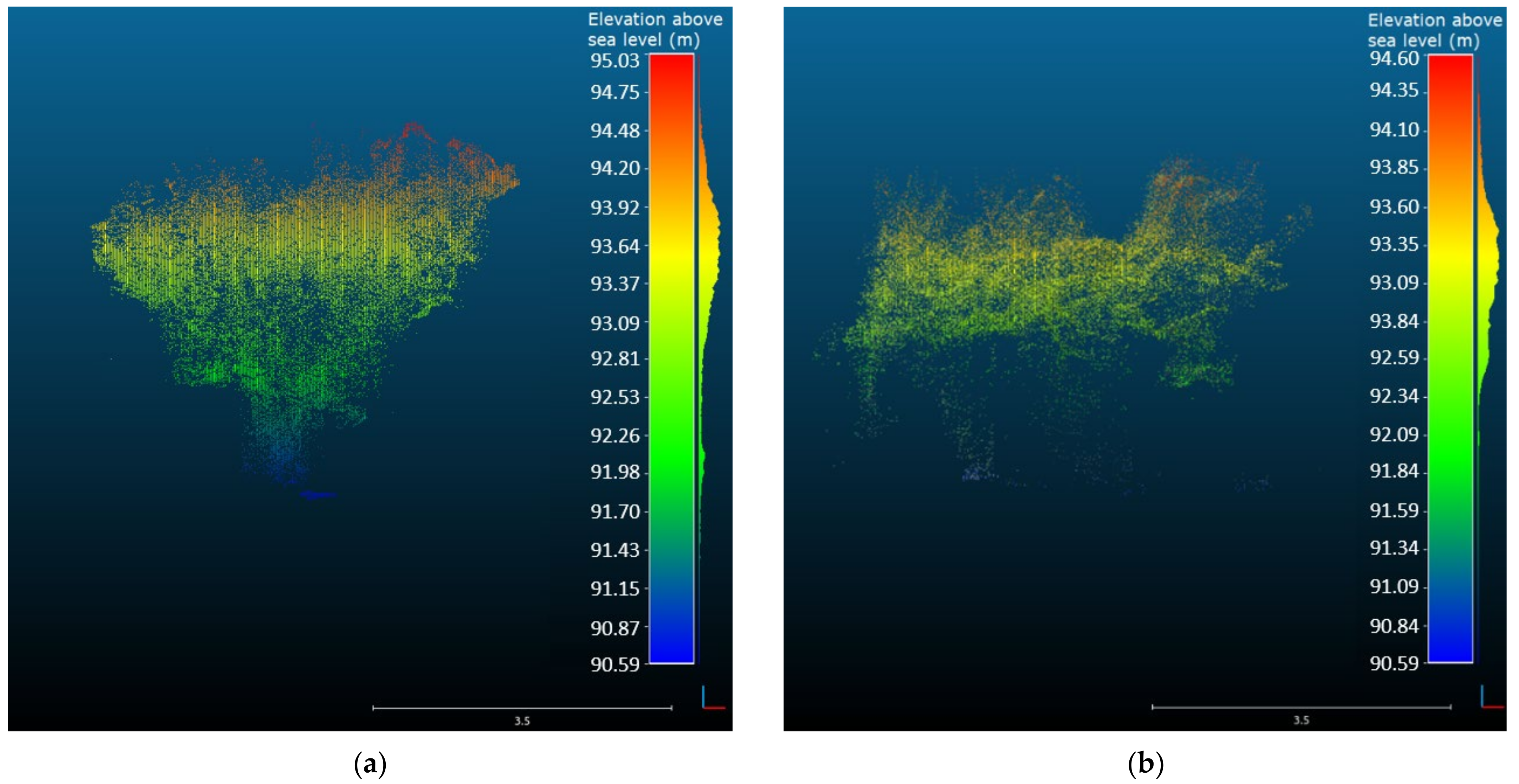

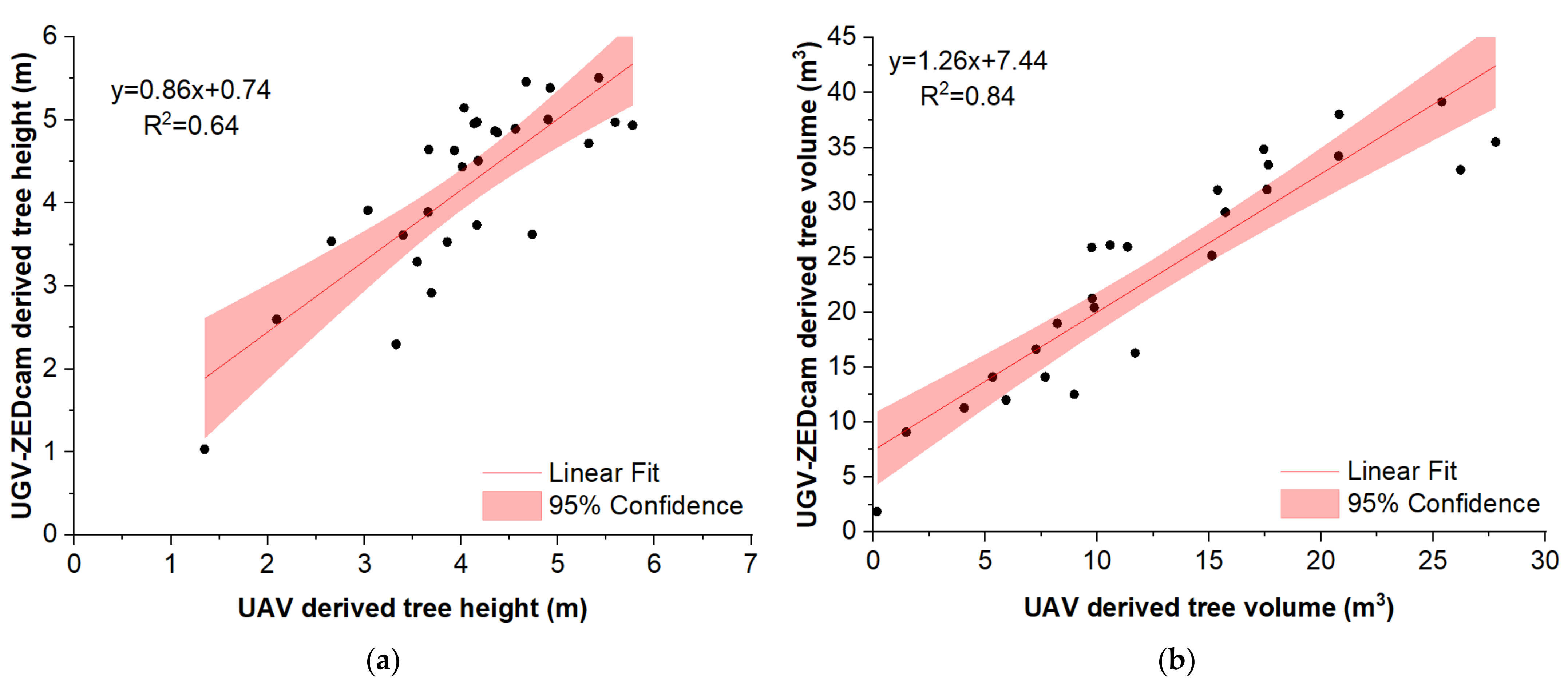

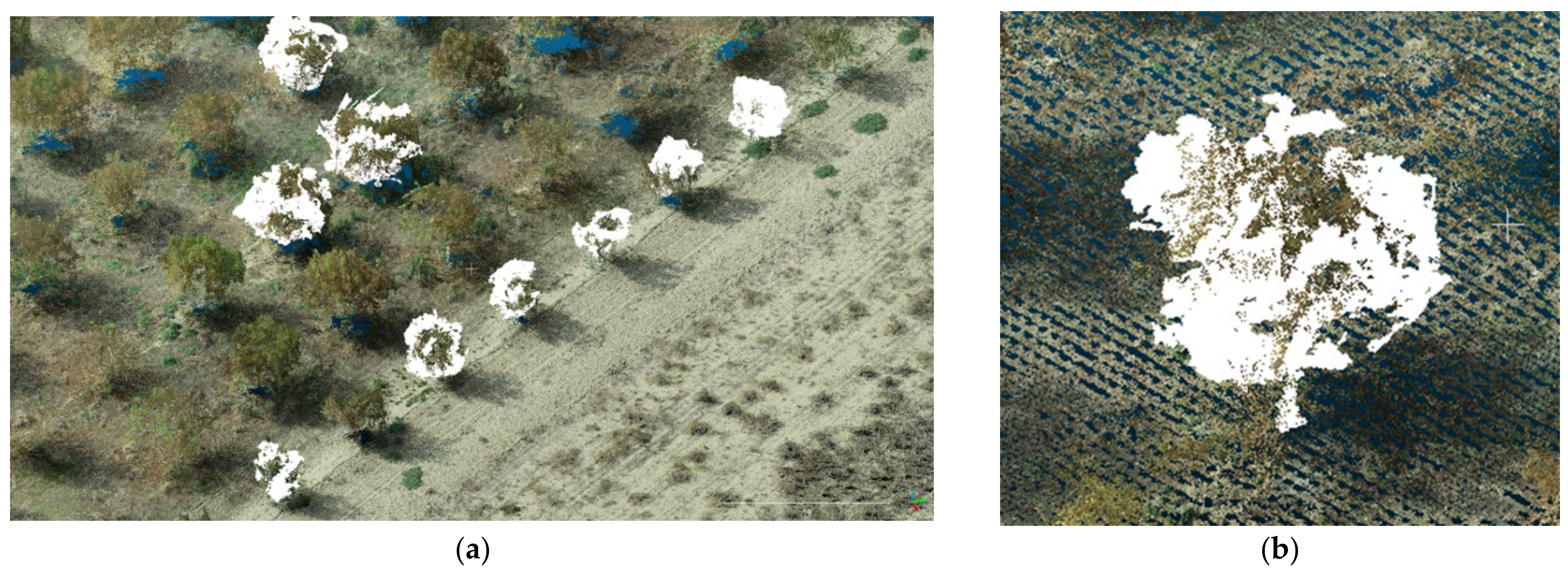

3. Results and Discussion

4. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Specification | Value | Frame rate (frames per second) |
| --- | --- | --- |
| Sensor | RGB | |
| Lens | f/1.8 aperture | |
| Depth range | 0.2–20 m | |
| Field of view (horizontal, vertical, diagonal) | 110° (H), 70° (V), 120° (D) | |
| Single image and depth resolution, HD2K | 2208 × 1242 pixels | 15 |
| Single image and depth resolution, HD1080 | 1920 × 1080 pixels | 30/15 |
| Single image and depth resolution, HD720 | 1280 × 720 pixels | 60/30/15 |
| Single image and depth resolution, VGA | 672 × 376 pixels | 100/60/30/15 |
| Complementary sensors | Accelerometer, Gyroscope, Barometer, Magnetometer, Temperature sensor | |
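As a rough illustration of what the listed field of view and resolutions imply for mapping, the following sketch estimates the extent of a flat surface seen by the camera at a given distance, and the average spacing between neighbouring pixels on that surface. It is not taken from the study: it assumes an ideal pinhole model with no lens distortion and a surface perpendicular to the optical axis, and the function names `footprint` and `sample_spacing` are hypothetical helpers introduced only for this example.

```python
import math

# Values copied from the camera specification table.
FOV_H_DEG = 110.0                      # horizontal field of view
FOV_V_DEG = 70.0                       # vertical field of view
DEPTH_MIN_M, DEPTH_MAX_M = 0.2, 20.0   # stated depth range
RESOLUTIONS = {
    "HD2K": (2208, 1242),
    "HD1080": (1920, 1080),
    "HD720": (1280, 720),
    "VGA": (672, 376),
}


def footprint(distance_m: float, fov_deg: float) -> float:
    """Extent (m) of a flat surface facing the camera at distance_m.

    Assumption: ideal pinhole camera, no lens distortion.
    """
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


def sample_spacing(distance_m: float, fov_deg: float, pixels: int) -> float:
    """Average spacing (m) between neighbouring pixels on that surface."""
    return footprint(distance_m, fov_deg) / pixels


if __name__ == "__main__":
    distance = 5.0  # hypothetical camera-to-canopy distance in metres
    if not DEPTH_MIN_M <= distance <= DEPTH_MAX_M:
        raise ValueError("distance lies outside the stated depth range")
    width = footprint(distance, FOV_H_DEG)
    height = footprint(distance, FOV_V_DEG)
    print(f"Footprint at {distance} m: {width:.2f} m x {height:.2f} m")
    for mode, (cols, _rows) in RESOLUTIONS.items():
        spacing_mm = 1000.0 * sample_spacing(distance, FOV_H_DEG, cols)
        print(f"{mode}: about {spacing_mm:.1f} mm between horizontal samples")
```

The numbers this produces are upper-bound style estimates only; actual depth accuracy also depends on stereo baseline, texture, and lighting, none of which are modelled here.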

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Tagarakis, A.C.; Filippou, E.; Kalaitzidis, D.; Benos, L.; Busato, P.; Bochtis, D. Proposing UGV and UAV Systems for 3D Mapping of Orchard Environments. Sensors 2022, 22, 1571. https://doi.org/10.3390/s22041571
