Automated License Plate Recognition for Resource-Constrained Environments

Abstract

1. Introduction

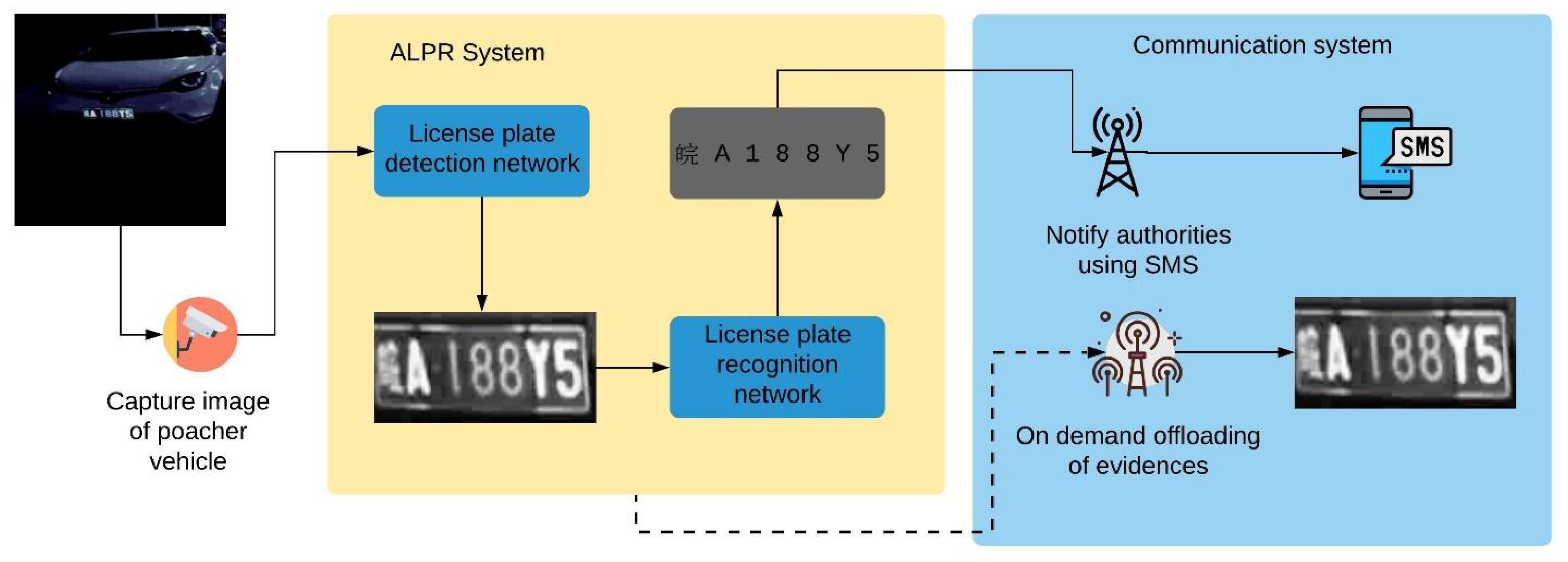

- The system executes autonomously in real time on an edge platform with constrained memory and computational capabilities.

- The system is low cost and energy efficient enough to be deployed in the wild or in remote areas that lack a reliable Internet connection or a power grid.

- The system operates at nighttime without additional lighting that is visible to the naked eye.

2. Background and Related Studies

2.1. Overview of LP Recognition Approaches

2.2. LP Recognition in Constrained Environment

2.3. ALPR Using Edge Devices

2.4. ALPR with Synthetic and Nighttime Images

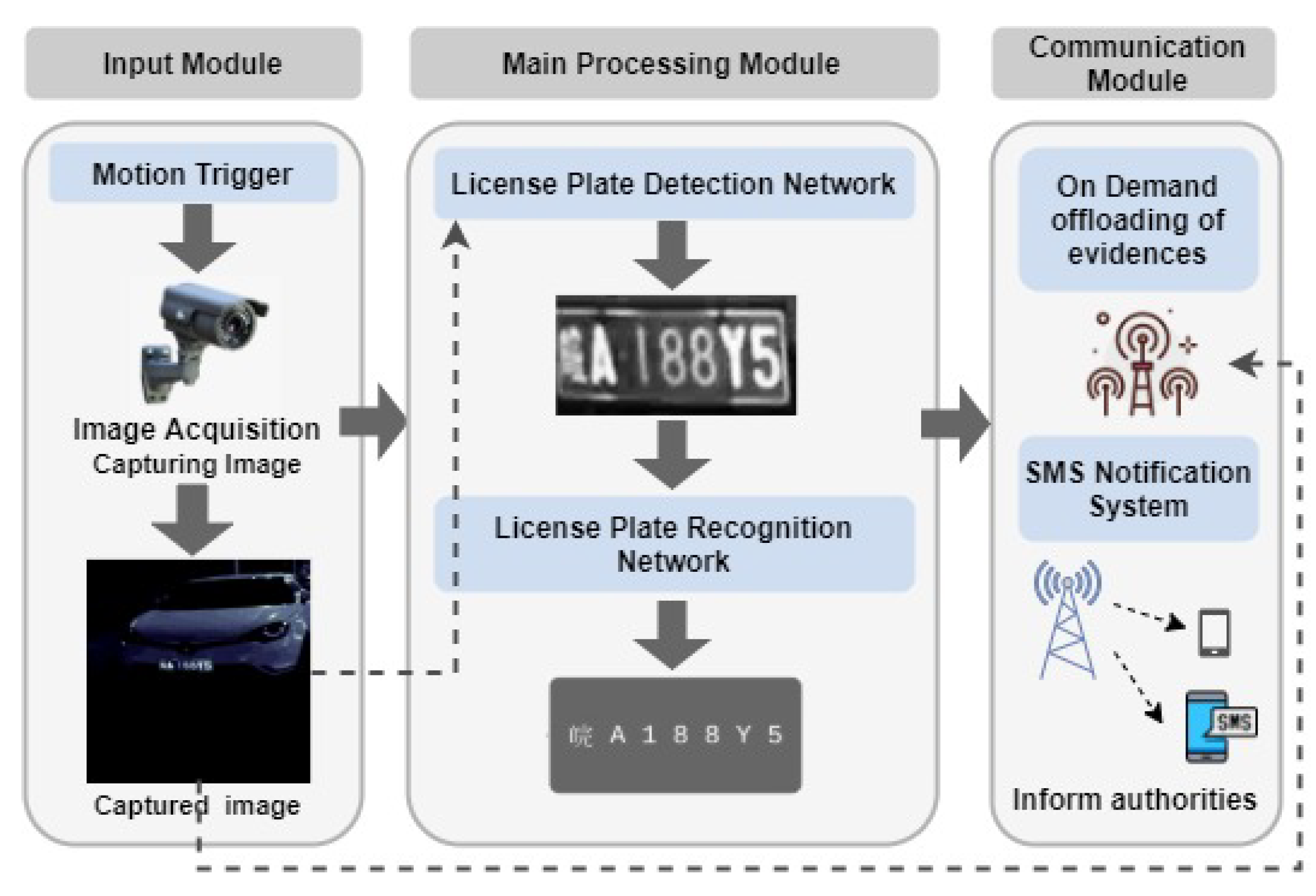

3. System Design and Methodology

3.1. Design Aspects of the Proposed ALPR System

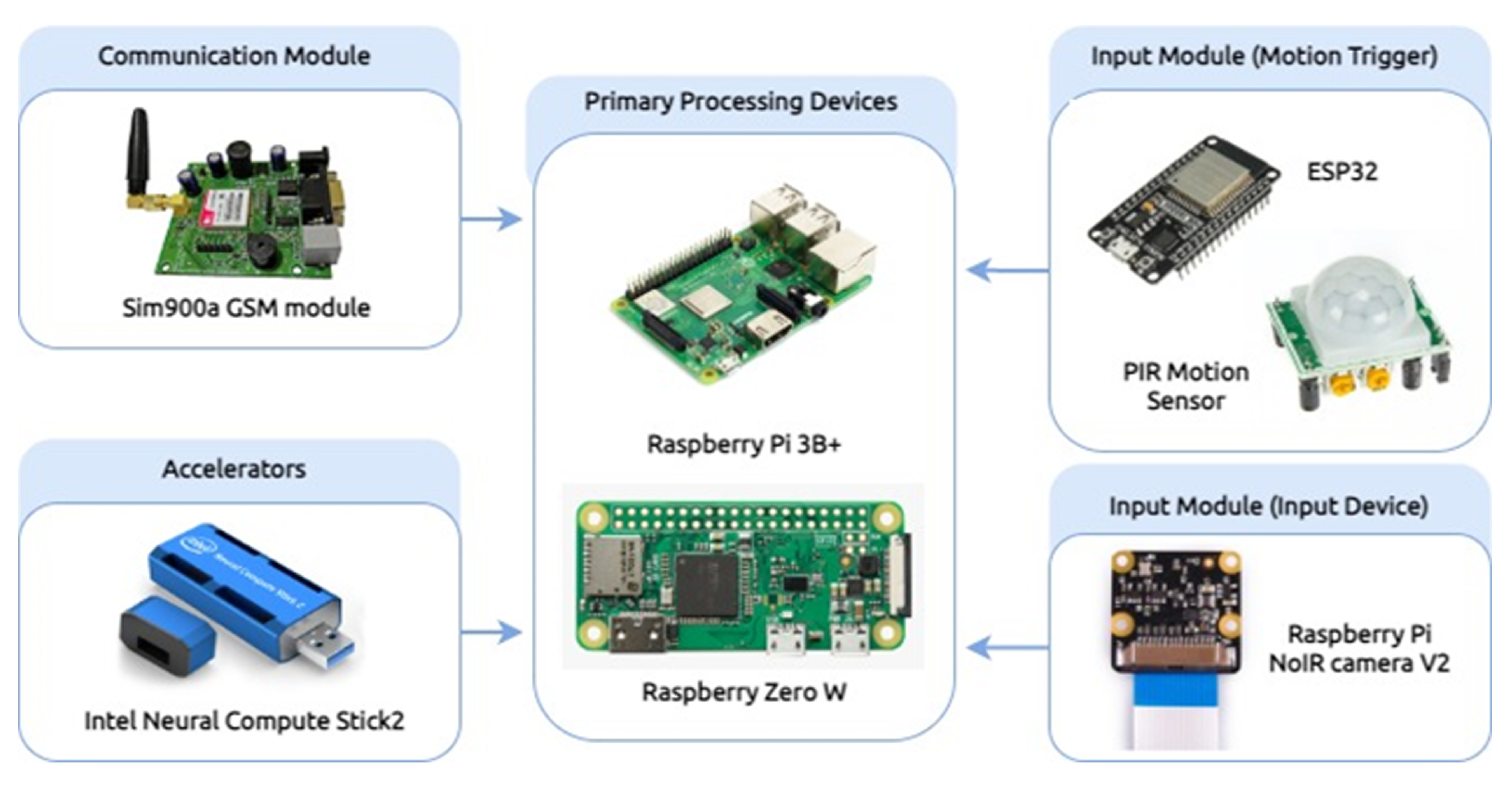

3.1.1. Cost-Effective Mobile-Sensing Data Communication Specifications

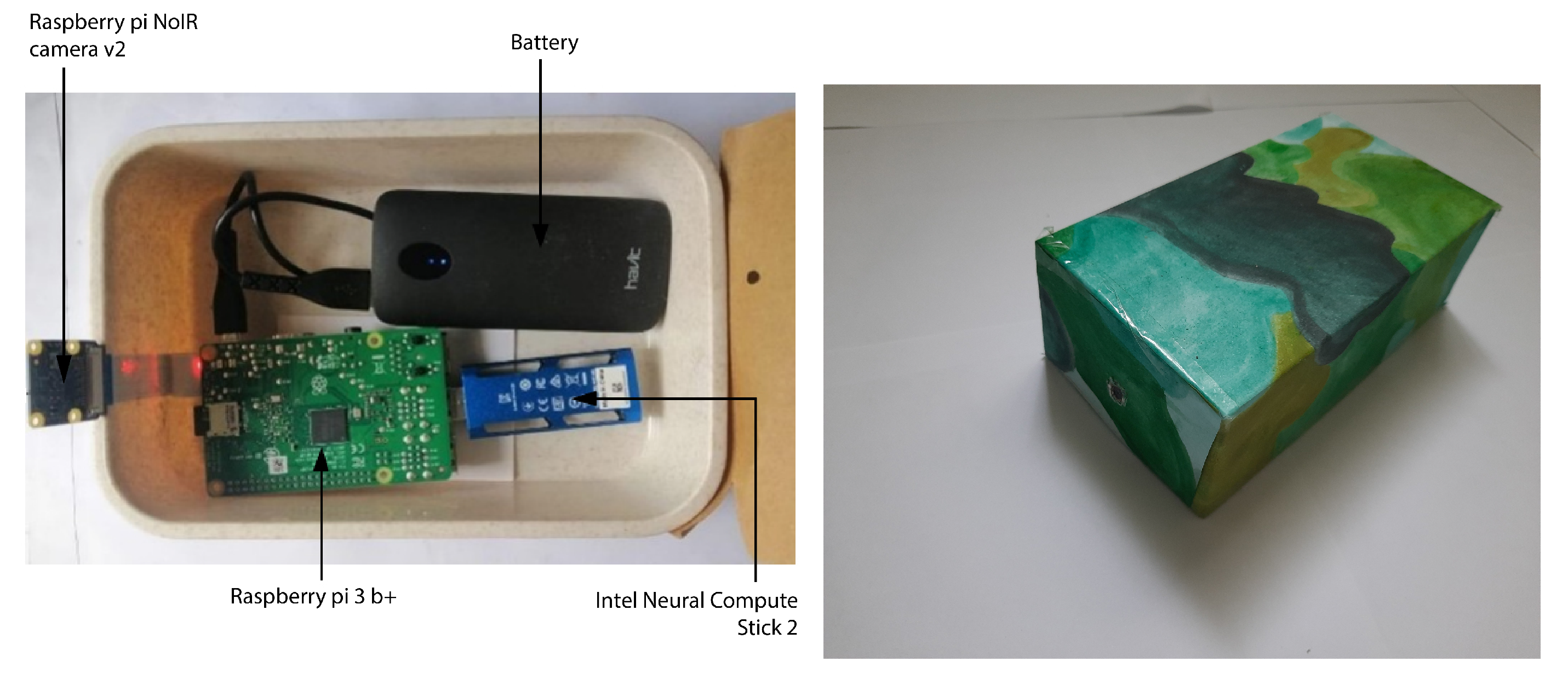

Raspberry Pi 3 Model B+

Raspberry Pi Zero

Intel Neural Compute Stick 2

Raspberry Pi Camera Module

GSM Module SIM900A

3.1.2. Input Module

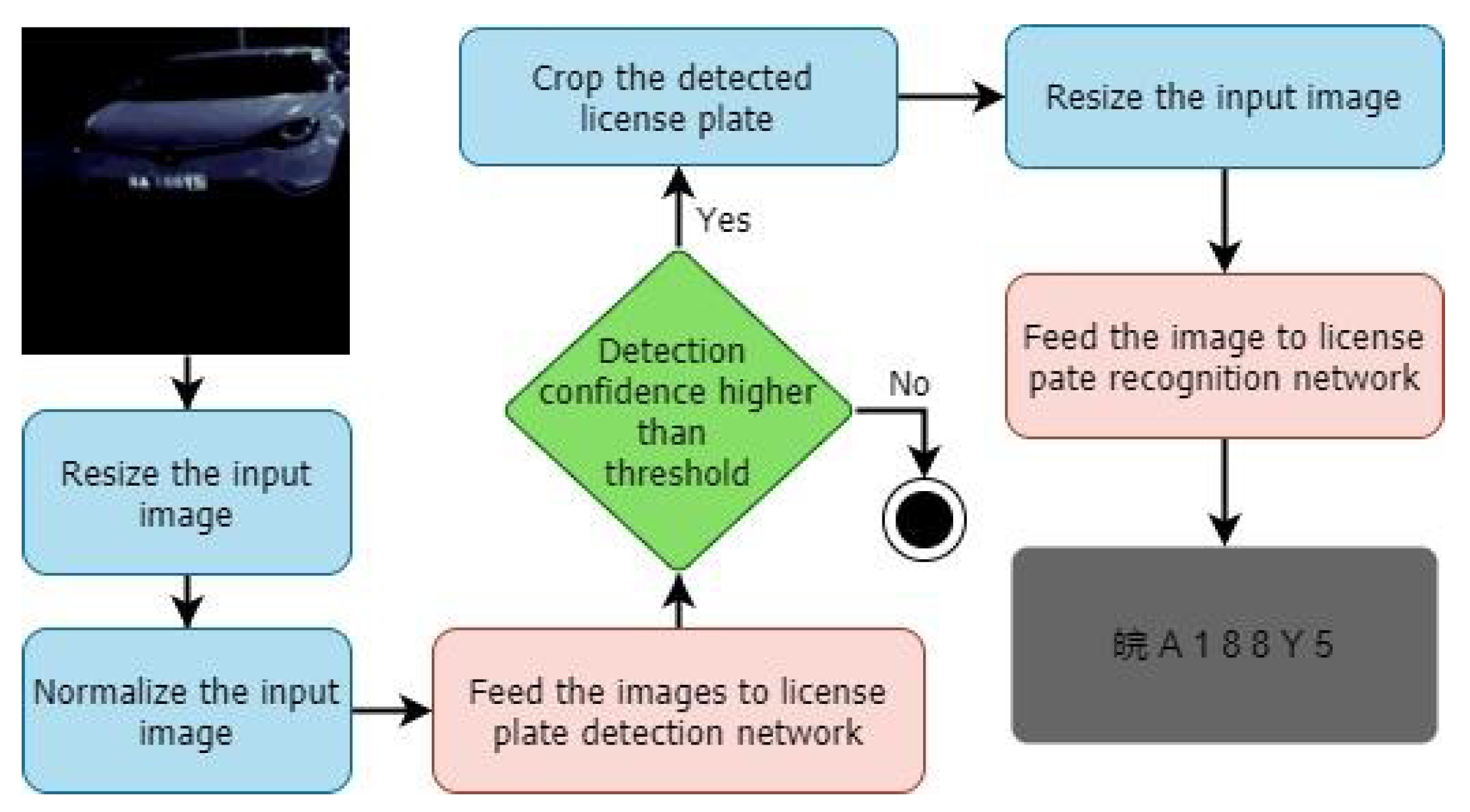

3.1.3. Main Processing Module

3.1.4. Communication Module

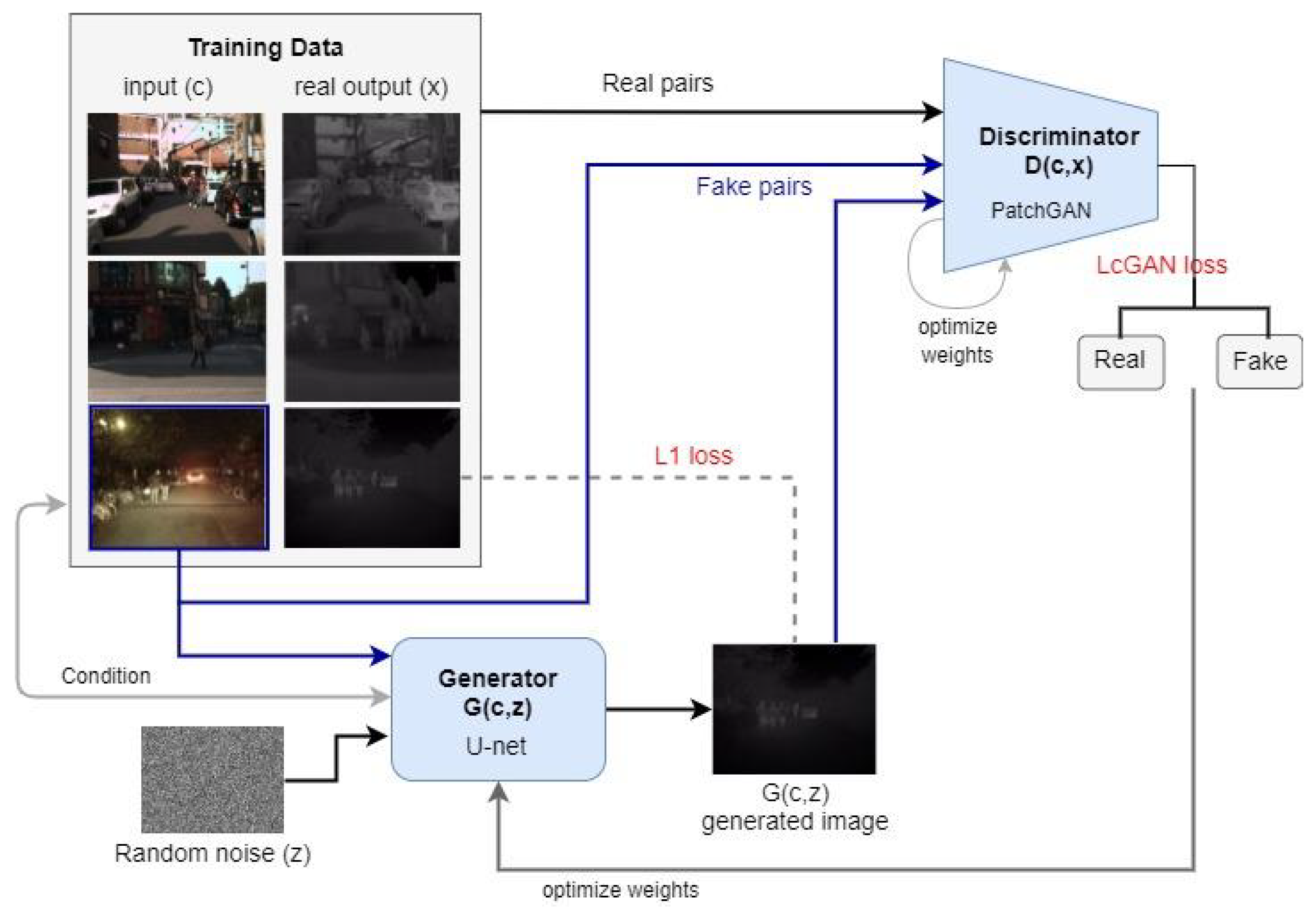

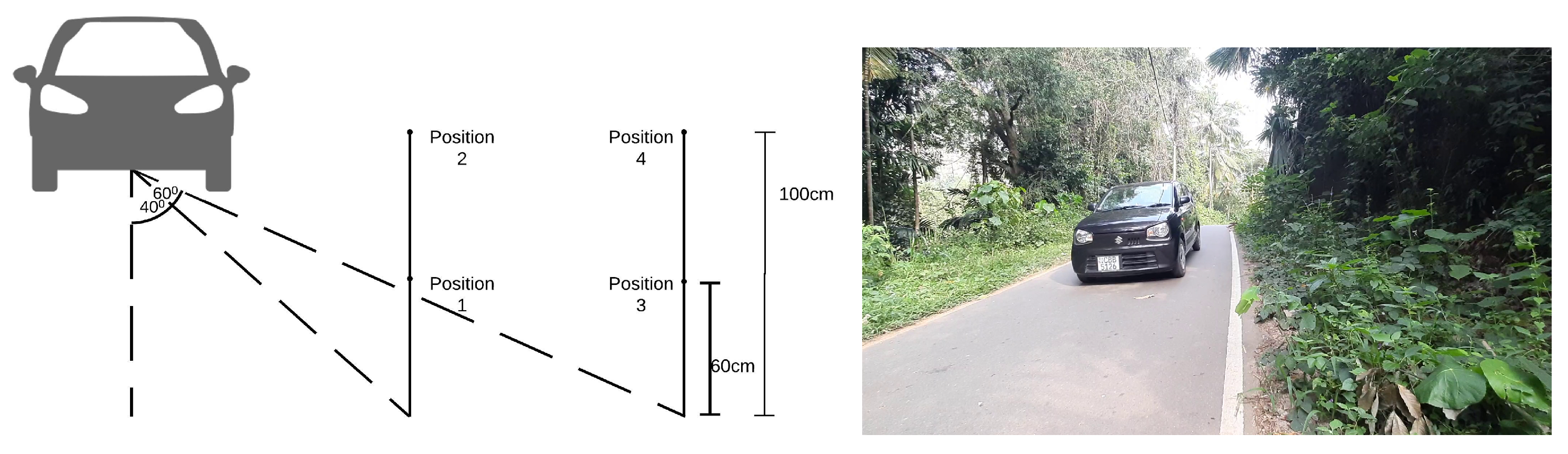

3.2. Environment Simulation Techniques

3.3. License Plate Detection and Recognition Algorithms

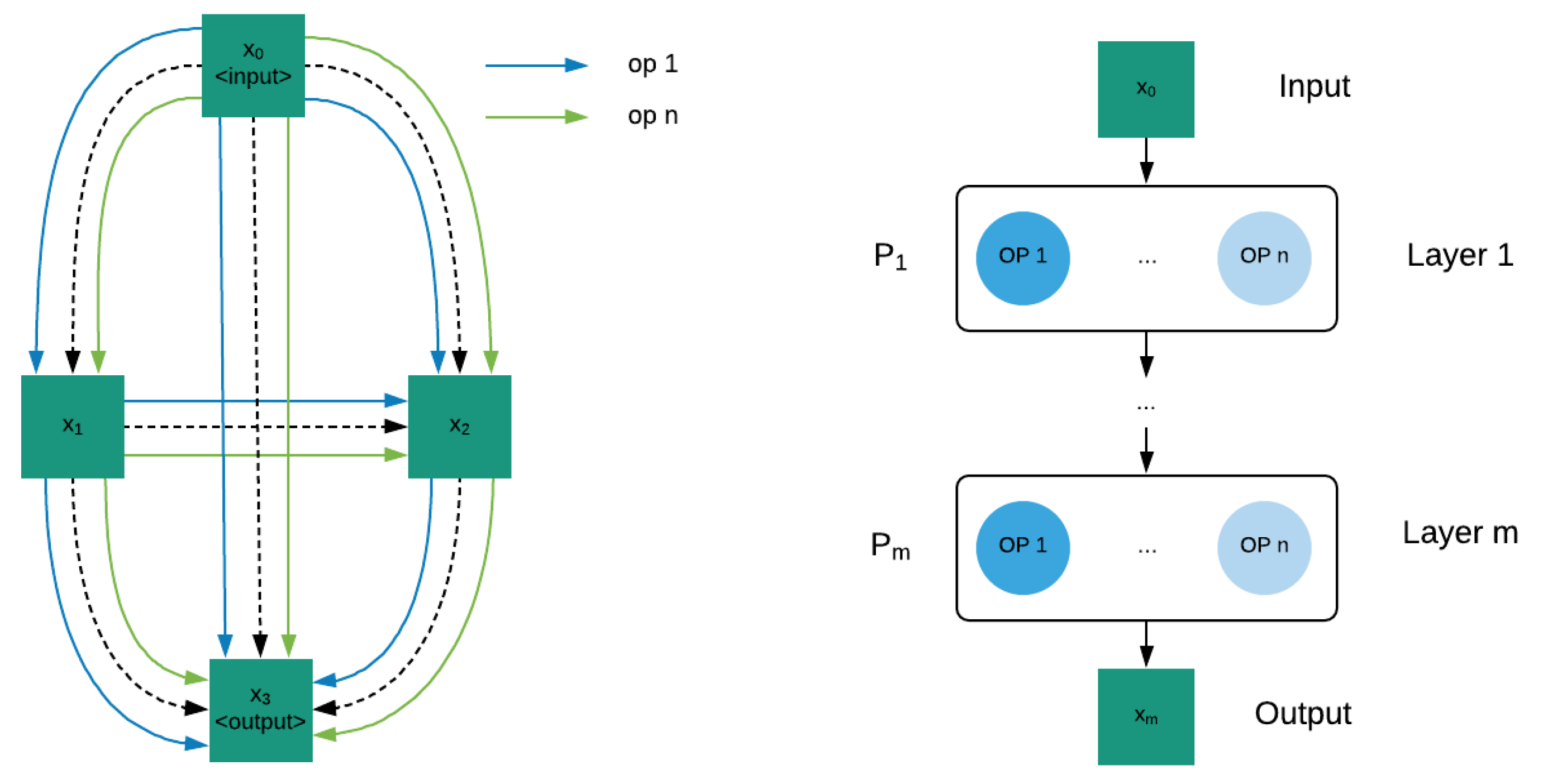

3.3.1. Search Space

3.3.2. Search Strategy

Algorithm 1: Bi-level optimization.

- : operation weights

- : architecture weights

- : learning rate for operation weight update

- : learning rate for architecture weight update

- : loss

- : number of iterations for inner optimization

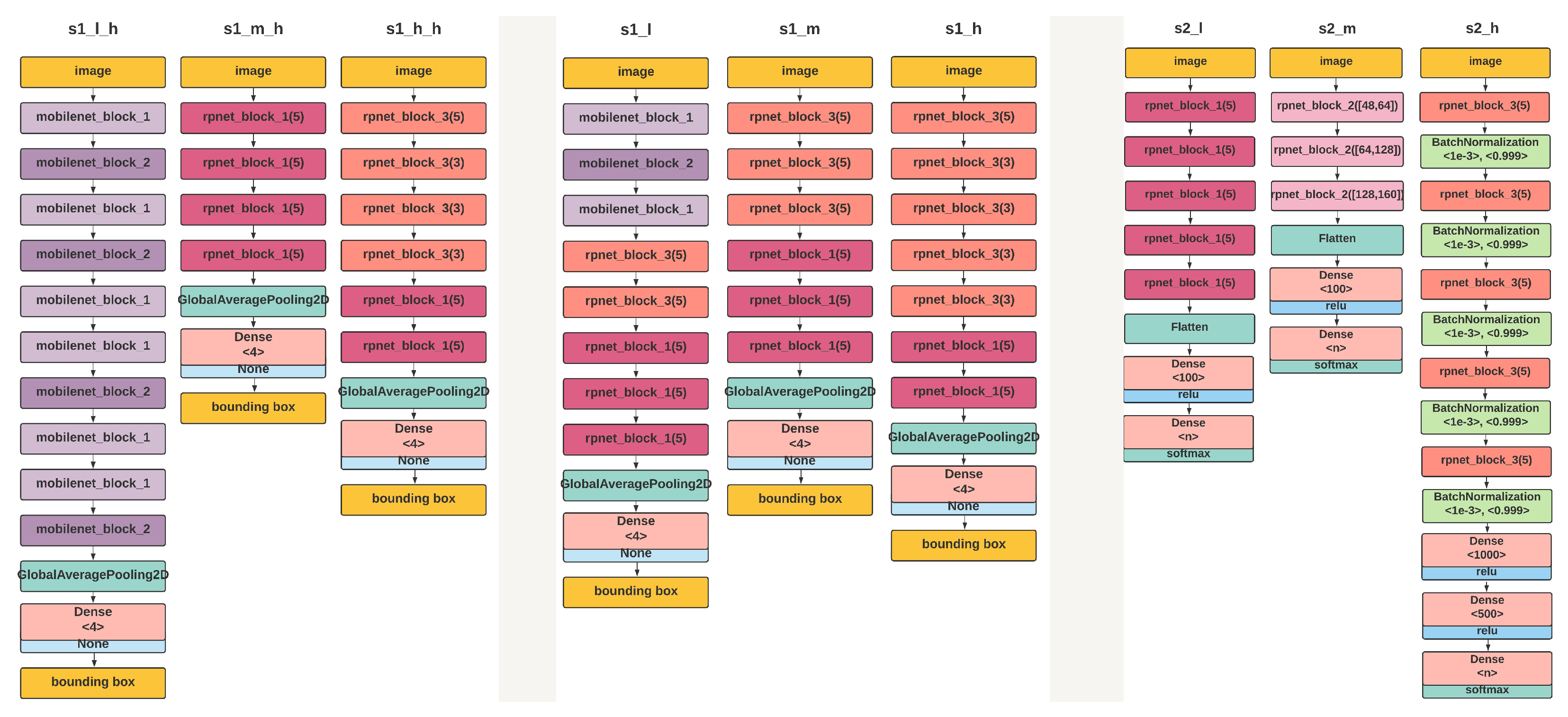
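The body of Algorithm 1 is only available as a figure here, and the symbols in its legend did not survive extraction. As a rough illustration of alternating bi-level optimization in general (not the authors' exact procedure), the sketch below uses a toy pair of quadratic losses in place of the network losses. The names `w` (operation weights), `alpha` (architecture weights), `lr_w`, `lr_alpha`, `inner_steps`, and `outer_steps` are assumptions chosen to mirror the legend, and the outer update uses a first-order approximation that treats `w` as fixed.

```python
# Toy bi-level problem (hypothetical, for illustration only):
#   inner:  minimise L_train(w, alpha) = (alpha * w - 1)^2            over w
#   outer:  minimise L_val(w, alpha)   = (alpha * w - 1)^2 + (alpha - 2)^2  over alpha


def train_loss_grad_w(w: float, alpha: float) -> float:
    """Gradient of the training loss with respect to the operation weight w."""
    return 2.0 * (alpha * w - 1.0) * alpha


def val_loss_grad_alpha(w: float, alpha: float) -> float:
    """Gradient of the validation loss with respect to alpha, holding w fixed."""
    return 2.0 * (alpha * w - 1.0) * w + 2.0 * (alpha - 2.0)


def bilevel_optimize(
    w: float = 0.0,
    alpha: float = 1.0,
    lr_w: float = 0.1,
    lr_alpha: float = 0.1,
    inner_steps: int = 5,
    outer_steps: int = 200,
):
    """Alternate inner updates of w with one outer update of alpha."""
    for _ in range(outer_steps):
        # Inner optimization: a few gradient steps on the training loss.
        for _ in range(inner_steps):
            w -= lr_w * train_loss_grad_w(w, alpha)
        # Outer optimization: one first-order step on the validation loss.
        alpha -= lr_alpha * val_loss_grad_alpha(w, alpha)
    return w, alpha


w_opt, alpha_opt = bilevel_optimize()
print(f"w = {w_opt:.4f}, alpha = {alpha_opt:.4f}")
```

On this toy problem the iterates settle where `alpha * w` is close to 1 and `alpha` is close to 2. In an actual architecture search the two gradients would come from backpropagation through the network on separate training and validation batches.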

3.3.3. Lite LP-Net Architectures

3.3.4. Performance Estimation Strategy

4. System Evaluation

4.1. Data Set

4.2. Experiment Setup

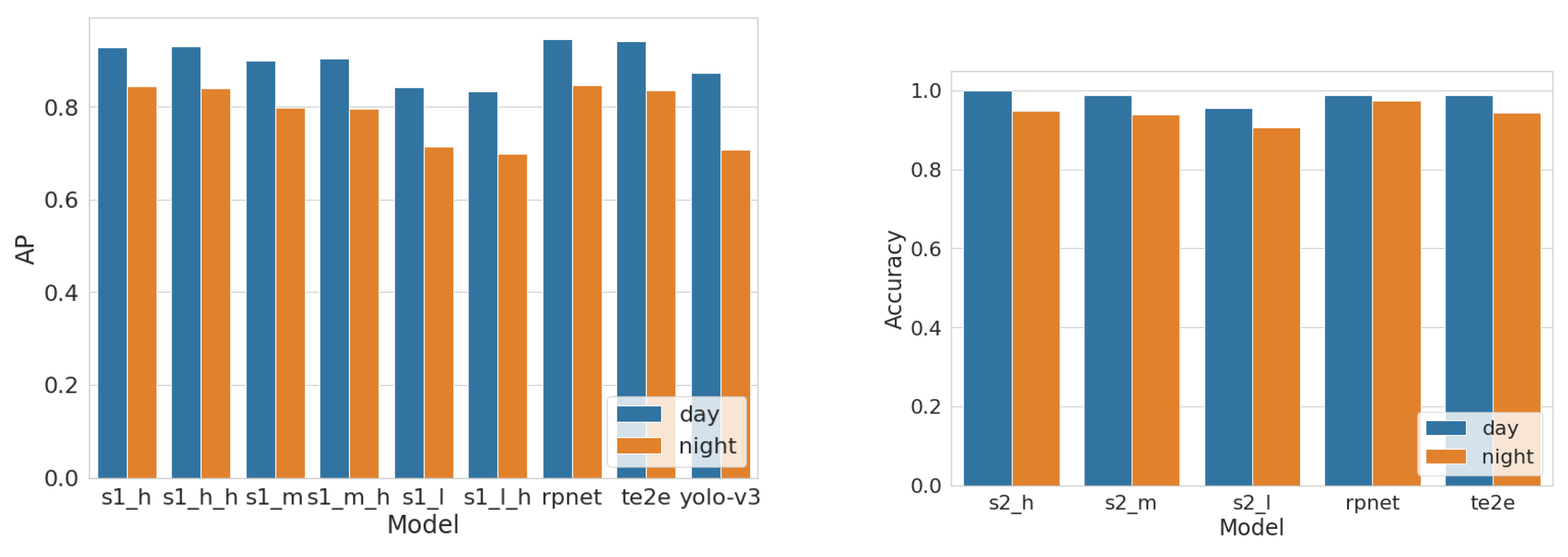

4.3. Model Performance

4.4. Hardware Performance

- The probabilistic estimation of the number of vehicles passing through an operation unit is not readily available for a given case study. Thus, we considered the maximum possible processing load on the unit for a general case.

- The worst-case power consumption gives an upper bound for the unit’s power consumption. Thus, a power supply sized for the maximum power requirement also covers the unit’s consumption under any other condition.
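The worst-case argument above can be made concrete with a back-of-the-envelope estimate: dividing the usable battery energy by the worst-case power draw gives a lower bound on runtime. The sketch below is an idealized calculation, not the measurement procedure used in the study. The function name, the 50 Wh battery capacity, and the `usable_fraction` derating factor are hypothetical, while the per-tier power figures are taken from the hardware power table.

```python
def battery_life_hours(
    capacity_wh: float,
    worst_case_power_w: float,
    usable_fraction: float = 0.8,
) -> float:
    """Lower-bound runtime in hours for a battery under a constant worst-case load.

    capacity_wh        -- nominal battery energy in watt-hours (assumed value)
    worst_case_power_w -- maximum power draw of the unit in watts
    usable_fraction    -- share of nominal capacity that is usable (assumed derating)
    """
    if capacity_wh <= 0 or worst_case_power_w <= 0:
        raise ValueError("capacity and power must be positive")
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    return capacity_wh * usable_fraction / worst_case_power_w


# Power consumption per tier in watts, as listed in the power consumption table.
WORST_CASE_POWER_W = {"low": 0.8, "mid": 5.15, "high": 6.2}

# Hypothetical 50 Wh battery pack; substitute the capacity of the real supply.
for tier, power_w in WORST_CASE_POWER_W.items():
    hours = battery_life_hours(50.0, power_w)
    print(f"{tier}-tier: at least {hours:.1f} h")
```

Because the real load is at or below the worst case, the actual runtime should be no shorter than this bound, although it will not necessarily match the measured battery lives reported for each tier.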

5. Discussion and Lessons Learned

5.1. Study Contributions

- Designed and developed a lightweight and low-cost night-vision vehicle number plate detection and recognition model with competitive accuracies.

- Developed a license plate reading system that operates without an Internet connection and runs on batteries for an extended period, supporting mobile communication with minimal resources.

- Supported sending an SMS containing the identified license plate number to a given phone number (e.g., to the wildlife department in the considered case study).

- Designed the unit to be physically small so that it can be deployed discreetly in the field. In the considered case study, poachers are therefore less likely to notice the camera traps and equipment.

- Analysed the trade-offs and explored the impact of the constraints such as accuracy and power consumption.

- Maximized resource use and minimized the end-to-end delay.
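The SMS contribution listed above can be illustrated with the standard GSM text-mode AT commands that modules such as the SIM900A accept. The following is a minimal sketch, not the authors' implementation: the function name, phone number, and plate string are hypothetical, and the serial-port handling (opening the port, waiting for `OK` and for the `>` prompt after `AT+CMGS`) is deliberately left out so that the example stays self-contained.

```python
from typing import List

CTRL_Z = b"\x1a"  # terminates the message body in SMS text mode


def build_sms_commands(phone_number: str, plate_text: str) -> List[bytes]:
    """Return the byte strings to write to the modem, in order.

    The caller is expected to wait for the modem's response between writes.
    """
    digits = phone_number[1:] if phone_number.startswith("+") else phone_number
    if not (digits.isascii() and digits.isdigit()):
        raise ValueError("phone number must be digits with an optional leading '+'")
    if not plate_text.isascii() or not 0 < len(plate_text) <= 160:
        raise ValueError("message must be 1 to 160 ASCII characters")
    return [
        b"AT\r",                                         # check that the modem responds
        b"AT+CMGF=1\r",                                  # select SMS text mode
        f'AT+CMGS="{phone_number}"\r'.encode("ascii"),   # start a message to the recipient
        plate_text.encode("ascii") + CTRL_Z,             # message body, then Ctrl-Z to send
    ]


# Hypothetical recipient and plate number, for illustration only.
for command in build_sms_commands("+10000000000", "CAB-1234"):
    print(command)
```

Keeping command construction separate from the serial I/O makes the sequence easy to test on a development machine before it is run against the actual GSM module.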

5.2. Solution Assessment

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Khan, L.U.; Yaqoob, I.; Tran, N.H.; Kazmi, S.M.A.; Dang, T.N.; Hong, C.S. Edge-Computing-Enabled Smart Cities: A Comprehensive Survey. IEEE Internet Things J. 2020, 7, 10200–10232.

2. Hossain, S.A.; Anisur Rahman, M.; Hossain, M. Edge computing framework for enabling situation awareness in IoT based smart city. J. Parallel Distrib. Comput. 2018, 122, 226–237.

3. Chakraborty, T.; Datta, S.K. Home Automation Using Edge Computing and Internet of Things. In International Symposium on Consumer Electronics (ISCE); IEEE: Kuala Lumpur, Malaysia, 2017; pp. 47–49.

4. Gamage, G.; Sudasingha, I.; Perera, I.; Meedeniya, D. Reinstating Dlib Correlation Human Trackers Under Occlusions in Human Detection based Tracking. In Proceedings of the 18th International Conference on Advances in ICT for Emerging Regions (ICTer), Colombo, Sri Lanka, 26–29 September 2018; IEEE: Colombo, Sri Lanka, 2018; pp. 92–98.

5. Padmasiri, H.; Madurawe, R.; Abeysinghe, C.; Meedeniya, D. Automated Vehicle Parking Occupancy Detection in Real-Time. In Moratuwa Engineering Research Conference (MERCon); IEEE: Colombo, Sri Lanka, 2020; pp. 644–649.

6. Wang, X.; Huang, J. Vehicular Fog Computing: Enabling Real-Time Traffic Management for Smart Cities. IEEE Wirel. Commun. 2018, 26, 87–93.

7. Deng, S.; Zhao, H.; Fang, W.; Yin, J.; Dustdar, S.; Zomaya, A.Y. Edge Intelligence: The Confluence of Edge Computing and Artificial Intelligence. IEEE Internet Things J. 2020, 7, 7457–7469.

8. Zhou, Z.; Chen, X.; Li, E.; Zeng, L.; Luo, K.; Zhang, J. Edge Intelligence: Paving the Last Mile of Artificial Intelligence With Edge Computing. Proc. IEEE 2019, 107, 1738–1762.

9. Shashirangana, J.; Padmasiri, H.; Meedeniya, D.; Perera, C. Automated License Plate Recognition: A Survey on Methods and Techniques. IEEE Access 2021, 9, 11203–11225.

10. Yu, W.; Liang, F.; He, X.; Hatcher, W.G.; Lu, C.; Lin, J.; Yang, X. A Survey on the Edge Computing for the Internet of Things. IEEE Access 2018, 6, 6900–6919.

11. Xue, H.; Huang, B.; Qin, M.; Zhou, H.; Yang, H. Edge Computing for Internet of Things: A Survey. In 2020 International Conferences on Internet of Things (iThings) and IEEE Green Computing and Communications (GreenCom) and IEEE Cyber, Physical and Social Computing (CPSCom) and IEEE Smart Data (SmartData) and IEEE Congress on Cybermatics (Cybermatics); IEEE: Rhodes, Greece, 2020; pp. 755–760.

12. Wu, B.; Dai, X.; Zhang, P.; Wang, Y.; Sun, F.; Wu, Y.; Tian, Y.; Vajda, P.; Jia, Y.; Keutzer, K. FBNet: Hardware-Aware Efficient ConvNet Design via Differentiable Neural Architecture Search. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; Computer Vision Foundation/IEEE: Long Beach, CA, USA, 2019; pp. 10734–10742.

13. Xu, Y.; Xie, L.; Zhang, X.; Chen, X.; Qi, G.; Tian, Q.; Xiong, H. PC-DARTS: Partial Channel Connections for Memory-Efficient Architecture Search. arXiv 2020, arXiv:1907.05737.

14. Xu, Z.; Yang, W.; Meng, A.; Lu, N.; Huang, H.; Ying, C.; Huang, L. Towards end-to-end license plate detection and recognition: A large dataset and baseline. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 255–271.

15. Lee, S.; Son, K.; Kim, H.; Park, J. Car plate recognition based on CNN using embedded system with GPU. In Proceedings of the 2017 10th International Conference on Human System Interactions (HSI), Ulsan, Korea, 17–19 July 2017; pp. 239–241.

16. Arth, C.; Limberger, F.; Bischof, H. Real-Time License Plate Recognition on an Embedded DSP-Platform. In Proceedings of the 2007 IEEE Conference on Computer Vision and Pattern Recognition, Minneapolis, MN, USA, 18–23 June 2007; pp. 1–8.

17. Rizvi, S.T.H.; Patti, D.; Björklund, T.; Cabodi, G.; Francini, G. Deep Classifiers-Based License Plate Detection, Localization and Recognition on GPU-Powered Mobile Platform. Future Internet 2017, 9, 66.

18. Izidio, D.; Ferreira, A.; Barros, E. An Embedded Automatic License Plate Recognition System using Deep Learning. In Proceedings of the VIII Brazilian Symposium on Computing Systems Engineering (SBESC), Salvador, Brazil, 5–8 November 2018; pp. 38–45.

19. Liew, C.; On, C.K.; Alfred, R.; Guan, T.T.; Anthony, P. Real time mobile based license plate recognition system with neural networks. In Journal of Physics: Conference Series; IOP Publishing Ltd.: Bristol, UK, 2020; Volume 1502, p. 012032.

20. Wu, S.; Zhai, W.; Cao, Y. PixTextGAN: Structure aware text image synthesis for license plate recognition. IET Image Process. 2019, 13, 2744–2752.

21. Chang, I.S.; Park, G. Improved Method of License Plate Detection and Recognition using Synthetic Number Plate. J. Broadcast Eng. 2021, 26, 453–462.

22. Barreto, S.C.; Lambert, J.A.; de Barros Vidal, F. Using Synthetic Images for Deep Learning Recognition Process on Automatic License Plate Recognition. In Proceedings of the Mexican Conference on Pattern Recognition, Querétaro, Mexico, 26–29 June 2019; pp. 115–126.

23. Harrysson, O. License Plate Detection Utilizing Synthetic Data from Superimposition. Master’s Thesis, Lund University, Lund, Sweden, 2019.

24. Björklund, T.; Fiandrotti, A.; Annarumma, M.; Francini, G.; Magli, E. Automatic license plate recognition with convolutional neural networks trained on synthetic data. In Proceedings of the 2017 IEEE 19th International Workshop on Multimedia Signal Processing (MMSP), Luton, UK, 16–18 October 2017; pp. 1–6.

25. Zeni, L.F.; Jung, C. Weakly Supervised Character Detection for License Plate Recognition. In Proceedings of the 2020 33rd SIBGRAPI Conference on Graphics, Patterns and Images (SIBGRAPI), Porto de Galinhas, Brazil, 7–10 November 2020; pp. 218–225.

26. Saluja, R.; Maheshwari, A.; Ramakrishnan, G.; Chaudhuri, P.; Carman, M. OCR on-the-go: Robust end-to-end systems for reading license plates & street signs. In Proceedings of the 2019 International Conference on Document Analysis and Recognition (ICDAR), Sydney, Australia, 20–25 September 2019; pp. 154–159.

27. Matas, J.; Zimmermann, K. Unconstrained licence plate and text localization and recognition. In Proceedings of the IEEE Intelligent Transportation Systems Conference, Vienna, Austria, 13–16 September 2005; pp. 225–230.

28. Zhang, X.; Shen, P.; Xiao, Y.; Li, B.; Hu, Y.; Qi, D.; Xiao, X.; Zhang, L. License plate-location using AdaBoost Algorithm. In Proceedings of the 2010 IEEE International Conference on Information and Automation, Harbin, China, 20–23 June 2010; pp. 2456–2461.

29. Boonsim, N.; Prakoonwit, S. Car make and model recognition under limited lighting conditions at night. Pattern Anal. Appl. 2017, 20, 1195–1207.

30. Xie, L.; Ahmad, T.; Jin, L.; Liu, Y.; Zhang, S. A New CNN-Based Method for Multi-Directional Car License Plate Detection. IEEE Trans. Intell. Transp. Syst. 2018, 19, 507–517.

31. Laroca, R.; Zanlorensi, L.A.; Gonçalves, G.R.; Todt, E.; Schwartz, W.R.; Menotti, D. An efficient and layout-independent automatic license plate recognition system based on the YOLO detector. arXiv 2019, arXiv:1909.01754.

32. Montazzolli, S.; Jung, C. Real-Time Brazilian License Plate Detection and Recognition Using Deep Convolutional Neural Networks. In Proceedings of the 2017 30th SIBGRAPI Conference on Graphics, Patterns and Images (SIBGRAPI), Rio de Janeiro, Brazil, 17–20 October 2017.

33. Wang, F.; Man, L.; Wang, B.; Xiao, Y.; Pan, W.; Lu, X. Fuzzy-based algorithm for color recognition of license plates. Pattern Recognit. Lett. 2008, 29, 1007–1020.

34. Anagnostopoulos, C.N.; Anagnostopoulos, I.; Loumos, V.; Kayafas, E. A License Plate-Recognition Algorithm for Intelligent Transportation System Applications. IEEE Trans. Intell. Transp. Syst. 2006, 7, 377–392.

35. Selmi, Z.; Ben Halima, M.; Alimi, A. Deep Learning System for Automatic License Plate Detection and Recognition. In Proceedings of the 2017 14th IAPR International Conference on Document Analysis and Recognition (ICDAR), Kyoto, Japan, 9–15 November 2017; pp. 1132–1138.

36. Luo, L.; Sun, H.; Zhou, W.; Luo, L. An Efficient Method of License Plate Location. In Proceedings of the 2009 First International Conference on Information Science and Engineering, Lisboa, Portugal, 23–26 March 2009; pp. 770–773.

37. Busch, C.; Domer, R.; Freytag, C.; Ziegler, H. Feature based recognition of traffic video streams for online route tracing. In Proceedings of the VTC ’98 48th IEEE Vehicular Technology Conference. Pathway to Global Wireless Revolution (Cat. No.98CH36151), Ottawa, ON, Canada, 21–21 May 1998; IEEE: Ottawa, ON, Canada, 1998; Volume 3, pp. 1790–1794.

38. Sarfraz, M.; Ahmed, M.; Ghazi, S.A. Saudi Arabian license plate recognition system. In Proceedings of the 2003 International Conference on Geometric Modeling and Graphics, London, UK, 16–18 July 2003; pp. 36–41.

39. Sanyuan, Z.; Mingli, Z.; Xiuzi, Y. Car plate character extraction under complicated environment. In Proceedings of the 2004 IEEE International Conference on Systems, Man and Cybernetics (IEEE Cat. No.04CH37583), The Hague, The Netherlands, 10–13 October 2004; Volume 5, pp. 4722–4726.

40. Yoshimori, S.; Mitsukura, Y.; Fukumi, M.; Akamatsu, N.; Pedrycz, W. License plate detection system by using threshold function and improved template matching method. In Proceedings of the IEEE Annual Meeting of the Fuzzy Information, Banff, AB, Canada, 27–30 June 2004; Volume 1, pp. 357–362.

41. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You Only Look Once: Unified, Real-Time Object Detection. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 779–788.

42. Laroca, R.; Severo, E.; Zanlorensi, L.; Oliveira, L.; Gonçalves, G.; Schwartz, W.; Menotti, D. A Robust Real-Time Automatic License Plate Recognition Based on the YOLO Detector. In Proceedings of the 2018 International Joint Conference on Neural Networks (IJCNN), Rio de Janeiro, Brazil, 8–13 July 2018; pp. 1–10.

43. Hsu, G.S.; Ambikapathi, A.M.; Chung, S.L.; Su, C.P. Robust license plate detection in the wild. In Proceedings of the 2017 14th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), Lecce, Italy, 29 August–1 September 2017; pp. 1–6.

44. Das, S.; Mukherjee, J. Automatic License Plate Recognition Technique using Convolutional Neural Network. Int. J. Comput. Appl. 2017, 169, 32–36.

45. Yonetsu, S.; Iwamoto, Y.; Chen, Y.W. Two-Stage YOLOv2 for Accurate License-Plate Detection in Complex Scenes. In Proceedings of the 2019 IEEE International Conference on Consumer Electronics (ICCE), Las Vegas, NV, USA, 11–13 January 2019; pp. 1–4.

46. Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. arXiv 2018, arXiv:1804.02767.

47. Berg, A.; Öfjäll, K.; Ahlberg, J.; Felsberg, M. Detecting Rails and Obstacles Using a Train-Mounted Thermal Camera. In Proceedings of the Scandinavian Conference on Image Analysis, Copenhagen, Denmark, 15–17 June 2015; pp. 492–503.

48. Siegel, R. Land mine detection. IEEE Instrum. Meas. Mag. 2002, 5, 22–28.

49. Zhang, L.; Gonzalez-Garcia, A.; van de Weijer, J.; Danelljan, M.; Khan, F.S. Synthetic Data Generation for End-to-End Thermal Infrared Tracking. IEEE Trans. Image Process. 2019, 28, 1837–1850.

50. Isola, P.; Zhu, J.; Zhou, T.; Efros, A.A. Image-to-Image Translation with Conditional Adversarial Networks. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 5967–5976.

51. Zhu, J.; Park, T.; Isola, P.; Efros, A.A. Unpaired Image-to-Image Translation Using Cycle-Consistent Adversarial Networks. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2242–2251.

52. Ismail, M. License plate Recognition for moving vehicles case: At night and under rain condition. In Proceedings of the 2017 Second International Conference on Informatics and Computing (ICIC), Jayapura, Indonesia, 1–3 November 2017; pp. 1–4.

53. Mahini, H.; Kasaei, S.; Dorri, F.; Dorri, F. An Efficient Features-Based License Plate Localization Method. In Proceedings of the 18th International Conference on Pattern Recognition (ICPR’06), Hong Kong, China, 20–24 August 2006; Volume 2, pp. 841–844.

54. Chen, Y.-T.; Chuang, J.-H.; Teng, W.-C.; Lin, H.-H.; Chen, H.-T. Robust license plate detection in nighttime scenes using multiple intensity IR-illuminator. In Proceedings of the 2012 IEEE International Symposium on Industrial Electronics, Hangzhou, China, 28–31 May 2012; pp. 893–898.

55. Azam, S.; Islam, M. Automatic License Plate Detection in Hazardous Condition. J. Vis. Commun. Image Represent. 2016, 36.

56. Shi, W.; Cao, J.; Zhang, Q.; Li, Y.; Xu, L. Edge Computing: Vision and Challenges. IEEE Internet Things J. 2016, 3, 637–646.

57. Shashirangana, J.; Padmasiri, H.; Meedeniya, D.; Perera, C.; Nayak, S.R.; Nayak, J.; Vimal, S.; Kadry, S. License Plate Recognition Using Neural Architecture Search for Edge Devices. Int. J. Intell. Syst. Spec. Issue Complex Ind. Intell. Syst. 2021, 36, 1–38.

58. Hochstetler, J.; Padidela, R.; Chen, Q.; Yang, Q.; Fu, S. Embedded deep learning for vehicular edge computing. In Proceedings of the 2018 3rd ACM/IEEE Symposium on Edge Computing, Bellevue, WA, USA, 25–27 October 2018; pp. 341–343.

59. Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications. arXiv 2017, arXiv:cs.CV/1704.04861.

60. Yi, S.; Hao, Z.; Qin, Z.; Li, Q. Fog computing: Platform and applications. In Proceedings of the 3rd Workshop on Hot Topics in Web Systems and Technologies, HotWeb 2015, Washington, DC, USA, 12–13 November 2016; pp. 73–78.

61. Ha, K.; Chen, Z.; Hu, W.; Richter, W.; Pillai, P.; Satyanarayanan, M. Towards wearable cognitive assistance. In Proceedings of the 12th Annual International Conference on Mobile Systems, Applications, and Services, Bretton Woods, NH, USA, 16–19 June 2014; pp. 68–81.

62. Chun, B.G.; Ihm, S.; Maniatis, P.; Naik, M.; Patti, A. CloneCloud: Elastic execution between mobile device and cloud. In Proceedings of the EuroSys 2011 Conference, Salzburg, Austria, 10–13 April 2011; pp. 301–314.

63. Luo, X.; Xie, M. Design and Realization of Embedded License Plate Recognition System Based on DSP. In Proceedings of the 2010 Second International Conference on Computer Modeling and Simulation, Sanya, China, 22–24 January 2010; Volume 2, pp. 272–276.

64. Li, H.; Wang, P.; Shen, C. Toward End-to-End Car License Plate Detection and Recognition with Deep Neural Networks. IEEE Trans. Intell. Transp. Syst. 2019, 20, 1126–1136.

65. Jamtsho, Y.; Riyamongkol, P.; Waranusast, R. Real-time Bhutanese license plate localization using YOLO. ICT Express 2020, 6, 121–124.

66. Francis-Mezger, P.; Weaver, V.M. A Raspberry Pi Operating System for Exploring Advanced Memory System Concepts. In Proceedings of the International Symposium on Memory Systems; Association for Computing Machinery: New York, NY, USA, 2018; MEMSYS’18; pp. 354–364.

67. Tolmacheva, A.; Ogurtsov, D.; Dorrer, M. Justification for choosing a single-board hardware computing platform for a neural network performing image processing. IOP Conf. Ser. Mater. Sci. Eng. 2020, 734, 012130.

68. Pagnutti, M.; Ryan, R.; Cazenavette, G.; Gold, M.; Harlan, R.; Leggett, E.; Pagnutti, J. Laying the foundation to use Raspberry Pi 3 V2 camera module imagery for scientific and engineering purposes. J. Electron. Imaging 2017, 26, 013014.

69. Win, H.H.; Thwe, T.T.; Swe, M.M.; Aung, M.M. Call and Send Messages by Using GSM Module. J. Myanmar Acad. Arts Sci. 2019, XVII, 99–107.

70. Odat, E.; Shamma, J.S.; Claudel, C. Vehicle classification and speed estimation using combined passive infrared/ultrasonic sensors. IEEE Trans. Intell. Transp. Syst. 2017, 19, 1593–1606.

71. Zappi, P.; Farella, E.; Benini, L. Tracking motion direction and distance with pyroelectric IR sensors. IEEE Sens. J. 2010, 10, 1486–1494.

72. Hwang, S.; Park, J.; Kim, N.; Choi, Y.; Kweon, I.S. Multispectral Pedestrian Detection: Benchmark Dataset and Baselines. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015.

73. Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L. MobileNetV2: Inverted Residuals and Linear Bottlenecks. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 4510–4520.

74. Padmasiri, H.; Shashirangana, J. Lite-LPNet. 2021. Available online: https://github.com/heshanpadmasiri/Lite-LPNet (accessed on 20 October 2021).

75. Brock, A.; Lim, T.; Ritchie, J.M.; Weston, N. SMASH: One-Shot Model Architecture Search through HyperNetworks. arXiv 2018, arXiv:1708.05344.

| Related Study | Description | Techniques | Type (D/N/S) | Performance |
|---|---|---|---|---|
| [15] | Uses an NVIDIA Jetson TX1 embedded board with GPU. Provides LP recognition without a detection line. Not robust to broken or reflective plates. | AlexNet (CNN) | D | AC = 95.25% |
| [16] | Real-time LP recognition on an embedded DSP platform. Operates under daytime conditions with sufficient daylight or artificial light from street lamps. High performance with low image resolution. | SVM | D | F = 86% |
| [17] | Real-time LP recognition on a GPU-powered mobile platform by simplifying a trained neural network developed for a desktop/server environment. | CNN | D, N, S | AC = 94% |
| [18] | Implemented on a Raspberry Pi 3 with a Pi NoIR v2 camera module. Robust to angle, lighting and noise variations. Avoids character segmentation to reduce mis-segmentation errors. | CNN | D, S | AC = 97% |
| [19] | A portable ALPR model trained on a desktop computer and exported to an Android mobile device. | CNN | D | AC = 77.2% |

| Study | Nighttime (NT) | Synthetic (Syn.) | Synthesis Method | Performance |
|---|---|---|---|---|
| [20] | | ✓ | GAN-based | AC = 84.57% |
| [21] | | ✓ | GAN-based | AC = 91.5% |
| [22] | | ✓ | Augmentation (rotation, size and noise) | AC = 62.47% |
| [23] | | ✓ | Augmentation, superimposition, GAN-based | AP = 99.32% |
| [24] | | ✓ | Illumination and pose conditions | R = 93% |
| [25] | | ✓ | Random modifications (colour, blur, noise) | AC = 99.98% |
| [26] | ✓ | ✓ | Random modifications (colour, depth) | AC = 85.3% |
| [27] | ✓ | ✓ | Intensity changes | FN = 1.5% |
| [17] | ✓ | ✓ | Illumination and pose conditions | AC = 94% |
| [28] | ✓ | | | AC = 96% |
| [29] | ✓ | | | AC = 93% |
| [14] | ✓ | | | AP = 95.5% |
| [30] | ✓ | | | F = 98.32% |
| [31] | ✓ | | | AC = 95.7% |
| [32] | ✓ | | | AC = 93.99% |
| [33] | ✓ | | | AC = 92.6% |
| [34] | ✓ | | | AC = 86% |
| [35] | ✓ | | | AC = 96.2% |

| Hardware Tier | Specification | Cost (as of January 2022) |
|---|---|---|
| Low-tier | Raspberry Pi Zero | USD 10.60 |
| Mid-tier | Raspberry Pi 3 B+ | USD 38.63 |
| High-tier | Raspberry Pi 3 B+, Intel Neural Compute Stick 2 | USD 38.63 + USD 89.00 |

| Data Set | CCPD Daytime Images | Synthesised Nighttime Images from CCPD | Sri Lankan LP Images (Daytime) | Sri Lankan LP Images (Nighttime) |
|---|---|---|---|---|
| No. of images | 200,000 | 200,000 | 100 | 100 |

| Model Name | Resource Requirement | Latency (s) | Model Size (MB) | AP (Daytime) | AP (Synthetic) | AP (Real) |
|---|---|---|---|---|---|---|
| s1_h | Raspberry Pi 3 B+, Intel® NCS2 | 0.012 | 0.7776 | 0.9284 | 0.8451 | 0.85 |
| s1_h_h | Raspberry Pi 3 B+, Intel® NCS2 | 0.011 | 0.8707 | 0.9299 | 0.8401 | 0.9 |
| s1_m | Raspberry Pi 3 B+ | 0.157 | 0.6869 | 0.9005 | 0.7982 | 0.85 |
| s1_m_h | Raspberry Pi 3 B+ | 0.004 | 0.6830 | 0.9029 | 0.7962 | 1.0 |
| s1_l | Raspberry Pi Zero | 4.54 | 0.5568 | 0.8422 | 0.7146 | 0.95 |
| s1_l_h | Raspberry Pi Zero | 4.08 | 0.5625 | 0.8327 | 0.6987 | 0.95 |

| Model Name | Resource Requirement | Latency (s) | Model Size (MB) | Accuracy (Daytime) | Accuracy (Synthetic) | Accuracy (Real) |
|---|---|---|---|---|---|---|
| s2_h | Raspberry Pi 3 B+, Intel® NCS2 | 0.021 | 4.5 | 0.9987 | 0.9476 | 0.9873 |
| s2_m | Raspberry Pi 3 B+ | 0.148 | 11.7 | 0.9877 | 0.9382 | 0.9882 |
| s2_l | Raspberry Pi Zero | 6.2 | 4.5 | 0.9565 | 0.9054 | 0.9586 |

| Experiment | Number of Images | Correct Images (Low-Tier) | Correct Images (Mid-Tier) | Correct Images (High-Tier) | Camera Position |
|---|---|---|---|---|---|
| 1 | 27 | 25 | 26 | 26 | 1 |
| 2 | 35 | 30 | 31 | 34 | 1 |
| 3 | 33 | 30 | 31 | 33 | 2 |
| 4 | 29 | 24 | 25 | 28 | 2 |
| 5 | 25 | 21 | 23 | 25 | 3 |
| 6 | 28 | 22 | 25 | 27 | 3 |
| 7 | 30 | 25 | 26 | 28 | 4 |
| 8 | 26 | 19 | 23 | 25 | 4 |
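The per-experiment counts in the table above can be pooled into a single accuracy figure per hardware tier. The short script below is only a worked arithmetic example over the table's rows. Pooling all eight experiments in this way is our own summary and is not a figure reported in the table, and the function and variable names are illustrative.

```python
# Rows copied from the field-experiment table:
# (experiment, images, correct_low, correct_mid, correct_high, camera_position)
ROWS = [
    (1, 27, 25, 26, 26, 1),
    (2, 35, 30, 31, 34, 1),
    (3, 33, 30, 31, 33, 2),
    (4, 29, 24, 25, 28, 2),
    (5, 25, 21, 23, 25, 3),
    (6, 28, 22, 25, 27, 3),
    (7, 30, 25, 26, 28, 4),
    (8, 26, 19, 23, 25, 4),
]


def tier_accuracy(rows):
    """Return the pooled fraction of correctly read images for each tier."""
    total_images = sum(row[1] for row in rows)
    tiers = ("low", "mid", "high")
    return {
        tier: sum(row[2 + i] for row in rows) / total_images
        for i, tier in enumerate(tiers)
    }


accuracy = tier_accuracy(ROWS)
for tier, value in accuracy.items():
    print(f"{tier}-tier: {value:.1%}")
# low-tier: 84.1%
# mid-tier: 90.1%
# high-tier: 97.0%
```

Across the 233 images, the low-, mid- and high-tier units read 196, 210 and 226 images correctly, which is where the three percentages come from.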

| Hardware Tier | Power Consumption (W) | Average Battery Life (h) |
|---|---|---|
| Low-tier | 0.8 | 132.15 |
| Mid-tier | 5.15 | 11.03 |
| High-tier | 6.2 | 13.04 |

| Study | Dataset | Resource Requirement | Accuracy | Latency |
|---|---|---|---|---|
| Lee et al. [15] | Nearly 500 images | NVIDIA Jetson TX1 embedded board | 95.24% (daytime) | N/A |
| Arth et al. [16] | Test set 1: 260 images; Test set 2: 2600 images; different weather and illumination types | Single Texas Instruments TM C64 fixed point DSP with 1 MB of cache, extra 16 MB SDRAM | 96% (daytime) | 0.05211 s |
| Rizvi et al. [17] | Italian rear LP with 788 crops | Quadro K2200, Jetson TX1 embedded board, Nvidia Shield K1 tablet | Det: 61%, Rec: 92% (daytime) | Det: 0.026 s, Rec: 0.027 s (Quadro K2200) |
| Izidio et al. [18] | Custom dataset with 1190 images | Raspberry Pi 3 (ARM Cortex-A53 CPU) | Det: 99.37%, Rec: 99.53% (daytime) | 4.88 s |
| Proposed high-tier solution | CCPD (200,000 images), synthetic nighttime dataset (CCPD), 100 real nighttime images | Raspberry Pi 3 B+, Intel® NCS2 | Det: 90%, Rec: 98.73% (nighttime) | Det: 0.011 s, Rec: 0.02176 s |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Padmasiri, H.; Shashirangana, J.; Meedeniya, D.; Rana, O.; Perera, C. Automated License Plate Recognition for Resource-Constrained Environments. Sensors 2022, 22, 1434. https://doi.org/10.3390/s22041434
