1. Introduction

Visual simultaneous localization and mapping (Visual SLAM) and Visual Odometry (VO) estimate the 6 DoF camera pose from a sequence of camera images. They have various applications, such as autonomous robots and virtual and augmented reality (VR/AR).

Indoor environments contain low-texture surfaces such as the floor, walls, and ceiling, which leads to performance degradation for pure point-based methods [

1]. Robust pose estimation performance can be improved by adding geometric structural features present in indoor scenes, such as lines and planes, to the systems [

2,

3,

4,

5,

6,

7]. These works extend the working scenarios to low-textured environments.

A technique to leverage the structural regularity in indoor scenes is based on the MW/AW assumption, which can reduce the rotation drift. This technique has been employed by [

8,

9,

10,

11,

12,

13]. These systems benefit from the MW/AW assumption to the rotation estimation. They decouple the rotational and translational motion estimation and estimate drift-free rotational motion from structural regularities in man-made environments, which reduces the rotation error in the whole trajectory. However, the MW/AW assumption does not strictly hold in indoor scenes, which makes the range of applications limited. Zhou et al. [

14] proposed using a single mean shift iteration algorithm to estimate the Manhattan dominant direction by a set of normal vectors. In [

8], the absolute, drift-free rotation is estimated by tracking the MF from surface normal vectors. The translational motion is recovered by minimizing the de-rotated reprojection error with available depth point features. These approaches only use planes to search MF, which means that we at least need to detect two orthogonal planes in each frame. However, in practice, detecting two orthogonal planes is not very easy. To address this problem, Line and Plane based Visual Odometry (LPVO) [

9] uses all tracked points (with and without depth) to estimate translation. They combine lines and planes to estimate drift-free rotation by a mean shift algorithm. To tackle the drift in translation estimation, Linear RGB-D SLAM (L-SLAM) [

10] adds orthogonal planar features within a linear Kalman Filter framework based on LPVO. Atlanta Frame SLAM (AF-SLAM) [

11] extends L-SLAM to cover more general structural environments with the AW assumption while maintaining linear computational complexity. [

13] estimates the translation part by using point-line-plane tracking and adds parallel and perpendicular planar constraints to improve the tracking accuracy. [

15] designed a short-term tracking module to track the clustered line features. In addition, a long-term searching module is designed to generate abundant sets of vanishing points (VPs) candidates and retrieve the optimal one. To optimize the model, [

15] constructs a least square problem to provide refined VPs with the clusters of structural line features in each frame. To cope with dynamic scenarios, [

16] uses a 2D tracker to track the moving object in bounding boxes. This method can effectively exclude the dynamic background and remove the outlier point and line features. [

17] presents a semantic planar SLAM system to improve pose estimation and mapping by using cues from an instance planar segmentation network. [

18] eliminates line features that are consistent with the motion direction. The structural line features are selected according to the direction information of vanishing points for a stronger geometric constraint on the pose estimation.

However, the decoupled scheme needs the MW assumption for every frame, which is very limiting. The indoor environments are not strictly conforming to the assumption, leading to performance degradation or even tracking failures. To address this issue, [

19] uses planes to distinguish whether the scenes conform to the MW assumption, and then it chooses a decoupled or a non-decoupled tracking strategy to obtain the camera motion pose. Additionally, [

5] proposes directly adding parallel and perpendicular constraints of planes to reduce drift errors in indoor environments without the MW assumption. [

20] incorporates the MW assumption at the local map optimization stage instead of the tracking stage. Then, a local map optimization approach is proposed to combine the point and line reprojection error, the Manhattan Axes (MA) alignment, and the structural constraints of the scene. This method reduces the influence of punctual dissatisfaction with some constraints.

This paper proposes an RGB-D VO algorithm using points and lines to achieve robust pose features and good performance. We leverage the structural regularities in indoor scenes to improve tracking performance. The proposed method automatically recognizes whether the scene conforms to the MW assumption and chooses different tracking strategies. Moreover, we model the MW scenes as a Mixture of Manhattan Frames (MMF) [

21], which consists of multiple independent MFs. We detect MFs with dominant directions extracted from parallel lines. Finally, we use dominant directions in local map bundle adjustment (BA) to improve rotation estimation. The proposed RGB-D VO system is shown in

Figure 1. In summary, the main contributions of this work are as follows:

A robust and general RGB-D VO framework for indoor environments is proposed. It is more suitable for real-world scenes because it can choose different tracking methods (decoupled and non-decoupled pose estimation methods) for different scenes.

A novel drift-free rotation estimation approach is proposed. We detect the dominant directions for every frame by clustering the parallel lines. These dominant directions are tracked to detect MFs. Then, we use a mean-shift algorithm to obtain rotation estimation.

An accurate and efficient local map bundle adjustment strategy combines points and lines reprojection errors with the rotation constraints from the multi-view dominant directions observations.

We compare the proposed method with other works in the literature, as shown in

Table 1. All works are open source. To verify the effectiveness of the proposed method, we evaluate the proposed method on synthetic and real-world RGB-D benchmark datasets.

2. Materials and Methods

2.1. System Overview

In this work, we use

to represent the camera pose of the

th frame, where

and

denote the rotation and translation from the world frame to the camera frame, respectively. We also use a set of unit vectors

to represent the dominant directions in the global map, and these vectors constitute all MFs saved in the Manhattan map

. Each MF contains the three mutually orthogonal dominant directions. These concepts are visualized in

Figure 2. In addition, we use

to represent the dominant directions in

th frame. The rotation matrix

represents the orientation from

th MF to

th camera frame.

With the RGB-D camera as the sensor input, the proposed system is built on top of the tracking and local mapping components of Oriented FAST and Rotated BRIEF SLAM2 (ORB-SLAM2) [

22]. The overall framework is shown in

Figure 3. We then describe each module of the proposed VO system.

The tracking thread is used to estimate the pose of each frame and select appropriate keyframes as input to the local mapping thread. In the tracking thread, for each frame, we extract point and line features from the RGB image and surface normals from the depth image, which are performed in parallel. Then, we extract the dominant directions from parallel lines to estimate the MFs in the current frame. The points, lines, and dominant directions are tracked and matched to estimate the camera pose. We divide the scenes into MW scenes and non-MW scenes. For MW scenes, we use a decoupled method to estimate the rotational and translational motion. For non-MW scenes, we combine point and line features with the dominant direction observations to estimate the whole 6 DoF camera pose. Based on the initial pose estimation, the camera motion is refined with the matched landmarks from the local map. Finally, the results on the keyframe are inferenced. We take both point and line features into account to decide whether a new keyframe should be inserted. Instead of a fixed reasonable threshold, the ratio-based method is use to create a new keyframe [

20].

Map points, map lines, dominant directions, a set of keyframes, a covisibility graph, and a spanning tree jointly make up the stored map. The covisibility graph is maintained to link any two keyframes observing common landmarks. Whenever a keyframe is inserted, the local mapping thread is implemented to process the new keyframe and update the covisibility graph by the number of covisible landmarks. The map point culling and the map line culling are performed to improve tracking performance by retaining the high-quality map points and map lines. Furthermore, we merge the dominant directions to maintain the orientation difference between any two directions. Besides, a local map bundle adjustment procedure is performed to estimate keyframes poses, together with map points, map lines, and dominant directions observed by these keyframes. Finally, a keyframe culling procedure is conducted to remove the redundant keyframes. A keyframe is considered to be removed when more than 90% of map points can be observed by other keyframes (usually at least 3).

2.2. Feature Detection and Matching

In this paper, we use ORB features [

23] to address the rotation, scale, and illumination changes. They can be extracted and matched quickly. The lines are extracted by Line Segment Detector (LSD) [

24] and represented by Line Band Descriptor (LBD) [

25]. The unit surface normal vectors are extracted from the depth image [

9]. These procedures are conducted in parallel.

After extracting 2D features in the frame

, we use

to represent the 2D point feature and

to represent the line segment in image coordinates. Let

denote the start point and end point in the line segment

, respectively. The normalized line function of the observed 2D line segment is denoted as

, formally:

Once the 2D features have been detected and described, it is easy to obtain the 3D positions in camera coordinates according to the camera intrinsic parameters and the depth image. The 3D points and lines are denoted as and , respectively. To match point features, we still use the same strategy as ORB-SLAM2 to match. We jointly use both the LBD descriptor and geometric constraints to match line features between consecutive frames.

2.3. Dominant Direction

After obtaining the 3D position of lines, we classify the 3D line vectors to obtain parallel line clusters. The dominant directions are extracted from the parallel lines. The dominant directions are tracked and matched to detect the MFs and estimate the camera pose. We solve a least square problem for every parallel line cluster to determine its dominant direction:

where

and

is the number of lines in this parallel line cluster. Each column

represents a unit direction vector of the line in this cluster. Then, we obtain the initial set of dominant directions

of the current frame

, and each dominant direction is a unit vector.

Unlike point and line features, the dominant directions are matched directly in the global map. To match the

th dominant direction

of the

th frame and the

th dominant direction

in the global map, we formulated it as:

We choose those pairs whose absolute values of cosine satisfy a given threshold (3 in this letter) as the candidate matches. As a result, we choose the dominant direction whose angular difference between and is the closest to 1 as the correct match.

Sometimes, the angular difference between two dominant directions in the global map may be smaller than the threshold after the local map BA. In that case, we merge the two dominant directions by an iterative to maintain the orientation difference between any two directions.

2.4. Manhattan Frame Detection

For MF in the th frame, it can be represented by three mutually perpendicular dominant directions . To detect an MF in , we compute the angular difference between two different dominant directions in . We think the two dominant directions are orthogonal if the angular difference meets the orthogonal threshold (at least 87 in this work). Any three dominant directions, which are mutually orthogonal, constitute an MF. If only two perpendicular dominant directions are found, the third direction can be obtained by taking the cross-product between the two dominant directions. At the same time, we add the newly created third dominant direction to the current frame’s dominant direction set . The rotation matrix from this MF to the current frame is represented as .

Like the method in [

19], we save the MFs in the scene to a Manhattan map

. Through the Manhattan map

, we can obtain the full and partial MF observations and the corresponding frames that observe the MF first.

2.5. Pose Estimation

Two different strategies are used to estimate the camera pose from world coordinates to camera coordinates , depending on whether the scenes conform to the MW assumption. For non-MW scenes, we directly estimate the 6 DoF camera pose with a feature tracking method. In MW scenes, we decouple the camera pose to separately estimate the rotational and translational motion.

2.5.1. Non-MW Scenes

In non-MW scenes, the tracked dominant directions are used to estimate the camera motion by combining the point-line tracking. The dominant directions only provide the orientation constraints, independent of translation. Then, the full camera pose is estimated by minimizing the following cost function:

where

,

, and

are the set of all point, line, and dominant direction matches, respectively. Let

denote the robust Huber cost function. The point reprojection error between observed 2D features and corresponding matched 3D features is defined as

where

is the 3D map point in world coordinates corresponding to the 2D point feature

in the image plane. The projection function

transforms a 3D point

in camera coordinates into the image plane:

where the focal length

,

and principal point

,

belong to camera intrinsic parameters. The line reprojection error is formulated based on the point-to-line distance between the 2D line segment

and the 3D endpoints

and

from the matched 3D line

. The error function is formulated as

We define the dominant direction observation errors based on the 3D–3D correspondence, formally:

where

,

are the dominant directions in world coordinates and camera coordinates, respectively. Then these data associations are employed to optimize the current camera pose using the Levenberg Marquardt (LM) algorithm implemented in g2o [

26].

2.5.2. MW Scenes

Compared to estimating the camera pose directly from frame-to-frame tracking, the pose estimation can be decoupled in MW scenes. To reduce the drift caused by frame-to-frame tracking, we leverage the structural constraints in scenes to estimate the drift-free rotation. The translation estimation is recovered from the feature tracking. The process is shown in

Figure 4.

For the rotation estimation, the set of dominant directions can be obtained using the method described in

Section 2.3. Then, all MFs in the current frame can be detected using the method described in

Section 2.4. To check whether an MF

in the current frame

is present in the Manhattan map

, we match the dominant direction in the current frame with the dominant direction in the global map using the method described in

Section 2.3. For three dominant directions that constitute the MF

, if we can find that at least two directions are matched with the dominant directions in the global map and

has been present in

, then we obtain the corresponding frame

in which

was first observed. If

does not contain any previously observed MF, then we use the feature-tracking method (

Section 2.5.1) instead of a decoupled method to solve the camera pose.

We use the popular mean shift algorithm [

8,

9,

14] for MF tracking to estimate the rotation matrix. Firstly, we calculate the initial relative rotation

from MF

to the current frame

with the reference frame

and the last frame

:

Secondly, we transform the unit direction vectors of lines and the surface normal vectors in the current frame to MF

using the transposed initial rotation matrix

. We project the unit direction vectors of lines and the surface normal vectors onto tangent planes to compute a mean shift. Then, the mean shift result is transformed back to the unit sphere from the tangential plane. Finally, we obtain the updated rotation matrix

. However, to make

still satisfy the orthogonality constraint, we transform

onto SO (3) manifold using singular value decomposition (SVD):

Then, we can obtain the rotation matrix

from world coordinates to the current camera frame

using the reference frame

:

More details on the sphere mean-shift method can be found in [

8,

9,

14].

Once we obtain the drift-free rotation estimation, the 3 DoF translation estimation can be calculated by using the point-line reprojection errors. Note that we do not use the dominant direction observation errors in this process since they only provide rotational constraints. Furthermore, we simplify the original non-linear optimization problem into a linear one:

where

and

are the rotation-assisted point and line errors, respectively:

where we refer

as the

th row of a vector.

,

represents the endpoints of the 3D line

. Then, we solve this BA problem using the LM algorithm.

After estimating the camera pose, we project the points, lines, and dominant directions in the local map to the current frame to obtain more correspondence. The current camera pose is optimized again with the resulting matches.

2.6. Local Map Bundle Adjustment

When a new keyframe is inserted, the next step is to perform a local map BA procedure, which refines the camera poses and landmarks in the local map.

is the definition of the variable set to be optimized.

represents all keyframes to be optimized, including the newly inserted keyframe and all local keyframes that are connected to it in the covisibility graph.

,

, and

represent all the map points, map lines, and dominant directions observed by these keyframes, respectively. We also fix some keyframes that observe these points, lines, and dominant directions but do not belong to

, denoted by

. We minimize the following cost function to estimate

:

3. Results

To evaluate the performance of the proposed method, we conduct experiments in synthesized and real-world sequences. Additionally, we compare it with other state-of-the-art approaches. All the experiments have been performed on an Intel Core i5-10400 CPU @ 2.90 GHz/16 GB RAM, without GPU parallelization. Additionally, we disable the bundle adjustment and loop closure modules of ORB-SLAM2 and SP-SLAM to make a fair comparison.

ORB-SLAM2 [

22] is a feature-point based RGB-D SLAM system, and our method is based on it. MSC-VO is an RGB-D VO system using point, line, MW constraints, and a non-decoupled pose estimation method. ManhattanSLAM is an RGB-D SLAM system using point, line, plane, MMF constraints, and decoupled pose estimation methods. RGB-D SLAM is a SLAM system using point, line, plane, MW constraints, and decoupled pose estimation methods. SP-SLAM is an RGB-D SLAM system using point, plane, and non-decoupled pose estimation method. This information is also shown in

Table 1.

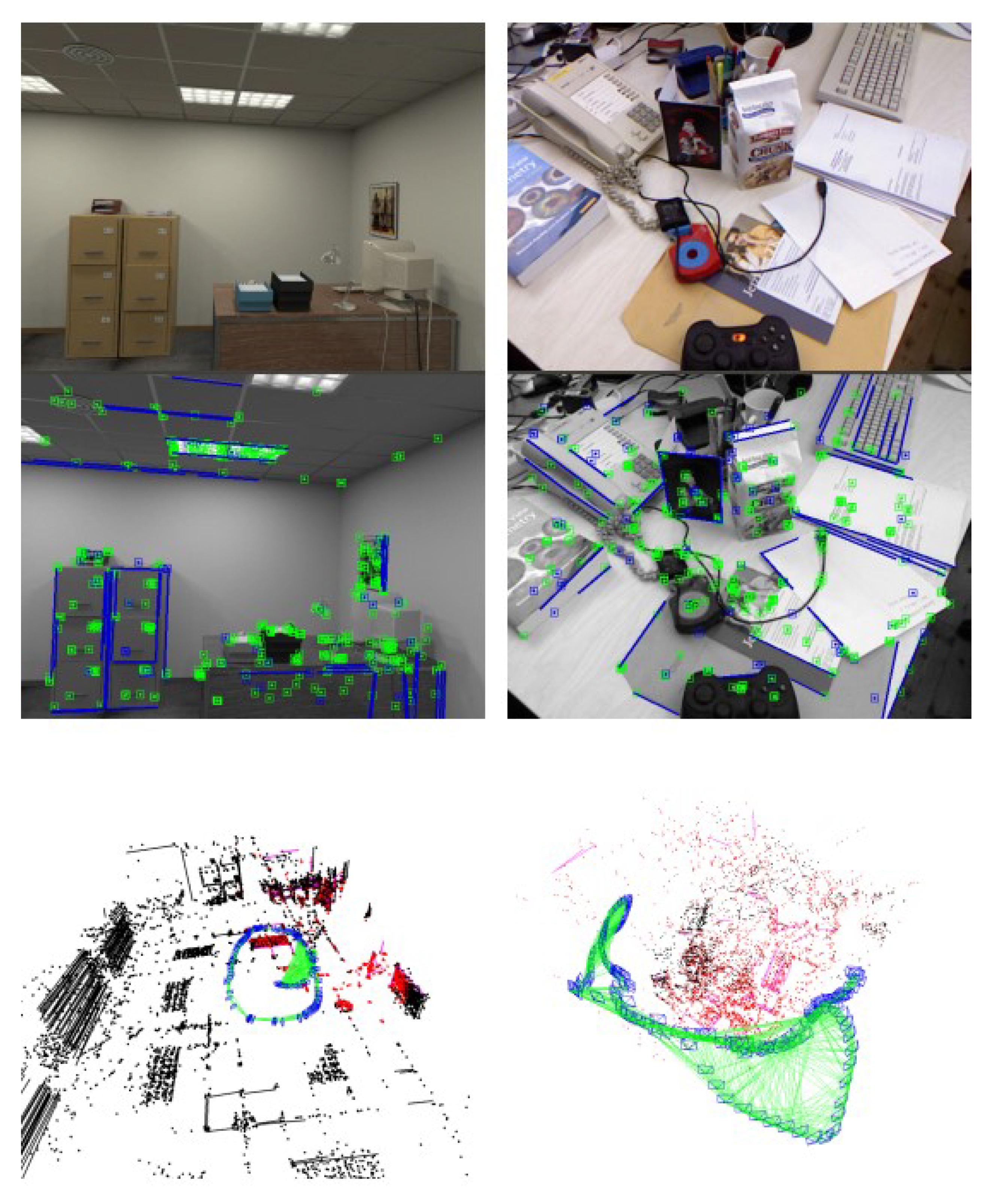

3.1. ICL-NUIM Dataset

Imperial College London and National University of Ireland Maynooth (ICL-NUIM) [

27] dataset is a synthesized dataset containing two low-texture scenes with ground truth trajectories: living room and office, as shown on the left side of

Figure 1. The scenes are rendered based on a rigid Manhattan World model. Furthermore, this dataset contains large structured areas and low-textured surfaces such as floors, walls, and ceilings.

Table 2 shows the performance of our method based on the translation root mean square error (RMSE) of the absolute trajectory error (ATE). We compared the proposed method with the state-of-the-art systems, including MSC-VO, ManhattanSLAM, RGB-D SLAM, SP-SLAM, and ORB-SLAM2. The comparison of the RMSE is also shown in

Figure 5.

Figure 6 shows the percentage of MFs detected from each sequence in the ICL-NUIM dataset.

3.2. TUM RGB-D Dataset

Technical University of Munich (TUM) RGB-D Benchmark [

28] is a popular dataset to evaluate RGB-D VO/SLAM systems. Unlike the ICL-NUIM dataset, it consists of several real-world camera sequences, which contain different indoor scenes such as cluttered scenes, and different structure and texture scenes, as shown in

Figure 7. Based on this, it can evaluate our system’s robustness and accuracy in both MW and non-MW scenes.

We selected 11 sequences in the TUM RGB-D dataset and divided them into three groups. Then we distinguished them according to the number of textures, structures and planes and whether they strictly follow the MW assumption.

Table 3 shows the differences between sequences.

Table 4 shows the performance comparison of our method based on the translation RMSE (ATE), and other systems, including MSC-VO, ManhattanSLAM, RGB-D SLAM, SP-SLAM, and ORB-SLAM2. Local map for the fr3-longoffice sequence is shown in

Figure 8. Relevant data are shown in

Figure 9,

Figure 10 and

Figure 11.

3.3. Time Consumption

The average running time of each operation of the proposed method and ManhattanSLAM can be found in

Table 5. We obtained the average results by running on seven different sequences in the TUM RGB-D benchmark.

3.4. Drift

We evaluated our system on the Texas A&M University (TAMU) RGB-D dataset [

29] to test the amount of accumulated drift and robustness over time. Unlike the ICL-NUIM and TUM RGB-D datasets, the TAMU dataset does not provide ground-truth poses and contains long indoor sequences. Due to the camera trajectory being a loop, we can calculate the Trajectory Endpoint Drift (TED) [

29], which computes the Euclidean distance between the starting and end points of the trajectory, to represent the accumulated drift. The output trajectory is shown on the right side of

Figure 12.

5. Conclusions

In this letter, we propose an accurate and efficient RGB-D Visual Odometry system leveraging the structural regularity in indoor environments, which can robustly run in general indoor scenes. This is achieved by leveraging the dominant directions extracted from parallel lines in scenes to improve localization accuracy. On the one hand, the dominant directions can be used to solve the drift-free rotation estimation in MW scenes. On the other hand, they can also provide a rotation constraint to incorporate point and lines reprojection errors to optimize the camera pose. All these contributions can improve the accuracy of the computed trajectory for our method, as shown in our experiments. Furthermore, our pipeline is designed to address the different scenes: MW scenes and non-MW scenes, which means our system can work in a wider range of environments.

The estimation accuracy of the line affects the calculation of the dominant direction. If the uncertainty of the 3D coordinates of the recovered line is too large, the calculation and matching of the dominant direction will be affected, and the relative MF cannot be matched. In the future, we would like to add a loop closure module and improve the dominant direction detection to further discard unstable observations. We will also try to implement the proposed method with a monocular camera and IMU, which is beneficial for the Manhattan Frame detection, and possibly extend it to outdoor environments.