A Novel Velocity-Based Control in a Sensor Space for Parallel Manipulators

Abstract

1. Introduction

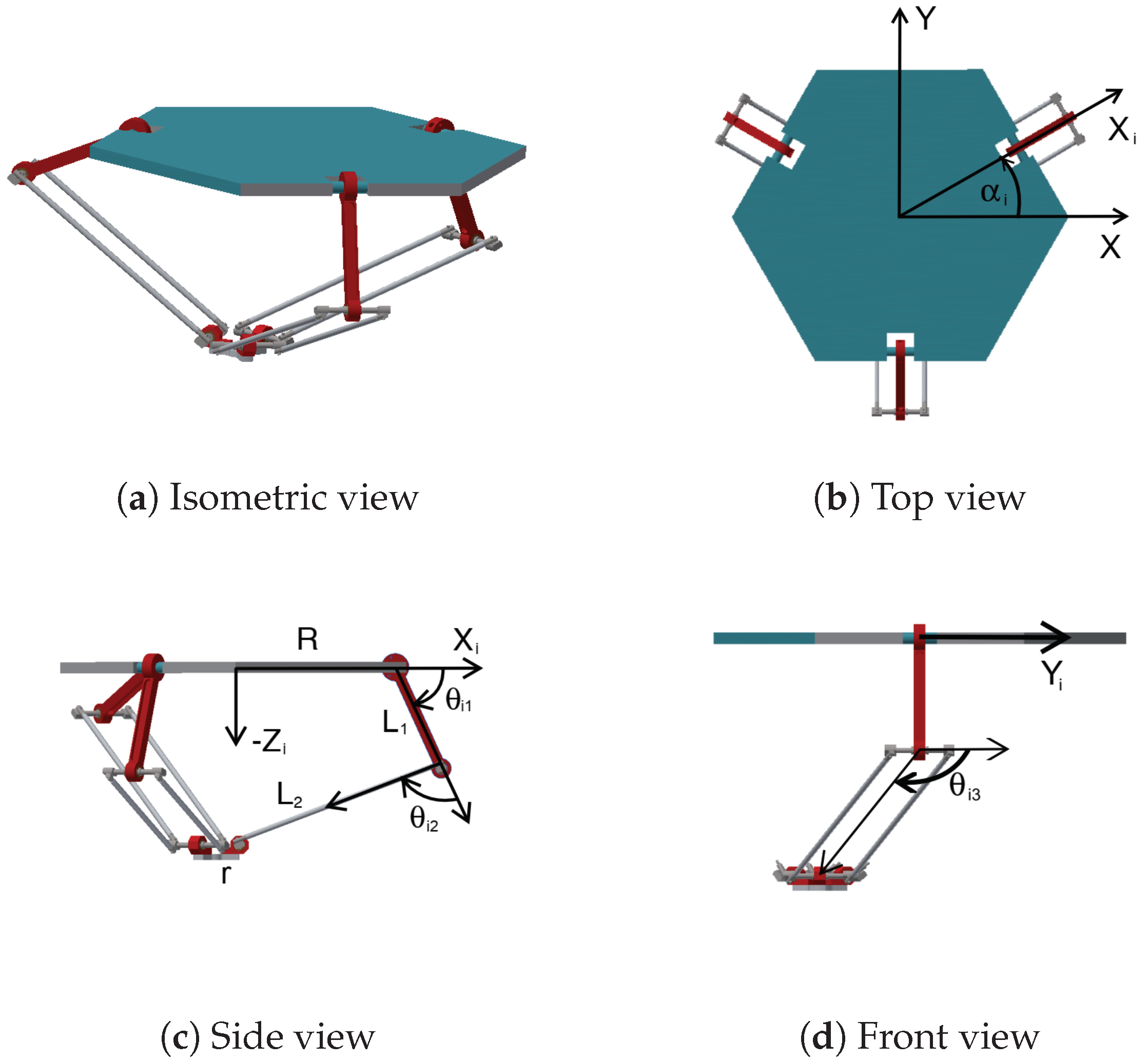

2. Delta-Type Parallel Robots

2.1. Forward and Inverse Kinematic Model of the Delta Robot

2.2. Computation of the Delta Robot’s Jacobian

3. Camera-Space Manipulation with a Linear Camera Model

Varying Weights

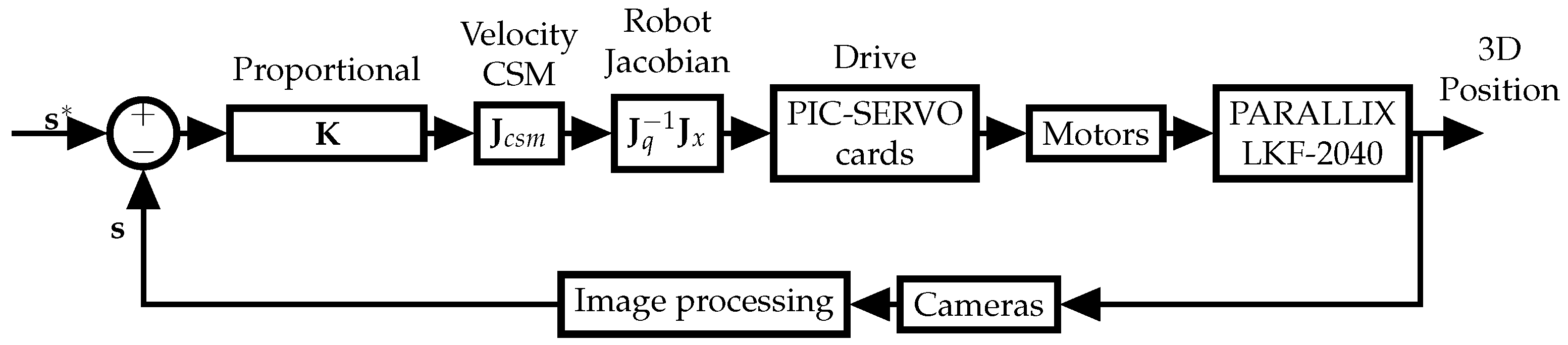

4. CSM-Based Velocity Control

Control Law

5. Materials and Methods

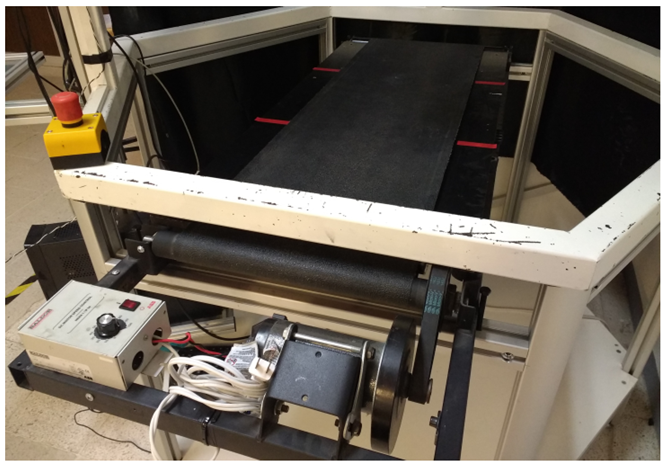

5.1. Hardware

5.2. Software

5.3. Position Measuring System

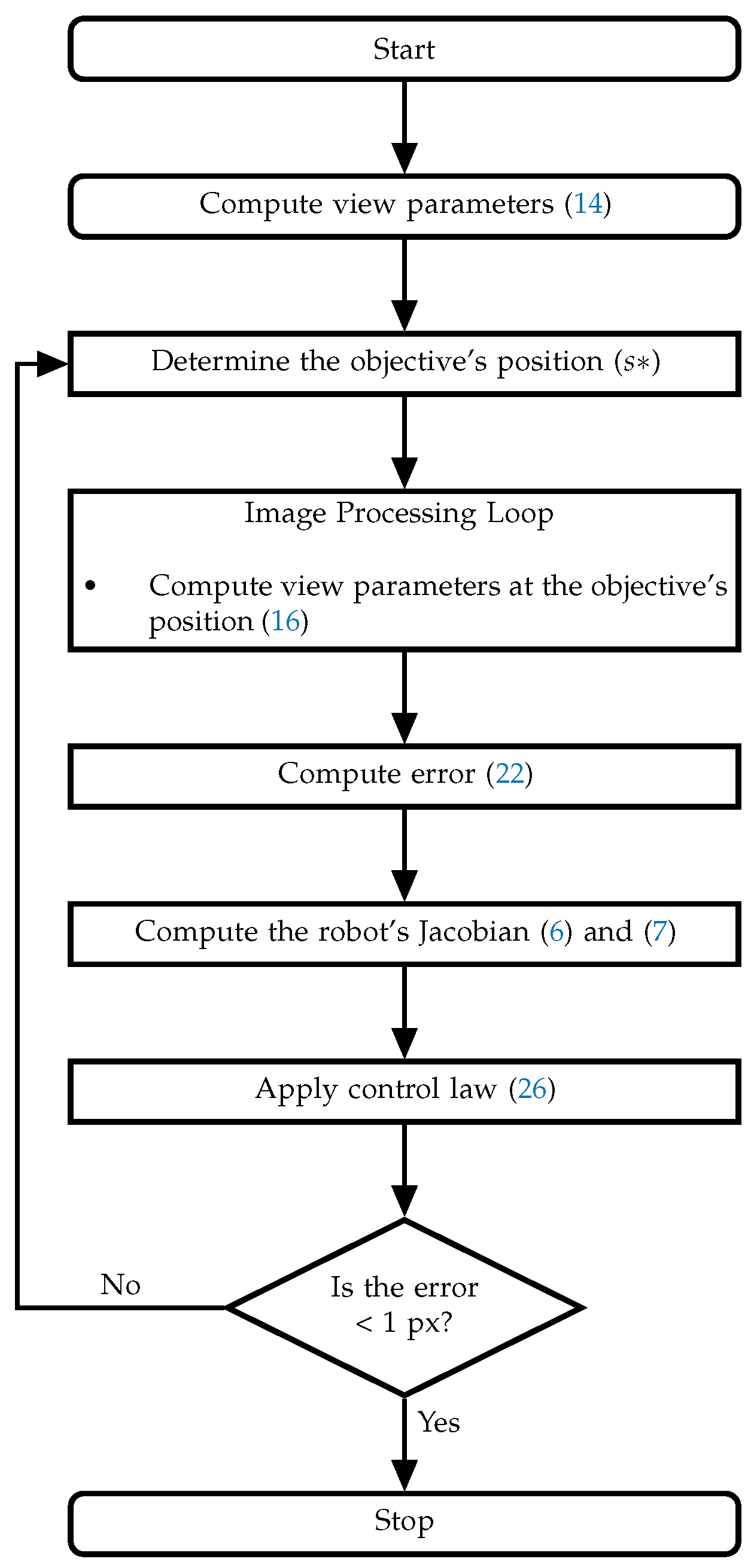

5.4. System Operation

- (1)

- The first set of experiments consisted of a series of static positioning tasks, i.e., using the proposed control, the robot tracked a static target placed randomly inside the robot’s workspace. Ten tasks were executed, each repeated 3 times, for a total of 30 trials. Once the robot’s end-effector was within 2 mm of the target position, the robot held its position for 50 control cycles, and the task’s mean squared error was computed. During these experiments, the control gain matrix () was chosen of the form where I is the identity matrix and ; this value was tuned heuristically.

- (2)

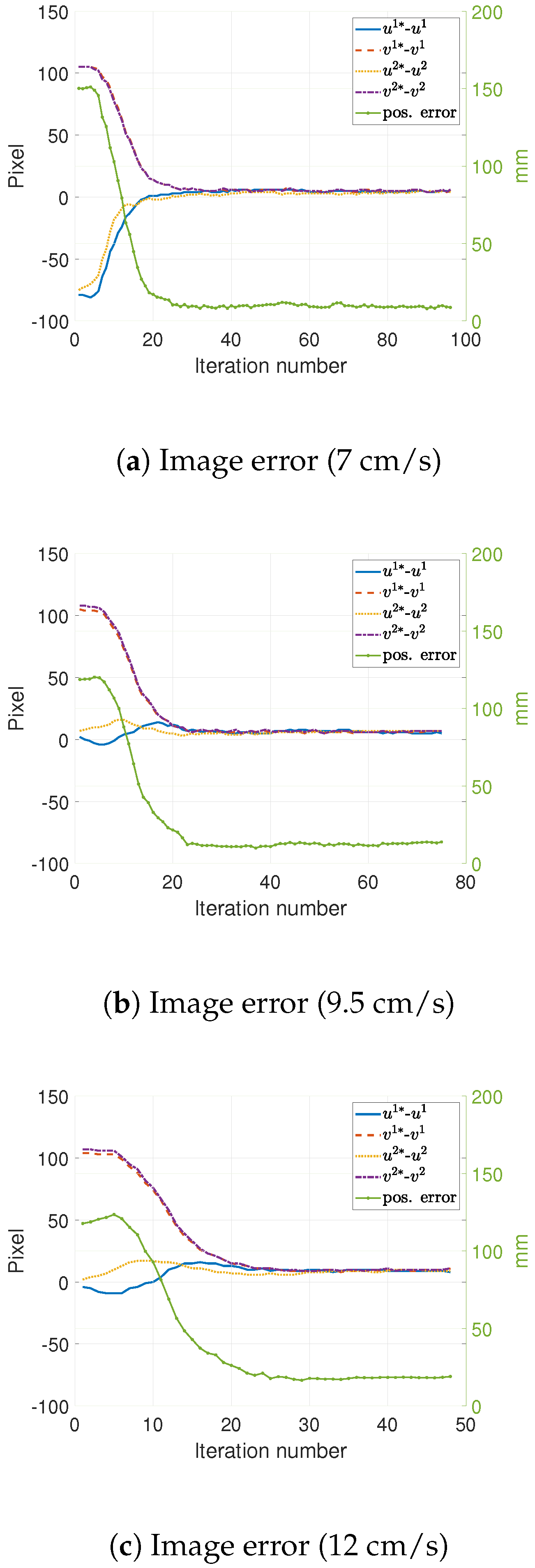

- For the second set of experiments, the target was placed on a conveyor belt (approximately in the middle of the belt, width-wise) running at a constant speed. That is, the target moved following a linear trajectory referred to as “constant speed linear trajectory”. Three different speeds were used: 7, 9.5, and 12 cm/s.Additionally, three different control gain matrices () were tested, of the form , where . The value of k was chosen as large as possible while maintaining no osculations on the task’s positioning response.For each speed, the task was performed 10 times with each of the possible matrices. Finally, each task was also carried out under two conditions regarding the target’s velocity () compensation in the control law; (1) an estimate obtained by means of a Kalman filter was used, and (2) no estimation was used (the compensation was set to zero) yielding a simpler implementation but producing a larger error. However, this error can be reduced by increasing the control gain. In each case, the robot’s tracking error was measured.

- (3)

- For the last set of experiments, the target was moved freehand along different trajectories inside the robot’s workspace. This experiment was referred to as “freehanded trajectory”. These trajectories were: a circle, a square, an eight shape, a lemniscate, a zig-zag, and a decreasing spiral. The control gain matrix () was chosen to be a diagonal matrix with a value of 2.7 on its non-zero terms.
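The effect of the two compensation conditions described in the second set of experiments can be illustrated with a deliberately simplified one-dimensional simulation. The sketch below is not the authors' implementation. It assumes a scalar proportional velocity law `u = k * error + v_ff`, a constant-velocity Kalman filter with hand-picked noise covariances, and a hypothetical function name `simulate`. The 48 ms cycle time, the 70 mm/s target speed, and the gains are borrowed from the tables in this article only to pick plausible magnitudes. The resulting errors are not comparable with the reported ones, since the actual controller operates in camera space.

```python
import numpy as np


def simulate(k, v_target, use_kalman, dt=0.048, steps=400, noise_std=0.5, seed=0):
    """Track a 1-D target moving at constant speed (all values assumed).

    Returns the RMS tracking error over the second half of the run.
    """
    rng = np.random.default_rng(seed)

    # Constant-velocity Kalman filter, state = [position, velocity].
    F = np.array([[1.0, dt], [0.0, 1.0]])   # state transition
    H = np.array([[1.0, 0.0]])              # only position is measured
    Q = np.diag([1e-3, 1e-1])               # process noise (assumed)
    R = np.array([[noise_std ** 2]])        # measurement noise
    x_hat = np.zeros(2)
    P = np.eye(2) * 1e3

    target, robot = 0.0, 0.0
    errors = []
    for _ in range(steps):
        z = target + rng.normal(0.0, noise_std)   # noisy target measurement

        # Kalman predict and update steps.
        x_hat = F @ x_hat
        P = F @ P @ F.T + Q
        S = H @ P @ H.T + R
        K_gain = P @ H.T @ np.linalg.inv(S)
        x_hat = x_hat + K_gain @ (z - H @ x_hat)
        P = (np.eye(2) - K_gain @ H) @ P

        # Feedforward term: filtered target velocity, or zero if disabled.
        v_ff = x_hat[1] if use_kalman else 0.0
        u = k * (z - robot) + v_ff                # velocity command

        robot += u * dt                           # ideal velocity-controlled robot
        target += v_target * dt
        errors.append(target - robot)

    tail = np.array(errors[steps // 2:])
    return float(np.sqrt(np.mean(tail ** 2)))


for k in (2.3, 2.7, 3.1):
    print(k, simulate(k, 70.0, False), simulate(k, 70.0, True))
```

In this idealized model the uncompensated error settles at roughly `v_target / k`, which is why a larger gain reduces it, while feeding forward the filtered velocity removes most of the lag.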

During each task, the system operated as follows:

- (1) We obtained the coordinates of the objective point in pixels and loaded the vision parameters previously estimated during the “pre-plan”.

- (2) The program entered the control cycle, with convergence declared when the error in each camera coordinate was less than 1 pixel.

- (3) The centroid of the visual marker attached to the robot’s end-effector was obtained, and the error was computed using Equation (22).

- (4)

- (5) We evaluated Equation (19) to obtain the speeds to be injected into the robot’s controller.

- (6) We repeated the cycle until convergence was obtained.
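The control cycle listed above can be sketched in a few lines. This is only an illustration under stated assumptions: Equations (19) and (22) are not reproduced in this text, so the error is taken as the difference between target and measured pixel coordinates, and a generic least-squares resolved-rate law (pseudoinverse of a constant image Jacobian times the error) stands in for the control law. The names `control_cycle`, `ToyRobot`, the affine two-camera model `A`, `b`, and the goal position are all hypothetical. Step (4) is not available in the source and is therefore not represented.

```python
import numpy as np


def control_cycle(target_px, measure_px, image_jacobian, send_velocity,
                  k=2.7, tol_px=1.0, max_cycles=500):
    """Run the loop until every camera-space error component is below tol_px."""
    J_pinv = np.linalg.pinv(image_jacobian)
    for cycle in range(max_cycles):
        error = target_px - measure_px()        # step (3): camera-space error
        if np.all(np.abs(error) < tol_px):      # step (2): convergence criterion
            return cycle, error
        # Assumed stand-in for Equation (19): proportional resolved-rate law.
        velocity = k * (J_pinv @ error)
        send_velocity(velocity)                 # step (5): command the robot
    raise RuntimeError("control cycle did not converge")


class ToyRobot:
    """Ideal velocity-controlled point seen by two affine (linear) cameras."""

    def __init__(self, A, b, dt=0.048):
        self.A, self.b, self.dt = A, b, dt
        self.x = np.zeros(3)                    # end-effector position (mm)

    def measure_px(self):
        # Stacked pixel coordinates of the marker in both cameras.
        return self.A @ self.x + self.b

    def send_velocity(self, v):
        # Integrate the commanded velocity over one control period.
        self.x = self.x + v * self.dt


# Hypothetical camera parameters: 4 pixel coordinates from a 3-D position.
A = np.array([[2.0, 0.1, 0.0],
              [0.0, 1.9, 0.3],
              [1.8, 0.0, 0.4],
              [0.1, 0.2, 2.1]])
b = np.array([320.0, 240.0, 320.0, 240.0])

robot = ToyRobot(A, b)
goal_xyz = np.array([50.0, -30.0, 20.0])
target_px = A @ goal_xyz + b                    # step (1): target in pixels

cycles, final_error = control_cycle(target_px, robot.measure_px, A,
                                    robot.send_velocity)
print(cycles, final_error, robot.x)
```

The gain of 2.7 and the 48 ms period are taken from the values reported in this article; everything else is chosen for illustration. Because the toy Jacobian is exact and constant, the error decays geometrically, which a real system with estimated vision parameters would only approximate.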

6. Results and Discussion

6.1. Results

6.2. Discussion

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

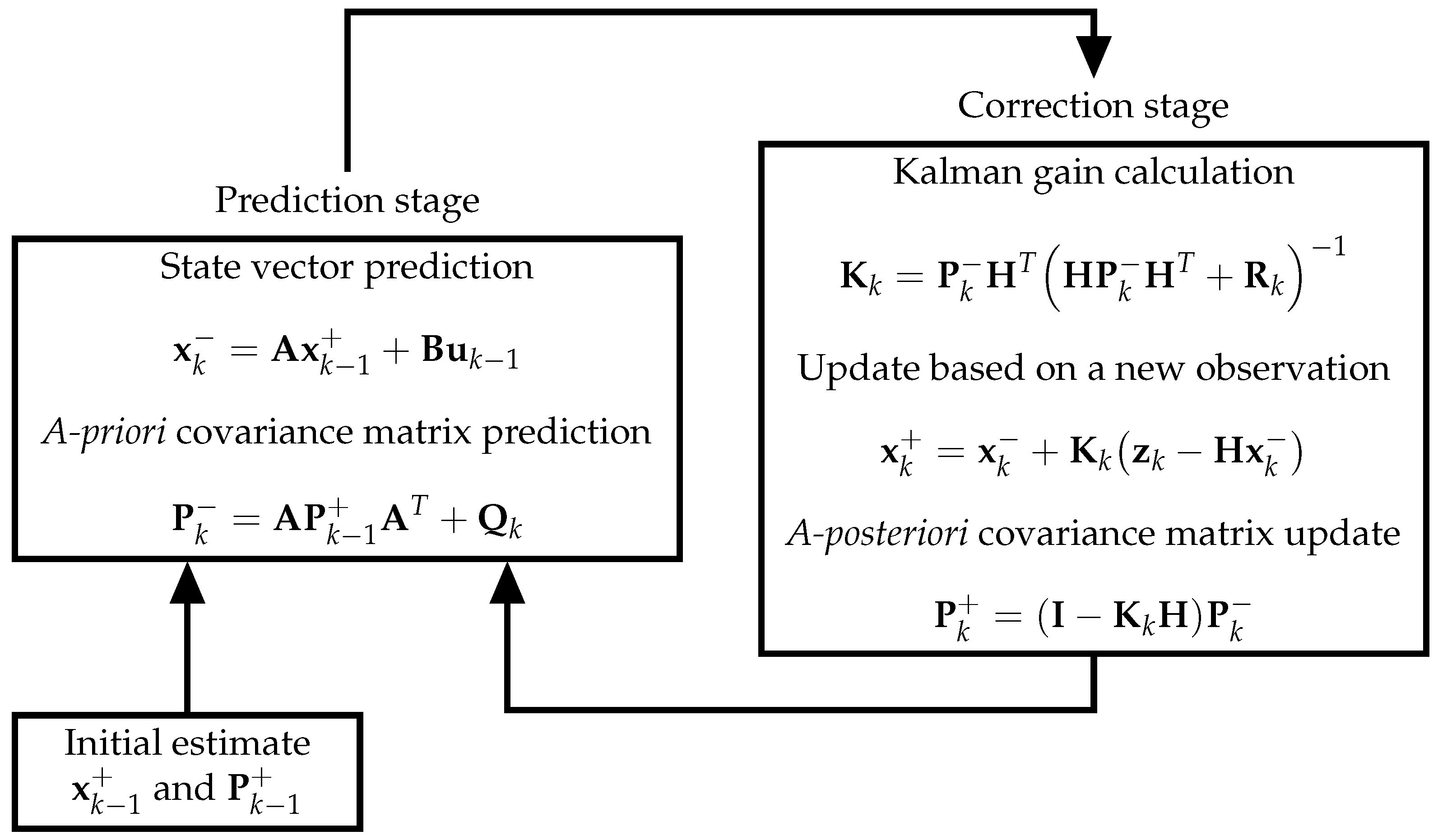

Appendix A.1. Target Velocity Estimation in Camera Space via Kalman Filter

Appendix A.2. Target Velocity Estimation

References

1. Merlet, J.P. Parallel Robots; Springer Science & Business Media: Dordrecht, The Netherlands, 2006.
2. Pandilov, Z.; Dukovski, V. Several open problems in parallel robotics. Acta Tech. Corviniensis-Bull. Eng. 2011, 4, 77.
3. Lee, L.W.; Chiang, H.H.; Li, I.H. Development and Control of a Pneumatic-Actuator 3-DOF Translational Parallel Manipulator with Robot Vision. Sensors 2019, 19, 1459.
4. Mostashiri, N.; Dhupia, J.S.; Verl, A.W.; Xu, W. A review of research aspects of redundantly actuated parallel robots for enabling further applications. IEEE/ASME Trans. Mechatronics 2018, 23, 1259–1269.
5. Bonev, I.A.; Ryu, J.; Kim, S.G.; Lee, S.K. A closed-form solution to the direct kinematics of nearly general parallel manipulators with optimally located three linear extra sensors. IEEE Trans. Robot. Autom. 2001, 17, 148–156.
6. Bentaleb, T.; Iqbal, J. On the improvement of calibration accuracy of parallel robots: Modeling and optimization. J. Theor. Appl. Mech. 2020, 58, 261–272.
7. Huynh, B.P.; Kuo, Y.L. Dynamic Hybrid Filter for Vision-Based Pose Estimation of a Hexa Parallel Robot. J. Sens. 2021, 2021, 9990403.
8. Amjad, J.; Humaidi, A.I.A. Design of Augmented Nonlinear PD Controller of Delta/Par4-Like Robot. J. Control Sci. Eng. 2019, 2019, 11.
9. Cherubini, A.; Navarro-Alarcon, D. Sensor-based control for collaborative robots: Fundamentals, challenges, and opportunities. Front. Neurorobot. 2021, 113.
10. Bonilla, I.; Reyes, F.; Mendoza, M.; González-Galván, E.J. A dynamic-compensation approach to impedance control of robot manipulators. J. Intell. Robot. Syst. 2011, 63, 51–73.
11. Chaumette, F.; Hutchinson, S. Visual servo control. I. Basic approaches. IEEE Robot. Autom. Mag. 2006, 13, 82–90.
12. Weiss, L.; Sanderson, A.; Neuman, C. Dynamic sensor-based control of robots with visual feedback. IEEE J. Robot. Autom. 1987, 3, 404–417.
13. Lin, C.J.; Shaw, J.; Tsou, P.C.; Liu, C.C. Vision servo based Delta robot to pick-and-place moving parts. In Proceedings of the 2016 IEEE International Conference on Industrial Technology (ICIT), Taipei, Taiwan, 14–17 March 2016; pp. 1626–1631.
14. Xiaolin, R.; Hongwen, L. Uncalibrated Image-Based Visual Servoing Control with Maximum Correntropy Kalman Filter. IFAC-PapersOnLine 2020, 53, 560–565.
15. Garrido, R.; Trujano, M.A. Stability Analysis of a Visual PID Controller Applied to a Planar Parallel Robot. Int. J. Control Autom. Syst. 2019, 17, 1589–1598.
16. Skaar, S.B.; Brockman, W.H.; Hanson, R. Camera-space manipulation. Int. J. Robot. Res. 1987, 6, 20–32.
17. González-Galván, E.J.; Cruz-Ramírez, S.R.; Seelinger, M.J.; Cervantes-Sánchez, J.J. An efficient multi-camera, multi-target scheme for the three-dimensional control of robots using uncalibrated vision. Robot. Comput.-Integr. Manuf. 2003, 19, 387–400.
18. González, A.; Gonzalez-Galvan, E.J.; Maya, M.; Cardenas, A.; Piovesan, D. Estimation of camera-space manipulation parameters by means of an extended Kalman filter: Applications to parallel robots. Int. J. Adv. Robot. Syst. 2019, 16, 1729881419842987.
19. Rendón-Mancha, J.M.; Cárdenas, A.; García, M.A.; Lara, B. Robot positioning using camera-space manipulation with a linear camera model. IEEE Trans. Robot. 2010, 26, 726–733.
20. Coronado, E.; Maya, M.; Cardenas, A.; Guarneros, O.; Piovesan, D. Vision-based Control of a Delta Parallel Robot via Linear Camera-Space Manipulation. J. Intell. Robot. Syst. 2017, 85, 93–106.
21. Huynh, P.; Arai, T.; Koyachi, N.; Sendai, T. Optimal velocity based control of a parallel manipulator with fixed linear actuators. In Proceedings of the 1997 IEEE/RSJ International Conference on Intelligent Robot and Systems. Innovative Robotics for Real-World Applications. IROS’97, Grenoble, France, 11 September 1997; Volume 2, pp. 1125–1130.
22. Chavez-Romero, R.; Cardenas, A.; Maya, M.; Sanchez, A.; Piovesan, D. Camera Space Particle Filter for the Robust and Precise Indoor Localization of a Wheelchair. J. Sens. 2016, 2016, 1–11.
23. Lopez-Lara, J.G.; Maya, M.E.; González, A.; Cardenas, A.; Felix, L. Image-based control of Delta parallel robots via enhanced LCM-CSM to track moving objects. Ind. Robot. Int. J. Robot. Res. Appl. 2020, 47, 559–567.
24. Zelenak, A.; Peterson, C.; Thompson, J.; Pryor, M. The advantages of velocity control for reactive robot motion. In Proceedings of the ASME 2015 Dynamic Systems and Control Conference, DSCC 2015, Columbus, OH, USA, 28–30 October 2015; p. V003T43A003.
25. Duchaine, V.; Gosselin, C.M. General Model of Human-Robot Cooperation Using a Novel Velocity Based Variable Impedance Control. In Proceedings of the Second Joint EuroHaptics Conference and Symposium on Haptic Interfaces for Virtual Environment and Teleoperator Systems (WHC’07), Tsukuba, Japan, 22–24 March 2007; pp. 446–451.
26. Xie, Y.; Wang, W.; Zhao, C.; Skaar, S.B.; Wang, Q. A Differential Evolution Approach for Camera Space Manipulation. In Proceedings of the 2020 2nd World Symposium on Artificial Intelligence (WSAI), Guangzhou, China, 27–29 June 2020; pp. 103–107.
27. Wang, Y.; Wang, Y.; Liu, Y. Catching Object in Flight Based on Trajectory Prediction on Camera Space. In Proceedings of the 2018 IEEE International Conference on Real-Time Computing and Robotics (RCAR), Kandima, Maldives, 1–5 August 2018; pp. 304–309.
28. Xue, T.; Liu, Y. Trajectory Prediction of a Flying Object Based on Hybrid Mapping Between Robot and Camera Space. In Proceedings of the 2018 IEEE International Conference on Real-Time Computing and Robotics (RCAR), Kandima, Maldives, 1–5 August 2018; pp. 567–572.
29. Sekkat, H.; Tigani, S.; Saadane, R.; Chehri, A. Vision-Based Robotic Arm Control Algorithm Using Deep Reinforcement Learning for Autonomous Objects Grasping. Appl. Sci. 2021, 11, 7917.
30. Xin, J.; Cheng, H.; Ran, B. Visual servoing of robot manipulator with weak field-of-view constraints. Int. J. Adv. Robot. Syst. 2021, 18, 1729881421990320.
31. Mok, C.; Baek, I.; Cho, Y.S.; Kim, Y.; Kim, S.B. Pallet Recognition with Multi-Task Learning for Automated Guided Vehicles. Appl. Sci. 2021, 11, 11808.
32. Singh, A.; Kalaichelvi, V.; Karthikeyan, R. A survey on vision guided robotic systems with intelligent control strategies for autonomous tasks. Cogent Eng. 2022, 9, 2050020.
33. Clavel, R. DELTA, a fast robot with parallel geometry. In Proceedings of the 18th International Symposium on Industrial Robots, Lausanne, Switzerland, 26–28 April 1988; Burckhardt, C.W., Ed.; Springer: New York, NY, USA, 1988; pp. 91–100.
34. Balmaceda-Santamaría, A.L.; Castillo-Castaneda, E.; Gallardo-Alvarado, J. A Novel Reconfiguration Strategy of a Delta-Type Parallel Manipulator. Int. J. Adv. Robot. Syst. 2016, 13, 15.
35. Maya, M.; Castillo, E.; Lomelí, A.; González-Galván, E.; Cárdenas, A. Workspace and payload-capacity of a new reconfigurable Delta parallel robot. Int. J. Adv. Robot. Syst. 2013, 10, 56.
36. López, M.; Castillo, E.; García, G.; Bashir, A. Delta robot: Inverse, direct, and intermediate Jacobians. Proc. Inst. Mech. Eng. Part C J. Mech. Eng. Sci. 2006, 220, 103–109.
37. Hartley, R.; Zisserman, A. Multiple View Geometry in Computer Vision; Cambridge University Press: Cambridge, UK, 2003.
38. Liu, Y.; Shi, D.; Skaar, S.B. Robust industrial robot real-time positioning system using VW-camera-space manipulation method. Ind. Robot. Int. J. 2014, 41, 70–81.
39. Galassi, M.; Theiler, J. GNU Scientific Library. 1996. Available online: https://www.gnu.org/software/gsl/ (accessed on 1 June 2017).
40. Bradski, G. The OpenCV Library. Dr. Dobb’s Journal of Software Tools. 2000. Available online: http://opencv.org/ (accessed on 1 July 2016).
41. Angel, L.; Traslosheros, A.; Sebastian, J.M.; Pari, L.; Carelli, R.; Roberti, F. Vision-Based Control of the RoboTenis System. In Recent Progress in Robotics: Viable Robotic Service to Human: An Edition of the Selected Papers from the 13th International Conference on Advanced Robotics; Lee, S., Suh, I.H., Kim, M.S., Eds.; Springer: Berlin/Heidelberg, Germany, 2008; pp. 229–240.
42. Bonilla, I.; Mendoza, M.; González-Galván, E.J.; Chávez-Olivares, C.; Loredo-Flores, A.; Reyes, F. Path-tracking maneuvers with industrial robot manipulators using uncalibrated vision and impedance control. IEEE Trans. Syst. Man Cybern. Part C Appl. Rev. 2012, 42, 1716–1729.
43. Özgür, E.; Dahmouche, R.; Andreff, N.; Martinet, P. High speed parallel kinematic manipulator state estimation from legs observation. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems, Tokyo, Japan, 3–7 November 2013; pp. 424–429.
44. Chen, G.; Peng, R.; Wang, Z.; Zhao, W. Pallet recognition and localization method for vision guided forklift. In Proceedings of the 2012 8th International Conference on Wireless Communications, Networking and Mobile Computing, Shanghai, China, 21–23 September 2012; Volume 2.
45. Lu, X.; Liu, M. Optimal Design and Tuning of PID-Type Interval Type-2 Fuzzy Logic Controllers for Delta Parallel Robots. Int. J. Adv. Robot. Syst. 2016, 13, 96.

| Statistic | Error (mm) |
|---|---|
| Average | 1.086 |
| Max. | 1.36 |
| Min. | 0.76 |
| Std. Dev. | 0.195 |

| Conveyor Speed | Gain k | RMS Tracking Error (mm) |
|---|---|---|
| 7 cm/s | 2.3 | 11.2137 |
| 7 cm/s | 2.7 | 9.6279 |
| 7 cm/s | 3.1 | 8.8899 |
| 9.5 cm/s | 2.3 | 14.8628 |
| 9.5 cm/s | 2.7 | 12.3435 |
| 9.5 cm/s | 3.1 | 11.7613 |
| 12 cm/s | 2.3 | 27.8823 |
| 12 cm/s | 2.7 | 20.9840 |
| 12 cm/s | 3.1 | 18.6458 |

| Trajectory | RMS Tracking Error (mm) | Final Error (mm) |
|---|---|---|
| Circle | 7.521 | 1.44 |
| Square | 10.471 | 1.41 |
| Decreasing spiral | 9.021 | 1.23 |
| Lemniscate | 9.661 | 1.16 |
| Zig-zag | 10.788 | 1.29 |

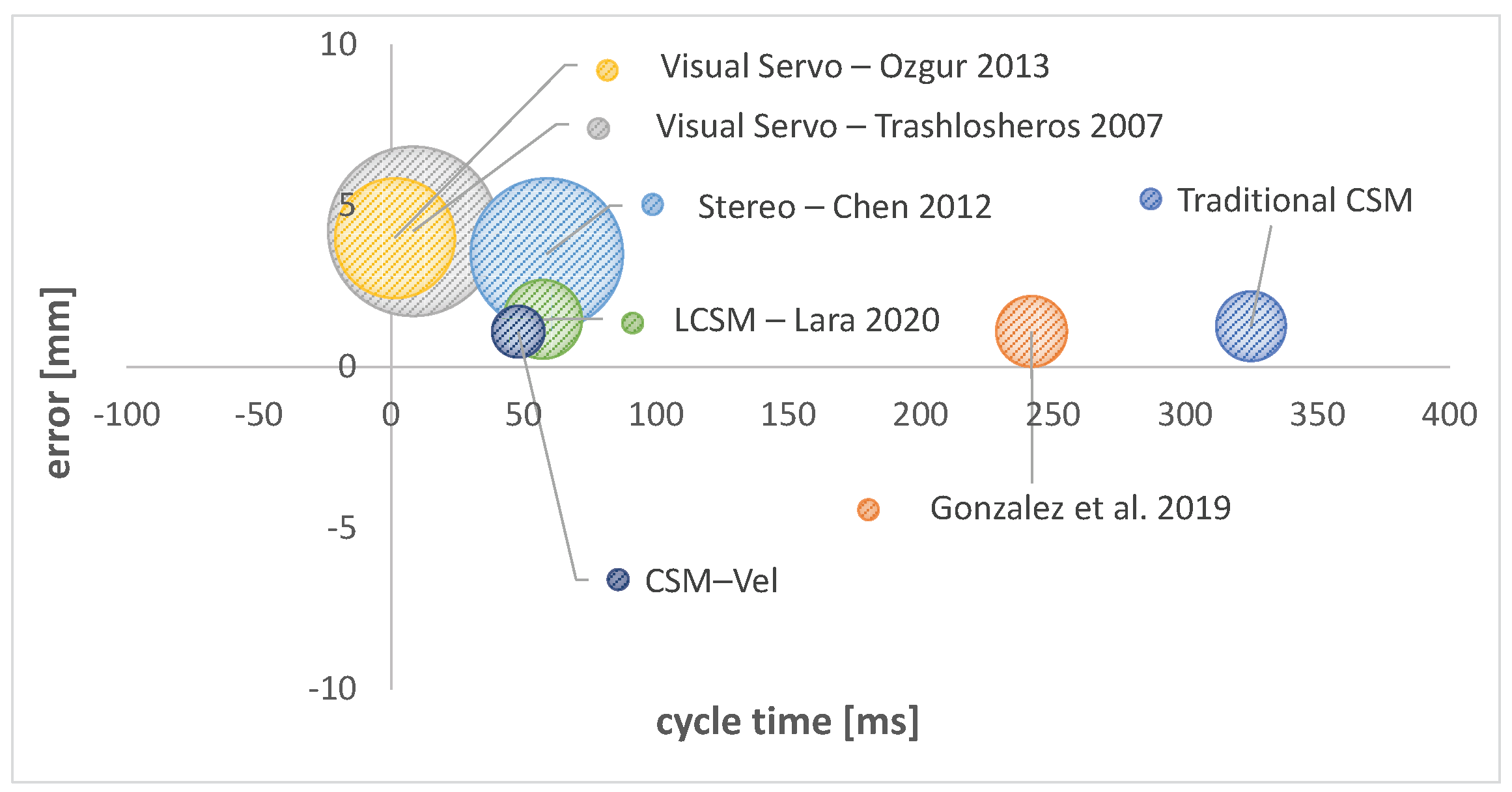

| Control Cycle Time (ms) | Static Error (mm) | Std. Dev. (mm) | Tracking Error (mm) @ Velocity (mm/s) | Method |
|---|---|---|---|---|
| 325 | 1.26 | 0.34 | NA | Traditional CSM |
| 242 | 1.11 | 0.35 | NA | Gonzalez et al. [18] |
| 8.33 | 4.21 | 2 | 20@800 | Visual Servo: Traslosheros [41] |
| NA | 0.4 | 0.21 | NA | CSM: Bonilla [42] |
| 1.4 | 4 | 1 | NA | Visual Servo: Özgür [43] |
| 58.8 | 3.5 | 1.6 | NA | Stereo: Chen [44] |
| 57.3 | 1.48 | 0.43 | NA | LCSM: Lara et al. [23] |
| 48 | 1.09 | 0.19 | 8.89@70, 11.76@95, 18.65@120 | CSM-Vel |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Loredo, A.; Maya, M.; González, A.; Cardenas, A.; Gonzalez-Galvan, E.; Piovesan, D. A Novel Velocity-Based Control in a Sensor Space for Parallel Manipulators. Sensors 2022, 22, 7323. https://doi.org/10.3390/s22197323
