AI Based Digital Twin Model for Cattle Caring

Abstract

:1. Introduction

2. Related Work

3. Data Mining and Analysing

3.1. Data Processing

3.1.1. Data Segmentation

3.1.2. Data Cleaning

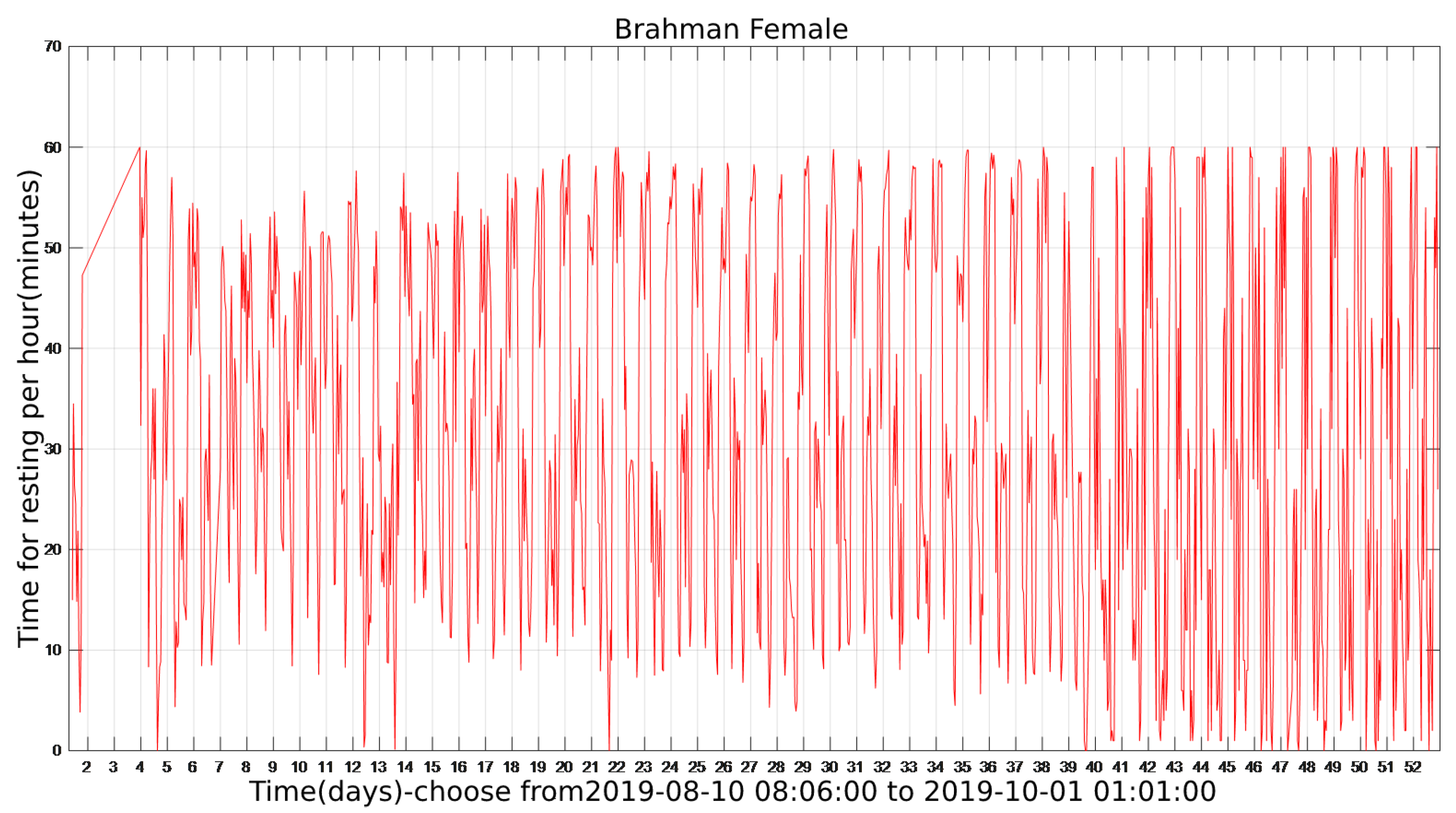

3.2. The State of Cattle throughout the Sampling Period

3.3. The Average 24 h State of Cattle

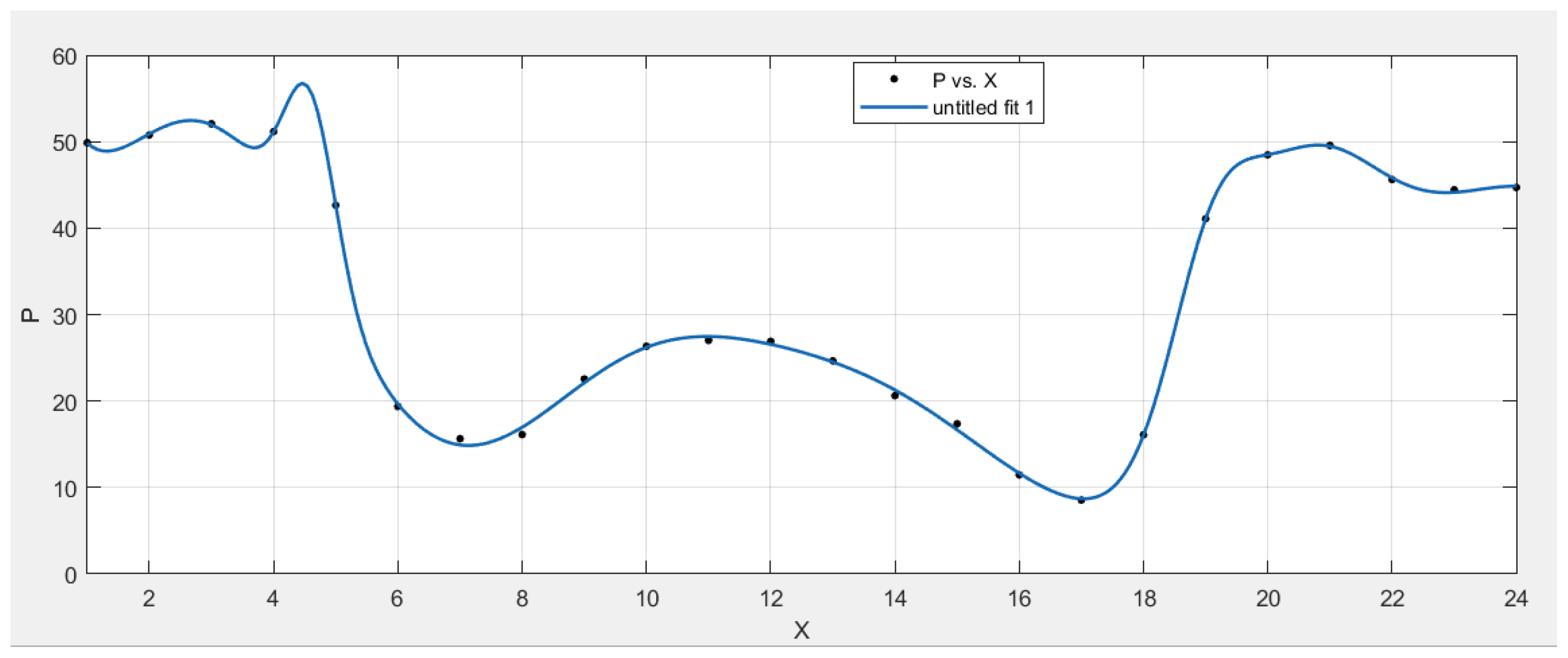

3.4. Fitting Curve for the Average State Period (24 h)

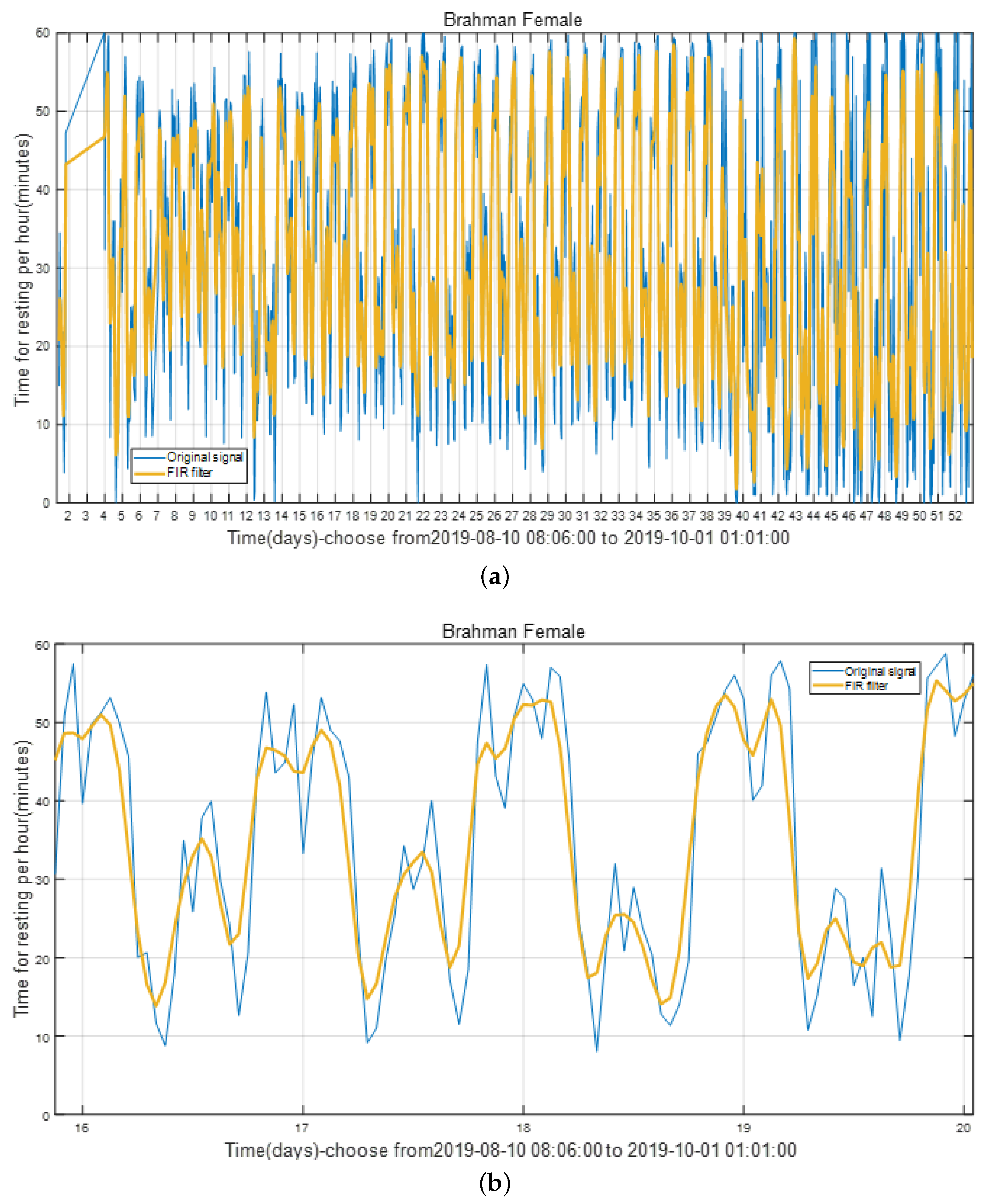

3.5. Noise Reduction Using Low-Pass Finite Impulse Response (FIR) Filter

4. Prediction Based on LSTM Model

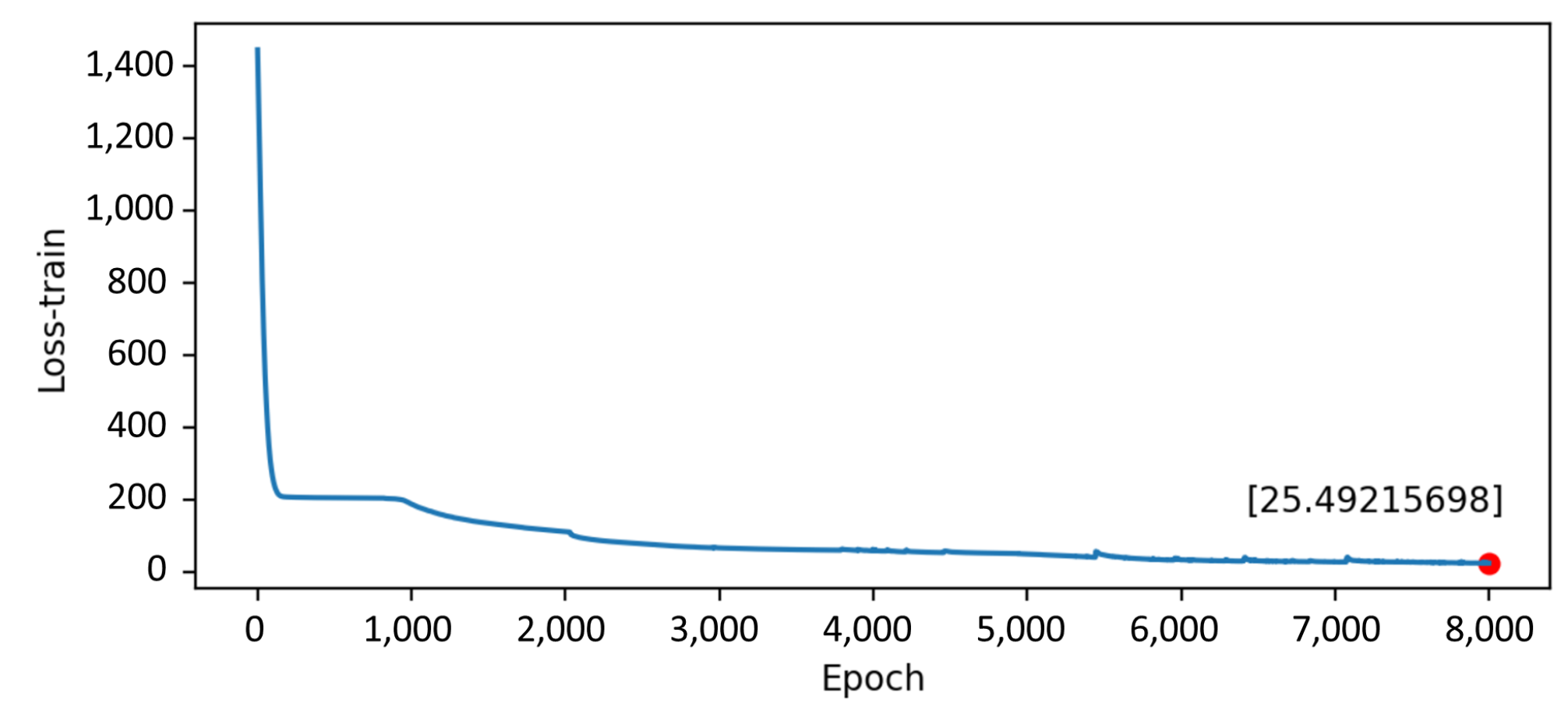

4.1. Build the LSTM Model of the Cattle State

4.2. Using the LSTM Model to Predict the State of Cattle

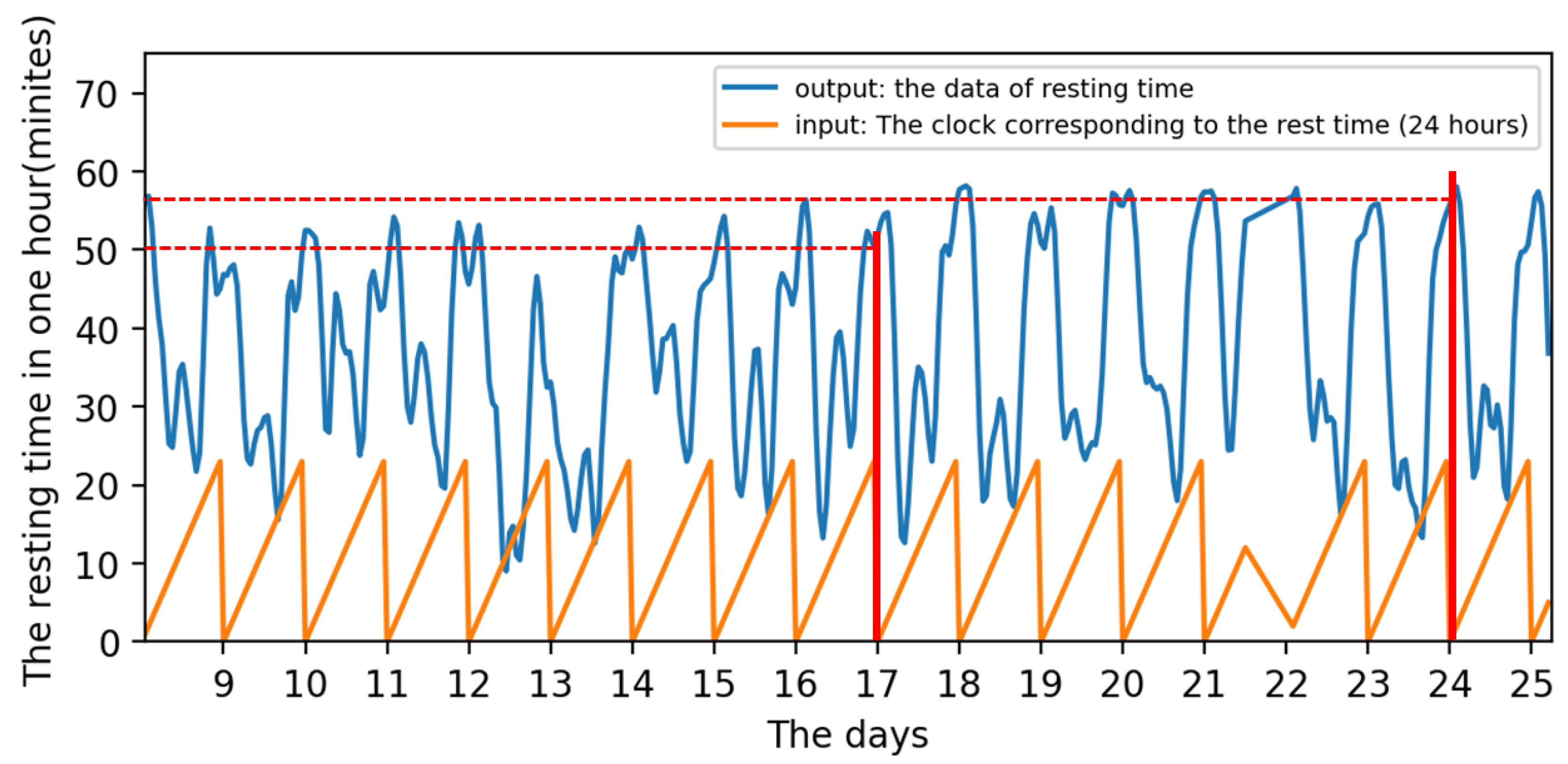

- Input: The number of hours on the clock each day (24 h).

- Output: The resting time during this hour (e.g., The resting time at 7:00 means that the resting time during one hour from 7:00 to 7:59).

- Training:Both the input and output data are periodicities. The distinction is that the input in this cycle has a set value and trend, whereas the output in each cycle has a varied value. For example, the input is 0 at 0:00 a.m. on Day 17th and 0:00 a.m. on Day 24th, as shown by the two red lines in Figure 11, but the output is different. In other words, the same input might result in multiple outcomes regardless of time. Although the input is the same, the input’s matching time series is not. As a result, when a single input correlates to numerous outputs in a time series, the LSTM model can successfully handle the problem.

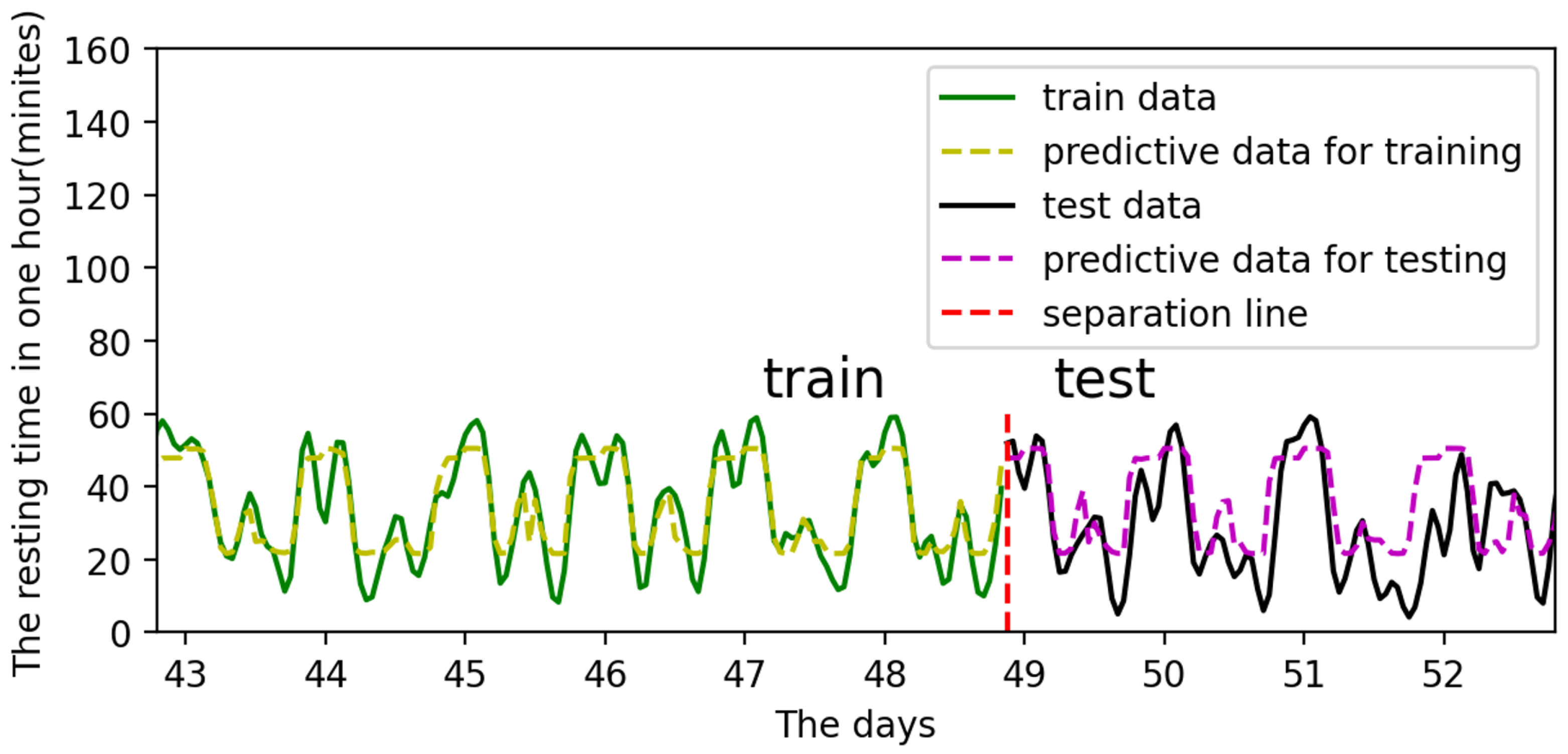

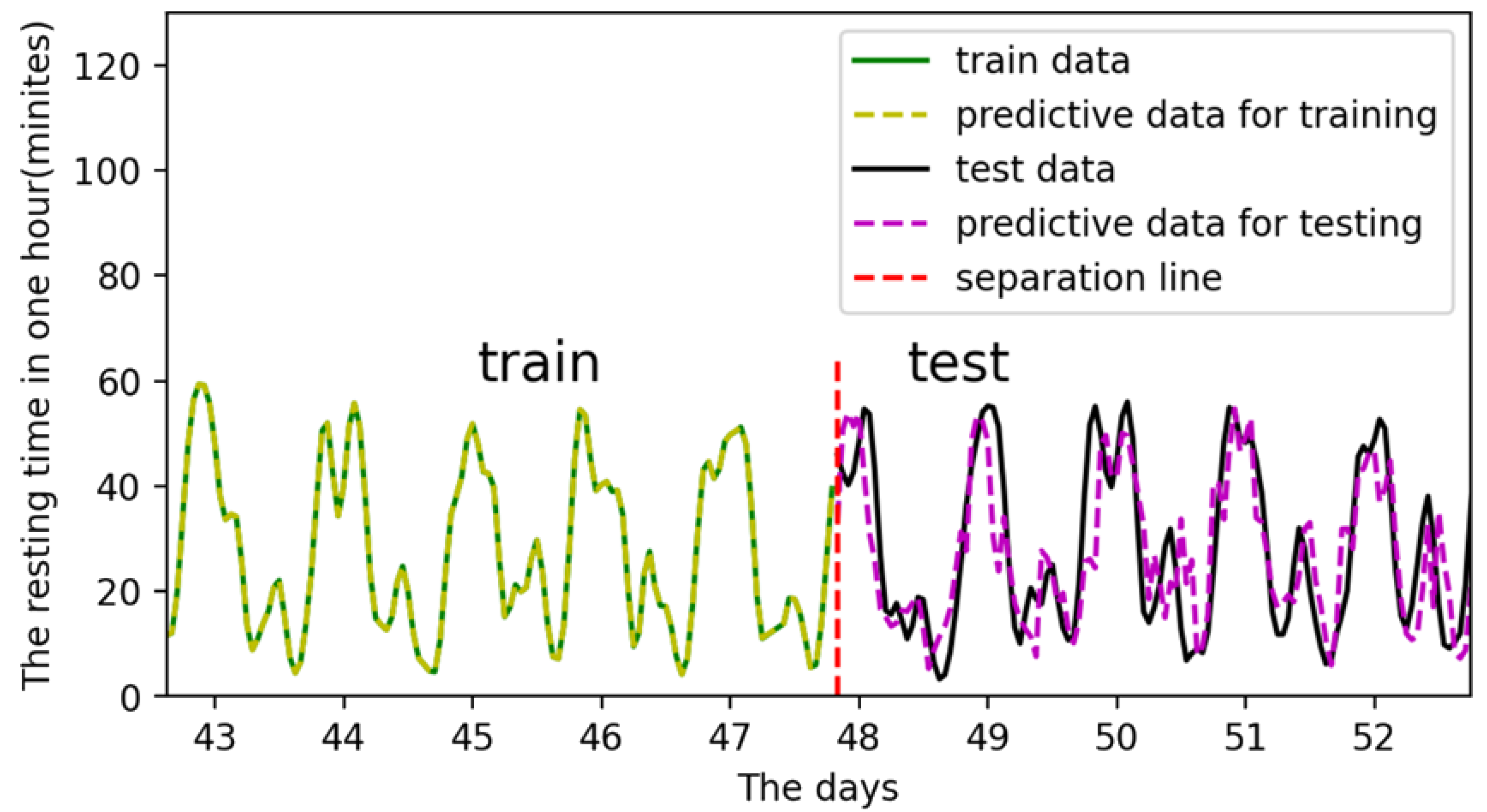

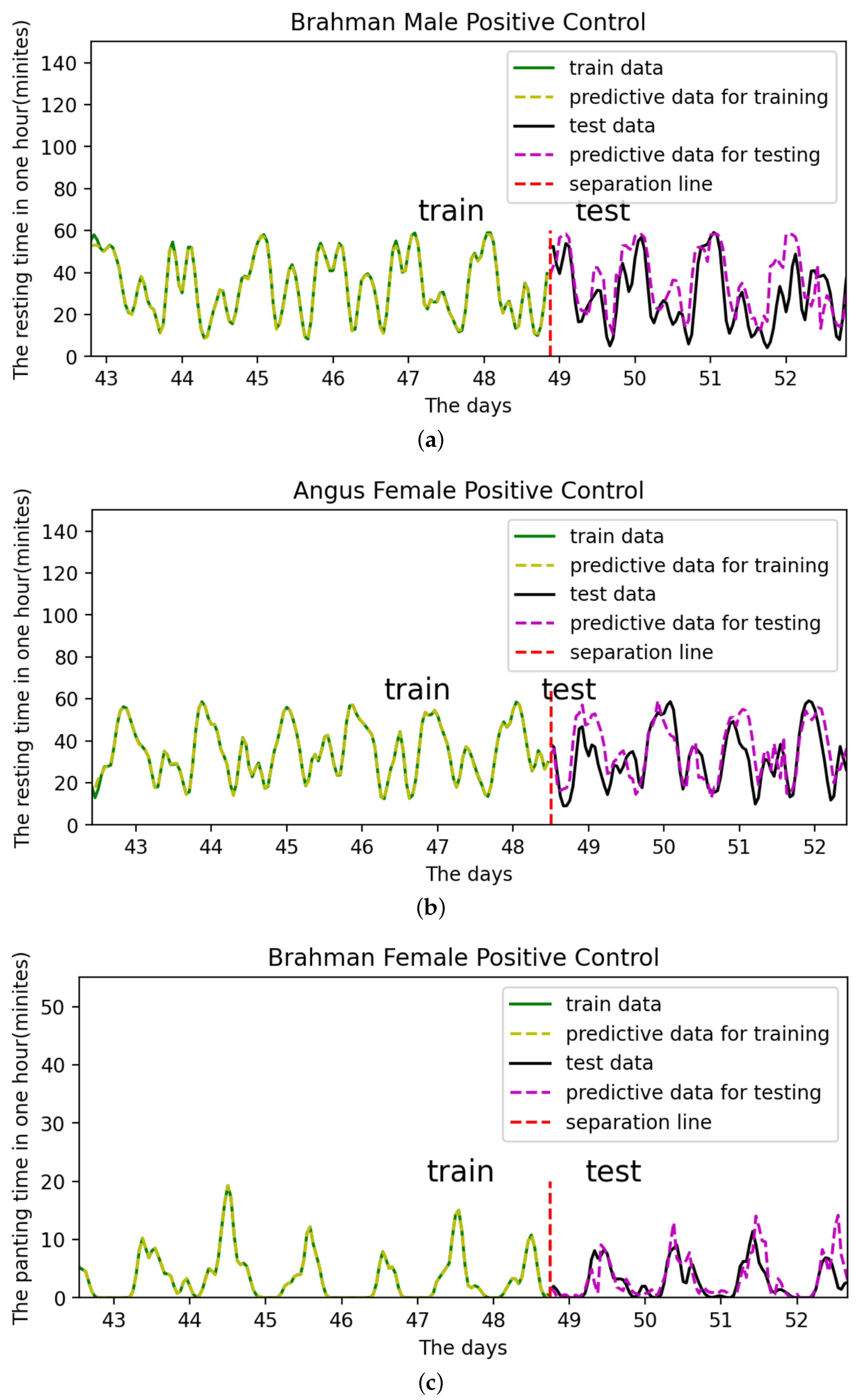

- Testing and prediction:In total, 90% of the data is used for training, and 10% for prediction and testing. For example, the input data sets for training are through , while the data sets for testing are through . The training outcomes are depicted in Figure 12.

4.3. Parameter Optimization

- Hidden units size: 4, 8, 16, 32, 64, 128, 256.

- The number of LSTM layers: 1, 2, 3, 4, 5, 6, 7.

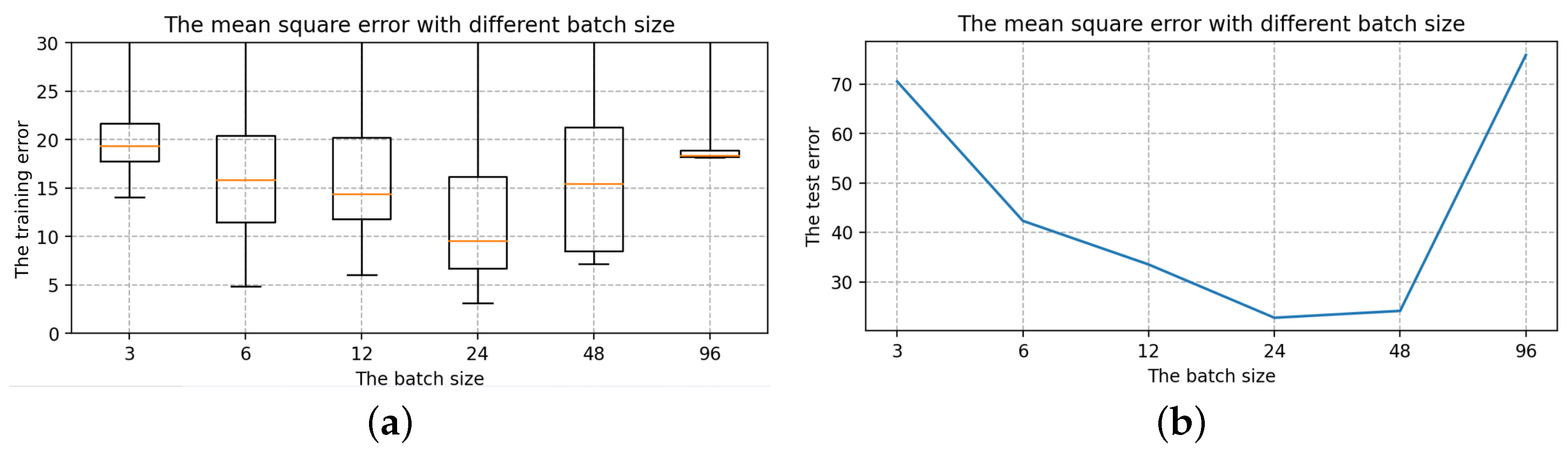

- The batch size: 3, 6, 12, 24, 48, 96.

- The epoch size: 100, 500, 1000, 2000, 5000, 10,000, 20,000.

- Selection of the number of LSTM layersThe number of hidden units is 16, the batch size is 24, and the epoch size is 2000, all of which are randomly chosen. Only the number of layers in the LSTM is modified with the other parameters fixed: 1, 2, 3, 4, 5, 6, 7. The box diagram for the mean square deviation in the model learning process is shown in Figure 14.The top line and bottom line represent the edge’s maximum and minimum values, respectively. The upper quartile is represented by the box’s upper edge, while the box’s lower edge represents the lower quartile. The orange line represents the median. Comparing the seven box charts, increasing the number of layers has a minor impact on the mean square error of model training [33].When the number of layers is 5, 6 and 7, the error of the LSTM model will be stabilized to a fixed value immediately after a short training. As shown in Figure 14, the box plot has many outliers (that is, large outliers, black circles in the figure), and the median, upper quartile, and lower quartile overlap. However, in terms of model performance, using more LSTM layers, the running speed will be slower and it becomes more complex, and the result of the model operation is affected [34,35]. The loss error of the test set is positively correlated with that of the training set, and it is the smallest when the number of layers is 2. As a result, two layers of LSTM are best for this model.

- Selection of the hidden units sizeTo determine the size of the hidden units, we keep the batch size and epoch size unchanged and run the LSTM model with different hidden units size, i.e., 4, 8, 16, 32, 64, 128, 256. The box diagram of the mean square is shown in Figure 15. In terms of error size and ultimate training effect, the choice of 128 hidden units is the best for training the data, with the majority of the mean square error values falling below 25, and the loss error of the test set is the smallest.

- Selection of the batch sizeThe batch size, which can be 3, 6, 12, 24, 48, or 96, is altered when using two layers of LSTM with 128 hidden units. The box diagram is shown in Figure 16. The batch size refers to the number of samples fed into the model at once and divides the original data set into batch size data sets for independent training. This method helps to speed up training while also consuming less memory [36]. To some extent, batch size training can help to prevent the problem of overfitting [37]. As a result, when building the model, an acceptable batch size should be chosen. When the batch size is 24, the minimum value of the produced mean square deviation data set is the smallest in terms of minimum value and median, as well as the test error value.

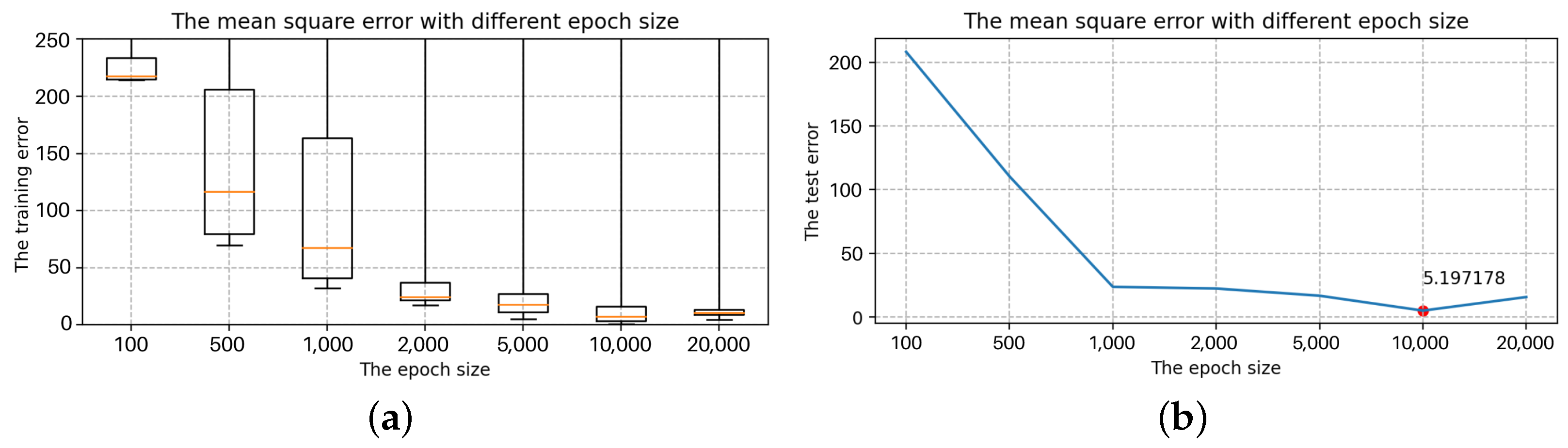

- Selection of the epoch sizeSelect two layers of LSTM with 128 hidden units and the batch size is 24, but the epoch size can be any of 100, 500, 1000, 2000, 5000, 10,000, or 20,000. Figure 17 shows a box diagram for the mean square deviation in the model learning process.The epoch size is the number of times the learning algorithm works in the entire training data set. An epoch means that each sample in the training data set has the opportunity to update internal model parameters [38]. In theory, the more training sessions there are, the better the fit and the lower the error. In practice, however, overfitting occurs when the epoch size exceeds a specific threshold, causing the training outcomes to deteriorate [39]. The epoch size of 100, 500, 1000, 2000, 5000, 10,000, and 20,000 is chosen in Figure 17. The inaccuracy rapidly decreases and approaches zero as the epoch size increases from 100 to 10,000. When the epoch size increases to 20,000, the error is still tiny, but it is greater than when the epoch size is 10,000, indicating an overfitting occurrence. Therefore, the model with a 10,000 epoch size has the best effect.

5. Results and Analysis

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| AI | Artificial Intelligence |

| DL | Deep Learning |

| LSTM | Long Short-Term Memory Network |

| RNN | Recurrent Neural Network |

| FIR | Finite impulse response |

| IIR | Infinite Impulse Response |

References

- Haag, S.; Anderl, R. Digital twin–proof of concept. Manuf. Lett. 2018, 15, 64–66. [Google Scholar] [CrossRef]

- Schleich, B.; Anwer, N.; Mathieu, L.; Wartzack, S. Shaping the digital twin for design and production engineering. CIRP Ann. 2017, 66, 141–144. [Google Scholar] [CrossRef]

- Pargmann, H.; Euhausen, D.; Faber, R. Intelligent big data processing for wind farm monitoring and analysis based on cloud-technologies and digital twins: A quantitative approach. In Proceedings of the 2018 IEEE 3rd International Conference on Cloud Computing and Big Data Analysis (ICCCBDA), Chengdu, China, 20–22 April 2018; pp. 233–237. [Google Scholar]

- Verdouw, C.; Kruize, J.W. Digital twins in farm management: Illustrations from the FIWARE accelerators SmartAgriFood and Fractals. In Proceedings of the 7th Asian-Australasian Conference on Precision Agriculture Digital, Hamilton, New Zealand, 16–19 October 2017; pp. 1–5. [Google Scholar]

- Grieves, M.; Vickers, J. Digital twin: Mitigating unpredictable, undesirable emergent behavior in complex systems. In Transdisciplinary Perspectives on Complex Systems; Springer: Berlin/Heidelberg, Germany, 2017; pp. 85–113. [Google Scholar]

- Boschert, S.; Rosen, R. Digital twin—The simulation aspect. In Mechatronic Futures; Springer: Berlin/Heidelberg, Germany, 2016; pp. 59–74. [Google Scholar]

- Yang, F.; Wang, K.; Han, Y.; Qiao, Z. A cloud-based digital farm management system for vegetable production process management and quality traceability. Sustainability 2018, 10, 4007. [Google Scholar] [CrossRef]

- Tao, F.; Zhang, M.; Liu, Y.; Nee, A.Y. Digital twin driven prognostics and health management for complex equipment. Cirp Ann. 2018, 67, 169–172. [Google Scholar] [CrossRef]

- Grober, T.; Grober, O. Improving the efficiency of farm management using modern digital technologies. In Proceedings of the E3S Web of Conferences; EDP Sciences: Les Ulis, France, 2020; Volume 175, p. 13003. [Google Scholar]

- Cojocaru, L.E.; Burlacu, G.; Popescu, D.; Stanescu, A.M. Farm Management Information System as ontological level in a digital business ecosystem. In Service Orientation in Holonic and Multi-Agent Manufacturing and Robotics; Springer: Berlin/Heidelberg, Germany, 2014; pp. 295–309. [Google Scholar]

- Tekinerdogan, B.; Verdouw, C. Systems Architecture Design Pattern Catalogfor Developing Digital Twins. Sensors 2020, 20, 5103. [Google Scholar] [CrossRef] [PubMed]

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444. [Google Scholar] [CrossRef] [PubMed]

- Wagner, N.; Antoine, V.; Mialon, M.M.; Lardy, R.; Silberberg, M.; Koko, J.; Veissier, I. Machine learning to detect behavioural anomalies in dairy cows under subacute ruminal acidosis. Comput. Electron. Agric. 2020, 170, 105233. [Google Scholar] [CrossRef]

- Schmidhuber, J. Deep learning in neural networks: An overview. Neural Netw. 2015, 61, 85–117. [Google Scholar] [CrossRef]

- Gers, F.A.; Schmidhuber, J.; Cummins, F. Learning to forget: Continual prediction with LSTM. In Proceedings of the 1999 Ninth International Conference on Artificial Neural Networks ICANN 99, Edinburgh, UK, 7–10 September 1999. [Google Scholar]

- Williams, R.J.; Zipser, D. A learning algorithm for continually running fully recurrent neural networks. Neural Comput. 1989, 1, 270–280. [Google Scholar] [CrossRef]

- Sundermeyer, M.; Schlüter, R.; Ney, H. LSTM neural networks for language modeling. In Proceedings of the Thirteenth Annual Conference of the International Speech Communication Association, Portland, OR, USA, 9–13 September 2012. [Google Scholar]

- Hu, W.; He, Y.; Liu, Z.; Tan, J.; Yang, M.; Chen, J. Toward a Digital Twin: Time Series Prediction Based on a Hybrid Ensemble Empirical Mode Decomposition and BO-LSTM Neural Networks. J. Mech. Des. 2021, 143, 051705. [Google Scholar] [CrossRef]

- Schmidhuber, J.; Hochreiter, S. Long short-term memory. Neural Comput. 1997, 9, 1735–1780. [Google Scholar]

- Greenwood, P.L.; Gardner, G.E.; Ferguson, D.M. Current situation and future prospects for the Australian beef industry—A review. Asian-Australas. J. Anim. Sci. 2018, 31, 992. [Google Scholar] [CrossRef]

- Cabrera, V.E.; Barrientos-Blanco, J.A.; Delgado, H.; Fadul-Pacheco, L. Symposium review: Real-time continuous decision making using big data on dairy farms. J. Dairy Sci. 2020, 103, 3856–3866. [Google Scholar] [CrossRef] [PubMed]

- Huang, Y.; Zhang, Q. Agricultural Cybernetics; Springer: Berlin/Heidelberg, Germany, 2021. [Google Scholar]

- Li, L.; Wang, H.; Yang, Y.; He, J.; Dong, J.; Fan, H. A digital management system of cow diseases on dairy farm. In Proceedings of the International Conference on Computer and Computing Technologies in Agriculture, Nanchang, China, 22–25 October 2010; Springer: Berlin/Heidelberg, Germany, 2010; pp. 35–40. [Google Scholar]

- Kolb, W.M. Curve Fitting for Programmable Calculators; Imtec, 1984. [Google Scholar]

- Buttchereit, N.; Stamer, E.; Junge, W.; Thaller, G. Evaluation of five lactation curve models fitted for fat: Protein ratio of milk and daily energy balance. J. Dairy Sci. 2010, 93, 1702–1712. [Google Scholar] [CrossRef] [PubMed]

- Rabiner, L.; Kaiser, J.; Herrmann, O.; Dolan, M. Some comparisons between FIR and IIR digital filters. Bell Syst. Tech. J. 1974, 53, 305–331. [Google Scholar] [CrossRef]

- Goodfellow, I.; Bengio, Y.; Courville, A.; Bengio, Y. Deep Learning; MIT Press: Cambridge, UK, 2016; Volume 1. [Google Scholar]

- Xie, T.; Yu, H.; Wilamowski, B. Comparison between traditional neural networks and radial basis function networks. In Proceedings of the 2011 IEEE International Symposium on Industrial Electronics, Gdańsk, Poland, 27–30 June 2011; pp. 1194–1199. [Google Scholar]

- Zhang, J.; Wang, P.; Yan, R.; Gao, R.X. Long short-term memory for machine remaining life prediction. J. Manuf. Syst. 2018, 48, 78–86. [Google Scholar] [CrossRef]

- Tang, Y.; Yu, F.; Pedrycz, W.; Yang, X.; Wang, J.; Liu, S. Building trend fuzzy granulation based LSTM recurrent neural network for long-term time series forecasting. IEEE Trans. Fuzzy Syst. 2021, 30, 1599–1613. [Google Scholar] [CrossRef]

- Yaqub, M.; Asif, H.; Kim, S.; Lee, W. Modeling of a full-scale sewage treatment plant to predict the nutrient removal efficiency using a long short-term memory (LSTM) neural network. J. Water Process Eng. 2020, 37, 101388. [Google Scholar] [CrossRef]

- Domun, Y.; Pedersen, L.J.; White, D.; Adeyemi, O.; Norton, T. Learning patterns from time-series data to discriminate predictions of tail-biting, fouling and diarrhoea in pigs. Comput. Electron. Agric. 2019, 163, 104878. [Google Scholar] [CrossRef]

- Spitzer, M.; Wildenhain, J.; Rappsilber, J.; Tyers, M. BoxPlotR: A web tool for generation of box plots. Nat. Methods 2014, 11, 121–122. [Google Scholar] [CrossRef]

- Koutnik, J.; Greff, K.; Gomez, F.; Schmidhuber, J. A clockwork rnn. In Proceedings of the International Conference on Machine Learning, Bejing, China, 22–24 June 2014; pp. 1863–1871. [Google Scholar]

- Wu, Y.; Schuster, M.; Chen, Z.; Le, Q.V.; Norouzi, M.; Macherey, W.; Krikun, M.; Cao, Y.; Gao, Q.; Macherey, K.; et al. Google’s neural machine translation system: Bridging the gap between human and machine translation. arXiv 2016, arXiv:1609.08144. [Google Scholar]

- Radiuk, P.M. Impact of training set batch size on the performance of convolutional neural networks for diverse datasets. Inf. Technol. Manag. Sci. 2017, 20, 20–24. [Google Scholar] [CrossRef]

- Mu, N.; Yao, Z.; Gholami, A.; Keutzer, K.; Mahoney, M. Parameter re-initialization through cyclical batch size schedules. arXiv 2018, arXiv:1812.01216. [Google Scholar]

- Brownlee, J. What is the Difference Between a Batch and an Epoch in a Neural Network. Mach. Learn. Mastery 2018, 20. Available online: https://machinelearningmastery.com/difference-between-a-batch-and-an-epoch/ (accessed on 26 April 2022).

- Huang, G.; Sun, Y.; Liu, Z.; Sedra, D.; Weinberger, K.Q. Deep networks with stochastic depth. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; pp. 646–661. [Google Scholar]

| State | Description |

|---|---|

| Rest | Standing still, lying, and transition between these 2 events, while lying, allowed to do any kind of movement with head/neck/legs (e.g., tongue rolling). |

| Rumination | Rhythmic circular/side-to-side movements of the jaw not associated with eating or medium activity, interrupted by brief (<5 s) pauses during the time that bolus is swallowed, followed by a continuation of rhythmic jaw movements. |

| Panting (Heavy Breathing) | Fast and shallow movement of thorax visible when looking animal from side, along with forward heaving movement of body while breathing. May or may not have open mouth, salivation, and/or extended tongue. |

| High Activity | Includes any combination of running, mounting, head-butting, repetitive head-weaving/tossing, leaping, buck-kicking, rearing and head tossing. |

| Eating | Muzzle/tongue physically contacts and manipulates feed, often but not always followed by visible chewing. |

| Grazing | Eating (see above definition) growing grass and pasture, while either standing in place or moving at slow, even or uneven pace between patches. |

| Walking | Slow movement, limb movement, except running. |

| Medium Activity | Any activity other than the above states. |

| Category | Number |

|---|---|

| Angus Female | 13 |

| Angus Male | 14 |

| Brahman Female | 14 |

| Brahman Male | 5 |

| Brangus Female | 10 |

| Brangus Male | 0 |

| Charolais Female | 3 |

| Charolais Male | 1 |

| crossbred Female | 38 |

| crossbred Male | 0 |

| Total number | 98 |

| Fitting Method | The Best Number of Items | Variance |

|---|---|---|

| Gaussian Fitting | 8 | 3.0037 |

| Sum of sine | 8 | 20.1288 |

| Polynomial | 9 | 245.3264 |

| Fourier | 8 | 25.4590 |

| Hidden Neurons | 128 |

| Batch size | 24 |

| Epoch size | 10,000 |

| LSTM layers | 2 |

| Loss-train | 0.57953376 |

| Loss-test | 5.197178 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Han, X.; Lin, Z.; Clark, C.; Vucetic, B.; Lomax, S. AI Based Digital Twin Model for Cattle Caring. Sensors 2022, 22, 7118. https://doi.org/10.3390/s22197118

Han X, Lin Z, Clark C, Vucetic B, Lomax S. AI Based Digital Twin Model for Cattle Caring. Sensors. 2022; 22(19):7118. https://doi.org/10.3390/s22197118

Chicago/Turabian StyleHan, Xue, Zihuai Lin, Cameron Clark, Branka Vucetic, and Sabrina Lomax. 2022. "AI Based Digital Twin Model for Cattle Caring" Sensors 22, no. 19: 7118. https://doi.org/10.3390/s22197118

APA StyleHan, X., Lin, Z., Clark, C., Vucetic, B., & Lomax, S. (2022). AI Based Digital Twin Model for Cattle Caring. Sensors, 22(19), 7118. https://doi.org/10.3390/s22197118