1. Introduction

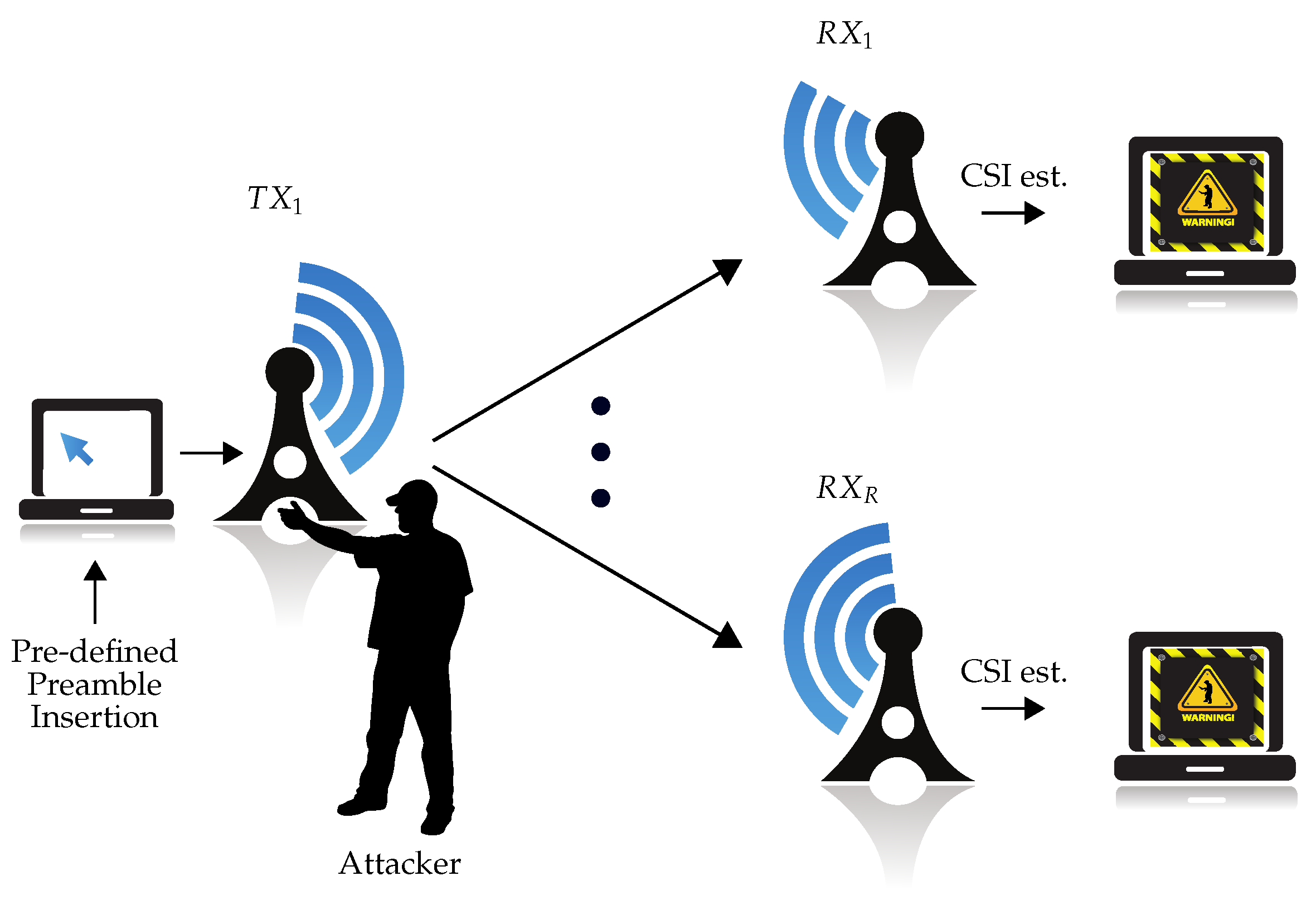

Wireless networks in critical infrastructures require a high level of security, so a range of network security threats must be considered in such applications. One threat that is overlooked in many applications is physical tampering with a device. As discussed in [1], one possible physical tamper attack is altering the orientation of surveillance cameras that monitor a critical infrastructure. Radio-frequency (RF) fingerprint-based localization systems using physical (PHY) layer measurements (e.g., received signal strength indication (RSSI) [2], channel impulse response (CIR) [3], and channel state information (CSI) [4]) are another example in which physical tampering, such as changing the antenna characteristics, can significantly distort system operation. According to the European Union Agency for Cybersecurity (ENISA) [5], physical tamper attacks on IoT applications are also one of the main threats faced by healthcare organizations. All these cases assume that the transceivers have not been tampered with; if they have been, the systems are very likely to stop functioning correctly. A physical tamper attack detection mechanism is therefore required to recognize such attacks.

To address this issue, radio channel characteristics observed through measurements [1,3,6,7] can be used to detect tamper attacks. Such measurements include the CIR [3], the CSI [1,6,7], and received packet features [8,9]. However, these characteristics are influenced not only by tamper attacks but also by regular environmental changes. The CIR method proposed in [3], one of the first works in this area, was tested only in environments with few dynamic elements and, consequently, exhibited high misdetection rates in dynamic environments. Reference [7] investigated the feasibility of using a commercial off-the-shelf (COTS) Wi-Fi device as a physical tamper detector based on CSI collected in an almost static environment. To achieve resilience to regular environmental changes, Reference [1] proposed increasing spatial diversity by using multiple antennas at the receiver. Others instead proposed machine learning (ML) approaches to tackle this issue. Reference [6] showed that a semi-supervised deep learning (DL) algorithm with a postprocessing unit can extract the characteristics of the environment and outperforms the approach of [1]. References [8,9] also showed that their ML approaches for detecting the removal or addition of sensors in IoT applications achieve high accuracy in dynamic environments. Table 1 summarizes the aforementioned works.

Three main strategies have been applied in the literature to detect physical tamper attacks: (i) computing the distance between previous and new measurements, combined either with hypothesis testing on CIR values [3] or with direct threshold detection on the CSI [1]; (ii) computing the distance to a lower-dimensional signal representation obtained in a DL framework [6]; and (iii) direct detection using ML algorithms [7,8,9].
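As a rough illustration of strategy (i), the direct-threshold variant can be sketched in a few lines. This is a minimal sketch under our own assumptions, not the procedure of [1] or [3]: the Euclidean distance on CSI magnitudes, the nearest-reference rule, the function names, and the threshold value are all hypothetical.

```python
import math


def csi_distance(reference, observed):
    """Euclidean distance between the magnitudes of two CSI vectors.

    Each vector holds one complex CSI value per subcarrier (assumed layout).
    """
    if len(reference) != len(observed):
        raise ValueError("CSI vectors must cover the same subcarriers")
    return math.sqrt(sum((abs(r) - abs(o)) ** 2 for r, o in zip(reference, observed)))


def detect_tamper(reference_set, observed, threshold):
    """Flag an attack if the new CSI is farther than `threshold` from every
    tamper-free reference recorded earlier (hypothetical decision rule)."""
    d_min = min(csi_distance(ref, observed) for ref in reference_set)
    return d_min > threshold, d_min


# Hypothetical tamper-free reference CSI (3 subcarriers) and two new measurements.
references = [
    [1.0 + 0.0j, 0.8 + 0.2j, 0.5 - 0.5j],
    [1.05 + 0.0j, 0.8 + 0.25j, 0.5 - 0.45j],
]
normal = [1.02 + 0.0j, 0.8 + 0.22j, 0.5 - 0.48j]
tampered = [0.3 + 0.0j, 1.4 + 0.1j, 0.1 - 0.1j]

print(detect_tamper(references, normal, threshold=0.2))
print(detect_tamper(references, tampered, threshold=0.2))
```

A hypothesis-testing variant, in the spirit of [3], would typically replace the fixed threshold with a test statistic and a significance level, while the distance computation stays the same.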

The most recent methods, namely (ii) and (iii), outperform the earlier ones in terms of attack detection accuracy. However, a direct comparison of these methods is difficult because they are based on different communication systems. Since orthogonal frequency division multiplexing (OFDM) is currently one of the most common transmission technologies [10], we followed [1,6,7] and based our proposed physical tamper attack detection methods on the estimated CSI in an OFDM-based wireless system.
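The detectors discussed here operate on estimated CSI. How the CSI is estimated is not specified in this section; purely for illustration, the sketch below assumes the common least-squares (LS) estimator on known pilot subcarriers, H_k = Y_k / X_k, where X_k is the transmitted pilot and Y_k the received sample on subcarrier k. The pilot values, channel, and noise level are hypothetical.

```python
import random


def ls_csi_estimate(received, pilots):
    """Least-squares CSI estimate per subcarrier: H_k = Y_k / X_k (assumed estimator)."""
    if len(received) != len(pilots):
        raise ValueError("need one received sample per pilot subcarrier")
    if any(x == 0 for x in pilots):
        raise ValueError("pilot symbols must be non-zero")
    return [y / x for y, x in zip(received, pilots)]


# Hypothetical example: 4 pilot subcarriers, a known channel, and small complex noise.
pilots = [1 + 0j, -1 + 0j, 1j, -1j]
true_h = [0.9 + 0.1j, 0.5 - 0.2j, 1 + 0j, 0.3 + 0.3j]
rng = random.Random(0)
noise = [complex(rng.gauss(0, 0.01), rng.gauss(0, 0.01)) for _ in pilots]
received = [h * x + n for h, x, n in zip(true_h, pilots, noise)]

h_est = ls_csi_estimate(received, pilots)
# Per-subcarrier estimation error caused by the noise.
print([round(abs(e - h), 4) for e, h in zip(h_est, true_h)])
```

Without noise, the LS estimate recovers the channel exactly; with noise, its error on each subcarrier scales with the noise-to-pilot amplitude ratio.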

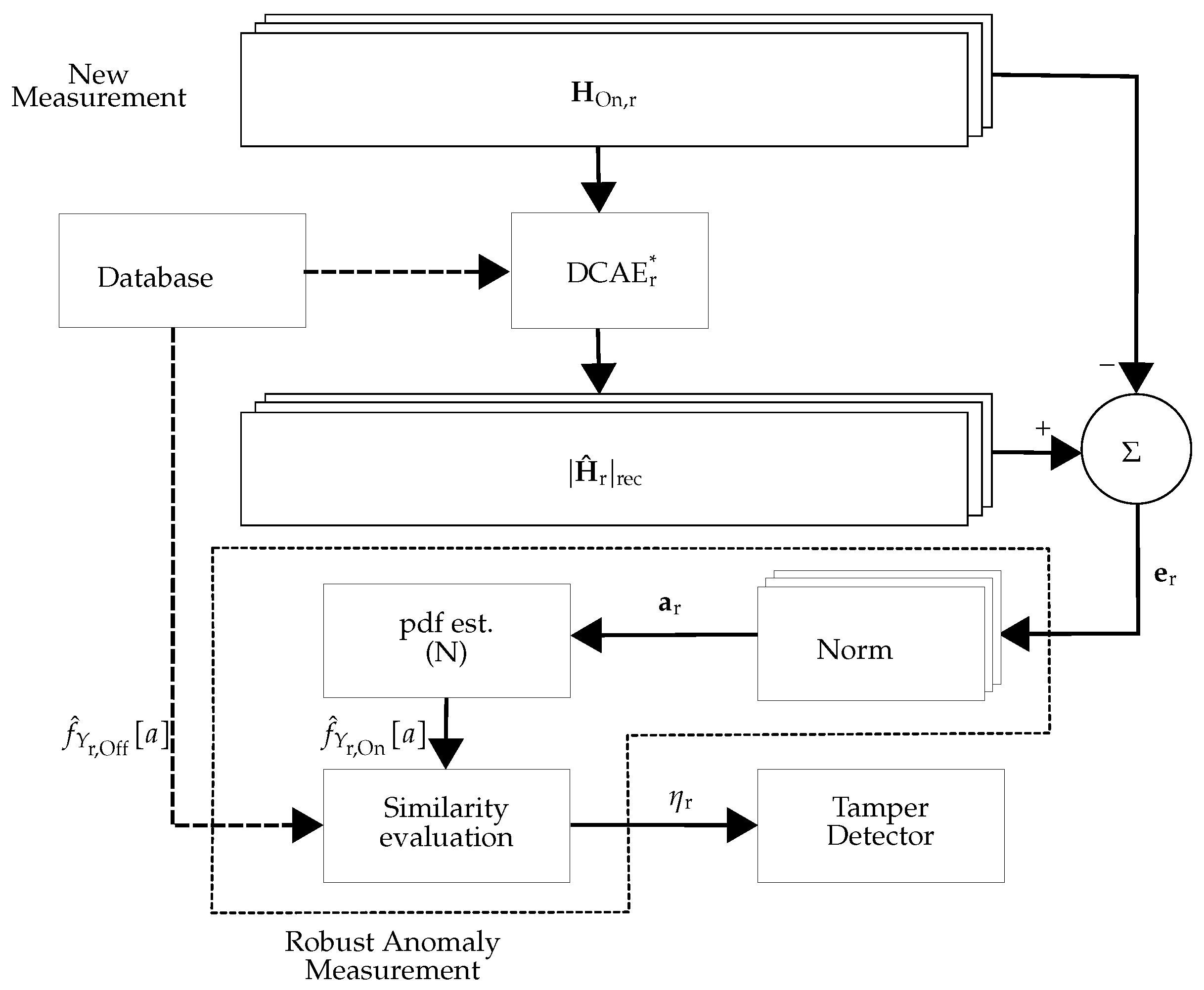

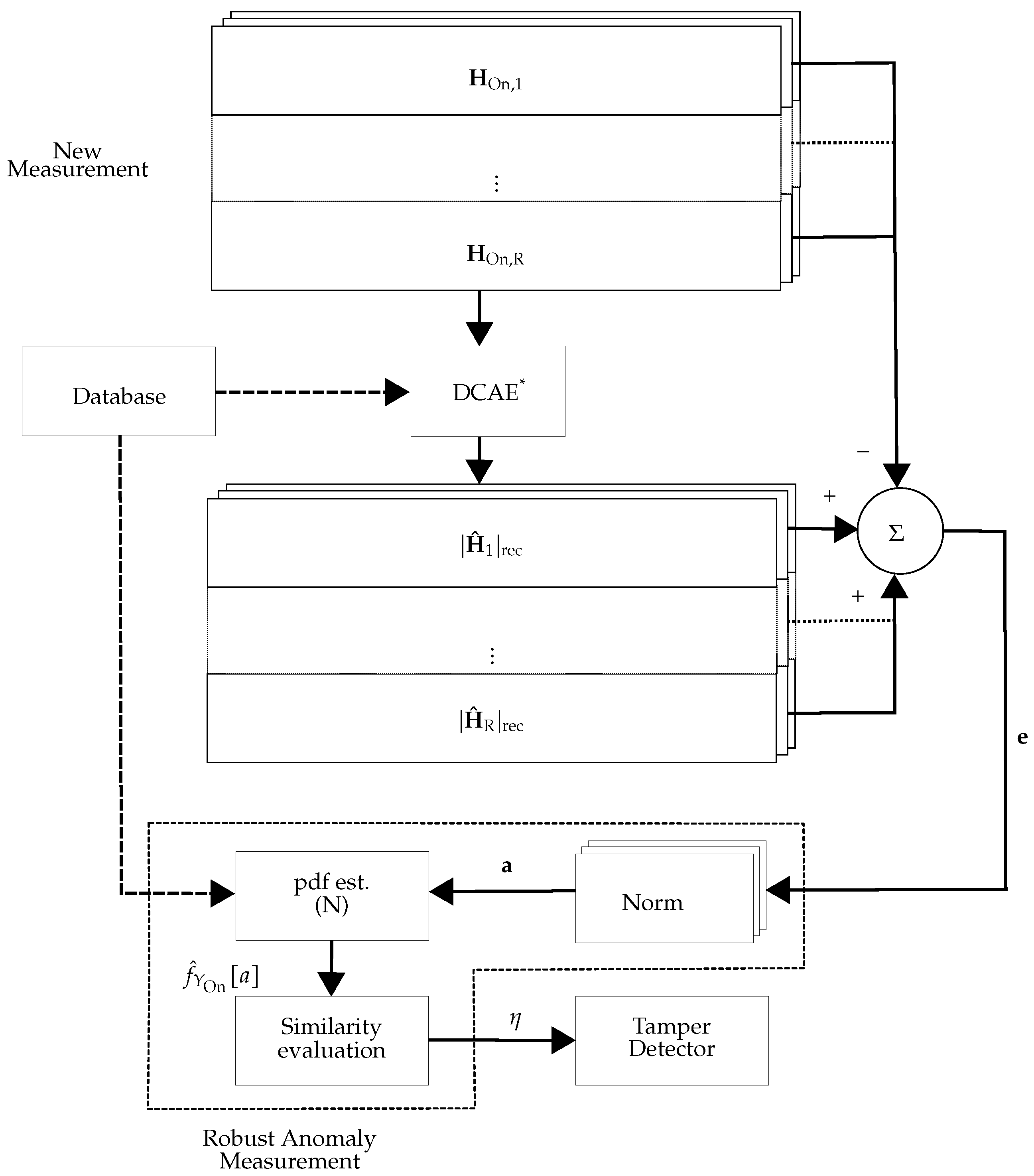

To detect physical tamper attacks in an OFDM-based system, Reference [

1] proposed to use multiple antennas at the receiver and calculate the distances between tamper-free CSI in the offline and online phases. This approach was based on the assumption that environmental variations will not affect all CSI received from different antennas at the receiver. In [

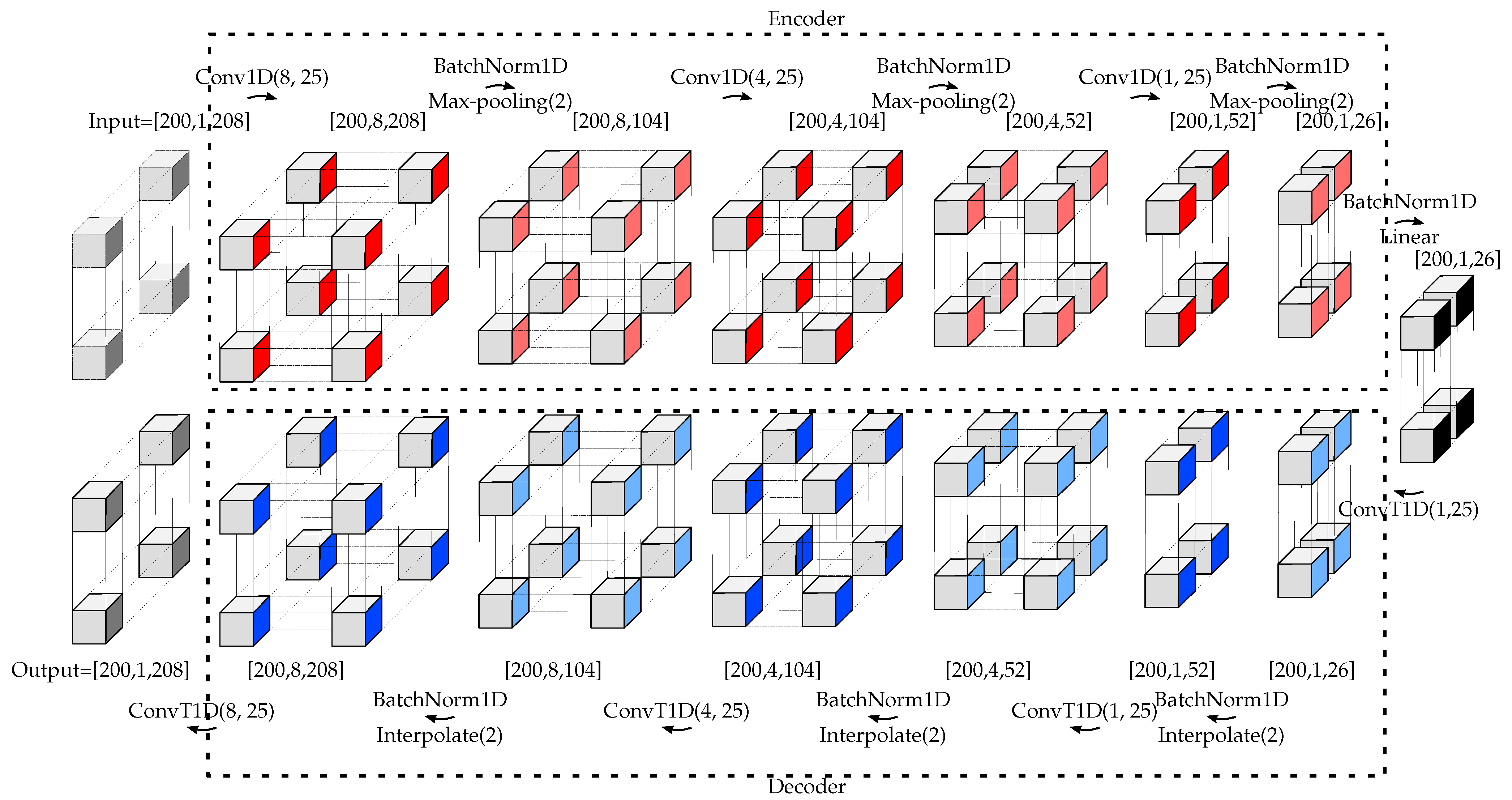

6], a mixed DL approach, including a deep convolutional autoencoder (DCAE) and a postprocessing, was applied. The DCAE tried to reconstruct the measured CSI with lower-dimensional features. The reconstructed version was then compared to the measurement and used (after robust postprocessing) for attack detection. The disadvantages of [

6] were the time delay introduced by the necessary postprocessing unit, no possibility for using multiple CSI estimates at the receiver(s), and the high number of parameters that have to be adjusted manually. In [

7], a fully DL approach (cf. [

6]) was applied, where a deep neural network with two hidden layers was used. The network used the CSI as the input and output the probability of a tamper attack at predetermined reference positions. While the computational complexity of the method was low, it aimed to identify 1 of N authorized positions, which is a different problem compared to the problem described in [

1,

6]. Therefore, the method in [

7] could not be applied to the problem and was not compared with the proposed methods. (In this work, the performance comparison was made with [

1,

6] in the Experimental Section).
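The multi-antenna idea of [1] rests on the assumption that environmental changes do not alter the CSI at all antennas at once. One hedged reading of that assumption as a decision rule is sketched below: an attack is declared only if the offline-to-online CSI distance exceeds a threshold at every antenna. The Euclidean distance, the all-antennas rule, the names, and the threshold are our illustrative assumptions, not the exact detector of [1].

```python
import math


def complex_distance(a, b):
    """Euclidean distance between two complex CSI vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("CSI vectors must cover the same subcarriers")
    return math.sqrt(sum(abs(x - y) ** 2 for x, y in zip(a, b)))


def multi_antenna_decision(offline_csi, online_csi, threshold):
    """offline_csi / online_csi: one CSI vector per receive antenna.

    Declares an attack only if *every* antenna deviates from its tamper-free
    offline reference (hypothetical rule mirroring the assumption in [1]).
    """
    if len(offline_csi) != len(online_csi):
        raise ValueError("need one offline and one online CSI vector per antenna")
    distances = [complex_distance(off, on) for off, on in zip(offline_csi, online_csi)]
    return all(d > threshold for d in distances), distances


offline = [[1 + 0j, 1 + 0j], [1 + 0j, 1 + 0j]]      # two antennas, two subcarriers
env_change = [[1.5 + 0j, 1 + 0j], [1 + 0j, 1 + 0j]]  # only antenna 1 affected
tamper = [[2 + 0j, 1 + 0j], [1 + 0j, 2 + 0j]]        # both antennas affected

print(multi_antenna_decision(offline, env_change, threshold=0.2)[0])
print(multi_antenna_decision(offline, tamper, threshold=0.2)[0])
```

The all-antennas rule trades sensitivity for robustness: a tamper that changes the CSI at only one antenna would be missed, which is exactly the kind of limitation the learning-based approaches aim to avoid.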

As almost all modern wireless communication systems support multiple-input multiple-output (MIMO), we were motivated to extend the proposed methods in [

6] for the case that multiple CSI estimates at the receiver(s) are available (multi-CSI estimates can be available from either a single receiver with multiple antennas or multiple receivers, each with a single antenna). In this work, we thus expanded the framework of [

6] to multi-CSI estimates at the receiver(s). Moreover, the drawback of [

6] motivated us to propose using a fully DL approach to detect the physical tamper attack. As shown in [

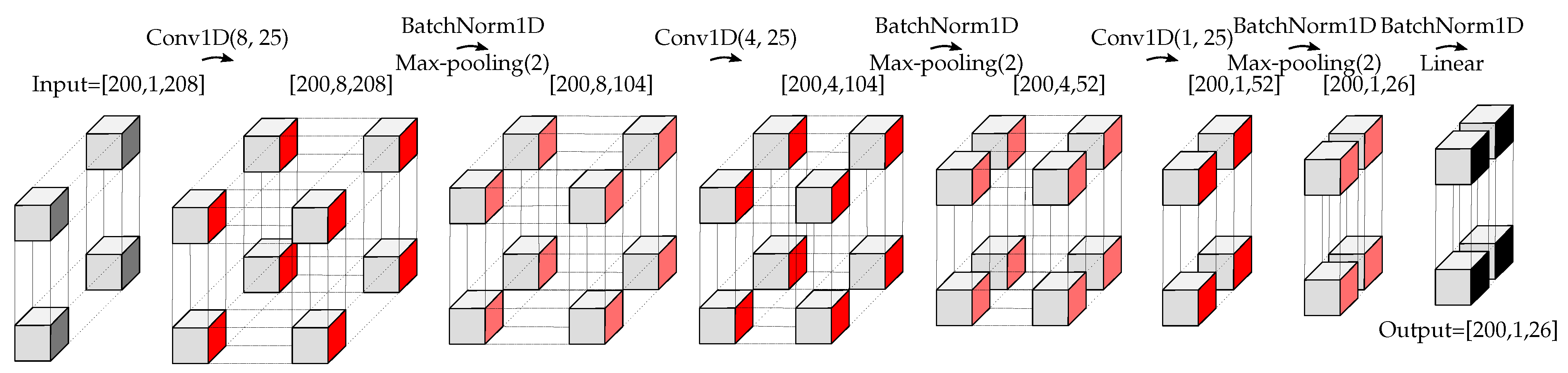

6], a DL approach for dimensionality reduction followed by a postprocessing unit detects the physical tamper attack with a high time delay due to using the postprocessing unit. To solve that, we proposed to use a fully DL approach to simultaneously reduce the dimensionality of the input data along with the anomaly detection task. As stated in [

11], support vector data description (SVDD) [

12] is one of the popular approaches for anomaly detection. We show that a well-tuned version of the proposed method in [

13], namely the Deep SVDD, can be used for physical tamper attack detection. In order to fairly evaluate the physical tamper attack detection methods, both the detection performance and the efficiency (e.g., the time delay due to computational complexity in [

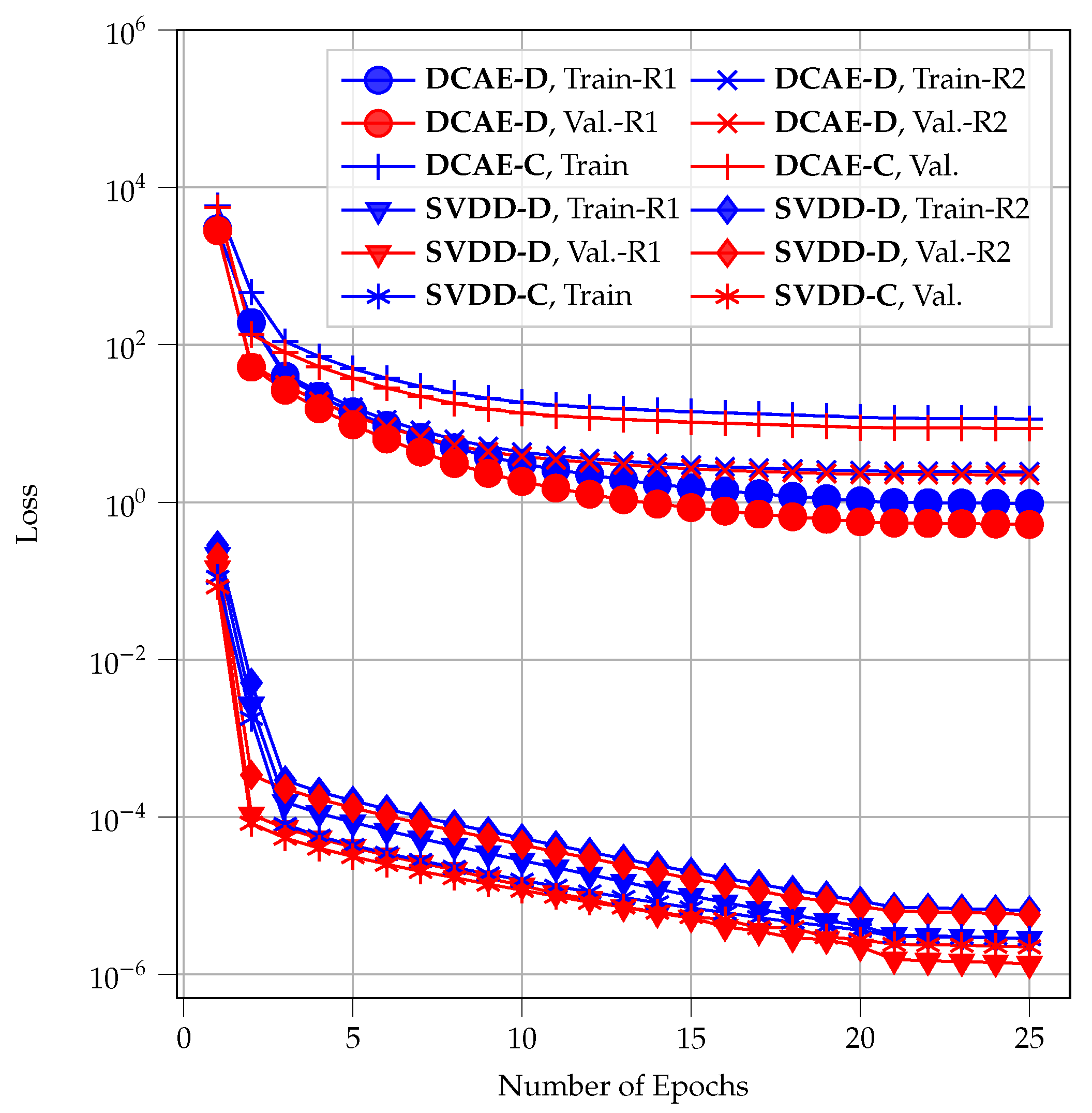

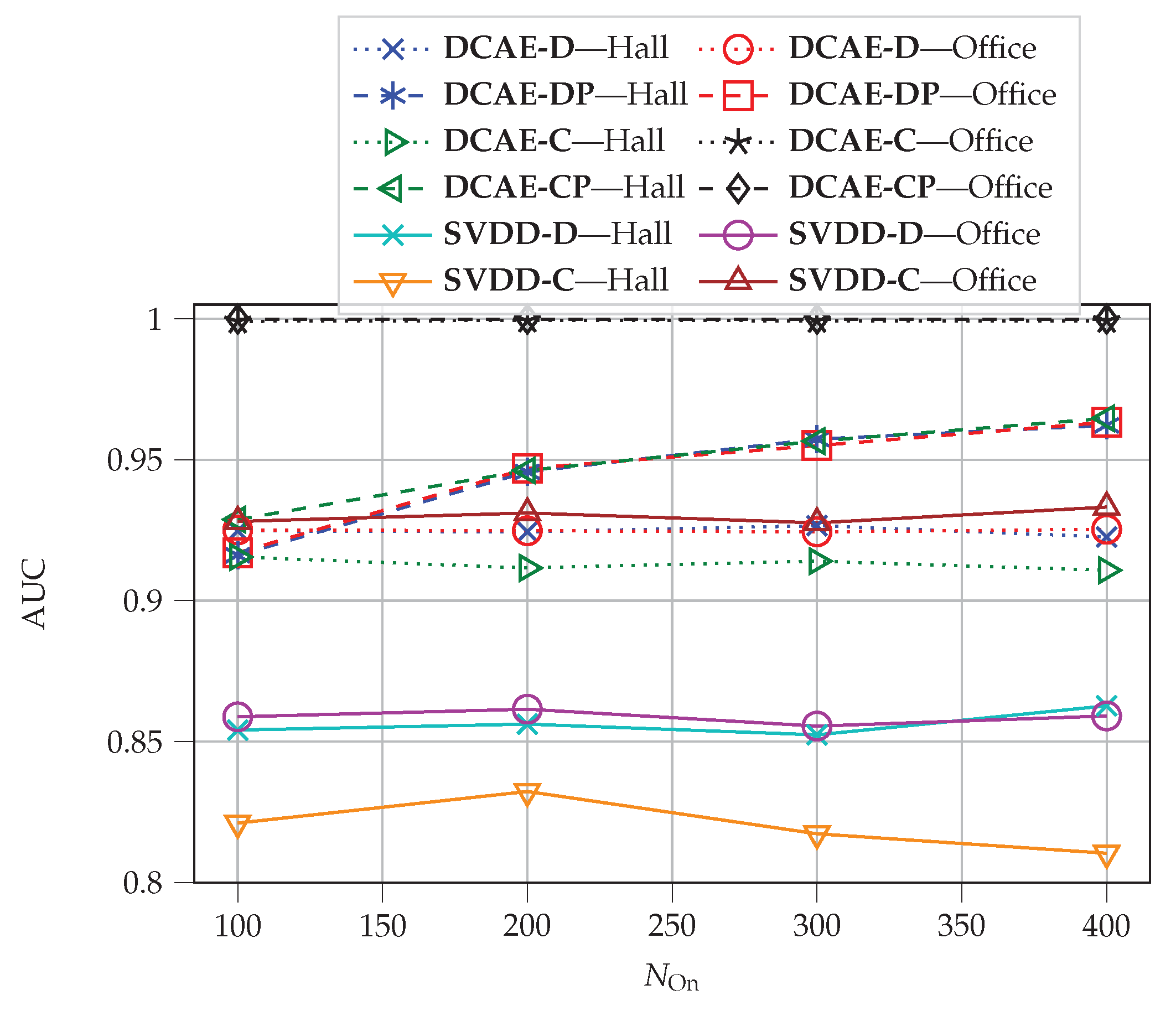

6]) were taken into account, which was neglected in previous works. The proposed methods offer different characteristics in detection performance, time complexity, and database space budget.
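To make the Deep SVDD idea more concrete: in its one-class form [13], a network phi(x; W) is trained to minimize the mean squared distance of its outputs to a fixed center c (set to the mean of the initial outputs) plus a weight-decay term, and the anomaly score of a sample is its squared distance to c. The sketch below follows that objective but, to stay dependency-free, replaces the deep network with a hypothetical bias-free linear map phi(x) = W x trained by plain gradient descent. The features, learning rate, weight decay, and max-score threshold are illustrative assumptions, not the tuned configuration used in this work.

```python
def matvec(W, x):
    """phi(x) = W x for a bias-free linear map (stand-in for a deep network)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def sq_dist(a, b):
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def svdd_loss(W, data, c, weight_decay):
    """One-class Deep SVDD objective: mean ||phi(x) - c||^2 + (weight_decay / 2) ||W||_F^2."""
    fit = sum(sq_dist(matvec(W, x), c) for x in data) / len(data)
    reg = 0.5 * weight_decay * sum(w * w for row in W for w in row)
    return fit + reg


def train_svdd(W0, data, lr=0.05, weight_decay=1e-3, steps=200):
    # Fix the center c as the mean of the initial outputs; c is not trained.
    outputs = [matvec(W0, x) for x in data]
    c = [sum(col) / len(outputs) for col in zip(*outputs)]
    W = [row[:] for row in W0]
    n = len(data)
    for _ in range(steps):
        # Gradient of svdd_loss w.r.t. W: (2/n) sum_i (W x_i - c) x_i^T + weight_decay * W
        grad = [[weight_decay * w for w in row] for row in W]
        for x in data:
            err = [o - ci for o, ci in zip(matvec(W, x), c)]
            for r, e in enumerate(err):
                for k, xk in enumerate(x):
                    grad[r][k] += 2.0 * e * xk / n
        W = [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W, grad)]
    return W, c


def anomaly_score(W, c, x):
    """Squared distance of the embedded sample to the center c."""
    return sq_dist(matvec(W, x), c)


# Hypothetical 2-D features of tamper-free CSI and an initial weight matrix.
W0 = [[0.5, 0.2], [-0.3, 0.8]]
normal = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [1.05, 1.0], [0.95, 1.0]]

W, c = train_svdd(W0, normal)
threshold = max(anomaly_score(W, c, x) for x in normal)  # simple threshold choice
print(anomaly_score(W, c, [3.0, 3.0]) > threshold)  # a far-away sample should be flagged
```

The absence of bias terms follows the Deep SVDD design, where bias terms would allow the network to map every input to c and make the objective trivial. Because detection reduces to a single forward pass and a distance computation, no separate postprocessing stage is needed.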

In summary, this paper extends the aforementioned works in two ways: (i) in addition to analyzing detection performance, we evaluate efficiency in terms of time delay and database space budget; and (ii) we consider both centralized and decentralized detectors in the design of the proposed methods. In detail, the contributions of this paper are as follows:

Extending the framework for physical tamper attack detection presented in [

6] for the case that multiple CSI estimates at the receiver (s) are available: We suggest two distinct approaches, i.e., centralized and decentralized processing. We show that centralized processing has better detection performance and requires lower database space, while having higher time complexity.

Proposing the Deep SVDD framework to overcome complexity and latency limitations: We apply Deep SVDD to the physical tamper attack detection problem and show that it has significantly lower complexity compared to the DCAE approach, while having only slightly decreased detection performance.

Complexity analysis: We characterize the algorithmic complexity by the number of mathematical operations and required database space to compare all investigated methods. We show that there is a trade-off between detection performance and complexity in the proposed methods.

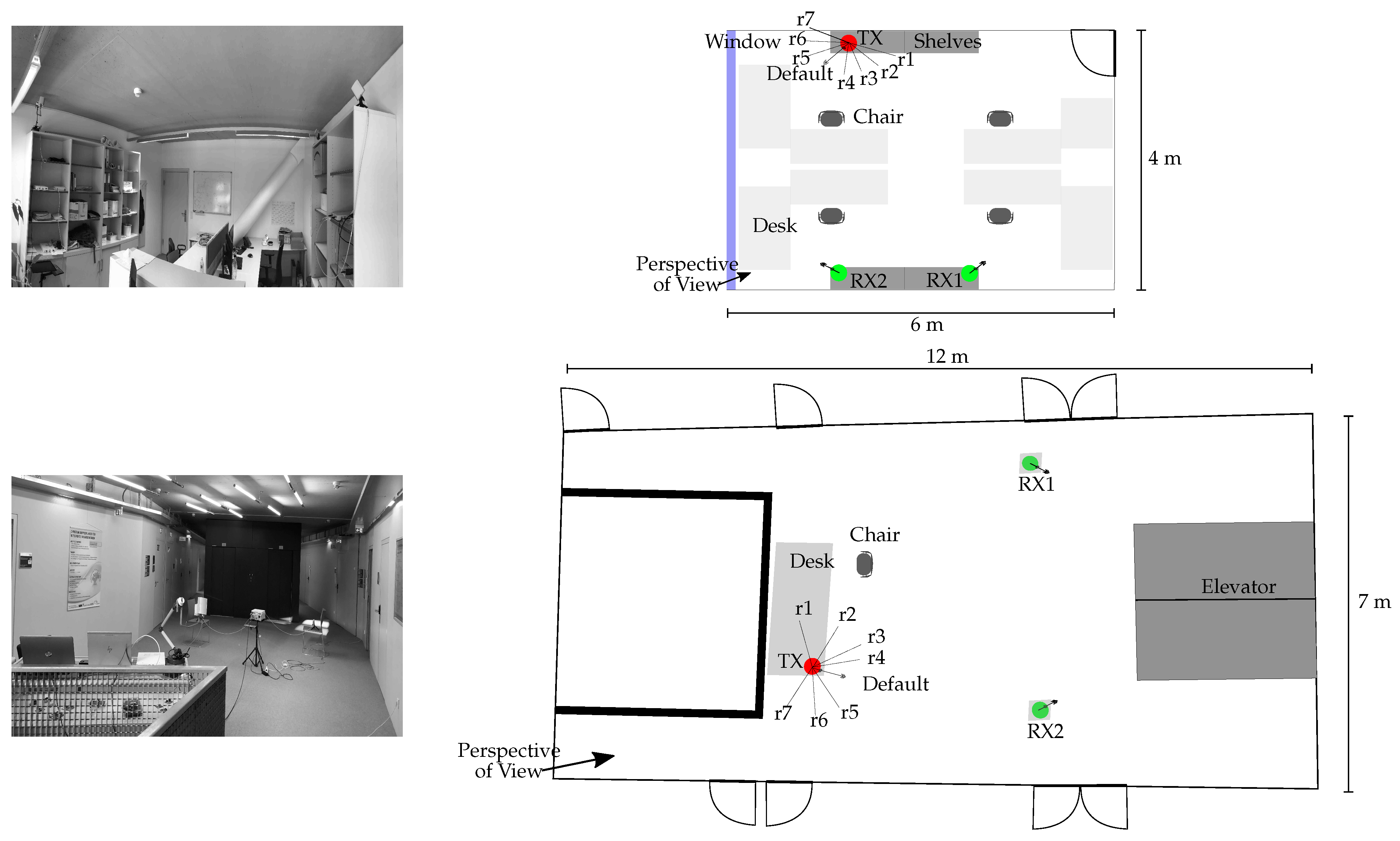

We evaluate all methods on experimental data from a measurement campaign in a university building.
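The difference between centralized and decentralized processing of multiple CSI estimates can be illustrated as follows. In this hedged sketch, the centralized detector scores the concatenation of all CSI estimates once, while the decentralized detector scores each receiver's CSI separately and fuses the per-receiver decisions by k-out-of-M voting. The distance-based score, the voting rule, the thresholds, and all names are hypothetical placeholders; the detectors actually proposed are described in Section 3.

```python
import math


def make_distance_score(reference):
    """Hypothetical score: Euclidean distance to a tamper-free reference vector."""
    return lambda csi: math.dist(reference, csi)


def centralized_detect(reference_per_rx, csi_per_rx, threshold):
    # One detector sees all CSI estimates at once (concatenated feature vector).
    ref = [v for r in reference_per_rx for v in r]
    features = [v for csi in csi_per_rx for v in csi]
    return make_distance_score(ref)(features) > threshold


def decentralized_detect(reference_per_rx, csi_per_rx, threshold, min_votes):
    # One detector per receiver; an attack is declared if at least `min_votes` receivers agree.
    votes = sum(
        make_distance_score(ref)(csi) > threshold
        for ref, csi in zip(reference_per_rx, csi_per_rx)
    )
    return votes >= min_votes


# Hypothetical CSI magnitudes for two receivers, three subcarriers each.
reference = [[1.0, 0.9, 0.8], [0.7, 0.6, 0.5]]
env_change = [[1.3, 0.9, 0.8], [0.7, 0.6, 0.5]]
tampered = [[0.2, 0.1, 0.3], [0.1, 0.2, 0.9]]

for name, csi in [("environment", env_change), ("tamper", tampered)]:
    print(name,
          centralized_detect(reference, csi, threshold=0.5),
          decentralized_detect(reference, csi, threshold=0.2, min_votes=2))
```

With `min_votes=1` the decentralized detector becomes an OR rule, and with `min_votes` equal to the number of receivers it becomes an AND rule; which fusion rule is appropriate is a design choice that this sketch does not settle.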

The rest of this paper is organized as follows: Section 2 introduces the tamper attack detection framework, Section 3 presents the tamper attack detection methods, Section 4 discusses the experimental results, and Section 5 concludes the paper.