Hyper-Parameter Optimization of Stacked Asymmetric Auto-Encoders for Automatic Personality Traits Perception

Abstract

:1. Introduction

- HPT with the classical method and parameter optimization [13,14,15,16]: The fine-tuning of weights and biases (parameters) can provide useful information about the problem, but their size and initial value rely on HPT. Moreover, the number of parameters in deep neural networks (DNNs) and high dimensional datasets is enormous, and calculating the optimum value of these parameters is complicated, not easily implemented, and requires computational systems with remarkable capabilities.

- Hyper-parameter and parameter optimization [17,18,19]: Adaptive hyper-parameters are obtained by parameter training. The critical disadvantage is that with each possible vector of hyper-parameters, the parameters must be optimized, which causes runtime errors in the computational system and requires expensive training and large storage capacity to save the best parameters value over epochs. Additionally, all possible combinations of hyper-parameters are computationally infeasible. Hence, this method is not applicable in a large model such as deep learning [20,21].

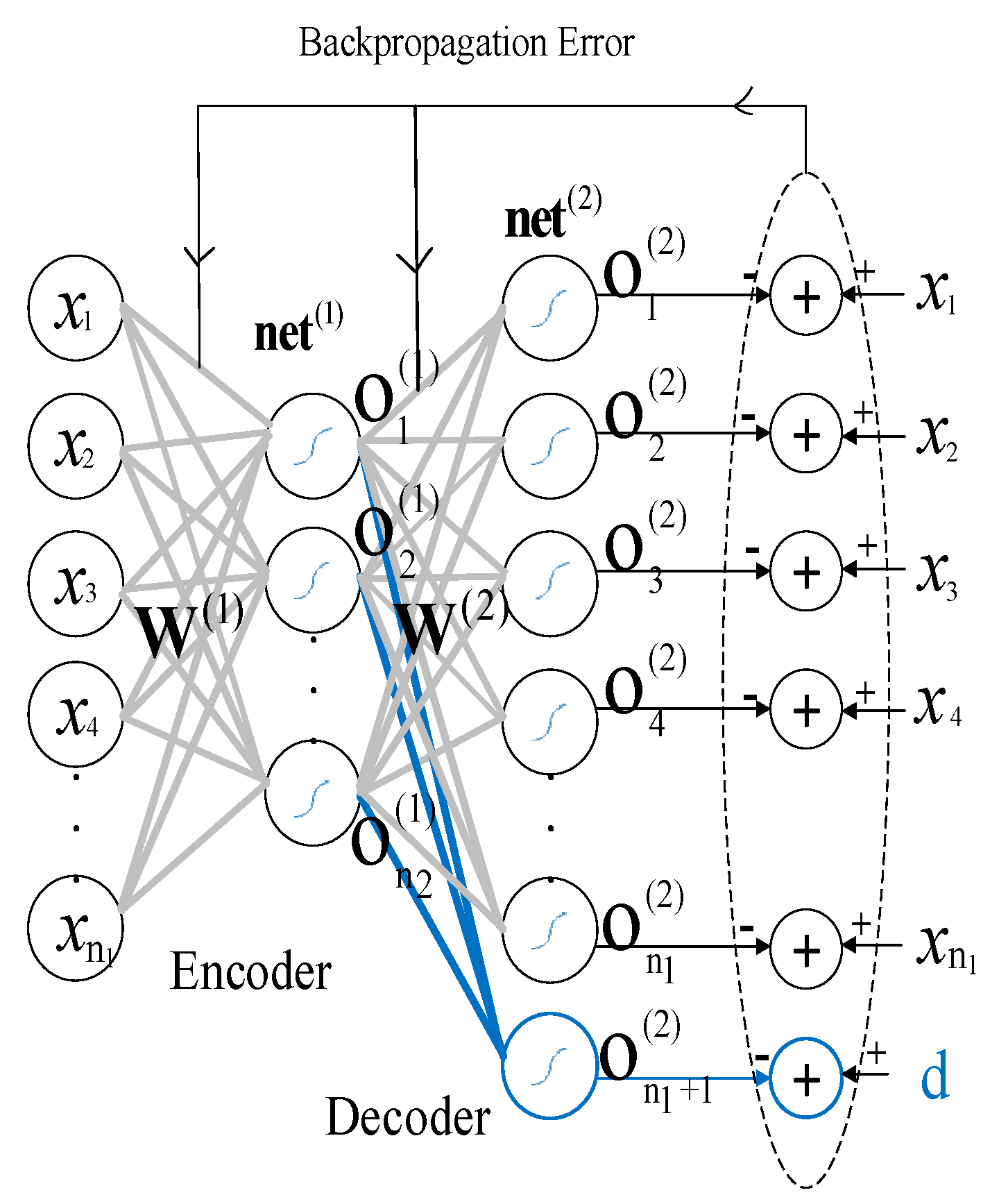

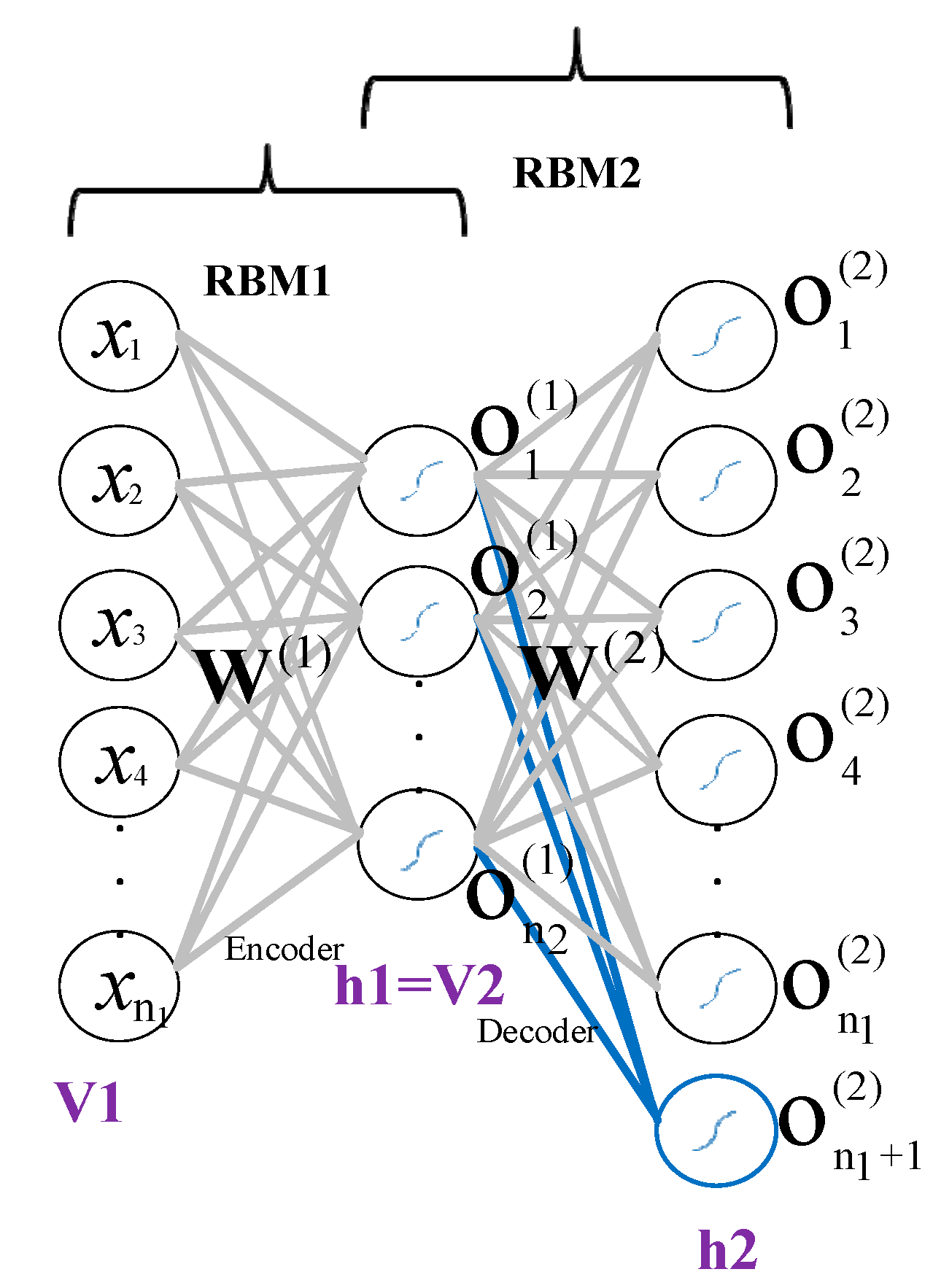

- Hyper-parameter optimization (HPO) and parameter tuning with back-propagation [4,11,22]: The main drawback is that although optimization methods are efficient in finding global optima, the gradient may vanish when back-propagating. As a result, not all network parameters are tuned well, which impacts results [23]. To tackle the poor-tuning process of deep neural network parameters, an asymmetric auto-encoder (AsyAE) was presented in our previous work for automatic personality perception (APP) from speech [24]. We showed that AsyAE could improve the model outcome results compared with conventional auto-encoders by semi-supervised training of parameters, and it can be effectively employed in deep learning. However, the stacked asymmetric auto-encoder (SAAE) hyper-parameters were chosen by trial-and-error, which was time-consuming, and two personality traits achieved lower accuracy than other prior research [24].

2. Related Works

2.1. Hyper-Parameter Tuning in ML

2.2. Automatic Personality Perception

3. Dataset

4. Feature Extraction

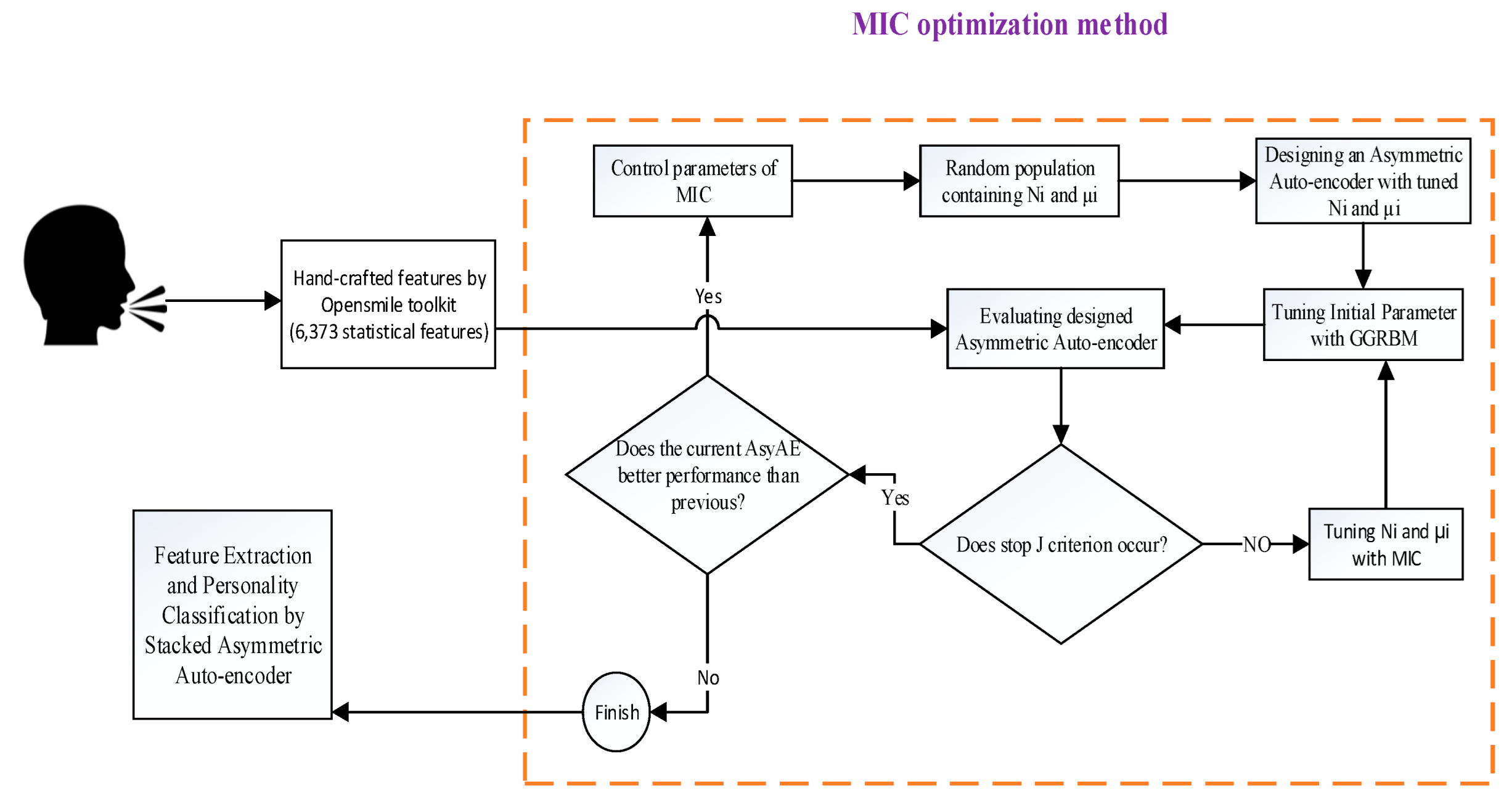

5. Proposed Method

5.1. The Proposed Optimization Method

| Algorithm 1: Implementation of MIC |

| Step 1: Set the MIC parameters randomly. |

| Step 2: Generate the initial population randomly. |

| Step 3: Transfer 25% of the best individuals of each island into InBS (Accept). |

| Step 4: Update Belief space whith Equations (1)–(7). |

| Step 5: Transfer 25% of offspring into each island (Influ). |

| Step 6: If stop criterion < ζ |

| Stop algoriyhm. |

| Else |

| Go to Step 7. |

| Step 7: Create Interactive population space by using the following three methods: |

| EM: m of the best individuals of four islands are selected and replaced with an old population. |

| MM: The a × m of the best individuals are selected and merged with (a − 1) × m, which is obtained from the old population in islands. |

| LM: According to two random numbers, µ and λ, some individuals of a random island can immigrate to and emigrate from another random island. |

| Step 8: Go to Step 3. |

5.2. Stacked Asymmetric Auto-Encoder HPO Using MIC

- (1)

- Stacked asymmetric auto-encoder

- (2)

- Optimizing some hyper-parameters of a stacked asymmetric auto-encoder

- number of neurons in each hidden layer

- learning rate value

- initial parameters

- number of hidden layers

- maximum epoch of network training

- preventing over-fitting and under-fitting

| Algorithm 2: Optimizing SAAE hyperparameters |

| Set the initial parameters Old_max = 0, G_max = 2.08 (upper band of Loss), OldEv_Asy = 0 (the first AsyAE performance) and the other randomly. |

| Set the input matrix of AsyAE. |

| Set = 1 ( indicates the number of hidden layer) |

| Set NewEv_Asy = 1 (the th AsyAE performance) |

| While NewEv_Asy> OldEv_Asy |

| OldEv_Asy = NewEv_Asy |

| While (G_max-Old_max) > 0.1 |

| Optimize (,) with MIC. |

| Initialize the AsyAE parameter randomly. |

| Tune AsyAE initial parameters with GGRBM. |

| Train AsyAE while J increases. |

| Evaluate Equation (25). |

| If the value of Equation (25) ≥ Old_max |

| Old_max = the current value of Equation (25), |

| NewEv_Asy = the value of Equation (25). |

| End if |

| Set the encoder layer output of th AsyAE as the input of th AsyAE. |

| = + 1. |

| End while |

| End while |

6. Simulations and Results

6.1. The Results of the MIC on Three Optimization Benchmarks

- The average of iterations where the stop criterion is reached for examining convergence speed (AvI).

- The average of obtained best optima point (AvP).

- The smallest iteration at which the stop criterion occurs (SI).

- The best-obtained optima point (BOP).

- Calculating the standard deviation (SD) for proving the efficiency and robustness of the algorithm.

- The number of successful runs divided by the total number of runs called success rate (SR).

6.2. The Results of Personality Perception with The MIC Method

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Bischl, B.; Binder, M.; Lang, M.; Pielok, T.; Richter, J.; Coors, S.; Thomas, J.; Ullmann, T.; Becker, M.; Boulesteix, A.-L. Hyperparameter optimization: Foundations, algorithms, best practices and open challenges. arXiv 2021, arXiv:107.05847. [Google Scholar]

- Szepannek, G.; Lübke, K. Explaining Artificial Intelligence with Care. In KI-Künstliche Intell.; 2022; Volume 16, pp. 1–10. [Google Scholar] [CrossRef]

- Khodadadian, A.; Parvizi, M.; Teshnehlab, M.; Heitzinger, C. Rational Design of Field-Effect Sensors Using Partial Differential Equations, Bayesian Inversion, and Artificial Neural Networks. Sensors 2022, 22, 4785. [Google Scholar] [CrossRef]

- Guo, C.; Li, L.; Hu, Y.; Yan, J. A Deep Learning Based Fault Diagnosis Method With hyperparameter Optimization by Using Parallel Computing. IEEE Access 2020, 8, 131248–131256. [Google Scholar] [CrossRef]

- Feurer, M.; Hutter, F. Hyperparameter optimization. In Automated Machine Learning; Springer: Cham, Switzerland, 2019; pp. 3–33. [Google Scholar]

- Yang, L.; Shami, A. On hyperparameter optimization of machine learning algorithms: Theory and practice. Neurocomputing 2020, 415, 295–316. [Google Scholar] [CrossRef]

- Wu, D.; Wu, C. Research on the Time-Dependent Split Delivery Green Vehicle Routing Problem for Fresh Agricultural Products with Multiple Time Windows. Agriculture 2022, 12, 793. [Google Scholar] [CrossRef]

- Peng, Y.; Gong, D.; Deng, C.; Li, H.; Cai, H.; Zhang, H. An automatic hyperparameter optimization DNN model for precipitation prediction. Appl. Intell. 2021, 52, 2703–2719. [Google Scholar] [CrossRef]

- Yi, H.; Bui, K.-H.N. An automated hyperparameter search-based deep learning model for highway traffic prediction. IEEE Trans. Intell. Transp. Syst. 2020, 22, 5486–5495. [Google Scholar] [CrossRef]

- Kinnewig, S.; Kolditz, L.; Roth, J.; Wick, T. Numerical Methods for Algorithmic Systems and Neural Networks; Institut für Angewandte Mathematik, Leibniz Universität Hannover: Hannover, Germany, 2022. [Google Scholar] [CrossRef]

- Han, J.-H.; Choi, D.-J.; Park, S.-U.; Hong, S.-K. Hyperparameter optimization using a genetic algorithm considering verification time in a convolutional neural network. J. Electr. Eng. Technol. 2020, 15, 721–726. [Google Scholar] [CrossRef]

- Yao, R.; Guo, C.; Deng, W.; Zhao, H. A novel mathematical morphology spectrum entropy based on scale-adaptive techniques. ISA Trans. 2022, 126, 691–702. [Google Scholar] [CrossRef] [PubMed]

- Raji, I.D.; Bello-Salau, H.; Umoh, I.J.; Onumanyi, A.J.; Adegboye, M.A.; Salawudeen, A.T. Simple deterministic selection-based genetic algorithm for hyperparameter tuning of machine learning models. Appl. Sci. 2022, 12, 1186. [Google Scholar] [CrossRef]

- Harichandana, B.; Kumar, S. LEAPMood: Light and Efficient Architecture to Predict Mood with Genetic Algorithm driven Hyperparameter Tuning. In Proceedings of the 2022 IEEE 16th International Conference on Semantic Computing (ICSC), Laguna Hills, CA, USA, 26–28 January 2022; pp. 1–8. [Google Scholar]

- Guido, R.; Groccia, M.C.; Conforti, D. Hyper-Parameter Optimization in Support Vector Machine on Unbalanced Datasets Using Genetic Algorithms. In Optimization in Artificial Intelligence and Data Sciences; Springer: Berlin/Heidelberg, Germany, 2022; pp. 37–47. [Google Scholar]

- Thavasimani, K.; Srinath, N.K. Hyperparameter optimization using custom genetic algorithm for classification of benign and malicious traffic on internet of things-23 dataset. Int. J. Electr. Comput. Eng. 2022, 12, 4031–4041. [Google Scholar] [CrossRef]

- Awad, M. Optimizing the Topology and Learning Parameters of Hierarchical RBF Neural Networks Using Genetic Algorithms. Int. J. Appl. Eng. Res. 2018, 13, 8278–8285. [Google Scholar]

- Faris, H.; Mirjalili, S.; Aljarah, I. Automatic selection of hidden neurons and weights in neural networks using grey wolf optimizer based on a hybrid encoding scheme. Int. J. Mach. Learn. Cybern. 2019, 10, 2901–2920. [Google Scholar] [CrossRef]

- Li, A.; Spyra, O.; Perel, S.; Dalibard, V.; Jaderberg, M.; Gu, C.; Budden, D.; Harley, T.; Gupta, P. A generalized framework for population based training. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Anchorage, AK, USA, 4–8 August 2019; pp. 1791–1799. [Google Scholar]

- Luo, G. A review of automatic selection methods for machine learning algorithms and hyper-parameter values. Netw. Model. Anal. Health Inform. Bioinform. 2016, 5, 18. [Google Scholar] [CrossRef]

- An, Z.; Wang, X.; Li, B.; Xiang, Z.; Zhang, B. Robust visual tracking for UAVs with dynamic feature weight selection. Appl. Intell. 2022. [Google Scholar] [CrossRef]

- Bhandare, A.; Kaur, D. Designing convolutional neural network architecture using genetic algorithms. Int. J. Adv. Netw. Monit. Control 2021, 6, 26–35. [Google Scholar] [CrossRef]

- Tan, H.H.; Lim, K.H. Vanishing gradient mitigation with deep learning neural network optimization. In Proceedings of the 2019 7th International Conference on Smart Computing & Communications (ICSCC), Sarawak, Malaysia, 28–30 June 2019; pp. 1–4. [Google Scholar]

- Zaferani, E.J.; Teshnehlab, M.; Vali, M. Automatic Personality Traits Perception Using Asymmetric Auto-Encoder. IEEE Access 2021, 9, 68595–68608. [Google Scholar] [CrossRef]

- Cho, H.; Kim, Y.; Lee, E.; Choi, D.; Lee, Y.; Rhee, W. Basic enhancement strategies when using bayesian optimization for hyperparameter tuning of deep neural networks. IEEE Access 2020, 8, 52588–52608. [Google Scholar] [CrossRef]

- Sun, Y.; Xue, B.; Zhang, M.; Yen, G.G.; Lv, J. Automatically designing CNN architectures using the genetic algorithm for image classification. IEEE Trans. Cybern. 2020, 50, 3840–3854. [Google Scholar] [CrossRef]

- Cabada, R.Z.; Rangel, H.R.; Estrada, M.L.B.; Lopez, H.M.C. Hyperparameter optimization in CNN for learning-centered emotion recognition for intelligent tutoring systems. Soft Comput. 2020, 24, 7593–7602. [Google Scholar] [CrossRef]

- Deng, W.; Liu, H.; Xu, J.; Zhao, H.; Song, Y. An improved quantum-inspired differential evolution algorithm for deep belief network. IEEE Trans. Instrum. Meas. 2020, 69, 7319–7327. [Google Scholar] [CrossRef]

- Guo, Y.; Li, J.-Y.; Zhan, Z.-H. Efficient hyperparameter optimization for convolution neural networks in deep learning: A distributed particle swarm optimization approach. Cybern. Syst. 2020, 52, 36–57. [Google Scholar] [CrossRef]

- Ozcan, T.; Basturk, A. Static facial expression recognition using convolutional neural networks based on transfer learning and hyperparameter optimization. Multimed. Tools Appl. 2020, 79, 26587–26604. [Google Scholar] [CrossRef]

- Gülcü, A.; Kuş, Z. Hyper-parameter selection in convolutional neural networks using microcanonical optimization algorithm. IEEE Access 2020, 8, 52528–52540. [Google Scholar] [CrossRef]

- Kong, D.; Wang, S.; Ping, P. State-of-health estimation and remaining useful life for lithium-ion battery based on deep learning with Bayesian hyperparameter optimization. Int. J. Energy Res. 2022, 46, 6081–6098. [Google Scholar] [CrossRef]

- Chowdhury, A.A.; Hossen, M.A.; Azam, M.A.; Rahman, M.H. Deepqgho: Quantized greedy hyperparameter optimization in deep neural networks for on-the-fly learning. IEEE Access 2022, 10, 6407–6416. [Google Scholar] [CrossRef]

- Chen, H.; Miao, F.; Chen, Y.; Xiong, Y.; Chen, T. A hyperspectral image classification method using multifeature vectors and optimized KELM. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 2781–2795. [Google Scholar] [CrossRef]

- Phan, L.V.; Rauthmann, J.F. Personality computing: New frontiers in personality assessment. Soc. Personal. Psychol. Compass 2021, 15, e12624. [Google Scholar] [CrossRef]

- Koutsombogera, M.; Sarthy, P.; Vogel, C. Acoustic Features in Dialogue Dominate Accurate Personality Trait Classification. In Proceedings of the 2020 IEEE International Conference on Human-Machine Systems (ICHMS), Rome, Italy, 7–9 September 2020; pp. 1–3. [Google Scholar]

- Aslan, S.; Güdükbay, U.; Dibeklioğlu, H. Multimodal assessment of apparent personality using feature attention and error consistency constraint. Image Vis. Comput. 2021, 110, 104163. [Google Scholar] [CrossRef]

- Xu, J.; Tian, W.; Lv, G.; Liu, S.; Fan, Y. Prediction of the Big Five Personality Traits Using Static Facial Images of College Students With Different Academic Backgrounds. IEEE Access 2021, 9, 76822–76832. [Google Scholar] [CrossRef]

- Kampman, O.; Siddique, F.B.; Yang, Y.; Fung, P. Adapting a virtual agent to user personality. In Advanced Social Interaction with Agents; Springer: Berlin/Heidelberg, Germany, 2019; pp. 111–118. [Google Scholar]

- Suen, H.-Y.; Hung, K.-E.; Lin, C.-L. Intelligent video interview agent used to predict communication skill and perceived personality traits. Hum.-Cent. Comput. Inf. Sci. 2020, 10, 1–12. [Google Scholar] [CrossRef]

- Liam Kinney, A.W.; Zhao, J. Detecting Personality Traits in Conversational Speech. Stanford University: Stanford, CA, USA, 2017; Available online: https://web.stanford.edu/class/cs224s/project/reports_2017/Liam_Kinney.pdf (accessed on 15 June 2022).

- Jalaeian Zaferani, E.; Teshnehlab, M.; Vali, M. Automatic personality recognition and perception using deep learning and supervised evaluation method. J. Appl. Res. Ind. Eng. 2022, 9, 197–211. [Google Scholar]

- Mohammadi, G.; Vinciarelli, A.; Mortillaro, M. The voice of personality: Mapping nonverbal vocal behavior into trait attributions. In Proceedings of the 2nd international workshop on Social signal processing, Firenze, Italy, 29 October 2010; pp. 17–20. [Google Scholar]

- Rosenberg, A. Speech, Prosody, and Machines: Nine Challenges for Prosody Research. In Proceedings of the 9th International Conference on Speech Prosody 2018, Poznań, Poland, 13–16 June 2018; pp. 784–793. [Google Scholar]

- Junior, J.C.S.J.; Güçlütürk, Y.; Pérez, M.; Güçlü, U.; Andujar, C.; Baró, X.; Escalante, H.; Guyon, I.; van Gerven, M.; van Lier, R. First impressions: A survey on computer vision-based apparent personality trait analysis. arXiv 2019, arXiv:1804.08046v1. [Google Scholar]

- Schuller, B.; Weninger, F.; Zhang, Y.; Ringeval, F.; Batliner, A.; Steidl, S.; Eyben, F.; Marchi, E.; Vinciarelli, A.; Scherer, K. Affective and behavioural computing: Lessons learnt from the first computational paralinguistics challenge. Comput. Speech Lang. 2019, 53, 156–180. [Google Scholar] [CrossRef]

- Hutter, F.; Kotthoff, L.; Vanschoren, J. Automated Machine Learning: Methods, Systems, Challenges; Springer: Berlin/Heidelberg, Germany, 2019. [Google Scholar]

- Harada, T.; Alba, E. Parallel genetic algorithms: A useful survey. ACM Comput. Surv. 2020, 53, 1–39. [Google Scholar] [CrossRef]

- Chentoufi, M.A.; Ellaia, R. A novel multi-population passing vehicle search algorithm based co-evolutionary cultural algorithm. Comput. Sci. 2021, 16, 357–377. [Google Scholar]

- Liu, Z.-H.; Tian, S.-L.; Zeng, Q.-L.; Gao, K.-D.; Cui, X.-L.; Wang, C.-L. Optimization design of curved outrigger structure based on buckling analysis and multi-island genetic algorithm. Sci. Prog. 2021, 104, 368504211023277. [Google Scholar] [CrossRef]

- Shah, P.; Kobti, Z. Multimodal fake news detection using a Cultural Algorithm with situational and normative knowledge. In Proceedings of the 2020 IEEE Congress on Evolutionary Computation (CEC), Glasgow, UK, 19–24 July 2020; pp. 1–7. [Google Scholar]

- Al-Betar, M.A.; Awadallah, M.A.; Doush, I.A.; Hammouri, A.I.; Mafarja, M.; Alyasseri, Z.A.A. Island flower pollination algorithm for global optimization. J. Supercomput. 2019, 75, 5280–5323. [Google Scholar] [CrossRef]

- Sun, Y.; Zhang, L.; Gu, X. A hybrid co-evolutionary cultural algorithm based on particle swarm optimization for solving global optimization problems. Neurocomputing 2012, 98, 76–89. [Google Scholar] [CrossRef]

- da Silva, D.J.A.; Teixeira, O.N.; de Oliveira, R.C.L. Performance Study of Cultural Algorithms Based on Genetic Algorithm with Single and Multi Population for the MKP. Bio-Inspired Computational Algorithms and Their Applitions; IntechOpen: London, UK, 2012; pp. 385–404. [Google Scholar]

- Zhao, X.; Tang, Z.; Cao, F.; Zhu, C.; Periaux, J. An Efficient Hybrid Evolutionary Optimization Method Coupling Cultural Algorithm with Genetic Algorithms and Its Application to Aerodynamic Shape Design. Appl. Sci. 2022, 12, 3482. [Google Scholar] [CrossRef]

- Muhamediyeva, D. Fuzzy cultural algorithm for solving optimization problems. J. Phys. Conf. Ser. 2020, 1441, 012152. [Google Scholar] [CrossRef]

- Xu, W.; Wang, R.; Zhang, L.; Gu, X. A multi-population cultural algorithm with adaptive diversity preservation and its application in ammonia synthesis process. Neural Comput. Appl. 2012, 21, 1129–1140. [Google Scholar] [CrossRef]

- Hinton, G.E.; Salakhutdinov, R.R. Reducing the dimensionality of data with neural networks. Science 2006, 313, 504–507. [Google Scholar] [CrossRef] [PubMed]

- Cho, K.H.; Raiko, T.; Ilin, A. Gaussian-bernoulli deep boltzmann machine. In Proceedings of the 2013 International Joint Conference on Neural Networks (IJCNN), Dallas, TX, USA, 4–9 August 2013; pp. 1–7. [Google Scholar]

- Ogawa, S.; Mori, H. A gaussian-gaussian-restricted-boltzmann-machine-based deep neural network technique for photovoltaic system generation forecasting. IFAC-Pap. 2019, 52, 87–92. [Google Scholar] [CrossRef]

- Tharwat, A.; Gaber, T.; Ibrahim, A.; Hassanien, A.E. Linear discriminant analysis: A detailed tutorial. AI Commun. 2017, 30, 169–190. [Google Scholar] [CrossRef]

- Jamil, M.; Yang, X.-S. A literature survey of benchmark functions for global optimisation problems. Int. J. Math. Model. Numer. Optim. 2013, 4, 150–194. [Google Scholar] [CrossRef]

- Mohammadi, G.; Vinciarelli, A. Automatic personality perception: Prediction of trait attribution Based Prosodic Features. IEEE Trans. Affect. Comput. 2012, 3, 273–284. [Google Scholar] [CrossRef]

- Chastagnol, C.; Devillers, L. Personality traits detection using a parallelized modified SFFS algorithm. Computing 2012, 15, 16. [Google Scholar]

- Mohammadi, G.; Vinciarelli, A. Automatic personality perception: Prediction of trait attribution based on prosodic features extended abstract. In Proceedings of the 2015 International Conference on Affective Computing and Intelligent Interaction (ACII), Xi’an, China, 21–24 September 2015; pp. 484–490. [Google Scholar]

- Solera-Ureña, R.; Moniz, H.; Batista, F.; Cabarrão, R.; Pompili, A.; Astudillo, R.; Campos, J.; Paiva, A.; Trancoso, I. A semi-supervised learning approach for acoustic-prosodic personality perception in under-resourced domains. In Proceedings of the 18th Annual Conference of the International Speech Communication Association, INTERSPEECH 2017, Stockholm, Sweden, 20–24 August 2017; pp. 929–933. [Google Scholar]

- Carbonneau, M.-A.; Granger, E.; Attabi, Y.; Gagnon, G. Feature learning from spectrograms for assessment of personality traits. IEEE Trans. Affect. Comput. 2017, 11, 25–31. [Google Scholar] [CrossRef]

- Liu, Z.-T.; Rehman, A.; Wu, M.; Cao, W.; Hao, M. Speech personality recognition based on annotation classification using log-likelihood distance and extraction of essential audio features. IEEE Trans. Multimed. 2020, 23, 3414–3426. [Google Scholar] [CrossRef]

| 4 Energy Related LLD | Group |

|---|---|

| Sum of Auditory Spectrum (Loudness) | Prosodic |

| Sum of RASTA-Style Filtered Auditory Spectrum | Prosodic |

| RMS Energy, Zero-Crossing Rate | Prosodic |

| 55 Spectral LLD | Group |

| RASTA-Style Auditory Spectrum, Bands 1–26 (0–8 kHz) | Spectral |

| MFCC 1-14 | Cepstral |

| Spectral Energy 250–650 Hz, 1 k–4 kHz | Spectral |

| Spectral Roll Off Point 0.25, 0.50, 0.75, 0.90 | Spectral |

| Spectral Flux, Centroid, Entropy, Slope, Harmonicity | Spectral |

| Spectral Psychoacoustic Sharpness | Spectral |

| Spectral Variance, Skewness, Kurtosis | Spectral |

| 6 Voicing Related LLD | Group |

| F0 (SHS & Viterbi Smoothing) | Prosodic |

| Probability of Voicing | Sound Quality |

| Log. HNR, Jitter (Local, Delta), Shimmer (Local) | Sound Quality |

| Mean Values Arithmetic Mean A∆, B, Arithmetic Mean of Positive Values A∆, B, Root-Quadratic Mean, Flatness Moments: Standard Deviation, Skewness, Kurtosis Temporal Centroid A∆, B Percentiles Quartiles 1–3, Inter-Quartile Ranges 1–2, 2–3, 1–3, 1%—tile, 99%—tile, Range 1–99% Extrema Relative Position of Maximum and Minimum, Full Range (Maximum–Minimum) Peaks and Valleys A Mean of Peak Amplitudes, Difference of Mean of Peak Amplitudes to Arithmetic Mean, Peak to Peak Distances: Mean and Standard Deviation, Peak Range Relative to Arithmetic Mean, Range of Peak Amplitude Values, Range of Valley Amplitude Values Relative to Arithmetic Mean, Valley-Peak (Rising) Slopes: Mean and Standard Deviation, Peak-Valley (Falling) Slopes: Mean and Standard Deviation Up-Level Times: 25%, 50%, 75%, 90% Rise and Curvature Time Relative Time in which Signal is Rising, Relative Time in which Signal has Left Curvative Segment Lengths A Mean, Standard Deviation, Minimum, Maximum Regression A∆, B Linear Regression: Slope, Offset, Quadratic Error, Quadratic Regression: Coefficients a and b, Offset c, Quadratic Error Linear Prediction LP Analysis Gain (Amplitude Error), LP Coefficients 1–5 A Functionals applied only to energy related and spectral LLDs (group A) B Functionals applied only to voicing related LLDs (group B) ∆ Functionals applied only to ∆LLDs ∆ Functionals not applied only to ∆LLDs | |

| Name | Formula | Range | |

|---|---|---|---|

| Rastrigin | |||

| Ackley | |||

| Griewang | 0 |

| Benchmark Functions | Optimization Algorithm | AvI | AvP | SI | BOP | SD | SR (%) | AvI | AvP | SI | BOP | SD | SR (%) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Rastrigin | MIC by LM | 83.4 | 6.7 × 10−5 | 61 | 7.2 × 10−5 | 4.7 × 10−5 | 100 | 307.1 | 7.8 × 10−4 | 254 | 4.8 × 10−4 | 2.4 × 10−4 | 100 |

| MIC by EM | 120.5 | 7.1 × 10−5 | 101 | 8.8 × 10−6 | 5.8 × 10−5 | 100 | 321.5 | 7.4 × 10−4 | 187 | 1.8 × 10−4 | 5.6 × 10−4 | 100 | |

| MIC by MM | 572.5 | 9.9 × 10−3 | 131 | 4.9 × 10−3 | 4.3 × 10−3 | 100 | 2000 | 0.46 | 2000 | 6.1 × 10−4 | 1.32 | 60 | |

| GA | 617.1 | 1.2 × 10−5 | 324 | 6.8 × 10−4 | 4.6 × 10−3 | 40 | 1178.4 | 1.34 | 926 | 6.8 × 10−4 | 3.20 | 50 | |

| DE | 1000 | 0.22 | 1000 | 0.14 | 0.47 | 0 | 2000 | 4.72 | 2000 | 0.10 | 2.55 | 0 | |

| ES | 1000 | 3.48 | 1000 | 1.94 | 2.39 | 0 | 2000 | 37.4 | 2000 | 24.7 | 14.4 | 0 | |

| PSO | 985.7 | 0.82 | 857 | 6.8 × 10−4 | 0.71 | 20 | 2000 | 10.9 | 2000 | 3.13 | 5.60 | 0 | |

| Ackley | MIC by LM | 467.2 | 4.4 × 10−15 | 355 | 4.4 × 10−15 | 0 | 100 | 1039.6 | 4.4 × 10−15 | 956 | 4.4 × 10−15 | 0 | 100 |

| MIC by EM | 788.4 | 4.4 × 10−15 | 462 | 4.4 × 10−15 | 0 | 100 | 1154.8 | 3.1 × 10−14 | 937 | 4.4 × 10−15 | 1.9 × 10−15 | 100 | |

| MIC by MM | 725.2 | 1.4 × 10−9 | 324 | 3.5 × 10−10 | 9.2 × 10−10 | 100 | 2000 | 2.48 | 2000 | 2.24 | 0.20 | 0 | |

| GA | 957.5 | 7.3 × 10−2 | 565 | 7.2 × 10−3 | 1.58 | 70 | 1895.1 | 2.86 | 951 | 0.01 | 1.53 | 10 | |

| DE | 1000 | 1.69 | 1000 | 1.24 | 4.47 | 0 | 2000 | 5.35 | 2000 | 4.34 | 0.67 | 0 | |

| ES | 1000 | 4.96 | 1000 | 3.20 | 3.09 | 0 | 2000 | 5.43 | 2000 | 5.23 | 1.9 × 10−1 | 0 | |

| PSO | 557.5 | 8.6 × 10−4 | 344 | 6.2 × 10−4 | 4.1 × 10−4 | 100 | 839.5 | 4.5 × 10−3 | 162 | 8.8 × 10−4 | 7.9 × 10−3 | 100 | |

| Griewang | MIC by LM | 154.7 | 6.2 × 10−14 | 38 | 1.2 × 10−14 | 2.8 × 10−14 | 100 | 106 | 8.4 × 10−14 | 92 | 8.7 × 10−14 | 4.4 × 10−14 | 100 |

| MIC by EM | 171.2 | 8.4 × 10−14 | 43 | 6.4 × 10−14 | 1.5 × 10−14 | 100 | 489 | 1.1 × 10−13 | 94 | 9.1 × 10−14 | 2.5 × 10−14 | 100 | |

| MIC by MM | 775.4 | 3.3 × 10−13 | 146 | 9.6 × 10−14 | 3.2 × 10−13 | 100 | 2000 | 0.27 | 2000 | 9.1 × 10−13 | 0.16 | 20 | |

| GA | 909.8 | 0.09 | 84 | 0.8 × 10−3 | 0.18 | 10 | 2000 | 0.21 | 2000 | 0.09 | 1.1 × 10−1 | 0 | |

| DE | 337.4 | 9.1 × 10−3 | 44 | 7.3 × 10−3 | 1.1 × 10−3 | 100 | 993 | 0.01 | 588 | 7.9 × 10−3 | 8.8 × 10−1 | 70 | |

| ES | 1000 | 0.36 | 1000 | 1.2 × 10−1 | 0.20 | 0 | 2000 | 0.81 | 2000 | 0.76 | 0.19 | 0 | |

| PSO | 555.2 | 0.02 | 258 | 6.6 × 10−2 | 2.8 × 10−2 | 30 | 2000 | 0.77 | 2000 | 0.37 | 3.1 × 10−2 | 0 | |

| Methods | Benchmarks | ||||||||

|---|---|---|---|---|---|---|---|---|---|

| Rastrigin | Ackley | Griewang | |||||||

| AvP | SD | SR% | AvP | SD | SR% | AvP | SD | SR% | |

| Xin Zhao et al., 2022 [55] | 2.1 × 10−13 | 4.1 × 10−14 | 100 | 8.2 × 10−15 | 1.3 × 10−15 | 100 | 3.78 × 10−13 | 1.7 × 10−13 | 100 |

| Chentoufi et al., 2021 [49] | 0.99 | 1.31 | 100 | 1.0 × 10−15 | 6.4 × 10−16 | 43 | 8.3 × 10−4 | 5.4 × 10−4 | 67 |

| MIC_LM | 7.8 × 10−4 | 2.4 × 10−4 | 100 | 4.4 × 10−15 | 0 | 100 | 8.4 × 10−14 | 4.4 × 10−14 | 100 |

| MIC_EM | 7.4 × 10−4 | 5.6 × 10−4 | 100 | 3.1 × 10−14 | 1.9 × 10−15 | 100 | 1.1 × 10−13 | 2.5 × 10−14 | 100 |

| MIC_MM | 0.46 | 1.32 | 60 | 2.48 | 0.20 | 0 | 0.27 | 0.16 | 20 |

| Methods | Traits | ||||

|---|---|---|---|---|---|

| Neu. | Ext. | Ope. | Agr. | Con. | |

| Mohammadi et al., 2010 [43] | N/A (63) | N/A (76.3) | N/A (57.9) | N/A (63) | N/A (72) |

| Mohammadi et al., 2012 [63] | N/A (65.9) | N/A (73.5) | N/A (60.1) | N/A (63.1) | N/A (71.3) |

| Chastagnol et al., 2012 [64] | 58 (N/A) | 75.5 (N/A) | 73.4 (N/A) | 65 (N/A) | 62.2 (N/A) |

| Mohammadi et al., 2015 [65] | N/A (66.1) | N/A (71.4) | N/A (58.6) | N/A (58.8) | N/A (72.5) |

| Solera-Urena et al., 2017 [66] | 65.1 (64.7) | 75 (75.1) | 59.1 (58.2) | 60.3 (60.2) | 75.7 (75.6) |

| Carbonneau et al., 2017 [67] | 70.8 (N/A) | 75.2 (N/A) | 56.3 (N/A) | 64.9 (N/A) | 63.8 (N/A) |

| Zhen-Tao Liu et al., 2020 [68] | N/A (69.2) | N/A (76.3) | N/A (74.7) | N/A (65.3) | N/A (73.3) |

| Our privuse work 2021 [24] | 77.1 (76.9) | 76.6 (72.9) | 81.2 (70.4) | 80.7 (68.7) | 78.5 (69.5) |

| Proposed method | 89.8 (80.5) | 82.2 (83.4) | 87.1 (84.7) | 85.8 (76.2) | 81.8 (72.6) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Jalaeian Zaferani, E.; Teshnehlab, M.; Khodadadian, A.; Heitzinger, C.; Vali, M.; Noii, N.; Wick, T. Hyper-Parameter Optimization of Stacked Asymmetric Auto-Encoders for Automatic Personality Traits Perception. Sensors 2022, 22, 6206. https://doi.org/10.3390/s22166206

Jalaeian Zaferani E, Teshnehlab M, Khodadadian A, Heitzinger C, Vali M, Noii N, Wick T. Hyper-Parameter Optimization of Stacked Asymmetric Auto-Encoders for Automatic Personality Traits Perception. Sensors. 2022; 22(16):6206. https://doi.org/10.3390/s22166206

Chicago/Turabian StyleJalaeian Zaferani, Effat, Mohammad Teshnehlab, Amirreza Khodadadian, Clemens Heitzinger, Mansour Vali, Nima Noii, and Thomas Wick. 2022. "Hyper-Parameter Optimization of Stacked Asymmetric Auto-Encoders for Automatic Personality Traits Perception" Sensors 22, no. 16: 6206. https://doi.org/10.3390/s22166206

APA StyleJalaeian Zaferani, E., Teshnehlab, M., Khodadadian, A., Heitzinger, C., Vali, M., Noii, N., & Wick, T. (2022). Hyper-Parameter Optimization of Stacked Asymmetric Auto-Encoders for Automatic Personality Traits Perception. Sensors, 22(16), 6206. https://doi.org/10.3390/s22166206