Microsoft Azure Kinect Calibration for Three-Dimensional Dense Point Clouds and Reliable Skeletons

Abstract

1. Introduction

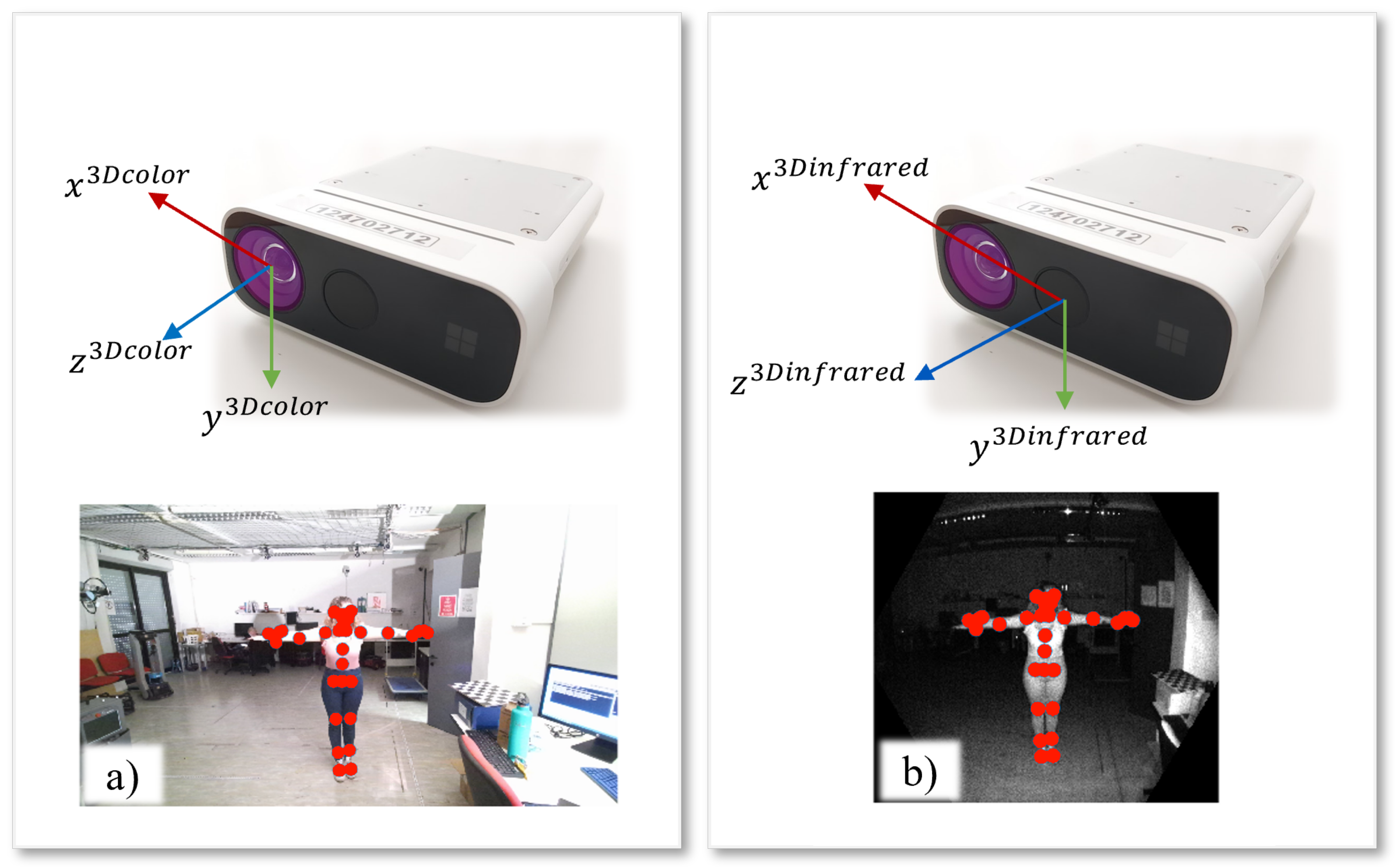

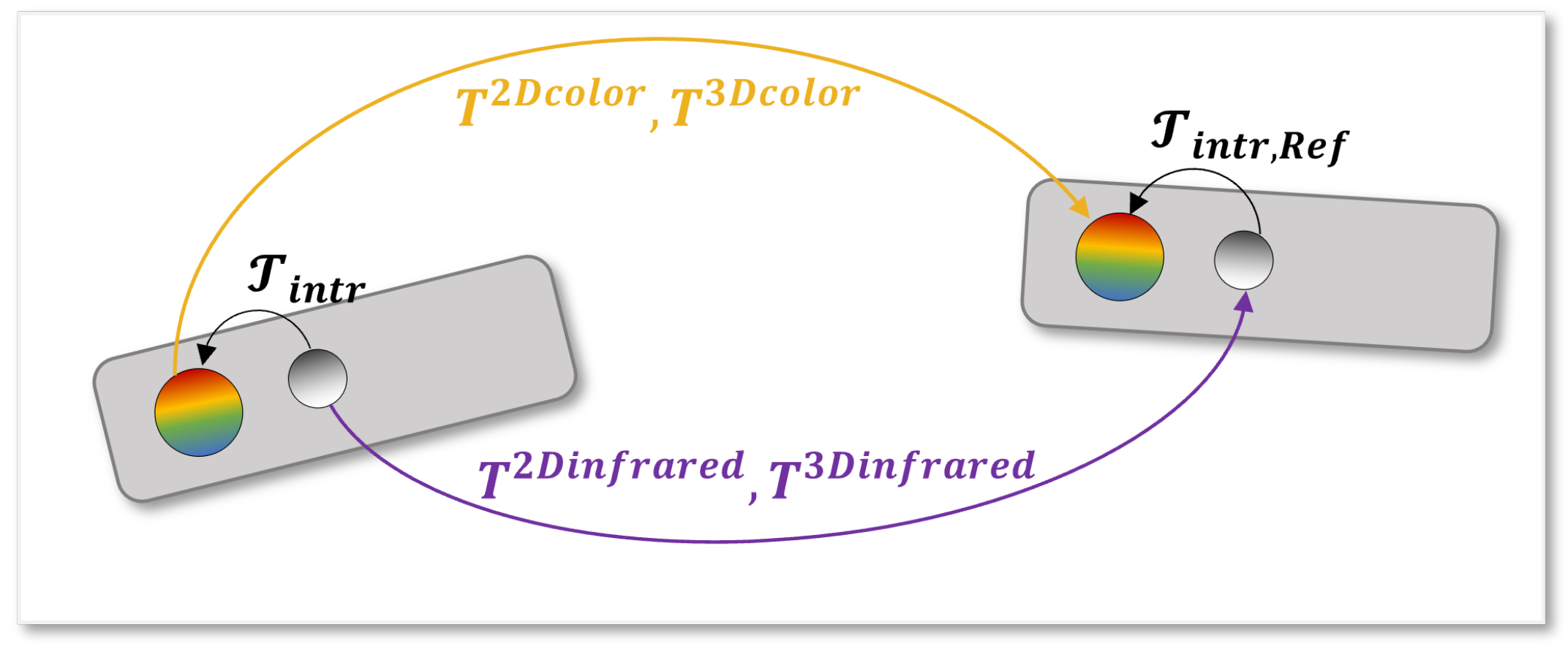

- A two-camera system composed of Azure Kinects has been considered and the specific physical characteristics of these sensors have been studied to devise different calibration methodologies.

- Four different methodologies, based on data from the color and infrared cameras with or without the associated depth information, have been compared in two real scenarios: dense point clouds of real objects for measurement analysis, and skeletal joints of people extracted by the SDK Body Tracking algorithm.

- A careful analysis of results provides a guideline for the best calibration techniques according to the element to be calibrated, i.e., point clouds with color or infrared resolutions and skeletal joints.

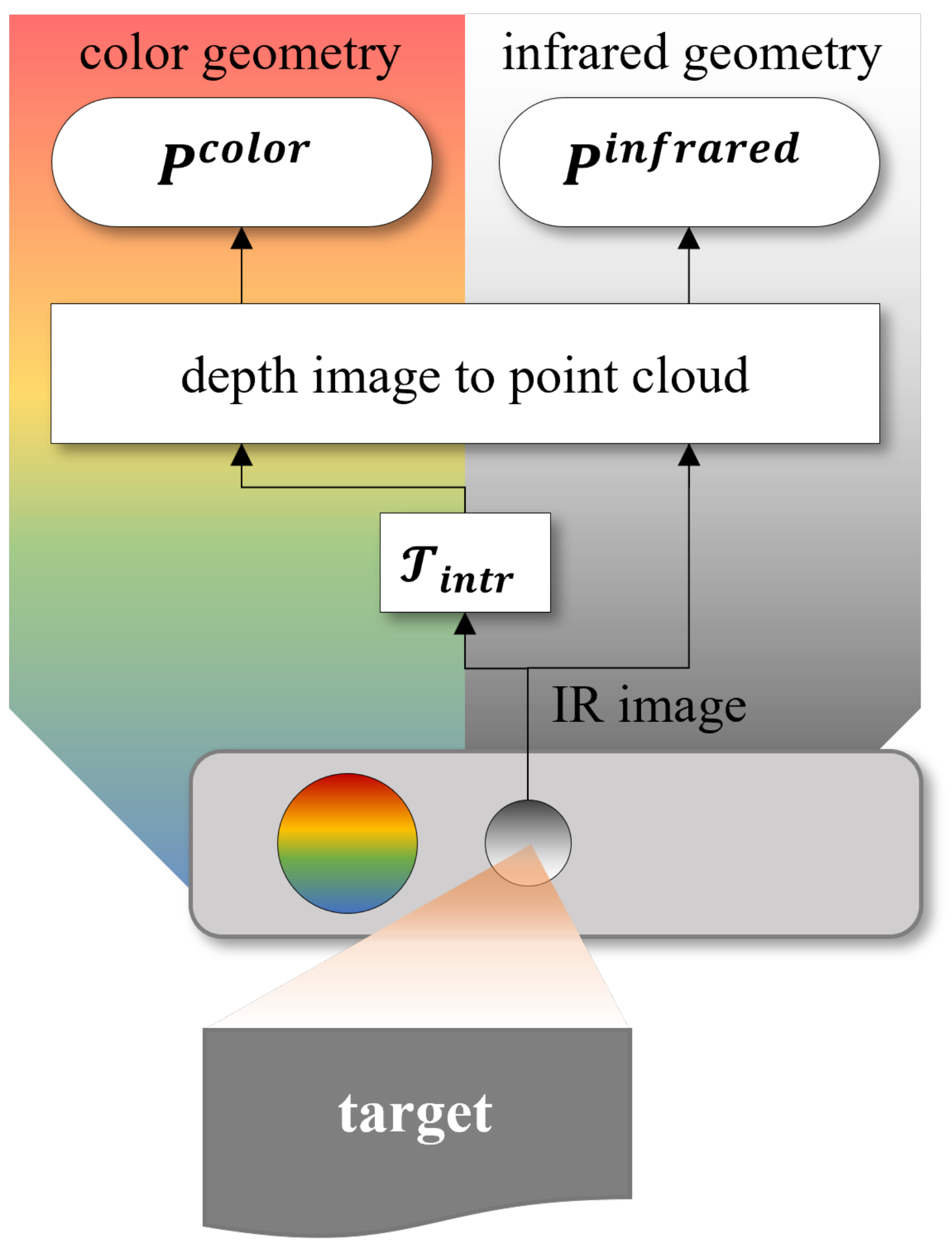

2. Methodology

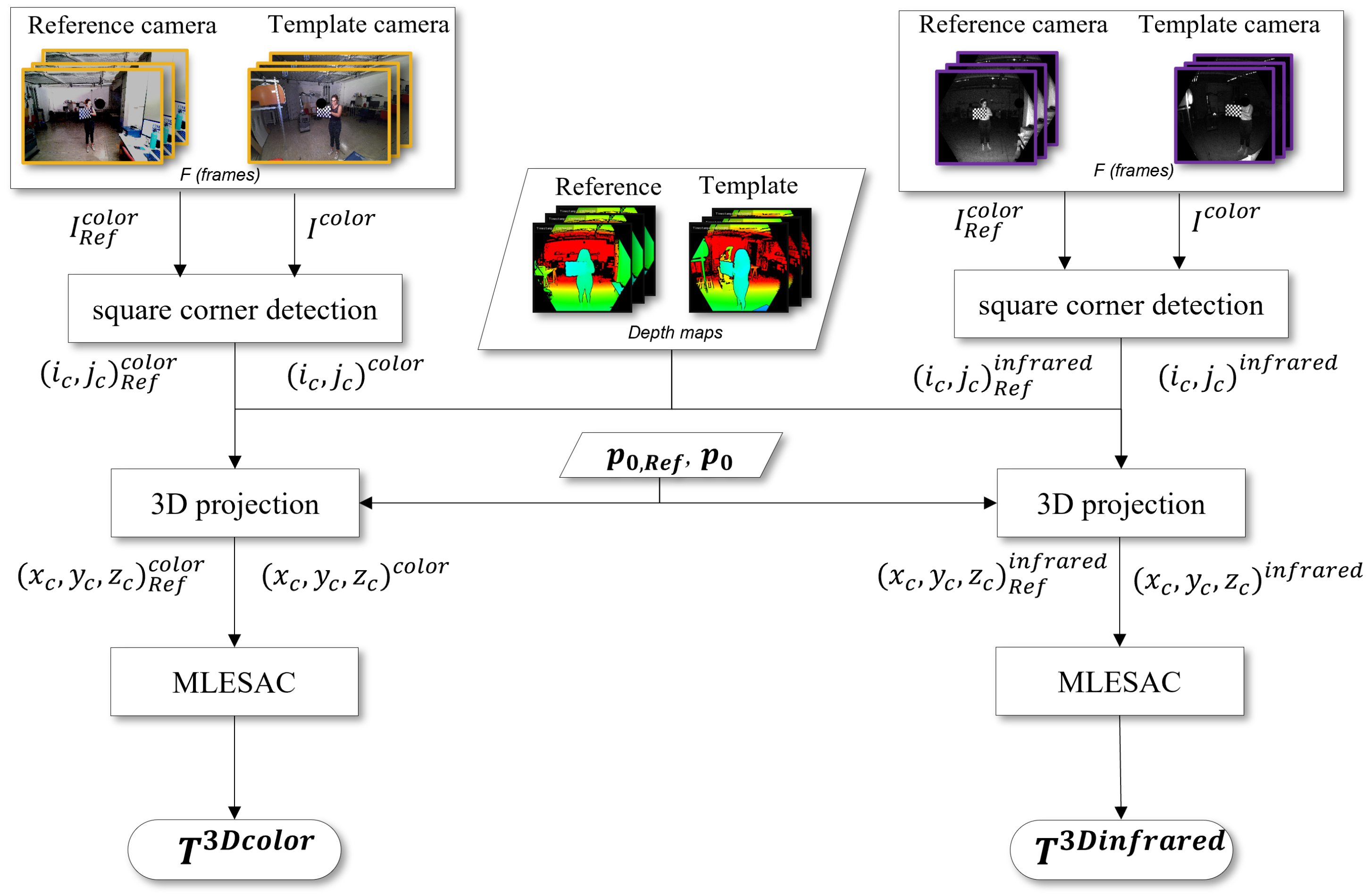

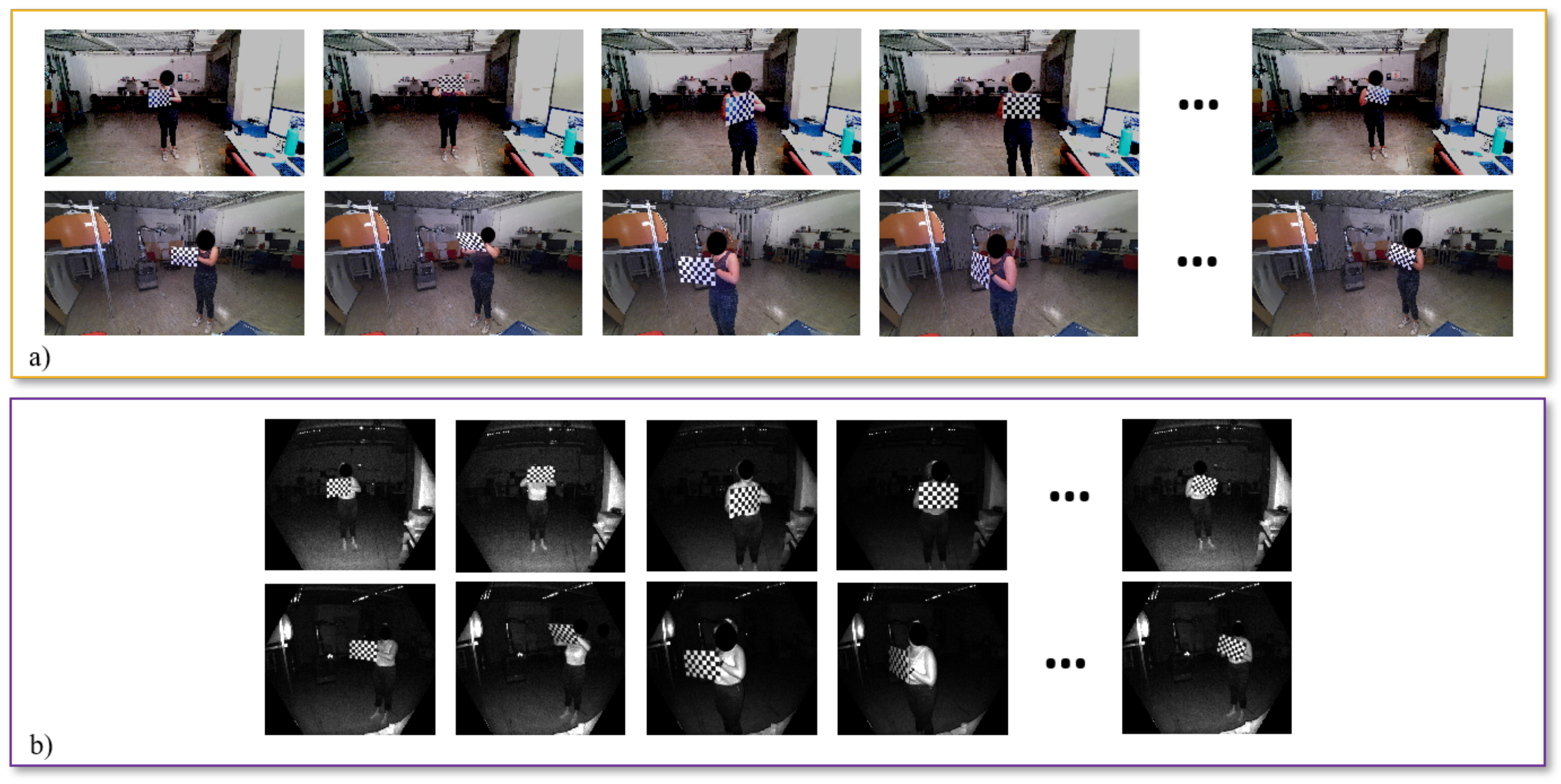

- if the chessboard corners are extracted from RGB images, i.e., with the geometry of the color cameras;

- if the chessboard corners are detected in the IR images, i.e., with the geometry of the infrared cameras.

- if the chessboard corners are taken from RGB images and then projected in the 3D space, using the geometry of the color camera;

- if the chessboard corners are extracted from the IR images and then projected in 3D, using the geometry of the infrared camera.
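The last two options rely on projecting a detected chessboard corner from the image plane into 3D space using its depth value. The following is a minimal sketch of that step, assuming an ideal pinhole camera without lens distortion; the Azure Kinect SDK applies its own calibrated camera model, which this sketch does not reproduce. The `Intrinsics` type, the `backproject` helper, and all numeric values are hypothetical and chosen only for illustration.

```python
from typing import NamedTuple, Tuple


class Intrinsics(NamedTuple):
    """Hypothetical pinhole intrinsics (focal lengths and principal point, in pixels)."""
    fx: float
    fy: float
    cx: float
    cy: float


def backproject(u: float, v: float, depth_mm: float, k: Intrinsics) -> Tuple[float, float, float]:
    """Map pixel (u, v) with depth along the optical axis (mm) to 3D camera coordinates (mm)."""
    x = (u - k.cx) * depth_mm / k.fx
    y = (v - k.cy) * depth_mm / k.fy
    return (x, y, depth_mm)


# Illustrative values only: a corner 60 px right of the principal point, 1 m away.
k = Intrinsics(fx=600.0, fy=600.0, cx=320.0, cy=240.0)
corner_3d = backproject(380.0, 240.0, 1000.0, k)
print(corner_3d)
```

The same function would be used with the color-camera intrinsics for corners found in RGB images and with the infrared-camera intrinsics for corners found in IR images, so that each 3D corner lives in the geometry of the camera it was detected in.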

2.1. 2D Calibration Procedures

2.2. 3D Calibration Procedures

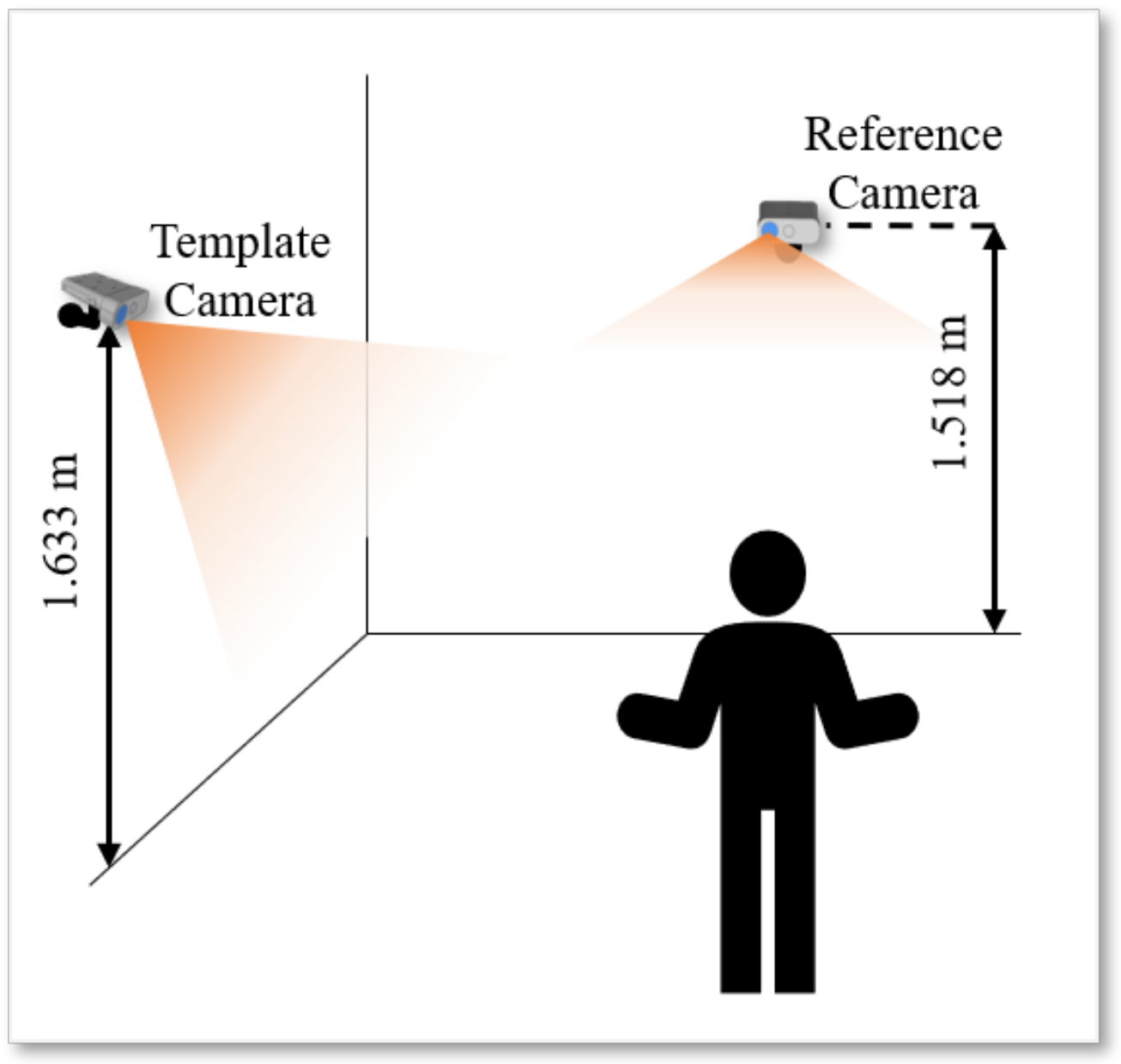

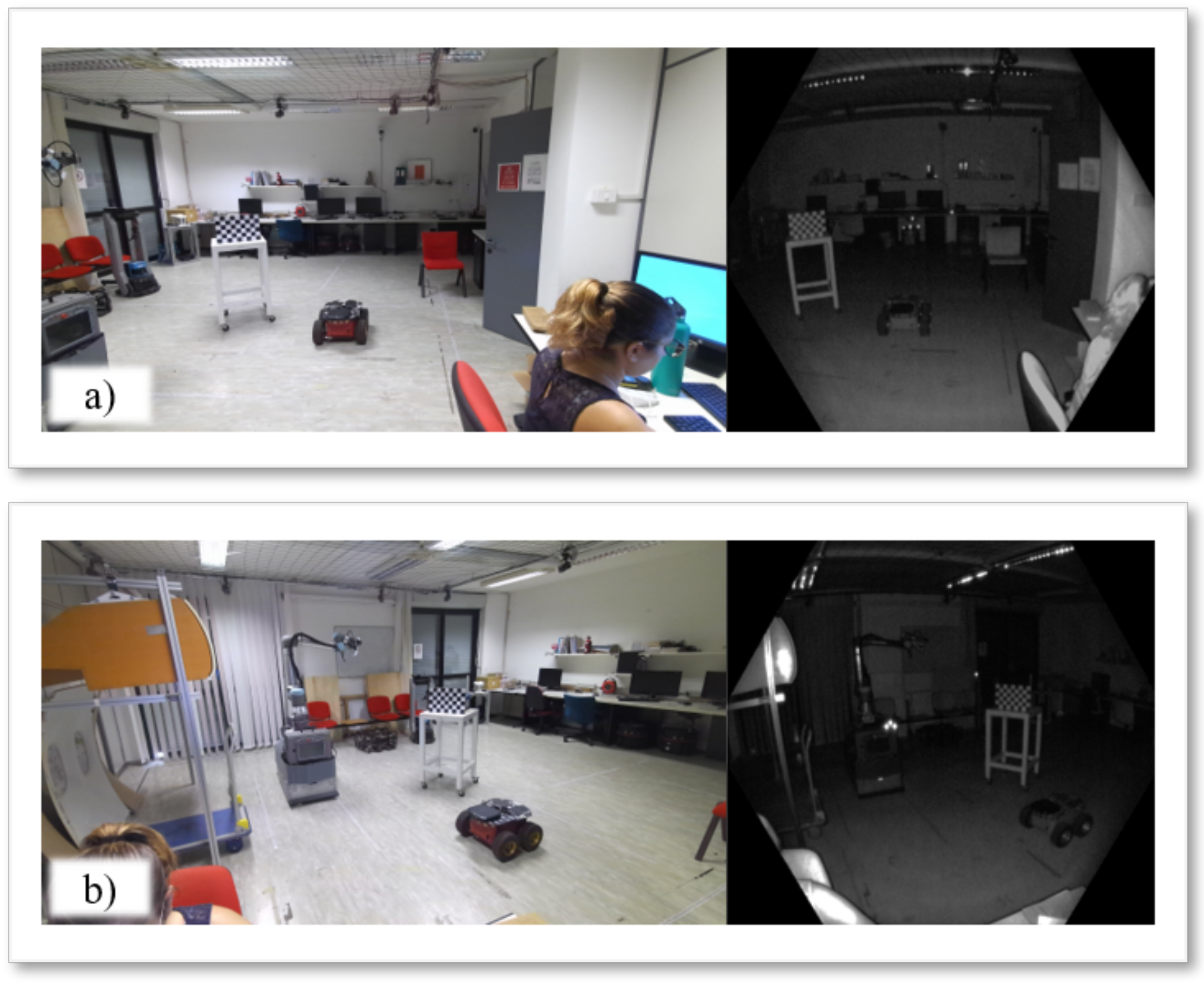

3. Experimental Setup

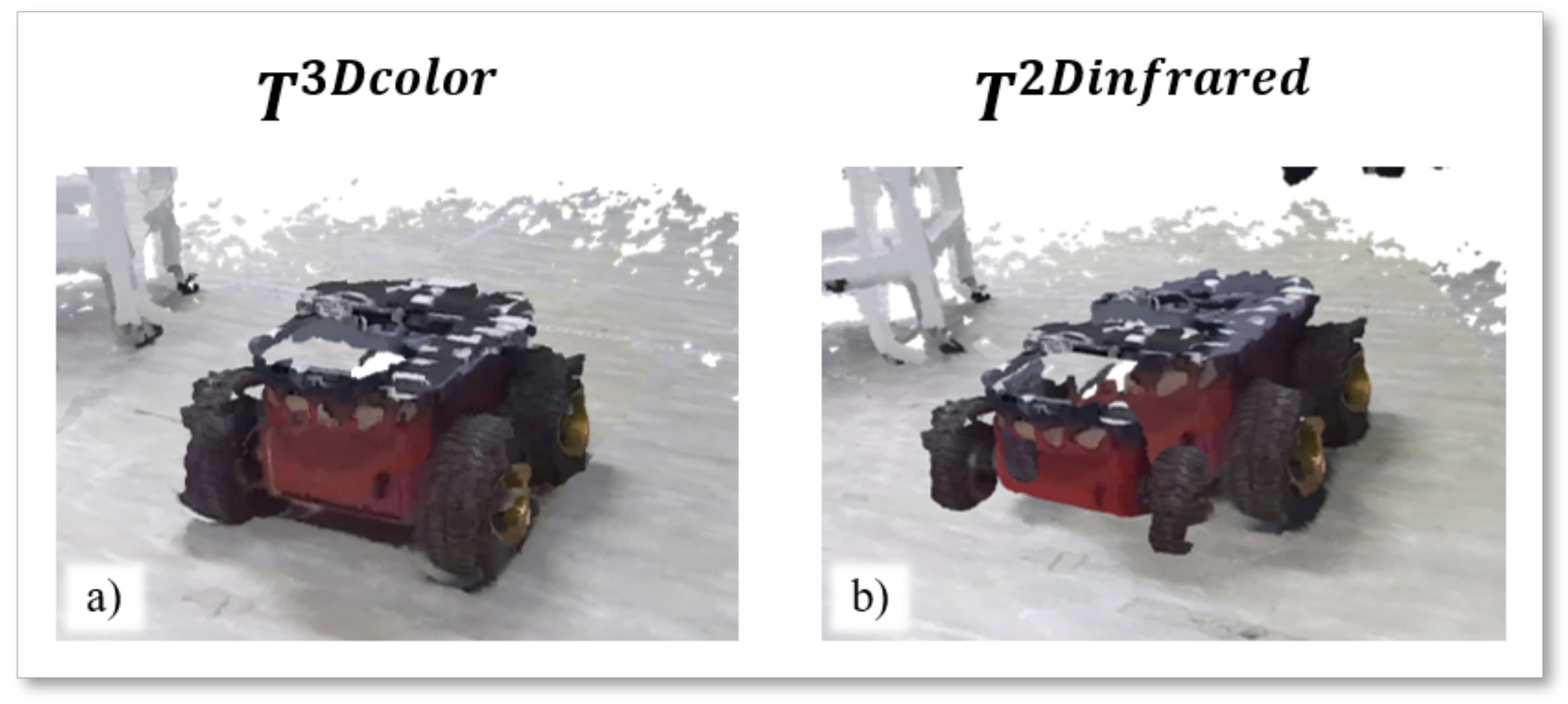

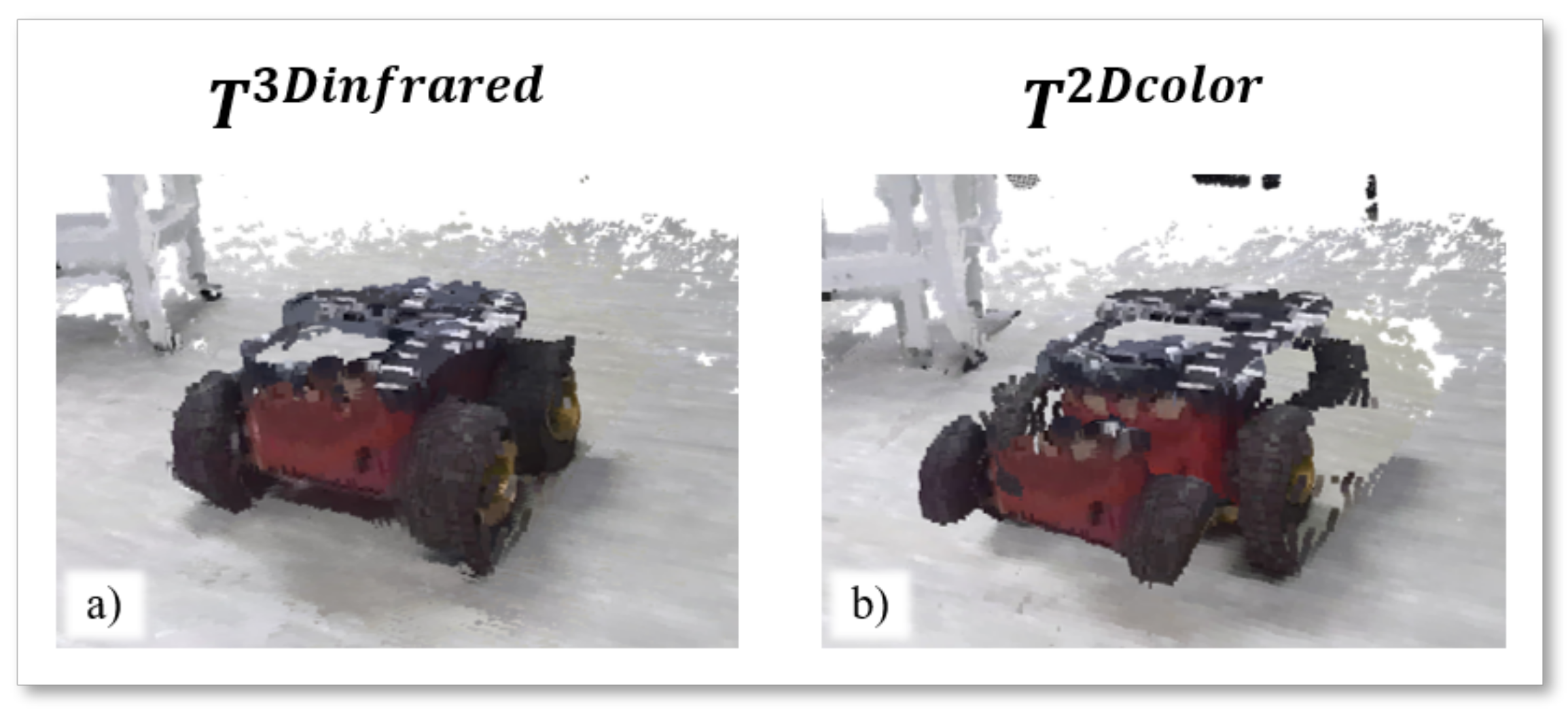

- To assess the capability of aligning point clouds, two analyses have been proposed: in a static scenario, a still object is placed in the FOVs of the two cameras; in a dynamic scenario, a moving target is framed simultaneously by the two cameras. After the calibration phase, the point clouds in both infrared and color geometries, grabbed by the Template camera, are transferred into the coordinate system of the Reference.
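Transferring the Template point cloud into the Reference coordinate system amounts to applying a rigid transformation (a rotation R and a translation t) to every point. The sketch below illustrates this under the assumption that the calibration output is available as a 3x3 rotation matrix and a translation vector in millimetres; the function name `apply_rigid` and the example transformation are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def apply_rigid(points: Sequence[Point], R: Sequence[Sequence[float]], t: Sequence[float]) -> List[Point]:
    """Return R @ p + t for every 3D point p (pure-Python, no external libraries)."""
    out = []
    for x, y, z in points:
        out.append(tuple(R[i][0] * x + R[i][1] * y + R[i][2] * z + t[i] for i in range(3)))
    return out


# Hypothetical extrinsics: 90 degree rotation about the Y axis plus a 500 mm shift along X.
R = [[0.0, 0.0, 1.0],
     [0.0, 1.0, 0.0],
     [-1.0, 0.0, 0.0]]
t = [500.0, 0.0, 0.0]

template_cloud = [(0.0, 0.0, 1000.0), (100.0, 50.0, 1000.0)]
reference_cloud = apply_rigid(template_cloud, R, t)
print(reference_cloud)
```

The same routine applies unchanged to skeletal joints, since each joint is just a 3D point expressed in the Template camera frame.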

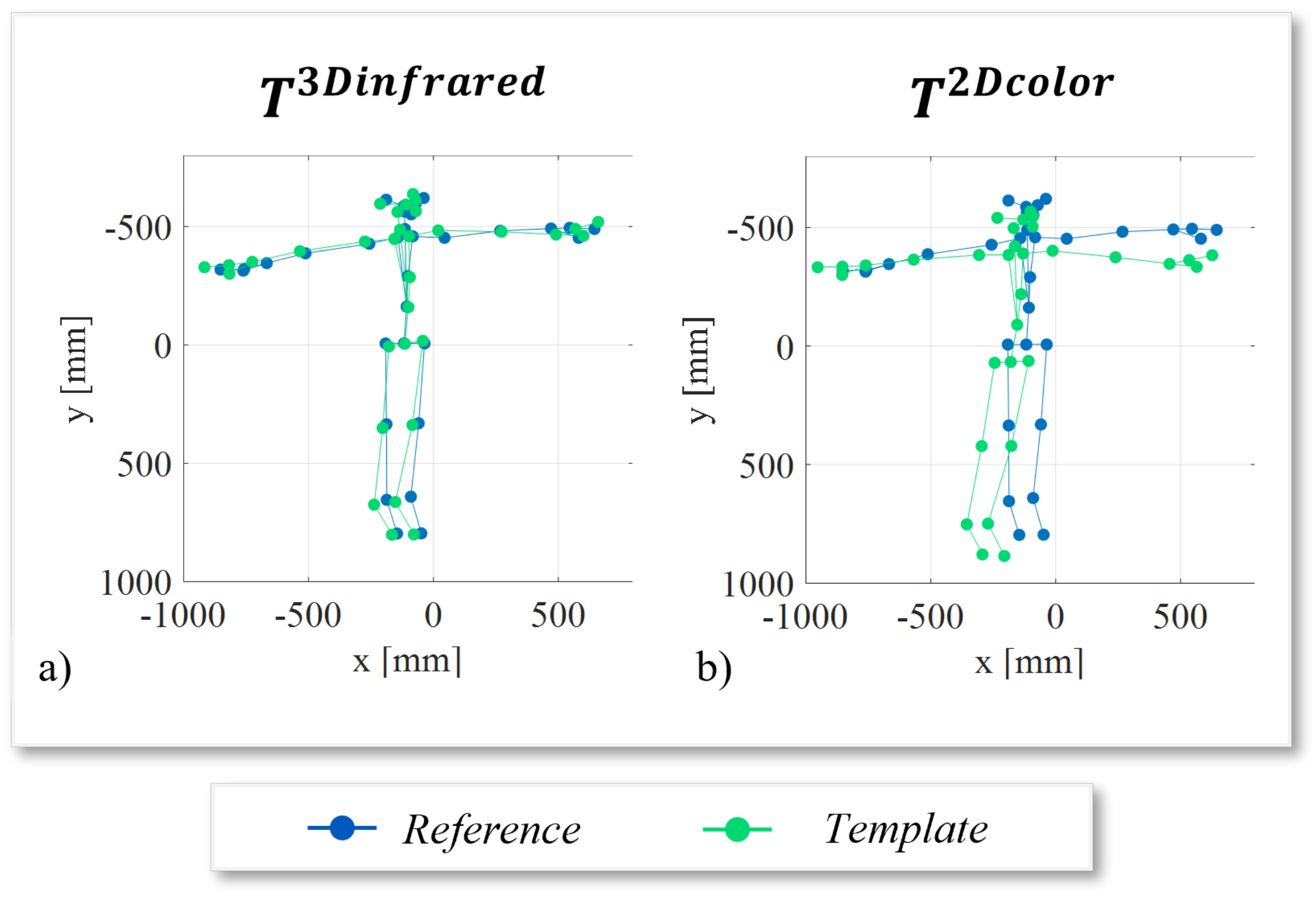

- A subject stands still with open arms in front of the two cameras, and the corresponding skeletal joints are extracted with the Azure Kinect Body Tracking library. The skeleton from the Template camera is transferred into the coordinate system of the Reference. In this case, ten consecutive frames have been collected to calculate the average position of each joint, which reduces intrinsic errors [11] and averages out involuntary movements of the subject.
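The temporal averaging of joints can be sketched as follows. This is an illustrative example only: it assumes each frame is a dictionary mapping a joint label to its 3D position in millimetres, and the joint labels, the `average_joints` helper, and the synthetic jitter are hypothetical rather than taken from the Body Tracking SDK.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]


def average_joints(frames: List[Dict[str, Point]]) -> Dict[str, Point]:
    """Average each joint position over all frames (joints must appear in every frame)."""
    n = len(frames)
    if n == 0:
        raise ValueError("at least one frame is required")
    return {
        name: tuple(sum(frame[name][i] for frame in frames) / n for i in range(3))
        for name in frames[0]
    }


# Ten synthetic frames with a small zero-mean jitter around a fixed pose.
jitter = [-2.0, -1.0, 0.0, 1.0, 2.0, -2.0, -1.0, 0.0, 1.0, 2.0]
frames = [
    {"head": (0.0, -600.0 + d, 2000.0), "wrist_left": (100.0 + d, 200.0, 1500.0 - d)}
    for d in jitter
]
mean_pose = average_joints(frames)
print(mean_pose)
```

Averaging only removes zero-mean noise; a systematic offset in the tracked joints would survive it.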

4. Calibration Analysis

- In the point cloud experiment, the two compared point sets are the 3D coordinates of points in correspondence taken from the Reference point cloud and from the Template one after the application of the estimated transformation. J is the total number of points in correspondence.

- In the skeleton experiment, the two compared point sets are homologous 3D joint coordinates in the same reference system. Here, J is the total number of joints.
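As a hedged illustration of how such an error could be computed, the sketch below assumes the standard root-mean-square error over the Euclidean distances of J corresponding 3D points. The exact formula is not reproduced in this text, so this definition, the `rmse` helper, and the sample coordinates are assumptions.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def rmse(reference: Sequence[Point], template: Sequence[Point]) -> float:
    """RMSE of Euclidean distances between J corresponding 3D points (same units as input)."""
    if len(reference) != len(template) or len(reference) == 0:
        raise ValueError("point sets must be non-empty and of equal length")
    squared_sum = 0.0
    for p, q in zip(reference, template):
        squared_sum += sum((a - b) ** 2 for a, b in zip(p, q))
    return math.sqrt(squared_sum / len(reference))


# Two correspondences, each 5 mm apart, so the RMSE is 5 mm.
reference_points = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
template_points = [(3.0, 4.0, 0.0), (0.0, 0.0, 5.0)]
error_mm = rmse(reference_points, template_points)
print(error_mm)
```

The same function covers both experiments: the inputs are either corresponding cloud points or homologous joints, both already expressed in the Reference coordinate system.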

4.1. Point Cloud Experiment

4.2. Skeleton Experiment

5. Discussion

- In general, 3D procedures outperform 2D ones as depth information is added to the calibration. This is due to the effectiveness of depth estimation and intrinsic transformations used to project 2D image points in the 3D space.

- The alignment of point clouds in the geometry of the color camera has the lowest error value when using a calibration procedure working in 3D starting from RGB images, since both the point cloud in color geometry and the chessboard corner coordinates enabling the calibration follow the same interpolation procedures carried out by the general SDK functions.

- The alignment of point clouds in the geometry of the infrared camera has the lowest error when the calibration starts from IR images. Even in this case, the calibration performed in the same geometry as the point cloud produces the best result.

- The alignment of skeletons shows the best result when calibration is performed in 3D starting from IR images. This further confirms the previous statement.

- In all experiments, the standard deviations of the RMSE values show that the variability in the error computations is always lower than the improvement obtained in aligning both point clouds and skeletal joints.

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Cicirelli, G.; Attolico, C.; Guaragnella, C.; D’Orazio, T. A kinect-based gesture recognition approach for a natural human robot interface. Int. J. Adv. Robot. Syst. 2015, 12, 22.

- Da Silva Neto, J.G.; da Lima Silva, P.J.; Figueredo, F.; Teixeira, J.M.X.N.; Teichrieb, V. Comparison of RGB-D sensors for 3D reconstruction. In Proceedings of the 2020 22nd Symposium on Virtual and Augmented Reality (SVR), Porto de Galinhas, Brazil, 7–10 November 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 252–261.

- Nicora, M.L.; André, E.; Berkmans, D.; Carissoli, C.; D’Orazio, T.; Delle Fave, A.; Gebhard, P.; Marani, R.; Mira, R.M.; Negri, L.; et al. A human-driven control architecture for promoting good mental health in collaborative robot scenarios. In Proceedings of the 2021 30th IEEE International Conference on Robot & Human Interactive Communication (RO-MAN), Vancouver, BC, Canada, 8–12 August 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 285–291.

- Ahad, M.A.R.; Antar, A.D.; Shahid, O. Vision-based Action Understanding for Assistive Healthcare: A Short Review. In Proceedings of the CVPR Workshops, Long Beach, CA, USA, 16–20 June 2019; pp. 1–11.

- Cicirelli, G.; Marani, R.; Petitti, A.; Milella, A.; D’Orazio, T. Ambient Assisted Living: A Review of Technologies, Methodologies and Future Perspectives for Healthy Aging of Population. Sensors 2021, 21, 3549.

- Ni, Z.; Shen, Z.; Guo, C.; Xiong, G.; Nyberg, T.; Shang, X.; Li, S.; Wang, Y. The application of the depth camera in the social manufacturing: A review. In Proceedings of the 2016 IEEE International Conference on Service Operations and Logistics, and Informatics (SOLI), Beijing, China, 10–12 July 2016; pp. 66–70.

- Weßeler, P.; Kaiser, B.; te Vrugt, J.; Lechler, A.; Verl, A. Camera based path planning for low quantity-high variant manufacturing with industrial robots. In Proceedings of the 2018 25th International Conference on Mechatronics and Machine Vision in Practice (M2VIP), Stuttgart, Germany, 20–22 November 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 1–6.

- Alhayani, B.S. Visual sensor intelligent module based image transmission in industrial manufacturing for monitoring and manipulation problems. J. Intell. Manuf. 2021, 32, 597–610.

- D’Orazio, T.; Marani, R.; Renò, V.; Cicirelli, G. Recent trends in gesture recognition: How depth data has improved classical approaches. Image Vis. Comput. 2016, 52, 56–72.

- Sun, Y.; Weng, Y.; Luo, B.; Li, G.; Tao, B.; Jiang, D.; Chen, D. Gesture recognition algorithm based on multi-scale feature fusion in RGB-D images. IET Image Process. 2020, 14, 3662–3668.

- Microsoft Azure Kinect SDK; Azure Kinect SDK v1.4.1; Microsoft: Redmond, WA, USA, 2020.

- Albert, J.A.; Owolabi, V.; Gebel, A.; Brahms, C.M.; Granacher, U.; Arnrich, B. Evaluation of the pose tracking performance of the azure kinect and kinect v2 for gait analysis in comparison with a gold standard: A pilot study. Sensors 2020, 20, 5104.

- Kramer, J.B.; Sabalka, L.; Rush, B.; Jones, K.; Nolte, T. Automated Depth Video Monitoring For Fall Reduction: A Case Study. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 14–19 June 2020; pp. 294–295.

- Lee, C.; Kim, J.; Cho, S.; Kim, J.; Yoo, J.; Kwon, S. Development of real-time hand gesture recognition for tabletop holographic display interaction using azure kinect. Sensors 2020, 20, 4566.

- McGlade, J.; Wallace, L.; Hally, B.; White, A.; Reinke, K.; Jones, S. An early exploration of the use of the Microsoft Azure Kinect for estimation of urban tree Diameter at Breast Height. Remote Sens. Lett. 2020, 11, 963–972.

- Uhlár, Á.; Ambrus, M.; Kékesi, M.; Fodor, E.; Grand, L.; Szathmáry, G.; Rácz, K.; Lacza, Z. Kinect Azure-Based Accurate Measurement of Dynamic Valgus Position of the Knee—A Corrigible Predisposing Factor of Osteoarthritis. Appl. Sci. 2021, 11, 5536.

- Romeo, L.; Marani, R.; Malosio, M.; Perri, A.G.; D’Orazio, T. Performance analysis of body tracking with the microsoft azure kinect. In Proceedings of the 2021 29th Mediterranean Conference on Control and Automation (MED), Puglia, Italy, 22–25 June 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 572–577.

- Tölgyessy, M.; Dekan, M.; Chovanec, L.; Hubinský, P. Evaluation of the Azure Kinect and Its Comparison to Kinect V1 and Kinect V2. Sensors 2021, 21, 413.

- Olagoke, A.S.; Ibrahim, H.; Teoh, S.S. Literature survey on multi-camera system and its application. IEEE Access 2020, 8, 172892–172922.

- Di Leo, G.; Liguori, C.; Pietrosanto, A.; Sommella, P. A vision system for the online quality monitoring of industrial manufacturing. Opt. Lasers Eng. 2017, 89, 162–168.

- Long, L.; Dongri, S. Review of Camera Calibration Algorithms. In Advances in Computer Communication and Computational Sciences; Bhatia, S.K., Tiwari, S., Mishra, K.K., Trivedi, M.C., Eds.; Springer: Singapore, 2019; pp. 723–732.

- Cui, Y.; Zhou, F.; Wang, Y.; Liu, L.; Gao, H. Precise calibration of binocular vision system used for vision measurement. Opt. Express 2014, 22, 9134–9149.

- Kümmerle, J.; Kühner, T.; Lauer, M. Automatic calibration of multiple cameras and depth sensors with a spherical target. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 1–8.

- Wohlfeil, J.; Grießbach, D.; Ernst, I.; Baumbach, D.; Dahlke, D. Automatic Camera System Calibration with a Chessboard Enabling Full Image Coverage. Int. Arch. Photogramm. Remote. Sens. Spat. Inf. Sci. 2019, 42, 1715–1722.

- Darwish, W.; Bolsée, Q.; Munteanu, A. Robust Calibration of a Multi-View Azure Kinect Scanner Based on Spatial Consistency. In Proceedings of the 2020 International Conference on 3D Immersion (IC3D), Brussels, Belgium, 15 December 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 1–6.

- Cioppa, A.; Deliege, A.; Magera, F.; Giancola, S.; Barnich, O.; Ghanem, B.; Van Droogenbroeck, M. Camera calibration and player localization in soccernet-v2 and investigation of their representations for action spotting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 4537–4546.

- Hammarstedt, P.; Sturm, P.; Heyden, A. Degenerate cases and closed-form solutions for camera calibration with one-dimensional objects. In Proceedings of the Tenth IEEE International Conference on Computer Vision (ICCV’05), Beijing, China, 17–21 October 2005; IEEE: Piscataway, NJ, USA, 2005; Volume 1, pp. 317–324.

- Sturm, P.F.; Maybank, S.J. On plane-based camera calibration: A general algorithm, singularities, applications. In Proceedings of the 1999 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (Cat. No PR00149), Fort Collins, CO, USA, 23–25 June 1999; IEEE: Piscataway, NJ, USA, 1999; Volume 1, pp. 432–437.

- Agrawal, M.; Davis, L.S. Camera calibration using spheres: A semi-definite programming approach. In Proceedings of the IEEE International Conference on Computer Vision, Madison, WI, USA, 18–20 June 2003; Volume 3, p. 782.

- De França, J.A.; Stemmer, M.R.; França, M.B.D.M.; Piai, J.C. A new robust algorithmic for multi-camera calibration with a 1D object under general motions without prior knowledge of any camera intrinsic parameter. Pattern Recognit. 2012, 45, 3636–3647.

- Bu, L.; Huo, H.; Liu, X.; Bu, F. Concentric circle grids for camera calibration with considering lens distortion. Opt. Lasers Eng. 2021, 140, 106527.

- Ha, H.; Perdoch, M.; Alismail, H.; So Kweon, I.; Sheikh, Y. Deltille grids for geometric camera calibration. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 5344–5352.

- Sagitov, A.; Shabalina, K.; Sabirova, L.; Li, H.; Magid, E. ARTag, AprilTag and CALTag Fiducial Marker Systems: Comparison in a Presence of Partial Marker Occlusion and Rotation. In Proceedings of the ICINCO (2), Madrid, Spain, 26–28 July 2017; pp. 182–191.

- Li, B.; Heng, L.; Koser, K.; Pollefeys, M. A multiple-camera system calibration toolbox using a feature descriptor-based calibration pattern. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems, Tokyo, Japan, 3–7 November 2013; IEEE: Piscataway, NJ, USA, 2013; pp. 1301–1307.

- Sun, J.; Chen, X.; Gong, Z.; Liu, Z.; Zhao, Y. Accurate camera calibration with distortion models using sphere images. Opt. Laser Technol. 2015, 65, 83–87.

- Shin, K.Y.; Mun, J.H. A multi-camera calibration method using a 3-axis frame and wand. Int. J. Precis. Eng. Manuf. 2012, 13, 283–289.

- Halloran, B.; Premaratne, P.; Vial, P.J. Robust one-dimensional calibration and localisation of a distributed camera sensor network. Pattern Recognit. 2020, 98, 107058.

- Rameau, F.; Park, J.; Bailo, O.; Kweon, I.S. MC-Calib: A generic and robust calibration toolbox for multi-camera systems. Comput. Vis. Image Underst. 2022, 217, 103353.

- Perez, A.J.; Perez-Cortes, J.C.; Guardiola, J.L. Simple and precise multi-view camera calibration for 3D reconstruction. Comput. Ind. 2020, 123, 103256.

- Lee, S.H.; Yoo, J.; Park, M.; Kim, J.; Kwon, S. Robust extrinsic calibration of multiple RGB-D cameras with body tracking and feature matching. Sensors 2021, 21, 1013.

- Zhang, C.; Huang, T.; Zhao, Q. A new model of RGB-D camera calibration based on 3D control field. Sensors 2019, 19, 5082.

- Ferstl, D.; Reinbacher, C.; Riegler, G.; Rüther, M.; Bischof, H. Learning Depth Calibration of Time-of-Flight Cameras. In Proceedings of the BMVC, Swansea, UK, 7–10 September 2015; pp. 1–102.

- Microsoft Azure Kinect SDK; Azure Kinect Body Tracking SDK v1.1.0; Microsoft: Redmond, WA, USA, 2021.

- Lin, K.; Wang, L.; Luo, K.; Chen, Y.; Liu, Z.; Sun, M.T. Cross-domain complementary learning using pose for multi-person part segmentation. IEEE Trans. Circuits Syst. Video Technol. 2020, 31, 1066–1078.

- Microsoft Azure Kinect SDK; Azure Kinect SDK Functions Documentation; Microsoft: Redmond, WA, USA, 2020.

- Douskos, V.; Kalisperakis, I.; Karras, G. Automatic Calibration of Digital Cameras Using Planar Chess-Board Patterns. Available online: https://www.researchgate.net/publication/228345254_Automatic_calibration_of_digital_cameras_using_planar_chess-board_patterns (accessed on 7 June 2022).

- Geiger, A.; Moosmann, F.; Car, Ö.; Schuster, B. Automatic camera and range sensor calibration using a single shot. In Proceedings of the 2012 IEEE International Conference on Robotics and Automation, Saint Paul, MN, USA, 14–18 May 2012; IEEE: Piscataway, NJ, USA, 2012; pp. 3936–3943.

- Zhang, Z. A flexible new technique for camera calibration. IEEE Trans. Pattern Anal. Mach. Intell. 2000, 22, 1330–1334.

- Zhang, L.; Rastgar, H.; Wang, D.; Vincent, A. Maximum Likelihood Estimation sample consensus with validation of individual correspondences. In Proceedings of the International Symposium on Visual Computing, Las Vegas, NV, USA, 30 November–2 December 2009; Springer: Berlin/Heidelberg, Germany, 2009; pp. 447–456.

- Chum, O.; Matas, J.; Kittler, J. Locally optimized RANSAC. In Proceedings of the Joint Pattern Recognition Symposium, Magdeburg, Germany, 10–12 September 2003; Springer: Berlin/Heidelberg, Germany, 2003; pp. 236–243.

- Vinayak, R.K.; Kong, W.; Valiant, G.; Kakade, S. Maximum likelihood estimation for learning populations of parameters. In Proceedings of the International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 6448–6457.

| | | | | |
|---|---|---|---|---|
| 24.427 | 0.918 | 45.485 | 0.804 | |
| 37.283 | 0.955 | 20.162 | 0.592 | |
| 21.426 | 0.608 | 36.833 | 0.735 | |
| 33.194 | 0.758 | 9.872 | 0.268 | |

| | | | | |
|---|---|---|---|---|
| 25.340 | 0.666 | 36.683 | 2.383 | |
| 39.446 | 2.299 | 13.046 | 0.765 | |
| 20.868 | 1.233 | 33.122 | 2.198 | |
| 34.039 | 2.024 | 7.429 | 0.606 | |

| Joints of the Skeleton | | |
|---|---|---|
| 124.602 | 1.349 | |
| 44.256 | 4.640 | |
| 111.247 | 1.889 | |
| 35.410 | 5.490 | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Romeo, L.; Marani, R.; Perri, A.G.; D’Orazio, T. Microsoft Azure Kinect Calibration for Three-Dimensional Dense Point Clouds and Reliable Skeletons. Sensors 2022, 22, 4986. https://doi.org/10.3390/s22134986
