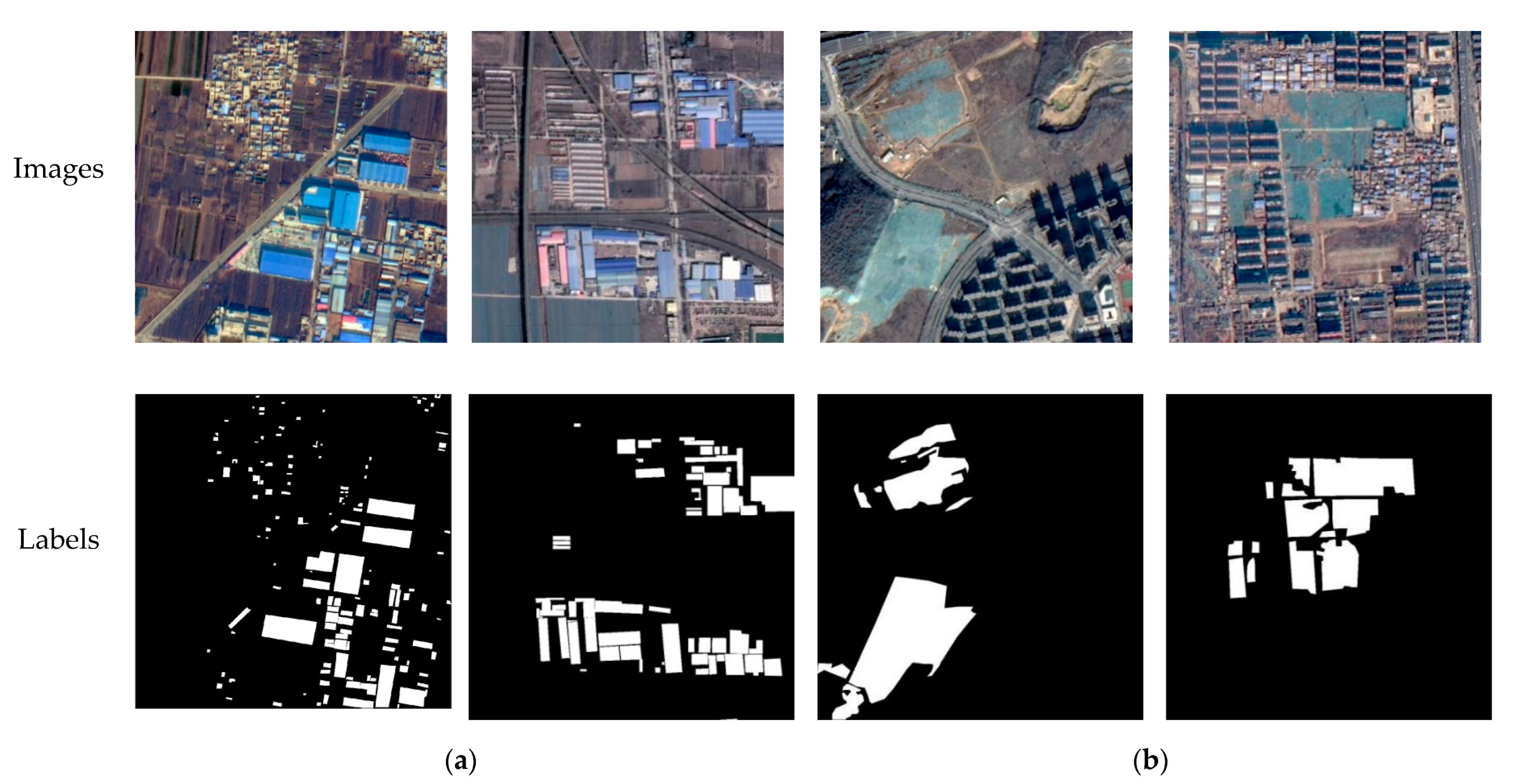

2.1. Data Labeling

Our sample data come from the multi-spectral remote sensing images of a GF2 satellite, acquired over Beijing, China. We used the LabelMe annotation tool to manually label hazard sources along the high-speed railway and to generate binary label images. The categories of hazard sources in this paper include color-coated steel houses and dust-proof nets.

Color-coated steel houses are typical temporary urban buildings made of color-coated steel sheets, mainly in blue, white, and red. In high-resolution remote sensing images, they usually appear bright, and their shape is mostly rectangular or a combination of rectangles. Dust-proof nets are mainly used for dust control and environmental protection: exposed surfaces, dust-prone materials, and similar areas in cities are usually required to be covered by them. Dust-proof nets are generally made of light green polyethylene. Here, we define hazard source pixels as positive samples and all other pixels as negative samples, and generate binary label images, as shown in Figure 2.

The original images in the hazard source dataset are fusion images of the panchromatic and multi-spectral bands at a spatial resolution of 0.8 m, using the red, green, and blue bands. A total of 153 image patches of 1000 × 1000 pixels containing color-coated steel houses and 139 image patches containing dust-proof nets were selected for the dataset. With the data divided in the proportion 7:2:1 into training, validation, and testing sets, we finally obtained 153, 43, and 21 color-coated steel house samples and 139, 39, and 19 dust-proof net samples. Data augmentation was then performed on the training samples by rotation by 90°, 180°, and 270°, horizontal and vertical flipping, and random cropping, yielding 3672 and 3336 image patches of 512 × 512 pixels, respectively. The specific information is shown in Table 1.
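The augmentation step can be sketched as follows. This is a minimal illustration rather than the authors' pipeline: the function name `augment_patch`, the choice of one random 512 × 512 crop per transformed view, and the synthetic input are assumptions. The text only states which transformations were used and implies that each training set grew by a factor of 24 (3672 / 153 = 3336 / 139 = 24).

```python
import numpy as np

def augment_patch(image, label, crop=512, rng=None):
    """Return augmented (image, label) pairs for one H x W x C patch.

    Views: the original, rotations by 90/180/270 degrees, and horizontal
    and vertical flips. Each view is then randomly cropped to crop x crop.
    The number of crops per view (one here) is an assumption.
    """
    rng = np.random.default_rng() if rng is None else rng
    views = [(image, label)]
    for k in (1, 2, 3):  # k quarter-turns
        views.append((np.rot90(image, k), np.rot90(label, k)))
    views.append((np.fliplr(image), np.fliplr(label)))  # horizontal flip
    views.append((np.flipud(image), np.flipud(label)))  # vertical flip

    out = []
    for img, lab in views:
        h, w = lab.shape[:2]
        top = rng.integers(0, h - crop + 1)
        left = rng.integers(0, w - crop + 1)
        # Use the same window for image and label so they stay aligned.
        out.append((img[top:top + crop, left:left + crop],
                    lab[top:top + crop, left:left + crop]))
    return out

# Synthetic stand-in for one 1000 x 1000 RGB patch and its binary label.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(1000, 1000, 3), dtype=np.uint8)
label = (rng.random((1000, 1000)) > 0.9).astype(np.uint8)
pairs = augment_patch(image, label, rng=rng)
```

Applying every geometric transform to the image and its label together keeps the binary mask registered with the pixels it annotates.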

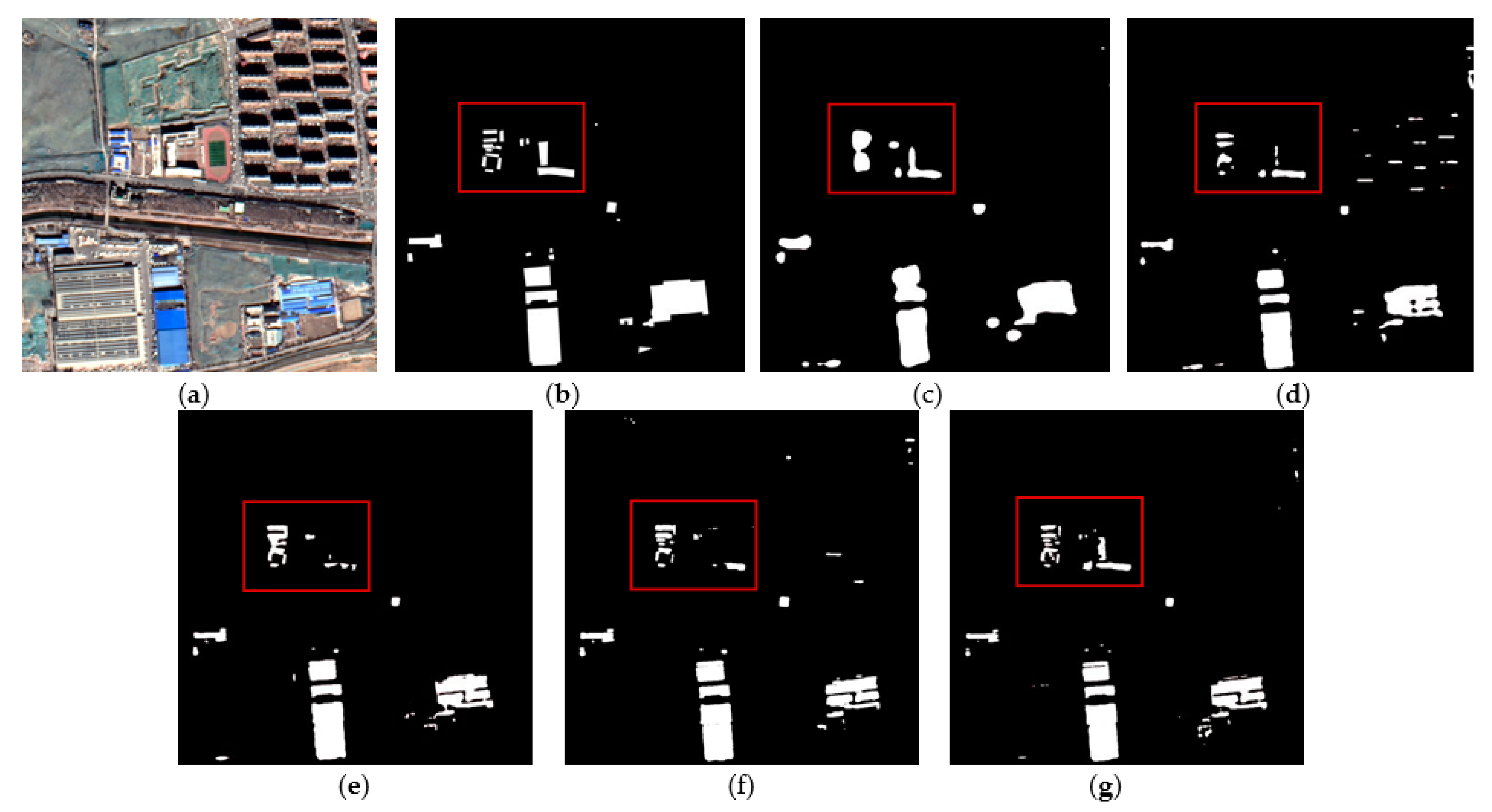

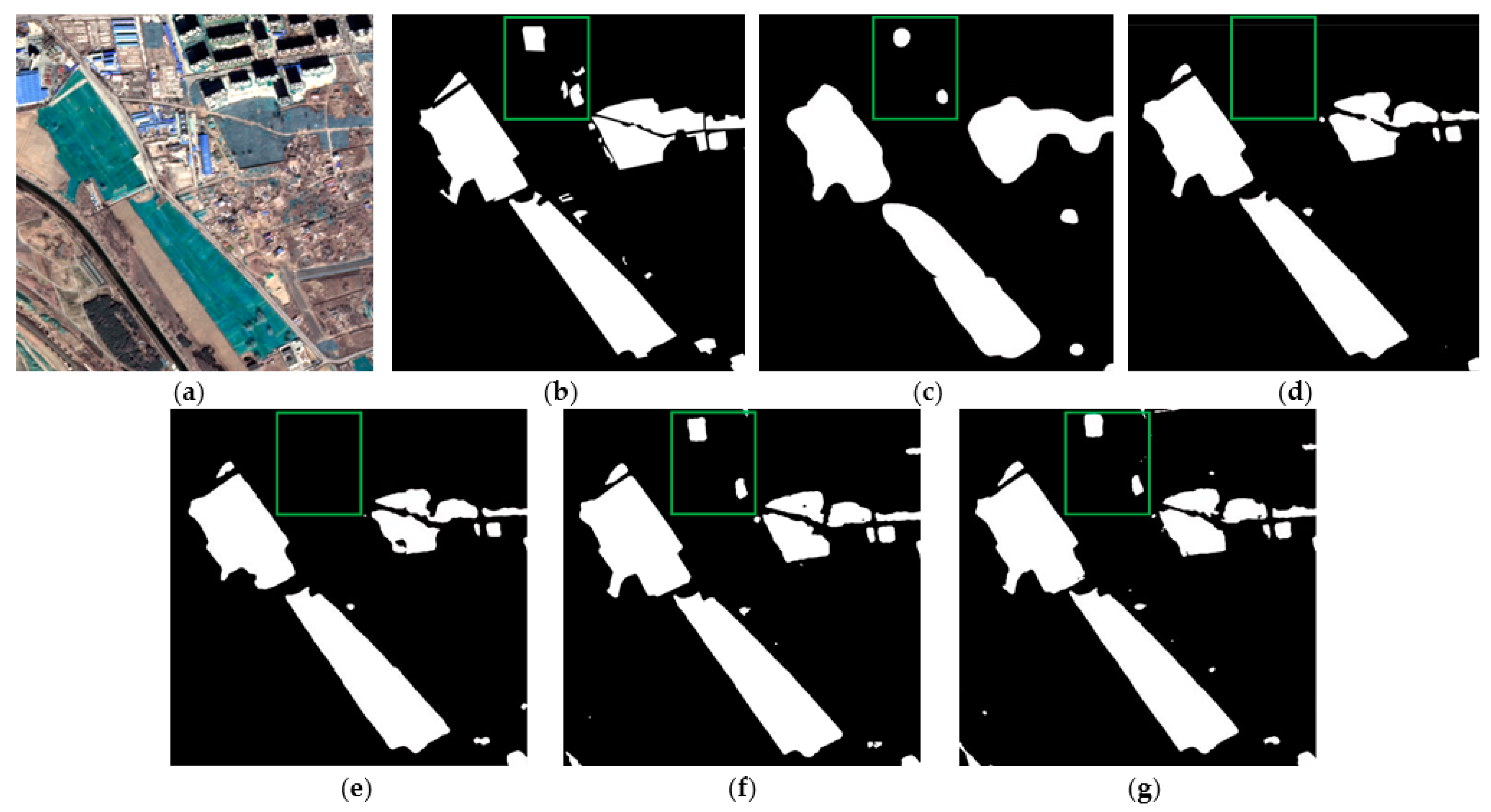

2.2. Architecture of TE-ResUNet

The proposed TE-ResUNet is a segmentation network with an encoder–decoder structure based on ResUNet, a member of the UNet family. Inspired by the residual network, ResUNet replaces each sub-module of UNet with a residually connected block, which allows the network to be deepened to obtain higher-level semantic features with a reduced risk of vanishing gradients. Typically, UNet family models connect the low-level features directly to the high-level features through skip connections to preserve accurate texture features. However, the low-level features extracted by the shallow layers of the network are often of low quality, especially in terms of contrast, resulting in blurred texture details that hinder the extraction and use of low-level information. This, in turn, reduces the accuracy of the segmentation boundary. To make full use of the low-level texture features, a texture-enhanced ResUNet (TE-ResUNet) is proposed for high-speed railway hazard source extraction from high-resolution remote sensing images.

The architecture of TE-ResUNet is shown in Figure 3. A contracting path on the left side performs residually connected convolutions to produce feature maps, and an expanding path on the right side recovers detailed and structural information related to hazard sources via convolution and upsampling modules, so that the whole network has a U-shape. In particular, texture enhancement modules are introduced to enhance the texture of the low-level features and help the expanding path better recover hazard boundaries and small targets.

The orange module D represents the residually connected downsampling module, which includes convolution, atrous convolution, and ReLU layers. The blue module U represents the upsampling module, which includes convolution and upsampling layers, and the yellow module C represents the convolution, activation function, and batch normalization layers. The dark gray arrows represent skip connections. The low-level features at each scale are skip-connected to the higher layers, allowing the model to make full use of them. Because the low-level features extracted from the shallow layers are often of low quality, with low contrast and blurred texture details, this paper introduces the texture enhancement module (purple module TE) to enhance their texture before they are delivered to the deeper layers by skip connections.

The texture enhancement (TE) module is inspired by histogram equalization, a classical method of image quality enhancement [28]. The TE module aims to make histogram equalization learnable. It first encodes the feature into a learnable histogram, during which the input features are quantized into multiple intensity levels, each of which can represent a kind of texture statistic. Then, a graph is built to reconstruct each gray level by propagating the statistical information of all original levels for texture enhancement.

Specifically, the texture enhancement module starts by generating a histogram with the horizontal and vertical axes representing each gray level and its count value, respectively. These two axes are represented as two feature vectors, $G$ and $F$. Histogram equalization aims to reconstruct the levels as $G'$ using the statistical information contained in $F$. Each level $G_n$ is converted to $G'_n$ by Equation (1), where $N$ represents the number of gray levels.
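For reference, classical histogram equalization is commonly written as $G'_n = (N-1)\,\frac{\sum_{i \le n} F_i}{\sum_{i} F_i}$, so each level is remapped in proportion to the cumulative count up to it. The sketch below assumes this textbook form, whose index conventions may differ from Equation (1); the function name and the example histogram are hypothetical.

```python
import numpy as np

def equalize_levels(counts):
    """Map each gray level n to a new level using the cumulative histogram.

    counts[n] is the count F_n of level G_n = n, for n = 0..N-1.
    Assumes the textbook mapping G'_n = (N - 1) * CDF(n).
    """
    counts = np.asarray(counts, dtype=float)
    n_levels = counts.size
    cdf = np.cumsum(counts) / counts.sum()
    return (n_levels - 1) * cdf

# A dark, low-contrast histogram: most pixels sit in the lowest levels.
F = [50, 30, 10, 5, 3, 1, 1, 0]
G_new = equalize_levels(F)
```

In this example the crowded low levels are spread over a wider range (level 0 moves to 3.5 on a 0 to 7 scale), which is the contrast stretch that the TE module learns rather than fixing by hand.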

The structure of the texture enhancement module is shown in Figure 4. The input feature of the TE module has high channel dimensionality. In order to quantize and count this high-dimensional representation, we use the similarity between each feature vector and the average feature as the counted object instead of quantizing each channel separately. The input feature map $A \in \mathbb{R}^{C \times H \times W}$ is converted into the global averaged feature $g \in \mathbb{R}^{C \times 1}$ by global average pooling. Each spatial position $A_{i,j}$ of $A$ has dimension $C \times 1$, and the cosine similarity between each $A_{i,j}$ and the mean $g$ is then calculated to obtain the similarity map $S \in \mathbb{R}^{1 \times H \times W}$, where $S_{i,j}$ can be calculated by Equation (2):

$$S_{i,j} = \frac{g^{\top} A_{i,j}}{\lVert g \rVert_2 \, \lVert A_{i,j} \rVert_2} \tag{2}$$
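The similarity map can be computed in a few lines. This is a minimal NumPy sketch: the function name `similarity_map` and the small `eps` term that guards against division by zero are assumptions, not part of the described module.

```python
import numpy as np

def similarity_map(A, eps=1e-12):
    """Cosine similarity between each spatial vector A[:, i, j] and the
    global average feature g. A has shape (C, H, W); returns (H, W)."""
    g = A.mean(axis=(1, 2))                      # global average pooling, (C,)
    dot = np.einsum("c,chw->hw", g, A)           # g . A_ij at every position
    norms = np.linalg.norm(g) * np.linalg.norm(A, axis=0)
    return dot / (norms + eps)

rng = np.random.default_rng(0)
A = rng.normal(size=(16, 8, 8))
S = similarity_map(A)
```

Reducing every $C$-dimensional vector to a single scalar is what makes the later quantization tractable: only one value per pixel has to be binned, regardless of the channel count.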

$S$ is then reshaped to $1 \times HW$ and quantized with $N$ gray levels $L$, where $L$ is obtained by equally dividing $N$ points between the minimum and maximum values of $S$. The quantization encoding map $E \in \mathbb{R}^{N \times HW}$ is thus obtained. The histogram $C \in \mathbb{R}^{N \times 2}$ is represented by concatenating $E$ and $L$, where $L$ is the horizontal coordinate indicating the quantized gray levels, and $E$ is the vertical coordinate indicating the weight corresponding to each level. The quantization counting map $C$ reflects the relative statistics of the input feature map. To further obtain absolute statistical information, the global average feature $g$ is encoded into $C$ to obtain $D$.
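The quantization step might look like the sketch below. The equally spaced levels follow the description above, but the hard nearest-level (one-hot) encoding and the way the counts are reduced from $E$ are assumptions made for clarity; a learnable module would more likely use a soft, differentiable assignment. The function name `quantize` is hypothetical.

```python
import numpy as np

def quantize(S, n_levels):
    """Quantize a similarity map S (H, W) into n_levels gray levels.

    Returns the levels L (N,), a one-hot encoding E (N, HW), and a
    histogram (N, 2) whose columns are the level value and its
    normalized count.
    """
    s = S.reshape(-1)                                  # 1 x HW
    L = np.linspace(s.min(), s.max(), n_levels)        # N equally spaced levels
    nearest = np.abs(s[None, :] - L[:, None]).argmin(axis=0)
    E = np.zeros((n_levels, s.size))
    E[nearest, np.arange(s.size)] = 1.0                # one level per pixel
    counts = E.sum(axis=1) / s.size                    # relative statistics
    hist = np.stack([L, counts], axis=1)
    return L, E, hist

S = np.array([[0.0, 0.1, 0.5], [0.9, 1.0, 0.45]])
L, E, hist = quantize(S, n_levels=3)
```

Here the three levels are 0, 0.5, and 1, and each receives two of the six pixels, so the normalized counts are all one third.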

Afterwards, following the histogram quantization method, a new quantization level $L'$ needs to be computed from $D$. Each new level should be obtained by perceiving the statistical information from all the original levels, so the set of levels can be treated as a graph. For this purpose, a graph is constructed to propagate the information from all levels, with the statistical characteristics of each quantized level defined as a node. In a traditional histogram quantization algorithm, the adjacency matrix is a manually defined diagonal matrix; here it is extended to a learnable matrix built from two different 1 × 1 convolutional layers, $\phi_1$ and $\phi_2$, with a Softmax operation along the first dimension serving as a nonlinear normalization function. Each node is then updated to obtain the reconstructed quantization level $L'$ by fusing the features of all other nodes, using another 1 × 1 convolutional layer, $\phi_3$.

Subsequently, the reconstructed level $L'$ is assigned to each pixel using the quantization encoding map $E \in \mathbb{R}^{N \times HW}$ to obtain the final output $R$, since $E$ reflects the original quantization level of each pixel. $R$ is then reshaped back to the spatial layout of the input, which gives the final texture-enhanced feature map.
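The graph-based reconstruction might be sketched as follows. The exact equations are not given in this section, so the attention-like form used here, $X = \mathrm{Softmax}(\phi_1(D)^{\top}\phi_2(D))$, $L' = \phi_3(D)\,X$, and $R = L'E$, is an assumption. The function names, tensor sizes, and random matrices standing in for the learned 1 × 1 convolutions are likewise hypothetical (a 1 × 1 convolution over $N$ nodes acts as a per-node linear map, so a matrix product is used).

```python
import numpy as np

def softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)   # stabilize the exponent
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def reconstruct_levels(D, E, W1, W2, W3, out_hw):
    """D: (C_d, N) node features; E: (N, HW) quantization encoding.

    W1, W2, W3 play the roles of phi_1, phi_2, phi_3 (assumed shapes).
    Returns the enhanced map (C_out, H, W) and the adjacency X (N, N).
    """
    X = softmax((W1 @ D).T @ (W2 @ D), axis=0)   # learned adjacency matrix
    L_new = (W3 @ D) @ X                         # each node fuses all nodes
    R = L_new @ E                                # assign new levels to pixels
    return R.reshape(-1, *out_hw), X

rng = np.random.default_rng(0)
N, H, W, C_d, C_k, C_out = 4, 3, 3, 8, 5, 6
D = rng.normal(size=(C_d, N))
E = np.eye(N)[:, rng.integers(0, N, size=H * W)]   # one-hot level per pixel
W1, W2 = rng.normal(size=(C_k, C_d)), rng.normal(size=(C_k, C_d))
W3 = rng.normal(size=(C_out, C_d))
R, X = reconstruct_levels(D, E, W1, W2, W3, (H, W))
```

Because $E$ has one column per pixel, the product with $E$ simply looks up, for every pixel, the reconstructed feature of the level that pixel was quantized to.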

Texture enhancement of features F1 and F2 is performed by TE1 and TE2, respectively. The enhanced features are then concatenated with the high-level features along the channel (depth) dimension, so that the low-level texture details help the network generate more accurate hazard source extraction results. The specific model parameters of TE-ResUNet are shown in Table 2.
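The depth-wise connection of texture-enhanced and high-level features amounts to a channel concatenation, sketched below. The nearest-neighbour upsampling, the function names, and the tensor sizes are assumptions for illustration and are not taken from Table 2.

```python
import numpy as np

def upsample_nearest(x, factor):
    """Nearest-neighbour upsampling of a (C, H, W) feature map."""
    return x.repeat(factor, axis=1).repeat(factor, axis=2)

def skip_concat(low_level, high_level):
    """Concatenate texture-enhanced low-level features with upsampled
    high-level features along the channel (depth) axis."""
    factor = low_level.shape[1] // high_level.shape[1]
    up = upsample_nearest(high_level, factor)
    return np.concatenate([low_level, up], axis=0)

te_out = np.zeros((64, 128, 128))     # hypothetical TE module output
decoder = np.ones((128, 64, 64))      # hypothetical deeper feature map
fused = skip_concat(te_out, decoder)
```

The spatial sizes must match before concatenation, which is why the deeper feature map is upsampled first; the channel counts simply add.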

2.3. Multi-Scale Lovász Loss Function

The hazard source dataset suffers from class imbalance, mainly due to the sparsity of hazard source pixels. This makes pixel-based loss functions, such as the cross-entropy loss, focus training on background pixels that contribute less valid information, resulting in low training efficiency and model degradation. The Lovász loss [29] is a metric-based measure that focuses on the metric over the entire image instead of on single pixels. It therefore does not require a balanced sample distribution, and it works better for binary classification problems in which the proportion of foreground samples is much smaller than that of the background.

Based on the above analysis, we propose to address the class-imbalance problem by applying the Lovász loss, a smooth extension of the Jaccard loss, which is in turn derived from the Jaccard index. The expression for the Jaccard loss is given in Equation (6), where $y^*$ represents the predicted result of the network model and the other argument represents the ground truth. As the Jaccard loss function is only applicable to the discrete case, its Lovász extension transforms the input space from discrete to continuous while keeping the output value equal to that of the original function on the discrete domain. In this paper, the Lovász loss function is denoted as $\Delta_{JL}$.
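For binary masks, the Jaccard loss is commonly written as $1 - |P \cap G| / |P \cup G|$, with $P$ the predicted foreground set and $G$ the ground-truth foreground set (these two symbols are introduced here for illustration only). The sketch below pairs that discrete loss with a NumPy version of the widely used sort-and-accumulate formulation of the binary Lovász hinge. It is an illustrative assumption about how $\Delta_{JL}$ could be computed, not the authors' implementation, and all function names are hypothetical.

```python
import numpy as np

def jaccard_loss(pred, gt):
    """Discrete Jaccard loss 1 - |P & G| / |P | G| for binary masks."""
    pred, gt = np.asarray(pred, bool), np.asarray(gt, bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        return 0.0  # both masks empty: treated as a perfect match
    return 1.0 - np.logical_and(pred, gt).sum() / union

def lovasz_grad(gt_sorted):
    """Gradient of the Lovasz extension w.r.t. errors sorted descending."""
    gts = gt_sorted.sum()
    intersection = gts - np.cumsum(gt_sorted)
    union = gts + np.cumsum(1.0 - gt_sorted)
    jaccard = 1.0 - intersection / union
    jaccard[1:] = jaccard[1:] - jaccard[:-1]
    return jaccard

def lovasz_hinge(logits, labels):
    """Binary Lovasz hinge loss on flattened logits and {0, 1} labels."""
    logits = np.asarray(logits, float).ravel()
    labels = np.asarray(labels, float).ravel()
    errors = 1.0 - logits * (2.0 * labels - 1.0)   # hinge margin violations
    order = np.argsort(-errors, kind="stable")     # largest error first
    grad = lovasz_grad(labels[order])
    return float(np.dot(np.maximum(errors[order], 0.0), grad))

labels = [1, 0, 0, 0]
discrete = jaccard_loss([1, 1, 0, 0], labels)      # one false positive
surrogate = lovasz_hinge([1, 0, -1, -1], labels)   # same mistake, as margins
confident = lovasz_hinge([5, -5, -5, -5], labels)  # all pixels correct
```

The logits in `surrogate` are chosen so that the hinge errors are exactly 0 for correct pixels and 1 for the misclassified one; the surrogate then reproduces the discrete Jaccard loss, which illustrates the property that the extension agrees with the original function on the discrete domain.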

To steer network optimization toward a reliable decrease in loss, and to strengthen the supervision of parameter learning in the two texture enhancement modules, we computed the loss function on feature maps at three scales. In addition to the loss between the final prediction and the labels, we performed channel dimensionality reduction on F8 and on the output of U4, and calculated the Lovász loss of each against the downsampled labels. The final loss is the weighted sum of these three components, in which $y^*_{D2}$ and $y^*_{D4}$ denote the original labels downsampled by factors of 2 and 4, respectively, and $\lambda_1$, $\lambda_2$, and $\lambda_3$ are the weights of the three scale loss functions.
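The weighted three-scale loss can be sketched as below. Several details are assumptions: labels are downsampled by simple striding, the side outputs are passed in generically as half- and quarter-resolution logits (no mapping of U4 or F8 to a particular scale is implied), and the weight values in the usage example are hypothetical placeholders rather than the values used in the paper.

```python
import numpy as np

def lovasz_hinge(logits, labels):
    """Binary Lovasz hinge on flattened logits and {0, 1} labels."""
    logits = np.asarray(logits, float).ravel()
    labels = np.asarray(labels, float).ravel()
    errors = 1.0 - logits * (2.0 * labels - 1.0)
    order = np.argsort(-errors, kind="stable")
    gt = labels[order]
    jaccard = 1.0 - (gt.sum() - np.cumsum(gt)) / (gt.sum() + np.cumsum(1.0 - gt))
    grad = np.diff(jaccard, prepend=0.0)
    return float(np.dot(np.maximum(errors[order], 0.0), grad))

def downsample_labels(labels, factor):
    """Downsample a binary label map by striding (an assumed scheme)."""
    return labels[::factor, ::factor]

def multi_scale_loss(logits_full, logits_half, logits_quarter, labels, weights):
    """Weighted sum of Lovasz losses at full, 1/2 and 1/4 resolution."""
    lam1, lam2, lam3 = weights
    return (lam1 * lovasz_hinge(logits_full, labels)
            + lam2 * lovasz_hinge(logits_half, downsample_labels(labels, 2))
            + lam3 * lovasz_hinge(logits_quarter, downsample_labels(labels, 4)))

labels = np.zeros((8, 8))
labels[2:6, 2:6] = 1.0
to_logits = lambda y: 3.0 * (2.0 * y - 1.0)        # confident, correct logits
weights = (1.0, 0.5, 0.25)                         # hypothetical lambdas
args = [to_logits(downsample_labels(labels, f)) for f in (1, 2, 4)]
good = multi_scale_loss(*args, labels, weights)
bad = multi_scale_loss(*[-a for a in args], labels, weights)
```

Supervising the intermediate outputs gives the texture enhancement modules a shorter gradient path than the final prediction alone would provide, which matches the stated motivation for the multi-scale design.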