1. Introduction

Human activity recognition (HAR) aims at identifying an individual’s activities of daily living (ADLs) from data captured using various modalities, e.g., time-series data from motion sensors, or color and/or depth information from video cameras. Lately, HAR-based systems have gained the attention of the research community due to their use in several applications, such as healthcare monitoring, surveillance systems, gaming, and anti-terrorism and anti-crime security. The availability of low-cost yet highly accurate motion sensors in mobile gadgets and wearable devices, e.g., smartwatches, has also played a vital role in the rapid development of these applications. The main objective of such a system is to automatically detect and recognize human daily life activities from the captured data by creating a predictive model that allows the classification of an individual’s behavior [1]. ADL refers to the tasks or activities that people undertake in their daily lives [2]. An ADL is usually a long-term activity that consists of a sequence of small actions, as depicted in Figure 1. We can describe the long-term activities as composite activities, e.g., cooking, playing, etc., whereas the small actions in the sequence are known as atomic activities, such as raising an arm or a leg [3].

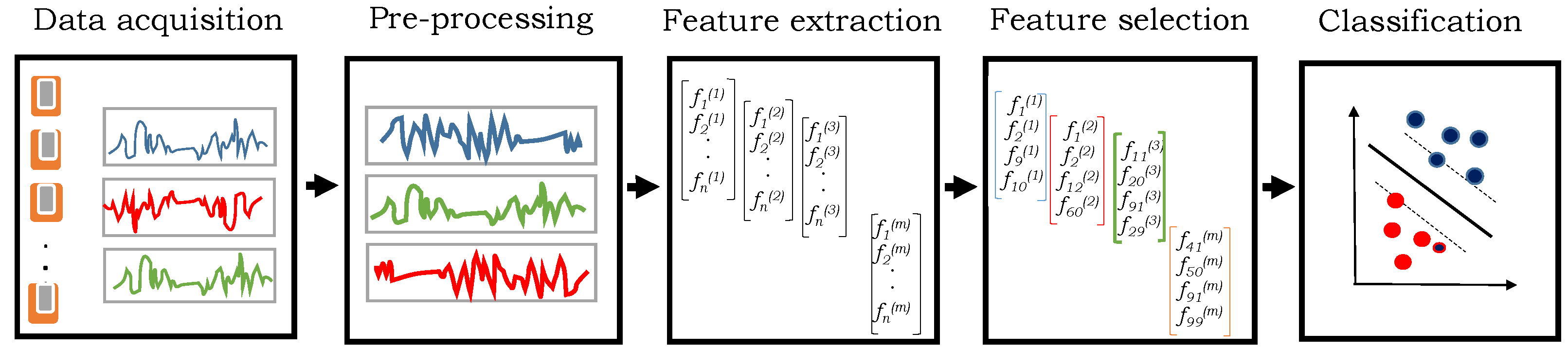

HAR systems usually follow a standard set of actions in a sequence which includes data capturing, pre-processing, feature extraction, the selection of most discriminant features, and their classification into the respective classes (i.e., activities) [

4] as depicted in

Figure 2. The first step is the data collection using the setup of wearable sensors, force plates, cameras, etc. The selection of such a sensor is very much dependent on the nature of ADL to be recognized. In many cases, it is inappropriate to use raw sensor data directly for activity recognition because it may contain noise or irrelevant components. Therefore, data pre-processing techniques, such as normalization, recovery of missing data, unit conversion, etc., are applied in the second step to make the raw data suitable for further analysis. Third, the high-level features are extracted on this pre-processed data based on the expert’s knowledge in the considered application domain. Usually, several features are extracted and the most important of them are selected such that they retain the maximum possible discriminatory information. This process is known as feature selection which not only reduces the dimensions but also reduces the need for storage and computational cost. Finally, the features are recognized into respective activities using the classifier which provides the separation between the features of different activities in the feature space. It is worth mentioning that the performance of the HAR system is very much dependent on every step of the sequence.

Numerous researchers have proposed handcrafted features on visual [5] and time-series data [6] to detect and recognize human activities. However, there is no guarantee that handcrafted features work well in all scenarios. Many factors, such as the nature of the input data and prior knowledge of the application domain, play an important role in extracting the optimal features. Therefore, researchers are continuously exploring more systematic ways to obtain the optimal features. Lately, deep learning-based HAR systems, e.g., References [7,8], have also been explored to extract features automatically from the raw data of wearable sensors. These systems exploit Artificial Neural Networks (ANNs), which consist of multiple artificial neurons arranged and connected in several layers such that the output of the first layer is forwarded as an input to the second layer, and so forth. That is, each layer encodes the lower-level descriptors of the previous layer into a more abstract representation. Hence, the last layer of the deep ANN provides highly abstract features from the input data. Though deep learning-based techniques have demonstrated excellent results on various benchmark datasets, it is quite difficult to rigorously assess the performance of feature learning methods. Moreover, despite their good performance, they need a large amount of training data to tune the hyper-parameters. The lack of knowledge about the implementation of optimal features [4,9,10], the selection of the relevant features that represent the ongoing activity [11,12], and the parameters of the classification techniques [13,14] makes the whole process much more complicated.

This paper presents a novel two-level hierarchical method to recognize human activities using a set of wearable sensors. Since a human ADL consists of several repetitive short series of actions, it is quite difficult to directly use the sensory data for activity recognition because two or more sequences of the same activity may differ considerably. However, a similarity can be observed in the temporal occurrence of the atomic actions. Therefore, the objective of this research is to analyze the recognition score of every atomic action to represent a composite activity. To solve this problem, we propose a two-level hierarchical model which detects atomic activities at the first level using raw sensory data obtained from multiple wearable sensors; the composite activities are then identified at the second level using the recognition scores of the atomic activities. In particular, the atomic activities are detected from the original sensory data and their recognition scores are obtained. Later, features are extracted from these atomic scores to recognize the composite activities. The contribution of this paper is two-fold. First, we propose two different methods for feature extraction using atomic scores: handcrafted features, and features obtained using the subspace pooling technique. Second, several experiments are performed to analyze the impact of different hyper-parameters during the computation of feature descriptors, the selection of optimal features that represent the composite activities, and the evaluation of different classification algorithms. To evaluate the performance of the proposed algorithm, we used the CogAge dataset [15], which contains the data of 7 composite activities performed by 6 different subjects in different time intervals using three wearable devices: a smartphone, a smartwatch, and smart glasses. Each of the composite activities can be represented using a combination of 61 atomic activities. We considered each atomic activity as a feature; hence, a feature vector of 61 dimensions is used to represent the composite activity. The recognition results of the proposed technique and their comparison with existing state-of-the-art techniques confirm its effectiveness.

The rest of this paper is organized as follows: a brief review of the literature on human activity recognition is presented in Section 2. An overview of the proposed method is given in Section 3. The proposed activity recognition algorithm is presented in Section 4. The experimental evaluation through different qualitative and quantitative tools is carried out in Section 5. The conclusion is drawn in Section 6.

2. Background and Literature Review

Human activity recognition has gained the interest of the research community in the last two decades due to its many applications in surveillance systems, rehabilitation facilities, gaming, and others. This section summarizes the existing work in the field of human activity recognition using sensor-based time-series data.

A composite activity comprises several atomic actions. Numerous existing techniques focused on identifying the simple and basic human actions, whereas recognizing the composite activities remains an active problem. The applications in daily life require the identification of high-level human activities which are further composed of smaller atomic activities [

16]. This section summarizes the existing work on hierarchical activity recognition techniques that encode the temporal patterns of composite activities. The techniques proposed in References [

17,

18] have concluded that the hierarchical recognition models are effective to recognize human activities. The authors in Reference [

19] presented a method to recognize the cooking-related activities in visual data using pose-based, hand-centric and holistic approaches. In the first step, the hand positions and their movements are detected and then the shape of the knife and vegetable is determined. Lastly, the composite activities are recognized using fine-grained activities. The technique in Reference [

20] proposed a hierarchical discriminative model to analyze the composite activities. They employed a predefined sequence of atomic actions and reported improvements in recognition accuracy when the composite activities are recognized using a hierarchical approach. In Reference [

21], a hierarchical model is proposed to recognize the human activities using accelerometer sensor data of smartphone. The technique proposed in Reference [

22] employed a two-level classification method to recognize 21 different composite activities using a set of wearable devices on multiple positions of the human body. The authors in Reference [

23] presented an approach to detect the primitive actions in the recorded data of composite activities which were used to recognize the ADLs using the limited amount of training data. The technique proposed in Reference [

24] employed a Deep Belief Network to construct the mid-level features which were used to recognize the composite activities. In comparison with the aforementioned techniques, we propose a more generic and hierarchical technique to recognize the composite activities using the score of underlying atomic activities. In the first step, the score of atomic activities is computed directly from the input data. Later, the scores of atomic activities are used to recognize the composite activities. The atomic activities are defined manually to make our hierarchical approach more general. Though this paper mainly emphasizes the recognition of 7 composite activities (available in the selected dataset), we, however, believe that many other composite activities can also be recognized by including more atomic activities describing the variational movements of the human body, which reflects the generality of the proposed technique.

In Reference [

25], the authors reviewed the existing literature on human activities in the aspect that how they are being used in different applications. They concluded that activity recognition in visual data is not much effective due to the problems of clutter background, partial obstruction, changing of scale, illumination, etc. Lara et al. [

6] surveyed the state-of-the-art techniques to recognize human activity using wearable sensors. They explained that obtaining the appropriate information on human actions and behaviors is very important for their recognition, and it can be efficiently achieved using sensory data. The authors in References [

26,

27,

28,

29] have also assessed the use of wearable sensors for human activity recognition. Shirahama et al. [

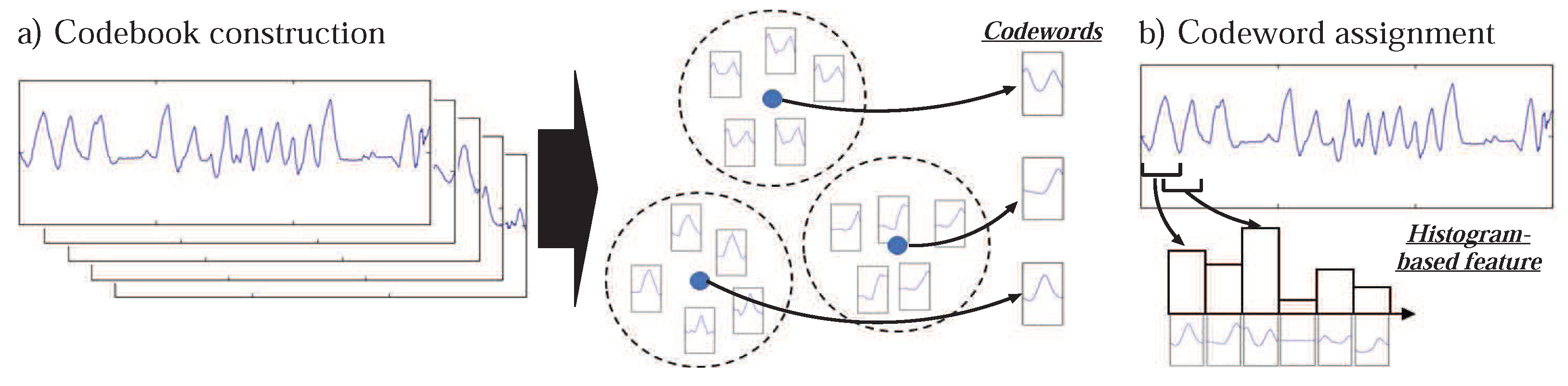

30] proposed that the current spread of mobile devices with multiple sensors may help in the recognition and monitoring of ADL. They employed a codebook-based approach to get the high-level representation of the input data which was obtained from different sensors of the smartphone. The technique proposed in Reference [

31] recognizes the human activities using the sensory data which is obtained from 2-axes of the smartphone accelerometer sensor. This research also concluded the effectiveness and contribution of each axis of the accelerometer in the recognition of human activities. In comparison with the aforementioned techniques, the proposed technique uses multimodal sensory data which is obtained from three unobtrusive wearable devices. We show that the fusion of multimodal sensory data provides higher accuracy to recognize the ADL.

In machine learning algorithms, the selection of optimal features plays a vital role to obtain excellent recognition results. Furthermore, in the case of high-dimensional data, the reduction of dimensions may not only help to improve the recognition accuracies but also to reduce the memory requirement and computational cost [

32]. The dimensionality reduction usually can be achieved using two techniques: feature selection and feature extraction [

33,

34]. The authors in Reference [

35] proposed a hybrid approach by employing both feature selection and feature extraction techniques for dimension reduction. Lately, a few authors, e.g., References [

36,

37], proposed feature extraction methods using the subspace pooling technique. The technique proposed in Reference [

36] employed singular value decomposition (SVD) for subspace pooling to obtain the optimal set of features from high dimensional data. The authors in Reference [

38] extracted a set of features using SVD and the principal singular vectors to encode the feature representation of input data. Zhang et al. [

37] also employed SVD for subspace pooling technique in their work. Guyon et al. [

39] have discussed multiple methods of feature selection in their research and concluded that clustering and matrix factorization performed best when the dimensions became very large. Similarly, the techniques proposed in References [

40,

41,

42] have also employed SVD for optimal features selection. Song et al. [

43] exploited principal component analysis (PCA) to select the most prominent and important features. They concluded that the recognition accuracy remained the same even after reducing the dimensions by selecting a few components. The authors in References [

44,

45] have also used PCA for optimal feature selection.

The researchers have also assessed the performance of different classification algorithms to recognize the ADL. For example, the authors in Reference [

46] employed several machine learning algorithms on multivariate data to recognize human activities. They assessed the performance of Random Forest (RF), kNN, Neural Network, Logistic Regression, Stochastic Gradient Descent, and Naïve Bayes and concluded that the Neural Network and Logistic Regression techniques provide better recognition results. In Reference [14], the authors assessed different kernels of SVM to recognize ADLs which were recorded using inertial sensors. The authors in Reference [

13] concluded that the selection of kernel function in SVM along with the optimal values of hyperparameters plays a critical role concerning the data. The authors in Reference [

47] have also used SVM as a classification tool in their research. Yazdansepas et al. [

48] proposed a method to recognize the human activities of 77 subjects. They assessed the performance of different classification algorithms and concluded that the random forest algorithm provides the best results. The hidden Markov model has been widely used for activity recognition [

49,

50,

51,

52]. It is a sequential probabilistic model where a particular discrete random variable describes the state of the process. The technique proposed in Reference [

22] employed Conditional Random Fields (CRFs) to encode the sequential characteristics of composite activity. Deep learning-based techniques, e.g., References [

7,

8], have also been employed to recognize human activities. Despite their good performance, they need a large amount of training data to tune the hyper-parameters [

53]. Ensemble classifiers (i.e., the combined predictions of several models) have also been employed to recognize ADLs. For example, the authors in Reference [

54] presented that ensemble classifiers gave more accurate results than any other single classifier. Mishra et al. [

55] explained that the increase in variety and size of data affect the performance of a classifier. They concluded that estimations of more than one classifier (i.e., ensemble classification) are required to improve the performance. Similarly, the authors in Reference [

56] employed an ensemble classifier using a voting technique for pattern classification. They formed a few sets of base classifiers which were trained with different parameters, and combined their predictions using a weighted voting technique to obtain a final prediction. Their research showed that the ensemble classifier is a promising method that may become popular in other scientific fields. There are many techniques for ensemble classification, and the voting-based technique is an efficient one.

In comparison with existing techniques, this paper presents a generic activity recognition method using the subspace pooling technique on the scores of atomic activities which were computed from the original sensory data. In particular, two different types of features are computed from the atomic scores, and their performance is assessed using four different classifiers with different parameters. The performance evaluation and its comparison with existing state-of-the-art techniques confirm the effectiveness of the proposed method.

5. Experiments and Results

We used the CogAge dataset [

15], which contains both atomic and composite activities. The data is recorded using three unobtrusive wearable devices: LG G5 smartphone [

84], Huawei smart watch [

85] and JINS MEME glasses [

86]. The smartphone was placed in the front left pocket of the subject’s jeans, and it provides 5 different sensory modalities: linear accelerometer, gyroscope, gravity, and 3-axis accelerometer (all sampled at 200 Hz), and magnetometer (100 Hz). These sensory modalities are used to record body movement. Specifically, the linear accelerometer provides a three-dimensional sequence that specifies acceleration forces (excluding gravity) on the three axes. The gyroscope encodes three-dimensional angular velocities. The magnetometer provides a three-dimensional sequence that describes the intensities of the earth’s magnetic field along the three axes, which is quite useful to determine the smartphone’s orientation. The gravity sensing modality also generates a three-dimensional sequence that encodes the gravity forces on the three axes of the smartphone. The 3-axis accelerometer generates a three-dimensional sequence that specifies acceleration forces (including gravity) acting on the smartphone. Second, the smartwatch was placed on the subject’s left arm, and it provides two different sensory modalities: gyroscope and 3-axis accelerometer (both sampled at 100 Hz). These sensing modalities generate three-dimensional sequences of angular velocities and acceleration, respectively, on the watch’s x, y, and z axes. These modalities are used to encode hand movements. Finally, the smart glasses are worn by the subject and generate three-dimensional accelerometer data (sampled at 20 Hz) on the glasses’ x, y, and z axes, which are used to record head movement. Thus, the whole setup of activity encoding used eight sensor modalities across these wearable devices. The movement data of the smart glasses and the watch is initially sent to the smartphone via a Bluetooth connection, and later all the recorded data is sent to a home gateway using a Wi-Fi connection. The entire recording process is depicted in Figure 5.

The CogAge dataset contains 9700 instances of 61 different atomic activities obtained from 8 subjects. Of these 8 subjects, 5 also contributed to the collection of the 7 composite activities using the aforementioned three wearable devices. One additional subject contributed only to the collection of composite activities. Therefore, the dataset contains the composite activities of 6 subjects. An Android application on the smartphone, which connects to the smartwatch and glasses via Bluetooth, is used to record the composite activities. Thus, the recording setup is convenient and natural for the subject, who can move freely to the kitchen, washroom, or living room with these devices to perform daily life activities. More than 1000 instances of composite activities were collected, and missing data was removed during the pre-processing phase. Finally, the dataset comprises 471 instances of left-hand activities (i.e., the activities are mainly performed using the left hand only) and 281 instances of both-hand activities (i.e., the composite activities are performed using both hands). Therefore, the dataset contains a total of 752 instances of composite activities, and their description is outlined in Table 2.

The participants in the data collection come from different countries with diverse cultural backgrounds. Thus, their ways of performing the same activity (e.g., cooking) are also quite different. This variability in performing the same activity makes the dataset more complex and a challenging benchmark for HAR systems to validate the generality of their methods, despite the low number of subjects. The data for composite activities was collected for the training and testing phases separately in different time intervals. The length of an activity is not constant; it varies from 45 s to 5 min because some activities take a long time to complete, such as preparing food, whereas others take a shorter time, for example, handling medications. The atomic activity recognition algorithm [15] produced atomic scores after each time interval of approximately 2.5 s. We divided each composite activity instance into windows of 45 s, i.e., 18 atomic score vectors per window. The longer instances were divided into multiple windows with a stride size of 6. The data of composite activities are divided into two parts: left-hand and both-hand activities data. Since the recording of each activity comprises a different number of instances, they are empirically reduced to a fixed number.
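The windowing step can be illustrated with a minimal sketch. It assumes that the stride of 6 is counted in atomic score vectors (roughly 15 s), which the text does not state explicitly; the function name `sliding_windows` and the toy instance are hypothetical.

```python
from typing import List, Sequence


def sliding_windows(scores: Sequence[Sequence[float]],
                    window: int = 18,
                    stride: int = 6) -> List[List[Sequence[float]]]:
    """Split one composite-activity instance into fixed-length windows.

    scores : one atomic score vector (61 values) per ~2.5 s step.
    window : score vectors per window (18 x 2.5 s = 45 s).
    stride : step between window starts, in score vectors (assumed unit).
    """
    last_start = len(scores) - window
    return [list(scores[start:start + window])
            for start in range(0, last_start + 1, stride)]


# Toy instance: 60 s of activity, i.e., 24 score vectors of 61 dimensions.
instance = [[0.0] * 61 for _ in range(24)]
windows = sliding_windows(instance)
print(len(windows), len(windows[0]), len(windows[0][0]))  # 2 18 61
```

An instance of exactly 45 s yields a single window, and longer instances yield overlapping windows whose starts are 6 score vectors apart.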

5.1. Experimental Setting

We performed three experiments each with three different experimental settings. In each experiment, the dataset is divided into training and testing sets differently. The experiments are performed separately on the activity data recorded in the left-hand setting (i.e., the smartwatch was placed on the subject’s left arm) and in the both-hand setting (i.e., the smartwatch was placed on either the subject’s left or right arm). The experimental settings are outlined in the following:

k-fold cross-validation (CV): The data are split into training and testing sets on the basis of k folds. We set the value of k = 3.

Hold-out cross-validation: We used the data of 3 subjects for training and the data of the remaining 3 subjects for testing purposes, iteratively.

Leave-one-out cross-validation: The data of 5 subjects are used for training, and the data of the remaining 1 subject is used for testing purposes, iteratively.
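The two subject-wise settings can be sketched as follows. This is an illustration only: the subject identifiers are hypothetical, and enumerating every possible 3/3 partition for the hold-out setting is an assumption, since the text only states that the splits are formed iteratively.

```python
from itertools import combinations
from typing import Iterator, List, Tuple

Split = Tuple[List[str], List[str]]  # (training subjects, testing subjects)


def leave_one_subject_out(subjects: List[str]) -> Iterator[Split]:
    """Train on all subjects but one; test on the held-out subject."""
    for held_out in subjects:
        yield [s for s in subjects if s != held_out], [held_out]


def hold_out_splits(subjects: List[str], n_train: int = 3) -> Iterator[Split]:
    """Train on n_train subjects; test on the remaining ones.

    Every n_train-subset is enumerated here (an assumption).
    """
    for train in combinations(subjects, n_train):
        yield list(train), [s for s in subjects if s not in train]


subjects = ["S1", "S2", "S3", "S4", "S5", "S6"]  # hypothetical subject IDs
print(len(list(leave_one_subject_out(subjects))),
      len(list(hold_out_splits(subjects))))  # 6 20
```

Splitting by subject rather than by instance keeps all recordings of a person on one side of the split, so the test accuracy reflects performance on unseen subjects.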

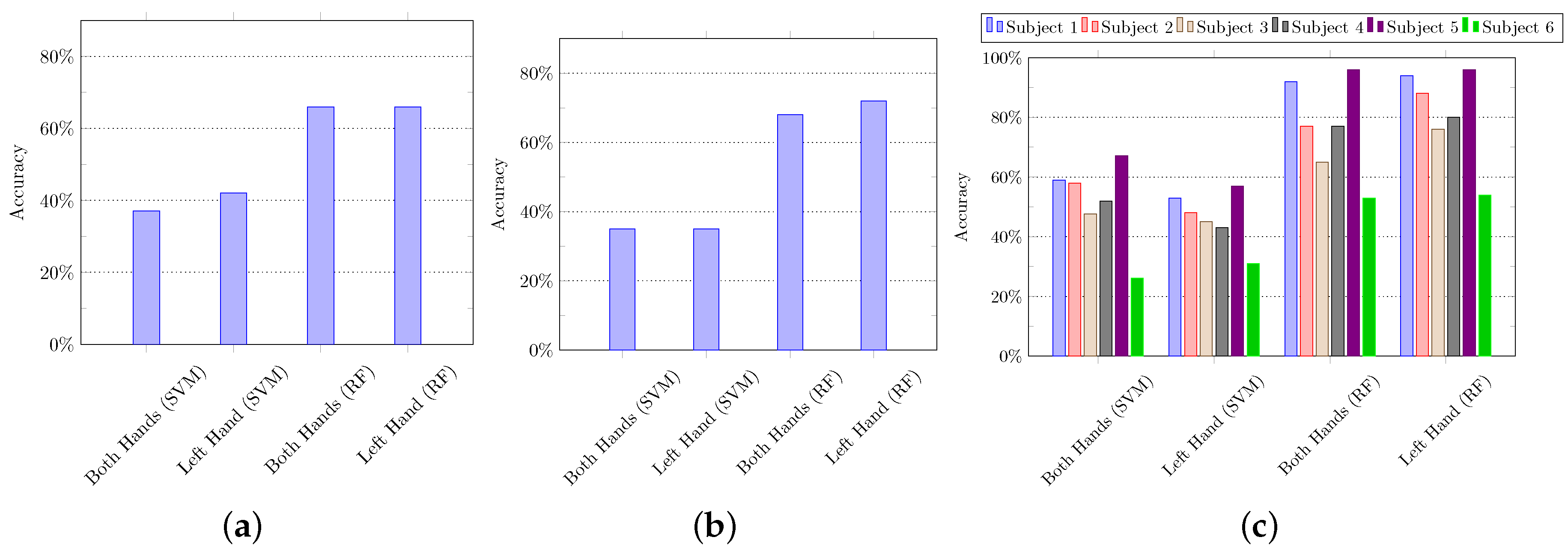

5.1.1. Using Handcrafted Features

We computed 18 features on input data (i.e., atomic scores) as described in

Section 4.2.1. These 18 features were computed against every column of features set of a single activity, i.e., 18 × total number of columns, and they are concatenated in a single row. Since the input data is 61-dimensional, the dimension of the handcrafted feature is

(i.e., 1 × 1098). We used SVM and random forest (RF) to evaluate these computed features, and their recognition accuracies are summarized in

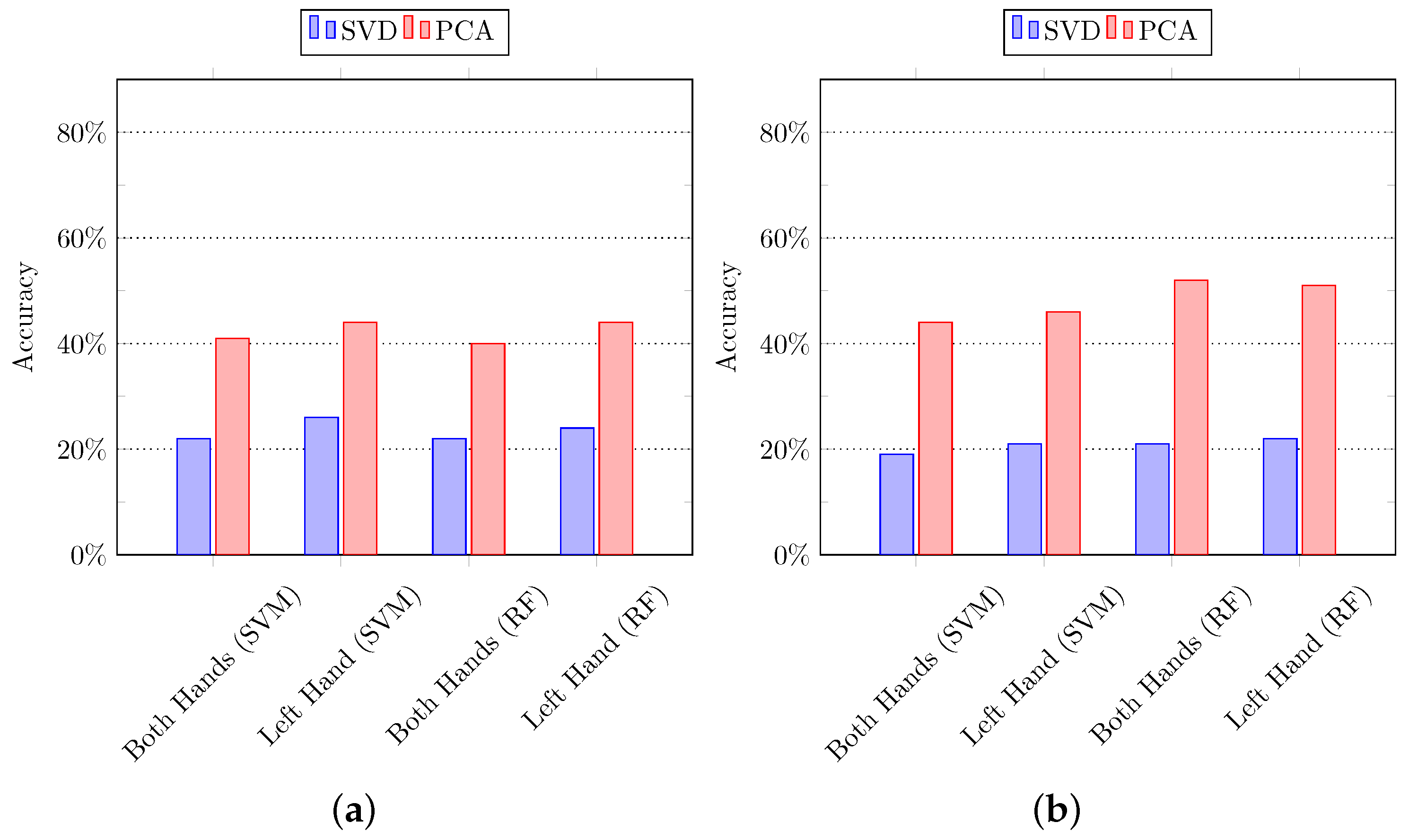

Figure 6.
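The per-column feature construction can be sketched as below. The four statistics are placeholders chosen for illustration; the actual 18 features are those defined in Section 4.2.1, and the concatenation order (all statistics of column 1, then column 2, and so on) is an assumption.

```python
import statistics
from typing import Callable, List, Sequence

# Placeholder statistics (NOT the paper's 18 features, see Section 4.2.1).
STATS: List[Callable[[Sequence[float]], float]] = [
    statistics.fmean, statistics.pstdev, min, max,
]


def handcrafted_vector(window: Sequence[Sequence[float]],
                       stats: Sequence[Callable] = STATS) -> List[float]:
    """Apply every statistic to every column and concatenate into one row.

    window : 18 atomic score vectors, each with 61 values.
    Returns a vector of length len(stats) * number_of_columns.
    """
    columns = list(zip(*window))  # 61 columns of 18 values each
    return [stat(col) for col in columns for stat in stats]


# Toy window: 18 time steps x 61 atomic scores.
window = [[float(t + a) for a in range(61)] for t in range(18)]
print(len(handcrafted_vector(window)))  # 244 (4 statistics x 61 columns)
```

With 18 statistics instead of the 4 placeholders, the same function yields the 18 × 61 = 1098 values stated in the text.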

5.1.2. Using Subspace Pooling Technique-Based Features

In the second set of experiments, the subspace pooling-based techniques are applied to the input data to project it into new dimensions to get the more robust representation of data in subspace [

67]. In particular, we applied SVD and PCA on the input data, and their full-length features are used to recognize the composite activities using SVM and random forest algorithms. It is important to mention that all the features in new dimensions are used in the classification process.

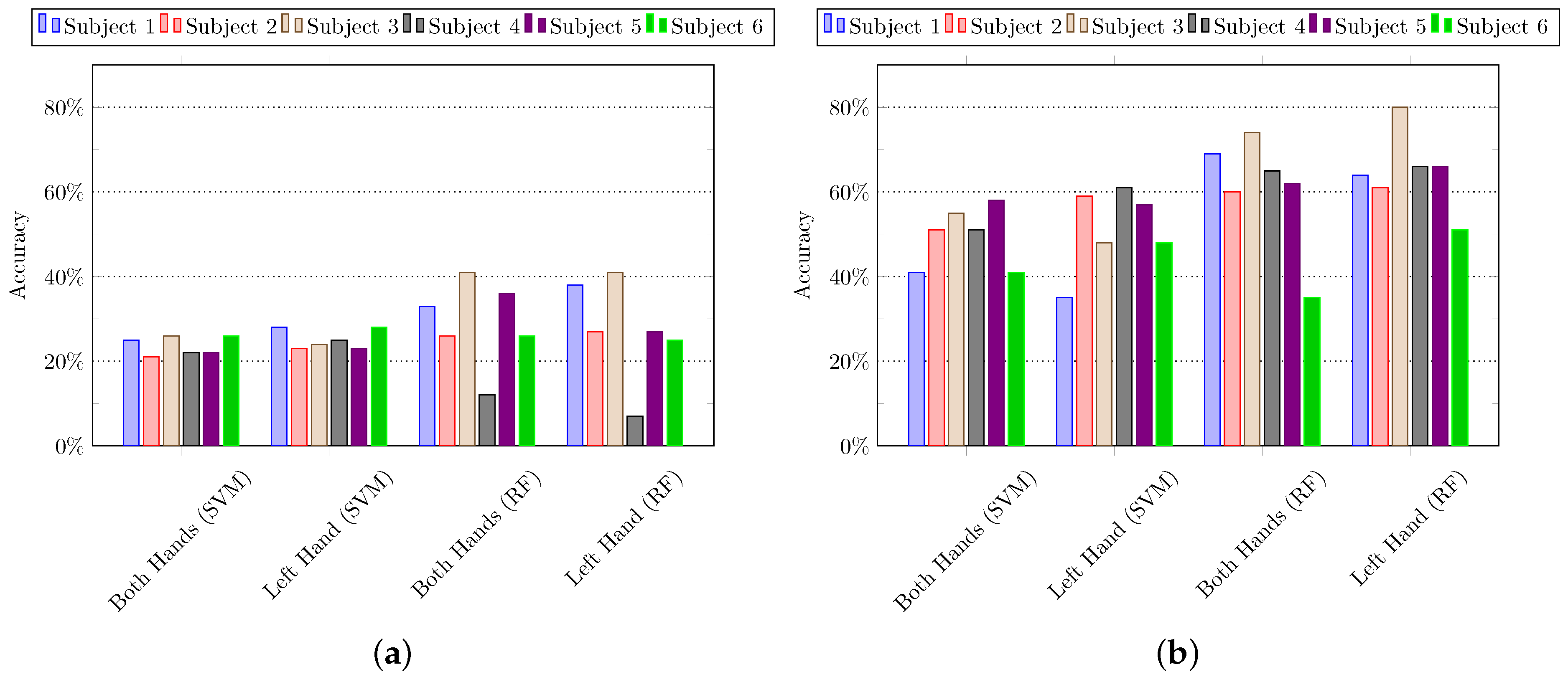

Figure 7 summarizes the results of experiments using k-fold cross-validation and hold-out cross-validation, whereas the results of leave-one-out cross-validation are summarized in

Figure 8. It can be observed that the features extracted by using PCA performed quite well as compared to SVD.

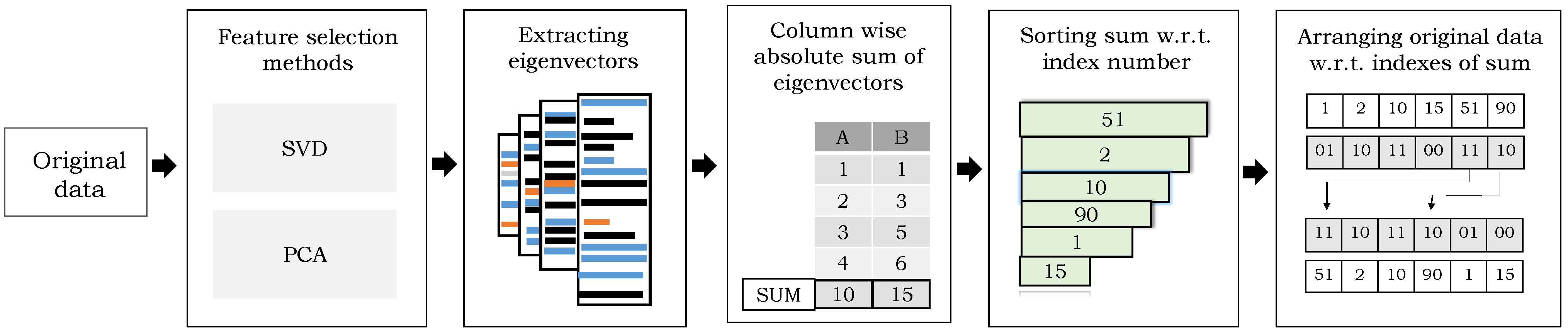

5.1.3. Using Optimal Feature Selection

We also assess the effectiveness of the optimal feature selection method on the data extracted using subspace pooling techniques. The features are selected based on the variance of the eigenvectors (i.e., eigenvalues). Since there were 18 sequences in each activity of the original data and the dimension of each row is 61, they are concatenated into a 1-dimensional vector, i.e., 1 × 1098. The data of all the activities are arranged and the following steps are performed:

First, we applied SVD and PCA separately and the matrix of eigenvectors (1098-dimensional) is extracted.

Second, the sum of absolute values of every column of eigenvectors matrix is calculated, arranged in descending order with respect to its index number, and stored in a separate matrix.

Third, original combined data was arranged with respect to the sorted sum of absolute eigenvectors.

Fourth, the dimensions were reduced by iteratively selecting the small sets of features keeping in view the variance of eigenvectors.

Finally, the selected set of features were evaluated using SVM and RF.
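The ranking and selection steps above can be sketched in a few lines. The sketch assumes that the eigenvector matrix has already been computed by an SVD or PCA routine and that column j of that matrix corresponds to feature j of the data; both function names and the toy matrices are hypothetical.

```python
from typing import List, Sequence


def rank_columns(eigvecs: Sequence[Sequence[float]]) -> List[int]:
    """Column indices sorted by the sum of absolute column entries, descending."""
    n_cols = len(eigvecs[0])
    sums = [sum(abs(row[j]) for row in eigvecs) for j in range(n_cols)]
    return sorted(range(n_cols), key=lambda j: sums[j], reverse=True)


def select_top_k(data: Sequence[Sequence[float]],
                 order: Sequence[int], k: int) -> List[List[float]]:
    """Rearrange the data columns by rank and keep the first k of them."""
    keep = list(order[:k])
    return [[row[j] for j in keep] for row in data]


# Toy example with 3 features instead of 1098.
eigvecs = [[0.1, -0.9, 0.3],
           [0.2, 0.4, -0.5],
           [-0.1, 0.2, 0.6]]
data = [[1.0, 2.0, 3.0],
        [4.0, 5.0, 6.0]]

order = rank_columns(eigvecs)
print(order)                         # [1, 2, 0]
print(select_top_k(data, order, 2))  # [[2.0, 3.0], [5.0, 6.0]]
```

Increasing `k` step by step and re-evaluating the classifier on each reduced set corresponds to the iterative selection in the fourth step.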

Similar to the experiments in the earlier categories, k-fold cross-validation is first employed to split the data into training and testing sets, and the optimal features are classified using SVM and random forest. Table 3 shows the summarized results of composite activity recognition using k-fold cross-validation. In the second set of experiments, the hold-out cross-validation technique is employed: the activity data of 3 subjects are used in the training set, whereas the activity data of the remaining 3 subjects are used in the testing set. Both training and testing sets are arranged according to the sorted absolute sum with their corresponding labels, and the selected features are classified using SVM and RF. Table 4 shows the summarized results of composite activity recognition using hold-out cross-validation. Similarly, the results of leave-one-out cross-validation are summarized in Table 5.

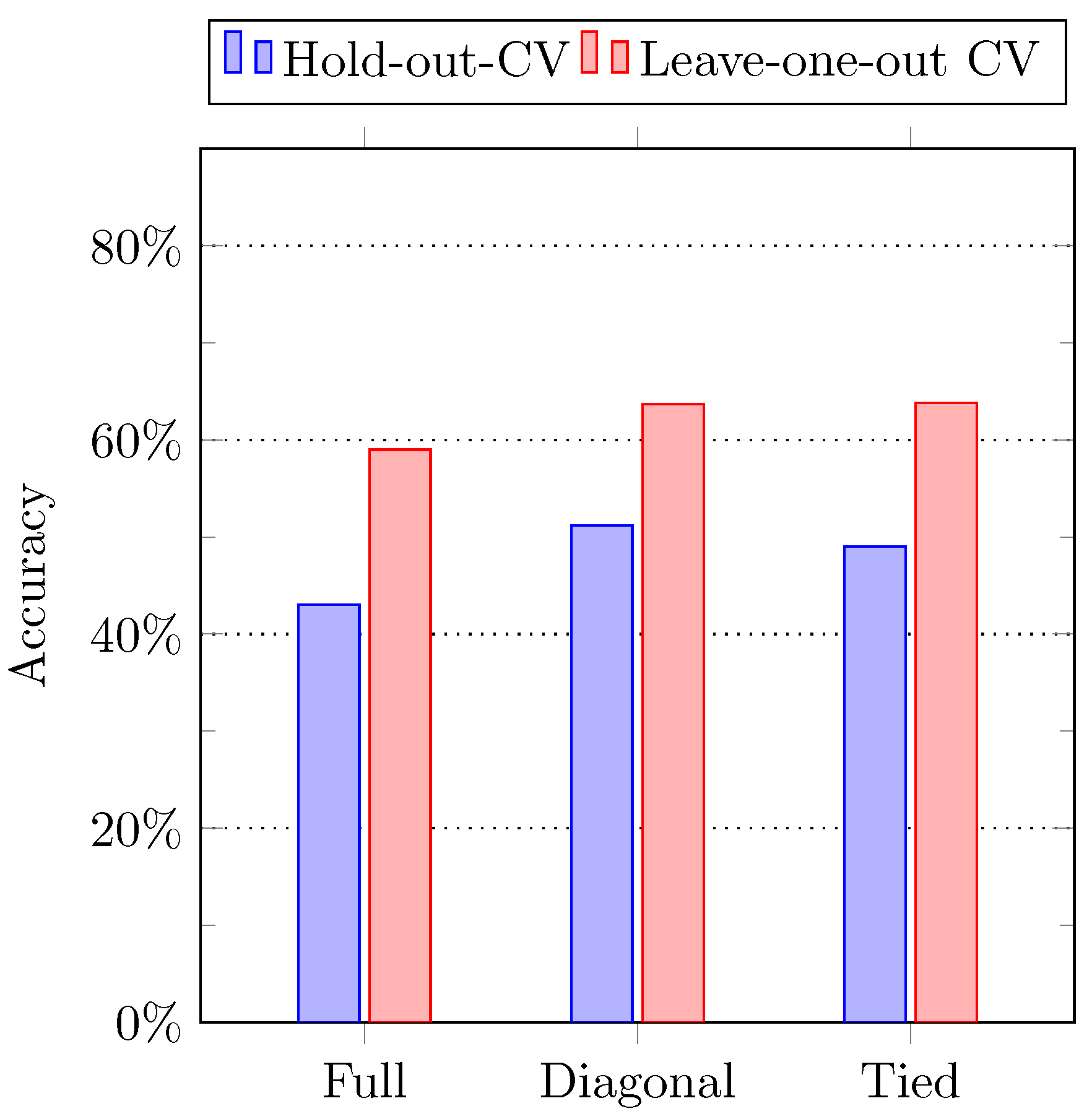

5.1.4. Using HMM

In HMM classification, the objective is to calculate the probability of hidden states given the observation sequence. Hence, a sequence of observations and 7 models were constructed to find the model which best describes the observation sequence. In this experimental setting, the Gaussian HMM model with its hyperparameters, i.e., ‘n-components’, ‘covariance type’, ‘starting probabilities’, and ‘transition matrix’, is used. The model is trained using two methods to compute the observation sequence, and each method is briefly described in the following.

In the first method, the sequence of observation is calculated using the leave-one-out cross-validation technique. Since there are 6 subjects in the dataset, the Gaussian HMM model is trained using the activity data of 5 subjects, whereas the remaining 1 subject is used for testing purposes. We calculate the likelihood information while comparing the testing data to all the predicted observation sequences. The model with maximum likelihood was assigned to the input testing data. After getting all the models against all the testing data, the accuracy between the original models, and the estimated or predicted models is calculated. In the second method, the hold-out cross-validation technique is used to calculate the observation sequence. In particular, the activity data of 3 subjects is used in the model training, and the rest of the instances of 3 subjects are used for testing purposes. The maximum likelihood between trained and testing sequences is calculated as mentioned above.

Figure 9 shows the result of the testing accuracy of both methods. It can be observed that the leave-one-out performed better than the hold-out cross-validation.
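The decision rule used in both methods, i.e., assigning a test sequence to the model with the maximum likelihood, can be sketched as follows. The scorer below is a deliberately simple stand-in (independent unit-variance Gaussians around a fixed mean) and not a Gaussian HMM; any trained model that exposes a log-likelihood `score` method could be substituted. The class name, the means, and the toy sequence are hypothetical.

```python
import math
from typing import Dict, Sequence


class ToyGaussianModel:
    """Stand-in scorer (NOT an HMM): i.i.d. unit-variance Gaussian log-likelihood."""

    def __init__(self, mean: float) -> None:
        self.mean = mean

    def score(self, sequence: Sequence[Sequence[float]]) -> float:
        """Log-likelihood of all values in the sequence under this model."""
        return sum(-0.5 * ((x - self.mean) ** 2 + math.log(2 * math.pi))
                   for frame in sequence for x in frame)


def classify(sequence: Sequence[Sequence[float]],
             models: Dict[str, ToyGaussianModel]) -> str:
    """Return the label of the model with the highest log-likelihood."""
    return max(models, key=lambda label: models[label].score(sequence))


# One model per composite activity (only two shown in this toy example).
models = {
    "preparing food": ToyGaussianModel(0.0),
    "handling medications": ToyGaussianModel(1.0),
}
test_sequence = [[0.9, 1.1], [1.0, 0.8]]
print(classify(test_sequence, models))  # handling medications
```

Accuracy is then the fraction of test sequences whose predicted label matches the true label.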

5.1.5. Using Ensemble Classifier

Lastly, we also assess the performance of the ensemble classifier to recognize the activities. The idea is to obtain the prediction results from different classification algorithms, and the final result is computed based on the maximum voting technique. That is, human activities are recognized by combining all the predictions made by other individual classifiers. We employed 5 different classifiers with different feature representation of the same activity data: (1) SVM classifier using SVD-based features, (2) RF classifier using SVD-based features, (3) SVM classifier using PCA-based features, (4) RF classifier using PCA-based features, and (5) HMM model. All the models are trained with respective labels using the hold-out cross-validation technique to reduce the effect of overfitting or underfitting.

To obtain the SVD-based and PCA-based features, the same implementation is adopted as described in

Section 4.2.2. The SVM classifier is trained with hyperparameter

, and the RF classifier is used with hyperparameter

, whereas the Gaussian HMM model is trained using hyperparameters

n-component = 6, and covariance type =

. The label information is gathered from each of the aforementioned 5 learning models and the label with maximum frequency is assigned to the respective instance of testing data.

Figure 10 shows the comparison between the original results of individual classifiers and the ensemble classifier.
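The maximum-voting rule can be illustrated with a short sketch. The tie-breaking behavior (the label seen first among the tied ones wins, so earlier classifiers take precedence) is an assumption, as the text does not specify how ties are resolved; the labels and predictions are toy values.

```python
from collections import Counter
from typing import List, Sequence


def majority_vote(predictions: Sequence[Sequence[str]]) -> List[str]:
    """Combine per-classifier predictions into one label per test instance.

    predictions : one list of labels per classifier, aligned by instance.
    Ties go to the label encountered first (assumed tie-breaking rule).
    """
    return [Counter(labels).most_common(1)[0][0]
            for labels in zip(*predictions)]


# Toy predictions of the 5 models for 3 test instances.
predictions = [
    ["A", "B", "C"],  # SVM on SVD-based features
    ["A", "B", "B"],  # RF on SVD-based features
    ["A", "C", "C"],  # SVM on PCA-based features
    ["B", "B", "C"],  # RF on PCA-based features
    ["A", "C", "A"],  # HMM
]
print(majority_vote(predictions))  # ['A', 'B', 'C']
```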

5.2. Discussion

This paper presents a technique to recognize composite activities. We employed two different types of features for activity recognition: handcrafted features and features obtained using subspace pooling techniques. The experiments are carried out using three different settings: k-fold cross-validation, hold-out cross-validation, and leave-one-out cross-validation. A comparative analysis of all the techniques is presented in Table 6. It can be observed that handcrafted features perform quite well along with random forest classifiers to recognize the composite activities. Overall, it achieved average recognition accuracy of 79%.

The recognition results of the proposed features are also compared with the state-of-the-art technique [15]. The comparative analysis is carried out using two different experiments. In the first, the technique proposed in Reference [15] employed HMM and reported better recognition results using 3 states and 1000 iterations, whereas we also employed HMM by tuning the model hyperparameters, and our best results are achieved using 4 states, a tied covariance matrix, and 1000 iterations. The recognition results in comparison with state-of-the-art techniques are summarized in Table 7. Second, the handcrafted features are used to recognize the composite activities using leave-one-out cross-validation. We performed 6 different experiments using handcrafted features, and all of them show good results. The results are shown in Table 8.