Applying Learning Analytics to Detect Sequences of Actions and Common Errors in a Geometry Game

Abstract

1. Introduction

- To propose two sequence and process mining metrics: one to analyze the sequences of actions performed by students and another one to analyze their most common errors by puzzle.

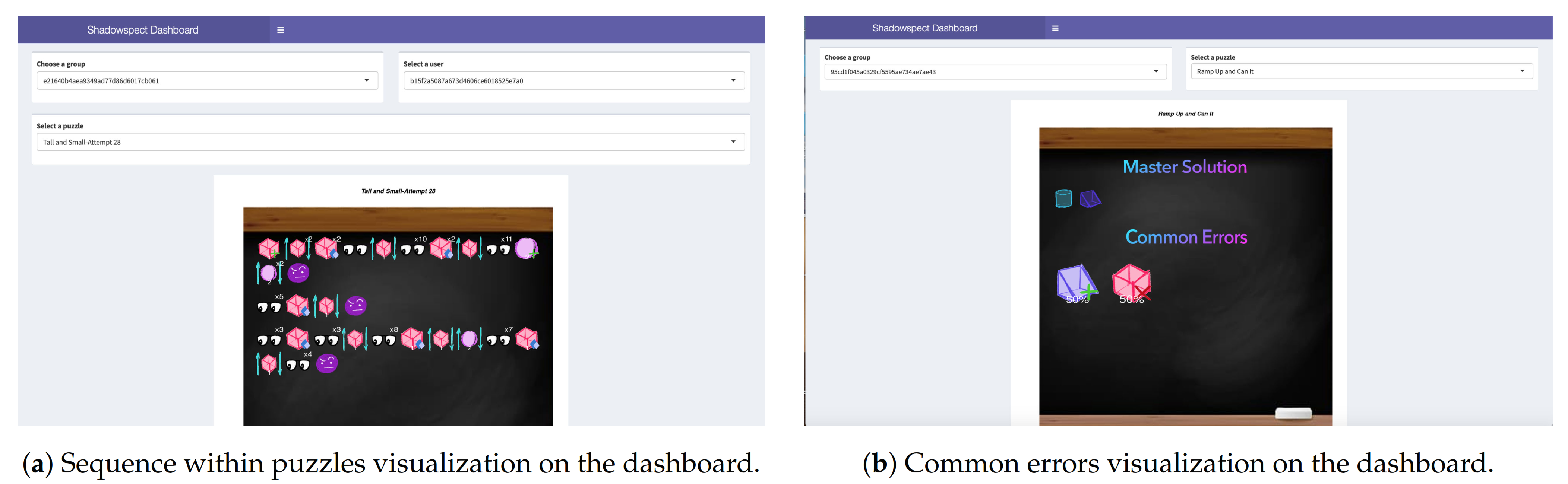

- To develop a set of visualizations embedded in an interactive dashboard that allows teachers to monitor students’ interaction with the game in real time.

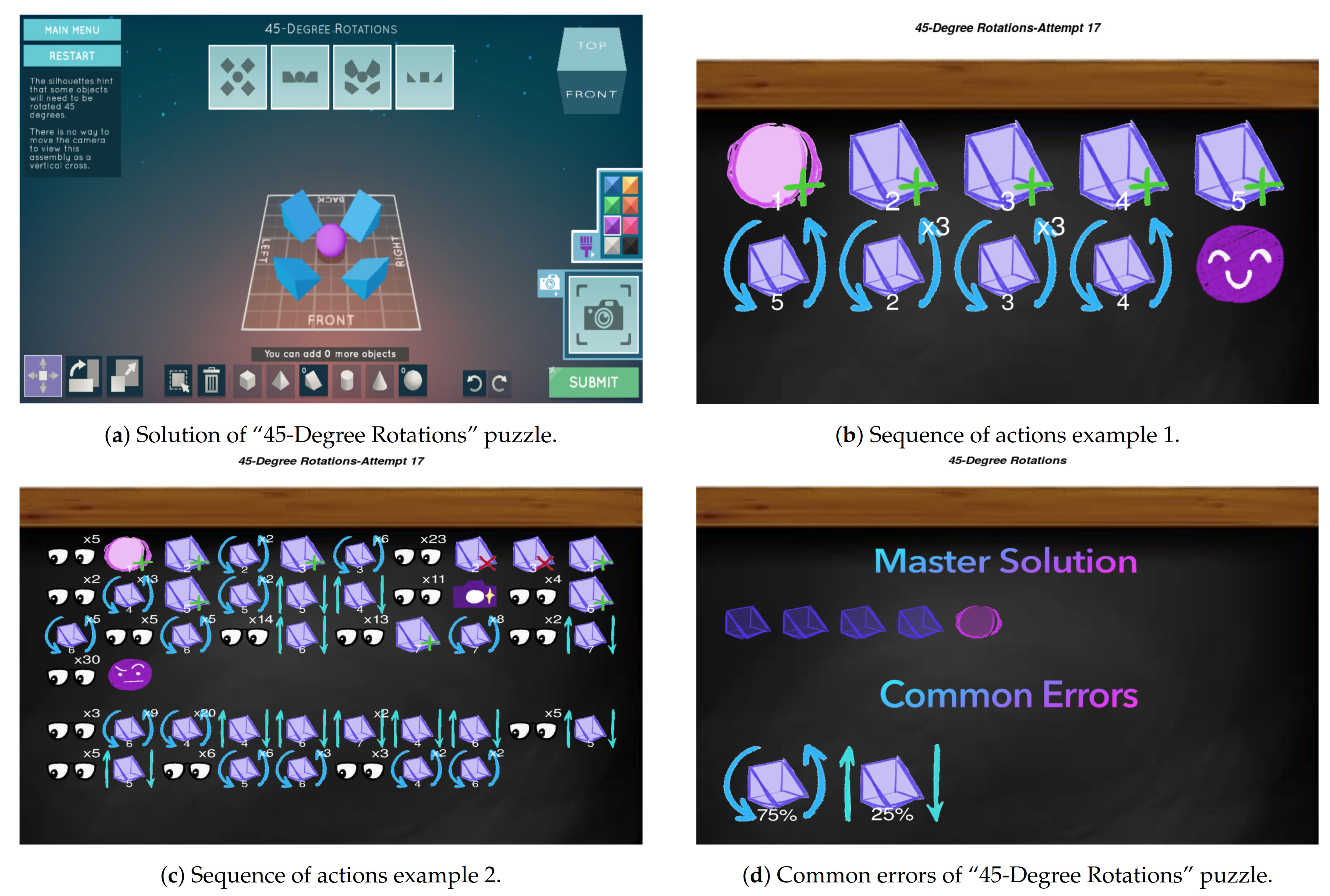

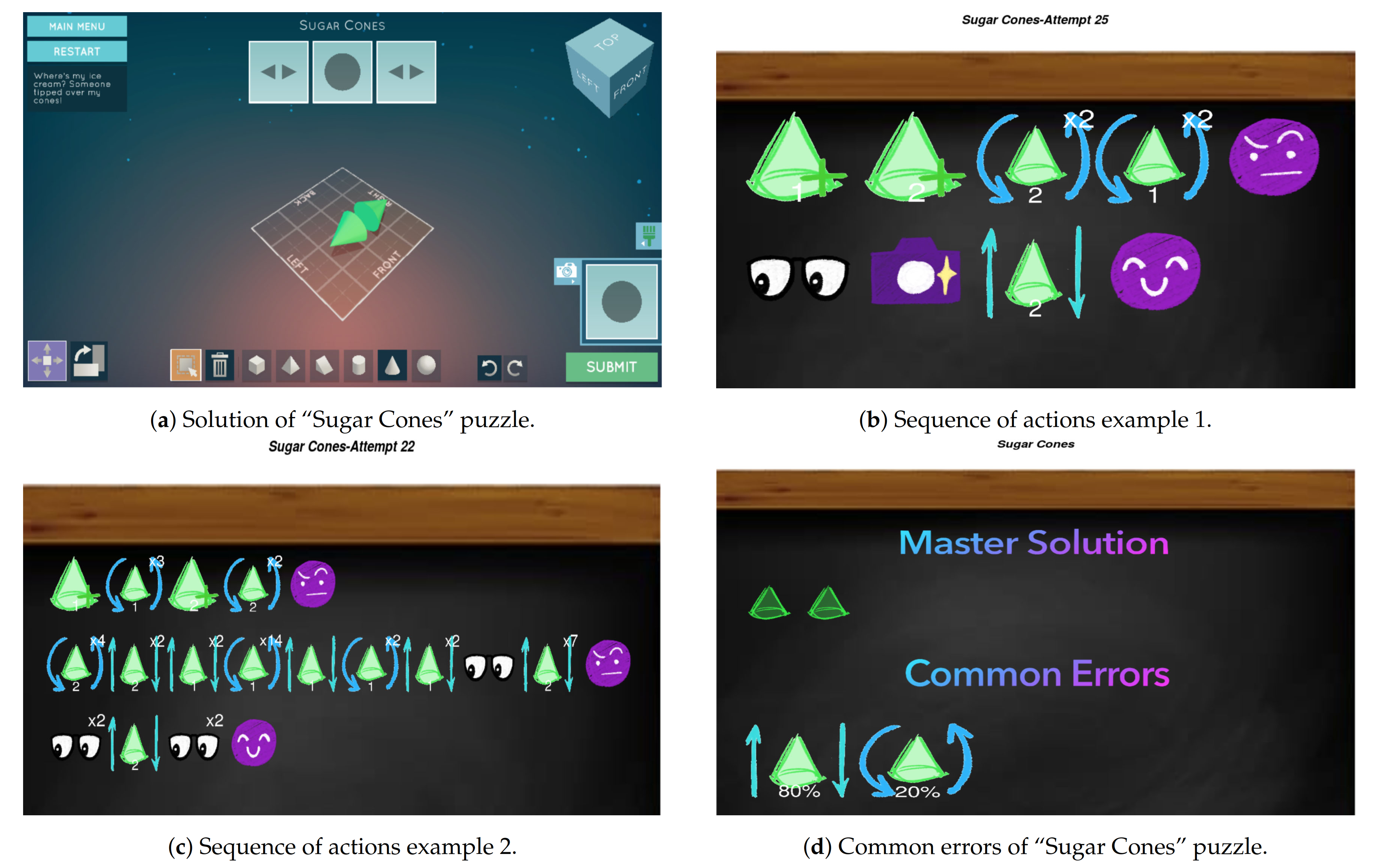

- To exemplify the potential of these metrics and visualizations with two use cases from data collected in K12 schools across the US using Shadowspect.

2. Related Work

2.1. Educational Games

2.2. Sequence and Process Mining

2.3. Visualization Dashboards

3. Methods

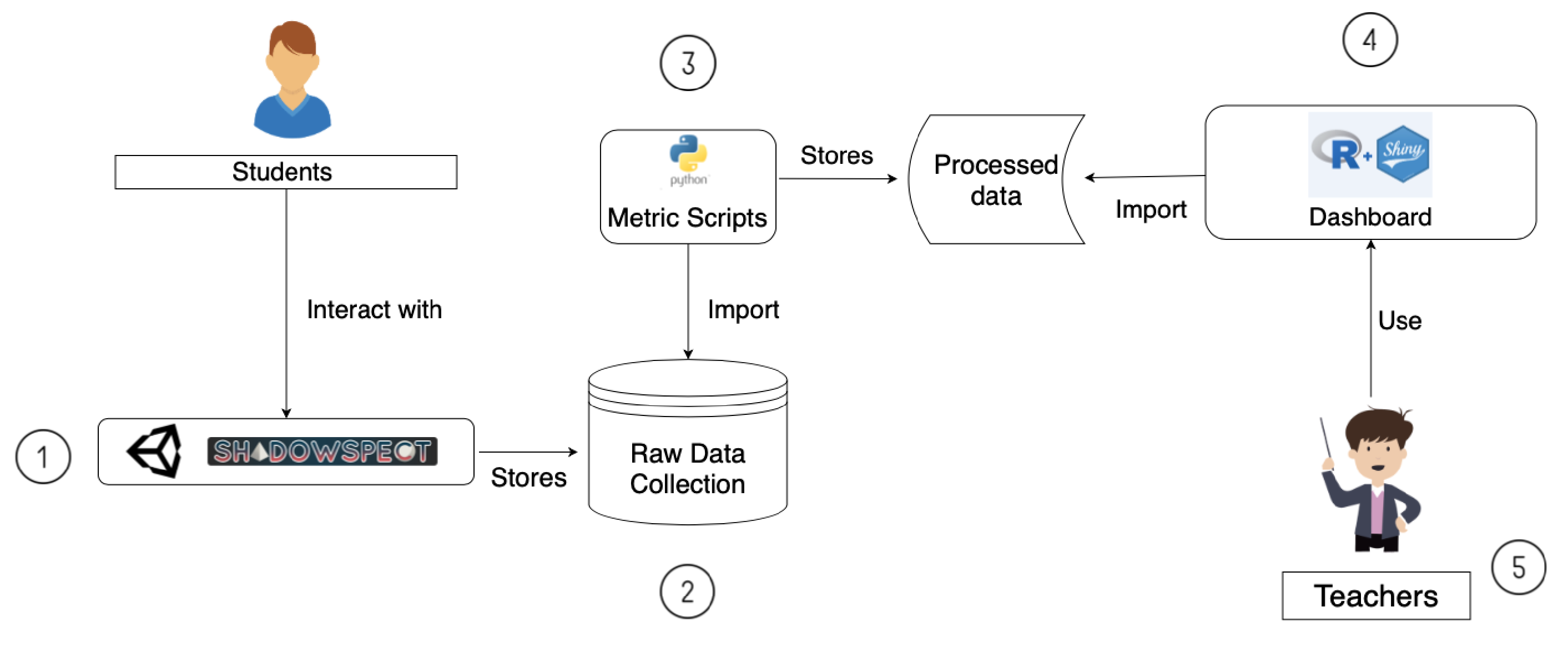

3.1. Overview of the System

- In the first step, students interact with Shadowspect. The game was built with the Unity engine and is deployed as a web application hosted on a web server.

- The game collects every student’s interaction with the game and stores it in a database.

- In the third step, metrics are calculated from the data collected in the second step. Each metric that we have defined is a separate function in a Python script that computes the required output.

- The metrics' output is stored as processed data and used by our dashboard. We developed the dashboard with the Shiny framework for R and deployed it on the ShinyApps web server. This simplifies the deployment pipeline, since it requires no dedicated hardware or system configuration.

- In the last step, teachers who use Shadowspect in their classes access the Shiny dashboard to visualize what their students are doing.
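The metric computation step can be illustrated with a minimal sketch. It assumes that each logged event is a dictionary with `user` and `type` fields and that the processed output is serialized as JSON. The field names, the two example metrics, and the `METRICS` registry are hypothetical and are not taken from the Shadowspect code base.

```python
import json
from collections import Counter
from typing import Callable, Dict, List

Event = Dict[str, object]  # assumed shape of one logged interaction


def events_per_student(events: List[Event]) -> Dict[str, int]:
    """Hypothetical metric: number of logged events per student."""
    return dict(Counter(str(e["user"]) for e in events))


def puzzles_started(events: List[Event]) -> Dict[str, int]:
    """Hypothetical metric: number of puzzle starts per student."""
    return dict(
        Counter(str(e["user"]) for e in events if e["type"] == "start_puzzle")
    )


# Each metric is a separate function; the registry name is illustrative only.
METRICS: Dict[str, Callable[[List[Event]], Dict[str, int]]] = {
    "events_per_student": events_per_student,
    "puzzles_started": puzzles_started,
}


def run_metrics(events: List[Event]) -> Dict[str, Dict[str, int]]:
    """Run every registered metric over the collected events."""
    return {name: metric(events) for name, metric in METRICS.items()}


def export_for_dashboard(results: Dict[str, Dict[str, int]]) -> str:
    """Serialize the processed data so that a dashboard could load it."""
    return json.dumps(results, sort_keys=True)


sample_events = [
    {"user": "s1", "type": "start_puzzle"},
    {"user": "s1", "type": "rotate_shape"},
    {"user": "s2", "type": "start_puzzle"},
]
results = run_metrics(sample_events)
processed = export_for_dashboard(results)
```

Keeping one function per metric means that a new metric can be added to the registry without touching the code that runs or exports the others.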

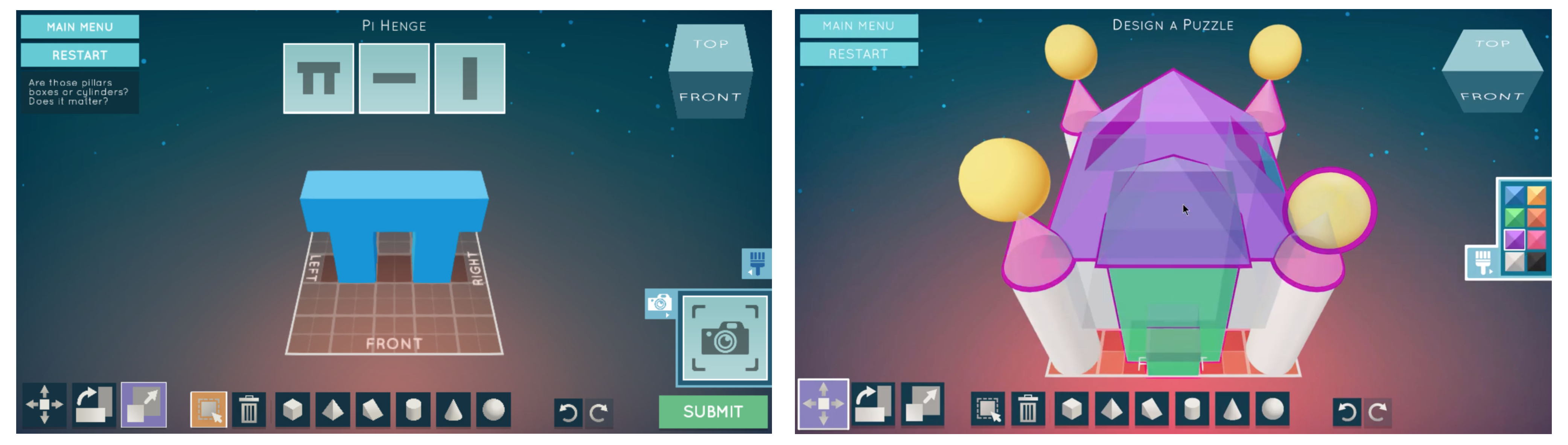

3.2. Shadowspect

3.3. Educational Context and Data Collection

4. Sequence and Process Mining Metrics Proposal

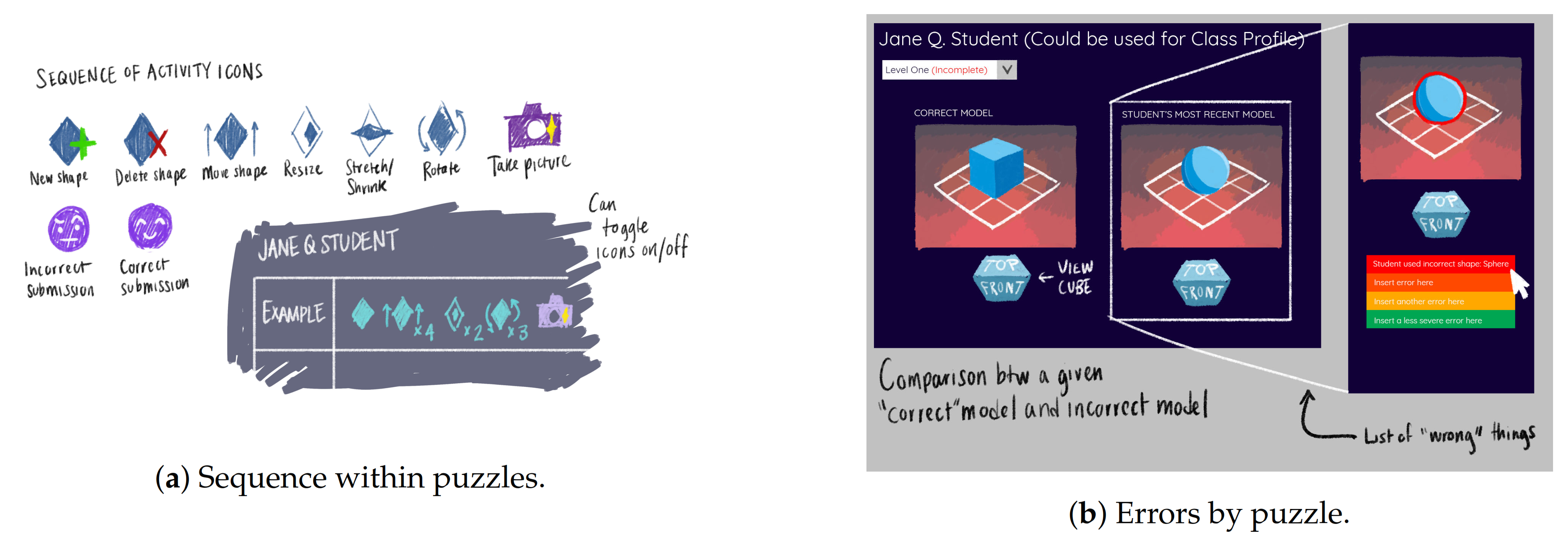

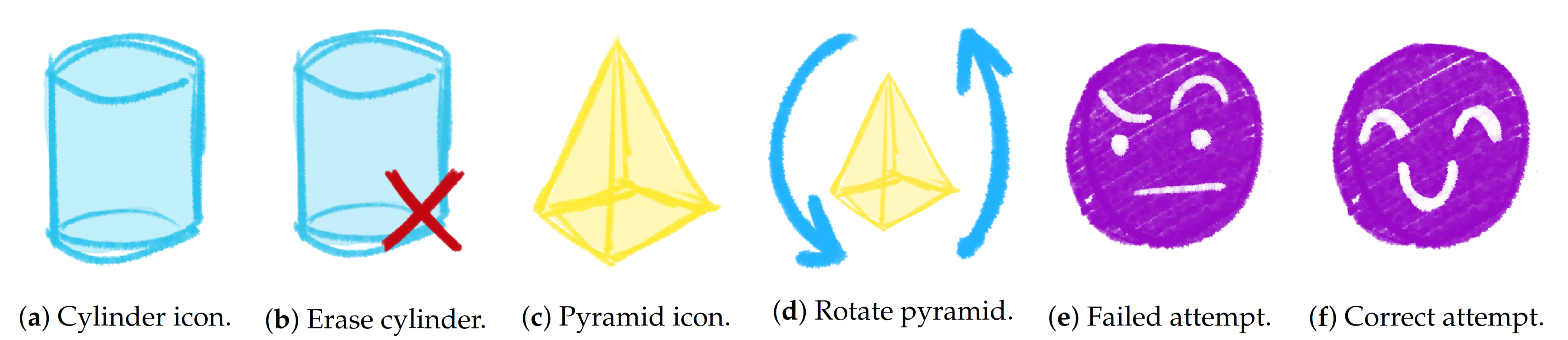

4.1. Sequences of Actions

- Data transformation: We transform the raw data into a sequence of actions that can be represented. This step also includes data cleaning to keep only useful events. In this case, we keep only the events related to the puzzle-solving process: starting a puzzle, manipulation events (create, delete, scale, rotate or move a shape), snapshots, perspective changes, and puzzle checks.

- Data compacting: We reduce the number of events without compromising the information needed to build a sequence of actions. We compact consecutive identical events by adding a field that indicates how many times the event has been performed in a row. For example, if the student has changed the perspective of the game three times in a row, the three original events are transformed into a single perspective change event that has been performed three times. When the event is related to the manipulation of shapes, we only compact consecutive events if they refer to the same shape identifier.
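The two steps above can be sketched as follows. This is a minimal illustration, assuming that each event is a dictionary with a `type` field and, for manipulation events, a `shape_id` field. The event type names and field names are hypothetical stand-ins for the game's actual log format.

```python
from typing import Dict, List

# Hypothetical event names for the event categories listed above.
MANIPULATION_EVENTS = {
    "create_shape", "delete_shape", "scale_shape", "rotate_shape", "move_shape",
}
KEPT_EVENTS = MANIPULATION_EVENTS | {
    "start_puzzle", "snapshot", "change_perspective", "check_puzzle",
}


def transform(raw_events: List[Dict]) -> List[Dict]:
    """Data transformation: keep only events related to puzzle solving."""
    return [e for e in raw_events if e["type"] in KEPT_EVENTS]


def compact(events: List[Dict]) -> List[Dict]:
    """Data compacting: merge consecutive identical events into one with a count.

    Manipulation events are merged only when they refer to the same shape.
    """
    compacted: List[Dict] = []
    for e in events:
        # Non-manipulation events carry no shape identifier.
        shape_id = e.get("shape_id") if e["type"] in MANIPULATION_EVENTS else None
        last = compacted[-1] if compacted else None
        if (
            last is not None
            and last["type"] == e["type"]
            and last["shape_id"] == shape_id
        ):
            last["count"] += 1
        else:
            compacted.append({"type": e["type"], "shape_id": shape_id, "count": 1})
    return compacted


raw = [
    {"type": "change_perspective"},
    {"type": "mouse_hover"},  # unrelated event, dropped by transform()
    {"type": "change_perspective"},
    {"type": "change_perspective"},
    {"type": "rotate_shape", "shape_id": 1},
    {"type": "rotate_shape", "shape_id": 1},
    {"type": "rotate_shape", "shape_id": 2},
]
sequence = compact(transform(raw))
```

In this sketch, filtering happens before compacting, so two perspective changes separated only by a discarded event end up merged. Whether that matches the intended behavior depends on how the original pipeline orders the two steps.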

4.2. Common Errors

- Identify meaningful events: We identify the changes a student makes to the shapes between a failed submission and a correct submission. For example, if a student submits an incorrect solution and then creates a pyramid and deletes a cone in the scenario, our algorithm registers those edits as changes between submissions.

- Compute most common errors: Once we have registered all the changes made after wrong submissions, we group them by puzzle to obtain the shapes and manipulation events that students had problems with in each puzzle.
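A minimal sketch of these two steps is shown below. It assumes that each event is a dictionary with `type` and `puzzle` fields, that check events carry a boolean `correct` field, and that manipulation events carry a `shape_type` field. All of these names are hypothetical. As a simplification, the sketch records every shape edit made after an incorrect check until the next check or puzzle start, which may differ from the authors' exact rule.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

# Hypothetical names for the manipulation events.
MANIPULATION_EVENTS = {
    "create_shape", "delete_shape", "scale_shape", "rotate_shape", "move_shape",
}

Change = Tuple[str, str, str]  # (puzzle, manipulation event, shape type)


def changes_after_failed_checks(events: List[Dict]) -> List[Change]:
    """Identify meaningful events: shape edits that follow an incorrect check."""
    changes: List[Change] = []
    recording = False
    for e in events:
        if e["type"] == "check_puzzle":
            # Start recording after a failed check, stop after a correct one.
            recording = not e["correct"]
        elif e["type"] == "start_puzzle":
            recording = False
        elif recording and e["type"] in MANIPULATION_EVENTS:
            changes.append((e["puzzle"], e["type"], e["shape_type"]))
    return changes


def most_common_errors(
    changes: List[Change], top_n: int = 3
) -> Dict[str, List[Tuple[Tuple[str, str], int]]]:
    """Compute most common errors: group the registered changes by puzzle."""
    per_puzzle: Dict[str, Counter] = defaultdict(Counter)
    for puzzle, action, shape in changes:
        per_puzzle[puzzle][(action, shape)] += 1
    return {p: counts.most_common(top_n) for p, counts in per_puzzle.items()}


log = [
    {"type": "check_puzzle", "puzzle": "Sugar Cones", "correct": False},
    {"type": "create_shape", "puzzle": "Sugar Cones", "shape_type": "pyramid"},
    {"type": "delete_shape", "puzzle": "Sugar Cones", "shape_type": "cone"},
    {"type": "check_puzzle", "puzzle": "Sugar Cones", "correct": False},
    {"type": "create_shape", "puzzle": "Sugar Cones", "shape_type": "pyramid"},
    {"type": "check_puzzle", "puzzle": "Sugar Cones", "correct": True},
    {"type": "create_shape", "puzzle": "Sugar Cones", "shape_type": "cube"},
]
changes = changes_after_failed_checks(log)
errors = most_common_errors(changes)
```

In the sample log, the cube created after the correct check is ignored, so only the edits that follow failed submissions contribute to the per-puzzle counts.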

5. Visualization and Dashboard Design

5.1. Visualization Design

5.2. Dashboard Overview

6. Use Cases

6.1. “45-Degree Rotations” Puzzle

6.2. “Sugar Cones” Puzzle

7. Discussion

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Gomez, M.J.; Ruipérez-Valiente, J.A.; Martínez, P.A.; Kim, Y.J. Applying Learning Analytics to Detect Sequences of Actions and Common Errors in a Geometry Game. Sensors 2021, 21, 1025. https://doi.org/10.3390/s21041025