Clustering-Based Plane Segmentation Neural Network for Urban Scene Modeling

Abstract

1. Introduction

2. Related Work

2.1. Hough Transform

2.2. RANSAC

2.3. Region Growing

2.4. Clustering

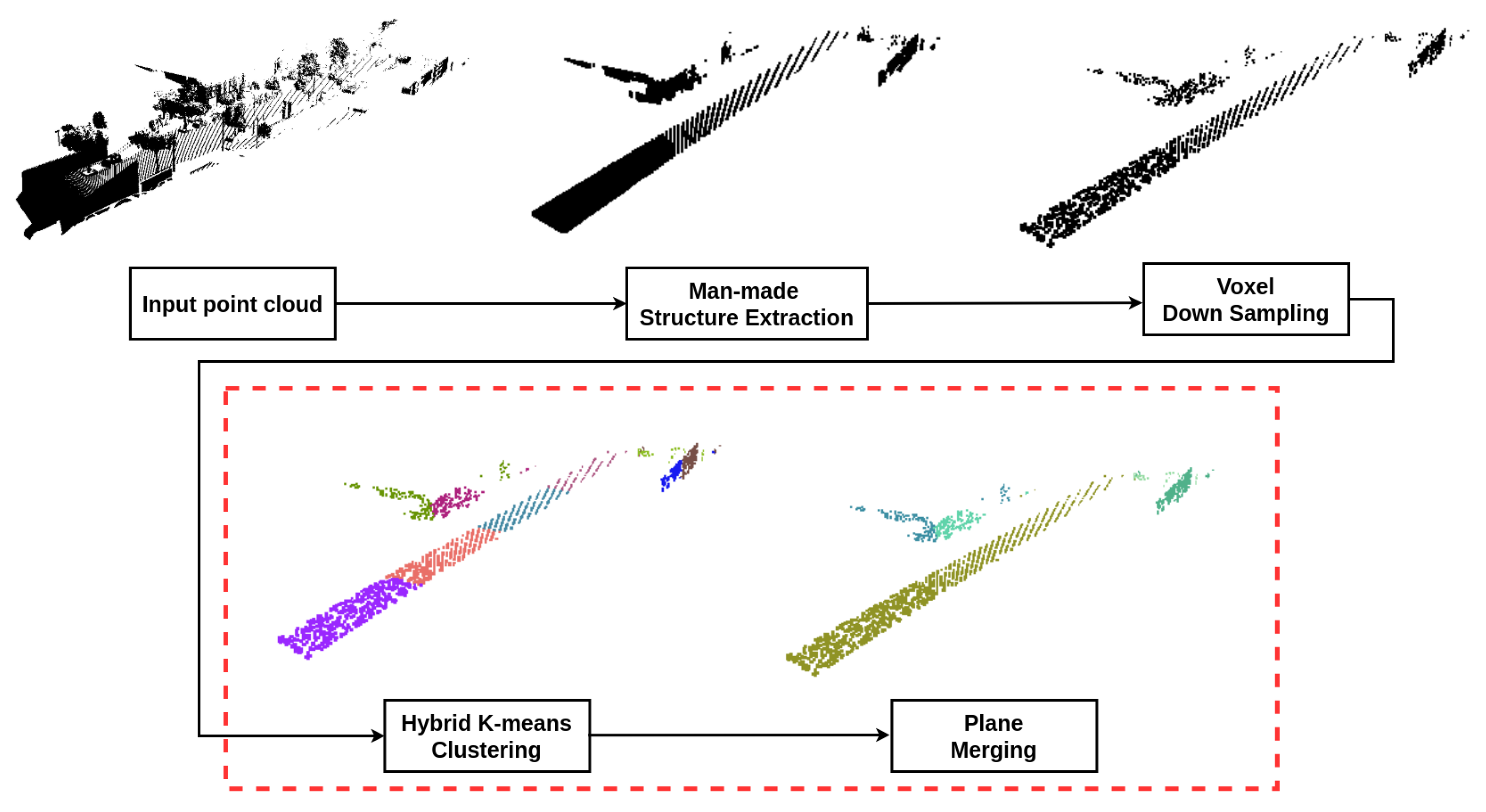

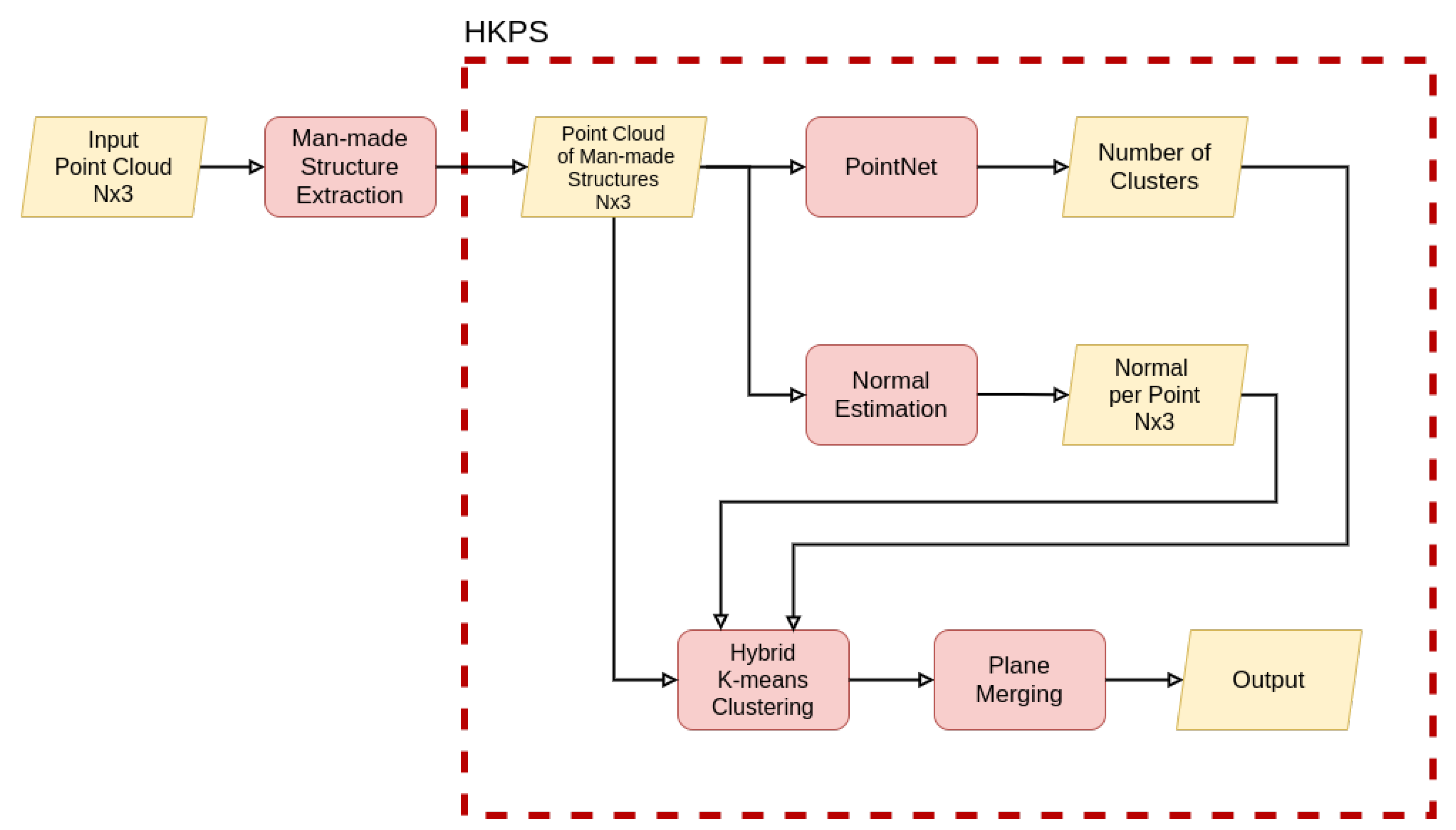

3. Hybrid K-Means Plane Segmentation Neural Network

3.1. Hybrid K-Means Clustering

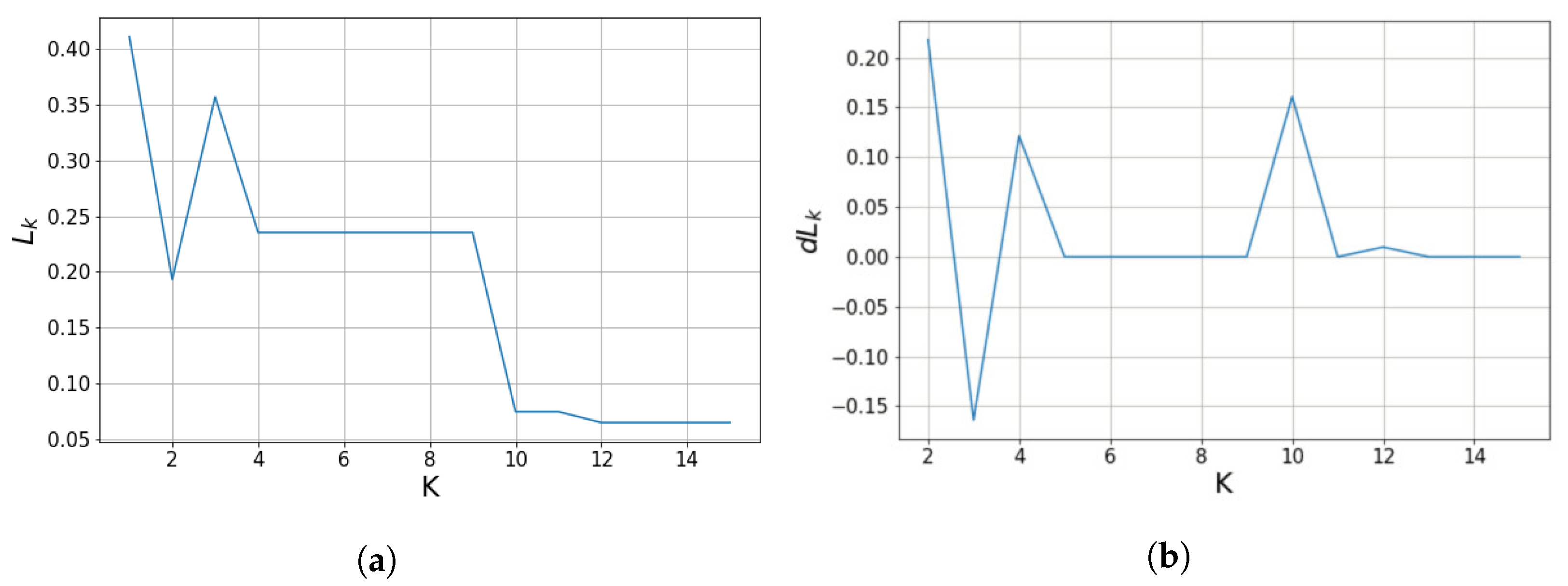

3.2. Parameter Estimation

3.3. Plane Merging

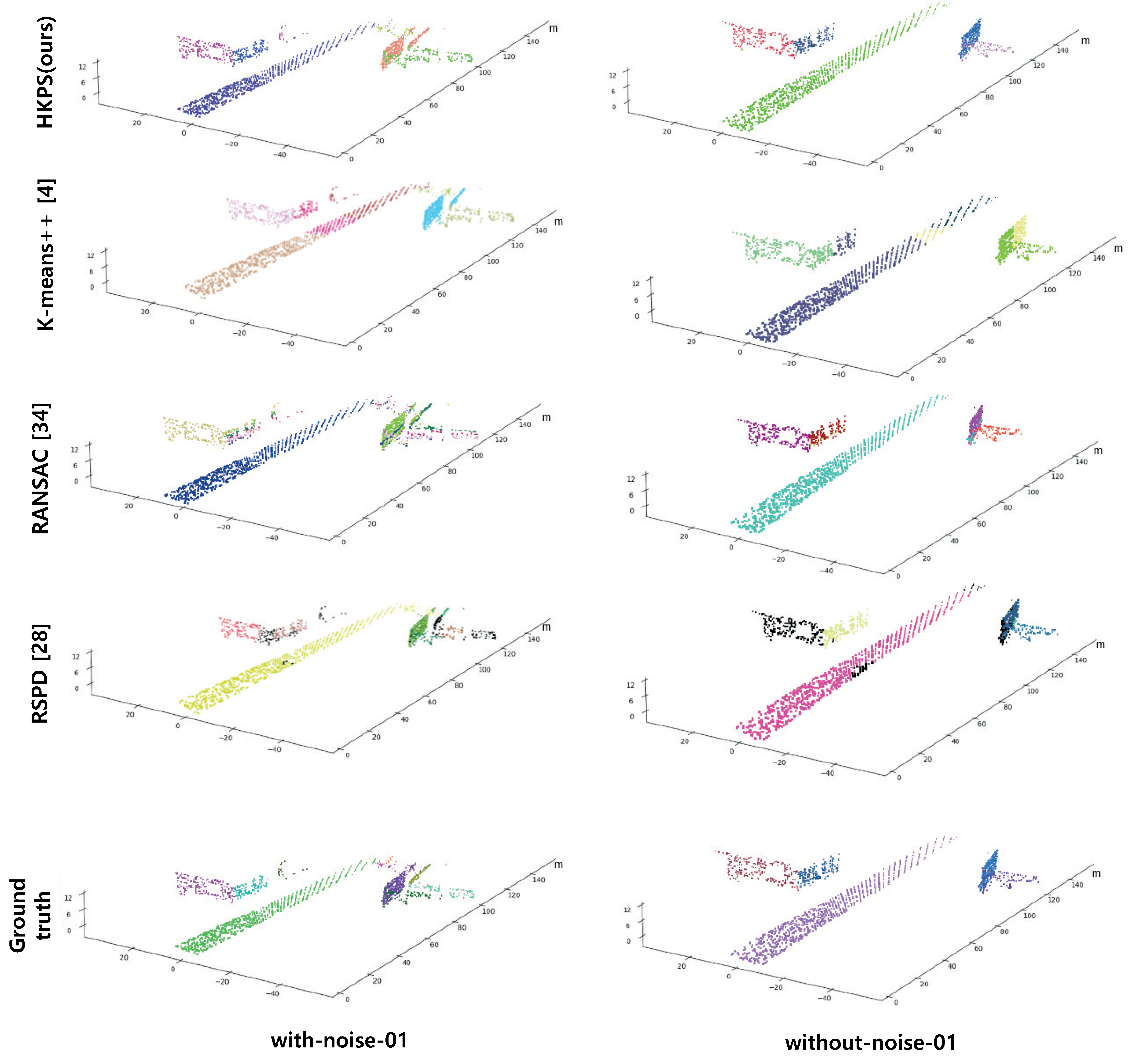

4. Results

4.1. Voxel Down Sampling

4.2. Performance Evaluation

4.3. Scalability

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Feng, C.; Taguchi, Y.; Kamat, V.R. Fast plane extraction in organized point clouds using agglomerative hierarchical clustering. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, 31 May–7 June 2014; pp. 6218–6225.

- Torr, P.H.; Zisserman, A. MLESAC: A new robust estimator with application to estimating image geometry. Comput. Vis. Image Underst. 2000, 78, 138–156.

- Schaefer, A.; Vertens, J.; Büscher, D.; Burgard, W. A maximum likelihood approach to extract finite planes from 3-D laser scans. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 72–78.

- Arthur, D.; Vassilvitskii, S. k-Means++: The Advantages of Careful Seeding; Technical Report; Stanford University: Stanford, CA, USA, 2006.

- Lloyd, S. Least squares quantization in PCM. IEEE Trans. Inf. Theory 1982, 28, 129–137.

- Qi, C.R.; Su, H.; Mo, K.; Guibas, L.J. PointNet: Deep learning on point sets for 3D classification and segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- Lee, H. HKPS. 2021. Available online: https://www.github.com/jimmy9704/plane-segmentation-network (accessed on 1 December 2021).

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 3431–3440.

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A deep convolutional encoder-decoder architecture for image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2481–2495.

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. DeepLab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 834–848.

- Su, H.; Maji, S.; Kalogerakis, E.; Learned-Miller, E. Multi-view convolutional neural networks for 3D shape recognition. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 945–953.

- Li, L.; Sung, M.; Dubrovina, A.; Yi, L.; Guibas, L.J. Supervised fitting of geometric primitives to 3D point clouds. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 2652–2660.

- Hůlková, M.; Pavelka, K.; Matoušková, E. Automatic classification of point clouds for highway documentation. Acta Polytech. 2018, 53, 165–170.

- Alonso, I.; Riazuelo, L.; Montesano, L.; Murillo, A.C. 3D-MiniNet: Learning a 2D representation from point clouds for fast and efficient 3D lidar semantic segmentation. IEEE Robot. Autom. Lett. 2020, 5, 5432–5439.

- Zhu, X.; Zhou, H.; Wang, T.; Hong, F.; Ma, Y.; Li, W.; Li, H.; Lin, D. Cylindrical and asymmetrical 3D convolution networks for lidar segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 19–25 June 2021; pp. 9939–9948.

- Paigwar, A.; Erkent, Ö.; Sierra-Gonzalez, D.; Laugier, C. GndNet: Fast ground plane estimation and point cloud segmentation for autonomous vehicles. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 24 October–24 January 2021; pp. 2150–2156.

- Qi, C.R.; Yi, L.; Su, H.; Guibas, L.J. PointNet++: Deep Hierarchical Feature Learning on Point Sets in a Metric Space. In Proceedings of the 31st Conference on Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017.

- Hough, P.V. Method and Means for Recognizing Complex Patterns. US Patent 3069654, 18 December 1962.

- Vosselman, G.; Gorte, B.G.; Sithole, G.; Rabbani, T. Recognising structure in laser scanner point clouds. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2004, 46, 33–38.

- Limberger, F.A.; Oliveira, M.M. Real-time detection of planar regions in unorganized point clouds. Pattern Recognit. 2015, 48, 2043–2053.

- Fernandes, L.A.; Oliveira, M.M. Real-time line detection through an improved Hough transform voting scheme. Pattern Recognit. 2008, 41, 299–314.

- Fischler, M.A.; Bolles, R.C. Random sample consensus: A paradigm for model fitting with applications to image analysis and automated cartography. Commun. ACM 1981, 24, 381–395.

- Gotardo, P.F.; Bellon, O.R.P.; Silva, L. Range image segmentation by surface extraction using an improved robust estimator. In Proceedings of the 2003 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR), Madison, WI, USA, 18–20 June 2003; Volume 2, pp. 33–38.

- Schnabel, R.; Wahl, R.; Klein, R. Efficient RANSAC for point-cloud shape detection. In Computer Graphics Forum; Wiley Online Library: Hoboken, NJ, USA, 2007; Volume 26, pp. 214–226.

- Gallo, O.; Manduchi, R.; Rafii, A. CC-RANSAC: Fitting planes in the presence of multiple surfaces in range data. Pattern Recognit. Lett. 2011, 32, 403–410.

- Nurunnabi, A.; Belton, D.; West, G. Robust segmentation in laser scanning 3D point cloud data. In Proceedings of the 2012 International Conference on Digital Image Computing Techniques and Applications (DICTA), Fremantle, WA, Australia, 3–5 December 2012; pp. 1–8.

- Vo, A.V.; Truong-Hong, L.; Laefer, D.F.; Bertolotto, M. Octree-based region growing for point cloud segmentation. ISPRS J. Photogramm. Remote Sens. 2015, 104, 88–100.

- Araújo, A.M.; Oliveira, M.M. A robust statistics approach for plane detection in unorganized point clouds. Pattern Recognit. 2020, 100, 107115.

- Ester, M.; Kriegel, H.P.; Sander, J.; Xu, X. A density-based algorithm for discovering clusters in large spatial databases with noise. In Proceedings of the KDD, Portland, OR, USA, 2–4 August 1996; Volume 96, pp. 226–231.

- Dhillon, I.S.; Modha, D.S. Concept decompositions for large sparse text data using clustering. Mach. Learn. 2001, 42, 143–175.

- Zhou, Q.Y.; Park, J.; Koltun, V. Open3D: A Modern Library for 3D Data Processing. arXiv 2018, arXiv:1801.09847.

- Gaidon, A.; Wang, Q.; Cabon, Y.; Vig, E. Virtual Worlds as Proxy for Multi-Object Tracking Analysis. In Proceedings of the CVPR, Las Vegas, NV, USA, 27–30 June 2016; Available online: https://europe.naverlabs.com/research/computer-vision/proxy-virtual-worlds-vkitti-1/ (accessed on 1 October 2021).

- Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for Autonomous Driving? The KITTI Vision Benchmark Suite. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 16–21 June 2012; pp. 3354–3361.

- Mariga, L. pyRANSAC-3D. 2021. Available online: https://github.com/leomariga/pyRANSAC-3D (accessed on 1 October 2021).

- Hoover, A.; Jean-Baptiste, G.; Jiang, X.; Flynn, P.J.; Bunke, H.; Goldgof, D.B.; Bowyer, K.; Eggert, D.W.; Fitzgibbon, A.; Fisher, R.B. An experimental comparison of range image segmentation algorithms. IEEE Trans. Pattern Anal. Mach. Intell. 1996, 18, 673–689.

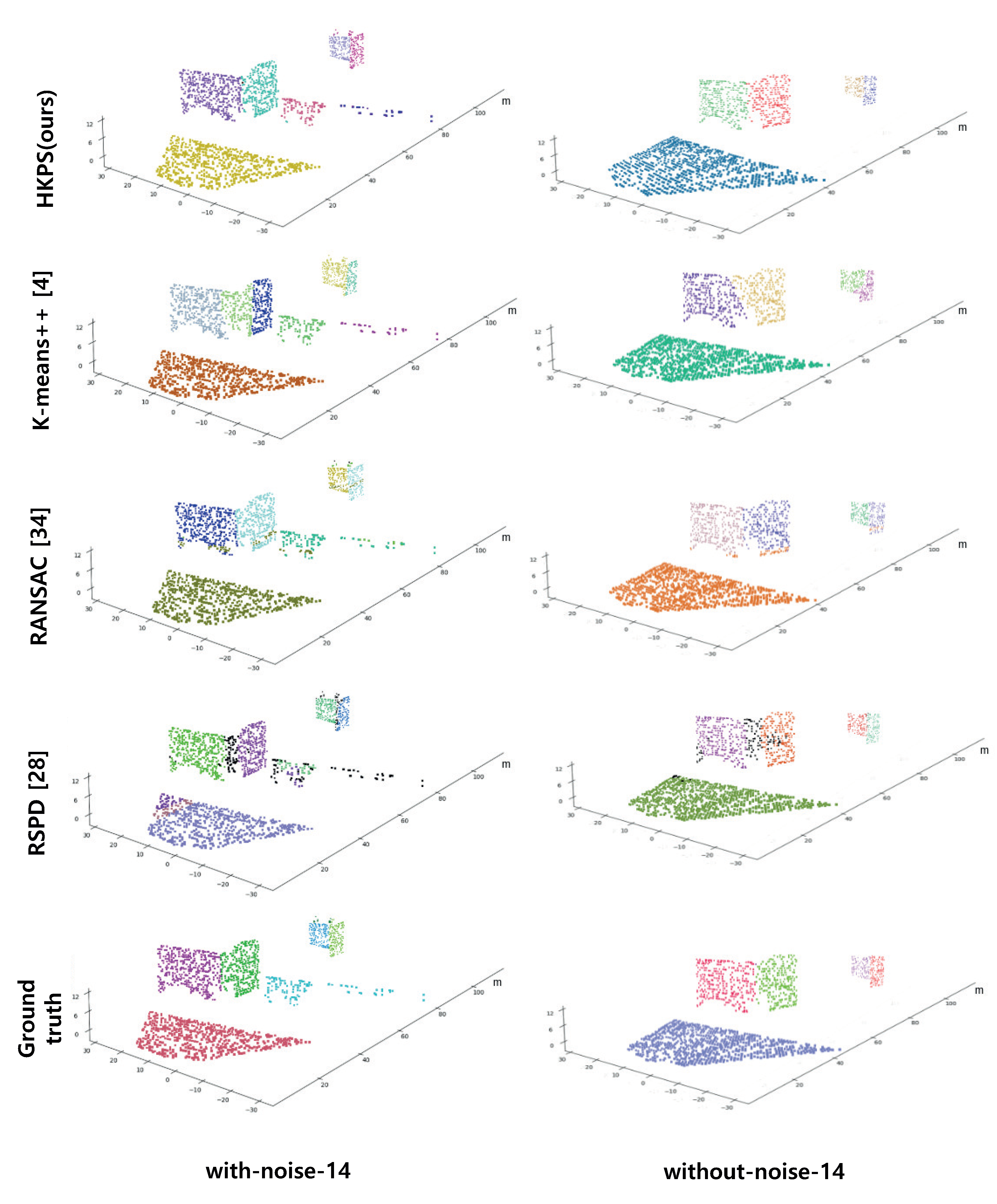

| Method | Correct Detection [%] | Under-Segmentation [%] | Over-Segmentation [%] | Missed [%] | Noise [%] | Avg. Cosine Distance |
|---|---|---|---|---|---|---|
| HKPS (ours) | 84.26 | 3.18 | 11.31 | 0.19 | 0.1 | 0.0337 |
| K-means++ [4] | 70.56 | 0.76 | 17.27 | 9.41 | 9.40 | 0.0820 |
| RANSAC [34] | 72.36 | 0.06 | 6.97 | 13.28 | 13.69 | 0.0606 |
| RSPD [28] | 72.54 | 12.35 | 0.07 | 10.05 | 14.78 | 0.0182 |

| Method | Correct Detection [%] | Under-Segmentation [%] | Over-Segmentation [%] | Missed [%] | Noise [%] | Avg. Cosine Distance |
|---|---|---|---|---|---|---|
| HKPS (ours) | 83.80 | 1.46 | 9.50 | 2.76 | 2.10 | 0.0315 |
| K-means++ [4] | 51.87 | 14.70 | 17.93 | 11.68 | 10.76 | 0.0916 |
| RANSAC [34] | 70.93 | 2.42 | 4.14 | 18.30 | 16.44 | 0.1284 |
| RSPD [28] | 65.96 | 9.94 | 1.21 | 17.11 | 18.69 | 0.0106 |
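
The last column of the tables above reports an average cosine distance, but this section does not give its exact definition. The following is a minimal sketch of one plausible reading, assuming the metric compares the normal of each estimated plane with the normal of its matched reference plane and treats opposite-facing normals as the same orientation. The function names `cosine_distance` and `average_cosine_distance`, and the pairing of estimated with reference normals, are hypothetical and not taken from the paper.

```python
import math


def cosine_distance(n1, n2):
    """Cosine distance between two plane normals (sign of the normal ignored)."""
    dot = sum(a * b for a, b in zip(n1, n2))
    norm1 = math.sqrt(sum(a * a for a in n1))
    norm2 = math.sqrt(sum(b * b for b in n2))
    if norm1 == 0.0 or norm2 == 0.0:
        raise ValueError("plane normals must be non-zero")
    # Assumption: n and -n describe the same plane, so use |cos(theta)|.
    cos = abs(dot) / (norm1 * norm2)
    # Clamp to guard against rounding slightly above 1.
    return 1.0 - min(cos, 1.0)


def average_cosine_distance(pairs):
    """Mean cosine distance over (estimated_normal, reference_normal) pairs."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("need at least one pair of normals")
    return sum(cosine_distance(e, r) for e, r in pairs) / len(pairs)
```

Under this reading, a value near 0 means the estimated plane orientations closely match the reference orientations, which is consistent with lower values being better in the tables.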

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Lee, H.; Jung, J. Clustering-Based Plane Segmentation Neural Network for Urban Scene Modeling. Sensors 2021, 21, 8382. https://doi.org/10.3390/s21248382
