Experimental Study on Wound Area Measurement with Mobile Devices

Abstract

1. Introduction

2. Related Work

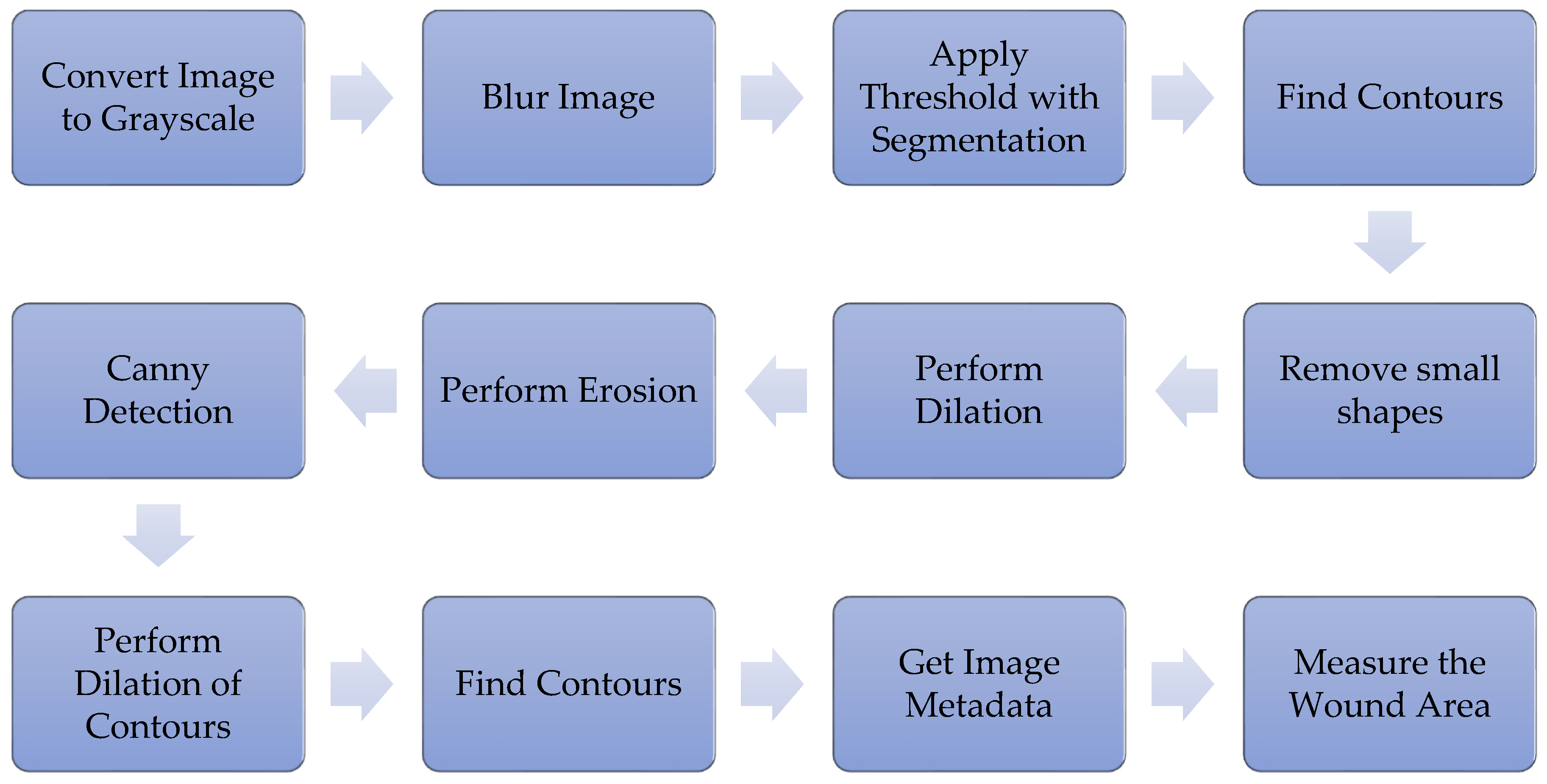

3. Methodology

3.1. Dataset

3.2. Data Processing Steps

3.2.1. Convert the Image to Grayscale

3.2.2. Blur the Image

3.2.3. Threshold with Segmentation

3.2.4. Find Contours

3.2.5. Remove Small Shapes

3.2.6. Perform Dilation for Canny Detection

3.2.7. Perform Erosion for Canny Detection

3.2.8. Canny Detection

3.2.9. Perform Dilation to Find Contours

3.2.10. Obtain Image Metadata

3.2.11. Measure the Wound Area

3.3. Evaluation Criteria

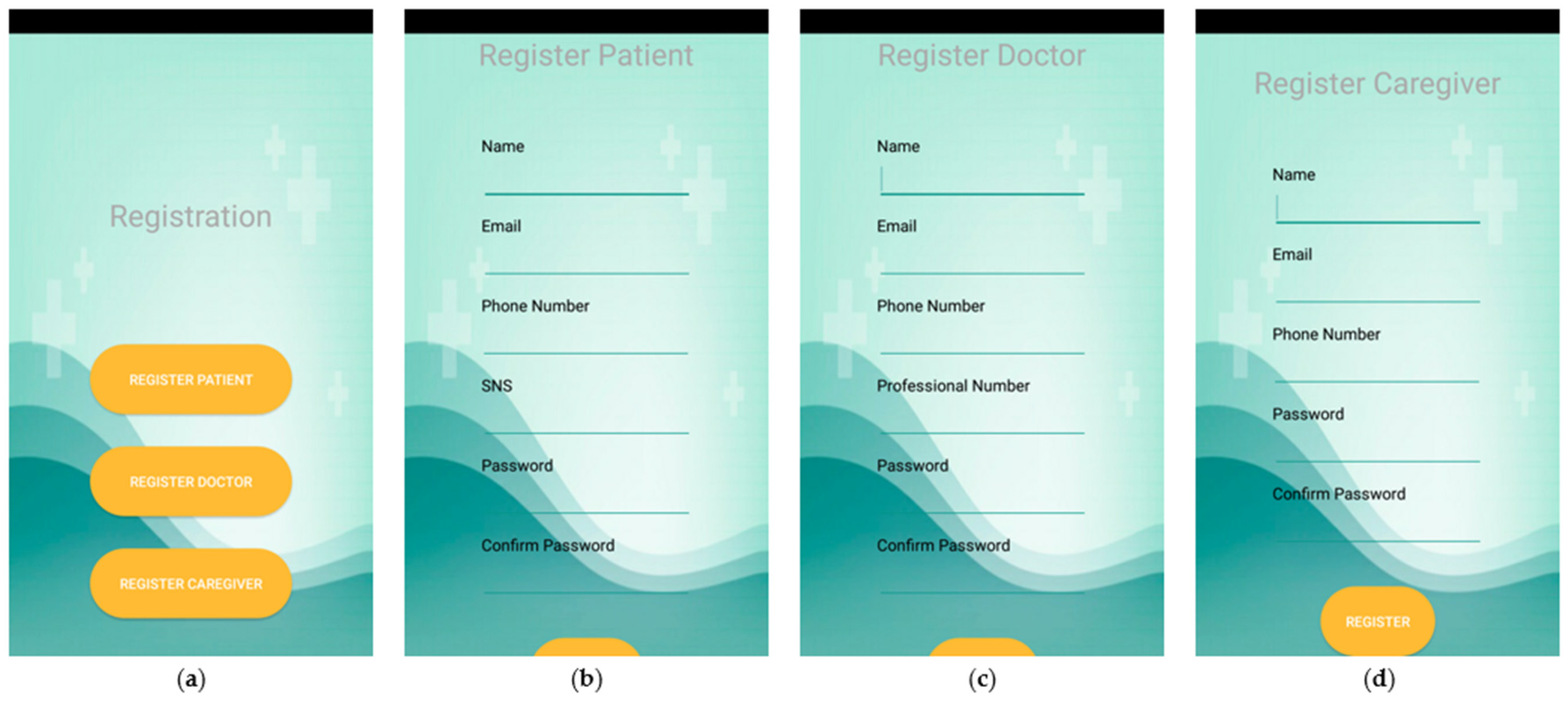

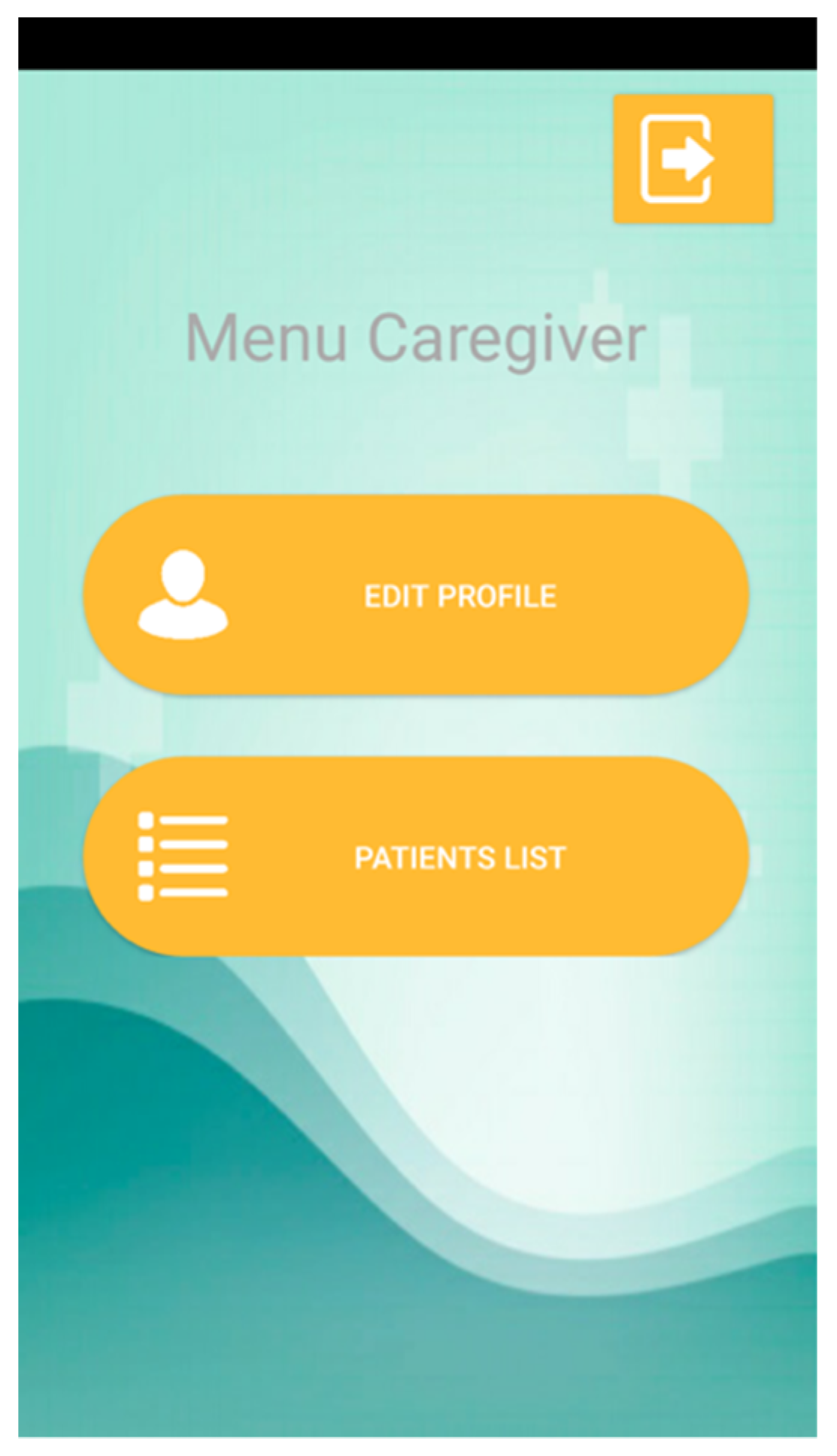

3.4. Mobile Application

4. Results

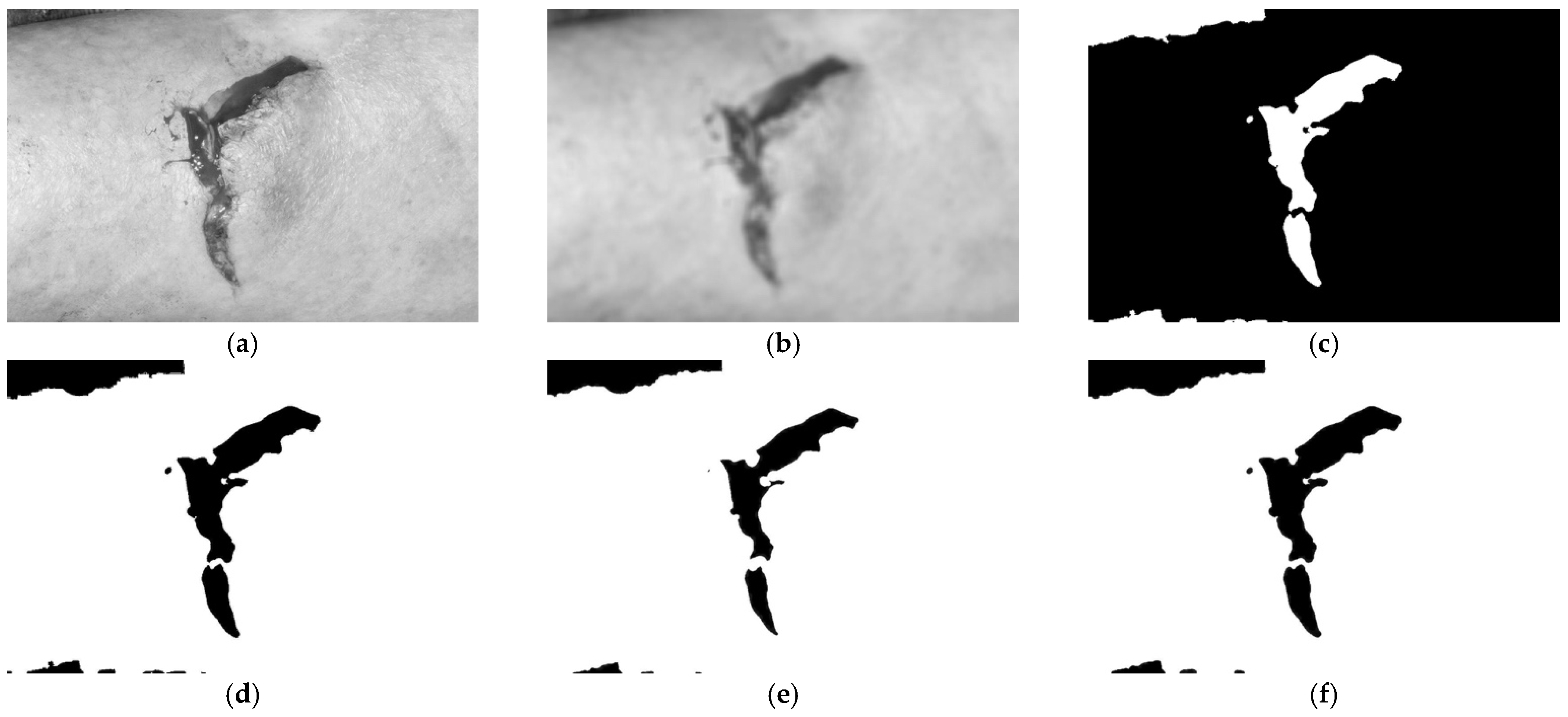

4.1. Convert the Image to Grayscale

4.2. Blur the Image

4.3. Apply the Threshold with Segmentation

4.4. Find Contours and Remove Small Shapes

4.5. Perform Dilation

4.6. Perform Erosion

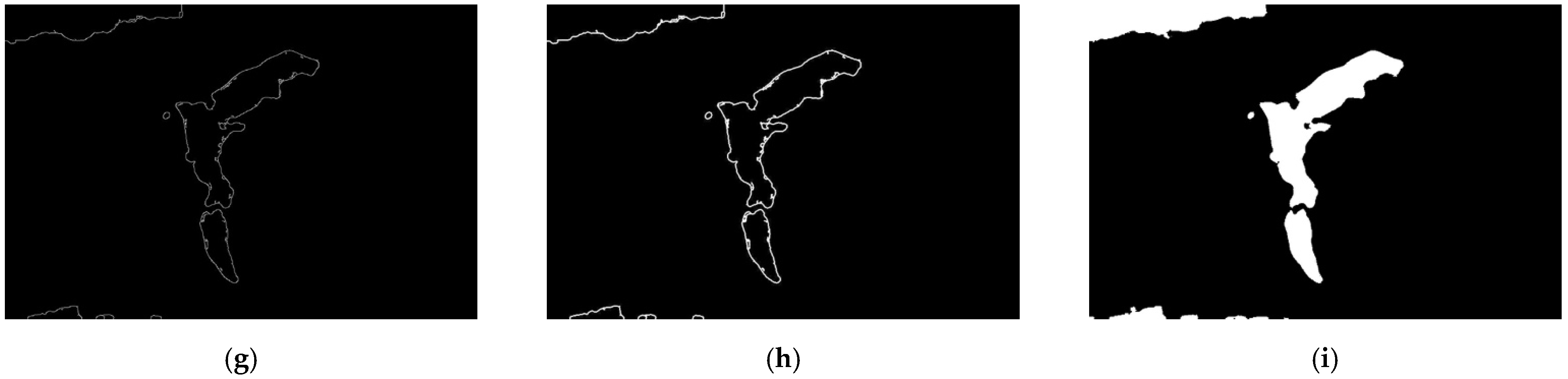

4.7. Canny Detection

4.8. Perform Dilation of the Contours

4.9. Find Contours, Obtain Image Metadata, and Measure the Wound Area

4.10. Measurement of the Wound Area

4.11. Summary of the Analysis of the Dataset with the Desktop Application

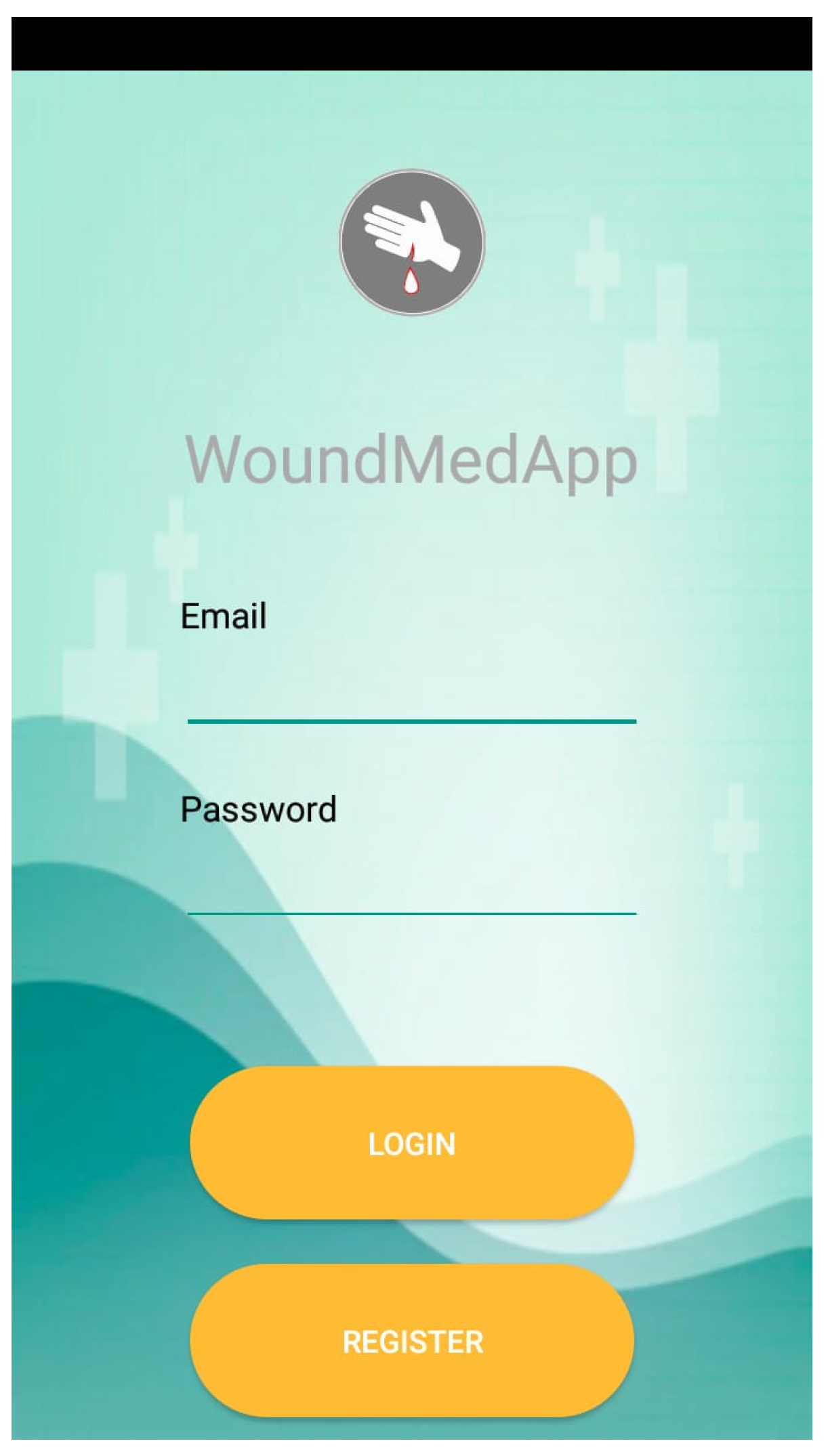

5. Mobile Application

5.1. Description of the Functionalities

5.2. Summary of the Analysis of the Dataset with the Mobile Application

6. Discussion

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Parameters | Values |
|---|---|
| Physical Width (DPI) | 300 |
| Physical Height (DPI) | 300 |
| Width (px) | 1024 |
| Height (px) | 706 |
| Total area (px) | 749,772 |

| Parameters | Values |
|---|---|
| Width (px) | 1024 |
| Height (px) | 706 |
| Width (cm) | 8.99 |
| Height (cm) | 5.98 |
| Total area (px) | 749,772 |
| Total area (cm²) | 53.7602 |
| Wound area (px) | 54,746.5 |
| Wound area (cm²) | 3.9254 |
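The conversion in the table above can be reproduced with a few lines of arithmetic. The sketch below is a minimal illustration, not the authors' implementation: the function names are hypothetical, and it assumes that the wound area in cm² is obtained by scaling the wound's pixel area by the ratio of the image's physical area to its pixel area. A second helper shows the equivalent DPI-based conversion (1 in = 2.54 cm), which differs slightly because the physical dimensions in the table are rounded to two decimals.

```python
CM_PER_INCH = 2.54


def area_px_to_cm2_proportional(wound_px, total_px, total_cm2):
    """Scale a pixel area by the image's cm2-per-pixel ratio (assumed approach)."""
    if total_px <= 0:
        raise ValueError("total_px must be positive")
    return wound_px / total_px * total_cm2


def area_px_to_cm2_from_dpi(wound_px, dpi_x, dpi_y):
    """Convert a pixel area to cm2 from the horizontal and vertical DPI."""
    if dpi_x <= 0 or dpi_y <= 0:
        raise ValueError("DPI values must be positive")
    cm_per_px_x = CM_PER_INCH / dpi_x
    cm_per_px_y = CM_PER_INCH / dpi_y
    return wound_px * cm_per_px_x * cm_per_px_y


# Values taken from the table above.
wound_cm2 = area_px_to_cm2_proportional(54_746.5, 749_772, 53.7602)
wound_cm2_dpi = area_px_to_cm2_from_dpi(54_746.5, 300, 300)
print(f"{wound_cm2:.4f} cm2 (proportional), {wound_cm2_dpi:.4f} cm2 (DPI)")
```

With the tabulated inputs, the proportional form gives the reported 3.9254 cm², while the DPI form gives roughly 3.9245 cm²; both round to 3.92 cm².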

| Figure | Wound Area Measured by Desktop Application (cm²) |
|---|---|
| 1a | 3.92 |
| 1b | 8.56 |
| 1c | 7.52 |
| 1d | 4.98 |
| 1e | 3.50 |
| 1f | 7.07 |
| 1g | 6.81 |
| 1h | 1.74 |
| 1i | 4.58 |
| 1j | 2.27 |

| Figure | Wound Area Measured by a Mobile Application (cm²) |
|---|---|
| 1a | 2.70 |
| 1b | 6.50 |
| 1c | 6.69 |
| 1d | 2.52 |
| 1e | 2.06 |
| 1f | 5.95 |
| 1g | 2.91 |
| 1h | 0.83 |
| 1i | 3.20 |
| 1j | 1.16 |

| Figure | Wound Area Measured by a Desktop Application (cm²) | Wound Area Measured by a Mobile Application (cm²) | Difference (cm²) |
|---|---|---|---|
| 1a | 3.92 | 2.70 | −1.22 (−31.1%) |
| 1b | 8.56 | 6.50 | −2.06 (−24.0%) |
| 1c | 7.52 | 6.69 | −0.83 (−11.0%) |
| 1d | 4.98 | 2.52 | −2.46 (−49.4%) |
| 1e | 3.50 | 2.06 | −1.44 (−41.1%) |
| 1f | 7.07 | 5.95 | −1.12 (−15.8%) |
| 1g | 6.81 | 2.91 | −3.90 (−57.3%) |
| 1h | 1.74 | 0.83 | −0.91 (−52.3%) |
| 1i | 4.58 | 3.20 | −1.38 (−30.1%) |
| 1j | 2.27 | 1.16 | −1.11 (−48.9%) |
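The difference column above appears to be the mobile value minus the desktop value, with the percentage taken relative to the desktop value. This sign convention is inferred from the tabulated numbers rather than stated here, and the helper name below is hypothetical. A minimal sketch of that arithmetic:

```python
def area_difference(desktop_cm2, mobile_cm2):
    """Return (difference in cm2, difference as a percentage of the desktop value)."""
    if desktop_cm2 == 0:
        raise ValueError("desktop_cm2 must be non-zero")
    diff = mobile_cm2 - desktop_cm2
    return round(diff, 2), round(diff / desktop_cm2 * 100, 1)


# Rows for figures 1a and 1g from the table above.
print(area_difference(3.92, 2.70))
print(area_difference(6.81, 2.91))
```

Applied to the rows for figures 1a and 1g, this reproduces the tabulated −1.22 cm² (−31.1%) and −3.90 cm² (−57.3%).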

| Figure | Adjusted Wound Area Measured by a Mobile Application (cm²) | Difference (cm²) |
|---|---|---|
| 1a | 3.64 | −0.28 (−7.1%) |
| 1b | 8.76 | +0.20 (+2.3%) |
| 1c | 9.01 | +1.49 (+19.8%) |
| 1d | 3.39 | −1.59 (−31.9%) |
| 1e | 3.42 | −0.08 (−2.3%) |
| 1f | 8.01 | +0.94 (+13.3%) |
| 1g | 3.92 | −2.98 (−43.8%) |
| 1h | 1.12 | −0.62 (−35.6%) |
| 1i | 4.31 | −0.27 (−5.9%) |
| 1j | 1.56 | −0.71 (−31.3%) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Ferreira, F.; Pires, I.M.; Ponciano, V.; Costa, M.; Villasana, M.V.; Garcia, N.M.; Zdravevski, E.; Lameski, P.; Chorbev, I.; Mihajlov, M.; Trajkovik, V. Experimental Study on Wound Area Measurement with Mobile Devices. Sensors 2021, 21, 5762. https://doi.org/10.3390/s21175762