Review of Wearable Devices and Data Collection Considerations for Connected Health

Abstract

1. Introduction

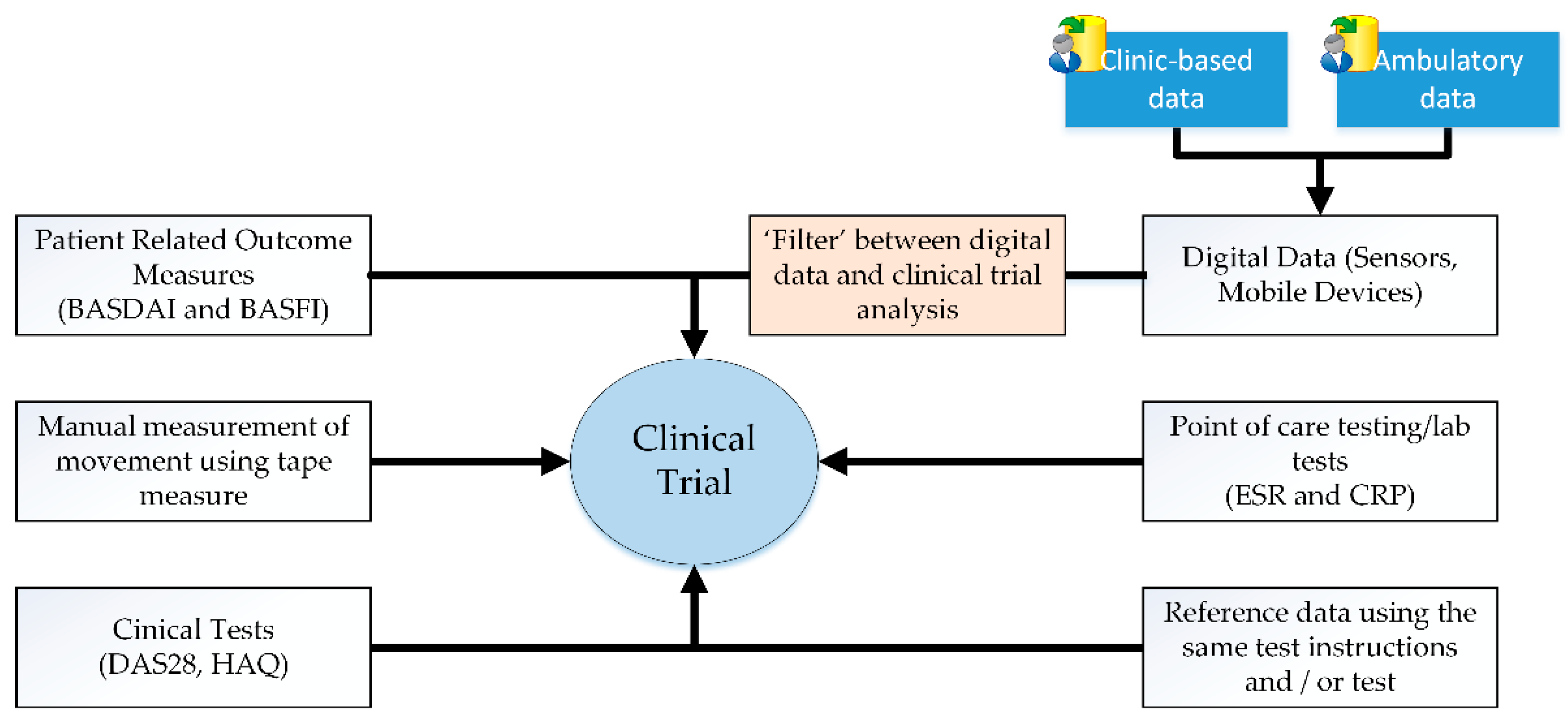

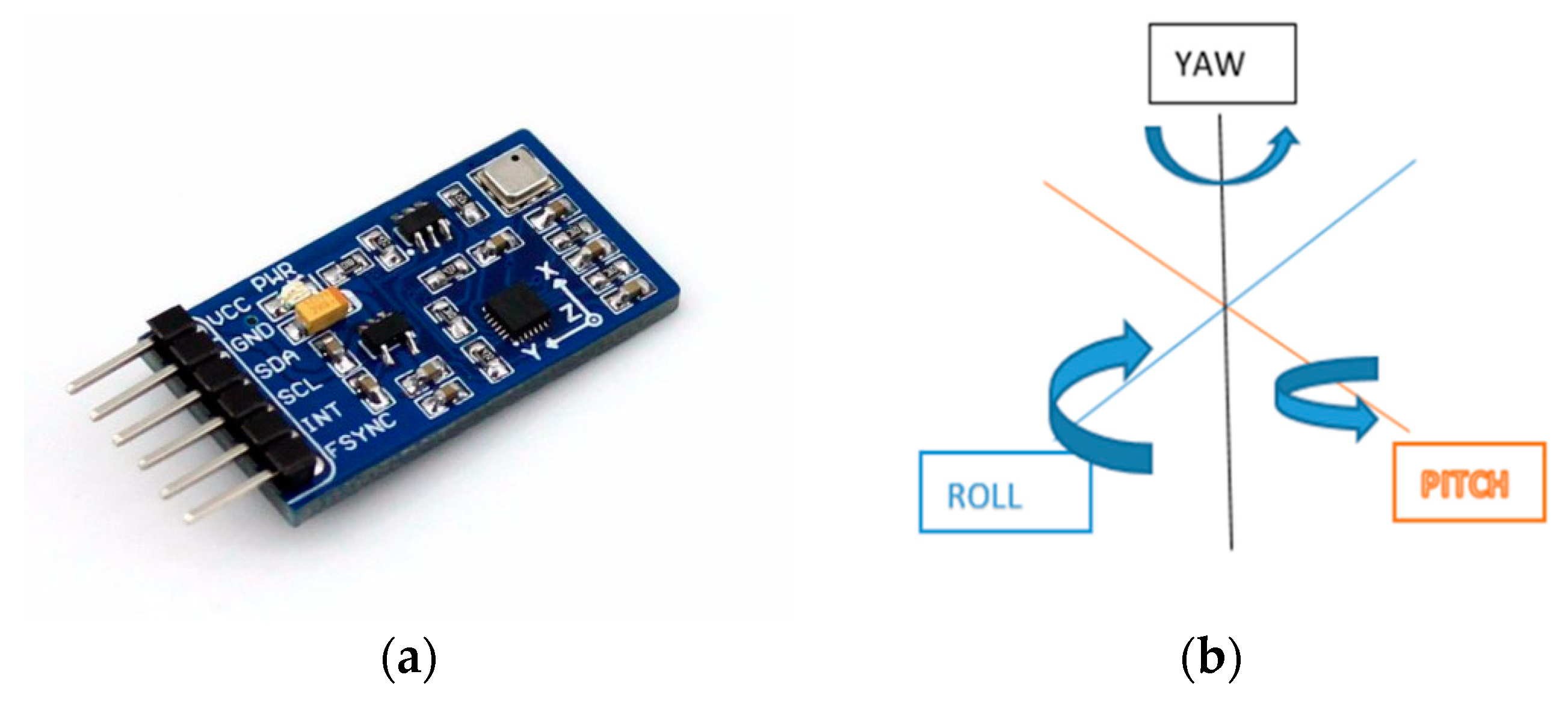

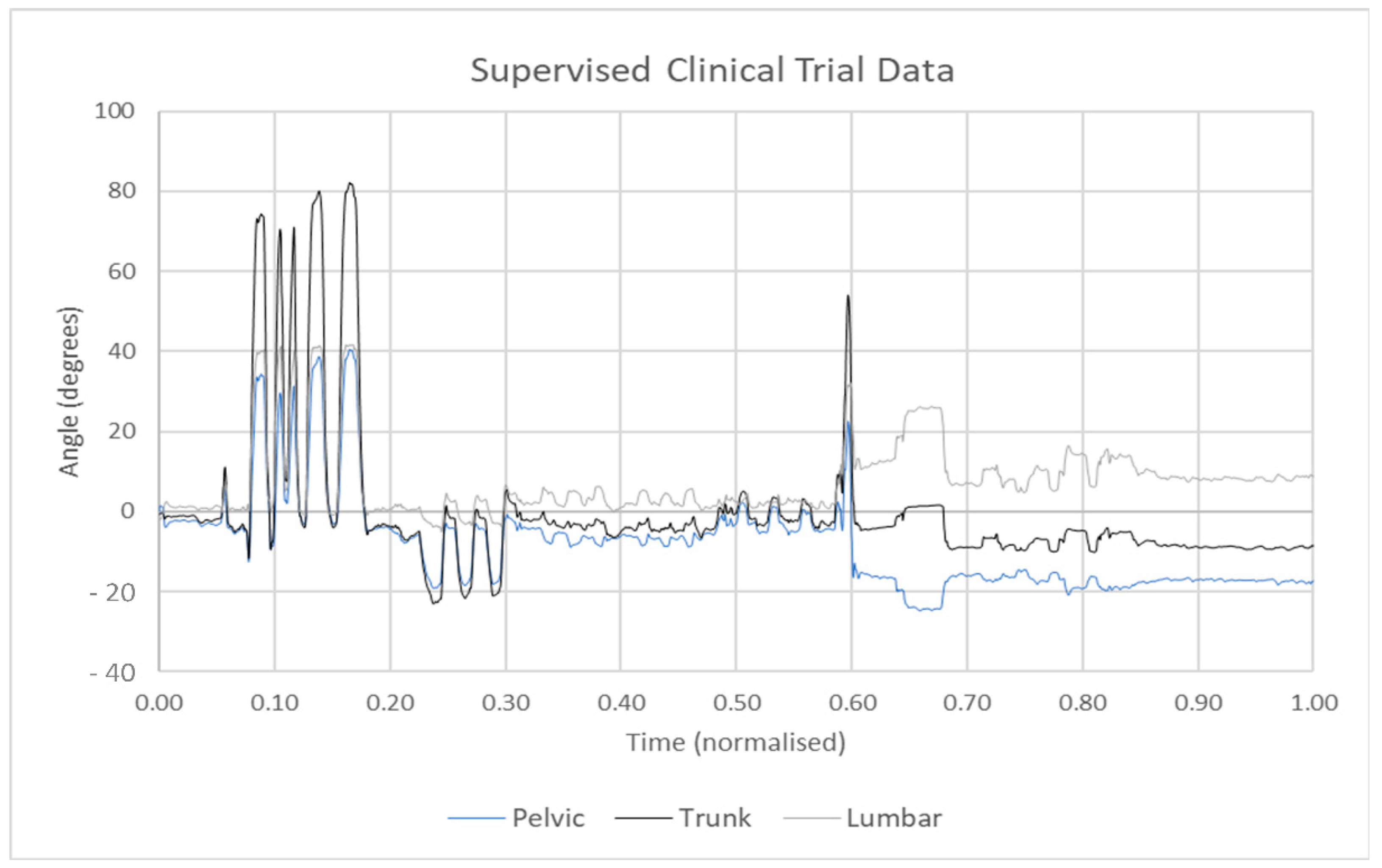

2. Wearable Technology in Clinical Trials

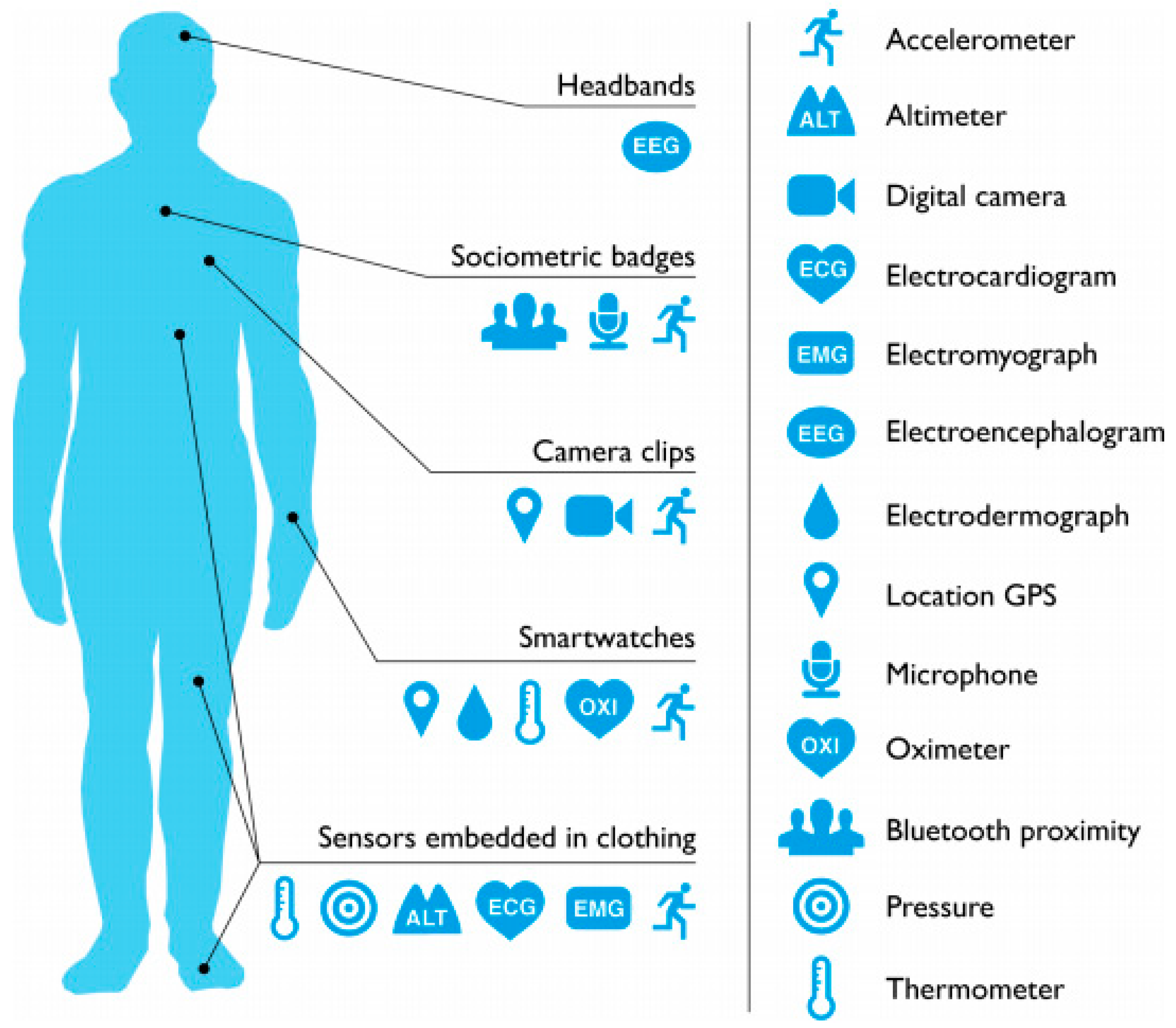

3. Wearable Devices in the Healthcare Environment

4. Wearable Devices for Quantified Self

5. Measurement Accuracy

6. Other Considerations for Wearable Technology

6.1. Psychological Aspects

6.2. Data Privacy and Security

7. Human Activity Detection Using Deep Learning Techniques

| Ref | ML Model/NN Type | Details | Epochs | No. of Participants/Samples | Evaluation Metric (Result) | Key Findings |
|---|---|---|---|---|---|---|
| [166] | CNN and LSTM-RNN | TensorFlow is used to implement the NN. | 40 | 22 | Accuracy (84%) | CNNs may perform better than LSTM-RNNs on real-time datasets. |
| [167] | CNN with the Deep Q Neural Network (DQN) model, compared with LSTM models and DQN | CCR, EER, AUC, MAP and CMC. | | 50 | Classification accuracy (98.33%) | The CNN model performs better than the LSTM model. |
| [176] | 1-D convolutional neural network (1-D CNN) combined with an RNN model with LSTM | 3+3 C-RNN designed for data processing. | 1000 | 80 | Accuracy (90.29%) | The model works well at lower sampling rates; however, accuracy decreases for large datasets. |
| [135] | Hierarchical Dirichlet process (HDP) model to detect human activity levels | SVM | | 27 | Precision of 0.81 and recall of 0.77 | The HDP model can infer the number of activity levels automatically from a sliding-window time duration. |
| [168] | Apriori algorithm and Pattern Recognition (PR) algorithm | A new PR algorithm is designed and implemented in MATLAB. | | 9 | Standard deviation of the predicted vs. actual graph (around 2.6 for the PR algorithm and 3.32 for the Apriori algorithm) | The PR algorithm gave better predictions than the Apriori algorithm. |
| [177] | Hierarchical Dirichlet Process Model (HDPM) | Feedforward neural network. | 50 | 201 | Simple accuracy (sitting: 78.60%, standing: 9.45%, walking: 26.87%) | Physical activity levels are learned automatically from the input data using the HDPM. |
| [169] | HAR method based on U-Net | CNN | 100 | 266,555 samples and 5026 windows | Accuracy and Fw-score (max. accuracy of 96.4% and Fw-score of 0.965) | The U-Net method overcomes the multiclass window problem inherent in the sliding window method and predicts the label of each sampling point in the time series data. |
| [170] | InnoHAR DL model | Combination of an inception neural network and an RNN structure, built with Keras. | | 9 | F-scores of 0.946, 0.935 and 0.945 on the Opportunity, PAMAP2 and Smartphone datasets, respectively | Consistently superior performance and good generalisation. |
| [171] | Deep neural network | Combination of convolutional and recurrent NNs. | | 417 | F1-score between 0.8 and 0.9 for different activities | Simulated sensor data demonstrate the feasibility of classifying athletic tasks using wearable sensors. |
| [172] | Deep neural network | Fully connected CNN. | 50 | 5 (20 actions per person) | Cross-validated accuracy for action classification (camera only: 85.3%, IMU only: 67.1%, combined: 86.9%) | Action recognition algorithm using both images and inertial sensor data; it extracts feature vectors efficiently with a CNN and performs classification with an RNN. |
| [173] | Hybrid DL model | Combines simple recurrent units (SRUs) with gated recurrent units (GRUs). | 50 | 1007 | Accuracy (99.8%) | Deep SRU-GRU networks process sequences of multisensor input data using their internal memory states and exploit their speed advantage. |
| [174] | CNN | Akamatsu transform | | 120 | Accuracy (85%) | Proposes a human action recognition method using data acquired from wearable sensors and learned with a neural network. |
| [178] | SVM, ANN and HMM, and one compressed sensing algorithm, SRC-RP | DL using MATLAB. | | 4 people with 5 different tests | Recognition accuracy for different datasets (Debora: 93.4%, Katia: 99.6%, Wallace: 95.6%) | Three ML algorithms (SVM, HMM and ANN) and one compressed sensing-based algorithm (SRC-RP) are implemented to recognise human body activities. |
| [179] | ML | Ensemble Empirical Mode Decomposition (EEMD), Sparse Multinomial Logistic Regression with Bayesian regularisation (SBMLR) and the Fuzzy Least Squares Support Vector Machine (FLS-SVM). | | 23 | Classification accuracy (93.43%) | A novel approach based on EEMD and FLS-SVM is presented to recognise human activities; EEMD features are shown to contribute significantly to classification accuracy. |
| [180] | ML | WEKA | | 30 | Accuracy (98.5333%) | Smartphone sensors, including an accelerometer and a gyroscope, were used to gather and log wearable sensing data for human activities. |
| [151] | Real-time gesture pattern classification | Neural network-based classifier model. | | 1040 | Accuracy (77%) | Human hand gesture recognition using manually collected data processed by an LSTM layer structure; accuracy is shown using a Unity visualisation. |
| [181] | Pattern recognition methods for a head gesture-based interface of a virtual reality helmet (VRH) equipped with a single IMU sensor | The classifier uses a two-stage PCA-based method, a feedforward artificial neural network and random forest. | | 975 gestures from 12 patients | Classification rate (0.975) | A VRH with sensors is used to collect data; the Dynamic Time Warping (DTW) algorithm is used for pattern recognition. |
| [182] | Hand Gesture Recognition (HGR) system | Restricted Coulomb Energy (RCE) neural networks with a DTW distance measurement scheme. | | 252 | Accuracy (98.6%) | HGR system for Human-Computer Interaction (HCI) based on time-dependent data from IMU sensors. |
| [183] | Motion-capture gloves designed using 3D sensory data | Classification model with ANN. | | 6700 | Accuracy (98%) | Data gloves with IMU sensors capture finger and palm movements. |
| [184] | Quaternion-based gesture recognition using wireless wearable motion capture sensors | SVM and ANN | | 11 | Accuracy (90%) | Multisensor motion capture system capable of identifying six hand and upper-body movements. |
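
Two signal-processing ideas recur in the table: sliding-window segmentation ([135], [169]) and dynamic time warping (DTW) ([181], [182]). The Python sketch below is illustrative only and is not the implementation of any cited study; the function names `sliding_windows`, `dtw_distance` and `nearest_template`, the window size and step, and the toy gesture templates are all hypothetical. It shows how a fixed-length window can straddle two activities (the "multiclass window" problem that [169] addresses with U-Net), and how DTW combined with a simple 1-nearest-neighbour rule can match a time-stretched gesture to a stored template. Note that [182] pairs DTW with RCE networks rather than 1-NN; the 1-NN rule here is a simplification.

```python
# Illustrative sketch only: function names, window parameters and the toy
# templates are hypothetical and do not reproduce any cited study.
import math
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def sliding_windows(labels: Sequence[str], size: int,
                    step: int) -> List[Tuple[int, str, bool]]:
    """Segment a per-sample label stream into fixed-length windows.

    Returns (start_index, majority_label, is_mixed) for each window.
    is_mixed is True when the window covers more than one activity,
    i.e. the "multiclass window" case.
    """
    windows = []
    for start in range(0, len(labels) - size + 1, step):
        counts = Counter(labels[start:start + size])
        # most_common breaks ties by first occurrence in the window
        majority, _ = counts.most_common(1)[0]
        windows.append((start, majority, len(counts) > 1))
    return windows


def dtw_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Classic dynamic time warping with absolute-difference local cost."""
    n, m = len(a), len(b)
    # cost[i][j]: minimal cumulative cost of aligning a[:i] with b[:j]
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j],      # advance in a only
                                 cost[i][j - 1],      # advance in b only
                                 cost[i - 1][j - 1])  # advance in both
    return cost[n][m]


def nearest_template(signal: Sequence[float],
                     templates: Dict[str, Sequence[float]]) -> str:
    """1-nearest-neighbour classification using DTW distance."""
    return min(templates, key=lambda name: dtw_distance(signal, templates[name]))


if __name__ == "__main__":
    # 5 samples of sitting followed by 7 of standing (toy data)
    stream = ["sit"] * 5 + ["stand"] * 7
    for start, label, mixed in sliding_windows(stream, size=4, step=2):
        print(start, label, mixed)

    # Toy 1-D gesture templates, e.g. a single IMU axis
    templates = {"nod": [0, 1, 2, 1, 0], "shake": [0, -1, 1, -1, 0]}
    # A slower "nod": same shape, stretched in time
    print(nearest_template([0, 0, 1, 2, 2, 1, 0], templates))
```

Running the script flags the windows starting at samples 2 and 4 as mixed, because each spans the sit-to-stand transition, and then prints `nod`, since DTW aligns the stretched signal to that template at zero cost. The unconstrained O(nm) recursion is kept for readability; a warping-window constraint such as a Sakoe-Chiba band is a common way to reduce its cost on long recordings.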

8. Algorithms for Activity and Sleep Recognition

9. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Pantelopoulos, A.; Bourbakis, N.G. A Survey on Wearable Sensor-Based Systems for Health Monitoring and Prognosis. IEEE Trans. Syst. Man. Cybern. Part C Appl. Rev. 2009, 40, 1–12.

- Liu, S. Wearable Technology–Statistics & Facts Statista. 2019. Available online: www.statista.com/topics/1556/wearable-technology/ (accessed on 3 August 2021).

- Frost, B. Market Research Report. Strategy R 2014, 7215, 1–31.

- Pando, A. Wearable Health Technologies and Their Impact on the Health Industry. Forbes. 2019. Available online: www.forbes.com/sites/forbestechcouncil/2019/05/02/wearable-health-technologies-and-their-impact-on-the-health-industry/#4eac185f3af5 (accessed on 31 May 2021).

- Tankovska, H. Global Connected Wearable Devices 2016–2022 Statista. Statista. 2020. Available online: www.statista.com/statistics/487291/global-connected-wearable-devices/ (accessed on 5 February 2021).

- Connolly, J. Wearable Rehabilitative Technology for the Movement Measurement of Patients with Arthritis. Ulster University, February 2015. Available online: https://ethos.bl.uk/OrderDetails.do?did=1&uin=uk.bl.ethos.675471 (accessed on 3 August 2021).

- Song, M.-S.; Kang, S.-G.; Lee, K.-T.; Kim, J. Wireless, Skin-Mountable EMG Sensor for Human–Machine Interface Application. Micromachines 2019, 10, 879.

- Massaroni, C.; Saccomandi, P.; Schena, E. Medical Smart Textiles Based on Fiber Optic Technology: An Overview. J. Funct. Biomater. 2015, 6, 204–221.

- Jouffroy, R.; Jost, D.; Prunet, B. Prehospital pulse oximetry: A red flag for early detection of silent hypoxemia in COVID-19 patients. Crit. Care 2020, 24, 1–2.

- Best, J. Wearable technology: Covid-19 and the rise of remote clinical monitoring. BMJ 2021, 372, n413.

- Vijayan, V.; McKelvey, N.; Condell, J.; Gardiner, P.; Connolly, J. Implementing Pattern Recognition and Matching techniques to automatically detect standardized functional tests from wearable technology. In Proceedings of the 2020 31st Irish Signals and Systems Conference (ISSC), Letterkenny, Ireland, 11–12 June 2020.

- Majumder, S.; Mondal, T.; Deen, M.J. Wearable Sensors for Remote Health Monitoring. Sensors 2017, 17, 130.

- Sun, H.; Zhang, Z.; Hu, R.Q.; Qian, Y. Wearable Communications in 5G: Challenges and Enabling Technologies. IEEE Veh. Technol. Mag. 2018, 13, 100–109.

- Schrader, L.; Toro, A.V.; Konietzny, S.; Rüping, S.; Schäpers, B.; Steinböck, M.; Krewer, C.; Müller, F.; Güttler, J.; Bock, T. Advanced Sensing and Human Activity Recognition in Early Intervention and Rehabilitation of Elderly People. J. Popul. Ageing 2020, 13, 139–165.

- Cha, J.; Kim, J.; Kim, S. Hands-free user interface for AR/VR devices exploiting wearer’s facial gestures using unsupervised deep learning. Sensors 2019, 19, 4441.

- Sensoria Fitness: Motion and Activity Tracking Smart Clothing for Sports and Fitness. 2021. Available online: https://store.sensoriafitness.com/ (accessed on 6 August 2021).

- TEKSCAN. Gait Mat|HR Mat|Tekscan. Photo Courtesy of Tekscan™, Inc. Available online: www.tekscan.com/products-solutions/systems/hr-mat (accessed on 4 July 2021).

- Image Courtesy 5DT.com; DT Technologies Home—5DT. Available online: https://5dt.com/ (accessed on 29 July 2020).

- NEXGEN. NexGen Ergonomics–Products–Biometrics–Goniometers and Torsiometers. Available online: www.nexgenergo.com/ergonomics/biosensors.html (accessed on 3 August 2021).

- Bell, J. Wearable Health Monitoring Systems; Technical Report; Nyx Illuminated Clothing Company: Culver City, CA, USA, 2019; p. 218857.

- Lee, J.; Kim, D.; Ryoo, H.-Y.; Shin, B.-S. Sustainable Wearables: Wearable Technology for Enhancing the Quality of Human Life. Sustainability 2016, 8, 466.

- Hurdles, C. Applied Clinical Trials: Your Peer-Reviewed Guide to Global Clinical Trials Management. 2017. Available online: https://cdn.sanity.io/files/0vv8moc6/act/346a82766960a17da2099b5f1268d0efba485d2a.pdf (accessed on 3 August 2021).

- Grimm, B.; Bolink, S. Evaluating physical function and activity in the elderly patient using wearable motion sensors. EFORT Open Rev. 2016, 1, 112–120.

- Dhairya, K. Introduction to Data Preprocessing in Machine Learning. 2018. Available online: https://towardsdatascience.com/introduction-to-data-preprocessing-in-machine-learning-a9fa83a5dc9 (accessed on 3 August 2021).

- Mavor, M.P.; Ross, G.B.; Clouthier, A.L.; Karakolis, T.; Graham, R.B. Validation of an IMU Suit for Military-Based Tasks. Sensors 2020, 20, 4280.

- Balsa, A.; Carmona, L.; González-Alvaro, I.; Belmonte, M.A.; Tena, X.; Sanmartí, R. Value of Disease Activity Score 28 (DAS28) and DAS28-3 Compared to American College of Rheumatology-Defined Remission in Rheumatoid Arthritis. J. Rheumatol. 2004, 31, 40–46.

- Callmer, J. Autonomous Localization in Unknown Environments. Master’s Thesis, Linköping University Electronic Press, Linköping, Sweden, 2016; p. 1520.

- Estevez, P.; Bank, J.; Porta, M.; Wei, J.; Sarro, P.; Tichem, M.; Staufer, U. 6 DOF force and torque sensor for micro-manipulation applications. Sens. Actuators A Phys. 2012, 186, 86–93.

- Pandey, A.; Mazumdar, C.; Ranganathan, R.; Tripathi, S.; Pandey, D.; Dattagupta, S. Transverse vibrations driven negative thermal expansion in a metallic compound GdPd3B0.25C0.75. Appl. Phys. Lett. 2008, 92, 261913.

- Sparkfun. Accelerometer, Gyro and IMU Buying Guide–SparkFun Electronics. 2016, p. 1. Available online: www.sparkfun.com/pages/accel_gyro_guide (accessed on 3 August 2021).

- On Board Mpu9255 10 Axial Inertial Navigation Module10 Dof Imu Sensor(b)gyroscope Acceleration Sensor–Buy Mpu9255 10 Axial Inertial Navigation Module, 10 Dof Imu Sensor, Gyroscope Acceleration Sen. Available online: www.alibaba.com/product-detail/on-board-MPU9255-10-axial-inertial_60838848689.html (accessed on 11 May 2021).

- CANAL GEOMATICS. IMU Accuracy Error Definitions Canal Geomatics. Available online: http://www.canalgeomatics.com/ (accessed on 21 February 2021).

- Ahmed, H.; Tahir, M. Improving the Accuracy of Human Body Orientation Estimation with Wearable IMU Sensors. IEEE Trans. Instrum. Meas. 2017, 66, 535–542.

- Guner, U.; Canbolat, H.; Unluturk, A. Design and implementation of adaptive vibration filter for MEMS based low cost IMU. In Proceedings of the 2015 9th International Conference on Electrical and Electronics Engineering (ELECO), Bursa, Turkey, 26–28 November 2015; pp. 130–134.

- Paina, G.P.; Gaydou, D.; Redolfi, J.; Paz, C.; Canali, L. Experimental comparison of kalman and complementary filter for attitude estimation. Proc. AST 2011, 205–215.

- De Arriba-Pérez, F.; Caeiro-Rodríguez, M.; Santos-Gago, J.M. Collection and Processing of Data from Wrist Wearable Devices in Heterogeneous and Multiple-User Scenarios. Sensors 2016, 16, 1538.

- Iman, K.; Al-Azwani, H. Integration of Wearable Technologies into Patient’s Electronic Medical Records. Qual. Prim. Care 2016, 24, 151–155.

- Lawton, E.B. ADL and IADL treatment; Assessment of older people: Self-maintaining and instrumental activities of daily living. Gerontologist 1969, 9, 179–186.

- Camp, N.; Lewis, M.; Hunter, K.; Johnston, J.; Zecca, M.; Di Nuovo, A.; Magistro, D. Technology Used to Recognize Activities of Daily Living in Community-Dwelling Older Adults. Int. J. Environ. Res. Public Health 2020, 18, 163.

- Lee, Y.; Kim, M.; Lee, Y.; Kwon, J.; Park, Y.-L.; Lee, D. Wearable Finger Tracking and Cutaneous Haptic Interface with Soft Sensors for Multi-Fingered Virtual Manipulation. IEEE/ASME Trans. Mechatron. 2018, 24, 67–77.

- Sayem, A.S.M.; Teay, S.H.; Shahariar, H.; Fink, P.L.; Albarbar, A. Review on Smart Electro-Clothing Systems (SeCSs). Sensors 2020, 20, 587.

- Xsens. Home Xsens 3D Motion Tracking. 2015. Available online: www.xsens.com/ (accessed on 11 May 2021).

- Caeiro-Rodríguez, M.; Otero-González, I.; Mikic-Fonte, F.A.; Llamas-Nistal, M. A Systematic Review of Commercial Smart Gloves: Current Status and Applications. Sensors 2021, 21, 2667.

- Smart Glove. Neofect. Available online: www.neofect.com/us/smart-glove (accessed on 28 May 2021).

- Shen, Z.; Yi, J.; Li, X.; Lo, M.H.P.; Chen, M.Z.Q.; Hu, Y.; Wang, Z. A soft stretchable bending sensor and data glove applications. Robot. Biomimetics 2016, 3, 1–8.

- Henderson, J.; Condell, J.; Connolly, J.; Kelly, D.; Curran, K. Review of Wearable Sensor-Based Health Monitoring Glove Devices for Rheumatoid Arthritis. Sensors 2021, 21, 1576.

- De Pasquale, G. Glove-based systems for medical applications: Review of recent advancements. J. Text. Eng. Fash. Technol. 2018, 4, 1.

- Djurić-Jovičić, M.; Jovičić, N.S.; Roby-Brami, A.; Popović, M.B.; Kostić, V.S.; Djordjević, A.R. Quantification of Finger-Tapping Angle Based on Wearable Sensors. Sensors 2017, 17, 203.

- Shyr, T.-W.; Shie, J.-W.; Jiang, C.-H.; Li, J.-J. A Textile-Based Wearable Sensing Device Designed for Monitoring the Flexion Angle of Elbow and Knee Movements. Sensors 2014, 14, 4050–4059.

- Mjøsund, H.L.; Boyle, E.; Kjaer, P.; Mieritz, R.M.; Skallgård, T.; Kent, P. Clinically acceptable agreement between the ViMove wireless motion sensor system and the Vicon motion capture system when measuring lumbar region inclination motion in the sagittal and coronal planes. BMC Musculoskelet. Disord. 2017, 18, 1–9.

- Ortiz, M.; Juan, R.; Val, S.L. Reliability and Concurrent Validity of the Goniometer-Pro App vs. a Universal Goniometer in determining Passive Flexion of Knee. Int. J. Comput. Appl. 2017, 173, 30–34.

- Totaro, M.; Poliero, T.; Mondini, A.; Lucarotti, C.; Cairoli, G.; Ortiz, J.; Beccai, L. Soft Smart Garments for Lower Limb Joint Position Analysis. Sensors 2017, 17, 2314.

- Veari Presents Fineck Smart Wearable Device for Neck Health. Available online: www.designboom.com/technology/veari-fineck-smart-wearable-device-neck-health-11-25-2014/ (accessed on 28 May 2021).

- Lo Presti, D.; Carnevale, A.; D’Abbraccio, J.; Massari, L.; Massaroni, C.; Sabbadini, R.; Zaltieri, M.; Bravi, M.; Sterzi, S.; Schena, E. A Multi-Parametric Wearable System to Monitor Neck Computer Workers. Sensors 2020, 20, 536.

- BTS Products Applications: BTS Bioengineering. Available online: https://www.btsbioengineering.com/applications/ (accessed on 11 May 2021).

- ViMove2. Analyse Patient Movement & Muscle Activity–DorsaVi EU. Available online: www.dorsavi.com/uk/en/vimove/ (accessed on 10 May 2021).

- Amazon’s New Fitness Tracker Halo Will Monitor Your Tone of Voice—Quartz. Available online: https://qz.com/1897411/amazons-new-fitness-tracker-halo-will-monitor-your-tone-of-voice/ (accessed on 18 May 2021).

- Schätz, M.; Procházka, A.; Kuchyňka, J.; Vyšata, O. Sleep Apnea Detection with Polysomnography and Depth Sensors. Sensors 2020, 20, 1360.

- Liebling, S.; Langhan, M. Pulse Oximetry. Nurs. Times 2018, 98–102.

- Aliverti, A. Wearable technology: Role in respiratory health and disease. Breathe 2017, 13, e27–e36.

- Kumar, H.S. Wearable Technology in Combination with Diabetes. Int. J. Res. Eng. Sci. Manag. 2019, 2, 1–4.

- Baig, M.M. Early Detection and Self-Management of Long-Term Conditions Using Wearable Technologies. Ph.D. Thesis, Auckland University of Technology, Auckland, New Zealand, 2017.

- Nishiguchi, S.; Ito, H.; Yamada, M.; Yoshitomi, H.; Furu, M.; Ito, T.; Shinohara, A.; Ura, T.; Okamoto, K.; Aoyama, T. Self-Assessment Tool of Disease Activity of Rheumatoid Arthritis by Using a Smartphone Application. Telemed. e-Health 2014, 20, 235–240.

- Managing Rheumatoid Arthritis–NPS MedicineWise. Available online: www.nps.org.au/consumers/managing-rheumatoid-arthritis (accessed on 8 July 2021).

- How Is a Person Affected by Ankylosing Spondylitis (AS)? SPONDYLITIS. Available online: https://spondylitis.org/about-spondylitis/possible-complications/ (accessed on 3 August 2021).

- Swinnen, T.W.; Milosevic, M.; Van Huffel, S.; Dankaerts, W.; Westhovens, R.; De Vlam, K. Instrumented BASFI (iBASFI) Shows Promising Reliability and Validity in the Assessment of Activity Limitations in Axial Spondyloarthritis. J. Rheumatol. 2016, 43, 1532–1540.

- Irons, K.; Harrison, H.; Thomas, A.; Martindale, J. Ankylosing Spondylitis (Axial Spondyloarthritis). The Bath Indices. 2016, p. 1. Available online: www.nass.co.uk (accessed on 3 August 2021).

- Annoni, F. The health assessment questionnaire. J. Petrol. 2000, 369, 1689–1699.

- Rawassizadeh, R.; Momeni, E.; Dobbins, C.; Mirza-Babaei, P.; Rahnamoun, R. Lesson Learned from Collecting Quantified Self Information via Mobile and Wearable Devices. J. Sens. Actuator Netw. 2015, 4, 315–335.

- Çiçek, M. Wearable Technologies and Its Future Applications. Int. J. Electr. Electron. Data Commun. 2015, 3, 45–50.

- Piwek, L.; Ellis, D.; Andrews, S.; Joinson, A. The Rise of Consumer Health Wearables: Promises and Barriers. PLoS Med. 2016, 13, e1001953.

- Whitney, L. 21 Tips Every Apple Watch Owner Should Know PCMag. PCMag. 2020. Available online: www.pcmag.com/how-to/20-tips-every-apple-watch-owner-should-know (accessed on 1 June 2021).

- Fitbit Sense In-Depth Review: All the Data Without the Clarity. DC Rainmaker. Available online: www.dcrainmaker.com/2020/09/fitbit-sense-in-depth-review-all-the-data-without-the-clarity.html (accessed on 1 June 2021).

- Stein, S. Samsung Gear 2 Review: A Smartwatch that Tries to Be Everything–CNET. Available online: www.cnet.com/reviews/samsung-gear-2-review/ (accessed on 1 June 2021).

- Available online: https://www.google.com/search?client=firefox-b-d&q=smartdevice-samsung-gear-s-um+ (accessed on 3 August 2021).

- Activity, W.; Tracker, S.; Guide, Q.S. Wireless Activity and Sleep Tracker. Available online: https://uk.pcmag.com/migrated-99802-smartwatches/122576/21-tips-every-apple-watch-owner-should-know (accessed on 3 August 2021).

- PEBBLE-WATCH BLUETOOTH Watch User Manual Pebble Technology. Available online: https://fccid.io/RGQ-PEBBLE-WATCH/User-Manual/user-manual-1868584 (accessed on 1 June 2021).

- Xiaomi Mi Band 6 User Manual Download (English Language). Available online: www.smartwatchspecifications.com/xiaomi-mi-band-6-user-manual/ (accessed on 21 July 2021).

- Mannion, P. Teardown: Misfit Shine 2 and the Art of Power Management. EDN. Available online: https://www.edn.com/teardown-misfit-shine-2-and-the-art-of-power-management/ (accessed on 1 June 2021).

- Apps and Fitness–Sony Smartwatch 3 Review TechRadar. Available online: www.techradar.com/reviews/sony-smartwatch-3/4 (accessed on 1 June 2021).

- Bennett, B. Fitbit Flex Review: A Most Versatile, Feature-Packed Tracker–CNET. 2016. Available online: www.cnet.com/reviews/fitbit-flex-review/ (accessed on 1 June 2021).

- ONcoach 100. Available online: https://support.decathlon.co.uk/oncoach-100 (accessed on 1 June 2021).

- ActiGraph Link. Available online: https://actigraphcorp.com/actigraph-link/ (accessed on 1 June 2021).

- Garmin VivoSmart HR+. Available online: https://www.expansys.jp/garmin-vivosmart-hr-regular-size-black-taiwan-spec-291780/ (accessed on 3 August 2021).

- MotionNode Bus. Wearable Sensor Network. Available online: www.motionnode.com/bus.html (accessed on 22 July 2021).

- Wilson, S.; Laing, R.M. Wearable Technology: Present and Future. In Proceedings of the 91st World Conference, Leeds, UK, 23–26 July 2018.

- Bohannon, R.W.; Bubela, D.J.; Magasi, S.R.; Wang, Y.-C.; Gershon, R.C. Sit-to-stand test: Performance and determinants across the age-span. Isokinet. Exerc. Sci. 2010, 18, 235–240.

- Maenner, M.J.; Smith, L.E.; Hong, J.; Makuch, R.; Greenberg, J.S.; Mailick, M.R. Evaluation of an activities of daily living scale for adolescents and adults with developmental disabilities. Disabil. Heal. J. 2013, 6, 8–17.

- Vallati, C.; Virdis, A.; Gesi, M.; Carbonaro, N.; Tognetti, A. ePhysio: A Wearables-Enabled Platform for the Remote Management of Musculoskeletal Diseases. Sensors 2018, 19, 2.

- Rodgers, M.M.; Alon, G.; Pai, V.M.; Conroy, R.S. Wearable technologies for active living and rehabilitation: Current research challenges and future opportunities. J. Rehabil. Assist. Technol. Eng. 2019, 6, 2055668319839607.

- Chen, K.-H.; Chen, P.-C.; Liu, K.-C.; Chan, C.-T. Wearable Sensor-Based Rehabilitation Exercise Assessment for Knee Osteoarthritis. Sensors 2015, 15, 4193–4211.

- Swan, M. The Quantified Self: Fundamental Disruption in Big Data Science and Biological Discovery. Big Data 2013, 1, 85–99.

- Hessing, T. Measurement Systems Analysis (MSA) Six Sigma Study Guide. Available online: https://sixsigmastudyguide.com/measurement-systems-analysis/ (accessed on 23 March 2021).

- Tim, D. Getting the Most out of Wearable Technology in Clinical Research. J. Clin. Stud. 2018, 10, 54–55.

- Patringenaru, I. Temporary Tattoo Offers Needle-Free Way to Monitor Glucose Levels. 2015. Available online: http://ucsdnews.ucsd.edu/pressrelease/temporary_tattoo_offers_needle_free_way_to_monitor_glucose_levels (accessed on 3 August 2021).

- Yamada, I.; Lopez, G. Wearable sensing systems for healthcare monitoring. In Proceedings of the 2012 Symposium on VLSI Technology (VLSIT), Honolulu, HI, USA, 12–14 June 2012; pp. 5–10.

- Zhang, Y.; Song, S.; Vullings, R.; Biswas, D.; Simões-Capela, N.; Van Helleputte, N.; Van Hoof, C.; Groenendaal, W. Motion Artifact Reduction for Wrist-Worn Photoplethysmograph Sensors Based on Different Wavelengths. Sensors 2019, 19, 673.

- Bent, B.; Goldstein, B.A.; Kibbe, W.A.; Dunn, J.P. Investigating sources of inaccuracy in wearable optical heart rate sensors. NPJ Digit. Med. 2020, 3, 1–9.

- Piccinini, F.; Martinelli, G.; Carbonaro, A. Accuracy of Mobile Applications versus Wearable Devices in Long-Term Step Measurements. Sensors 2020, 20, 6293.

- Size, S.; For, S.; Detection, I. Grid-Eye State of the Art Thermal Imaging Solution. 2016; pp. 1–17. Available online: https://eu.industrial.panasonic.com/sites/default/pidseu/files/whitepaper_grid-eye.pdf (accessed on 3 August 2021).

- Schrangl, P.; Reiterer, F.; Heinemann, L.; Freckmann, G.; Del Re, L. Limits to the Evaluation of the Accuracy of Continuous Glucose Monitoring Systems by Clinical Trials. Biosensors 2018, 8, 50.

- Stanley, J.A.; Johnsen, S.B.; Apfeld, J. The SensorOverlord predicts the accuracy of measurements with ratiometric biosensors. Sci. Rep. 2020, 10, 1–11.

- Rose, D.P.; Ratterman, M.E.; Griffin, D.K.; Hou, L.; Kelley-Loughnane, N.; Naik, R.R.; Hagen, J.A.; Papautsky, I.; Heikenfeld, J.C. Adhesive RFID Sensor Patch for Monitoring of Sweat Electrolytes. IEEE Trans. Biomed. Eng. 2014, 62, 1457–1465.

- Bandodkar, A.J.; Jia, W.; Wang, J. Tattoo-Based Wearable Electrochemical Devices: A Review. Electroanalysis 2015, 27, 562–572.

- How Accurate Can RFID Tracking Be RFID Journal. Available online: www.rfidjournal.com/question/how-accurate-can-rfid-tracking-be (accessed on 12 May 2021).

- De Castro, M.P.; Meucci, M.; Soares, D.; Fonseca, P.; Borgonovo-Santos, M.; Sousa, F.; Machado, L.; Vilas-Boas, J.P. Accuracy and Repeatability of the Gait Analysis by the WalkinSense System. BioMed Res. Int. 2014, 2014, 348659.

- Weizman, Y.; Tan, A.M.; Fuss, F.K. Accuracy of Centre of Pressure Gait Measurements from Two Pressure-Sensitive Insoles. MDPI Proc. 2018, 2, 277.

- Mohd-Yasin, F.; Nagel, D.J.; Korman, E.C. Noise in MEMS. Meas. Sci. Technol. 2009, 21, 012001.

- Yu, Y.; Han, F.; Bao, Y.; Ou, J. A Study on Data Loss Compensation of WiFi-Based Wireless Sensor Networks for Structural Health Monitoring. IEEE Sensors J. 2015, 16, 3811–3818.

- ElAmrawy, F.; Nounou, M.I. Are Currently Available Wearable Devices for Activity Tracking and Heart Rate Monitoring Accurate, Precise, and Medically Beneficial? Health Inform. Res. 2015, 21, 315–320.

- Pardamean, B.; Soeparno, H.; Mahesworo, B.; Budiarto, A.; Baurley, J. Comparing the Accuracy of Multiple Commercial Wearable Devices: A Method. Procedia Comput. Sci. 2019, 157, 567–572.

- Mardonova, M.; Choi, Y. Review of Wearable Device Technology and Its Applications to the Mining Industry. Energies 2018, 11, 547.

- Ra, H.-K.; Ahn, J.; Yoon, H.J.; Yoon, D.; Son, S.H.; Ko, J. I am a “Smart” watch, Smart Enough to Know the Accuracy of My Own Heart Rate Sensor. In Proceedings of the 18th International Workshop on Mobile Computing Systems and Applications, Sonoma, CA, USA, 21–22 February 2017; pp. 49–54.

- Ciuti, G.; Ricotti, L.; Menciassi, A.; Dario, P. MEMS Sensor Technologies for Human Centred Applications in Healthcare, Physical Activities, Safety and Environmental Sensing: A Review on Research Activities in Italy. Sensors 2015, 15, 6441–6468.

- Bieber, G.; Haescher, M.; Vahl, M. Sensor requirements for activity recognition on smart watches. In Proceedings of the 6th International Conference on PErvasive Technologies Related to Assistive Environments, Rhodes, Greece, 29–31 May 2013; pp. 1–6.

- Khoshnoud, F.; De Silva, C.W. Recent advances in MEMS sensor technology-mechanical applications. IEEE Instrum. Meas. Mag. 2012, 15, 14–24.

- Ghomian, T.; Mehraeen, S. Survey of energy scavenging for wearable and implantable devices. Energy 2019, 178, 33–49.

- Ching, K.W.; Singh, M.M. Wearable Technology Devices Security and Privacy Vulnerability Analysis. Int. J. Netw. Secur. Appl. 2016, 8, 19–30.

- Byrom, B.; Watson, C.; Doll, H.; Coons, S.J.; Eremenco, S.; Ballinger, R.; Mc Carthy, M.; Crescioni, M.; O’Donohoe, P.; Howry, C. Selection of and Evidentiary Considerations for Wearable Devices and Their Measurements for Use in Regulatory Decision Making: Recommendations from the ePRO Consortium. Value Health 2018, 21, 631–639.

- Patel, S.; Park, H.; Bonato, P.; Chan, L.; Rodgers, M. A review of wearable sensors and systems with application in rehabilitation. J. Neuroeng. Rehabil. 2012, 9, 21.

- Ameri, S.K.; Hongwoo, J.; Jang, H.; Tao, L.; Wang, Y.; Wang, L.; Schnyer, D.M.; Akinwande, D.; Lu, N. Graphene Electronic Tattoo Sensors. ACS Nano 2017, 11, 7634–7641.

- Chandel, V.; Sinharay, A.; Ahmed, N.; Ghose, A. Exploiting IMU Sensors for IOT Enabled Health Monitoring. In Proceedings of the First Workshop on IoT-Enabled Healthcare and Wellness Technologies and Systems, Singapore, 30 June 2016; pp. 21–22.

- Healthcare-in-Europe. Smart Watches and Fitness Trackers Useful but May Increase Anxiety. Available online: https://healthcare-in-europe.com/en/news/smart-watches-fitness-trackers-useful-but-may-increase-anxiety.html (accessed on 23 February 2021).

- Andersen, T.O.; Langstrup, H.; Lomborg, S. Experiences with Wearable Activity Data during Self-Care by Chronic Heart Patients: Qualitative Study. J. Med. Internet Res. 2020, 22, e15873.

- Zawn Villines, L. Mood Tracker Apps: Learn More About Some of the Best Options Here. Medical News Today. 2020. Available online: www.medicalnewstoday.com/articles/mood-tracker-app (accessed on 3 August 2021).

- Mendu, S.; Baee, S. Redesigning the Quantified Self Ecosystem with Mental Health in Mind; ACM: Honolulu, HI, USA, 2020. [Google Scholar] [CrossRef]

- Majumdar, N. Quantified Self Detecting and Resolving Depression by Your Mobile Phone–Emberify Blog. Available online: https://emberify.com/blog/quantified-self-depression/ (accessed on 5 February 2021).

- Projects. Institute for Health Metrics and Evaluation. 2016. Available online: www.healthdata.org/projects (accessed on 10 May 2021).

- Cilliers, L. Wearable devices in healthcare: Privacy and information security issues. Health Inf. Manag. J. 2019, 49, 150–156.

- Tawalbeh, L.; Muheidat, F.; Tawalbeh, M.; Quwaider, M. IoT Privacy and Security: Challenges and Solutions. Appl. Sci. 2020, 10, 4102.

- Kapoor, V.; Singh, R.; Reddy, R.; Churi, P. Privacy Issues in Wearable Technology: An Intrinsic Review. In Proceedings of the International Conference on Innovative Computing and Communication (ICICC-2020), New Delhi, India, 21–23 February 2020.

- Sankar, R.; Le, X.; Lee, S.; Wang, D. Protection of data confidentiality and patient privacy in medical sensor networks. In Implantable Sensor Systems for Medical Applications; Woodhead Publishing: Sawston, UK, 2013; pp. 279–298.

- Alrababah, Z. Privacy and Security of Wearable Devices. December 2020. Available online: https://www.researchgate.net/publication/347558128_Privacy_and_Security_of_Wearable_Devices (accessed on 10 May 2021).

- Paul, G.; Irvine, J. Privacy Implications of Wearable Health Devices. In Proceedings of the 7th International Conference on Security of Information and Networks, Glasgow, Scotland, UK, 9–11 September 2014; Association for Computing Machinery: New York, NY, USA, 2014.

- Nguyen, T.; Gupta, S.; Venkatesh, S.; Phung, D. Nonparametric discovery of movement patterns from accelerometer signals. Pattern Recognit. Lett. 2016, 70, 52–58.

- Ushmani, A. Machine Learning Pattern Matching. J. Comput. Sci. Trends Technol. 2019, 7, 4–7.

- Pendlimarri, D.; Petlu, P.B.B. Novel Pattern Matching Algorithm for Single Pattern Matching. Int. J. Comput. Sci. Eng. 2010, 2, 2698–2704.

- Sarkania, V.K.; Bhalla, V.K. Android Internals. Int. J. Adv. Res. Comput. Sci. Softw. Eng. 2013, 3, 143–147.

- Mohammed, M.; Khan, M.B.; Bashie, E.B.M. Machine Learning: Algorithms and Applications; CRC Press: Boca Raton, FL, USA, 2016.

- Gmyzin, D. A Comparison of Supervised Machine Learning Classification Techniques and Theory-Driven Approaches for the Prediction of Subjective Mental Workload. Master’s Thesis, Technological University Dublin, Dublin, Ireland, 2017.

- Osisanwo, F.Y.; Akinsola, J.E.T.; Awodele, O.; Hinmikaiye, J.O.; Olakanmi, O.; Akinjobi, J. Supervised Machine Learning Algorithms: Classification and Comparison. Int. J. Comput. Trends Technol. 2017, 48, 128–138.

- Rajoub, B. Supervised and unsupervised learning. In Biomedical Signal Processing and Artificial Intelligence in Healthcare; Academic Press: Cambridge, MA, USA, 2020; pp. 51–89.

- Rani, S.; Babbar, H.; Coleman, S.; Singh, A. An Efficient and Lightweight Deep Learning Model for Human Activity Recognition Using Smartphones. Sensors 2021, 21, 3845.

- Pietroni, F.; Casaccia, S.; Revel, G.M.; Scalise, L. Methodologies for continuous activity classification of user through wearable devices: Feasibility and preliminary investigation. In Proceedings of the 2016 IEEE Sensors Applications Symposium (SAS), Catania, Italy, 20–22 April 2016; pp. 1–6.

- Ben-Gal, I. Outlier Detection. In Data Mining and Knowledge Discovery Handbook; Springer: New York, NY, USA, 2014; p. 11.

- Colpas, P.A.; Vicario, E.; De-La-Hoz-Franco, E.; Pineres-Melo, M.; Oviedo-Carrascal, A.; Patara, F. Unsupervised Human Activity Recognition Using the Clustering Approach: A Review. Sensors 2020, 20, 2702.

- Hailat, Z.; Komarichev, A.; Chen, X.-W. Deep Semi-Supervised Learning. In Proceedings of the 2018 24th International Conference on Pattern Recognition (ICPR), Beijing, China, 20–24 August 2018; pp. 2154–2159.

- Stikic, M.; Larlus, D.; Schiele, B. Multi-graph Based Semi-supervised Learning for Activity Recognition. In Proceedings of the 2009 International Symposium on Wearable Computers, Linz, Austria, 4–7 September 2009; pp. 85–92.

- Lee, J.; Bahri, Y.; Novak, R.; Schoenholz, S.S.; Pennington, J.; Sohl-Dickstein, J. Deep neural networks as gaussian processes. arXiv 2017, arXiv:1711.00165.

- Xu, H.; Li, L.; Fang, M.; Zhang, F. Movement Human Actions Recognition Based on Machine Learning. Int. J. Online Eng. (iJOE) 2018, 14, 193–210.

- Fu, A.; Yu, Y. Real-Time Gesture Pattern Classification with IMU Data. 2017. Available online: http://stanford.edu/class/ee267/Spring2017/report_fu_yu.pdf (accessed on 3 August 2021).

- Bujari, A.; Licar, B.; Palazzi, C.E. Movement pattern recognition through smartphone’s accelerometer. In Proceedings of the 2012 IEEE Consumer Communications and Networking Conference (CCNC), Las Vegas, NV, USA, 14–17 January 2012; pp. 502–506.

- Baca, A. Methods for Recognition and Classification of Human Motion Patterns—A Prerequisite for Intelligent Devices Assisting in Sports Activities. IFAC Proc. Vol. 2012, 45, 55–61.

- Farhan, H.; Al-Muifraje, M.H.; Saeed, T.R. A new model for pattern recognition. Comput. Electr. Eng. 2020, 83, 106602.

- Harvey, S.; Harvey, R. An introduction to artificial intelligence. Appita J. 2016, 51, 20–24.

- Neapolitan, R.E.; Jiang, X. Neural Networks and Deep Learning; Determination Press: San Francisco, CA, USA, 2018; pp. 389–411.

- Maurer, U.; Smailagic, A.; Siewiorek, D.; Deisher, M. Activity Recognition and Monitoring Using Multiple Sensors on Different Body Positions. In Proceedings of the International Workshop on Wearable and Implantable Body Sensor Networks (BSN’06), Cambridge, MA, USA, 3–5 April 2006; pp. 113–116.

- Lara, O.D.; Labrador, M.A. A mobile platform for real-time human activity recognition. In Proceedings of the 2012 IEEE Consumer Communications and Networking Conference (CCNC), Las Vegas, NV, USA, 14–17 January 2012; pp. 667–671.

- Tapia, E.M.; Intille, S.S.; Haskell, W.; Larson, K.; Wright, J.; King, A.; Friedman, R. Real-time recognition of physical activities and their intensities using wireless accelerometers and a heart monitor. In Proceedings of the 2007 11th IEEE International Symposium on Wearable Computers, Boston, MA, USA, 11–13 October 2007.

- Tzu-Ping, K.; Che-Wei, L.; Jeen-Shing, W. Development of a portable activity detector for daily activity recognition. In Proceedings of the IEEE International Symposium on Industrial Electronics, Seoul, Korea, 5–8 July 2009; pp. 115–122.

- Bhat, G.; Deb, R.; Ogras, U.Y. OpenHealth: Open-Source Platform for Wearable Health Monitoring. IEEE Des. Test 2019, 36, 27–34.

- Nakamura, Y.; Matsuda, Y.; Arakawa, Y.; Yasumoto, K. WaistonBelt X: A Belt-Type Wearable Device with Sensing and Intervention Toward Health Behavior Change. Sensors 2019, 19, 4600.

- Munoz-Organero, M. Outlier Detection in Wearable Sensor Data for Human Activity Recognition (HAR) Based on DRNNs. IEEE Access 2019, 7, 74422–74436.

- Lara, O.D.; Labrador, M.A. A Survey on Human Activity Recognition using Wearable Sensors. IEEE Commun. Surv. Tutor. 2013, 15, 1192–1209.

- Kaghyan, S.; Sarukhanyan, H.G. Activity recognition using k-nearest neighbor algorithm on smartphone with triaxial accelerometer. Int. J. Inform. Models Anal. 2012, 1, 146–156.

- Arias, P.; Kelley, C.; Mason, J.; Bryant, K.; Roy, K. Classification of User Movement Data. In Proceedings of the 2nd International Conference on Digital Signal Processing, Tokyo, Japan, 25–27 February 2018.

- Seok, W.; Kim, Y.; Park, C. Pattern Recognition of Human Arm Movement Using Deep Reinforcement Learning. Intelligent Information System and Embedded Software Engineering; Kwangwoon University: Seoul, Korea, 2018; pp. 917–919.

- Gupta, S.M.; Mujawar, A. Tracking and Predicting Movement Patterns of a Moving Object in Wireless Sensor Network. In Proceedings of the 2018 2nd International Conference on Trends in Electronics and Informatics (ICOEI), Tirunelveli, India, 11–12 May 2018; pp. 586–591.

- Zhang, Y.; Zhang, Z.; Zhang, Y.; Bao, J.; Zhang, Y.; Deng, H. Human Activity Recognition Based on Motion Sensor Using U-Net. IEEE Access 2019, 7, 75213–75226.

- Xu, C.; Chai, D.; He, J.; Zhang, X.; Duan, S. InnoHAR: A Deep Neural Network for Complex Human Activity Recognition. IEEE Access 2019, 7, 9893–9902.

- Clouthier, A.L.; Ross, G.B.; Graham, R.B. Sensor Data Required for Automatic Recognition of Athletic Tasks Using Deep Neural Networks. Front. Bioeng. Biotechnol. 2020, 7, 473.

- Hwang, I.; Cha, G.; Oh, S. Multi-modal human action recognition using deep neural networks fusing image and inertial sensor data. In Proceedings of the 2015 IEEE International Conference on Multisensor Fusion and Integration for Intelligent Systems (MFI), San Diego, CA, USA, 14–16 September 2017; pp. 278–283.

- Gumaei, A.; Hassan, M.M.; Alelaiwi, A.; Alsalman, H. A Hybrid Deep Learning Model for Human Activity Recognition Using Multimodal Body Sensing Data. IEEE Access 2019, 7, 99152–99160.

- Karungaru, S. Human action recognition using wearable sensors and neural networks. In Proceedings of the 2015 10th Asian Control Conference (ASCC), Kota Kinabalu, Malaysia, 31 May–3 June 2015; pp. 1–4.

- Choi, A.; Jung, H.; Mun, J.H. Single Inertial Sensor-Based Neural Networks to Estimate COM-COP Inclination Angle during Walking. Sensors 2019, 19, 2974.

- Xie, B.; Li, B.; Harland, A. Movement and Gesture Recognition Using Deep Learning and Wearable-sensor Technology. In Proceedings of the 2018 International Conference on Artificial Intelligence and Pattern Recognition, Beijing, China, 18–20 August 2018; pp. 26–31.

- Nguyen, T.; Gupta, S.; Venkatesh, S.; Phung, D. A Bayesian Nonparametric Framework for Activity Recognition Using Accelerometer Data. In Proceedings of the 2014 22nd International Conference on Pattern Recognition, Stockholm, Sweden, 24–28 August 2014; pp. 2017–2022.

- Cheng, L.; You, C.; Guan, Y.; Yu, Y. Body activity recognition using wearable sensors. In Proceedings of the 2017 Computing Conference, London, UK, 18–20 July 2017; pp. 756–765.

- Chen, Y.; Guo, M.; Wang, Z. An improved algorithm for human activity recognition using wearable sensors. In Proceedings of the 2016 Eighth International Conference on Advanced Computational Intelligence (ICACI), Chiang Mai, Thailand, 14–16 February 2016; pp. 248–252.

- Mekruksavanich, S.; Jitpattanakul, A. Classification of Gait Pattern with Wearable Sensing Data. In Proceedings of the 2019 Joint International Conference on Digital Arts, Media and Technology with ECTI Northern Section Conference on Electrical, Electronics, Computer and Telecommunications Engineering, Nan, Thailand, 30 January–2 February 2019; pp. 137–141.

- Hachaj, T.; Piekarczyk, M. Evaluation of Pattern Recognition Methods for Head Gesture-Based Interface of a Virtual Reality Helmet Equipped with a Single IMU Sensor. Sensors 2019, 19, 5408.

- Kim, M.; Cho, J.; Lee, S.; Jung, Y. IMU Sensor-Based Hand Gesture Recognition for Human-Machine Interfaces. Sensors 2019, 19, 3827.

- DiLiberti, N.; Peng, C.; Kaufman, C.; Dong, Y.; Hansberger, J.T. Real-Time Gesture Recognition Using 3D Sensory Data and a Light Convolutional Neural Network. In Proceedings of the 27th ACM International Conference on Multimedia, Nice, France, 21–25 October 2019; pp. 401–410.

- Alavi, S.; Arsenault, D.; Whitehead, A. Quaternion-Based Gesture Recognition Using Wireless Wearable Motion Capture Sensors. Sensors 2016, 16, 605.

- Santhoshkumar, R.; Geetha, M.K. Deep Learning Approach for Emotion Recognition from Human Body Movements with Feedforward Deep Convolution Neural Networks. Procedia Comput. Sci. 2019, 152, 158–165.

- Hu, B.; Dixon, P.C.; Jacobs, J.; Dennerlein, J.; Schiffman, J. Machine learning algorithms based on signals from a single wearable inertial sensor can detect surface- and age-related differences in walking. J. Biomech. 2018, 71, 37–42.

- Lin, W.-Y.; Verma, V.K.; Lee, M.-Y.; Lai, C.-S. Activity Monitoring with a Wrist-Worn, Accelerometer-Based Device. Micromachines 2018, 9, 450.

- Estévez, P.A.; Held, C.M.; Holzmann, C.A.; Perez, C.A.; Pérez, J.P.; Heiss, J.; Garrido, M.; Peirano, P. Polysomnographic pattern recognition for automated classification of sleep-waking states in infants. Med. Biol. Eng. Comput. 2002, 40, 105–113.

- Procházka, A.; Kuchyňka, J.; Vyšata, O.; Cejnar, P.; Vališ, M.; Mařík, V. Multi-Class Sleep Stage Analysis and Adaptive Pattern Recognition. Appl. Sci. 2018, 8, 697.

- Gandhi, R. Introduction to Machine Learning Algorithms: Linear Regression. Towards Data Science. 2018. Available online: https://towardsdatascience.com/introduction-to-machine-learning-algorithms-linear-regression-14c4e325882a (accessed on 3 August 2021).

- Duffy, S.A. HHS Public Access Author manuscript. J. Community Health 2013, 38, 597–602.

- Migueles, J.H.; Rowlands, A.V.; Huber, F.; Sabia, S.; Van Hees, V.T. GGIR: A Research Community–Driven Open Source R Package for Generating Physical Activity and Sleep Outcomes from Multi-Day Raw Accelerometer Data. J. Meas. Phys. Behav. 2019, 2, 188–196.

- Kim, Y.; Hibbing, P.; Saint-Maurice, P.F.; Ellingson, L.D.; Hennessy, E.; Wolff-Hughes, D.L.; Perna, F.M.; Welk, G.J. Surveillance of Youth Physical Activity and Sedentary Behavior with Wrist Accelerometry. Am. J. Prev. Med. 2017, 52, 872–879.

- Cole-kripke, T.; Daniel, F.; Sadeh, T. ActiGraph White Paper Actigraphy Sleep Scoring Algorithms. 1992. Available online: https://actigraphcorp.com/ (accessed on 3 August 2021).

- Quante, M.; Kaplan, E.R.; Cailler, M.; Rueschman, M.; Wang, R.; Weng, J.; Taveras, E.M.; Redline, S. Actigraphy-based sleep estimation in adolescents and adults: A comparison with polysomnography using two scoring algorithms. Nat. Sci. Sleep 2018, 10, 13–20.

- Haghayegh, S.; Khoshnevis, S.; Smolensky, M.H.; Diller, K.R.; Castriotta, R.J. Performance comparison of different interpretative algorithms utilized to derive sleep parameters from wrist actigraphy data. Chronobiol. Int. 2019, 36, 1752–1760.

- Lee, P.H.; Suen, L.K.P. The convergent validity of Actiwatch 2 and ActiGraph Link accelerometers in measuring total sleeping period, wake after sleep onset, and sleep efficiency in free-living condition. Sleep Breath 2016, 21, 209–215.

| Wearable Device | Body Location | Typical Use Case/Disease Condition | Captured Movement |
|---|---|---|---|
| Wearable Cutaneous Haptic Interface (WCHI) [40] | Finger | Parkinson’s disease | Three degrees of freedom to measure disease conditions, such as tremor and bradykinesia. |
| Smart Electro-Clothing Systems (SeCSs) [41] | Heart | Health monitoring | Surface electromyography (sEMG); HR, heart rate variability. |
| Xsens DOT [42] | All over the body | Healthcare, sports | Osteoarthritis |
| 5DT data glove [43] | Fingers and wrist | Robust hand motion tracking | Fibre optic sensors measure flexion and extension of the interphalangeal (IP) and metacarpophalangeal (MCP) joints of the fingers and thumb, abduction and adduction, and the orientation (pitch and roll) of the user’s hand. |
| Neofect Raphael data glove [44] | Fingers, wrist and forearm | Poststroke patients | Accelerometer and bending sensors measure flexion and extension of the fingers and thumb. |
| Stretchsense data glove [45] | Hand motion capture | Gaming, augmented and virtual reality, robotics and the biomedical industries. | Flexion and extension of the fingers and thumb. |
| Flex Sensor (Data glove) [46] | Finger | Rheumatoid arthritis (RA), Parkinson’s disease and other neurological conditions/rehabilitative requirements. | Flexion and extension of the IP and MCP joints of the fingers and thumb, and abduction and adduction movements. |
| X-IST Data Glove [47] | Hand and fingers | Poststroke patients | Five bend sensors and five pressure sensors measure MCP and PIP finger and thumb movement. |
| MoCap Pro (Smart Glove) [48] | Hand and fingers | Stroke | Captures the bend of each MCP and proximal interphalangeal (PIP) joint. |
| Textile-Based Wearable Gesture Sensing Device [49] | Elbow and knee | Musculoskeletal disorders | Flexion angle of elbow and knee movements. |
| VICON system [50] | Shoulder and elbow | Musculoskeletal disorders | Humerothoracic and scapulothoracic joint angles and elbow kinematics. |
| Goniometer-Pro [51] | Knee | Stroke | Passive flexion of the knee. |
| Smart Garment Sensor System [52] | Leg | Strain sensor | Lower limb joint position analysis. |
| Fineck [53,54] | Neck | Monitoring neck movements and respiratory frequency. | Flexion-extension and axial rotation repetitions, and respiratory frequency. |
| SMART DX [55] | All over the body | Gait clinical assessment and multifactorial movement analysis. | Dynamic analysis of muscle activity, postural analysis, motor rehabilitation. |
| ViMove [56] | Neck, lower back and knee | Movement and activity recognition in sports and clinical monitoring. | Flexion-extension and axial rotation. |
| Amazon Halo [57] | Wrist | Voice monitoring application called ‘Tone’. | Detects the “positivity” and “energy” of the human voice. |
| Polysomnography sensors [58] | Chest, hand, leg and head | Identifying sleep apnoea | Breathing volume and heart rate. |
| Pulse oximetry [59] | Finger | Pulmonary disease | Monitors oxygen saturation, respiratory rate, breathing pattern and air quality. |
| TZOA [60] | Textile | Respiratory disease | Measures air quality and humidity. |
| Eversense Glucose Monitoring, Guardian Connect System and Dexcom CGM [61] | Hand | Diabetes | Glucose level monitoring. |

| Wearable Device for QS | Type | Technology Used | Well-Known Applications | Battery Life |
|---|---|---|---|---|
| Apple Watch [72] | Smartwatch | IMU, blood oxygen sensor, electrical heart sensor, optical sensors. | Basic fitness tracking, blood oxygen level, ECG, step count, sleep patterns. | 1 day |
| Fitbit Sense [73] | Smartwatch | IMU, blood oxygen sensor, electrical heart sensor, optical sensors, temperature sensor, electrodermal sensor. | Basic fitness tracking, stress management, SpO2, skin temperature, sleep, FDA-cleared ECG and electrodermal activity tracking. | 6 days |
| Samsung Gear 2 [74] | Smartwatch | IMU, electrical heart sensor. | Basic fitness tracking. | <1 day |
| Samsung Gear S [75] | Smartwatch | IMU, electrical heart sensor. | Basic fitness tracking. | <1 day |
| iHealth Tracker (AM3) [76] | Fitness tracker | IMU | Steps taken, calories burned, distance travelled, sleep hours and sleep efficiency. | 5–7 days |
| Pebble Watch [77] | Smartwatch | IMU, ambient light sensor. | Cycling app to measure speed, distance and pace through GPS. | 3–6 days |
| Mi Band 6 [78] | Fitness tracker | IMU, PPG heart rate sensor, capacitive proximity sensor. | Heart rate measurement, sleep tracking, sport tracking. | 14 days |
| MisFit Shine [79] | Fitness tracker | IMU, capacitive touch sensor. | Tracks steps, calories and distance; automatically tracks light and deep sleep; activity tagging for any sport. | 4–6 months |
| Sony Smartwatch 4 (SWR10) [80] | Smartwatch | GPS, IMU, optical heart rate sensor and altimeter. | Distance and duration of workout, heart rate monitoring, step count. | 2–4 days |
| Fitbit Flex [81] | Fitness tracker | IMU, heart monitor, altimeter. | Tracks steps, sleep and calories. | Up to 5 days |
| Decathlon ONCoach 100 [82] | Activity tracker | GPS, IMU, altimeter | Step count, light and deep sleep tracking, start and end of a sport session, average speed, distance and calories consumed. | 6 months |
| Actigraphy [83] | Activity recognition/sleep pattern recognition | IMU | Inclination, gait analysis, fall detection, sleep quality analysis. | 14 days |
| Garmin VivoSmart HR+ [84] | Activity recognition/sleep analysis | IMU, heart rate monitor, altimeter, GPS | Steps, distance, calories, floors climbed, activity intensity and heart rate. | 8 h |
| MotionNode Bus [85] | Motion tracking | Miniature IMU | Motion tracking using IMU data. | 7 h |

| Medical Service | Place of Care | Required Sensor Performance and Accuracy: Healthcare Use | Required Sensor Performance and Accuracy: Self-Monitoring | Requirements |
|---|---|---|---|---|
| Domiciliary care | Patient’s home | High | Medium | Portable, robust, ease of use |
| Hospital care | Hospital environment | High | Medium | Portable within a hospital setting, high accuracy |
| Wearable health monitoring | Anywhere, any time | Medium | Medium | Small and light, highly portable and unobtrusive |

| Wearable Sensor | Usage | Sensor Technology | Reported Accuracy |
|---|---|---|---|
| Grid-eye [100] | Human tracking or detection | Temperature sensing using infrared radiation | 80% |
| Wearable Biochemical Sensors [101] | Detect biomarkers in biological fluids | Physicochemical transducer | 95% |
| Wearable Biophysical Sensors [102] | Detect biophysical parameters, such as heart rate, temperature and blood pressure | Sensor electrodes | 94% |
| Adhesive patch-type wearable sensor [103] | Monitoring of sweat electrolytes | Radio-frequency identification (RFID) | 96% |
| Tattoo-Based Wearable Electrochemical Devices [104] | Monitor fluoride and pH levels of saliva | Body-compliant wearable electrochemical devices on temporary tattoos | 85% |
| RFID Tag Antenna [105] | Tracking of patients in a healthcare environment | RFID | 99% |
| Pedar system [106,107] | Human gait analysis | Pressure sensors capture insole-based foot pressure data | 88% |
| Wearable IMU Sensor [21,22,23] | Motion tracking, activity tracking, gait altitude, fall detection | Accelerometer, gyroscope, magnetometer | 99% |

| Metric | Smartwatch | Accuracy (200 Steps) | Accuracy (1000 Steps) | Typical Cost (May 2021) |
|---|---|---|---|---|
| Step count | Apple Watch | 99.1% | 99.5% | €480 |
| | MisFit Shine | 98.3% | 99.7% | €185 |
| | Samsung Gear 1 | 97% | 94% | €150 |
| Heart rate measurement | Apple Watch | 99% | 99.9% | €480 |
| | Motorola Moto 360 | 89.5% | 92.8% | €110 |
| | Samsung Gear Fit | 93% | 97.4% | €150 |
| | Samsung Gear 2 | 92.3% | 97.7% | €130 |
| | Samsung Gear S | 91.4% | 89.4% | €110 |
| | Apple iPhone 6 (with cardio application) | 99% | 99.2% | €180 |
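The table does not state how the step-count percentages were derived. One common convention, assumed here rather than taken from the cited studies, scores a device against a manually counted reference walk as 100 × (1 − |device − reference| / reference). The function name `step_count_accuracy` and the readings below are hypothetical; this is a minimal sketch of that assumed definition, not the method used to produce the table.

```python
def step_count_accuracy(device_steps: int, reference_steps: int) -> float:
    """Percent agreement between a wearable's step count and a reference count.

    Assumed definition (not from the source):
        100 * (1 - |device - reference| / reference)
    Over-counting and under-counting are penalised equally.
    """
    if reference_steps <= 0:
        raise ValueError("reference_steps must be positive")
    relative_error = abs(device_steps - reference_steps) / reference_steps
    return 100.0 * (1.0 - relative_error)


# Hypothetical device readings for 200-step and 1000-step reference walks.
for reference, device in [(200, 198), (1000, 995)]:
    print(f"{reference} steps: {step_count_accuracy(device, reference):.1f}%")
# prints:
# 200 steps: 99.0%
# 1000 steps: 99.5%
```

Because the absolute error is used, an over-count of two steps on the 200-step walk scores the same 99.0% as an under-count of two; a signed error would be needed to tell systematic over-counting from under-counting.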

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Vijayan, V.; Connolly, J.P.; Condell, J.; McKelvey, N.; Gardiner, P. Review of Wearable Devices and Data Collection Considerations for Connected Health. Sensors 2021, 21, 5589. https://doi.org/10.3390/s21165589

Vijayan V, Connolly JP, Condell J, McKelvey N, Gardiner P. Review of Wearable Devices and Data Collection Considerations for Connected Health. Sensors. 2021; 21(16):5589. https://doi.org/10.3390/s21165589

Chicago/Turabian Style: Vijayan, Vini, James P. Connolly, Joan Condell, Nigel McKelvey, and Philip Gardiner. 2021. "Review of Wearable Devices and Data Collection Considerations for Connected Health" Sensors 21, no. 16: 5589. https://doi.org/10.3390/s21165589

APA Style: Vijayan, V., Connolly, J. P., Condell, J., McKelvey, N., & Gardiner, P. (2021). Review of Wearable Devices and Data Collection Considerations for Connected Health. Sensors, 21(16), 5589. https://doi.org/10.3390/s21165589