UAV flight data are mainly generated by radar-related companies, agencies, or the military for particular purposes. Thus, datasets are rarely released publicly and published references are difficult to find. Datasets recorded by radar sensors are even rarer. Because of these characteristics of the research field, many researchers generate their own datasets to carry out research. However, most of these datasets do not reflect the diversity of real data: for example, they are measured only at close range to obtain a clear signal, or they simulate only limited UAV movements. Unlike these datasets, we recorded various types of UAVs and ground moving targets in diverse scenarios. After pre-processing the well-recorded signals, we generated our radar spectrogram dataset.

3.1. Measurement

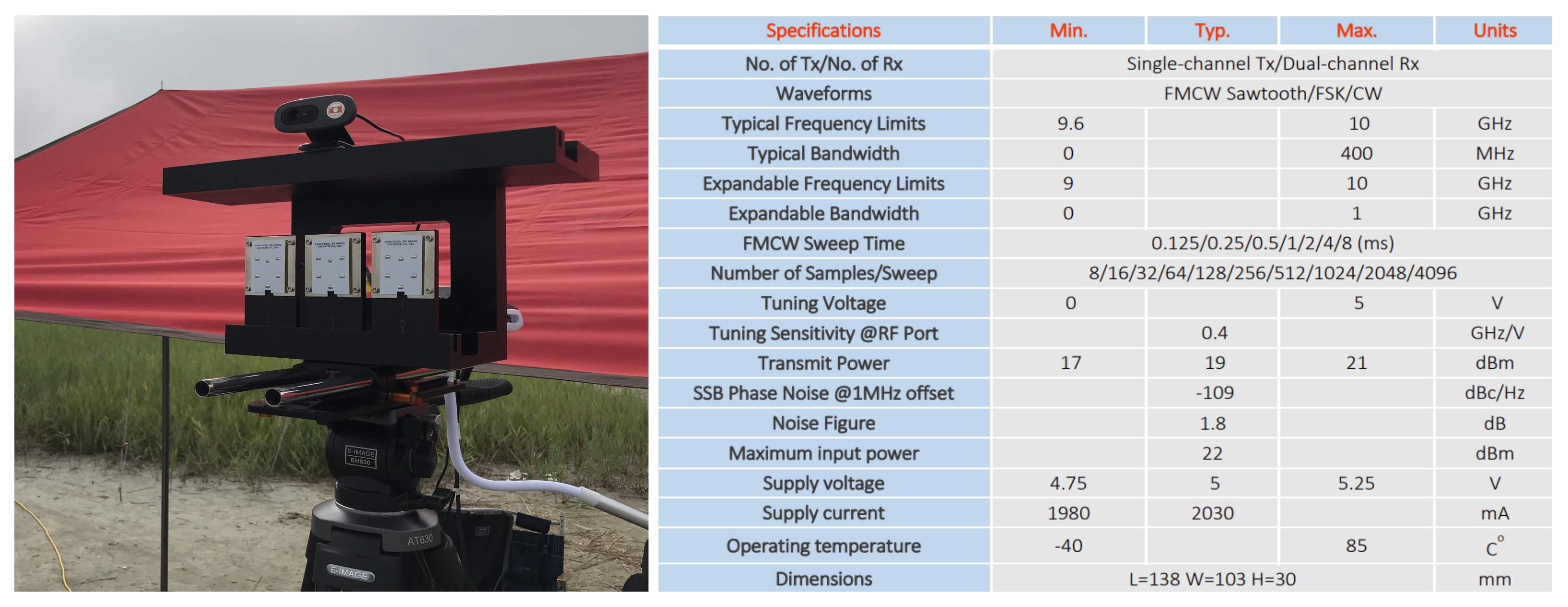

Radar signals are less vulnerable to low visibility and weather conditions than video signals and have fewer restrictions on the line of sight (LOS), the straight line between the target and the sensor. Radars are divided into two types by the principle of radio wave emission: (1) ‘pulse radar’, which transmits pulse signals and receives the signals reflected from objects, and (2) ‘continuous wave (CW) radar’, which continuously transmits and receives signals without a pause. A continuous wave radar is suitable for detecting time-varying changes of low radar cross section (RCS) targets, so we decided to use an FMCW radar, which continuously emits a frequency-modulated signal at regular intervals to obtain time information. Our model is Ancortek’s SDR KIT 980AD2, and its specifications are described in Figure 3. Additionally, to select well-recorded files, we installed a video camera synchronized with the radar and double-checked the video files against the radar spectrograms.

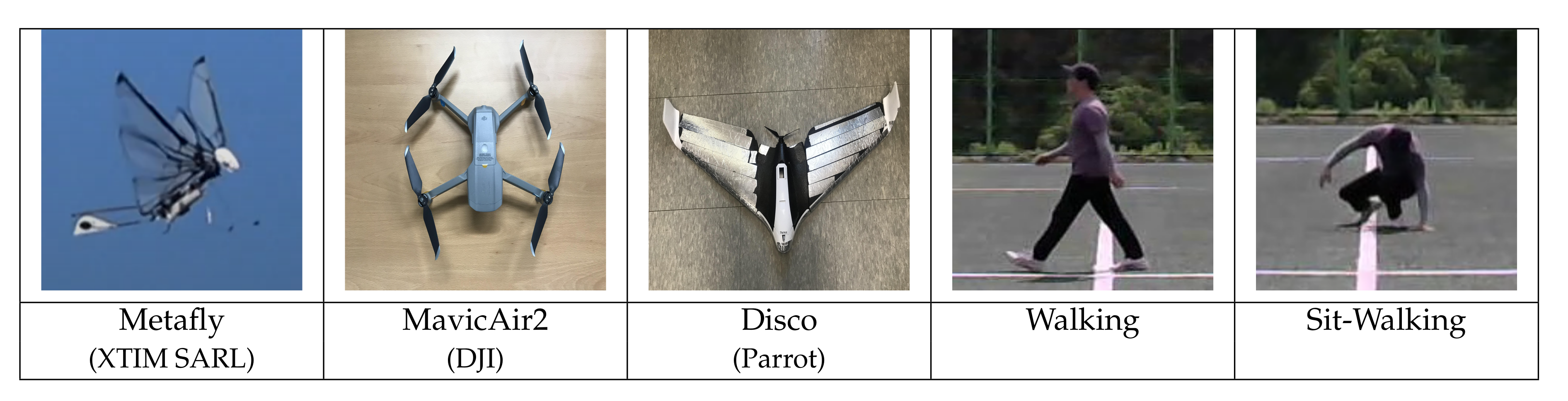

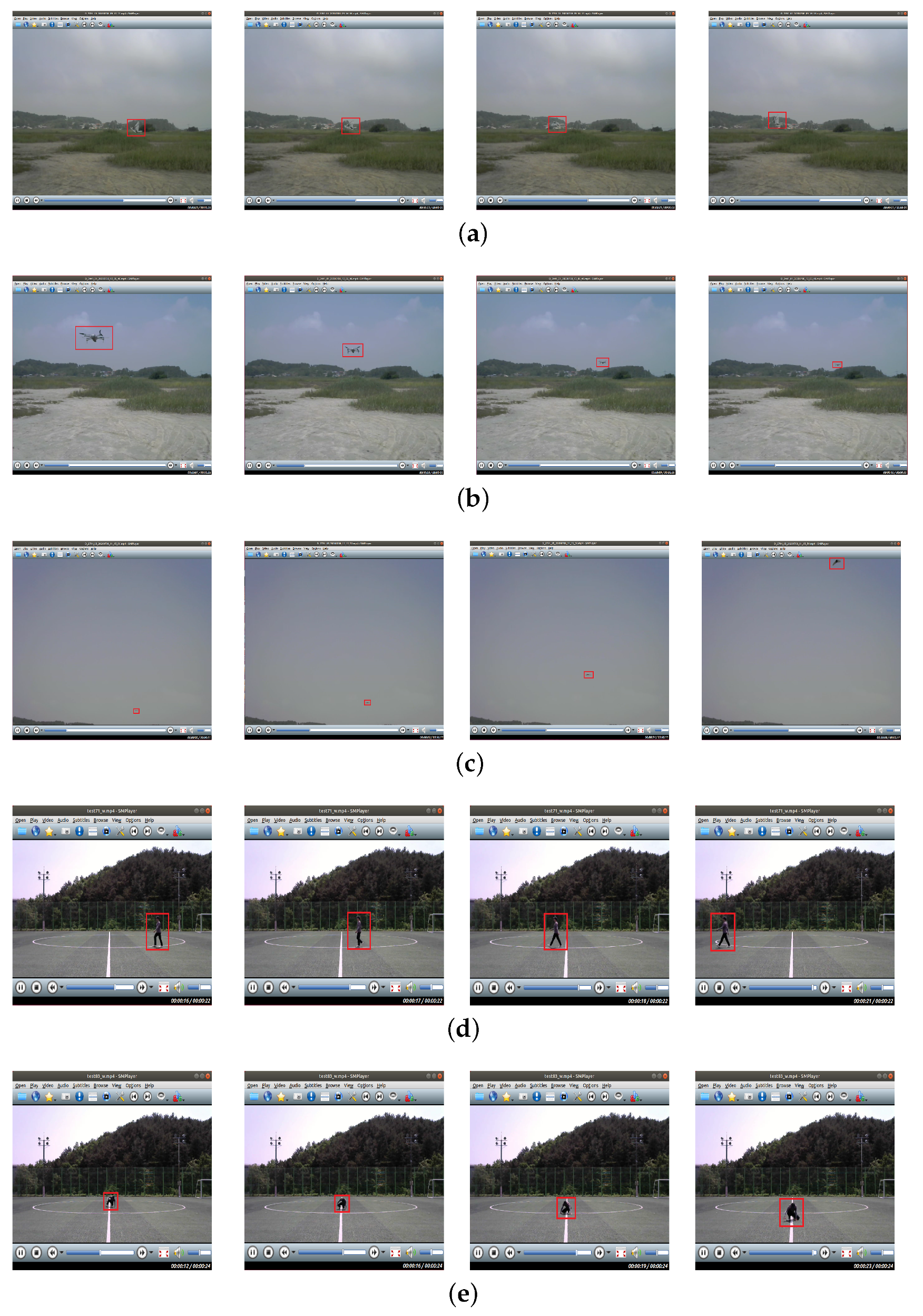

We recorded five different LSS targets with the FMCW radar. Assuming an enemy approaching the local area, we selected three types of UAVs as aerial moving targets and two different human activities as ground moving targets. The three UAVs are ‘Metafly’, a wing-flapping drone that mimics the wings of a bird; ‘Disco’, a fixed-wing UAV; and ‘Mavic Air 2’, a quad-copter (four rotors). The two human activities, ‘Walking’ and ‘Sit-walking’, were recorded from the same person.

Figure 4 shows the images of the five targets.

We recorded various movements of the targets within a 100 m range. UAVs were recorded while freely changing altitude, speed, and direction, and humans were recorded while changing distance and direction at a constant pace. Two UAVs (Metafly and Disco) were subject to some restrictions to allow proper recording. Metafly was recorded within a 10 m range because of its low signal intensity. Disco was recorded only in left-right, front-rear, and concentric circular flight at an altitude of 10 m with low-velocity settings because of its high speed and wide turning radius. Disco is equipped with a single rotor at the rear of the fuselage, so thrust acts only forward, and it changes direction gradually by changing the angle of attack (AoA) of the ailerons at the wing-tips. It therefore requires a wide turning radius and is often outside the radar’s detection range. In addition, because it moves at high speed, it quickly leaves the radar’s detection range.

Table 1 shows the movements for each target and the settings for recording.

Figure 5 shows sequential video frames of a specific movement for each target.

We operated Metafly and Mavic Air 2 manually, while Disco was operated automatically by entering flight plans through the ‘Free Flight Pro’ mobile application. We recorded each target many times and removed abnormal files, such as overly noisy files or files in which other objects intruded, by cross-checking the video files and spectrograms. As a rule, we selected 10 well-recorded files for each target and split the data into a training dataset and a test dataset at a ratio of 8:2. (The exception is Disco, for which 25 files were used because the recorded sections were too short.)
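As a minimal sketch of the 8:2 split, the snippet below assumes that the split is made at the level of recording files, so that images cut from one recording never appear in both sets. The text does not state this, and the file names and the `split_files` helper are hypothetical.

```python
import random


def split_files(files, train_ratio=0.8, seed=0):
    """Shuffle recording files and split them into (train, test) lists."""
    files = list(files)
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(files)
    n_train = round(len(files) * train_ratio)
    return files[:n_train], files[n_train:]


# Hypothetical file names: 10 well-recorded files for one target.
recordings = [f"mavic_air_2_{i:02d}.dat" for i in range(10)]
train_files, test_files = split_files(recordings)
print(len(train_files), len(test_files))
```

With 10 files this yields 8 training and 2 test recordings; with the 25 Disco files the same ratio yields 20 and 5.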

3.2. Pre-Processing

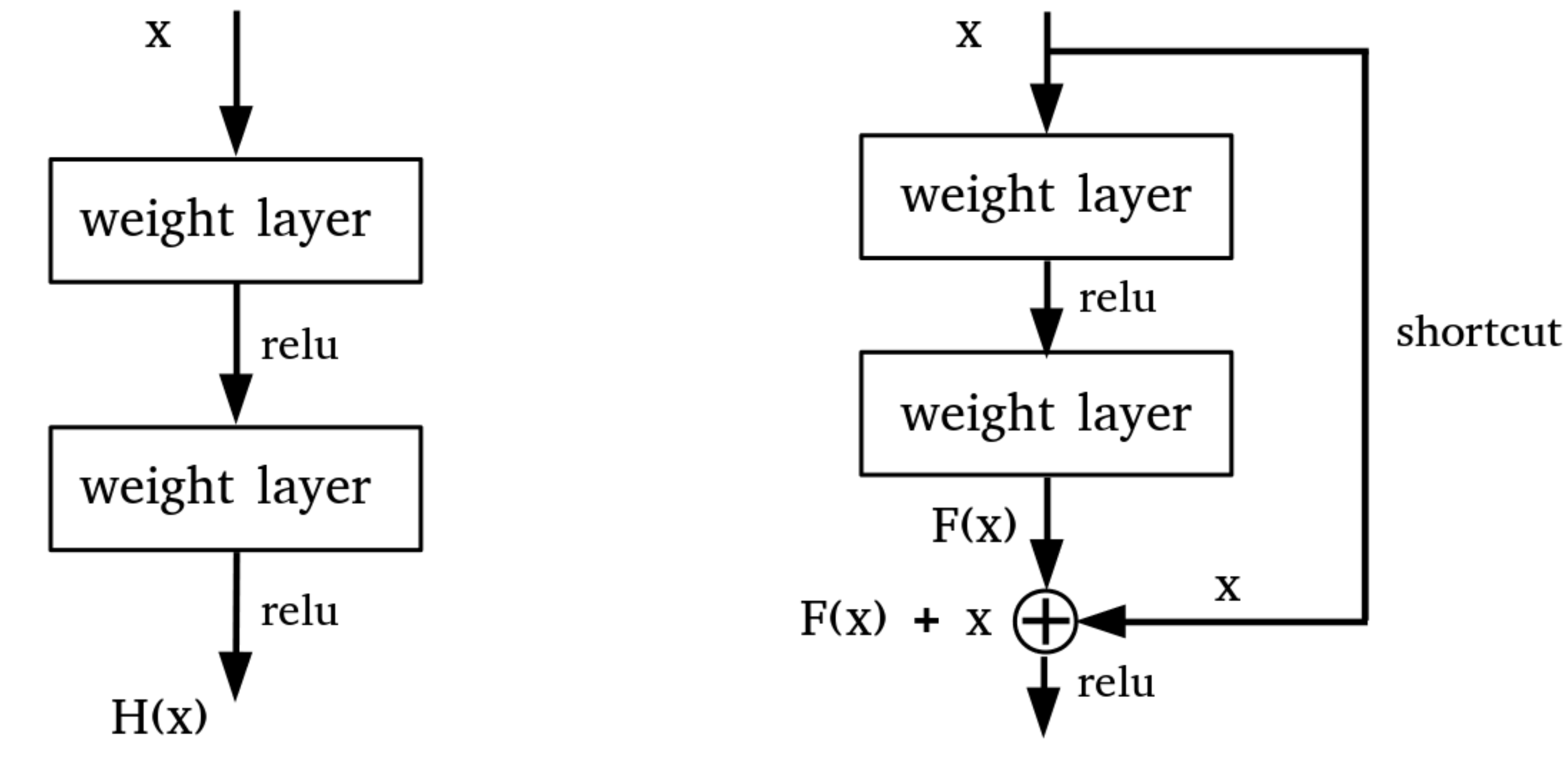

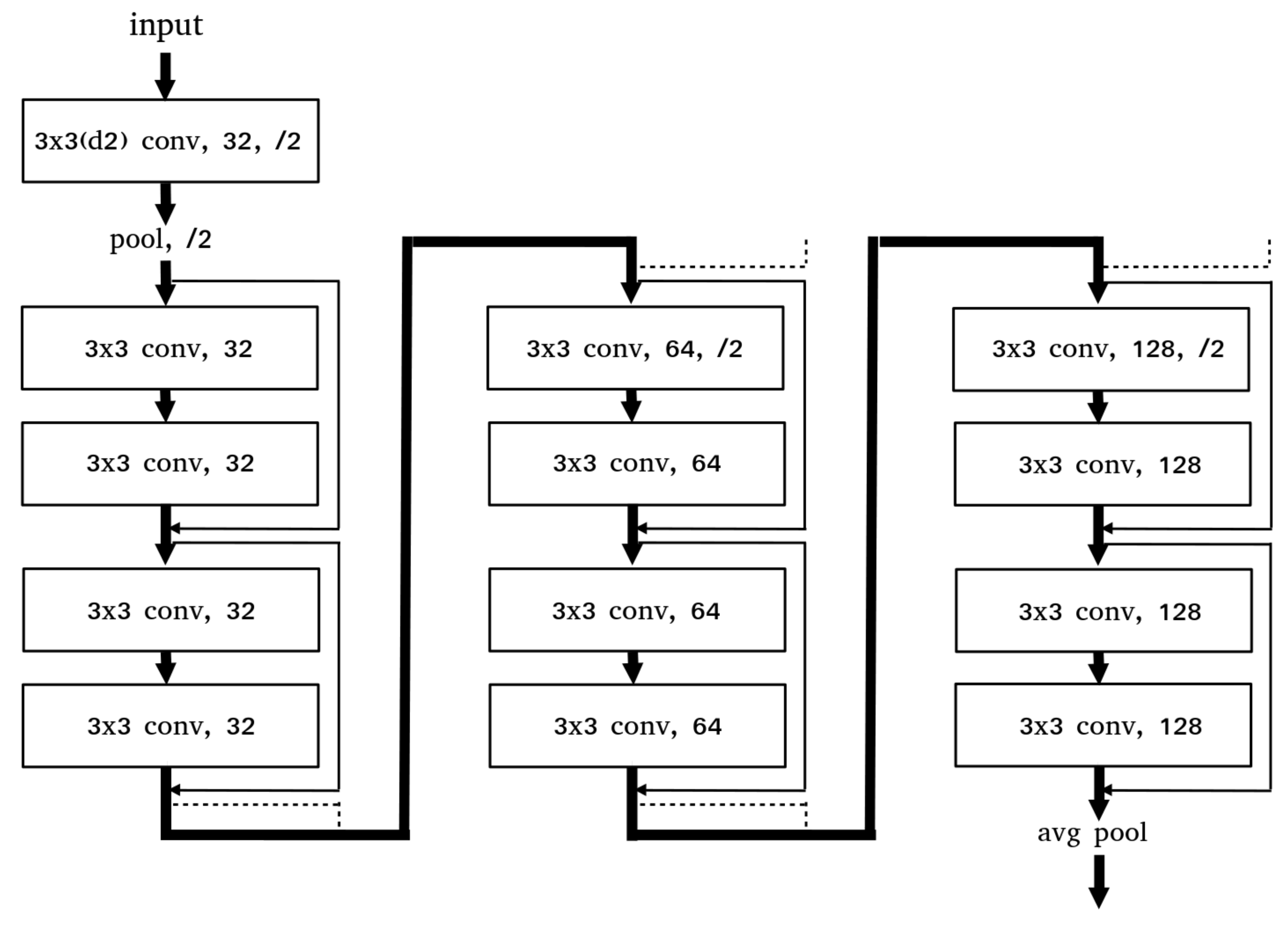

In the pre-processing step, the recorded radar signals are transformed into spectrogram images through STFT and assembled into the dataset after data refinement and augmentation. Data refinement removes the spectrogram sections in which the target is not recorded. To do this, we cut the spectrogram into short time intervals and removed the cut images whose average intensity was below a threshold. To increase the amount of data, we applied three data augmentation methods that preserve the format of the spectrogram: the x-axis represents time, the y-axis represents frequency, and the color at each point represents the amplitude of a specific frequency at a specific time.

In the STFT signal processing, we applied different window sizes (128, 256 and 512) and window overlap ratios (50%, 70% and 85%) to obtain spectrograms of different resolutions. In addition, we applied a vertical flip after the data refinement to obtain spectrograms with the sign of the radial velocity reversed.

A spectrogram [13] reveals the instantaneous spectral content of a time-domain signal and how that content varies over time. A spectrogram is obtained as the squared magnitude of the STFT of a discrete signal. With the spectrogram, we can visually observe the frequency spectrum changing over time. However, when computing the spectrogram, finite-size sampling of a recorded signal may truncate the waveform of the original continuous-time signal, introducing discontinuities into the recorded signal. These discontinuities appear in the FFT as high-frequency components that are not present in the original signal, and they show up on the spectrogram as a blurred rather than a clear shape. This is called ‘spectral leakage’ because it looks as if energy is leaking from one frequency to another. To mitigate spectral leakage, window functions are generally applied. The spectrogram resolution is determined by the window size, and there is a trade-off between time and frequency resolution [14].
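The effect of a window function on spectral leakage can be illustrated with a short NumPy sketch. The test tone, the Hann window, and the `leakage_fraction` helper are assumptions chosen for illustration; the text does not specify which window function was used.

```python
import numpy as np

n = 256
t = np.arange(n)
# 10.5 cycles in the segment: the waveform is truncated mid-period,
# which creates a discontinuity at the segment boundary.
x = np.sin(2 * np.pi * 10.5 * t / n)

power_rect = np.abs(np.fft.rfft(x)) ** 2                  # no window (rectangular)
power_hann = np.abs(np.fft.rfft(x * np.hanning(n))) ** 2  # Hann window


def leakage_fraction(power, lo=6, hi=16):
    """Fraction of total power outside bins lo..hi-1 around the tone."""
    far = np.concatenate([power[:lo], power[hi:]])
    return far.sum() / power.sum()


leak_rect = leakage_fraction(power_rect)
leak_hann = leakage_fraction(power_hann)
print(f"rectangular: {leak_rect:.2e}, Hann: {leak_hann:.2e}")
```

The fraction of power that ends up far from the true tone is much smaller with the Hann window, at the cost of a slightly wider main lobe.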

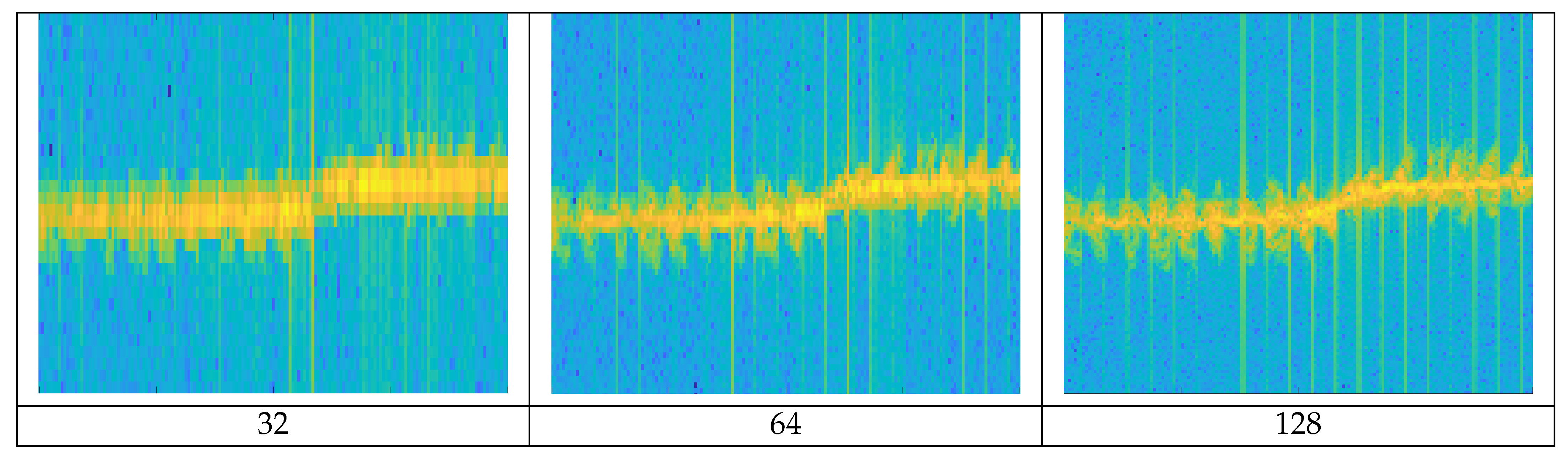

Figure 6 shows the differences in the spectrogram resolution according to window sizes.

If a narrow window is applied, a fine time resolution is obtained because of the short time interval, but the frequency resolution is degraded because of the wide frequency bandwidth. Conversely, if a wide window is applied, a fine frequency resolution is obtained because of the wide time interval and narrow frequency bandwidth, but the time resolution is degraded. The higher the resolution, the more detailed the representation of the object’s MDS waveform. We generated spectrogram images with different resolutions by applying three window sizes (128, 256, 512) to the original signal.

Even when the window size is fixed, several different frequencies contained in one window may not be distinguishable. To reduce this effect, one can use window overlap, in which each window shares part of its samples with the previous window in the STFT process. The higher the overlap ratio, the higher the resolution, but the more computation is required.
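The trade-off can be made concrete with a little arithmetic. The sketch below assumes a hypothetical sampling rate of 1024 Hz (the actual rate of the recordings is not given here) and computes, for each window size, the time span covered by one window and the spacing between frequency bins.

```python
def stft_resolution(window_size, sample_rate_hz):
    """Return (time span of one window in s, frequency bin spacing in Hz)."""
    time_span_s = window_size / sample_rate_hz
    freq_spacing_hz = sample_rate_hz / window_size
    return time_span_s, freq_spacing_hz


SAMPLE_RATE_HZ = 1024.0  # hypothetical value, for illustration only

resolutions = {w: stft_resolution(w, SAMPLE_RATE_HZ) for w in (128, 256, 512)}
for w, (dt, df) in resolutions.items():
    print(f"window {w}: {dt:.3f} s per window, {df:.1f} Hz per bin")
```

Doubling the window size halves the bin spacing and doubles the time span, so the product of the two stays constant: one cannot be refined without coarsening the other.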

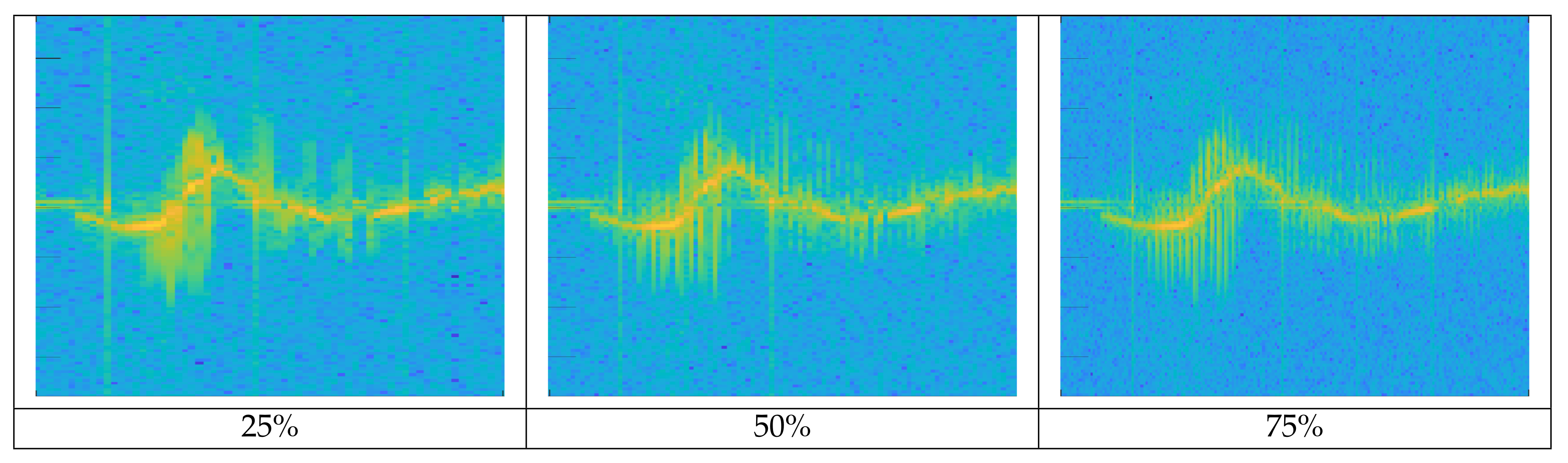

Figure 7 shows the differences in the spectrogram resolution of Metafly (wing flapping UAV) according to different window overlap ratios. The higher the overlap ratio in the given window size, the more detailed the MDS signal is. The trajectory of radial velocity by the entire body of the target is also precisely expressed.

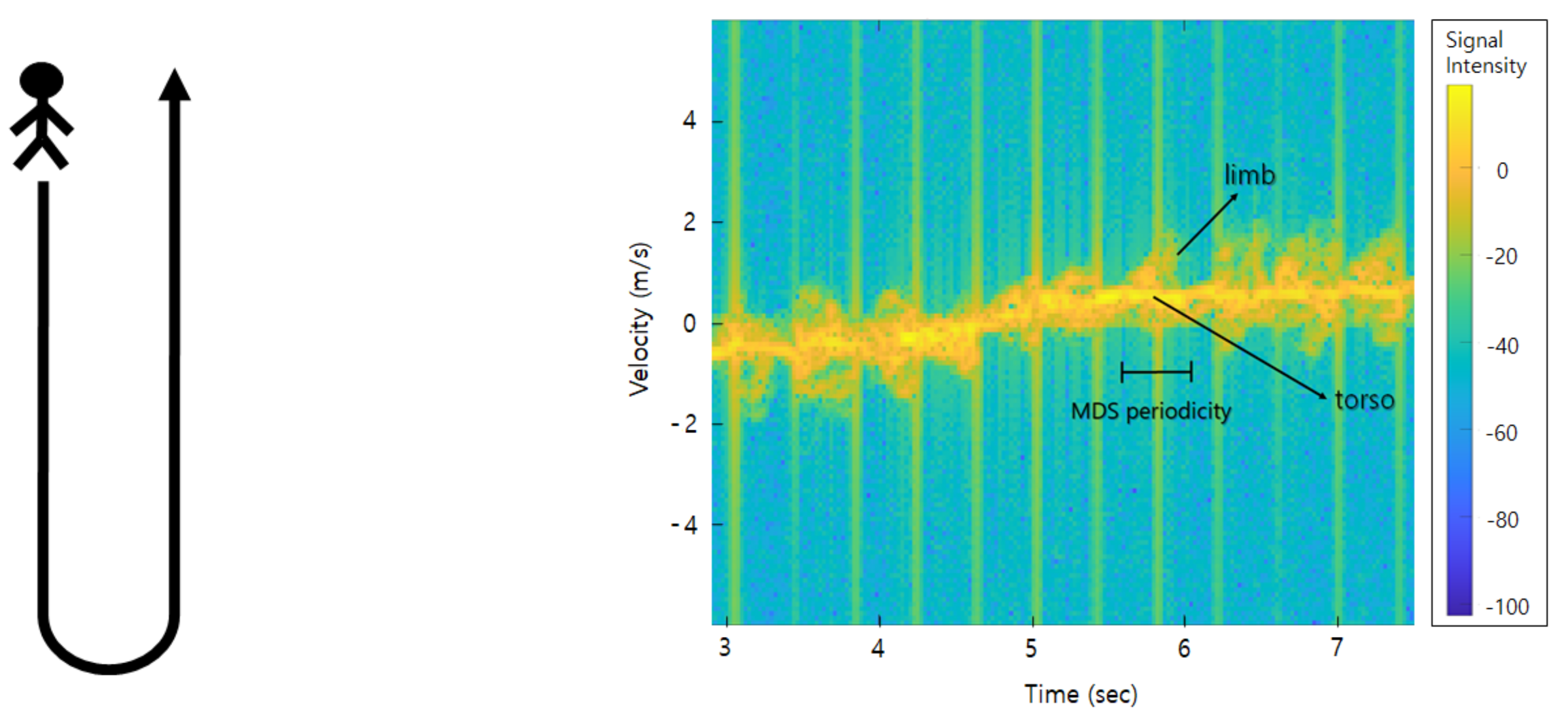

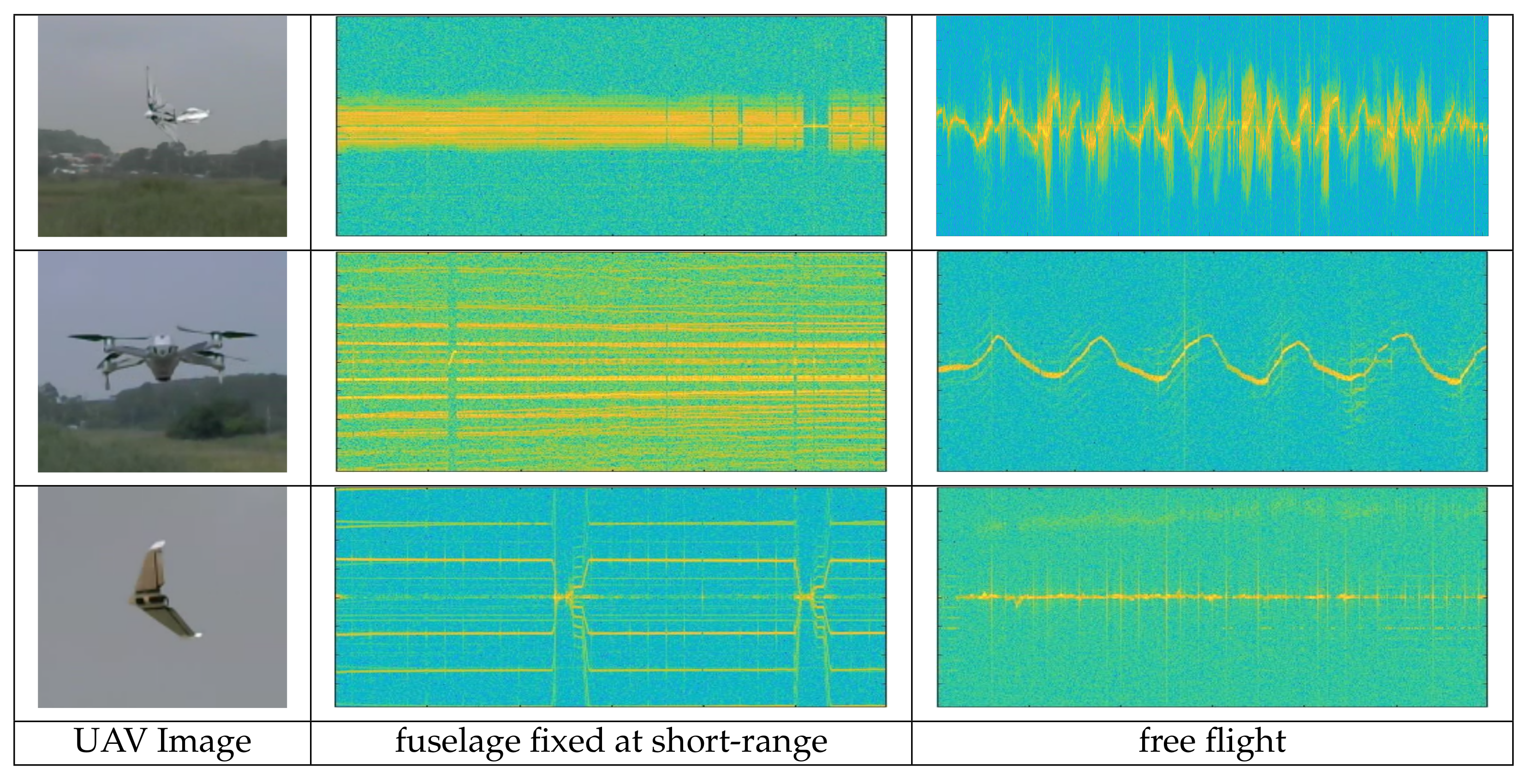

In the time-velocity spectrogram, the vertical axis represents the target’s radial velocity relative to the radar: a radial velocity component above the center (positive velocity) indicates that the target is moving away from the radar, a component below the center (negative velocity) indicates that the target is moving toward the radar, and the center represents zero velocity. The continuous waveform of the target over time generates a trajectory on the spectrogram that represents the movement characteristics of the target type. As an example, the difference in trajectory caused by the flight dynamics of fixed-wing aircraft and multi-copters is explained below. In a fixed-wing UAV, the propeller is fixed at the front or rear, so the thrust acts in only one direction. Accordingly, the direction changes gradually through three factors: the inclination of the ailerons at the rear of the main wing, the elevator of the horizontal tail wing, and the rudder of the vertical tail wing. In contrast, in a multi-copter, several rotors are distributed over the top of the fuselage. To change direction, it tilts the fuselage by varying the rotation rate of each rotor, so not only gradual but also drastic changes of direction in all azimuths are possible. These distinctive flight characteristics appear as time-varying trajectories on the spectrogram: for the fixed-wing UAV the trajectory is gradual and curved, whereas for the multi-copter it is sharp and vertical. The model learns this trajectory as a characteristic of the target along with the spectrogram shape, and a spectrogram with a high overlap ratio represents the change in radial velocity in more detail. We applied three window overlap ratios for each window size. In the STFT process, the data were thus augmented ninefold by applying three window sizes and three window overlap ratios to one original signal.

We performed data refinement after the STFT. A UAV signal has low intensity because of the UAV’s small size and materials such as plastic or reinforced styrofoam. As the distance increases, the signal intensity drops sharply or the signal is not detected at all. Consequently, the spectrogram contains many non-recorded sections that resemble background clutter.
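The ninefold STFT augmentation can be sketched with NumPy as follows. This is an illustration under stated assumptions, not the processing chain used for the dataset: the Hann window, the synthetic complex test signal, its 1000 Hz sampling rate, and the `spectrogram` helper are all hypothetical.

```python
import numpy as np


def spectrogram(signal, window_size, overlap):
    """Squared-magnitude STFT, shape (frequency bins, time frames)."""
    hop = max(1, int(round(window_size * (1.0 - overlap))))
    window = np.hanning(window_size)  # window choice is an assumption
    n_frames = 1 + (len(signal) - window_size) // hop
    frames = np.stack([
        signal[i * hop:i * hop + window_size] * window
        for i in range(n_frames)
    ])
    # fftshift puts zero Doppler in the middle row of the image.
    spectrum = np.fft.fftshift(np.fft.fft(frames, axis=1), axes=1)
    return (np.abs(spectrum) ** 2).T


# Synthetic stand-in for a recorded complex (I/Q) radar signal.
rng = np.random.default_rng(0)
t = np.arange(4096) / 1000.0
noise = 0.1 * (rng.standard_normal(4096) + 1j * rng.standard_normal(4096))
signal = np.exp(2j * np.pi * 100.0 * t) + noise

variants = {
    (w, o): spectrogram(signal, w, o)
    for w in (128, 256, 512)
    for o in (0.50, 0.70, 0.85)
}
for (w, o), spec in variants.items():
    print(f"window {w}, overlap {o:.2f}: shape {spec.shape}")
```

For a fixed window size, a higher overlap ratio produces more time frames from the same signal, which is where both the finer trajectory and the extra computation come from.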

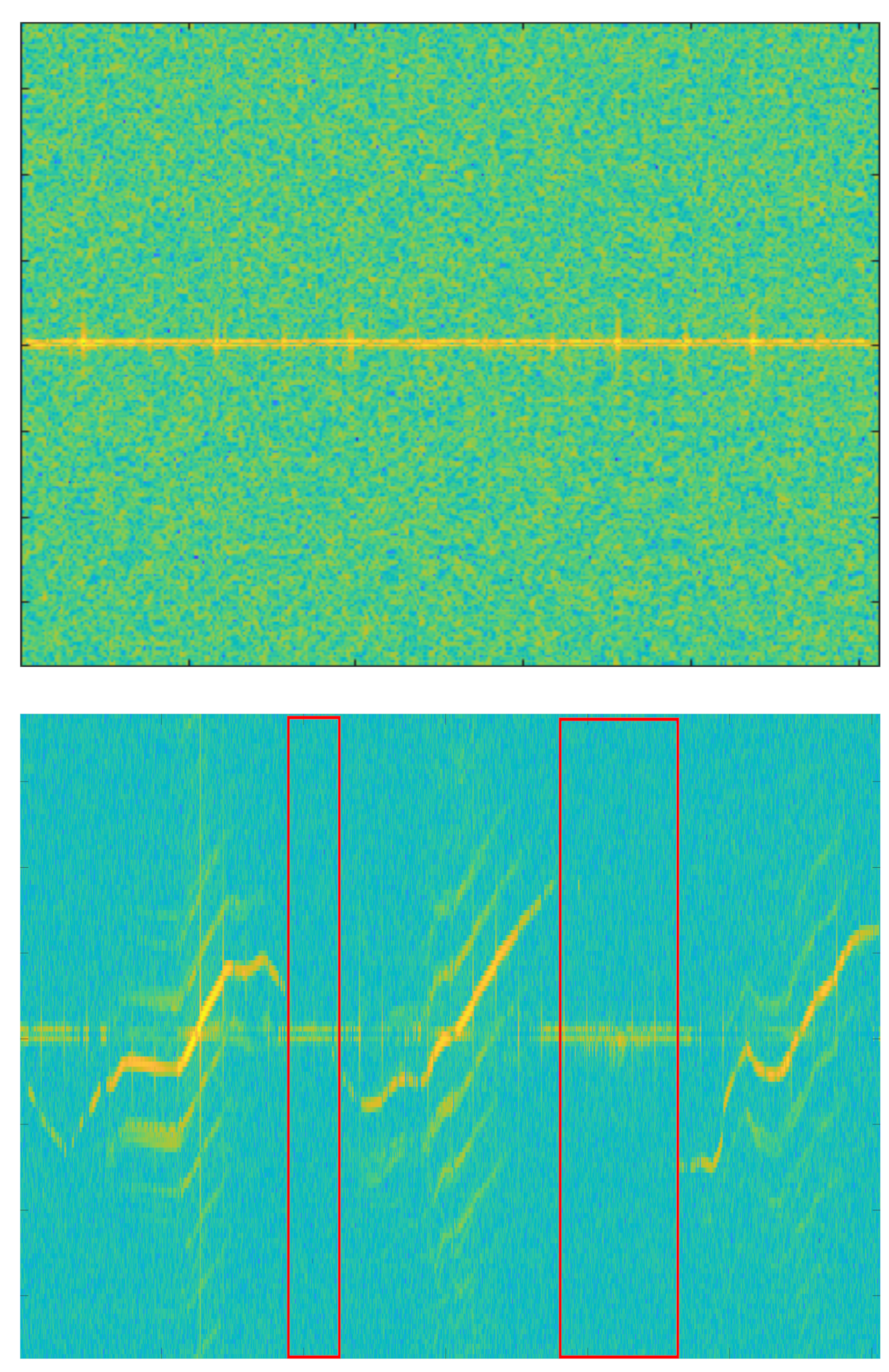

Figure 8 shows the spectrogram for the background clutter and Mavic Air 2.

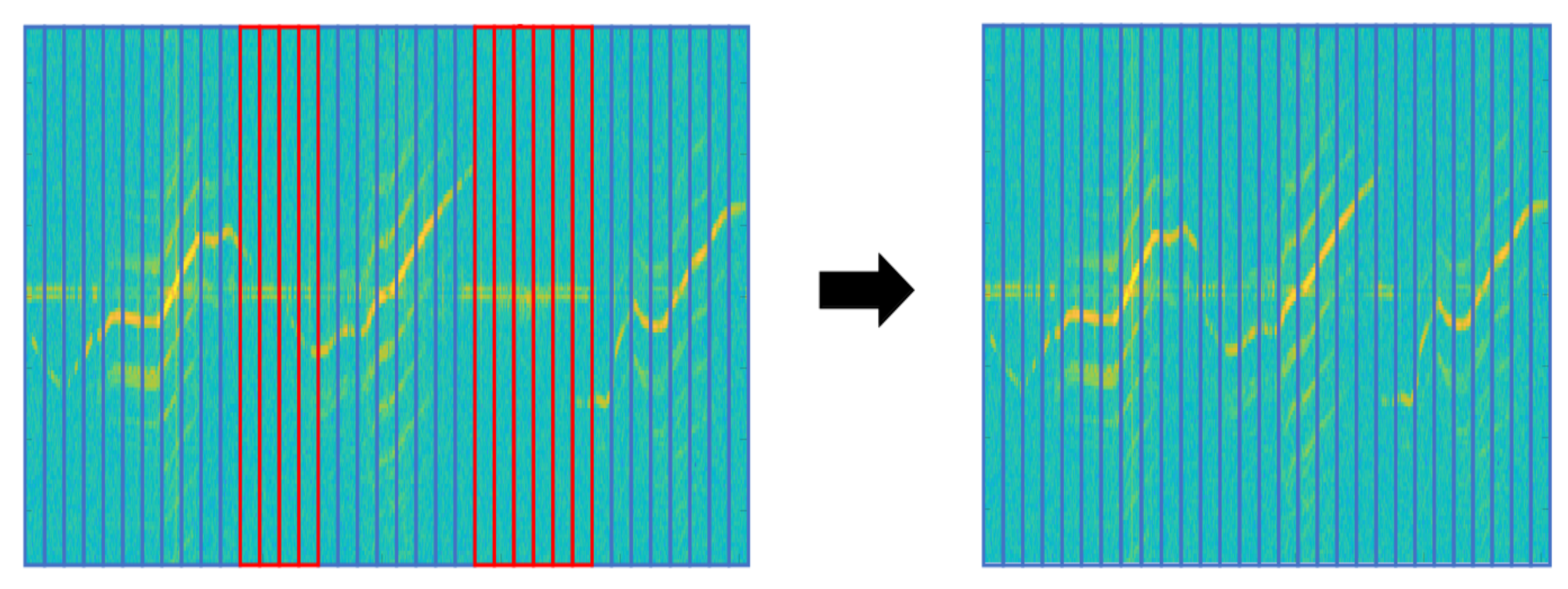

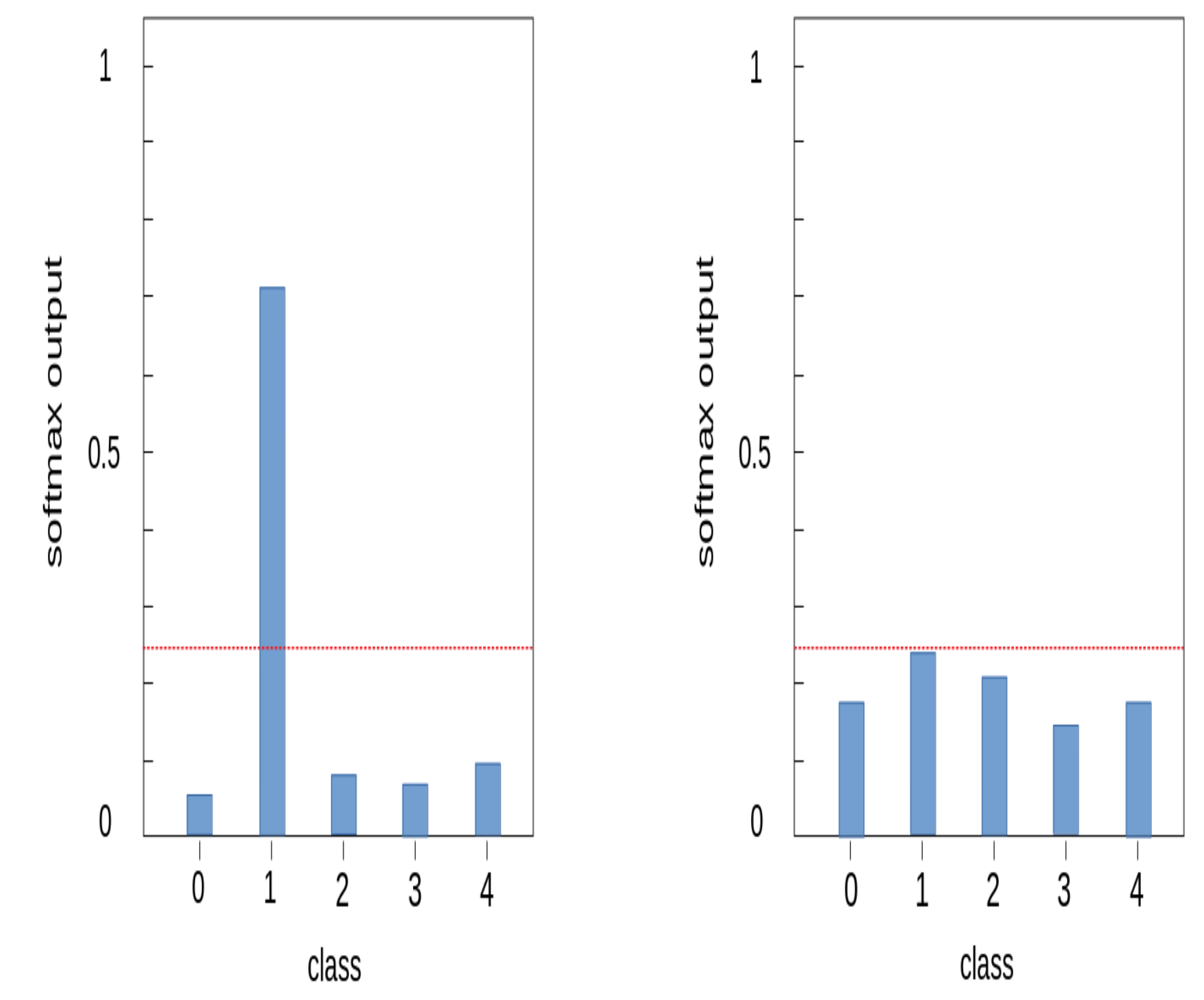

In the spectrogram of Mavic Air 2 on the right, the red box marks non-recorded sections caused by the target’s low signal intensity. When a deep learning model is trained on data that include these non-recorded sections, it is hard to expect correct performance. We therefore applied the following data refinement process to remove such abnormal data. If the target is well captured, a clear spectrogram shape with strong intensity appears around a specific velocity component, and harmonic components appear parallel to it. Based on this property, we first chopped the image at a time interval equal to the MDS periodicity of the target. Then, we removed the chopped images with an average intensity below the threshold and stitched together those with an average intensity above the threshold. If the threshold is too high, only high-intensity signals recorded at short range are retained, and low-intensity signals at long range may be removed even though they show the MDS shape. Conversely, if the threshold is too low, the non-recorded sections cannot be removed. We therefore determined the threshold by referring to the average intensity values of the background clutter and of the non-recorded sections of the UAV spectrograms.

Figure 9 represents the data refinement process for the Mavic Air 2 spectrogram. The spectrogram is cut at equal time intervals, and the cut images with average intensity below the threshold (red boxes) are removed. Images above the threshold (blue boxes) are stitched together to generate a refined spectrogram. Approximate MDS periodicity: Walking (1/2 s), Metafly (1/24 s), Mavic Air 2 (1/92 s), Disco (1/183 s).
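The chop, threshold, and stitch steps can be sketched as below. The `refine` helper, the toy spectrogram, the segment width in columns, and the threshold value are hypothetical; in practice the segment width would correspond to the MDS period of the target and the threshold would be chosen from measured clutter intensities.

```python
import numpy as np


def refine(spec, segment_width, threshold):
    """Drop time segments whose mean intensity is below the threshold.

    spec has shape (frequency bins, time frames). Trailing columns that
    do not fill a whole segment are discarded.
    """
    n_segments = spec.shape[1] // segment_width
    kept = []
    for k in range(n_segments):
        segment = spec[:, k * segment_width:(k + 1) * segment_width]
        if segment.mean() >= threshold:
            kept.append(segment)
    if not kept:
        return spec[:, :0]  # nothing survived: empty spectrogram
    return np.concatenate(kept, axis=1)  # stitch the retained segments


# Toy spectrogram: uniform clutter floor plus a target in two time spans.
spec = np.full((64, 100), 0.1)
spec[30:34, 20:50] += 5.0
spec[30:34, 70:90] += 5.0

refined = refine(spec, segment_width=10, threshold=0.3)
print(spec.shape, "->", refined.shape)
```

In this toy case the clutter-only segments have a mean of 0.1 and the target segments about 0.41, so any threshold between the two keeps exactly the five segments that contain the target.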

The data refinement process was applied to only two UAV targets (Mavic Air 2 and Disco) with many non-recorded sections on the spectrogram.

Table 2 shows the change in the spectrogram size before and after the refinement for these two targets. After refinement, the spectrogram size of Mavic Air 2 was reduced by about 25% and that of Disco by about 50%.

In particular, the spectrogram size of Disco was significantly reduced because of the flight characteristics of a fixed-wing UAV. Disco has a single rotor mounted at the rear of the fuselage, so the thrust acts only forward, and the direction changes gradually through the ailerons at the wing-tips. It therefore requires a wide turning radius and often leaves the radar detection range. In addition, its RCS is very low because the fuselage is made of reinforced styrofoam. In other words, Disco was difficult to record because of its low RCS, and because of flight characteristics such as high-speed movement and a wide turning radius, it stayed within the radar detection range only for a short time. The data refinement process increased model stability.

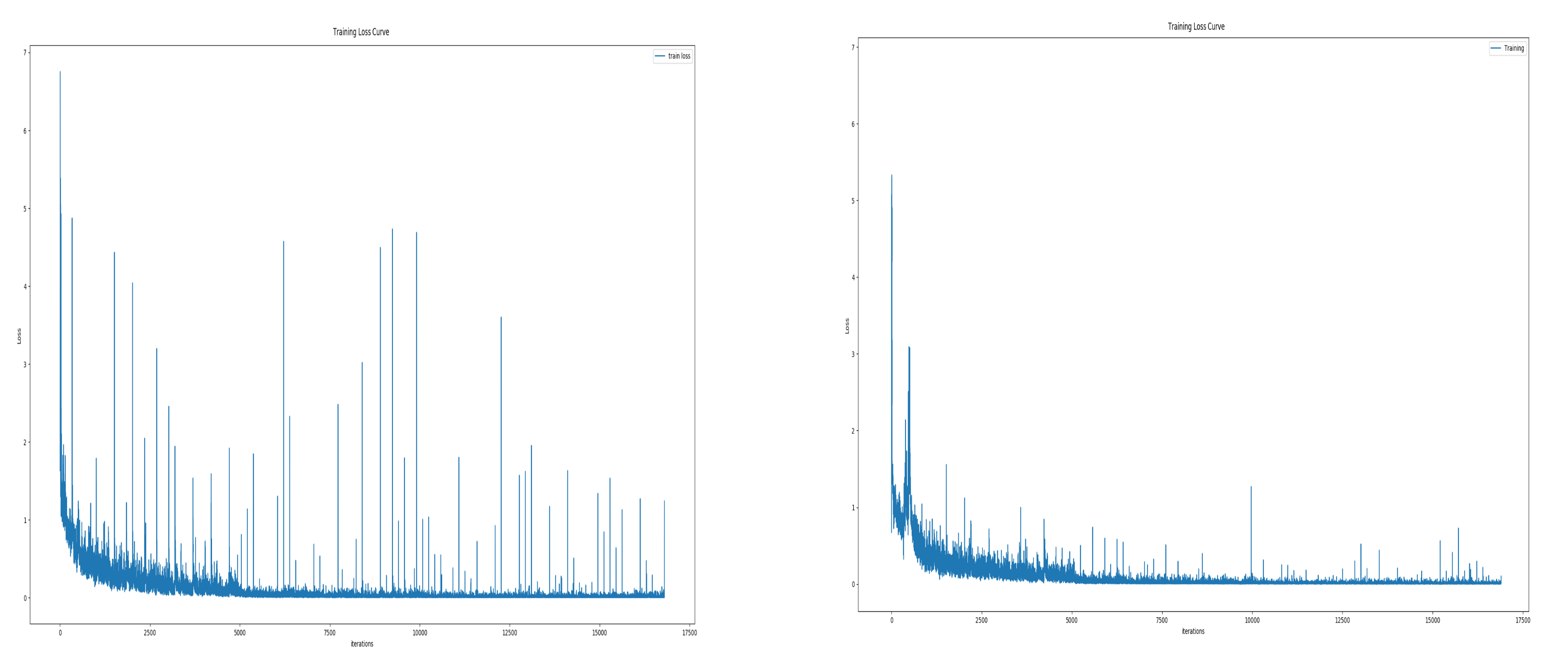

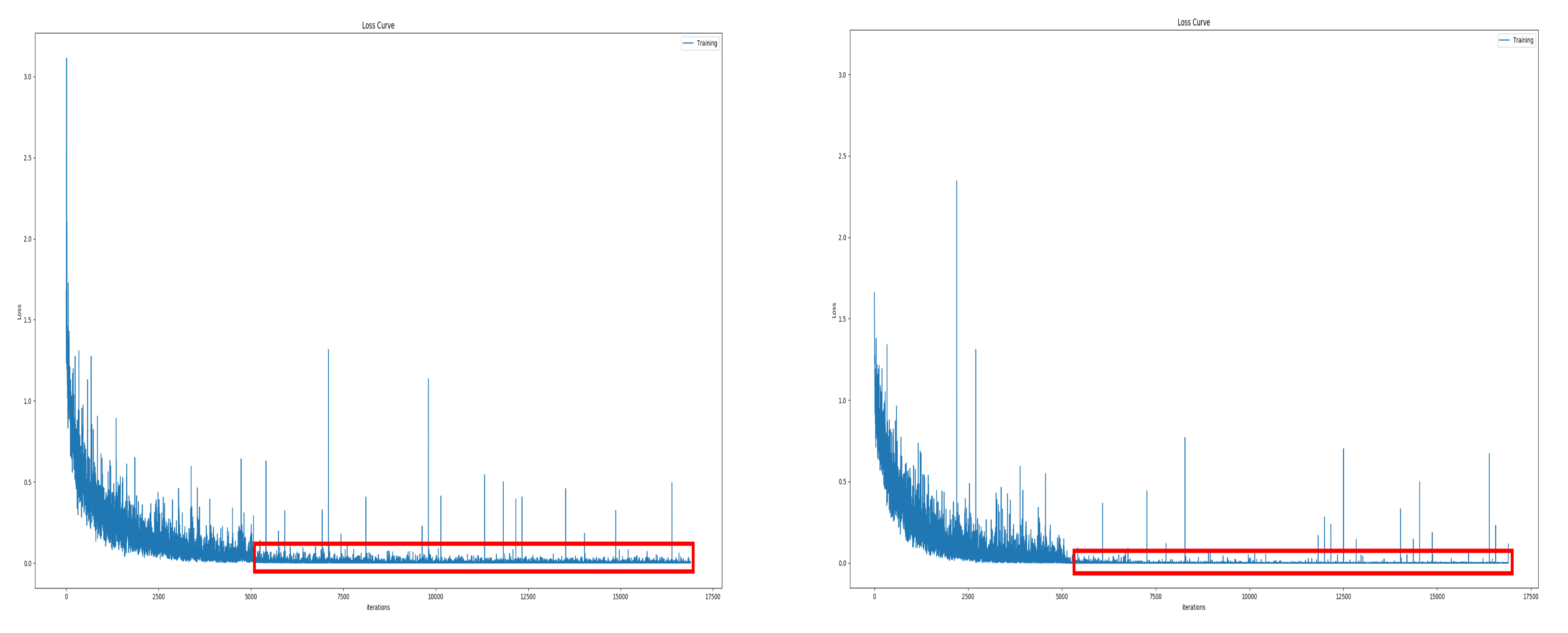

Figure 10 shows the training loss curves before and after data refinement. When training with unrefined data, accuracy often fell significantly during training and the test accuracy also showed a large deviation. This can be seen in the number and size of spikes in the training loss curve on the left. Conversely, with refined spectrogram data, both the drastic accuracy drops during training and the variation in test accuracy were reduced. This can be seen in the figure on the right, where the size and number of spikes decreased.

After the refinement process, we applied a vertical flip to the spectrograms, which yielded additional spectrograms with the sign of the radial velocity reversed. In total, we could generate 18 different spectrograms from one original radar signal by applying three window sizes, three window overlap ratios, and the vertical flip.

Table 3 shows the data augmentation methods applied when generating the training data. The test dataset was generated by applying only one window size (128) and one overlap ratio (70%), without data augmentation.

For the training data, after pre-processing, each spectrogram is resized to a height of 128 and then cut into 128 × 128 spectrogram images with a 50% overlap ratio. The test data are cut into 128 × 128 spectrogram images with a 75% overlap ratio after pre-processing. To prevent class imbalance, the number of examples per class was balanced in both the training data and the test data: about 2000 per class in the training data and about 200 per class in the test data.
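A minimal sketch of the flip step, assuming each spectrogram is stored as an array with velocity along the first axis and zero velocity in the middle row; the `augment_with_flip` helper and the random placeholder arrays are hypothetical.

```python
import numpy as np


def augment_with_flip(spectrograms):
    """Return each spectrogram followed by its vertically flipped copy."""
    augmented = []
    for spec in spectrograms:
        augmented.append(spec)
        # Reversing the velocity axis swaps approaching and receding motion.
        augmented.append(np.flipud(spec))
    return augmented


# Placeholders for the nine STFT variants of one recording.
rng = np.random.default_rng(0)
nine_variants = [rng.random((128, 200)) for _ in range(9)]

eighteen = augment_with_flip(nine_variants)
print(len(eighteen))
```

The flip leaves the time axis untouched, so the temporal order of the micro-Doppler pattern is preserved while the sign of the radial velocity is reversed.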
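The resizing and cutting step can be sketched as follows. The nearest-neighbour row selection in `resize_height` is an assumption (the resizing method is not specified), and the helper names and the placeholder spectrogram are hypothetical.

```python
import numpy as np


def resize_height(spec, height=128):
    """Nearest-neighbour resize along the frequency axis (assumed method)."""
    rows = np.linspace(0, spec.shape[0] - 1, height).round().astype(int)
    return spec[rows]


def cut_patches(spec, size=128, overlap=0.5):
    """Slide a size-wide window along time with the given overlap ratio."""
    step = max(1, int(round(size * (1.0 - overlap))))
    starts = range(0, spec.shape[1] - size + 1, step)
    return [spec[:, s:s + size] for s in starts]


# Placeholder spectrogram: 512 frequency bins by 640 time frames.
rng = np.random.default_rng(0)
spec = resize_height(rng.random((512, 640)))

train_patches = cut_patches(spec, overlap=0.50)  # step of 64 columns
test_patches = cut_patches(spec, overlap=0.75)   # step of 32 columns
print(len(train_patches), len(test_patches))
```

A higher overlap ratio yields more images from the same spectrogram, so the 75% setting extracts roughly twice as many test images per recording as the 50% setting would.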

Table 4 shows the number of examples for each class in our dataset.