A Method for Chlorophyll-a and Suspended Solids Prediction through Remote Sensing and Machine Learning

Abstract

1. Introduction

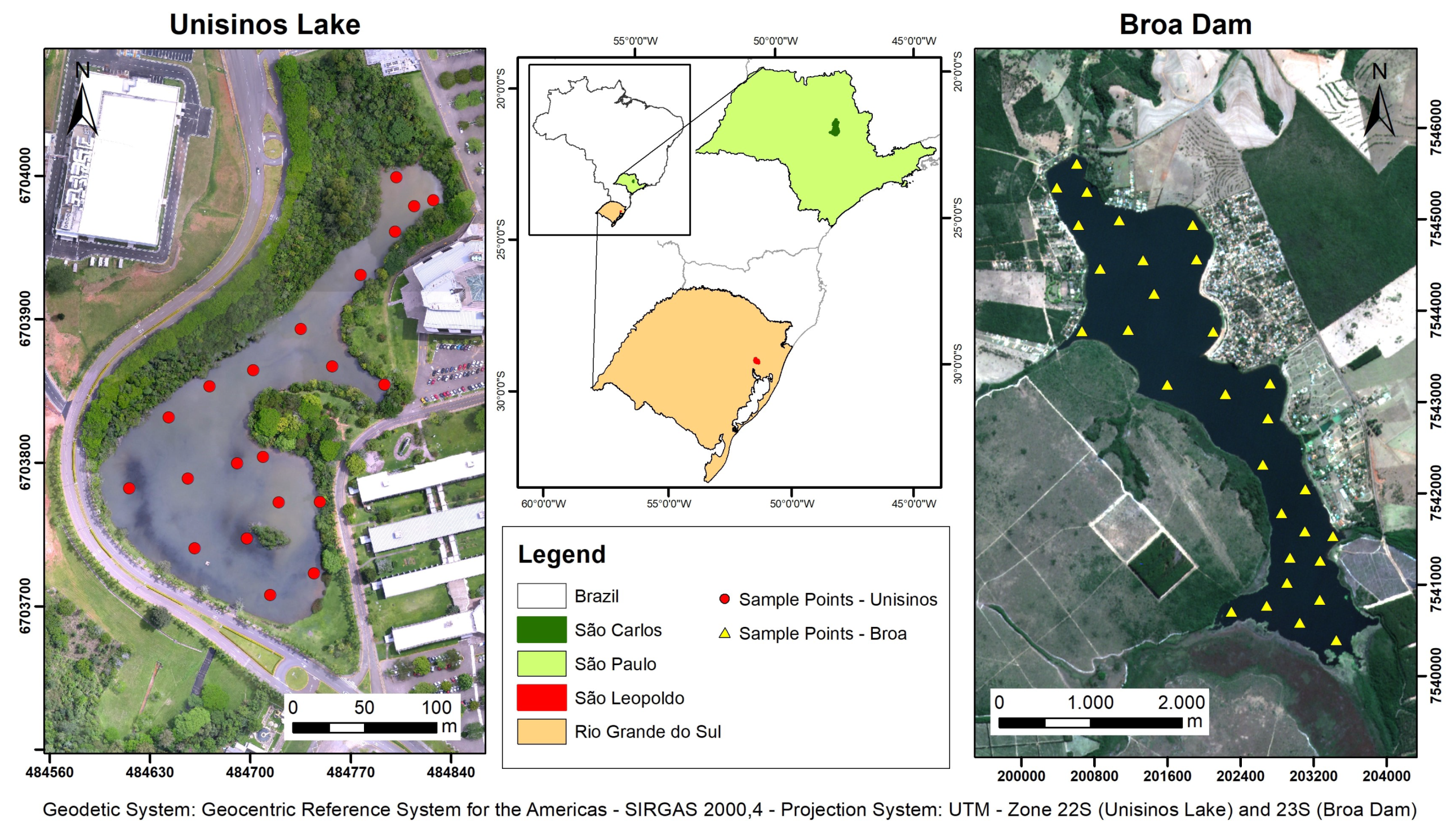

2. Materials and Methods

2.1. Water Quality Assessment

2.2. Remote Sensing

2.3. GIS

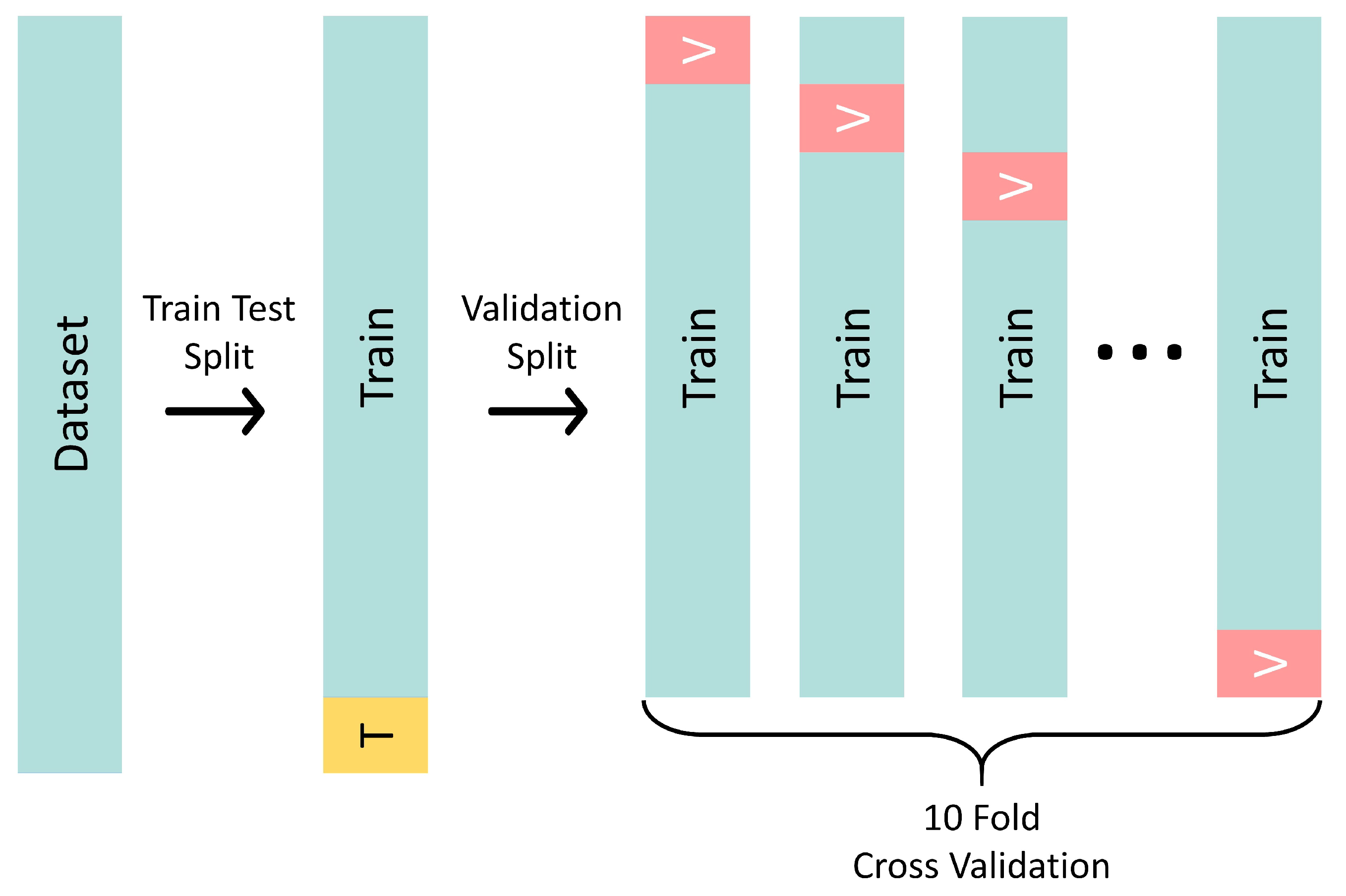

2.4. Machine Learning

3. Case Studies

4. Results

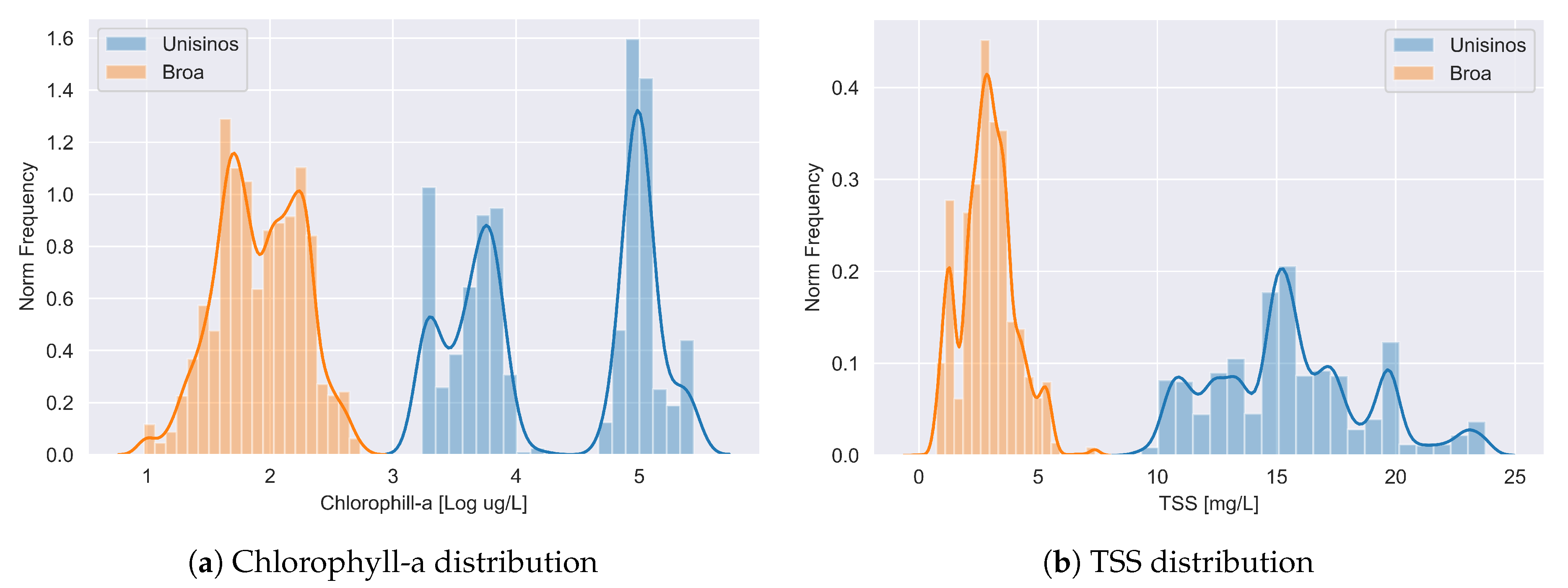

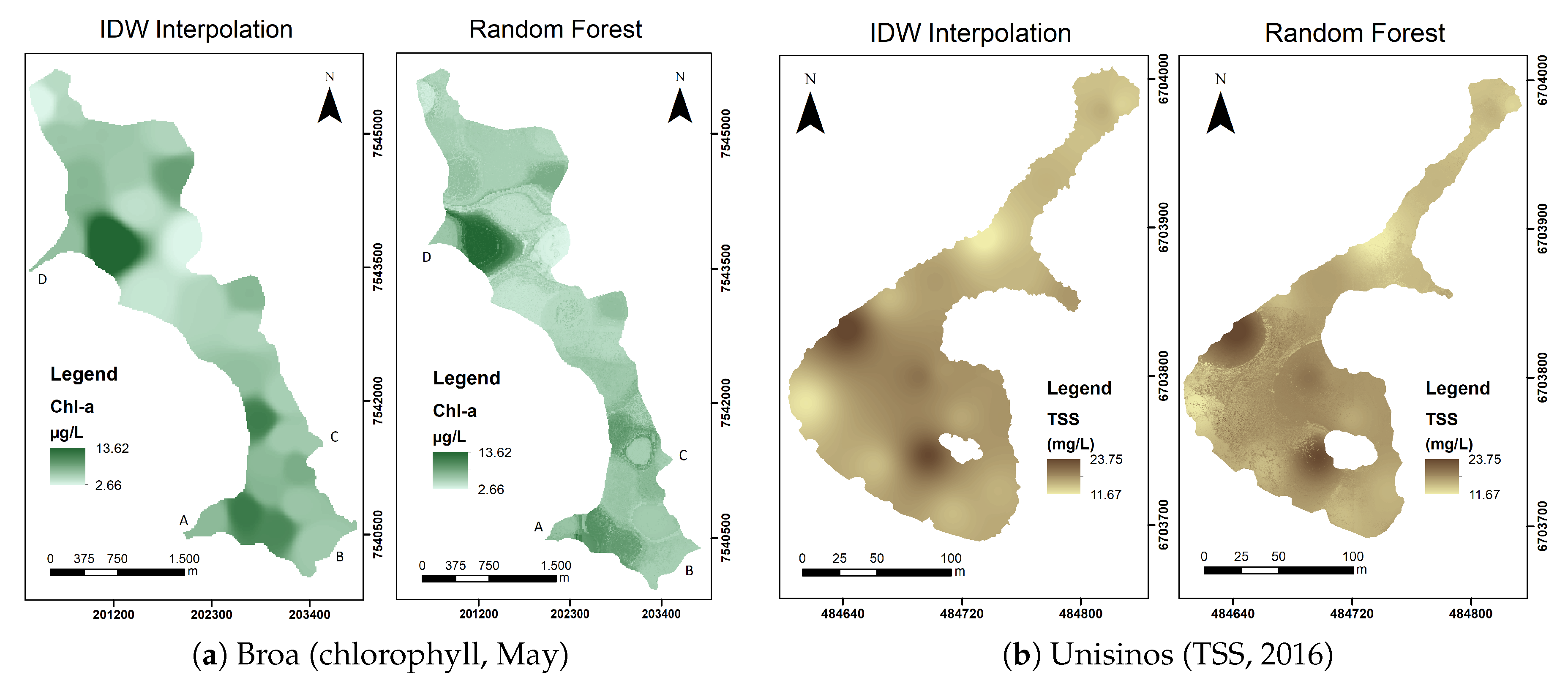

4.1. Water Quality and GIS Results

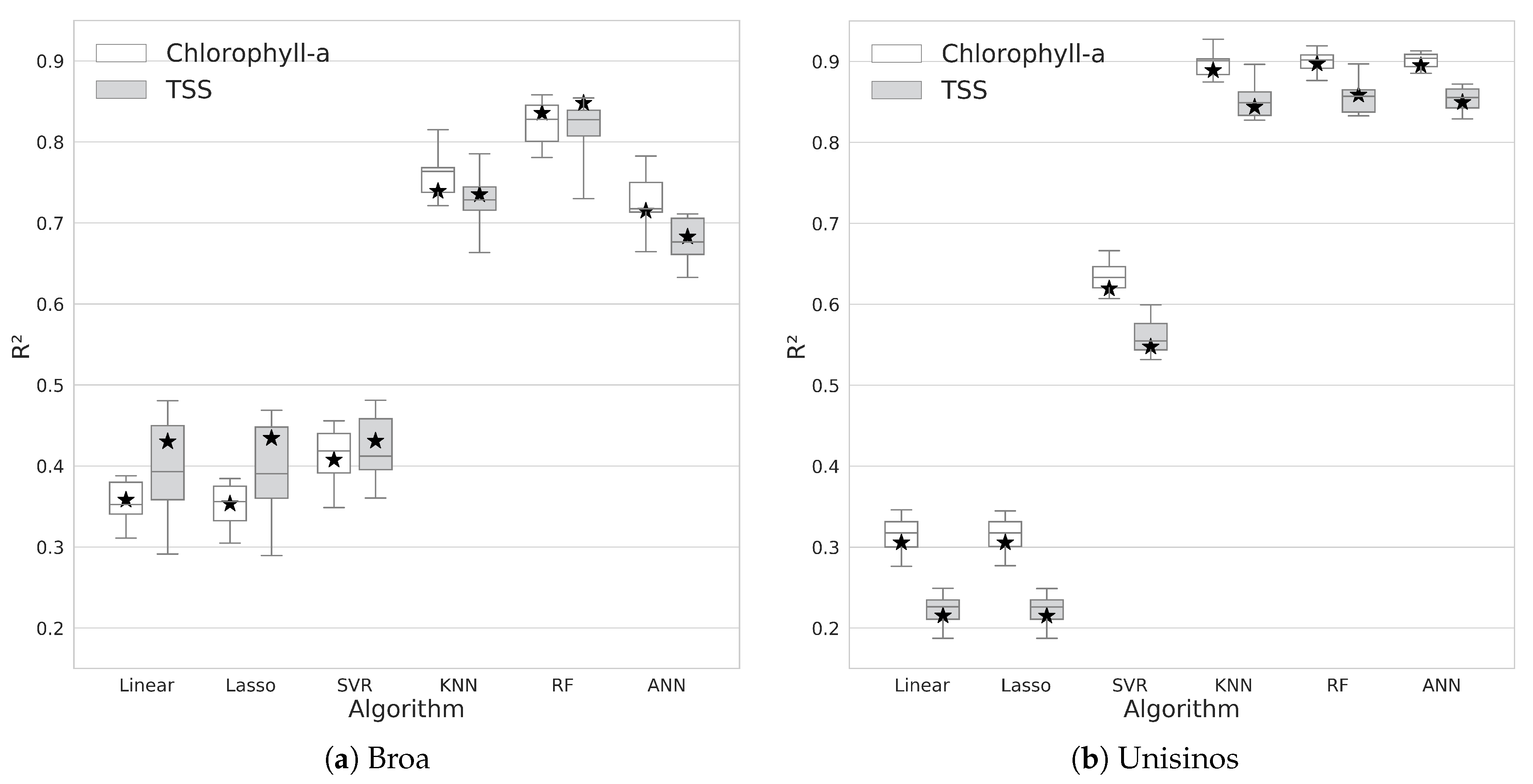

4.2. Machine Learning Results

4.3. Application of the Trained Model

5. Discussion

6. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Variable | Statistic | Field Data: Broa | Field Data: Unisinos | IDW: Broa | IDW: Unisinos |
|---|---|---|---|---|---|
| Sample Size | | 90 | 42 | 3933 | 35,028 |
| Chlorophyll-a (µg/L) | Mean | 7.35 | 94.30 | 7.18 | 96.88 |
| | Median | 7.20 | 67.52 | 6.71 | 91.65 |
| | Mode | 6–10 | 50–150 | 5.5–10 | 44–137 |
| | Standard Deviation | 2.66 | 59.04 | 2.46 | 62.12 |
| Total Suspended Solids (mg/L) | Mean | 3.05 | 14.93 | 2.92 | 15.56 |
| | Median | 2.92 | 14.92 | 2.91 | 15.34 |
| | Mode | 3 | 15 | 3 | 16 |
| | Standard Deviation | 1.26 | 3.29 | 1.12 | 3.19 |
| pH | Mean | 5.70 | 8.67 | 5.69 | 8.89 |
| | Median | 5.84 | 8.85 | 5.81 | 9.15 |
| | Standard Deviation | 0.55 | 0.82 | 0.53 | 0.68 |
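The IDW columns in the table above summarize values obtained by inverse distance weighting (IDW), which estimates a variable at an unsampled location as a weighted average of the measured samples, each weight being the inverse of the distance to that sample raised to a power p. The much larger IDW sample sizes (3933 and 35,028, against 90 and 42 field samples) are consistent with values evaluated on a raster grid. The following minimal Python sketch illustrates the technique only; the power p = 2, the planar coordinates, and the function names `idw` and `idw_grid` are assumptions for illustration, not the settings used in this study.

```python
import math


def idw(samples, x, y, power=2.0):
    """Inverse distance weighting estimate at point (x, y).

    samples: list of (xi, yi, value) tuples, e.g. field measurements.
    power: distance exponent p; 2.0 is an assumed, commonly used default.
    """
    if not samples:
        raise ValueError("IDW needs at least one sample")
    num = 0.0
    den = 0.0
    for xi, yi, vi in samples:
        d = math.hypot(x - xi, y - yi)
        if d == 0.0:
            # The query point coincides with a sample: return the measured value.
            return vi
        w = 1.0 / d ** power  # weight decays with distance
        num += w * vi
        den += w
    return num / den


def idw_grid(samples, xs, ys, power=2.0):
    """Evaluate IDW on a regular grid; returns one row per y coordinate."""
    return [[idw(samples, x, y, power) for x in xs] for y in ys]
```

For example, with two samples of 5.0 and 9.0 placed 10 units apart, the estimate at the midpoint is their plain average (7.0), because both weights are equal. Since every estimate is a weighted average of the samples, the interpolated surface never leaves the range of the measured values; a larger p makes each estimate depend more on the nearest samples.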

| Model | Unisinos: Chl-a | Unisinos: TSS | Broa: Chl-a | Broa: TSS |
|---|---|---|---|---|
| Linear regression | 0.31521 | 0.22380 | 0.35389 | 0.39648 |
| | 0.03642 | 0.04681 | 0.01642 | 0.01345 |
| LASSO | 0.31517 | 0.22379 | 0.35126 | 0.39457 |
| | 0.03643 | 0.04681 | 0.01649 | 0.01349 |
| KNN | 0.89644 | 0.85111 | 0.76172 | 0.72900 |
| | 0.00549 | 0.00895 | 0.00607 | 0.00602 |
| SVR | 0.63457 | 0.56024 | 0.41329 | 0.42219 |
| | 0.01942 | 0.02650 | 0.01491 | 0.01290 |
| RF | 0.90012 | 0.85603 | 0.82338 | 0.81371 |
| | 0.00531 | 0.00867 | 0.00450 | 0.00415 |
| ANN | 0.90138 | 0.85371 | 0.72573 | 0.67819 |
| | 0.00524 | 0.00882 | 0.00700 | 0.00719 |
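The table above compares linear regression, LASSO, KNN, SVR, random forest (RF), and an artificial neural network (ANN) for each water body and variable, with two values per model that are not labelled in the table. One common way to set up such a comparison is k-fold cross-validation that reports a mean score and a mean error per model. The Python sketch below illustrates that setup with the standard library only. It is not this study's protocol: the hand-rolled KNN regressor and the mean-value baseline are stand-ins, and the names `knn_regressor`, `mean_baseline`, and `k_fold_scores`, the choice of k = 5 folds, and the use of R² and MSE as the two metrics are all assumptions.

```python
import math
import random


def knn_regressor(k):
    """Return a fit function that builds a k-nearest-neighbour regressor.

    Uses Euclidean distance and an unweighted mean of the k nearest targets.
    """
    def fit(X, y):
        def predict(x):
            # Rank training points by distance to the query; average the k closest targets.
            ranked = sorted((math.dist(x, xi), yi) for xi, yi in zip(X, y))
            nearest = ranked[:k]
            return sum(yi for _, yi in nearest) / len(nearest)
        return predict
    return fit


def mean_baseline(X, y):
    """Fit function that always predicts the training mean (a reference floor)."""
    m = sum(y) / len(y)
    return lambda x: m


def r2_and_mse(y_true, y_pred):
    """Coefficient of determination and mean squared error for one fold."""
    n = len(y_true)
    mean = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return r2, ss_res / n


def k_fold_scores(fit, X, y, k=5, seed=0):
    """Shuffle once, split into k folds, return (mean R2, mean MSE) over folds."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    r2s, mses = [], []
    for test in folds:
        held_out = set(test)
        train = [i for i in idx if i not in held_out]
        model = fit([X[i] for i in train], [y[i] for i in train])
        preds = [model(X[i]) for i in test]
        r2, mse = r2_and_mse([y[i] for i in test], preds)
        r2s.append(r2)
        mses.append(mse)
    return sum(r2s) / k, sum(mses) / k


if __name__ == "__main__":
    # Hypothetical 1-D data standing in for per-pixel spectral features.
    X = [(i / 10.0,) for i in range(100)]
    y = [math.sin(x[0]) for x in X]
    for name, fit in [("KNN (k=5)", knn_regressor(5)), ("mean baseline", mean_baseline)]:
        r2, mse = k_fold_scores(fit, X, y)
        print(f"{name}: R2={r2:.3f}, MSE={mse:.4f}")
```

A model ranks higher when its mean R² is larger and its mean MSE smaller. A constant predictor can never score above zero R² on a held-out fold, so the mean baseline serves as a floor. Reusing the same `seed` for every model gives all of them identical folds, which keeps the comparison paired.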

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Silveira Kupssinskü, L.; Thomassim Guimarães, T.; Menezes de Souza, E.; C. Zanotta, D.; Roberto Veronez, M.; Gonzaga, L., Jr.; Mauad, F.F. A Method for Chlorophyll-a and Suspended Solids Prediction through Remote Sensing and Machine Learning. Sensors 2020, 20, 2125. https://doi.org/10.3390/s20072125
