2.1. Design of the Speckle Pattern

The speckle pattern is projected onto the object’s surface to provide rich textures; therefore, the first step is to design a good pattern. Preferably, pseudo-random dots are utilized [24]. The random distribution of the speckle dots is the prerequisite for unique feature matching. To further support robust and accurate feature matching, the speckle distribution should meet one basic requirement: rich texture.

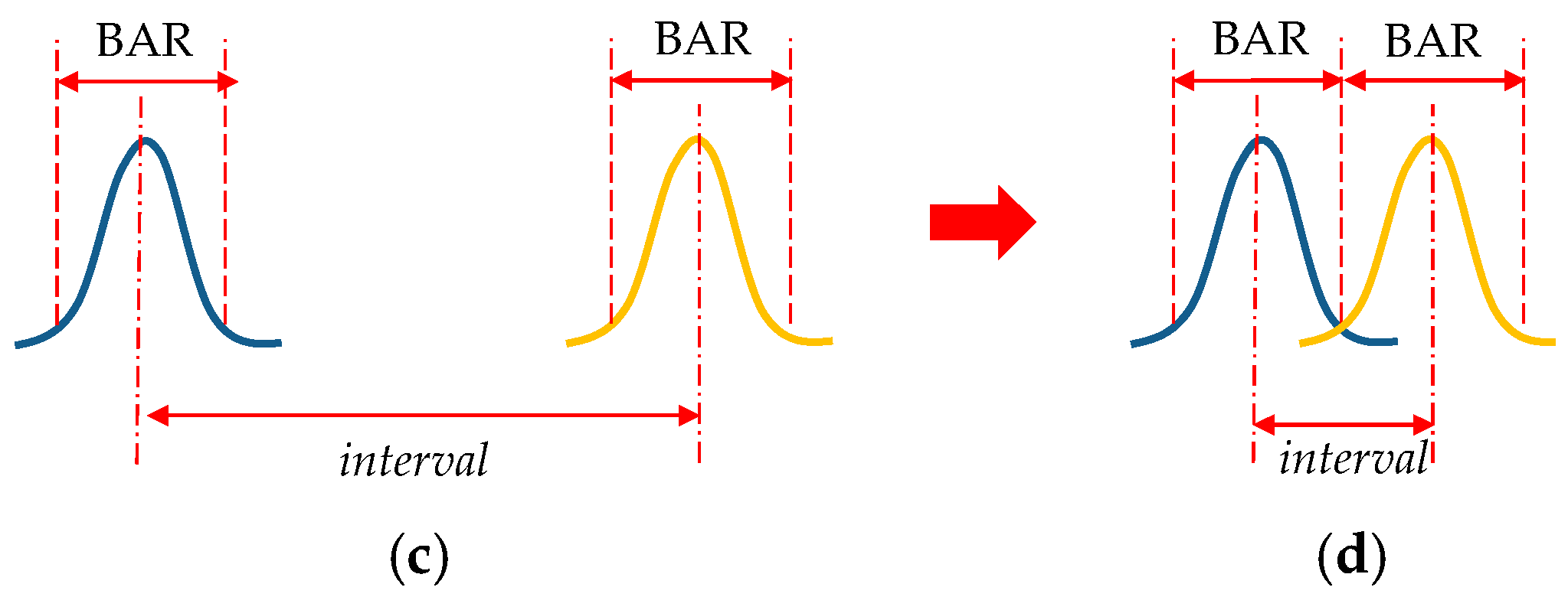

Before elaborating on the method of designing a rich-textured speckle pattern, two definitions should be introduced. The distance between adjacent speckle dots, called the interval, is a key index that determines the texture richness. The value of the interval is closely related to the brightness attenuation range (BAR) of each projected dot. The definitions of the interval and the BAR are illustrated in Figure 2. Ideally, the brightness of an optical dot follows a Gaussian distribution, as shown in Figure 2a. The BAR is generally defined as the extent, in pixels, over which the brightness is reduced by 95%, as displayed in Figure 2b. In practice, the actual BAR of the projected dots is independent of the speckle pattern itself and is affected by two main factors: (a) the optical design and manufacturing quality of the DOE projector, and (b) the projection distance. Different manufacturing levels and working distances therefore produce different BARs, so the actual BAR can only be determined empirically, with the mean BAR taken as a reference.

As can be seen from Figure 2c, the interval governs the texture richness. If the interval is too large, the dots are sparse, which leads to less texture; if it is too small, the dots are dense, which may lead to more overexposed areas with unrecognizable textures. Therefore, it is important to choose an appropriate interval. One reasonable and convenient choice is to set the interval equal to the BAR, as shown in Figure 2d.
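As a worked illustration of the 95% criterion, the following minimal sketch computes the BAR of an ideal Gaussian dot. It assumes a brightness profile of the form exp(-r²/(2σ²)) and interprets the BAR as a radius measured from the dot center; the helper name `bar_radius` and the parameter `sigma` are hypothetical and do not come from the paper.

```python
import math

def bar_radius(sigma: float, drop: float = 0.95) -> float:
    """Radius at which a Gaussian profile exp(-r^2 / (2 sigma^2))
    has lost the fraction `drop` of its peak brightness.
    Assumption: the BAR is measured as a radius from the dot center."""
    remaining = 1.0 - drop                      # fraction of peak brightness left
    return sigma * math.sqrt(-2.0 * math.log(remaining))

# Example: BAR of a dot with sigma = 1 pixel
radius = bar_radius(sigma=1.0)
brightness_at_radius = math.exp(-radius**2 / 2.0)
```

Under these assumptions, the BAR is about 2.45σ, so a measured BAR gives a direct estimate of the dot spread and, in turn, a candidate interval.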

Now we return to the question of designing a good pattern. Based on the above observation, an algorithm that generates a pseudo-random speckle pattern subject to a predefined constraint window is proposed and given as pseudo-code in Algorithm 1. In practice, the size of the constraint window is set according to the BAR of each projected dot.

| Algorithm 1. Generation of a Speckle Pattern |
| --- |
| // pre-define the constraint window |
| // set the resolution of the speckle pattern: width * height |
| For loop = 1 : height * width, do |
| u: generate a random row coordinate within the range [1, height] |
| v: generate a random column coordinate within the range [1, width] |
| // judge whether the constraint condition is satisfied |
| If there are no dots in the constraint window centered at (u, v) |
| Put a dot at (u, v) |
| Else |
| No dots are added |
| End If |
| End For |
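Algorithm 1 can be sketched in Python as follows. This is an illustrative version under stated assumptions, not the authors' implementation: the function name `generate_speckle_pattern`, the zero-based coordinates, the clipping of the window at the image border, and the seeded NumPy generator are all choices made here.

```python
import numpy as np

def generate_speckle_pattern(height=480, width=640, window=5, seed=0):
    """Place dots at random positions, rejecting any candidate whose
    constraint window (window x window pixels) already contains a dot."""
    rng = np.random.default_rng(seed)
    half = window // 2
    pattern = np.zeros((height, width), dtype=bool)
    for _ in range(height * width):
        u = int(rng.integers(0, height))   # random row, zero-based
        v = int(rng.integers(0, width))    # random column, zero-based
        # Constraint window centered at (u, v), clipped at the borders
        r0, r1 = max(u - half, 0), min(u + half + 1, height)
        c0, c1 = max(v - half, 0), min(v + half + 1, width)
        if not pattern[r0:r1, c0:c1].any():
            pattern[u, v] = True           # constraint satisfied: add a dot
    return pattern

# Small example; the paper's setting would be height=480, width=640, window=5
pattern = generate_speckle_pattern(height=48, width=64, window=5, seed=0)
dot_count = int(pattern.sum())
```

Because the test is symmetric, any two accepted dots differ by more than `window // 2` pixels along at least one axis, which is what keeps neighboring dots from merging into overexposed regions.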

In this paper, we designed a pattern with a resolution of 640 × 480. Before designing the speckle pattern, we learned the empirical BAR value from the manufacturer, which helped to conduct multiple simulations and machining tests before the final version. At the current machining level, the BAR was about 5 pixels. Thus, the predefined constraint window was set to 5 × 5 pixels. The designed speckle pattern is displayed in Figure 3a. This pattern was then projected onto the surface of a standard plane using a DOE projector, as shown in Figure 3b. It can be seen that most speckle dots were projected correctly. The overexposed areas at the center of the pattern were caused by the projection device, an effect known as zero-order diffraction. It can be eliminated by improving the optical design of the microprojection module, which is not discussed further since it is not the focus of this paper. The texture richness of the designed pattern was evaluated using the method described in Appendix A, and the results are shown in Figure 3c. The textured regions are marked by red dots, and the textureless regions are marked by yellow dots. It can be seen that most regions covered by the projected pattern are textured.

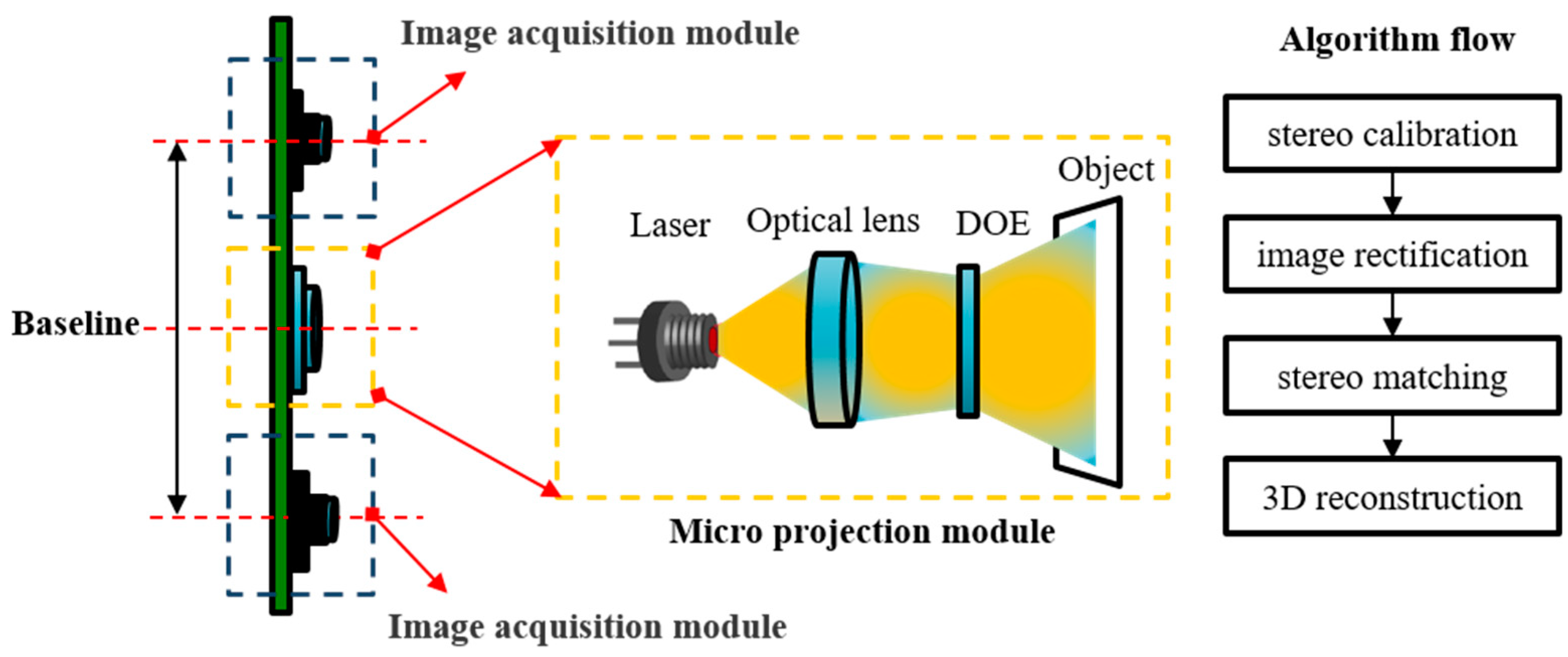

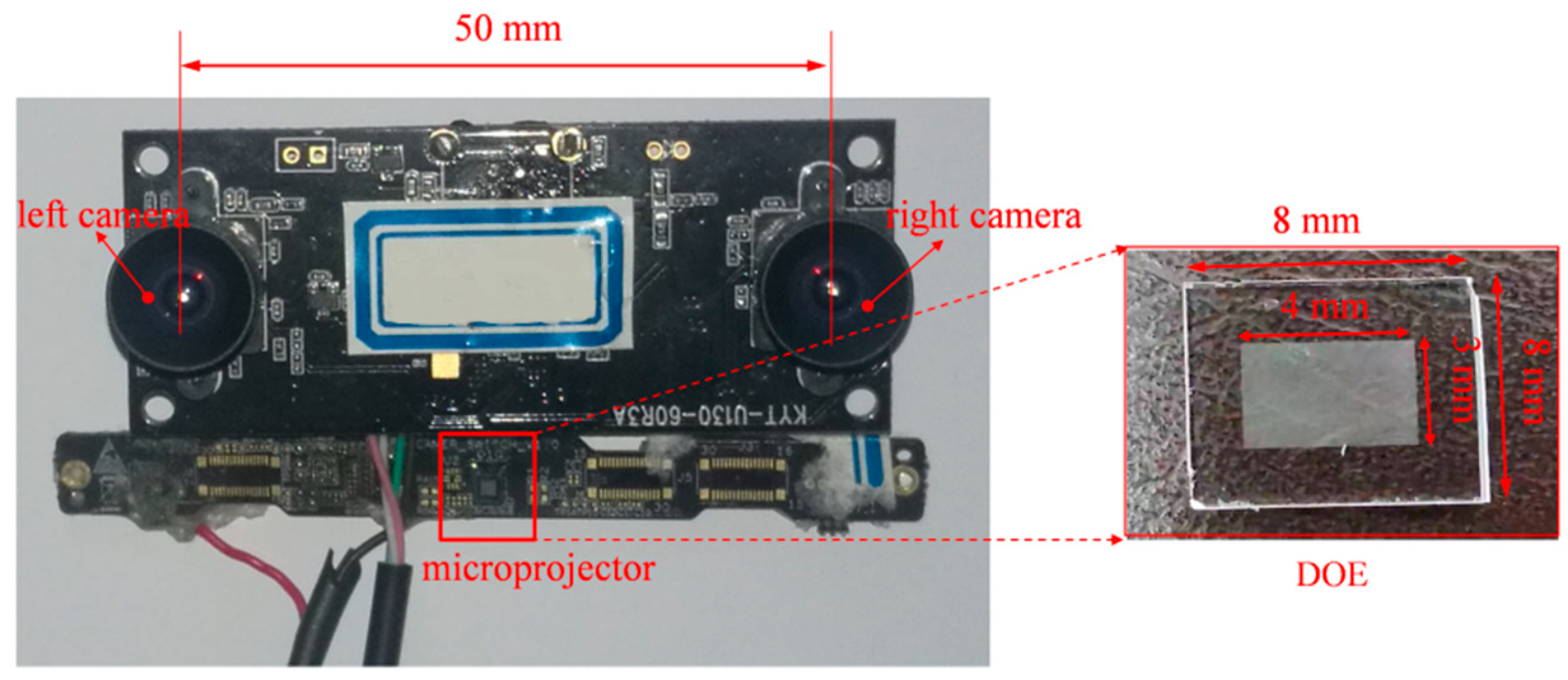

After the speckle pattern is projected onto the target surface, the two cameras shown in Figure 1 capture the textured images. In speckle-based SL, the primary problems most likely occur in the processes of camera calibration, epipolar rectification, and stereo matching; therefore, we elaborate on these three problems below.

2.2. Epipolar Rectification

Because the two cameras in speckle-based SL cannot be strictly aligned during assembly, stereo rectification is necessary before stereo matching. The transformation of a stereo rig before and after epipolar rectification is illustrated in Figure 4. Taking the left camera coordinate system ($\mathrm{CCS}_L$) as the reference coordinate system, before rectification, the relative transformation of the right camera coordinate system ($\mathrm{CCS}_R$) against $\mathrm{CCS}_L$ is [R T]. To make all epipolar lines parallel and thereby assist feature matching, the rectified $\mathrm{CCS}_L$ and $\mathrm{CCS}_R$ should be horizontally aligned, which means that there is no rotation and only a translation exists between them.

In this paper, we put forward an efficient epipolar rectification method based on two rotations of both cameras. The detailed procedure is displayed in Figure 5.

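First rotation: Assume the original angle between the left optical axis (OA) and the right OA is $2\theta$. To rotate both cameras minimally such that they have the same orientation, each camera is rotated by an angle of $\theta$ in opposite directions. This is exactly half of the current rotation $\mathbf{R}$ between the two cameras. To relate $\mathbf{R}$ and $\theta$ directly, the rotation vector $\mathbf{om}$ is introduced. $\mathbf{om}$, a 3 × 1 vector, is a more compact way to describe $\mathbf{R}$. The direction of $\mathbf{om}$ is the rotation axis, and the length of $\mathbf{om}$ is the rotation angle around that axis. Therefore, $\lVert\mathbf{om}\rVert = 2\theta$. In this way, the rotation matrix of the left camera can be determined according to Rodrigues’s equation:

$$\mathbf{r}_l = \cos\theta\,\mathbf{I} + (1-\cos\theta)\,\mathbf{n}\mathbf{n}^{T} + \sin\theta\,[\mathbf{n}]_{\times} \tag{1}$$

In Equation (1), $[\cdot]_{\times}$ denotes the antisymmetric matrix. The rotation axis is denoted by $\mathbf{n}$: $\mathbf{n} = \mathbf{om}/\lVert\mathbf{om}\rVert$. $\mathbf{I}$ is the 3 × 3 identity matrix. The rotation matrix of the right camera is in the opposite direction, which can be determined using $\mathbf{r}_r = \mathbf{r}_l^{T}$. Through the first rotation, the left camera and right camera are brought to the same orientation, as shown by the blue dotted lines in Figure 5. With $\mathbf{R}$ and $\mathbf{T}$ mapping left-camera coordinates to right-camera coordinates, the translation vector after the first rotation is:

$$\mathbf{t} = \mathbf{r}_r\,\mathbf{T} \tag{2}$$

Second rotation: After the first rotation, the new OAs are parallel, yet they are not perpendicular to the axis $O_lO_r$ constituted by the optical centers $O_l$ and $O_r$. Therefore, a common rotation $\mathbf{R}_2$ for both cameras is necessary. Denote $\mathbf{e}_1 = [1\ 0\ 0]^{T}$. The second rotation is required to bring the translation vector $\mathbf{t}$ into alignment with $\mathbf{e}_1$. The direction of the new rotation axis $\mathbf{w}$ can be calculated using $\mathbf{t}$ and $\mathbf{e}_1$:

$$\mathbf{w} = \mathbf{t}\times\mathbf{e}_1 \tag{3}$$

The rotation angle around $\mathbf{w}$ can be determined using:

$$\varphi = \arccos\frac{\lvert\mathbf{t}\cdot\mathbf{e}_1\rvert}{\lVert\mathbf{t}\rVert\,\lVert\mathbf{e}_1\rVert} \tag{4}$$

Here, $\arccos$ denotes the inverse cosine. Then, the rotation vector of the second rotation can be uniquely determined using $\varphi\,\mathbf{w}/\lVert\mathbf{w}\rVert$. By using the same equation as in Equation (1), the second rotation $\mathbf{R}_2$ can be obtained.

Combining the above two rotations, the renewed rotations of the left and right cameras and the translation between them after stereo rectification are obtained as follows:

$$\mathbf{R}_l = \mathbf{R}_2\,\mathbf{r}_l,\qquad \mathbf{R}_r = \mathbf{R}_2\,\mathbf{r}_r,\qquad \mathbf{T}_{rec} = \mathbf{R}_2\,\mathbf{t} \tag{5}$$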
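The two-rotation procedure can be sketched with NumPy as shown below. This is a sketch under stated assumptions rather than the authors' code: it assumes that R and T map left-camera coordinates to right-camera coordinates (X_r = R X_l + T), and all function and variable names (`rodrigues`, `rotation_vector`, `rectify_rotations`, `e1`) are hypothetical. It also flips the sign of the row direction when the translation points along the negative x-axis so that the second rotation stays small; that step follows common practice and is not stated in the text.

```python
import numpy as np

def skew(n):
    """Antisymmetric (cross-product) matrix of a 3-vector."""
    return np.array([[0.0, -n[2], n[1]],
                     [n[2], 0.0, -n[0]],
                     [-n[1], n[0], 0.0]])

def rodrigues(axis, angle):
    """Rotation matrix for a rotation of `angle` radians about `axis`."""
    n = axis / np.linalg.norm(axis)
    c, s = np.cos(angle), np.sin(angle)
    return c * np.eye(3) + (1.0 - c) * np.outer(n, n) + s * skew(n)

def rotation_vector(R):
    """Axis-angle vector of R (valid for angles strictly between 0 and pi)."""
    angle = np.arccos(np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0))
    if angle < 1e-12:
        return np.zeros(3)
    axis = np.array([R[2, 1] - R[1, 2], R[0, 2] - R[2, 0], R[1, 0] - R[0, 1]])
    return angle * axis / (2.0 * np.sin(angle))

def rectify_rotations(R, T):
    # First rotation: each camera turns by half of the relative rotation
    om = rotation_vector(R)
    two_theta = np.linalg.norm(om)
    r_l = rodrigues(om, two_theta / 2.0) if two_theta > 1e-12 else np.eye(3)
    r_r = r_l.T
    t = r_r @ T                              # translation after first rotation
    # Second rotation: align t with the image-row direction
    e1 = np.array([1.0, 0.0, 0.0])
    if t @ e1 < 0:                           # assumption: keep the rotation small
        e1 = -e1
    w = np.cross(t, e1)
    if np.linalg.norm(w) < 1e-12:
        R2 = np.eye(3)
    else:
        phi = np.arccos(np.clip(abs(t @ e1) / np.linalg.norm(t), -1.0, 1.0))
        R2 = rodrigues(w, phi)
    return R2 @ r_l, R2 @ r_r, R2 @ t

# Example rig: small relative rotation, baseline mostly along x
R = rodrigues(np.array([0.1, 1.0, 0.05]), 0.08)
T = np.array([-60.0, 1.5, -2.0])
R_l, R_r, T_rec = rectify_rotations(R, T)
```

After both rotations, the relative rotation `R_r @ R @ R_l.T` is the identity and `T_rec` has only an x-component, which is the horizontally aligned configuration described above.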

Rectified intrinsic parameters: To obtain the rectified focal length, the lens distortions of the camera should be considered. The common model can be generalized as:

Here, $(u_d, v_d)$ are the distorted pixel coordinates and $(u_0, v_0)$ is the imaging center; the remaining coefficients are those of the radial and tangential distortions. To eliminate the effects of lens distortion, the rectified focal length is defined as:

Assume the image resolution of the camera is $W \times H$, and let $f$ be the original focal length. The rectified focal length of the left and right cameras is set to the same value.

As for the imaging center, the average of both cameras’ imaging centers has generally been taken as the rectified imaging center, which is inaccurate in practice [25]. In fact, rectified images are twisted or rotated to some degree compared with the original images. The positions of the imaging centers also affect the sizes of the visible areas in the rectified images. With the other parameters determined (extrinsic parameters, focal length, etc.), the rectified image centers can be better located based on the known parameters. The detailed procedure is illustrated in Figure 6.

First, as shown in Figure 6a, the four vertices of the original image plane are projected into the rectified image plane under an assumed initial position of the rectified imaging center:

The projection is carried out separately for the left camera and the right camera; for the right camera, the required value refers to Equation (5). The overall imaging area is obtained as shown in Figure 6b, with the image coordinate system (ICS) centered at the assumed imaging center. Generally, the rectified area has a twisted and irregular shape.

Second, the rectified area in Figure 6b is fitted into the image plane of the original resolution, as displayed in Figure 6c. To maximize the visible area of Figure 6b in Figure 6c, the renewed imaging center after rectification can be adjusted using:

Then, the renewed ICS and CCS after the epipolar rectification can be built as shown in Figure 6c.

After the rectified intrinsic and extrinsic parameters of the two cameras are obtained, epipolar rectification can be accomplished by nonlinearly mapping the original images into the rectified ICS. With both left and right images rectified, feature matching can be conducted along the image rows, which reduces the complexity of matching and speeds up the matching process.

The above rectification method has three advantages. First, both cameras rotate by the same angle in each step of the transformation, which minimizes feature differences between the left and right images. Second, the rectified images keep the same resolution as the original images, which is convenient in stereo matching because most of the accessible dense matching methods require left and right images of the same size. Lastly, the maximal visible image area is retained in the rectified image, which minimizes the loss of image information during stereo rectification.

2.3. Stereo Calibration

It is not hard to see from Section 2.2 that the accuracy of epipolar rectification is closely related to the accuracy of the stereo calibration. However, traditional camera calibration methods do not consider the accuracy of epipolar rectification; most of them focus on estimating the optimal stereo parameters that minimize 2D reprojection errors [26] or 3D reconstruction errors [27]. Among them, Zhang’s method [26] has been widely used as a flexible camera calibration method that takes 2D reprojection errors as the cost function. In practice, a slight misalignment of epipolar lines may lead to large errors in feature matching, which will inevitably hinder accurate reconstruction.

In our previous work [27], an accurate camera calibration method was proposed based on the backprojection process, in which 3D reconstruction errors were designated as the cost function to improve the 3D reconstruction accuracy of a stereo rig. Details can be found in Gu et al. [27]. In this paper, an additional term describing the epipolar distance errors was added to the cost function of the camera calibration, which is defined as:

Here, $d(\mathbf{p}, \mathbf{l})$ denotes the distance from point $\mathbf{p}$ to line $\mathbf{l}$. The fundamental matrix $\mathbf{F}$ can be uniquely determined once calibration is done. $(\mathbf{p}_l, \mathbf{p}_r)$ is a pair of matching points of the left and right images. Then, the new cost function $E$ was redefined as:

Here, $E_{3D}$ denotes the term representing 3D reconstruction errors, and $\lambda$ is a coefficient that adjusts the weight of the two cost terms, which is set to 0.5 in practice. By minimizing $E$, optimal camera parameters guaranteeing minimal 3D reconstruction errors and epipolar distance errors can be achieved.
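To make the epipolar distance term concrete, the sketch below computes point-to-epipolar-line distances for matched points given a fundamental matrix. It is an illustration only: the symmetric averaging over both images, the function name `epipolar_distances`, and the example matrix for an ideally rectified pair are assumptions, and the exact form of the paper's cost term may differ.

```python
import numpy as np

def epipolar_distances(F, pts_left, pts_right):
    """Per-pair distance between each point and the epipolar line induced
    by its match, averaged over the two images (an assumed symmetric form).
    F satisfies p_r^T F p_l = 0 for homogeneous pixel coordinates."""
    pl = np.hstack([pts_left, np.ones((len(pts_left), 1))])
    pr = np.hstack([pts_right, np.ones((len(pts_right), 1))])
    lines_r = pl @ F.T                      # rows: F p_l, lines in the right image
    lines_l = pr @ F                        # rows: F^T p_r, lines in the left image
    residual = np.abs(np.sum(pr * lines_r, axis=1))   # |p_r^T F p_l|
    d_right = residual / np.hypot(lines_r[:, 0], lines_r[:, 1])
    d_left = residual / np.hypot(lines_l[:, 0], lines_l[:, 1])
    return 0.5 * (d_right + d_left)

# Ideally rectified pair: epipolar lines are image rows (y_r = y_l)
F_rectified = np.array([[0.0, 0.0, 0.0],
                        [0.0, 0.0, -1.0],
                        [0.0, 1.0, 0.0]])
left = np.array([[10.0, 20.0], [30.0, 40.0]])
right = np.array([[5.0, 20.0], [25.0, 41.5]])
distances = epipolar_distances(F_rectified, left, right)
```

For the rectified example, the distance reduces to the row offset between matched points, so the first pair has zero error and the second has an error of 1.5 pixels.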

2.4. Stereo Matching Based on an Improved SGM

According to Section 2.2 and Section 2.3, feature matching can be conducted along the rectified epipolar lines, which now coincide with the direction of the image rows. Accurate feature matching plays a pivotal role in accurate 3D reconstruction. The SGM algorithm achieves a good balance between high-quality depth maps and running efficiency [23]. Besides the pixel cost calculation, additional constraints are added in the energy function $E(D)$ to support smoothness, as shown in Equation (12). The problem of stereo matching is to find an optimal disparity map $D$ that minimizes the energy $E(D)$:

$$E(D) = \sum_{p} C(p, D_p) + \sum_{p}\sum_{q\in N_p} P(D_p, D_q) \tag{12}$$

In Equation (12), the first term is the sum of all pixel-matching costs of the disparities in $D$. The second term adds constant penalties for all pixels $q$ in a neighborhood $N_p$ of $p$. This extra term penalizes changes in neighboring disparities. One characteristic of SGM is that it aggregates matching costs along 1D paths from all directions equally. Along each direction $r$, the cost of pixel $p$ at disparity $d$ is defined recursively as:

$$L_r(p, d) = C(p, d) + \min_{d'}\big[L_r(p - r, d') + P(d, d')\big] \tag{13}$$

The first term is the calculated cost of disparity $d$. The second term is the lowest cost of the previous pixel of $p$ along the path, $p - r$. $P$ is a penalty term that penalizes changes in neighboring disparities. It is defined by Equation (14):

$$P(d, d') = \begin{cases} 0, & \lvert d - d'\rvert = 0 \\ P_1, & \lvert d - d'\rvert = 1 \\ P_2, & \lvert d - d'\rvert > 1 \end{cases} \tag{14}$$

The second term in Equation (12) can be specifically interpreted as follows: If the disparity in pixel $p$ stays the same as the disparity in $q$, no penalty is added. If the disparity in pixel $p$ changes by only one pixel, the penalty factor $P_1$ is utilized. For larger disparity changes (that is, greater than one pixel), the penalty factor $P_2$ is added. Generally, pixels with larger disparity changes have little effect on the final results; therefore, $P_2$ is generally set to a large value.
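The recursive path cost can be illustrated with a short NumPy sketch for a single direction. It assumes the two-term recursion described above (matching cost plus the cheapest penalized predecessor) with no further normalization; the function name `aggregate_path` and the toy cost matrix are hypothetical.

```python
import numpy as np

def aggregate_path(cost, p1, p2):
    """Aggregate matching costs along one 1D path.
    cost[i, d] is the matching cost of the i-th pixel on the path at
    disparity d; the result holds the path cost for every pixel and disparity."""
    n_pixels, n_disp = cost.shape
    disp = np.arange(n_disp)
    jump = np.abs(disp[:, None] - disp[None, :])          # |d - d'|
    penalty = np.where(jump == 0, 0.0, np.where(jump == 1, p1, p2))
    path_cost = np.empty((n_pixels, n_disp), dtype=float)
    path_cost[0] = cost[0]                                # path start: no predecessor
    for i in range(1, n_pixels):
        # Cheapest penalized predecessor for every current disparity d
        best_prev = (path_cost[i - 1][None, :] + penalty).min(axis=1)
        path_cost[i] = cost[i] + best_prev
    return path_cost

# Toy example: three pixels whose cheapest disparities are 0, 1, 2 (a slanted surface)
toy_cost = np.array([[0.0, 5.0, 5.0],
                     [5.0, 0.0, 5.0],
                     [5.0, 5.0, 0.0]])
toy_path_cost = aggregate_path(toy_cost, p1=1.0, p2=10.0)
```

In a full SGM pipeline, such path costs from several directions would be summed before the disparity with the lowest total cost is selected per pixel.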

Recent works have found that SGM shares an assumption with local matching methods, namely that all pixels in the matching window have constant disparities. This implicit assumption does not hold for slanted surfaces and leads to a bias toward reconstructing frontoparallel surfaces. The well-known PatchMatch method proposed by Bleyer [20] tries to overcome this problem by estimating an individual 3D plane at each pixel onto which the current matching block is projected. However, it inevitably increases the execution time, which impairs the real-time performance of the 3D vision system.

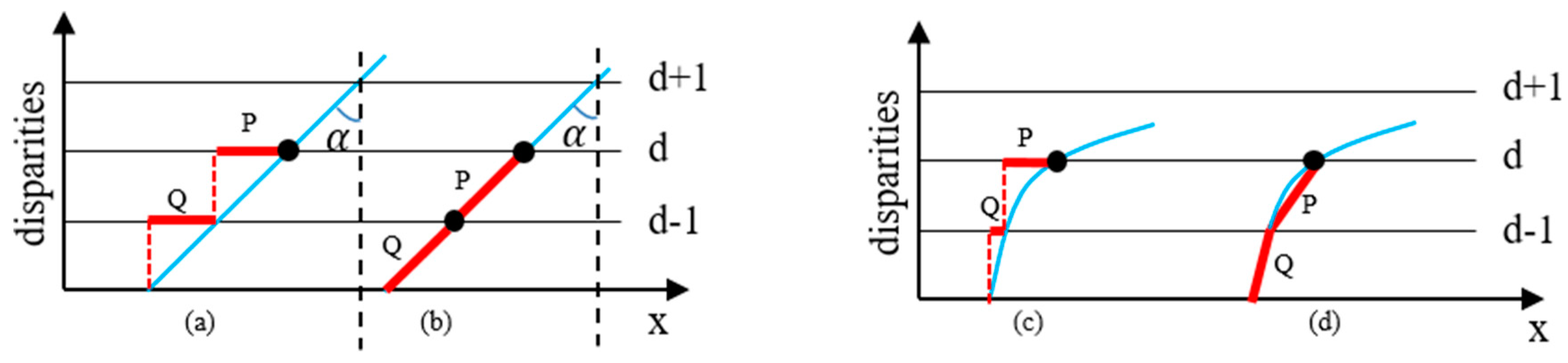

We experimentally found that the penalty term reinforced the above-mentioned assumption to a great extent. Figure 7 illustrates this problem. Take a slanted surface and a curved surface as examples. Ideal disparity estimation routes are displayed in Figure 7b,d as a comparison. However, in the traditional SGM algorithm, keeping the disparity constant is encouraged because an unchanged disparity receives a smaller penalty than a one-pixel change. This inevitably leads to inaccurate disparity results, such as P and Q in Figure 7a,c. In fact, the penalty mechanism in Equation (13) only works well for frontoparallel surfaces (see Figure 7a) and does not hold for slanted or curved surfaces. In practice, it leads to a ladder effect when reconstructing non-frontoparallel surfaces (refer to Figure 8). The ladder effect changes perceptibly as the slant angle of the surface changes: as the angle increases, the ladder effect becomes gentler, which is in accordance with the analysis in Figure 7a.

Therefore, we adapted the traditional SGM algorithm to accommodate this new observation. In practice, a new penalty term is defined, as given in Equation (15), to alleviate this kind of bias. In this case, equal penalties were utilized for unchanged disparities and disparities that change only one pixel.
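The difference between the two penalty schemes can be illustrated with the small sketch below. It assumes that the common penalty for unchanged and one-pixel disparity changes is zero; because only the difference between penalties affects which disparity wins, another common value would behave the same way. The names `penalty` and `path_penalty` and the values chosen for the two penalty factors are hypothetical.

```python
def penalty(d, d_prev, p1, p2, improved=False):
    """Penalty for moving from disparity d_prev to d between neighbors.
    improved=True applies one common penalty (assumed to be zero here)
    to unchanged disparities and one-pixel changes alike."""
    jump = abs(d - d_prev)
    if jump == 0:
        return 0.0
    if jump == 1:
        return 0.0 if improved else p1
    return p2

def path_penalty(disparities, p1, p2, improved=False):
    """Total smoothness penalty accumulated along a sequence of disparities."""
    return sum(penalty(d, d_prev, p1, p2, improved)
               for d_prev, d in zip(disparities[:-1], disparities[1:]))

ramp = [0, 1, 2, 3, 4]     # slanted surface: disparity changes by one per pixel
flat = [2, 2, 2, 2, 2]     # frontoparallel surface: constant disparity

traditional_ramp = path_penalty(ramp, p1=10.0, p2=120.0)
traditional_flat = path_penalty(flat, p1=10.0, p2=120.0)
improved_ramp = path_penalty(ramp, p1=10.0, p2=120.0, improved=True)
improved_flat = path_penalty(flat, p1=10.0, p2=120.0, improved=True)
```

With the traditional penalty, the ramp accumulates a cost that the flat profile avoids, which biases the result toward piecewise-constant steps; with equal penalties, the two profiles are treated alike and only the matching costs decide between them.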

With the above improvement, the ladder phenomenon can be alleviated effectively. Detailed experiments are described in Section 4. The improved SGM plays an important role in improving the 3D measurement accuracy of speckle-based SL. After stereo matching, 3D information can be obtained by triangulation, based on the triangular structure constituted by the two cameras in the system.