4.1. Dataset Structure

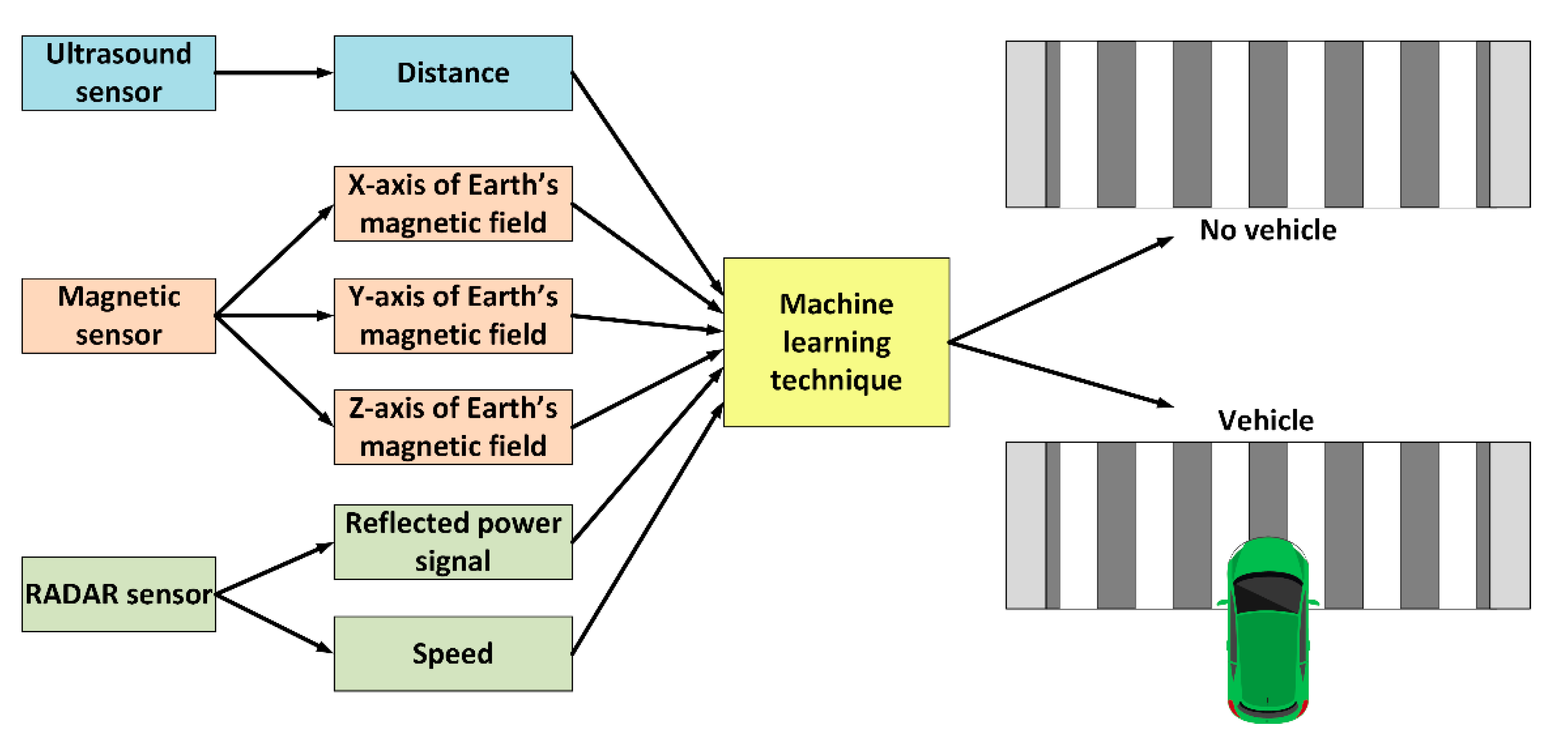

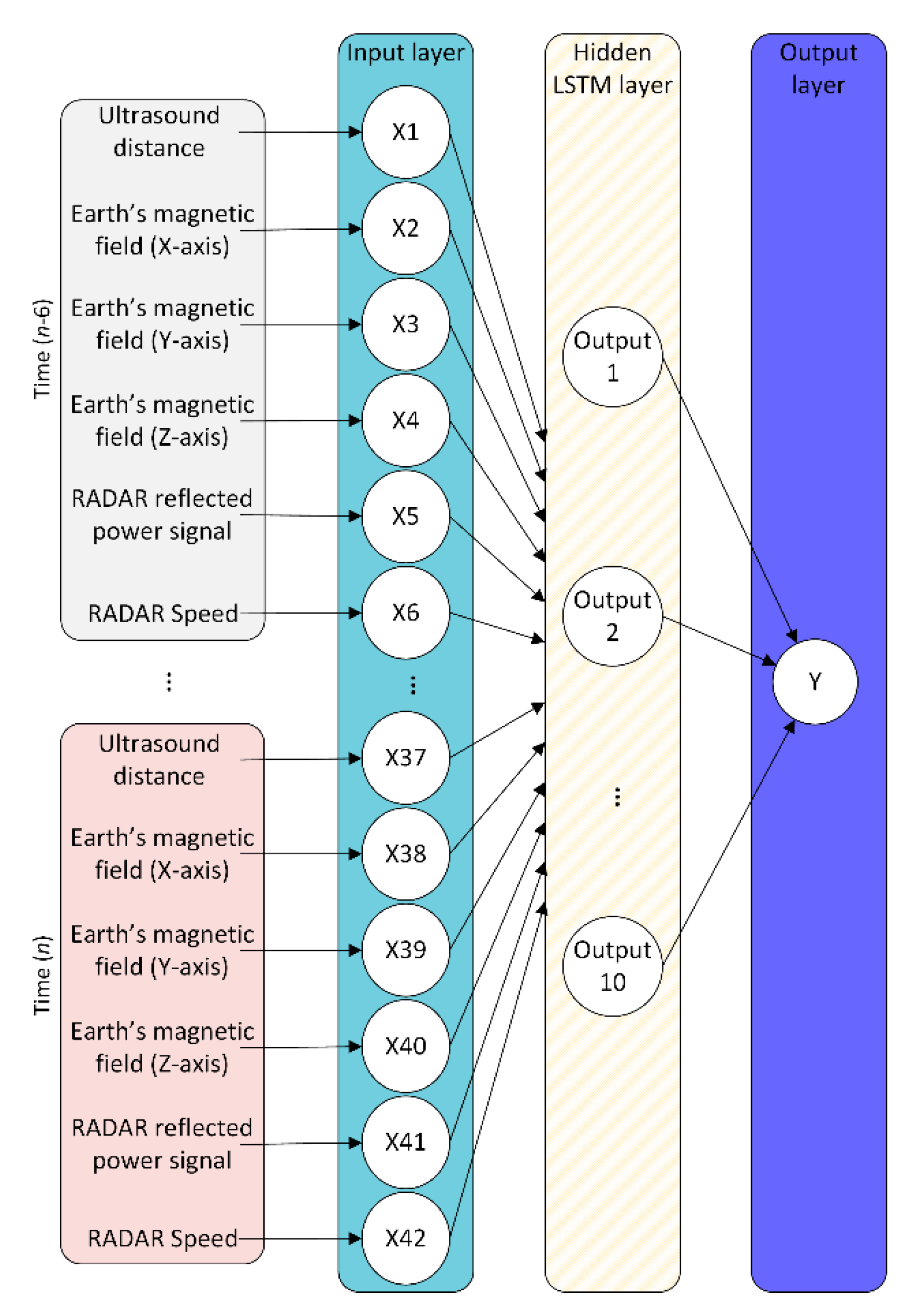

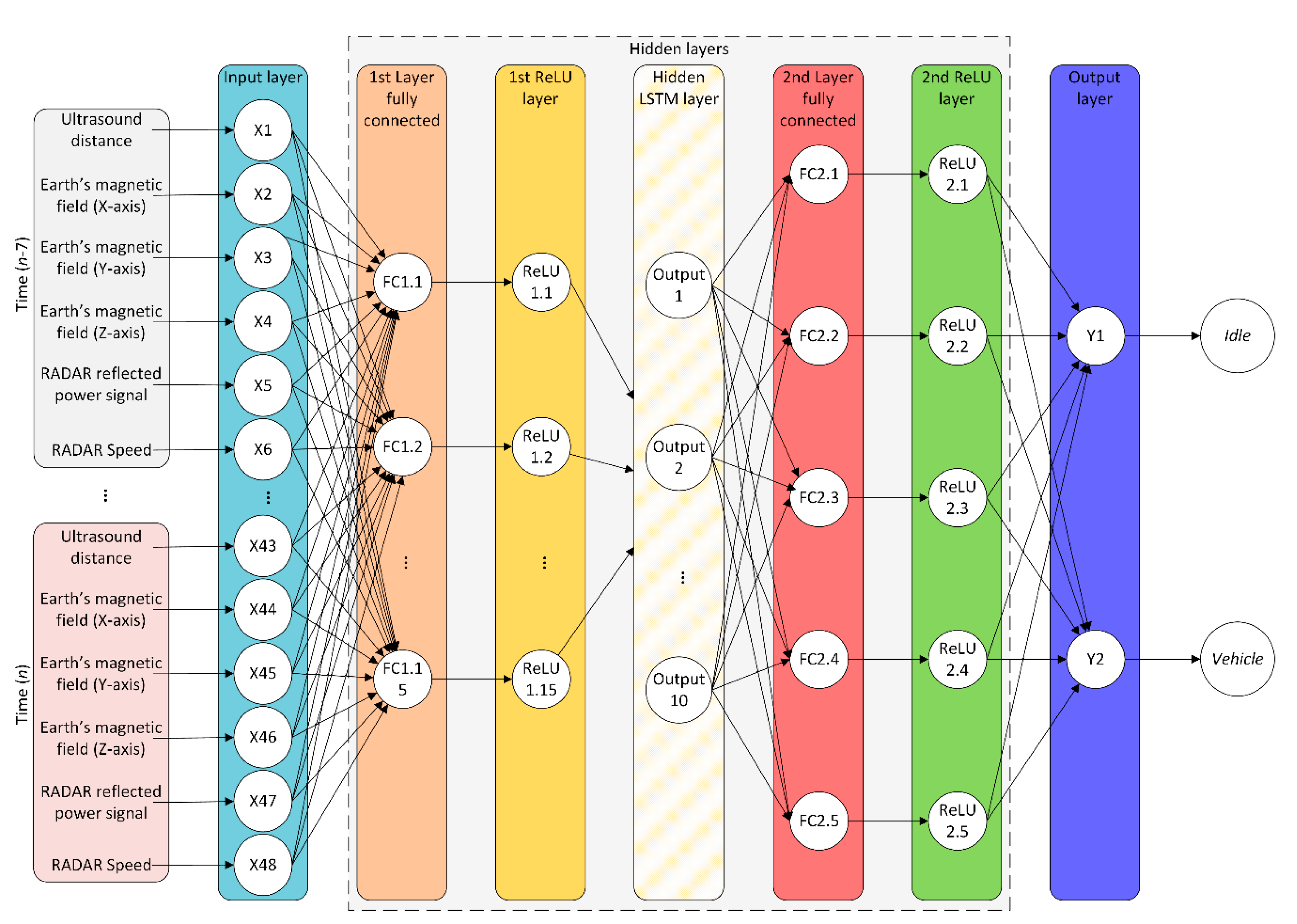

The proposed approach uses data generated by three different sensors as predictor variables. Table 1 shows this set of predictor variables and their value ranges. The models use these data to identify whether or not a vehicle is circulating through the intelligent pedestrian crossing.

For this purpose, two classes are used: “Vehicle” and “No vehicle”, represented as 1 and 0, respectively. These classes are used as ground truth (GT). Sensor measurements were normalized using the Min–Max method so that the ML algorithms perform adequately [42]. Normalization values are also shown in Table 1.
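As an illustration of the Min–Max normalization mentioned above, the following is a minimal sketch that maps readings linearly onto [0, 1]. The function name `min_max_scale`, the sample readings, and the range limits are assumptions made for this example; the actual ranges are those listed in Table 1.

```python
def min_max_scale(values, lo=None, hi=None):
    """Map values linearly onto [0, 1] using the given (or observed) range."""
    lo = min(values) if lo is None else lo
    hi = max(values) if hi is None else hi
    if hi == lo:
        raise ValueError("degenerate range: cannot normalize")
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical raw readings from one sensor axis (illustrative values only).
raw = [-120.0, -30.0, 0.0, 60.0, 180.0]

# Hypothetical range limits; in practice these would come from Table 1.
scaled = min_max_scale(raw, lo=-120.0, hi=180.0)
```

Passing fixed range limits, rather than the minimum and maximum observed in each batch, keeps the scaling identical across training and test data.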

The dataset used to train the ML models to determine whether a vehicle is approaching a pedestrian crossing was collected from a real environment and is available at http://www.uhu.es/tomas.mateo/investigacion/dataset.zip. This dataset includes a total of 86,960 labeled tuples. The dataset is imbalanced: the “No vehicle” class has 80,915 tuples (93.05%), whereas the “Vehicle” class has 6045 tuples (6.95%). To balance the dataset, the NearMiss undersampling technique was used [43]. The balanced dataset has 6362 tuples for the “No vehicle” class and 6045 tuples for the “Vehicle” class, which correspond to 51.28% and 48.72%, respectively. Therefore, the dataset used at the classification stage consists of 12,407 labeled tuples. The dataset is ordered by a tuple index to keep the tuples in temporal order, which some ML models require to work properly.
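The idea behind NearMiss undersampling can be sketched as follows. The text does not state which NearMiss version or parameters were used, so this sketch assumes version 1 (keep the majority samples whose mean distance to their k nearest minority samples is smallest). The function name `near_miss_1` and the toy points are hypothetical.

```python
import math


def near_miss_1(majority, minority, n_keep, k=3):
    """Keep the n_keep majority samples with the smallest mean distance
    to their k nearest minority samples (NearMiss, version 1)."""
    def mean_knn_distance(x):
        dists = sorted(math.dist(x, m) for m in minority)
        nearest = dists[:k]
        return sum(nearest) / len(nearest)

    ranked = sorted(range(len(majority)),
                    key=lambda i: mean_knn_distance(majority[i]))
    # Re-sort the kept indices so the original (temporal) order is preserved.
    kept = sorted(ranked[:n_keep])
    return [majority[i] for i in kept]


# Toy 2-D points (illustrative only, not taken from the dataset).
minority = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
majority = [(5.0, 5.0), (0.5, 0.5), (9.0, 9.0), (1.0, 1.0), (0.2, 0.1)]
kept = near_miss_1(majority, minority, n_keep=3, k=2)

# Sanity check on the reported balanced class sizes.
balanced_total = 6362 + 6045
share_no_vehicle = 6362 / balanced_total
```

The kept indices are re-sorted because the tuples must stay in temporal order after balancing.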

Data were collected in several real environments under fluid traffic conditions. The devices were placed on the roadway near the line of the pedestrian crossing, and vehicles passed over the devices, or beside them, at an average speed of 18.20 ± 26.16 km/h. This paper focuses only on vehicle detection, as the basis for studying the feasibility of using ML techniques instead of a fuzzy logic approach. The data collection procedure therefore consisted of monitoring the system’s interactions with vehicles and the environment, and storing the data both with and without vehicles circulating. Cases involving pedestrians were filtered out, and no data were recorded for them. Tests were performed in both Portugal and Spain, specifically in the urban areas of Gambelas (Faro) and Bollullos Par del Condado (Huelva). Four points were at the University of Algarve, two in Rua Manuel Gomes Guerreiro, one in Rua Comandante Sebastião da Costa, one in Praceta Orlando Sena Rodriguez, and one in Sector Pp1 Cruz de Montañina.

These locations present different terrestrial magnetic fields, either due to their nature or to different elements found on public roads (e.g., traffic signs and streetlights, among other ferromagnetic elements). To illustrate the different magnetic field values at these locations, Table 2 shows the average values and standard deviations for the X, Y, and Z axes of the magnetic sensor with and without circulating vehicles. On the one hand, the table shows that the difference between the values for the “Vehicle” and “No vehicle” conditions at each location is small (i.e., a minimum of 0.0051% and a maximum of 0.6532%), which in practice makes calibration very difficult. On the other hand, the average magnetic field values do not correspond across sites: very similar values can represent opposite conditions at different locations (e.g., the X-axis for the column “No vehicle” of Praceta Orlando vs. the X-axis for the column “Vehicle” of Cruz Montañina). This means that a calibration performed at one site does not transfer to another, so a new labeling procedure is needed at each site to differentiate circulating and non-circulating vehicles. The complexity of generating a single vehicle classifier for multiple locations therefore rises to N × L, N being the number of nodes of the intelligent pedestrian crossing and L the number of locations.

Details on the weather conditions under which the data were collected, as well as the duration of each data collection process, are given in Table 3. Finally, the system uses wireless communication to collect the data from the sensors. A portable access point (AP), a personal computer running WampServer, and the hypertext transfer protocol (HTTP) were used. WampServer handles HTTP requests from the nodes (through an Apache server) and stores the data in a MySQL database. The collected tuples were manually labeled as “No vehicle” or “Vehicle”.

4.3. Results and Discussion

The experiments consisted of applying the techniques described in the previous section to the balanced data. Cross-validation was used for this purpose [44]. The 12,407 tuples resulting from the preprocessing and data balancing process were divided into five folds of approximately 2480 tuples each. In each run, four folds were used for training and one for testing. The dataset was split so as to preserve the required temporal order. The class distribution of the dataset across the folds is shown in Table 5.
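One way to split an ordered dataset into folds without shuffling is to cut it into contiguous index blocks, as in the sketch below. This is an assumption about how such a split could be done, not the authors' exact procedure. In particular, the text does not say how tuples left over after an even division were assigned, so this sketch gives them to the first folds. The function names are hypothetical.

```python
def contiguous_folds(n_samples, n_folds=5):
    """Return (start, stop) pairs cutting range(n_samples) into ordered,
    contiguous folds; leftover samples go to the first folds."""
    base, extra = divmod(n_samples, n_folds)
    bounds, start = [], 0
    for i in range(n_folds):
        stop = start + base + (1 if i < extra else 0)
        bounds.append((start, stop))
        start = stop
    return bounds


def train_test_indices(n_samples, n_folds=5):
    """Yield (train_idx, test_idx), using each fold once as the test set."""
    for start, stop in contiguous_folds(n_samples, n_folds):
        test_idx = list(range(start, stop))
        train_idx = list(range(0, start)) + list(range(stop, n_samples))
        yield train_idx, test_idx


# Toy example with 12 ordered samples (illustrative size only).
bounds = contiguous_folds(12, n_folds=5)
splits = list(train_test_indices(12, n_folds=5))
```

Within each fold, and within the training indices, samples keep their original relative order. That is the property time-aware models rely on.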

To determine the efficiency of each algorithm, ROC analysis [45] was used (i.e., the sensitivity of each algorithm in detecting vehicles). The performance was obtained from a 2 × 2 confusion matrix that relates positive (p) and negative (n) outcomes (Table 6). A vehicle detection is considered positive, whereas the detection of the “No vehicle” class is considered negative.
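The metrics reported in this section follow from the four cells of the 2 × 2 confusion matrix using their standard definitions. The sketch below computes them, with “Vehicle” as the positive class. The function name `binary_metrics` and the counts are hypothetical and do not correspond to any result in this paper.

```python
def binary_metrics(tp, fp, fn, tn):
    """Compute standard metrics from 2 x 2 confusion-matrix counts."""
    tpr = tp / (tp + fn)            # true positive rate (sensitivity)
    fpr = fp / (fp + tn)            # false positive rate
    precision = tp / (tp + fp)
    f1 = 2 * precision * tpr / (precision + tpr)
    acc = (tp + tn) / (tp + fp + fn + tn)
    return {"TPR": tpr, "FPR": fpr, "precision": precision,
            "F1": f1, "ACC": acc}


# Hypothetical counts for illustration only.
m = binary_metrics(tp=95, fp=2, fn=5, tn=98)
```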

After training the models, taking into account the previous considerations, these were subjected to tests with data not previously used in the training phase. The model that best detected the vehicles was random forest, which achieved a true positive rate (TPR) of 96.82%, false positive rate (FPR) of 1.73%, precision of 98.63%, F1 of 97.68%, accuracy (ACC) of 97.85%, and AUC of 0.98. This can be considered as excellent in a scale of [0.97, 1), as argued in [

46]. One drawback of RF is that its decisions are difficult to interpret. The following best-performing models are those that consider the time dimension of the data. These were the deep reinforcement learning (TPR = 92.94%, FPR = 3.73%, precision = 95.00%, F1 = 93.70%, ACC = 94.51%, and AUC = 0.98) and LSTM (TPR = 92.60%, FPR = 5.07%, precision = 95.14%, F1 = 93.18%, ACC = 93.83%, and AUC =0.97), both considered as excellent. The anomaly detector model, one-class SVM, also offers very good results—although lower than the previous models—achieving a TPR of 93.38%, FPR of 15.59%, ACC of 92.08%, and AUC of 0.94. Finally, the multi-layer perceptron and logistic regression had a performance that can be considered as good. Nevertheless, their accuracy rate is reduced when compared to the other models. A summary of these results is shown in

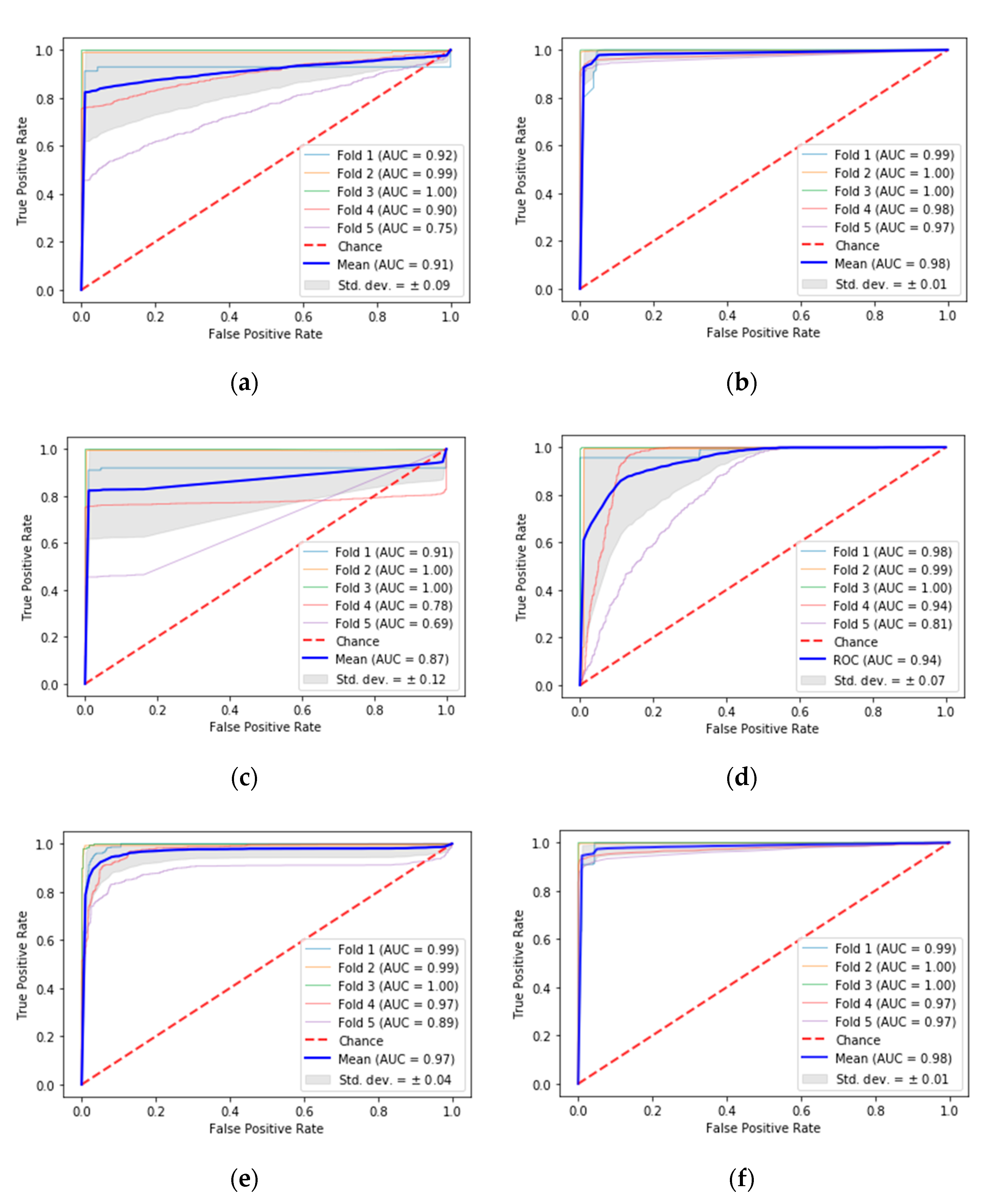

Table 7, while the ROC curves for each of the ML techniques are shown in

Figure 5. The blue color represents the average ROC curve, the gray shaded area shows the standard deviation, and the soft-blue, orange, green, pink, and purple colors stand for each of the folds used to test the models.

Table 7 also includes the performance achieved with the fuzzy classifier used previously. This classifier provided an excellent performance as it was calibrated for a specific location prior to testing. Despite a slight performance drop, the proposed machine learning approaches allow generalization of vehicle detection without the need to calibrate the system.

The overall results are summarized as follows. Firstly, LR offers a good result, with an accuracy of 90.84%, but its TPR and ACC are easily surpassed by other models. Its FPR is, however, the lowest of all the techniques used. Additionally, this model is not very reliable because it presents a high standard deviation for the TPR and ACC. Secondly, RF offers the best success rate of all the models, in line with its theoretical ability to optimize accuracy. Moreover, this model is very stable, as it achieves a low standard deviation in all measurements. Thirdly, the model based on MLP offers good results (ACC of 90.98%), but it is not reliable due to the high standard deviation of the TPR and the ACC. Fourthly, the one-class SVM is the least reliable of all the methods because it presents the highest FPR and the highest standard deviation. Fifthly, LSTM offers excellent and stable results, as expected in theory, since it considers the time series. In this regard, vehicle detection depends largely on time because two representations of the crosswalk state can be similar for different situations in different locations. Finally, DRL also offers excellent and stable results due to the construction of a specialized agent. The input structure based on current and historical data allows this method to identify the temporal events generated by vehicles when approaching a pedestrian crossing.

In addition to the previous analysis, the AUC was studied to empirically determine the performance of each model. The results confirm the outcomes presented above: the models based on RF and DRL are the best techniques, each with an AUC of 0.98 and a standard deviation of 0.01. That is, they separate the classes similarly well, which can be confirmed by the shape of their ROC curves in Figure 5. These were followed by LSTM, which achieved an AUC of 0.97 and a standard deviation of 0.04. The one-class SVM offered a very good performance, reaching an AUC value of 0.94 and a standard deviation of 0.07. Finally, the methods based on LR and MLP presented the lowest performance, in line with their AUC results of 0.91 and 0.87, respectively.
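For reference, the AUC can be read as the probability that a randomly chosen “Vehicle” tuple receives a higher score than a randomly chosen “No vehicle” tuple, with ties counted as one half. The sketch below computes it that way and then averages per-fold values. The scores, labels, and per-fold AUCs are hypothetical, and using the sample standard deviation is an assumption, since the text does not say which estimator was used.

```python
from statistics import mean, stdev


def auc_from_scores(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly,
    counting ties as one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical classifier scores and ground-truth labels (1 = "Vehicle").
scores = [0.9, 0.8, 0.35, 0.6, 0.2, 0.1]
labels = [1, 1, 1, 0, 0, 0]
auc = auc_from_scores(scores, labels)

# Hypothetical per-fold AUCs, summarized as mean and sample standard deviation.
fold_aucs = [0.97, 0.98, 0.99, 0.98, 0.98]
auc_mean, auc_std = mean(fold_aucs), stdev(fold_aucs)
```

This pairwise form runs in quadratic time, so it suits small illustrations. For fold sizes like those used here, a rank-based or trapezoidal computation would be preferable.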

From all the results presented, it is possible to state that ML techniques are an adequate solution for detecting vehicles near pedestrian crossings, and a better choice than the fuzzy classifiers used in our previous work. Although the ML techniques offer lower performance than the fuzzy classifier calibrated for a specific site, the experimentation carried out demonstrates their feasibility to replace the fuzzy classifier. These techniques allow us to place the system in any location without calibrating it, with very good or excellent performance. The most effective and reliable ML methods were RF, LSTM, and DRL due to their high performance and stability, as shown in Table 7 and Figure 5. In contrast, the least-recommended methods for detecting vehicles under this system were LR, MLP, and, in particular, one-class SVM. LR and MLP are least recommended due to the high deviations in their TPR and ACC, and one-class SVM due to its high FPR and standard deviation. In general, the methods that recognize temporal patterns, such as DRL and LSTM, perform better than the other methods, except for RF. From another perspective, the dataset can be seen as having a limitation: the locations used for data collection were always around the 37th parallel.

This fact, however, does not prevent us from confirming that the models under analysis are capable of replacing the classic fuzzy calibration method. Furthermore, the current dataset, although representative and including captures of cars, motorcycles, and buses, does not reflect all types of vehicles that can circulate on public roads (e.g., bicycles, electric scooters, trucks, or vans, among others). This lack of samples may have limited the performance of the system for these kinds of targets.