In this study, we describe a model that learns log-mel spectrograms from a large dataset to identify emotions, and then propose a method that fine-tunes the pretrained model on a small dataset. We use the characteristics of the log-mel spectrogram to recognize emotions in utterances.

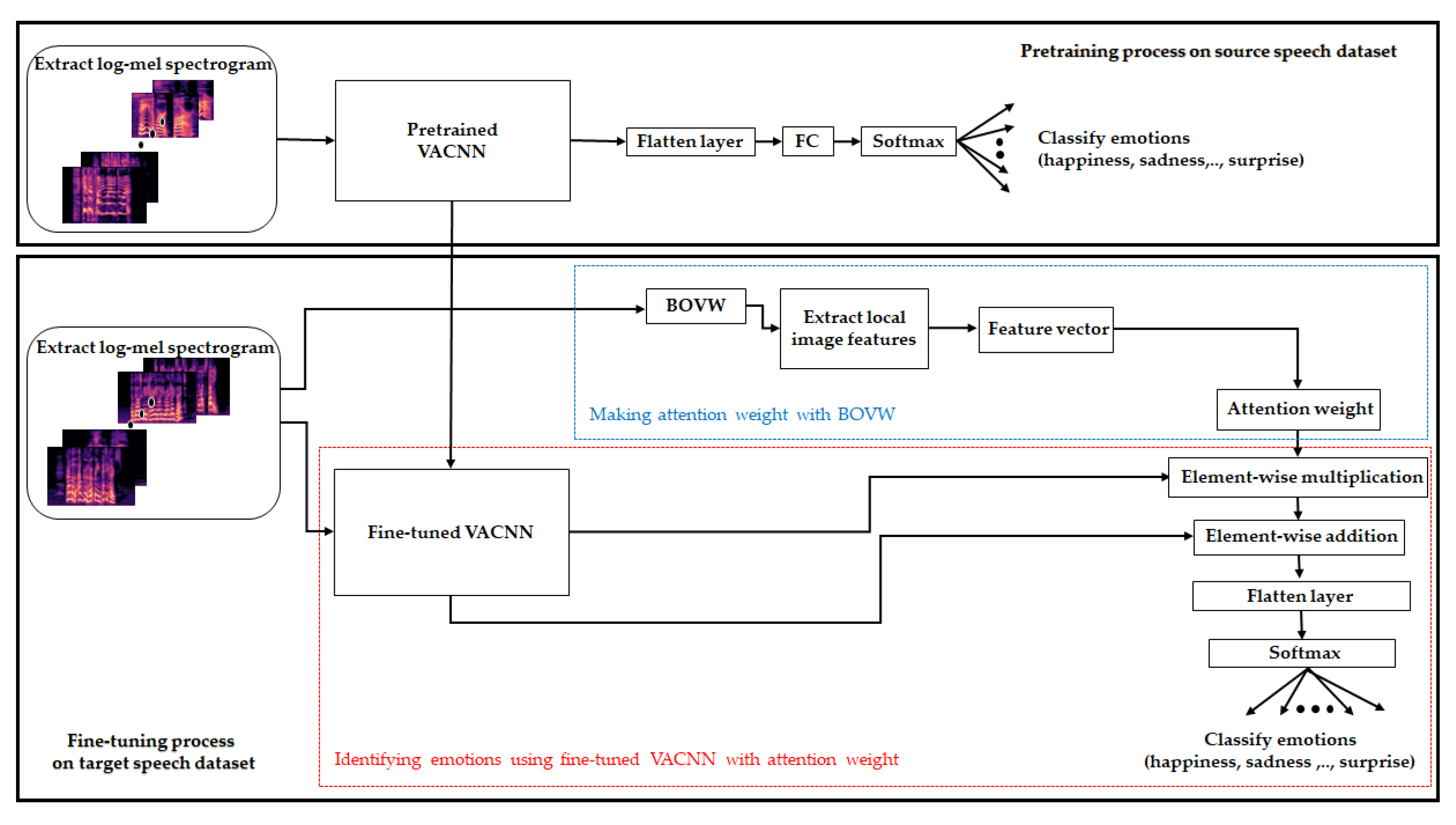

Our method consists of two steps, as illustrated in Figure 1. First, we designed the VACNN model and pretrained it on log-mel spectrograms extracted from the utterances of the large dataset (Figure 1, top). The VACNN model is a 2D CNN that includes convolution blocks and spatial and channel-wise visual attention modules. Each convolution block is composed of convolution layers, group normalization (GN) [45], and a rectified linear unit (ReLU) to learn high-level neural features. In addition, both visual attention modules help the VACNN capture refined features in the spatial and channel-wise aspects.

Second, we fused the fine-tuned VACNN model with the attention weights of the visual vocabulary for the log-mel spectrogram to identify emotions in a small dataset (Figure 1, bottom). We fine-tuned the VACNN model that was pretrained on the large dataset in the first step. To improve the fine-tuned VACNN, we extracted feature vectors from local image features of the log-mel spectrogram using the BOVW and expressed them as an attention vector to assist the VACNN. For joint learning with the BOVW feature vectors, we computed the element-wise multiplication of the attention vector and the fine-tuned VACNN features. Then, we computed the element-wise sum to combine the high-level neural features of the VACNN with the BOVW feature vector. Finally, a fully connected layer and a softmax classifier were used to classify the emotion in the log-mel spectrogram on the small dataset. In this second step, we captured general and specific features using the fine-tuned model (red block in the fine-tuning process) and used global static features represented by the visual vocabulary from the BOVW to capture the informative features of the target dataset (blue block in the fine-tuning process).

3.1. Visual Attention Convolutional Neural Network for Pretraining

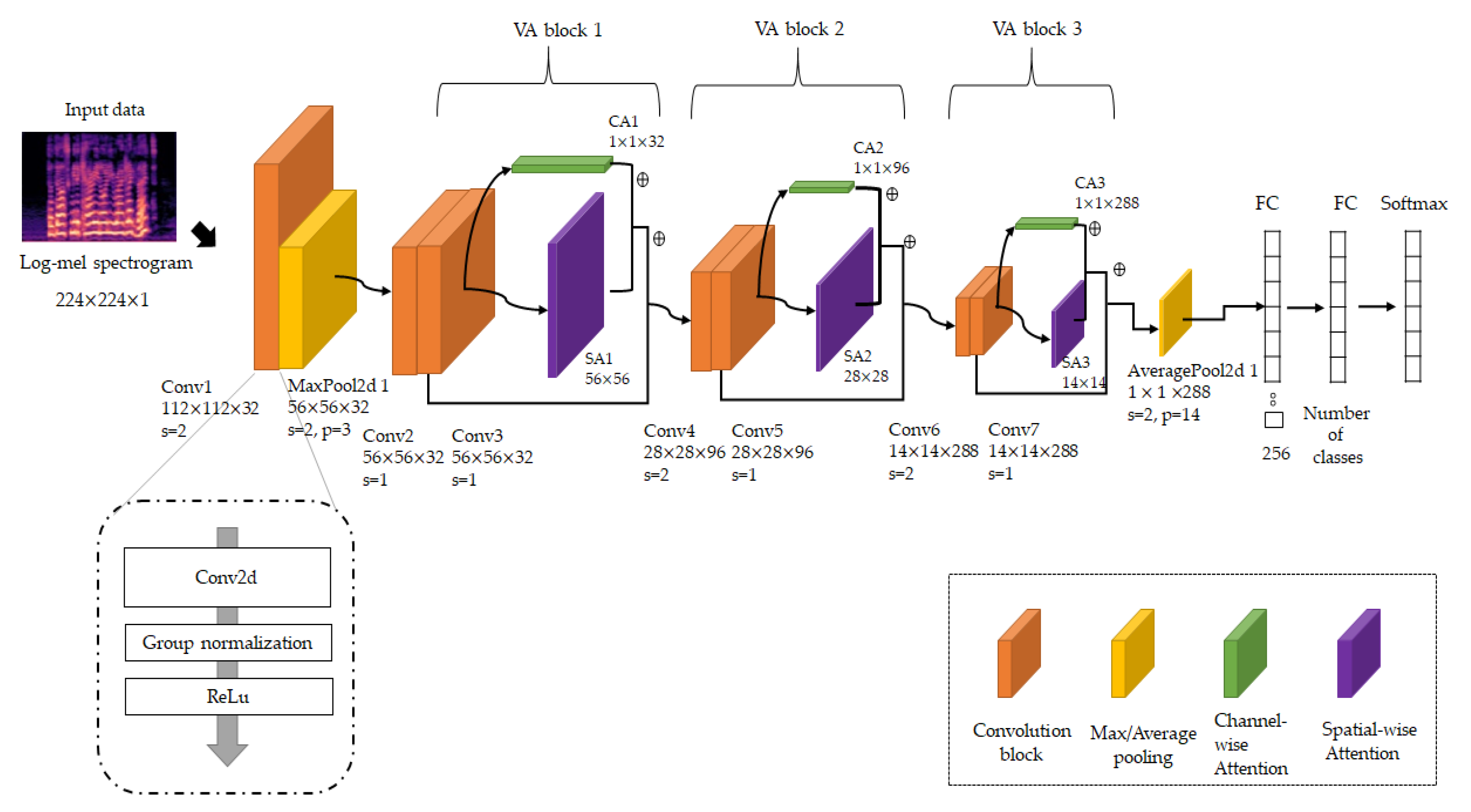

We designed a 2D CNN model to capture high-level neural features of the log-mel spectrogram on the source dataset for SER. We treated the log-mel spectrogram as a grayscale image of shape 224 × 224 × 1, where the dimensions are the image height, the image width, and the number of channels, and each pixel takes a value from 0 to 255 for black-to-white shading. To identify emotions in utterances, our model adopts an architecture similar to the residual neural network (ResNet) [46], which is mainly used as a backbone model for vision recognition, and adds channel-wise and spatial attention modules inspired by the convolutional block attention module (CBAM). The proposed VACNN model for the source speech dataset is shown in Figure 2.

We first obtained 112 × 112 feature maps of high-level features from 3 × 3 convolution layers (stride = 2) with 32 filters. To obtain fine-grained features, we also applied GN and ReLU after the convolution layer. We refer to these three operations (convolution-GN-ReLU) as a convolution block. GN is inspired by layer normalization, which normalizes along the channel dimension, and instance normalization, which normalizes each sample. To exploit the fact that the computations of layer and instance normalization are independent of batch size, GN divides the channels into groups and normalizes within each group. We denote the number of groups as $G$ and the number of channels as $C$. After the convolution layer, GN sets the number of channels per group to $C/G$. For each group of $C/G$ channels, GN applies a feature-normalization method similar to batch normalization along the height and width axes. Because GN's computation is independent of batch size, GN shows stable performance over a wide range of batch sizes. Therefore, we used GN to normalize the feature maps of high-level features on the log-mel spectrogram, so that the model is normalized stably even when the batch size is small because of the limited number of training samples. In addition, we applied the ReLU function as the activation. Then, we downsampled the feature maps to 56 × 56 using 3 × 3 max pooling (stride = 2) to reduce the execution time while maintaining their salient features.
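The batch-size independence of GN can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the channels-last layout, the choice of 8 groups, the small 8 × 8 spatial size, and the omission of the learnable scale and shift are all assumptions made for brevity.

```python
import numpy as np

def group_norm(x, num_groups, eps=1e-5):
    """Normalize x of shape (N, H, W, C) within each group of C // num_groups channels."""
    n, h, w, c = x.shape
    assert c % num_groups == 0, "channels must divide evenly into groups"
    grouped = x.reshape(n, h, w, num_groups, c // num_groups)
    # Statistics are taken per sample and per group, never across the batch axis.
    mean = grouped.mean(axis=(1, 2, 4), keepdims=True)
    var = grouped.var(axis=(1, 2, 4), keepdims=True)
    normalized = (grouped - mean) / np.sqrt(var + eps)
    return normalized.reshape(n, h, w, c)

rng = np.random.default_rng(0)
# Hypothetical feature maps: batch of 4, 8 x 8 spatial size, 32 channels.
batch = rng.normal(loc=3.0, scale=2.0, size=(4, 8, 8, 32))
out_batch = group_norm(batch, num_groups=8)    # 8 groups is an assumed value
out_single = group_norm(batch[:1], num_groups=8)

# The first sample is normalized identically whether it is alone or in a batch.
same_result = np.allclose(out_batch[:1], out_single)
print(same_result)
```

Because the mean and variance never involve the batch axis, the result for one utterance is the same for any batch size, which is the property the text relies on for small training sets.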

Next, we designed three VA blocks to sequentially learn high-level neural features. Each VA block begins with two convolution blocks. Each convolution block is composed of 3 × 3 convolution layers, GN, and ReLU activation, but the blocks differ in stride size. In the first VA block, both convolution blocks use a 1 × 1 stride in the convolution layer, which is convolved with the input at every pixel with overlapping receptive fields, resulting in a 56 × 56 × 32 refined feature map. In contrast, the second VA block uses a 2 × 2 stride in the convolution layers of its first convolution block to capture high-level neural features with a downsampled feature map of 28 × 28 × 96, and a 1 × 1 stride in the convolution layers of its second convolution block to find cross-channel correlations. The third VA block uses two convolution blocks with the same structure as the second VA block, with 288 filters, and obtains 14 × 14 refined feature maps. Each convolution block in the VA blocks is normalized by GN and activated by ReLU. In this model, we used 3 × 3 filters in the convolution layers to learn local features with a small receptive field, an approach proven by ResNet and VGG, and incrementally increased the number of filters of the same size to extract the high-level neural features from spectrograms. We chose the number of filters for the convolution layers that gave the best performance on the validation set.
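The feature-map sizes quoted above can be checked with the standard output-size formula for convolution and pooling layers. The sketch below assumes a padding of 1 for every 3 × 3 layer, which the text does not state; the stage names are illustrative labels only.

```python
def conv_output_size(size, kernel, stride, padding=1):
    """Spatial size after a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1

# (stage name, kernel, stride, filters); padding of 1 everywhere is an assumption.
STAGES = [
    ("stem convolution block", 3, 2, 32),
    ("max pooling", 3, 2, 32),
    ("VA block 1, convolution block 1", 3, 1, 32),
    ("VA block 1, convolution block 2", 3, 1, 32),
    ("VA block 2, convolution block 1", 3, 2, 96),
    ("VA block 2, convolution block 2", 3, 1, 96),
    ("VA block 3, convolution block 1", 3, 2, 288),
    ("VA block 3, convolution block 2", 3, 1, 288),
]

def trace_shapes(input_size=224):
    """Return (name, height, width, channels) after each stage."""
    size, shapes = input_size, []
    for name, kernel, stride, filters in STAGES:
        size = conv_output_size(size, kernel, stride)
        shapes.append((name, size, size, filters))
    return shapes

for name, height, width, channels in trace_shapes():
    print(f"{name}: {height} x {width} x {channels}")
```

Under this padding assumption the trace reproduces the sizes given in the text: 112 × 112 after the stem, 56 × 56 after pooling, then 56 × 56 × 32, 28 × 28 × 96, and 14 × 14 × 288 after the three VA blocks.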

After the last convolution block of the VA blocks, we applied channel-wise visual attention and spatial visual attention modules to obtain fine-grained features. We concatenated the VA blocks by adding convolutional features; in addition, we performed spatial and channel-wise attention on the features captured by the convolution blocks, so that the model can determine where to focus and what is meaningful in the feature maps. These modules help the model learn target-specific features in the spatial and channel-wise aspects by passing the fine-grained features from an earlier VA block to a later VA block. Our VA modules were inspired by the CBAM of Woo et al. [47], which jointly exploits the inter-channel relationship initially investigated by Hu et al. [48] and the inter-spatial relationship initially investigated by Zagoruyko and Komodakis [49]. CBAM achieves high recognition performance in object detection research by first exploiting the inter-channel relationship of features and then exploiting the inter-spatial relationship of the channel-wise refined features. Our VA module is similar to CBAM, but we devised a VA module suited to refining the high-level neural features. We denote the height of the feature map as $H$ and the width of the feature map as $W$. The channel-wise refined features $F_c$ from the channel-wise attention module are represented to emphasize the informative features according to the channel relationship in the learned feature map $F$, as follows:
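Assuming the CBAM-style formulation that the surrounding definitions describe (the operator names $\mathrm{GAP}$ and $\mathrm{GMP}$, the symbol $\otimes$ for element-wise multiplication, and the weight shapes are notational assumptions), the channel-wise refinement can be written as:

$$
F_c = \sigma\Big(W_1\big(W_0(\mathrm{GAP}(F))\big) + W_1\big(W_0(\mathrm{GMP}(F))\big)\Big) \otimes F,
\qquad W_0 \in \mathbb{R}^{C/r \times C},\; W_1 \in \mathbb{R}^{C \times C/r}
$$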

Here, $\sigma$ is the sigmoid activation. $W_0 \in \mathbb{R}^{C/r \times C}$ and $W_1 \in \mathbb{R}^{C \times C/r}$ are the parameters of a multi-layer perceptron (MLP) layer, where $r$ is the reduction ratio of the squeeze layer used to emphasize the informative features between channels.

Our channel-wise attention module squeezes and restores the number of filters to aggregate and emphasize inter-channel relationships in the feature maps, and applies sigmoid activation to parameterize the squeezed weights with a non-linearity. It is reported in [47,48,50] that the bottleneck configuration with two FC layers, which reduces and then restores the number of filters, is useful for emphasizing channel-wise dependencies. Sigmoid functions are inherently non-linear, and thus the network performs channel-wise recalibration by multiplying each channel of the feature maps by a value between 0 and 1. In addition, we applied global average pooling (GAP) and global max pooling (GMP) to generate channel descriptors that include static information in the feature maps. Woo et al. argued that max pooling and average pooling are both useful for obtaining channel-wise refined features: max pooling encodes the degree of the most salient part, whereas average pooling encodes the global features of the channel. Moreover, previous studies [51,52] report that, because GAP aggregates the channel-wise statistics of each feature map into one feature map, it forces the feature map to recognize certain elements within the spectral features related to the target class. Additionally, as reported in [51], GAP reduces overfitting because the global average pooling layer has no parameters to learn. GMP focuses on a highly localized area of the spectral features to find interpretable features within the feature maps, an advantage that is consistent with previous research [53,54]. Therefore, we argue that combining GAP, which represents the global features of all channels, with GMP, which extracts the most specific portion of all channels, is useful for refining deep spectral features along the channel axis when learning SER spectral features.
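The squeeze-and-restore gating described above can be sketched in NumPy as follows. This is an illustration under stated assumptions rather than the authors' code: the reduction ratio of 16, the ReLU between the two MLP layers, the random weights, and the omission of bias terms are all assumed.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(feat, w0, w1):
    """Rescale each channel of feat (H, W, C) by a gate in (0, 1).

    w0: (C, C // r) squeeze weights; w1: (C // r, C) restore weights.
    """
    gap = feat.mean(axis=(0, 1))  # global average pooling, shape (C,)
    gmp = feat.max(axis=(0, 1))   # global max pooling, shape (C,)

    def shared_mlp(descriptor):
        # The ReLU between the two layers is an assumption borrowed from CBAM.
        return np.maximum(descriptor @ w0, 0.0) @ w1

    gate = sigmoid(shared_mlp(gap) + shared_mlp(gmp))  # shape (C,)
    return feat * gate  # broadcast over height and width

rng = np.random.default_rng(0)
channels, ratio = 288, 16  # the reduction ratio of 16 is an assumed value
feat = rng.standard_normal((14, 14, channels))
w0 = rng.standard_normal((channels, channels // ratio)) * 0.1
w1 = rng.standard_normal((channels // ratio, channels)) * 0.1

refined = channel_attention(feat, w0, w1)
print(refined.shape)
```

Since every gate value lies between 0 and 1, the module can only attenuate channels relative to one another; it never changes the sign or spatial layout of a feature map.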

Next, we used our spatial attention module to find the inter-spatial relationship in the feature map. Spatial attention encodes the spatial area of features by aggregating the local features at each spatial position to capture the inter-spatial relationship [17]. Spatial attention is commonly constructed by computing statistics across the channel dimension. We obtained a spatially refined feature map $F_s$ as follows:
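Assuming the CBAM-style formulation that the surrounding definitions describe (the operator names $f$, $\mathrm{AvgPool}$, and $\mathrm{MaxPool}$, and the symbol $\otimes$ for element-wise multiplication, are notational assumptions), the spatial refinement can be written as:

$$
F_s = \sigma\Big(f\big([\mathrm{AvgPool}(F);\, \mathrm{MaxPool}(F)]\big)\Big) \otimes F
$$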

Here, $\sigma$ is the sigmoid activation. $f$ denotes a convolution operation with stride, aggregating high-level features into a feature map with one channel. $\mathrm{AvgPool}$ is the average operation of values across the channel dimension, and $\mathrm{MaxPool}$ is the maximum operation of values across the channel dimension.

For the feature map from the convolution layer, we computed the average and max pooling operations across the channel axis. Then, we concatenated the average-pooled and max-pooled features and applied a convolution layer. These processes are similar to CBAM, but the convolution layer is different. CBAM adopts a standard convolution layer, whereas we used an unbiased convolution (a convolution without a bias term) with a stride and activated the refined features using sigmoid. Because the convolution layer calculates spatial attention, we used an unbiased convolution layer to reduce the risk of model overfitting due to a bias that diversifies the model computation. In addition, we set the number of output channels to one to aggregate and regularize the spatial information in the average-pooled and max-pooled features.

CBAM applies the spatial attention module to the channel-wise refined features and adopts sigmoid activation, which is argued to show better performance. However, we applied the spatial attention module and the channel-wise attention module separately. By separately extracting the spatial and channel relationship information from the feature map produced by the convolution block, the relationships of the original characteristics can be detected more widely. In addition, we computed the element-wise sum of the spatially refined features and the channel-refined features to reduce information transformation when learning high-level neural features.
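A minimal NumPy sketch of the spatial branch and the parallel element-wise sum is given below. It is a sketch under assumptions, not the authors' implementation: the 7 × 7 kernel, the unit stride with zero padding, and the random stand-in for the channel gate are all assumed, and the helper names are hypothetical.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def conv2d_same(x, kernel):
    """Bias-free convolution of x (H, W, Cin) with kernel (k, k, Cin) -> (H, W).

    Stride 1 and zero padding are assumptions; k must be odd.
    """
    k = kernel.shape[0]
    pad = k // 2
    padded = np.pad(x, ((pad, pad), (pad, pad), (0, 0)))
    height, width = x.shape[:2]
    out = np.zeros((height, width))
    for i in range(k):
        for j in range(k):
            out += padded[i:i + height, j:j + width, :] @ kernel[i, j]
    return out

def spatial_attention(feat, kernel):
    """Rescale each spatial position of feat (H, W, C) by a gate in (0, 1)."""
    avg = feat.mean(axis=2, keepdims=True)  # average across channels
    mx = feat.max(axis=2, keepdims=True)    # maximum across channels
    descriptor = np.concatenate([avg, mx], axis=2)  # (H, W, 2)
    gate = sigmoid(conv2d_same(descriptor, kernel))  # single-channel map
    return feat * gate[..., None]

def va_module(feat, kernel, channel_gate):
    """Apply both attentions to the same input and sum the results."""
    return spatial_attention(feat, kernel) + feat * channel_gate

rng = np.random.default_rng(0)
feat = rng.standard_normal((14, 14, 288))
kernel = rng.standard_normal((7, 7, 2)) * 0.1      # 7 x 7 is an assumed size
channel_gate = sigmoid(rng.standard_normal(288))   # stand-in for the channel branch

fused = va_module(feat, kernel, channel_gate)
print(fused.shape)
```

Both branches read the same input feature map, in contrast to the sequential channel-then-spatial ordering of CBAM, and their outputs are combined by addition.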

The features from the third VA block, which includes the convolution blocks and both the spatial and channel-wise attention modules, were normalized by GN and activated by ReLU. In addition, we obtained an average-pooled feature map of size 1 × 1 × 288 by calculating the average of each feature map. The average-pooled feature map enters a fully connected (FC) layer with 256 neurons, which limits redundancy, and the softmax classifier then identifies emotions from the log-mel spectrogram.

This model was pretrained on large datasets such as the Toronto Emotional Speech Set (TESS) [55] and the Ryerson Audio-Visual Database of Emotional Speech and Song (RAVDESS) for detecting emotions in log-mel spectrograms. The model architecture and pretrained weights are transferred to the learning process of the target datasets. In the next section, we describe how we leverage the pretrained model and the visual vocabulary from the BOVW when learning other speech datasets.

3.2. Fusing Fine-Tuned Model and Attention Weight with Bag of Visual Words

In this section, we describe the fine-tuning method for learning high-level features of small datasets using layers pretrained on a large dataset. Transfer learning performs better when the first several layers are frozen and the remaining layers are fine-tuned, provided that the target-domain dataset differs from the source-domain dataset and has fewer training samples than the source-domain dataset. Speech datasets are closely related in that they contain emotions, but they differ in features such as language, intensity, and speed. Therefore, when reusing the layers pretrained on the large dataset, we froze the layers up to the first VA block and unfroze and retrained the other layers. In the fine-tuning model, we downsampled the feature maps to 7 × 7 with average pooling to aggregate them while preserving the localization of the high-level neural features. Furthermore, we reshaped the feature maps to 49 × 288 to jointly learn with the feature vectors from the BOVW and to adapt them to the FC layer.

In addition, to learn specific features of the target dataset, we jointly learned the feature vector extracted by analyzing the log-mel spectrogram through the BOVW and the fine-tuned model. The proposed fine-tuned model is shown in Figure 3.

We ensembled the BOVW and the fine-tuned model to improve classification performance by learning from both the feature vectors, which express which parts of the log-mel spectrogram primarily appear for each emotion, and the high-level neural features learned by the VACNN model. The bag of words (BOW) is a feature extraction technique primarily used in NLP; it represents each sentence or document as a feature vector by counting the number of times each word appears in it. The BOW model is also applicable to vision-recognition research as the BOVW. We used the BOVW to represent the features of the log-mel spectrogram in three steps. First, we extracted local features from the images using the KAZE descriptor, which is robust to rotation, scale, and limited affine transformations. Then, we divided the feature space using K-means clustering and defined the center point of each group as a visual word. In this step, we used the silhouette value, which measures how similar a point is to its own cluster compared to other clusters. Previous studies [56,57] report that the silhouette achieves the best result in most cases. In addition, [57] reported that the silhouette shows the most robust performance among the cluster validity indices in their research. Given observation $i$, let $a(i)$ be the average dissimilarity between the data point and all other points in its own cluster. Let $b(i)$ be the lowest average dissimilarity between the data point and all data points in another cluster. The silhouette $s(i)$ for each data point $i$ is computed as follows:

$$
s(i) = \frac{b(i) - a(i)}{\max\{a(i),\, b(i)\}}
$$

The silhouette value ranges from −1 to 1; an average silhouette value close to 1 indicates that the points are correctly placed in their clusters. We computed the average silhouette value for each candidate number of visual words, and then selected the largest number of clusters that showed a high silhouette value.
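As a small worked illustration of the silhouette definition, the following NumPy sketch computes the per-point silhouette with Euclidean distance as the dissimilarity. The toy points and labelings are made up for the example, and assigning 0 to a point that is alone in its cluster is a common convention assumed here.

```python
import numpy as np

def silhouette_values(points, labels):
    """Per-point silhouette s(i) = (b(i) - a(i)) / max(a(i), b(i)).

    Requires at least two clusters. Uses Euclidean distance as the dissimilarity.
    """
    points = np.asarray(points, dtype=float)
    labels = np.asarray(labels)
    dists = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
    clusters = np.unique(labels)
    scores = np.zeros(len(points))
    for i in range(len(points)):
        own = labels == labels[i]
        n_own = own.sum()
        if n_own == 1:
            continue  # assumed convention: a singleton cluster scores 0
        # a(i): mean distance to the other members of the same cluster
        a = dists[i, own].sum() / (n_own - 1)
        # b(i): lowest mean distance to the members of any other cluster
        b = min(dists[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return scores

toy_points = [[0, 0], [0, 1], [10, 0], [10, 1]]
good = silhouette_values(toy_points, [0, 0, 1, 1]).mean()
bad = silhouette_values(toy_points, [0, 1, 0, 1]).mean()
print(good > bad)
```

Grouping the two nearby pairs gives an average silhouette close to 1, whereas pairing distant points gives a negative average, which is the signal used to compare candidate numbers of visual words.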

Finally, we created histograms over 128 visual words as feature vectors to represent the features of the log-mel spectrogram. We chose the number of visual words that gave the best performance on the validation set. The feature vector is fed into an MLP layer with 49 neurons to squeeze the information of the feature vector. We then expanded the dimensions and fed the result into an MLP layer with 288 neurons to assist the fine-tuned model.
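The histogram step can be sketched as a nearest-visual-word count. The sketch below uses NumPy only; the descriptors and visual words are tiny hand-made stand-ins (in the method they would come from the KAZE descriptor and the K-means centers), and returning raw counts without normalization is an assumption.

```python
import numpy as np

def bovw_histogram(descriptors, visual_words):
    """Count how many local descriptors fall nearest to each visual word.

    descriptors: (n, d) local features of one spectrogram image.
    visual_words: (k, d) cluster centers. Returns a length-k count vector.
    """
    descriptors = np.asarray(descriptors, dtype=float)
    visual_words = np.asarray(visual_words, dtype=float)
    # Squared Euclidean distance from every descriptor to every visual word.
    sq_dists = ((descriptors[:, None, :] - visual_words[None, :, :]) ** 2).sum(axis=2)
    nearest = sq_dists.argmin(axis=1)
    return np.bincount(nearest, minlength=len(visual_words))

# Hypothetical 2-D stand-ins for descriptors and two visual words.
words = [[0.0, 0.0], [10.0, 10.0]]
local_features = [[0.0, 1.0], [1.0, 0.0], [9.0, 9.0]]
histogram = bovw_histogram(local_features, words)
print(histogram)  # [2 1]
```

With 128 visual words the same function yields the 128-dimensional feature vector that is then passed to the MLP layers.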

In addition, we applied context attention to help the fine-tuned model jointly learn the refined feature vector. Whereas the aforementioned visual attention emphasizes the salient features of the feature map learned by the convolution layer, context attention compresses all the necessary information of sequence data into a fixed-length vector, in a manner different from visual attention. In our implementation, the attention vectors are computed and applied to the features extracted from the VACNN, as follows:
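Assuming the Luong-style multiplicative attention that the surrounding text describes (all symbol names here are notational assumptions: $h_t$ for the last hidden state, $\bar{h}_i$ for the $i$-th of $n$ hidden states, $W$ for the learnable weights, $\alpha_i$ for the attention weights, $c$ for the context vector, and $a$ for the attention vector), the computation can be written as:

$$
\begin{aligned}
\mathrm{score}(h_t, \bar{h}_i) &= h_t \cdot W \bar{h}_i, \\
\alpha_i &= \frac{\exp\big(\mathrm{score}(h_t, \bar{h}_i)\big)}{\sum_{j=1}^{n} \exp\big(\mathrm{score}(h_t, \bar{h}_j)\big)}, \\
c &= \sum_{i=1}^{n} \alpha_i \bar{h}_i, \\
a &= \tanh\big([c;\, h_t]\big)
\end{aligned}
$$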

Here, $h_t$ denotes the last hidden state of the refined feature vector for the target sequence, $\cdot$ denotes the dot product, and $\bar{h}_i$ denotes the hidden states of the refined feature vector for the input sequence. $i$ denotes the index of the hidden states, $n$ denotes the number of hidden states, and $[\,;\,]$ denotes concatenation. $W$ is a learnable parameter of an MLP. In addition, $F$ denotes the features extracted using the VACNN model. We use all the hidden states of the refined feature vector $\bar{h}_i$ to calculate the scores using Luong's multiplicative style [58], which transforms all the hidden states with an unbiased MLP and takes the dot product with the last hidden state to compare their similarity. Then, we apply softmax to these scores to produce the attention weights $\alpha_i$, which give a probabilistic interpretation of which state $\bar{h}_i$ should be attended to or ignored. The context vector $c$ is generated by performing an element-wise multiplication of $\alpha_i$ with each state $\bar{h}_i$ and summing the results; that is, the context vector is the sum of the hidden states weighted by the attention weights. We obtain an attention vector $a$ by applying a hyperbolic tangent (tanh) to the concatenation of the context vector $c$ and the last hidden state $h_t$. For joint learning with the feature vectors, we fed the attention vector $a$ into the feature maps $F$ of the VACNN using element-wise multiplication and used tanh as the nonlinear activation. Then, we obtain the summarized features by computing an element-wise sum, which promotes efficient learning of the salient features in both the high-level neural features of the VACNN and the feature vector from the BOVW. We applied ReLU activation and then classified emotions using a classification layer and a softmax classifier over the output classes.
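The attention and fusion steps can be sketched in NumPy as follows. Several details are assumptions made so that the shapes work out and are not confirmed by the text: the refined BOVW feature vector is treated as 49 hidden states of dimension 288, a projection `w_concat` maps the concatenated vector back to 288 dimensions (as in Luong's attentional vector), and the element-wise sum is taken with the VACNN features themselves. All weights are random placeholders.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()

def context_attention(hidden, w_score, w_concat):
    """hidden: (n, d) hidden states; returns the attention vector and weights."""
    h_last = hidden[-1]                          # last hidden state, (d,)
    scores = hidden @ w_score @ h_last           # multiplicative scores, (n,)
    weights = softmax(scores)                    # attention weights, sum to 1
    context = weights @ hidden                   # weighted sum of states, (d,)
    joined = np.concatenate([context, h_last])   # (2d,)
    attention = np.tanh(w_concat @ joined)       # assumed projection to (d,)
    return attention, weights

def fuse(features, attention):
    """Gate VACNN features (n, d) with the attention vector, then sum and ReLU."""
    gated = np.tanh(features * attention)        # broadcast over the n positions
    return np.maximum(gated + features, 0.0)     # sum with features is assumed

rng = np.random.default_rng(0)
n_states, dim = 49, 288
hidden = rng.standard_normal((n_states, dim)) * 0.1          # refined BOVW vector
w_score = rng.standard_normal((dim, dim)) * 0.05             # bias-free weights
w_concat = rng.standard_normal((dim, 2 * dim)) * 0.05        # assumed projection
vacnn_features = rng.standard_normal((n_states, dim))        # reshaped 7 x 7 x 288

attention, weights = context_attention(hidden, w_score, w_concat)
summarized = fuse(vacnn_features, attention)
print(attention.shape, summarized.shape)
```

The fused output keeps the 49 × 288 layout of the reshaped feature maps, so it can be passed directly to the classification layer.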