Low Dimensional Discriminative Representation of Fully Connected Layer Features Using Extended LargeVis Method for High-Resolution Remote Sensing Image Retrieval

Abstract

1. Introduction

2. Related Works

2.1. Dimensionality Reduction Method

- LargeVis reduces dimensionality from the distance relationships among data points, so it cannot reduce the high-dimensional feature of a single image on its own. LargeVis therefore has to be extended to meet the requirements of image retrieval.

- LargeVis in its original form is ill-suited to image retrieval because it is highly randomized, so repeated runs produce different dimensionality reduction results. This randomness must be eliminated while retaining the clustering characteristics of the LargeVis output.

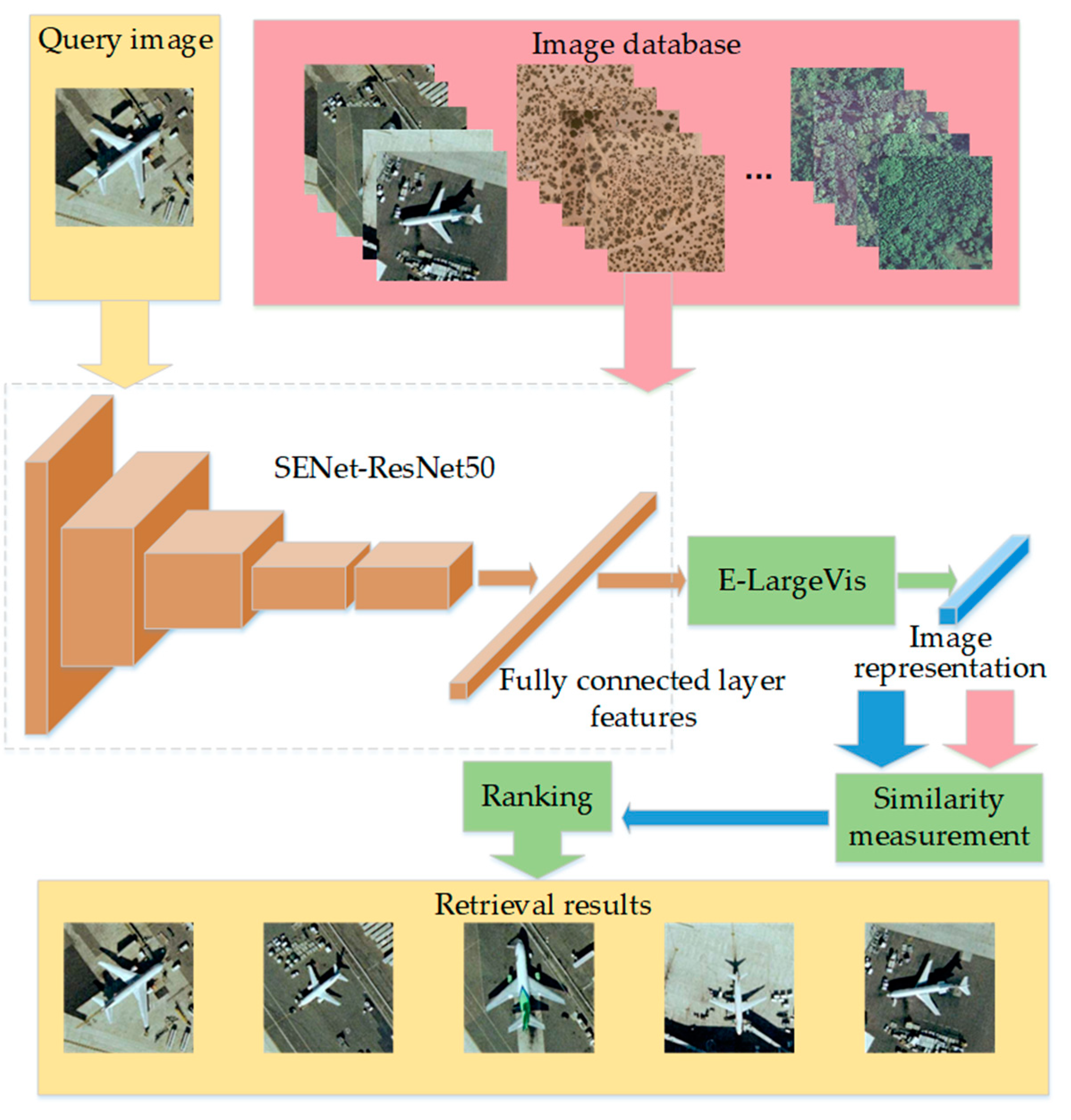

2.2. High-Resolution Remote Sensing Image Retrieval Based on CNN

3. The Proposed E-LargeVis Method

3.1. Principle of LargeVis Dimensionality Reduction Method

3.2. Extended LargeVis Method

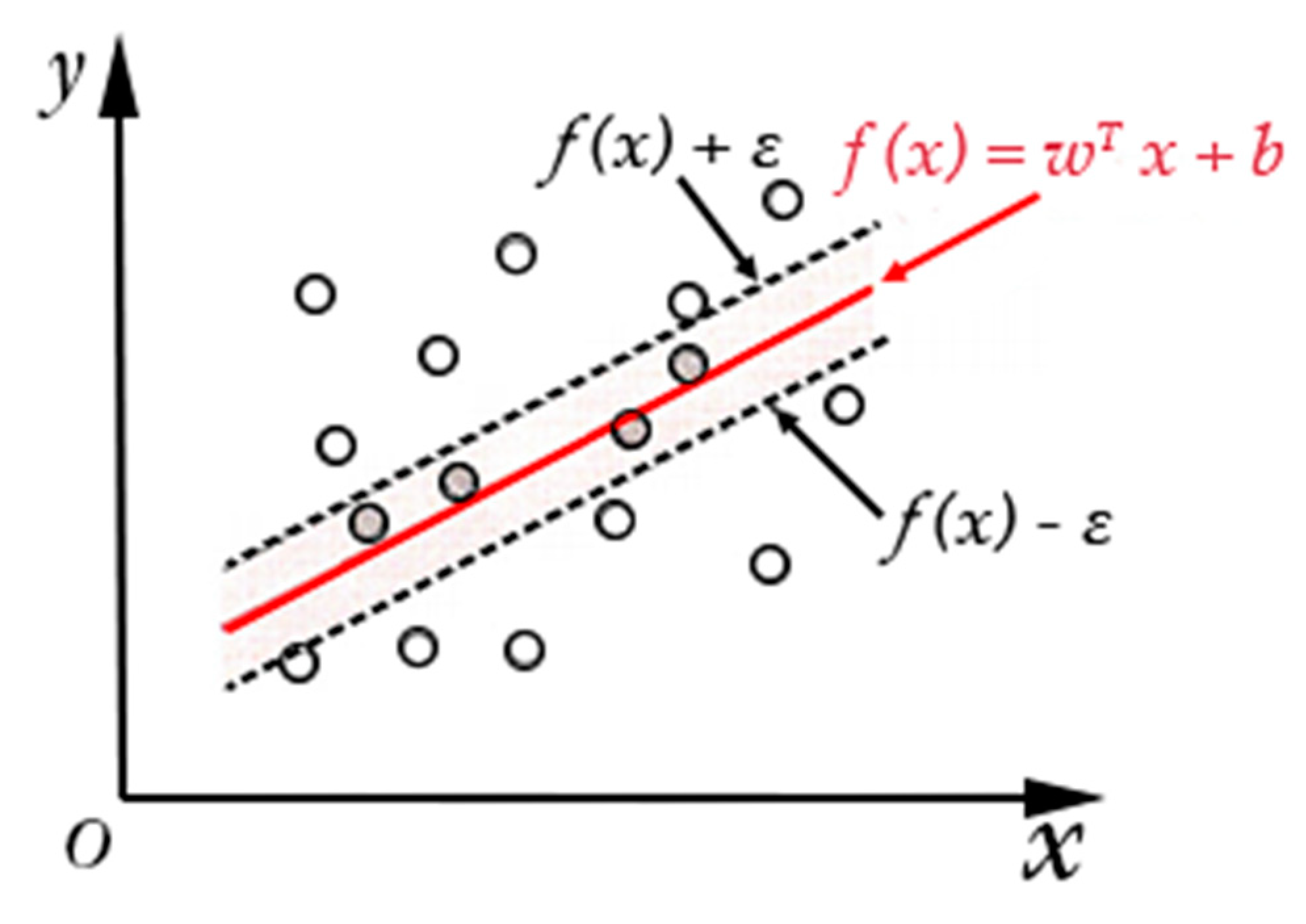

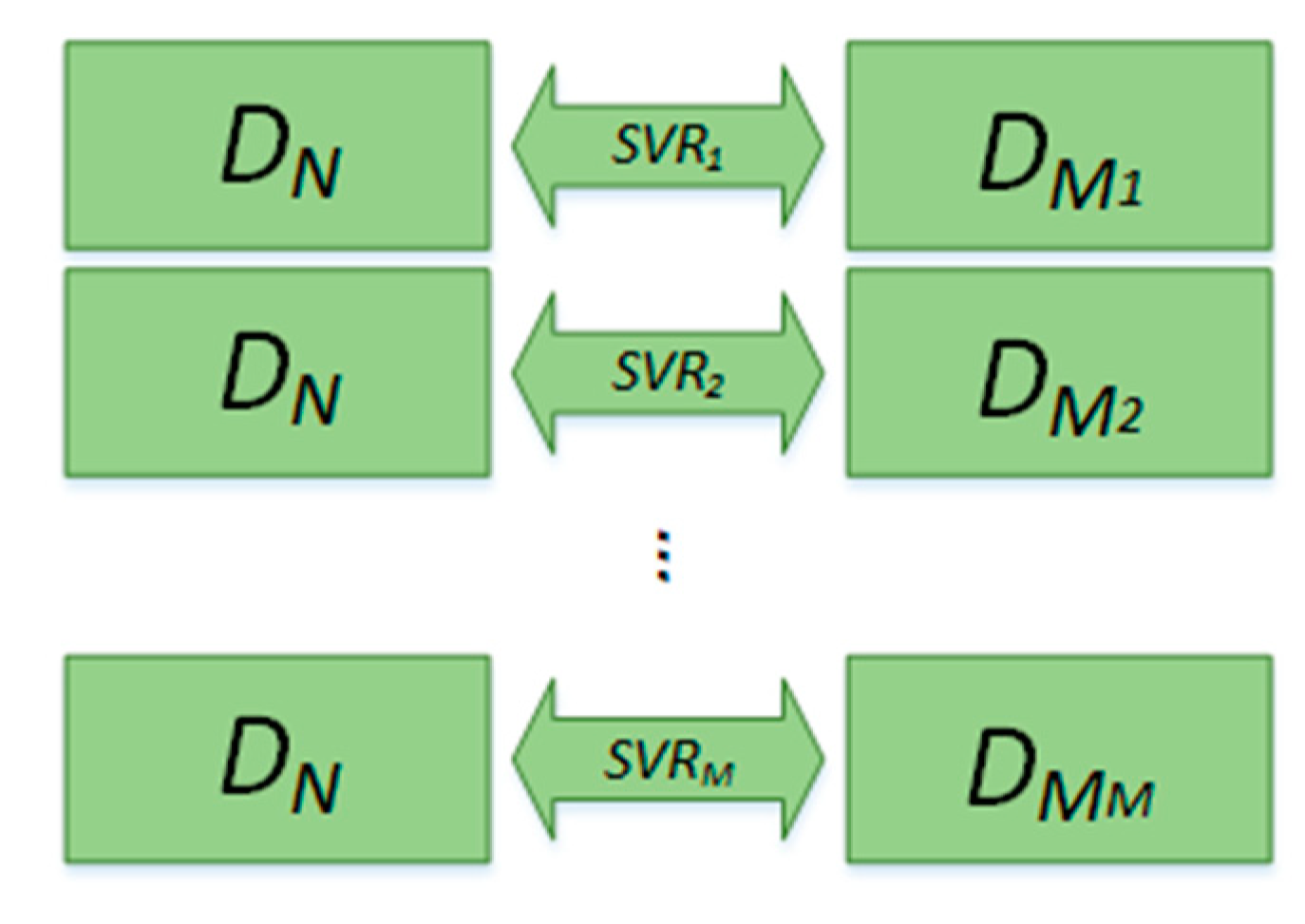

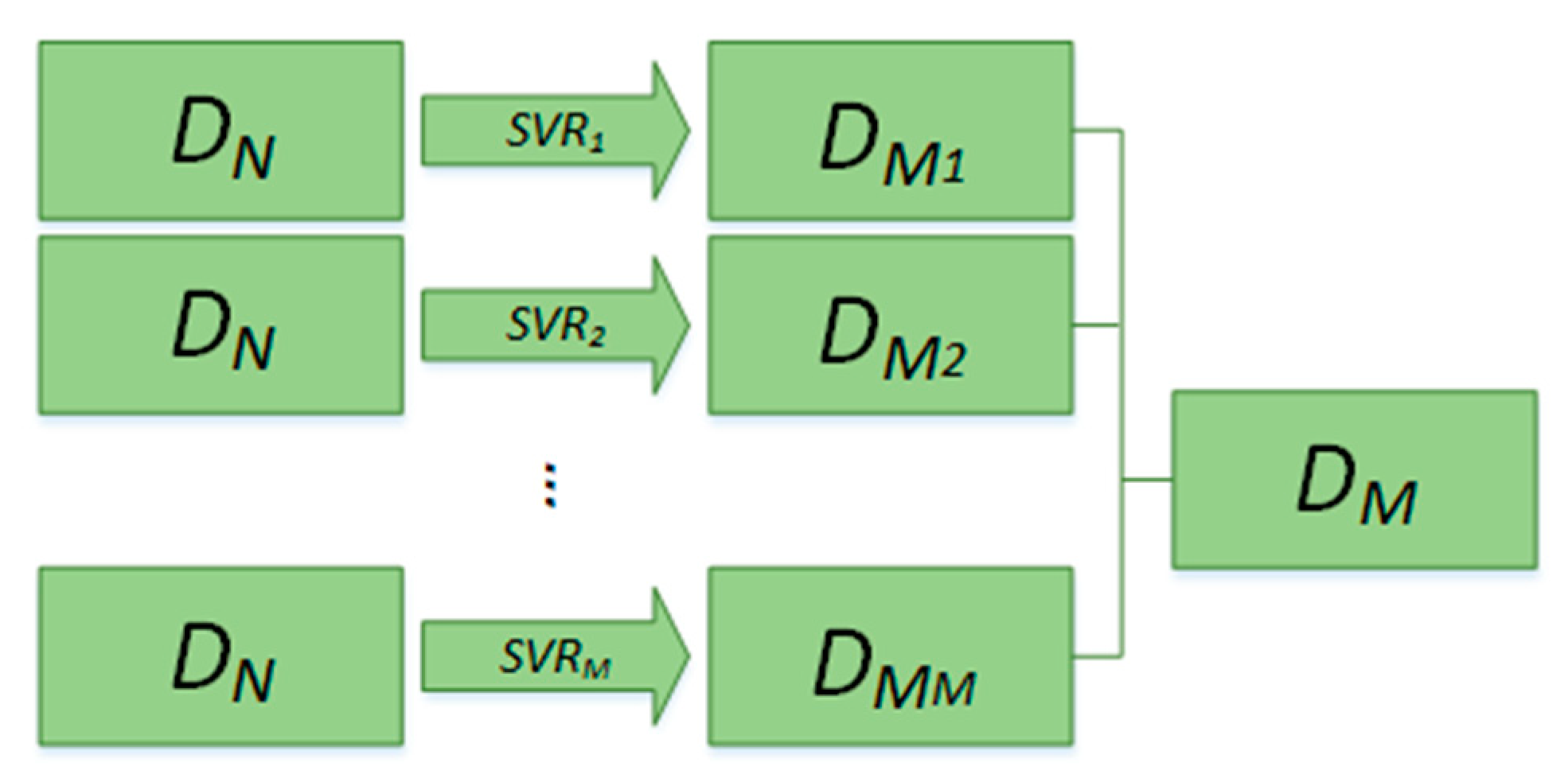

- The low-dimensional data of the whole training set are obtained with LargeVis. The high-dimensional data are reduced to several target dimensionalities to meet different requirements. Each training sample pair consists of a high-dimensional feature vector with N dimensions and the corresponding low-dimensional vector with M dimensions, whose components run from the first to the Mth dimension.

- The sample pairs are fed into support vector regression (SVR) to build a model that maps high-dimensional data to low-dimensional data. Each component of the low-dimensional data requires its own SVR model.
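
The two steps above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes scikit-learn's `SVR` (the source does not name a library), uses synthetic arrays in place of real CNN features, and uses a random nonlinear projection as a stand-in for the LargeVis output. The function names `fit_component_svrs` and `map_to_low_dim`, the kernel, and the hyperparameters are all hypothetical choices.

```python
import numpy as np
from sklearn.svm import SVR


def fit_component_svrs(high_dim, low_dim, **svr_params):
    """Fit one SVR per low-dimensional component (M models in total)."""
    models = []
    for m in range(low_dim.shape[1]):
        model = SVR(**svr_params)
        # Each model regresses a single low-dimensional coordinate
        # on the full N-dimensional input vector.
        model.fit(high_dim, low_dim[:, m])
        models.append(model)
    return models


def map_to_low_dim(models, high_dim):
    """Map high-dimensional vectors to M dimensions, one column per model."""
    return np.column_stack([model.predict(high_dim) for model in models])


# Synthetic stand-in data (assumption): N = 32, M = 4.
rng = np.random.default_rng(0)
train_high = rng.normal(size=(200, 32))
projection = rng.normal(size=(32, 4))
# Stand-in for the low-dimensional training data that LargeVis would produce.
train_low = np.tanh(train_high @ projection / 4.0)

# Illustrative hyperparameters, not values from the source.
models = fit_component_svrs(train_high, train_low, kernel="rbf", C=10.0, epsilon=0.01)

# Once fitted, the mapping is deterministic and applies to a single new vector
# or a batch, which is what the retrieval setting requires.
query_high = rng.normal(size=(5, 32))
query_low = map_to_low_dim(models, query_high)
print(query_low.shape)
```

Because the fitted models are fixed, the same input always maps to the same low-dimensional vector, which matches the motivation given earlier for removing the randomness of LargeVis at query time.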

4. High-Resolution Remote Sensing Image Retrieval Method

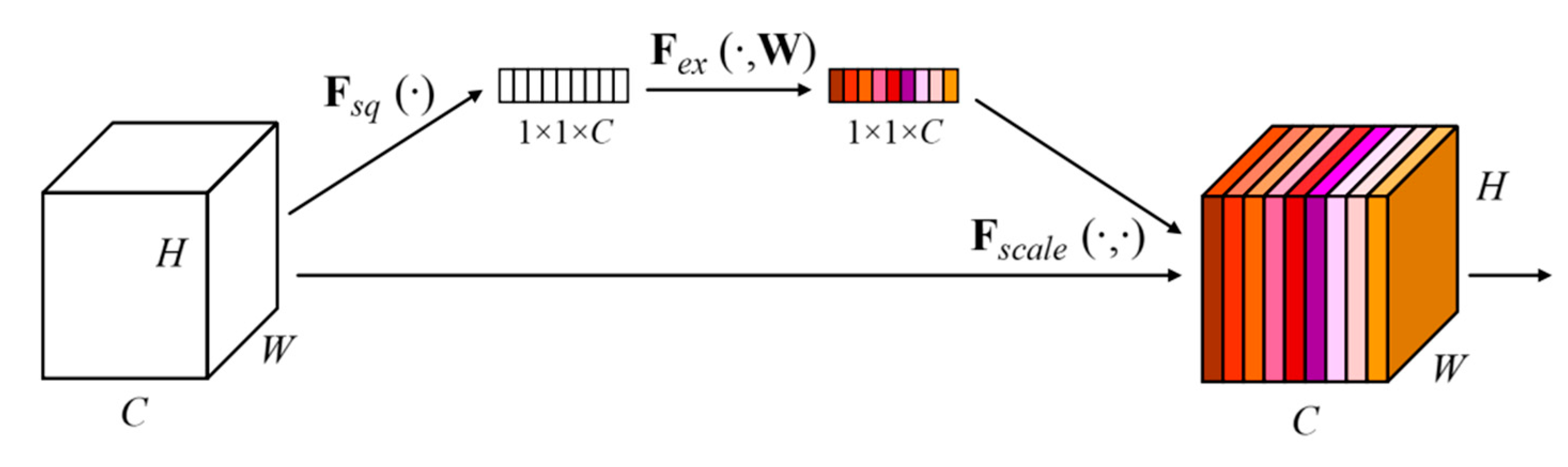

4.1. Channel Attention-Based ResNet50

4.1.1. ResNet50 Network

4.1.2. Channel Attention Mechanism

4.2. Similarity Measurement

5. Experimental Results and Analysis

5.1. Datasets and Evaluation Metric

5.2. Experimental Setting

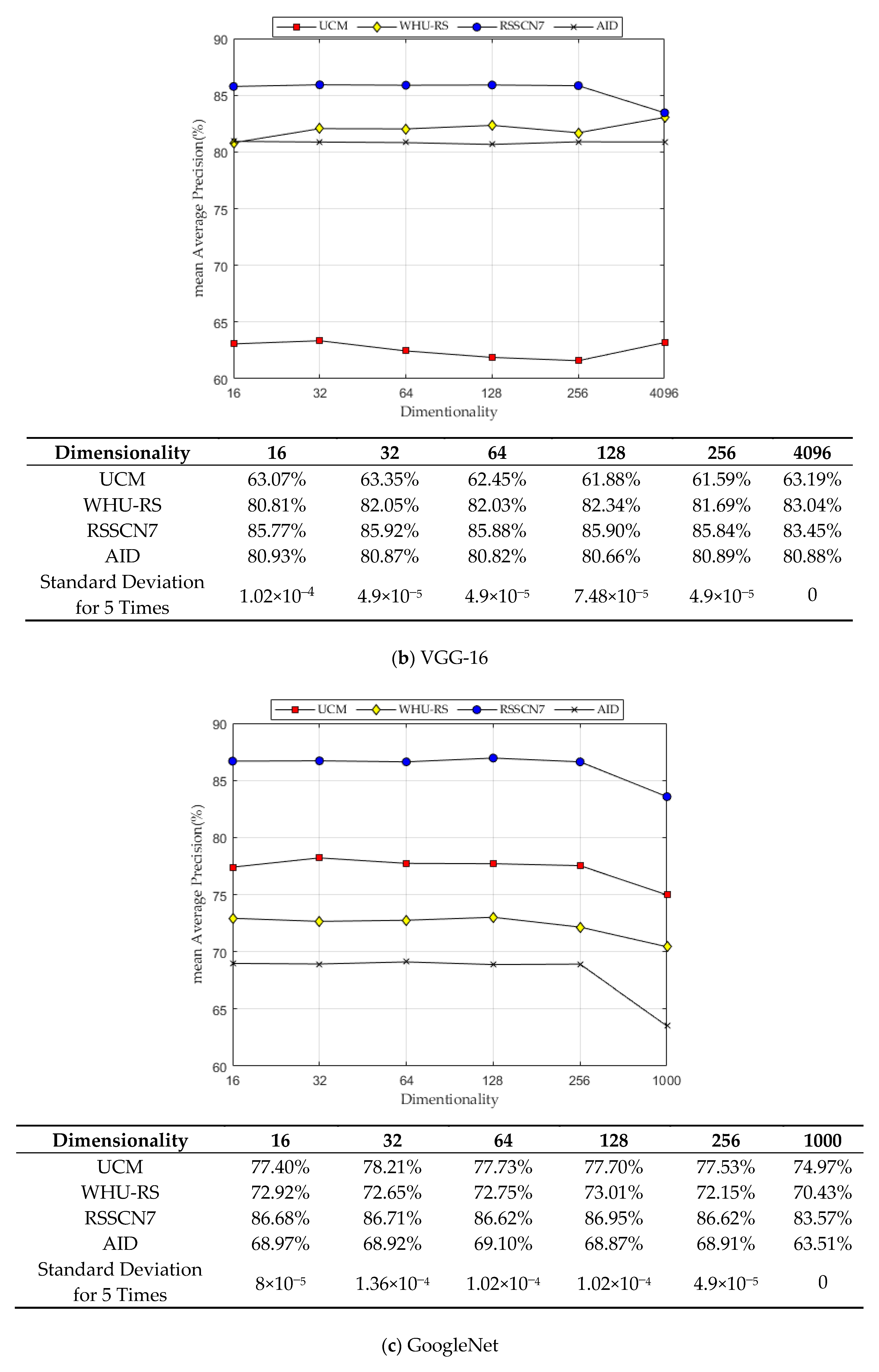

5.3. Experiment I: Performance Comparison of Different CNN

5.4. Experiment II: Performance Comparison of SVR Regression Method

5.5. Experiment III: Performance Comparison of E-LargeVis Dimensionality Reduction

5.6. Experiment IV: Performance Comparison of Euclidean and Other Similarity Measurement Methods

5.7. Experiment V: Performance Comparison of E-LargeVis and Other Dimensionality Reduction Methods

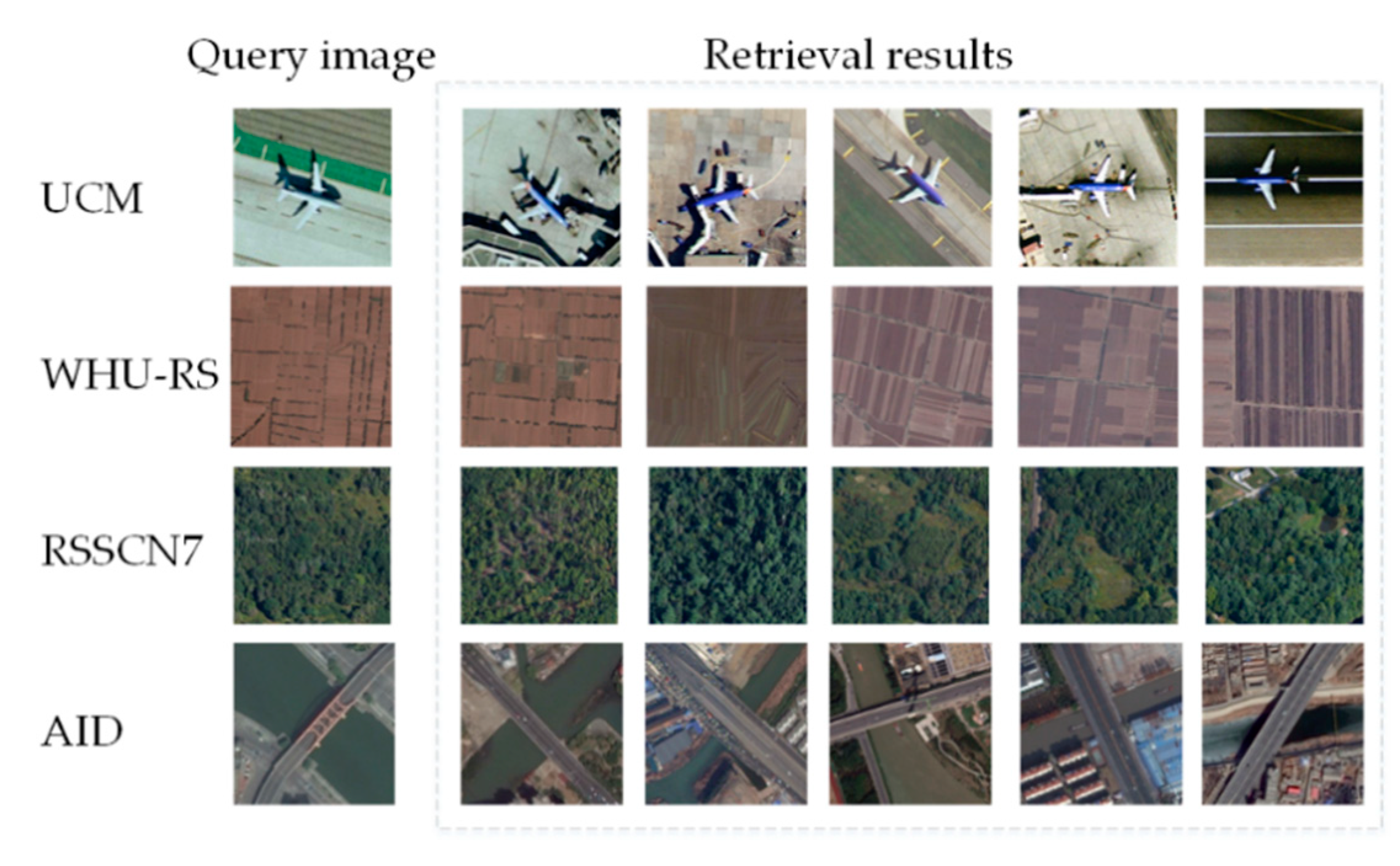

5.8. Experiment VI: Image Retrieval Results

5.9. Experiment VII: Performance Comparison with the Existing Methods

6. Discussion

7. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Faloutsos, C.; Barber, R.; Flickner, M.; Hafner, J.; Niblack, W.; Petkovic, D.; Equitz, W. Efficient and effective querying by image content. J. Intell. Inf. Syst. 1994, 3, 231–262.
2. Hinton, G.; Roweis, S. Stochastic neighbor embedding. In Proceedings of the Neural Information Processing Systems, Vancouver, BC, Canada, 8–13 December 2003; pp. 857–864.
3. Van der Maaten, L.; Hinton, G. Visualizing data using t-SNE. J. Mach. Learn. Res. 2008, 9, 2579–2605.
4. Jolliffe, I.T. Principal Component Analysis; Springer: New York, NY, USA, 1986.
5. Rayens, W.S. Discriminant analysis and statistical pattern recognition. Technometrics 1993, 35, 324–326.
6. Torgerson, W.S. Multidimensional scaling: I. Theory and method. Psychometrika 1952, 17, 401–419.
7. Roweis, S.T.; Saul, L.K. Nonlinear dimensionality reduction by locally linear embedding. Science 2000, 290, 2323–2326.
8. He, X.; Niyogi, P. Locality preserving projections. In Proceedings of the Neural Information Processing Systems, Vancouver, BC, Canada, 8–13 December 2003; pp. 234–241.
9. Tang, J.; Liu, J.; Zhang, M.; Mei, Q. Visualizing large-scale and high-dimensional data. arXiv 2016, arXiv:1602.00370.
10. Zhang, J.; Chen, L.; Zhuo, L.; Liang, X.; Li, J. An efficient hyperspectral image retrieval method: Deep spectral-spatial feature extraction with DCGAN and dimensionality reduction using t-SNE-based NM hashing. Remote Sens. 2018, 10, 271.
11. Krizhevsky, A.; Sutskever, I.; Hinton, G. ImageNet classification with deep convolutional neural networks. In Proceedings of the Neural Information Processing Systems, Lake Tahoe, NV, USA, 3–6 December 2012; pp. 1097–1105.
12. Sajjad, M.; Ullah, A.; Ahmad, J.; Abbas, N.; Rho, S.; Baik, S.W. Integrating salient colors with rotational invariant texture features for image representation in retrieval systems. Multimed. Tools Appl. 2017, 77, 4769–4789.
13. Mehmood, I.; Ullah, A.; Muhammad, K.; Deng, D.; Meng, W.; Al-Turjman, F.; Sajjad, M.; de Albuquerque, V.H.C. Efficient image recognition and retrieval on IoT-assisted energy-constrained platforms from big data repositories. IEEE Internet Things J. 2019, 6, 9246–9255.
14. He, N.; Fang, L.; Plaza, A. Hybrid first and second order attention Unet for building segmentation in remote sensing images. Sci. China Inf. Sci. 2020, 63, 14030515.
15. Zhou, W.; Newsam, S.; Li, C.; Shao, Z. Learning low-dimensional convolutional neural networks for high-resolution remote sensing image retrieval. Remote Sens. 2017, 9, 489.
16. Hu, F.; Tong, X.; Xia, G.; Zhang, L. Delving into deep representations for remote sensing image retrieval. In Proceedings of the IEEE International Conference on Signal Processing, Chengdu, China, 6–10 November 2016; pp. 198–203.
17. Xia, G.; Tong, X.; Hu, F.; Zhong, Y.; Datcu, M.; Zhang, L. Exploiting deep features for remote sensing image retrieval—A systematic investigation. arXiv 2017, arXiv:1707.07321.
18. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.
19. Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 1–9.
20. Sivic, J.; Zisserman, A. Video Google: A text retrieval approach to object matching in videos. In Proceedings of the IEEE International Conference on Computer Vision, Nice, France, 13–16 October 2003; pp. 1470–1477.
21. Perronnin, F.; Sánchez, J.; Mensink, T. Improving the fisher kernel for large-scale image classification. In Proceedings of the European Conference on Computer Vision, Crete, Greece, 5–9 September 2010; pp. 143–156.
22. Jegou, H.; Douze, M.; Schmid, C.; Perez, P. Aggregating local descriptors into a compact image representation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, San Francisco, CA, USA, 13–18 June 2010; pp. 3304–3311.
23. Babenko, A.; Lempitsky, V. Aggregating deep convolutional features for image retrieval. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 11–18 December 2015; pp. 1269–1277.
24. Kalantidis, Y.; Mellina, C.; Osindero, S. Cross-dimensional weighting for aggregated deep convolutional features. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; pp. 685–701.
25. Wang, Y.; Ji, S.; Lu, M.; Zhang, Y. Attention boosted bilinear pooling for remote sensing image retrieval. Int. J. Remote Sens. 2020, 41, 2704–2724.
26. Deng, J.; Dong, W.; Socher, R.; Li, L.; Li, K.; Li, F. ImageNet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Miami Beach, FL, USA, 20–25 June 2009; pp. 248–255.
27. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 27–30 June 2016; pp. 770–778.
28. Napoletano, P. Visual descriptors for content-based retrieval of remote-sensing images. Int. J. Remote Sens. 2018, 39, 1343–1376.
29. Xiao, Z.; Long, Y.; Li, D.; Wei, C.; Tang, G.; Liu, J. High-resolution remote sensing image retrieval based on CNNs from a dimensional perspective. Remote Sens. 2017, 9, 725.
30. Li, P.; Ren, P.; Zhang, X.; Wang, Q.; Zhu, X.; Wang, L. Region-wise deep feature representation for remote sensing images. Remote Sens. 2018, 10, 871.
31. Ye, F.; Dong, M.; Luo, W.; Chen, X.; Min, W. A new re-ranking method based on convolutional neural network and two image-to-class distances for remote sensing image retrieval. IEEE Access 2019, 7, 141498–141507.
32. Cao, R.; Zhang, Q.; Zhu, J.; Li, Q.; Li, Q.; Liu, B.; Qiu, G. Enhancing remote sensing image retrieval using a triplet deep metric learning network. Int. J. Remote Sens. 2020, 41, 740–751.
33. Zhang, J.; Chen, L.; Liang, X.; Zhuo, L.; Tian, Q. Hyperspectral image secure retrieval based on encrypted deep spectral–spatial features. J. Appl. Remote Sens. 2019, 13, 018501.
34. Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 7132–7141.
35. Li, J.; Li, Y.; He, L.; Chen, J.; Plaza, A. Spatio-temporal fusion for remote sensing data: An overview and new benchmark. Sci. China Inf. Sci. 2020, 63, 140301.
36. Yang, Y.; Newsam, S. Bag-of-visual-words and spatial extensions for land-use classification. In Proceedings of the ACM Sigspatial International Conference on Advances in Geographic Information Systems, San Jose, CA, USA, 3–5 November 2010; pp. 270–279.
37. Dai, D.; Yang, W. Satellite image classification via two-layer sparse coding with biased image representation. IEEE Geosci. Remote Sens. Lett. 2011, 8, 173–176.
38. Zou, Q.; Ni, L.; Zhang, T.; Wang, Q. Deep learning based feature selection for remote sensing scene classification. IEEE Geosci. Remote Sens. Lett. 2015, 12, 2321–2325.
39. Xia, G.; Hu, J.; Hu, F.; Shi, B.; Bai, X.; Zhong, Y.; Zhang, L.; Lu, X. AID: A benchmark data set for performance evaluation of aerial scene classification. IEEE Trans. Geosci. Remote Sens. 2017, 55, 3965–3981.
40. Deselaers, T.; Keysers, D.; Ney, H. Features for image retrieval: An experimental comparison. Inf. Retr. J. 2008, 11, 77–107.
41. Li, Y.; Zhang, Y.; Huang, X.; Zhu, H.; Ma, J. Large-scale remote sensing image retrieval by deep hashing neural networks. IEEE Trans. Geosci. Remote Sens. 2018, 56, 950–965.
42. Liu, Y.; Liu, Y.; Chen, C.; Ding, L. Remote-sensing image retrieval with tree-triplet-classification networks. Neurocomputing 2020, 405, 48–61.

| Network | AlexNet | VGG-16 | GoogLeNet | ResNet50 | SENet-ResNet50 |
|---|---|---|---|---|---|
| Dimensionality | 4096 | 4096 | 1000 | 2048 | 2048 |

| Dataset | AlexNet | VGG-16 | GoogLeNet | ResNet50 | SENet-ResNet50 |
|---|---|---|---|---|---|
| UCM | 77.19% | 63.19% | 74.97% | 90.63% | 96.64% |
| WHU-RS | 72.00% | 83.04% | 70.43% | 93.76% | 97.69% |
| RSSCN7 | 81.60% | 83.45% | 83.57% | 74.22% | 85.10% |
| AID | 69.57% | 80.88% | 63.51% | 81.37% | 89.03% |

| Method | UCM | | | | | WHU-RS | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Dimensionality | 16 | 32 | 64 | 128 | 256 | 16 | 32 | 64 | 128 | 256 |
| Ridge Regression | 96.59% | 96.45% | 96.54% | 96.39% | 96.33% | 97.19% | 97.41% | 97.20% | 97.27% | 97.33% |
| Lasso | 95.68% | 95.54% | 95.63% | 95.48% | 95.42% | 96.27% | 96.49% | 96.28% | 96.35% | 96.42% |
| SVR | 98.27% | 98.13% | 98.22% | 98.07% | 98.01% | 98.88% | 99.11% | 98.89% | 98.96% | 99.03% |

| Method | RSSCN7 | | | | | AID | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Dimensionality | 16 | 32 | 64 | 128 | 256 | 16 | 32 | 64 | 128 | 256 |
| Ridge Regression | 91.64% | 91.92% | 92.10% | 92.03% | 91.97% | 92.95% | 92.67% | 92.93% | 92.92% | 92.74% |
| Lasso | 90.79% | 91.07% | 91.24% | 91.17% | 91.11% | 92.09% | 91.81% | 92.07% | 92.06% | 91.88% |
| SVR | 92.22% | 92.50% | 92.68% | 92.61% | 92.55% | 93.54% | 93.26% | 93.52% | 93.51% | 93.33% |

| Dimensionality | Method | UCM | WHU-RS | RSSCN7 | AID |
|---|---|---|---|---|---|
| 256 | Euclidean | 98.01% | 99.03% | 92.55% | 93.33% |
| 256 | Cityblock | 96.15% | 98.24% | 93.13% | 93.37% |
| 256 | Chebychev | 97.66% | 98.25% | 84.80% | 92.82% |
| 256 | Cosine | 98.01% | 99.03% | 92.55% | 93.33% |
| 256 | Correlation | 98.02% | 99.02% | 92.53% | 93.34% |
| 256 | Spearman | 89.76% | 95.04% | 92.62% | 92.42% |
| 128 | Euclidean | 98.07% | 98.96% | 92.61% | 93.51% |
| 128 | Cityblock | 97.66% | 98.22% | 90.99% | 93.31% |
| 128 | Chebychev | 97.68% | 98.74% | 89.16% | 93.25% |
| 128 | Cosine | 98.07% | 98.96% | 92.61% | 93.51% |
| 128 | Correlation | 98.09% | 98.95% | 92.57% | 93.51% |
| 128 | Spearman | 94.60% | 96.75% | 90.48% | 93.02% |
| 64 | Euclidean | 98.22% | 98.89% | 92.68% | 93.52% |
| 64 | Cityblock | 98.07% | 98.71% | 91.42% | 93.35% |
| 64 | Chebychev | 97.60% | 98.30% | 91.40% | 93.24% |
| 64 | Cosine | 98.22% | 98.89% | 92.68% | 93.52% |
| 64 | Correlation | 98.22% | 98.92% | 92.69% | 93.52% |
| 64 | Spearman | 97.36% | 96.86% | 89.53% | 93.03% |
| 32 | Euclidean | 98.13% | 99.11% | 92.50% | 93.26% |
| 32 | Cityblock | 98.22% | 98.68% | 91.85% | 93.20% |
| 32 | Chebychev | 97.61% | 98.62% | 91.30% | 93.04% |
| 32 | Cosine | 98.13% | 99.11% | 92.50% | 93.26% |
| 32 | Correlation | 98.13% | 99.13% | 92.35% | 93.25% |
| 32 | Spearman | 96.04% | 95.15% | 85.58% | 93.37% |
| 16 | Euclidean | 98.27% | 98.88% | 92.22% | 93.54% |
| 16 | Cityblock | 98.26% | 98.90% | 92.02% | 93.48% |
| 16 | Chebychev | 98.04% | 98.23% | 91.60% | 93.42% |
| 16 | Cosine | 98.27% | 98.88% | 92.22% | 93.54% |
| 16 | Correlation | 98.23% | 98.74% | 92.41% | 93.51% |
| 16 | Spearman | 94.84% | 66.44% | 90.38% | 84.06% |
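
Four of the six distance measures compared in the table above (Euclidean, Cityblock, Chebychev, and Cosine) can be sketched in plain Python as follows. This is an illustrative sketch only: the function names, the toy vectors, and the ranking helper are hypothetical, and Correlation and Spearman are omitted for brevity. The example also shows that Euclidean and cosine distance give the same ranking when every vector has unit length, which would be consistent with the identical Euclidean and Cosine rows in the table; the source does not state whether the features were normalized.

```python
import math


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cityblock(a, b):
    return sum(abs(x - y) for x, y in zip(a, b))


def chebyshev(a, b):
    return max(abs(x - y) for x, y in zip(a, b))


def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def rank_database(query, database, metric):
    """Return database indices ordered from smallest to largest distance."""
    return sorted(range(len(database)), key=lambda i: metric(query, database[i]))


def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


# Toy 3-dimensional "features" (assumption), normalized to unit length.
database = [
    l2_normalize(v)
    for v in ([1.0, 0.2, 0.0], [0.1, 1.0, 0.3], [0.9, 0.4, 0.1], [0.0, 0.2, 1.0])
]
query = l2_normalize([1.0, 0.3, 0.0])

ranking_euclidean = rank_database(query, database, euclidean)
ranking_cosine = rank_database(query, database, cosine_distance)
print(ranking_euclidean, ranking_cosine)
```

For unit-length vectors the squared Euclidean distance equals twice the cosine distance, so both measures order the database identically.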

| Method | UCM | | | | | WHU-RS | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Dimensionality | 16 | 32 | 64 | 128 | 256 | 16 | 32 | 64 | 128 | 256 |
| PCA | 97.29% | 97.17% | 96.91% | 96.74% | 96.70% | 98.85% | 98.39% | 98.07% | 98.01% | 98.02% |
| LPP | 96.94% | 87.77% | 73.50% | 77.66% | 86.53% | 98.92% | 89.59% | 78.50% | 83.72% | 80.69% |
| LLE | 67.73% | 74.01% | 81.04% | 83.35% | 85.85% | 71.18% | 83.80% | 79.42% | 82.47% | 84.59% |
| E-LargeVis | 98.27% | 98.13% | 98.22% | 98.07% | 98.01% | 98.88% | 99.11% | 98.89% | 98.96% | 99.03% |

| Method | RSSCN7 | | | | | AID | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Dimensionality | 16 | 32 | 64 | 128 | 256 | 16 | 32 | 64 | 128 | 256 |
| PCA | 87.91% | 87.01% | 86.75% | 86.70% | 86.68% | 88.56% | 92.04% | 90.54% | 89.97% | 89.71% |
| LPP | 76.07% | 76.45% | 78.71% | 85.54% | 88.66% | 82.44% | 91.74% | 82.64% | 83.96% | 85.21% |
| LLE | 67.93% | 77.41% | 79.80% | 73.51% | 76.74% | 56.00% | 57.52% | 64.49% | 75.32% | 79.35% |
| E-LargeVis | 92.22% | 92.50% | 92.68% | 92.61% | 92.55% | 93.54% | 93.26% | 93.52% | 93.51% | 93.33% |

| Author | Year | UCM | WHU-RS | RSSCN7 | AID |
|---|---|---|---|---|---|
| Hu, F. [16] | 2016 | 62.23% | 82.53% | - | - |
| Napoletano, P. [28] | 2017 | 98.05% | 98.69% | - | - |
| Zhou, W. [15] | 2017 | 54.44% | 64.60% | 46.28% | 37.61% |
| Xia, G. [17] | 2018 | 64.56% | 82.53% | 59.04% | |
| Li, Y. [41] | 2018 | 98.30% | - | - | - |
| Wang, Y. [25] | 2019 | 90.56% | 89.51% | 81.32% | - |
| Ye, F. [31] | 2019 | 95.62% | - | - | - |
| Liu, Y. [42] | 2020 | 70.39% | - | - | - |
| Cao, R. [32] | 2020 | 96.63% | - | - | - |
| Ours | | 98.27% | 99.11% | 92.68% | 93.54% |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zhuo, Z.; Zhou, Z. Low Dimensional Discriminative Representation of Fully Connected Layer Features Using Extended LargeVis Method for High-Resolution Remote Sensing Image Retrieval. Sensors 2020, 20, 4718. https://doi.org/10.3390/s20174718
