Energy Efficiency in RF Energy Harvesting-Powered Distributed Antenna Systems for the Internet of Things

Abstract

1. Introduction

- Although some existing works have investigated the EE of DAS with RF EH, they considered only power-control constraints and did not account for the users' information rate requirements; see, e.g., [17,18]. In our work, by contrast, the system EE is maximized subject to each user's minimum information rate requirement, which better reflects users' demands.

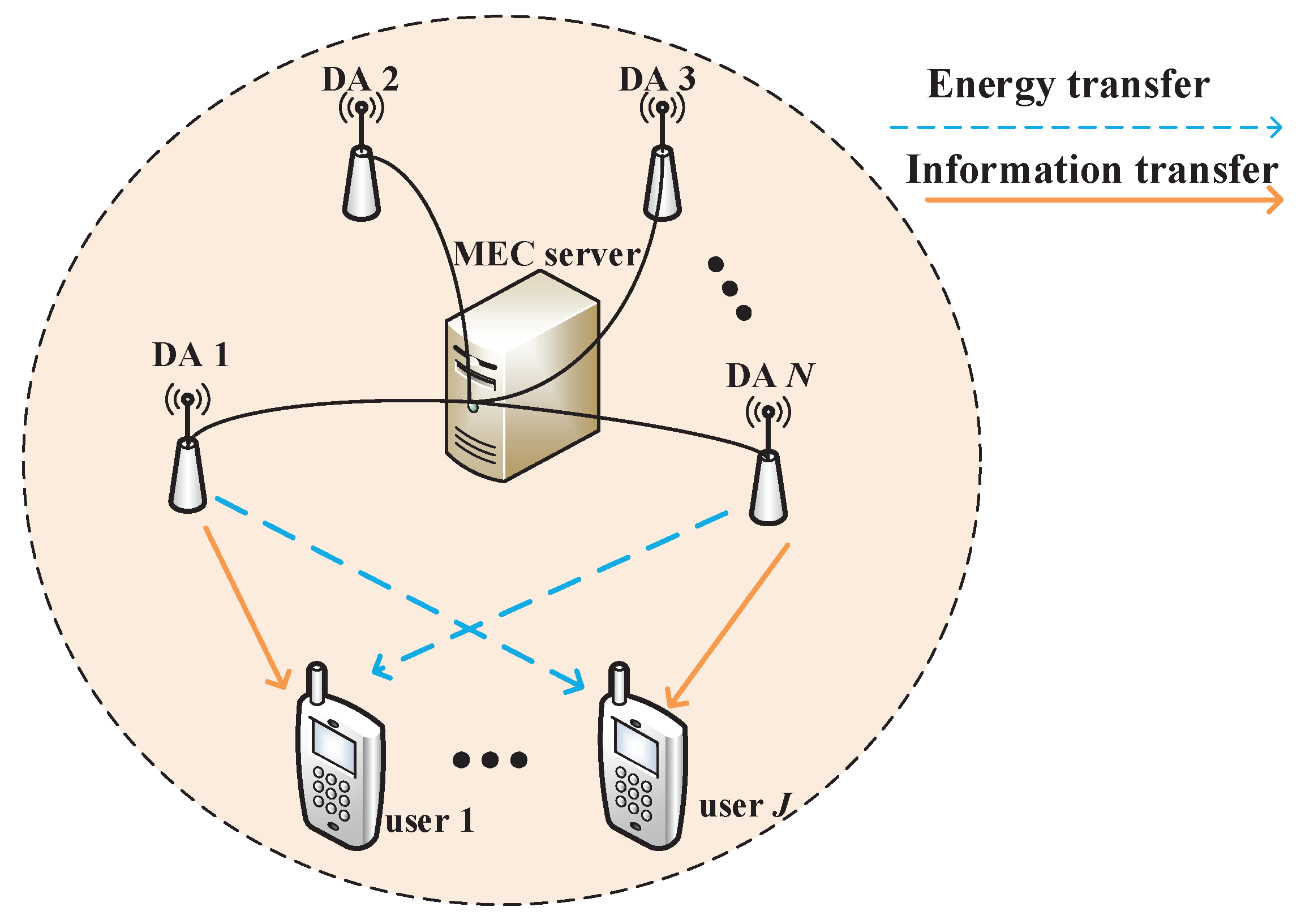

2. System Model

3. Problem Formulation and Solution

3.1. Problem Formulation

3.2. Problem Analysis

| Algorithm 1: The presented solution approach for solving problem P1. |
|---|
| 1 Initialize q |
| 2 While , do |
| 3 Initialize |
| 4 While , do |
| 5 Initialize e and |
| 6 While L keeps improving, do |
| 7 Compute the that maximizes L. |
| 8 Compute e with fixed . |
| 9 Update the Lagrangian multipliers of with the ellipsoid method via subgradients. |
| 10 Update q |
| 11 Obtain . |
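Algorithm 1 updates a parameter q in an outer loop, and the reference list includes Dinkelbach's paper on nonlinear fractional programming, so the outer loop appears to be a Dinkelbach-type iteration. The minimal Python sketch below illustrates that iteration on a toy single-link problem, not on problem P1 itself: it maximizes log2(1 + gain·p)/(p + p_circuit) subject to a minimum rate and a maximum transmit power. The function name `dinkelbach_ee`, its parameters, and the numerical values are hypothetical. The inner problem is solved in closed form here, whereas Algorithm 1 relies on an iterative inner procedure whose Lagrangian multipliers are updated with the ellipsoid method.

```python
import math


def dinkelbach_ee(gain, p_circuit, r_min, p_max, tol=1e-9, max_iter=100):
    """Toy Dinkelbach iteration (illustrative assumption, not the paper's P1).

    Maximizes EE(p) = log2(1 + gain * p) / (p + p_circuit)
    subject to log2(1 + gain * p) >= r_min and 0 <= p <= p_max.
    Returns the transmit power p and the achieved ratio q.
    """
    # Smallest power that satisfies the minimum-rate requirement.
    p_min = (2.0 ** r_min - 1.0) / gain
    if p_min > p_max:
        raise ValueError("minimum rate requirement is infeasible")

    q = 0.0      # current estimate of the optimal ratio
    p = p_max
    for _ in range(max_iter):
        # Inner problem: maximize log2(1 + gain * p) - q * (p + p_circuit).
        # It is concave in p, so the stationary point is clipped to the
        # feasible interval [p_min, p_max].
        if q > 0.0:
            p_star = 1.0 / (q * math.log(2.0)) - 1.0 / gain
        else:
            p_star = p_max  # with q = 0 the objective increases in p
        p = min(max(p_star, p_min), p_max)

        rate = math.log2(1.0 + gain * p)
        residual = rate - q * (p + p_circuit)
        if residual < tol:
            break  # q is (numerically) the optimal ratio
        q = rate / (p + p_circuit)  # Dinkelbach update of q
    return p, q


if __name__ == "__main__":
    p_opt, ee_opt = dinkelbach_ee(gain=10.0, p_circuit=0.5, r_min=1.0, p_max=2.0)
    print(f"power = {p_opt:.4f}, energy efficiency = {ee_opt:.4f}")
```

The residual of the inner problem is non-negative and shrinks to zero as q approaches the optimal ratio, which is why it serves as the stopping criterion.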

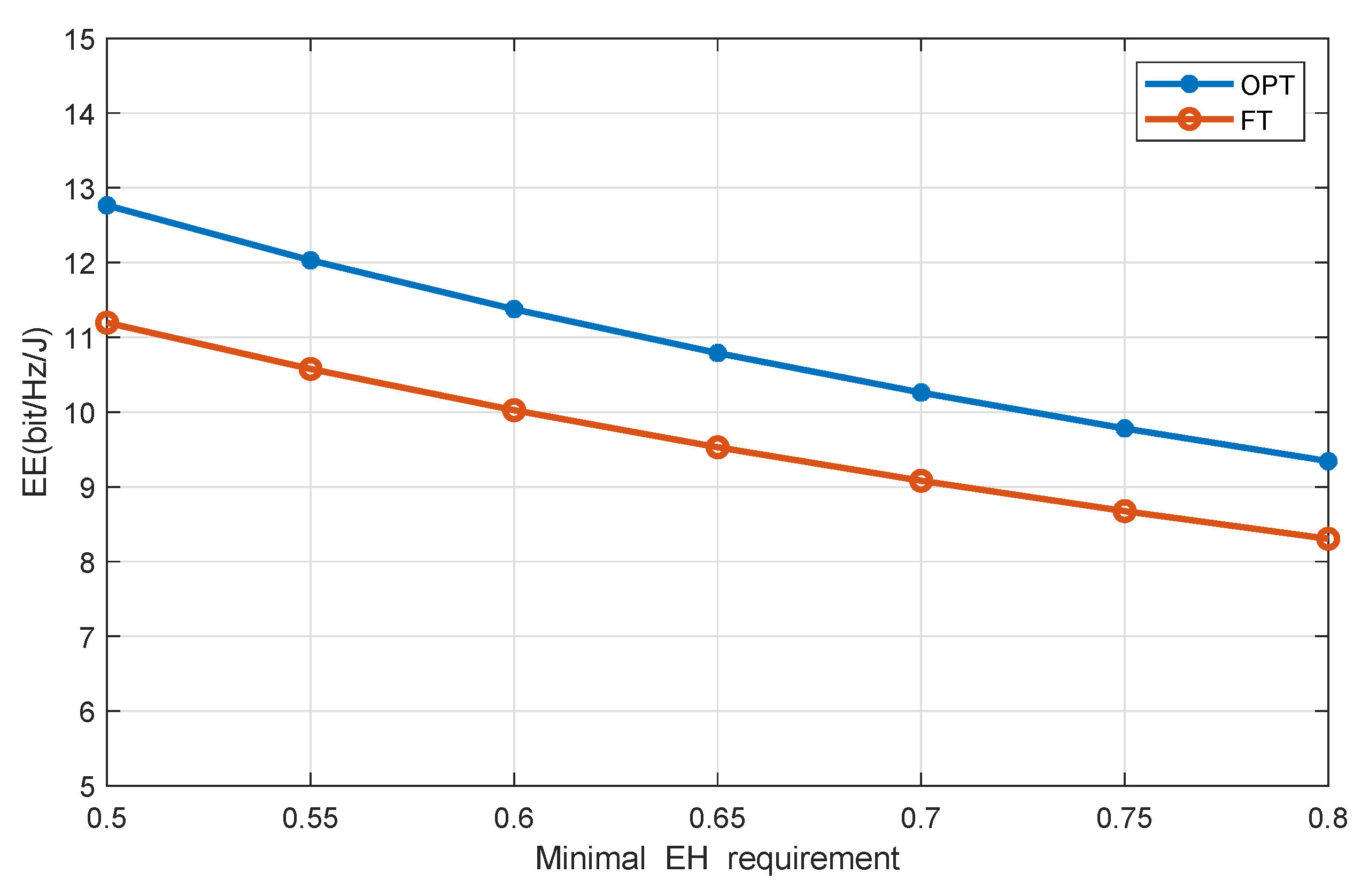

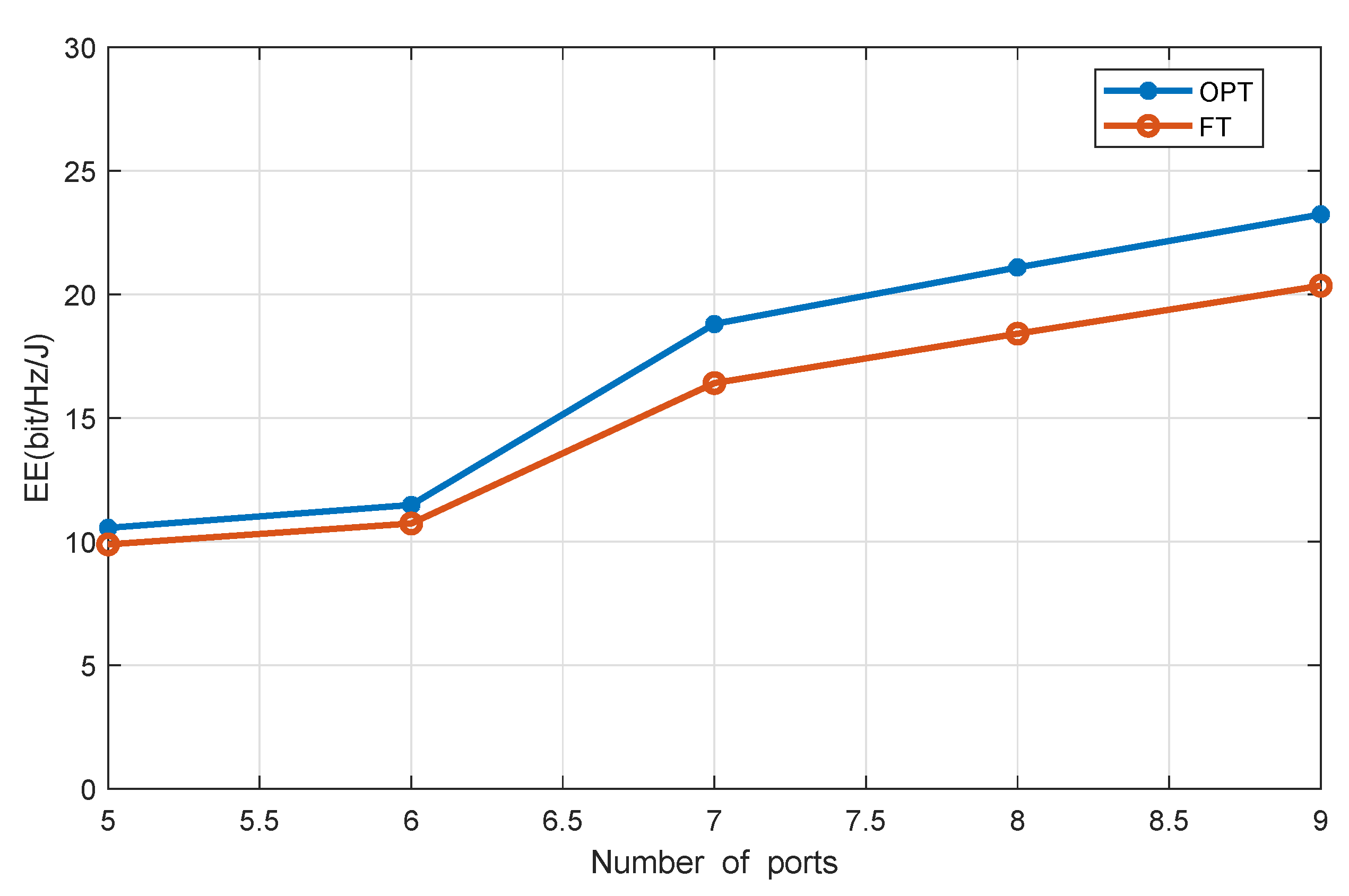

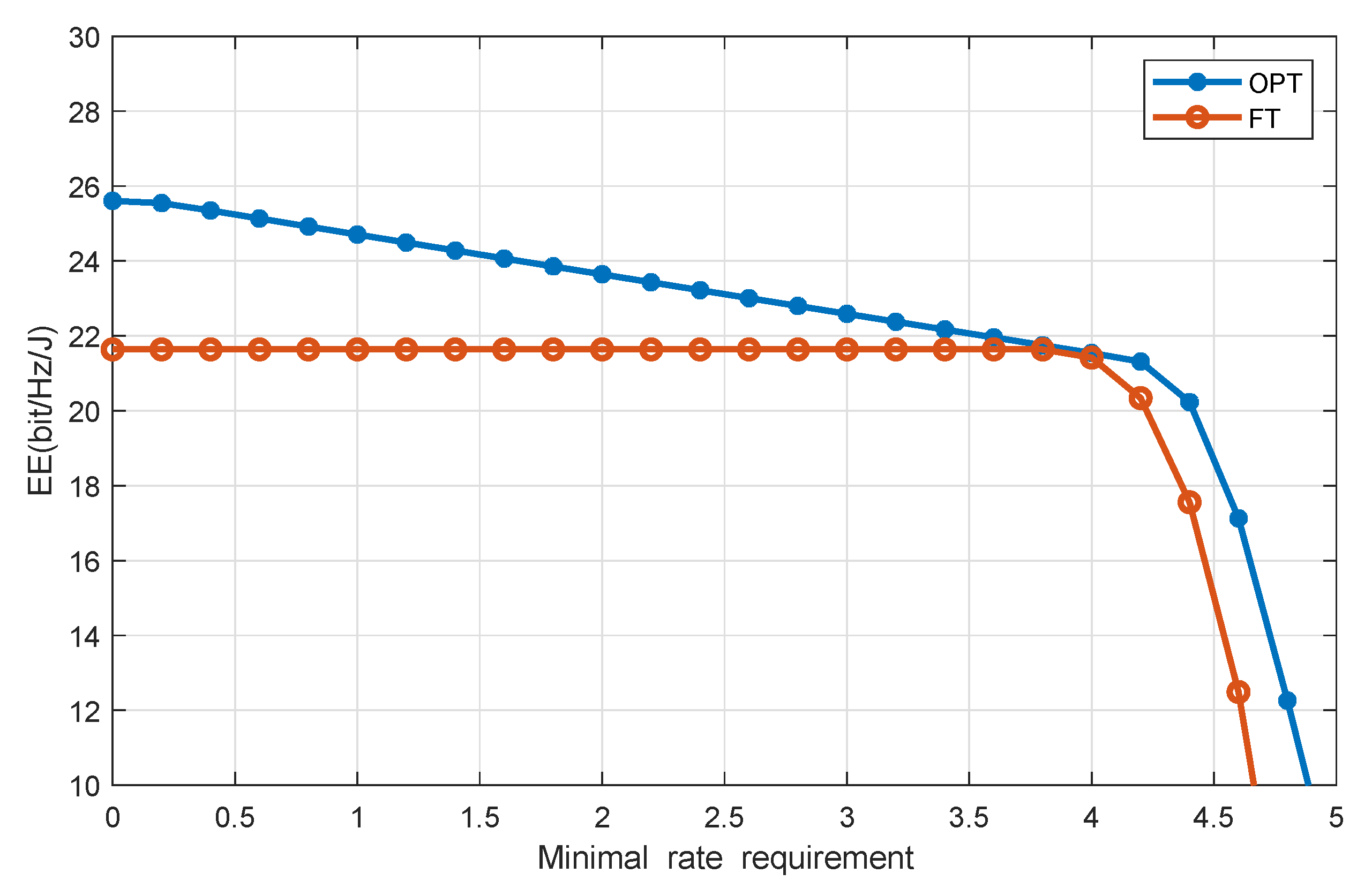

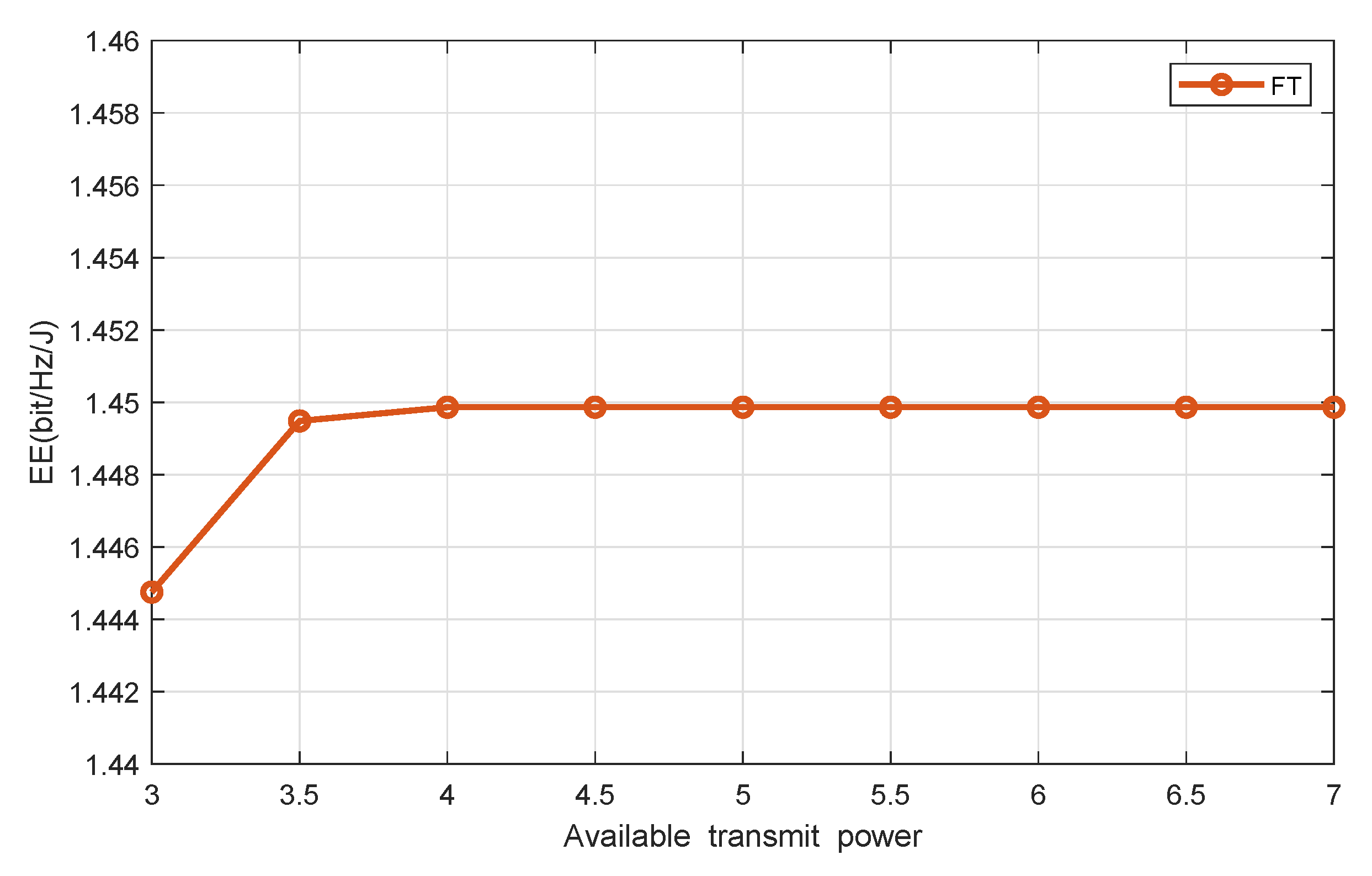

4. Simulations and Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| DAS | distributed antenna system |
| DAPs | distributed antenna ports |
| RF | radio frequency |
| TDMA | time division multiple access |
| EE | energy efficiency |
| IoT | Internet of Things |
| EH | energy harvesting |
| WPT | wireless power transmission |
| SWIPT | simultaneous wireless information and power transfer |
| MEC | mobile edge computing |
| HAP | hybrid access point |
| BCD | block-coordinate descent |
| MRT | maximum ratio transmission |
| AWGN | additive white Gaussian noise |

References

- Hui, H.W.; Zhou, C.; Xu, S.; Lin, F. A novel secure data transmission scheme in industrial internet of things. China Commun. 2020, 17, 73–88.

- Yuan, T.; Liu, M.; Feng, Y. Performance Analysis for SWIPT Cooperative DF Communication Systems with Hybrid Receiver and Non-Linear Energy Harvesting Model. Sensors 2020, 20, 2472.

- Cao, J.; Wu, Z.; Wu, J.; Xiong, H. SAIL: Summation-bAsed Incremental Learning for Information-Theoretic Text Clustering. IEEE Trans. Cybern. 2013, 43, 570–584.

- Yu, X.; Leung, S.; Xu, W.; Wang, J.; Dang, X. Precoding for Uplink Distributed Antenna Systems With Transmit Correlation in Rician Fading Channels. IEEE Trans. Commun. 2017, 65, 4966–4979.

- Wang, Z.; Vandendorpe, L. Power Allocation for Energy Efficient Multiple Antenna Systems With Joint Total and Per-Antenna Power Constraints. IEEE Trans. Commun. 2018, 66, 4521–4535.

- Feng, Y.; Zhang, W.; Ge, Y.; Lin, H. Frequency Synchronization in Distributed Antenna Systems: Pairing-Based Multi-CFO Estimation, Theoretical Analysis, and Optimal Pairing Scheme. IEEE Trans. Commun. 2019, 67, 2924–2938.

- Xiong, K.; Chen, C.; Qu, G.; Fan, P.; Letaief, K. Group Cooperation With Optimal Resource Allocation in Wireless Powered Communication Networks. IEEE Trans. Wirel. Commun. 2017, 16, 3840–3853.

- Mekikis, P.V.; Antonopoulos, A.; Kartsakli, E.; Lalos, A.S. Information Exchange in Randomly Deployed Dense WSNs with Wireless Energy Harvesting Capabilities. IEEE Trans. Wirel. Commun. 2016, 15, 3008–3018.

- Mekikis, P.V.; Lalos, A.S.; Antonopoulos, A.; Alonso, L. Wireless Energy Harvesting in Two-Way Network Coded Cooperative Communications: A Stochastic Approach for Large Scale Networks. IEEE Commun. Lett. 2014, 18, 1011–1014.

- Gupta, S.S.; Pallapothu, S.K. Ordered Transmissions for Energy-Efficient Detection in Energy Harvesting Wireless Sensor Networks. IEEE Trans. Wirel. Commun. 2020, 68, 2525–2537.

- Yuan, F.; Jin, S.; Wong, K.; Zhao, J.; Zhu, H. Wireless Information and Power Transfer Design for Energy Cooperation Distributed Antenna Systems. IEEE Access 2017, 5, 8094–8105.

- Ning, P.; Mohammad, R.; Steven, C.; Dominique, S.; Sofie, P. Transmission Strategy for Simultaneous Wireless Information and Power Transfer with a Non-Linear Rectifier Model. Electronics 2020, 9, 1082.

- Cao, J.; Bu, Z.; Wang, Y.; Yang, H.; Jiang, J.; Li, H. Detecting Prosumer-Community Group in Smart Grids From the Multiagent Perspective. IEEE Trans. Syst. Man-Cybern. Syst. 2019, 49, 1652–1664.

- Xue, L.; Wang, J.L.; Li, J.; Wang, Y.L.; Guan, X.P. Precoding Design for Energy Efficiency Maximization in MIMO Half-Duplex Wireless Sensor Networks with SWIPT. Sensors 2019, 19, 4923.

- Ojo, K.F.; Mohd Salleh, F.M. Energy Efficiency Optimization for SWIPT-Enabled Cooperative Relay Networks in the Presence of Interfering Transmitter. IEEE Commun. Lett. 2019, 23, 1806–1810.

- Salem, A.; Musavian, L.; Hamdi, K.A. Wireless Power Transfer in Distributed Antenna Systems. IEEE Trans. Wirel. Commun. 2019, 67, 737–747.

- Huang, Y.; Liu, Y.; Li, G.Y. Energy Efficiency of Distributed Antenna Systems With Wireless Power Transfer. IEEE J. Sel. Areas Commun. 2019, 37, 89–99.

- Liu, Y.; He, C.; Li, X.; Zhang, C.; Tian, C. Power Allocation Schemes Based on Machine Learning for Distributed Antenna Systems. IEEE Access 2019, 7, 20577–20584.

- Rajaram, A.; Khan, R.; Tharranetharan, S.; Jayakody, D.N.K.; Dinis, R.; Panic, S. Novel SWIPT Schemes for 5G Wireless Networks. Sensors 2019, 19, 1169.

- Nguyen, H.-S.; Ly, T.T.H.; Nguyen, T.-S.; Huynh, V.V.; Nguyen, T.-L.; Voznak, M. Outage Performance Analysis and SWIPT Optimization in Energy-Harvesting Wireless Sensor Network Deploying NOMA. Sensors 2019, 19, 613.

- Nguyen, H.V.; Nguyen, V.-D.; Shin, O.-S. In-Band Full-Duplex Relaying for SWIPT-Enabled Cognitive Radio Networks. Electronics 2020, 9, 835.

- Wang, G.; Meng, C.; Heng, W.; Chen, X. Secrecy Energy Efficiency Optimization in AN-Aided Distributed Antenna Systems With Energy Harvesting. IEEE Access 2018, 6, 32830–32838.

- Zhang, M. Energy Efficiency Optimization for Secure Transmission in MISO Cognitive Radio Network With Energy Harvesting. IEEE Access 2019, 7, 126234–126252.

- Xiong, K.; Fan, P.Y.; Lu, Y.; Letaief, K. Energy Efficiency with Proportional Rate Fairness in Multirelay OFDM Networks. IEEE J. Sel. Areas Commun. 2016, 34, 1431–1447.

- Xiong, K.; Lu, Y.; Fan, P.Y. Secrecy Energy Efficiency in Multi-Antenna SWIPT Networks with Dual-Layer PS Receivers. IEEE Trans. Wirel. Commun. 2020, 19, 4290–4306.

- Lu, Y.; Xiong, K.; Fan, P.Y.; Ding, Z.; Zhong, Z.D.; Letaief, K. Global Energy Efficiency in Secure MISO SWIPT Systems With Non-Linear Power-Splitting EH Model. IEEE J. Sel. Areas Commun. 2019, 37, 216–232.

- Lu, Y.; Xiong, K.; Fan, P.Y.; Zhong, Z.D.; Letaief, K. Robust Transmit Beamforming With Artificial Redundant Signals for Secure SWIPT System Under Non-Linear EH Model. IEEE Trans. Wirel. Commun. 2018, 17, 2218–2232.

- Boshkovska, E.; Ng, D.W.K.; Zlatanov, N.; Schober, R. Practical Non-Linear Energy Harvesting Model and Resource Allocation for SWIPT Systems. IEEE Commun. Lett. 2015, 19, 2082–2085.

- Dinkelbach, W. On nonlinear fractional programming. Manage. Sci. 1967, 13, 492–498.

- Boyd, S.; Vandenberghe, L. Convex Optimization; Cambridge University Press: Cambridge, UK, 2004.

- Richtarik, P.; Takac, M. Iteration complexity of randomized block-coordinate descent methods for minimizing a composite function. Math. Program. 2011, 144, 1–38.

- Bürgisser, P.; Cucker, F. The Ellipsoid Method Condition; Springer: Berlin/Heidelberg, Germany, 2013.

| Parameters | Notation | Value |
|---|---|---|
| Noise power | | W |
| Path loss at a reference distance of 1 m | a | |
| Energy conversion efficiency | | 0.6 |
| Length of the square | l | 30 m |
| Path loss exponent | | 2 |
| Circuit power consumption | | 0.5 W |
| Weight of users | | 1 |
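To show how the listed parameters might enter a simulation, the sketch below evaluates a distance-based path-loss gain and the power harvested by a user. It is an illustration under stated assumptions, not the paper's simulation code: the linear EH model `ETA * p_tx * gain`, the function names, the DAP placement, the transmit power, and the value `REF_PATH_LOSS = 1e-3` (the table's value for a did not survive) are all hypothetical.

```python
import math
import random

ETA = 0.6             # energy conversion efficiency (from the table)
PATH_LOSS_EXP = 2.0   # path loss exponent (from the table)
SQUARE_LEN = 30.0     # side length l of the square area, in metres (from the table)
REF_PATH_LOSS = 1e-3  # ASSUMPTION: placeholder for the path loss a at 1 m


def channel_gain(distance_m):
    """Large-scale gain a * d^(-alpha); distances below 1 m are clamped to 1 m."""
    d = max(distance_m, 1.0)
    return REF_PATH_LOSS * d ** (-PATH_LOSS_EXP)


def harvested_power(p_tx_w, distance_m):
    """Harvested power in watts under an assumed linear EH model."""
    return ETA * p_tx_w * channel_gain(distance_m)


def random_position(rng):
    """Draw a point uniformly inside the square area."""
    return (rng.uniform(0.0, SQUARE_LEN), rng.uniform(0.0, SQUARE_LEN))


if __name__ == "__main__":
    rng = random.Random(0)
    user = random_position(rng)
    dap = (SQUARE_LEN / 2.0, SQUARE_LEN / 2.0)  # hypothetical DAP at the centre
    distance = math.dist(user, dap)
    print(f"distance = {distance:.2f} m, "
          f"harvested = {harvested_power(1.0, distance):.3e} W")
```

Replacing `REF_PATH_LOSS` and the linear model with the paper's actual values and EH model is necessary before comparing against the reported results.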

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Li, J.; Xiong, K.; Cao, J.; Yang, X.; Liu, T. Energy Efficiency in RF Energy Harvesting-Powered Distributed Antenna Systems for the Internet of Things. Sensors 2020, 20, 4631. https://doi.org/10.3390/s20164631
