A Subspace Based Transfer Joint Matching with Laplacian Regularization for Visual Domain Adaptation

Abstract

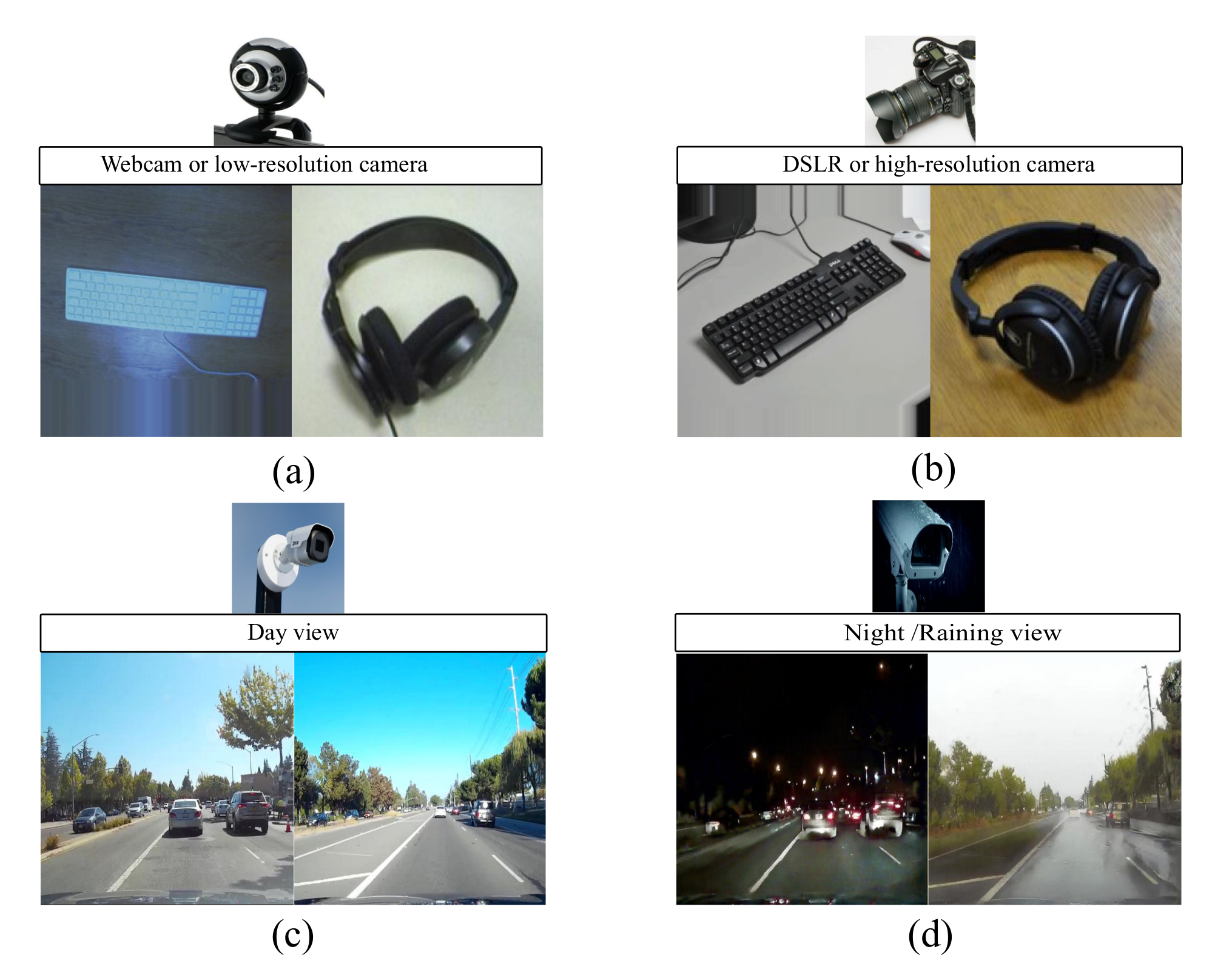

1. Introduction

- To the best of our knowledge, the proposed method STJML is the first framework that combines, in a common framework, all of the following properties, each of which the compared state-of-the-art methods cover only partially: projecting the data of both domains into a low-dimensional manifold, instance re-weighting, minimizing the differences in marginal and conditional distributions, and preserving the geometrical structure of both domains.

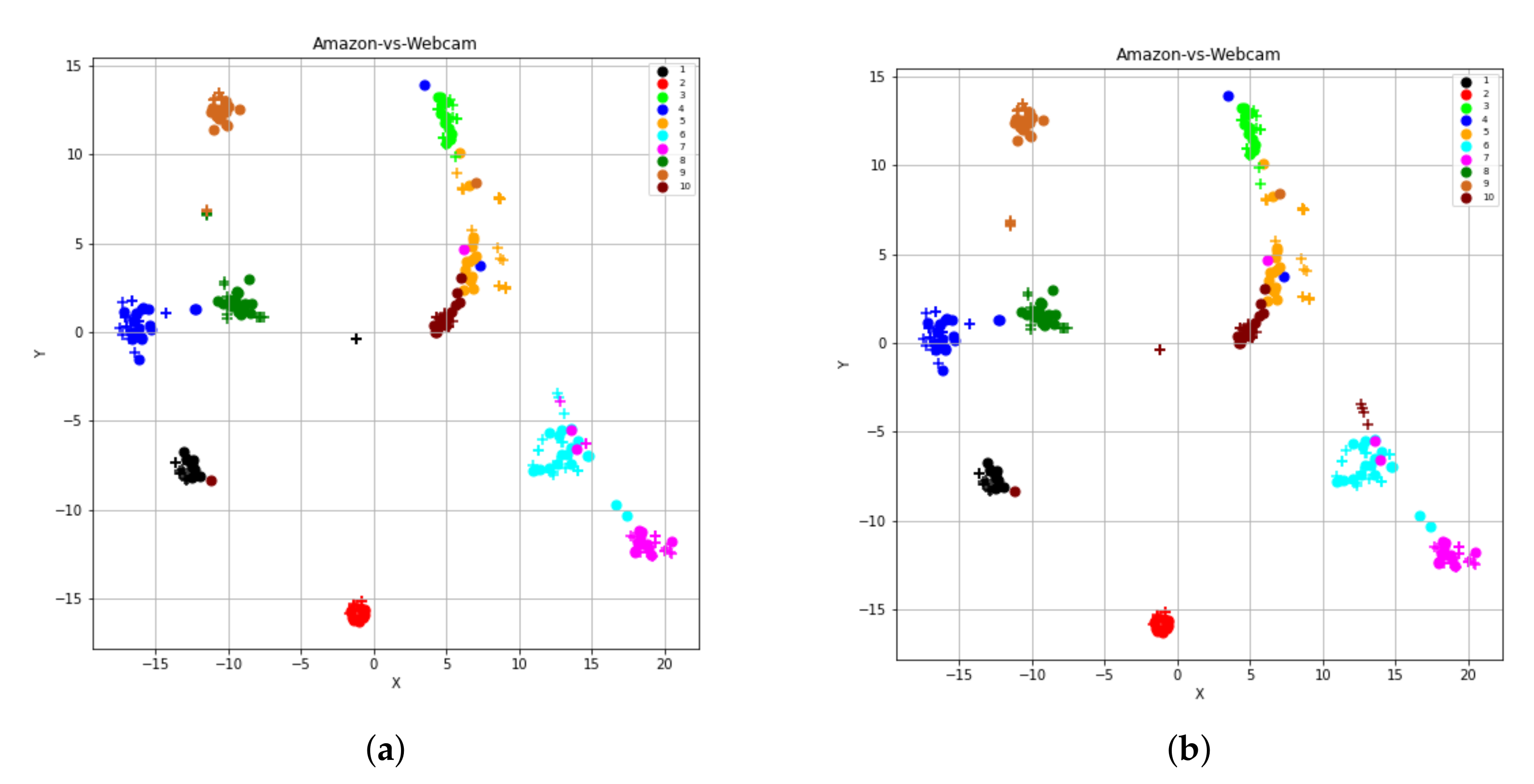

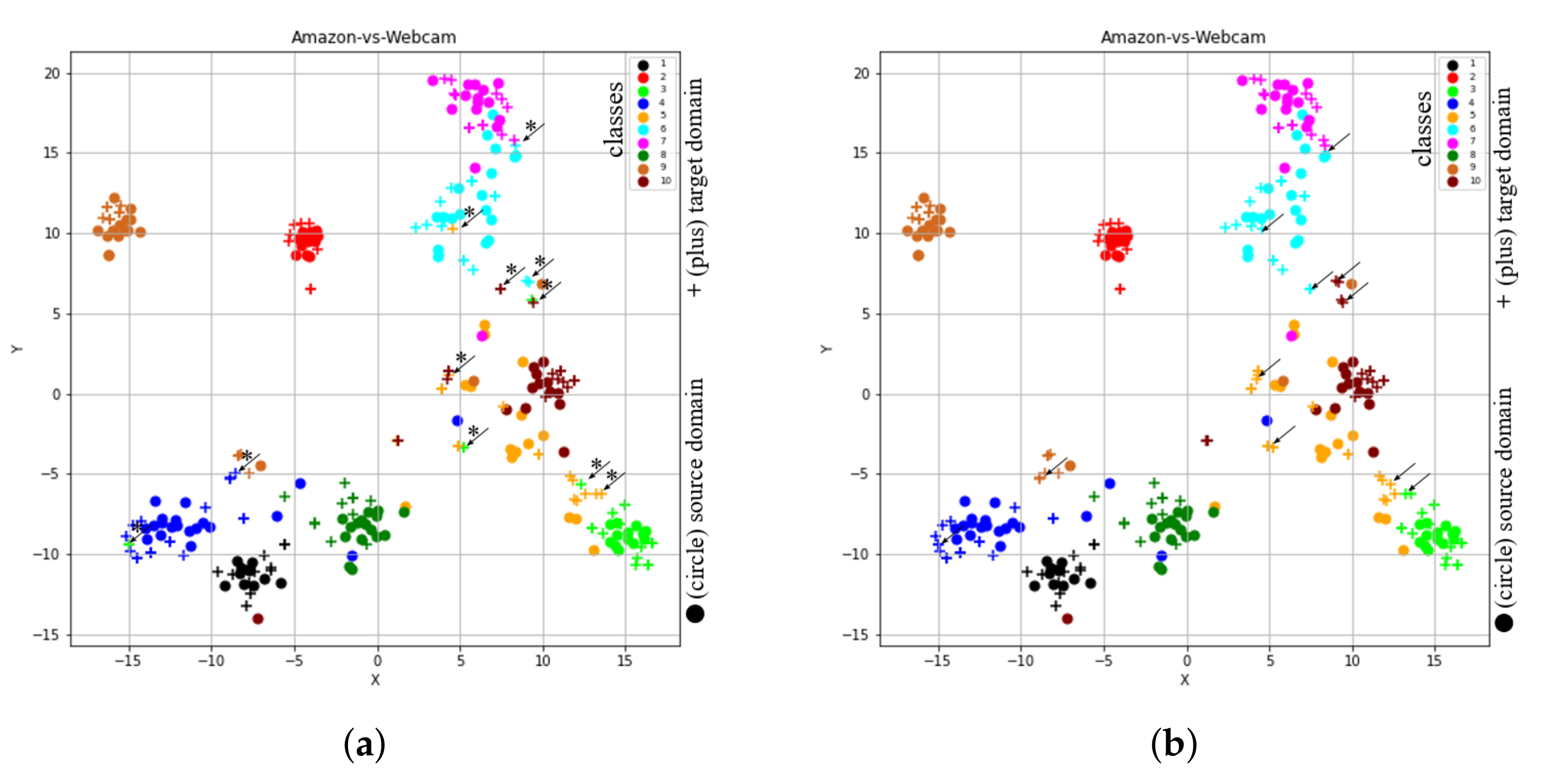

- To justify the inclusion of each component (property), we use t-SNE to visualize the features learned by the proposed method when each component is excluded in turn.
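The distribution-matching property can be made concrete with the empirical maximum mean discrepancy (MMD) [23]. The following is a minimal sketch, assuming a linear kernel: the marginal MMD then reduces to the squared distance between the source and target means, and the conditional term sums the same quantity per class, using target pseudo labels as in JDA [6]. It only evaluates the discrepancy for fixed features and does not learn a projection; the function names are hypothetical, and the sketch is not the STJML objective itself.

```python
from collections import defaultdict


def mean(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def sq_dist(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def marginal_mmd(xs, xt):
    """Empirical MMD^2 with a linear kernel: ||mean(Xs) - mean(Xt)||^2."""
    return sq_dist(mean(xs), mean(xt))


def conditional_mmd(xs, ys, xt, yt_pseudo):
    """Sum over classes c of the linear MMD^2 between class-c source and target samples.

    Target labels are unknown, so pseudo labels (e.g. from a base classifier) are used.
    Classes missing from either domain are skipped.
    """
    src, tgt = defaultdict(list), defaultdict(list)
    for x, y in zip(xs, ys):
        src[y].append(x)
    for x, y in zip(xt, yt_pseudo):
        tgt[y].append(x)
    return sum(sq_dist(mean(src[c]), mean(tgt[c])) for c in src if c in tgt)


# Toy example: the target domain is the source shifted by (3, 1).
xs = [[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0]]
ys = [0, 0, 1, 1]
xt = [[x + 3.0, y + 1.0] for x, y in xs]
yt_pseudo = [0, 0, 1, 1]

print(marginal_mmd(xs, xt))                    # 10.0
print(conditional_mmd(xs, ys, xt, yt_pseudo))  # 20.0
```

A method of this family learns a projection under which both quantities become small. With a non-linear kernel, the same idea is computed through kernel matrices instead of explicit means.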

2. Related Work

3. A Subspace Based Transfer Joint Matching with Laplacian Regularization

3.1. Problem Definition

3.2. Formulation

3.3. Subspace Generation

3.4. Feature Transformation

3.4.1. Feature Matching with Marginal Distribution

3.4.2. Feature Matching with Conditional Distribution

3.5. Instance Re-Weighting

3.6. Exploitation of Geometrical Structure with Laplacian Regularization

3.7. Overall Objective Function

3.8. Optimization

4. Experiments

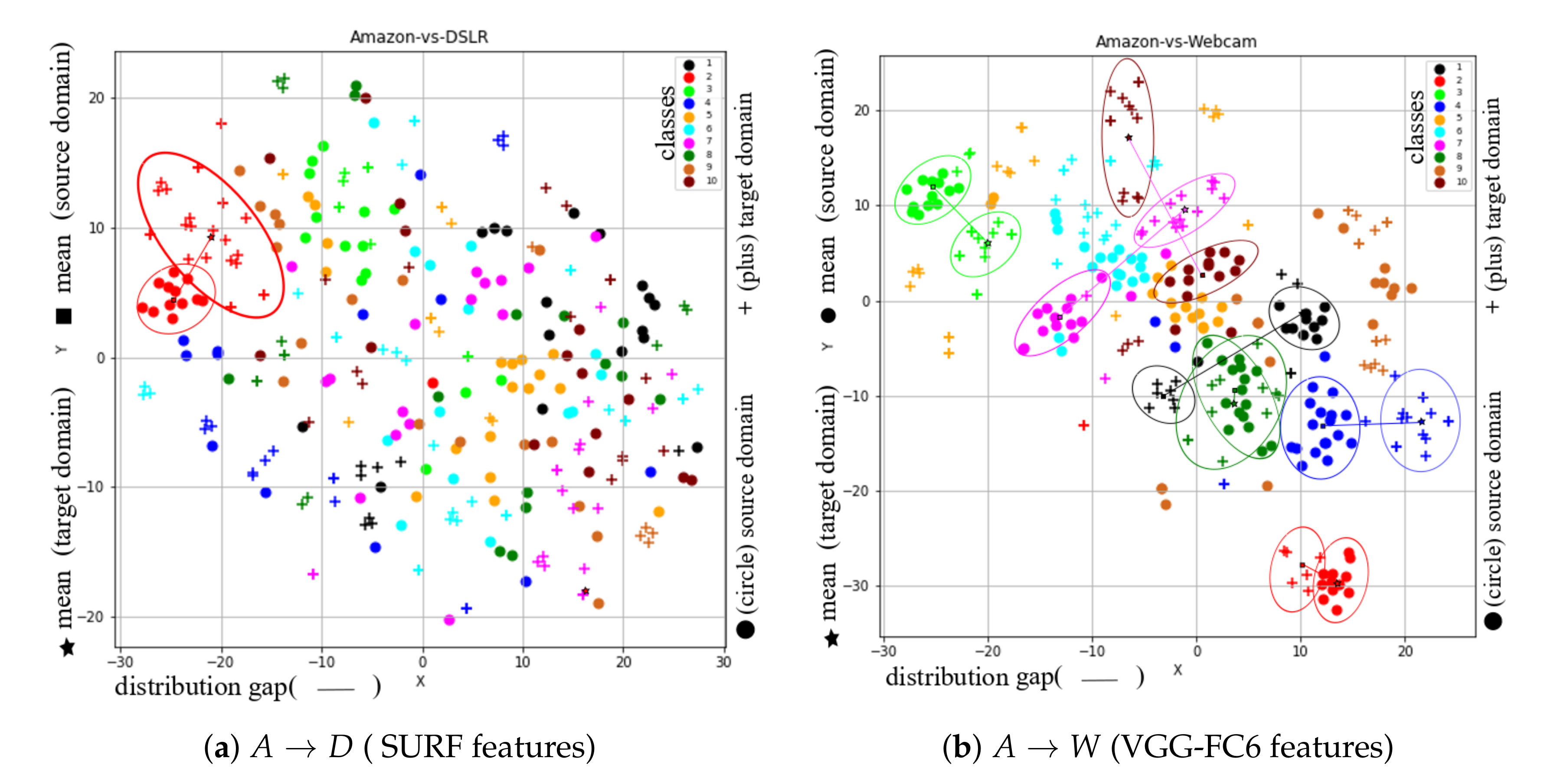

4.1. Data Preparation

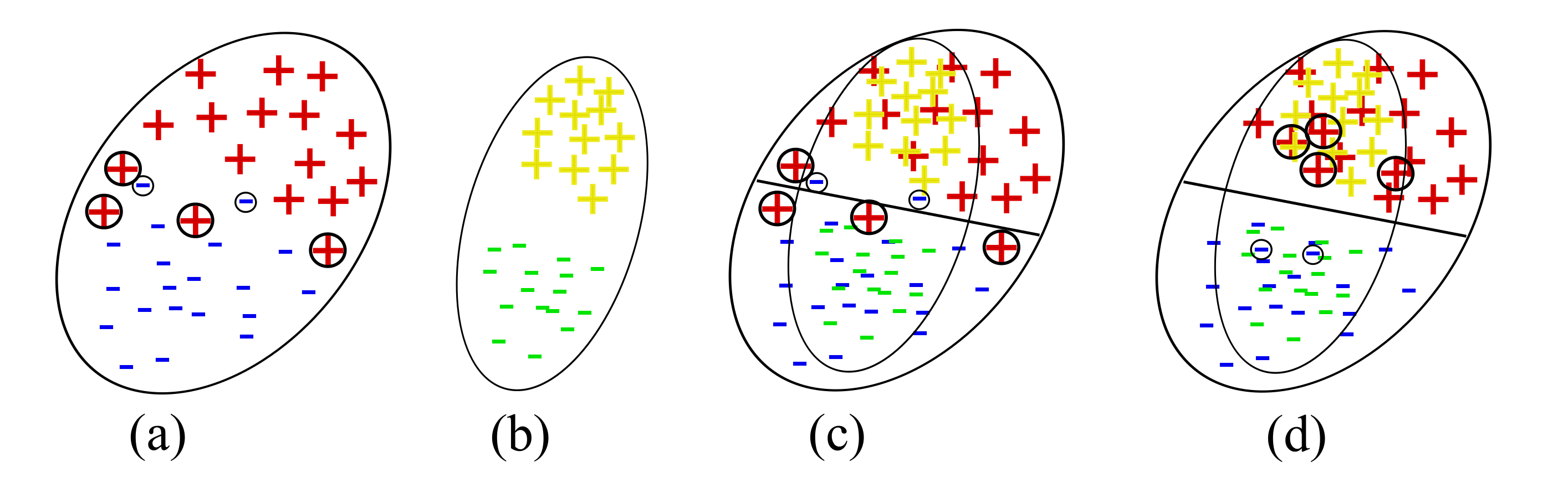

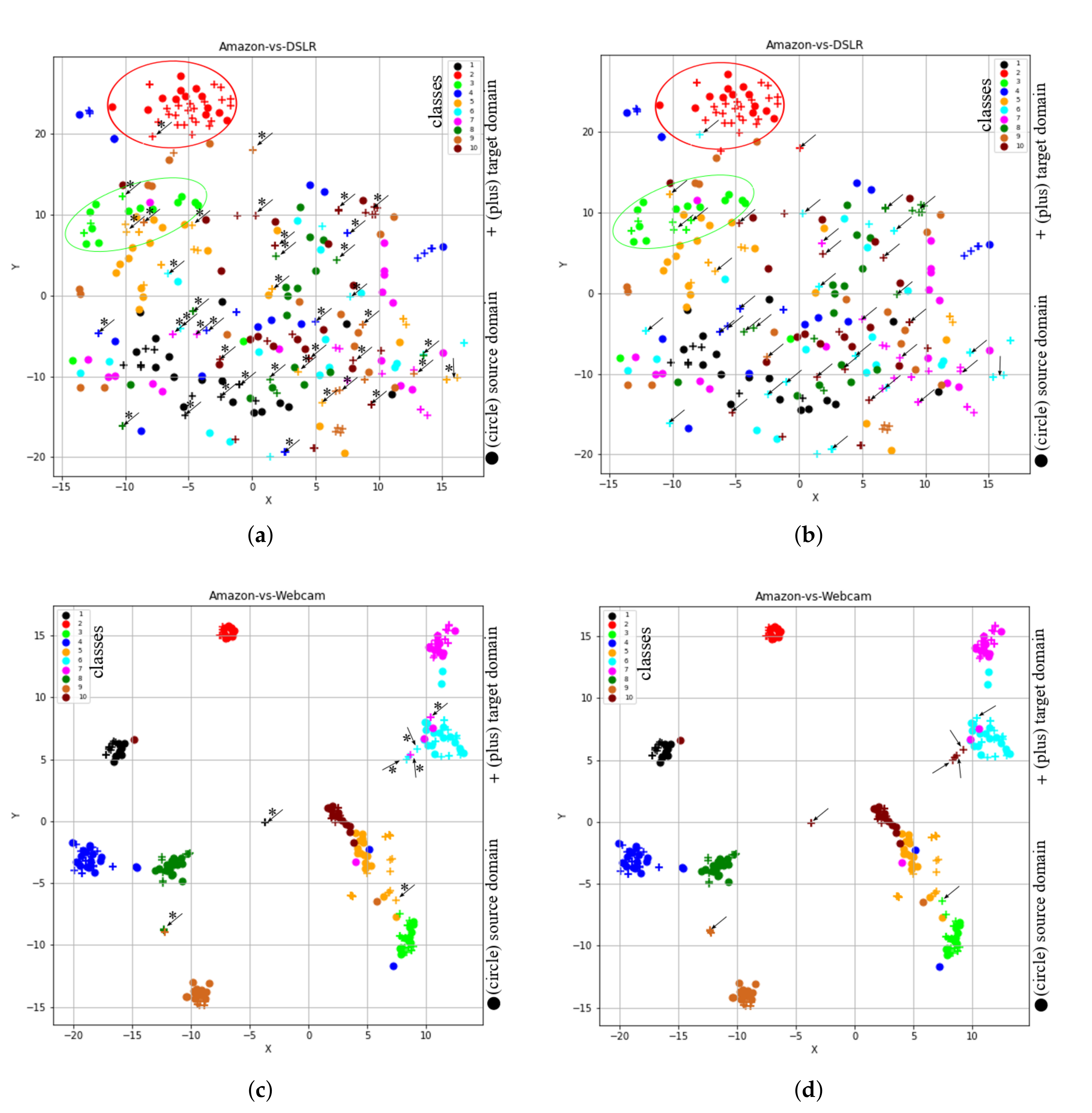

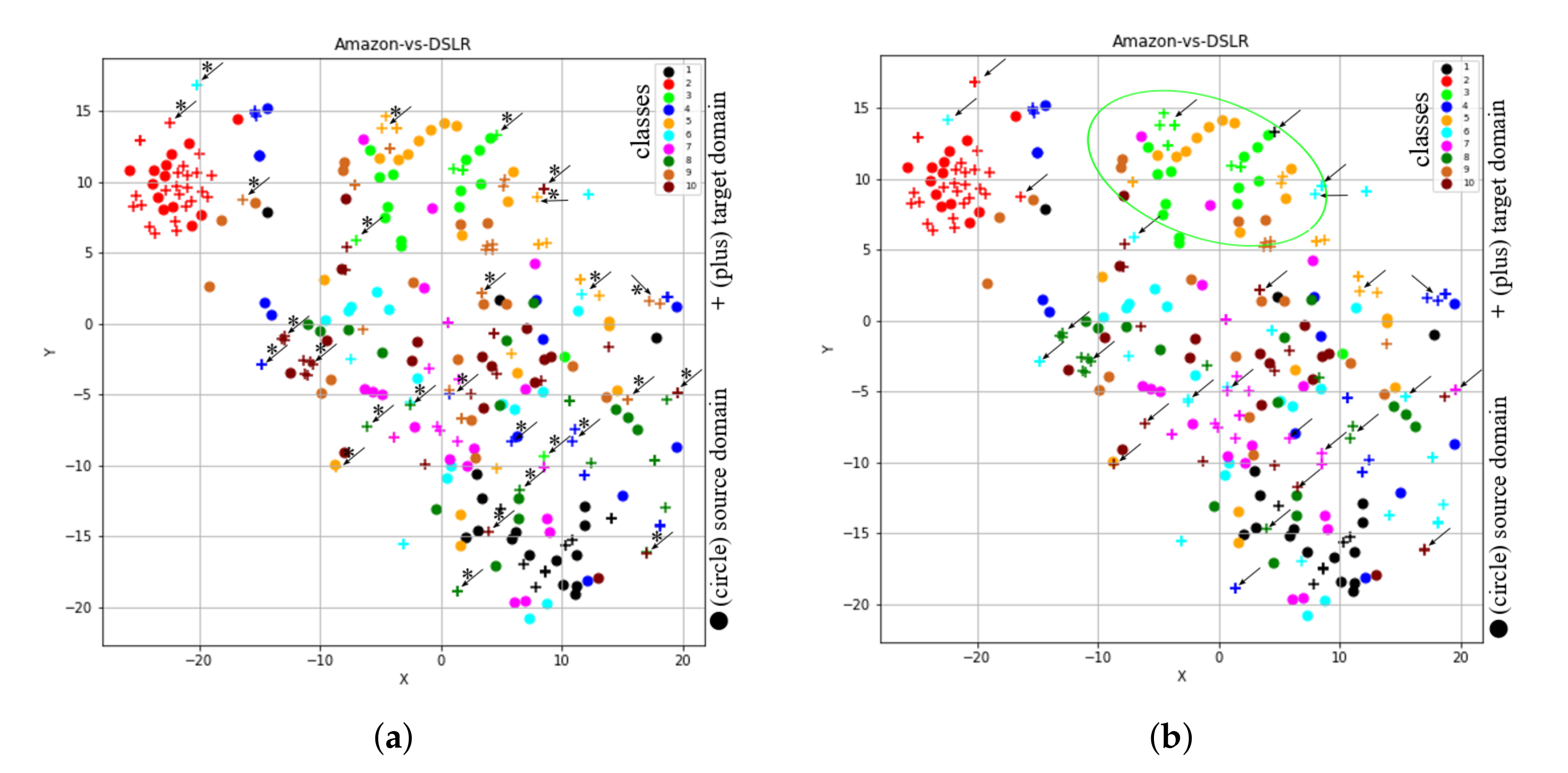

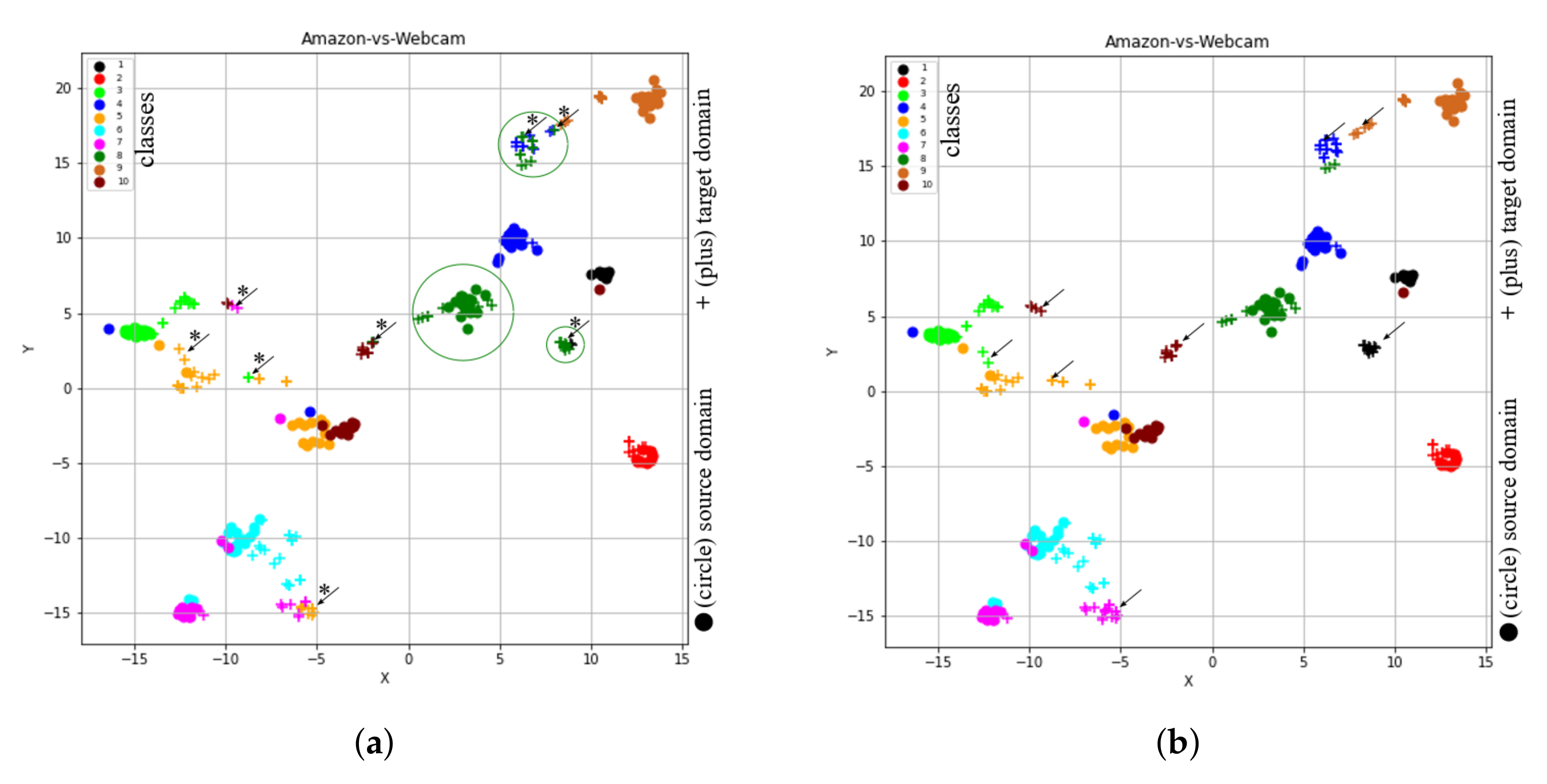

4.2. t-SNE Representation of Feature Spaces Learned by the Proposed Method (STJML)

4.3. What Happens if One Component Is Omitted from the Proposed Method (STJML)

4.3.1. Omitting Consideration of Subspaces of Both Domains

4.3.2. Omitting Instance Re-Weighting Term

4.3.3. Omitting Marginal Distribution Term

4.3.4. Omitting Conditional Distribution Term

4.3.5. Omitting Laplacian Regularization Term

4.4. Comparison with State-Of-The-Art Methods

- NN, PCA+1NN, and SVM: These are traditional machine learning algorithms, which assume that the training and test data are drawn from the same distribution.

- Transfer Component Analysis (TCA) [5]: TCA is a feature transformation technique that aligns only the marginal distribution of both domains.

- Joint Distribution Alignment (JDA) [6]: This method aligns both the marginal and conditional distributions of both domains.

- Geodesic Flow Kernel (GFK) [29]: To characterize the changes in geometric and statistical properties from the source domain to the target domain, GFK integrates an infinite number of subspaces along the geodesic path between the source and target subspaces.

- Transfer Joint Matching (TJM) [7]: This method combines feature matching and instance re-weighting to minimize the distribution difference between the two domains.

- Subspace Alignment (SA) [15]: SA first projects the samples of each domain into a lower-dimensional subspace and then aligns the source subspace with the target subspace.

- Scatter Component Analysis (SCA) [30]: This method is based on a simple geometrical measure, i.e., scatter.

- CORAL [31]: It aligns the covariance matrices of the two domains.

- Adaptation Regularization (ARTL) [14]: This method learns an adaptive classifier directly in the original feature space.

- Cross-Domain Metric Learning (CDML) [32]: A metric learning algorithm that transfers knowledge in an information-theoretic setting.

- Close yet Discriminative DA (CDDA), Geometry Aware DA (GA-DA), and Discriminative and Geometry Aware DA (DGA-DA) [33]: CDDA improves JDA by adding a repulsive force term to its objective, which increases the discriminative power of the common feature subspace. GA-DA extends CDDA by additionally preserving the geometric structure (the local proximity) of the data points. Finally, DGA-DA improves on GA-DA further by adding a term that preserves discriminative information.

- Invariant Latent Space (ILS) [34]: This TL method makes use of the Riemannian optimization methods to match statistical properties.

- Balanced Distribution Adaptation (BDA) [35]: A TL approach that adaptively balances the marginal and conditional distributions of the two domains.

- Joint Geometrical and Statistical Alignment (JGSA) [8]: It extends JDA by learning two projection matrices (one per domain) and by additionally considering subspace alignment and source-domain discriminant information.

- Robust Transfer Metric Learning (RTML) [22]: This method considers two directions, i.e., sample space and feature space, to mitigate the distribution gap.

- Domain Invariant and Class Discriminative (DICD) [36]: This DA method learns a latent feature space while preserving important data properties.

- Explicit Map-based Feature Selection (EMFS) [37]: It attempts to: (1) reveal high-order invariant features by explicit feature map, (2) integrate feature learning and model learning, and (3) remove non-discriminative features from invariant features.

- Domain Irrelevant Class clustering (DICE) [38]: In addition to the common properties, this method explicitly models the intra-domain structure of the target domain.

- Linear Discriminant Analysis-inspired Domain Adaptation (LDADA) [39]: The key insight of this approach is to leverage the discriminative information from the target task, even when the target domain labels are not given.

- Kernelized Unified Framework for Domain Adaptation (KUFDA) [16]: This TL method improves the JGSA method by adding the Laplacian regularization term.
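Laplacian regularization, which KUFDA adds to JGSA and which DGA-DA and STJML also use, penalizes embeddings that pull neighbouring samples apart. The following is a minimal sketch of the standard graph-Laplacian penalty, assuming a symmetric binary k-nearest-neighbour graph and the unnormalised Laplacian L = D - W; the graph construction, edge weights, and normalisation used by these methods may differ, and all function names are hypothetical. The example also checks numerically the identity (1/2) Σ W_ij ||z_i - z_j||² = tr(ZᵀLZ).

```python
import math


def knn_graph(points, k):
    """Symmetric binary k-nearest-neighbour adjacency matrix W."""
    n = len(points)
    w = [[0.0] * n for _ in range(n)]
    for i in range(n):
        # Rank the other points by Euclidean distance to point i.
        neighbours = sorted((j for j in range(n) if j != i),
                            key=lambda j: math.dist(points[i], points[j]))
        for j in neighbours[:k]:
            w[i][j] = w[j][i] = 1.0  # connect i and j in both directions
    return w


def laplacian(w):
    """Unnormalised graph Laplacian L = D - W, with D the diagonal degree matrix."""
    n = len(w)
    return [[(sum(w[i]) if i == j else 0.0) - w[i][j] for j in range(n)]
            for i in range(n)]


def smoothness_penalty(z, w):
    """(1/2) * sum_ij W_ij * ||z_i - z_j||^2 for embedded points z."""
    n = len(z)
    return 0.5 * sum(w[i][j] * math.dist(z[i], z[j]) ** 2
                     for i in range(n) for j in range(n))


def trace_form(z, lap):
    """tr(Z^T L Z) = sum_ij L_ij <z_i, z_j>, with the z_i as rows of Z."""
    n = len(z)
    return sum(lap[i][j] * sum(a * b for a, b in zip(z[i], z[j]))
               for i in range(n) for j in range(n))


# Two tight pairs on a line; with k = 1 each point is linked to its partner.
points = [[0.0], [1.0], [5.0], [6.0]]
w = knn_graph(points, k=1)
print(smoothness_penalty(points, w))     # 2.0
print(trace_form(points, laplacian(w)))  # 2.0
```

In a regularized objective, this penalty is evaluated on the projected features, so that minimizing it keeps samples that are neighbours in the original space close together in the learned space.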

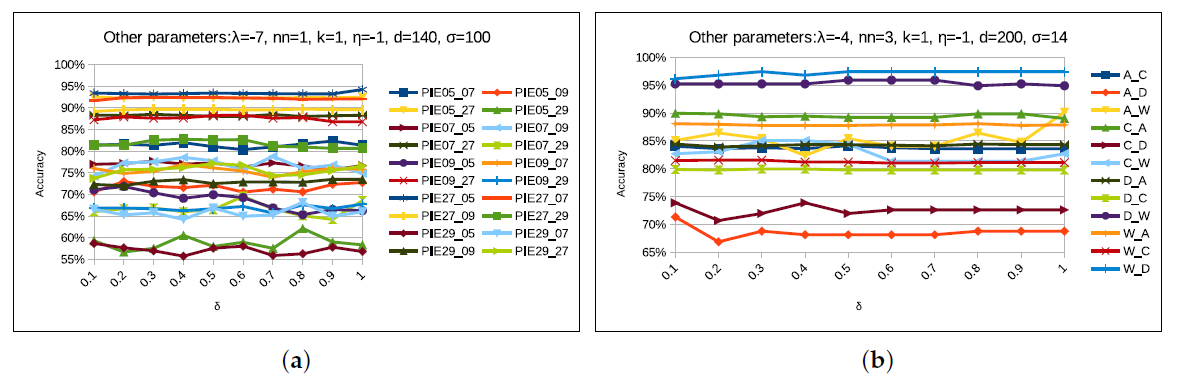

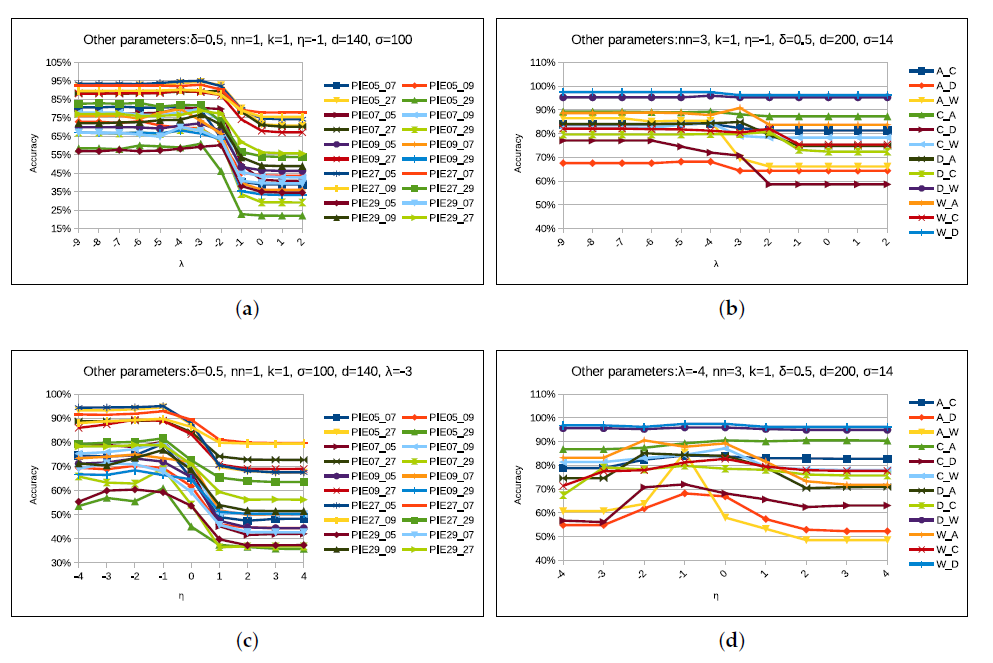

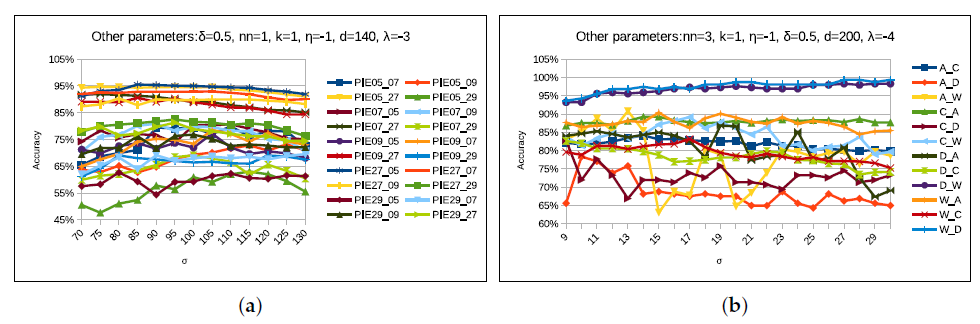

4.5. Parameter Sensitivity

4.5.1. k Parameter

4.5.2. Parameter

4.5.3. Parameter

4.5.4. Parameter: and

4.5.5. Parameter:

4.5.6. Parameter: d

4.6. Experimental Setup

4.7. Experimental Results and Analysis

- Primitive machine learning approaches such as NN, PCA, and SVM perform poorly because of the distribution gap between the training (source) and test (target) data.

- Among the domain adaptation methods, GFK obtains the lowest average accuracy over all tasks of the Office + Caltech dataset, for both the SURF and the VGG-FC6 features.

- JDA outperforms TCA on all three datasets because, in addition to the marginal distribution, it also aligns the conditional distribution.

- On the Office + Caltech dataset with deep features, ILS performs well compared with other subspace alignment methods (such as SA, GFK, and CORAL) because it uses a more robust discriminative loss function.

- Because TJM adds instance re-weighting, it outperforms other DA methods such as TCA, GFK, JDA, SA, CORAL, ILS, and BDA on the deep features of the Office + Caltech dataset. With the SURF features, however, TJM achieves a better average accuracy than SCA, ARTL, GFK, and TCA, but performs worse than JGSA, CORAL, LDADA, DICE, RTML, ILS, and JDA.

- The average accuracy (65.09%) of DGA-DA over all tasks of the PIE face dataset is higher than that of TCA, JDA, CDML, TJM, TDA-AL, CDDA, BDA, RTML, EMFS, and LDADA because it incorporates the repulsive force term and the Laplacian regularization term.

- Since JGSA improves JDA by using two projection matrices and preserving source-domain discriminant information, its mean accuracy (82.60%) over all tasks of the Office + Caltech dataset with deep features is higher than that of TCA, JDA, GFK, SA, TJM, ILS, BDA, DICE, and CDDA. Similarly, with the SURF features of the Office + Caltech dataset, it performs well compared with all other comparative methods except KUFDA and DICE.

- As KUFDA improves JGSA by adding Laplacian regularization, its average accuracy (86.83%) on the deep features of the Office + Caltech dataset is higher than that of all other compared methods except STJML, but it is lower than DICE on most tasks of the PIE face dataset.

- On the PIE face dataset and on the Office + Caltech10 dataset with SURF features, DICE performs better than all other methods except STJML because it explicitly models the intra-domain structure, especially of the target domain. However, on the deep features of the Office + Caltech10 dataset its average accuracy (81.8%) falls below those of TJM, JGSA, KUFDA, and STJML.

- Since our proposed method covers all the important objectives and operates on the projected subspaces of both domains, its average accuracy over all tasks is higher than that of every other comparative method on all the considered datasets. However, KUFDA outperforms the proposed algorithm on some tasks of the Office + Caltech dataset with deep features (A→C, D→W, W→A, and W→C), and DICE outperforms it on eight tasks of the PIE face dataset.
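The per-task comparison between DICE and STJML on the PIE face dataset can be checked directly against the PIE results table. The sketch below copies the two columns (tasks PIE 1 to PIE 20, in table order) and lists the tasks on which DICE scores strictly higher; `tasks_where_better` is a hypothetical helper, not part of either method.

```python
# Per-task accuracies (%) copied from the PIE face table, tasks PIE 1 to PIE 20.
DICE = [84.1, 77.9, 95.9, 66.5, 81.2, 74.0, 88.6, 68.8, 78.8, 76.7,
        85.2, 70.8, 93.3, 95.00, 92.3, 81.1, 73.8, 71.2, 74.1, 81.8]
STJML = [82.32, 73.22, 94.65, 62.99, 81.27, 82.1, 91.91, 69.54, 77.16, 80.9,
         90.68, 71.01, 95.55, 93.01, 90.37, 82.65, 63.17, 75.5, 76.83, 82.9]


def tasks_where_better(a, b):
    """1-based task numbers on which method a scores strictly higher than method b."""
    return [i + 1 for i, (x, y) in enumerate(zip(a, b)) if x > y]


def mean(values):
    """Unweighted mean over tasks."""
    return sum(values) / len(values)


dice_wins = tasks_where_better(DICE, STJML)
print(len(dice_wins), dice_wins)  # 8 [1, 2, 3, 4, 9, 14, 15, 17]
print(mean(STJML) > mean(DICE))   # True
```

Although DICE wins 8 of the 20 tasks, STJML still has the higher mean (80.88% versus 80.5% in the table), because its gains on the remaining tasks, for example +8.1 points on PIE 6, outweigh its losses.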

4.8. Computational Complexity

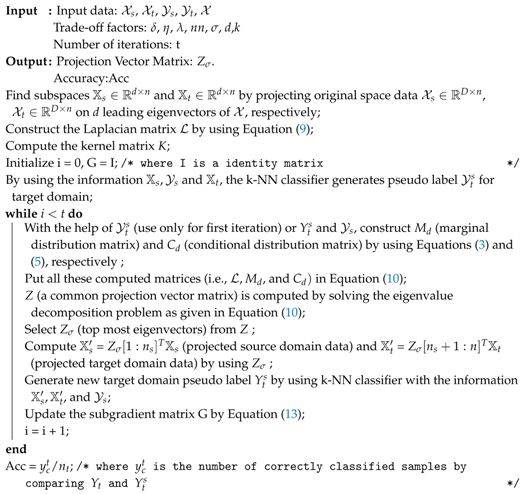

Algorithm 1: Subspace based Transfer Joint Matching with Laplacian Regularization (STJML)

4.9. Running Time Analysis

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

References

1. Wang, X.; Liu, F. Triplet loss guided adversarial domain adaptation for bearing fault diagnosis. Sensors 2020, 20, 320.
2. Chen, Z.; Li, X.; Zheng, H.; Gao, H.; Wang, H. Domain adaptation and adaptive information fusion for object detection on foggy days. Sensors 2018, 18, 3286.
3. Joshi, K.A.; Thakore, D.G. A survey on moving object detection and tracking in video surveillance system. Int. J. Soft Comput. Eng. 2012, 2, 44–48.
4. Pan, S.J.; Yang, Q. A survey on transfer learning. IEEE Trans. Knowl. Data Eng. 2009, 22, 1345–1359.
5. Pan, S.J.; Tsang, I.W.; Kwok, J.T.; Yang, Q. Domain adaptation via transfer component analysis. IEEE Trans. Neural Netw. 2011, 22, 199–210.
6. Long, M.; Wang, J.; Ding, G.; Sun, J.; Yu, P.S. Transfer feature learning with joint distribution adaptation. In Proceedings of the IEEE International Conference on Computer Vision, Sydney, Australia, 1–8 December 2013; pp. 2200–2207.
7. Long, M.; Wang, J.; Ding, G.; Sun, J.; Yu, P.S. Transfer joint matching for unsupervised domain adaptation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1410–1417.
8. Zhang, J.; Li, W.; Ogunbona, P. Joint geometrical and statistical alignment for visual domain adaptation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1859–1867.
9. Xu, Y.; Pan, S.J.; Xiong, H.; Wu, Q.; Luo, R.; Min, H.; Song, H. A unified framework for metric transfer learning. IEEE Trans. Knowl. Data Eng. 2017, 29, 1158–1171.
10. Dai, W.; Yang, Q.; Xue, G.R.; Yu, Y. Boosting for transfer learning. In Proceedings of the 24th International Conference on Machine Learning, Corvallis, OR, USA, 20–24 June 2007; pp. 193–200.
11. Pardoe, D.; Stone, P. Boosting for regression transfer. In Proceedings of the 27th International Conference on International Conference on Machine Learning, Haifa, Israel, 21–24 June 2010; pp. 863–870.
12. Wan, C.; Pan, R.; Li, J. Bi-weighting domain adaptation for cross-language text classification. In Proceedings of the Twenty-Second International Joint Conference on Artificial Intelligence, Barcelona, Spain, 16–22 July 2011.
13. Wang, J.; Feng, W.; Chen, Y.; Yu, H.; Huang, M.; Yu, P.S. Visual domain adaptation with manifold embedded distribution alignment. In Proceedings of the 2018 ACM Multimedia Conference on Multimedia Conference, Seoul, Korea, 22–26 October 2018; ACM: New York, NY, USA, 2018; pp. 402–410.
14. Long, M.; Wang, J.; Ding, G.; Pan, S.J.; Yu, P.S. Adaptation regularization: A general framework for transfer learning. IEEE Trans. Knowl. Data Eng. 2014, 26, 1076–1089.
15. Fernando, B.; Habrard, A.; Sebban, M.; Tuytelaars, T. Unsupervised visual domain adaptation using subspace alignment. In Proceedings of the IEEE International Conference on Computer Vision, Sydney, Australia, 1–8 December 2013; pp. 2960–2967.
16. Sanodiya, R.K.; Mathew, J.; Paul, B.; Jose, B.A. A kernelized unified framework for domain adaptation. IEEE Access 2019, 7, 181381–181395.
17. Pan, S.J.; Kwok, J.T.; Yang, Q. Transfer Learning via Dimensionality Reduction; AAAI: Chicago, IL, USA, 2008; Volume 8, pp. 677–682.
18. Hofmann, T.; Schölkopf, B.; Smola, A.J. Kernel methods in machine learning. Ann. Stat. 2008, 1171–1220.
19. Shao, M.; Kit, D.; Fu, Y. Generalized transfer subspace learning through low-rank constraint. Int. J. Comput. Vis. 2014, 109, 74–93.
20. Kulis, B.; Saenko, K.; Darrell, T. What you saw is not what you get: Domain adaptation using asymmetric kernel transforms. In Proceedings of the IEEE CVPR 2011, Providence, RI, USA, 20–25 June 2011; pp. 1785–1792.
21. Zhang, Y.; Yeung, D.Y. Transfer metric learning by learning task relationships. In Proceedings of the 16th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, Washington, DC, USA, 25–28 July 2010; pp. 1199–1208.
22. Ding, Z.; Fu, Y. Robust transfer metric learning for image classification. IEEE Trans. Image Process. 2017, 26, 660–670.
23. Gretton, A.; Borgwardt, K.; Rasch, M.; Schölkopf, B.; Smola, A.J. A kernel method for the two-sample-problem. In Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 2007; pp. 513–520.
24. Sun, Q.; Chattopadhyay, R.; Panchanathan, S.; Ye, J. A two-stage weighting framework for multi-source domain adaptation. In Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 2011; pp. 505–513.
25. Zhou, D.; Bousquet, O.; Lal, T.N.; Weston, J.; Schölkopf, B. Learning with local and global consistency. In Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 2004; pp. 321–328.
26. Sanodiya, R.K.; Mathew, J.; Saha, S.; Thalakottur, M.D. A new transfer learning algorithm in semi-supervised setting. IEEE Access 2019, 7, 42956–42967.
27. Sim, T.; Baker, S.; Bsat, M. The CMU Pose Illumination and Expression Database of Human Faces. Available online: https://www.ri.cmu.edu/pub_files/pub2/sim_terence_2001_1/sim_terence_2001_1.pdf (accessed on 10 July 2020).
28. Maaten, L.v.d.; Hinton, G. Visualizing data using t-SNE. J. Mach. Learn. Res. 2008, 9, 2579–2605.
29. Gong, B.; Shi, Y.; Sha, F.; Grauman, K. Geodesic flow kernel for unsupervised domain adaptation. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 2066–2073.
30. Ghifary, M.; Balduzzi, D.; Kleijn, W.B.; Zhang, M. Scatter component analysis: A unified framework for domain adaptation and domain generalization. IEEE Trans. Pattern Anal. Mach. Intell. 2016, 39, 1414–1430.
31. Sun, B.; Saenko, K. Subspace Distribution Alignment for Unsupervised Domain Adaptation. In Proceedings of the British Machine Vision Conference, Swansea, UK, 7–10 September 2015; Volume 4, pp. 24–31.
32. Wang, H.; Wang, W.; Zhang, C.; Xu, F. Cross-domain metric learning based on information theory. In Proceedings of the Twenty-Eighth AAAI Conference on Artificial Intelligence, Quebec City, QC, Canada, 27–31 July 2014.
33. Luo, L.; Chen, L.; Hu, S.; Lu, Y.; Wang, X. Discriminative and geometry aware unsupervised domain adaptation. arXiv 2017, arXiv:1712.10042.
34. Herath, S.; Harandi, M.; Porikli, F. Learning an invariant hilbert space for domain adaptation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 3845–3854.
35. Wang, J.; Chen, Y.; Hao, S.; Feng, W.; Shen, Z. Balanced distribution adaptation for transfer learning. In Proceedings of the 2017 IEEE International Conference on Data Mining (ICDM), New Orleans, LA, USA, 18–21 November 2017; pp. 1129–1134.
36. Li, S.; Song, S.; Huang, G.; Ding, Z.; Wu, C. Domain invariant and class discriminative feature learning for visual domain adaptation. IEEE Trans. Image Process. 2018, 27, 4260–4273.
37. Deng, W.Y.; Lendasse, A.; Ong, Y.S.; Tsang, I.W.H.; Chen, L.; Zheng, Q.H. Domain adaption via feature selection on explicit feature map. IEEE Trans. Neural Netw. Learn. Syst. 2018, 30, 1180–1190.
38. Liang, J.; He, R.; Sun, Z.; Tan, T. Aggregating randomized clustering-promoting invariant projections for domain adaptation. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 41, 1027–1042.
39. Lu, H.; Shen, C.; Cao, Z.; Xiao, Y.; van den Hengel, A. An embarrassingly simple approach to visual domain adaptation. IEEE Trans. Image Process. 2018, 27, 3403–3417.
40. Zhang, J.; Li, W.; Ogunbona, P. Transfer learning for cross-dataset recognition: A survey. arXiv 2017, arXiv:1705.04396.
41. Shu, L.; Latecki, L.J. Transductive domain adaptation with affinity learning. In Proceedings of the 24th ACM International on Conference on Information and Knowledge Management, Melbourne, Australia, 19–23 October 2015; pp. 1903–1906.
42. Ruder, S. An overview of multi-task learning in deep neural networks. arXiv 2017, arXiv:1706.05098.
43. Nguyen, B.H.; Xue, B.; Andreae, P. A particle swarm optimization based feature selection approach to transfer learning in classification. In Proceedings of the Genetic and Evolutionary Computation Conference, Kyoto, Japan, 15–19 July 2018; pp. 37–44.
44. Liu, W.; Wang, Z.; Liu, X.; Zeng, N.; Liu, Y.; Alsaadi, F.E. A survey of deep neural network architectures and their applications. Neurocomputing 2017, 234, 11–26.

Primitive algorithms: NN and PCA; transfer learning algorithms: TCA to STJML.

| Tasks | NN | PCA | TCA [5] | GFK [29] | JDA [6] | CDML [32] | TJM [7] | TDA-AL [41] | CDDA [33] | BDA [35] | GA-DA [33] | DGA-DA [33] | JGSA [8] | RTML [22] | EMFS [37] | DICD [36] | LDADA [39] | KUFDA [16] | DICE [38] | STJML (Proposed) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| PIE 1 5→7 | 26.09 | 24.80 | 40.76 | 26.15 | 58.81 | 53.22 | 29.52 | 35.97 | 60.22 | 23.98 | 57.40 | 65.32 | 68.07 | 60.12 | 61.8 | 73.0 | 34.5 | 67.67 | 84.1 | 82.32 |
| PIE 2 5→9 | 26.59 | 25.18 | 41.79 | 27.27 | 54.23 | 53.12 | 33.76 | 32.97 | 58.70 | 24.00 | 60.54 | 62.81 | 67.52 | 55.21 | 58.8 | 72.0 | 44.9 | 70.34 | 77.9 | 73.22 |
| PIE 3 5→27 | 30.67 | 29.26 | 59.63 | 31.15 | 84.50 | 80.12 | 59.20 | 35.24 | 83.48 | 48.93 | 84.05 | 83.54 | 82.87 | 85.19 | 86.8 | 92.2 | 61.5 | 86.06 | 95.9 | 94.65 |
| PIE 4 5→29 | 16.67 | 16.30 | 29.35 | 17.59 | 49.75 | 48.23 | 26.96 | 28.43 | 54.17 | 24.00 | 52.21 | 56.07 | 46.50 | 52.98 | 52.6 | 66.9 | 35.4 | 49.02 | 66.5 | 62.99 |
| PIE 5 7→5 | 24.49 | 24.22 | 41.81 | 25.24 | 57.62 | 52.39 | 39.40 | 38.90 | 62.33 | 49.00 | 57.89 | 63.69 | 25.21 | 58.13 | 59.2 | 69.9 | 31.4 | 72.62 | 81.2 | 81.27 |
| PIE 6 7→9 | 46.63 | 45.53 | 51.47 | 47.37 | 62.93 | 54.23 | 37.74 | 49.39 | 64.64 | 24.00 | 61.58 | 61.27 | 54.77 | 63.92 | 64.5 | 65.9 | 34.9 | 74.34 | 74.0 | 82.1 |
| PIE 7 7→27 | 54.07 | 53.35 | 64.73 | 54.25 | 75.82 | 68.36 | 49.80 | 53.26 | 79.90 | 48.97 | 82.34 | 82.37 | 58.96 | 76.16 | 77.9 | 85.3 | 53.5 | 87.86 | 88.6 | 91.91 |
| PIE 8 7→29 | 26.53 | 25.43 | 33.70 | 27.08 | 39.89 | 37.34 | 17.09 | 36.95 | 44.00 | 24.00 | 41.42 | 46.63 | 35.41 | 40.38 | 44.3 | 48.7 | 26.4 | 61.70 | 68.8 | 69.54 |
| PIE 9 9→5 | 21.37 | 20.95 | 34.69 | 21.82 | 50.96 | 43.54 | 37.39 | 34.03 | 58.46 | 49.00 | 54.14 | 56.72 | 22.81 | 53.12 | 53.8 | 69.4 | 38.2 | 73.91 | 78.8 | 77.16 |
| PIE 10 9→7 | 41.01 | 40.45 | 47.70 | 43.16 | 57.95 | 54.87 | 35.29 | 49.54 | 59.73 | 23.95 | 60.77 | 61.26 | 44.19 | 58.67 | 59.8 | 65.4 | 30.5 | 72.56 | 76.7 | 80.9 |
| PIE 11 9→27 | 46.53 | 46.14 | 56.23 | 46.41 | 68.45 | 62.76 | 44.03 | 48.99 | 77.20 | 48.97 | 77.23 | 77.83 | 56.86 | 69.81 | 70.6 | 83.4 | 60.6 | 86.96 | 85.2 | 90.68 |
| PIE 12 9→29 | 26.23 | 25.31 | 33.15 | 26.78 | 39.95 | 38.21 | 17.03 | 39.34 | 47.24 | 24.00 | 43.50 | 44.24 | 41.36 | 42.13 | 41.9 | 61.4 | 40.7 | 69.85 | 70.8 | 71.01 |
| PIE 13 27→5 | 32.95 | 31.96 | 55.64 | 34.24 | 80.58 | 75.12 | 59.51 | 42.20 | 83.10 | 49.00 | 79.83 | 81.84 | 72.14 | 81.12 | 82.7 | 93.1 | 61.3 | 90.00 | 93.3 | 95.55 |
| PIE 14 27→7 | 62.68 | 60.96 | 67.83 | 62.92 | 82.63 | 80.53 | 60.58 | 63.90 | 82.26 | 23.96 | 84.71 | 85.27 | 88.27 | 8.92 | 85.6 | 90.1 | 56.7 | 88.40 | 95.00 | 93.01 |
| PIE 15 27→9 | 73.22 | 72.18 | 75.86 | 73.35 | 87.25 | 83.72 | 64.88 | 61.64 | 86.64 | 24.00 | 89.17 | 90.95 | 86.09 | 89.51 | 88.2 | 89.0 | 67.8 | 84.62 | 92.3 | 90.37 |
| PIE 16 27→29 | 37.19 | 35.11 | 40.26 | 37.38 | 54.66 | 52.78 | 25.06 | 46.32 | 58.33 | 24.00 | 53.62 | 53.80 | 74.32 | 56.26 | 57.2 | 75.6 | 50.4 | 75.24 | 81.1 | 82.65 |
| PIE 17 29→5 | 18.49 | 18.85 | 26.98 | 20.35 | 46.46 | 27.34 | 32.86 | 32.92 | 48.02 | 49.00 | 52.73 | 57.44 | 17.52 | 29.11 | 49.4 | 62.9 | 31.3 | 54.05 | 73.8 | 63.17 |
| PIE 18 29→7 | 24.19 | 23.39 | 29.90 | 24.62 | 42.05 | 30.82 | 22.89 | 37.26 | 45.61 | 23.89 | 47.64 | 53.84 | 41.06 | 33.28 | 45.1 | 57.0 | 24.1 | 67.46 | 71.2 | 75.5 |
| PIE 19 29→9 | 28.31 | 27.21 | 29.90 | 28.49 | 53.31 | 36.34 | 22.24 | 36.64 | 52.02 | 24.00 | 51.66 | 55.27 | 49.20 | 39.85 | 55.9 | 65.9 | 35.4 | 70.77 | 74.1 | 76.83 |
| PIE 20 29→27 | 31.24 | 30.34 | 33.64 | 31.33 | 57.01 | 40.61 | 30.72 | 38.96 | 55.99 | 48.94 | 58.82 | 61.82 | 34.75 | 47.13 | 59.6 | 74.8 | 48.2 | 76.78 | 81.8 | 82.9 |
| Average | 34.76 | 33.85 | 44.75 | 35.35 | 60.24 | 53.69 | 37.29 | 42.14 | 63.10 | 33.98 | 62.56 | 65.09 | 53.39 | 58.80 | 62.8 | 73.1 | 43.4 | 74.42 | 80.5 | 80.88 |

Primitive algorithms: NN, PCA, and SVM; transfer learning algorithms: TCA to STJML.

| Tasks | NN | PCA | SVM | TCA [5] | GFK [29] | JDA [6] | SA [15] | TJM [7] | CORAL [31] | CDDA [33] | ILS [34] | BDA [35] | JGSA [8] | KUFDA [16] | DICE [38] | STJML (Proposed) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A→C | 70.1 | 76.49 | 74.2 | 80.14 | 77.73 | 82.01 | 77.1 | 82.45 | 79.0 | 82.1 | 78.9 | 80.23 | 81.12 | 85.12 | 83.6 | 84.14 |
| A→D | 52.3 | 59.87 | 51.7 | 65.60 | 59.23 | 70.06 | 64.9 | 72.61 | 67.1 | 68.2 | 72.5 | 64.97 | 68.78 | 78.34 | 66.0 | 78.34 |
| A→W | 69.9 | 69.15 | 63.1 | 76.94 | 73.89 | 83.72 | 76.0 | 82.71 | 74.8 | 78.1 | 82.4 | 76.61 | 78.30 | 80.16 | 76.6 | 91.11 |
| C→A | 81.9 | 86.43 | 86.7 | 86.63 | 86.01 | 88.10 | 83.9 | 85.80 | 89.4 | 86.5 | 87.6 | 86.01 | 86.22 | 89.83 | 89.5 | 90.51 |
| C→D | 55.6 | 61.14 | 61.5 | 69.42 | 62.42 | 72.61 | 66.2 | 75.79 | 67.6 | 66.1 | 73.0 | 66.88 | 77.07 | 80.13 | 69.9 | 87.89 |
| C→W | 65.9 | 74.23 | 74.8 | 74.91 | 74.91 | 80.67 | 76.0 | 77.96 | 77.6 | 77.1 | 84.4 | 75.93 | 76.61 | 87.83 | 79.8 | 89.15 |
| D→A | 57.0 | 67.43 | 58.7 | 75.15 | 68.58 | 77.13 | 69.0 | 80.79 | 75.6 | 82.6 | 79.2 | 74.32 | 86.95 | 85.21 | 83.2 | 86.84 |
| D→C | 48.0 | 58.50 | 55.5 | 69.18 | 59.57 | 70.52 | 62.3 | 74.44 | 64.7 | 76.1 | 66.5 | 69.72 | 78.09 | 80.38 | 78.7 | 82.63 |
| D→W | 86.7 | 95.59 | 91.8 | 96.61 | 95.93 | 97.62 | 90.5 | 96.94 | 94.6 | 93.7 | 94.2 | 97.63 | 97.62 | 98.87 | 95.8 | 98.3 |
| W→A | 62.4 | 75.15 | 69.8 | 80.27 | 79.01 | 84.2 | 76.6 | 82.25 | 81.2 | 86.5 | 85.9 | 80.79 | 90.81 | 91.56 | 88.8 | 90.51 |
| W→C | 57.5 | 69.01 | 64.7 | 75.24 | 70.16 | 74.79 | 70.7 | 78.45 | 75.2 | 80.1 | 77.0 | 76.22 | 76.66 | 84.12 | 82.0 | 83.17 |
| W→D | 83.9 | 94.90 | 89.4 | 93.63 | 94.90 | 96.81 | 90.4 | 94.90 | 92.6 | 92.8 | 87.4 | 92.36 | 92.99 | 100 | 88.1 | 100 |
| Average | 65.93 | 73.9 | 70.15 | 78.64 | 75.19 | 81.52 | 75.3 | 82.09 | 78.2 | 80.8 | 80.7 | 78.47 | 82.60 | 86.83 | 81.8 | 88.59 |
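The Office + Caltech table with deep features above shows that STJML does not win every task. The sketch below copies the KUFDA and STJML columns (in the table's task order, with ASCII "->" in place of the arrow) and splits the tasks into those where KUFDA is higher, tied, or lower; `compare` is a hypothetical helper written for this illustration.

```python
# Task order and per-task accuracies (%) copied from the deep-feature Office + Caltech table.
TASKS = ["A->C", "A->D", "A->W", "C->A", "C->D", "C->W",
         "D->A", "D->C", "D->W", "W->A", "W->C", "W->D"]
KUFDA = [85.12, 78.34, 80.16, 89.83, 80.13, 87.83, 85.21, 80.38, 98.87, 91.56, 84.12, 100.0]
STJML = [84.14, 78.34, 91.11, 90.51, 87.89, 89.15, 86.84, 82.63, 98.3, 90.51, 83.17, 100.0]


def compare(tasks, a, b):
    """Split tasks into those where method a is higher than, equal to, or lower than b."""
    higher = [t for t, x, y in zip(tasks, a, b) if x > y]
    ties = [t for t, x, y in zip(tasks, a, b) if x == y]
    lower = [t for t, x, y in zip(tasks, a, b) if x < y]
    return higher, ties, lower


higher, ties, lower = compare(TASKS, KUFDA, STJML)
print("KUFDA higher:", higher)         # KUFDA higher: ['A->C', 'D->W', 'W->A', 'W->C']
print("tied:", ties)                   # tied: ['A->D', 'W->D']
print("STJML higher:", len(lower))     # STJML higher: 6
```

Ties (A→D and W→D) are kept separate, since neither method outperforms the other on those tasks.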

Primitive algorithms: 1NN, PCA, and SVM; transfer learning algorithms: GFK to STJML.

| Tasks | 1NN | PCA | SVM | GFK [29] | TCA [5] | JDA [6] | CORAL [31] | TJM [7] | SCA [30] | JGSA [8] | ARTL [14] | ILS [34] | RTML [22] | DICD [36] | LDADA [39] | DICE [38] | STJML (Proposed) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| C→A | 23.7 | 39.5 | 53.1 | 46.0 | 45.6 | 43.1 | 52.1 | 46.8 | 45.6 | 51.5 | 44.1 | 48.5 | 49.3 | 47.3 | 54.8 | 50.2 | 49.69 |
| C→W | 25.8 | 34.6 | 41.7 | 37.0 | 39.3 | 39.3 | 46.4 | 39.0 | 40.0 | 45.4 | 31.5 | 41.4 | 44.7 | 46.4 | 60.2 | 48.1 | 46.1 |
| C→D | 25.5 | 44.6 | 47.8 | 40.8 | 45.9 | 49.0 | 45.9 | 44.6 | 47.1 | 45.9 | 39.5 | 45.9 | 47.6 | 49.7 | 41.5 | 51.0 | 50.32 |
| A→C | 26.0 | 39.0 | 41.7 | 40.7 | 42.0 | 40.9 | 45.1 | 39.5 | 39.7 | 41.5 | 36.1 | 40.0 | 43.7 | 42.4 | 38.4 | 42.7 | 41.85 |
| A→W | 29.8 | 35.9 | 31.9 | 37.0 | 40.0 | 38.0 | 44.4 | 42.0 | 34.9 | 45.8 | 33.6 | 39.0 | 44.3 | 45.1 | 49.3 | 52.2 | 53.22 |
| A→D | 25.5 | 33.8 | 44.6 | 40.1 | 35.7 | 42.0 | 39.5 | 45.2 | 39.5 | 47.1 | 36.9 | 40.1 | 43.9 | 38.9 | 39.1 | 49.7 | 49.04 |
| W→C | 19.9 | 28.2 | 28.8 | 24.8 | 31.5 | 33.0 | 33.7 | 30.2 | 31.1 | 33.2 | 29.7 | 31.2 | 34.8 | 33.6 | 31.7 | 37.8 | 33.21 |
| W→A | 23.0 | 29.1 | 27.6 | 27.6 | 30.5 | 29.8 | 36.0 | 30.0 | 30.0 | 39.9 | 38.3 | 37.6 | 35.3 | 34.1 | 35.1 | 37.5 | 43.01 |
| W→D | 59.2 | 89.2 | 78.3 | 85.4 | 91.1 | 92.4 | 86.6 | 89.2 | 87.3 | 90.5 | 87.9 | 86.0 | 91.0 | 89.8 | 74.6 | 87.3 | 93.9 |
| D→C | 26.3 | 29.7 | 26.4 | 29.3 | 33.0 | 31.2 | 33.8 | 31.4 | 30.7 | 29.9 | 30.5 | 34.6 | 34.6 | 34.6 | 29.9 | 33.7 | 33.3 |
| D→A | 28.5 | 33.2 | 26.2 | 28.7 | 32.8 | 33.4 | 37.7 | 32.8 | 31.6 | 38.0 | 34.9 | 41.2 | 33.3 | 34.5 | 40.6 | 41.1 | 39.35 |
| D→W | 63.4 | 86.1 | 52.5 | 80.3 | 87.5 | 89.2 | 84.7 | 85.4 | 84.4 | 91.9 | 88.5 | 85.8 | 89.0 | 91.2 | 74.7 | 84.1 | 93.22 |
| Average | 31.4 | 43.6 | 41.1 | 43.1 | 46.2 | 46.8 | 48.8 | 46.3 | 45.2 | 50.0 | 44.3 | 47.6 | 49.3 | 49.0 | 47.5 | 51.3 | 52.18 |
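The Average row appears to be the unweighted mean over the twelve tasks. Recomputing it for the two best methods in the SURF-feature table above reproduces the reported 52.18% (STJML) and 51.3% (DICE). The sketch also counts per-task wins, which shows where the higher average of STJML comes from; the lists simply copy the table columns.

```python
# Per-task accuracies (%) copied from the SURF-feature Office + Caltech table, in table order:
# C->A, C->W, C->D, A->C, A->W, A->D, W->C, W->A, W->D, D->C, D->A, D->W.
STJML = [49.69, 46.1, 50.32, 41.85, 53.22, 49.04, 33.21, 43.01, 93.9, 33.3, 39.35, 93.22]
DICE = [50.2, 48.1, 51.0, 42.7, 52.2, 49.7, 37.8, 37.5, 87.3, 33.7, 41.1, 84.1]


def average(values):
    """Unweighted mean over tasks, matching the 'Average' row of the table."""
    return sum(values) / len(values)


print(f"STJML: {average(STJML):.2f}")  # STJML: 52.18
print(f"DICE:  {average(DICE):.1f}")   # DICE:  51.3

wins = sum(s > d for s, d in zip(STJML, DICE))
print(f"STJML higher on {wins} of {len(STJML)} tasks")  # STJML higher on 4 of 12 tasks
```

STJML is higher on only 4 of the 12 tasks (A→W, W→A, W→D, and D→W), but its margins there, such as 9.12 points on D→W, exceed its losses on the other tasks.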

| Methods | Running Time (s) | Methods | Running Time (s) |
|---|---|---|---|
| 1-NN | 2.22 | CORAL | 47.05 |
| TCA | 3.42 | JGSA | 482.665 |
| GFK | 19.64 | TJM | 22.15 |
| JDA | 21.96 | Proposed Method | 72.74 |
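The running-time table can be summarized as ratios relative to the proposed method. The following is a minimal sketch; the dictionary only copies the table, so the ratios inherit whatever hardware and data settings produced those timings. `relative_to` is a hypothetical helper.

```python
# Running times (s) copied from the running-time table.
RUNNING_TIME_S = {
    "1-NN": 2.22, "TCA": 3.42, "GFK": 19.64, "JDA": 21.96,
    "CORAL": 47.05, "JGSA": 482.665, "TJM": 22.15, "Proposed Method": 72.74,
}


def relative_to(times, reference):
    """Ratio of each method's running time to the running time of `reference`."""
    ref = times[reference]
    return {name: t / ref for name, t in times.items()}


ratios = relative_to(RUNNING_TIME_S, "Proposed Method")
for name, r in sorted(ratios.items(), key=lambda item: item[1]):
    print(f"{name:16s} {r:6.2f}x")

print(f"{ratios['JGSA']:.1f}")     # 6.6  (JGSA takes about 6.6 times as long)
print(f"{1 / ratios['TJM']:.1f}")  # 3.3  (the proposed method takes about 3.3 times as long as TJM)
```

On these numbers the proposed method is slower than the simpler baselines (TCA, JDA, TJM) but considerably faster than JGSA.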

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Sanodiya, R.K.; Yao, L. A Subspace Based Transfer Joint Matching with Laplacian Regularization for Visual Domain Adaptation. Sensors 2020, 20, 4367. https://doi.org/10.3390/s20164367